Submitted: 10 February 2026

Posted: 11 February 2026

Abstract

Keywords:

1. Introduction

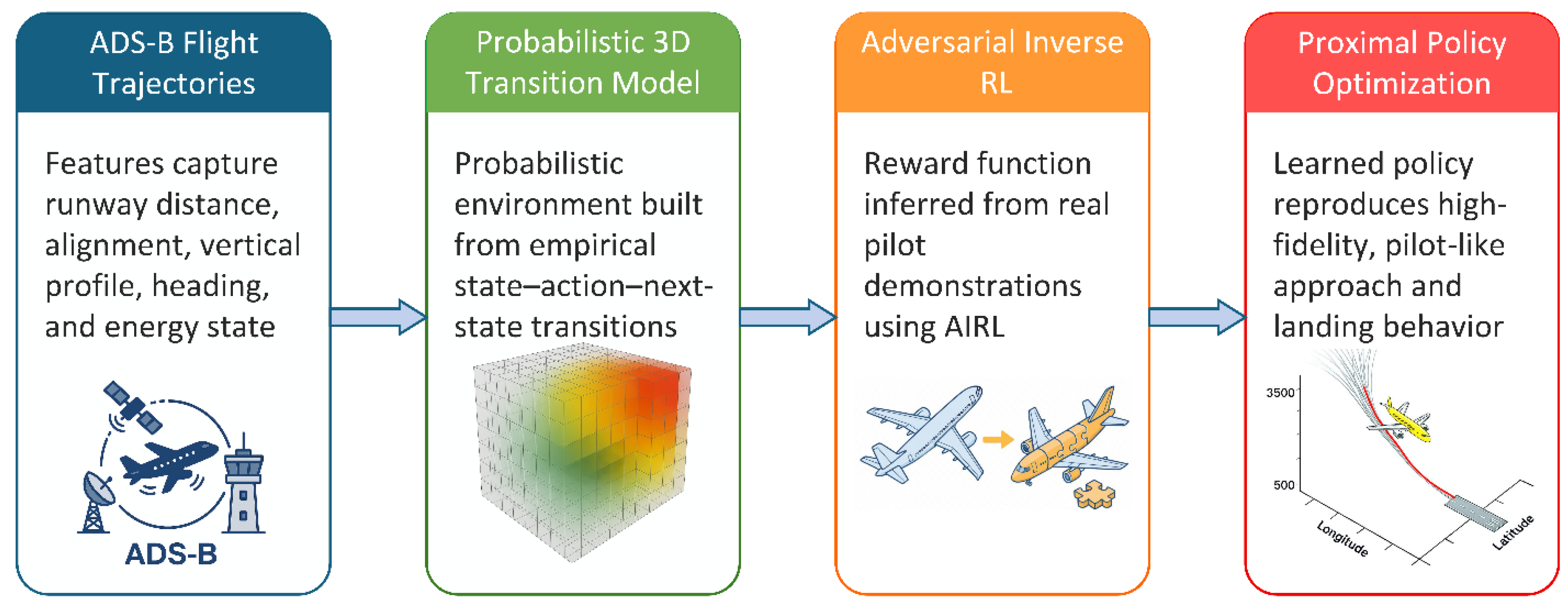

2. Learning Mechanisms of Learning Capable Aircraft

- The first section describes how we can collect and prepare the relevant data for a specific flight case, such as ADS-B data during approach to a specific airport.

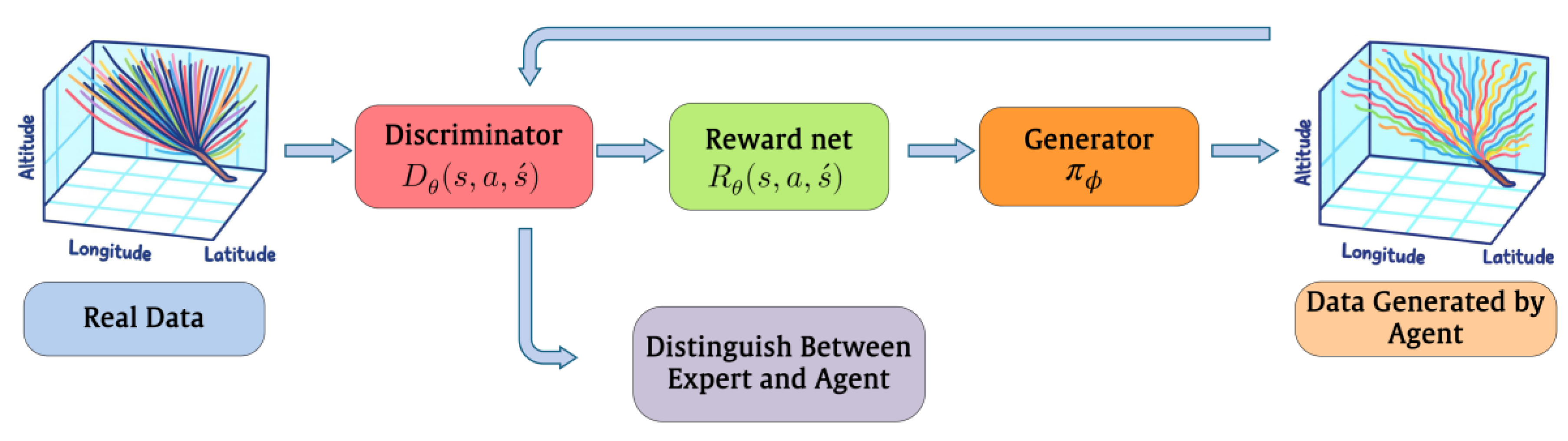

- The second section describes the learning process that forms a robust, expert-consistent 3D controller exclusively from the real-world data prepared in the first section.

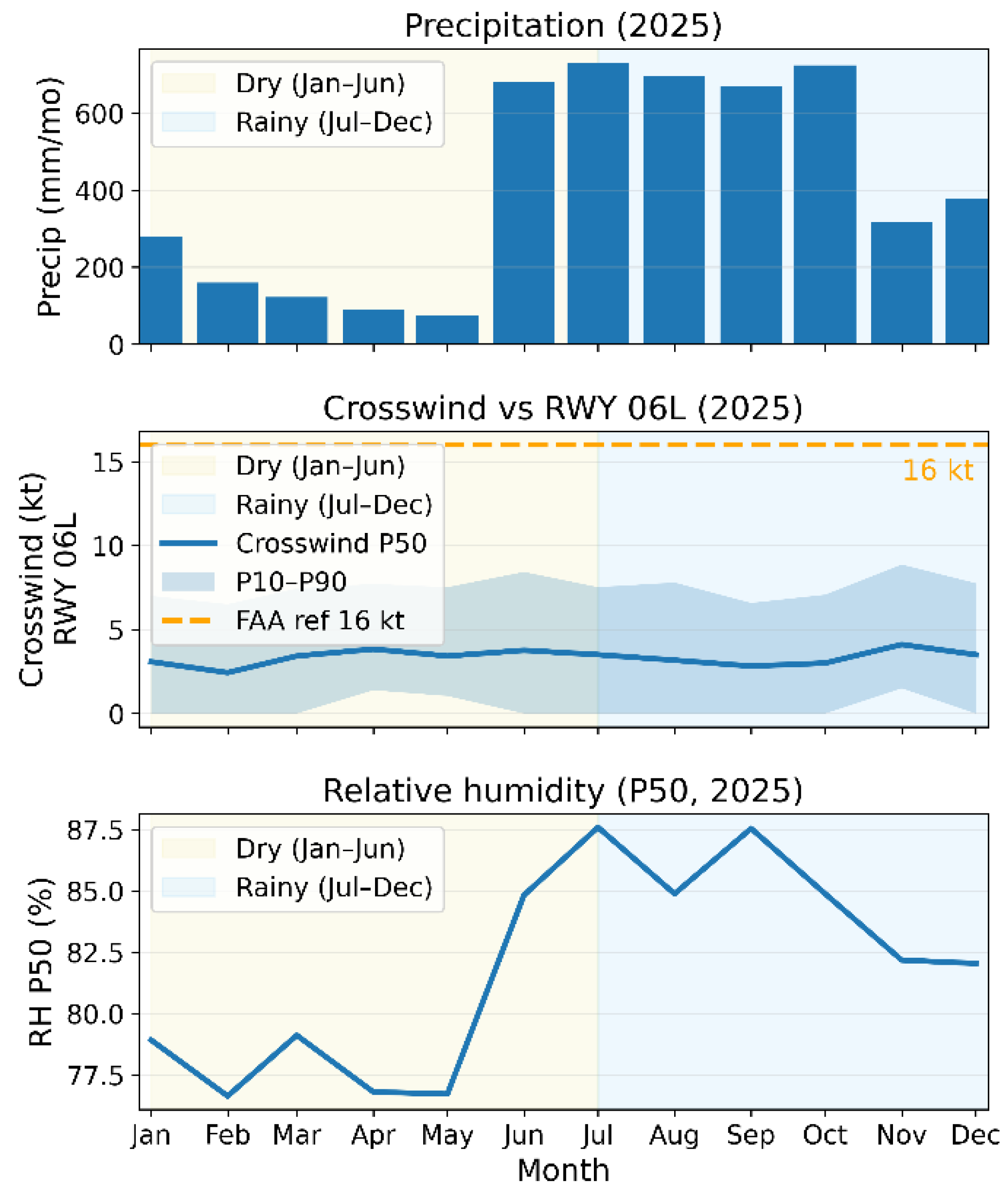

- The third section describes how the process could be fine-tuned for a specific airport, which in this study is Guam's international airport (PGUM).

- A new probabilistic model, built from real-world data, that extracts policies without relying on explicit mathematical models.

- Extraction of the applied reward functions directly from operational history to feed into R-PPO, eliminating trial-and-error reward tuning.

- Implicit robustness and stability inherited from the historical data of expert operators.

- A validation process built into the model, providing robustness to initial-state variations and guaranteeing valid terminal conditions.
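
The reward-extraction step named in the list above builds on adversarial inverse reinforcement learning (AIRL) as described by Fu et al. in the reference list. The following is a minimal sketch of the standard AIRL discriminator and the reward it hands to the policy optimizer; it is not the authors' implementation. The function names, and the terms `g_sa`, `h_s`, and `h_next` standing for learned network outputs, are illustrative assumptions, and the default discount of 0.995 is the AIRL value from the hyperparameter table.

```python
import numpy as np

def shaped_f(g_sa, h_s, h_next, gamma=0.995):
    """f(s, a, s') = g(s, a) + gamma * h(s') - h(s), a potential-shaped reward term."""
    return g_sa + gamma * h_next - h_s

def airl_discriminator(f_value, log_pi):
    """D = exp(f) / (exp(f) + pi(a|s)), evaluated stably as sigmoid(f - log pi)."""
    z = np.asarray(f_value, dtype=float) - np.asarray(log_pi, dtype=float)
    return 1.0 / (1.0 + np.exp(-z))

def airl_reward(f_value, log_pi):
    """Reward passed to the policy: log D - log(1 - D), which reduces to f - log pi."""
    return np.asarray(f_value, dtype=float) - np.asarray(log_pi, dtype=float)
```

When the learned term `f` equals the policy log-probability, the discriminator outputs 0.5 and the reward is zero, which is the equilibrium at which generated and expert transitions can no longer be told apart.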

3. Literature Review

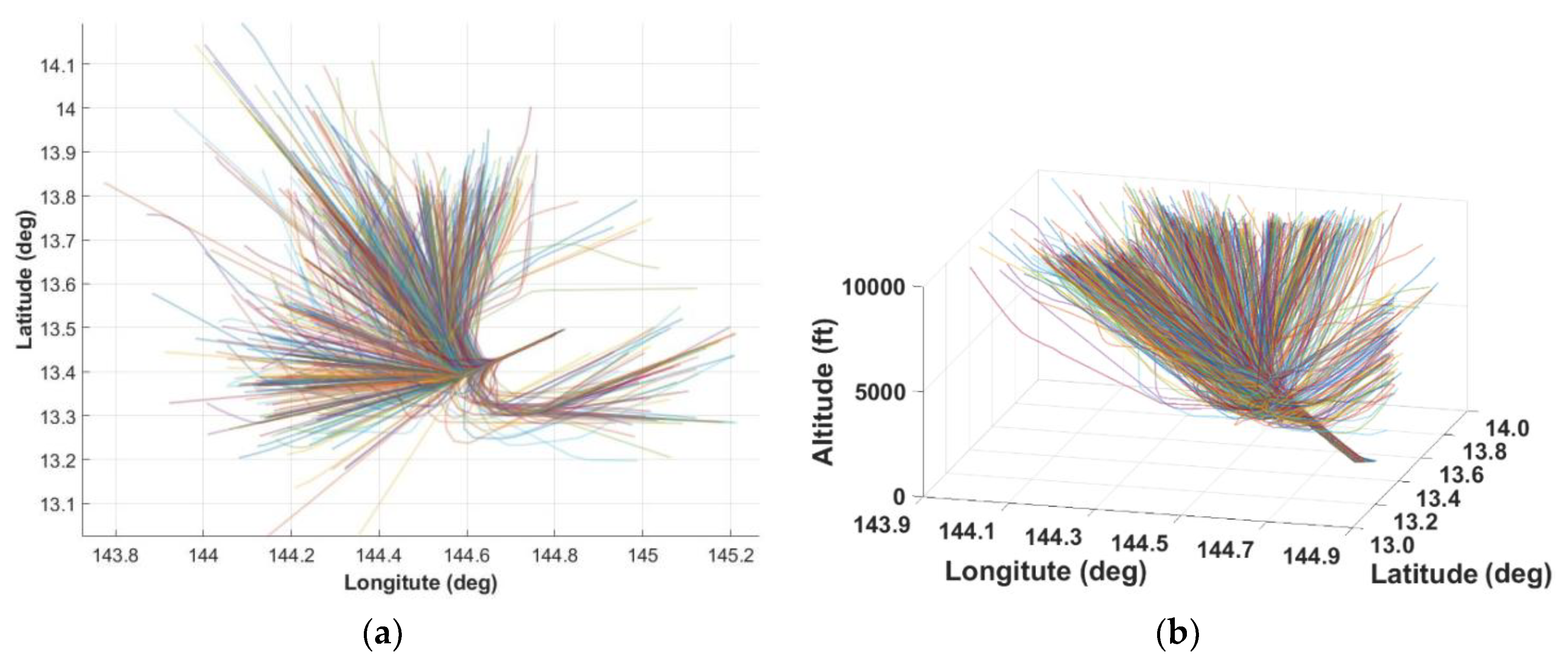

4. Dataset and Preprocessing

5. State–Action–Environment Modeling

5.1. State Representation

5.2. Robust Normalization and Grid Construction

5.3. Demonstration Extraction

5.4. Action Space and Expert Labeling

6. Training Methodology

6.1. Environment and Demonstration Preparation

6.2. Learning Framework and Model Architecture

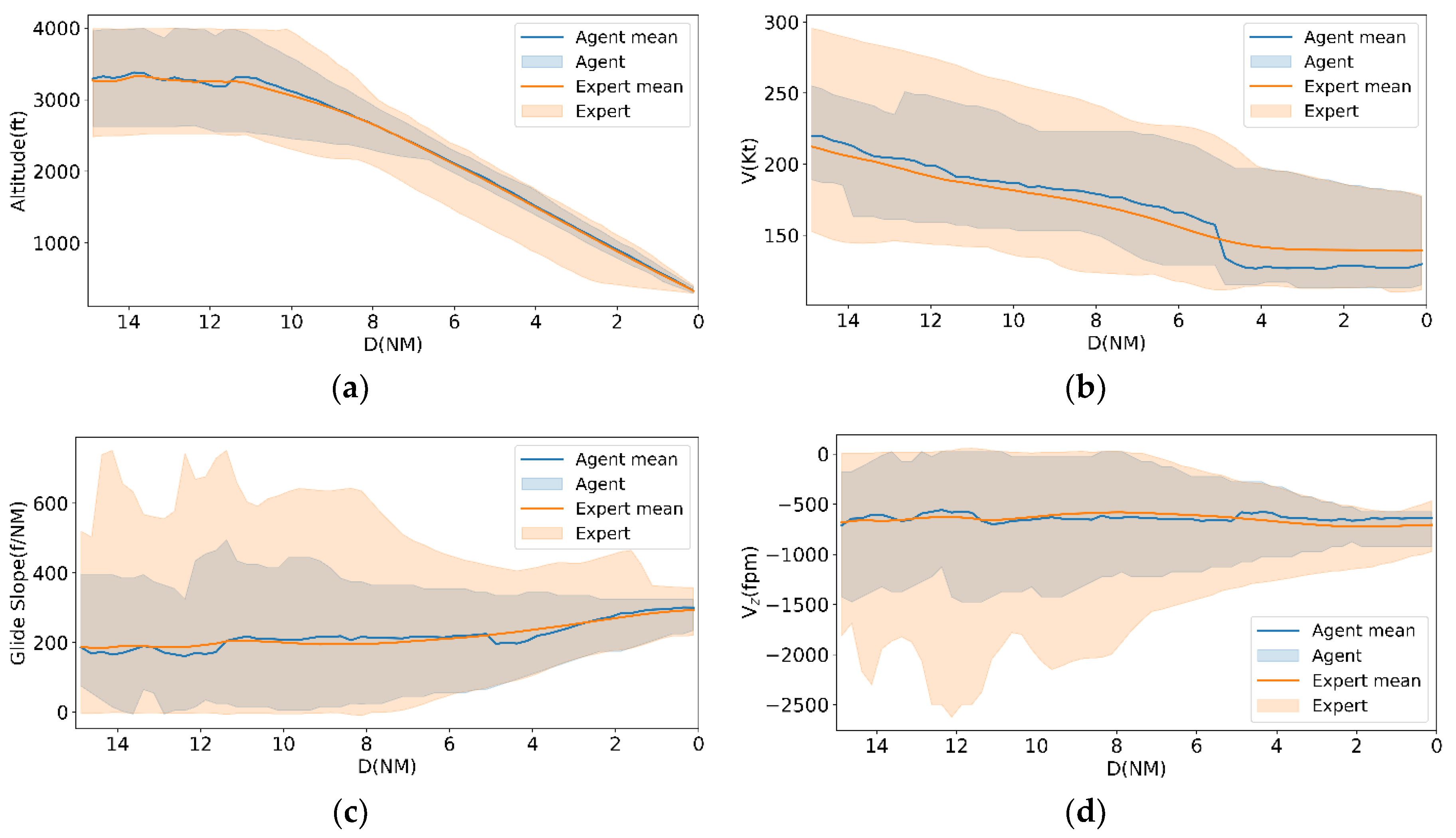

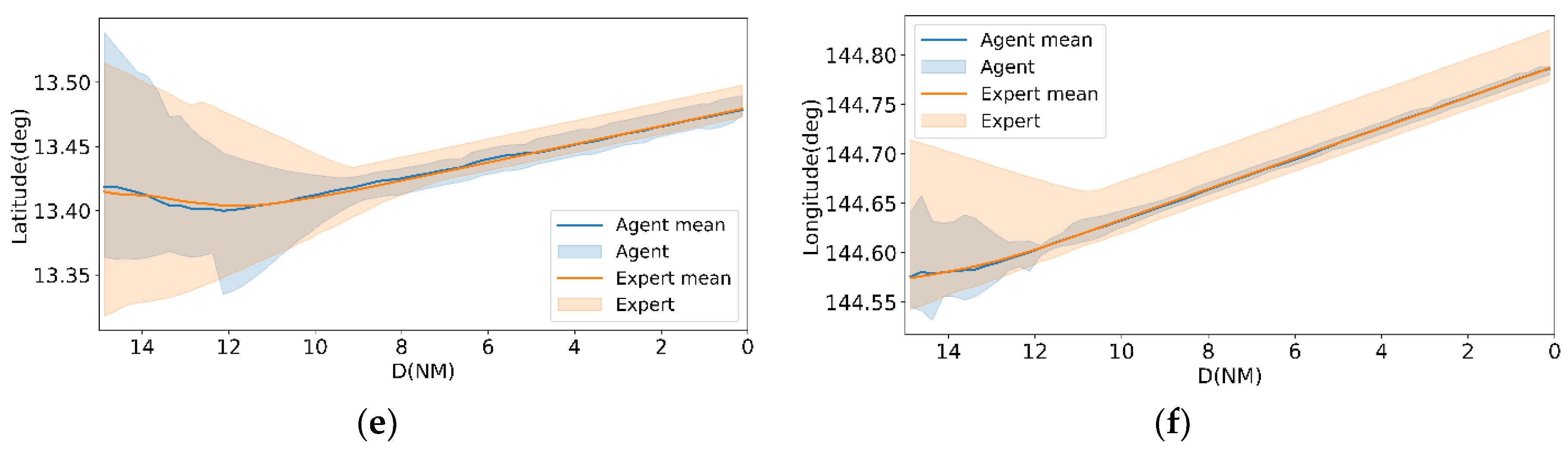

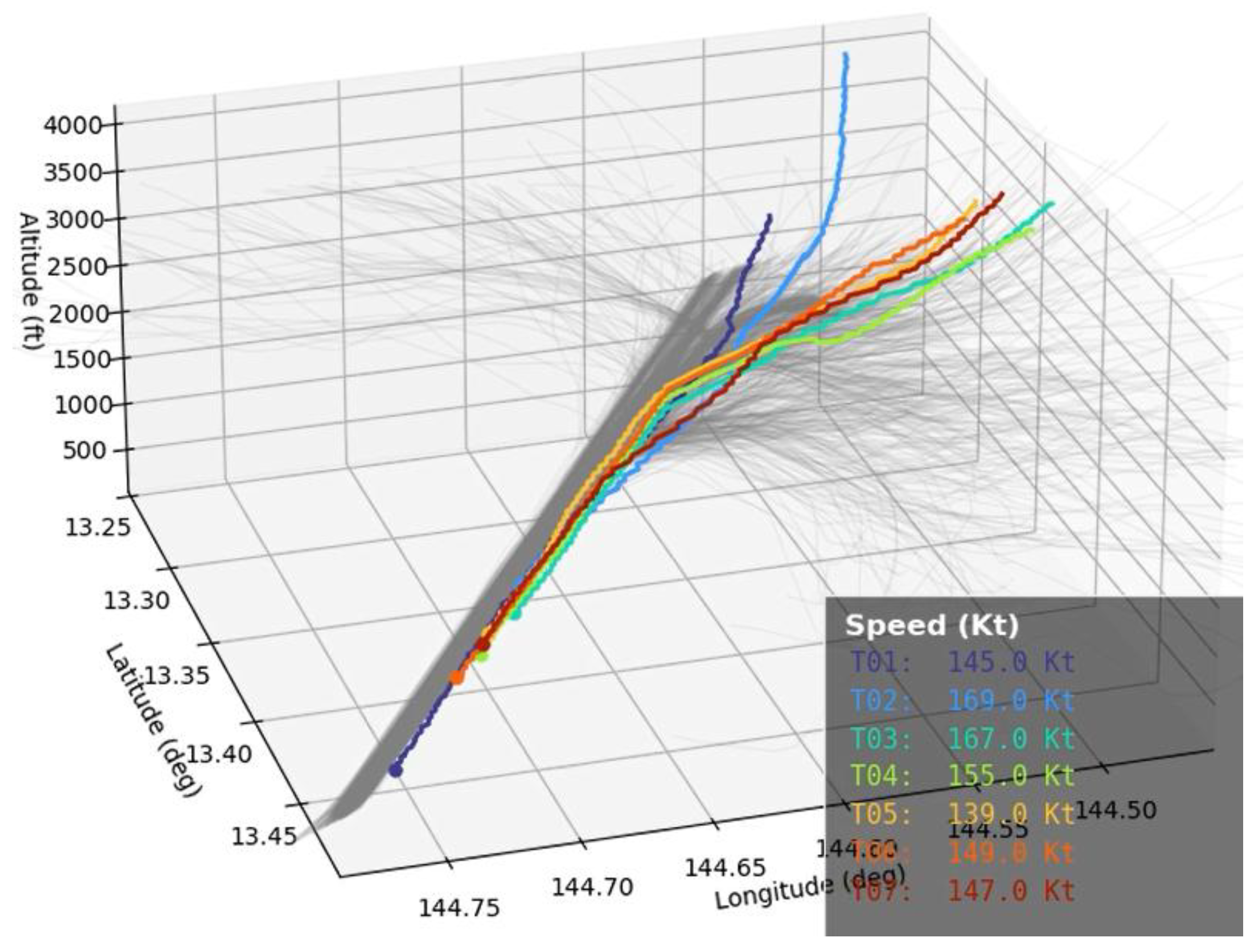

7. Validation and Evaluation

8. Discussion and Future Work

References

- Fu, J.; Luo, K.; Levine, S. Learning robust rewards with adversarial inverse reinforcement learning. arXiv Prepr. 2017.

- Sun, J.; Yu, L.; Dong, P.; Lu, B.; Zhou, B. Adversarial inverse reinforcement learning with self-attention dynamics model. IEEE Robot. Autom. Lett. 2021.

- Venuto, D.; Chakravorty, J.; Boussioux, L.; Wang, J.; McCracken, G.; Precup, D. OIRL: Robust adversarial inverse reinforcement learning with temporally extended actions. arXiv Prepr. 2020.

- Arnob, S. Y. Off-policy adversarial inverse reinforcement learning. arXiv Prepr. 2020.

- Zhan, M.; Fan, J.; Guo, J. Generative adversarial inverse reinforcement learning with deep deterministic policy gradient. IEEE Access 2023.

- Yuan, M.; Pun, M.-O.; Chen, Y.; Cao, Q. Hybrid adversarial inverse reinforcement learning. arXiv Prepr. 2021.

- Wang, P.; Liu, D.; Chen, J.; Chan, C.-Y. Adversarial inverse reinforcement learning for decision making in autonomous driving. arXiv Prepr. 2019.

- Wang, P.; Liu, D.; Chen, J.; Han-han, L.; Chan, C.-Y. Human-like decision making for autonomous driving via adversarial inverse reinforcement learning. arXiv Prepr. 2019.

- Wang, P.; Liu, D.; Chen, J.; Li, H.; Chan, C.-Y. Decision making for autonomous driving via augmented adversarial inverse reinforcement learning. arXiv Prepr. 2019.

- Yu, L.; Song, J.; Ermon, S. Multi-agent adversarial inverse reinforcement learning. arXiv Prepr. 2019.

- Pattanayak, K.; Krishnamurthy, V.; Berry, C. Inverse-inverse reinforcement learning: How to hide strategy from an adversarial inverse reinforcement learner. In Proceedings of the IEEE Conference on Decision and Control (CDC), 2022.

- Chen, J.; Lan, T.; Aggarwal, V. Hierarchical adversarial inverse reinforcement learning. IEEE Trans. Neural Netw. Learn. Syst. 2023.

- Xiang, G.; Li, S.; Shuang, F.; Gao, F.; Yuan, X. SC-AIRL: Share-critic in adversarial inverse reinforcement learning for long-horizon tasks. IEEE Robot. Autom. Lett. 2024.

- Zhu, L. DRL-RNP: Deep Reinforcement Learning-Based Optimized RNP Flight Procedure Execution. Sensors 2022, 22(17).

- Sun, H.; Zhang, W.; Yu, R.; Zhang, Y. Motion planning for mobile robots—Focusing on deep reinforcement learning: A systematic review. IEEE Access 2021, 9, 69061–69081.

- Richter, D. J.; Calix, R. A. QPlane: An Open-Source Reinforcement Learning Toolkit for Autonomous Fixed Wing Aircraft Simulation. In Proceedings of the 12th ACM Multimedia Systems Conference (MMSys ’21), New York, NY, USA, Sept. 2021; Association for Computing Machinery; pp. 261–266.

- Wang, W.; Ma, J. A review: Applications of machine learning and deep learning in aerospace engineering and aero-engine engineering. Adv. Eng. Innov. 2024, 6, 54–72.

- Hu, Z. Deep Reinforcement Learning Approach with Multiple Experience Pools for UAV’s Autonomous Motion Planning in Complex Unknown Environments. Sensors 2020, 20(7).

- Neto, E. C. P. Deep Learning in Air Traffic Management (ATM): A Survey on Applications, Opportunities, and Open Challenges. Aerospace 2023, 10(4).

- Hu, J.; Yang, X.; Wang, W.; Wei, P.; Ying, L.; Liu, Y. Obstacle Avoidance for UAS in Continuous Action Space Using Deep Reinforcement Learning. IEEE Access 2022, 10, 90623–90634.

- Ali, H.; Pham, D.-T.; Alam, S.; Schultz, M. A Deep Reinforcement Learning Approach for Airport Departure Metering Under Spatial–Temporal Airside Interactions. IEEE Trans. Intell. Transp. Syst. 2022, 23(12), 23933–23950.

- Choi, J. Modular Reinforcement Learning for Autonomous UAV Flight Control. Drones 2023, 7(7).

- Chronis, C.; Anagnostopoulos, G.; Politi, E.; Dimitrakopoulos, G.; Varlamis, I. Dynamic Navigation in Unconstrained Environments Using Reinforcement Learning Algorithms. IEEE Access 2023, 11, 117984–118001.

- Wada, D.; Araujo-Estrada, S. A.; Windsor, S. Unmanned Aerial Vehicle Pitch Control under Delay Using Deep Reinforcement Learning with Continuous Action in Wind Tunnel Test. Aerospace 2021, 8(9).

- Azar, A.T.; Koubaa, A.; Ali Mohamed, N.; Ibrahim, H.A.; Ibrahim, Z.F.; Kazim, M.; Ammar, A.; Benjdira, B.; Khamis, A.M.; Hameed, I.A.; Casalino, G. Drone deep reinforcement learning: A review. Electronics 2021, 10(9), 999.

- Lou, J. Real-Time On-the-Fly Motion Planning for Urban Air Mobility via Updating Tree Data of Sampling-Based Algorithms Using Neural Network Inference. Aerospace 2024, 11(1).

- Zhu, H. Inverse Reinforcement Learning-Based Fire-Control Command Calculation of an Unmanned Autonomous Helicopter Using Swarm Intelligence Demonstration. Aerospace 2023, 10(3).

- Brunke, L.; Greeff, M.; Hall, A.W.; Yuan, Z.; Zhou, S.; Panerati, J.; Schoellig, A.P. Safe learning in robotics: From learning-based control to safe reinforcement learning. Annual Review of Control, Robotics, and Autonomous Systems 2022, 5(1), 411–444.

- Alipour, E.; Malaek, S. Learning Capable Aircraft (LCA): an Efficient Approach to Enhance Flight-Safety. Under review.

- WMO Codes Registry: wmdr/DataFormat/FM-15-metar. 05 Jan 2026. Available online: https://codes.wmo.int/wmdr/DataFormat/FM-15-metar.

- Iowa Environmental Mesonet. 05 Jan 2026. Available online: https://mesonet.agron.iastate.edu/cgi-bin/request/asos.py.

- Climate trends and projections for Guam | U.S. Geological Survey. 05 Jan 2026. Available online: https://www.usgs.gov/publications/climate-trends-and-projections-guam.

- Advisory Circular No. 150/5300-13A; Airport Design, Updates to the standards for Taxiway Fillet Design. FAA, 2014.

- Freedman, D.; Diaconis, P. On the histogram as a density estimator: L2 theory. Z. Für Wahrscheinlichkeitstheorie Verwandte Geb. 1981, 57(4), 453–476.

- Fu, J.; Luo, K.; Levine, S. Learning robust rewards with adversarial inverse reinforcement learning. In Proceedings of the 6th International Conference on Learning Representations (ICLR), 2018.

- Ng, A. Y.; Harada, D.; Russell, S. Policy Invariance under Reward Transformations: Theory and Application to Reward Shaping. In Proceedings of the 16th International Conference on Machine Learning (ICML), 1999; Morgan Kaufmann; pp. 278–287.

- Schulman, J.; Wolski, F.; Dhariwal, P.; Radford, A.; Klimov, O. Proximal Policy Optimization Algorithms. arXiv Prepr. arXiv:1707.06347, 2017.

- Aircraft Characteristics Database | Federal Aviation Administration. 31 Dec 2025. Available online: https://www.faa.gov/airports/engineering/aircraft_char_database.

- Use of Aircraft Approach Category During Instrument Approach Operations. FAA, 01/09/2023.

| No. | Airplane Type | Number of Flights | Number of Airplanes of this Type |
|---|---|---|---|
| 1 | Airbus A321-271N (A21N) | 56 | 15 |
| 2 | Boeing 767-346-ER (B763) | 91 | 9 |
| 3 | Boeing 737-8K5 (B738) | 384 | 34 |
| 4 | Airbus A330-200 (A332) | 1 | 1 |
| 5 | Airbus A330-322 (A333) | 18 | 10 |
| 6 | Boeing 777-2B5-ER (B772) | 31 | 5 |
| 7 | Boeing 777-3B5 (B773) | 66 | 4 |
| 8 | Boeing 777-3B5-ER (B77W) | 91 | 33 |
| 9 | Boeing 787-9 Dreamliner (B789) | 22 | 8 |
| 10 | Boeing 757-230(PCF) (B752) | 64 | 3 |
| 11 | Airbus A321-231 (A321) | 109 | 19 |
| 12 | Boeing 737 MAX 8 (B38M) | 106 | 2 |
| Total | | 1039 | 142 |

| Symbol | Description | Unit | Bin width (h) |
|---|---|---|---|
| D | Distance to touchdown | NM | 0.05 |
| XTE | Cross-track error | NM | 0.015 |
| H | Altitude | ft | 5 |
| V | Airspeed | kt | 2 |
| | Vertical speed | fpm | 50 |
| G/S | Glide Slope | ft/NM | 10 |
| | Heading error | deg | 1 |
| | Heading rate | deg/s | 0.1 |
| | | ft | 70 |
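
The bin widths in the table above define the grid onto which continuous states are mapped, and the reference list cites Freedman and Diaconis for histogram bin selection. The sketch below shows the Freedman–Diaconis rule and a simple floor-based discretization. Whether the tabulated widths were obtained exactly this way is an assumption; the function names, the `origin` parameter, and the `BIN_WIDTHS` dictionary are illustrative.

```python
import numpy as np

# Bin widths copied from the table for the rows that carry a symbol.
BIN_WIDTHS = {"D": 0.05, "XTE": 0.015, "H": 5.0, "V": 2.0, "G/S": 10.0}

def freedman_diaconis_width(samples):
    """Freedman-Diaconis bin width: h = 2 * IQR * n^(-1/3)."""
    x = np.asarray(samples, dtype=float)
    q75, q25 = np.percentile(x, [75, 25])
    return 2.0 * (q75 - q25) / np.cbrt(x.size)

def to_bin_index(value, width, origin=0.0):
    """Map a continuous value to the integer index of its grid cell."""
    return int(np.floor((value - origin) / width))

# Example: an altitude of 1234 ft with 5 ft bins falls in cell 246.
altitude_cell = to_bin_index(1234.0, BIN_WIDTHS["H"])
```

Because the rule depends on the interquartile range rather than the standard deviation, a few outlying ADS-B samples have little effect on the resulting width.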

| Parameter | AIRL (Reward Inference) | R-PPO (Policy Optimization) | Description |
|---|---|---|---|
| Learning rate | 3 × 10⁻⁴ | 3 × 10⁻⁴ | Adam optimizer learning rate |
| n_steps (per update) | 2048 | 2048 | Number of environment steps before each PPO update |
| Batch size | 64 | 64 | Minibatch size for gradient updates |
| PPO epochs | 5 | 10 | Gradient passes per batch |
| Discount factor (γ) | 0.995 | 0.99 | Temporal discounting constant |
| GAE λ | 0.97 | 0.95 | Generalized Advantage Estimation factor |
| Target KL | 0.02 | 0.03 | Early-stop threshold on policy KL (Kullback–Leibler divergence) |
| Entropy coefficient | 0.01 | 0.01 | Exploration regularization term |
| Max gradient norm | 0.5 | 0.5 | Gradient clipping threshold |
| Discriminator updates per round | 2 | — | AIRL discriminator optimization steps |
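
To make the practical meaning of these settings concrete, the sketch below derives two quantities from the tabulated values: the number of minibatch gradient steps per policy update and the effective planning horizon implied by the discount factor. The `PPOSettings` class and helper names are illustrative, and the calculation assumes a single environment instance, which the table does not state.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PPOSettings:
    learning_rate: float
    n_steps: int
    batch_size: int
    epochs: int
    gamma: float
    gae_lambda: float
    target_kl: float

# Values copied from the two columns of the hyperparameter table.
AIRL = PPOSettings(3e-4, 2048, 64, 5, 0.995, 0.97, 0.02)
R_PPO = PPOSettings(3e-4, 2048, 64, 10, 0.99, 0.95, 0.03)

def gradient_steps_per_update(cfg):
    """Minibatches per rollout times passes over the rollout (one environment assumed)."""
    return (cfg.n_steps // cfg.batch_size) * cfg.epochs

def effective_horizon(cfg):
    """Approximate number of steps over which rewards matter: 1 / (1 - gamma)."""
    return 1.0 / (1.0 - cfg.gamma)
```

Under these assumptions the AIRL stage takes 160 gradient steps per update with a horizon of about 200 steps, while R-PPO takes 320 gradient steps with a horizon of about 100 steps, unless the target-KL threshold stops an update early.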

| No. | Airplane Type (ICAO Code) | @ MLW (kt IAS) [38] | |
|---|---|---|---|
| 1 | Airbus A321-271N (A21N) | 136 | 104.6 |
| 2 | Boeing 767-346-ER (B763) | 140 | 107.7 |
| 3 | Boeing 737-8K5 (B738) | 144 | 110.8 |
| 4 | Airbus A330-200 (A332) | 136 | 104.6 |
| 5 | Airbus A330-322 (A333) | 137 | 105.4 |
| 6 | Boeing 777-2B5-ER (B772) | 140 | 107.7 |
| 7 | Boeing 777-3B5 (B773) | 149 | 114.6 |
| 8 | Boeing 777-3B5-ER (B77W) | 149 | 114.6 |
| 9 | Boeing 787-9 Dreamliner (B789) | 144 | 110.8 |
| 10 | Boeing 757-230(PCF) (B752) | 137 | 105.4 |
| 11 | Airbus A321-231 (A321) | 142 | 109.2 |
| 12 | Boeing 737 MAX 8 (B38M) | 145 | 111.5 |
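
The header of the fourth column did not survive, but its values match the third column divided by 1.3 and rounded to one decimal. That agrees with the usual convention that the reference approach speed is 1.3 times the stall speed in landing configuration. Reading the fourth column as a stall speed is an inference from the numbers, not something the table states. The short check below reproduces it, using illustrative dictionary names.

```python
# Third and fourth columns of the table, keyed by ICAO type code.
SPEED_AT_MLW_KT = {
    "A21N": 136, "B763": 140, "B738": 144, "A332": 136,
    "A333": 137, "B772": 140, "B773": 149, "B77W": 149,
    "B789": 144, "B752": 137, "A321": 142, "B38M": 145,
}
FOURTH_COLUMN_KT = {
    "A21N": 104.6, "B763": 107.7, "B738": 110.8, "A332": 104.6,
    "A333": 105.4, "B772": 107.7, "B773": 114.6, "B77W": 114.6,
    "B789": 110.8, "B752": 105.4, "A321": 109.2, "B38M": 111.5,
}

def speed_over_1_3(speed_kt):
    """Divide a tabulated speed by 1.3 and round to one decimal place."""
    return round(speed_kt / 1.3, 1)

# Collect any aircraft type whose fourth-column value does not follow the ratio.
mismatches = [
    code for code, speed in SPEED_AT_MLW_KT.items()
    if speed_over_1_3(speed) != FOURTH_COLUMN_KT[code]
]
```

For the twelve types listed, `mismatches` is empty, so the ratio holds for every row.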

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).