Submitted: 27 January 2026

Posted: 28 January 2026


Abstract

Keywords:

1. Introduction

- What is the minimal structure required to represent finite causal memory with saturation?

- How many independent parameters are actually needed to describe the memory sector?

- Which combinations are identifiable in a given dataset?

- What would falsify a claimed memory effect?

This paper:

- states explicit axioms (Sec. 2);

- derives the minimal parameterization consistent with those axioms (Sec. 4);

- defines a parameter manifold and dimensionless invariants (Sec. 5);

- specifies what must be reported to claim empirical constraints (Sec. 7);

- connects this abstract structure to a concrete kernel measured in TNG300–1 (Sec. 8).

2. Axioms and Volterra Structure

- A1. Irreversible structural growth. For accessible states prior to saturation, dS/dt ≥ 0 for almost all t in the pre–saturation regime. This encodes a structural arrow of time.

- A2. Causal path dependence. Evolution depends on a functional of the recent past; specifically, the dynamics can be written with a memory functional M constructed from past states through a causal kernel.

- A3. Finite memory horizon. The memory kernel is causal (it vanishes for negative time lags), integrable, and decays on a characteristic timescale Δ.

- A4. Saturation. Structural growth is capacity–limited: S is bounded above by a finite saturation capacity.

- A5. Contractive long–wavelength response. Accumulated memory induces a stabilizing (negative–definite) correction to coarse/long–wavelength modes of the background evolution. In linearized form, the memory sector does not introduce new runaway directions in the effective response operator.
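As an illustration of what A5 asks for, the following minimal sketch checks a toy linearization for runaway directions. It is not the paper's model: the single long-wavelength mode `x`, its Markovian relaxation rate `a`, the memory feedback strength `g`, and the localized exponential-kernel equation dM/dt = (x - M)/Δ are all assumptions chosen to keep the example two-dimensional.

```python
import numpy as np


def response_matrix(a: float, g: float, delta: float) -> np.ndarray:
    """Linear response operator of the assumed toy system

        dx/dt = -a * x - g * M
        dM/dt = (x - M) / delta

    x is a long-wavelength mode, M a memory variable with horizon delta.
    All names and signs are illustrative assumptions.
    """
    return np.array([[-a, -g],
                     [1.0 / delta, -1.0 / delta]])


def is_contractive(a: float, g: float, delta: float) -> bool:
    """True if every eigenvalue has negative real part (no runaway direction)."""
    eigenvalues = np.linalg.eigvals(response_matrix(a, g, delta))
    return bool(np.all(eigenvalues.real < 0.0))


if __name__ == "__main__":
    # Stabilizing memory feedback (g > 0 in this sign convention).
    print(is_contractive(a=0.5, g=1.0, delta=2.0))
    # Destabilizing feedback: the determinant changes sign.
    print(is_contractive(a=0.5, g=-1.0, delta=2.0))
```

For this 2×2 toy system with Δ > 0, the eigenvalue test is equivalent to a negative trace and a positive determinant, that is, a + 1/Δ > 0 and a + g > 0.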

2.1. From Coarse–Graining to a Causal Memory Kernel

- the Markovian part of the dynamics;

- a term encoding external forcing or boundary driving;

- an effective source constructed from past states;

- a causal memory kernel.
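The ingredients listed above can be sketched numerically. The example below assumes a normalized exponential kernel K(τ) = exp(-τ/Δ)/Δ for τ ≥ 0, consistent with the one-pole kernel the paper singles out as minimal, and compares the Volterra (convolution) form of the memory variable with the equivalent local auxiliary equation dM/dt = (J - M)/Δ. The function names, the unit-step source, and the discretization are assumptions made for illustration.

```python
import numpy as np


def memory_by_convolution(source: np.ndarray, dt: float, delta: float) -> np.ndarray:
    """Riemann-sum approximation of M(t) = int_0^t K(t - t') J(t') dt'
    with the assumed kernel K(tau) = exp(-tau / delta) / delta for tau >= 0."""
    n = source.size
    kernel = np.exp(-np.arange(n) * dt / delta) / delta
    memory = np.empty(n)
    for i in range(n):
        # Lag j pairs with source[i - j]; only past samples enter (causality).
        memory[i] = np.dot(kernel[: i + 1], source[i::-1]) * dt
    return memory


def memory_by_auxiliary_field(source: np.ndarray, dt: float, delta: float) -> np.ndarray:
    """Integrate dM/dt = (J - M) / delta with M(0) = 0, using the exact
    update for a source held constant over each time step."""
    decay = np.exp(-dt / delta)
    memory = np.zeros(source.size)
    for i in range(1, source.size):
        memory[i] = decay * memory[i - 1] + (1.0 - decay) * source[i]
    return memory


if __name__ == "__main__":
    dt, delta = 0.01, 1.0
    t = np.arange(0.0, 10.0, dt)
    source = np.ones_like(t)  # unit step switched on at t = 0
    m_conv = memory_by_convolution(source, dt, delta)
    m_aux = memory_by_auxiliary_field(source, dt, delta)
    print("max |convolution - auxiliary| =", np.max(np.abs(m_conv - m_aux)))
```

The two forms agree up to discretization error of order dt/Δ, which is why an exponential kernel can be traded for a single extra local variable.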

3. Localizable Effective Action and Couplings

3.1. Memory Functional and Localization

3.2. Effective Action and Memory Couplings

- a growth–and–saturation potential (Sec. 4.1);

- the memory–to–background coupling, which couples memory to the “background” effective potential (stiffness/deformation);

- the dissipative coupling, which couples the memory rate to a kinetic structure (history–weighted dissipation / kinetic mixing);

- the cross–sector coupling, which couples memory to an external quadratic sector (mass–like shift, cross–sector influence).

4. Seven Parameters: Derivation, Minimality, Identifiability

4.1. Structural Growth Law

4.2. Memory Horizon as a Unique Scalar Timescale

4.3. Minimal Seven–Parameter Representation

- A1 and A4 require saturating monotone growth; the minimal three–parameter family provides the growth amplitude, the exponent m, and the saturation capacity.

- A2–A3 with a one–pole stable kernel introduce a single timescale, the memory horizon.

- A5 demands at least one stabilizing background correction and one independent perturbation–level (dissipative / kinetic) correction at leading derivative order, giving the memory–to–background coupling and the dissipative coupling.

- A cross–sector quadratic coupling introduces the cross–sector coupling parameter and is required if the model is to be compared across domains.

- Under the stated restrictions, none of these parameters can be removed by rescaling t, shifting S, or redefining without either violating an axiom or collapsing the model back to a Markovian limit.

Phase structure in .

- Vanishing growth amplitude removes structural production (violates A1).

- Unbounded saturation capacity removes saturation (violates A4).

- Vanishing memory horizon collapses memory to instantaneous response (Markovian reduction of A2–A3).

- Vanishing memory–to–background coupling removes memory feedback on the background (violates A5 in the background sector).

- Vanishing dissipative coupling removes independent memory–induced damping (degenerates perturbation response).

- Vanishing cross–sector coupling removes the leading cross–sector coupling channel (collapsing universality claims to a single–sector phenomenology).
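The degenerate limits listed above can be checked mechanically before a fit is reported. The sketch below is one possible bookkeeping device, not part of the paper: the field names are hypothetical stand-ins for the seven parameters described in the Notation section, and the specific limits tested (zero amplitude, infinite capacity, zero horizon, zero couplings) are this example's reading of the list.

```python
import math
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ITPParameters:
    """Seven-parameter vector; field names are illustrative assumptions."""
    growth_amplitude: float
    m: float                      # temporal scaling exponent
    saturation_capacity: float
    memory_horizon: float
    background_coupling: float
    dissipative_coupling: float
    cross_sector_coupling: float


def degenerate_limits(p: ITPParameters, tol: float = 1e-12) -> list[str]:
    """Return a label for every degenerate limit the parameter point sits in."""
    flags = []
    if abs(p.growth_amplitude) <= tol:
        flags.append("no structural production (A1)")
    if math.isinf(p.saturation_capacity):
        flags.append("no saturation (A4)")
    if p.memory_horizon <= tol:
        flags.append("Markovian limit (A2-A3)")
    if abs(p.background_coupling) <= tol:
        flags.append("no background feedback (A5)")
    if abs(p.dissipative_coupling) <= tol:
        flags.append("no independent memory damping")
    if abs(p.cross_sector_coupling) <= tol:
        flags.append("single-sector phenomenology")
    return flags


if __name__ == "__main__":
    generic = ITPParameters(1.0, 0.5, 10.0, 2.0, 0.1, 0.1, 0.1)
    print(degenerate_limits(generic))
    markovian = replace(generic, memory_horizon=0.0)
    print(degenerate_limits(markovian))
```

A fit whose posterior concentrates on a flagged point constrains a reduced model, not the full seven-parameter sector.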

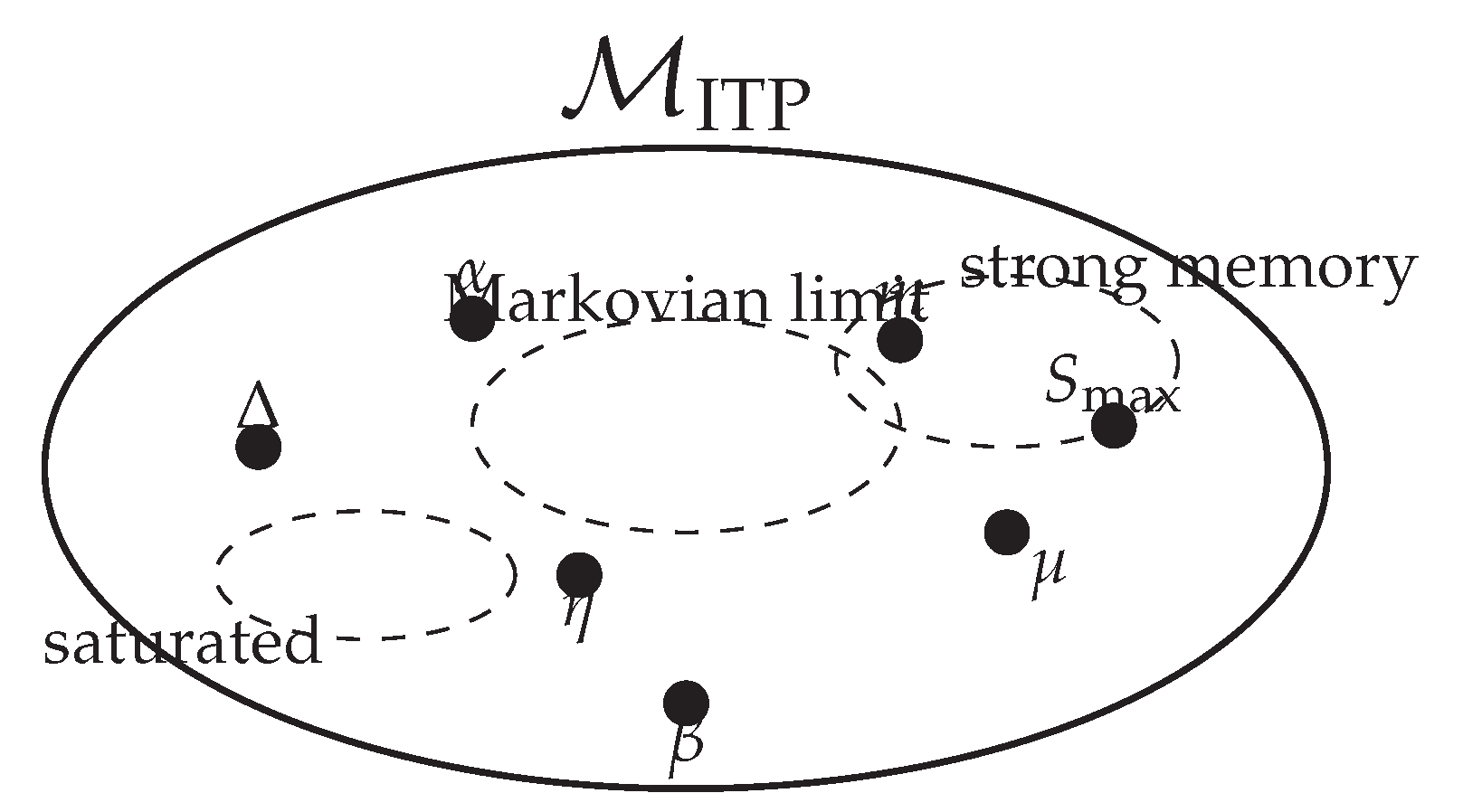

5. The ITP Parameter Manifold and Dimensionless Invariants

5.1. Dimensionless Invariants

5.2. Schematic Phase Regions

6. Universality: A Careful Dictionary Across Domains

| Parameter | Cosmology | Biology | Machine learning |
|---|---|---|---|
| S (structural measure) | structure proxy (clustering / ordering) | complexity proxy (heritable structure) | performance / capacity proxy |
| Growth amplitude | structural production amplitude | innovation / mutation supply | base learning–rate scale |
| m | time–weighting of production | epoch–dependence of selection | schedule exponent |
| Saturation capacity | effective max structure | complexity ceiling (constraints) | model capacity ceiling |
| Memory horizon | effective memory / coherence time | inheritance persistence time | momentum decay / context window |
| Memory–to–background coupling | background coupling (history to mean) | selection / feedback strength | regularization stiffness |
| Dissipative coupling | damping of fluctuations | stabilizing drag / robustness | gradient damping / regularization |
| Cross–sector coupling | coupling to other sectors | environment coupling | transfer / multi–task coupling |

- explicit observables mapped to S and/or M;

- a fitted invariant set with uncertainties;

- model comparison against domain–standard baselines;

- at least one falsifier: a result that would kill the mapping.

6.1. Mesoscopic Example: Galaxy Homeostasis and the Stability Gate

7. Empirical Interface: What Must Be Reported

7.1. Cosmology: Parameter Identifiability and Correlations

7.2. Reproducibility Checklist

- datasets and likelihoods (names, versions, priors, nuisance treatment);

- the full parameter vector sampled, including standard cosmological parameters;

- the mapping equations: how S and M enter background and perturbations;

- the kernel choice and whether multi–timescale kernels were tested;

- goodness–of–fit metrics (χ², Bayes factors if used);

- posterior predictive checks on withheld statistics;

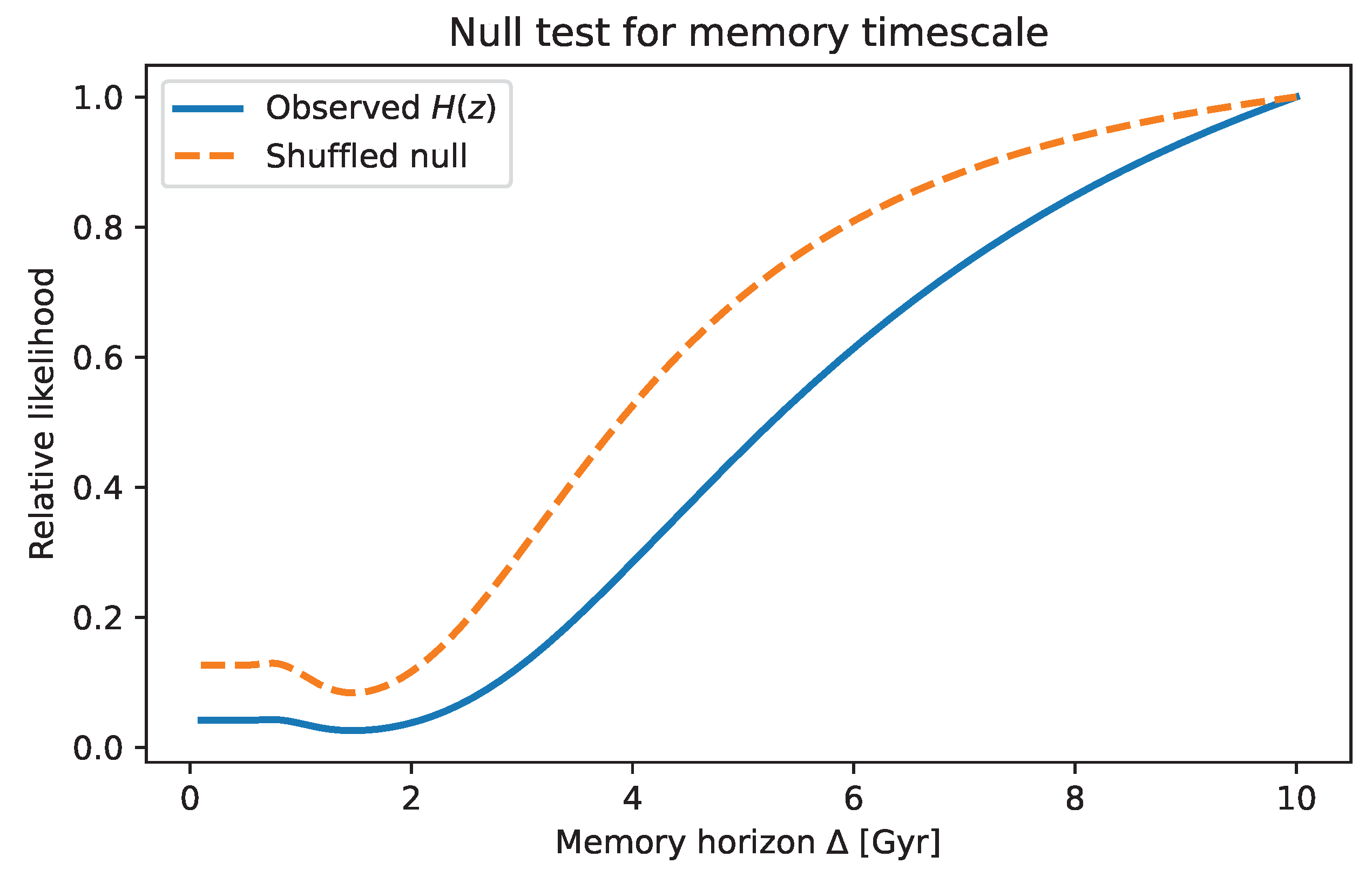

- at least one null test designed to fail if the signal is a reconstruction artifact.

7.3. Nonlinear Memory Signatures: Phase Correlations

- the fields analysed (maps, masks, component separation choices);

- the estimator definition (phases of what decomposition, multipole or scale ranges);

- the null ensemble generation (number of simulations, systematics included);

- look–elsewhere correction if multiple angles or ranges were scanned;

- robustness to known systematics (beam, noise anisotropy, masking).
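The reporting items above (estimator definition, null ensemble, look-elsewhere correction) can be made concrete with a small sketch. It is a generic illustration on a one-dimensional signal, not the estimator used in the paper: the band-wise phase-coherence statistic and the band edges are hypothetical. The null ensemble uses phase randomization, which keeps the amplitude spectrum fixed, and the global p-value compares the maximum over all scanned bands in the data with the same maximum in each null realization.

```python
import numpy as np


def phase_randomized_surrogate(signal: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Surrogate with the same Fourier amplitudes but random phases."""
    spectrum = np.fft.rfft(signal)
    phases = rng.uniform(0.0, 2.0 * np.pi, size=spectrum.shape)
    surrogate = np.abs(spectrum) * np.exp(1j * phases)
    surrogate[0] = spectrum[0]            # keep the mean unchanged
    if signal.size % 2 == 0:
        surrogate[-1] = spectrum[-1]      # Nyquist bin must stay real
    return np.fft.irfft(surrogate, n=signal.size)


def band_phase_coherence(signal: np.ndarray, bands: list[tuple[int, int]]) -> np.ndarray:
    """Hypothetical statistic: mean resultant length of adjacent-mode
    phase differences, evaluated separately in each band of mode indices."""
    phases = np.angle(np.fft.rfft(signal))
    dphi = np.diff(phases)
    return np.array([np.abs(np.mean(np.exp(1j * dphi[lo:hi]))) for lo, hi in bands])


def global_p_value(signal: np.ndarray, bands: list[tuple[int, int]],
                   n_null: int, rng: np.random.Generator) -> float:
    """Look-elsewhere-corrected p-value from the maximum over all bands."""
    observed = band_phase_coherence(signal, bands).max()
    null_max = np.array([
        band_phase_coherence(phase_randomized_surrogate(signal, rng), bands).max()
        for _ in range(n_null)
    ])
    return float((1 + np.sum(null_max >= observed)) / (1 + n_null))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    data = rng.normal(size=256)           # stand-in for a measured field
    bands = [(1, 32), (32, 64), (64, 127)]
    print("global p-value:", global_p_value(data, bands, n_null=200, rng=rng))
```

Taking the maximum over bands in both data and nulls is what absorbs the look-elsewhere effect; the number of null realizations sets the smallest p-value that can be quoted, 1/(n_null + 1).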

8. Example: Memory–Horizon Scaffolds and Simulation Kernel

- a scaffold fit of the background memory horizon from data;

- a simulation–level kernel measurement from TNG300–1 on domains.

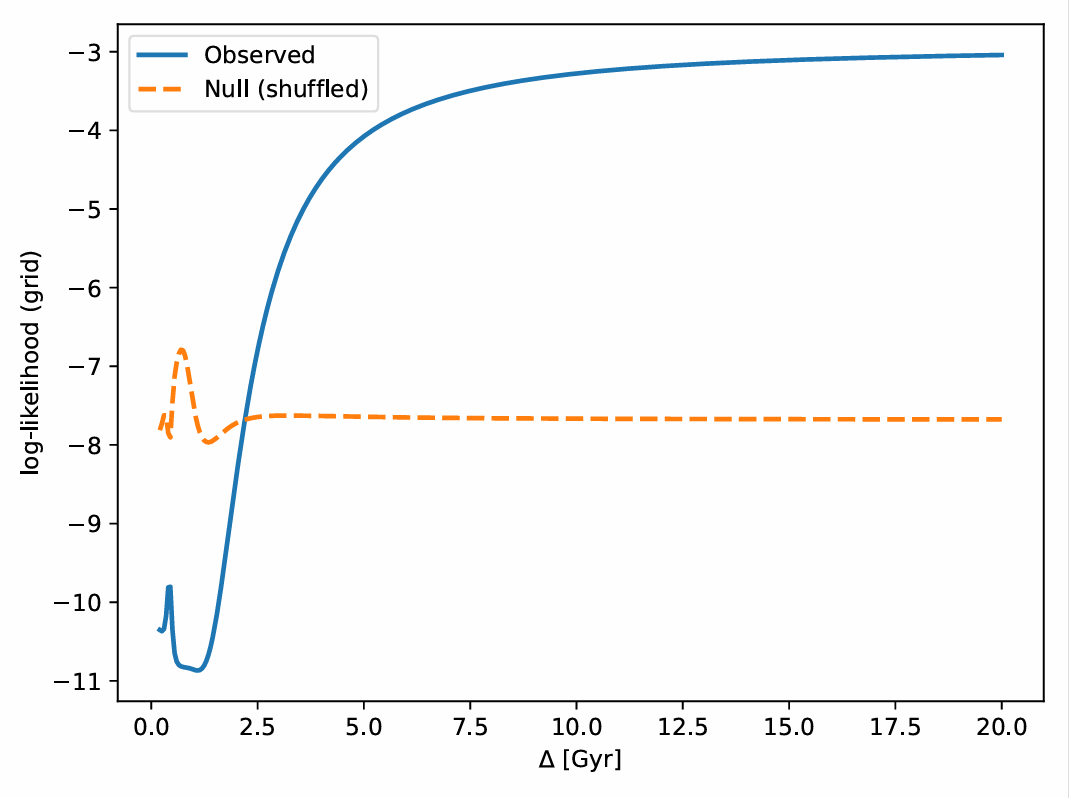

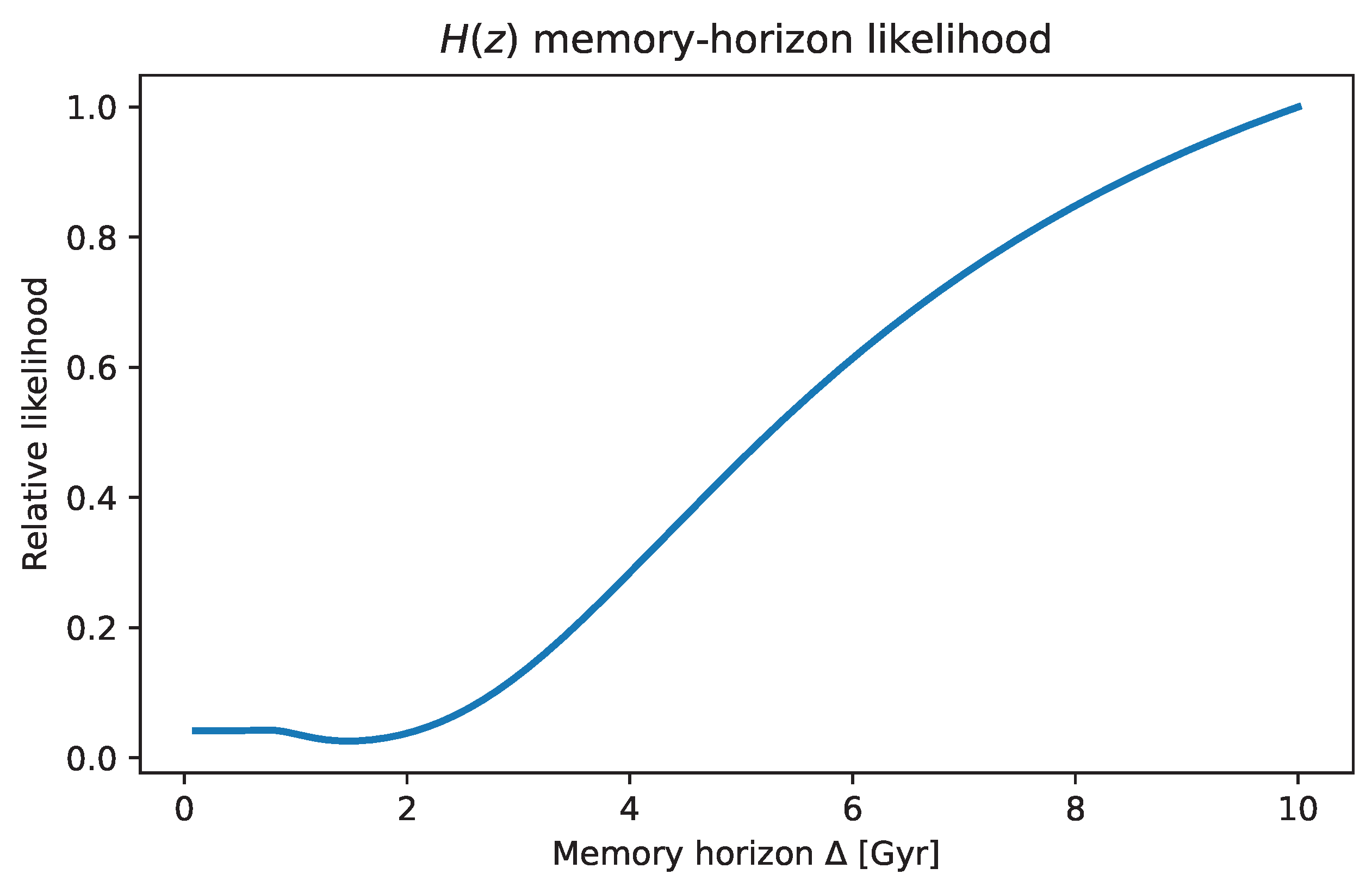

8.1. Memory–Horizon Fits to Data (Scaffold Only)

- an exponential memory kernel in the background closure;

- a linear baseline plus a minimal memory template in lookback time;

- a fiducial ΛCDM redshift–to–time mapping.
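A scaffold fit of this kind can be sketched as a profile over the memory horizon with a linear solve at each grid point. Everything specific below is an assumption for illustration: the template shape 1 - exp(-t/Δ) (the step response of an exponential kernel), the synthetic data, the noise level, and the horizon grid. It does not reproduce the paper's data or results.

```python
import numpy as np


def memory_template(lookback_time: np.ndarray, horizon: float) -> np.ndarray:
    """Assumed minimal memory template: step response of an exponential kernel."""
    return 1.0 - np.exp(-lookback_time / horizon)


def fit_scaffold(lookback_time: np.ndarray, y: np.ndarray, horizons: np.ndarray) -> dict:
    """Fit y = a + b * t + c * template(t; horizon) by linear least squares
    at each trial horizon and keep the horizon with the smallest residual."""
    best = None
    for horizon in horizons:
        design = np.column_stack([
            np.ones_like(lookback_time),              # constant baseline
            lookback_time,                            # linear baseline
            memory_template(lookback_time, horizon),  # memory term
        ])
        coeffs, *_ = np.linalg.lstsq(design, y, rcond=None)
        rss = float(np.sum((y - design @ coeffs) ** 2))
        if best is None or rss < best["rss"]:
            best = {"horizon": float(horizon), "coeffs": coeffs, "rss": rss}
    return best


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    t = np.linspace(0.0, 10.0, 200)                   # synthetic lookback times
    y = 1.0 + 0.2 * t + 0.5 * memory_template(t, 2.0) + 0.002 * rng.normal(size=t.size)
    result = fit_scaffold(t, y, horizons=np.arange(0.5, 5.01, 0.1))
    print("best-fit horizon:", result["horizon"])
```

Because the baseline and the template become nearly collinear when the horizon is long compared with the time range, the residual profile over the horizon should be reported alongside the best-fit value.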

8.2. TNG300 Domain Kernel and Scale–Dependent Memory

- a structural source: the domain–averaged subhalo velocity–dispersion squared;

- an expansion–rate deviation.

- is a domain–scale kernel controlling how local virialisation drags the domain expansion.

- in the background closure is an effective horizon–scale summary of many such relaxation events, integrated across space and time.
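One generic way to estimate a causal kernel linking a source time series to a response time series is least-squares deconvolution. The sketch below is not the measurement pipeline applied to TNG300–1: it uses synthetic series, an assumed discrete model response[n] = Σ_j K[j] · source[n - j] · dt, and a hypothetical function name.

```python
import numpy as np


def estimate_causal_kernel(source: np.ndarray, response: np.ndarray,
                           n_lags: int, dt: float) -> np.ndarray:
    """Least-squares estimate of K[0..n_lags-1] in the assumed model
    response[n] = sum_j K[j] * source[n - j] * dt (past samples only)."""
    n = source.size
    design = np.zeros((n, n_lags))
    for j in range(n_lags):
        # Column j holds the source delayed by j steps; zeros before the start.
        design[j:, j] = source[: n - j] * dt
    kernel, *_ = np.linalg.lstsq(design, response, rcond=None)
    return kernel


if __name__ == "__main__":
    rng = np.random.default_rng(2)
    dt, n_lags = 0.1, 20
    source = rng.normal(size=400)                       # synthetic source series
    lags = np.arange(n_lags) * dt
    true_kernel = np.exp(-lags / 0.5) / 0.5             # assumed exponential kernel
    response = np.convolve(source, true_kernel)[: source.size] * dt
    estimate = estimate_causal_kernel(source, response, n_lags, dt)
    print("max kernel error:", np.max(np.abs(estimate - true_kernel)))
```

With noisy data the same design matrix would normally be combined with regularization or a parametric kernel family; the unregularized solve is shown only because the synthetic response is noise-free.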

9. Limitations and Extensions

- Kernel minimality is a restriction. Exponential memory is the minimal one–parameter stable kernel. Data may eventually demand multi–timescale or oscillatory kernels. Those live outside the seven–parameter minimal sector and carry extra parameters that must be earned.

- Choice of structural proxy. S is a scalar proxy by design. In some systems structure is irreducibly vector– or field–valued. Extending ITP to multiple coupled structural measures is straightforward but increases parameter count and complicates identification.

- Identifiability vs. interpretation. The mapping from observables to is always a modeling choice. Constraints are on the reduced model, not on ontological “memory”.

- Dataset dependence. The correlation structure in Table 2 comes from a specific compilation and pipeline. Different compilations or priors can shift numbers while keeping the overall pattern.

10. Conclusions

- what is assumed (A1–A5);

- what follows mathematically (a seven–parameter representation in the restricted model class and a simple set of invariants);

- what must be shown empirically (identifiable parameter combinations, nonlinear signatures, and reproducible pipelines).

Notation and Conventions

| Symbol | Meaning |
|---|---|
| S | Structural entropy / scalar measure of accumulated structure |
| | Generic dynamical degrees of freedom (fields, state vector components) |
| M | Memory functional (history–dependent auxiliary variable) |
| | Causal memory kernel |
| | Memory horizon / characteristic memory timescale |
| | Intrinsic growth amplitude (structural production efficiency) |
| m | Temporal scaling exponent (time–weighting of production) |
| | Saturation capacity (finite structural ceiling) |
| | Memory–to–background coupling (potential deformation / stiffness) |
| | Dissipative coupling (history–weighted damping / kinetic mixing) |
| | Cross–sector coupling (mass–like shift from memory) |
| | Seven–dimensional ITP parameter manifold |
| | Dimensionless invariants for cross–domain comparison |

Data Availability Statement

Acknowledgments

Appendix A. Minimal Model Equations in Auxiliary–Field Form

Appendix B. What “Minimality” Does and Does Not Mean


| Parameter pair | Correlation |


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).