Submitted: 23 January 2026

Posted: 23 January 2026


Abstract

Keywords:

1. Introduction

2. Background

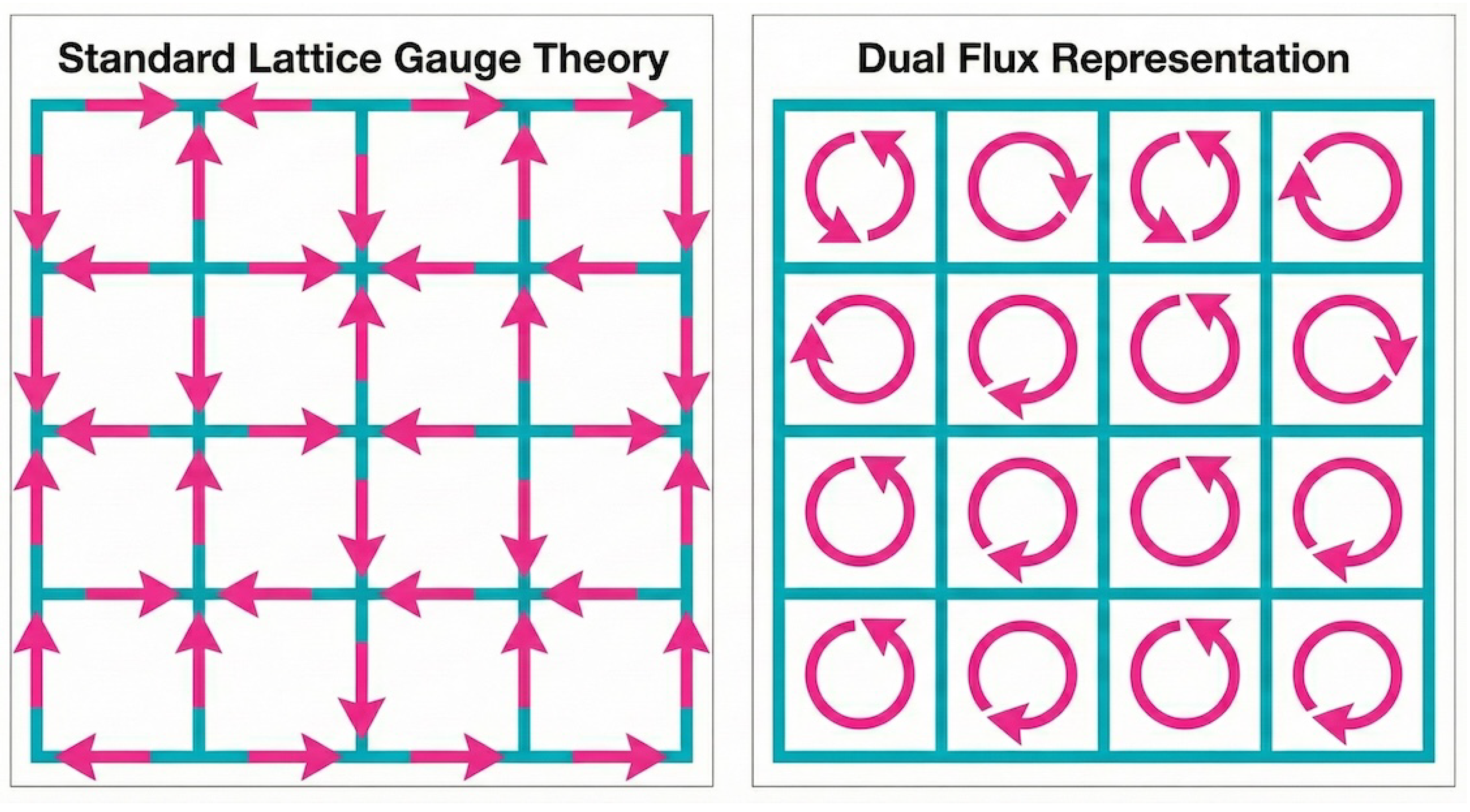

2.1. Link to Flux Transformation

2.2. Spatial -Conditioning

3. Generative Architecture: FluxUNet

3.1. Network Architecture (FluxUNet)

- Toroidal Topology (T²). All convolutional layers employ circular padding, enforcing periodic boundary conditions and preventing boundary artifacts.

- Residual Parameterization. We use residual blocks of the form x + F(x), which bias the network toward incremental feature updates rather than abrupt re-mappings. This typically stabilizes optimization and reduces spurious high-frequency responses. In our setting, the residual form yields smoother spatial predictions under periodic convolutions and improves numerical robustness.

- Smooth Nonlinearity and Normalization. We use SiLU activations and Group Normalization () instead of ReLU or BatchNorm. SiLU provides a smooth nonlinearity, and GroupNorm avoids dependence on batch statistics, which is beneficial when training vector fields with small or varying batch sizes.
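The three architectural ingredients above can be illustrated with a minimal NumPy sketch. It assumes a pre-activation ordering (GroupNorm, then SiLU, then a 3×3 circular convolution), two normalization groups, and no learned affine GroupNorm parameters; these choices, the function names, and the channel counts are illustrative assumptions, not the FluxUNet configuration.

```python
import numpy as np


def circular_conv2d(x, w, b):
    """'Same'-size 2D cross-correlation with circular (periodic) padding.

    x: (C_in, H, W) input, w: (C_out, C_in, k, k) kernel, b: (C_out,) bias.
    """
    c_out, _, kh, kw = w.shape
    ph, pw = kh // 2, kw // 2
    # mode="wrap" copies opposite edges, i.e. periodic boundary conditions on the torus
    xp = np.pad(x, ((0, 0), (ph, ph), (pw, pw)), mode="wrap")
    h, wd = x.shape[1:]
    out = np.zeros((c_out, h, wd))
    for i in range(kh):
        for j in range(kw):
            out += np.einsum("oc,chw->ohw", w[:, :, i, j], xp[:, i:i + h, j:j + wd])
    return out + b[:, None, None]


def group_norm(x, groups, eps=1e-5):
    """GroupNorm without affine parameters: statistics per sample, never per batch."""
    c, h, wd = x.shape
    g = x.reshape(groups, c // groups, h, wd)
    mean = g.mean(axis=(1, 2, 3), keepdims=True)
    var = g.var(axis=(1, 2, 3), keepdims=True)
    return ((g - mean) / np.sqrt(var + eps)).reshape(c, h, wd)


def silu(x):
    return x / (1.0 + np.exp(-x))  # x * sigmoid(x), smooth everywhere


def residual_block(x, p, groups=2):
    """Pre-activation residual block: x + conv2(SiLU(GN(conv1(SiLU(GN(x))))))."""
    h = circular_conv2d(silu(group_norm(x, groups)), p["w1"], p["b1"])
    h = circular_conv2d(silu(group_norm(h, groups)), p["w2"], p["b2"])
    return x + h  # incremental update of the input features


rng = np.random.default_rng(0)
C, L = 4, 8
params = {
    "w1": 0.1 * rng.standard_normal((C, C, 3, 3)), "b1": np.zeros(C),
    "w2": 0.1 * rng.standard_normal((C, C, 3, 3)), "b2": np.zeros(C),
}
x = rng.standard_normal((C, L, L))
y = residual_block(x, params)
# Circular padding makes the block commute with periodic lattice translations.
y_shift = residual_block(np.roll(x, (2, 3), axis=(1, 2)), params)
print(np.allclose(y_shift, np.roll(y, (2, 3), axis=(1, 2))))  # True
```

The final check shows why circular padding suits the torus: translating the input by a lattice vector translates the output identically, so no site is privileged as a boundary. Setting all weights to zero reduces the block to the identity, which is the sense in which the residual form favors incremental updates.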

3.2. Training Strategy: Ensemble Distillation

3.3. Sector-Projected Metropolis-Corrected Sampling (IMH)

1. Base distribution. Sample .

2. Flow proposal (CNF). Integrate the learned vector field using an adaptive Runge–Kutta solver with tolerance to obtain . In parallel, we track an estimated log proposal density by integrating the instantaneous change-of-variables equation for the flow (divergence of ), using a Hutchinson trace estimator.

3. Toroidal wrapping. Map the angles to the principal branch.

4. Nearest-sector projection (global constraint). Compute the total wrapped flux and project by shifting only the global mean. This step preserves the nearest integer sector label Q and enforces that the total flux equals 2πQ exactly.

5. IMH acceptance. Accept with probability . The acceptance ratio is:
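For reference, in the standard independence Metropolis-Hastings form the proposal x′ is drawn independently of the current state x and accepted with probability min{1, p(x′) q(x) / [p(x) q(x′)]}, where p ∝ e^(−S) is the target and q the proposal density. The sketch below strings steps 3 to 5 together in NumPy. It is a sketch under stated assumptions: the Wilson plaquette action S = −β Σ_p cos θ_p as the target, the branch [−π, π), and all function names are illustrative, and a Gaussian stand-in replaces the CNF proposal.

```python
import numpy as np


def wrap_angles(theta):
    """Map angles to the principal branch [-pi, pi) (endpoint convention assumed)."""
    return np.mod(theta + np.pi, 2.0 * np.pi) - np.pi


def project_to_nearest_sector(theta_p):
    """Shift every plaquette angle by one common constant so that the total flux
    becomes exactly 2*pi*Q, with Q the integer nearest to (total flux) / (2*pi).
    The shift has magnitude at most pi / V for V plaquettes."""
    total = theta_p.sum()
    q = int(np.rint(total / (2.0 * np.pi)))
    shift = (total - 2.0 * np.pi * q) / theta_p.size
    return theta_p - shift, q


def log_target(theta_p, beta):
    """Unnormalized log density under an ASSUMED Wilson plaquette action."""
    return beta * np.cos(theta_p).sum()


def imh_accept(logp_new, logq_new, logp_old, logq_old, rng):
    """Independence MH: accept with probability min(1, p(x')q(x) / (p(x)q(x')))."""
    log_ratio = (logp_new - logq_new) - (logp_old - logq_old)
    return bool(rng.uniform() < np.exp(min(0.0, log_ratio)))


rng = np.random.default_rng(1)
beta = 3.0
raw = rng.normal(0.0, 1.5, size=(16, 16))  # stand-in for a CNF proposal on a 16x16 lattice
theta_p, q = project_to_nearest_sector(wrap_angles(raw))
print(q, np.isclose(theta_p.sum(), 2.0 * np.pi * q))  # sector label, True
```

Because the projection subtracts the same constant from every plaquette, it leaves all local angle differences untouched and moves each angle by at most π/V; working with log densities in the acceptance test avoids overflow for large lattices.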
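The log-density bookkeeping in step 2 can be made concrete with a small sketch. For a CNF dx/dt = v(x, t), the density obeys d(log q)/dt = −div v, and Hutchinson's estimator replaces the trace of the Jacobian by the average of εᵀ(∂v/∂x)ε over random ±1 probes ε. In practice the Jacobian-vector product would come from automatic differentiation (for example `jax.jvp` or `torch.func.jvp`); here a toy linear field supplies it exactly. Forward Euler replaces the adaptive Runge–Kutta solver purely to keep the example short, and all names are hypothetical.

```python
import numpy as np


def hutchinson_divergence(jvp, x, n_probes, rng):
    """Unbiased estimate of div v(x) = tr(dv/dx) from Rademacher probes eps:
    E[eps^T (dv/dx) eps] = tr(dv/dx). jvp(x, eps) returns (dv/dx) @ eps."""
    est = 0.0
    for _ in range(n_probes):
        eps = rng.choice([-1.0, 1.0], size=x.shape)
        est += np.vdot(eps, jvp(x, eps))
    return est / n_probes


def integrate_with_logdensity(v, jvp, x0, logq0, n_steps, n_probes, rng):
    """Forward-Euler integration of dx/dt = v(x, t) on t in [0, 1] together with the
    instantaneous change of variables d(log q)/dt = -div v(x, t)."""
    x, logq, dt = np.array(x0, dtype=float), float(logq0), 1.0 / n_steps
    for k in range(n_steps):
        t = k * dt
        div = hutchinson_divergence(lambda y, e: jvp(y, t, e), x, n_probes, rng)
        logq -= dt * div
        x = x + dt * v(x, t)
    return x, logq


rng = np.random.default_rng(0)
A = np.array([[0.3, 0.5, 0.0], [-0.5, 0.1, 0.2], [0.0, 0.0, -0.2]])  # toy field, tr(A) = 0.2
v = lambda x, t: A @ x
jvp = lambda x, t, e: A @ e  # exact Jacobian-vector product of a linear field
x1, logq1 = integrate_with_logdensity(v, jvp, np.ones(3), 0.0, n_steps=50, n_probes=4, rng=rng)
print(logq1)  # close to -tr(A) = -0.2
```

For a linear field the exact change in log density over unit time is −tr(A), which makes the estimator easy to check; for diagonal Jacobians the Rademacher estimate is exact, and off-diagonal entries are what contribute variance.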

4. Results: Empirical Validation

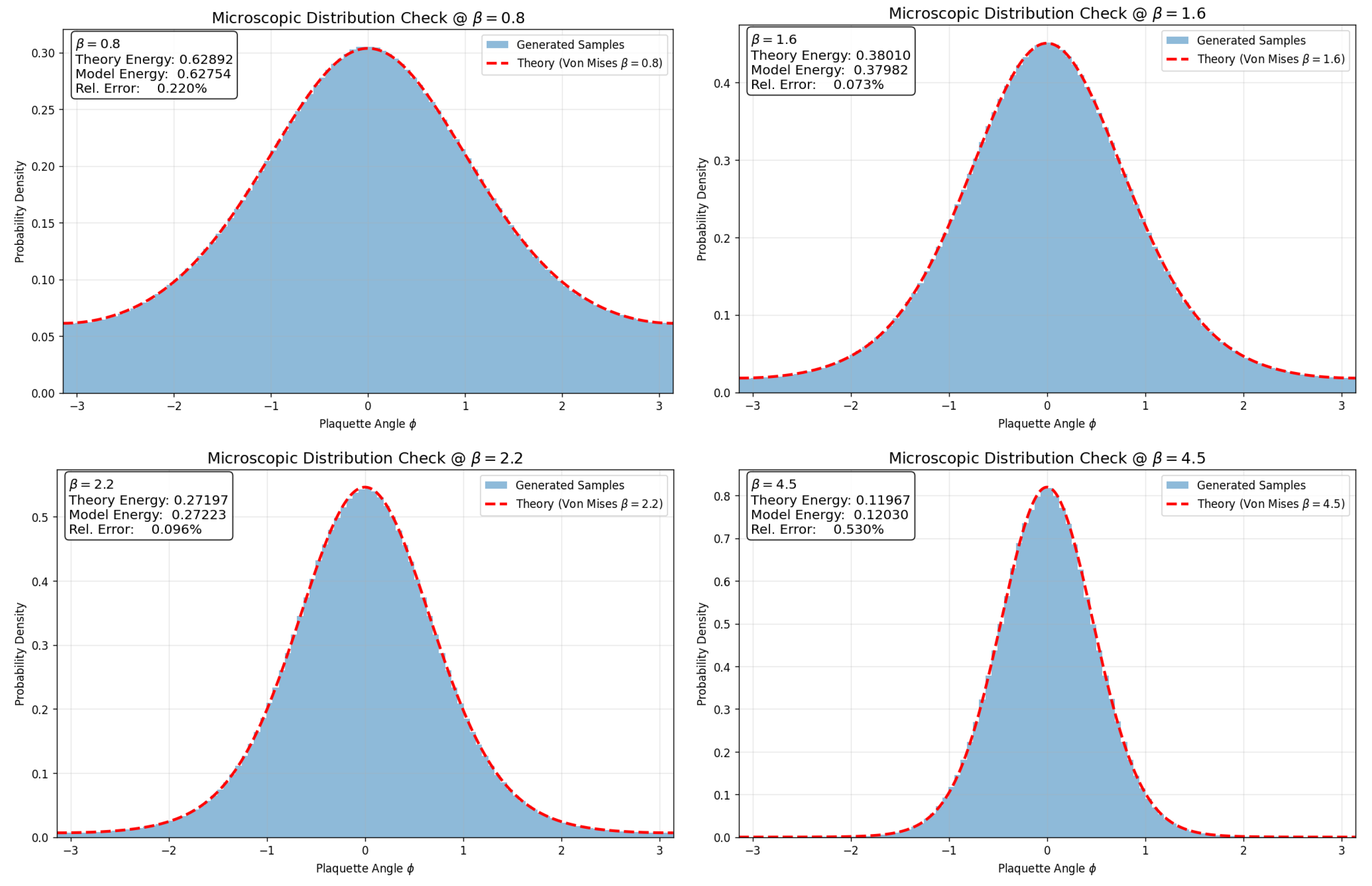

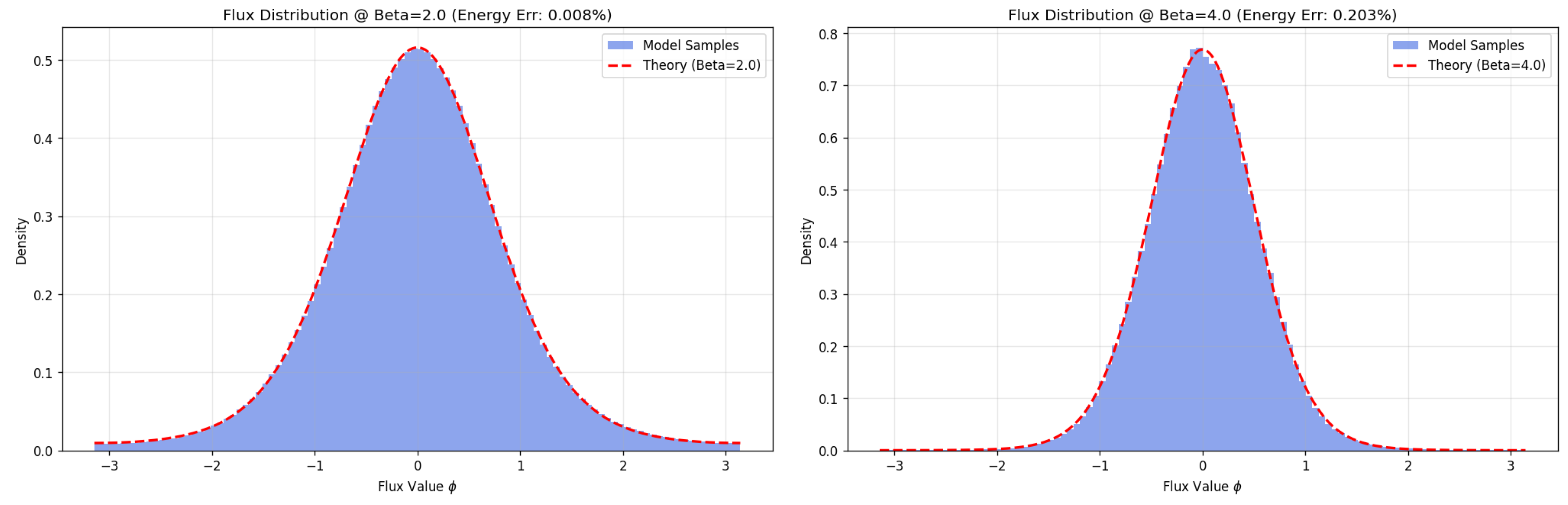

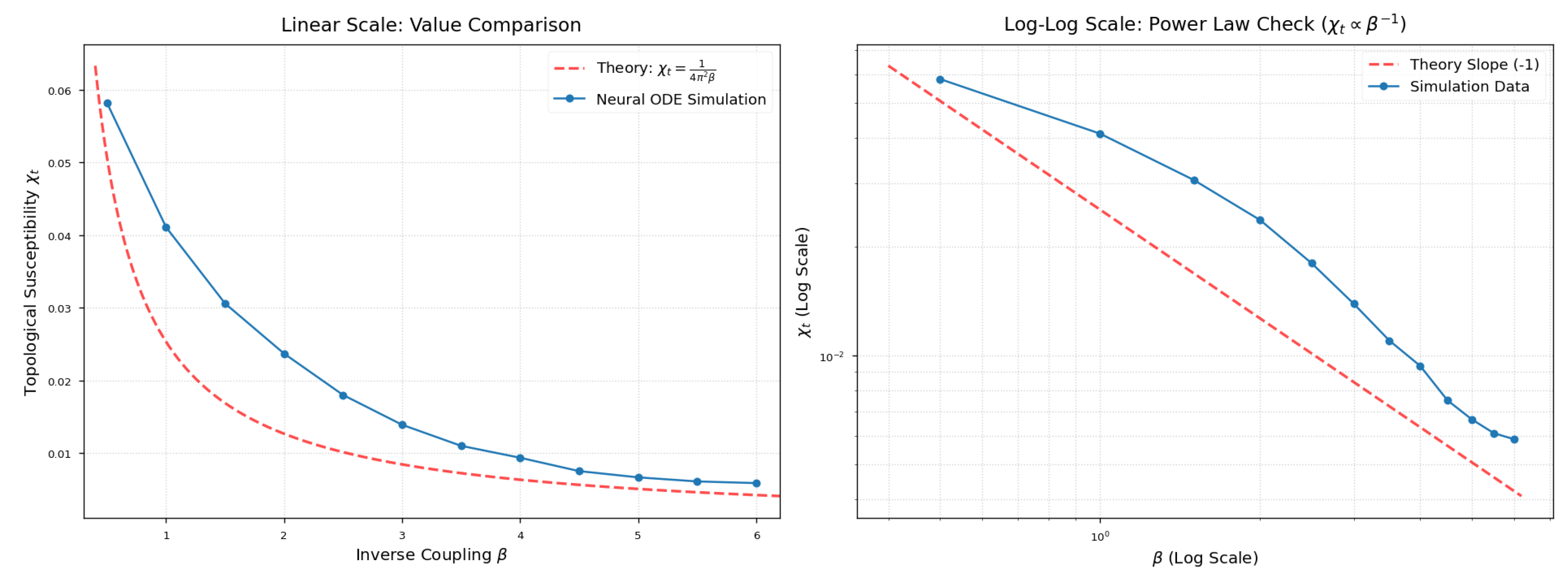

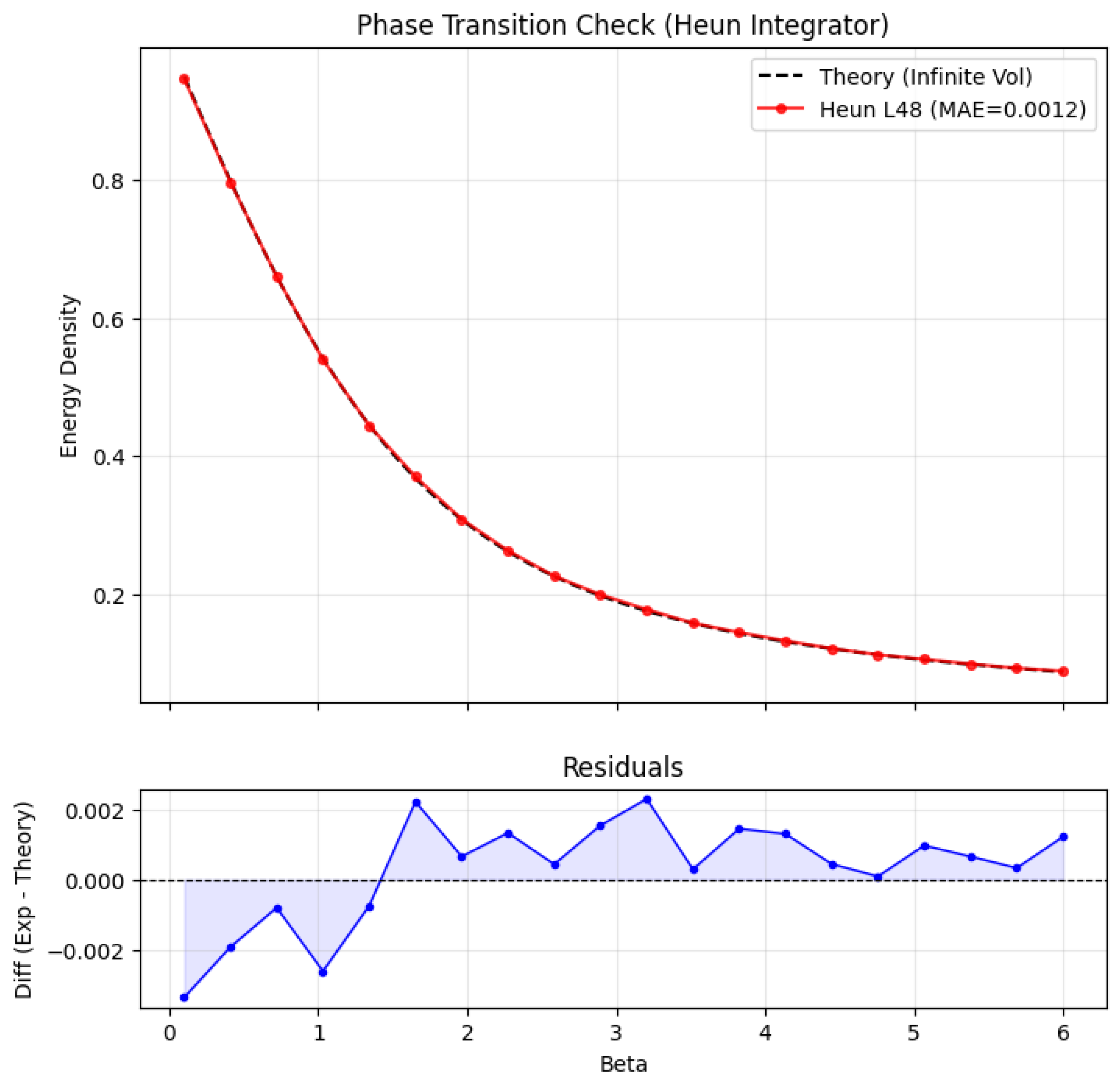

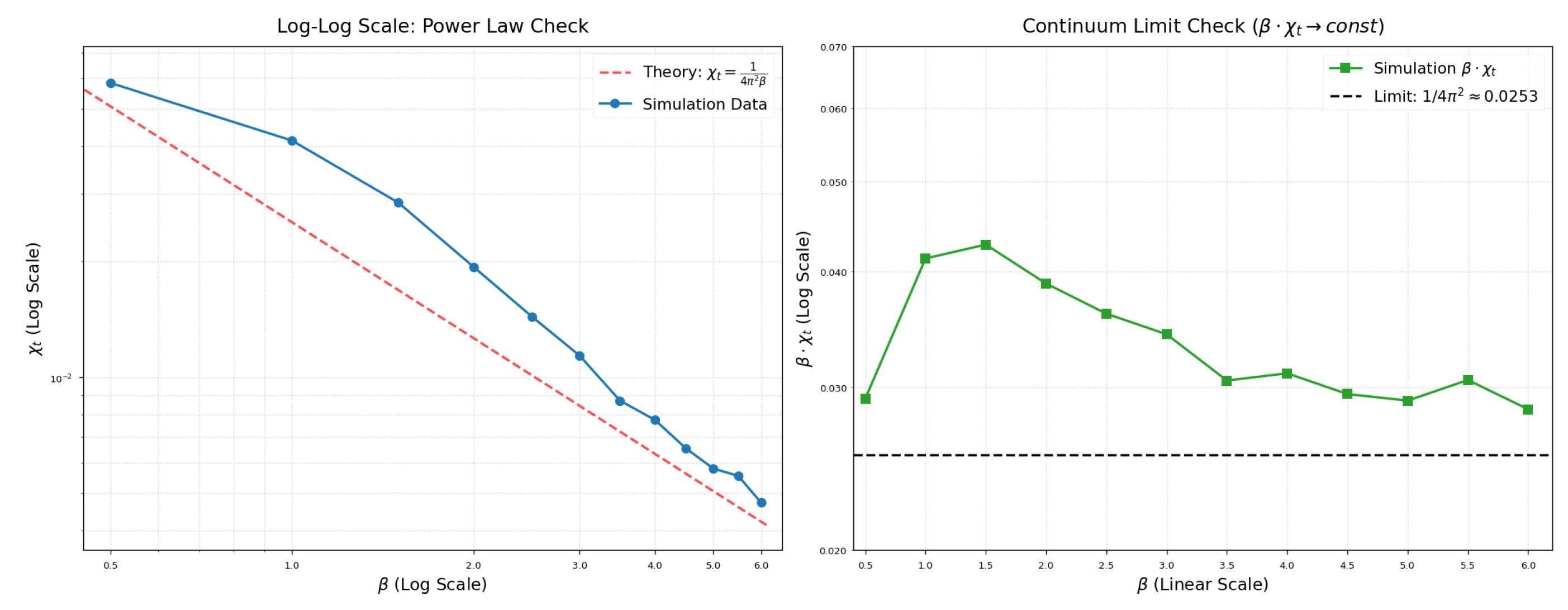

4.1. Thermodynamic Consistency Checks

4.2. Sampling Efficiency Benchmarks

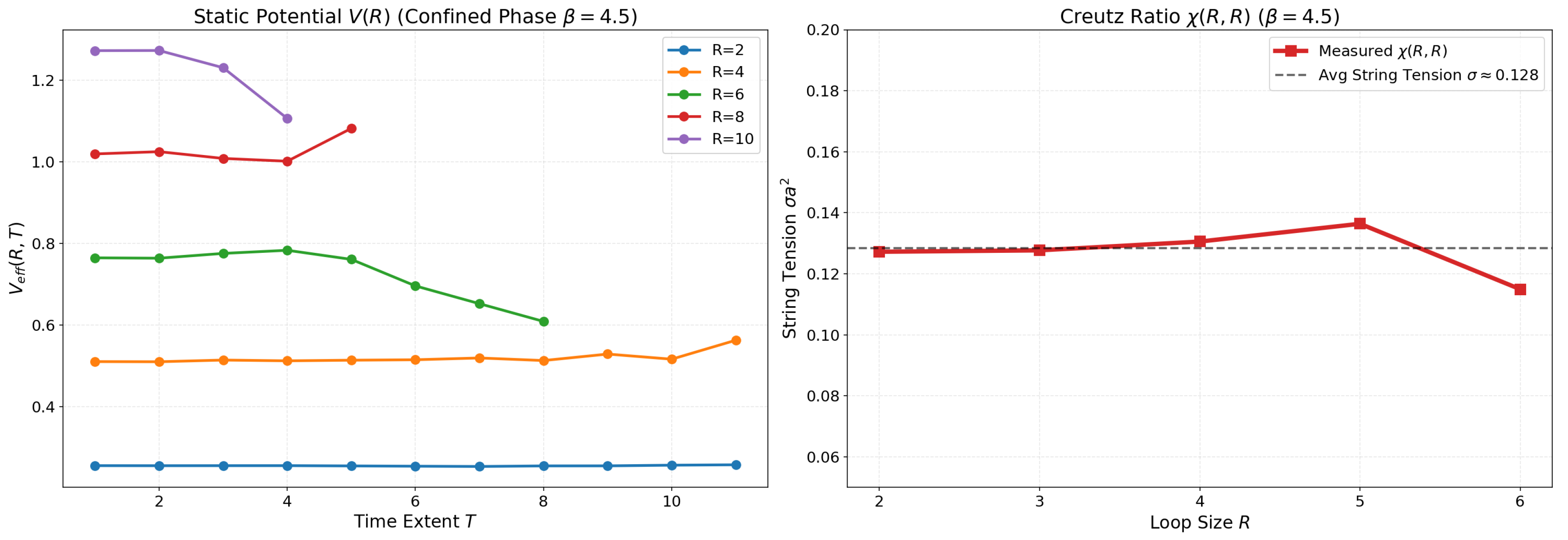

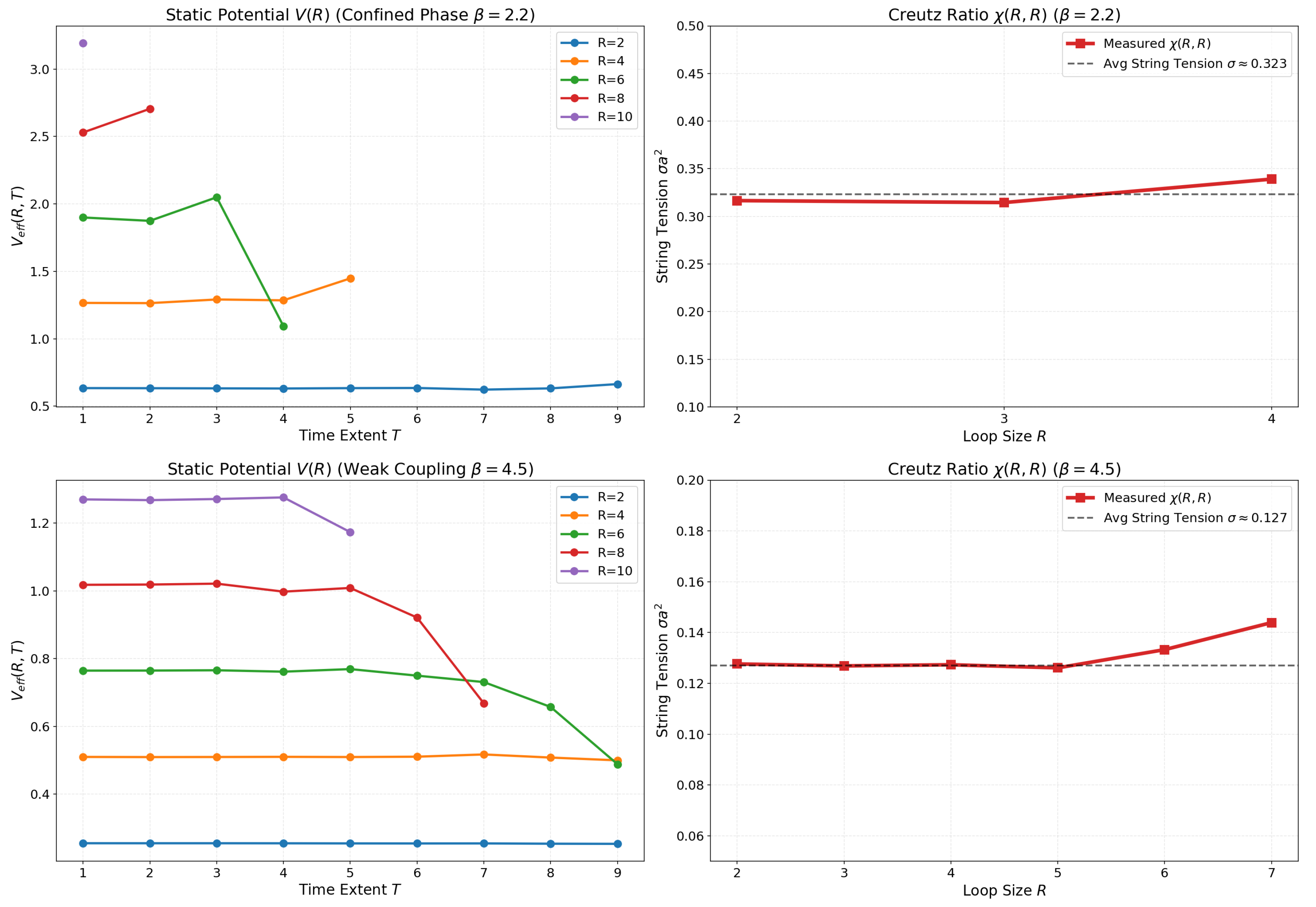

4.3. Confinement Diagnostics: V(R) and Creutz Ratios

- Strong Coupling (): The system exhibits strong confinement. The potential rises steeply (), and Wilson-loop expectation values decay rapidly with loop area, reflecting a large string tension.

- Scaling Regime (): As the system approaches the continuum limit, the string tension decreases substantially to . Importantly, the generated ensembles retain a linear confining potential even at this weak coupling, as expected for 2D compact U(1), which confines at all couplings.
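The two confinement diagnostics can be written down compactly. The Creutz ratio χ(R, T) = −ln[W(R,T) W(R−1,T−1) / (W(R,T−1) W(R−1,T))] cancels perimeter and constant terms, so it returns the string tension σ whenever Wilson loops follow an area law, and V(R) ≈ ln[W(R,T)/W(R,T+1)] estimates the static potential. As an analytic reference, assuming the standard Wilson plaquette action in infinite volume, 2D compact U(1) has ⟨W(R,T)⟩ = [I₁(β)/I₀(β)]^(RT), so χ = −ln[I₁(β)/I₀(β)] exactly. The sketch below checks both estimators on a synthetic area-plus-perimeter law; the numbers are illustrative, not measured data, and the function names are hypothetical.

```python
import numpy as np


def creutz_ratio(W, R, T):
    """chi(R, T) = -ln[W(R,T) W(R-1,T-1) / (W(R,T-1) W(R-1,T))].

    W[r, t] holds the Wilson-loop expectation for an r x t loop. Perimeter and
    constant terms cancel, so chi(R, T) -> sigma when W obeys an area law."""
    return -np.log(W[R, T] * W[R - 1, T - 1] / (W[R, T - 1] * W[R - 1, T]))


def static_potential(W, R, T):
    """V(R) estimated from the ratio of loops with temporal extents T and T + 1."""
    return np.log(W[R, T] / W[R, T + 1])


# Synthetic area-plus-perimeter law, purely for illustration (not measured data).
sigma, mu = 0.35, 0.05
r, t = np.meshgrid(np.arange(8), np.arange(8), indexing="ij")
W = np.exp(-sigma * r * t - 2.0 * mu * (r + t))
print(creutz_ratio(W, 3, 3))      # ~ sigma
print(static_potential(W, 4, 2))  # ~ 4 * sigma + 2 * mu, linear in R
```

In real data the large loops in the ratio are the noisiest, which is why Creutz ratios are usually restricted to small R and T.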

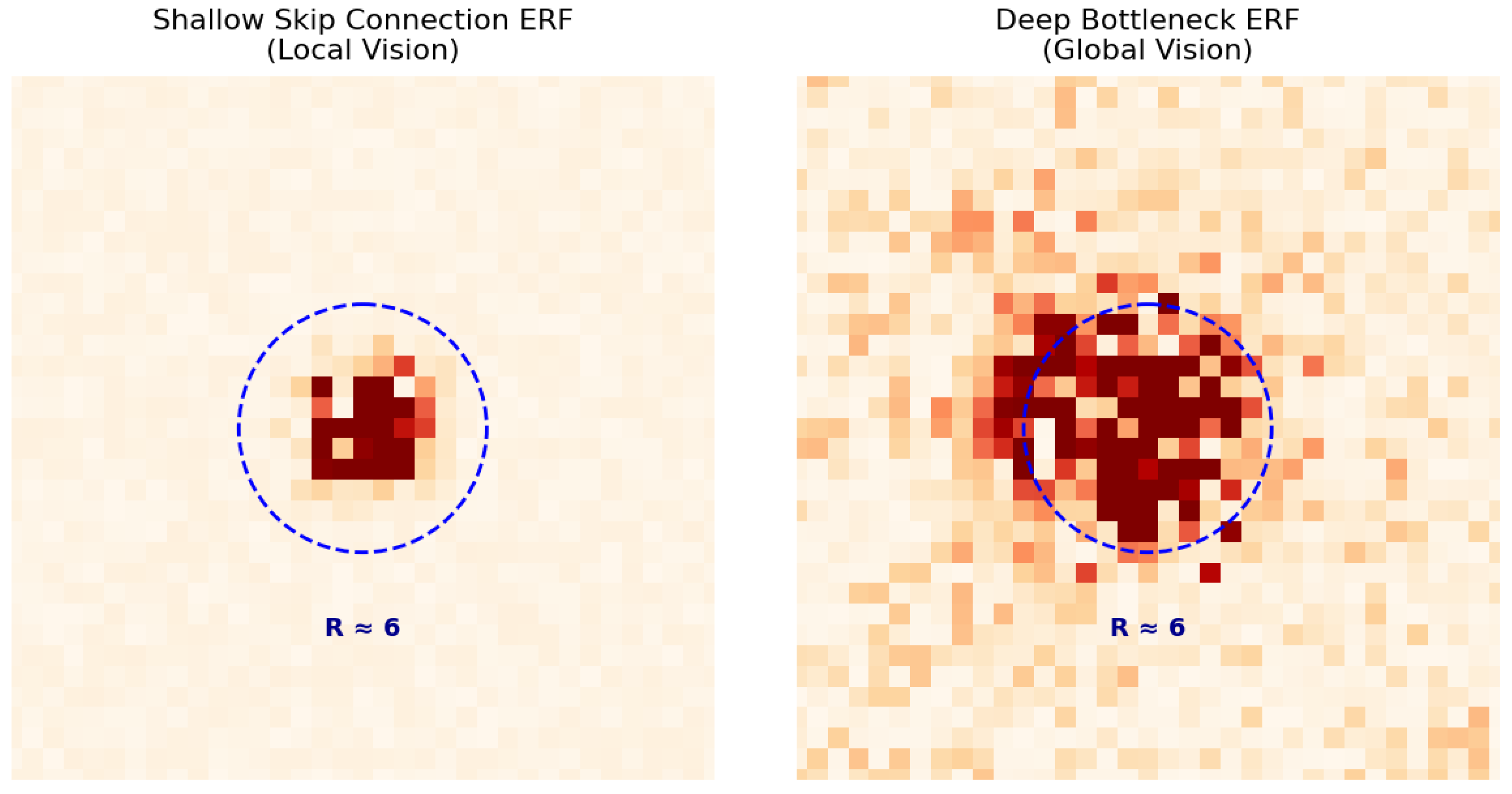

4.4. Multiscale Sensitivity (ERF Analysis)

- Local sensitivity (short-range features). In early and intermediate layers, the effective receptive field (ERF) is sharply localized: the response is concentrated in a compact neighborhood around the perturbed site. This indicates that these layers primarily encode short-range structure, such as local plaquette-angle fluctuations and short-wavelength textures. Such locality is consistent with many thermodynamic contributions being governed by local statistics, and it provides an inductive bias that avoids unnecessarily entangling distant regions when learning microscopic structure.

- Global sensitivity (long-range features). In the bottleneck layer, the ERF becomes broad and diffuse, indicating that deep features depend on information distributed across the lattice. This pattern is consistent with the network forming global summaries that cannot be inferred from a small patch alone. In particular, sector-dependent structure on a torus (e.g., correlations tied to the total-flux sector Q or other global modes) is inherently nonlocal; representing such effects requires access to long-range context. We emphasize that the ERF demonstrates a capacity for global dependence; it does not show that the network explicitly computes Q.
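A perturbation-based ERF of the kind described above can be sketched as follows: add a small bump ε at one site, record |f(u + ε δ_site) − f(u)|/ε over the lattice, and measure how far the non-negligible response extends. Gradient-based ERF maps, obtained by backpropagating from an output site, are a common alternative. In this hypothetical sketch a stack of periodic 3×3 averaging filters stands in for the network, so the ERF radius grows by exactly one site per layer; a U-Net with downsampling, as in FluxUNet, reaches global extent in far fewer layers.

```python
import numpy as np


def periodic_blur(x):
    """3x3 mean filter with periodic boundaries (toy stand-in for one conv layer)."""
    return sum(np.roll(x, (di, dj), axis=(0, 1))
               for di in (-1, 0, 1) for dj in (-1, 0, 1)) / 9.0


def perturbation_erf(f, u, site, eps=1e-3):
    """Sensitivity map |f(u + eps * delta_site) - f(u)| / eps for a field-to-field map f."""
    du = np.zeros_like(u)
    du[site] = eps
    return np.abs(f(u + du) - f(u)) / eps


def erf_radius(erf, site, rel_tol=1e-6):
    """Largest periodic Chebyshev distance from `site` with a non-negligible response."""
    L = erf.shape[0]
    ii, jj = np.nonzero(erf > rel_tol * erf.max())
    di = np.abs(ii - site[0])
    di = np.minimum(di, L - di)
    dj = np.abs(jj - site[1])
    dj = np.minimum(dj, L - dj)
    return int(max(di.max(), dj.max()))


def deep_net(n_layers):
    def f(x):
        for _ in range(n_layers):
            x = periodic_blur(x)
        return x
    return f


u = np.random.default_rng(0).standard_normal((32, 32))
for depth in (1, 3, 6):
    print(depth, erf_radius(perturbation_erf(deep_net(depth), u, (10, 20)), (10, 20)))
# each line prints the depth followed by an equal ERF radius
```

The relative threshold `rel_tol` is an arbitrary illustrative choice; for a trained nonlinear network the measured radius depends on it, so ERF maps are best compared visually or via cumulative-response curves rather than a single cutoff.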

4.5. Generalization to Spatial -Conditioning

5. Discussion

Dual Flux Transformation

Sampling Efficiency

Local Topological Control

Theoretical Boundaries: Dimensionality and the Bianchi Constraint

Scaling Behavior

6. Conclusion

Appendix A. Validity of Direct Plaquette Generation in D=2

Appendix A.1. Discrete Variables

Appendix A.2. Absence of Local Bianchi Constraints in D=2

Appendix A.3. Local Surjectivity (Constructive Validity on a Patch)

Appendix A.4. Global Topology on the Torus T²

Appendix A.5. Conclusion

Appendix B. Training Data Generation (Local Metropolis, L = 48)

Configuration and schedule.

Warmup, thinning, and storage.

| Parameter | Value |
|---|---|
| Lattice size | |
| Batch size (parallel chains) | |
| range (per batch) | (permuted linspace) |
| Proposal amplitude | |
| Warmup sweeps | |
| Sweeps between saves | |
| Number of saved chunks | |
| Output format | FP32: and |
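The data-generation procedure summarized in the table (local Metropolis sweeps, warmup, thinning, β drawn from a permuted linspace) can be sketched for 2D compact U(1). This sketch assumes the Wilson plaquette action S = −β Σ_p cos θ_p, runs one sequential chain per β rather than batched parallel chains, and uses placeholder values for the lattice size, proposal amplitude, sweep counts, and β range, since those entries are not given here; all names are hypothetical.

```python
import numpy as np


def wrap(theta):
    return np.mod(theta + np.pi, 2.0 * np.pi) - np.pi


def plaquettes(links):
    """theta_p(x) = A0(x) + A1(x + e0) - A0(x + e1) - A1(x) on a periodic L x L lattice.
    links[mu] holds the link angles in direction mu; np.roll(a, -1, axis=k)[x] = a[x + e_k]."""
    a0, a1 = links
    return a0 + np.roll(a1, -1, axis=0) - np.roll(a0, -1, axis=1) - a1


def plaq_at(links, i, j):
    L = links.shape[1]
    return (links[0, i, j] + links[1, (i + 1) % L, j]
            - links[0, i, (j + 1) % L] - links[1, i, j])


def metropolis_sweep(links, beta, delta, rng):
    """One sweep of local Metropolis updates for the ASSUMED action S = -beta * sum_p cos(theta_p).
    Returns the acceptance rate."""
    L = links.shape[1]
    accepted = 0
    for mu in range(2):
        for i in range(L):
            for j in range(L):
                # origins of the two plaquettes that contain link (mu, i, j)
                sites = [(i, j), (i, (j - 1) % L)] if mu == 0 else [(i, j), ((i - 1) % L, j)]
                s_old = -beta * sum(np.cos(plaq_at(links, a, b)) for a, b in sites)
                old = links[mu, i, j]
                links[mu, i, j] = old + rng.uniform(-delta, delta)
                s_new = -beta * sum(np.cos(plaq_at(links, a, b)) for a, b in sites)
                if rng.uniform() < np.exp(min(0.0, s_old - s_new)):
                    accepted += 1
                else:
                    links[mu, i, j] = old  # reject: restore the previous angle
    return accepted / links.size


def generate(beta, L=8, delta=1.0, warmup=100, n_save=20, thin=2, seed=0):
    """Cold start, warm up, then save wrapped plaquette configurations every `thin` sweeps."""
    rng = np.random.default_rng(seed)
    links = np.zeros((2, L, L))
    for _ in range(warmup):
        metropolis_sweep(links, beta, delta, rng)
    saved, acc = [], []
    for _ in range(n_save):
        for _ in range(thin):
            acc.append(metropolis_sweep(links, beta, delta, rng))
        saved.append(wrap(plaquettes(links)))  # cast to np.float32 for FP32 storage if desired
    return np.stack(saved), float(np.mean(acc))


betas = np.random.default_rng(42).permutation(np.linspace(1.0, 6.0, 4))  # hypothetical range
for beta in betas:
    cfgs, acc_rate = generate(beta, L=6, warmup=50)
    print(f"beta={beta:.2f}  <cos theta_p>={np.cos(cfgs).mean():.3f}  acc={acc_rate:.2f}")
```

Each link enters exactly two plaquettes in 2D, so the action change of a local update needs only those two terms. On the torus every link also appears once with each orientation, so the wrapped plaquettes of any saved configuration sum to 2π times an integer, the same sector structure that the nearest-sector projection enforces on generated samples.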

Appendix C. Supplementary Fidelity Checks

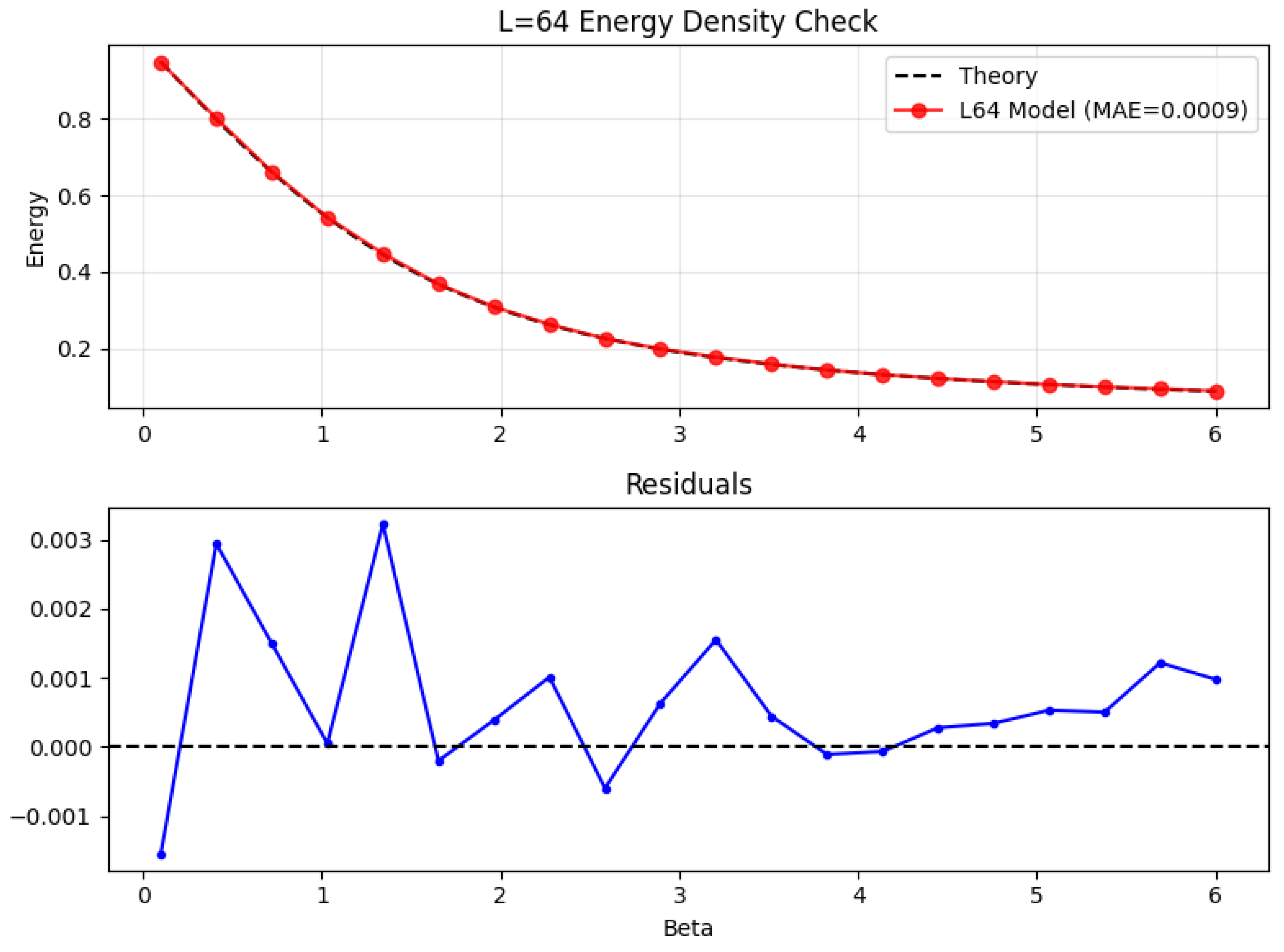

Appendix D. Scaling Validation: L = 64 Lattices

Appendix D.1. Thermodynamic Fidelity

Appendix D.2. Topological Susceptibility

Appendix D.3. Confinement Diagnostics

Appendix D.4. Sampling Efficiency

|  | Acc. | ESS/s |  |
|---|---|---|---|
| 2.0 |  |  |  |
| 4.0 |  |  |  |
| 5.0 |  |  |  |
| 6.0 |  |  |  |
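The ESS/s column divides an effective sample size by wall-clock time. One common way to obtain the ESS, sketched here as an assumption since the estimator is not specified in this section, is N/τ_int with the integrated autocorrelation time τ_int = 1 + 2 Σ_t ρ(t) truncated by Sokal's self-consistent window. For an independence Metropolis-Hastings chain, rejected proposals repeat the previous state, and that correlation is exactly what τ_int captures. The AR(1) test chain has the known value τ_int = (1 + φ)/(1 − φ); function names are hypothetical.

```python
import numpy as np


def integrated_autocorr_time(x, c=5.0):
    """tau_int = 1 + 2 * sum_{t=1}^{W} rho(t), with Sokal's self-consistent window:
    W is the first lag satisfying W >= c * tau_int(W)."""
    x = np.asarray(x, dtype=float)
    n = x.size
    xc = x - x.mean()
    f = np.fft.rfft(xc, n=2 * n)                 # zero-padding avoids circular wrap-around
    acov = np.fft.irfft(f * np.conj(f))[:n] / n  # acov[t] = sum_s xc[s] xc[s+t] / n
    rho = acov / acov[0]
    tau = 1.0
    for w in range(1, n):
        tau += 2.0 * rho[w]
        if w >= c * tau:
            break
    return tau


def effective_sample_size(x):
    x = np.asarray(x)
    return x.size / integrated_autocorr_time(x)  # ESS/s = ESS / wall-clock seconds


rng = np.random.default_rng(0)
phi, n = 0.8, 200_000
chain = np.empty(n)
chain[0] = rng.standard_normal()
noise = rng.standard_normal(n)
for k in range(1, n):
    chain[k] = phi * chain[k - 1] + noise[k]  # AR(1): exact tau_int = (1 + phi) / (1 - phi) = 9
print(integrated_autocorr_time(chain), effective_sample_size(chain))  # tau near 9
```

The window constant c = 5 is a conventional default; larger values reduce truncation bias at the cost of more statistical noise in τ_int.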

Appendix D.5. Conclusion

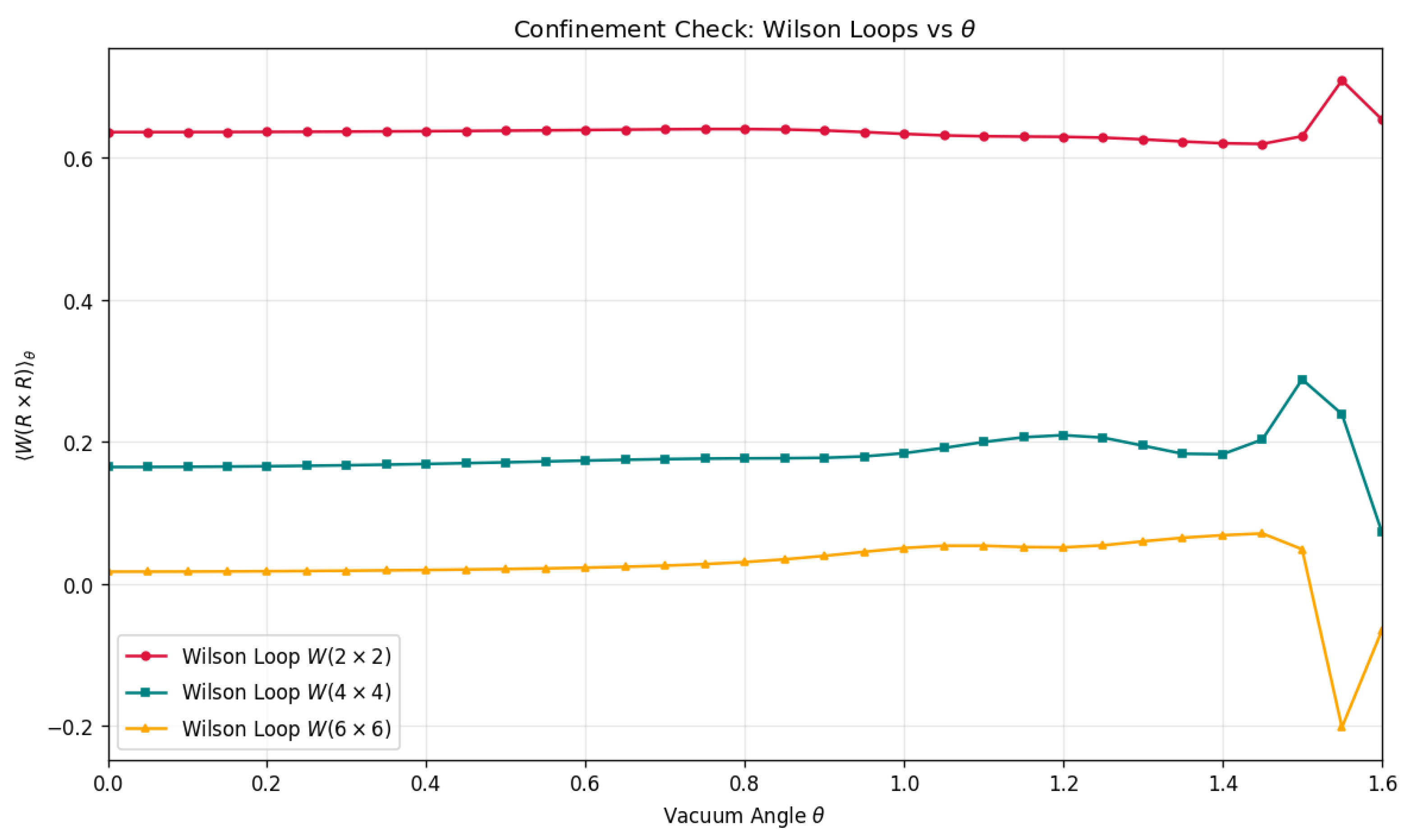

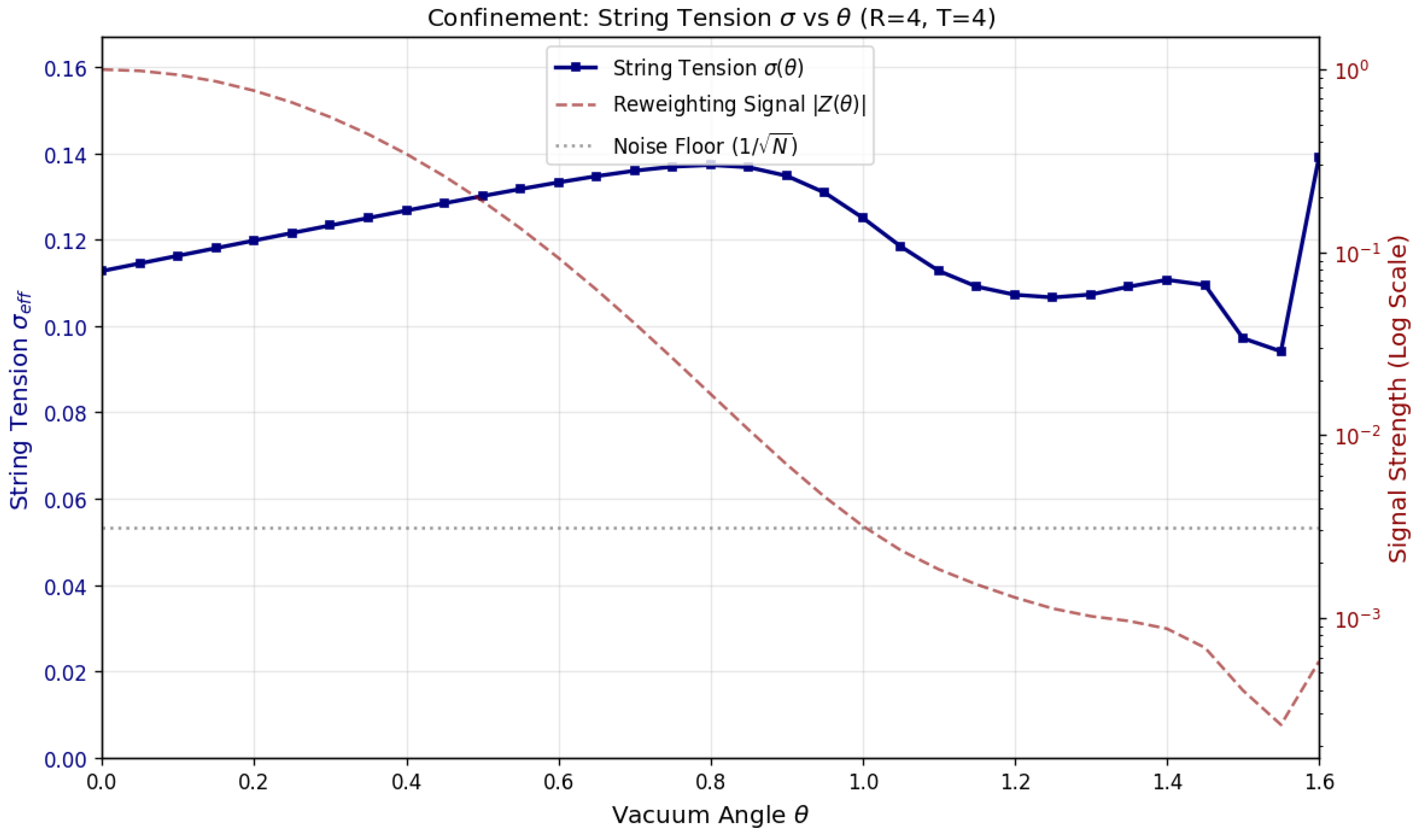

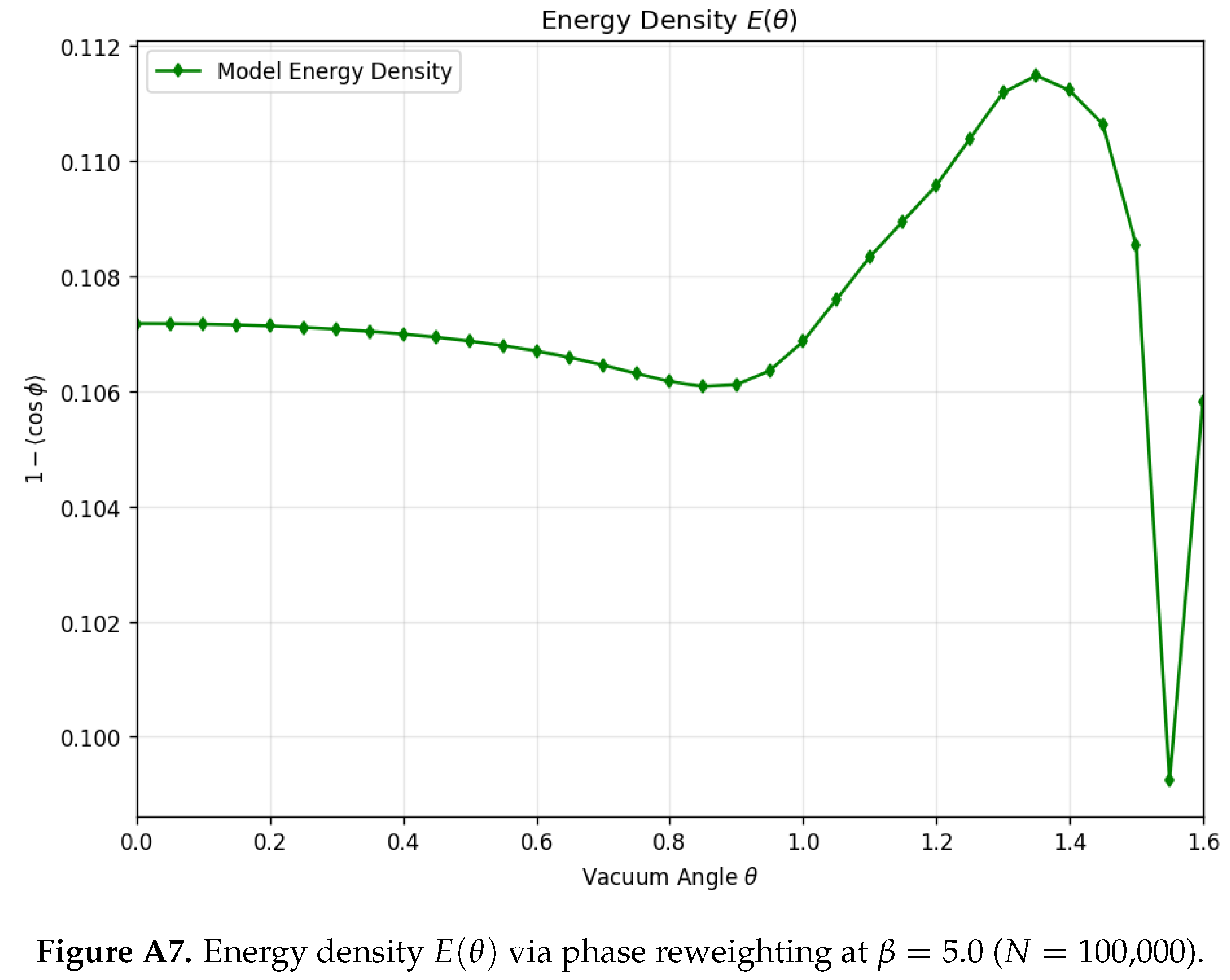

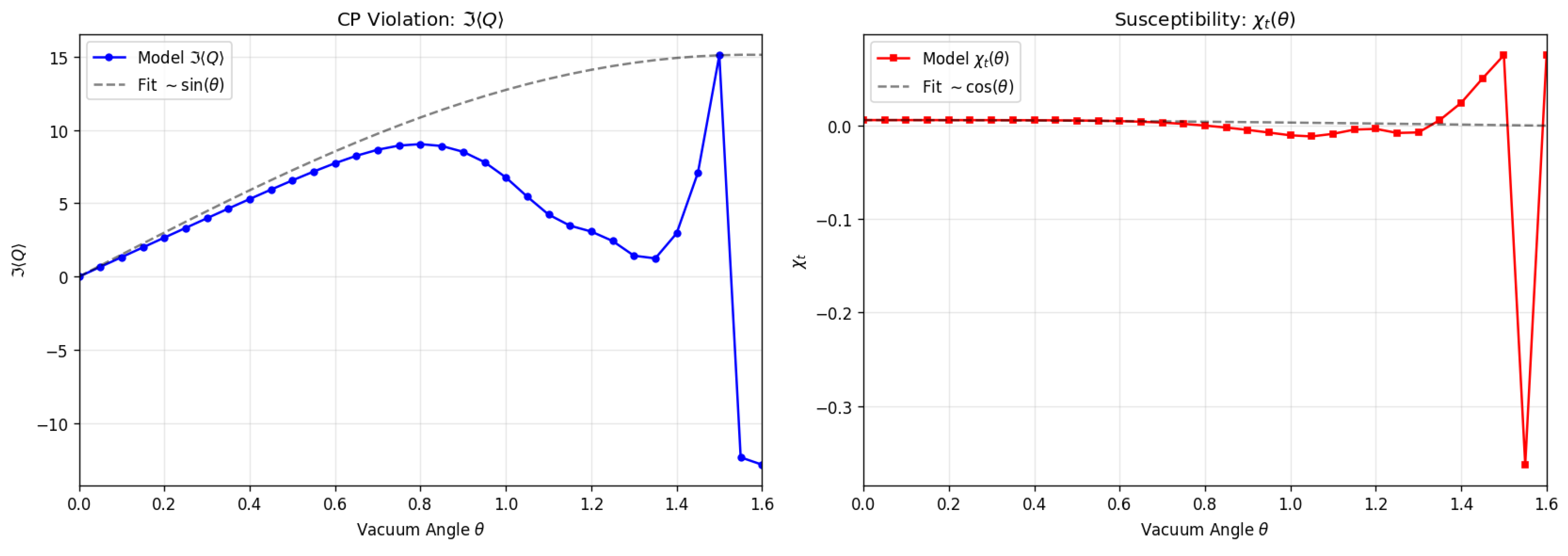

Appendix E. Finite-θ Vacuum Checks via Reweighting

Appendix E.1. Reweighting Protocol and CP-Even/CP-Odd Extraction

Appendix E.2. Analysis Window, Noise Floor, and Interpretation Scope

Appendix E.3. Observables Within the Analysis Window

1. Energy density : The action density, serving as a bulk thermodynamic check. The reconstructed values remain smooth and vary modestly over (Figure A7).

2. Wilson loops for : Probes of confinement at multiple length scales. Small and intermediate loops are broadly stable; larger loops exhibit increased sensitivity near (Figure A8).

3. Effective confinement proxy : Extracted from a fixed loop (), providing a finite-volume proxy for the string tension. Over most of the analysis window, evolves smoothly (Figure A6).

4. CP-odd / topological response: including and the susceptibility-like proxy . These quantities are particularly sensitive to phase cancellations; the visible breakdown near is consistent with the analysis-window boundary (Figure A9).
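The reweighting step behind these checks can be sketched in a few lines: expectation values at finite θ follow from θ = 0 samples as ⟨O⟩_θ = ⟨O e^(iθQ)⟩₀ / ⟨e^(iθQ)⟩₀. The sign convention Z(θ) = Σ e^(−S + iθQ), reading CP-even observables from the real part and CP-odd ones from the imaginary part, and the trust criterion that the mean phase exceed k/√N are assumptions of this sketch, as are the function names. Because ⟨e^(iθQ)⟩₀ = Z(θ)/Z(0) decays exponentially with volume, the usable θ range shrinks on larger lattices. The four-sample ensemble is a toy with closed-form answers, not simulation data.

```python
import numpy as np


def reweight(obs, q, theta):
    """<O>_theta = <O exp(i theta Q)>_0 / <exp(i theta Q)>_0 from theta = 0 samples.
    The sign convention Z(theta) = sum exp(-S + i theta Q) is an assumption."""
    phase = np.exp(1j * theta * np.asarray(q, dtype=float))
    denom = phase.mean()
    return np.mean(np.asarray(obs, dtype=float) * phase) / denom, abs(denom)


def in_analysis_window(denom_abs, n_samples, k=3.0):
    """Hypothetical trust criterion: the mean phase must exceed k times its ~1/sqrt(N) noise floor."""
    return denom_abs > k / np.sqrt(n_samples)


# Toy theta = 0 ensemble with Q in {-1, 0, 0, 1} (illustrative, not simulation data).
Q = np.array([-1, 0, 0, 1])
theta = 1.0
even, d = reweight(Q**2, Q, theta)  # CP-even: read off the real part
odd, _ = reweight(Q, Q, theta)      # CP-odd: read off the imaginary part
print(even.real, np.cos(theta) / (1 + np.cos(theta)))  # equal pair
print(odd.imag, np.tan(theta / 2))                     # equal pair
print(in_analysis_window(d, Q.size))  # False: with N = 4 the floor 3/sqrt(4) = 1.5 exceeds any |phase| <= 1
```

For this symmetric toy ensemble ⟨Q²⟩_θ = cos θ/(1 + cos θ) and ⟨Q⟩_θ = i tan(θ/2), so the CP-odd response is purely imaginary in Euclidean signature, consistent with reading it from the imaginary part.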

Appendix E.4. Takeaway


| HMC (baseline) | FFM (our model) | | | | | |
|---|---|---|---|---|---|---|
| 2.0 | | | | | | |
| 4.0 | | | | | | |
| 5.0 | | | | | | |
| 6.0 | | | | | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).