1. Introduction

Although it is one of the ten leading causes of cancer death [1] and the seventh most prevalent neoplasm in the developed world [2], kidney cancer has long received little attention [1]. More than 90% of kidney cancer cases are renal cell carcinoma (RCC) [3], a cancer that begins in the renal epithelium [4]. RCC is a cunning neoplasm that accounts for about 2% of cancer diagnoses and deaths worldwide [3]. Despite all the improvements in management, it remains the most lethal renal malignancy [5]. Most RCCs develop in the renal cortex, which is made up of the glomerulus, tubular apparatus and collecting duct [6]. In the fight against this aggressive malignancy, modifiable risk factors are top candidates for prevention efforts [11]. These include smoking [7], obesity [7], uncontrolled hypertension [8], poor diet [9], alcohol consumption and occupational exposure [10].

There are at least ten molecular and histological subtypes of RCC. Of these, clear cell RCC (ccRCC) has been shown to be the most common, and it is responsible for the majority of cancer-related deaths [4]. Clear cell RCC, also known as conventional RCC [12], is a renal stem cell tumour typically found in the proximal nephron and tubular epithelium [4]. It makes up to 80% of RCC diagnoses [13] and is more likely to spread haematogenously to the lungs, liver and bones [14]. The primary driver shared by most ccRCC tumours is loss of function of the Von Hippel-Lindau (VHL) tumour suppressor gene, an alteration that is usually present throughout the tumour [15]. Clear cell RCC can be hereditary (4%) or sporadic (>96%) [16], and most familial ccRCCs are caused by a hereditary VHL mutation [11]. Macroscopically, the malignant cells contain abundant cytoplasmic lipids, which give ccRCC its typical appearance of a well-circumscribed golden-yellow mass [17]. Microscopically, the tumour exhibits a complex vascular network [18], with tiny sinusoid-like capillaries separating nests of malignant cells. This is accompanied by the typical clear cell morphology produced by the accumulation of cytoplasmic lipid and glycogen [19].

For approximately a century, the grade of RCC has been acknowledged as a prognostic marker [20]. The tumour grade describes how regular or aberrant the cancer cells appear under a microscope. The more aberrant the cells, the higher the grade, and the faster the tumour is likely to grow and spread. Many grading schemes have been proposed, initially based on a collection of cytological characteristics and more recently on nuclear morphology.

The Fuhrman grading of renal cell carcinomas focuses mainly on three characteristics:

- nuclear size (area, major axis, perimeter);
- nuclear shape (shape factor, nuclear compactness);
- nucleolar prominence.

Although Fuhrman grading is reportedly widely used in clinical investigations, its predictive value and reliability are debated [21]. Fuhrman et al. [22] showed in 1982 that grade 1, 2, 3 and 4 tumours had considerably different metastatic rates. They also demonstrated a strong correlation between tumour grade and survival when grade 2 and 3 tumours were pooled into a single cohort [22].

The International Society of Urological Pathology (ISUP) proposed a revised grading system for RCC in 2012 to address the shortcomings of the Fuhrman grading scheme [23]. This system is based primarily on the assessment of nucleoli [24]:

- grade 1 tumours have inconspicuous, basophilic nucleoli at 400 times magnification;
- grade 2 tumours have eosinophilic nucleoli at 400 times magnification;
- grade 3 tumours have nucleoli visible at 100 times magnification;
- grade 4 tumours show extreme pleomorphism or rhabdoid and/or sarcomatoid morphology.

The system has been validated for clear cell and papillary RCC [23]. The World Health Organization (WHO) endorsed the ISUP grading system at a consensus conference in Zurich. As a result, the WHO/ISUP grading system is now applied internationally [24].

The tumour grade describes how abnormal the tumour cells and tissue appear under a microscope relative to normal cells. It therefore gives some insight into how the cancer may behave. A low-grade tumour is more likely to grow slowly and spread less often than a high-grade tumour. Accordingly, grades 1 and 2 are regarded as low-grade ccRCC and grades 3 and 4 as high-grade ccRCC.

Grading ultimately supports optimal management and treatment, because tumours of different grades have different prognostic behaviour. For instance, elderly or very sick patients may have small renal tumours (<4 cm) and a high risk of mortality. For them, active surveillance, cryoablation or radiofrequency ablation may be considered [25]. A confident radiological diagnosis of low-grade tumours in patients under active surveillance can significantly influence clinical decisions and reduce the risk of overtreatment [26]. ccRCC is the most prevalent subtype (8 in 10 RCCs) and has the highest potential for metastasis, so it requires careful characterisation [27]. High-grade cancers are more aggressive and have a poorer prognosis. They also carry a higher risk of post-operative recurrence and may metastasise [26]. It is therefore very important to distinguish between the grades of ccRCC, because high-grade ccRCC requires prompt and precise management. Cutting-edge technology has advanced precision medicine and personalised treatment. Clinicians are therefore interested in determining the grade of ccRCC before surgery or other treatment. This would allow them to advise on therapy and, after surgery, even to predict cancer-free survival.

The grade of ccRCC is commonly determined using pre-operative or post-operative methods. One such pre-operative method is biopsy. However, several factors can influence the accuracy of biopsy, including the size and location of the tumour, the experience of the pathologist performing the biopsy and the quality of the sample [28]. Because of sampling error, a biopsy may not always represent the overall tumour grade accurately [29]. Inter-observer variability can also lead to inconsistent grading, which is especially problematic for tumours on the borderline between two grades [30]. In some cases, a biopsy may not provide a definitive diagnosis. It samples only a small cross-section of the tumour and is therefore not representative of the whole lesion [31]. Moreover, ccRCC has high spatial and temporal heterogeneity, so a biopsy cannot represent the entire tumour [32]. Biopsy also carries a small risk of haemorrhage (3.5%) and a rare risk of tract seeding (1 in 10,000) [33, 34]. Because of these limitations [35], specimens from radical or partial nephrectomy are usually used for the definitive post-operative diagnosis of tumour grade.

Partial or radical nephrectomy is the definitive therapeutic approach. As a result, a small but significant number of patients undergo surgery that their management may not have required. Nephrectomy also increases the risk of chronic kidney disease, which may in turn lead to cardiovascular disease [36]. An accurate, non-invasive method of grading ccRCC is therefore essential for more effective and targeted tumour management.

Most research and clinical practice assumes that solid renal masses are homogeneous. Where they are heterogeneous, the heterogeneity is assumed to be uniformly distributed throughout the tumour volume [37]. More recent studies [38] have shown that, in some histopathological classifications, different tumour subregions may differ in aggressiveness. Heterogeneity therefore plays a significant role in tumour progression. Ignoring such intra-tumoural differences may lead to inaccurate diagnosis, treatment and prognosis [39].

The biological makeup of tumours is complex and shows spatial differences within their structure. These variations may affect gene expression or microscopic structure [40]. Several factors can cause them, including hypoxia (oxygen deprivation of the cells) and necrosis (cell death), which are mostly associated with the tumour core. Likewise, high cell proliferation and tumour-infiltrating cells are associated with the periphery [41].

Medical image analysis has been shown to detect and quantify tumour heterogeneity [42, 43, 44]. This allows tumours to be divided into subregions according to their level of heterogeneity. For tumour grading, intra-tumoural heterogeneity may help identify the subregion with the most informative features for grading the tumour.

Radiomics is the high-throughput extraction of features from medical images. It is a modern technique used in medicine to capture features that would not otherwise be visible to the naked eye [45]. Lambin et al. [46] first proposed it in 2012 to extract features that capture the differences within solid masses. Radiomics reduces subjectivity in the extraction of tumour features from medical images, acting as an objective virtual biopsy [47]. Several studies have applied radiomics to the classification of tumour subtypes, tumour grading and even tumour staging [48, 49].

Over the years, the advent of artificial intelligence (AI) has brought tremendous progress in medical imaging. AI has enabled the analysis of tumour subregions in a variety of clinical tasks and with several imaging modalities, such as CT and MRI [50]. However, these analyses have been limited to a few tumour types, particularly brain tumours [51], head and neck tumours [52] and breast cancers [53]. To date, no study has analysed the effect of intra-tumoural heterogeneity on the diagnosis, treatment and prognosis of renal masses, and of ccRCC specifically. This gap formed the rationale for the present study of the effect of intra-tumoural heterogeneity on the grading of ccRCC. To our knowledge, this is the first paper to focus comprehensively on tumour subregions in renal tumours for the prediction of tumour grade.

This research tested the hypothesis that radiomics combined with machine learning (ML) can significantly differentiate between high-grade and low-grade ccRCC in individual patients. The study had two major aims that previous research has not addressed:

Characterise the role of intra-tumoural heterogeneity in grading ccRCC.

Compare the diagnostic accuracy of radiomics and ML with that of image-guided biopsy in determining the grade of renal masses, using resection (partial or radical) histopathology as the reference standard.

2. Materials and Methods

2.1. Ethical Approval

This study was approved by the institutional board. Access to patients’ data was granted under Caldicott Approval Number IGTCAL11334, dated 21 October 2022. Informed consent was not required because CT image acquisition is a routine examination for patients suspected of having ccRCC.

2.2. Study Cohorts

This retrospective multicentre study used data from three centres in a well-defined geographical area of Scotland, United Kingdom. Each centre is either a partner or a satellite hospital of the National Health Service (NHS): Ninewells Hospital, Dundee; Stracathro General Hospital; and Perth Royal Infirmary.

Data from the University of Minnesota Hospital and Clinic (UMHC) were also used [54, 55]. All scan data were anonymised.

We searched the Tayside Urological Cancers (TUCAN) database [56] for pathologically confirmed cases of ccRCC between January 2004 and December 2022. CT images of 396 patients were retrieved in DICOM format from the Picture Archiving and Communication System (PACS). These data formed our first cohort (cohort 1).

Cohort 2 consisted of image data of pathologically confirmed ccRCC following partial or radical nephrectomy at UMHC. These data were stored in a public database [57] (accessed on 21 May 2022) and analysed retrospectively. The database was queried for data from 2010 to 2018, and CT images of 204 patients with ccRCC were collected.

The inclusion criteria for the study were as follows:

Availability of a protocol-based pre-operative contrast-enhanced CT scan in the arterial phase.

Confirmed histopathology from partial or radical nephrectomy, with grades reported by a uropathologist according to the WHO/ISUP grading system.

The exclusion criteria were:

Patients with only biopsy histopathology.

Metastatic ccRCC.

CT scans with insufficient data to achieve a working acquisition for 3D image reconstruction.

Patients with bilateral tumours, or with multiple (two or more) ipsilateral tumours in the same kidney.

For more information on the UMHC dataset, refer to [54, 55].

2.3. CT Acquisition Technique

The patients in cohort 1 were examined on helical/spiral CT scanners with 512-row detectors from up to five manufacturers: GE Medical Systems, Philips, Siemens, Canon Medical Systems and Toshiba. The scanner models also varied: Aquilion, Biograph128, Aquilion Lightning, Revolution EVO, Discovery MI, Ingenuity CT, LightSpeed VCT, Brilliance 64, Aquilion PRIME, Aquilion Prime SP and Brilliance 16P. Slice thicknesses were 0.63, 1.00, 1.25, 1.50 and 2.00 mm. The arterial phase was acquired 20-45 seconds after contrast injection with the following protocol:

- intravenous Omnipaque 300 contrast agent (80-100 ml), injected at 3 ml/s for the renal scan;
- tube voltage of 100-120 kVp;
- X-ray tube current of 100-560 mA, depending on patient size.

For the UMHC dataset, refer to [54, 55].

2.4. Data Curation

Data collection for each patient comprised the following stages:

- accessing the Tayside Urological Cancers database;
- identifying patients by a unique identifier (Community Health Index, or CHI, number);
- reviewing the medical records of the cohort;
- acquiring CT data;
- annotating the data;
- quality assurance.

Anonymised data for cohort 1 were in DICOM format. Duplicated DICOM slices were removed for each patient, because inconsistent slices affect how an image can be processed.
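The duplicate-slice removal could be implemented as sketched below. The paper does not say how duplicates were detected. This sketch therefore assumes, hypothetically, that two slices are duplicates when they sit at the same position along the scanner z-axis (in real DICOM headers, the third value of ImagePositionPatient). Slices are plain (identifier, z-position) tuples so that the sketch runs without a DICOM reader. `remove_duplicate_slices` is an illustrative name, not a library function.

```python
from typing import List, Tuple

# Hypothetical slice record: (instance_id, z_position_mm)
Slice = Tuple[str, float]


def remove_duplicate_slices(slices: List[Slice], decimals: int = 3) -> List[Slice]:
    """Keep the first slice seen at each z-position, then sort by z.

    Positions are rounded to `decimals` places so that floating-point
    noise in the header does not hide a duplicate.
    """
    seen = set()
    unique = []
    for instance_id, z in slices:
        key = round(z, decimals)
        if key in seen:
            continue  # position already present: treat this slice as a duplicate
        seen.add(key)
        unique.append((instance_id, z))
    # Slices must be ordered along z before they are stacked into a 3D volume
    return sorted(unique, key=lambda s: s[1])


series = [("a", 2.5), ("b", 0.0), ("c", 2.5), ("d", 1.25)]
cleaned = remove_duplicate_slices(series)  # [("b", 0.0), ("d", 1.25), ("a", 2.5)]
```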

Image quality is an important factor in ML modelling [58]. We reviewed the images and removed those with low quality, poor resolution, low contrast or significant Poisson noise. These problems can result from low-dose scanning, patient motion, technical issues, and ring and metal artefacts. Figure 1 is a flowchart showing the inclusion and exclusion criteria for patients and their categorisation.

Tumour grades 1 and 2 were labelled as low grade, whereas grades 3 and 4 were labelled as high grade. The reason is that the clinical management of grades 1 and 2 is broadly similar, as is that of grades 3 and 4.
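The binary labelling rule can be written as a small helper, using the encoding given in Section 2.7 (1 for high grade, 0 for low grade). `grade_to_label` is a hypothetical name used only for illustration.

```python
def grade_to_label(who_isup_grade: int) -> int:
    """Map a WHO/ISUP grade (1-4) to the binary target: 0 = low grade, 1 = high grade."""
    if who_isup_grade not in (1, 2, 3, 4):
        raise ValueError(f"WHO/ISUP grade must be 1-4, got {who_isup_grade}")
    return 1 if who_isup_grade >= 3 else 0


grades = [1, 2, 3, 4, 2]
labels = [grade_to_label(g) for g in grades]  # [0, 0, 1, 1, 0]
```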

Figure 1.

The diagrammatic representation of the exclusion and inclusion criteria for cohort 1 dataset.


2.5. Tumour Sub-volume Segmentation Technique

In cohort 1, the CT image slices of each patient were converted to 3D NIfTI (Neuroimaging Informatics Technology Initiative) format using Python version 3.9 [59]. They were then loaded into 3D Slicer 4.11.20210226 [60, 61] for segmentation. Manual segmentation was performed on the 3D image by delineating the tumour edges slice by slice to obtain the volume of interest (VOI). The boundaries of the delineated slices were then smoothed with the median smoothing method [62], which removes small details while keeping smooth contours unchanged. The smoothing kernel size was 3 mm, i.e., 3 × 3 pixels.
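For a binary mask, a median filter reduces to a majority vote over the kernel window. With a 3 × 3 kernel, a pixel stays in the mask only if at least 5 of the 9 pixels in its neighbourhood are tumour. The sketch below shows this on a single 2D slice and treats pixels outside the image as background. That boundary handling is an assumption, and 3D Slicer's own implementation may differ.

```python
from typing import List

Mask = List[List[int]]


def median_smooth(mask: Mask, size: int = 3) -> Mask:
    """Median-filter a binary 2D mask with a size x size kernel.

    Pixels outside the image are treated as background (0).
    """
    if size % 2 == 0:
        raise ValueError("kernel size must be odd")
    half = size // 2
    rows, cols = len(mask), len(mask[0])
    majority = (size * size) // 2 + 1  # median of 0/1 values is 1 iff at least this many ones
    out = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            ones = 0
            for dr in range(-half, half + 1):
                for dc in range(-half, half + 1):
                    rr, cc = r + dr, c + dc
                    if 0 <= rr < rows and 0 <= cc < cols:
                        ones += mask[rr][cc]
            out[r][c] = 1 if ones >= majority else 0
    return out
```

Applied to a square mask with an isolated stray pixel, the filter deletes the stray pixel and rounds off the square's corners, while the interior and straight edges survive. This is the "removes small details, keeps smooth contours" behaviour described above.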

A blinded investigator (A.J.A.) with 14 years of experience in interpreting medical images performed this procedure without knowledge of the final pathological grade of the tumour. A second blinded investigator (A.J.), with 12 years of experience in medical imaging technology, independently segmented 20% of the samples to verify the accuracy of the first segmentation. An independent, experienced urological surgical oncologist (G.N.) then reviewed and confirmed the segmentations, taking the radiology and histology reports into account. Partial or radical nephrectomy histopathology was taken as the gold-standard pathological diagnosis.

For cohort 2, Heller et al. [54] performed the segmentation following a set of instructions:

- ensure that each patient's images contain the entire kidney;
- draw a contour that includes the entire tumour capsule and any tumour or cyst, but excludes all tissue other than renal parenchyma;
- draw a contour that includes the tumour components but excludes all kidney tissue.

The present study used only the tumour delineations produced by Heller et al. [54].

For the delineation, a web-based interface was built on the HTML5 Canvas that allowed contours to be drawn freehand on the images. The image series were regularly subsampled in the longitudinal direction so that about 50% of the original slices depicting a kidney were annotated. Interpolation was also performed. More information on the segmentation of the cohort 2 dataset can be found in [54].

Segmentation of both cohorts produced a binary mask of the tumour. In the present study, the tumour was divided into subregions based on its geometry, i.e., the periphery and the core. The periphery refers to regions towards the edges of the tumour, whereas the core represents regions close to its centre. The core was obtained by extracting 25%, 50% and 75% of the binary mask from the centre of the tumour, as shown in Figures 2, 3 and 4. The periphery was generated by extracting 25%, 50% and 75% of the binary mask starting from the edges of the tumour, forming a rim shaped like a hollow sphere, as shown in Figures 2, 3 and 4. A Python script generated the subregion masks automatically using image subtraction.
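The subregion masks can be sketched as follows, under stated assumptions. The text does not define how "25% of the binary mask from the centre" was measured. This sketch assumes it means a fraction of the tumour's voxel count. It ranks voxels by their city-block depth from the tumour surface (computed by breadth-first search) and takes the deepest voxels as the core. The periphery is then the full mask minus an inner core, which mirrors the image-subtraction step described above. Masks are sets of (z, y, x) voxel indices, and all function names are hypothetical.

```python
from collections import deque
from typing import Dict, Set, Tuple

Voxel = Tuple[int, int, int]
# 6-connected neighbourhood in (z, y, x)
NEIGHBOURS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def depth_from_surface(mask: Set[Voxel]) -> Dict[Voxel, int]:
    """City-block depth of each tumour voxel: 1 on the surface, increasing inwards."""
    depth: Dict[Voxel, int] = {}
    queue = deque()
    for v in mask:
        z, y, x = v
        if any((z + dz, y + dy, x + dx) not in mask for dz, dy, dx in NEIGHBOURS):
            depth[v] = 1  # touches background, so it lies on the tumour surface
            queue.append(v)
    while queue:  # multi-source breadth-first search from the surface inwards
        v = queue.popleft()
        z, y, x = v
        for dz, dy, dx in NEIGHBOURS:
            n = (z + dz, y + dy, x + dx)
            if n in mask and n not in depth:
                depth[n] = depth[v] + 1
                queue.append(n)
    return depth


def core(mask: Set[Voxel], fraction: float) -> Set[Voxel]:
    """Innermost `fraction` of the tumour volume (deepest voxels first)."""
    depth = depth_from_surface(mask)
    ordered = sorted(mask, key=lambda v: (-depth[v], v))  # ties broken by coordinate
    return set(ordered[: round(fraction * len(mask))])


def periphery(mask: Set[Voxel], fraction: float) -> Set[Voxel]:
    """Outer rim holding `fraction` of the volume: the tumour minus its inner core."""
    return mask - core(mask, 1.0 - fraction)
```

Voxels at equal depth are ordered by coordinate, which is an arbitrary tie-break. On real masks, a Euclidean distance map such as `scipy.ndimage.distance_transform_edt` would produce fewer ties.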

Figure 2.

Manual segmentation of the 3D image slices using 3D Slicer software. (a) Axial plane of the tumour. (b) Coronal plane of the tumour. (c) Sagittal plane of the tumour. (d) 3D VOI generated from the delineated 2D slices.


Figure 3.

Manual segmentation of the 3D image slices using 3D Slicer software. (a) 75% periphery and core of the tumour. (b) 50% periphery and core of the tumour. (c) 25% periphery and core of the tumour. (d) Overlap of periphery and core subregions.


Figure 4.

Representation of the 3D segmented regions. (a) 75% periphery and core of the tumour. (b) 50% periphery and core of the tumour. (c) 25% periphery and core of the tumour. (d) Overlap of periphery and core subregions.


2.6. Radiomics Feature Computation

As in our previous research [48], texture descriptors were computed using the PyRadiomics module in Python 3.6.1 [63]. PyRadiomics implements a standardised way of extracting radiomic features from medical images, avoiding inter-observer variability [64].

The PyRadiomics parameters were:

- minimum region of interest (ROI) dimension: 2;
- pad distance: 5;
- normalisation: False;
- normaliser scale: 1;
- outlier removal, pixel-spacing resampling and pre-cropping of the image: none;
- interpolator: sitkBSpline;
- bin width: 20.
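The settings above map onto PyRadiomics parameter names as shown below. This is a sketch of an equivalent configuration, not the authors' script. Building the extractor requires PyRadiomics to be installed, so the import is kept inside `build_extractor`, a hypothetical helper.

```python
# Settings described in the text, keyed by PyRadiomics parameter names.
SETTINGS = {
    "minimumROIDimensions": 2,
    "padDistance": 5,
    "normalize": False,
    "normalizeScale": 1,
    "removeOutliers": None,         # no outlier removal
    "resampledPixelSpacing": None,  # no resampling
    "preCrop": False,
    "interpolator": "sitkBSpline",
    "binWidth": 20,
}


def build_extractor(settings=SETTINGS):
    """Create a PyRadiomics feature extractor from the settings above.

    Requires `pip install pyradiomics`; the import is deferred so that
    SETTINGS can be inspected without PyRadiomics installed.
    """
    from radiomics import featureextractor
    return featureextractor.RadiomicsFeatureExtractor(**settings)
```

A call such as `build_extractor().execute(image_path, mask_path)` would then return a dictionary of features for one NIfTI image and its binary mask.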

On average, PyRadiomics generates approximately 1500 features per image. It extracts 7 feature classes per 3D image, using the 3D image in NIfTI format and the binary mask image. The feature classes extracted were:

- First-order (19 features);
- Gray-Level Co-occurrence Matrix (GLCM) (24 features);
- Gray-Level Run-Length Matrix (GLRLM) (16 features);
- Gray-Level Size-Zone Matrix (GLSZM) (16 features);
- Gray-Level Dependence Matrix (GLDM) (14 features);
- Neighbouring Gray-Tone Difference Matrix (NGTDM) (5 features);
- 3D Shape (16 features).

These features quantify the grey-level intensities of the image and how they are distributed [64].
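As a rough check on these numbers, the seven classes give 19 + 24 + 16 + 16 + 14 + 5 + 16 = 110 features for the original image. The sketch below shows how the total grows with the number of filtered (derived) images. It assumes, as PyRadiomics does by default, that shape features are computed on the original image only, because they do not depend on grey levels. The number of derived images depends on the filter settings and is left as a parameter; for example, a 3D wavelet decomposition alone produces eight images.

```python
# Feature counts per class on the original image, as listed in the text
ORIGINAL_COUNTS = {
    "firstorder": 19, "glcm": 24, "glrlm": 16, "glszm": 16,
    "gldm": 14, "ngtdm": 5, "shape": 16,
}


def count_features(n_derived_images: int, shape_on_derived: bool = False) -> int:
    """Total features for the original image plus `n_derived_images` filtered images."""
    original = sum(ORIGINAL_COUNTS.values())  # 110
    # Shape depends only on the mask, so by default it is not repeated per filtered image
    per_derived = original if shape_on_derived else original - ORIGINAL_COUNTS["shape"]
    return original + n_derived_images * per_derived
```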

A previous study [48] showed that combining the original feature classes with filter features improved model performance significantly. We therefore extracted filter-class features in addition to the original features. The filter classes were Local Binary Pattern (LBP-3D), Gradient, Exponential, Logarithm, Square Root, Square, Laplacian of Gaussian (LoG) and Wavelet. Each filter is applied to the image, and the features of the original feature classes are then computed on the filtered image. For instance, the first-order statistics class has 19 features, so it yields 19 LBP filter features. Each filter-class feature is named after the filter class and the original feature [64].
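In PyRadiomics output, each feature key combines the image type (original or filter), the feature class and the feature name, separated by underscores (for example `wavelet-LLH_glcm_Contrast`). The sketch below lists the PyRadiomics image-type names for the filters above and groups extracted features by filter family. The LoG filter also needs sigma values, which the text does not report. The helper names are hypothetical.

```python
from collections import Counter
from typing import Dict, Tuple

# PyRadiomics image-type names for the filters listed in the text.
# LoG additionally needs a "sigma" custom argument; its values are not reported.
IMAGE_TYPES: Dict[str, dict] = {
    "Original": {}, "LBP3D": {}, "Gradient": {}, "Exponential": {},
    "Logarithm": {}, "SquareRoot": {}, "Square": {}, "LoG": {}, "Wavelet": {},
}


def parse_feature_name(key: str) -> Tuple[str, str, str]:
    """Split '<imageType>_<featureClass>_<featureName>' into its three parts."""
    image_type, feature_class, feature_name = key.split("_", 2)
    return image_type, feature_class, feature_name


def count_by_filter(result: Dict[str, object]) -> Counter:
    """Count extracted features per filter family, skipping diagnostic entries."""
    counts: Counter = Counter()
    for key in result:
        if key.startswith("diagnostics_"):
            continue
        image_type, _, _ = parse_feature_name(key)
        counts[image_type.split("-")[0]] += 1  # e.g. 'wavelet-LLH' -> 'wavelet'
    return counts
```

With an extractor built as in PyRadiomics, `extractor.enableImageTypes(**IMAGE_TYPES)` would switch these filters on before `execute` is called.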

2.7. Feature Processing and Feature Selection

The features extracted using PyRadiomics were standardised using the z-score, Equation (1). The training and testing sets were scaled separately, but both used the mean and standard deviation computed from the training set. This avoided information leakage from the test set and the bias it would introduce into the model.

All features were thereby transformed to have the properties of a standard normal distribution on the training set, with mean (μ) = 0 and standard deviation (σ) = 1:

$$Z = \frac{x - \mu}{\sigma} \qquad (1)$$

where:

Z: value of the feature after scaling.

x: original value of the feature.

μ: mean of the feature in the training set.

σ: standard deviation of the feature in the training set.
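Fitting the scaler on the training split and reusing its parameters on the test split can be sketched in plain Python. The sketch uses the population standard deviation (`statistics.pstdev`), as scikit-learn's `StandardScaler` does. The text does not state whether the population or sample form was used, so treat that choice as an assumption.

```python
from statistics import mean, pstdev
from typing import List, Tuple


def fit_zscore(train: List[float]) -> Tuple[float, float]:
    """Return (mu, sigma) estimated on the training values of one feature."""
    mu = mean(train)
    sigma = pstdev(train)  # population SD (ddof=0), as in scikit-learn
    if sigma == 0:
        raise ValueError("constant feature: standard deviation is zero")
    return mu, sigma


def apply_zscore(values: List[float], mu: float, sigma: float) -> List[float]:
    """Equation (1): Z = (x - mu) / sigma, using training-set mu and sigma."""
    return [(x - mu) / sigma for x in values]


train, test = [1.0, 2.0, 3.0], [2.0, 5.0]
mu, sigma = fit_zscore(train)            # statistics come from the training set only
train_z = apply_zscore(train, mu, sigma)
test_z = apply_zscore(test, mu, sigma)   # the test set reuses the training statistics
```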

Normalisation reduces the effect of using different scanners. It also reduces any influence that intra-scanner differences may have on textural features, thereby improving their correlation with histopathological grade [65]. For the ML models, the ground-truth labels were encoded as 1 for high grade and 0 for low grade.

Machine learning models often encounter the "curse of dimensionality" [66] when the training dataset contains more features than samples. We therefore applied two feature selection techniques to reduce the number of features and retain only those most important for predicting tumour grade. First, the inter-feature correlation coefficients were computed. Whenever two features had a correlation coefficient greater than 0.8, one of them was dropped. The XGBoost algorithm was then used to select the features with the highest importance for model development.
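The first (correlation) stage of feature selection can be sketched as a greedy filter. Features are visited in order, and a feature is kept only if its absolute Pearson correlation with every feature already kept is at most 0.8. The use of the absolute value and the visiting order are both assumptions, since the text does not specify which feature of a correlated pair was dropped. The XGBoost importance stage is not reproduced here, and the helper names are hypothetical.

```python
import math
from typing import Dict, List, Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant feature has no defined correlation; treat as uncorrelated
    return sxy / math.sqrt(sxx * syy)


def drop_correlated(features: Dict[str, List[float]], threshold: float = 0.8) -> List[str]:
    """Greedily keep features whose |r| with every kept feature is <= threshold."""
    kept: List[str] = []
    for name, values in features.items():
        if all(abs(pearson(values, features[k])) <= threshold for k in kept):
            kept.append(name)
    return kept
```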

2.8. Subsampling

In ML, the distribution of data among classes is an important consideration before a model is developed. Class imbalance may bias the model towards the majority class. Instead of learning the features of the data, the model then effectively memorises the majority class ("cramming"), which makes it inapplicable in real-life scenarios. The data in this research were imbalanced, so we applied the synthetic minority oversampling technique (SMOTE) to balance them.

SMOTE must be applied only to the training set, not to the validation or testing sets. Otherwise, data leakage may artificially inflate the model's apparent performance [67, 68].
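A minimal SMOTE sketch makes the mechanism concrete. Each synthetic sample lies on the line segment between a minority-class sample and one of its k nearest minority-class neighbours. The sketch is written in plain Python for illustration. In practice, a library implementation such as `imblearn.over_sampling.SMOTE` would normally be used. The helper names and the binary-label assumption are hypothetical. As cautioned above, `balance_training_set` is meant to be called on the training split only.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def smote(minority: Sequence[Point], n_synthetic: int, k: int = 5, seed: int = 0) -> List[Point]:
    """Create n_synthetic points by interpolating between minority neighbours."""
    if len(minority) < 2:
        raise ValueError("SMOTE needs at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic: List[Point] = []
    for _ in range(n_synthetic):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: math.dist(base, p))[:k]
        nb = rng.choice(neighbours)
        gap = rng.random()  # position along the segment base -> nb, in [0, 1)
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, nb)))
    return synthetic


def balance_training_set(X_train, y_train, k: int = 5, seed: int = 0):
    """Oversample the minority class of a binary TRAINING split until classes are equal."""
    counts = {label: list(y_train).count(label) for label in set(y_train)}
    minority_label = min(counts, key=counts.get)
    minority = [x for x, y in zip(X_train, y_train) if y == minority_label]
    n_new = max(counts.values()) - counts[minority_label]
    new_points = smote(minority, n_new, k, seed)
    return list(X_train) + new_points, list(y_train) + [minority_label] * n_new
```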

2.9. Statistical Analysis

Common clinical features were analysed using the SciPy package. Age, gender, tumour size and tumour volume were compared against pathological grade.

The chi-square test (χ²) was used to compare associations between groups. The chi-square test is a non-parametric test used when the data do not satisfy the assumptions of parametric tests, such as normality of the data distribution. In this study, it was used to assess differences between categorical groups. Student's t-test is a widely used test of the difference between two population means for continuous data. It assumes normally distributed populations and is used when the population variance is unknown.
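For a 2 × 2 contingency table (for example, a binary clinical variable against low vs high grade), the chi-square statistic and its p-value can be computed directly. With one degree of freedom, P(χ² > x) = erfc(√(x/2)). The sketch below omits Yates' continuity correction. SciPy's `scipy.stats.chi2_contingency` applies that correction to 2 × 2 tables by default, so pass `correction=False` to match. The table values in the usage line are illustrative, not study data.

```python
import math
from typing import List, Tuple


def chi_square_2x2(table: List[List[float]]) -> Tuple[float, float]:
    """Pearson chi-square test of independence for a 2x2 table (no Yates correction)."""
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # One degree of freedom: the chi-square survival function equals erfc(sqrt(chi2 / 2))
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


chi2, p = chi_square_2x2([[10, 20], [20, 10]])  # illustrative counts only
```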

Where a statistically significant difference was found in the clinical data, the point-biserial correlation coefficient (r_pb) was used to further confirm the significance. The Pearson correlation coefficient was used to measure linear correlation between radiomic features. Its value ranges from -1 to 1, where 1 indicates a perfect positive correlation, -1 a perfect negative correlation and 0 no linear correlation. Whenever a pair of features had a correlation coefficient of 0.8 or above, one of them was eliminated because both conveyed the same information.
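The point-biserial coefficient is the Pearson correlation between a binary variable (here, low vs high grade) and a continuous one. It can be computed directly as r_pb = (M1 - M0) / s_n × √(pq), where:

- M1 and M0 are the group means;
- s_n is the population standard deviation of the continuous variable;
- p and q are the group proportions.

The sketch below implements that formula. `scipy.stats.pointbiserialr` returns the same coefficient together with a p-value.

```python
import math
from typing import Sequence


def point_biserial(binary: Sequence[int], values: Sequence[float]) -> float:
    """r_pb = (M1 - M0) / s_n * sqrt(p * q), with s_n the population SD of `values`."""
    if len(binary) != len(values):
        raise ValueError("inputs must have the same length")
    n = len(values)
    group1 = [v for b, v in zip(binary, values) if b == 1]
    group0 = [v for b, v in zip(binary, values) if b == 0]
    if not group1 or not group0:
        raise ValueError("both groups must be non-empty")
    m1 = sum(group1) / len(group1)
    m0 = sum(group0) / len(group0)
    mean_all = sum(values) / n
    s_n = math.sqrt(sum((v - mean_all) ** 2 for v in values) / n)
    p, q = len(group1) / n, len(group0) / n
    return (m1 - m0) / s_n * math.sqrt(p * q)
```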

McNemar's test, a modified chi-square test, was used to test whether the difference between false negatives (FN) and false positives (FP) was statistically significant. It was calculated from the confusion matrix using the stats module of the SciPy library. The chi-square test for randomness was used to test whether the model predictions differed from random. The Dice similarity coefficient was used to measure inter-reader agreement between the segmentations.
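Both McNemar's statistic and the Dice coefficient are short enough to write out. The sketch below applies McNemar's test to the two off-diagonal cells of a single confusion matrix, as described above. Edwards' continuity correction is switched on by default, which is an assumption because the text does not say whether a correction was used. The p-value comes from the chi-square distribution with one degree of freedom. The Dice coefficient compares two readers' masks represented as sets of voxel indices. Function names are illustrative.

```python
import math
from typing import Set, Tuple

Voxel = Tuple[int, int, int]


def mcnemar(fp: int, fn: int, continuity: bool = True) -> Tuple[float, float]:
    """McNemar chi-square statistic and p-value from the FP and FN counts."""
    if fp + fn == 0:
        return 0.0, 1.0  # no discordant cases: no evidence of a difference
    diff = abs(fp - fn)
    if continuity:
        diff = max(diff - 1, 0)  # Edwards' continuity correction
    stat = diff ** 2 / (fp + fn)
    return stat, math.erfc(math.sqrt(stat / 2))  # chi-square with 1 degree of freedom


def dice(a: Set[Voxel], b: Set[Voxel]) -> float:
    """Dice similarity coefficient 2|A ∩ B| / (|A| + |B|) between two voxel sets."""
    if not a and not b:
        return 1.0  # two empty masks agree perfectly
    return 2 * len(a & b) / (len(a) + len(b))
```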

All statistical tests used a significance level of 0.05, i.e., the null hypothesis was rejected when the p-value was less than 0.05.

The radiomics quality score (RQS) was also calculated to evaluate whether the research followed the scientific guidelines for radiomic studies. The study followed the established guidelines for Transparent Reporting of a multivariable prediction model for Individual Prognosis Or Diagnosis (TRIPOD) [69].

2.10. Model Construction, Validation and Evaluation

Several models were implemented to predict the pathological grade of ccRCC, with the WHO/ISUP grading system as the gold standard. Models were constructed for cohort 1, cohort 2 and the combined cohort. The ML models were:

- Support Vector Machine (SVM);
- Random Forest (RF);
- eXtreme Gradient Boosting (XGBoost/XGB);
- Naïve Bayes (NB);
- Multi-layer Perceptron Classifier (MLP);
- Long Short-Term Memory (LSTM);
- Logistic Regression (LR);
- Quadratic Discriminant Analysis (QDA);
- Light Gradient Boosting Machine (LightGBM/LGB);
- Category Boosting (CatBoost/CB);
- Adaptive Boosting (AdaBoost/ADB).

In total, 231 distinct models were constructed, i.e., 11 models for each of the three cohorts and each of the seven tumour subregions (11 × 3 × 7).
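The experimental grid can be enumerated as a Cartesian product, which also confirms the count of 11 × 3 × 7 = 231 models. The text does not list the seven subregions explicitly. This sketch assumes they are the whole tumour plus the 25%, 50% and 75% core and periphery masks from Section 2.5, and the region labels are hypothetical.

```python
from itertools import product

# Classifiers named in the text (abbreviations as used there)
MODELS = ["SVM", "RF", "XGB", "NB", "MLP", "LSTM", "LR", "QDA", "LGB", "CB", "ADB"]
COHORTS = ["cohort 1", "cohort 2", "combined"]
# Assumption: whole tumour plus 25/50/75% core and periphery subregions
SUBREGIONS = ["whole"] + [f"{part}{pct}" for part in ("core", "periphery") for pct in (25, 50, 75)]

# One (cohort, subregion, model) configuration per trained model
experiments = list(product(COHORTS, SUBREGIONS, MODELS))
print(len(experiments))  # 231
```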

For internal validation, each cohort was divided into training and testing sets, with 67% of the data used for training and 33% held out for testing. For external validation, we used cohort 1 as the training set and cohort 2 as the testing set, and vice versa. Cohort 1 is treated here as analogous to a "single-institution" dataset, although it was obtained from multiple centres. Its comparison with cohort 2 served to validate the predictive models externally.
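The internal 67/33 split can be sketched as below. The text does not say whether the split was stratified by grade. This sketch assumes stratification, which keeps the low/high-grade ratio similar in both splits; with scikit-learn, the equivalent would be `train_test_split(..., test_size=0.33, stratify=y)`. External validation needs no split, because one whole cohort trains the model and the other tests it. `stratified_split` is a hypothetical helper.

```python
import random
from collections import defaultdict
from typing import List, Sequence, Tuple


def stratified_split(labels: Sequence[int], test_fraction: float = 0.33,
                     seed: int = 0) -> Tuple[List[int], List[int]]:
    """Return (train_idx, test_idx), splitting each class separately."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train: List[int] = []
    test: List[int] = []
    for label in sorted(by_class):
        idxs = by_class[label][:]
        rng.shuffle(idxs)
        n_test = round(test_fraction * len(idxs))  # same test fraction within each class
        test.extend(idxs[:n_test])
        train.extend(idxs[n_test:])
    return sorted(train), sorted(test)
```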

A subset of cohort 1, consisting of the 28 patients who underwent both CT-guided percutaneous biopsy and nephrectomy, was evaluated using two separate ML models. The first model was trained on cohort 1 excluding these 28 patients. The second was trained on cohort 2, which acted as an external validator. For both, the two best-performing classifiers and the three best-performing tumour subregions were used. The objective was to compare the accuracy of biopsy in predicting tumour grade with that of ML prediction, using partial or radical nephrectomy histopathology as the gold standard. These 28 samples were referred to as cohort 4. Where the biopsy result for a tumour was indeterminate, its biopsy grade was taken as the opposite of the nephrectomy grade for that tumour. For example, if the nephrectomy grade was high but the biopsy was indeterminate, the biopsy was counted as indicating low grade for the purposes of analysis.
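The rule for indeterminate biopsies means such biopsies are always scored as incorrect, which makes the biopsy comparison conservative. A sketch of that rule follows, with hypothetical function names and grades encoded as the strings "low" and "high".

```python
from typing import Iterable, Optional, Tuple


def biopsy_label_for_analysis(biopsy: Optional[str], nephrectomy: str) -> str:
    """Apply the stated rule: an indeterminate biopsy counts as the opposite of the nephrectomy grade."""
    if nephrectomy not in ("low", "high"):
        raise ValueError("nephrectomy grade must be 'low' or 'high'")
    if biopsy in ("low", "high"):
        return biopsy
    # Indeterminate result (None or any other value): score it as a miss
    return "low" if nephrectomy == "high" else "high"


def biopsy_accuracy(pairs: Iterable[Tuple[Optional[str], str]]) -> float:
    """Fraction of (biopsy, nephrectomy) pairs where the analysed biopsy grade matches."""
    pairs = list(pairs)
    correct = sum(biopsy_label_for_analysis(b, n) == n for b, n in pairs)
    return correct / len(pairs)
```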

Model performance was evaluated using several metrics: accuracy, specificity, sensitivity, area under the receiver operating characteristic curve (AUC-ROC), Matthews correlation coefficient (MCC), F1 score, McNemar's test and the chi-square test.
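The threshold-based metrics listed above follow directly from the four cells of the confusion matrix, with high grade as the positive class (matching the label encoding in Section 2.7). The sketch below computes them. AUC-ROC needs predicted scores rather than counts and is usually obtained from a library routine such as `sklearn.metrics.roc_auc_score`.

```python
import math


def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Threshold-based metrics from a binary confusion matrix (positive = high grade)."""
    total = tp + fp + tn + fn
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    f1 = (2 * precision * sensitivity / (precision + sensitivity)
          if precision + sensitivity else 0.0)
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": f1,
        "mcc": mcc,
    }
```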

4. Discussion

Clear cell renal cell carcinoma is the most common subtype of RCC and is responsible for the majority of renal cancer-related deaths. It accounts for up to 80% of RCC diagnoses [5] and is more likely to metastasise to other organs [14].

Important diagnostic criteria that must be established include tumour grade, tumour stage and histological type. For most cancer patients, histological grade is a crucial predictor of local invasion or systemic metastasis and may affect how well they respond to treatment. Defining the extent of the tumour requires staging based on clinical assessment, imaging investigations and histological assessment. Understanding how tumours are diagnosed, graded and staged gives a better grasp of the neoplastic situation and of the limitations of diagnostic techniques.

The grade of RCC was recognised as a prognostic marker more than a hundred years ago [20]. Grading describes whether a tumour appears regular or aberrant under a microscope. It is therefore one of the factors that indicate the ability of the cancer to grow and spread to adjacent tissues.

Several grading schemes have been applied to grade tumours accurately, of which the WHO/ISUP and Fuhrman grading systems are the most popular and widely accepted. Previously, grading focused on a collection of cytological characteristics of the tumour. More recently, nuclear morphology has become the main focus. The Fuhrman grading system, proposed in 1982 [22], has been used worldwide for a long time [70]. Nuclear size, nuclear shape and nucleolar prominence are the major characteristics assessed in the Fuhrman system [70, 71]. Fuhrman et al. [22] demonstrated in 1982 that grade 1, 2, 3 and 4 tumours had considerably different rates of metastasis. They also showed a significant and strong correlation between tumour grade and survival when grades 2 and 3 were pooled [22].

Despite these seemingly encouraging findings, the Fuhrman study has several methodological issues. Its reliance on retrospective data collected over a 13-year period raises questions about potential bias [20]. Its small sample of only 85 cases may also make its conclusions less generalisable [20, 70]. The study also included several RCC subtypes without subtype-specific grading, which ignores possible differences in tumour behaviour between subtypes [20, 70, 72].

It is difficult to grade consistently and accurately because of the complexity of the criteria, which require three nuclear features (nuclear size, nuclear irregularity and nucleolar prominence) to be evaluated simultaneously [70, 72]; this results in poor inter-observer reproducibility and interpretability. The lack of guidelines for weighting the different parameters when they are discordant, in order to reach a final grade, makes the Fuhrman system even more controversial [70, 71]. Furthermore, nuclear shape was not well defined for the different grades [70]. Grading discrepancies therefore arise from conflicts between the grading criteria and the lack of direction for resolving them [20, 23, 72]. Additionally, imprecise standards for nuclear pleomorphism and nucleolar prominence adversely affect pathologists’ classifications, increasing variability [70]. Because the grade is assigned according to the highest-grade area, even when that area is focal, tumour aggressiveness may be overestimated [20, 70]. The system’s inconsistent behaviour and poor reproducibility [72] raise questions about its dependability and its potential effects on patient care and prognosis [73]. The poor inter-observer repeatability [73, 74], together with the continued widespread use of the Fuhrman grading system despite these flaws, shows that more research and better grading methods are needed.

An extensive, collaborative effort led to the development of the ISUP grading system for renal cell neoplasia in 2012 as an alternative to the Fuhrman grading system [70, 72]. The system was ratified and adopted by the WHO in 2015 and renamed the WHO/ISUP grading system [20, 24]. In contrast to the Fuhrman grading system, the WHO/ISUP system uses nucleolar prominence as the sole parameter for assigning grades 1 to 3, while grade 4 is defined by extreme nuclear pleomorphism, tumour giant cells, and/or rhabdoid or sarcomatoid differentiation. This reduction in grading parameters has led to better grade distinction and increased predictive value, and has also addressed the reproducibility concerns identified with the Fuhrman grading system. Previous studies have shown a clear separation between grades 2 and 3 in the WHO/ISUP grading system, which was not the case with the Fuhrman system. Indeed, Dagher et al. [23] reported a downgrading of Fuhrman grades 2 and 3 to WHO/ISUP grades 1 and 2, respectively. This indicates that, besides the overlap of grades in the Fuhrman system, grades were also overestimated, a problem that has been rectified with the WHO/ISUP grading system [23, 49, 75]. The WHO/ISUP grade has been strongly associated with patient prognosis [76].
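As a rough illustration of how the WHO/ISUP criteria described above reduce to a single decision sequence, the Python sketch below encodes the grade definitions as boolean inputs. The function names and the boolean simplification are hypothetical: in practice each finding is a pathologist's judgement on a slide, not a flag, and the low (grades 1-2) versus high (grades 3-4) split used by `is_high_grade` is a common convention assumed here rather than something stated in this section.

```python
def who_isup_grade(extreme_pleomorphism_or_sarcomatoid_rhabdoid: bool,
                   nucleoli_prominent_at_100x: bool,
                   nucleoli_eosinophilic_at_400x: bool) -> int:
    """Simplified WHO/ISUP grade from morphological findings (illustrative only)."""
    # Grade 4 is defined by morphology (pleomorphism, giant cells,
    # rhabdoid/sarcomatoid features) rather than by nucleoli.
    if extreme_pleomorphism_or_sarcomatoid_rhabdoid:
        return 4
    # Grade 3: conspicuous, eosinophilic nucleoli already visible at 100x.
    if nucleoli_prominent_at_100x:
        return 3
    # Grade 2: conspicuous, eosinophilic nucleoli at 400x, not prominent at 100x.
    if nucleoli_eosinophilic_at_400x:
        return 2
    # Grade 1: nucleoli absent, or inconspicuous and basophilic at 400x.
    return 1


def is_high_grade(grade: int) -> bool:
    """Assumed binary split: grades 1-2 are low grade, grades 3-4 are high grade."""
    return grade >= 3


print(who_isup_grade(False, False, True))                 # 2
print(is_high_grade(who_isup_grade(False, True, True)))   # True
```

Because the checks run from grade 4 downwards, a single dominant finding decides the grade; this ordering is what removes the need to weigh several discordant parameters, as the Fuhrman system requires.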

Pre-operative image-guided biopsy is a diagnostic tool used to identify tumour grade. However, inherent problems have been identified with this approach: it is invasive, causes discomfort, and may lead to other complications for patients [35, 77]. Non-invasive testing, imaging and clinical evaluation may therefore be needed to confirm the presence of ccRCC and determine its grade without subjecting patients to such a procedure.

Radiomics has gained traction in clinical practice in recent years and has been a buzzword since 2016 [45]. It refers to the extraction of high-dimensional quantitative features from medical images such as X-ray, CT, MRI, CT/PET, CT/SPECT, ultrasound and mammography scans, including texture features that describe the distribution and spatial arrangement of pixel intensities (image texture analysis). It has been applied in a number of studies for the diagnosis, grading and staging of tumours.
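To make the idea of texture features concrete, the sketch below computes a few first-order features and the contrast of a grey-level co-occurrence matrix (GLCM), a classic texture descriptor, on a toy 4×4 region of interest. It is a minimal pure-Python illustration under simplifying assumptions (integer grey levels, one horizontal offset, a non-symmetric matrix); it is not the feature-extraction pipeline used in this study, and dedicated toolkits such as PyRadiomics compute many more features with additional preprocessing options.

```python
import math
from collections import Counter


def first_order_features(pixels):
    """Mean, variance and histogram entropy (bits) of a flat list of intensities."""
    n = len(pixels)
    mean = sum(pixels) / n
    variance = sum((p - mean) ** 2 for p in pixels) / n
    counts = Counter(pixels)
    entropy = -sum((c / n) * math.log2(c / n) for c in counts.values())
    return {"mean": mean, "variance": variance, "entropy": entropy}


def glcm_contrast(image, dr=0, dc=1):
    """Contrast of a non-symmetric GLCM for the pixel offset (dr, dc)."""
    pairs = Counter()
    rows, cols = len(image), len(image[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dr, c + dc
            if 0 <= r2 < rows and 0 <= c2 < cols:
                pairs[(image[r][c], image[r2][c2])] += 1
    total = sum(pairs.values())
    # Each co-occurrence is weighted by the squared grey-level difference.
    return sum(count * (i - j) ** 2 for (i, j), count in pairs.items()) / total


# Toy region of interest with four grey levels (not patient data).
roi = [[0, 0, 1, 1],
       [0, 0, 1, 1],
       [0, 2, 2, 2],
       [2, 2, 3, 3]]
flat = [p for row in roi for p in row]
print(first_order_features(flat))
print(glcm_contrast(roi))  # expected 7/12 for this toy image
```

First-order features ignore where pixels are; the GLCM contrast changes if the same intensities are rearranged, which is what makes it a texture measure.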

Machine learning (ML) is one of the major branches of AI: a model is trained on a set of labelled data and then tested on unseen data. ML aims to make machines more intelligent by detecting patterns in data that would otherwise be difficult for a human to discern. It has been used in combination with texture analysis, particularly for tumour classification, grading and staging, where learning from textural image features can yield higher accuracy than conventional methods [78].

Intra-tumoural heterogeneity is a significant predictor of outcome, with greater diversity within the tumour potentially linked to increased tumour severity. The level of tumour heterogeneity can be represented by images known as kinetic maps, which are derived from signal intensity-time curves [78, 79, 80]. Studies [81, 82] that have used these maps average the signal intensity features over the whole solid mass, so regions with different levels of aggressiveness contribute equally to the final features. This leads to a loss of information about the true character of the tumour [83, 84].

Some studies have attempted to preserve intra-tumoural heterogeneity by extracting features from the periphery and the core and analysing them separately [42, 43, 44, 82]. However, this is still insufficient, as information in other subregions of the tumour is not considered.
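The averaging problem, and the idea of analysing core and periphery separately, can be sketched on a single 2D slice. The exact subregion definition used in this study is not given in this section; the sketch assumes that an "X% core" is the X% of lesion pixels farthest from the lesion boundary (city-block distance) and that the periphery is the remainder. All function names are hypothetical, the image is a toy example, and a real pipeline would work on 3D CT volumes with anisotropic voxel spacing.

```python
from collections import deque


def distance_to_background(mask):
    """City-block distance from each pixel to the nearest background pixel.

    The mask is padded with a one-pixel background frame so that lesions
    touching the image edge are handled.
    """
    rows, cols = len(mask) + 2, len(mask[0]) + 2
    padded = ([[False] * cols]
              + [[False] + list(row) + [False] for row in mask]
              + [[False] * cols])
    dist = [[None] * cols for _ in range(rows)]
    queue = deque()
    for r in range(rows):
        for c in range(cols):
            if not padded[r][c]:
                dist[r][c] = 0
                queue.append((r, c))
    # Multi-source breadth-first search outward from every background pixel.
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            if 0 <= nr < rows and 0 <= nc < cols and dist[nr][nc] is None:
                dist[nr][nc] = dist[r][c] + 1
                queue.append((nr, nc))
    # Strip the padding so indices match the original mask.
    return [row[1:-1] for row in dist[1:-1]]


def split_core_periphery(mask, core_fraction):
    """Return (core, periphery) pixel sets; core = deepest `core_fraction` of the lesion."""
    dist = distance_to_background(mask)
    lesion = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    lesion.sort(key=lambda rc: dist[rc[0]][rc[1]], reverse=True)
    n_core = round(core_fraction * len(lesion))
    return set(lesion[:n_core]), set(lesion[n_core:])


def mean_intensity(image, pixels):
    return sum(image[r][c] for r, c in pixels) / len(pixels)


# Toy 5x5 lesion: a bright inner 3x3 block (9 of 25 pixels, i.e. 36%)
# surrounded by a darker rim.
mask = [[1] * 5 for _ in range(5)]
image = [[100 if 1 <= r <= 3 and 1 <= c <= 3 else 20 for c in range(5)]
         for r in range(5)]
core, periphery = split_core_periphery(mask, 0.36)
print(mean_intensity(image, core | periphery))  # 48.8: whole-lesion average
print(mean_intensity(image, core))              # 100.0
print(mean_intensity(image, periphery))         # 20.0
```

The whole-lesion mean of 48.8 describes neither the bright core nor the dark rim, which is the loss of information that subregion-wise feature extraction tries to avoid.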

In this research, we investigated the influence of intra-tumoural subregion heterogeneity, and of biopsy, on the accuracy of grading ccRCC. A total of 391 patients with pathologically proven ccRCC from two broad datasets were included. The objectives of the study were therefore: to study the impact of intra-tumoural heterogeneity on the grading of ccRCC; to compare ML- and AI-based methods combined with CT radiomics signatures against biopsy, partial nephrectomy and radical nephrectomy in determining the grade of ccRCC; and, finally, to investigate whether CT radiomics ML analysis could be used as an alternative to, and thereby replace, the conventional WHO/ISUP grading system in grading ccRCC.

Our experimental findings raise several points for discussion. Age, tumour size and tumour volume were statistically significant for cohorts 1, 2 and 3, whereas none of the clinical features were significant for cohort 4. However, when these statistically significant clinical features were further analysed using the point-biserial correlation coefficient (r_pb), none of them was confirmed as significant.

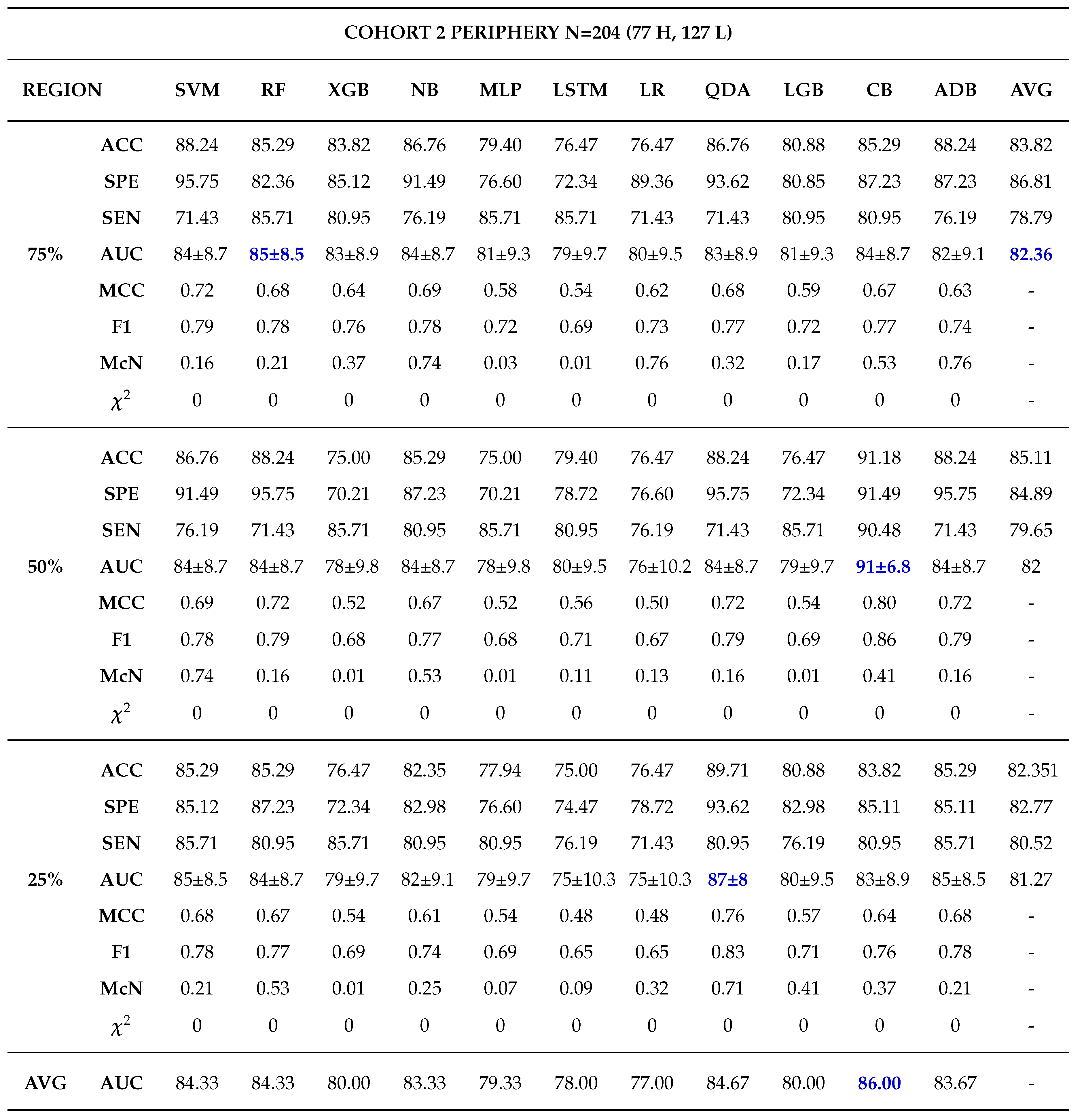

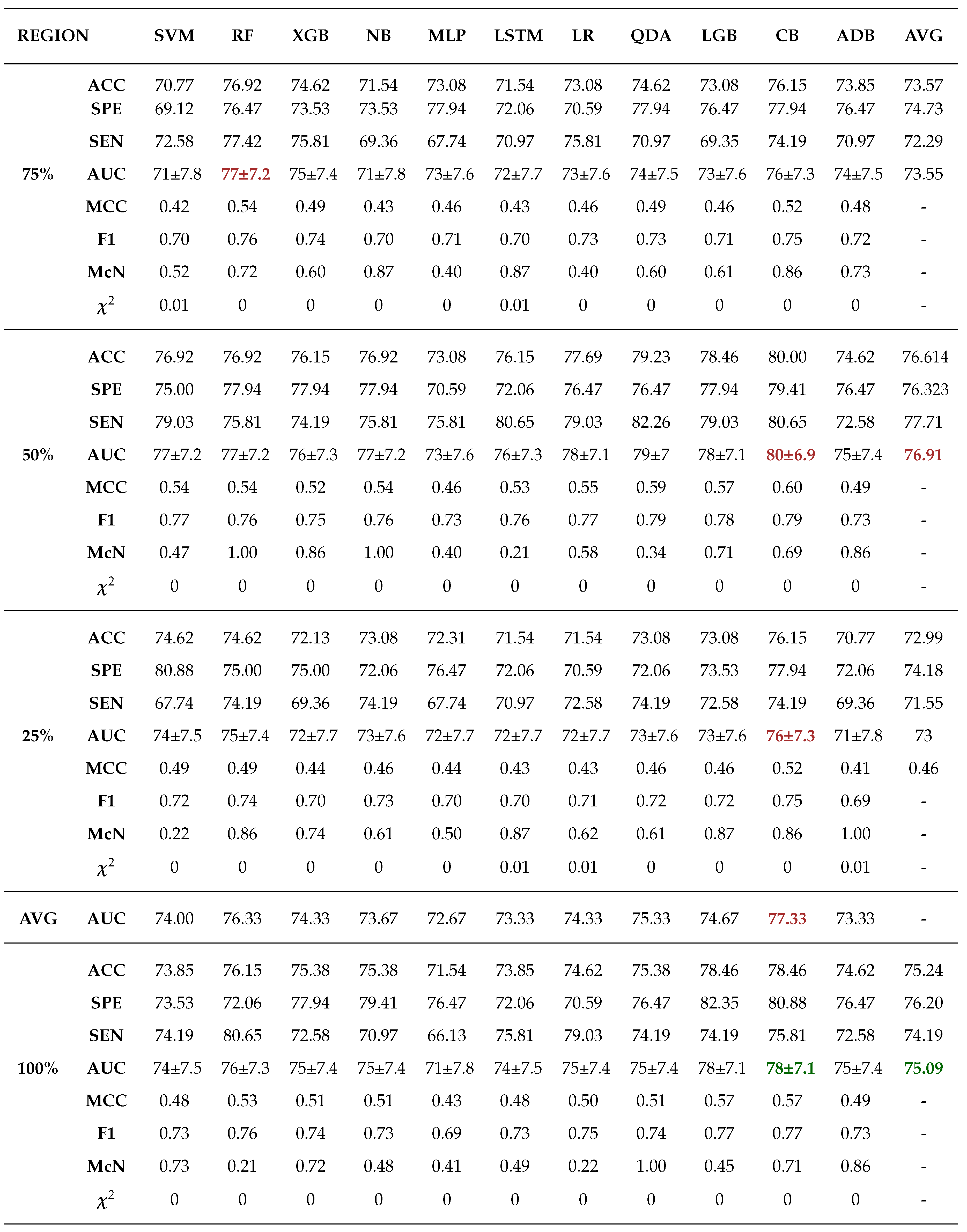

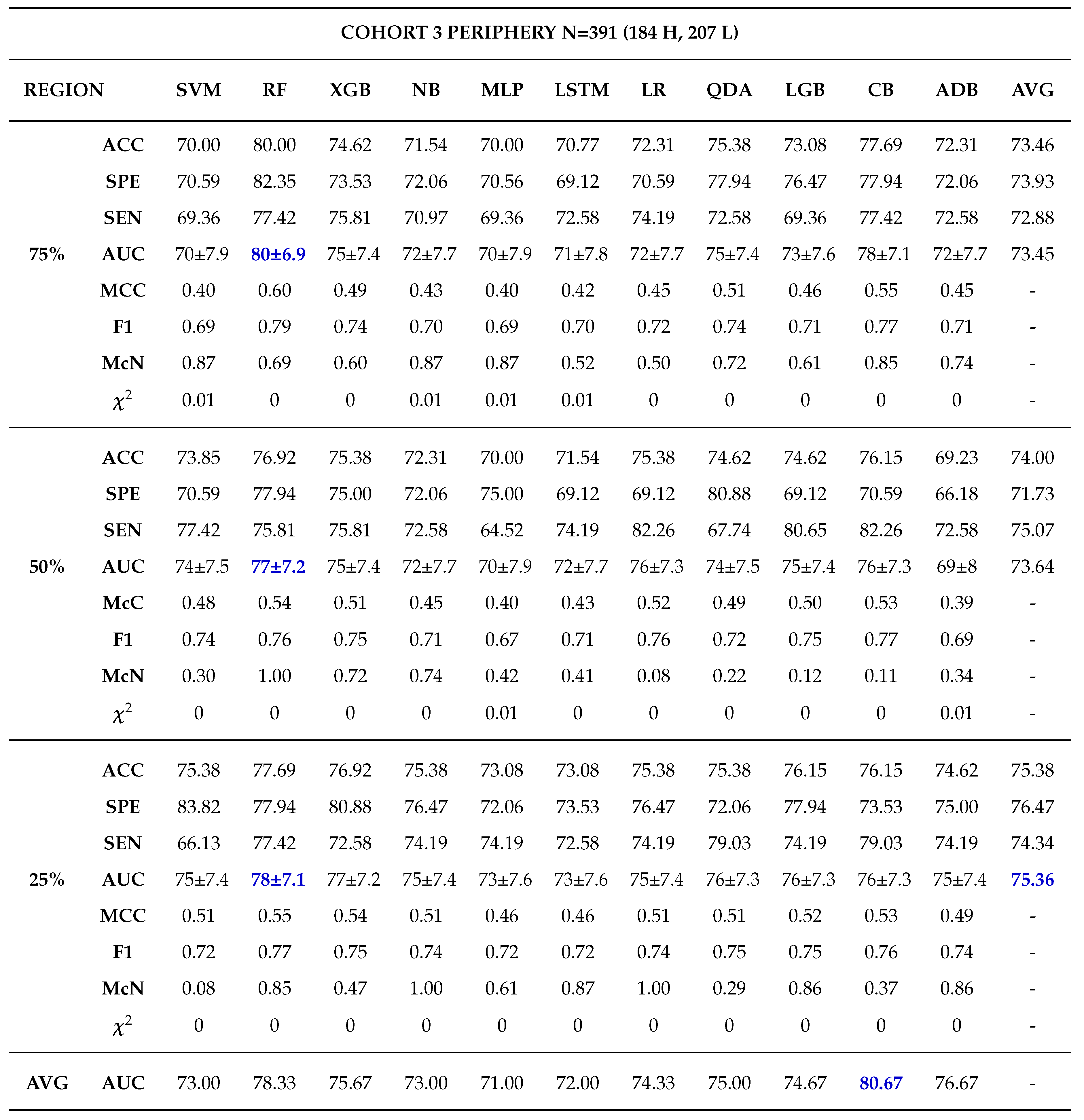

Moreover, the 50% core was identified as the best tumour subregion overall, giving the highest averaged model performance in cohorts 1, 2 and 3, with average AUCs of 77.91%, 87.64% and 76.91%, respectively. Notably, the 25% periphery reached a slightly higher average AUC of 78.64% in cohort 1; however, this result was not statistically different from that of the 50% core, and the 25% periphery did not give the best performance in the other cohorts.

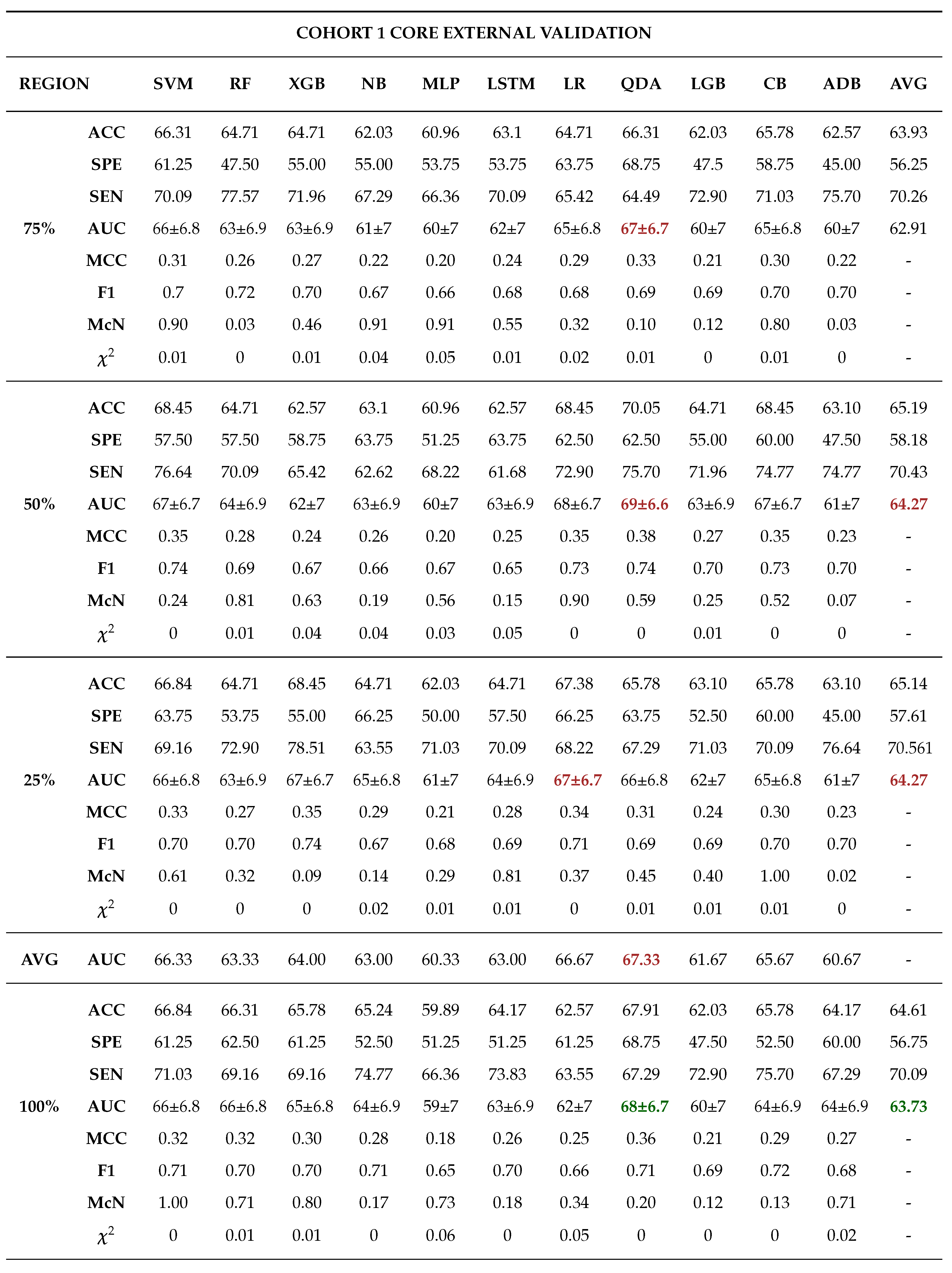

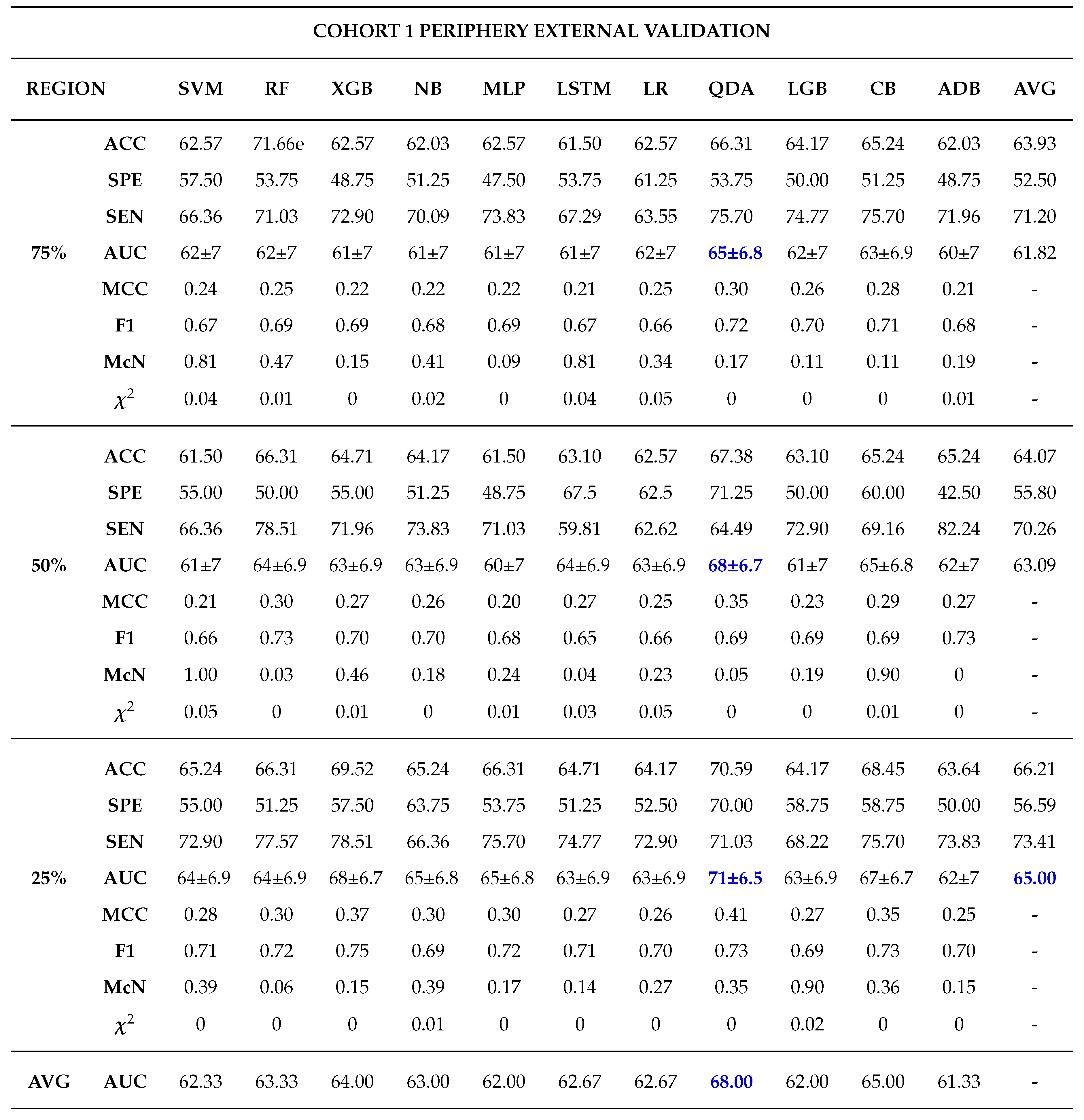

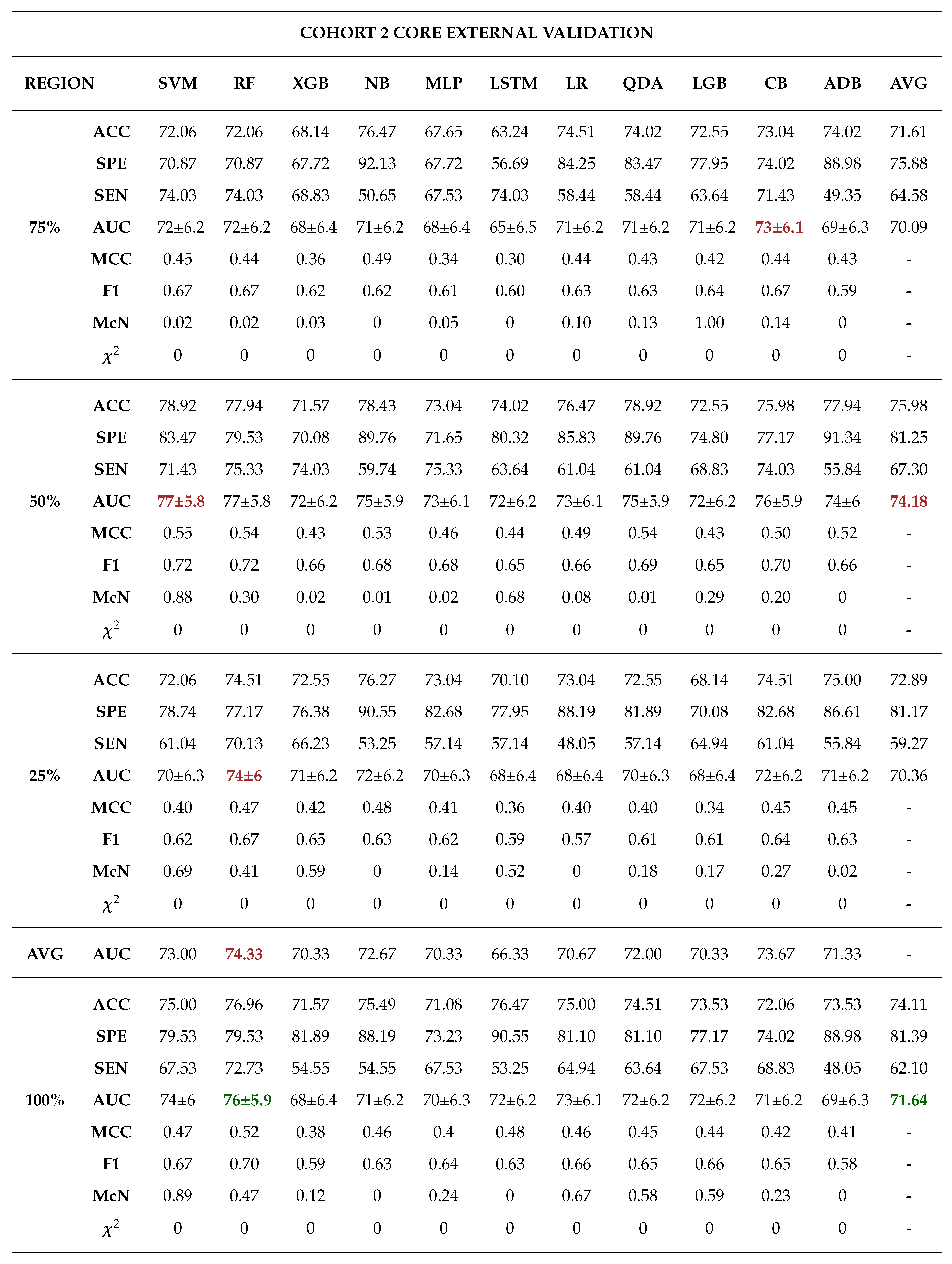

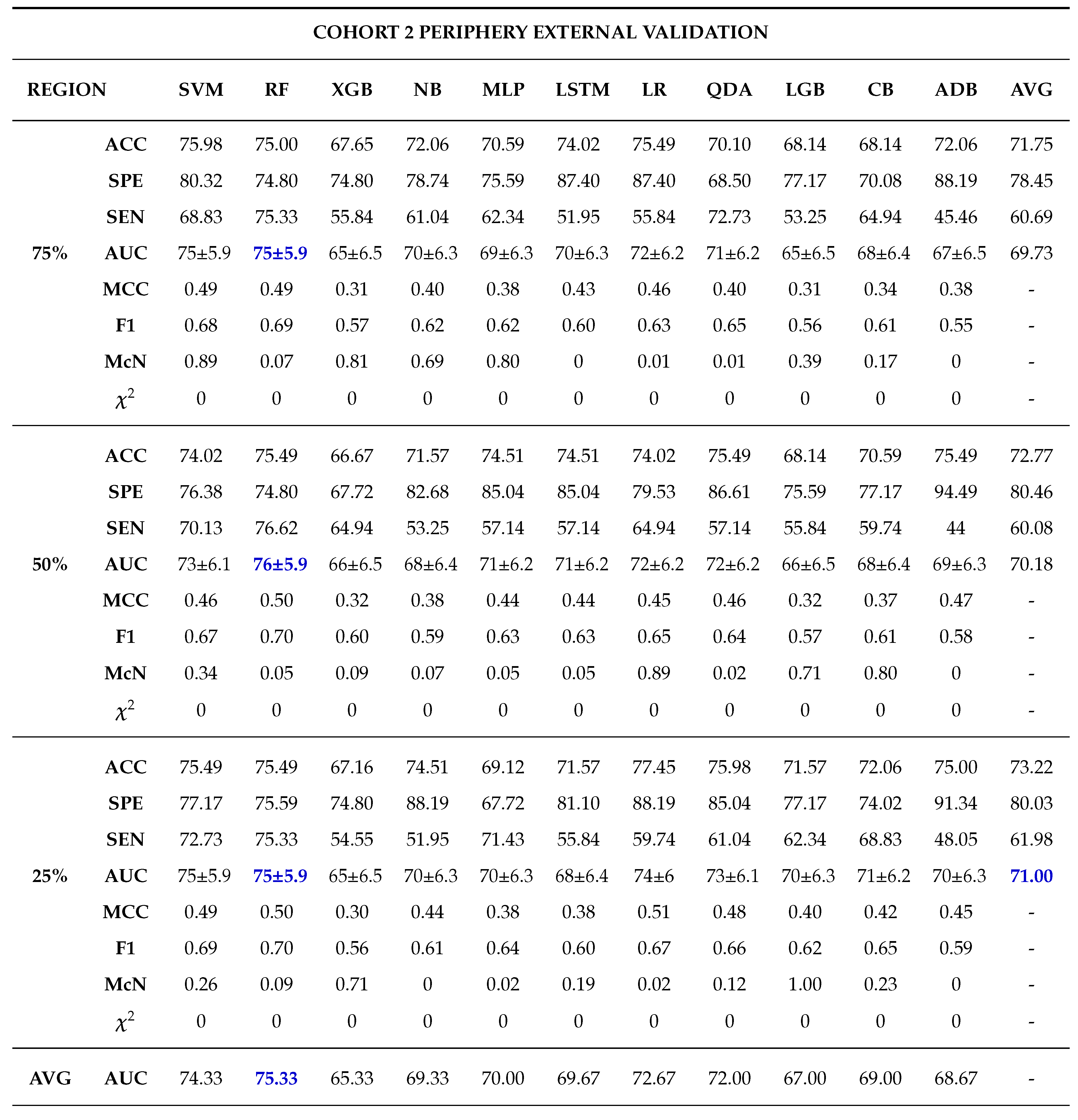

Among the 11 classifiers, CatBoost was the best model in all three cohorts, with average AUCs of 80.00%, 86.50% and 79.00% for cohorts 1, 2 and 3, respectively. Likewise, the best-performing individual classifier in each cohort was CatBoost, with AUCs of 85% in the 100% core, 91% in the 50% periphery and 80% in the 50% core for cohorts 1, 2 and 3, respectively. On external validation, the models trained on cohort 1 and validated on cohort 2 data performed best in the 25% periphery, with the highest AUC of 71% obtained by QDA. Conversely, the models trained on cohort 2 and validated on cohort 1 data performed best in the 50% core, with an AUC of 77% obtained by SVM.
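The two-directional external validation described above can be sketched as follows. The study's classifiers (CatBoost, QDA, SVM and others) are not reproduced: a nearest-centroid classifier stands in for them only to keep the example dependency-free, and both "cohorts" are invented two-feature toy data with hypothetical function names. Training on one cohort and testing on the other, in both directions, shows how external performance can differ by direction, as it did between cohorts 1 and 2.

```python
import math


def fit_centroids(features, labels):
    """Nearest-centroid 'training': average the feature vectors of each class."""
    sums, counts = {}, {}
    for x, y in zip(features, labels):
        if y not in sums:
            sums[y] = [0.0] * len(x)
            counts[y] = 0
        sums[y] = [s + v for s, v in zip(sums[y], x)]
        counts[y] += 1
    return {y: [s / counts[y] for s in sums[y]] for y in sums}


def predict(centroids, x):
    # Assign the class whose centroid is closest in Euclidean distance.
    return min(centroids, key=lambda y: math.dist(x, centroids[y]))


def accuracy(centroids, features, labels):
    hits = sum(predict(centroids, x) == y for x, y in zip(features, labels))
    return hits / len(labels)


# Invented toy cohorts: two radiomics-like features; 0 = low grade, 1 = high grade.
cohort_a = ([[1, 1], [1, 2], [5, 5], [6, 5]], [0, 0, 1, 1])
cohort_b = ([[2, 1], [0, 1], [5, 6], [3, 3.5]], [0, 0, 1, 1])

# External validation in both directions: train on one cohort, test on the other.
a_to_b = accuracy(fit_centroids(*cohort_a), *cohort_b)
b_to_a = accuracy(fit_centroids(*cohort_b), *cohort_a)
print(a_to_b, b_to_a)  # 0.75 1.0
```

The asymmetry comes purely from which cohort defines the decision boundary; with real multi-centre CT data, scanner and protocol differences add to this effect.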

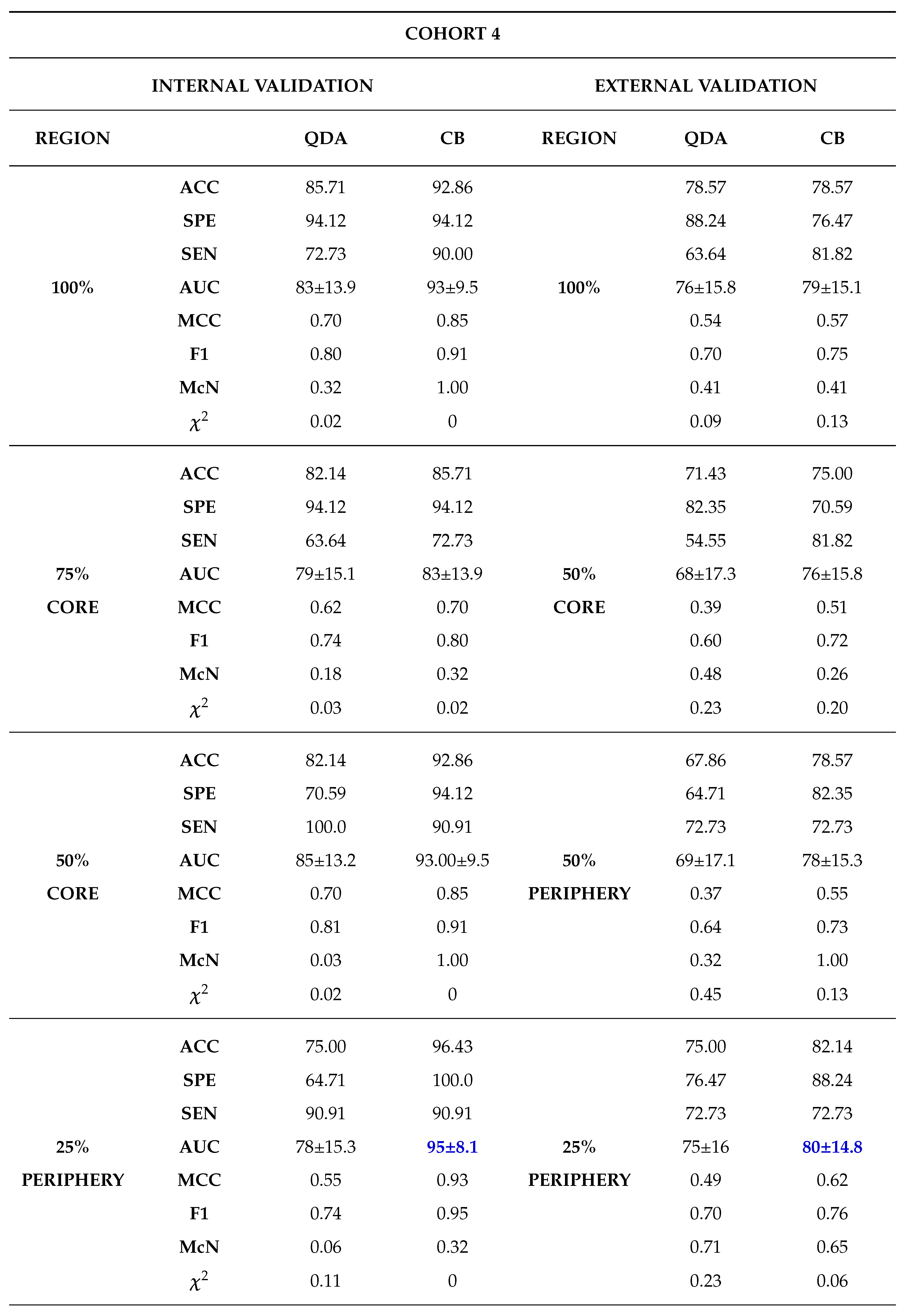

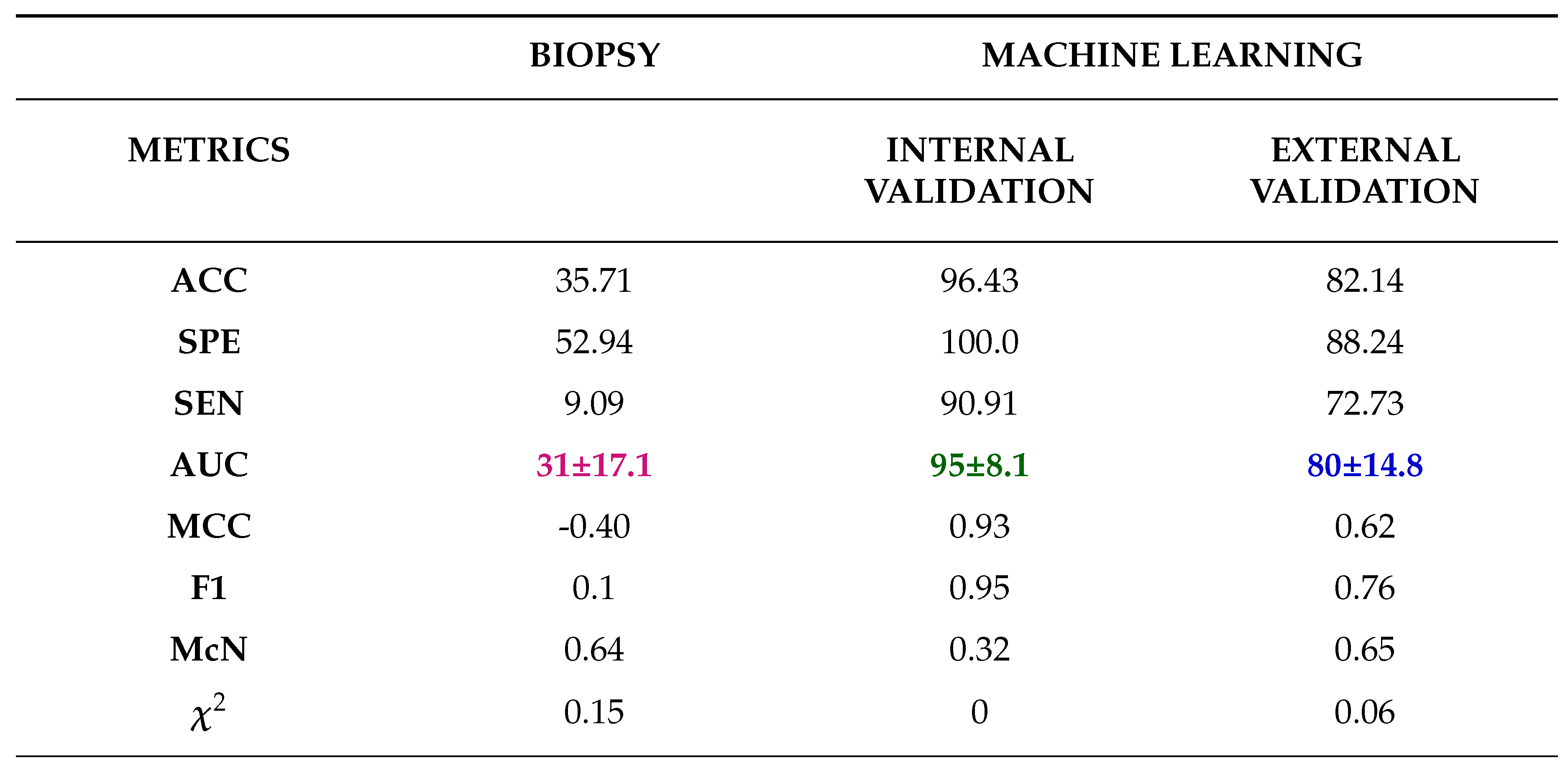

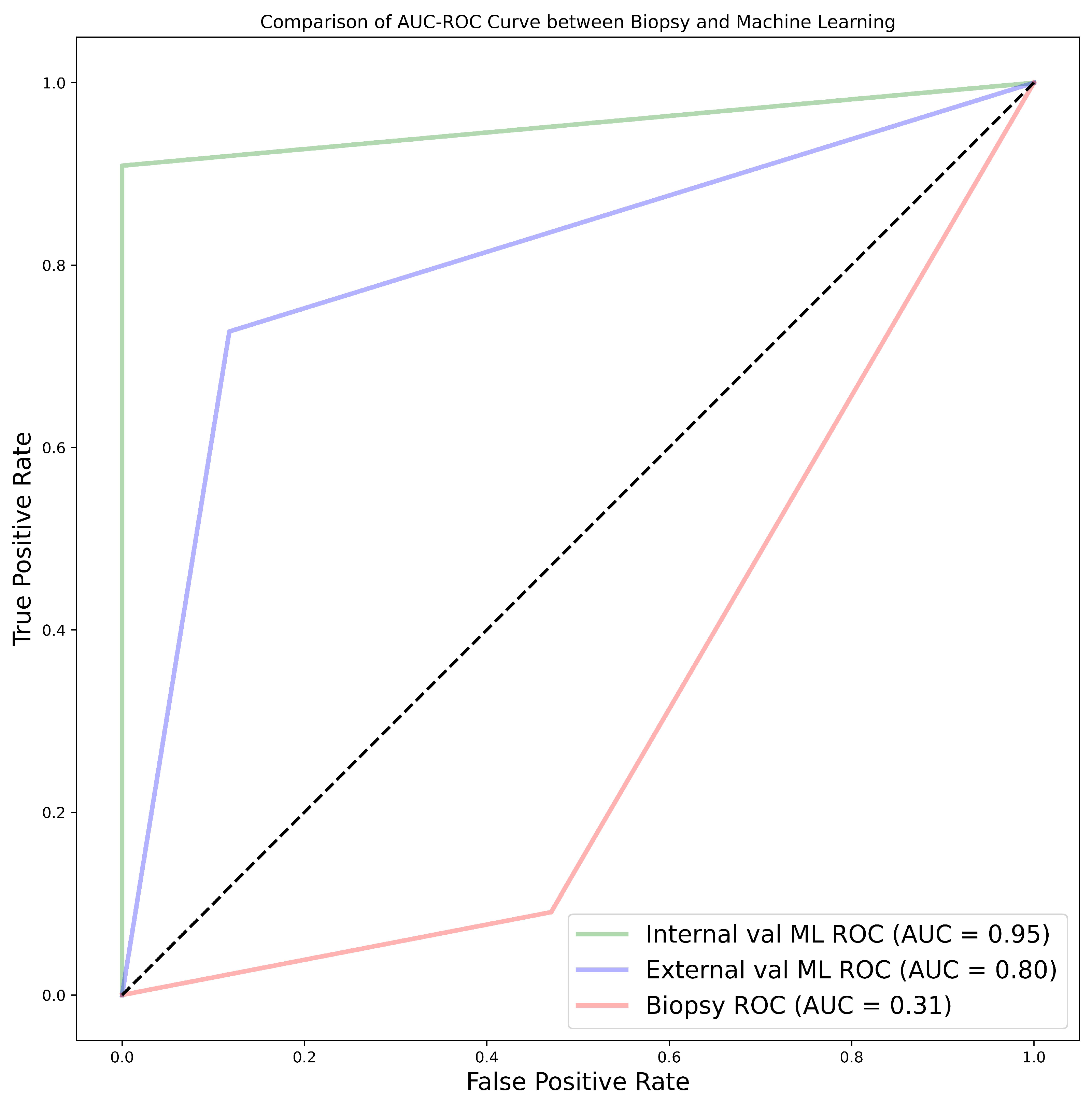

Finally, when biopsy was compared with ML classification in the 28 patients who underwent both biopsy and nephrectomy (cohort 4), the ML model was more accurate, with a best AUC of 95% in internal validation and 80% in external validation, against an AUC of 31% for biopsy. In this comparison, the nephrectomy grading results were taken as the ground truth.

Clinical feature significance is an important aspect of research, as it gives a general overview of the data used in a study. A few studies have chosen to include statistically significant clinical features in their ML radiomics models [85, 86]. Takahashi et al. [86], for instance, incorporated 9 of 12 clinical features into their prediction model because they were statistically significant [86]. In our study, age, tumour size and tumour volume were statistically significant; however, they were not integrated into the ML radiomics model because a confirmatory test using the point-biserial correlation coefficient showed them to be non-significant. Nonetheless, there is a lack of clear guidelines on the relationship between statistical significance and predictive significance. It is often mistakenly assumed that a statistical association implies predictive utility; however, association only provides information about a population, whereas predictive research focuses on the binary or multi-class classification of an individual subject [87]. Moreover, the statistical significance of an association between clinical features and the outcome depends on sample size: for the same strength of association, significance is likely to increase as the sample size increases [88]. This is clearly shown in previous research by Alhussaini et al. [48]. In our own research, the cohort 4 data, despite coming from the same population as the cohort 1 data, showed no statistical significance for age, tumour size, tumour volume and gender, suggesting that the small sample size is the likely cause.
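The point-biserial coefficient is Pearson's correlation between a continuous variable and a binary label, and its significance test uses the statistic t = r_pb * sqrt((n - 2) / (1 - r_pb^2)) with n - 2 degrees of freedom. The sketch below (hypothetical function names, toy inputs rather than study data) computes r_pb and shows that, for a fixed weak correlation of 0.2, the test statistic grows roughly with the square root of the sample size; the sizes 28 and 391 echo cohort 4 and the full sample purely for illustration.

```python
import math


def point_biserial(values, groups):
    """r_pb between a continuous variable and a 0/1 grouping (population-SD form)."""
    n = len(values)
    g1 = [v for v, g in zip(values, groups) if g == 1]
    g0 = [v for v, g in zip(values, groups) if g == 0]
    mean = sum(values) / n
    sd = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    m1, m0 = sum(g1) / len(g1), sum(g0) / len(g0)
    return (m1 - m0) / sd * math.sqrt(len(g1) * len(g0) / n ** 2)


def t_statistic(r, n):
    """t statistic (n - 2 degrees of freedom) for testing whether r differs from 0."""
    return r * math.sqrt((n - 2) / (1 - r ** 2))


# Toy check: r_pb equals Pearson's r with the 0/1 labels, here 2/sqrt(5).
print(point_biserial([1, 2, 3, 4], [0, 0, 1, 1]))

# Same weak correlation, different sample sizes: only n changes the statistic.
for n in (28, 100, 391):
    print(n, round(t_statistic(0.2, n), 2))
```

A correlation of 0.2 is therefore far from significant at n = 28 but clearly significant at n = 391, even though its predictive value for an individual patient is unchanged.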

Zhao et al. [89], in their prospective research, presented interesting findings regarding tumour subregions in ccRCC. They reported that somatic copy number alterations (SCNAs), grade and necrosis are higher in the tumour core than at the tumour margin. Our findings using different tumour subregions agree with those of Zhao et al. [89], although they did not construct a predictive ML algorithm.

He et al. [90] constructed five predictive CT models using an artificial neural network algorithm to predict the grade of ccRCC from both conventional image features and texture features. Their best-performing model, using corticomedullary phase (CMP) images and texture features, achieved an accuracy of 91.76%. This is comparable to our study, which attained a highest accuracy of 91.14% with the CatBoost classifier. However, He et al. [90] did not report other metrics that would have been useful for assessing overall predictive performance; for instance, a model can achieve high accuracy while being biased towards one class. Moreover, their findings were not externally validated, so their prediction performance on other datasets is unclear.

Like He et al. [90], Sun et al. [91] constructed a model, in their case an SVM, to predict the pathological grade of ccRCC. Their model achieved an AUC of 87%, sensitivity of 83% and specificity of 67%. In our view, however, this AUC is overly optimistic given the very low specificity. This can be seen by comparison with our best-performing SVM model, which had an AUC of 86%, sensitivity of 80.95% and specificity of 91.49%. Our best model, the CatBoost classifier, performed much better. Xv et al. [92] analysed the performance of an SVM classifier with three feature selection algorithms for differentiating ccRCC pathological grades using clinico-radiological and radiomics features. The three algorithms were LASSO, recursive feature elimination (RFE) and ReliefF. Their best model was SVM-ReliefF with combined clinical and radiomics features, with AUCs of 88% in training, 85% in validation and 82% in testing. Notably, our research did not use any of the feature selection algorithms used by Xv et al. [92]; nevertheless, our performance was better than theirs.
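AUC summarises how well a model ranks high-grade above low-grade cases across all thresholds, whereas sensitivity and specificity describe a single operating point. The sketch below (invented scores, not from any cited study; hypothetical function names) computes AUC as the Mann-Whitney probability and shows that moving the threshold trades sensitivity against specificity while the AUC stays fixed, which is why a reported AUC is best read together with the chosen operating point.

```python
def auc_mann_whitney(pos_scores, neg_scores):
    """AUC as P(random positive outscores random negative); ties count 1/2."""
    wins = 0.0
    for p in pos_scores:
        for q in neg_scores:
            wins += 1.0 if p > q else 0.5 if p == q else 0.0
    return wins / (len(pos_scores) * len(neg_scores))


def sens_spec(pos_scores, neg_scores, threshold):
    """Sensitivity and specificity when scores >= threshold are called high grade."""
    sens = sum(p >= threshold for p in pos_scores) / len(pos_scores)
    spec = sum(q < threshold for q in neg_scores) / len(neg_scores)
    return sens, spec


high = [0.9, 0.8, 0.6, 0.4]       # toy model scores for high-grade cases
low = [0.7, 0.5, 0.3, 0.2, 0.1]   # toy model scores for low-grade cases

print(auc_mann_whitney(high, low))  # 0.85, independent of any threshold
for t in (0.5, 0.65):
    print(t, sens_spec(high, low, t))
```

At a threshold of 0.5 the toy model gives sensitivity 0.75 and specificity 0.6; at 0.65 it gives 0.5 and 0.8, while the AUC remains 0.85 in both cases.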

Cui et al. [93] used internal and external validation to predict the pathological grade of ccRCC. Their research achieved satisfactory performance, with internal and external validation accuracies of 78% and 61%, respectively, in the corticomedullary phase (CMP) using the CatBoost classifier. Compared with their research, our CatBoost classifier performed better in both internal and external validation, with accuracies of 91.18% and 75.98%, respectively, in the CMP. Wang et al. [94] also conducted a multicentre study using a logistic regression (LR) model; however, they used both biopsy and nephrectomy as the ground truth, despite the challenges highlighted regarding biopsy. They did not report internal validation performance; their training AUC, sensitivity and specificity were 89%, 85% and 84%, respectively, and their external validation AUC, sensitivity and specificity were 81%, 58% and 95%, respectively. Their external validation performance was better than that of our LR model, which gave an AUC, sensitivity and specificity of 74%, 59.74% and 88.19%, respectively. Overall, however, our CatBoost classifier still outperformed their LR model. Moldovanu et al. [95] investigated multiphase CT with LR to predict the WHO/ISUP nuclear grade of ccRCC. Compared with their validation set, which had an AUC, sensitivity and specificity of 81%, 72.73% and 75.90% in the corticomedullary phase, our research showed higher performance not only for the best-performing model but also for the LR model, which had an AUC, sensitivity and specificity of 84%, 71.43% and 95.75%, respectively.

Yi et al. [96] predicted the WHO/ISUP pathological grade of ccRCC from radiomics and clinical features using an SVM model. The 264 samples used were from the nephrographic phase. We noted a marked class imbalance in the data, with a low-to-high grade ratio of 78:22, yet the study did not describe how this was addressed. Nonetheless, their testing AUC was 80.17%, which is lower than that of our research.
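The concern about class imbalance, and the earlier point that accuracy alone can hide bias towards one class, can be illustrated with a degenerate model. Assuming toy labels with the same 78:22 low-to-high ratio (100 invented cases), a model that always predicts low grade reaches 78% accuracy while detecting no high-grade tumour; sensitivity and balanced accuracy expose this. The helper name `summarise` is hypothetical.

```python
def summarise(y_true, y_pred):
    """Accuracy, sensitivity, specificity and balanced accuracy (1 = high grade)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    pos = sum(t == 1 for t in y_true)
    neg = len(y_true) - pos
    sens, spec = tp / pos, tn / neg
    return {"accuracy": (tp + tn) / len(y_true), "sensitivity": sens,
            "specificity": spec, "balanced_accuracy": (sens + spec) / 2}


# Toy labels with a 78:22 low-to-high split.
y_true = [0] * 78 + [1] * 22
always_low = [0] * 100  # a model that never predicts high grade
print(summarise(y_true, always_low))
```

Here accuracy is 0.78 but sensitivity is 0.0 and balanced accuracy is 0.5, no better than chance, which is why imbalance handling and class-aware metrics matter when comparing studies.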

Similar to our study, Karagöz and Guvenis [97] constructed a classifier based on 3D radiomic features to determine the WHO/ISUP nuclear grade of ccRCC. The best results were obtained with LightGBM, with an AUC of 0.89. They also dilated and contracted the tumour segmentation by 2 mm, which led them to conclude that the ML algorithm is robust to observer variation in segmentation. Our best model outperforms their results, and our much larger sample size makes our results more reliable. Demirjian et al. [49] also constructed a 3D model using data from two institutions with RF, AdaBoost and ElasticNet classifiers. Their best-performing model, RF, achieved an AUC of 0.73, lower than in our research. Their use of a dataset graded with the Fuhrman system for testing may have contributed to the poorer results: WHO/ISUP and Fuhrman grading use different parameters, so a Fuhrman grade cannot serve as the ground truth for a model trained on WHO/ISUP grades.

Shu et al. [98] extracted radiomics features from the CMP and the nephrographic phase (NP) to construct seven ML algorithms; their best model in the CMP, an MLP, achieved an accuracy of 0.974. The findings are interesting, except that the study was not clear about the gold standard used for grade prediction. This raises the possibility that biopsy was part of the gold standard; given the controversies surrounding biopsy highlighted above, if that were the case the study would be subject to the same limitations.

Biopsy is a commonly used diagnostic tool for identifying RCC subtypes. The diagnostic accuracy of biopsy for RCC has been reported to range from 86% to 98%, although it can be influenced by various factors [35, 99, 100]. For grading RCC, however, the reported accuracy is lower and more variable, ranging from 43% to 76% [35, 99, 100, 101, 102, 103, 104, 105, 106].

Nevertheless, the accuracy of biopsy in classifying renal cell tumours is debatable (Millet et al., 2012). Several studies contend that renal biopsy typically underestimates the final grade. For instance, biopsies underestimated the nuclear grade in 55% of cases and correctly identified only 43% of the final nuclear grades [101]. In particular, the final nuclear grade was marginally more likely to be underestimated in biopsies of larger tumours, whereas histologic subtype analysis was more accurate, especially for clear cell renal tumours. In the research by Blumenfeld et al. [101], only one case of nuclear grade overestimation was seen. In the study by Millet et al. [103], biopsy underestimated the grade in 13 cases and overestimated it in two.

In our study, biopsy determined the tumour grade with an accuracy of 35.71%, a sensitivity of 9.09% and a specificity of 52.94% in the 28 NHS patients (cohort 4), with nephrectomy as the gold standard. These results agree with previous literature showing that biopsy is poor at predicting tumour grade.

The biopsy results were compared with those of our ML models. The models outperformed biopsy by a wide margin; even our worst-performing model was better than biopsy. The best model achieved an accuracy of 96.43%, sensitivity of 90.91% and specificity of 100% in internal validation, an improvement of 60.72 percentage points in accuracy. Likewise, external validation gave an accuracy, sensitivity and specificity of 82.14%, 72.73% and 88.24%, respectively, an improvement of 46.43 percentage points in accuracy. We therefore conclude that ML can distinguish low-grade from high-grade ccRCC more accurately than biopsy and should be considered in preference to biopsy.
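The cohort 4 figures can be cross-checked from 2×2 tables. The counts below are not stated in the text: they are our reconstruction from the reported percentages (11 high-grade and 17 low-grade tumours reproduce every reported sensitivity, specificity and accuracy), so treat them as an assumption. For biopsy, which yields a hard grade rather than a score, the single-threshold AUC, (sensitivity + specificity) / 2, also reproduces the reported AUC of 31%. The helper name `rates` is hypothetical.

```python
def rates(tp, fn, tn, fp):
    """Accuracy, sensitivity, specificity and single-threshold AUC from a 2x2 table."""
    sens = tp / (tp + fn)
    spec = tn / (tn + fp)
    acc = (tp + tn) / (tp + fn + tn + fp)
    # With hard (binary) predictions the ROC curve has a single operating point,
    # so the trapezoidal AUC reduces to (sensitivity + specificity) / 2.
    return {"accuracy": acc, "sensitivity": sens, "specificity": spec,
            "auc_binary": (sens + spec) / 2}


# Counts reconstructed from the reported percentages (assumption, see above):
# positives = high grade (11 cases), negatives = low grade (17 cases).
tables = {
    "biopsy": dict(tp=1, fn=10, tn=9, fp=8),
    "ML internal": dict(tp=10, fn=1, tn=17, fp=0),
    "ML external": dict(tp=8, fn=3, tn=15, fp=2),
}
for name, counts in tables.items():
    print(name, {k: round(100 * v, 2) for k, v in rates(**counts).items()})
```

The printed values are percentages rounded to two decimals; the accuracy improvements quoted above follow from subtracting these rounded figures.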

To our knowledge, no previous paper has examined the effect of tumour subregion on the grading of ccRCC, so there is no literature against which these results can be compared.

The current research has examined the possibility of grading ccRCC pre-operatively without the need for biopsy. Moreover, it has analysed the effect of the information contained in different tumour subregions on grading. We believe that this study will help clinicians find the best management strategies for patients with ccRCC and will enable informative pre-treatment assessment, allowing treatment to be tailored to individual patients.

The work encountered several challenges that are worth highlighting. First, the samples came from different institutions and the scans were acquired with different scanners and protocols, which may have lowered the overall performance of the models; however, using such data was important because the research was intended to be generally applicable rather than institution-specific. Second, the retrospective nature of the research may have limited our work, so further prospective studies are recommended. Third, the current research assumed that the divided tumour subregions (25%, 50% and 75% core and periphery) are heterogeneous; further research using pixel intensity measures from different tumour subregions is encouraged. Fourth, manual segmentation is time-consuming and subject to observer variability, so research on automated tumour image segmentation is encouraged. Finally, although this is one of the few studies to have used a large sample, we still consider our sample size small relative to the datasets typically used to train ML and AI models.