Submitted: 02 March 2026

Posted: 03 March 2026


Abstract

Keywords:

Introduction

Behavioural Evidence for Pitch-Based Selective Listening

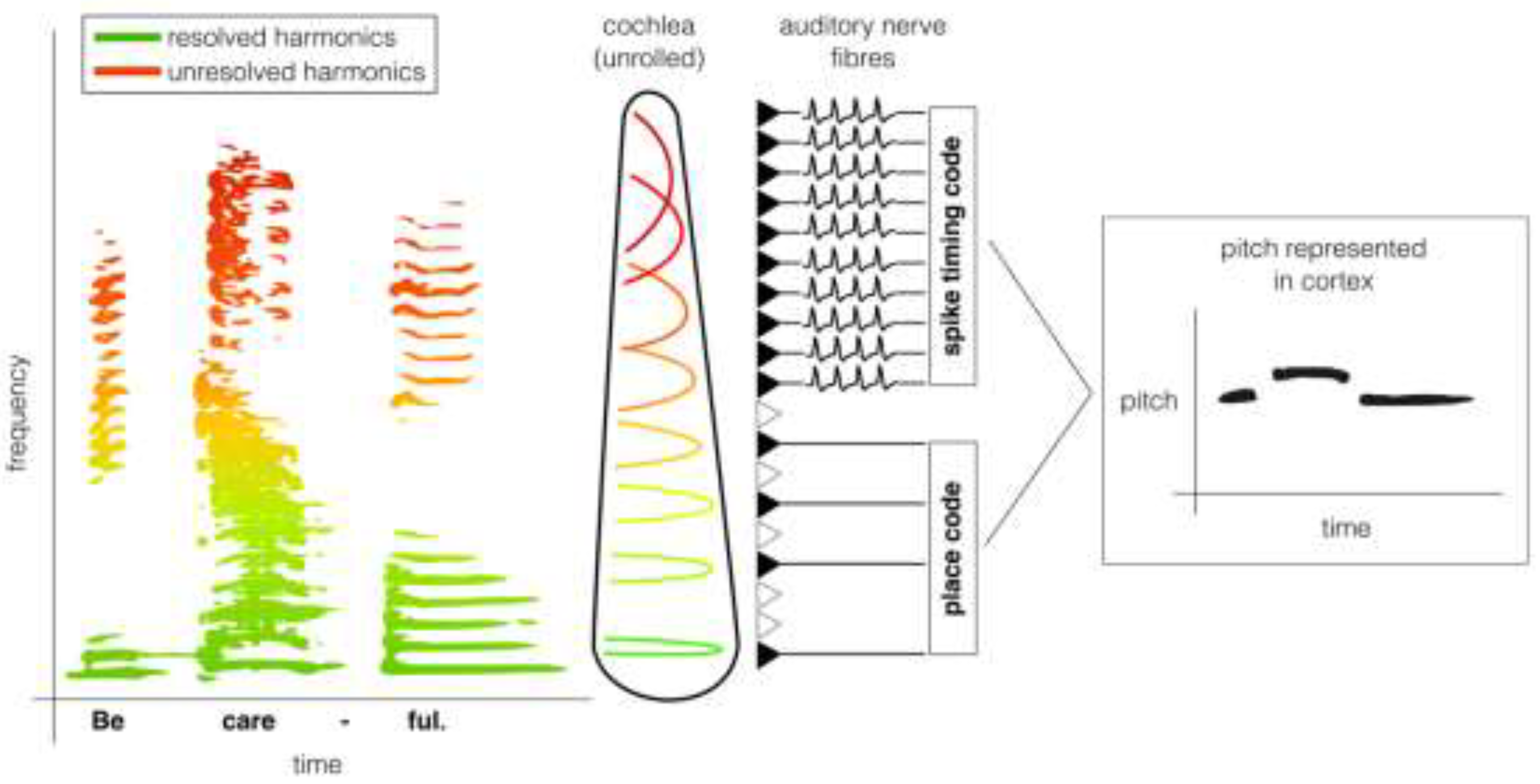

The Roles of Resolved Harmonics and Temporal Pitch Cues in Selective Listening

Neural Activity Supporting Pitch-Based Selective Listening

Does Attention Enhance the Target or Suppress the Distractor?

Conclusion

Abbreviations

| Abbreviation | Definition |
|---|---|
| EEG | electroencephalography |
| MEG | magnetoencephalography |
| fMRI | functional magnetic resonance imaging |
| ECoG | electrocorticography |
| F0 | fundamental frequency |

Author Contributions

Funding

Conflicts of Interest

References

- Bregman, A.S. Auditory Scene Analysis: The Perceptual Organization of Sound; The MIT Press, 1990; ISBN 978-0-262-26920-9. [Google Scholar]

- Micheyl, C.; Oxenham, A.J. Pitch, Harmonicity and Concurrent Sound Segregation: Psychoacoustical and Neurophysiological Findings. Hear. Res. 2010, 266, 36–51. [Google Scholar] [CrossRef]

- Shamma, S.; Elhilali, M.; Ma, L.; Micheyl, C.; Oxenham, A.J.; Pressnitzer, D.; Yin, P.; Xu, Y. Temporal Coherence and the Streaming of Complex Sounds. In Basic Aspects of Hearing; Moore, B.C.J., Patterson, R.D., Winter, I.M., Carlyon, R.P., Gockel, H.E., Eds.; Springer: New York, NY, 2013; pp. 535–543. ISBN 978-1-4614-1590-9. [Google Scholar]

- Shinn-Cunningham, B.G. Object-Based Auditory and Visual Attention. Trends Cogn. Sci. 2008, 12, 182–186. [Google Scholar] [CrossRef]

- Fritz, J.B.; Elhilali, M.; Shamma, S.A. Adaptive Changes in Cortical Receptive Fields Induced by Attention to Complex Sounds. J. Neurophysiol. 2007, 98, 2337–2346. [Google Scholar] [CrossRef]

- Mesgarani, N.; Chang, E.F. Selective Cortical Representation of Attended Speaker in Multi-Talker Speech Perception. Nature 2012, 485, 233–236. [Google Scholar] [CrossRef] [PubMed]

- Grossberg, S. Pitch-Based Streaming in Auditory Perception. In Musical Networks: Parallel Distributed Perception and Performance; MIT Press: Cambridge, MA, 1996; pp. 117–140. [Google Scholar]

- ANSI-S1.1; Acoustical Terminology. Acoustical Society of America, 2013.

- Brosch, M.; Selezneva, E.; Bucks, C.; Scheich, H. Macaque Monkeys Discriminate Pitch Relationships. Cognition 2004, 91, 259–272. [Google Scholar] [CrossRef] [PubMed]

- Klinge, A.; Klump, G.M. Frequency Difference Limens of Pure Tones and Harmonics within Complex Stimuli in Mongolian Gerbils and Humans. J. Acoust. Soc. Am. 2009, 125, 304–314. [Google Scholar] [CrossRef] [PubMed]

- Osmanski, M.S.; Song, X.; Wang, X. The Role of Harmonic Resolvability in Pitch Perception in a Vocal Nonhuman Primate, the Common Marmoset (Callithrix Jacchus). J. Neurosci. 2013, 33, 9161–9168. [Google Scholar] [CrossRef]

- Walker, K.M.; Gonzalez, R.; Kang, J.Z.; McDermott, J.H.; King, A.J. Across-Species Differences in Pitch Perception Are Consistent with Differences in Cochlear Filtering. eLife 2019, 8, e41626. [Google Scholar] [CrossRef]

- Walker, K.M.M.; Schnupp, J.W.H.; Hart-Schnupp, S.M.B.; King, A.J.; Bizley, J.K. Pitch Discrimination by Ferrets for Simple and Complex Sounds. J. Acoust. Soc. Am. 2009, 126, 1321–1335. [Google Scholar] [CrossRef]

- Wang, X.; Walker, K.M.M. Neural Mechanisms for the Abstraction and Use of Pitch Information in Auditory Cortex. J. Neurosci. 2012, 32, 13339–13342. [Google Scholar] [CrossRef]

- O’Sullivan, J.; Herrero, J.; Smith, E.; Schevon, C.; McKhann, G.M.; Sheth, S.A.; Mehta, A.D.; Mesgarani, N. Hierarchical Encoding of Attended Auditory Objects in Multi-Talker Speech Perception. Neuron 2019, 104, 1195–1209.e3. [Google Scholar] [CrossRef]

- Morrill, R.J.; Bigelow, J.; DeKloe, J.; Hasenstaub, A.R. Audiovisual Task Switching Rapidly Modulates Sound Encoding in Mouse Auditory Cortex. eLife 2022, 11, e75839. [Google Scholar] [CrossRef] [PubMed]

- O’Connell, M.N.; Barczak, A.; Schroeder, C.E.; Lakatos, P. Layer Specific Sharpening of Frequency Tuning by Selective Attention in Primary Auditory Cortex. J. Neurosci. 2014, 34, 16496–16508. [Google Scholar] [CrossRef]

- Carlyon, R.P.; Cusack, R.; Foxton, J.M.; Robertson, I.H. Effects of Attention and Unilateral Neglect on Auditory Stream Segregation. J. Exp. Psychol. Hum. Percept. Perform. 2001, 27, 115–127. [Google Scholar] [CrossRef] [PubMed]

- Okita, T. Selective Attention and Event-Related Potentials. Jpn. J. Physiol. Psychol. Psychophysiol. 1985, 3, 11–22. [Google Scholar] [CrossRef]

- Shackleton, T.M.; Carlyon, R.P. The Role of Resolved and Unresolved Harmonics in Pitch Perception and Frequency Modulation Discrimination. J. Acoust. Soc. Am. 1994, 95, 3529–3540. [Google Scholar] [CrossRef]

- Eliades, S.J.; Wang, X. Neural Substrates of Vocalization Feedback Monitoring in Primate Auditory Cortex. Nature 2008, 453, 1102–1106. [Google Scholar] [CrossRef] [PubMed]

- Schneider, D.M.; Woolley, S.M.N. Sparse and Background-Invariant Coding of Vocalizations in Auditory Scenes. Neuron 2013, 79, 141–152. [Google Scholar] [CrossRef]

- Shamma, S.A.; Elhilali, M.; Micheyl, C. Temporal Coherence and Attention in Auditory Scene Analysis. Trends Neurosci. 2011, 34, 114–123. [Google Scholar] [CrossRef]

- Zion Golumbic, E.M.; Ding, N.; Bickel, S.; Lakatos, P.; Schevon, C.A.; McKhann, G.M.; Goodman, R.R.; Emerson, R.; Mehta, A.D.; Simon, J.Z.; et al. Mechanisms Underlying Selective Neuronal Tracking of Attended Speech at a “Cocktail Party”. Neuron 2013, 77, 980–991. [Google Scholar] [CrossRef]

- Gatehouse, S.; Noble, W. The Speech, Spatial and Qualities of Hearing Scale (SSQ). Int. J. Audiol. 2004, 43, 85–99. [Google Scholar] [CrossRef] [PubMed]

- Parthasarathy, A.; Hancock, K.E.; Bennett, K.; DeGruttola, V.; Polley, D.B. Bottom-up and Top-down Neural Signatures of Disordered Multi-Talker Speech Perception in Adults with Normal Hearing. eLife 2020, 9, e51419. [Google Scholar] [CrossRef] [PubMed]

- Brokx, J.P.L.; Nooteboom, S.G. Intonation and the Perceptual Separation of Simultaneous Voices. J. Phon. 1982, 10, 23–36. [Google Scholar] [CrossRef]

- Brungart, D.S.; Simpson, B.D.; Ericson, M.A.; Scott, K.R. Informational and Energetic Masking Effects in the Perception of Multiple Simultaneous Talkers. J. Acoust. Soc. Am. 2001, 110, 2527–2538. [Google Scholar] [CrossRef]

- Assmann, P.F.; Summerfield, Q. The Contribution of Waveform Interactions to the Perception of Concurrent Vowels. J. Acoust. Soc. Am. 1994, 95, 471–484. [Google Scholar] [CrossRef]

- Assmann, P.F.; Summerfield, Q. Modeling the Perception of Concurrent Vowels: Vowels with Different Fundamental Frequencies. J. Acoust. Soc. Am. 1990, 88, 680–697. [Google Scholar] [CrossRef]

- Culling, J.F.; Darwin, C.J. Perceptual Separation of Simultaneous Vowels: Within and Across-formant Grouping by F0. J. Acoust. Soc. Am. 1993, 93, 3454–3467. [Google Scholar] [CrossRef] [PubMed]

- Zwicker, U.T. Auditory Recognition of Diotic and Dichotic Vowel Pairs. Speech Commun. 1984, 3, 265–277. [Google Scholar] [CrossRef]

- Hartmann, W.M.; McAdams, S.; Smith, B.K. Hearing a Mistuned Harmonic in an Otherwise Periodic Complex Tone. J. Acoust. Soc. Am. 1990, 88, 1712–1724. [Google Scholar] [CrossRef]

- Moore, B.C.J.; Glasberg, B.R.; Peters, R.W. Thresholds for Hearing Mistuned Partials as Separate Tones in Harmonic Complexes. J. Acoust. Soc. Am. 1986, 80, 479–483. [Google Scholar] [CrossRef] [PubMed]

- Moore, B.C.J.; Peters, R.W.; Glasberg, B.R. Thresholds for the Detection of Inharmonicity in Complex Tones. J. Acoust. Soc. Am. 1985, 77, 1861–1867. [Google Scholar] [CrossRef]

- Roberts, B.; Brunstrom, J.M. Perceptual Segregation and Pitch Shifts of Mistuned Components in Harmonic Complexes and in Regular Inharmonic Complexes. J. Acoust. Soc. Am. 1998, 104, 2326–2338. [Google Scholar] [CrossRef]

- McPherson, M.J.; Grace, R.C.; McDermott, J.H. Harmonicity Aids Hearing in Noise. Atten. Percept. Psychophys. 2022, 84, 1016–1042. [Google Scholar] [CrossRef] [PubMed]

- Popham, S.; Boebinger, D.; Ellis, D.P.W.; Kawahara, H.; McDermott, J.H. Inharmonic Speech Reveals the Role of Harmonicity in the Cocktail Party Problem. Nat. Commun. 2018, 9, 2122. [Google Scholar] [CrossRef] [PubMed]

- Roberts, B.; Brunstrom, J.M. Perceptual Fusion and Fragmentation of Complex Tones Made Inharmonic by Applying Different Degrees of Frequency Shift and Spectral Stretch. J. Acoust. Soc. Am. 2001, 110, 2479–2490. [Google Scholar] [CrossRef] [PubMed]

- McPherson, M.J.; McDermott, J.H. Diversity in Pitch Perception Revealed by Task Dependence. Nat. Hum. Behav. 2017, 2, 52–66. [Google Scholar] [CrossRef]

- Bregman, A.S.; Liao, C.; Levitan, R. Auditory Grouping Based on Fundamental Frequency and Formant Peak Frequency. Can. J. Psychol. 1990, 44, 400–413. [Google Scholar] [CrossRef]

- Singh, P.G. Perceptual Organization of Complex-tone Sequences: A Tradeoff between Pitch and Timbre? J. Acoust. Soc. Am. 1987, 82, 886–899. [Google Scholar] [CrossRef]

- Fishman, Y.I.; Steinschneider, M. Neural Correlates of Auditory Scene Analysis Based on Inharmonicity in Monkey Primary Auditory Cortex. J. Neurosci. 2010, 30, 12480–12494. [Google Scholar] [CrossRef]

- Homma, N.Y.; Bajo, V.M.; Happel, M.F.K.; Nodal, F.R.; King, A.J. Mistuning Detection Performance of Ferrets in a Go/No-Go Task. J. Acoust. Soc. Am. 2016, 139, EL246–EL251. [Google Scholar] [CrossRef]

- Klinge, A.; Klump, G. Mistuning Detection and Onset Asynchrony in Harmonic Complexes in Mongolian Gerbils. J. Acoust. Soc. Am. 2010, 128, 280–290. [Google Scholar] [CrossRef]

- Lohr, B.; Dooling, R.J. Detection of Changes in Timbre and Harmonicity in Complex Sounds by Zebra Finches (Taeniopygia Guttata) and Budgerigars (Melopsittacus Undulatus). J. Comp. Psychol. 1998, 112, 36–47. [Google Scholar] [CrossRef]

- Itatani, N.; Klump, G.M. Animal Models for Auditory Streaming. Philos. Trans. R. Soc. B Biol. Sci. 2017, 372, 20160112. [Google Scholar] [CrossRef]

- Dowling, W.J. The Perception of Interleaved Melodies. Cognit. Psychol. 1973, 5, 322–337. [Google Scholar] [CrossRef]

- Izumi, A. Auditory Stream Segregation in Japanese Monkeys. Cognition 2002, 82, B113–B122. [Google Scholar] [CrossRef]

- Ma, L.; Micheyl, C.; Yin, P.; Oxenham, A.J.; Shamma, S.A. Behavioral Measures of Auditory Streaming in Ferrets (Mustela Putorius). J. Comp. Psychol. 2010, 124, 317–330. [Google Scholar] [CrossRef] [PubMed]

- MacDougall-Shackleton, S.A.; Hulse, S.H.; Gentner, T.Q.; White, W. Auditory Scene Analysis by European Starlings (Sturnus Vulgaris): Perceptual Segregation of Tone Sequences. J. Acoust. Soc. Am. 1998, 103, 3581–3587. [Google Scholar] [CrossRef] [PubMed]

- Fay, R.R. Auditory Stream Segregation in Goldfish (Carassius Auratus). Hear. Res. 1998, 120, 69–76. [Google Scholar] [CrossRef]

- Geissler, D.B.; Ehret, G. Time-Critical Integration of Formants for Perception of Communication Calls in Mice. Proc. Natl. Acad. Sci. 2002, 99, 9021–9025. [Google Scholar] [CrossRef]

- Caporello Bluvas, E.; Gentner, T.Q. Attention to Natural Auditory Signals. Hear. Res. 2013, 305, 10–18. [Google Scholar] [CrossRef] [PubMed]

- Schwartz, Z.P.; David, S.V. Focal Suppression of Distractor Sounds by Selective Attention in Auditory Cortex. Cereb. Cortex 2018, 28, 323–339. [Google Scholar] [CrossRef]

- Rodgers, C.C.; DeWeese, M.R. Neural Correlates of Task Switching in Prefrontal Cortex and Primary Auditory Cortex in a Novel Stimulus Selection Task for Rodents. Neuron 2014, 82, 1157–1170. [Google Scholar] [CrossRef]

- Moore, B.C.J.; Gockel, H.E. Resolvability of Components in Complex Tones and Implications for Theories of Pitch Perception. Hear. Res. 2011, 276, 88–97. [Google Scholar] [CrossRef]

- Carlyon, R.P. Masker Asynchrony Impairs the Fundamental-Frequency Discrimination of Unresolved Harmonics. J. Acoust. Soc. Am. 1996, 99, 525–533. [Google Scholar] [CrossRef] [PubMed]

- Carlyon, R.P. Encoding the Fundamental Frequency of a Complex Tone in the Presence of a Spectrally Overlapping Masker. J. Acoust. Soc. Am. 1996, 99, 517–524. [Google Scholar] [CrossRef]

- Shofner, W.P.; Campbell, J. Pitch Strength of Noise-Vocoded Harmonic Tone Complexes in Normal-Hearing Listeners. J. Acoust. Soc. Am. 2012, 132, EL398–EL404. [Google Scholar] [CrossRef]

- Shofner, W.P.; Chaney, M. Processing Pitch in a Nonhuman Mammal (Chinchilla Laniger). J. Comp. Psychol. 2013, 127, 142–153. [Google Scholar] [CrossRef] [PubMed]

- Song, X.; Osmanski, M.S.; Guo, Y.; Wang, X. Complex Pitch Perception Mechanisms Are Shared by Humans and a New World Monkey. Proc. Natl. Acad. Sci. 2016, 113, 781–786. [Google Scholar] [CrossRef] [PubMed]

- Vliegen, J.; Moore, B.C.J.; Oxenham, A.J. The Role of Spectral and Periodicity Cues in Auditory Stream Segregation, Measured Using a Temporal Discrimination Task. J. Acoust. Soc. Am. 1999, 106, 938–945. [Google Scholar] [CrossRef]

- Vliegen, J.; Oxenham, A.J. Sequential Stream Segregation in the Absence of Spectral Cues. J. Acoust. Soc. Am. 1999, 105, 339–346. [Google Scholar] [CrossRef]

- Grimault, N.; Micheyl, C.; Carlyon, R.P.; Arthaud, P.; Collet, L. Influence of Peripheral Resolvability on the Perceptual Segregation of Harmonic Complex Tones Differing in Fundamental Frequency. J. Acoust. Soc. Am. 2000, 108, 263–271. [Google Scholar] [CrossRef]

- Madsen, S.M.K.; Dau, T.; Moore, B.C.J. Effect of Harmonic Rank on Sequential Sound Segregation. Hear. Res. 2018, 367, 161–168. [Google Scholar] [CrossRef]

- Madsen, S.M.K.; Dau, T.; Oxenham, A.J. No Interaction between Fundamental-Frequency Differences and Spectral Region When Perceiving Speech in a Speech Background. PLOS ONE 2021, 16, e0249654. [Google Scholar] [CrossRef]

- Tarka, V.M.; Gaucher, Q.; Walker, K.M.M. Pitch Selectivity in Ferret Auditory Cortex 2025.

- Fishman, Y.I.; Micheyl, C.; Steinschneider, M. Neural Representation of Harmonic Complex Tones in Primary Auditory Cortex of the Awake Monkey. J. Neurosci. 2013, 33, 10312–10323. [Google Scholar] [CrossRef] [PubMed]

- Itatani, N.; Klump, G.M. Neural Correlates of Auditory Streaming of Harmonic Complex Sounds With Different Phase Relations in the Songbird Forebrain. J. Neurophysiol. 2011, 105, 188–199. [Google Scholar] [CrossRef] [PubMed]

- Knyazeva, S.; Selezneva, E.; Gorkin, A.; Aggelopoulos, N.C.; Brosch, M. Neuronal Correlates of Auditory Streaming in Monkey Auditory Cortex for Tone Sequences without Spectral Differences. Front. Integr. Neurosci. 2018, 12. [Google Scholar] [CrossRef]

- Dolležal, L.-V.; Itatani, N.; Günther, S.; Klump, G.M. Auditory Streaming by Phase Relations between Components of Harmonic Complexes: A Comparative Study of Human Subjects and Bird Forebrain Neurons. Behav. Neurosci. 2012, 126, 797–808. [Google Scholar] [CrossRef] [PubMed]

- Roberts, B.; Glasberg, B.R.; Moore, B.C.J. Primitive Stream Segregation of Tone Sequences without Differences in Fundamental Frequency or Passband. J. Acoust. Soc. Am. 2002, 112, 2074–2085. [Google Scholar] [CrossRef]

- Oxenham, A.J. Questions and Controversies Surrounding the Perception and Neural Coding of Pitch. Front. Neurosci. 2023, 16, 1074752. [Google Scholar] [CrossRef]

- Alain, C.; Reinke, K.; He, Y.; Wang, C.; Lobaugh, N. Hearing Two Things at Once: Neurophysiological Indices of Speech Segregation and Identification. J. Cogn. Neurosci. 2005, 17, 811–818. [Google Scholar] [CrossRef]

- Alain, C.; Arnott, S.R.; Picton, T.W. Bottom–up and Top–down Influences on Auditory Scene Analysis: Evidence from Event-Related Brain Potentials. J. Exp. Psychol. Hum. Percept. Perform. 2001, 27, 1072–1089. [Google Scholar] [CrossRef]

- Hautus, M.J.; Johnson, B.W. Object-Related Brain Potentials Associated with the Perceptual Segregation of a Dichotically Embedded Pitch. J. Acoust. Soc. Am. 2005, 117, 275–280. [Google Scholar] [CrossRef] [PubMed]

- Alain, C.; Reinke, K.; McDonald, K.L.; Chau, W.; Tam, F.; Pacurar, A.; Graham, S. Left Thalamo-Cortical Network Implicated in Successful Speech Separation and Identification. NeuroImage 2005, 26, 592–599. [Google Scholar] [CrossRef]

- Hill, K.T.; Miller, L.M. Auditory Attentional Control and Selection during Cocktail Party Listening. Cereb. Cortex 2010, 20, 583–590. [Google Scholar] [CrossRef]

- Horton, C.; Srinivasan, R.; D’Zmura, M. Envelope Responses in Single-Trial EEG Indicate Attended Speaker in a ‘Cocktail Party’. J. Neural Eng. 2014, 11, 046015. [Google Scholar] [CrossRef] [PubMed]

- Jaeger, M.; Mirkovic, B.; Bleichner, M.G.; Debener, S. Decoding the Attended Speaker From EEG Using Adaptive Evaluation Intervals Captures Fluctuations in Attentional Listening. Front. Neurosci. 2020, 14, 603. [Google Scholar] [CrossRef]

- Kerlin, J.R.; Shahin, A.J.; Miller, L.M. Attentional Gain Control of Ongoing Cortical Speech Representations in a “Cocktail Party”. J. Neurosci. 2010, 30, 620–628. [Google Scholar] [CrossRef] [PubMed]

- O’Sullivan, J.A.; Power, A.J.; Mesgarani, N.; Rajaram, S.; Foxe, J.J.; Shinn-Cunningham, B.G.; Slaney, M.; Shamma, S.A.; Lalor, E.C. Attentional Selection in a Cocktail Party Environment Can Be Decoded from Single-Trial EEG. Cereb. Cortex 2015, 25, 1697–1706. [Google Scholar] [CrossRef]

- Power, A.J.; Foxe, J.J.; Forde, E.; Reilly, R.B.; Lalor, E.C. At What Time Is the Cocktail Party? A Late Locus of Selective Attention to Natural Speech. Eur. J. Neurosci. 2012, 35, 1497–1503. [Google Scholar] [CrossRef]

- Ding, N.; Simon, J.Z. Emergence of Neural Encoding of Auditory Objects While Listening to Competing Speakers. Proc. Natl. Acad. Sci. 2012, 109, 11854–11859. [Google Scholar] [CrossRef]

- Kong, Y.-Y.; Mullangi, A.; Ding, N. Differential Modulation of Auditory Responses to Attended and Unattended Speech in Different Listening Conditions. Hear. Res. 2014, 316, 73–81. [Google Scholar] [CrossRef]

- Pasley, B.N.; David, S.V.; Mesgarani, N.; Flinker, A.; Shamma, S.A.; Crone, N.E.; Knight, R.T.; Chang, E.F. Reconstructing Speech from Human Auditory Cortex. PLoS Biol. 2012, 10, e1001251. [Google Scholar] [CrossRef] [PubMed]

- Snyder, J.S.; Alain, C.; Picton, T.W. Effects of Attention on Neuroelectric Correlates of Auditory Stream Segregation. J. Cogn. Neurosci. 2006, 18, 1–13. [Google Scholar] [CrossRef]

- Sussman, E.; Ritter, W.; Vaughan, H.G. An Investigation of the Auditory Streaming Effect Using Event-Related Brain Potentials. Psychophysiology 1999, 36, 22–34. [Google Scholar] [CrossRef] [PubMed]

- Wilson, E.C.; Melcher, J.R.; Micheyl, C.; Gutschalk, A.; Oxenham, A.J. Cortical fMRI Activation to Sequences of Tones Alternating in Frequency: Relationship to Perceived Rate and Streaming. J. Neurophysiol. 2007, 97, 2230–2238. [Google Scholar] [CrossRef] [PubMed]

- Cusack, R. The Intraparietal Sulcus and Perceptual Organization. J. Cogn. Neurosci. 2005, 17, 641–651. [Google Scholar] [CrossRef]

- Gutschalk, A.; Oxenham, A.J.; Micheyl, C.; Wilson, E.C.; Melcher, J.R. Human Cortical Activity during Streaming without Spectral Cues Suggests a General Neural Substrate for Auditory Stream Segregation. J. Neurosci. 2007, 27, 13074–13081. [Google Scholar] [CrossRef]

- Larsen, E.; Cedolin, L.; Delgutte, B. Pitch Representations in the Auditory Nerve: Two Concurrent Complex Tones. J. Neurophysiol. 2008, 100, 1301–1319. [Google Scholar] [CrossRef]

- Palmer, A.R. Segregation of the Responses to Paired Vowels in the Auditory Nerve of the Guinea-Pig Using Autocorrelation. In The Auditory Processing of Speech; Schouten, M.E., Ed.; De Gruyter Mouton, 1992; pp. 115–124. ISBN 978-3-11-013589-3. [Google Scholar]

- Sinex, D.G.; Guzik, H.; Li, H.; Henderson Sabes, J. Responses of Auditory Nerve Fibers to Harmonic and Mistuned Complex Tones. Hear. Res. 2003, 182, 130–139. [Google Scholar] [CrossRef]

- Tramo, M.J.; Cariani, P.A.; Delgutte, B.; Braida, L.D. Neurobiological Foundations for the Theory of Harmony in Western Tonal Music. Ann. N. Y. Acad. Sci. 2001, 930, 92–116. [Google Scholar] [CrossRef]

- Keilson, S.E.; Richards, V.M.; Wyman, B.T.; Young, E.D. The Representation of Concurrent Vowels in the Cat Anesthetized Ventral Cochlear Nucleus: Evidence for a Periodicity-Tagged Spectral Representation. J. Acoust. Soc. Am. 1997, 102, 1056–1071. [Google Scholar] [CrossRef] [PubMed]

- Fishman, Y.I.; Micheyl, C.; Steinschneider, M. Neural Representation of Concurrent Vowels in Macaque Primary Auditory Cortex. eNeuro 2016, 3, ENEURO.0071-16.2016. [Google Scholar] [CrossRef] [PubMed]

- Bar-Yosef, O.; Nelken, I. The Effects of Background Noise on the Neural Responses to Natural Sounds in Cat Primary Auditory Cortex. Front. Comput. Neurosci. 2007, 1. [Google Scholar] [CrossRef]

- Hamersky, G.R.; Shaheen, L.A.; Espejo, M.L.; Wingert, J.C.; David, S.V. Reduced Neural Responses to Natural Foreground versus Background Sounds in the Auditory Cortex. J. Neurosci. 2025, 45, e0121242024. [Google Scholar] [CrossRef] [PubMed]

- Mesgarani, N.; David, S.V.; Fritz, J.B.; Shamma, S.A. Mechanisms of Noise Robust Representation of Speech in Primary Auditory Cortex. Proc. Natl. Acad. Sci. 2014, 111, 6792–6797. [Google Scholar] [CrossRef]

- Moore, R.C.; Lee, T.; Theunissen, F.E. Noise-Invariant Neurons in the Avian Auditory Cortex: Hearing the Song in Noise. PLOS Comput. Biol. 2013, 9, e1002942. [Google Scholar] [CrossRef]

- Rabinowitz, N.C.; Willmore, B.D.B.; King, A.J.; Schnupp, J.W.H. Constructing Noise-Invariant Representations of Sound in the Auditory Pathway. PLoS Biol. 2013, 11, e1001710. [Google Scholar] [CrossRef]

- Saderi, D.; Buran, B.N.; David, S.V. Streaming of Repeated Noise in Primary and Secondary Fields of Auditory Cortex. J. Neurosci. 2020, 40, 3783–3798. [Google Scholar] [CrossRef]

- Souffi, S.; Lorenzi, C.; Varnet, L.; Huetz, C.; Edeline, J.-M. Noise-Sensitive But More Precise Subcortical Representations Coexist with Robust Cortical Encoding of Natural Vocalizations. J. Neurosci. 2020, 40, 5228–5246. [Google Scholar] [CrossRef]

- Fishman, Y.I.; Arezzo, J.C.; Steinschneider, M. Auditory Stream Segregation in Monkey Auditory Cortex: Effects of Frequency Separation, Presentation Rate, and Tone Duration. J. Acoust. Soc. Am. 2004, 116, 1656–1670. [Google Scholar] [CrossRef]

- Fishman, Y.I.; Reser, D.H.; Arezzo, J.C.; Steinschneider, M. Neural Correlates of Auditory Stream Segregation in Primary Auditory Cortex of the Awake Monkey. Hear. Res. 2001, 151, 167–187. [Google Scholar] [CrossRef] [PubMed]

- Micheyl, C.; Tian, B.; Carlyon, R.P.; Rauschecker, J.P. Perceptual Organization of Tone Sequences in the Auditory Cortex of Awake Macaques. Neuron 2005, 48, 139–148. [Google Scholar] [CrossRef] [PubMed]

- Elhilali, M.; Ma, L.; Micheyl, C.; Oxenham, A.J.; Shamma, S.A. Temporal Coherence in the Perceptual Organization and Cortical Representation of Auditory Scenes. Neuron 2009, 61, 317–329. [Google Scholar] [CrossRef]

- Kanwal, J.S.; Medvedev, A.V.; Micheyl, C. Neurodynamics for Auditory Stream Segregation: Tracking Sounds in the Mustached Bat’s Natural Environment. Netw. Comput. Neural Syst. 2003, 14, 413–435. [Google Scholar] [CrossRef]

- Noda, T.; Kanzaki, R.; Takahashi, H. Stimulus Phase Locking of Cortical Oscillation for Auditory Stream Segregation in Rats. PLoS ONE 2013, 8, e83544. [Google Scholar] [CrossRef]

- Pressnitzer, D.; Sayles, M.; Micheyl, C.; Winter, I.M. Perceptual Organization of Sound Begins in the Auditory Periphery. Curr. Biol. 2008, 18, 1124–1128. [Google Scholar] [CrossRef]

- Bee, M.A.; Klump, G.M. Primitive Auditory Stream Segregation: A Neurophysiological Study in the Songbird Forebrain. J. Neurophysiol. 2004, 92, 1088–1104. [Google Scholar] [CrossRef]

- Allen, E.J.; Burton, P.C.; Olman, C.A.; Oxenham, A.J. Representations of Pitch and Timbre Variation in Human Auditory Cortex. J. Neurosci. 2017, 37, 1284–1293. [Google Scholar] [CrossRef]

- Bizley, J.K.; Walker, K.M.M.; King, A.J.; Schnupp, J.W.H. Neural Ensemble Codes for Stimulus Periodicity in Auditory Cortex. J. Neurosci. 2010, 30, 5078–5091. [Google Scholar] [CrossRef]

- Bizley, J.K.; Walker, K.M.M.; Silverman, B.W.; King, A.J.; Schnupp, J.W.H. Interdependent Encoding of Pitch, Timbre, and Spatial Location in Auditory Cortex. J. Neurosci. 2009, 29, 2064–2075. [Google Scholar] [CrossRef]

- Gander, P.E.; Kumar, S.; Sedley, W.; Nourski, K.V.; Oya, H.; Kovach, C.K.; Kawasaki, H.; Kikuchi, Y.; Patterson, R.D.; Howard, M.A.; et al. Direct Electrophysiological Mapping of Human Pitch-Related Processing in Auditory Cortex. NeuroImage 2019, 202, 116076. [Google Scholar] [CrossRef]

- Griffiths, T.D.; Kumar, S.; Sedley, W.; Nourski, K.V.; Kawasaki, H.; Oya, H.; Patterson, R.D.; Brugge, J.F.; Howard, M.A. Direct Recordings of Pitch Responses from Human Auditory Cortex. Curr. Biol. 2010, 20, 1128–1132. [Google Scholar] [CrossRef]

- Steinschneider, M.; Reser, D.H.; Fishman, Y.I.; Schroeder, C.E.; Arezzo, J.C. Click Train Encoding in Primary Auditory Cortex of the Awake Monkey: Evidence for Two Mechanisms Subserving Pitch Perception. J. Acoust. Soc. Am. 1998, 104, 2935–2955. [Google Scholar] [CrossRef] [PubMed]

- de Cheveigné, A. Concurrent Vowel Identification. III. A Neural Model of Harmonic Interference Cancellation. J. Acoust. Soc. Am. 1997, 101, 2857–2865. [Google Scholar] [CrossRef]

- de Cheveigné, A.; McAdams, S.; Marin, C.M.H. Concurrent Vowel Identification. II. Effects of Phase, Harmonicity, and Task. J. Acoust. Soc. Am. 1997, 101, 2848–2856. [Google Scholar] [CrossRef]

- de Cheveigné, A.; McAdams, S.; Laroche, J.; Rosenberg, M. Identification of Concurrent Harmonic and Inharmonic Vowels: A Test of the Theory of Harmonic Cancellation and Enhancement. J. Acoust. Soc. Am. 1995, 97, 3736–3748. [Google Scholar] [CrossRef] [PubMed]

- de Cheveigné, A. Harmonic Cancellation—A Fundamental of Auditory Scene Analysis. Trends Hear. 2021, 25. [Google Scholar] [CrossRef]

- Steinmetzger, K.; Rosen, S. No Evidence for a Benefit from Masker Harmonicity in the Perception of Speech in Noise. J. Acoust. Soc. Am. 2023, 153, 1064–1072. [Google Scholar] [CrossRef]

- Nocon, J.C.; Gritton, H.J.; James, N.M.; Mount, R.A.; Qu, Z.; Han, X.; Sen, K. Parvalbumin Neurons Enhance Temporal Coding and Reduce Cortical Noise in Complex Auditory Scenes. Commun. Biol. 2023, 6, 1–14. [Google Scholar] [CrossRef]

- Homma, N.Y.; Happel, M.F.K.; Nodal, F.R.; Ohl, F.W.; King, A.J.; Bajo, V.M. A Role for Auditory Corticothalamic Feedback in the Perception of Complex Sounds. J. Neurosci. 2017, 37, 6149–6161. [Google Scholar] [CrossRef]

| Paradigm / Stimulus | Species | Behavioural Findings | Neurophysiological Findings |

|---|---|---|---|

|

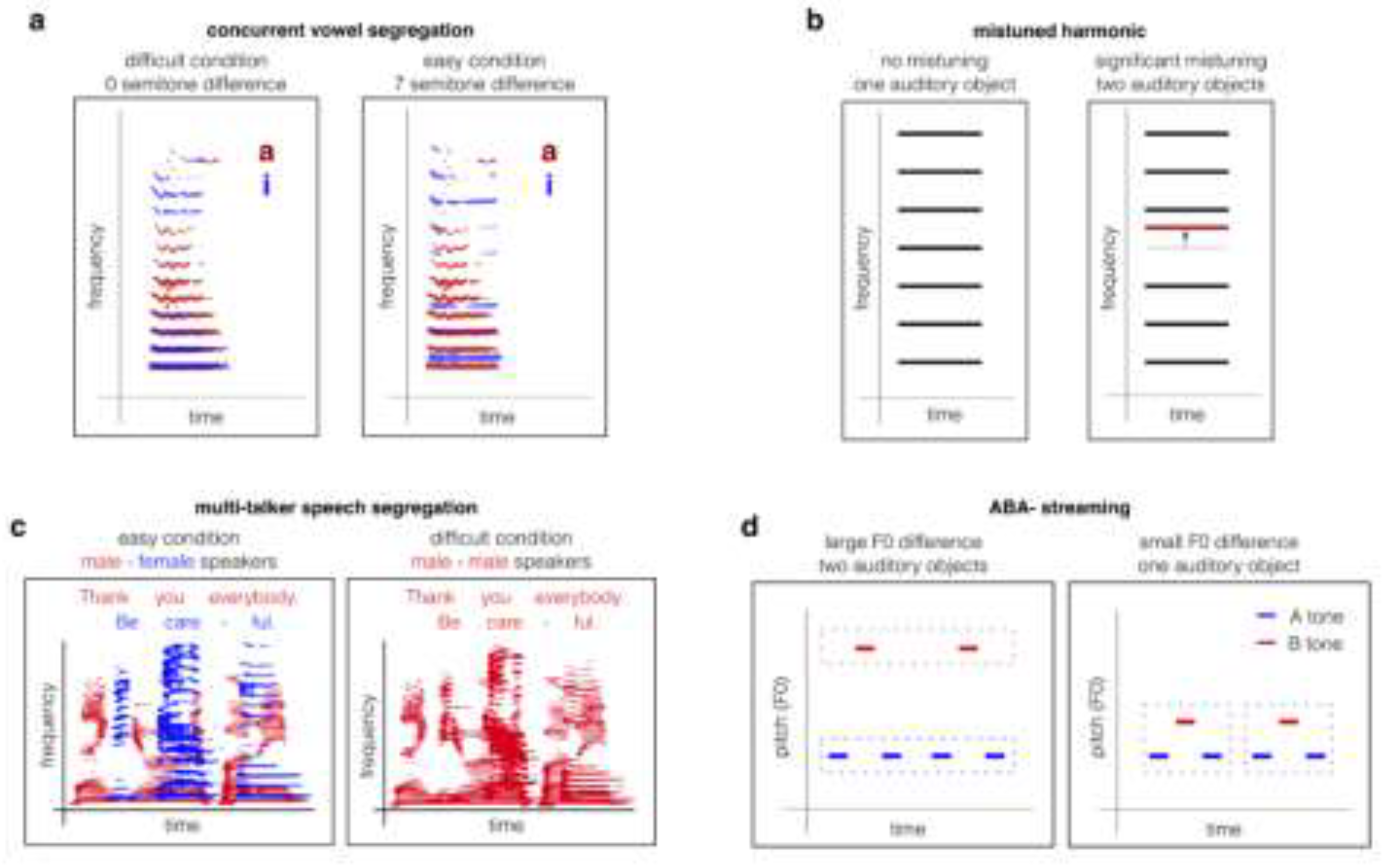

Concurrent vowels |

Human |

F0 differences improve reporting both vowels [29,30,31,32,120,122]. |

Increased activation in auditory cortex when both vowels are successfully identified [75,78]. |

| Non-human animals |

Neural encoding of both vowels in auditory nerve population using place and temporal codes. Population code for both vowels in place code in auditory cortex [98]. |

||

| Mistuned harmonics | Human |

Detection of mistuned harmonics leads to perceptual segregation of the mistuned component as a separate object [33,34,35,36]. |

EEG/MEG show distinct responses to mistuned vs. harmonic tones; fMRI implicates auditory cortex in detecting harmonic violations [76]. |

| Non-human animals |

Animals (ferrets, gerbils, birds) detect mistuned harmonics, demonstrating perceptual grouping based on harmonicity [43,44,45,46]. |

Auditory cortical and subcortical neurons differentiate harmonic from mistuned tones, reflecting harmonicity-based segregation mechanisms [43,126]. | |

|

Two-tone streaming paradigms (pure tones or harmonic complexes) |

Human |

Complex F0 or tone frequency separation drives perceptual segregation; small differences (<10%) yield fusion (“gallop”), larger separations yield two streams [1,41,42]. |

EEG shows early negativity for automatic feature binding, and later positivity (P400) with active attention. fMRI/MEG shows increased auditory cortical responses for segregated vs. fused streams [88,89,90,91,92]. |

| Non-human animals | Monkeys, ferrets, birds, and even fish segregate tone streams when large enough frequency difference. Lack of studies of complex F0 streaming [47,48,49,50]. |

Populations of auditory cortical neurons show more distinct responses to alternating tones when presented with a larger frequency difference. Similar effects for the temporal pitch differences of harmonic complexes [51,70,71,106,107,108,109,110,111,112,113]. |

|

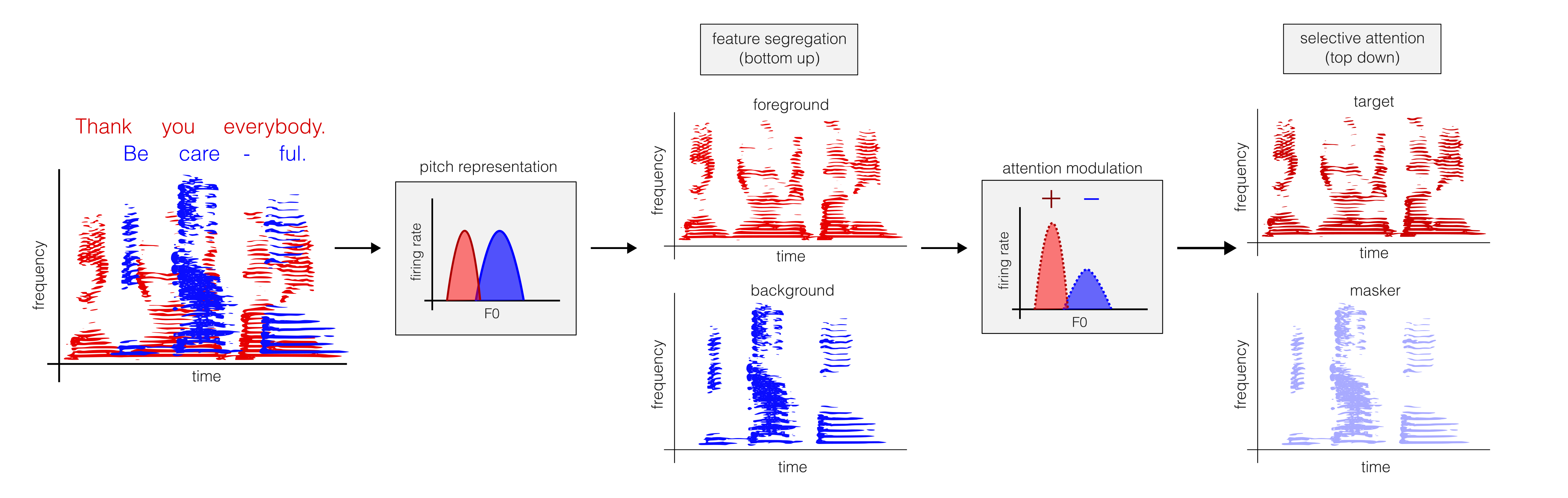

| Multi-talker speech | Human | Female/male voice differences (high/low F0, respectively) facilitate segregation and speech intelligibility [27,28,38]. |

EEG and ECoG: enhanced cortical tracking of attended speech envelope MEG/fMRI: selective enhancement of target voice in secondary auditory cortex [6,24,79,85,86,87]. |
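The mistuned-harmonic and two-tone streaming stimuli summarised in the table can be illustrated with a minimal Python sketch. It is not taken from any of the cited studies: the function names, the 200 Hz and 500 Hz base frequencies, the 4% mistuning, the tone duration, and the sample rate are all illustrative assumptions. Only the idea that separations below roughly 10% tend to be heard as a fused "gallop" comes from the table itself.

```python
import math


def harmonic_frequencies(f0_hz, n_harmonics, mistuned_rank=None, mistuning_percent=0.0):
    """Component frequencies (Hz) of a harmonic complex with fundamental f0_hz.

    If mistuned_rank is given, that single harmonic is shifted by
    mistuning_percent (hypothetical parameter, chosen for illustration).
    """
    freqs = []
    for rank in range(1, n_harmonics + 1):
        freq = rank * f0_hz
        if rank == mistuned_rank:
            freq *= 1.0 + mistuning_percent / 100.0
        freqs.append(freq)
    return freqs


def aba_sequence(f_a_hz, separation_percent, n_triplets):
    """Frequencies of a repeating A-B-A-gap sequence; None marks the silent gap."""
    f_b_hz = f_a_hz * (1.0 + separation_percent / 100.0)
    return [f_a_hz, f_b_hz, f_a_hz, None] * n_triplets


def synthesize(freqs_hz, duration_s=0.1, sample_rate=16000):
    """Sum equal-amplitude sinusoids and normalise so samples stay within [-1, 1]."""
    n_samples = round(duration_s * sample_rate)
    return [
        sum(math.sin(2.0 * math.pi * f * t / sample_rate) for f in freqs_hz) / len(freqs_hz)
        for t in range(n_samples)
    ]


# Harmonic complex at 200 Hz with the third harmonic mistuned by 4% (assumed values).
mistuned = harmonic_frequencies(200.0, 4, mistuned_rank=3, mistuning_percent=4.0)
mistuned_tone = synthesize(mistuned)

# Two ABA- sequences: a small separation (below 10%) and a larger one (assumed values).
small_separation = aba_sequence(500.0, 5.0, n_triplets=2)
large_separation = aba_sequence(500.0, 50.0, n_triplets=2)
```

Each non-silent entry of an `aba_sequence` list could be passed to `synthesize` as a single-element list to render a pure tone, or replaced by a call to `harmonic_frequencies` to build the harmonic-complex variant of the paradigm.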

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).