Submitted: 01 March 2026

Posted: 03 March 2026

Abstract

Keywords:

1. Introduction

1.1. The Economic Significance and Transition of the Chinese Housing Market

1.2. Evolution of Predictive Modeling: From Traditional Methods to Machine Learning

1.3. Research Gaps and the Potential of Stacking Fusion Frameworks

2. Materials and Methods

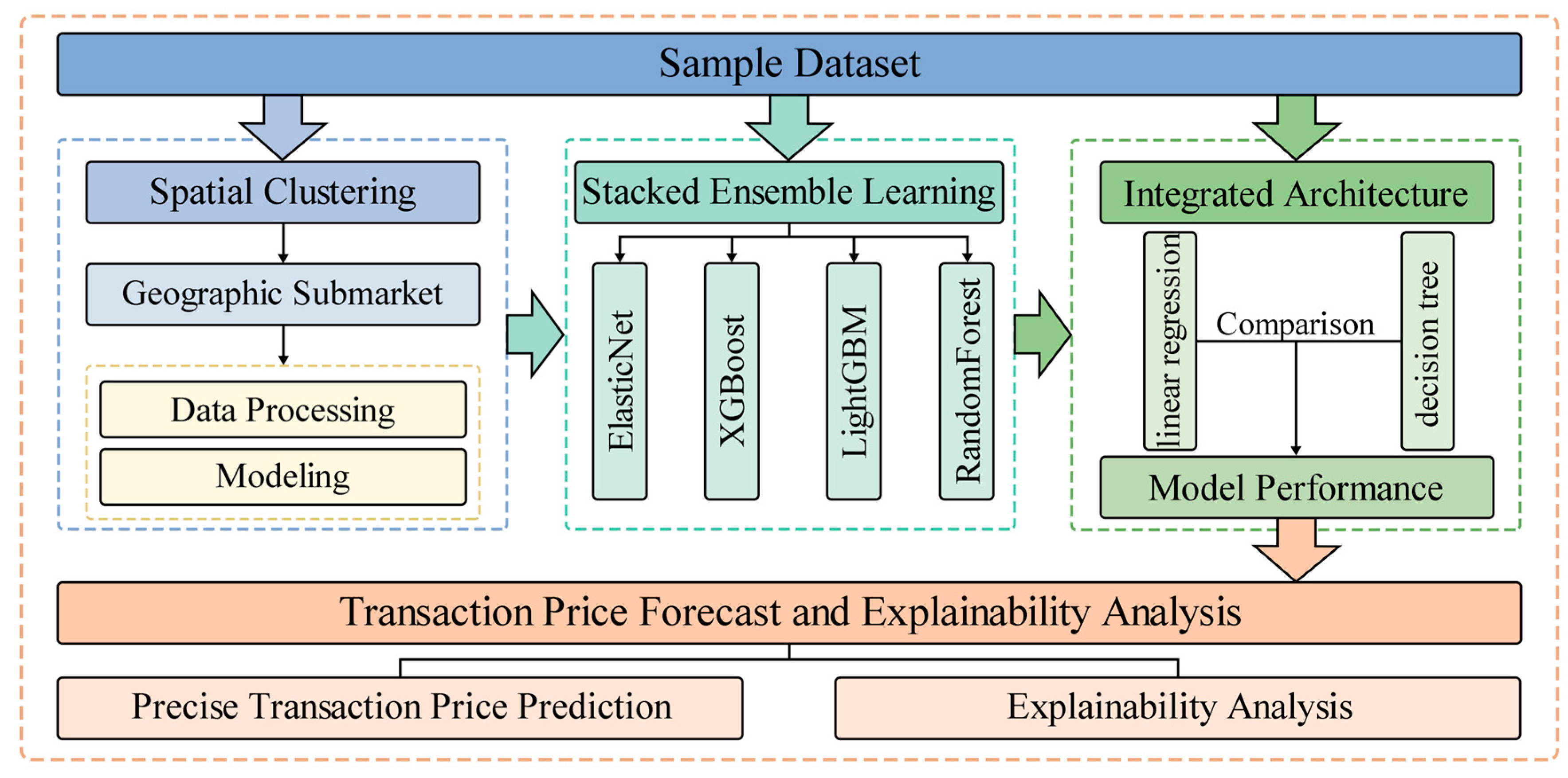

2.1. Research Objective and Overall Framework

2.2. Data Preprocessing

- Data Cleaning: Records with extreme outliers or significant missing values in core variables (e.g., price and area) were removed.

- Feature Engineering: Spatial features were derived from geographic coordinates, and continuous variables were standardized to eliminate scale effects.

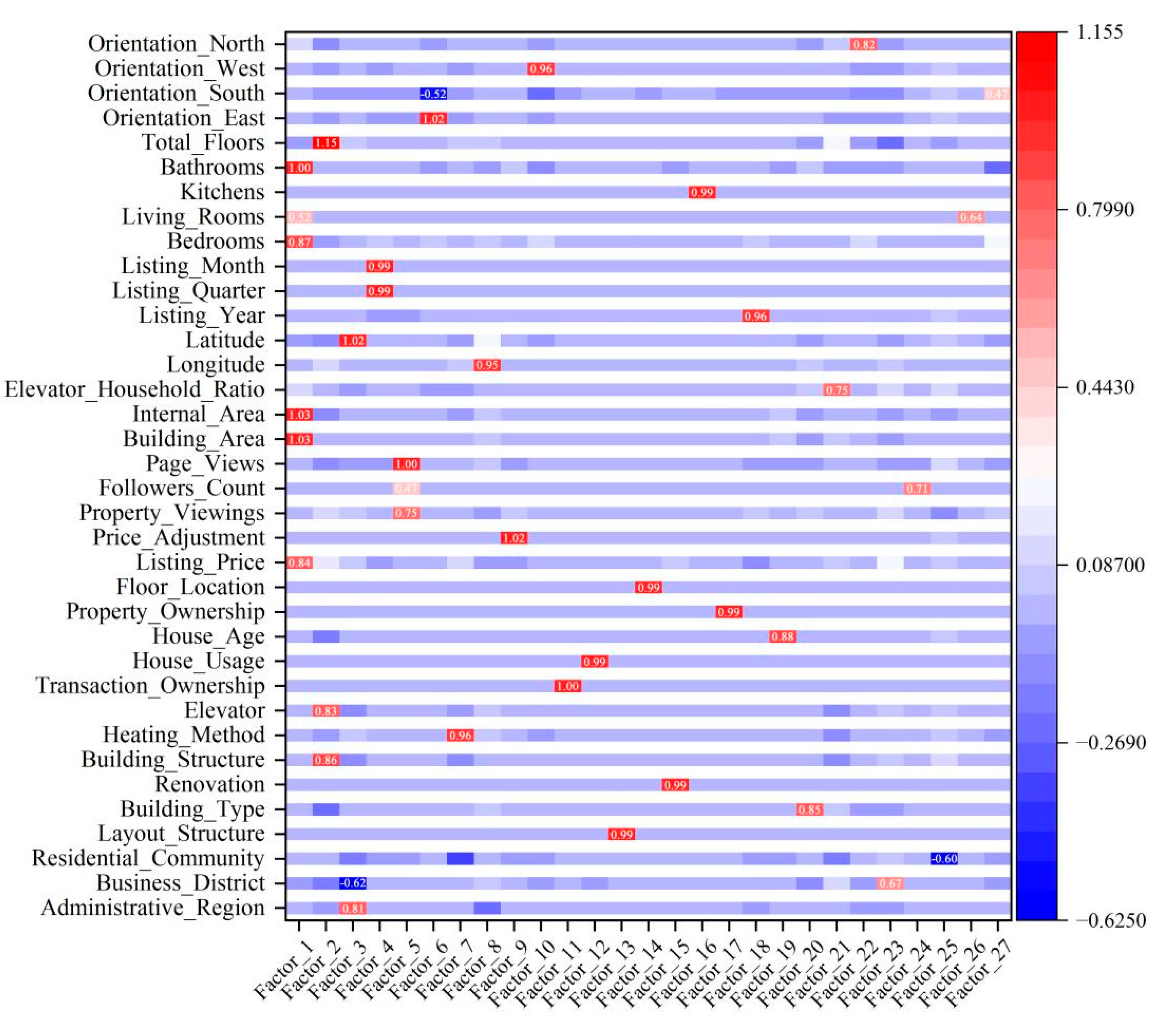

- Feature Dimensionality Reduction: Factor analysis was applied to address multicollinearity and extract interpretable common factors as key input variables for subsequent modeling.

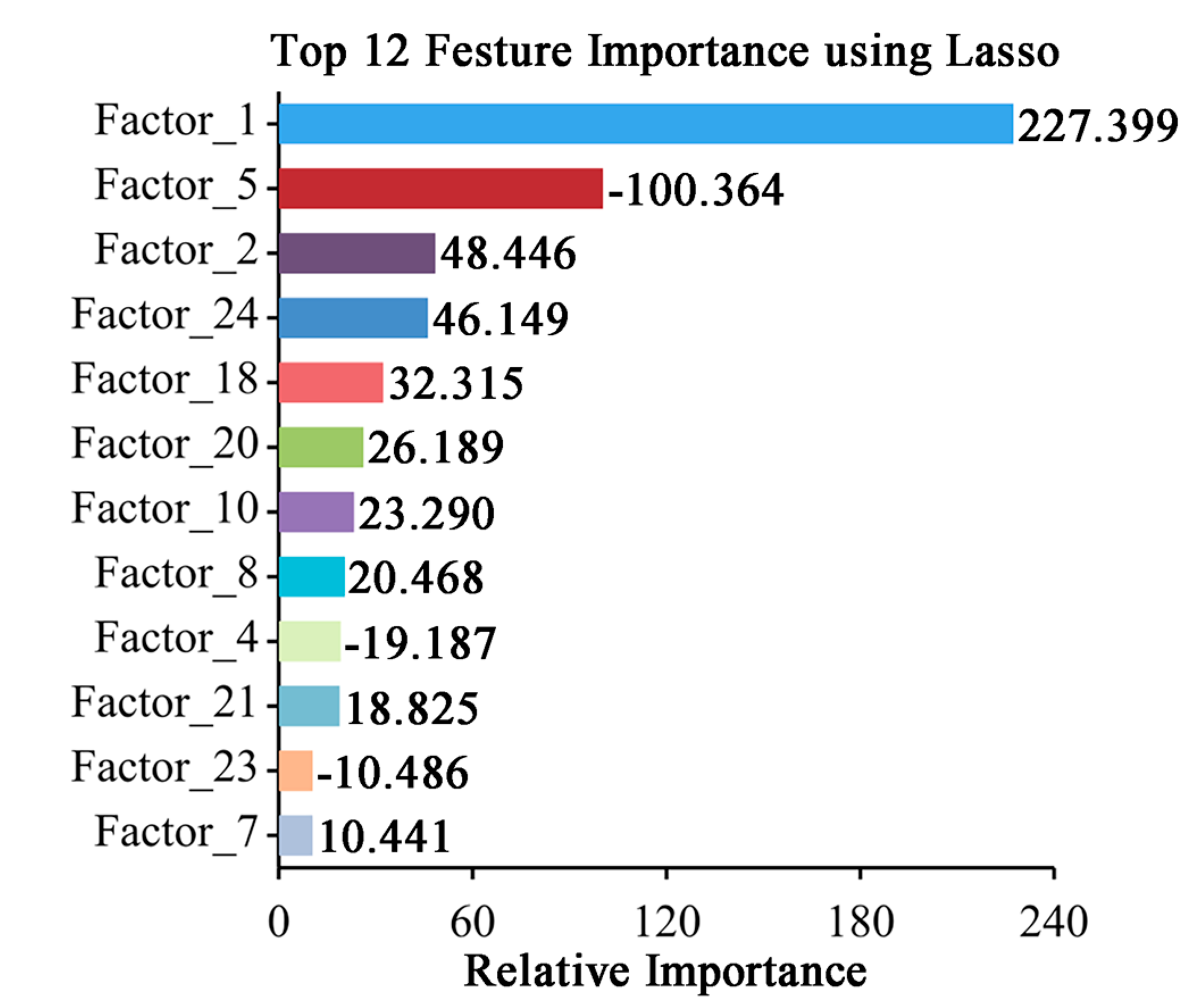

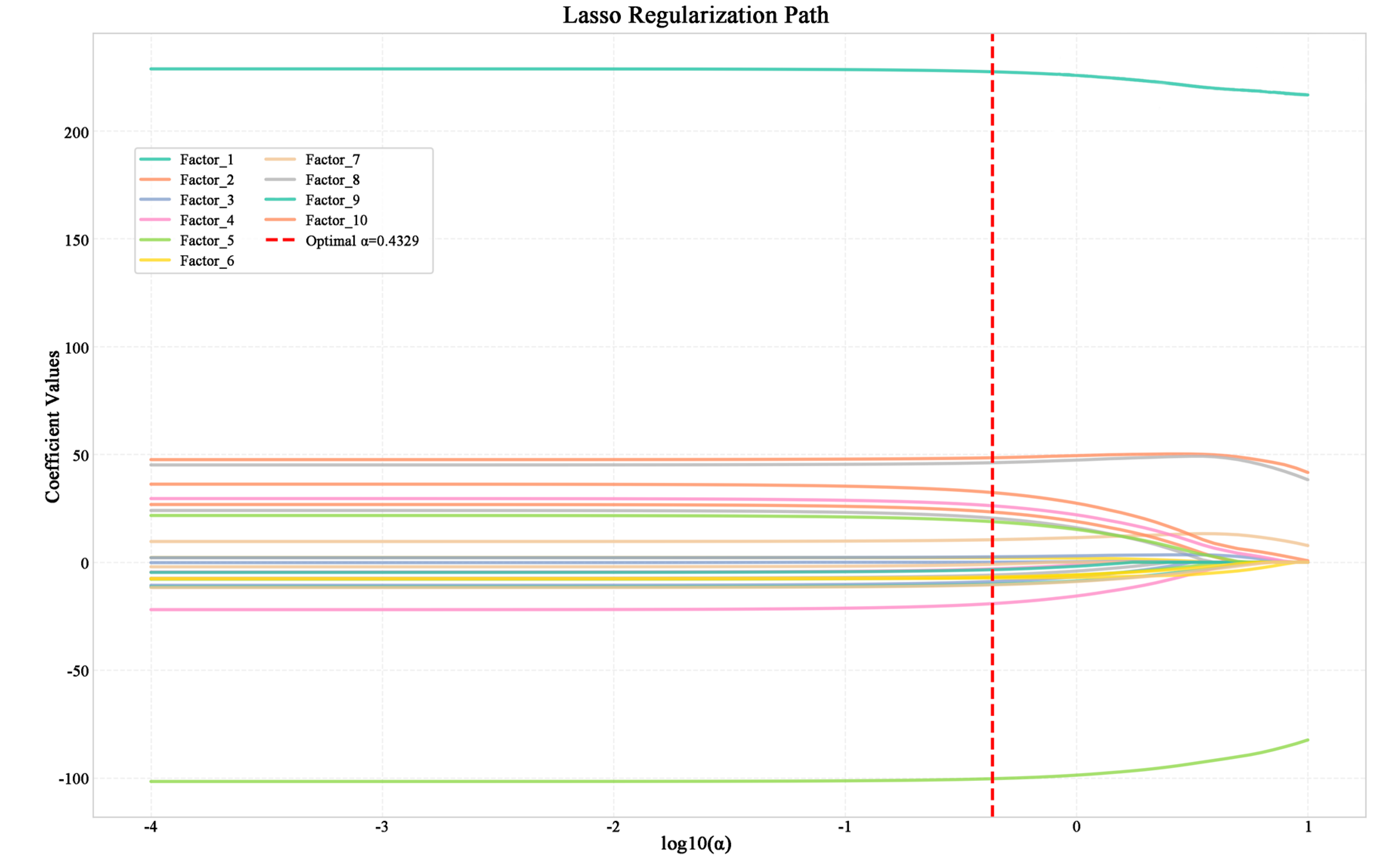
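The cleaning and standardization steps listed above can be illustrated with a minimal sketch. It assumes records are plain dictionaries with hypothetical `price` and `area` keys, and it uses a 1.5 × IQR rule for outliers, which is an assumption: the outlier criterion is not stated here. Spatial feature derivation and factor analysis are not covered by this sketch.

```python
from statistics import mean, pstdev, quantiles

def drop_incomplete(rows, core=("price", "area")):
    """Keep only rows where every core variable is present."""
    return [r for r in rows if all(r.get(k) is not None for k in core)]

def iqr_filter(rows, key, k=1.5):
    """Remove rows whose `key` lies outside [Q1 - k*IQR, Q3 + k*IQR].

    The IQR rule is an illustrative assumption, not the paper's stated rule.
    """
    q1, _, q3 = quantiles([r[key] for r in rows], n=4)
    lo, hi = q1 - k * (q3 - q1), q3 + k * (q3 - q1)
    return [r for r in rows if lo <= r[key] <= hi]

def zscore(values):
    """Standardize to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

# Hypothetical toy records; the values are made up for illustration.
rows = [
    {"price": 5.2, "area": 60.0},
    {"price": 6.1, "area": 75.0},
    {"price": 5.8, "area": 68.0},
    {"price": 6.4, "area": 82.0},
    {"price": 5.5, "area": None},   # missing core value, dropped
    {"price": 95.0, "area": 70.0},  # extreme price, filtered out
    {"price": 6.0, "area": 71.0},
    {"price": 5.9, "area": 66.0},
]

clean = iqr_filter(drop_incomplete(rows), "price")
area_z = zscore([r["area"] for r in clean])
```

After standardization, each continuous variable has mean 0 and standard deviation 1, so variables measured in different units contribute on a comparable scale to later steps.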

2.3. Lasso-Based Feature Selection

2.4. Model Construction Method

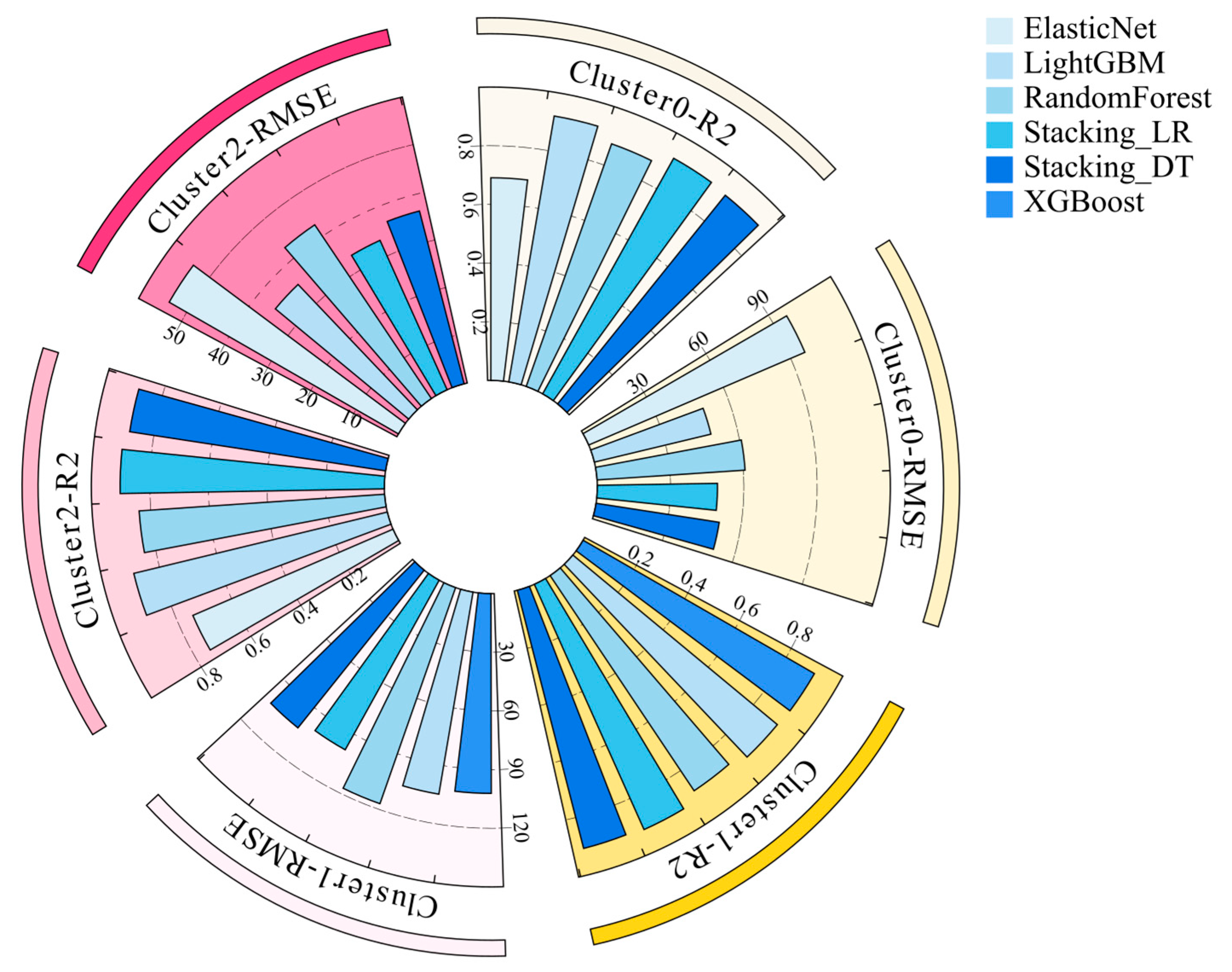

- Base Model Training: Four machine learning algorithms with different inductive biases (ElasticNet, XGBoost, LightGBM, and RandomForest) were trained within each submarket to capture the complex relationships between housing prices and the feature factors.

- Stacked Ensemble Learning: To combine the strengths of the base models and improve predictive robustness, a stacked ensemble approach was adopted. Base-model predictions on the validation sets served as meta-features and were fed into the meta-models. Linear regression (LR) and decision trees (DT) were compared as meta-models to assess whether a more complex meta-model architecture is necessary.
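The mechanics of the stacking step can be sketched without external dependencies. The base learners below are deliberately simple stand-ins (two one-feature least-squares lines and a 1-nearest-neighbour regressor), not the ElasticNet, XGBoost, LightGBM, and RandomForest models named above. The synthetic data, the fold count, and all function names are hypothetical. The sketch shows only how out-of-fold base predictions become meta-features on which a linear meta-model is fitted.

```python
import random

def fit_line(X, y, j):
    """Least-squares line on feature j; returns a predict function."""
    xs = [row[j] for row in X]
    mx, my = sum(xs) / len(xs), sum(y) / len(y)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (t - my) for x, t in zip(xs, y)) / sxx
    return lambda row: my + b * (row[j] - mx)

def fit_1nn(X, y):
    """1-nearest-neighbour regressor (squared Euclidean distance)."""
    def predict(row):
        d = [sum((a - c) ** 2 for a, c in zip(row, r)) for r in X]
        return y[d.index(min(d))]
    return predict

# Stand-in base learners (assumption: chosen only to keep the sketch small).
BASE = [lambda X, y: fit_line(X, y, 0),
        lambda X, y: fit_line(X, y, 1),
        fit_1nn]

def out_of_fold(X, y, k=5):
    """Meta-features: each row is predicted by models that never saw it."""
    n = len(X)
    Z = [[0.0] * len(BASE) for _ in range(n)]
    for f in range(k):
        test = range(f, n, k)
        held = set(test)
        Xtr = [X[i] for i in range(n) if i not in held]
        ytr = [y[i] for i in range(n) if i not in held]
        for m, fit in enumerate(BASE):
            model = fit(Xtr, ytr)
            for i in test:
                Z[i][m] = model(X[i])
    return Z

def solve(A, b):
    """Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [row[:] + [v] for row, v in zip(A, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(n):
            if r != c:
                f = M[r][c] / M[c][c]
                M[r] = [a - f * q for a, q in zip(M[r], M[c])]
    return [M[i][n] / M[i][i] for i in range(n)]

def fit_linear_meta(Z, y):
    """Linear meta-model: ordinary least squares with an intercept."""
    D = [[1.0] + z for z in Z]
    p = len(D[0])
    A = [[sum(d[i] * d[j] for d in D) for j in range(p)] for i in range(p)]
    b = [sum(d[i] * t for d, t in zip(D, y)) for i in range(p)]
    w = solve(A, b)
    return lambda z: w[0] + sum(wi * zi for wi, zi in zip(w[1:], z))

def rmse(y, yhat):
    return (sum((a - b) ** 2 for a, b in zip(y, yhat)) / len(y)) ** 0.5

random.seed(0)
X = [[random.random(), random.random()] for _ in range(200)]
y = [3 * a + 2 * b + random.gauss(0, 0.1) for a, b in X]

Z = out_of_fold(X, y)
meta = fit_linear_meta(Z, y)
# Note: this RMSE is in-sample for the meta-model; a separate held-out
# test set would be needed for an unbiased comparison.
stack_rmse = rmse(y, [meta(z) for z in Z])
base_rmse = [rmse(y, [z[m] for z in Z]) for m in range(len(BASE))]
```

Because the meta-model sees only predictions made on held-out folds, it learns how to weight the base models without rewarding any of them for memorizing the training rows.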

2.5. Model Training and Evaluation

- Adjusted R² in Equation (2): Measures the proportion of variance explained by the model while penalizing model complexity.

- Root Mean Square Error (RMSE) and Mean Absolute Error (MAE) in Equations (3) and (4): Quantify the magnitude of prediction errors in monetary terms (in units of 10,000 RMB per square meter).
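As Equations (2) to (4) are not reproduced in this section, the sketch below uses the standard textbook definitions of these metrics, which may differ in notation from the paper's own equations. The parameter `p` (number of predictors) and the toy values are assumptions for illustration.

```python
from math import sqrt

def rmse(y, yhat):
    """Root mean square error: sqrt(mean((y - yhat)^2))."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(y, yhat)) / len(y))

def mae(y, yhat):
    """Mean absolute error: mean(|y - yhat|)."""
    return sum(abs(a - b) for a, b in zip(y, yhat)) / len(y)

def r2(y, yhat):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ybar = sum(y) / len(y)
    ss_res = sum((a - b) ** 2 for a, b in zip(y, yhat))
    ss_tot = sum((a - ybar) ** 2 for a in y)
    return 1 - ss_res / ss_tot

def adjusted_r2(y, yhat, p):
    """Adjusted R^2 = 1 - (1 - R^2) * (n - 1) / (n - p - 1)."""
    n = len(y)
    return 1 - (1 - r2(y, yhat)) * (n - 1) / (n - p - 1)

# Hypothetical values, not taken from the paper's data.
y_true = [5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
y_pred = [5.5, 5.5, 7.5, 7.5, 9.5, 9.5]

scores = {
    "rmse": rmse(y_true, y_pred),
    "mae": mae(y_true, y_pred),
    "r2": r2(y_true, y_pred),
    "adj_r2": adjusted_r2(y_true, y_pred, p=2),
}
```

The adjustment factor (n − 1)/(n − p − 1) grows with the number of predictors, so adding a variable raises adjusted R² only if it reduces the residual sum of squares enough to offset the penalty.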

2.6. Interpretability Analysis

3. Results and Discussion

3.1. Data Sources and Preprocessing

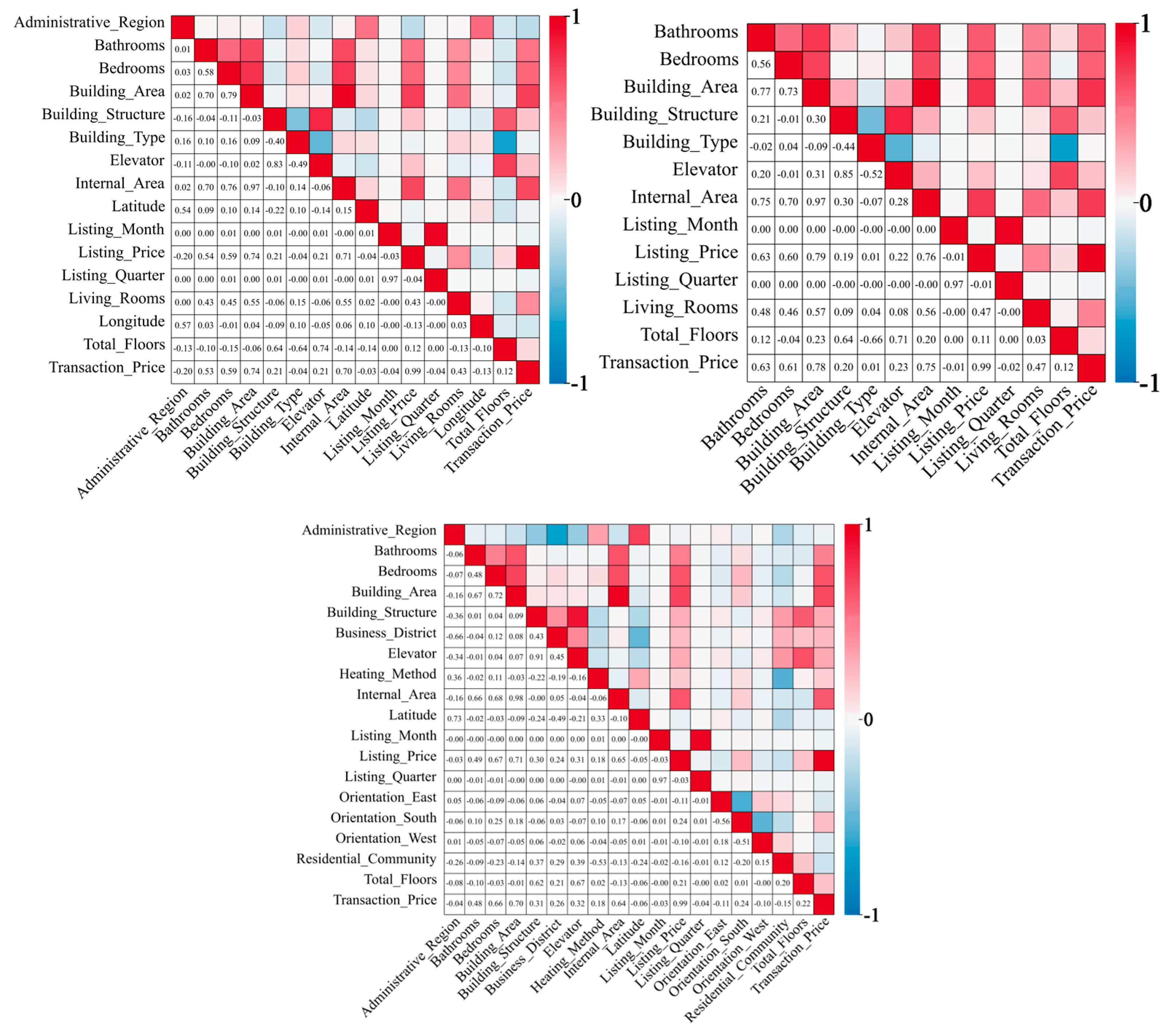

3.2. Feature Engineering

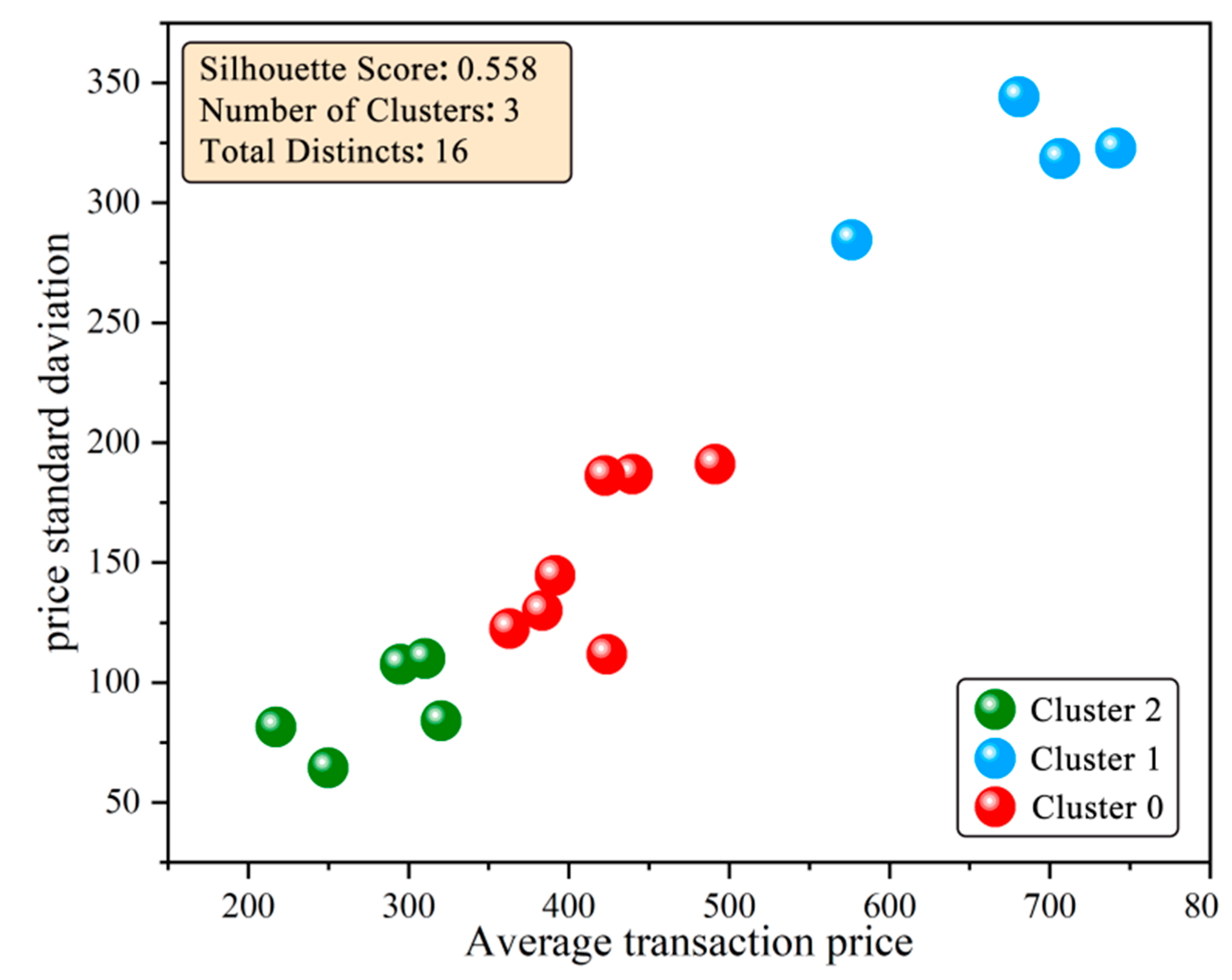

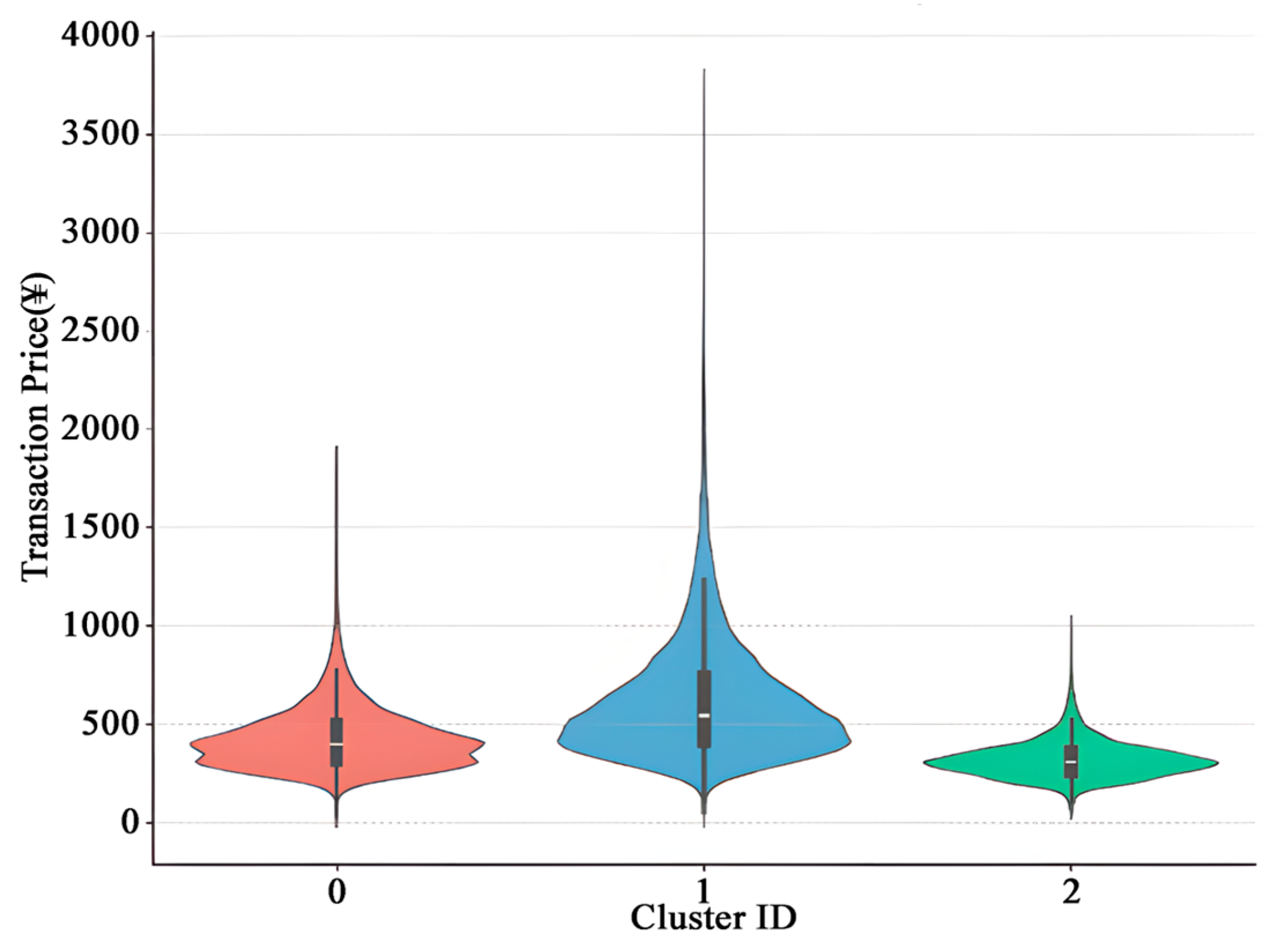

3.2.1. Spatial Clustering Analysis

3.2.2. Dimensionality Reduction

3.2.3. Variable Construction

3.2.4. Factor Analysis

3.2.5. Lasso Feature Selection

3.3. Model Performance Comparison

3.3.1. Factor Naming

- Scale Value Factor: Defined by the listing price, area, and the number of rooms, it reflects the value foundation formed by the physical size of the property.

- Architectural Feature Factor: Defined by the building type, structure, elevator availability, and floor level, it captures the hardware attributes and construction quality of the property.

- Scale-Driven Attention Factor: Defined by the viewing frequency, the number of interested buyers, and the area, it reveals how the property’s basic scale drives market attention.

- Listing-Time Periodicity Factor: Defined by the listing quarter and month, it captures seasonal patterns in market activity.

- Core-Ideal-Location Factor: Defined by the business-district grade and longitude, it identifies advantageous locations characterized by commercial-resource concentration and spatial scarcity.

3.3.2. Model Performance Comparison

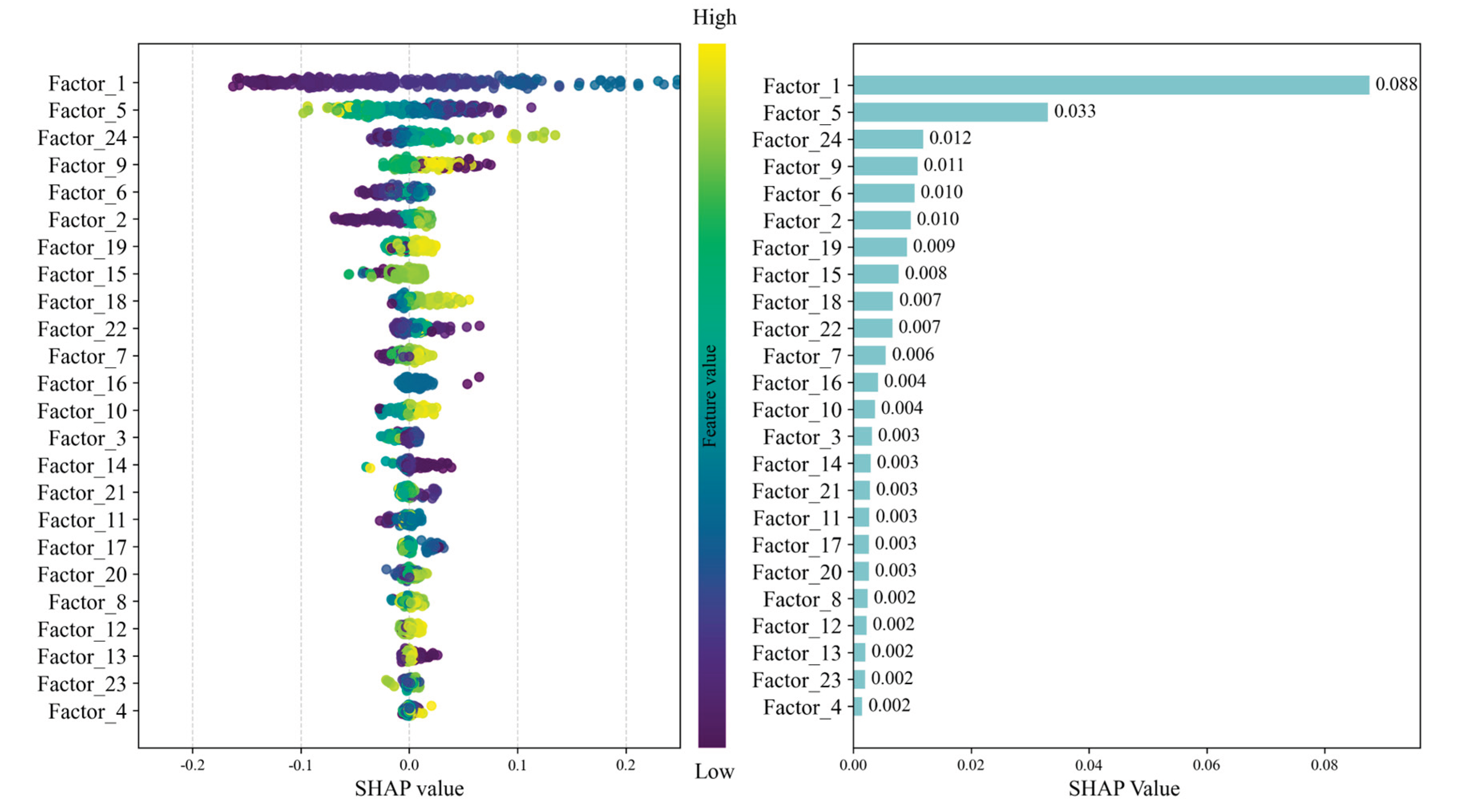

3.4. Feature Importance Analysis

4. Conclusions and Future Directions

Author Contributions

Data Availability Statement

Conflicts of Interest


| Cluster 1 | Cluster 2 | Cluster 3 |
| --- | --- | --- |
| Fengtai, Yizhuang Development Zone, Changping, Shijingshan, Tongzhou, Daxing, Shunyi | Xicheng, Dongcheng, Haidian, Chaoyang | Fangshan, Mentougou, Huairou, Pinggu, Miyun |

| Variable Type | Feature Names |
| --- | --- |
| Numeric | Listing price, price adjustment (times), viewings (times), followers (people), views (times), net area, floor-to-ceiling ratio, gross floor area, longitude, latitude, listing year, listing quarter, listing month, rooms, living room, kitchen, bathroom, floor number, east, south, west, north |
| Categorical | Administrative district, building type, house age, property ownership, floor level, unit layout, decoration status, building structure, heating method, elevator availability, transaction ownership, property use, business district, residential community |

| Test | Cluster 1 | Cluster 2 | Cluster 3 |
| --- | --- | --- | --- |
| Kaiser–Meyer–Olkin Measure | 0.7363 | 0.7317 | 0.6727 |
| Bartlett’s Test of Sphericity (χ²) | 1,012,164 | 951,913 | 150,991 |
| Bartlett’s Test p-value | 0.000 | 0.000 | 0.000 |

| Factor | Eigenvalue | Proportion of Variance | Cumulative Variance |
| --- | --- | --- | --- |
| Factor_1 | 4.9028 | 0.1362 | 0.1362 |
| Factor_2 | 3.6668 | 0.1019 | 0.2380 |
| Factor_3 | 2.1857 | 0.0607 | 0.2988 |
| Factor_4 | 1.9370 | 0.0538 | 0.3526 |
| Factor_5 | 1.8217 | 0.0506 | 0.4032 |
| Factor_6 | 1.3719 | 0.0381 | 0.4413 |
| Factor_7 | 1.2226 | 0.0340 | 0.4752 |
| Factor_8 | 1.1849 | 0.0329 | 0.5081 |
| Factor_9 | 1.1210 | 0.0311 | 0.5393 |
| Factor_10 | 1.0451 | 0.0290 | 0.5683 |
| Factor_11 | 1.0356 | 0.0288 | 0.5971 |
| Factor_12 | 1.0247 | 0.0285 | 0.6255 |
| Factor_13 | 0.9992 | 0.0278 | 0.6533 |
| Factor_14 | 0.9920 | 0.0276 | 0.6809 |
| Factor_15 | 0.9693 | 0.0269 | 0.7078 |
| Factor_16 | 0.9350 | 0.0260 | 0.7338 |
| Factor_17 | 0.9298 | 0.0258 | 0.7596 |
| Factor_18 | 0.8981 | 0.0249 | 0.7845 |
| Factor_19 | 0.8796 | 0.0244 | 0.8090 |
| Factor_20 | 0.8379 | 0.0233 | 0.8322 |
| Factor_21 | 0.7538 | 0.0209 | 0.8532 |
| Factor_22 | 0.7000 | 0.0194 | 0.8726 |
| Factor_23 | 0.6320 | 0.0176 | 0.8902 |
| Factor_24 | 0.5935 | 0.0165 | 0.9067 |
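The variance columns in the eigenvalue table can be reproduced from the eigenvalues alone. The sketch below assumes 36 standardized input variables, a count inferred from the first row (4.9028 / 0.1362 ≈ 36) rather than stated in this section. It also counts factors with eigenvalue above 1 (the Kaiser criterion), a common retention heuristic that is shown for illustration only and is not confirmed here as the rule the authors applied.

```python
from itertools import accumulate

# Eigenvalues copied from the table above (Factor_1 to Factor_24).
eigenvalues = [
    4.9028, 3.6668, 2.1857, 1.9370, 1.8217, 1.3719, 1.2226, 1.1849,
    1.1210, 1.0451, 1.0356, 1.0247, 0.9992, 0.9920, 0.9693, 0.9350,
    0.9298, 0.8981, 0.8796, 0.8379, 0.7538, 0.7000, 0.6320, 0.5935,
]

# Assumption: with standardized variables, total variance equals the
# number of variables; 36 is inferred from 4.9028 / 0.1362.
N_VARIABLES = 36

proportion = [ev / N_VARIABLES for ev in eigenvalues]
cumulative = list(accumulate(proportion))

# Kaiser criterion (illustrative heuristic): keep eigenvalues above 1.
kaiser_count = sum(ev > 1.0 for ev in eigenvalues)

for i, (ev, p, c) in enumerate(zip(eigenvalues, proportion, cumulative), 1):
    print(f"Factor_{i:<2d} {ev:7.4f} {p:7.4f} {c:7.4f}")
print("factors with eigenvalue > 1:", kaiser_count)
```

Under these assumptions, the first five factors account for about 40% of the total variance, matching the cumulative value listed for Factor_5.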

| Factor | Factor Name |
| --- | --- |
| Factor 1 | Scale Value Factor |
| Factor 2 | Architectural Feature Factor |
| Factor 3 | Scale-Driven Attention Factor |
| Factor 4 | Listing-Time Periodicity Factor |
| Factor 5 | Core-Ideal-Location Factor |

| Cluster | Meta-Model | RMSE | MAE | R² | MAPE (%) | Improvement Over Best Base Model* |
| --- | --- | --- | --- | --- | --- | --- |
| Cluster 1 | LinearRegression | 49.20 | 35.32 | 0.919 | 9.00 | −0.04% |
| Cluster 1 | DecisionTree | 51.03 | 36.27 | 0.913 | 9.24 | −0.71% |
| Cluster 2 | LinearRegression | 99.17 | 68.61 | 0.916 | 10.69 | +0.56% |
| Cluster 2 | DecisionTree | 103.36 | 70.00 | 0.909 | 10.93 | −0.23% |
| Cluster 3 | LinearRegression | 33.51 | 24.25 | 0.902 | 9.15 | +1.29% |
| Cluster 3 | DecisionTree | 36.52 | 26.59 | 0.883 | 10.08 | −0.77% |
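The gap between the two meta-models can be read directly from the RMSE column. The short sketch below computes, for each cluster, how much larger the DecisionTree RMSE is relative to the LinearRegression RMSE. This relative gap is an illustrative quantity derived only from the table values; it is not the "Improvement Over Best Base Model" column, whose definition is not given in this section.

```python
# RMSE values copied from the meta-model comparison table above.
rmse_by_cluster = {
    "Cluster 1": {"LinearRegression": 49.20, "DecisionTree": 51.03},
    "Cluster 2": {"LinearRegression": 99.17, "DecisionTree": 103.36},
    "Cluster 3": {"LinearRegression": 33.51, "DecisionTree": 36.52},
}

def relative_gap(lr_rmse, dt_rmse):
    """Percentage by which the DT meta-model RMSE exceeds the LR one."""
    return (dt_rmse - lr_rmse) / lr_rmse * 100

gaps = {
    cluster: relative_gap(v["LinearRegression"], v["DecisionTree"])
    for cluster, v in rmse_by_cluster.items()
}

for cluster, gap in gaps.items():
    print(f"{cluster}: DT RMSE is {gap:.2f}% higher than LR RMSE")
```

In all three clusters the linear meta-model has the lower RMSE, which is consistent with the table's MAE, R², and MAPE columns.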

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.