Submitted:

06 February 2026

Posted:

09 February 2026


Abstract

Keywords:

1. Introduction

- Swarm Intelligence Algorithms, inspired by the hunting behavior, hierarchy, and reproductive behavior of plants (e.g., Dandelion Optimizer (DO) [25]) and animals, among which are Particle Swarm Optimization (PSO) [5], Ant Colony Optimization (ACO) [20], Grey Wolf Optimizer (GWO) [7], Whale Optimization Algorithm (WOA) [8], and Blood-Sucking Leech Optimizer (BLSO) [13].

- The proposal of an Adaptive Gold Rush Optimizer (AGRO) that introduces key enhancements to the traditional GRO, chiefly a novel adaptive mechanism that prioritizes the strategies that improve solution quality. This mechanism can be applied to any optimization algorithm that selects among its strategies with fixed probabilities.

- Additionally, AGRO explicitly incorporates objective function values to guide prospectors to promising regions.

- Strong experimental results for AGRO against ten state-of-the-art algorithms: GRO, BLSO, INFO, FOX, DO, WOA, GWO, PSO, GSA, and SOA.

2. Gold Rush Optimizer (GRO)

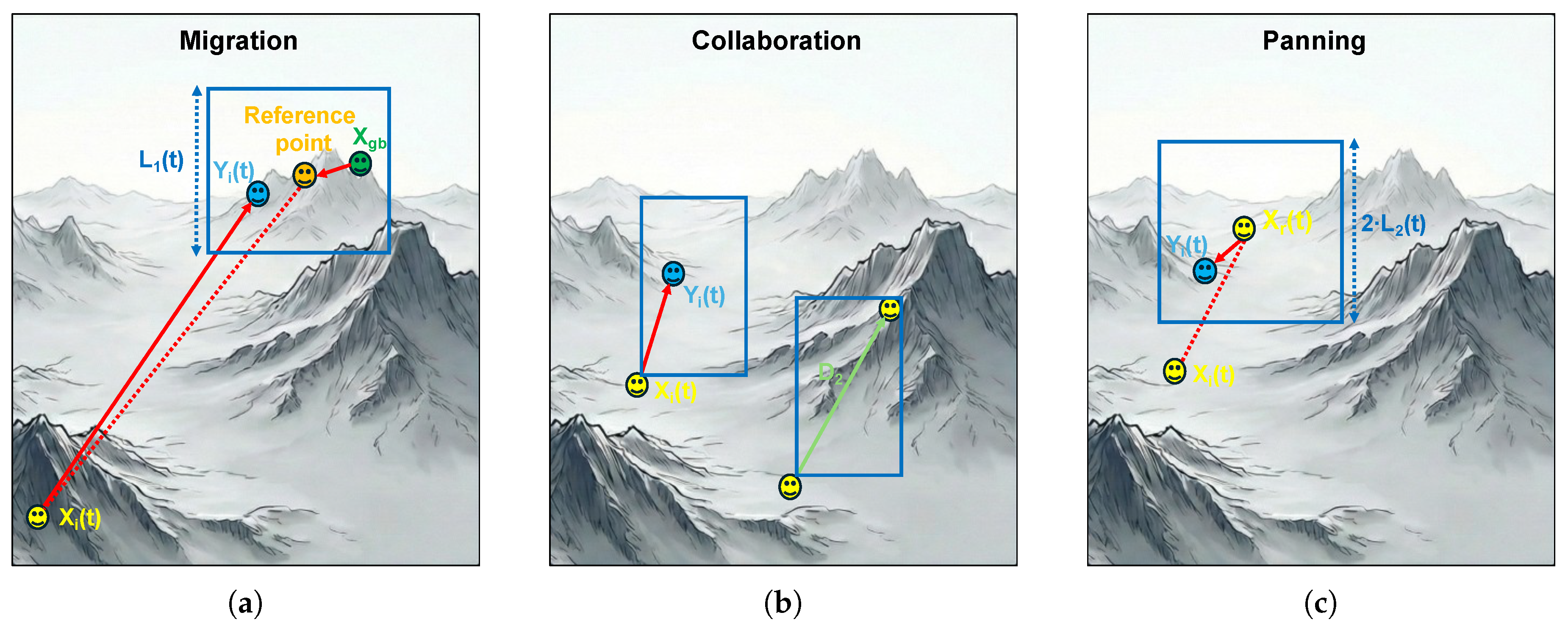

2.1. Migration (Global Oriented Search)

- is the current position of the i-th prospector in the j-th dimension at iteration t.

- is the position of the best solution in the j-th dimension found so far.

- is the position of the reference point in the j-th dimension, that guides the i-th prospector to prevent premature convergence and allow local exploration.

- is a random number in the interval .

- is a random vector that creates a weighted target point, allowing the prospector to explore the region around the destination rather than directly moving to it.

- is a vector that determines the step size of the movement.

- is the proposed position of the i-th prospector in the j-th dimension at iteration t according to the migration phase.

2.2. Collaboration (Information Sharing)

- and represent the position vectors of two randomly selected prospectors from the population ().

- is the differential vector representing the direction and magnitude of the interaction between the two selected agents.

- is a random number in the interval , acting as a scaling factor for the influence of the collaboration phase.

- is the proposed position of the i-th prospector in the j-th dimension at iteration t according to the collaboration phase.
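The collaboration step described above can be sketched as follows. This is a minimal illustration rather than the authors' implementation: the function name `collaboration_step`, the choice of the current prospector's position as the base point, the requirement that the two partners differ from prospector i, and the interval [0, 1) for the scaling factor are all assumptions, since the corresponding equation is not reproduced here.

```python
import random


def collaboration_step(positions, i, rng):
    """Propose a new position for prospector i from two random partners.

    positions: list of position vectors (lists of floats), at least three.
    """
    # Pick two distinct partners, both different from i (assumption).
    others = [k for k in range(len(positions)) if k != i]
    g1, g2 = rng.sample(others, 2)
    # Random scaling factor; the interval [0, 1) is an assumption.
    r = rng.random()
    # Differential vector (partner g2 minus partner g1), scaled by r and
    # added to the current position of prospector i.
    return [
        x + r * (b - a)
        for x, a, b in zip(positions[i], positions[g1], positions[g2])
    ]


rng = random.Random(0)
positions = [[rng.uniform(-5.0, 5.0) for _ in range(3)] for _ in range(6)]
proposal = collaboration_step(positions, i=0, rng=rng)
```

When all prospectors occupy the same point, the differential vector vanishes and the proposal equals the current position, which is why this phase relies on population diversity.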

2.3. Panning (Gold Mining)

3. Adaptive Gold Rush Optimizer (AGRO)

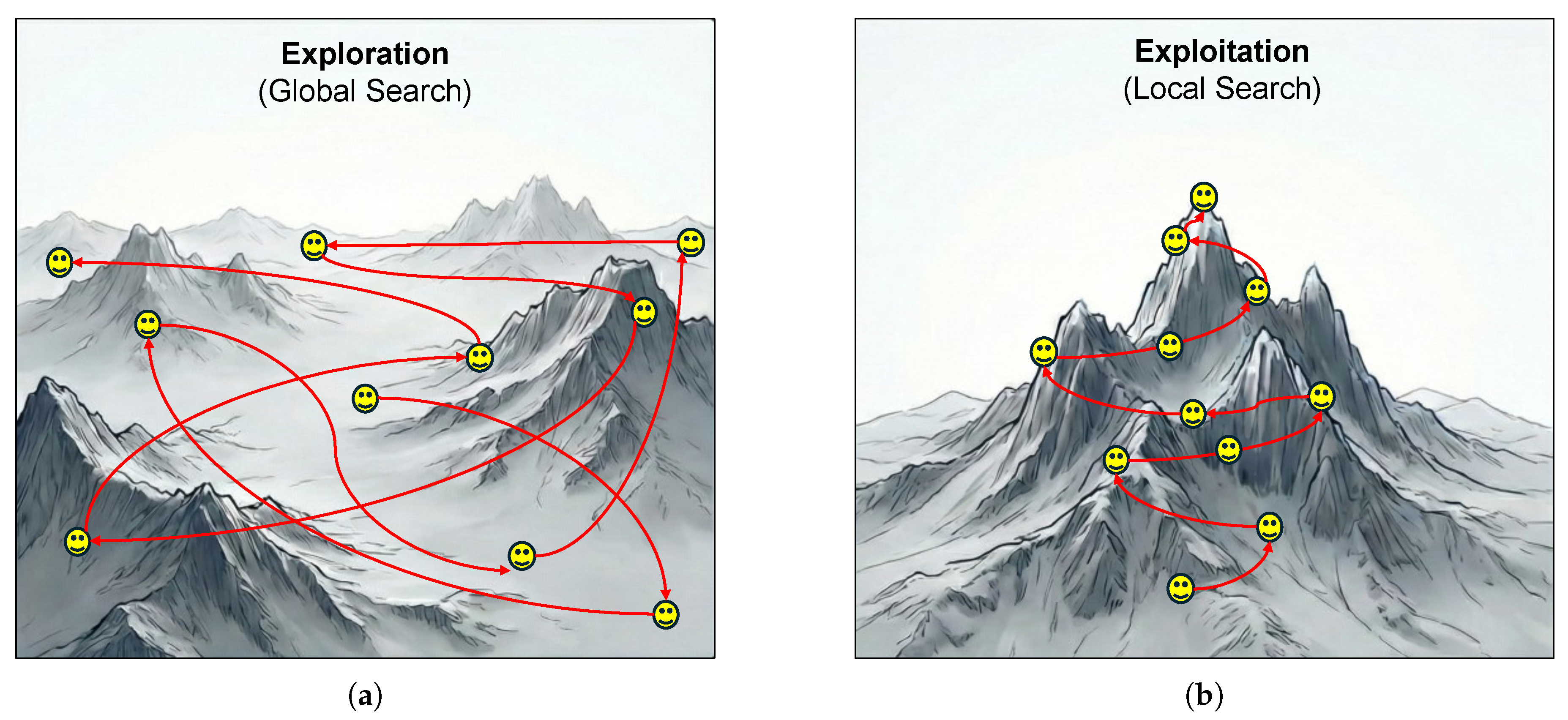

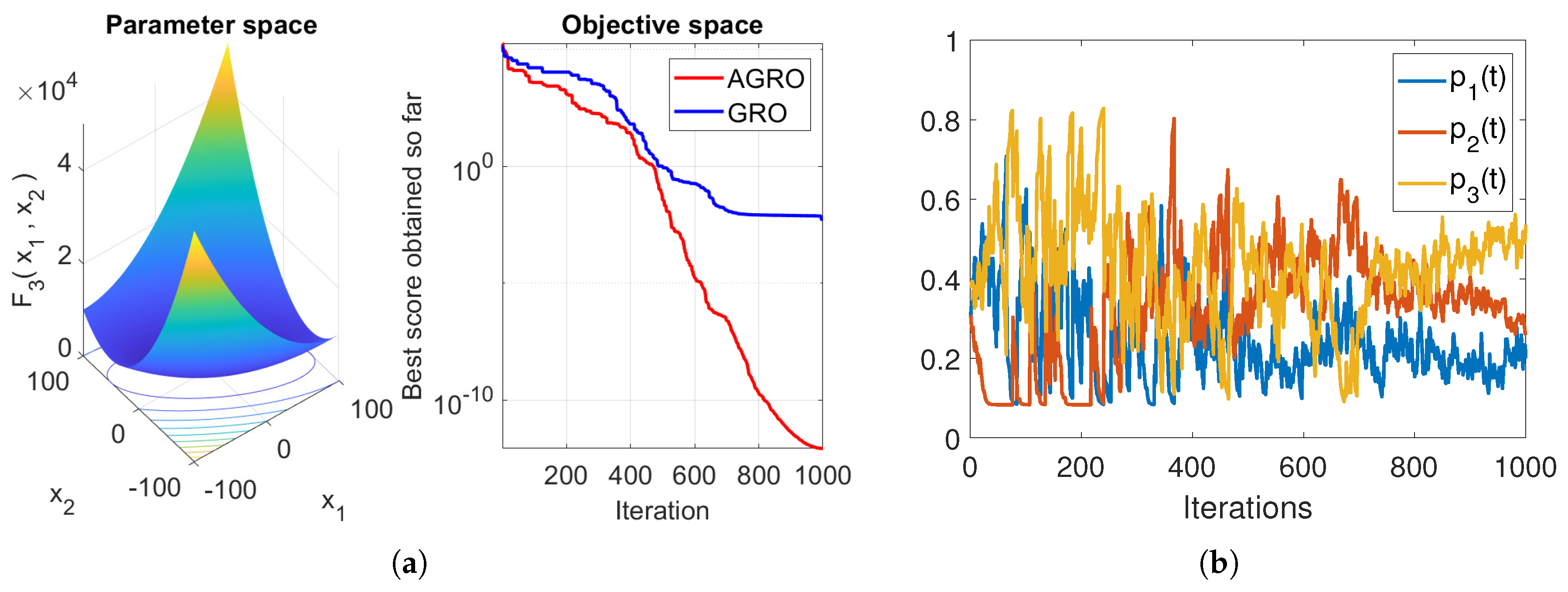

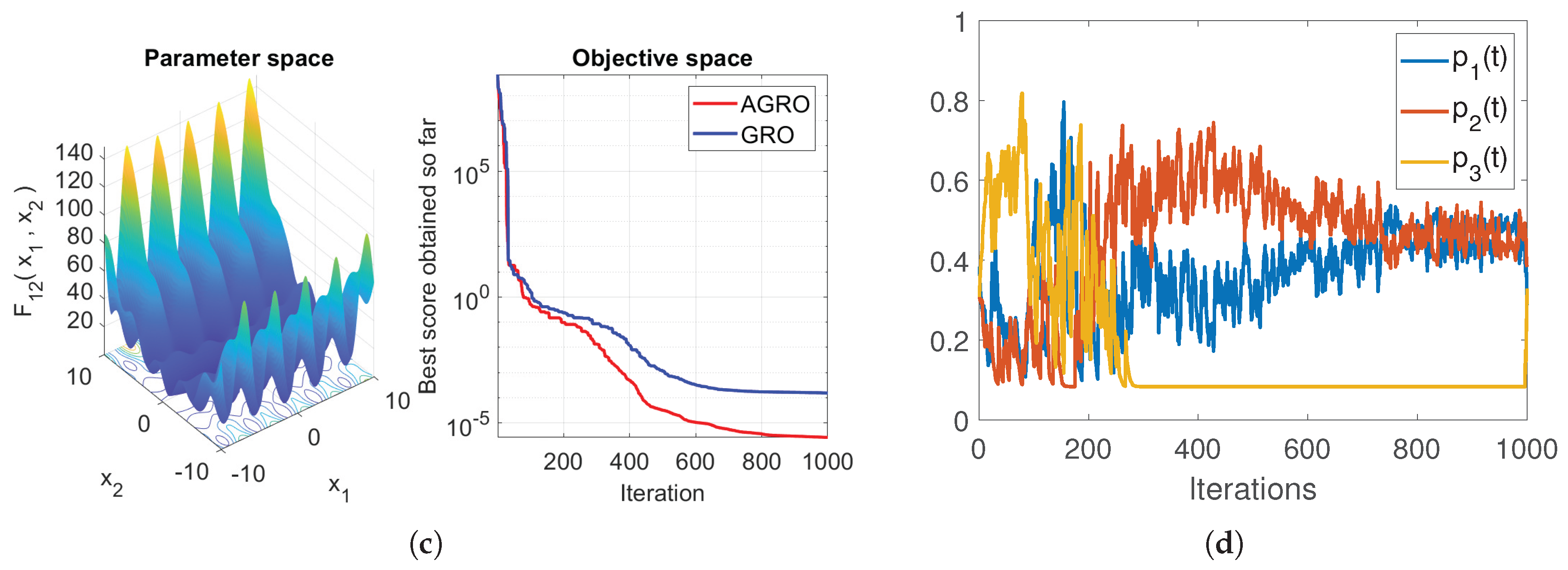

- Strategy Adaptation: In each iteration of GRO, every prospector randomly selects one of the three strategies to improve its position. The efficiency of each strategy depends on the optimization data and on the progress of the search. To address this issue, AGRO uses a novel adaptive mechanism that measures the performance of each strategy in real time, so that the most promising strategy is selected with higher probability.

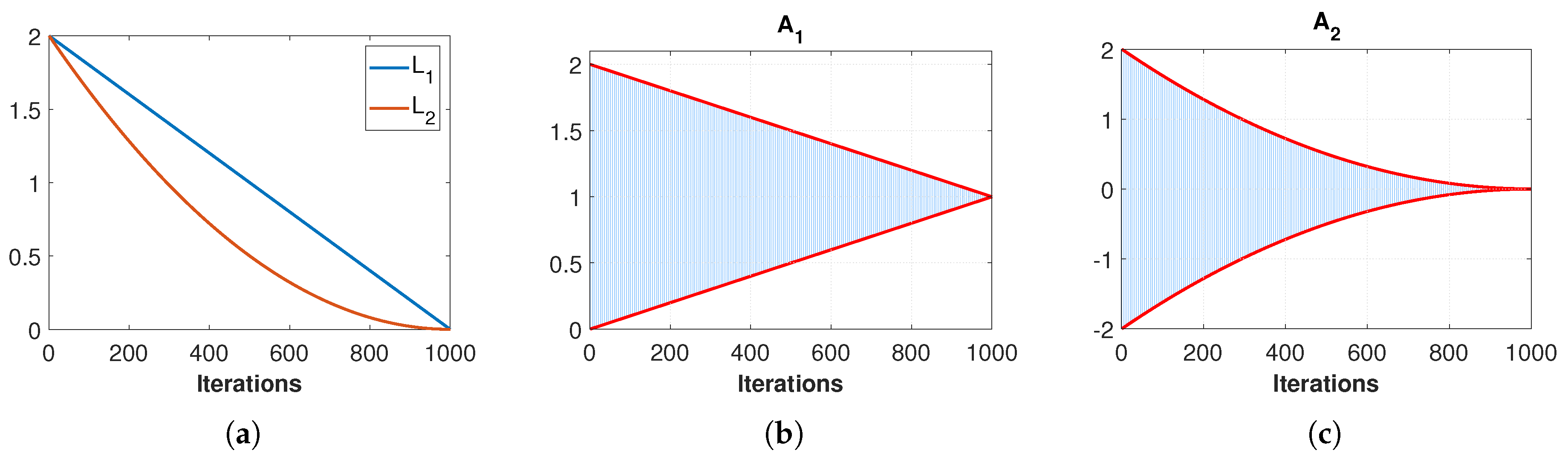

- Search Space of Reference Point: In the Migration phase, the prospector computes a reference point (see Equation (8)) that defines the search space of its new proposed position, based on the current global best solution. According to Equations (7) and (8), this search space always includes the origin point, while its extent in the j-th dimension depends on the distance of the prospector from the origin. AGRO redefines the reference point (see Equation (14)) so that the search space excludes the origin point a priori and no longer depends on the prospector's distance from the origin.

- Incorporating fitness values: In the Panning phase, the prospector randomly selects another prospector and explores a region defined by their positions to search for gold. This random selection increases the exploration ability of the phase, but because it ignores the objective function values at the prospectors' positions, its exploitation ability is low. To overcome this issue, AGRO incorporates objective function values into the selection of prospectors, guiding them towards promising regions while preserving the exploration ability of GRO.
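To make the Strategy Adaptation idea concrete, the sketch below shows one simple way to turn observed strategy performance into selection probabilities. It is not the update rule of AGRO, whose equations are not reproduced here: the class `AdaptiveSelector`, the smoothed success-rate score, and the roulette-wheel sampling are illustrative assumptions, and minimization is assumed.

```python
import random

STRATEGIES = ("migration", "collaboration", "panning")


class AdaptiveSelector:
    """Roulette-wheel strategy selection weighted by smoothed success rates."""

    def __init__(self, strategies=STRATEGIES, rng=None):
        self.rng = rng or random.Random()
        # Start every strategy with 1 success in 2 trials (assumption), so
        # that no strategy ever reaches a selection probability of zero.
        self.successes = {s: 1.0 for s in strategies}
        self.trials = {s: 2.0 for s in strategies}

    def probabilities(self):
        """Return selection probabilities proportional to success rates."""
        rates = {s: self.successes[s] / self.trials[s] for s in self.trials}
        total = sum(rates.values())
        return {s: rate / total for s, rate in rates.items()}

    def select(self):
        """Sample one strategy according to the current probabilities."""
        probs = self.probabilities()
        names = list(probs)
        weights = [probs[s] for s in names]
        return self.rng.choices(names, weights=weights, k=1)[0]

    def record(self, strategy, old_fitness, new_fitness):
        """Count a trial, and a success if the fitness improved (minimization)."""
        self.trials[strategy] += 1.0
        if new_fitness < old_fitness:
            self.successes[strategy] += 1.0


selector = AdaptiveSelector(rng=random.Random(0))
for _ in range(20):
    selector.record("panning", old_fitness=1.0, new_fitness=0.5)    # improves
    selector.record("migration", old_fitness=1.0, new_fitness=2.0)  # worsens
probs = selector.probabilities()
chosen = selector.select()
```

After these updates, the strategy that kept improving solutions receives the largest probability, the untried strategy keeps its prior share, and the failing strategy is selected rarely but never excluded.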

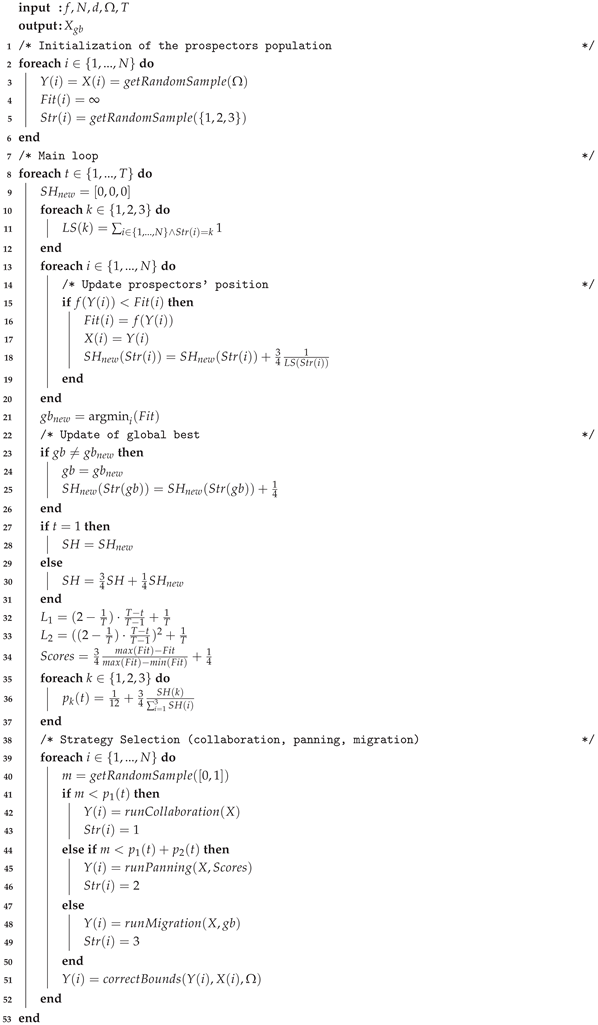
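The idea of incorporating fitness values into the Panning phase can be illustrated with a rank-weighted partner selection. This is a hedged sketch under stated assumptions, not the selection rule defined by AGRO: the function `select_partner` and the linear rank weights are hypothetical, and lower objective values are assumed to be better.

```python
import random


def select_partner(fitness, i, rng):
    """Pick a partner index different from i, favouring better fitness.

    fitness: list of objective values, one per prospector (lower is better).
    """
    others = [k for k in range(len(fitness)) if k != i]
    # Order candidates from worst to best, so the best gets the largest weight.
    ordered = sorted(others, key=lambda k: fitness[k], reverse=True)
    # Linear rank weights 1, 2, ..., n-1 (assumption); every candidate keeps
    # a non-zero chance, which preserves some exploration.
    weights = [rank + 1 for rank in range(len(ordered))]
    return rng.choices(ordered, weights=weights, k=1)[0]


fitness = [3.0, 0.1, 7.5, 2.2]
partner = select_partner(fitness, i=0, rng=random.Random(0))
```

With these four values and prospector 0 excluded, prospector 1 (the best) is drawn about half of the time, prospector 3 about a third, and prospector 2 (the worst) about a sixth.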

Algorithm 1: The pseudocode of the proposed AGRO algorithm.

- If Collaboration is selected, the new position is generated using the interaction between random agents.

- If Panning is selected, the algorithm utilizes the computed to guide the local search towards regions with better fitness, enhancing exploitation.

- If Migration is selected, the prospector moves towards the vicinity of the global best.

- Finally, a boundary control mechanism is applied: any proposed position that violates the search space limits is reverted to the prospector’s previous valid position (see line 51 of Algorithm 1).
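The boundary control rule in the last item can be sketched in a few lines. The function name `apply_boundary_control` and the per-dimension `lower` and `upper` bound lists are assumptions made for illustration.

```python
def apply_boundary_control(previous, proposed, lower, upper):
    """Keep the proposed position only if it lies inside the search space.

    If any dimension of `proposed` violates its bounds, the prospector is
    reverted to its previous valid position.
    """
    inside = all(
        lo <= x <= hi for x, lo, hi in zip(proposed, lower, upper)
    )
    return list(proposed) if inside else list(previous)


lower, upper = [-5.0, -5.0], [5.0, 5.0]
kept = apply_boundary_control([1.0, 1.0], [2.0, -3.0], lower, upper)
reverted = apply_boundary_control([1.0, 1.0], [2.0, 7.5], lower, upper)
```

Unlike clipping, this rule never places a prospector exactly on the boundary unless the proposal itself lands there; a rejected move simply leaves the prospector where it was.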

4. Experimental Evaluation

4.1. Benchmark Test Functions

4.1.1. Classical Benchmark Functions

- Unimodal Functions (): Characterized by a single global optimum, these functions evaluate the exploitation capability and convergence speed of the algorithm.

- Multimodal Functions (): These functions possess multiple local optima, testing the algorithm’s exploration ability and its robustness against premature convergence.

- Fixed-dimension Multimodal Functions (): These are lower-dimensional problems with fixed search spaces, designed to assess stability in constrained environments.
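As an illustration of the difference between the unimodal and multimodal categories, the sketch below implements two standard test functions, Sphere and Rastrigin, in their basic (unshifted, unrotated) form. They are chosen here only as familiar examples; which function IDs of the suite they correspond to is not assumed.

```python
import math


def sphere(x):
    """Unimodal: a single optimum at the origin with value 0."""
    return sum(v * v for v in x)


def rastrigin(x):
    """Multimodal: global optimum 0 at the origin plus many local optima."""
    return 10.0 * len(x) + sum(
        v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x
    )


origin = [0.0, 0.0]
near_local_optimum = [1.0, 1.0]
values = (sphere(origin), rastrigin(origin), rastrigin(near_local_optimum))
```

On Sphere, any descent direction leads to the optimum, so it mainly measures convergence speed. On Rastrigin, points near integer coordinates such as (1, 1) sit in local basins with a low but non-zero value, which is what tests the ability to escape premature convergence.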

4.1.2. Classical Benchmark Functions

4.1.3. CEC2017 Benchmark

4.1.4. CEC2019 Benchmark

4.2. Evaluation Metrics

- Best: This metric indicates the best score of the algorithm. It is calculated as the minimum, over all runs, of the best fitness value found in each run.

- Mean: This metric indicates the accuracy of the algorithm and its ability to converge to the global optimum. It is the most important and robust metric, and it is calculated as the average, over all runs, of the best fitness value found in each run.

- Standard Deviation (SD): This metric evaluates the stability and robustness of the algorithm across different runs. A lower SD value indicates that the algorithm produces consistent results. It is defined as the standard deviation, over all runs, of the best fitness value found in each run.

- The Rank represents the average ranking of a method across all experiments according to the performance indicator used. Notably, a lower Rank indicates superior performance.

- The Accuracy metric quantifies the relative performance of a method normalized between the best and worst solutions found. It is formulated as $\text{Accuracy} = \frac{M - v}{M - m}$, where v is the value obtained by the method, m corresponds to the best value found among all methods (minimum for minimization problems), and M represents the worst value (maximum value). Based on this definition, the top-performing method achieves an Accuracy of 100%, while the worst-performing one yields 0%.
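The metrics above can be computed as in the following sketch. Several details are assumptions rather than the authors' definitions: the sample standard deviation (divisor n - 1) is used, tied methods share the mean of their ranks, Accuracy is returned as a fraction in [0, 1] (multiply by 100 for percentages), and the function names are hypothetical.

```python
import statistics


def run_statistics(best_per_run):
    """Best, Mean and SD of the best fitness values found in each run."""
    return {
        "Best": min(best_per_run),  # minimization assumed
        "Mean": statistics.mean(best_per_run),
        "SD": statistics.stdev(best_per_run),  # sample SD (assumption)
    }


def accuracy(value, best, worst):
    """Normalize `value` between the best (m) and worst (M) values found."""
    if worst == best:
        return 1.0  # all methods performed identically (assumption)
    return (worst - value) / (worst - best)


def average_ranks(scores):
    """Average rank per method; scores maps method -> value per experiment."""
    names = list(scores)
    n_experiments = len(scores[names[0]])
    totals = {name: 0.0 for name in names}
    for e in range(n_experiments):
        column = sorted(names, key=lambda name: scores[name][e])
        pos = 0
        while pos < len(column):
            end = pos
            # Extend the group while the next method has the same value.
            while (end + 1 < len(column)
                   and scores[column[end + 1]][e] == scores[column[pos]][e]):
                end += 1
            shared_rank = (pos + end) / 2.0 + 1.0  # mean of the tied ranks
            for name in column[pos:end + 1]:
                totals[name] += shared_rank
            pos = end + 1
    return {name: totals[name] / n_experiments for name in names}


stats = run_statistics([1.0, 2.0, 3.0])
ranks = average_ranks({"A": [1.0, 2.0], "B": [2.0, 2.0], "C": [3.0, 1.0]})
```

In the small example, method A is first in one experiment and tied for second and third in the other, so its average rank is (1 + 2.5) / 2 = 1.75.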

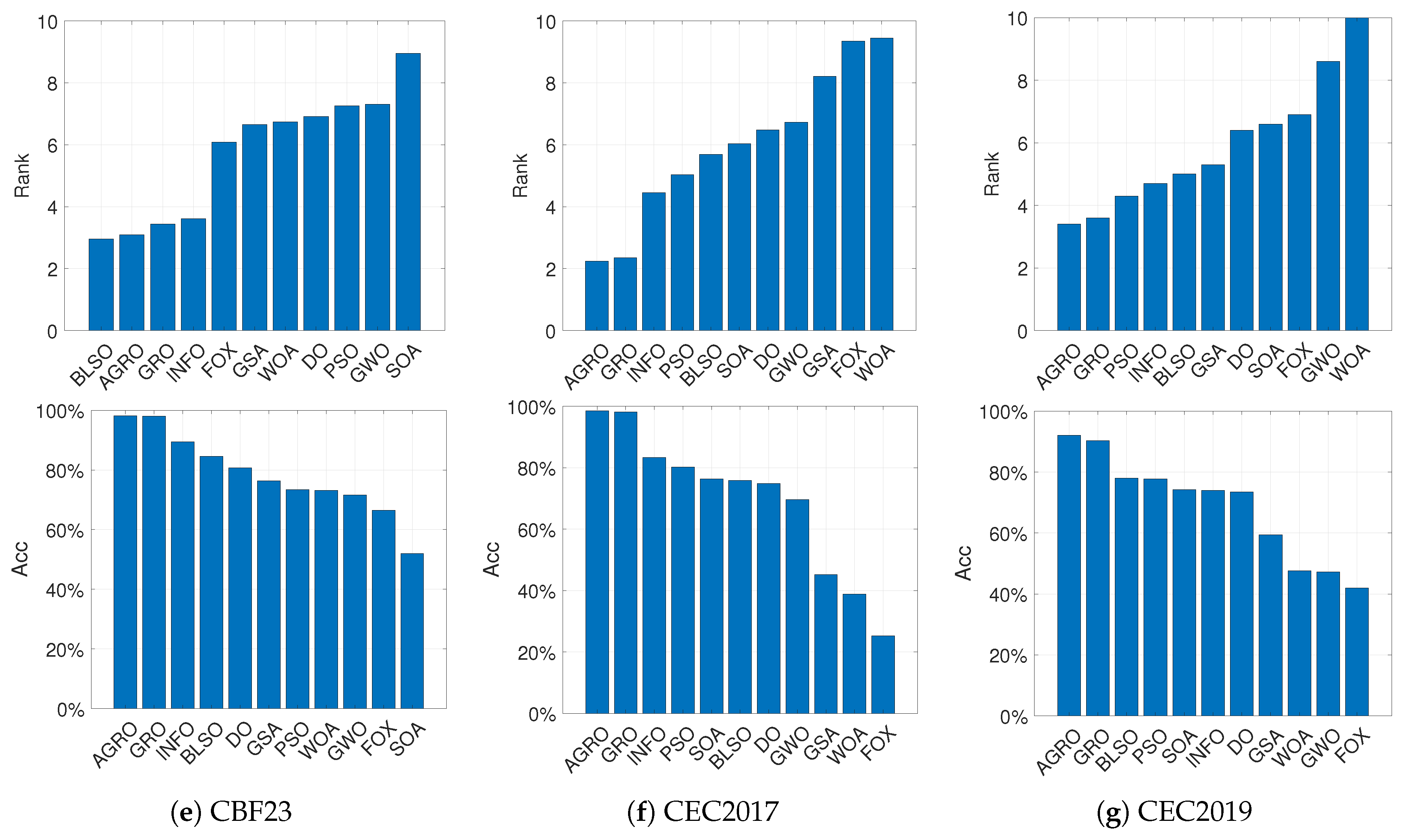

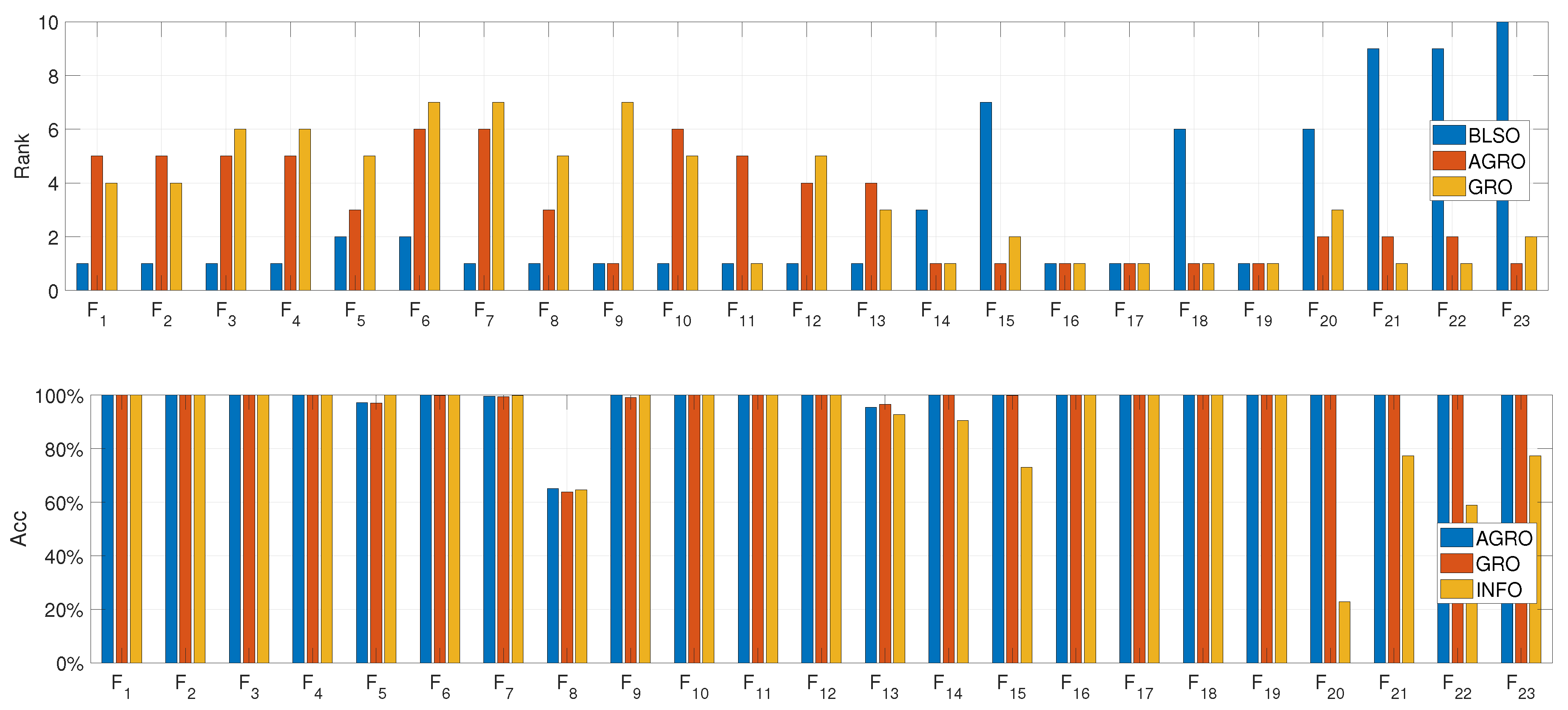

4.3. Performance Comparison of AGRO with Other Algorithms

4.3.1. Results on Classical Benchmark Functions

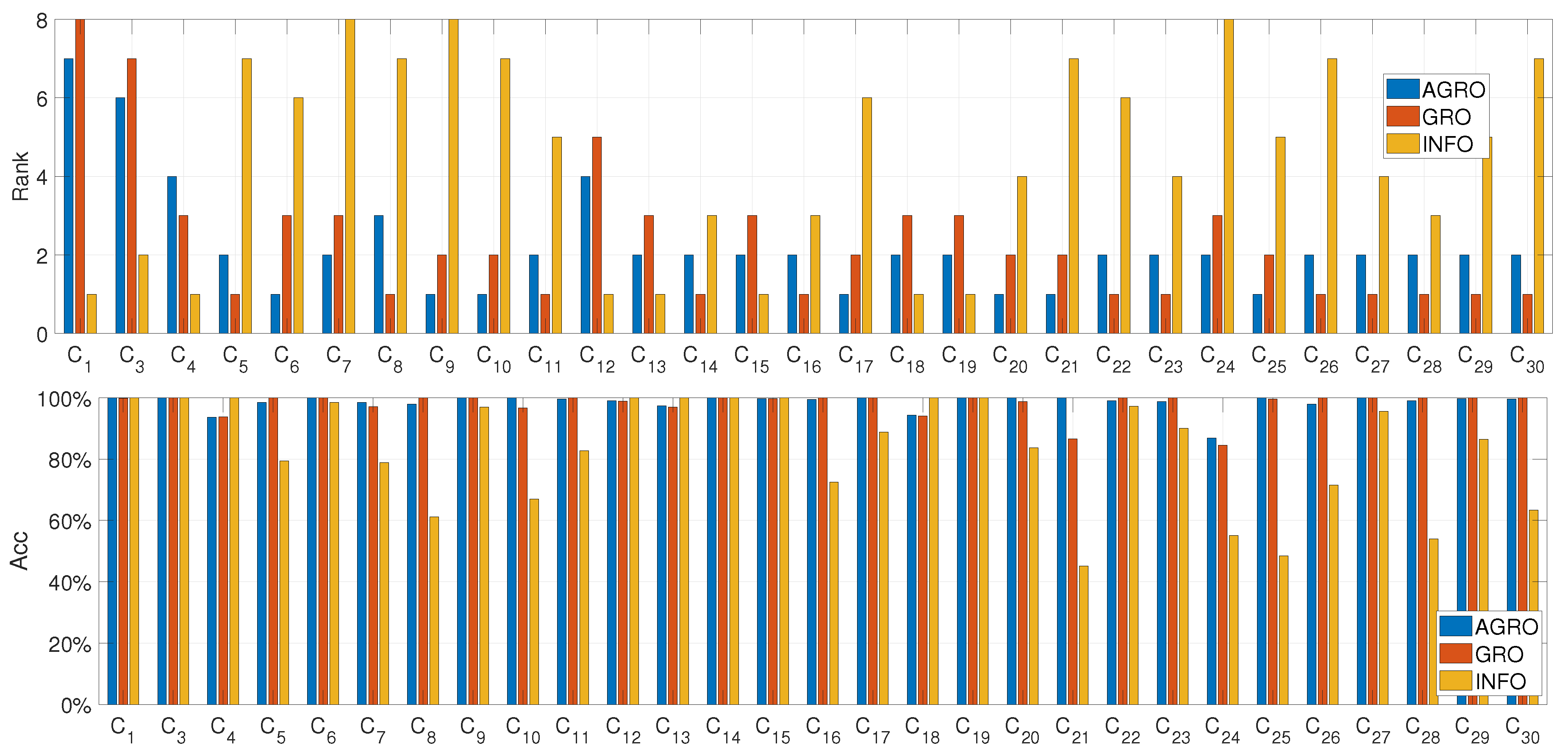

4.3.2. Results on CEC2017 Suite

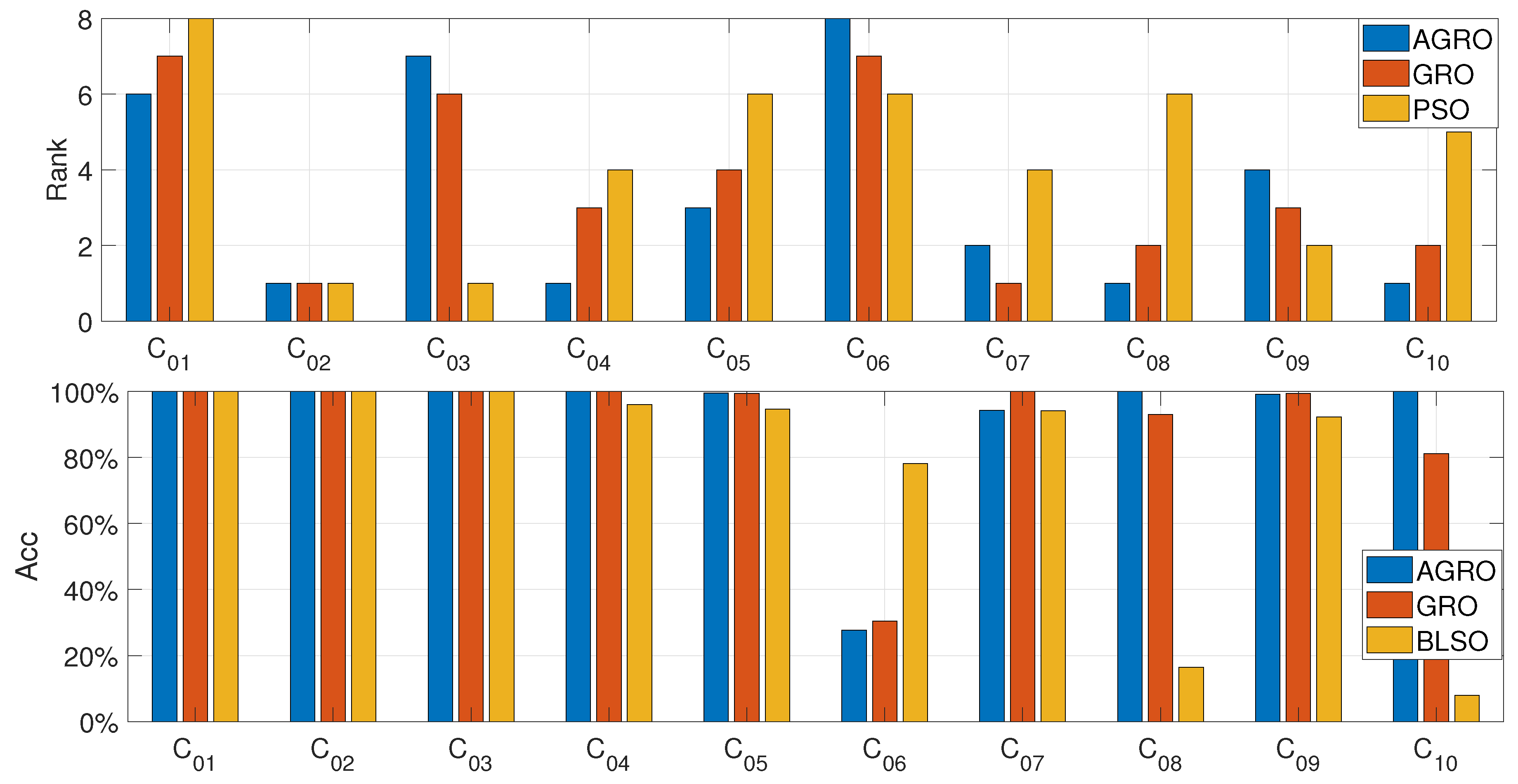

4.3.3. Results on CEC2019 Suite

5. Conclusions

- In terms of exploration, AGRO successfully avoided premature convergence in complex multimodal landscapes of CBF23 and CEC2017, significantly outperforming algorithms with static parameters.

- In terms of exploitation and precision, the algorithm achieved remarkable accuracy in the CEC2019 dataset, often locating the global optimum with high precision where other methods stagnated.

- Comparative analysis confirmed that AGRO consistently achieved the lowest average rank and highest accuracy across the majority of test functions, validating the effectiveness of the proposed adaptive mechanism.

Author Contributions

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Zaher, H.; Al-Wahsh, H.; Eid, M.; Gad, R.S.; Abdel-Rahim, N.; Abdelqawee, I.M. A novel harbor seal whiskers optimization algorithm. Alexandria Engineering Journal 2023, 80, 88–109.

2. Yang, X.S. Nature-inspired metaheuristic algorithms; Luniver press, 2010.

3. Rizk-Allah, R.M.; Saleh, O.; Hagag, E.A.; Mousa, A.A.A. Enhanced tunicate swarm algorithm for solving large-scale nonlinear optimization problems. International Journal of Computational Intelligence Systems 2021, 14, 189.

4. Rajwar, K.; Deep, K.; Das, S. An exhaustive review of the metaheuristic algorithms for search and optimization: Taxonomy, applications, and open challenges. Artificial Intelligence Review 2023, 56, 13187–13257.

5. Kennedy, J.; Eberhart, R. Particle swarm optimization. In Proceedings of ICNN’95 - International Conference on Neural Networks. IEEE, 1995, Vol. 4, pp. 1942–1948.

6. Rashedi, E.; Nezamabadi-Pour, H.; Saryazdi, S. GSA: A gravitational search algorithm. Information sciences 2009, 179, 2232–2248.

7. Mirjalili, S.; Mirjalili, S.M.; Lewis, A. Grey wolf optimizer. Advances in engineering software 2014, 69, 46–61.

8. Mirjalili, S.; Lewis, A. The whale optimization algorithm. Advances in engineering software 2016, 95, 51–67.

9. Ahmadianfar, I.; Heidari, A.A.; Nouri, N.; Chen, H. INFO: An efficient optimization algorithm based on weighted mean of vectors. Expert Systems with Applications 2022, 195, 116516.

10. Zolfi, K. Gold rush optimizer: A new population-based metaheuristic algorithm. Operations Research and Decisions 2023, 33, 113–150.

11. Rutenbar, R.A. Simulated annealing algorithms: An overview. IEEE Circuits and Devices magazine 2002, 5, 19–26.

12. Prajapati, V.K.; Jain, M.; Chouhan, L. Tabu search algorithm (TSA): A comprehensive survey. In Proceedings of the 2020 3rd International Conference on Emerging Technologies in Computer Engineering: Machine Learning and Internet of Things (ICETCE). IEEE, 2020, pp. 1–8.

13. Bai, J.; Nguyen-Xuan, H.; Atroshchenko, E.; Kosec, G.; Wang, L.; Wahab, M.A. Blood-sucking leech optimizer. Advances in Engineering Software 2024, 195, 103696.

14. Črepinšek, M.; Liu, S.H.; Mernik, M. Exploration and exploitation in evolutionary algorithms: A survey. ACM computing surveys (CSUR) 2013, 45, 1–33.

15. Holland, J.H. Adaptation in natural and artificial systems: An introductory analysis with applications to biology, control, and artificial intelligence; MIT press, 1992.

16. Es-Haghi, M.S.; Shishegaran, A.; Rabczuk, T. Evaluation of a novel Asymmetric Genetic Algorithm to optimize the structural design of 3D regular and irregular steel frames. Frontiers of Structural and Civil Engineering 2020, 14, 1110–1130.

17. Kirkpatrick, S.; Gelatt, C.D., Jr.; Vecchi, M.P. Optimization by simulated annealing. science 1983, 220, 671–680.

18. Storn, R.; Price, K. Differential evolution–a simple and efficient heuristic for global optimization over continuous spaces. Journal of global optimization 1997, 11, 341–359.

19. Dorigo, M.; Maniezzo, V.; Colorni, A. Ant system: Optimization by a colony of cooperating agents. IEEE transactions on systems, man, and cybernetics, part b (cybernetics) 1996, 26, 29–41.

20. Dorigo, M.; Birattari, M.; Stutzle, T. Ant colony optimization. IEEE computational intelligence magazine 2007, 1, 28–39.

21. Nadimi-Shahraki, M.H.; Taghian, S.; Mirjalili, S. An improved grey wolf optimizer for solving engineering problems. Expert Systems with Applications 2021, 166, 113917.

22. Heidari, A.A.; Mirjalili, S.; Faris, H.; Aljarah, I.; Mafarja, M.; Chen, H. Harris hawks optimization: Algorithm and applications. Future generation computer systems 2019, 97, 849–872.

23. Hashim, F.A.; Hussien, A.G. Snake Optimizer: A novel meta-heuristic optimization algorithm. Knowledge-Based Systems 2022, 242, 108320.

24. Mohammed, H.; Rashid, T. FOX: A FOX-inspired optimization algorithm. Applied Intelligence 2023, 53, 1030–1050.

25. Zhao, S.; Zhang, T.; Ma, S.; Chen, M. Dandelion Optimizer: A nature-inspired metaheuristic algorithm for engineering applications. Engineering Applications of Artificial Intelligence 2022, 114, 105075.

26. Fan, S.; Wang, R.; Su, K.; Song, Y.; Wang, R. A Sequoia-Ecology-Based Metaheuristic Optimisation Algorithm for Multi-Constraint Engineering Design and UAV Path Planning. Results in Engineering 2025, 105130.

27. Askari, Q.; Younas, I.; Saeed, M. Political Optimizer: A novel socio-inspired meta-heuristic for global optimization. Knowledge-based systems 2020, 195, 105709.

28. Minh, H.L.; Sang-To, T.; Wahab, M.A.; Cuong-Le, T. A new metaheuristic optimization based on K-means clustering algorithm and its application to structural damage identification. Knowledge-Based Systems 2022, 251, 109189.

29. Zhang, Y.; Jin, Z. Group teaching optimization algorithm: A novel metaheuristic method for solving global optimization problems. Expert Systems with Applications 2020, 148, 113246.

30. Awad, N.H.; Ali, M.Z.; Liang, J.; Qu, B.; Suganthan, P.N. Problem definitions and evaluation criteria for the CEC 2017 special session and competition on single objective bound constrained real-parameter numerical optimization. Technical report, Nanyang Technological University, Singapore, 2016.

31. Trojovská, E.; Dehghani, M. A new human-based metahurestic optimization method based on mimicking cooking training. Scientific Reports 2022, 12, 14861.

32. Price, K.V.; Awad, N.H.; Ali, M.Z.; Suganthan, P.N. Problem definitions and evaluation criteria for the CEC 2019 special session and competition on single objective bound constrained real-parameter numerical optimization. Technical report, Nanyang Technological University, Singapore, 2018.

| Acronym | Algorithm Name | Mechanism | Year |
|---|---|---|---|
| PSO | Particle Swarm Optimization [5] | Animals (birds): Mimics the behavior of bird flocks. | 1995 |
| GSA | Gravitational Search Algorithm [6] | Physics (masses): Based on the law of gravity and mass interactions. | 2009 |
| GWO | Grey Wolf Optimizer [7] | Animals (grey wolves): Mimics the leadership hierarchy and hunting mechanism of grey wolves in nature. | 2014 |
| WOA | Whale Optimization Algorithm [8] | Animals (whales): Mimics the bubble-net hunting strategy of humpback whales. | 2016 |
| DO | Dandelion Optimizer [25] | Plants (dandelion seed): Inspired by the dissemination of dandelion seeds carried by the wind. | 2022 |
| FOX | FOX Optimizer [24] | Animals (fox): Mimics the foraging behavior of foxes in nature when hunting prey. | 2022 |
| INFO | Weighted Mean of Vectors [9] | Computational Geometry: Based on vector operations (weighted mean). | 2022 |
| GRO | Gold Rush Optimizer [10] | Human (gold prospectors): Mimics the behavior of gold prospectors during the Gold Rush era. | 2023 |
| BLSO | Blood-sucking Leech Optimizer [13] | Animals (leeches): Mimics the blood-sucking foraging behaviour of leeches. | 2024 |
| SOA | Sequoia Optimization Algorithm [26] | Plants (sequoia): Inspired by the self-regulating and restorative phenomena observed in sequoia forest ecosystems. | 2025 |

| ID | Function | Range | |

|---|---|---|---|

| 0 | |||

| 0 | |||

| 0 | |||

| 0 | |||

| 0 | |||

| 0 | |||

| 0 |

| ID | Function | Range | |

|---|---|---|---|

| 0 | |||

| 0 | |||

| 0 | |||

| 0 | |||

| 0 |

| ID | Function | Dim (d) | Range | |

|---|---|---|---|---|

| 2 | ||||

| 4 | ||||

| 2 | ||||

| 2 | ||||

| 2 | 3 | |||

| 3 | ||||

| 6 | ||||

| 4 | ||||

| 4 | ||||

| 4 |

| Type | Functions | Description |
|---|---|---|
| Unimodal | | Shifted and Rotated Unimodal Functions |
| Multimodal | | Shifted and Rotated Multimodal Functions |
| Hybrid | | Combinations of unimodal and multimodal sub-functions |
| Composition | | Merging properties of multiple sub-functions |

| Function | Function Name | Dim (d) | Range | |
|---|---|---|---|---|
| | Storn’s Chebyshev Polynomial | 9 | | 1 |
| | Inverse Hilbert Matrix | 16 | | 1 |
| | Lennard-Jones Minimum Energy | 18 | | 1 |
| | Rastrigin | 10 | | 1 |
| | Griewank | 10 | | 1 |
| | Weierstrass | 10 | | 1 |
| | Modified Schwefel | 10 | | 1 |
| | Expanded Schaffer F6 | 10 | | 1 |
| | Happy Cat | 10 | | 1 |
| | Ackley | 10 | | 1 |

| Rank | Algorithm | Best | Mean | SD |
|---|---|---|---|---|
| 1 | BLSO | 3.00 | 2.96 | 2.78 |
| 2 | AGRO | 3.43 | 3.09 | 3.78 |
| 3 | GRO | 3.43 | 3.43 | 3.74 |
| 4 | INFO | 2.09 | 3.61 | 4.65 |
| 5 | FOX | 5.83 | 6.09 | 4.61 |
| 6 | GSA | 5.83 | 6.65 | 6.48 |
| 7 | WOA | 7.13 | 6.74 | 7.48 |
| 8 | DO | 6.74 | 6.91 | 6.96 |
| 9 | PSO | 4.26 | 7.26 | 7.91 |
| 10 | GWO | 7.57 | 7.30 | 7.26 |
| 11 | SOA | 10.57 | 8.96 | 8.65 |

| Rank | Algorithm | Best | Mean | SD |
|---|---|---|---|---|
| 1 | AGRO | 3.24 | 2.24 | 2.86 |
| 2 | GRO | 3.52 | 2.34 | 3.03 |
| 3 | INFO | 2.72 | 4.45 | 4.86 |
| 4 | PSO | 4.34 | 5.03 | 5.17 |
| 5 | BLSO | 5.28 | 5.69 | 6.34 |
| 6 | SOA | 6.66 | 6.03 | 5.31 |
| 7 | DO | 6.93 | 6.48 | 6.45 |
| 8 | GWO | 7.48 | 6.72 | 6.45 |
| 9 | GSA | 8.34 | 8.21 | 6.90 |
| 10 | FOX | 8.17 | 9.34 | 9.21 |
| 11 | WOA | 9.14 | 9.45 | 9.41 |

| Rank | Algorithm | Best | Mean | SD |
|---|---|---|---|---|
| 1 | AGRO | 4.3 | 3.4 | 4.5 |
| 2 | GRO | 3.7 | 3.6 | 4.9 |
| 3 | PSO | 3.4 | 4.3 | 4.7 |
| 4 | INFO | 4.3 | 4.7 | 5.9 |
| 5 | BLSO | 4.9 | 5.0 | 4.9 |
| 6 | GSA | 5.8 | 5.3 | 4.9 |
| 7 | DO | 6.2 | 6.4 | 6.2 |
| 8 | SOA | 7.6 | 6.6 | 6.8 |
| 9 | FOX | 7.0 | 6.9 | 6.4 |
| 10 | GWO | 7.8 | 8.6 | 7.7 |
| 11 | WOA | 9.7 | 10.0 | 8.4 |

| Rank | Algorithm | Best | Mean |
|---|---|---|---|
| 1 | AGRO | 98.13% | 98.14% |
| 2 | GRO | 97.65% | 98.05% |
| 3 | INFO | 98.52% | 89.43% |
| 4 | BLSO | 98.92% | 84.54% |
| 5 | DO | 97.64% | 80.72% |
| 6 | GSA | 84.53% | 76.32% |
| 7 | PSO | 95.42% | 73.46% |
| 8 | WOA | 87.39% | 73.18% |
| 9 | GWO | 77.66% | 71.65% |
| 10 | FOX | 96.42% | 66.42% |
| 11 | SOA | 20.71% | 51.93% |

| Rank | Algorithm | Best | Mean |
|---|---|---|---|
| 1 | AGRO | 94.19% | 98.58% |
| 2 | GRO | 95.39% | 98.15% |
| 3 | INFO | 85.00% | 83.33% |
| 4 | PSO | 85.69% | 80.20% |
| 5 | SOA | 80.73% | 76.39% |
| 6 | BLSO | 81.98% | 75.83% |
| 7 | DO | 75.50% | 74.88% |
| 8 | GWO | 74.15% | 69.67% |
| 9 | GSA | 30.45% | 45.25% |
| 10 | WOA | 52.99% | 38.83% |
| 11 | FOX | 54.51% | 25.28% |

| Rank | Algorithm | Best | Mean |
|---|---|---|---|
| 1 | AGRO | 90.95% | 92.05% |
| 2 | GRO | 92.16% | 90.35% |
| 3 | BLSO | 81.42% | 77.99% |
| 4 | PSO | 88.69% | 77.78% |
| 5 | SOA | 70.80% | 74.27% |
| 6 | INFO | 81.71% | 73.96% |
| 7 | DO | 80.09% | 73.53% |
| 8 | GSA | 58.22% | 59.43% |
| 9 | WOA | 43.32% | 47.57% |
| 10 | GWO | 62.07% | 47.24% |
| 11 | FOX | 52.99% | 42.00% |

| Function | Metric | BLSO | AGRO | GRO | INFO | FOX |
|---|---|---|---|---|---|---|
| | Mean | 0.00E+00 | 6.19E-76 | 2.54E-131 | 5.67E-55 | 0.00E+00 |
| | SD | 0.00E+00 | 2.88E-75 | 9.94E-131 | 2.53E-55 | 0.00E+00 |
| | Mean | 0.00E+00 | 8.11E-48 | 4.51E-83 | 2.92E-27 | 0.00E+00 |
| | SD | 0.00E+00 | 2.33E-47 | 1.74E-82 | 7.29E-28 | 0.00E+00 |
| | Mean | 0.00E+00 | 5.47E-04 | 2.42E-01 | 8.65E-52 | 0.00E+00 |
| | SD | 0.00E+00 | 2.05E-03 | 8.66E-01 | 1.67E-51 | 0.00E+00 |
| | Mean | 0.00E+00 | 9.01E-05 | 2.84E-04 | 5.23E-28 | 0.00E+00 |
| | SD | 0.00E+00 | 2.89E-04 | 1.26E-03 | 2.75E-28 | 0.00E+00 |
| | Mean | 2.53E+01 | 2.55E+01 | 2.60E+01 | 1.97E+01 | 2.88E+01 |
| | SD | 1.04E-01 | 3.25E-01 | 1.92E-01 | 8.76E-01 | 4.07E-02 |
| | Mean | 2.50E-15 | 1.75E-04 | 1.32E-03 | 6.30E-12 | 2.73E-03 |
| | SD | 6.96E-16 | 1.94E-04 | 1.86E-03 | 3.45E-11 | 1.13E-03 |
| | Mean | 4.88E-05 | 2.20E-03 | 3.82E-03 | 5.68E-04 | 5.60E-05 |
| | SD | 5.47E-05 | 1.42E-03 | 2.66E-03 | 4.05E-04 | 5.19E-05 |
| | Mean | -1.26E+04 | -9.09E+03 | -8.96E+03 | -9.05E+03 | -7.01E+03 |
| | SD | 8.22E-11 | 7.67E+02 | 6.12E+02 | 7.39E+02 | 6.10E+02 |
| | Mean | 0.00E+00 | 0.00E+00 | 1.10E+00 | 0.00E+00 | 0.00E+00 |
| | SD | 0.00E+00 | 0.00E+00 | 5.04E+00 | 0.00E+00 | 0.00E+00 |
| | Mean | 4.44E-16 | 6.60E-15 | 4.12E-15 | 4.44E-16 | 4.44E-16 |
| | SD | 0.00E+00 | 1.60E-15 | 6.49E-16 | 0.00E+00 | 0.00E+00 |
| | Mean | 0.00E+00 | 8.34E-04 | 0.00E+00 | 0.00E+00 | 0.00E+00 |
| | SD | 0.00E+00 | 3.23E-03 | 0.00E+00 | 0.00E+00 | 0.00E+00 |
| | Mean | 3.13E-16 | 7.91E-06 | 4.99E-05 | 4.30E-15 | 6.84E-05 |
| | SD | 1.02E-16 | 1.06E-05 | 4.45E-05 | 1.51E-14 | 2.05E-05 |
| | Mean | 3.33E-15 | 2.19E-02 | 1.66E-02 | 3.54E-02 | 1.00E-01 |
| | SD | 1.15E-15 | 4.10E-02 | 3.37E-02 | 4.73E-02 | 5.43E-01 |
| | Mean | 9.98E-01 | 9.98E-01 | 9.98E-01 | 1.91E+00 | 1.06E+01 |
| | SD | 1.13E-16 | 0.00E+00 | 0.00E+00 | 2.50E+00 | 3.97E+00 |
| | Mean | 8.24E-04 | 3.10E-04 | 3.21E-04 | 1.77E-03 | 3.71E-04 |
| | SD | 3.80E-04 | 4.38E-06 | 3.02E-05 | 5.06E-03 | 2.32E-04 |
| | Mean | -1.03E+00 | -1.03E+00 | -1.03E+00 | -1.03E+00 | -9.77E-01 |
| | SD | 5.05E-16 | 6.78E-16 | 6.78E-16 | 6.65E-16 | 2.07E-01 |
| | Mean | 3.98E-01 | 3.98E-01 | 3.98E-01 | 3.98E-01 | 3.98E-01 |
| | SD | 0.00E+00 | 0.00E+00 | 0.00E+00 | 0.00E+00 | 1.08E-10 |
| | Mean | 3.00E+00 | 3.00E+00 | 3.00E+00 | 3.00E+00 | 1.29E+01 |
| | SD | 2.50E-14 | 1.42E-15 | 1.26E-15 | 1.22E-15 | 2.51E+01 |
| | Mean | -3.86E+00 | -3.86E+00 | -3.86E+00 | -3.86E+00 | -3.86E+00 |
| | SD | 1.82E-15 | 2.71E-15 | 2.71E-15 | 2.71E-15 | 1.19E-07 |
| | Mean | -3.27E+00 | -3.32E+00 | -3.32E+00 | -3.27E+00 | -3.25E+00 |
| | SD | 5.99E-02 | 2.19E-14 | 4.20E-10 | 6.03E-02 | 5.96E-02 |
| | Mean | -5.76E+00 | -1.02E+01 | -1.02E+01 | -9.15E+00 | -5.74E+00 |
| | SD | 2.38E+00 | 3.77E-08 | 6.33E-12 | 2.60E+00 | 1.76E+00 |
| | Mean | -6.24E+00 | -1.04E+01 | -1.04E+01 | -8.21E+00 | -5.62E+00 |
| | SD | 2.89E+00 | 1.48E-13 | 1.51E-15 | 3.43E+00 | 1.62E+00 |
| | Mean | -6.28E+00 | -1.05E+01 | -1.05E+01 | -9.51E+00 | -6.03E+00 |
| | SD | 3.21E+00 | 1.98E-15 | 1.14E-15 | 2.66E+00 | 2.05E+00 |

| Function | Metric | AGRO | GRO | INFO | PSO | SOA |
|---|---|---|---|---|---|---|
| | Mean | 5.47E+03 | 1.52E+04 | 1.00E+02 | 2.00E+03 | 2.17E+04 |
| | SD | 1.33E+04 | 3.51E+04 | 1.38E-02 | 1.89E+03 | 7.08E+03 |
| | Mean | 3.02E+02 | 3.04E+02 | 3.00E+02 | 3.00E+02 | 5.78E+03 |
| | SD | 4.41E+00 | 7.28E+00 | 4.01E-11 | 8.77E-14 | 3.33E+03 |
| | Mean | 4.03E+02 | 4.03E+02 | 4.00E+02 | 4.03E+02 | 4.05E+02 |
| | SD | 1.70E+00 | 1.77E+00 | 1.71E-01 | 9.76E-01 | 2.03E+00 |
| | Mean | 5.09E+02 | 5.07E+02 | 5.25E+02 | 5.23E+02 | 5.14E+02 |
| | SD | 2.92E+00 | 2.62E+00 | 1.02E+01 | 9.97E+00 | 8.33E+00 |
| | Mean | 6.00E+02 | 6.00E+02 | 6.01E+02 | 6.03E+02 | 6.00E+02 |
| | SD | 3.34E-04 | 8.90E-04 | 1.37E+00 | 8.03E+00 | 2.80E-01 |
| | Mean | 7.18E+02 | 7.19E+02 | 7.37E+02 | 7.27E+02 | 7.22E+02 |
| | SD | 2.61E+00 | 3.71E+00 | 1.31E+01 | 7.18E+00 | 5.75E+00 |
| | Mean | 8.09E+02 | 8.08E+02 | 8.21E+02 | 8.19E+02 | 8.08E+02 |
| | SD | 3.90E+00 | 2.87E+00 | 9.89E+00 | 7.85E+00 | 4.32E+00 |
| | Mean | 9.00E+02 | 9.00E+02 | 9.26E+02 | 9.00E+02 | 9.00E+02 |
| | SD | 1.94E-06 | 1.77E-05 | 4.96E+01 | 5.90E-01 | 2.67E-02 |
| | Mean | 1.32E+03 | 1.37E+03 | 1.77E+03 | 1.73E+03 | 1.69E+03 |
| | SD | 2.19E+02 | 1.87E+02 | 3.08E+02 | 2.83E+02 | 2.46E+02 |
| | Mean | 1.10E+03 | 1.10E+03 | 1.12E+03 | 1.13E+03 | 1.11E+03 |
| | SD | 1.11E+00 | 1.04E+00 | 1.78E+01 | 2.50E+01 | 5.16E+00 |
| | Mean | 4.06E+04 | 4.78E+04 | 7.50E+03 | 1.28E+04 | 1.10E+06 |
| | SD | 3.55E+04 | 5.77E+04 | 1.21E+04 | 1.17E+04 | 9.80E+05 |
| | Mean | 1.97E+03 | 2.06E+03 | 1.47E+03 | 1.02E+04 | 9.66E+03 |
| | SD | 3.07E+02 | 4.78E+02 | 1.92E+02 | 7.54E+03 | 4.50E+03 |
| | Mean | 1.44E+03 | 1.44E+03 | 1.45E+03 | 2.41E+03 | 5.17E+03 |
| | SD | 1.26E+01 | 1.38E+01 | 2.15E+01 | 9.86E+02 | 2.89E+03 |
| | Mean | 1.61E+03 | 1.62E+03 | 1.55E+03 | 2.74E+03 | 4.84E+03 |
| | SD | 4.81E+01 | 4.58E+01 | 4.59E+01 | 1.43E+03 | 2.55E+03 |
| | Mean | 1.61E+03 | 1.61E+03 | 1.76E+03 | 1.86E+03 | 1.82E+03 |
| | SD | 3.03E+01 | 2.18E+01 | 1.36E+02 | 1.07E+02 | 1.22E+02 |
| | Mean | 1.73E+03 | 1.73E+03 | 1.76E+03 | 1.78E+03 | 1.76E+03 |
| | SD | 9.52E+00 | 6.45E+00 | 4.81E+01 | 7.12E+01 | 3.22E+01 |
| | Mean | 3.31E+03 | 3.37E+03 | 1.86E+03 | 1.04E+04 | 6.94E+03 |
| | SD | 1.15E+03 | 1.08E+03 | 4.52E+01 | 8.09E+03 | 4.84E+03 |
| | Mean | 1.94E+03 | 1.96E+03 | 1.92E+03 | 4.78E+03 | 6.49E+03 |
| | SD | 1.83E+01 | 3.35E+01 | 1.48E+01 | 3.81E+03 | 3.95E+03 |
| | Mean | 2.01E+03 | 2.01E+03 | 2.06E+03 | 2.10E+03 | 2.09E+03 |
| | SD | 9.19E+00 | 1.02E+01 | 5.46E+01 | 6.26E+01 | 6.56E+01 |
| | Mean | 2.25E+03 | 2.27E+03 | 2.31E+03 | 2.30E+03 | 2.31E+03 |
| | SD | 5.44E+01 | 5.32E+01 | 4.46E+01 | 4.66E+01 | 1.80E+01 |
| | Mean | 2.29E+03 | 2.29E+03 | 2.30E+03 | 2.30E+03 | 2.30E+03 |
| | SD | 2.26E+01 | 2.54E+01 | 1.13E+00 | 7.88E-01 | 1.03E+00 |
| | Mean | 2.61E+03 | 2.61E+03 | 2.62E+03 | 2.63E+03 | 2.63E+03 |
| | SD | 4.11E+00 | 3.54E+00 | 1.08E+01 | 1.39E+01 | 1.55E+01 |
| | Mean | 2.67E+03 | 2.67E+03 | 2.75E+03 | 2.73E+03 | 2.75E+03 |
| | SD | 1.10E+02 | 1.02E+02 | 1.34E+01 | 7.84E+01 | 1.66E+01 |
| | Mean | 2.90E+03 | 2.90E+03 | 2.93E+03 | 2.93E+03 | 2.93E+03 |
| | SD | 8.18E+00 | 8.53E+00 | 2.26E+01 | 2.33E+01 | 2.05E+01 |
| | Mean | 2.90E+03 | 2.87E+03 | 3.22E+03 | 3.01E+03 | 3.33E+03 |
| | SD | 1.83E+01 | 7.76E+01 | 4.57E+02 | 3.68E+02 | 4.76E+02 |
| | Mean | 3.09E+03 | 3.09E+03 | 3.10E+03 | 3.12E+03 | 3.12E+03 |
| | SD | 2.45E+00 | 2.22E+00 | 1.91E+01 | 3.47E+01 | 2.34E+01 |
| | Mean | 3.14E+03 | 3.14E+03 | 3.28E+03 | 3.29E+03 | 3.34E+03 |
| | SD | 9.64E+01 | 9.64E+01 | 1.48E+02 | 1.44E+02 | 1.11E+02 |
| | Mean | 3.16E+03 | 3.16E+03 | 3.22E+03 | 3.23E+03 | 3.23E+03 |
| | SD | 1.28E+01 | 1.39E+01 | 6.67E+01 | 5.42E+01 | 5.24E+01 |
| | Mean | 2.12E+04 | 1.59E+04 | 5.34E+05 | 2.89E+05 | 2.81E+05 |
| | SD | 1.92E+04 | 9.01E+03 | 7.72E+05 | 4.92E+05 | 4.18E+05 |

| Function | Metric | AGRO | GRO | PSO | INFO | BLSO |
|---|---|---|---|---|---|---|
| | Mean | 1.09E+08 | 1.16E+08 | 1.66E+08 | 1.56E+05 | 4.08E+04 |
| | SD | 1.32E+08 | 1.36E+08 | 1.35E+08 | 2.34E+05 | 1.49E+03 |
| | Mean | 1.73E+01 | 1.73E+01 | 1.73E+01 | 1.73E+01 | 1.73E+01 |
| | SD | 6.66E-15 | 6.53E-15 | 7.23E-15 | 7.23E-15 | 6.60E-10 |
| | Mean | 1.27E+01 | 1.27E+01 | 1.27E+01 | 1.27E+01 | 1.27E+01 |
| | SD | 4.81E-10 | 1.73E-10 | 3.61E-15 | 3.61E-15 | 3.61E-15 |
| | Mean | 7.85E+00 | 8.21E+00 | 2.18E+01 | 4.43E+01 | 4.49E+01 |
| | SD | 2.65E+00 | 3.34E+00 | 1.11E+01 | 3.27E+01 | 2.39E+01 |
| | Mean | 1.03E+00 | 1.04E+00 | 1.13E+00 | 1.12E+00 | 1.24E+00 |
| | SD | 1.59E-02 | 2.33E-02 | 8.91E-02 | 9.35E-02 | 1.46E-01 |
| | Mean | 7.98E+00 | 7.70E+00 | 5.71E+00 | 5.25E+00 | 3.11E+00 |
| | SD | 9.83E-01 | 1.07E+00 | 1.65E+00 | 2.15E+00 | 1.12E+00 |
| | Mean | 1.04E+02 | 7.66E+01 | 1.18E+02 | 2.76E+02 | 1.05E+02 |
| | SD | 1.01E+02 | 1.16E+02 | 1.29E+02 | 2.19E+02 | 1.23E+02 |
| | Mean | 2.68E+00 | 2.89E+00 | 5.09E+00 | 4.94E+00 | 5.20E+00 |
| | SD | 6.86E-01 | 7.91E-01 | 5.08E-01 | 1.09E+00 | 6.93E-01 |
| | Mean | 2.38E+00 | 2.37E+00 | 2.36E+00 | 2.40E+00 | 2.55E+00 |
| | SD | 2.35E-02 | 2.00E-02 | 1.68E-02 | 4.08E-02 | 1.20E-01 |
| | Mean | 1.50E+01 | 1.60E+01 | 1.94E+01 | 2.00E+01 | 2.00E+01 |
| | SD | 8.70E+00 | 7.80E+00 | 3.66E+00 | 3.48E-02 | 2.15E-02 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).