Submitted: 19 January 2026

Posted: 21 January 2026

Abstract

Keywords:

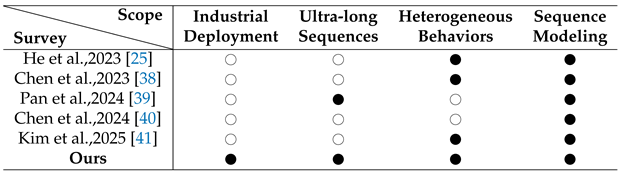

1. Introduction

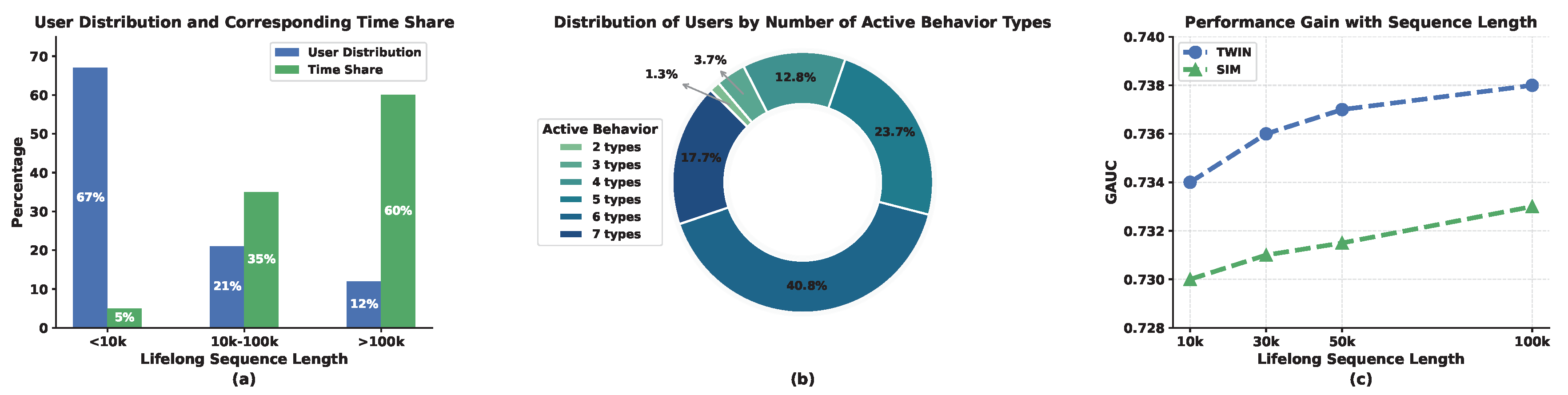

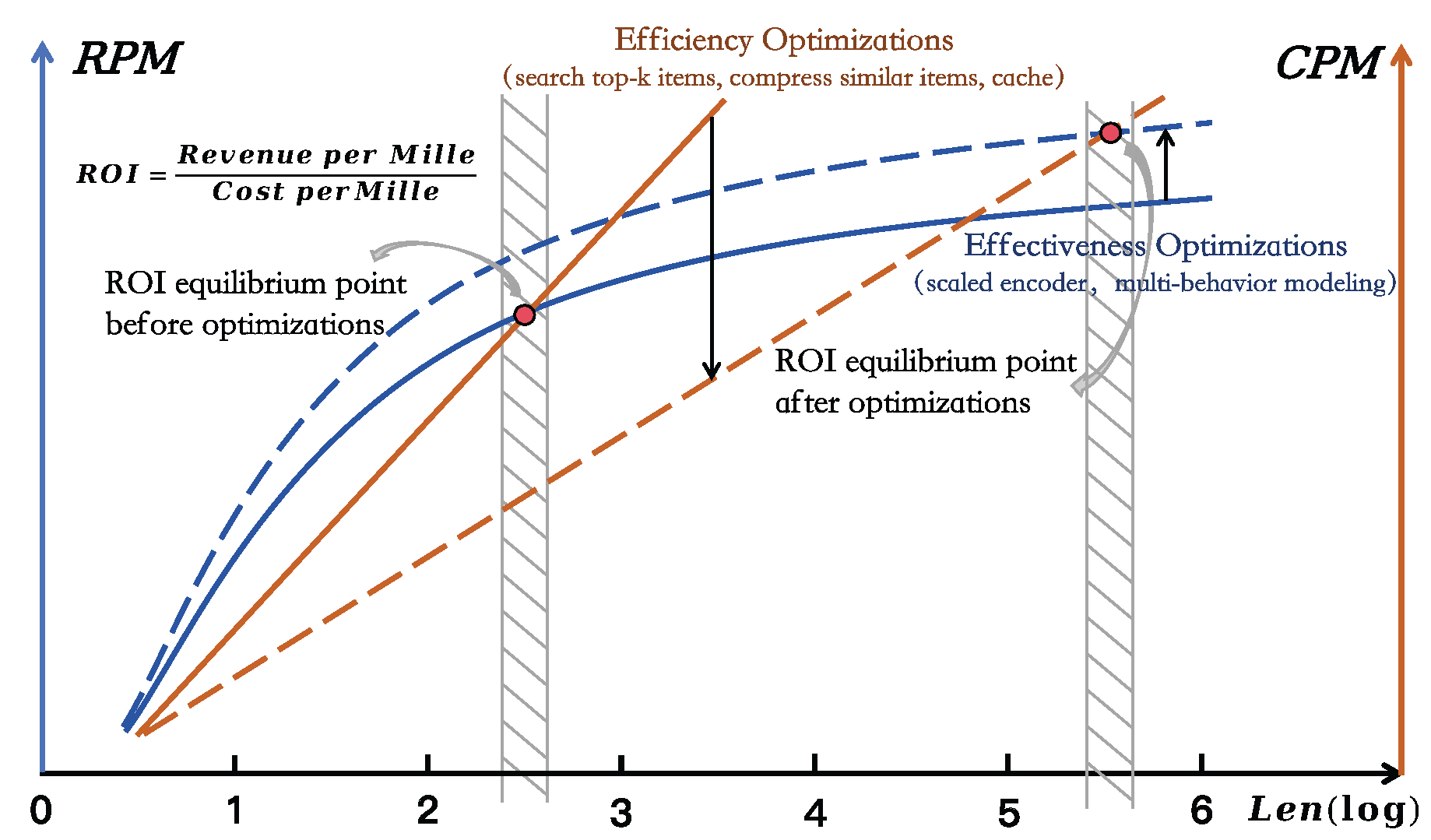

1.1. Efficiency-Effectiveness Balance in RS

1.2. Contribution of This Survey

- We provide the first unified, industry-oriented survey of User Lifelong Behavior Modeling (ULBM) in large-scale recommender systems. From a lifecycle-aware perspective, we systematically review how ultra-long, heterogeneous user behavior sequences are modeled under real-world efficiency constraints, bridging the gap between academic formulations and industrial deployment.

- We formalize the Efficiency–Effectiveness Balance (EEB) as a central analytical framework for characterizing and comparing ULBM methods. By viewing existing approaches through the lens of constrained optimization, we reveal how different modeling paradigms embody distinct trade-offs between computational efficiency and representation expressiveness, offering a principled basis for method comparison.

- We distill common design patterns, implicit assumptions, and inherent limitations across industrial ULBM approaches, and summarize practical deployment insights from real-world systems. Based on these observations, we identify open challenges and promising research directions to guide future advances in lifelong user modeling.
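The constrained-optimization reading of EEB mentioned in the second contribution can be sketched as follows. This is an illustrative form only, not the survey's own formulation: every symbol here ($S_u$, $\phi$, $f_\theta$, $\mathcal{E}$, $\mathcal{C}$, $B$) is an assumption introduced for this sketch.

$$
\max_{\theta,\,\phi}\; \mathcal{E}\big(f_\theta(\phi(S_u),\, x)\big)
\quad \text{s.t.} \quad
\mathcal{C}(f_\theta,\, \phi) \le B
$$

Here $S_u$ stands for the lifelong behavior sequence of user $u$, $x$ for the candidate item and context, $\phi$ for a hypothetical sequence-reduction operator (for example, a search or compression step), $f_\theta$ for the ranking model, $\mathcal{E}$ for an effectiveness measure, $\mathcal{C}$ for a serving or training cost such as latency or memory, and $B$ for the available budget. Under this reading, methods differ mainly in whether they relax the constraint (lowering $\mathcal{C}$ for a fixed model) or improve the objective (raising $\mathcal{E}$ within a fixed $B$).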

1.3. Survey Organization

2. Background and Preliminaries

2.1. Problem Formulation of ULBM

2.2. ULBM in Ranking System Deployment

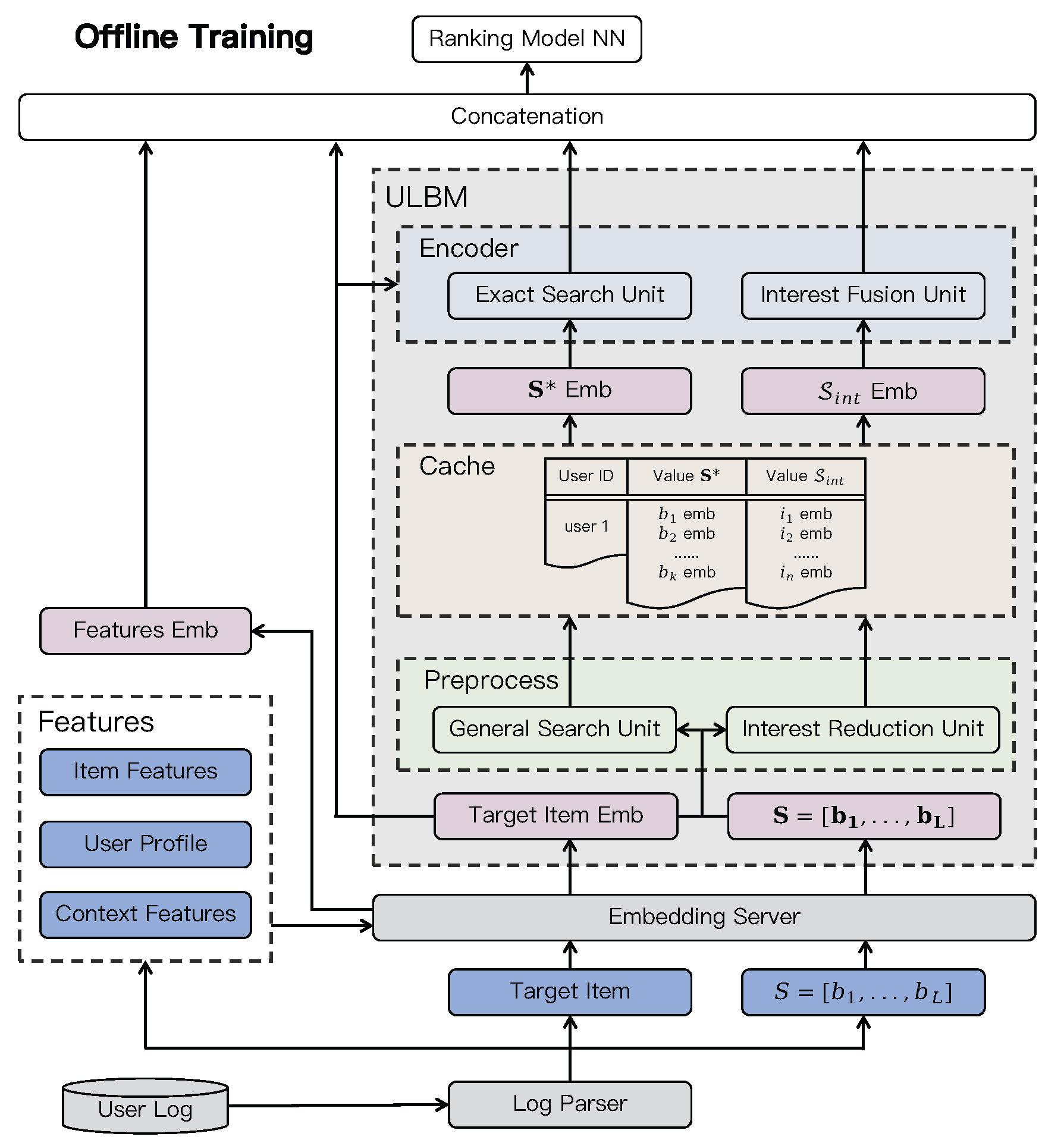

2.2.1. Offline Training

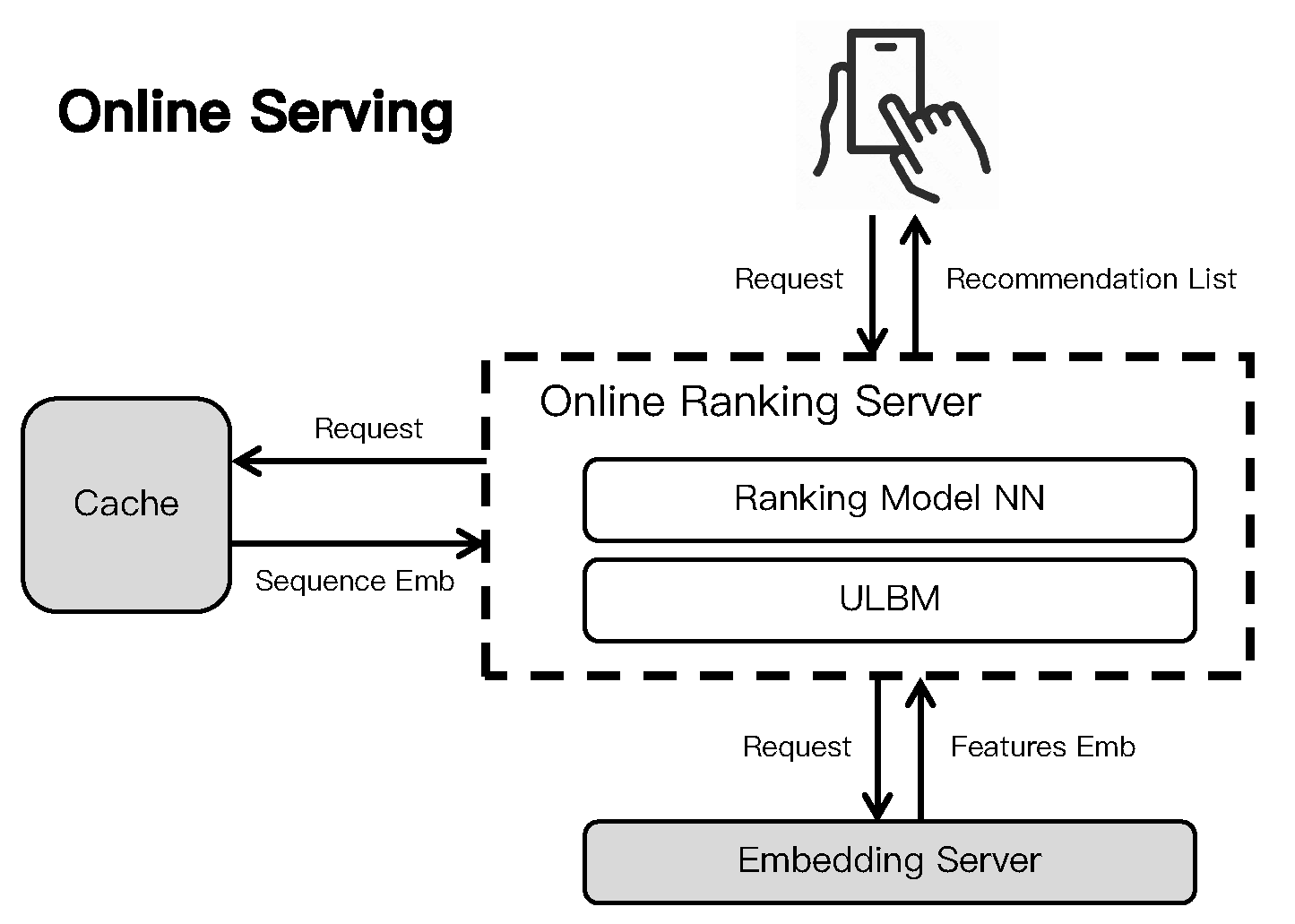

2.2.2. Online Serving

2.3. Fundamental Techniques for Behavior Modeling

2.3.1. Pooling-based Methods

2.3.2. Attention-based Methods

2.3.3. Graph-based Methods

2.3.4. Search-based and Compression-based Methods

3. Efficiency Optimizations for ULBM

3.1. Algorithmic Optimizations

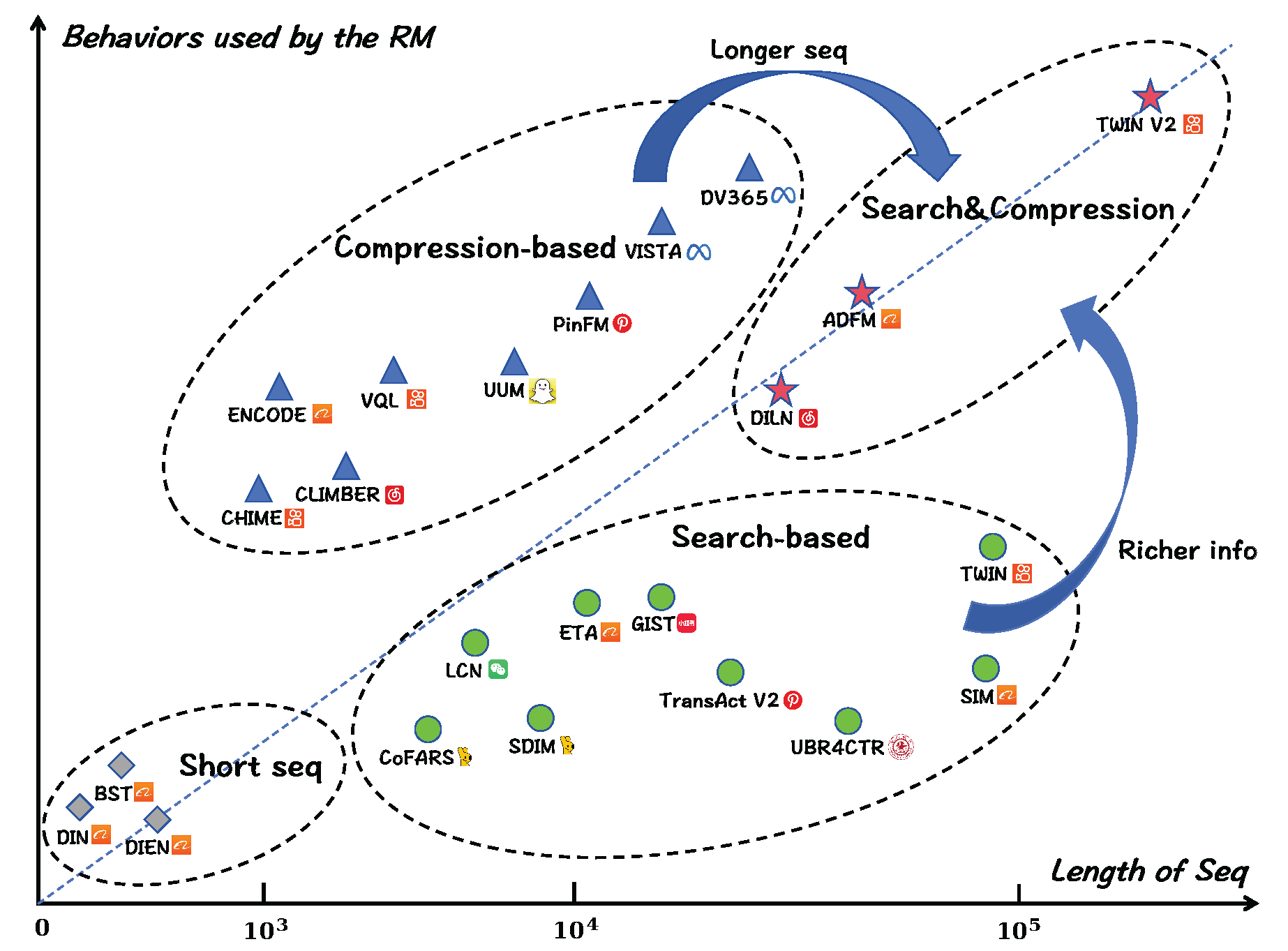

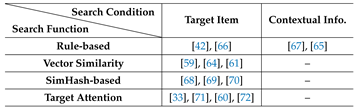

3.1.1. Search-Based Methods

3.1.2. Compression-Based Methods

3.1.3. Summary

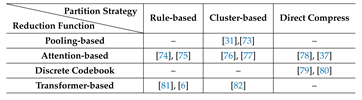

3.2. Infrastructure Optimizations

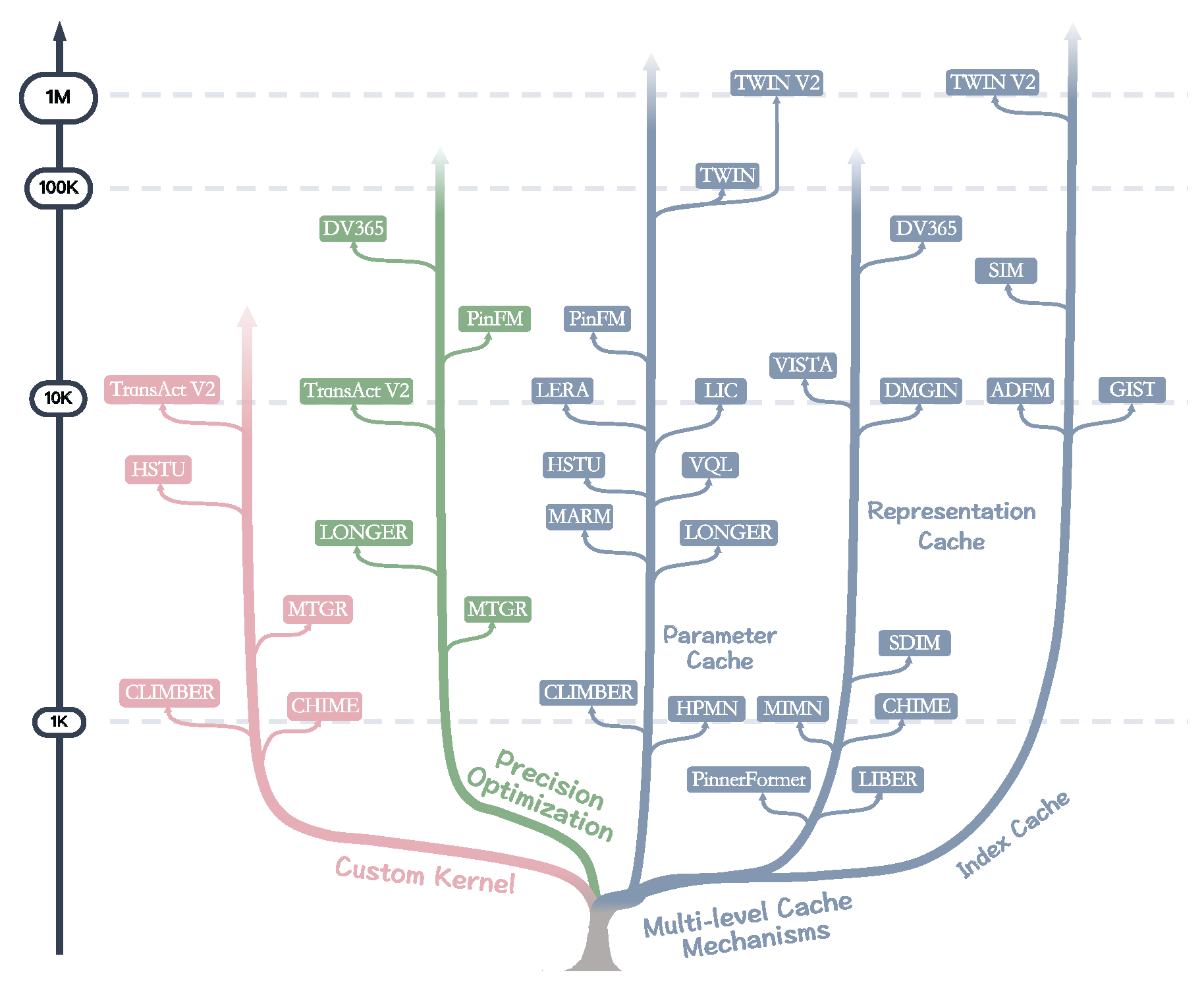

3.2.1. Custom Kernel

3.2.2. Precision Optimization

3.2.3. Multi-level Cache Mechanisms

3.2.4. Summary

3.3. Discussion

4. Effectiveness Optimizations for ULBM

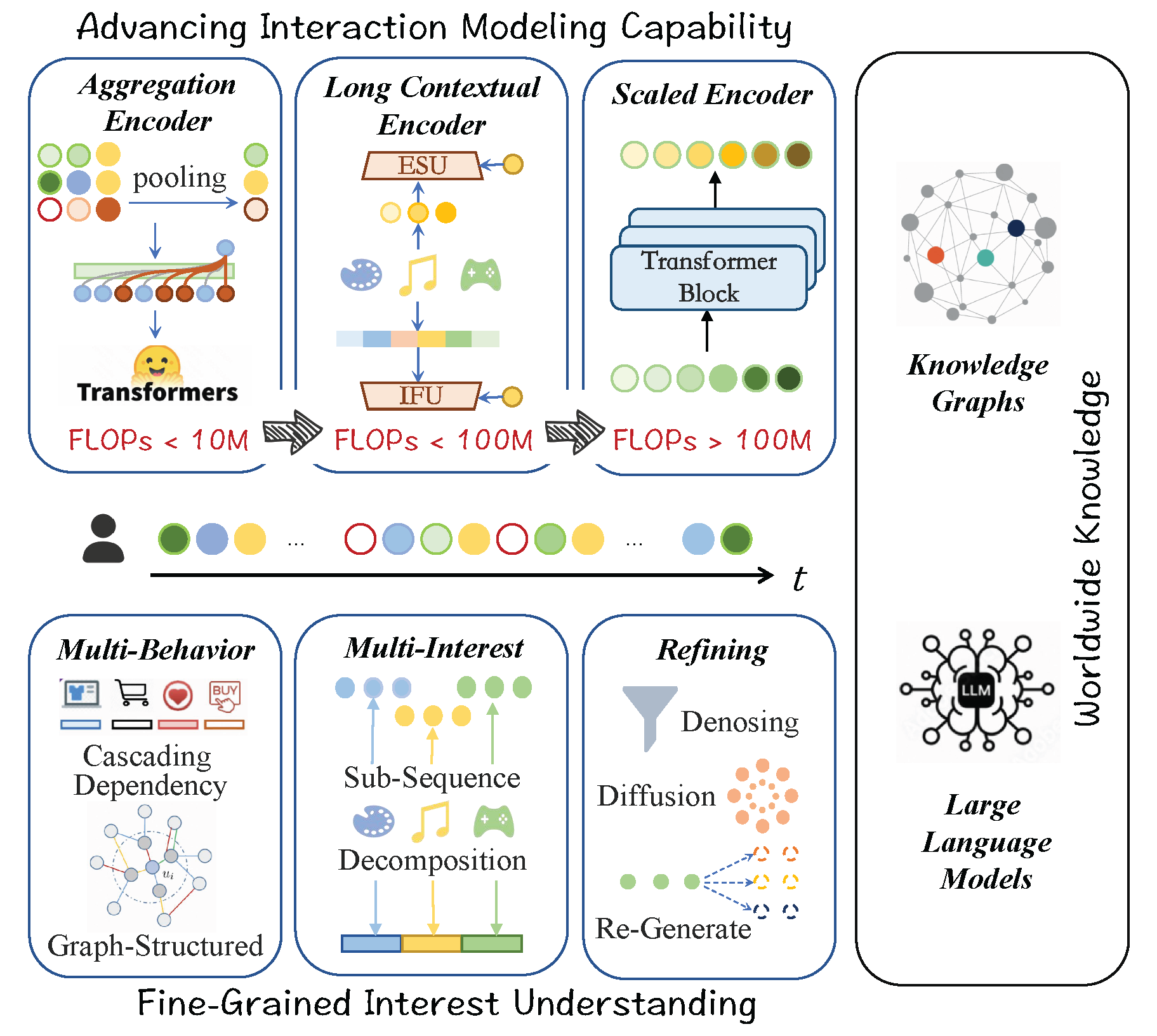

4.1. Advancing Interaction Modeling Capability

4.1.1. Aggregation Encoder

4.1.2. Long Contextual Encoder

4.1.3. Scaled Encoder

4.1.4. Summary

4.2. Fine-Grained User Interest Understanding

4.2.1. Multi-Behavior Modeling

4.2.2. Multi-Interest Modeling

4.2.3. Behavior Sequence Denoising

4.2.4. Behavior Sequence Generating

4.2.5. Summary

4.3. Incorporating Worldwide Knowledge

4.3.1. E

4.3.2. Large Language Models

4.3.3. Summary

4.4. Discussion

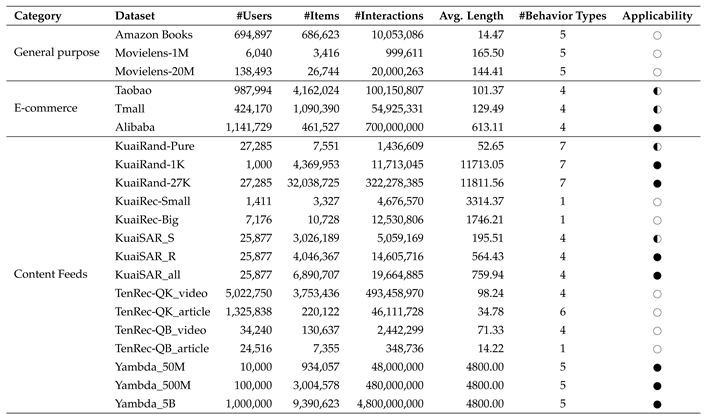

5. Dataset

5.1. General Purpose Datasets

5.2. E-Commerce Datasets

5.3. Content Feeds Datasets

6. Future Work

6.1. Unified Cross Stage ULBM

6.2. LLMs Augmented ULBM

6.3. Large-scale and End-to-End ULBM

6.4. Unified Cross Service ULBM

7. Conclusions


- Xie, W.; Wang, H.; Zhang, L.; Zhou, R.; Lian, D.; Chen, E. Breaking determinism: Fuzzy modeling of sequential recommendation using discrete state space diffusion model. Advances in Neural Information Processing Systems 2024, 37, 22720–22744. [Google Scholar]

- Tang, J.; Dai, S.; Shi, T.; Xu, J.; Chen, X.; Chen, W.; Jian, W.; Jiang, Y. Think Before Recommend: Unleashing the Latent Reasoning Power for Sequential Recommendation. CoRR abs/2503.22675 (2025). doi: 10. 48550. arXiv preprint ARXIV.2503.22675 2025.

- Dai, S.; Tang, J.; Wu, J.; Wang, K.; Zhu, Y.; Chen, B.; Hong, B.; Zhao, Y.; Fu, C.; Wu, K.; et al. OnePiece: Bringing Context Engineering and Reasoning to Industrial Cascade Ranking System. arXiv 2025, arXiv:2509.18091. [Google Scholar] [CrossRef]

- Han, Y.; Wang, H.; Wang, K.; Wu, L.; Li, Z.; Guo, W.; Liu, Y.; Lian, D.; Chen, E. End4rec: Efficient noise-decoupling for multi-behavior sequential recommendation. arXiv 2024, arXiv:2403.17603. [Google Scholar]

- Zhang, C.; Han, Q.; Chen, R.; Zhao, X.; Tang, P.; Song, H. Ssdrec: Self-augmented sequence denoising for sequential recommendation. In Proceedings of the 2024 IEEE 40th International Conference on Data Engineering (ICDE), 2024; IEEE; pp. 803–815. [Google Scholar]

- Wang, Y.; Liu, Z.; Yang, L.; Yu, P.S. Conditional denoising diffusion for sequential recommendation. In Proceedings of the Pacific-Asia conference on knowledge discovery and data mining, 2024; Springer; pp. 156–169. [Google Scholar]

- Roychowdhury, S.; Wang, D.; Ge, Q.; Mu, J.; Reddy, S. COFFEE: COdesign Framework for Feature Enriched Embeddings in Ads-Ranking Systems. arXiv 2026, arXiv:2601.02807. [Google Scholar] [CrossRef]

- Wang, W.; Xu, Y.; Feng, F.; Lin, X.; He, X.; Chua, T.S. Diffusion recommender model. In Proceedings of the Proceedings of the 46th international ACM SIGIR conference on research and development in information retrieval, 2023; pp. 832–841. [Google Scholar]

- Xie, W.; Zhou, R.; Wang, H.; Shen, T.; Chen, E. Bridging user dynamics: Transforming sequential recommendations with schrödinger bridge and diffusion models. In Proceedings of the Proceedings of the 33rd ACM International Conference on Information and Knowledge Management, 2024; pp. 2618–2628. [Google Scholar]

- Liu, Q.; Yan, F.; Zhao, X.; Du, Z.; Guo, H.; Tang, R.; Tian, F. Diffusion augmentation for sequential recommendation. In Proceedings of the Proceedings of the 32nd ACM International conference on information and knowledge management, 2023; pp. 1576–1586. [Google Scholar]

- Yin, M.; Wang, H.; Guo, W.; Liu, Y.; Zhang, S.; Zhao, S.; Lian, D.; Chen, E. Dataset regeneration for sequential recommendation. In Proceedings of the Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2024; pp. 3954–3965. [Google Scholar]

- Hogan, A.; Blomqvist, E.; Cochez, M.; d’Amato, C.; Melo, G.D.; Gutierrez, C.; Kirrane, S.; Gayo, J.E.L.; Navigli, R.; Neumaier, S.; et al. Knowledge graphs. ACM Computing Surveys (Csur) 2021, 54, 1–37. [Google Scholar]

- Wang, X.; He, X.; Cao, Y.; Liu, M.; Chua, T.S. Kgat: Knowledge graph attention network for recommendation. In Proceedings of the Proceedings of the 25th ACM SIGKDD international conference on knowledge discovery & data mining, 2019; pp. 950–958. [Google Scholar]

- Yang, Y.; Huang, C.; Xia, L.; Li, C. Knowledge graph contrastive learning for recommendation. In Proceedings of the Proceedings of the 45th international ACM SIGIR conference on research and development in information retrieval, 2022; pp. 1434–1443. [Google Scholar]

- Xuan, H.; Liu, Y.; Li, B.; Yin, H. Knowledge enhancement for contrastive multi-behavior recommendation. In Proceedings of the Proceedings of the sixteenth ACM international conference on web search and data mining, 2023; pp. 195–203. [Google Scholar]

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Advances in neural information processing systems 2020, 33, 1877–1901. [Google Scholar]

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. Llama: Open and efficient foundation language models. arXiv 2023, arXiv:2302.13971. [Google Scholar] [CrossRef]

- Wu, L.; Zheng, Z.; Qiu, Z.; Wang, H.; Gu, H.; Shen, T.; Qin, C.; Zhu, C.; Zhu, H.; Liu, Q.; et al. A survey on large language models for recommendation. World Wide Web 2024, 27, 60. [Google Scholar] [CrossRef]

- Wang, Y.; Xun, J.; Hong, M.; Zhu, J.; Jin, T.; Lin, W.; Li, H.; Li, L.; Xia, Y.; Zhao, Z.; et al. Eager: Two-stream generative recommender with behavior-semantic collaboration. In Proceedings of the Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2024; pp. 3245–3254. [Google Scholar]

- Lin, J.; Shan, R.; Zhu, C.; Du, K.; Chen, B.; Quan, S.; Tang, R.; Yu, Y.; Zhang, W. Rella: Retrieval-enhanced large language models for lifelong sequential behavior comprehension in recommendation. Proceedings of the Proceedings of the ACM Web Conference 2024, 2024, 3497–3508. [Google Scholar]

- Shan, R.; Zhu, J.; Lin, J.; Zhu, C.; Chen, B.; Tang, R.; Yu, Y.; Zhang, W. Full-Stack Optimized Large Language Models for Lifelong Sequential Behavior Comprehension in Recommendation. ACM Transactions on Recommender Systems, 2025. [Google Scholar]

- Guo, C.; She, J.; Cai, K.; Wang, S.; Hu, Q.; Luo, Q.; Zhou, G.; Gai, K. MISS: Multi-Modal Tree Indexing and Searching with Lifelong Sequential Behavior for Retrieval Recommendation. In Proceedings of the Proceedings of the 34th ACM International Conference on Information and Knowledge Management, 2025; pp. 5683–5690. [Google Scholar]

- Xi, Y.; Liu, W.; Lin, J.; Cai, X.; Zhu, H.; Zhu, J.; Chen, B.; Tang, R.; Zhang, W.; Yu, Y. Towards open-world recommendation with knowledge augmentation from large language models. In Proceedings of the Proceedings of the 18th ACM Conference on Recommender Systems, 2024; pp. 12–22. [Google Scholar]

- Liu, Z.; Wang, S.; Wang, X.; Zhang, R.; Deng, J.; Bao, H.; Zhang, J.; Li, W.; Zheng, P.; Wu, X.; et al. Onerec-think: In-text reasoning for generative recommendation. arXiv 2025, arXiv:2510.11639. [Google Scholar]

- Kong, X.; Jiang, J.; Liu, B.; Xu, Z.; Zhu, H.; Xu, J.; Zheng, B.; Wu, J.; Wang, X. Think before Recommendation: Autonomous Reasoning-enhanced Recommender. arXiv 2025, arXiv:2510.23077. [Google Scholar] [CrossRef]

- McAuley, J.; Targett, C.; Shi, Q.; Van Den Hengel, A. Image-based recommendations on styles and substitutes. In Proceedings of the Proceedings of the 38th international ACM SIGIR conference on research and development in information retrieval, 2015; pp. 43–52. [Google Scholar]

- Harper, F.M.; Konstan, J.A. The movielens datasets: History and context. Acm transactions on interactive intelligent systems (tiis) 2015, 5, 1–19. [Google Scholar] [CrossRef]

- Tianchi, *!!! REPLACE !!!*. User Behavior Data from Taobao for Recommendation. 2018. [Google Scholar]

- Tianchi, *!!! REPLACE !!!*. IJCAI-15 Repeat Buyers Prediction Dataset, 2018.

- Tianchi, *!!! REPLACE !!!*. Taobao Display Advertising Click-Through Rate Prediction Dataset. 2018. [Google Scholar]

- Yuan, G.; Yuan, F.; Li, Y.; Kong, B.; Li, S.; Chen, L.; Yang, M.; YU, C.; Hu, B.; Li, Z.; et al. Tenrec: A Large-scale Multipurpose Benchmark Dataset for Recommender Systems. In Proceedings of the Thirty-sixth Conference on Neural Information Processing Systems Datasets and Benchmarks Track, 2022. [Google Scholar]

- Ploshkin, A.; Tytskiy, V.; Pismenny, A.; Baikalov, V.; Taychinov, E.; Permiakov, A.; Burlakov, D.; Krofto, E. Yambda-5B—A Large-Scale Multi-Modal Dataset for Ranking and Retrieval. In Proceedings of the Proceedings of the Nineteenth ACM Conference on Recommender Systems, 2025; pp. 894–901. [Google Scholar]

- Qiu, Z.; Wu, X.; Gao, J.; Fan, W. U-BERT: Pre-training user representations for improved recommendation. Proceedings of the Proceedings of the AAAI Conference on Artificial Intelligence 2021, 35, 4320–4327. [Google Scholar] [CrossRef]

- Wang, J.; Zeng, Z.; Wang, Y.; Wang, Y.; Lu, X.; Li, T.; Yuan, J.; Zhang, R.; Zheng, H.T.; Xia, S.T. Missrec: Pre-training and transferring multi-modal interest-aware sequence representation for recommendation. In Proceedings of the Proceedings of the 31st ACM International Conference on Multimedia, 2023; pp. 6548–6557. [Google Scholar]

- Ma, L.; Zhang, R.; Han, Y.; Yu, S.; Wang, Z.; Ning, Z.; Zhang, J.; Xu, P.; Li, P.; Ju, W.; et al. A comprehensive survey on vector database: Storage and retrieval technique, challenge. arXiv 2023, arXiv:2310.11703. [Google Scholar] [CrossRef]

- Pan, J.J.; Wang, J.; Li, G. Survey of vector database management systems. The VLDB Journal 2024, 33, 1591–1615. [Google Scholar] [CrossRef]

- Zhu, D.; Chen, J.; Shen, X.; Li, X.; Elhoseiny, M. Minigpt-4: Enhancing vision-language understanding with advanced large language models. arXiv 2023, arXiv:2304.10592. [Google Scholar]

- Thirunavukarasu, A.J.; Ting, D.S.J.; Elangovan, K.; Gutierrez, L.; Tan, T.F.; Ting, D.S.W. Large language models in medicine. Nature medicine 2023, 29, 1930–1940. [Google Scholar] [CrossRef]

- Demszky, D.; Yang, D.; Yeager, D.S.; Bryan, C.J.; Clapper, M.; Chandhok, S.; Eichstaedt, J.C.; Hecht, C.; Jamieson, J.; Johnson, M.; et al. Using large language models in psychology. Nature Reviews Psychology 2023, 2, 688–701. [Google Scholar] [CrossRef]

- Zhao, Z.; Fan, W.; Li, J.; Liu, Y.; Mei, X.; Wang, Y.; Wen, Z.; Wang, F.; Zhao, X.; Tang, J.; et al. Recommender systems in the era of large language models (llms). IEEE Transactions on Knowledge and Data Engineering 2024, 36, 6889–6907. [Google Scholar] [CrossRef]

- Vullam, N.; Vellela, S.S.; Reddy, V.; Rao, M.V.; SK, K.B.; et al. Multi-agent personalized recommendation system in e-commerce based on user. In Proceedings of the 2023 2nd International Conference on Applied Artificial Intelligence and Computing (ICAAIC), 2023; IEEE; pp. 1194–1199. [Google Scholar]

- Wang, Y.; Jiang, Z.; Chen, Z.; Yang, F.; Zhou, Y.; Cho, E.; Fan, X.; Lu, Y.; Huang, X.; Yang, Y. Recmind: Large language model powered agent for recommendation. Proceedings of the Findings of the Association for Computational Linguistics: NAACL 2024, 2024, 4351–4364. [Google Scholar]

- Shen, Z.; Zhang, M.; Zhao, H.; Yi, S.; Li, H. Efficient attention: Attention with linear complexities. In Proceedings of the Proceedings of the IEEE/CVF winter conference on applications of computer vision, 2021; pp. 3531–3539. [Google Scholar]

- Team, K.; Zhang, Y.; Lin, Z.; Yao, X.; Hu, J.; Meng, F.; Liu, C.; Men, X.; Yang, S.; Li, Z.; et al. Kimi linear: An expressive, efficient attention architecture. arXiv 2025, arXiv:2510.26692. [Google Scholar] [CrossRef]

- Gu, A.; Goel, K.; Ré, C. Efficiently modeling long sequences with structured state spaces. arXiv 2021, arXiv:2111.00396. [Google Scholar]

- Gu, A.; Dao, T. Mamba: Linear-time sequence modeling with selective state spaces. In Proceedings of the First conference on language modeling, 2024. [Google Scholar]

- Chronis, C.; Varlamis, I.; Himeur, Y.; Sayed, A.N.; Al-Hasan, T.M.; Nhlabatsi, A.; Bensaali, F.; Dimitrakopoulos, G. A survey on the use of federated learning in privacy-preserving recommender systems. IEEE Open Journal of the Computer Society 2024, 5, 227–247. [Google Scholar] [CrossRef]

- Reddy, M.S.; Karnati, H.; Sundari, L.M. Transformer based federated learning models for recommendation systems. IEEE Access, 2024. [Google Scholar]

- Feng, C.; Feng, D.; Huang, G.; Liu, Z.; Wang, Z.; Xia, X.G. Robust privacy-preserving recommendation systems driven by multimodal federated learning. IEEE Transactions on Neural Networks and Learning Systems 2024, 36, 8896–8910. [Google Scholar] [CrossRef]

| Model | Original Seq. Length | # Representations | Dimensions |
|---|---|---|---|
| MIMN | 1,000 | 2*m, m=4/6/8 | 16 |
| CHIME | 1,000 | 1 | 64 |
| SDIM | 16 | 128 | |
| DMGIN | 10,000 | 50 | 16 |
| DV365 | avg. 40,000 | 58 | 256 |
| VISTA | 12,000 | 256 | |

| Model | Cache Type | Used in Training | Train Complexity | Infer Complexity |
|---|---|---|---|---|
| TWIN | K | ✓ | | |
| MARM | KV | ✓ | | |
| PinFM | KV | ✓ | | |
| LONGER | KV | ✗ | | |
| Climber | KV | ✓ | | |
| VQL | V | ✗ | | |
| LIC | QK | ✓ | | |
| LERA | KV | ✗ | | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).