1. Introduction

Maternal mortality remains a global and highly unequal public health problem. In 2023, approximately 260,000 women died from causes related to pregnancy and childbirth; more than 90% of these deaths occurred in low- and middle-income countries, and most were preventable with adequate obstetric care. These estimates highlight not only the magnitude of the problem but also how inequalities in access to health services increase the risk of maternal death in vulnerable populations [1].

The main direct causes of maternal death continue to be postpartum hemorrhage (PPH) and hypertensive disorders of pregnancy (pre-eclampsia and eclampsia). Severe PPH accounts for a large proportion of immediate maternal deaths, frequently due to uterine atony or other obstetric causes amenable to rapid intervention; pre-eclampsia/eclampsia is associated with multiple organ failure and seizures and is responsible for tens of thousands of maternal deaths annually. High-impact literature and recent syntheses reinforce that late detection, lack of supplies (e.g., magnesium sulfate), and delayed interventions together contribute to these outcomes [2,3].

Proven clinical interventions reduce mortality from these causes: magnesium sulfate decreases the risk of eclampsia among women with severe pre-eclampsia, while tranexamic acid and active management of the third stage of labor reduce deaths from PPH when applied promptly. However, universal implementation of these measures is limited by infrastructure, professional training, and supply chains, especially in settings with lower capacity for obstetric care [3,4].

National and multicenter studies have shown that hypertensive disorders of pregnancy (pre-eclampsia/eclampsia and chronic hypertension) are important causes of maternal death and that their prevalence and adverse outcomes are disproportionately higher among Black women. Analyses of large hospital databases also show that hospitalizations with a diagnosis of PPH are more prevalent and account for a greater share of maternal deaths, indicating an increased burden in these groups [5].

Research on social and environmental determinants shows that non-Hispanic Black women have substantially higher rates of hypertensive disorders; for example, in the cohorts analyzed, PPH rates reached 32% among Black women vs. 23% among white women. Contextual factors (residential segregation, chronic stress, socioeconomic deprivation) explain part, but not all, of this difference, pointing to structural determinants that increase risk [6].

Regarding PPH, recent epidemiological studies show an increased risk among women from minority ethnic groups, with the largest increase observed among Black women, implying a greater likelihood of severe hemorrhagic events and a greater need for intervention. These findings highlight the obstetric vulnerability of Black women to hemorrhagic complications [7].

In addition to the higher incidence, there is evidence of inequality in management: retrospective cohorts of patients with postpartum hemorrhage and transfusion reported that Black patients are less likely to receive higher-level anti-hemorrhagic interventions (e.g., late access to hemostatic agents or definitive procedures), suggesting that disparities in treatment contribute to worse maternal outcomes in this group [8].

Surveillance studies and analyses of maternal deaths in low- and middle-income settings have documented a similar pattern. Recent assessments of deaths from postpartum hemorrhage point to higher concentrations of deaths among Black women, Indigenous women, and women from more vulnerable areas. A large proportion of these deaths occur in the immediate postpartum period, with signs that delays in detection and clinical response (even among patients who received prenatal care) contribute to lethality [9].

In recent years, promising evidence has emerged regarding the role of artificial intelligence (AI) tools in preventing adverse maternal outcomes. Examples include predictive models for the risk of pre-eclampsia or hemorrhage, algorithms that analyze vital signs in real time, and early warning systems (EWS) that signal early maternal deterioration. Recent reviews show high performance in retrospective studies and potential reductions in adverse events when AI systems are integrated into perinatal care flows, although most solutions still need multicenter validation, clinical impact assessment, and cost-effectiveness studies [10,11].

However, enthusiasm for AI coexists with concrete risks of reproducing and amplifying ethnic-racial inequalities: algorithms trained on unrepresentative databases, or on data contaminated by historical biases, may underestimate risk in minority groups, allocate resources unequally, or recommend inappropriate conduct. Reviews and studies on algorithmic justice document multiple mechanisms (biased data, spurious proxies, and performance evaluations not stratified by race/skin color) that lead to worse outcomes for Black, Indigenous, and other minority populations. Recent reports in the literature and scientific press also describe cases in which AI tools are less sensitive to symptoms in women and ethnic minority groups, which, in obstetrics, can translate into delays in recognizing pre-eclampsia or hemorrhage [12,13].

The incorporation of AI-based tools has accordingly been proposed as an innovative strategy for preventing adverse maternal outcomes, especially because of their ability to predict early risks of pre-eclampsia and postpartum hemorrhage from large volumes of clinical and vital-sign data. Predictive models and early warning systems have shown promising results in highly complex contexts, indicating potential to reduce delays in interventions and save lives [10,11].

Therefore, the responsible implementation of AI in the prevention of maternal mortality requires: (1) large and representative databases; (2) external validation and performance evaluations stratified by race, socioeconomic level, and place of care; (3) model transparency and continuous clinical supervision; and (4) public policies that prioritize equity, training, and infrastructure so that AI functions as a tool for reducing inequalities, and not as a mechanism for deepening them. This combined agenda of science, regulation, and equity is a condition for algorithms to truly contribute to the reduction of deaths from postpartum hemorrhage and hypertensive disorders [3,10,12].

However, there is a critical gap in the scientific literature on how AI can reproduce or mitigate racial and ethnic inequalities in maternal health. Most models developed to date are trained on databases predominantly composed of white women from high-income countries, raising concerns about the generalizability of the algorithms and their accuracy in racially diverse populations. This lack of representativeness may result in underestimation of risk in Black and Indigenous women, contributing to perpetuating historical inequities already documented in obstetric care [12,13].

The intersection of AI, maternal health, and racial equity is a rapidly advancing research field. Technological innovations emerge faster than the scientific community can evaluate them through traditional systematic reviews, which can take years to complete. To address this need for timely knowledge without sacrificing methodological rigor, this study adopts the format of a rapid review. This approach, recognized for its ability to produce syntheses of evidence quickly [14], is particularly suitable for mapping an emerging body of literature, identifying key ethical and methodological challenges, and urgently guiding the development of fairer policies and technologies. The specificities and limitations inherent in this methodological choice are discussed transparently in the subsequent sections.

Therefore, it is necessary to describe and critically analyze the racial inequalities associated with the use of AI in the prevention of maternal mortality, particularly in cases of gestational hypertension and postpartum hemorrhage, which remain among the leading causes of preventable death. Understanding these disparities is essential for the development of equitable technological solutions that incorporate principles of algorithmic justice, data diversity, and ethical governance. Thus, this study aims to describe, through a review of national and international literature, racial inequalities in the use of AI tools for preventing maternal mortality due to hypertension and postpartum hemorrhage.

2. Materials and Methods

A rapid literature review was conducted with the aim of synthesizing evidence on the use of AI tools in preventing maternal mortality due to gestational hypertension and postpartum hemorrhage in Black women. This rapid review followed systematic review principles but applied predefined methodological simplifications, such as restrictions on publication period, languages, and scope of the search, to enable the accelerated production of evidence, as recommended for rapid review methodologies [14]. The protocol for this review is available on the Open Science Framework (OSF) platform (https://doi.org/10.17605/OSF.IO/B76MS).

The research question was constructed based on the PICO acronym [15], considering pregnant and postpartum women as population (P); the use of AI tools, predictive models or monitoring systems as exposure/intervention (I/E); standard care without AI or non-racialized populations as comparator (C); and maternal mortality due to gestational hypertension or postpartum hemorrhage as outcome (O).

Inclusion criteria were established that encompassed studies published in the last five years, in English, Portuguese or Spanish. In addition to studies that presented data stratified by race, studies that, while not providing specific quantitative analyses on race, explicitly discussed issues of racial inequality, algorithmic bias, or the need for validation of artificial intelligence in racialized populations were also included. These studies were considered relevant because they conceptually contributed to the understanding of the ethical, methodological, and equity implications of applying AI to maternal health. Thus, the review encompasses both empirical evidence and critical analyses that address the interface between AI and racial inequalities in the prevention of maternal mortality. Exclusion criteria included studies without racial distinction, without maternal and obstetric application of AI, single case reports, editorials or letters to the editor without empirical data, and works without access to the full text.

The search was conducted in the U.S. National Library of Medicine (PubMed)/Medical Literature Analysis and Retrieval System Online (MEDLINE), Excerpta Medica dataBASE (EMBASE), Web of Science, Scopus, and Literatura Latino-Americana e do Caribe em Ciências da Saúde (LILACS) databases. A combination of controlled vocabulary (MeSH/DeCS) and free-text terms related to maternal mortality, hypertensive disorders of pregnancy, postpartum hemorrhage, artificial intelligence, and racial or ethnic inequalities was used. Search strategies were adapted to the syntax and indexing standards of each information source, following the same conceptual structure. As an example, the strategy applied in PubMed was:

(“Maternal Mortality”[Mesh] OR “Maternal Mortality”[tiab] OR “maternal death”[tiab] OR “maternal deaths”[tiab])

AND (“Hypertension, Pregnancy-Induced”[Mesh] OR “Pre-Eclampsia”[Mesh] OR “Eclampsia”[Mesh] OR “Postpartum Hemorrhage”[Mesh] OR “hypertensive disorders of pregnancy”[tiab] OR “pregnancy-induced hypertension”[tiab] OR “gestational hypertension”[tiab] OR “pre-eclampsia”[tiab] OR “preeclampsia”[tiab] OR “eclampsia”[tiab] OR “postpartum hemorrhage”[tiab] OR “post-partum hemorrhage”[tiab])

AND (“Artificial Intelligence”[Mesh] OR “Machine Learning”[Mesh] OR “Algorithms”[Mesh] OR “Decision Support Systems, Clinical”[Mesh] OR “artificial intelligence”[tiab] OR “machine learning”[tiab] OR “predictive model”[tiab] OR “predictive models”[tiab] OR “risk stratification”[tiab] OR “clinical decision support”[tiab])

AND (“Ethnic Groups”[Mesh] OR “African Continental Ancestry Group”[Mesh] OR “Health Status Disparities”[Mesh] OR “racial disparities”[tiab] OR “ethnic disparities”[tiab] OR “racial inequality”[tiab] OR “ethnic inequality”[tiab] OR “Black women”[tiab] OR “women of African descent”[tiab]).
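The PubMed strategy has a regular structure: four concept groups joined by AND, with the synonyms inside each group joined by OR. As a minimal sketch of that structure (not the authors' tooling), the query can be assembled programmatically. The helper names `or_block` and `build_query` are hypothetical, and only a subset of the listed terms is used.

```python
def or_block(terms):
    """Join the synonyms for one concept with OR and wrap them in parentheses."""
    return "(" + " OR ".join(terms) + ")"


def build_query(concepts):
    """Join the concept blocks with AND, mirroring the structure of the strategy."""
    return " AND ".join(or_block(terms) for terms in concepts)


# Illustrative subset of the terms in the PubMed strategy (not the full list).
concepts = [
    ['"Maternal Mortality"[Mesh]', '"maternal death"[tiab]'],
    ['"Postpartum Hemorrhage"[Mesh]', '"Pre-Eclampsia"[Mesh]'],
    ['"Artificial Intelligence"[Mesh]', '"machine learning"[tiab]'],
    ['"Health Status Disparities"[Mesh]', '"Black women"[tiab]'],
]

query = build_query(concepts)
print(query)
```

Keeping each concept as a separate list makes it easier to adapt the same conceptual structure to the syntax of other databases, since only the field tags and the joining logic need to change.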

The selection of studies occurred in two stages. Initially, titles and abstracts were screened using the Rayyan web application [16], which allowed for the rapid marking of potentially eligible studies and the identification of divergences between reviewers. Two independent reviewers conducted the screening, and divergences were resolved by consensus or with the intervention of a third reviewer when necessary. Subsequently, the full texts were evaluated according to the inclusion and exclusion criteria, and the reasons for exclusion of each study were recorded. A flowchart adapted from the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) was developed to document the selection process [17].

Data extraction was performed using a standardized form that captured the following domains: (1) bibliographic information (author, year, country); (2) methodological characteristics (study design, sample size, population characteristics); (3) details of the AI intervention or model (type of algorithm, input variables used, validation procedures, performance metrics); (4) racial and ethnic variables (categories used, proportion of Black or Indigenous women, stratified results, and whether race/ethnicity was included as a predictor or effect modifier); (5) maternal outcomes related to hypertensive disorders or postpartum hemorrhage; (6) reported measures of association or predictive performance stratified by race/ethnicity; and (7) equity-related elements, such as identification of algorithmic bias, data representativeness, or unequal model performance across racial groups. The form also included fields for study limitations, gaps identified, and authors’ recommendations for equity and AI governance. Data extraction was performed by one reviewer and independently checked by a second, with disagreements documented and resolved by consensus.
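The seven domains of the extraction form can be pictured as a structured record. The sketch below is only an illustration of that idea; the class name, field names, and the `missing_fields` helper are hypothetical and do not reproduce the form actually used. The example values come from the summary of included studies (Westcott et al., 2022); fields not reported there are left empty.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ExtractionRecord:
    # (1) Bibliographic information
    author: str
    year: int
    country: str
    # (2) Methodological characteristics
    study_design: str
    sample_size: Optional[int] = None
    # (3) AI intervention or model
    algorithm_type: Optional[str] = None
    validation: Optional[str] = None
    # (4) Racial and ethnic variables
    race_categories: list = field(default_factory=list)
    race_stratified_results: Optional[bool] = None
    # (5) Maternal outcome
    outcome: Optional[str] = None
    # (6) Performance stratified by race/ethnicity
    stratified_performance: dict = field(default_factory=dict)
    # (7) Equity-related elements
    equity_notes: list = field(default_factory=list)

    def missing_fields(self):
        """Return the names of fields left empty, e.g. for the second reviewer's check."""
        return [name for name, value in vars(self).items() if value in (None, [], {})]


record = ExtractionRecord(
    author="Westcott et al.",
    year=2022,
    country="USA",
    study_design="Retrospective cohort",
    sample_size=30867,
    outcome="postpartum hemorrhage",
)
print(record.missing_fields())
```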

The analysis of the studies consisted of a descriptive narrative synthesis. The studies were grouped by type of AI intervention, racial population, type of outcome (hypertension or hemorrhage), and geographic context. Summary tables were presented with study characteristics, measures of association, and findings specific to the racial population. Gaps in evidence, methodological heterogeneity, and reported limitations in the studies were identified, including the absence of data by race, a reduced number of Black participants, and a lack of validation of AI in racialized populations. The interpretation considered both the effects of AI and aspects related to racial equity in maternal outcomes.

The presentation of the results included a detailed description of the number of studies identified, included and excluded, distribution by country, racial population, type of AI and outcome, and a selection flowchart. The findings were discussed in the context of racial inequalities, emphasizing studies that reported specific data on Black women, highlighting where knowledge gaps persisted. The limitations of the rapid review were explained, including the restricted search to certain databases, the absence of grey literature, and possible biases arising from screening by a single reviewer, as well as the absence of meta-analysis.

Since this is a review of published literature, no primary data were collected; therefore, approval by an ethics committee was not required. However, ethical criteria were observed regarding respect for copyright, integrity in data presentation, and inclusive and sensitive language concerning racialized populations. Transparency regarding the abbreviated steps and methodological limitations was also ensured, so that readers could adequately assess the reliability and applicability of the findings.

3. Results

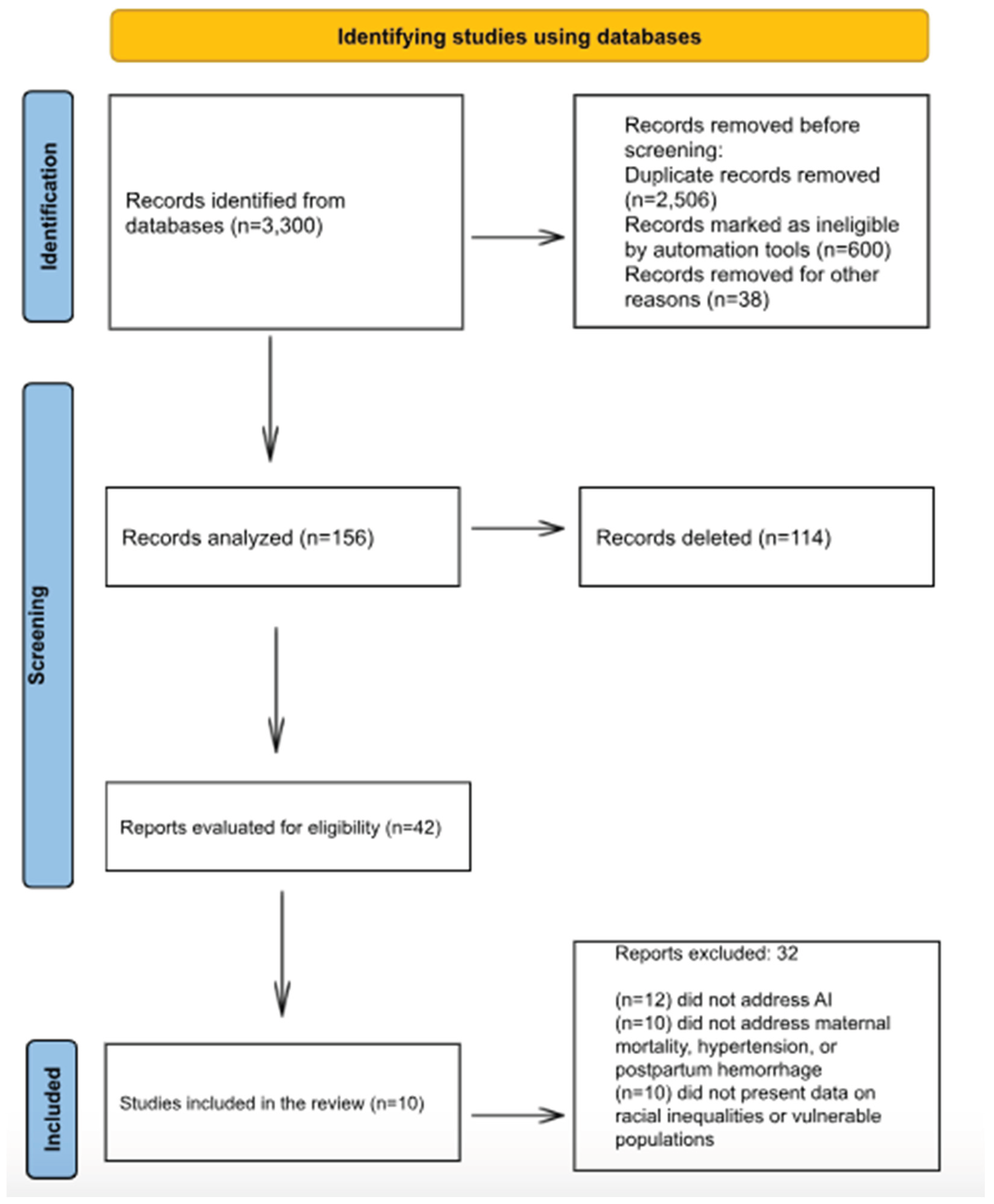

Initially, 3,300 records were identified in the databases. Before screening, 3,144 records were removed: 2,506 due to duplication, 600 deemed ineligible by automated tools, and 38 excluded for other reasons. The remaining 156 records were screened, of which 114 were excluded, leaving 42 reports assessed for eligibility. Of these, 32 were excluded for not meeting the inclusion criteria: 12 did not address artificial intelligence, 10 did not address maternal mortality, hypertension, or postpartum hemorrhage, and 10 did not present data on racial inequalities or vulnerable populations. At the end of the process, 10 studies were included in the review.
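The counts in the selection flow can be checked with simple arithmetic. The following short sketch uses only the numbers reported above; the variable names are illustrative.

```python
identified = 3300

# Records removed before screening, by reason.
removed_before_screening = {"duplicates": 2506, "automation_tools": 600, "other": 38}
screened = identified - sum(removed_before_screening.values())

# Records excluded at title/abstract screening.
excluded_at_screening = 114
assessed_for_eligibility = screened - excluded_at_screening

# Reports excluded at full-text assessment, by reason.
full_text_exclusions = {"no_ai": 12, "no_target_outcome": 10, "no_race_data": 10}
included = assessed_for_eligibility - sum(full_text_exclusions.values())

print(screened, assessed_for_eligibility, included)  # 156 42 10
```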

Figure 1. Flowchart of the process for identifying, screening, determining eligibility, and including studies in the review.

The presentation of the results was descriptive, narrative, and visual, seeking to integrate quantitative and qualitative aspects of the included studies. The evidence was synthesized in tables, charts, and figures to facilitate understanding of the main characteristics, gaps, and thematic implications. Initially, a descriptive table was created containing the main information from the included studies: author, year, country, design, type of intervention or exposure, and main findings, allowing for a panoramic view of the available evidence on the use of AI in preventing maternal mortality due to hypertension and postpartum hemorrhage.

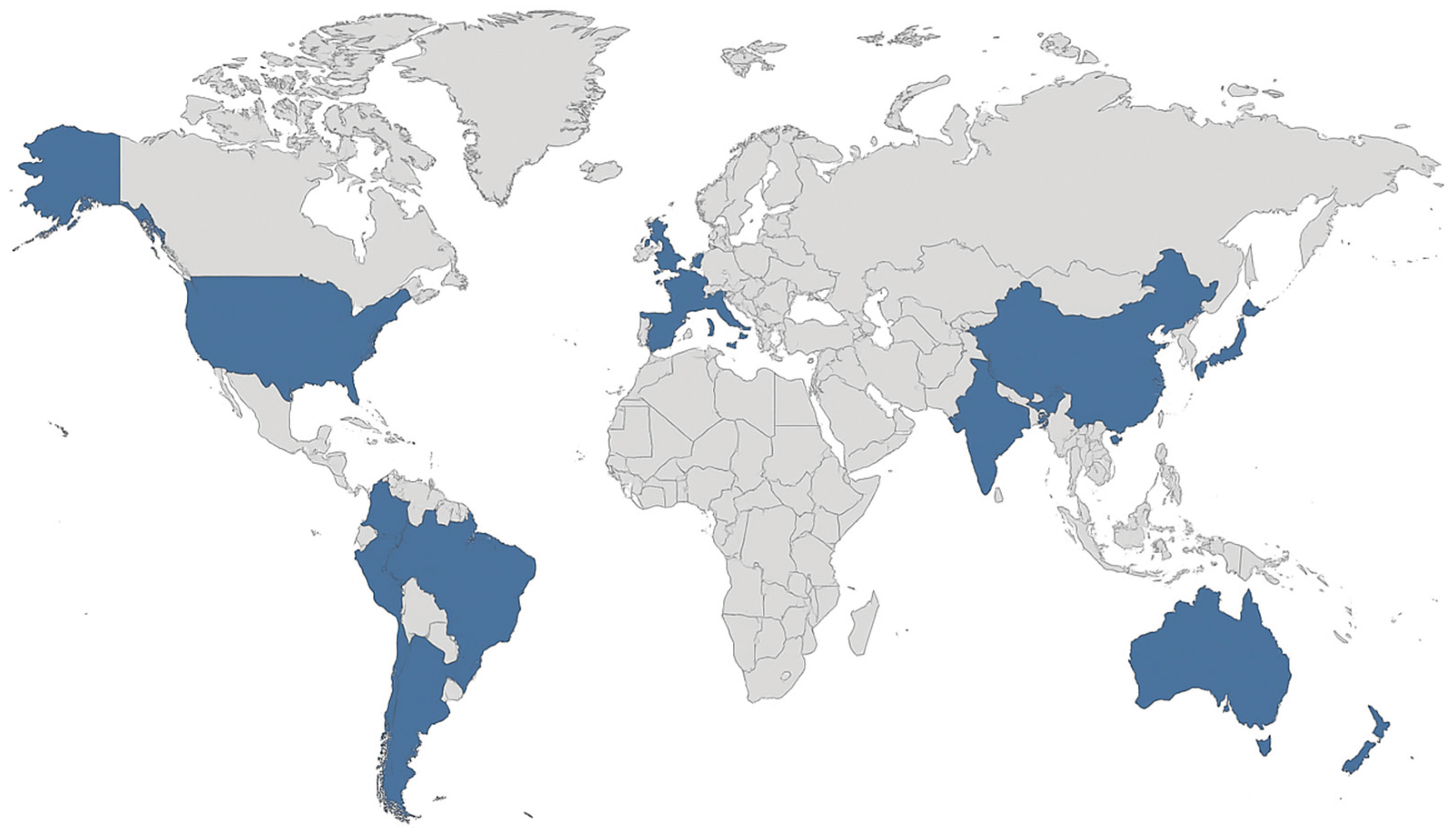

To support the spatial analysis of scientific production, a thematic world map was developed, representing the geographical distribution of the included studies by country and continent. The map was designed in a minimalist style, highlighting the countries where the research was conducted (United States, United Kingdom, Iran, Kenya, and global multicenter studies), allowing visualization of regional asymmetries in scientific production on the topic, as illustrated in Figure 2.

The ten studies included in the review were predominantly conducted in the United States (n=4), followed by the United Kingdom (n=2), Iran (n=1), and Kenya (n=1), in addition to two multicenter or global studies (n=2). The thematic map illustrates this geographical distribution, highlighting the predominance of research in high-income countries, especially the United States, where the most robust databases and the most developed artificial intelligence models are concentrated. In Europe, the United Kingdom stands out for studies focused on the validation of predictive models in maternal health. In Asia, Iran is the only country represented, and in Africa only Kenya was identified as a research setting, reflecting the scarcity of research conducted in lower-income contexts. Finally, the multicenter and global studies show collaboration across continents, reinforcing the need for international cooperation and diversity of contexts for the equitable advancement of artificial intelligence applications in reproductive health.

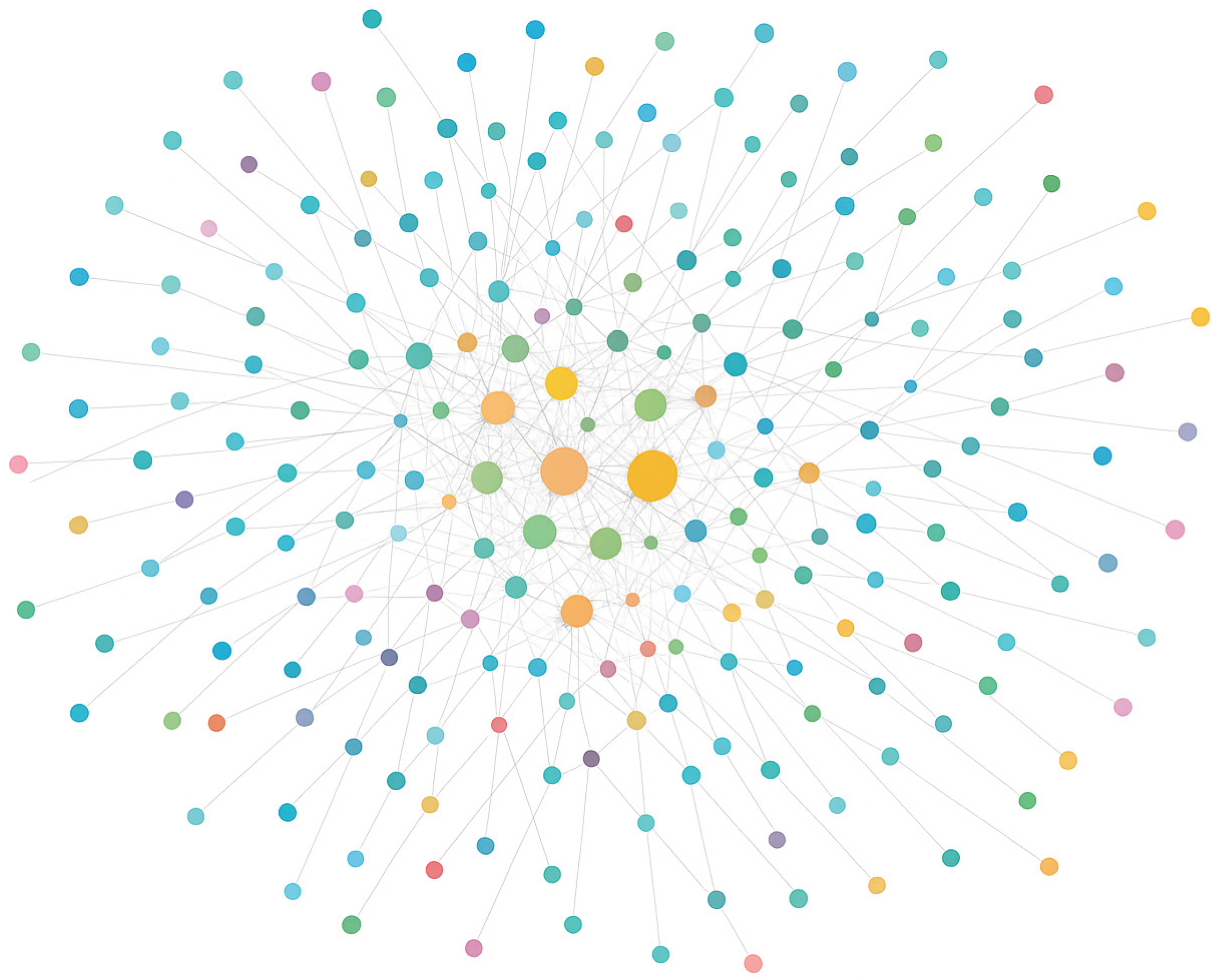

This network visualization represents connections between countries (United States, United Kingdom, Iran, Kenya, Global) and dominant research themes (postpartum hemorrhage, hypertensive disorders, equity, bias, AI validation) identified in the literature. Node size reflects the frequency of occurrence, while link density represents thematic co-occurrence across studies. The structure highlights strong clustering around AI bias and equity in high-income countries and sparse connections in lower-income contexts, underscoring global disparities in research production.

Figure 3. Network of countries and research themes in AI and maternal mortality.

The included studies demonstrated that AI and machine learning tools are increasingly being applied to predict critical obstetric complications, especially postpartum hemorrhage and hypertensive disorders of pregnancy, and have achieved high discriminatory performance in diverse geographic contexts (United States, Iran, Kenya). At the same time, the most recent literature underscores the need for explicit attention to equity: data diversity, validation in racialized populations, and recognition of algorithmic bias emerge as central factors for AI to contribute to reducing, rather than exacerbating, maternal inequalities. In short, while the technology shows great promise for preventing maternal mortality, its equitable effectiveness still depends on the inclusion of vulnerable populations, such as Black women, and on the structuring of databases and models that consider race, socioeconomic conditions, and care context.

Table 1. Applications of artificial intelligence in predicting maternal mortality: summary of included studies.

| Author/Year | Country | Study Design | Intervention/Exposure | Key Findings |
|---|---|---|---|---|
| Susanu et al., 2024 [18] | Multicenter | Prospective study | ML algorithms for predicting intra- and postpartum hemorrhage | Models predicted hemorrhage risk with high accuracy; need for validation in racialized populations to reduce mortality in vulnerable groups |
| Ahmadzia et al., 2024 [19] | USA | Model development study | ML for predicting postpartum hemorrhage and transfusion | ML identified high risk of postpartum hemorrhage; highlights need for testing in Black women |
| Mapari et al., 2024 [20] | Global | Narrative review | AI in maternal health | AI can improve early detection; racial disparities may persist if Black women are underrepresented |
| McAdams & Green, 2024 [21] | USA | Narrative review | AI in obstetrics, maternal-fetal medicine, and neonatology | AI tools may reproduce biases, increasing mortality in Black women; emphasize hypertension and hemorrhage |
| Khan et al., 2024 [22] | UK | Dataset development | OxMat multimodal dataset for maternal-infant health AI | Robust dataset; potential to mitigate disparities including mortality from hemorrhage or hypertension; validation in Black women needed |
| Singh et al., 2024 [23] | USA | Annotation tool development | AI tool for analyzing maternal safety reports | Identified disparities in risk factors; AI may help mitigate higher postpartum hemorrhage mortality in Black women |
| Shah et al., 2023 [24] | Kenya | Observational study | ML for predicting postpartum hemorrhage | Models predicted hemorrhage in vulnerable population; populations with limited care access show higher maternal mortality |
| Mehrnoush et al., 2023 [25] | Iran | Retrospective study | ML for predicting postpartum hemorrhage | XGBoost predicted hemorrhage; socio-economic factors, similar to those affecting Black women, influence mortality |
| Ansbacher-Feldman et al., 2022 [26] | UK | Cohort study | ML for predicting preeclampsia using first-trimester data | Inclusion of racial variables improved accuracy; Black women have higher hypertensive disorder risk |
| Westcott et al., 2022 [27] | USA | Retrospective cohort | ML in 30,867 women | Models predicted hemorrhage; racialized populations, especially Black women, have higher mortality; need race-specific validation |

The ten studies included in this rapid review collectively demonstrate the increasing application of AI and machine learning (ML) in predicting critical maternal complications, particularly PPH and hypertensive disorders of pregnancy. Prospective, retrospective, cohort, and model development studies across diverse geographical contexts, including the USA, Iran, Kenya, and multicenter settings, consistently showed high predictive performance [25,27]. These findings indicate that AI has substantial potential to identify women at elevated risk of severe maternal outcomes, enabling timely interventions and potentially reducing mortality.

Several studies highlighted that Black women and other racialized populations experience higher rates of maternal mortality due to PPH and hypertensive disorders, and that AI models may perpetuate existing disparities if racial diversity is not adequately represented in the training datasets [21,23,26]. Only a few studies explicitly incorporated racial variables or analyzed differential performance across racial groups, demonstrating a critical gap in the equitable application of AI in maternal health. Reviews and methodological papers further emphasize that the inclusion of diverse populations and socio-demographic variables is essential to prevent bias and ensure AI-driven tools contribute to reducing, rather than exacerbating, maternal health disparities.

Furthermore, qualitative synthesis tables were constructed to organize the cross-cutting findings that emerged from the critical reading of the articles.

Table 2 (“Main gaps identified in studies on AI and maternal mortality”) brings together methodological and conceptual deficiencies observed in the studies, such as the absence of external validation, lack of racial variables, and scarcity of representative data.

Table 3 (“Recommendations for an equitable implementation of AI in reproductive health”) presents practical and ethical guidelines derived from the analysis of the findings, emphasizing aspects such as data diversity, racial validation, algorithmic transparency, and integration with equity policies.

Table 2 highlights the main methodological and structural gaps in the analyzed studies, emphasizing the absence of external validation, the use of non-representative databases, the scarcity of racial variables, the low transparency of the algorithms, and the limitations of technological infrastructure in low-income countries. Furthermore, it shows that most AI models focus on predicting intermediate obstetric complications, such as postpartum hemorrhage and pre-eclampsia, without directly assessing the impact on maternal mortality, which restricts the applicability of the results in real-world contexts.

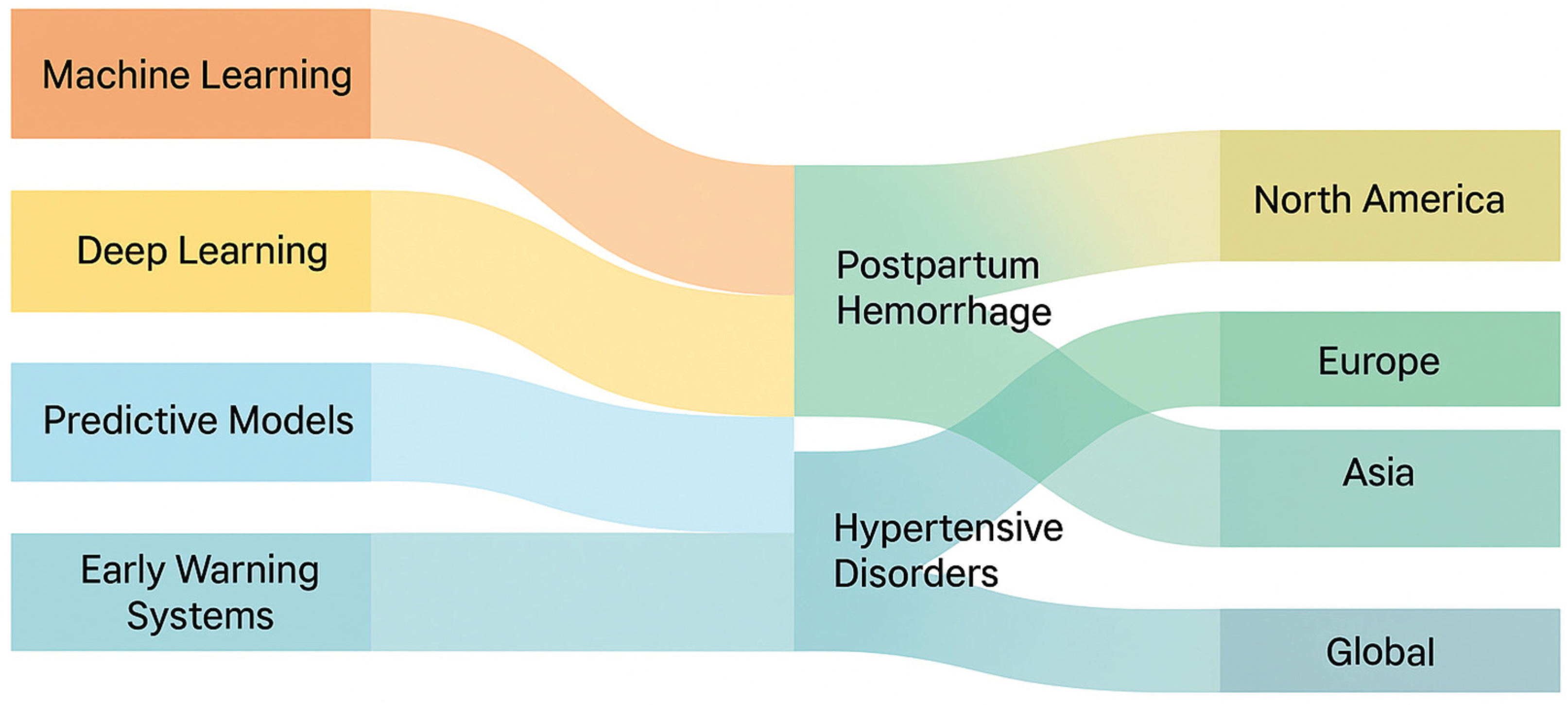

The Sankey diagram visualizes the relationship between the types of artificial intelligence used (machine learning, deep learning, predictive models, early warning systems), maternal complications (postpartum hemorrhage and hypertensive disorders of pregnancy), and the regions where studies were conducted. The width of the flows represents the relative frequency of studies across these dimensions, revealing that most AI applications focused on postpartum hemorrhage and were concentrated in North America and Europe.

Figure 4. Sankey diagram of AI types, maternal complications, and regions.

Table 3, in turn, presents a set of practical and ethical recommendations to promote the equitable implementation of these technologies. Among the proposed measures are strengthening data diversity, racial and contextual validation of models, transparency and auditability of algorithms, clinical training of professionals, integration of AI tools with public policies on racial equity, and the promotion of interdisciplinary research.

Taken together, the two tables indicate that the technical advancement of AI in reproductive health must be accompanied by an agenda of reproductive justice, diversity, and ethical governance, in order to ensure that technological innovation effectively contributes to reducing, rather than perpetuating, racial inequalities in maternal mortality. Finally, the results were organized according to previously identified thematic categories (AI technical performance, racial equity and validation, implications for practice, and limitations and future directions), which allowed for a structured discussion aligned with the objective of the review.

4. Discussion

The studies included in this review show that AI and ML models perform well in predicting serious maternal complications, especially PPH and hypertensive disorders of pregnancy, with area under the receiver operating characteristic curve (AUROC) values often exceeding 0.95 in high-income cohorts. For example, in a large retrospective study in the United States with 30,867 women, the ML model achieved an AUROC of 0.979 for PPH prediction. This level of performance confirms the potential of AI to anticipate high-risk events, which are known to be responsible for the majority of preventable maternal deaths [28,29,30]. However, recent systematic reviews indicate that most models have only been validated internally, with little external validation or deployment in middle- or low-income contexts, which limits generalization [31].
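A pooled AUROC can hide differences between subgroups, which is why stratified reporting matters. The sketch below computes the AUROC as the probability that a randomly chosen case with the event receives a higher score than a randomly chosen case without it, first overall and then per group. The data are synthetic and purely illustrative, the group labels are placeholders, and the function names are hypothetical; none of this reproduces results from the reviewed studies.

```python
def auroc(labels, scores):
    """Probability that a random positive case outscores a random negative case
    (ties count as one half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative case")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def stratified_auroc(labels, scores, groups):
    """Compute the AUROC separately within each group."""
    result = {}
    for g in sorted(set(groups)):
        idx = [i for i, x in enumerate(groups) if x == g]
        result[g] = auroc([labels[i] for i in idx], [scores[i] for i in idx])
    return result


# Synthetic toy data: 1 = event occurred, 0 = no event.
labels = [1, 1, 0, 0, 1, 1, 0, 0]
scores = [0.9, 0.8, 0.2, 0.1, 0.6, 0.3, 0.5, 0.2]
groups = ["group_a"] * 4 + ["group_b"] * 4

print(auroc(labels, scores))                     # 0.9375
print(stratified_auroc(labels, scores, groups))  # {'group_a': 1.0, 'group_b': 0.75}
```

In this toy example the pooled value looks strong while discrimination in one group is clearly weaker, which is the pattern that unstratified reporting cannot reveal.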

Even more serious is the fact that few studies presented race-specific analyses or included racial variables in a robust way. This constitutes a critical gap given the known disparities: Black women in the US often have maternal mortality rates 2-4 times higher than white women [32].

Among the reviewed articles, only a few mentioned the inclusion of race as a variable or the need for validation in racialized populations, such as the study on pre-eclampsia in the United Kingdom, which found that the inclusion of racial variables improved the model’s accuracy [33]. However, this type of inclusion remains the exception, which reinforces the risk that AI may reproduce or even amplify existing inequalities if applied without attention to the representativeness of the data. When models are trained predominantly on white women, they learn patterns specific to white women’s physiology, presentation of disease, and healthcare access. For example, if vital signs are monitored more frequently in white women, the model learns to rely on frequent vital sign measurements. When applied to Black women with less frequent monitoring, the model may fail because it receives incomplete data. This is not a biological difference, but a difference in data quality that reflects healthcare inequities. Review studies highlight that algorithmic bias can occur when racialized groups are underrepresented or when models are trained on predominantly white or high-income populations [21,34].
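The monitoring-frequency mechanism can be made concrete with a toy example. The sketch below is not a model from any reviewed study: it assumes a hypothetical rule that flags a patient when any recorded systolic reading reaches 140 mmHg, and a made-up series of readings for a single patient. The same underlying blood pressure course is flagged under frequent monitoring and missed under sparse monitoring.

```python
def flag_high_risk(observed_bp, threshold=140):
    """Toy rule (an assumption for illustration): flag when any recorded
    systolic reading reaches the threshold."""
    return any(bp >= threshold for bp in observed_bp)


def observe(true_bp, every):
    """Keep every `every`-th reading, simulating less frequent monitoring."""
    return true_bp[::every]


# Hypothetical systolic readings (mmHg) for one patient; the spike is brief.
true_bp = [118, 122, 126, 131, 138, 146, 135, 128]

frequent = observe(true_bp, every=1)  # all eight readings are recorded
sparse = observe(true_bp, every=4)    # only the first and fifth readings are recorded

print(flag_high_risk(frequent))  # True: the 146 mmHg spike is recorded
print(flag_high_risk(sparse))    # False: the same patient is missed
```

Nothing about the patient differs between the two calls; only the completeness of the recorded data does, which is the sense in which unequal monitoring can translate into unequal model performance.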

In the global context, the findings of studies in low- and middle-income countries (e.g., the Kenya study on PPH) are particularly relevant: they demonstrate that AI models can work in vulnerable and resource-poor environments, suggesting applicability beyond the centers of excellence in high-income countries [24]. However, even in these contexts, there is a lack of data that allows vulnerable racial/ethnic groups to be analyzed separately, which implies that the benefits of AI may not reach Black or Indigenous women equitably. A recent international review emphasizes that, for PPH prediction, there is great heterogeneity among studies, many with limited validation and virtually none focused on racialized populations or specific regions of vulnerability [31].

For maternal mortality due to gestational hypertension, the evidence on AI is still more limited than for PPH but shows promising indications. For example, the Pre-eclampsia Integrated Estimate of Risk – Machine Learning (PIERS-ML) model improved the identification of women at high risk of an adverse outcome within 2 days [35].

The discussion on algorithmic fairness is central: well-designed AI tools can be powerful allies in reducing inequalities, but if implemented without attention to the diversity of the database, validation in vulnerable groups, and algorithm transparency, they can perpetuate the status quo. Studies such as Fair Machine Learning for Healthcare demonstrate that race, socioeconomic index, and intersectional factors (e.g., race+sex+class) interact in shaping the performance and impact of AI models, so algorithmic justice requires explicit recognition of these dimensions [

36].

In maternal health, it is essential to distinguish between algorithmic bias and structural inequalities. Consider two scenarios in predicting postpartum hemorrhage (PPH). (1) Algorithmic bias: a model trained on predominantly white women learns that “elevated blood pressure” predicts PPH. However, Black women receive fewer prenatal visits and less frequent blood pressure monitoring due to systemic racism. When applied to Black women, the model underestimates risk because it receives incomplete data, not because of biological differences but because of healthcare inequities. This is algorithmic bias: the model produces worse predictions for Black women. (2) Reflection of structural inequality: a model learns that “living in a neighborhood with limited obstetric services” predicts adverse outcomes. Black women are overrepresented in such neighborhoods due to segregation and disinvestment. When the model predicts higher risk for Black women, it is not biased; it is accurately reflecting a real structural inequality. The problem is not the model, but the underlying inequality. The distinction matters: if a model exhibits algorithmic bias, we must improve the model; if a model reflects structural inequalities, improving the model alone is insufficient, and we must address the underlying causes. However, the reviewed studies do not make this distinction explicit, and most did not report performance stratified by race. This represents a critical gap for equitable implementation [

37].
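The stratified reporting that the reviewed studies largely omit can be illustrated with a minimal sketch. It computes sensitivity (the share of true PPH cases the model flags) separately for each group. The group labels `A` and `B`, the function name `sensitivity_by_group`, and all counts are hypothetical and synthetic; they are not drawn from any study in this review.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """Return sensitivity (true positive rate) per group.

    records: iterable of (group, y_true, y_pred) with binary labels,
    where 1 means the complication occurred / was predicted.
    """
    true_pos = defaultdict(int)
    false_neg = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:  # only actual cases count toward sensitivity
            if y_pred == 1:
                true_pos[group] += 1
            else:
                false_neg[group] += 1
    groups = set(true_pos) | set(false_neg)
    return {g: true_pos[g] / (true_pos[g] + false_neg[g]) for g in groups}


# Synthetic data (assumption): each group has 10 true cases and 90 non-cases.
records = (
    [("A", 1, 1)] * 9 + [("A", 1, 0)] * 1 + [("A", 0, 0)] * 90   # 9 of 10 cases flagged
    + [("B", 1, 1)] * 6 + [("B", 1, 0)] * 4 + [("B", 0, 0)] * 90  # 6 of 10 cases flagged
)

by_group = sensitivity_by_group(records)
gap = max(by_group.values()) - min(by_group.values())

for group in sorted(by_group):
    print(f"group {group}: sensitivity = {by_group[group]:.2f}")
print(f"sensitivity gap = {gap:.2f}")
```

A gap of this kind signals that the model performs worse for one group, but it does not by itself show whether the cause is algorithmic bias or an underlying structural inequality; that interpretation still requires the contextual analysis described above.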

The reviewed studies fail to address intersectionality and how multiple, overlapping forms of discrimination affect maternal health [

38]. Black women experiencing maternal mortality are not a homogeneous group: they include low-income women, rural women, women experiencing intimate partner violence, women with substance use disorders, and women navigating immigration status [

39]. These intersecting vulnerabilities interact in ways that single-axis analysis cannot capture. An AI model trained on data from women with regular prenatal care may fail for women experiencing homelessness or violence, populations disproportionately represented among Black women who die in pregnancy or childbirth. A model reporting 95% accuracy “for Black women” may mask 70% accuracy for low-income Black women in rural areas. This represents a hierarchy of vulnerability where the most vulnerable are left behind [

40]. To ensure equity, AI systems must: (1) evaluate performance for intersectional subgroups; (2) involve women with lived experience of multiple marginalization; and (3) recognize that technology alone cannot address structural inequities driving maternal mortality [

41,

42].
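The masking effect described above is simple arithmetic: a pooled accuracy is a weighted average, so a small subgroup with poor performance barely moves it. The sketch below works through one hypothetical case. The subgroup names, sizes, and counts are assumptions chosen only to reproduce the 95% and 70% figures used in the text.

```python
def accuracy_report(subgroups):
    """Return (pooled accuracy, accuracy per subgroup).

    subgroups: dict mapping subgroup name -> (n_women, n_correct_predictions).
    """
    total_n = sum(n for n, _ in subgroups.values())
    total_correct = sum(correct for _, correct in subgroups.values())
    per_subgroup = {name: correct / n for name, (n, correct) in subgroups.items()}
    return total_correct / total_n, per_subgroup


# Hypothetical counts (assumption): 1000 Black women in a validation set,
# of whom 100 are low-income and live in rural areas.
subgroups = {
    "all other Black women": (900, 880),
    "rural, low-income": (100, 70),
}

overall, per_subgroup = accuracy_report(subgroups)
worst = min(per_subgroup, key=per_subgroup.get)

print(f"pooled accuracy: {overall:.2f}")
for name, acc in per_subgroup.items():
    print(f"  {name}: {acc:.2f}")
print(f"lowest-performing subgroup: {worst}")
```

Under these assumed counts the pooled figure is 950 correct predictions out of 1000, while the rural, low-income subgroup sits at 70 out of 100, which is why evaluation by intersectional subgroup is listed as the first requirement.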

A cross-cutting limitation of the reviewed studies relates to the outcome of maternal mortality itself; many focused on predicting risk or serious complications (PPH, pre-eclampsia) as a proxy for mortality, but few explicitly assessed maternal deaths or included mortality stratified by race. This compromises the certainty of inferring that a reduction in mortality among Black women will be achieved with the adoption of AI. Recent reviews indicate that for PPH, the overall mortality rate remains high in low-income countries, and that most AI models have not yet been tested in contexts where mortality is higher, a context where Black and Indigenous women are underrepresented [

29].

The included studies demonstrate that AI and machine learning exhibit high technical performance in predicting serious obstetric complications, especially postpartum hemorrhage and hypertensive disorders of pregnancy [

27]. Other investigations, such as those by Susanu et al. (2024) and Ahmadzia et al. (2024), have demonstrated similar accuracy in predicting hemorrhagic risk, with the ability to identify high-risk women early [

18,

19]. Recent systematic reviews also indicate that these models outperform conventional methods and can reduce critical delays in obstetric emergency settings [

28]. However, most of the evidence comes from high-income settings, with validation largely limited to internal testing and a scarcity of multicenter external tests, which compromises the generalizability of the findings [

20,

25].

Racial equity in model validation is one of the main gaps identified. Only a small number of studies have included racial variables or evaluated the performance of algorithms among different ethnic groups [

26]. The work of Ansbacher-Feldman et al. (2022) showed that the inclusion of racial characteristics and biomarkers increased the accuracy in predicting pre-eclampsia, but this approach is still the exception [

26]. Narrative research and methodological reviews, such as those by McAdams & Green (2024) and Mapari et al. (2024), warn that models based on predominantly white databases may reproduce or amplify existing racial disparities in maternal mortality [

20,

21]. Furthermore, the multicenter study by Shah et al. (2023) in a Kenyan population showed that, although AI can work in resource-limited contexts, the absence of racial stratification and diversity data compromises its equitable effectiveness [

24]. Thus, external validation in racialized populations is an essential step to ensure algorithmic justice and representativeness in maternal health [

22,

23].

The practical implications of integrating AI into maternal health are broad. Effective predictive models can optimize care flows, identify at-risk pregnant women early, and support clinical decisions based on real-time data [

18,

19,

27]. The responsible adoption of these tools requires staff training, interoperability with electronic records, and continuous supervision to avoid biased interpretations. Recent literature argues that AI systems should be evaluated from an equity perspective, including sensitivity analyses stratified by race/ethnicity and socioeconomic conditions [

21,

23]. In highly vulnerable contexts, such as in low- and middle-income countries, the ethical use of AI can expand access to early detection of complications, provided it is associated with policies to strengthen data infrastructure and governance [

24,

25]. Therefore, AI has the potential to reduce inequalities if implemented in an inclusive, transparent, and supervised manner.

In summary, this review reveals that while AI systems show promise for predicting maternal complications, current applications systematically fail to address racial equity. The gap is not primarily technical, since models can achieve high accuracy, but rather structural: they are developed, validated, and will be implemented without adequate attention to racial representation, fairness, and the lived experiences of Black women. Closing this gap requires not incremental improvements to existing models, but a fundamental reorientation of AI development in maternal health toward principles of algorithmic justice, community engagement, and racial equity. This is not optional; it is essential for AI to fulfill its promise of reducing, rather than perpetuating, global maternal inequalities.

The limitations observed in this review reflect the current stage of AI research in maternal mortality. Most studies assessed intermediate outcomes (PPH, pre-eclampsia) rather than maternal mortality itself, which limits the ability to infer the true impact of AI tools on preventing deaths [

19,

25]. A major constraint was the scarcity of consistent racial and ethnic data: because most studies did not report performance stratified by race, this review was unable to compare algorithmic behavior across groups, which is central to equity analysis. Likewise, the absence of external validation, subgroup analyses, and cost-effectiveness assessments reduces the certainty of the available evidence.

In addition, limitations inherent to the rapid review methodology must be acknowledged, including a restricted search strategy, reduced use of duplicate screening and data extraction, and the exclusion of gray literature, all of which may have resulted in relevant studies being missed. The evidence base also reflects a narrow focus on hypertensive disorders and postpartum hemorrhage, excluding other major contributors to maternal mortality among Black women such as cardiomyopathy, thromboembolic events, and sepsis, thereby limiting the broader generalization of findings.

Future directions include: (1) developing multicenter and racially diverse datasets that explicitly include Black and Indigenous women; (2) conducting external validation in low-resource settings; (3) ensuring algorithmic transparency and reporting of performance metrics by subgroup; and (4) strengthening ethical and regulatory frameworks for equitable AI development in reproductive health [

20,

23,

26,

27]. Finally, interdisciplinary approaches integrating data science, epidemiology, and racial studies are essential for AI to fulfill its promise of reducing rather than perpetuating global maternal inequalities.

5. Conclusions

Artificial intelligence has shown high technical performance in predicting severe maternal complications, particularly postpartum hemorrhage and hypertensive disorders of pregnancy. The evidence synthesized in this review demonstrates that predictive and monitoring models can identify women at risk with great accuracy and may support clinical decision-making to prevent adverse outcomes. However, most studies remain internally validated and were conducted in high-income settings, limiting their generalizability to racially diverse or resource-limited populations. A persistent challenge is the underrepresentation of Black and Indigenous women in the datasets used to train and validate these algorithms. Without explicit consideration of race, ethnicity, and social determinants, AI systems risk reproducing structural inequities that already characterize maternal mortality patterns worldwide. Therefore, algorithmic equity and transparency must become essential requirements in the development and implementation of AI for maternal health.

The findings highlight that equitable artificial intelligence requires diverse and representative databases, external validation in vulnerable populations, and ongoing interdisciplinary collaboration among data scientists, clinicians, and public health experts. In practice, this means aligning technological innovation with reproductive justice and racial equity agendas. Future research should prioritize inclusive model design, evaluate real-world clinical impact, and ensure that algorithmic tools contribute effectively to reducing preventable maternal deaths among Black women and other marginalized groups. Only through this integrative approach can AI move from a promising technological resource to a transformative instrument for global maternal health equity.

Author Contributions

Conceptualization, dos Santos GG, Njoku A and Costa ICP; methodology, dos Santos GG and Costa ICP; formal analysis, dos Santos GG, Njoku A, dos Santos CMP, Viana LHF, de Sousa MG, Cappello CH, de Oliveira C, de Andrade LH and Lima CF; investigation, dos Santos GG, Mass ACP, Ribeiro EES, de Oliveira LE, Lima MJCS, Ferro TA, Pirozi LRR and Peregrino AAF; writing – original draft preparation, dos Santos GG, Njoku A and Costa ICP; writing – review and editing, Njoku A, Costa ICP, dos Santos CMP, Viana LHF, de Sousa MG, Cappello CH, de Oliveira C, de Andrade LH, Lima CF, Mass ACP, Ribeiro EES, de Oliveira LE, Lima MJCS, Ferro TA, Pirozi LRR and Peregrino AAF. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflicts of interest.

Abbreviations

| AI | Artificial intelligence |
| EMBASE | Excerpta Medica dataBASE |
| EWS | Early warning systems |
| LILACS | Literatura Latino-Americana e do Caribe em Ciências da Saúde |
| MEDLINE | Medical Literature Analysis and Retrieval System Online |
| ML | Machine learning |
| OSF | Open Science Framework |
| PICO | Population, exposure/intervention, comparator and outcomes |
| PIERS-ML | Pre-eclampsia Integrated Estimate of Risk – Machine Learning |
| PPH | Postpartum hemorrhage |
| PRISMA | Preferred Reporting Items for Systematic Reviews and Meta-Analyses |
| PubMed | U.S. National Library of Medicine |

References

- World Health Organization (WHO). Maternal mortality [Internet]. Geneva: WHO; 7 Apr 2025. Accessed on: November 20, 2025. Available from: https://www.who.int/news-room/fact-sheets/detail/maternal-mortality.

- Yunas, I.; Islam, M.A.; Sindhu, K.N.; Devall, A.J.; Podesek, M.; Alam, S.S.; Kundu, S.; Mammoliti, K.M.; Aswat, A.; Price, M.J.; Zamora, J.; Oladapo, O.T.; Gallos, I.; Coomarasamy, A. Causes of and risk factors for postpartum haemorrhage: a systematic review and meta-analysis. Lancet. 2025, 405, 1468–1480. [Google Scholar] [CrossRef]

- World Health Organization (WHO). Pre-eclampsia [Internet]. Geneva: WHO; 4 Apr 2025. Accessed on: November 20, 2025. Available from: https://www.who.int/news-room/fact-sheets/detail/pre-eclampsia.

- Anti-fibrinolytics Trialists Collaborators – Obstetric Trialists Group; Ker K, Shakur-Still H, Sentilhes L, Pacheco LD, Saade G, Deneux-Tharaux C, Brenner A, Mansukhani R, Ageron FX, Prowse D, Chaudhri R, Olayemi O, Roberts, I. Tranexamic acid for the prevention of postpartum bleeding: Protocol for a systematic review and individual patient data meta-analysis. Gates Open Res. 2023, 7, 3. [CrossRef]

- Ford, N.D.; Cox, S.; Ko, J.Y.; Ouyang, L.; Romero, L.; Colarusso, T.; Ferre, C.D.; Kroelinger, C.D.; Hayes, D.K.; Barfield, W.D. Hypertensive Disorders in Pregnancy and Mortality at Delivery Hospitalization - United States, 2017-2019. MMWR Morb Mortal Wkly Rep. 2022, 71, 585–591. [Google Scholar] [CrossRef] [PubMed]

- Keith, M.H.; Martin, M.A. Social Determinant Pathways to Hypertensive Disorders of Pregnancy Among Nulliparous U.S. Women. Womens Health Issues. 2024, 34, 36–44. [Google Scholar] [CrossRef]

- Jardine J, Gurol-Urganci I, Harris T, Hawdon J, Pasupathy D, van der Meulen J, Walker K; NMPA Project Team. Risk of postpartum haemorrhage is associated with ethnicity: A cohort study of 981 801 births in England. BJOG. 2022, 129, 1269–1277. [CrossRef]

- Guan, C.S.; Boyer, T.M.; Darwin, K.C.; Henshaw, C.; Michos, E.D.; Lawson, S.; Vaught, A.J. Racial disparities in care escalation for postpartum hemorrhage requiring transfusion. Am J Obstet Gynecol MFM. 2023, 5, 100938. [Google Scholar] [CrossRef]

- Khan, Z.L.; Balie, G.M.; Chauke, L. Hypertensive Disorders of Pregnancy Deaths: A Four-Year Review at a Tertiary/Quaternary Academic Hospital. Int J Environ Res Public Health. 2025, 22, 978. [Google Scholar] [CrossRef]

- El Arab, R.A.; Al Moosa, O.A.; Albahrani, Z.; Alkhalil, I.; Somerville, J.; Abuadas, F. Integrating Artificial Intelligence into Perinatal Care Pathways: A Scoping Review of Reviews of Applications, Outcomes, and Equity. Nurs Rep. 2025, 15, 281. [Google Scholar] [CrossRef] [PubMed]

- Chimwaza Y, Hunt A, Oliveira-Ciabati L, Bonnett L, Abalos E, Cuesta C, Souza JP, Bonet M, Brizuela V, Lissauer D; World Health Organization Global Maternal Sepsis Study Research Group. Early warning systems for identifying severe maternal outcomes: findings from the WHO global maternal sepsis study. EClinicalMedicine. 2024, 79, 102981. [CrossRef]

- Chen, R.J.; Wang, J.J.; Williamson, D.F.K.; Chen, T.Y.; Lipkova, J.; Lu, M.Y.; Sahai, S.; Mahmood, F. Algorithmic fairness in artificial intelligence for medicine and healthcare. Nat Biomed Eng. 2023, 7, 719–742. [Google Scholar] [CrossRef]

- Haider, S.A.; Borna, S.; Gomez-Cabello, C.A.; Pressman, S.M.; Haider, C.R.; Forte, A.J. The Algorithmic Divide: A Systematic Review on AI-Driven Racial Disparities in Healthcare. J Racial Ethn Health Disparities. 2024 Dec 18. [CrossRef]

- Booth A, Sommer I, Noyes J, Houghton C, Campbell F; Cochrane Rapid Reviews Methods Group and Cochrane Qualitative and Implementation Methods Group (CQIMG). Rapid reviews methods series: guidance on rapid qualitative evidence synthesis. BMJ Evid Based Med. 2024, 29, 194–200. [CrossRef]

- Eriksen, M.B.; Frandsen, T.F. The impact of patient, intervention, comparison, outcome (PICO) as a search strategy tool on literature search quality: a systematic review. J Med Libr Assoc. 2018, 106, 420–431. [Google Scholar] [CrossRef]

- Ouzzani, M.; Hammady, H.; Fedorowicz, Z.; Elmagarmid, A. Rayyan—a web and mobile app for systematic reviews. Syst Rev. 2016, 5, 210. [Google Scholar] [CrossRef] [PubMed]

- Lengane, N.I.; Some, M.J.M.; Tall, M.; Ouermi, A.S.; Nikiema, J.P.; Ouoba, J.W.; Kadyogo, M.; Sereme, M. Le fibromatosis colli: une tumeur cervicale rare du nourrisson [Fibromatosis colli: a rare cervical tumor of the infant]. Pan Afr Med J. 2020, 37, 370. [Google Scholar] [CrossRef]

- Susanu, C.; Hărăbor, A.; Vasilache, I.A.; Harabor, V.; Călin, A.M. Predicting Intra- and Postpartum Hemorrhage through Artificial Intelligence. Medicina (Kaunas). 2024, 60, 1604. [Google Scholar] [CrossRef]

- Ahmadzia, H.K.; Dzienny, A.C.; Bopf, M.; Phillips, J.M.; Federspiel, J.J.; Amdur, R.; Rice, M.M.; Rodriguez, L. Machine Learning Models for Prediction of Maternal Hemorrhage and Transfusion: Model Development Study. JMIR Bioinform Biotechnol. 2024, 5, e52059. [Google Scholar] [CrossRef] [PubMed]

- Mapari, S.A.; Shrivastava, D.; Dave, A.; Bedi, G.N.; Gupta, A.; Sachani, P.; Kasat, P.R.; Pradeep, U. Revolutionizing Maternal Health: The Role of Artificial Intelligence in Enhancing Care and Accessibility. Cureus. 2024, 16, e69555. [Google Scholar] [CrossRef] [PubMed]

- McAdams, R.M.; Green, T.L. Equitable Artificial Intelligence in Obstetrics, Maternal-Fetal Medicine, and Neonatology. Obstet Gynecol. 2024 Mar 28. [CrossRef]

- Asiedu, M.; Dieng, A.; Haykel, I.; Rostamzadeh, N.; Pfohl, S.; Nagpal, C.; Nagawa, M.; Oppong, A.; Koyejo, S.; Heller, K. The Case for Globalizing Fairness: A Mixed Methods Study on Colonialism, AI, and Health in Africa. arXiv [Internet]. 2024 Mar 11. https://arxiv.org/abs/2403.03357.

- Liu, Y.; Wang, W.; Gao, G.G.; Agarwal, R. Echoes of Biases: How Stigmatizing Language Affects AI Performance [preprint]. arXiv [Internet]. 2023 May 17. https://arxiv.org/abs/2305.10201.

- Mehrnoush, V.; Ranjbar, A.; Farashah, M.V.; Darsareh, F.; Shekari, M.; Jahromi, M.S. Prediction of postpartum hemorrhage using traditional statistical analysis and a machine learning approach. AJOG Glob Rep. 2023, 3, 100185. [Google Scholar] [CrossRef]

- Shah, S.Y.; Saxena, S.; Rani, S.P.; Nelaturi, N.; Gill, S.; Tippett Barr, B.; Were, J.; Khagayi, S.; Ouma, G.; Akelo, V.; Norwitz, E.R.; Ramakrishnan, R.; Onyango, D.; Teltumbade, M. Prediction of postpartum hemorrhage (PPH) using machine learning algorithms in a Kenyan population. Front Glob Womens Health. 2023, 4, 1161157. [Google Scholar] [CrossRef]

- Ansbacher-Feldman, Z.; Syngelaki, A.; Meiri, H.; Cirkin, R.; Nicolaides, K.H.; Louzoun, Y. Machine-learning-based prediction of pre-eclampsia using first-trimester maternal characteristics and biomarkers. Ultrasound Obstet Gynecol. 2022, 60, 739–745. [Google Scholar] [CrossRef]

- Westcott, J.M.; Hughes, F.; Liu, W.; Grivainis, M.; Hoskins, I.; Fenyo, D. Prediction of Maternal Hemorrhage Using Machine Learning: Retrospective Cohort Study. J Med Internet Res. 2022, 24, e34108. [Google Scholar] [CrossRef]

- Mathewlynn, S.J.; Soltaninejad, M.; Collins, S.L. Artificial Intelligence and Postpartum Hemorrhage. Matern Fetal Med. 2025, 7, 22–28. [Google Scholar] [CrossRef]

- Wakefield, B.M.; Zapf, M.A.; Ende, H.B. Artificial intelligence in prediction of postpartum hemorrhage: a primer and review. Int J Obstet Anesth. 2025, 63, 104694. [Google Scholar] [CrossRef]

- Vasudevan, L.; Kibria, M.G.; Kucirka, L.M.; Shieh, K.; Wei, M.; Masoumi, S.; Balasubramanian, S.; Victor, A.; Conklin, J.L.; Gurcan, M.N.; Stuebe, A.M.; Page, D. Machine Learning Models to Predict Risk of Maternal Morbidity and Mortality From Electronic Medical Record Data: Scoping Review. J Med Internet Res. 2025, 27, e68225. [Google Scholar] [CrossRef]

- Sirichaisit, K.; Kaladee, K.; Chankong, W.; Romsaiyud, W. Artificial intelligence prediction models for postpartum hemorrhage: a systematic review and meta-analysis. J Southeast Asian Med Res [Internet]. 2025, 9, e0240. [Google Scholar] [CrossRef]

- Shara, N.; Mirabal-Beltran, R.; Talmadge, B.; Falah, N.; Ahmad, M.; Dempers, R.; Crovatt, S.; Eisenberg, S.; Anderson, K. Use of Machine Learning for Early Detection of Maternal Cardiovascular Conditions: Retrospective Study Using Electronic Health Record Data. JMIR Cardio. 2024, 8, e53091. [Google Scholar] [CrossRef]

- Özhüner, Y.; Özhüner, E. The Role of Artificial Intelligence in Improving Maternal and Fetal Health During the Perinatal Period. Mediterr Nurs Midwifery. 2025 May 5; E-pub ahead of print. [CrossRef]

- Layton, A.T. Artificial Intelligence and Machine Learning in Preeclampsia. Arterioscler Thromb Vasc Biol. 2025, 45, 165–171. [Google Scholar] [CrossRef] [PubMed]

- Montgomery-Csobán T, Kavanagh K, Murray P, Robertson C, Barry SJE, Vivian Ukah U, Payne BA, Nicolaides KH, Syngelaki A, Ionescu O, Akolekar R, Hutcheon JA, Magee LA, von Dadelszen P; PIERS Consortium. Machine learning-enabled maternal risk assessment for women with pre-eclampsia (the PIERS-ML model): a modelling study. Lancet Digit Health. 2024, 6, e238–e250. [CrossRef]

- Valentine, A.A.; Charney, A.W.; Landi, I. Fair Machine Learning for Healthcare Requires Recognizing the Intersectionality of Sociodemographic Factors, a Case Study [preprint]. arXiv [Internet]. 2024 May 30. https://arxiv.org/abs/2407.15006.

- Obermeyer, Z.; Powers, B.; Vogeli, C.; Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science. 2019, 366, 447–453. [Google Scholar] [CrossRef] [PubMed]

- Crenshaw, K. Mapping the margins: Intersectionality, identity politics, and violence against women of color. Stanford Law Rev. 1991, 43, 1241–1299. Accessed on: November 20, 2025. Available from: https://blogs.law.columbia.edu/critique1313/files/2020/02/1229039.pdf.

- Crear-Perry, J.; Correa-de-Araujo, R.; Lewis Johnson, T.; McLemore, M.R.; Neilson, E.; Wallace, M. Social and Structural Determinants of Health Inequities in Maternal Health. J Womens Health (Larchmt). 2021, 30, 230–235. [Google Scholar] [CrossRef]

- Joy Buolamwini, Timnit Gebru. Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification. Proceedings of the 1st Conference on Fairness, Accountability and Transparency, PMLR 81:77-91, 2018. Accessed on: November 20, 2025. Available from: https://proceedings.mlr.press/v81/buolamwini18a.html.

- Ross LJ, Roberts LJ, Derkas E, Peoples W, Bridgewater P (Ed.). Radical reproductive justice: Foundation, theory, practice, critique. New York: Feminist Press, 2017.

- Petersen, E.E.; Davis, N.L.; Goodman, D.; Cox, S.; Syverson, C.; Seed, K.; Shapiro-Mendoza, C.; Callaghan, W.M.; Barfield, W. Racial/Ethnic Disparities in Pregnancy-Related Deaths - United States, 2007-2016. MMWR Morb Mortal Wkly Rep. 2019, 68, 762–765. [Google Scholar] [CrossRef]

- Oliveira, E.N.; Oliveira, F.R.; Lima, G.F.; Martins, P.; Costa, M.S.A.; Fernandes, M.M.B.C.; Ximenes Neto, F.R.G.; Almeida, P.C.; Rodrigues, C.S. A teoria crítica da raça em produções brasileiras: revisão narrativa. Contrib Cienc Sociais. 2024, 17, 297–311. [Google Scholar] [CrossRef]

- Lima, F.; Gaudenzi, P. Racismo, iniquidades raciais e subjetividade: ver, dizer e fazer. Saude Soc. 2023, 32. [Google Scholar] [CrossRef]

- Canêdo, V.R. A inteligência artificial como instrumento de perpetuação do privilégio branco no mercado de trabalho. Reflexão Crítica Dir. 2025, 13, 72–89. [Google Scholar] [CrossRef]

- Queiroz, G.M. A inteligência artificial e o reconhecimento facial: impactos à população negra no Brasil [dissertation]. São Paulo: Instituto Brasileiro de Ensino, Desenvolvimento e Pesquisa; 2023. 106 f. Accessed on: December 1, 2025. Available from: https://repositorio.idp.edu.br//handle/123456789/4800.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).