Submitted:

01 December 2025

Posted:

02 December 2025

Abstract

Keywords:

1. Introduction

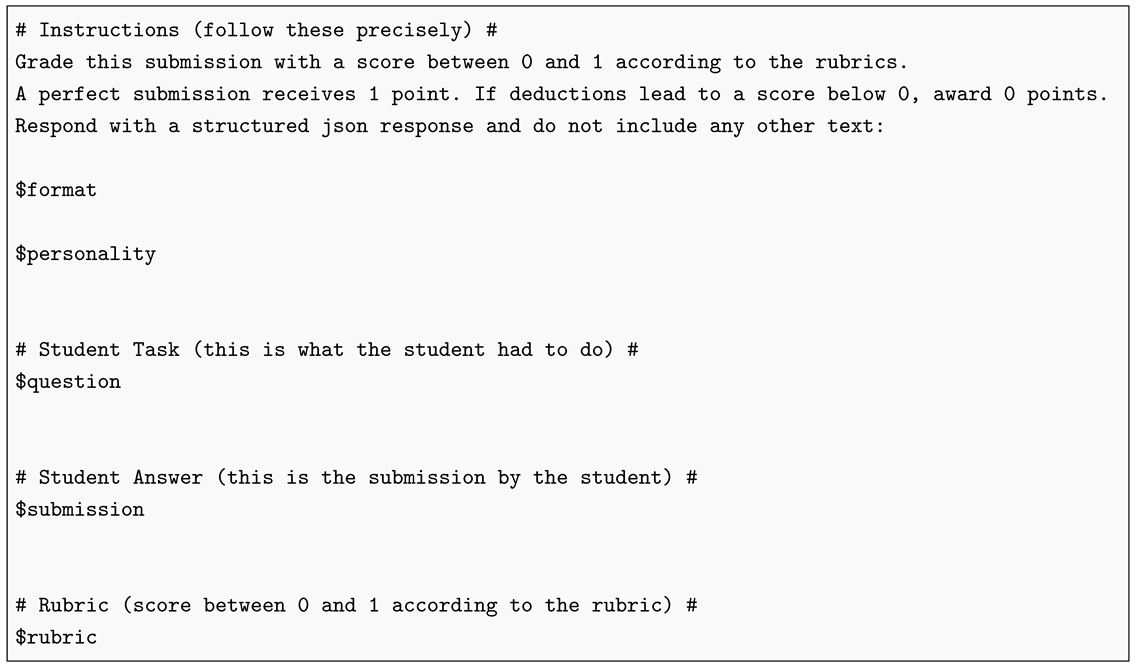

2. Materials and Methods

2.1. Data

2.2. SURE Pipeline

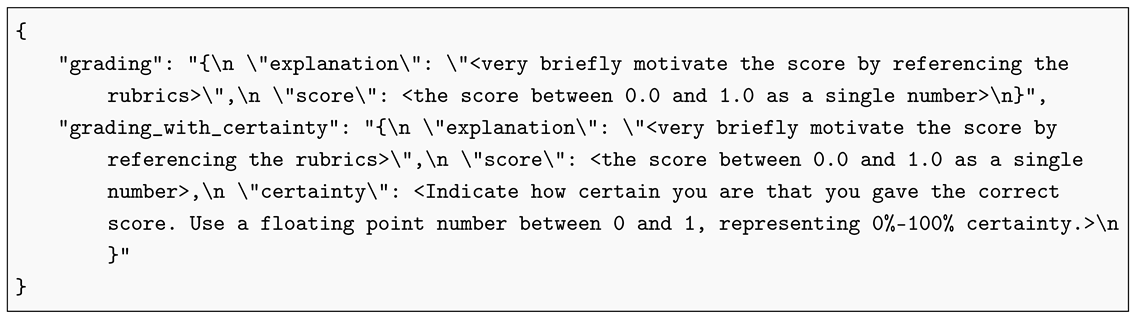

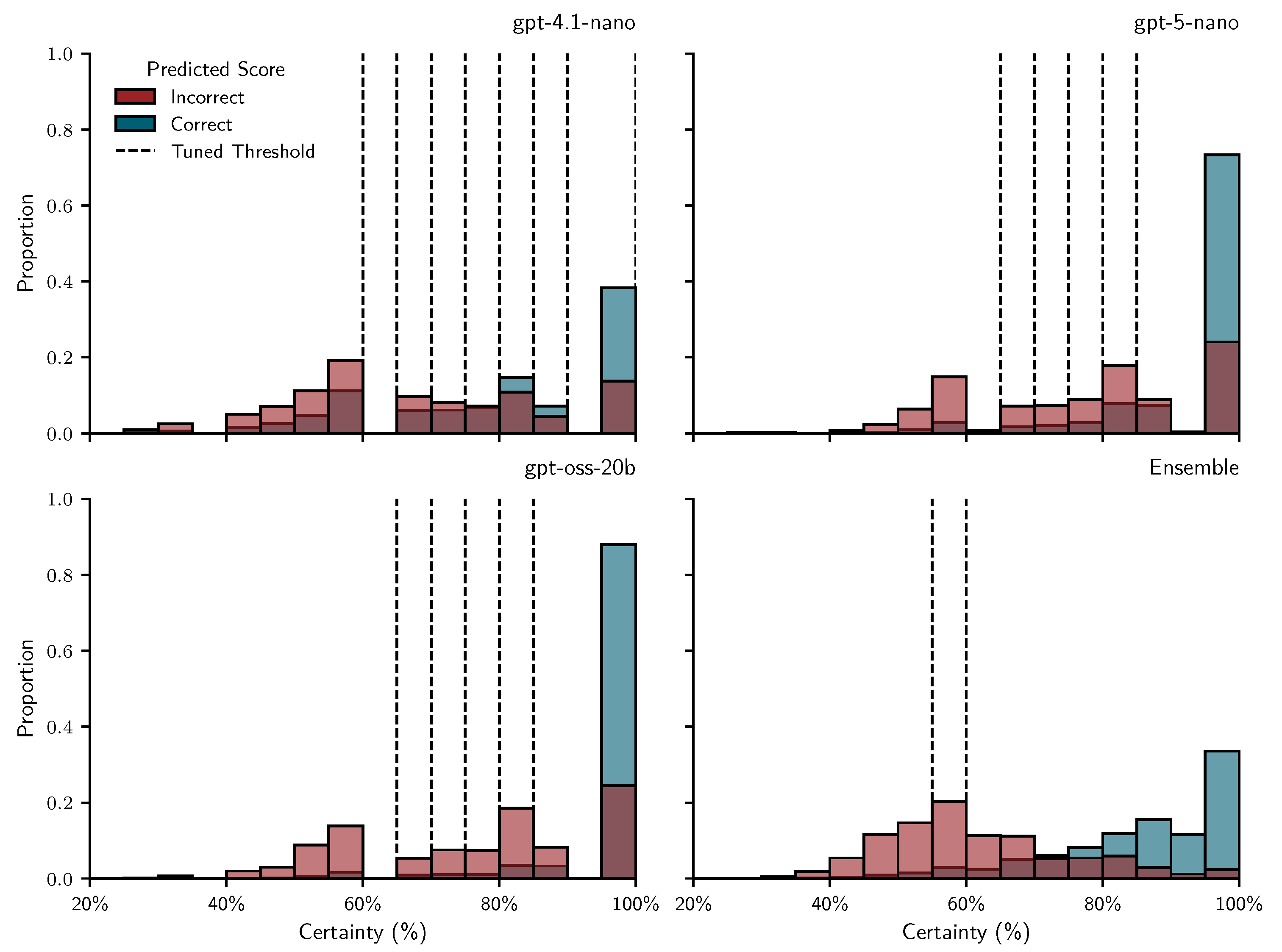

2.2.1. Repeated Prompting for Score Prediction and Uncertainty Estimation

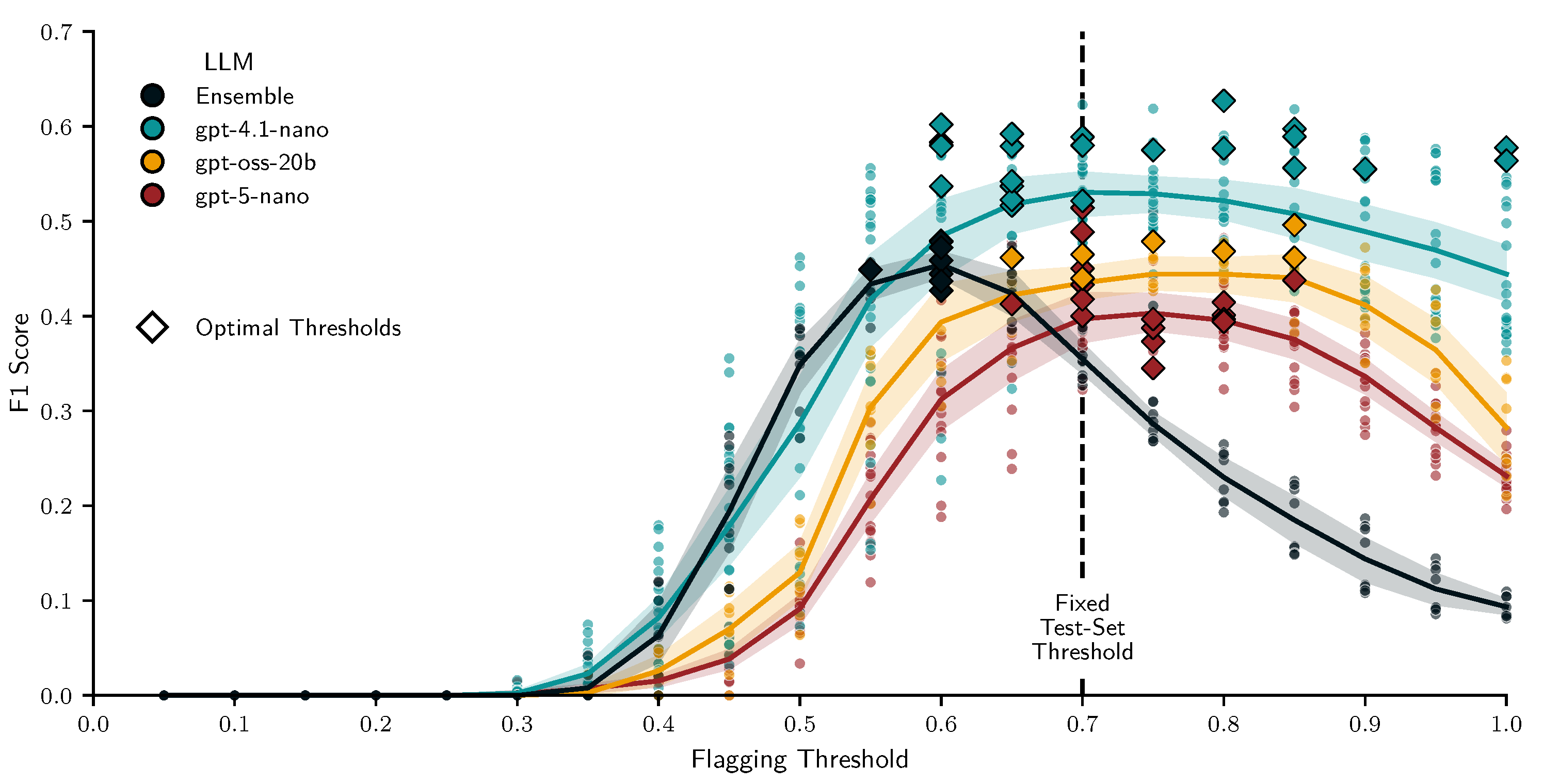

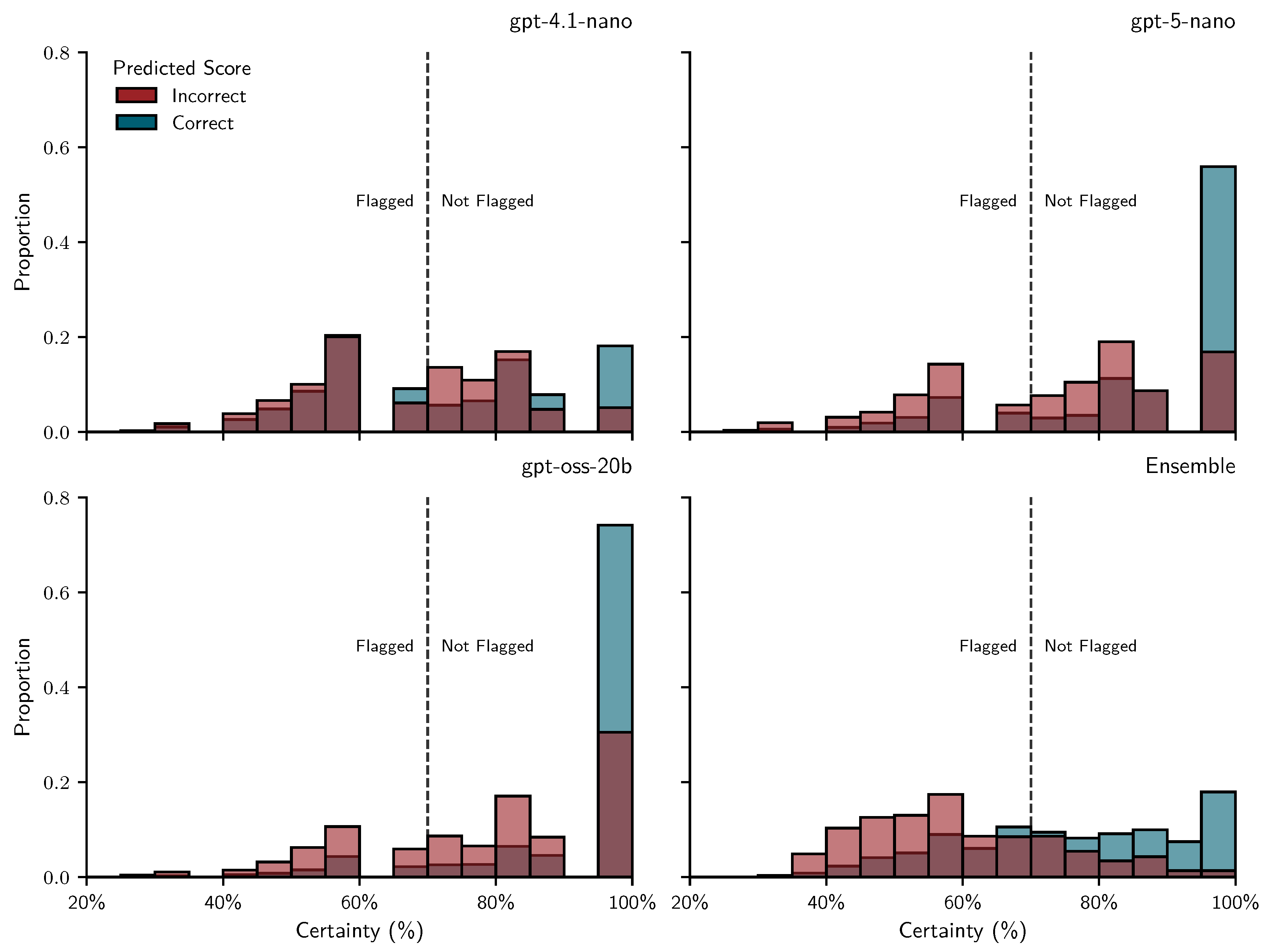

2.2.2. Flagging Low-Certainty Scores and Simulating Human Regrading

- TP: LLM is uncertain (flagged) and incorrect — a useful flag

- FP: LLM is uncertain (flagged) but correct — unnecessary teacher effort

- TN: LLM is confident (unflagged) and correct — ideal automatic grading

- FN: LLM is confident (unflagged) but incorrect — undetected error
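The four outcomes above can be tallied mechanically once each answer has a flag and a correctness label. The following is a minimal sketch, not the authors' implementation; the function names and the toy records are hypothetical.

```python
from collections import Counter


def flag_outcome(flagged: bool, correct: bool) -> str:
    """Map one graded answer to TP, FP, TN, or FN as defined in the list above."""
    if flagged:
        # Flagged answers: the flag is useful only if the LLM score was wrong.
        return "FP" if correct else "TP"
    # Unflagged answers: an incorrect score goes undetected.
    return "TN" if correct else "FN"


def summarize_flags(records):
    """Count outcomes for an iterable of (flagged, correct) pairs."""
    counts = Counter(flag_outcome(flagged, correct) for flagged, correct in records)
    return {key: counts.get(key, 0) for key in ("TP", "FP", "TN", "FN")}


# Hypothetical toy data: (flagged, correct) for five student answers.
records = [(True, False), (True, True), (False, True), (False, True), (False, False)]
summary = summarize_flags(records)
print(summary)
```

In this reading, FP counts translate into unnecessary regrading effort, while FN counts are the errors that remain in the final grades.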

2.3. LLM Configurations and Diversification Strategies

2.3.1. Parameter Variations

2.3.2. Prompt Perturbations

- Shuffled rubrics – Each rubric consisted of a list of subtractive grading criteria (Korthals et al. 2025). When this intervention was active, we randomly sampled 20 criteria orderings from all possible permutations. For rubrics with fewer than four criteria (which have fewer than 20 possible orderings), permutations were repeated equally until reaching 20. When the intervention was inactive, criteria followed the original order.

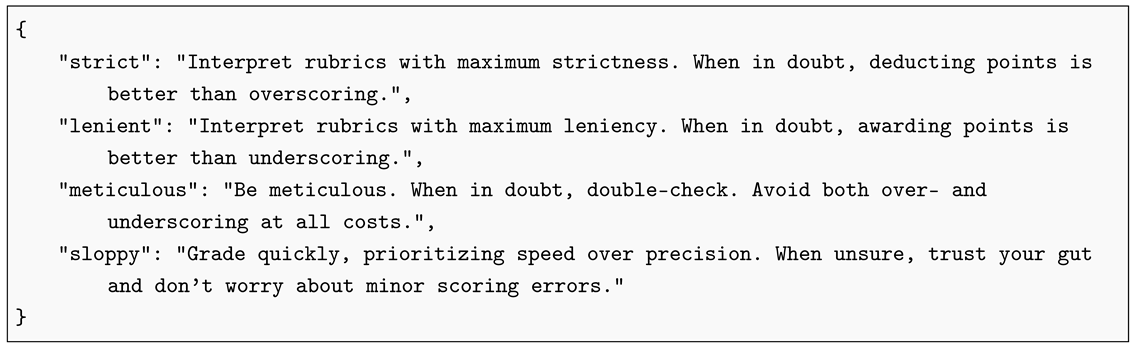

- Grader personas – We defined four personas: strict, lenient, meticulous, and sloppy (see Listing A3). When enabled, we used each persona 5 times so that each of the 20 prompts contained exactly one persona. Otherwise, prompts contained no persona.

- Multilingual prompting – We used gpt-5-nano to translate all prompt components (base prompt, questions, rubrics, and persona snippets) into German, Spanish, French, Japanese, and Chinese, and verified the translations by back-translating to English via DeepL (DeepL n.d.). When this intervention was active, we sampled equally across the six languages (including English). Otherwise, all 20 prompts were in English.
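The three perturbations above all amount to spreading a set of options over the 20 prompts. A minimal sketch of how this could be done is shown below. It is an illustration under stated assumptions, not the authors' code: the helper names are hypothetical, orderings are drawn without repeats when enough permutations exist, and because 20 is not divisible by six, languages are balanced only to within one prompt.

```python
import itertools
import math
import random
from collections import Counter

N_PROMPTS = 20
PERSONAS = ["strict", "lenient", "meticulous", "sloppy"]
LANGUAGES = ["English", "German", "Spanish", "French", "Japanese", "Chinese"]


def rubric_orderings(criteria, rng, n=N_PROMPTS):
    """Return n orderings of the rubric criteria."""
    if math.factorial(len(criteria)) >= n:
        # Enough permutations: draw random orderings until n distinct ones exist
        # (drawing without repeats is an assumption of this sketch).
        orderings = []
        while len(orderings) < n:
            candidate = tuple(rng.sample(criteria, len(criteria)))
            if candidate not in orderings:
                orderings.append(candidate)
        return orderings
    # Too few permutations: cycle through all of them as evenly as possible.
    perms = list(itertools.permutations(criteria))
    return [perms[i % len(perms)] for i in range(n)]


def balanced_assignment(options, rng, n=N_PROMPTS):
    """Assign options to n prompts so that option counts differ by at most one."""
    assigned = [options[i % len(options)] for i in range(n)]
    rng.shuffle(assigned)
    return assigned


rng = random.Random(42)  # fixed seed, chosen only for reproducibility
orderings = rubric_orderings(["criterion A", "criterion B", "criterion C"], rng)
personas = balanced_assignment(PERSONAS, rng)
languages = balanced_assignment(LANGUAGES, rng)
print(Counter(personas))
print(Counter(languages))
```

With three criteria there are only six distinct orderings, so each appears three or four times among the 20 prompts; with four personas each appears exactly five times.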

2.3.3. Post-Hoc Ensembles

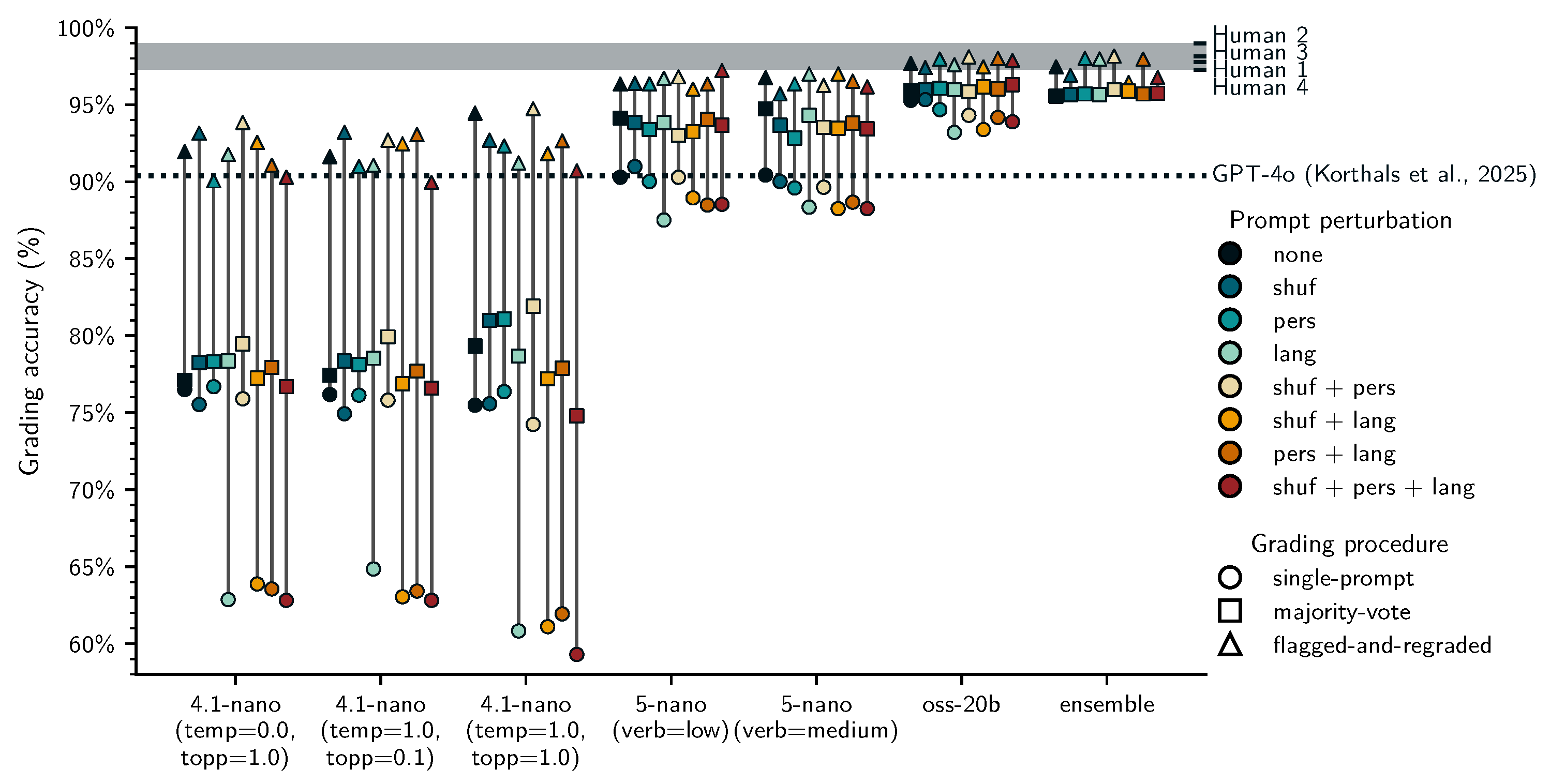

2.4. Grading Procedures

- Majority-voting (MV): Fully automated grading based on the most frequent scores assigned to each student answer across 20 repeated grading iterations. We computed these for all 56 conditions (prompting + post-hoc ensembles).

- Single-prompt (SP): Fully automated grading based on a single score that we sampled from the 20 iterations for each student answer. We applied this only to the 48 prompting conditions, because ensembles by definition aggregate the outputs from multiple prompts.

- SURE: Human-in-the-loop grading based on majority-voting with simulated human regrading of flagged scores. We assessed SURE for all 56 conditions (prompting + post-hoc ensembles). In the training set we tuned separate uncertainty thresholds by maximizing the score for each of the 56 conditions. In the test set we used the median of these 56 thresholds as a fixed certainty threshold.
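The three procedures can be contrasted on a single student answer. The sketch below is illustrative only: it assumes that certainty is the share of the 20 scores that agree with the majority vote, that ties are broken by first occurrence, and that the simulated human regrade simply substitutes a reference score. The threshold values are made up.

```python
import random
import statistics
from collections import Counter


def majority_vote(scores):
    """Return (most frequent score, share of iterations that produced it)."""
    top_score, top_count = Counter(scores).most_common(1)[0]
    return top_score, top_count / len(scores)


def single_prompt(scores, rng):
    """Return one score sampled from the repeated grading iterations."""
    return rng.choice(scores)


def sure_grade(scores, human_score, threshold):
    """Majority vote, replaced by the human score when certainty is below threshold."""
    mv_score, certainty = majority_vote(scores)
    flagged = certainty < threshold
    return (human_score if flagged else mv_score), flagged


# Hypothetical example: 20 iterations, 12 award full credit and 8 award half.
scores = [1.0] * 12 + [0.5] * 8
rng = random.Random(0)

# On the test set a single fixed threshold is used: the median of tuned values.
tuned_thresholds = [0.6, 0.7, 0.8]  # made-up values standing in for the 56 tuned ones
fixed_threshold = statistics.median(tuned_thresholds)

mv_score, certainty = majority_vote(scores)
sp_score = single_prompt(scores, rng)
final_score, flagged = sure_grade(scores, human_score=0.5, threshold=fixed_threshold)
print(mv_score, certainty, sp_score, final_score, flagged)
```

Here the majority vote is 1.0 with a certainty of 0.6, which falls below the fixed threshold of 0.7, so the answer is flagged and the simulated human score replaces the majority vote.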

2.5. Research Questions

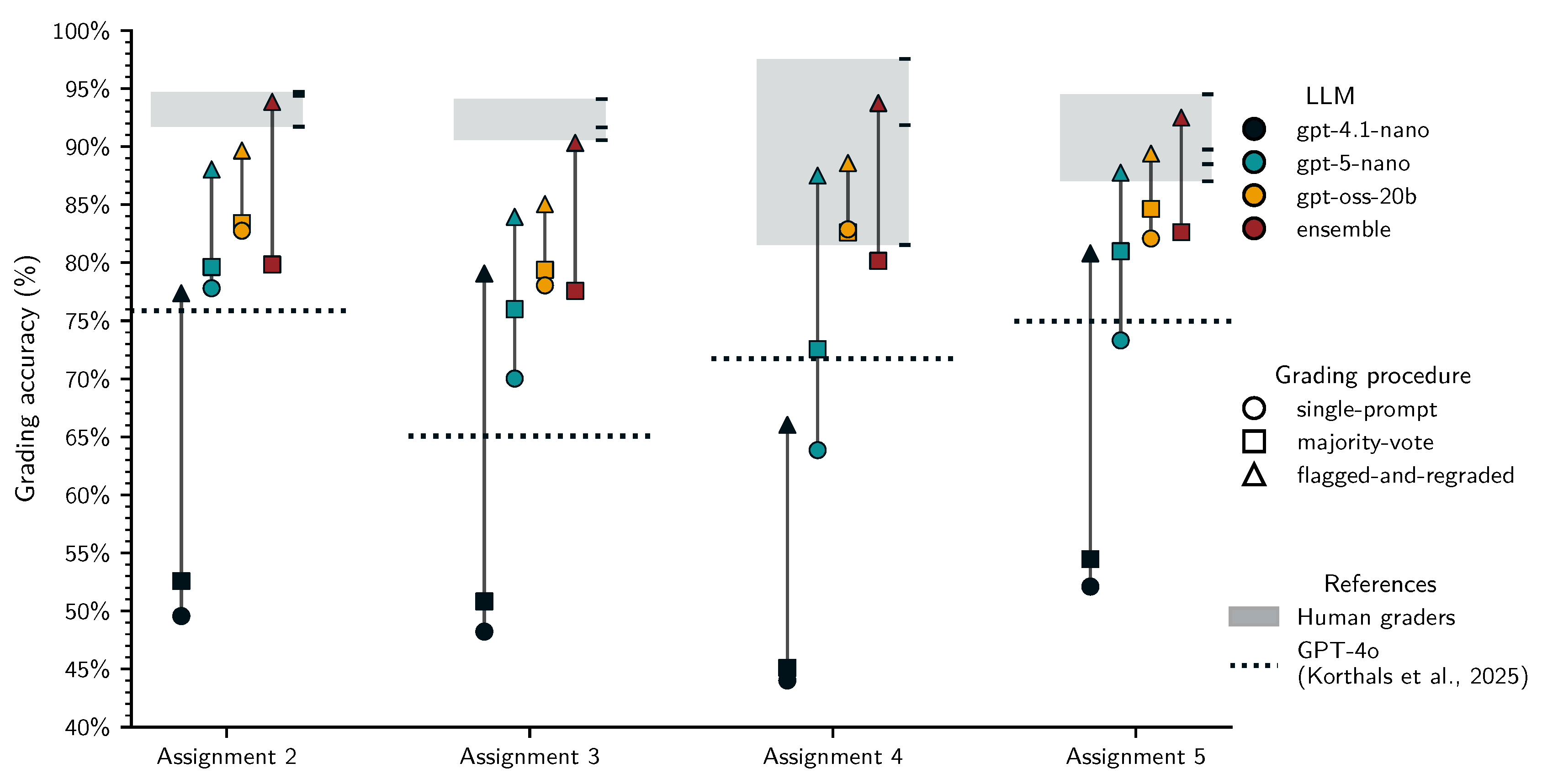

- RQ1: Can majority-voting improve the accuracy of fully automated LLM grading?

- RQ2: Can SURE improve the accuracy over fully automated LLM grading?

- RQ3: Can diversification strategies (token sampling, prompt perturbations, LLM ensembles) improve the SURE protocol?

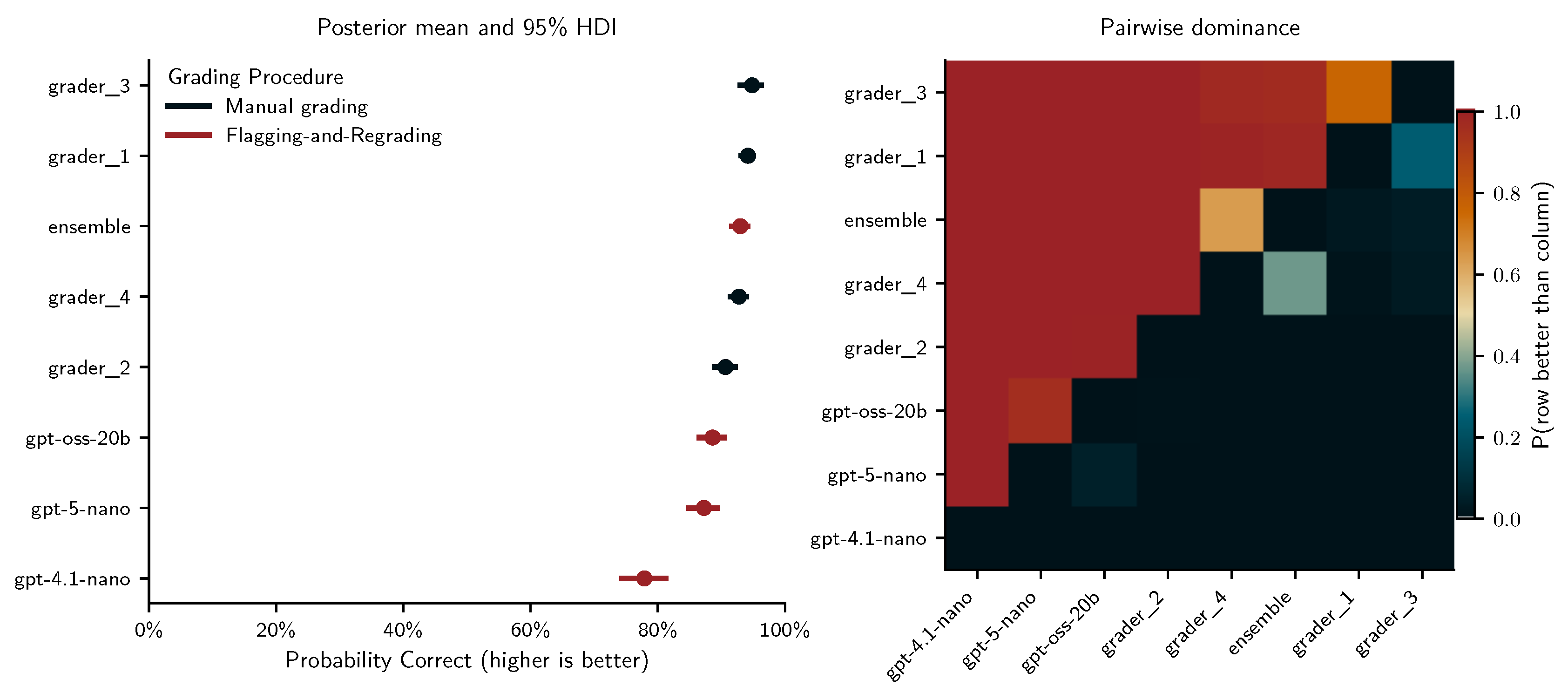

- RQ4: How effective (accuracy) and efficient (time spent grading) is SURE compared to fully manual grading?

2.6. Exploratory Analyses on the Training Set

2.6.1. Grading Procedures and Diversification Strategies

- A negative coefficient for single-prompt grading would indicate that majority-voting improves fully automated grading (RQ1).

- A positive coefficient for SURE that is larger than those for SP and MV would indicate that the proposed pipeline improves accuracy over automated grading (RQ2).

- Positive coefficients for any of the diversification strategies – particularly in combination with SURE – would indicate that diversification strategies are beneficial (RQ3).
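These expectations can be read against a regression in which majority-voting is the reference procedure. The expression below is a schematic sketch only: the logit link, the indicator notation, and all symbols are assumptions made for illustration, and the LLM, diversification, and interaction terms are abbreviated as $\mathbf{x}_i^{\top}\boldsymbol{\gamma}$.

$$
\operatorname{logit}\,\Pr(\text{correct}_i = 1) = \beta_0 + \beta_{\text{SP}}\,[\text{procedure}_i = \text{SP}] + \beta_{\text{SURE}}\,[\text{procedure}_i = \text{SURE}] + \mathbf{x}_i^{\top}\boldsymbol{\gamma} + u_{\text{student}(i)} + u_{\text{question}(i)} + u_{\text{condition}(i)}
$$

Under this reading, $\beta_{\text{SP}} < 0$ corresponds to RQ1 and $\beta_{\text{SURE}} > 0$ to RQ2, since both coefficients are contrasts with majority-voting.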

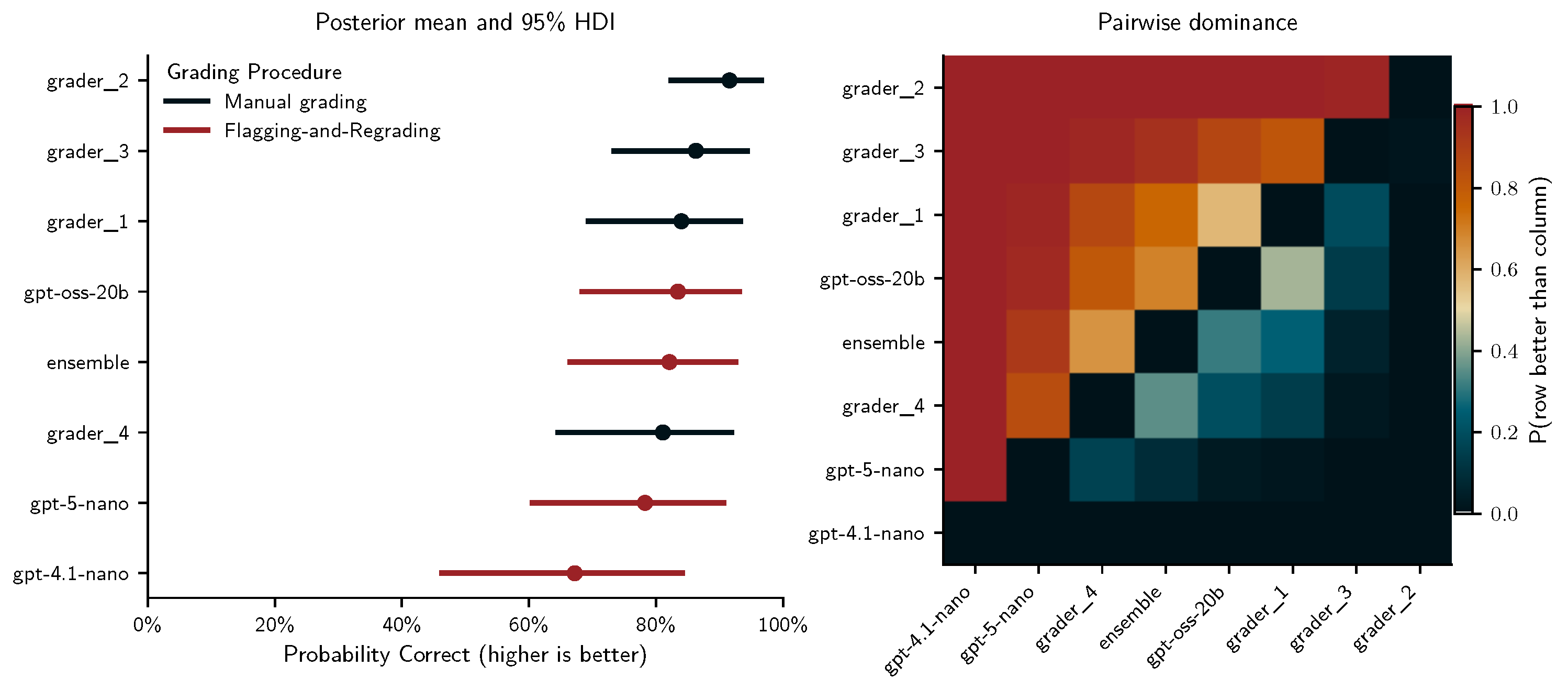

2.6.2. Comparing Single-Prompt, Majority-Voting, SURE and Manual Grading

- gpt-4.1-nano with temperature and top_p set to 1.0.

- gpt-5-nano with text_verbosity set to medium.

- gpt-oss-20b with text_verbosity set to medium.

- ensemble based on the three selected LLM configurations.

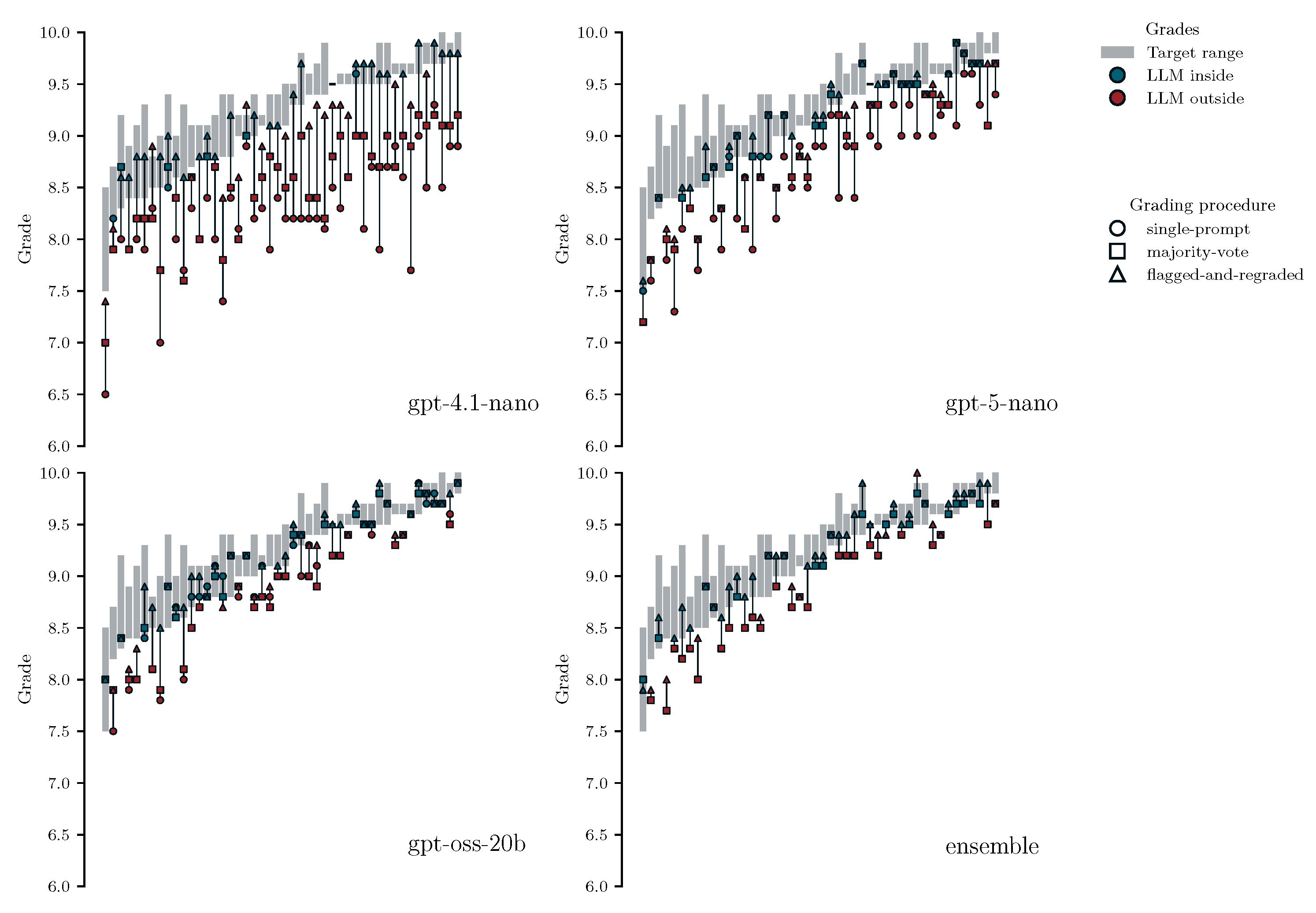

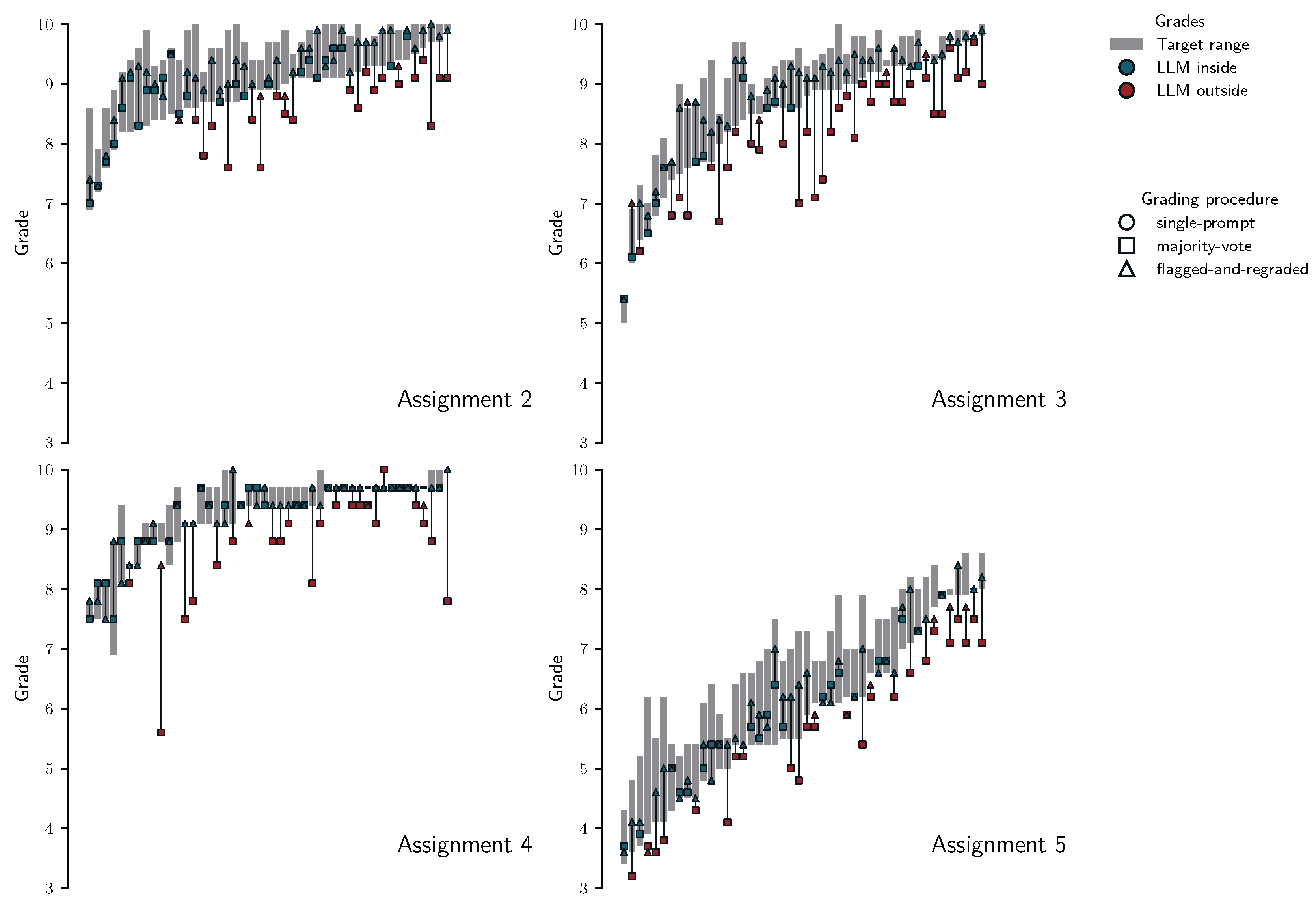

- If majority-voted grades were more closely aligned with target ranges than grades based on single prompts, this would support the claim that majority-voting improves fully automated grading (RQ1).

- If grades from SURE were more closely aligned with target ranges than fully automated grades (SP and MV), this would indicate the benefit of SURE (RQ2).

- By assessing the proportion of SURE grades that fall inside the target ranges, as well as the maximum and median deviations from the target range boundaries, we assess whether SURE may be suitable to replace manual grading (RQ4).
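The three alignment measures named above can be computed as follows. This is a minimal sketch under assumptions: each student's target range is taken as a given (low, high) pair, a grade inside the range has a deviation of zero, and the function names and example values are hypothetical.

```python
from statistics import median


def deviation_from_range(grade, low, high):
    """Distance from a grade to the nearest boundary of [low, high]; 0 if inside."""
    if grade < low:
        return low - grade
    if grade > high:
        return grade - high
    return 0.0


def range_alignment(grades, ranges):
    """Summarize how well grades align with per-student target ranges."""
    devs = [deviation_from_range(g, low, high) for g, (low, high) in zip(grades, ranges)]
    return {
        "pct_in_range": 100 * sum(d == 0 for d in devs) / len(devs),
        "max_deviation": max(devs),
        "median_deviation": median(devs),
    }


# Hypothetical grades and target ranges for four students.
grades = [7.0, 8.5, 6.0, 9.0]
ranges = [(6.5, 7.5), (7.0, 8.0), (6.5, 7.0), (8.0, 9.5)]
alignment = range_alignment(grades, ranges)
print(alignment)
```

In this toy example two of the four grades fall inside their range, and the other two miss the nearest boundary by 0.5 grade points each.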

2.7. Planned Analyses on the Test Set

- gpt-4.1-nano with temperature and top_p set to 1.0.

- gpt-5-nano with text_verbosity set to medium.

- gpt-oss-20b with text_verbosity set to medium.

- ensemble based on the three selected LLM configurations.

3. Results

3.1. Exploratory Findings on the Training Set

3.1.1. Descriptive Findings

3.1.2. Grading Procedures and Diversification Strategies

- gpt-4.1-nano with temperature and top_p set to 1.0.

- gpt-5-nano with default "medium" text_verbosity.

- gpt-oss-20b with default "medium" text_verbosity.

- ensemble based on the three selected LLM configurations.

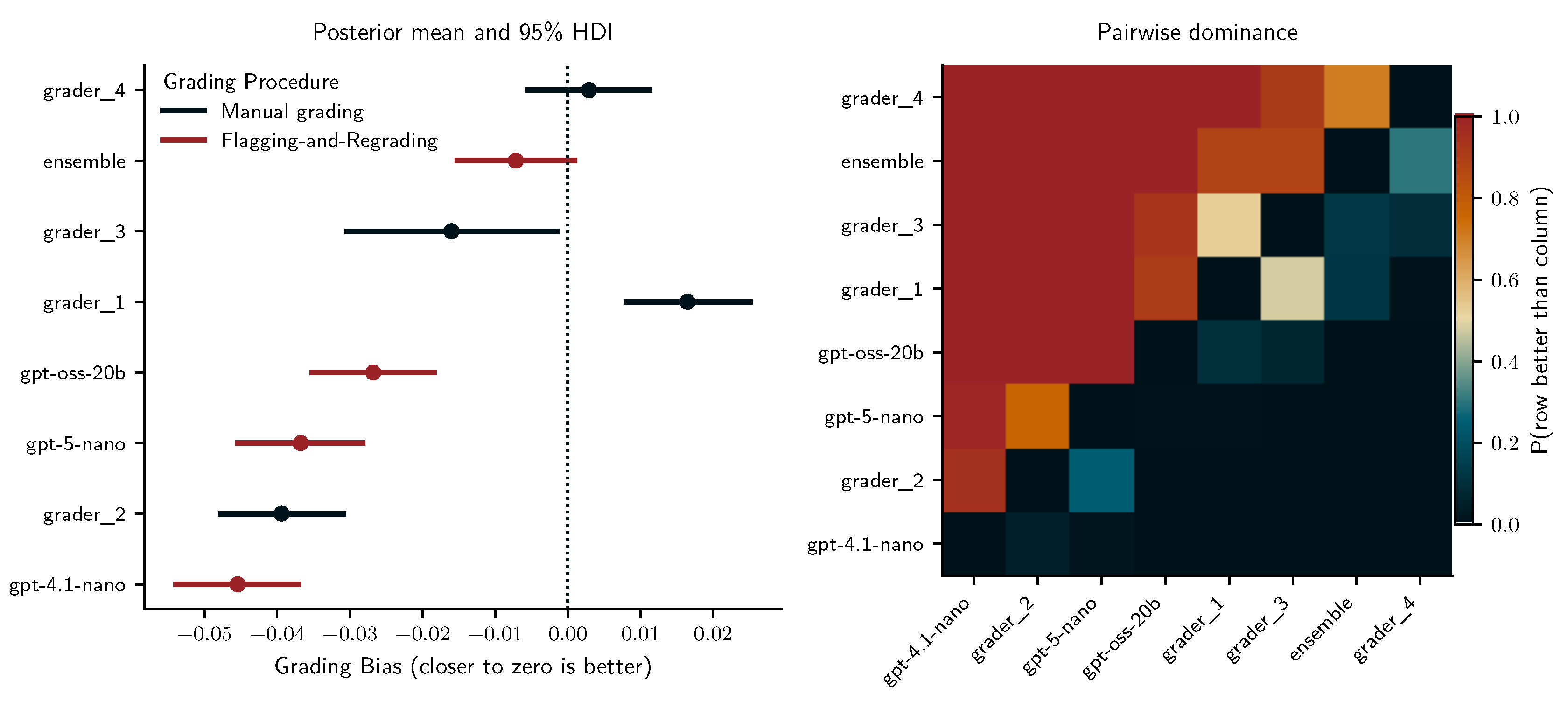

3.1.3. Comparing Single-Prompt, Majority-Voting, SURE and Manual Grading

3.1.4. Summary of Training Set Results

3.2. Test Set Validation

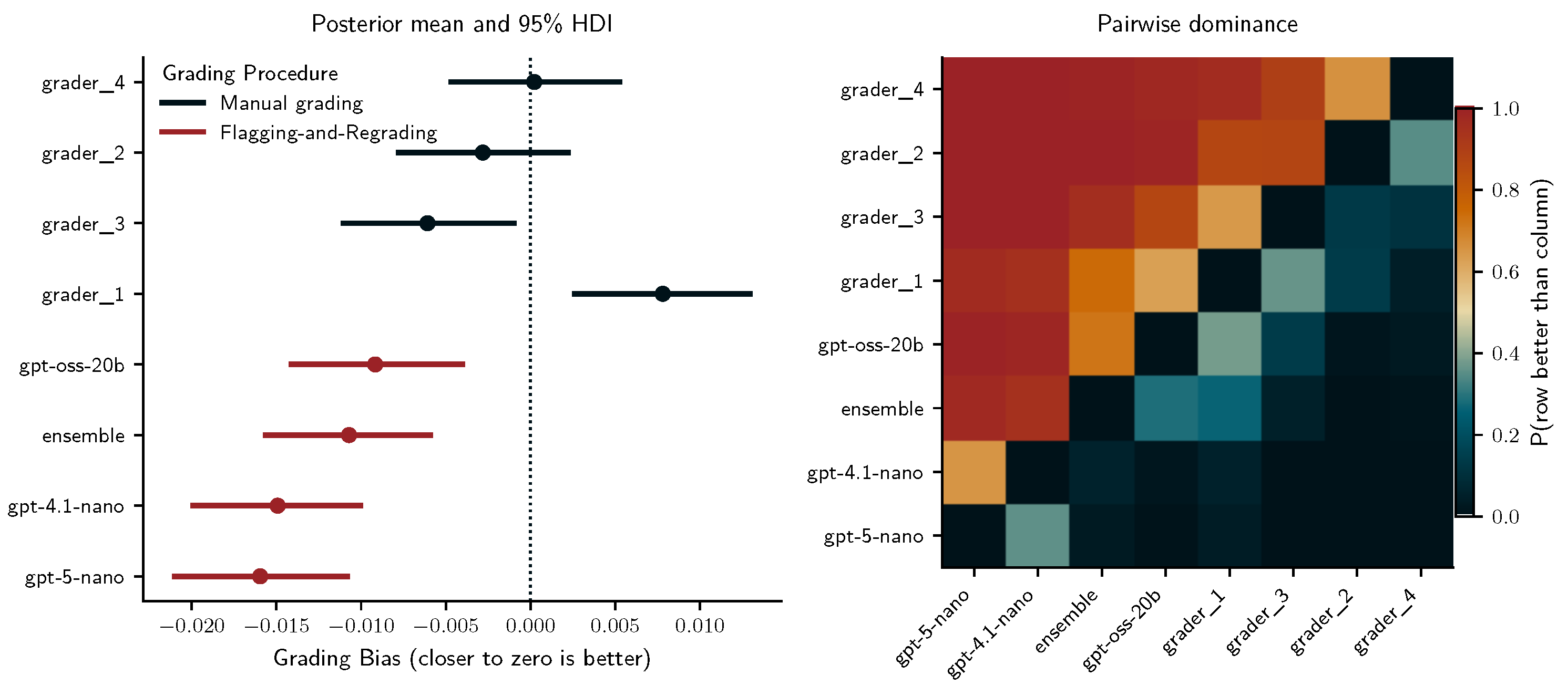

3.2.1. Descriptive Findings

3.2.2. Comparing Single-Prompt, Majority-Voting, SURE and Manual Grading

3.3. Summary of Results

4. Discussion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| LLMs | Large language models |
| AI | Artificial intelligence |
| SURE | Selective Uncertainty-based Re-Evaluation |
| IRT | Item Response Theory |
| TP | True positive |
| FP | False positive |
| TN | True negative |
| FN | False negative |
| SP | Single-prompt |
| MV | Majority-voting |

Appendix A. LLM Prompts

References

- Alves, J. V., Leitão, D., Jesus, S., Sampaio, M. O. P., Liébana, J., Saleiro, P., Figueiredo, M. A. T., & Bizarro, P. (2025, April). A benchmarking framework and dataset for learning to defer in human-AI decision-making. Scientific Data, 12(1), 506. Available online: https://www.nature.com/articles/s41597-025-04664-y (accessed on 3 November 2025). Publisher: Nature Publishing Group . [CrossRef]

- Armfield, D., Chen, E., Omonkulov, A., Tang, X., Lin, J., Thiessen, E., & Koedinger, K. 2025. Avalon: A Human-in-the-Loop LLM Grading System with Instructor Calibration and Student Self-assessment. In A. I. Cristea, E. Walker, Y. Lu, O. C. Santos, & S. Isotani (Eds.), Artificial Intelligence in Education. Posters and Late Breaking Results, Workshops and Tutorials, Industry and Innovation Tracks, Practitioners, Doctoral Consortium, Blue Sky, and WideAIED (pp. 111–118). Cham: Springer Nature Switzerland. [CrossRef]

- Capretto, T., Piho, C., Kumar, R., Westfall, J., Yarkoni, T., & Martin, O. A. (2022, January). Bambi: A simple interface for fitting Bayesian linear models in Python. arXiv. Available online: http://arxiv.org/abs/2012.10754 (accessed on 26 November 2025). arXiv:2012.10754 [stat] . [CrossRef]

- Chen, J., & Mueller, J. (2024, August). Quantifying Uncertainty in Answers from any Language Model and Enhancing their Trustworthiness. In L.-W. Ku, A. Martins, & V. Srikumar (Eds.), Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers) (pp. 5186–5200). Bangkok, Thailand: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.acl-long.283/ (accessed on 3 November 2025). [CrossRef]

- Cohn, C., S, A. T., Mohammed, N., & Biswas, G. (2025, April). CoTAL: Human-in-the-Loop Prompt Engineering for Generalizable Formative Assessment Scoring. Available online: https://arxiv.org/abs/2504.02323v3 (accessed on 28 November 2025).

- DeepL. (n.d.). DeepL Translate: The world’s most accurate translator. Available online: https://www.deepl.com/translator (accessed on 3 November 2025).

- European Parliament and Council of the European Union. (2024, July). Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence and amending Regulations (EC) No 300/2008, (EU) No 167/2013, (EU) No 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144 and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Artificial Intelligence Act) (Text with EEA relevance). Official Journal of the European Union, 2024/1689. Available online: https://eur-lex.europa.eu/eli/reg/2024/1689/oj (accessed on 28 August 2025).

- Flodén, J. 2025. Grading exams using large language models: A comparison between human and AI grading of exams in higher education using ChatGPT. British Educational Research Journal 51(1), 201–224. Available online: https://onlinelibrary.wiley.com/doi/abs/10.1002/berj.4069 (accessed on 21 August 2025). [CrossRef]

- Fröhling, L., Demartini, G., & Assenmacher, D. (2025, August). Personas with Attitudes: Controlling LLMs for Diverse Data Annotation. In A. Calabrese, C. de Kock, D. Nozza, F. M. Plaza-del Arco, Z. Talat, & F. Vargas (Eds.), Proceedings of the 9th Workshop on Online Abuse and Harms (WOAH) (pp. 468–481). Vienna, Austria: Association for Computational Linguistics. Available online: https://aclanthology.org/2025.woah-1.43/ (accessed on 3 November 2025).

- Grévisse, C. (2024, September). LLM-based automatic short answer grading in undergraduate medical education. BMC Medical Education 24(1), 1060. Available online: (accessed on 21 August 2025). [CrossRef]

- Hastie, T., Tibshirani, R., & Friedman, J. 2009. Ensemble Learning. In T. Hastie, R. Tibshirani, & J. Friedman (Eds.), The Elements of Statistical Learning: Data Mining, Inference, and Prediction (pp. 605–624). New York, NY: Springer. Available online: (accessed on 28 November 2025). [CrossRef]

- Holtzman, A., Buys, J., Du, L., Forbes, M., & Choi, Y. (2019, September). The Curious Case of Neural Text Degeneration. Available online: https://openreview.net/forum?id=rygGQyrFvH (accessed on 3 November 2025).

- Horton, P., Florea, A., & Stringfield, B. (2025, December). Conformal validation: A deferral policy using uncertainty quantification with a human-in-the-loop for model validation. Machine Learning with Applications, 22, 100733. Available online: https://www.sciencedirect.com/science/article/pii/S2666827025001161 (accessed on 3 November 2025). [CrossRef]

- Hossain, S. (2019, June). Visualization of Bioinformatics Data with Dash Bio. SciPy 2019. Available online: https://proceedings.scipy.org/articles/Majora-7ddc1dd1-012 (accessed on 3 November 2025). [CrossRef]

- Ishida, T., Liu, T., Wang, H., & Cheung, W. K. (2024, May). Large Language Models as Partners in Student Essay Evaluation. arXiv. Available online: http://arxiv.org/abs/2405.18632 (accessed on 22 November 2024). arXiv:2405.18632. [CrossRef]

- Johnson, M., & Zhang, M. (2024, December). Examining the responsible use of zero-shot AI approaches to scoring essays. Scientific Reports 14(1), 30064. Available online: https://www.nature.com/articles/s41598-024-79208-2 (accessed on 28 November 2025). Publisher: Nature Publishing Group . [CrossRef]

- Kortemeyer, G., & Nöhl, J. (2025, April). Assessing confidence in AI-assisted grading of physics exams through psychometrics: An exploratory study. Physical Review Physics Education Research, 21(1), 010136. Available online: https://link.aps.org/doi/10.1103/PhysRevPhysEducRes.21.010136 (accessed on 3 November 2025). Publisher: American Physical Society . [CrossRef]

- Korthals, L., Rosenbusch, H., Grasman, R., & Visser, I. (2025). Grading University Students with LLMs: Performance and Acceptance of a Canvas-Based Automation. In A. I. Cristea, E. Walker, Y. Lu, O. C. Santos, & S. Isotani (Eds.), Artificial Intelligence in Education. Posters and Late Breaking Results, Workshops and Tutorials, Industry and Innovation Tracks, Practitioners, Doctoral Consortium, Blue Sky, and WideAIED (pp. 36–43). Springer Nature Switzerland. [CrossRef]

- Lu, Y., Bartolo, M., Moore, A., Riedel, S., & Stenetorp, P. (2022, May). Fantastically Ordered Prompts and Where to Find Them: Overcoming Few-Shot Prompt Order Sensitivity. In S. Muresan, P. Nakov, & A. Villavicencio (Eds.), Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers) (pp. 8086–8098). Dublin, Ireland: Association for Computational Linguistics. Available online: https://aclanthology.org/2022.acl-long.556/ (accessed on 3 November 2025). [CrossRef]

- Microsoft. (n.d.). Azure Machine Learning - ML as a Service | Microsoft Azure. Available online: https://azure.microsoft.com/en-us/products/machine-learning (accessed on 3 November 2025).

- OpenAI. (n.d.-a). Batch API - OpenAI API. Available online: https://platform.openai.com (accessed on 3 November 2025).

- OpenAI. (n.d.-b). GPT-4.1 nano. Available online: https://platform.openai.com/docs/models/gpt-4.1-nano (accessed on 3 November 2025).

- OpenAI. (n.d.-c). GPT-5 nano. Available online: https://platform.openai.com/docs/models/gpt-5-nano (accessed on 3 November 2025).

- OpenAI. (n.d.-d). gpt-oss-20b. Available online: https://platform.openai.com/docs/models/gpt-oss-20b (accessed on 3 November 2025).

- OpenAI. (n.d.-e). How should I set the temperature parameter? Available online: https://platform.openai.com/docs/faq/how-should-i-set-the-temperature-parameter (accessed on 3 November 2025).

- OpenAI. 2025. Using GPT-5. Available online: https://platform.openai.com (accessed on 3 November 2025).

- OpenAI, Hurst, A., Lerer, A., Goucher, A. P., Perelman, A., Ramesh, A., et al. (2024, October). GPT-4o System Card. arXiv. Available online: http://arxiv.org/abs/2410.21276 (accessed on 19 February 2025). [CrossRef]

- Peeperkorn, M., Kouwenhoven, T., Brown, D., & Jordanous, A. (2024, May). Is Temperature the Creativity Parameter of Large Language Models? arXiv. Available online: http://arxiv.org/abs/2405.00492 (accessed on 3 November 2025). arXiv:2405.00492 [cs] . [CrossRef]

- Polat, M. 2020. Analysis of Multiple-Choice versus Open-Ended Questions in Language Tests According to Different Cognitive Domain Levels. Novitas-ROYAL (Research on Youth and Language), 14(2), 76–96. Available online: https://eric.ed.gov/?id=EJ1272114 (accessed on 3 November 2025). Publisher: Children’s Research Center-Turkey ERIC Number: EJ1272114.

- Schneider, J., Schenk, B., & Niklaus, C. (2024, July). Towards LLM-based Autograding for Short Textual Answers. arXiv. Available online: http://arxiv.org/abs/2309.11508 (accessed on 3 November 2025). arXiv:2309.11508 [cs] . [CrossRef]

- Strong, J., Men, Q., & Noble, A. (2025, February). Trustworthy and Practical AI for Healthcare: A Guided Deferral System with Large Language Models. arXiv. Available online: http://arxiv.org/abs/2406.07212 (accessed on 3 November 2025). arXiv:2406.07212 [cs] . [CrossRef]

- R Core Team. 2022. R: A Language and Environment for Statistical Computing. Vienna, Austria: R Foundation for Statistical Computing. Available online: https://www.R-project.org/ (accessed on).

- Tekin, S. F., Ilhan, F., Huang, T., Hu, S., & Liu, L. (2024, November). LLM-TOPLA: Efficient LLM Ensemble by Maximising Diversity. In Y. Al-Onaizan, M. Bansal, & Y.-N. Chen (Eds.), Findings of the Association for Computational Linguistics: EMNLP 2024 (pp. 11951–11966). Miami, Florida, USA: Association for Computational Linguistics. Available online: https://aclanthology.org/2024.findings-emnlp.698/ (accessed on 3 November 2025). [CrossRef]

- Van Rossum, G., & Drake Jr, F. L. 1995. Python reference manual. Centrum voor Wiskunde en Informatica Amsterdam.

- Wang, J., Wang, J., Athiwaratkun, B., Zhang, C., & Zou, J. (2024, June). Mixture-of-Agents Enhances Large Language Model Capabilities. arXiv. Available online: http://arxiv.org/abs/2406.04692 (accessed on 3 November 2025). arXiv:2406.04692 [cs] . [CrossRef]

- Wang, Q., Pan, S., Linzen, T., & Black, E. (2025, November). Multilingual Prompting for Improving LLM Generation Diversity. In C. Christodoulopoulos, T. Chakraborty, C. Rose, & V. Peng (Eds.), Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing (pp. 6378–6400). Suzhou, China: Association for Computational Linguistics. Available online: https://aclanthology.org/2025.emnlp-main.324/ (accessed on 3 November 2025).

- Wang, X., Wei, J., Schuurmans, D., Le, Q., Chi, E., Narang, S., Chowdhery, A., & Zhou, D. (2023, March). Self-Consistency Improves Chain of Thought Reasoning in Language Models. arXiv. Available online: http://arxiv.org/abs/2203.11171 (accessed on 5 September 2025). arXiv:2203.11171 [cs] . [CrossRef]

- Wang, Y., Huang, J., Du, L., Guo, Y., Liu, Y., & Wang, R. (2025, December). Evaluating large language models as raters in large-scale writing assessments: A psychometric framework for reliability and validity. Computers and Education: Artificial Intelligence, 9, 100481. Available online: https://www.sciencedirect.com/science/article/pii/S2666920X25001213 (accessed on 28 November 2025). [CrossRef]

- Westfall, J. (2017, February). Statistical details of the default priors in the Bambi library. arXiv. Available online: http://arxiv.org/abs/1702.01201 (accessed on 3 November 2025). arXiv:1702.01201 [stat] . [CrossRef]

- Yang, H., Li, M., Zhou, H., Xiao, Y., Fang, Q., Zhou, S., & Zhang, R. (2025, July). Large Language Model Synergy for Ensemble Learning in Medical Question Answering: Design and Evaluation Study. Journal of Medical Internet Research, 27, e70080. Available online: https://pmc.ncbi.nlm.nih.gov/articles/PMC12337233/ (accessed on 3 November 2025). [CrossRef]

- Yavuz, F., Çelik, O., & Yavaş Çelik, G. 2025. Utilizing large language models for EFL essay grading: An examination of reliability and validity in rubric-based assessments. British Journal of Educational Technology, 56(1), 150–166. Available online: https://onlinelibrary.wiley.com/doi/abs/10.1111/bjet.13494 (accessed on 3 November 2025). [CrossRef]

| 1 | Graders 1, 2, and 4 graded all assignments, while grader 3 only graded the first and last assignments. |

| 2 | Assignment 4 originally had 10 questions, but we removed two because they required graders to run a program for counting lines or opening a link to evaluate a Dash app, for which our LLM grading setup was not suited. |

| LLM | temperature | top_p | text verbosity | shuffled rubrics | varied personas | varied languages | n conditions |

|---|---|---|---|---|---|---|---|

| Prompting conditions | |||||||

| gpt-4.1-nano | 0 / 1 | 0.1 / 1 | - | no / yes | no / yes | no / yes | 24 |

| gpt-5-nano | - | - | low / medium | no / yes | no / yes | no / yes | 16 |

| gpt-oss-20b | - | - | medium | no / yes | no / yes | no / yes | 8 |

| Post-hoc conditions | |||||||

| ensemble | 1 (gpt-4.1-nano) | 1 (gpt-4.1-nano) | medium (gpt-5-nano & gpt-oss-20b) | no / yes | no / yes | no / yes | 8 |

| student | question | condition | procedure | correct | error |

|---|---|---|---|---|---|

| 1 | #R23 | 1 | SP | 0 | -0.5 |

| 1 | #R23 | 1 | MV | 0 | 0.25 |

| 1 | #R23 | 1 | SURE | 1 | 0 |

| 2000 | #R23 | 1 | MV | 0 | 0.25 |

| 2000 | #R23 | 1 | SURE | 1 | 0 |

| condition | llm | temp | topp | verb | shuf | pers | lang |

|---|---|---|---|---|---|---|---|

| 1 | gpt-4.1-nano | 0 | 1 | 0 | 0 | 0 | 0 |

| 2 | gpt-4.1-nano | 1 | 1 | 0 | 0 | 0 | 0 |

| 3 | gpt-4.1-nano | 1 | 1 | 0 | 1 | 0 | 0 |

| 2000 | ensemble | 1 | 1 | 1 | 0 | 0 | 0 |

| student | question | grader | correct | error |

|---|---|---|---|---|

| 1 | #R23 | grader-1 | 0 | 0.25 |

| 1 | #R23 | grader-2 | 0 | -0.5 |

| 1 | #R23 | grader-3 | 1 | 0 |

| 1 | #R23 | grader-4 | 1 | 0 |

| 1 | #R23 | gpt-4.1-nano | 0 | -0.75 |

| 1 | #R23 | gpt-5-nano | 0 | 0.25 |

| 1 | #R23 | gpt-oss-20b | 1 | 0 |

| 1 | #R23 | ensemble | 1 | 0 |

| Coefficient | Mean | 2.5% HDI | 97.5% HDI |

|---|---|---|---|

| HDI excludes zero | |||

| Intercept | 1.601 | 1.199 | 1.980 |

| procedure[single-prompt] | -0.230 | -0.300 | -0.161 |

| procedure[SURE] | 1.311 | 1.219 | 1.400 |

| llm[gpt-5-nano] | 1.437 | 1.319 | 1.557 |

| llm[gpt-oss-20b] | 1.958 | 1.821 | 2.100 |

| llm[ensemble] | 1.989 | 1.830 | 2.147 |

| topp(llm=gpt-4.1-nano; temp=1) | 0.176 | 0.075 | 0.275 |

| languages | -0.080 | -0.167 | -0.002 |

| procedure[SURE] : llm[ensemble] | -0.742 | -0.880 | -0.601 |

| procedure[SURE] : llm[gpt-5-nano] | -0.632 | -0.751 | -0.513 |

| procedure[SURE] : llm[gpt-oss-20b] | -0.686 | -0.831 | -0.542 |

| topp(llm=gpt-4.1-nano; temp=1) : procedure[single-prompt] | -0.158 | -0.233 | -0.082 |

| languages : procedure[single-prompt] | -0.527 | -0.580 | -0.475 |

| languages : llm[gpt-5-nano] | 0.295 | 0.192 | 0.397 |

| languages : llm[gpt-oss-20b] | 0.283 | 0.160 | 0.397 |

| languages : shuffle_rubrics | -0.082 | -0.140 | -0.024 |

| languages : topp(llm=gpt-4.1-nano; temp=1) | -0.169 | -0.260 | -0.073 |

| 1\|student_sigma | 0.355 | 0.282 | 0.435 |

| 1\|question_sigma | 1.253 | 1.004 | 1.531 |

| HDI includes zero | |||

| temp(llm=gpt-4.1-nano) | -0.002 | -0.100 | 0.099 |

| verb(llm=gpt-5-nano) | 0.067 | -0.076 | 0.195 |

| shuffle_rubrics | 0.067 | -0.013 | 0.152 |

| personalities | -0.003 | -0.086 | 0.077 |

| procedure[single-prompt] : llm[ensemble-3.5] | -0.018 | -1.987 | 1.846 |

| procedure[single-prompt] : llm[gpt-5-nano] | -0.098 | -0.189 | 0.004 |

| procedure[single-prompt] : llm[gpt-oss-20b] | 0.111 | -0.002 | 0.230 |

| temp(llm=gpt-4.1-nano) : procedure[single-prompt] | -0.003 | -0.072 | 0.075 |

| temp(llm=gpt-4.1-nano) : procedure[SURE] | 0.010 | -0.078 | 0.113 |

| temp(llm=gpt-4.1-nano) : shuffle_rubrics | -0.034 | -0.125 | 0.060 |

| temp(llm=gpt-4.1-nano) : personalities | 0.011 | -0.085 | 0.101 |

| temp(llm=gpt-4.1-nano) : languages | 0.021 | -0.076 | 0.111 |

| topp(llm=gpt-4.1-nano; temp=1) : procedure[SURE] | 0.035 | -0.065 | 0.130 |

| topp(llm=gpt-4.1-nano; temp=1) : shuffle_rubrics | -0.030 | -0.125 | 0.063 |

| topp(llm=gpt-4.1-nano; temp=1) : personalities | 0.000 | -0.099 | 0.088 |

| verb(llm=gpt-5-nano) : procedure[single-prompt] | -0.048 | -0.161 | 0.070 |

| verb(llm=gpt-5-nano) : procedure[SURE] | -0.035 | -0.175 | 0.112 |

| verb(llm=gpt-5-nano) : shuffle_rubrics | -0.081 | -0.196 | 0.037 |

| verb(llm=gpt-5-nano) : personalities | -0.062 | -0.173 | 0.062 |

| verb(llm=gpt-5-nano) : languages | 0.047 | -0.065 | 0.164 |

| shuffle_rubrics : procedure[SURE] | 0.032 | -0.032 | 0.099 |

| shuffle_rubrics : procedure[single-prompt] | -0.014 | -0.065 | 0.036 |

| shuffle_rubrics : llm[ensemble-3.5] | -0.127 | -0.269 | 0.022 |

| shuffle_rubrics : llm[gpt-5-nano] | 0.004 | -0.099 | 0.121 |

| shuffle_rubrics : llm[gpt-oss-20b] | -0.030 | -0.153 | 0.092 |

| shuffle_rubrics : personalities | -0.007 | -0.062 | 0.051 |

| personalities : procedure[SURE] | -0.009 | -0.077 | 0.053 |

| personalities : procedure[single-prompt] | 0.002 | -0.053 | 0.053 |

| personalities : llm[ensemble-3.5] | 0.123 | -0.023 | 0.260 |

| personalities : llm[gpt-5-nano] | 0.015 | -0.093 | 0.121 |

| personalities : llm[gpt-oss-20b] | 0.064 | -0.059 | 0.181 |

| personalities : languages | -0.023 | -0.080 | 0.037 |

| languages : procedure[SURE] | -0.036 | -0.106 | 0.022 |

| languages : llm[ensemble-3.5] | 0.101 | -0.046 | 0.240 |

| 1\|condition_sigma | 0.027 | 0.000 | 0.052 |

| LLM | Grading Procedure | % in Target Range | Maximum Grade Deviation | Median Grade Deviation |
|---|---|---|---|---|
| Assignment 1 | | | | |
| gpt-4.1-nano | SP | 6.522 | 1.9 | 0.85 |
| gpt-4.1-nano | MV | 8.696 | 1.2 | 0.5 |
| gpt-4.1-nano | SURE | 60.870 | 0.4 | 0.1 |
| gpt-5-nano | SP | 19.565 | 1.1 | 0.40 |
| gpt-5-nano | MV | 47.826 | 0.7 | 0.1 |
| gpt-5-nano | SURE | 60.870 | 0.5 | 0.1 |
| gpt-oss-20b | SP | 54.348 | 0.7 | 0.1 |
| gpt-oss-20b | MV | 52.174 | 0.6 | 0.1 |
| gpt-oss-20b | SURE | 73.913 | 0.3 | 0 |
| ensemble | MV | 45.652 | 0.7 | 0.1 |
| ensemble | SURE | 73.913 | 0.4 | 0 |

| Regrading (min) and time savings (%) | |||||

|---|---|---|---|---|---|

| Grader | Manual (min) | gpt-4.1-nano | gpt-5-nano | gpt-oss-20b | Ensemble |

| Assignment 1 | |||||

| Grader 1 | 186 | 85 (54%) | 22 (88%) | 19 (90%) | 22 (88%) |

| Grader 2 | 195 | 95 (51%) | 25 (87%) | 24 (88%) | 26 (87%) |

| Grader 3 | 399 | 203 (49%) | 56 (87%) | 57 (86%) | 68 (83%) |

| Grader 4 | 238 | 115 (52%) | 30 (87%) | 33 (86%) | 38 (84%) |
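The percentages in parentheses appear to be the share of manual grading time that is saved when only flagged answers are regraded. The sketch below assumes this formula (it is inferred from the table, not stated in it) and applies it to the Grader 1 row; the function name is hypothetical. Because the tabulated minutes are themselves rounded, a recomputed percentage can differ from a tabulated one by a point in some rows.

```python
def time_savings_pct(manual_min, regrade_min):
    """Percentage of manual grading time saved, rounded to a whole percent."""
    return round(100 * (1 - regrade_min / manual_min))


# Grader 1, Assignment 1: 186 minutes of manual grading.
manual = 186
regrade = {"gpt-4.1-nano": 85, "gpt-5-nano": 22, "gpt-oss-20b": 19, "ensemble": 22}
savings = {llm: time_savings_pct(manual, minutes) for llm, minutes in regrade.items()}
print(savings)
```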

| LLM | Grading Procedure | % in Target Range | Maximum Grade Deviation | Median Grade Deviation |
|---|---|---|---|---|
| Assignment 2 | | | | |
| gpt-4.1-nano | SP | 4.444 | 2.8 | 1.1 |
| gpt-4.1-nano | MV | 8.889 | 2.8 | 1.0 |
| gpt-4.1-nano | SURE | 42.222 | 1.2 | 0.2 |
| gpt-5-nano | SP | 33.333 | 1.8 | 0.3 |
| gpt-5-nano | MV | 46.667 | 1.7 | 0.3 |
| gpt-5-nano | SURE | 73.333 | 1.2 | 0.1 |
| gpt-oss-20b | SP | 55.556 | 1.1 | 0.2 |
| gpt-oss-20b | MV | 57.778 | 1.2 | 0.2 |
| gpt-oss-20b | SURE | 75.556 | 1.0 | 0.1 |
| ensemble | MV | 55.556 | 1.4 | 0.2 |
| ensemble | SURE | 91.111 | 0.4 | 0.1 |
| Assignment 3 | | | | |
| gpt-4.1-nano | SP | 4.348 | 2.8 | 0.85 |
| gpt-4.1-nano | MV | 15.217 | 2.4 | 0.7 |
| gpt-4.1-nano | SURE | 71.739 | 0.6 | 0.1 |
| gpt-5-nano | SP | 8.696 | 2.7 | 0.8 |
| gpt-5-nano | MV | 13.043 | 1.8 | 0.4 |
| gpt-5-nano | SURE | 50 | 1.2 | 0.2 |
| gpt-oss-20b | SP | 26.087 | 1.5 | 0.3 |
| gpt-oss-20b | MV | 32.609 | 1.7 | 0.3 |
| gpt-oss-20b | SURE | 52.174 | 1.4 | 0.1 |
| ensemble | MV | 26.087 | 1.8 | 0.3 |
| ensemble | SURE | 89.13 | 0.3 | 0.1 |
| Assignment 4 | | | | |
| gpt-4.1-nano | SP | 4.348 | 3.1 | 1.55 |
| gpt-4.1-nano | MV | 2.174 | 2.5 | 1.3 |
| gpt-4.1-nano | SURE | 19.565 | 1.6 | 0.6 |
| gpt-5-nano | SP | 19.565 | 4.4 | 0.8 |
| gpt-5-nano | MV | 34.783 | 3.8 | 0.3 |
| gpt-5-nano | SURE | 73.913 | 1.9 | 0.0 |
| gpt-oss-20b | SP | 54.348 | 3.5 | 0.3 |
| gpt-oss-20b | MV | 47.826 | 3.2 | 0.3 |
| gpt-oss-20b | SURE | 60.87 | 1.3 | 0.0 |
| ensemble | MV | 54.348 | 3.2 | 0.3 |
| ensemble | SURE | 91.304 | 0.4 | 0.0 |
| Assignment 5 | | | | |
| gpt-4.1-nano | SP | 21.739 | 2.1 | 0.65 |
| gpt-4.1-nano | MV | 10.87 | 2.3 | 0.65 |
| gpt-4.1-nano | SURE | 54.348 | 0.9 | 0.2 |
| gpt-5-nano | SP | 32.609 | 2.6 | 0.45 |
| gpt-5-nano | MV | 47.826 | 1.2 | 0.2 |
| gpt-5-nano | SURE | 69.565 | 0.9 | 0.1 |
| gpt-oss-20b | SP | 58.696 | 1.5 | 0.2 |
| gpt-oss-20b | MV | 76.087 | 0.8 | 0.1 |
| gpt-oss-20b | SURE | 80.435 | 0.4 | 0.0 |
| ensemble | MV | 47.826 | 0.9 | 0.2 |
| ensemble | SURE | 84.783 | 0.4 | 0.0 |

| Regrading (min) and time savings (%) | |||||

|---|---|---|---|---|---|

| Grader | Manual (min) | gpt-4.1-nano | gpt-5-nano | gpt-oss-20b | Ensemble |

| Assignment 2 | |||||

| Grader 1 | 137 | 59 (57%) | 34 (75%) | 22 (84%) | 62 (55%) |

| Grader 2 | 224 | 102 (54%) | 50 (78%) | 30 (87%) | 95 (58%) |

| Grader 4 | 194 | 93 (52%) | 39 (80%) | 27 (86%) | 82 (58%) |

| Assignment 3 | |||||

| Grader 1 | 323 | 168 (48%) | 70 (78%) | 51 (84%) | 163 (50%) |

| Grader 2 | 380 | 214 (44%) | 91 (76%) | 67 (82%) | 201 (47%) |

| Grader 4 | 253 | 147 (42%) | 67 (74%) | 49 (81%) | 142 (44%) |

| Assignment 4 | |||||

| Grader 1 | 125 | 57 (54%) | 66 (47%) | 27 (78%) | 89 (29%) |

| Grader 2 | 145 | 65 (55%) | 87 (40%) | 30 (79%) | 107 (26%) |

| Grader 4 | 94 | 39 (59%) | 50 (47%) | 21 (78%) | 69 (27%) |

| Assignment 5 | |||||

| Grader 1 | 162 | 91 (44%) | 44 (73%) | 31 (81%) | 89 (45%) |

| Grader 2 | 185 | 96 (48%) | 58 (69%) | 37 (80%) | 99 (46%) |

| Grader 3 | 294 | 160 (46%) | 89 (70%) | 51 (83%) | 161 (45%) |

| Grader 4 | 192 | 99 (48%) | 55 (71%) | 36 (81%) | 105 (45%) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).