Submitted: 03 November 2025

Posted: 03 November 2025


Abstract

Keywords:

1. Introduction

1.1. Contributions (Scope, Novelty, and Validation Context)

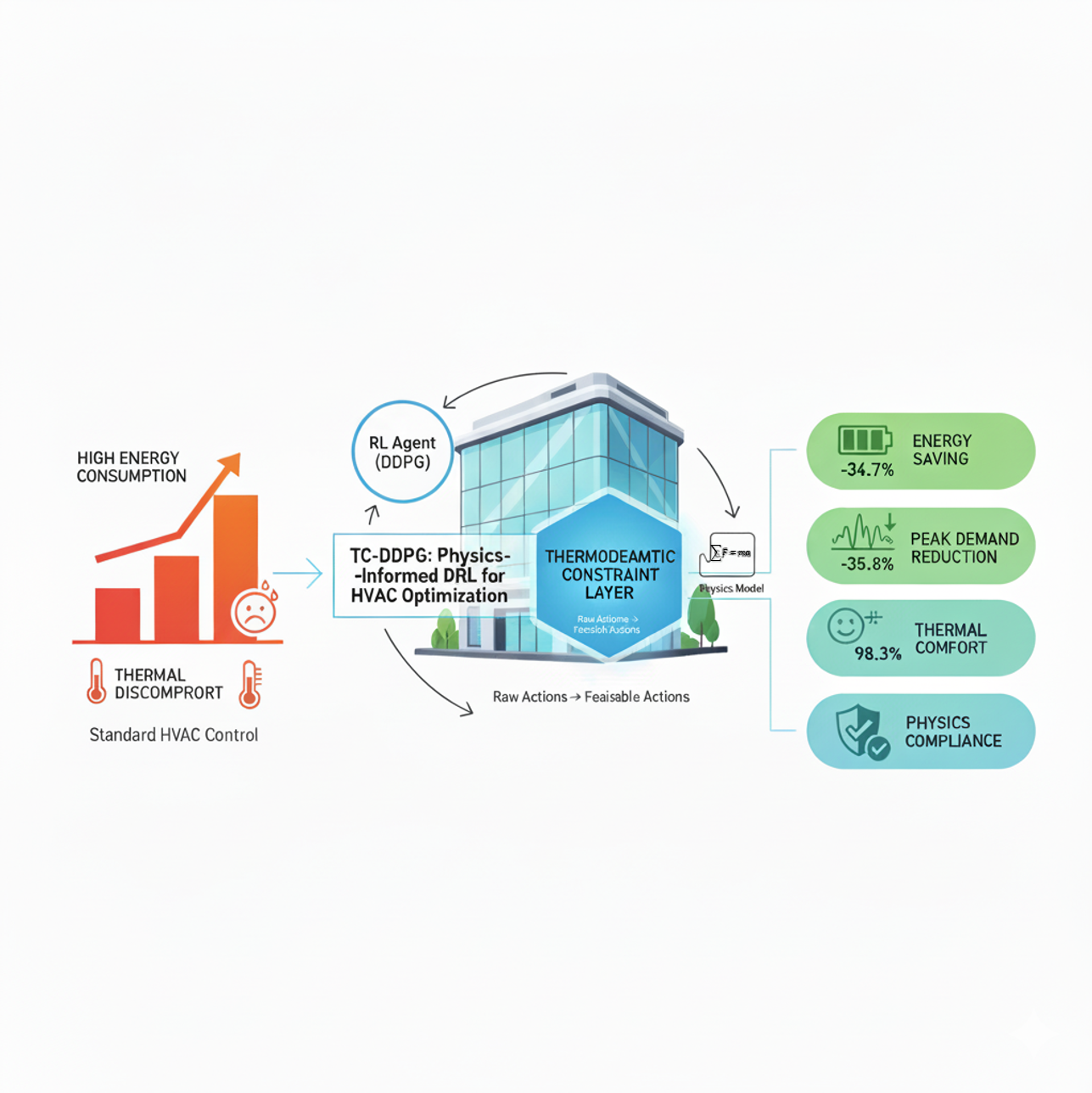

- Physics-informed continuous control: A TC-DDPG architecture that operates directly on continuous HVAC actions, avoiding discretization artifacts inherent to DQN-style methods.

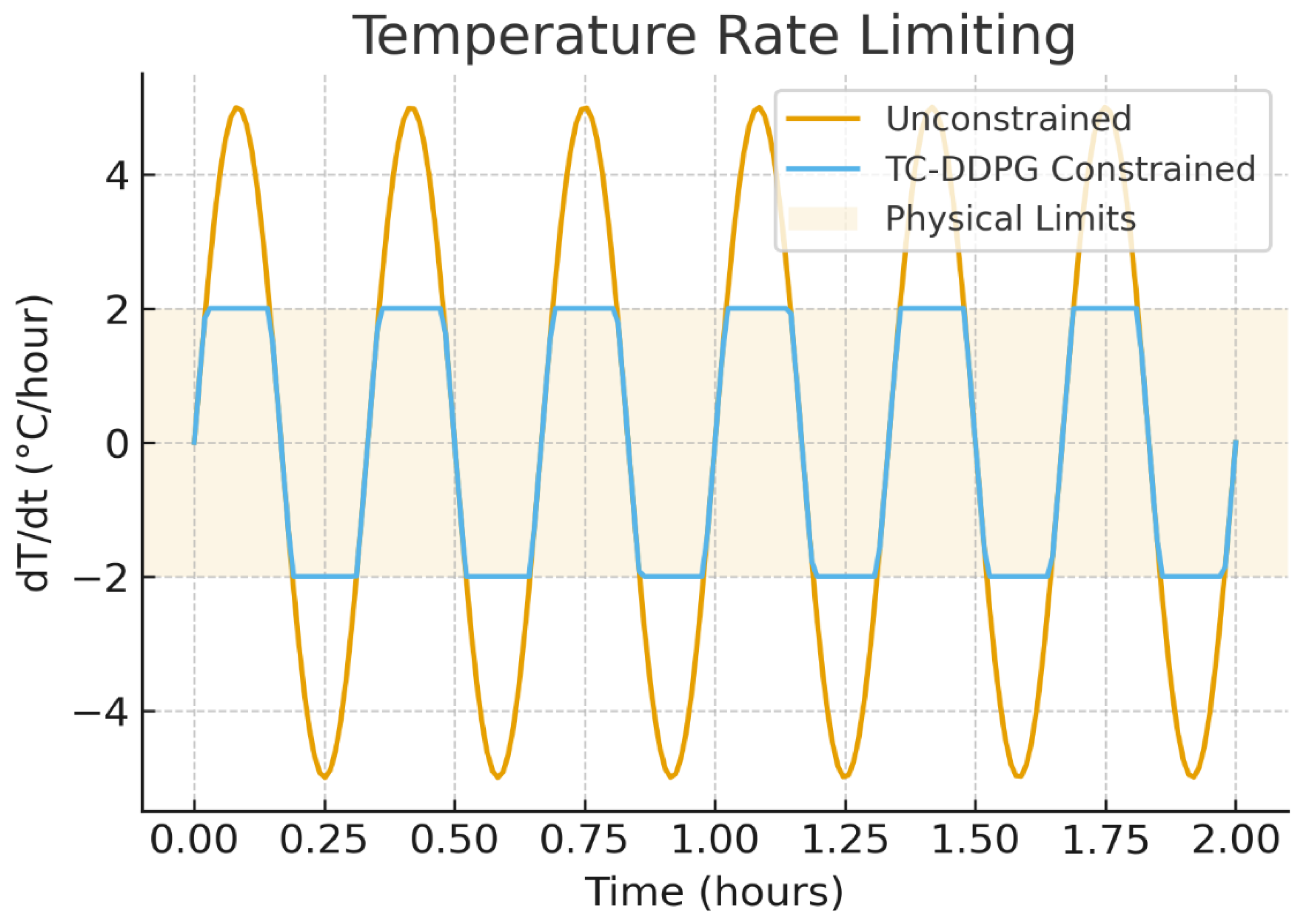

- Thermodynamic constraint layer: A differentiable projection that enforces feasibility by design within the simulator (energy balance, psychrometric bounds, capacity and rate limits), subject to model fidelity, coupled with a physics-regularized objective.

- Simulation-based performance validation: In a multi-zone RC simulator, the method reduces annual energy use by 34.7% relative to rule-based control and improves comfort (occupied-hour PMV within [−0.5, 0.5] for 98.3% of hours). Results are reported with 95% CIs over 50 seeds, together with significance testing.

- Reproducibility: Public release of code, simulator configuration, training/evaluation scripts, and hyperparameters to enable exact replication and extension.

- Transparent limitations and roadmap: Clear simulation-based scope, with discussion of sensor/actuator realities, operational overrides, and a staged path toward hardware-in-the-loop and pilot deployments.

2. Related Work

2.1. Traditional HVAC Control

2.2. Machine Learning Approaches

2.3. Physics-Informed Machine Learning

3. Mathematical Framework

3.1. System Dynamics

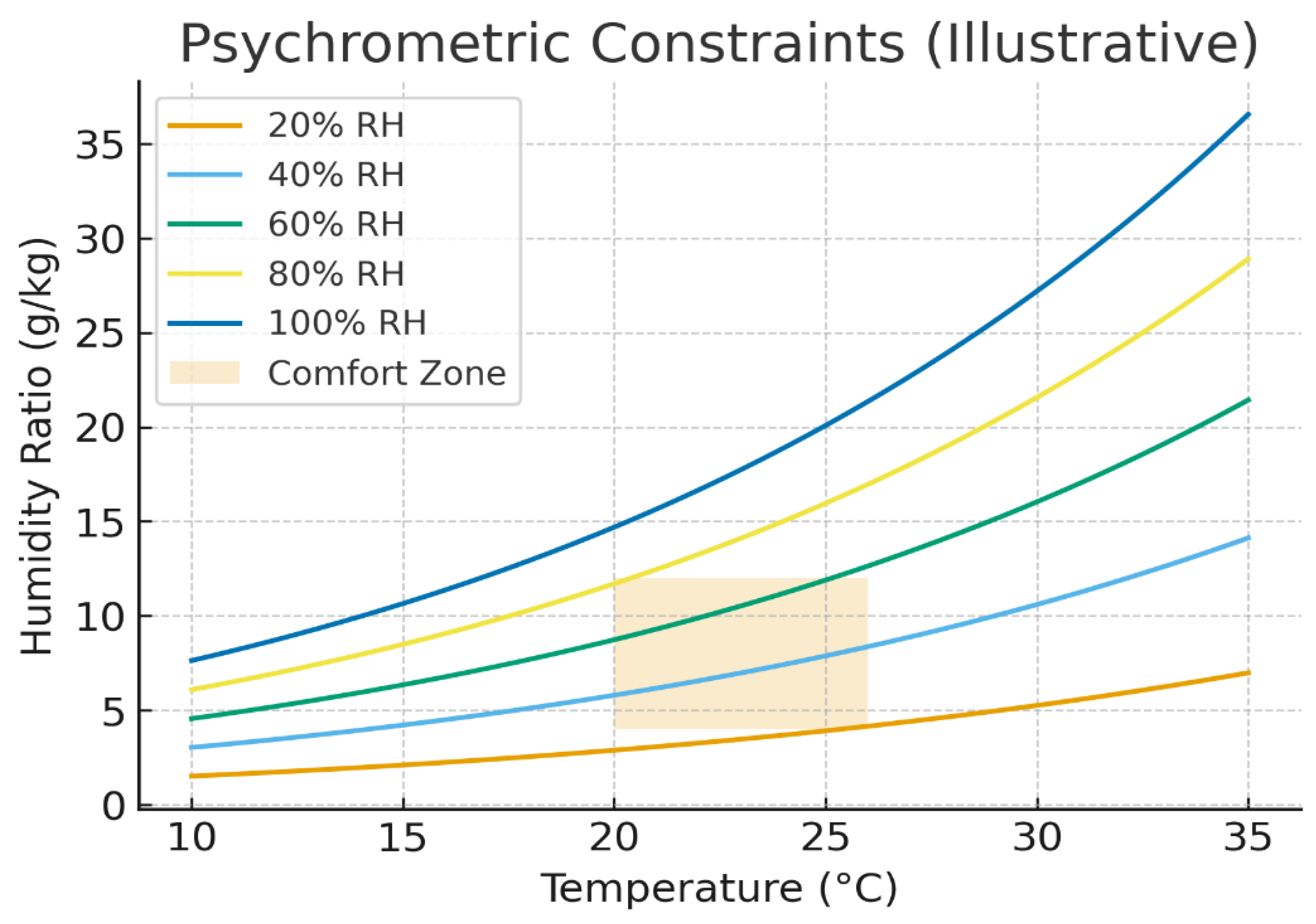

3.2. Psychrometric Constraints

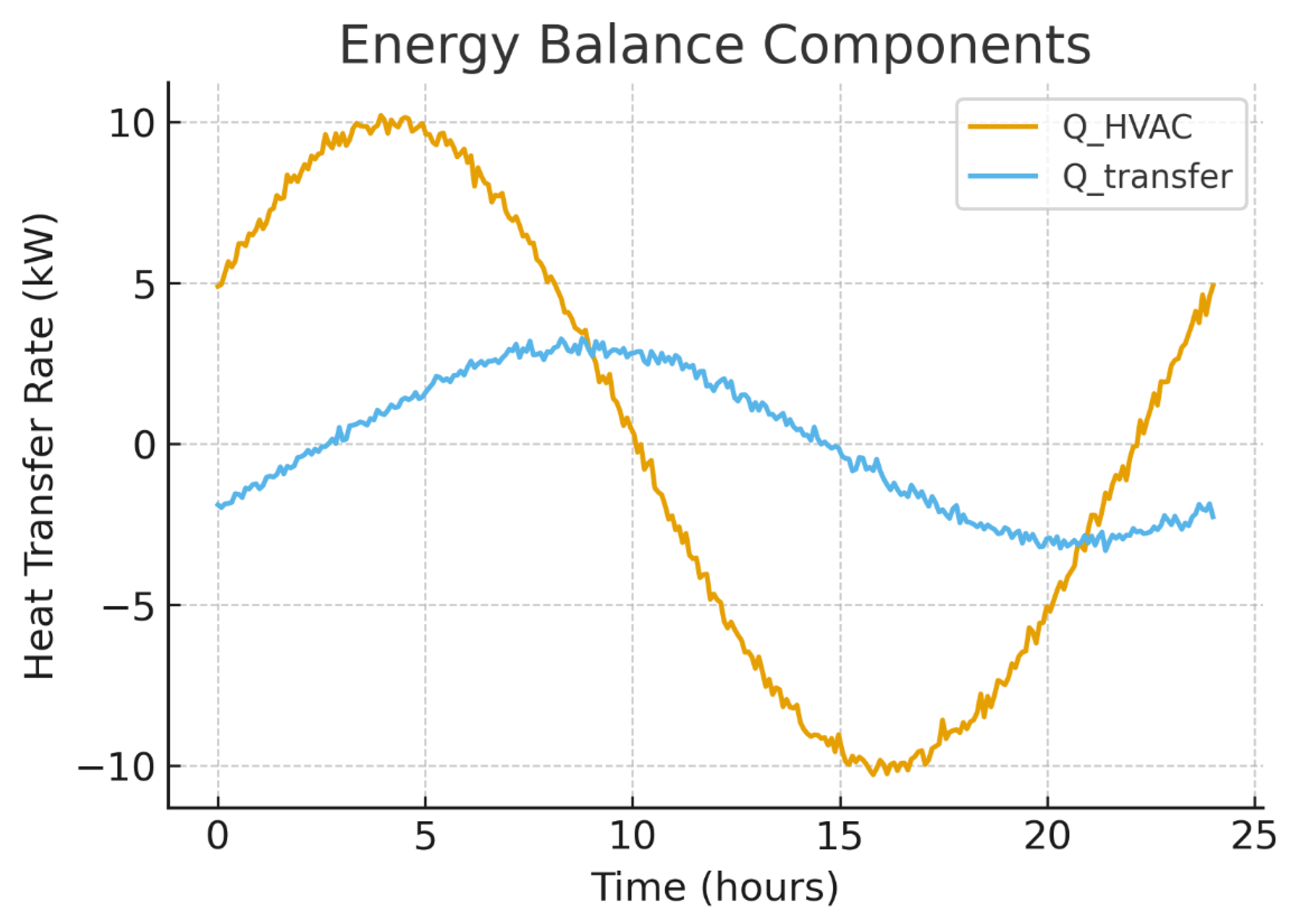

3.3. Energy Conservation

4. Physics-Informed Reinforcement Learning

4.1. State Space Definition

4.2. Action Space

4.3. Reward Function


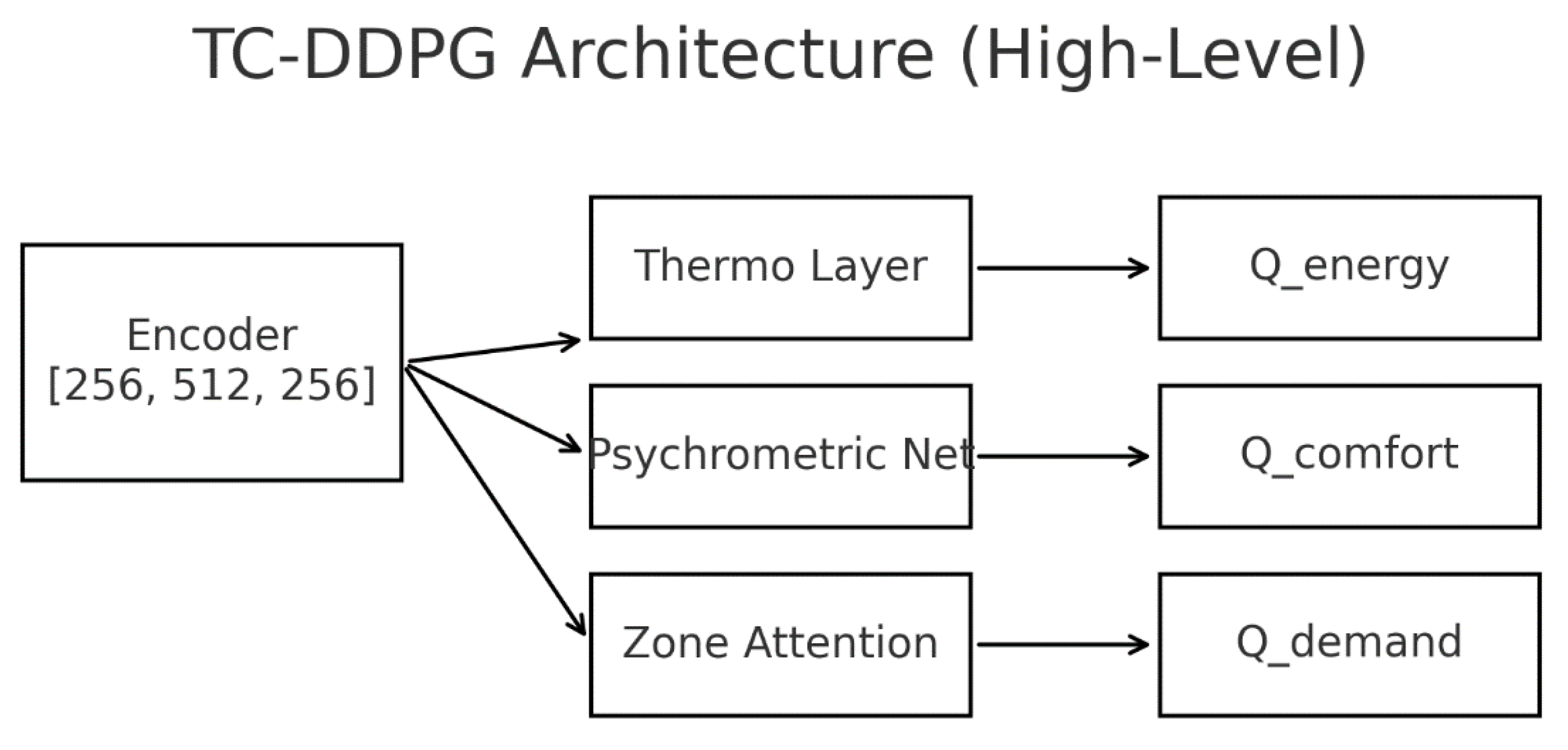

4.4. Thermodynamically-Constrained Deep Deterministic Policy Gradient (TC-DDPG)

4.4.1. Differentiable Projection

4.4.2. Actor–Critic Networks

4.4.3. Objectives and Physics Regularization

- Energy-balance term: normalized residual between required and modeled HVAC power (sensible + latent + auxiliaries).

- Psychrometric consistency term: deviation from a consistent (T, ω, ϕ) triple via saturation-based relations.

- Comfort corridor term: soft penalties for leaving the corridor |PMV| ≤ 0.5.

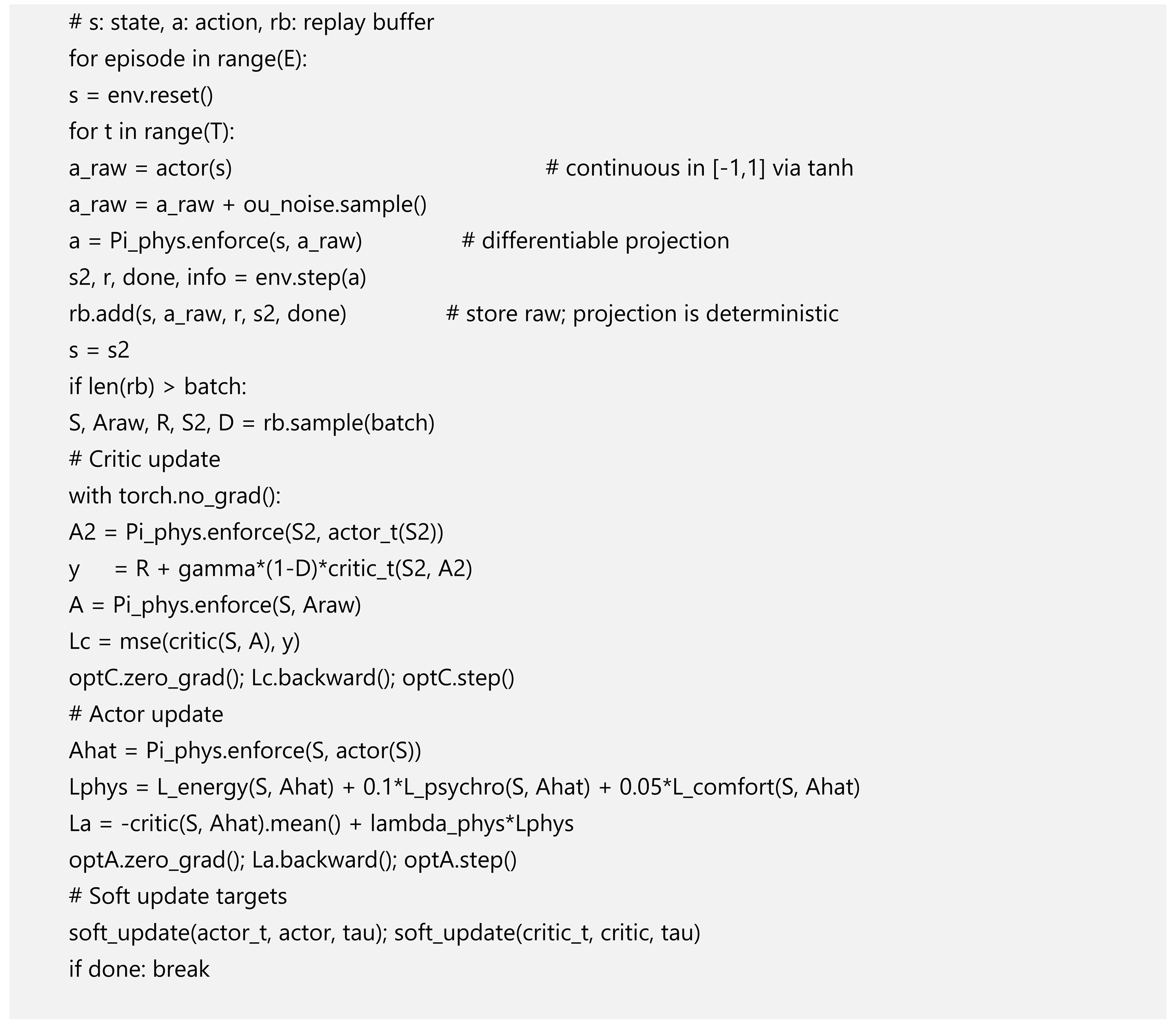
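The three regularization terms above can be sketched as scalar functions. This is a minimal illustration under stated assumptions, not the authors' implementation: the squared-residual forms, the function names (`energy_term`, `psychro_term`, `comfort_term`, `physics_loss`), and the mapping of the sub-weights 1.0, 0.1 and 0.05 from Section 5.2 onto the energy, psychrometric and comfort terms (in the order listed) are all assumptions. In a PyTorch implementation the same expressions would act on batched tensors (for example `torch.relu(pmv.abs() - 0.5) ** 2` for the corridor term) so that gradients reach the actor.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PhysicsWeights:
    """Physics-regularizer weights (values from Section 5.2).

    ASSUMPTION: the sub-weights 1.0 / 0.1 / 0.05 map to the energy,
    psychrometric and comfort terms in the order the terms are listed.
    """
    lam_phys: float = 0.10
    w_energy: float = 1.0
    w_psychro: float = 0.1
    w_comfort: float = 0.05


def energy_term(p_required: float, p_modeled: float, p_scale: float) -> float:
    """Squared normalized residual between required and modeled HVAC power."""
    return ((p_required - p_modeled) / p_scale) ** 2


def psychro_term(phi: float, phi_from_t_omega: float) -> float:
    """Squared mismatch between stated RH and the RH implied by (T, omega)."""
    return (phi - phi_from_t_omega) ** 2


def comfort_term(pmv: float, band: float = 0.5) -> float:
    """Soft corridor penalty: zero inside |PMV| <= band, quadratic outside."""
    return max(0.0, abs(pmv) - band) ** 2


def physics_loss(p_required: float, p_modeled: float, p_scale: float,
                 phi: float, phi_from_t_omega: float, pmv: float,
                 w: Optional[PhysicsWeights] = None) -> float:
    """Weighted sum of the three terms, scaled by the overall physics weight."""
    if w is None:
        w = PhysicsWeights()
    total = (w.w_energy * energy_term(p_required, p_modeled, p_scale)
             + w.w_psychro * psychro_term(phi, phi_from_t_omega)
             + w.w_comfort * comfort_term(pmv))
    return w.lam_phys * total
```

A fully consistent state inside the corridor contributes zero loss; only violations are penalized, which is what lets the regularizer coexist with the task reward.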

4.4.4. Training Procedure

4.4.5. Design Notes and Caveats

- Constraint handling is architectural (projection plus loss) rather than a hard constraint with optimal-control guarantees; results are from simulation and depend on model fidelity.

- Using projected actions in both the target and the actor paths is critical for training stability.
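The design note on projected actions can be illustrated with the critic's TD target and the actor's objective. In this sketch the projection Π is stood in for by an element-wise clamp (`clamp_project`, a hypothetical helper), and the toy actor and critic are placeholders; the point is only that both the target critic Q′ and the online critic are evaluated at projected actions, so the critic is never asked to score actions the environment would not execute.

```python
from typing import Callable, Sequence

Vector = list[float]


def clamp_project(action: Sequence[float], lo: Sequence[float],
                  hi: Sequence[float]) -> Vector:
    """Stand-in projection (ASSUMPTION): element-wise clamp onto a box."""
    return [min(max(a, l), h) for a, l, h in zip(action, lo, hi)]


def critic_target(reward: float, next_state: Vector, done: bool,
                  target_actor: Callable[[Vector], Vector],
                  target_critic: Callable[[Vector, Vector], float],
                  project: Callable[[Vector], Vector],
                  gamma: float = 0.99) -> float:
    """TD target y = r + gamma * (1 - done) * Q'(s', Pi(mu'(s')))."""
    next_action = project(target_actor(next_state))  # projected target action
    return reward + gamma * (1.0 - float(done)) * target_critic(next_state, next_action)


def actor_objective(state: Vector,
                    actor: Callable[[Vector], Vector],
                    critic: Callable[[Vector, Vector], float],
                    project: Callable[[Vector], Vector]) -> float:
    """Actor loss -Q(s, Pi(mu(s))): the critic only scores feasible actions."""
    return -critic(state, project(actor(state)))


# Toy usage: the raw actor output 5.0 lies outside the feasible box [0, 1].
lo, hi = [0.0], [1.0]
proj = lambda a: clamp_project(a, lo, hi)
toy_actor = lambda s: [5.0]
toy_critic = lambda s, a: -(a[0] ** 2)
y = critic_target(1.0, [0.0], False, toy_actor, toy_critic, proj)
```

Without the projection in the target path, the toy critic would be queried at 5.0 instead of 1.0 and the target would jump from about 0.01 to about −23.8, which is the kind of bootstrap inconsistency the design note warns about.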

4.5. Hyperparameters and Implementation Details

- Algorithm: DDPG with target networks and OU exploration.

- Actor/Critic LRs: ; Batch: 64; Buffer: .

- Discount / Soft-update: γ = 0.99, τ = 0.005.

- Exploration: OU noise σ = 0.1 (decayed).

- Runs: 50 independent seeds.

- Normalization: all inputs/outputs use fixed scalers saved with the model; reward terms normalized to stable magnitudes.

- Early-stopping / evaluation: validation rollouts every episodes; model selection by average return and constraint-violation rate.
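A discretized Ornstein–Uhlenbeck process with θ = 0.15 and σ = 0.1 (Section 5.2) might look as follows. The paper states only that σ is decayed; the multiplicative schedule and the floor `sigma_min` below are hypothetical placeholders, as is the class name `OUNoise`.

```python
import random
from typing import Optional


class OUNoise:
    """Ornstein-Uhlenbeck exploration noise, discretized with dt = 1:
    x <- x + theta * (mu - x) + sigma * N(0, 1).

    theta and sigma follow Section 5.2; decay and sigma_min are ASSUMED.
    """

    def __init__(self, dim: int, theta: float = 0.15, sigma: float = 0.1,
                 mu: float = 0.0, decay: float = 0.999, sigma_min: float = 0.01,
                 seed: Optional[int] = None) -> None:
        self.dim, self.theta, self.sigma, self.mu = dim, theta, sigma, mu
        self.decay, self.sigma_min = decay, sigma_min
        self.rng = random.Random(seed)
        self.reset()

    def reset(self) -> None:
        """Restart the process at its mean, e.g. at the start of each episode."""
        self.x = [self.mu] * self.dim

    def sample(self) -> list[float]:
        """Advance the process one step and return the new noise vector."""
        self.x = [xi + self.theta * (self.mu - xi) + self.sigma * self.rng.gauss(0.0, 1.0)
                  for xi in self.x]
        return list(self.x)

    def decay_sigma(self) -> None:
        """Shrink the noise scale (e.g. once per episode), never below sigma_min."""
        self.sigma = max(self.sigma_min, self.sigma * self.decay)
```

In a training loop, `sample()` would be added to the actor output at each step and `decay_sigma()` called once per episode. Applying the projection after adding the noise (an assumed ordering) keeps the executed action inside the feasible set even when the noise pushes it outward.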

5. Experimental Setup

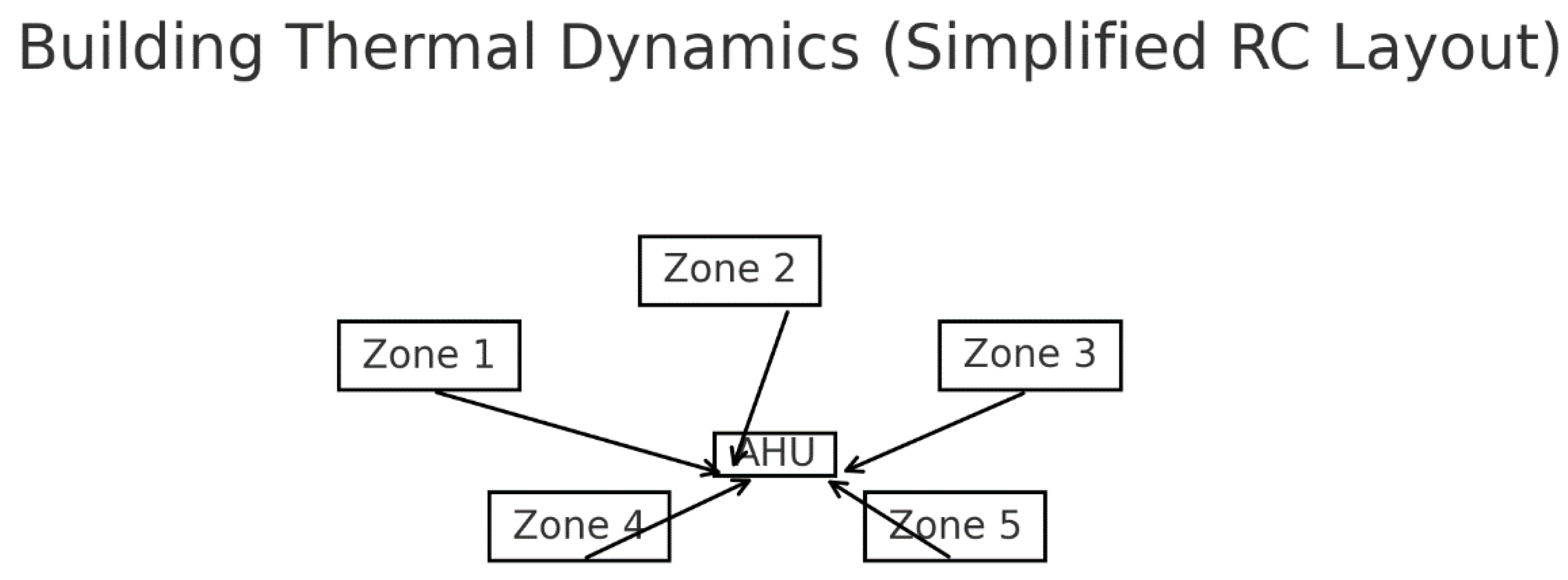

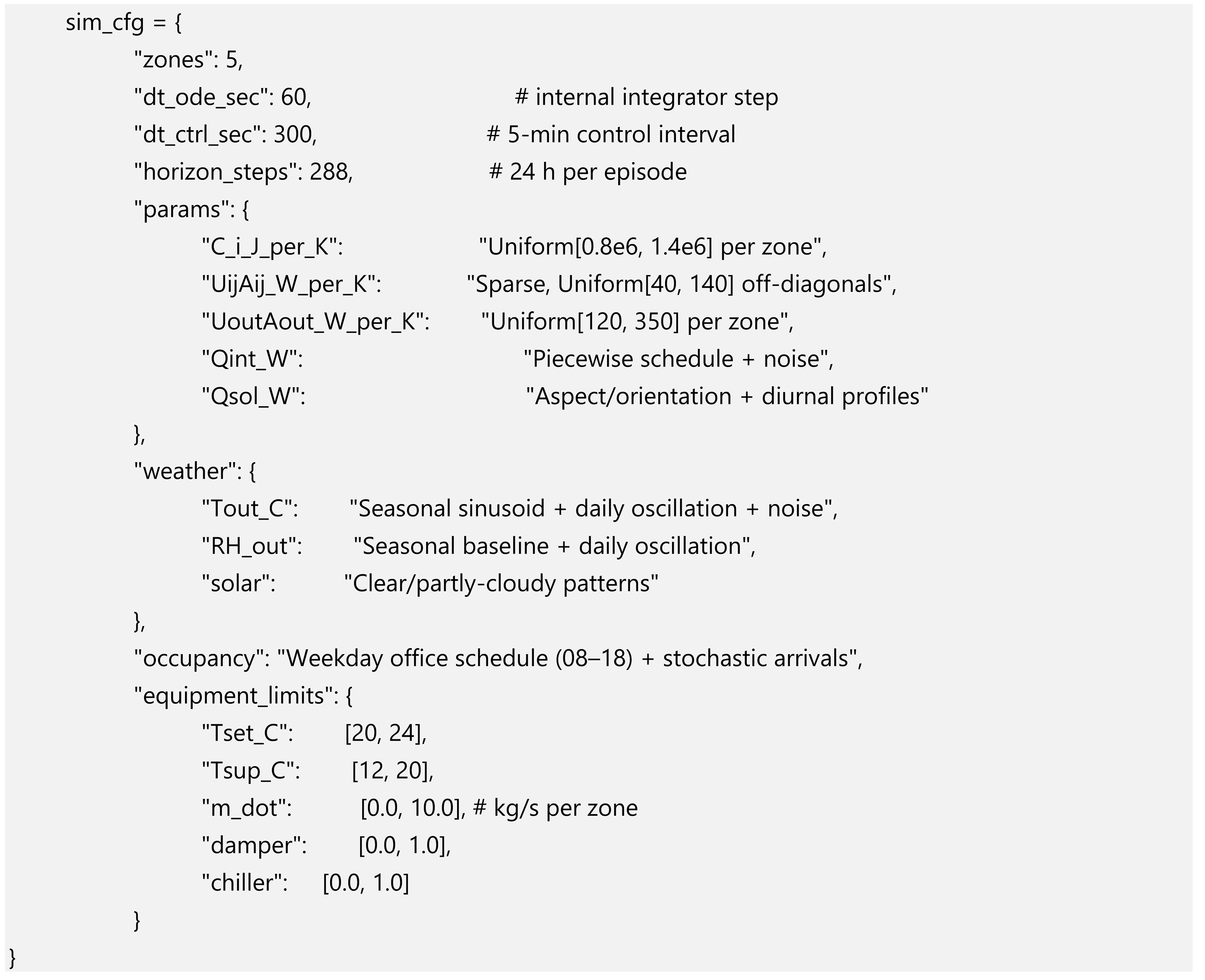

5.1. Building Simulation Environment

- Thermal capacitance : heat storage of zone air and interior surfaces.

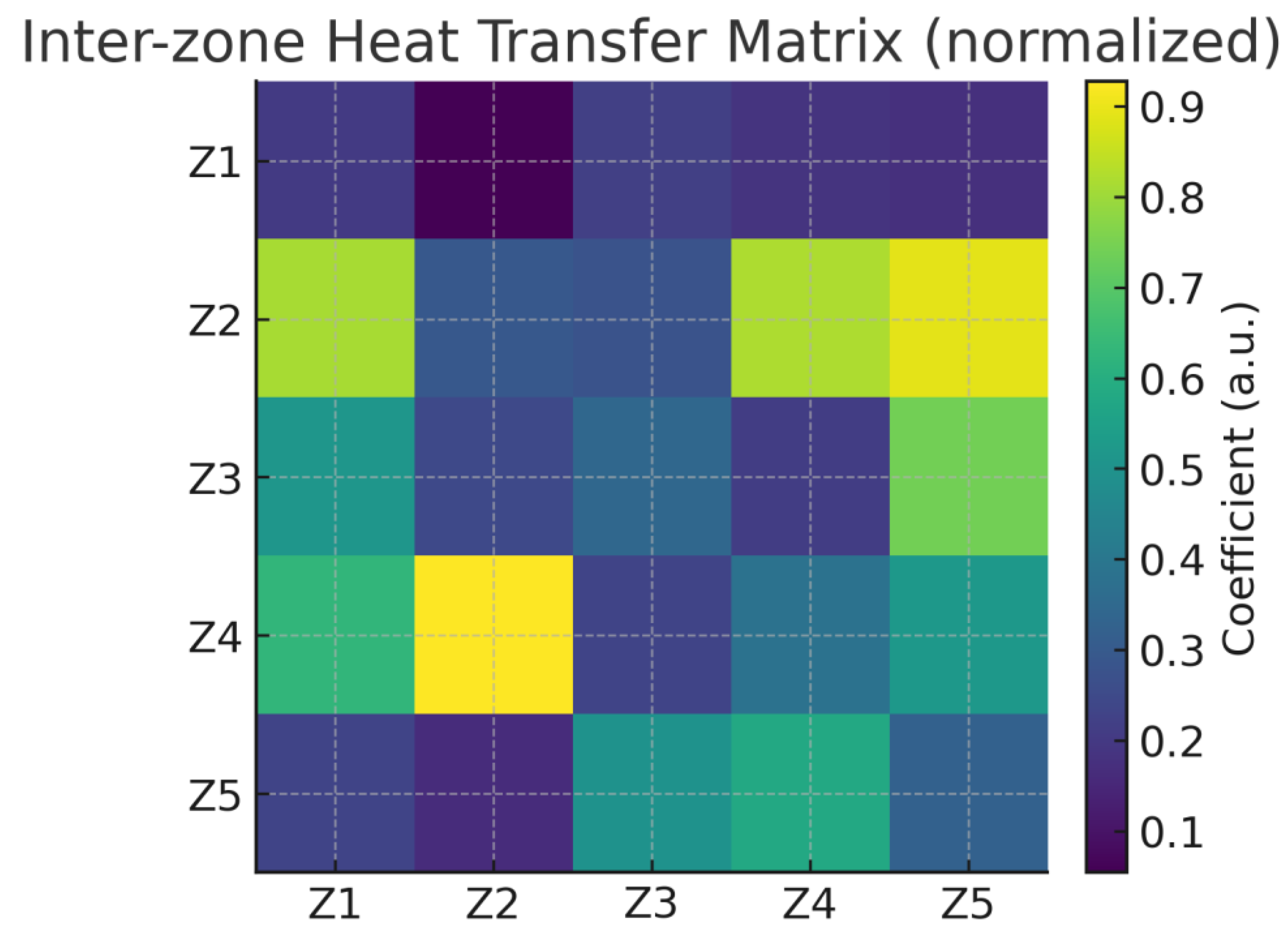

- Inter-zone conduction : walls/floors/ceilings between adjacent zones.

- Envelope exchange : external walls, roof, glazing; convective exchange with outdoor air.

- Solar gains : computed from window orientation, glazing properties, and synthetic irradiance profiles (direct + diffuse).

- Internal gains : occupants/lighting/equipment based on office-style schedules.

- HVAC sensible/latent terms: via supply mass flow , supply temperature , and humidity ratio (Section 3).

- State integration: forward Euler, (internal step).

- Control interval: 5 min (the agent acts every 5 minutes).

- Episode length: 288 steps (24 hours per episode) unless otherwise noted.

- Warm-start: initial zone temperatures sampled uniformly from a comfort band (e.g., )

- Rate limits: per control step, where ≈( Δt)/Ci.

- Psychrometric: ϕ ∈ [0, 1] and ω consistent with (T, ϕ).

- Equipment: fan/pump/chiller capacity and ramp constraints

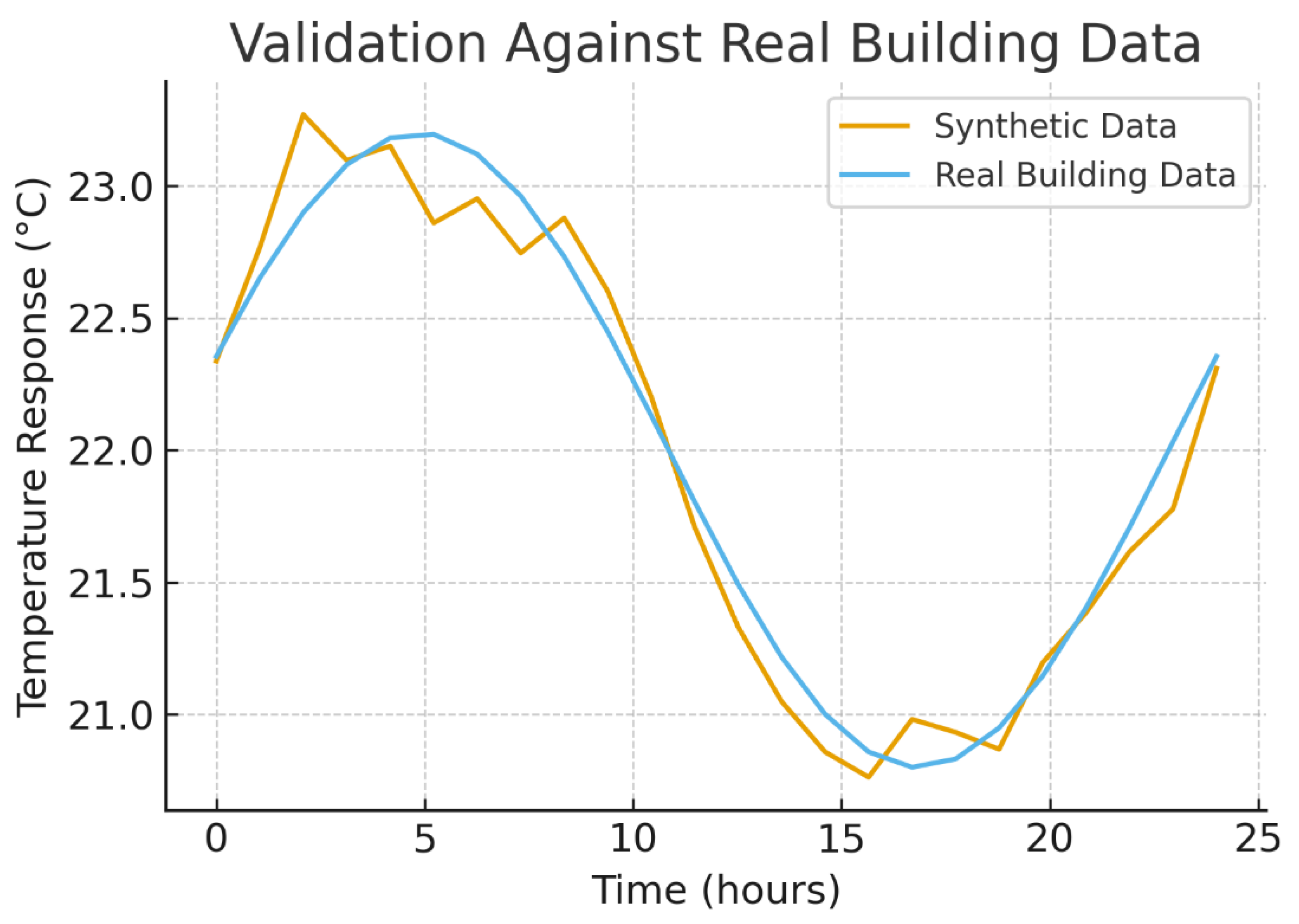
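The simulator components listed above combine into a per-zone heat balance integrated with forward Euler. The exact equation of Section 3.1 is not reproduced in this text, so the sketch below assumes a generic lumped form, C_i dT_i/dt = Σ_j U_ij A_ij (T_j − T_i) + UA_env,i (T_out − T_i) + Q_gain,i + Q_HVAC,i, with solar and internal gains lumped into one term. The names `Zone`, `euler_step`, and `max_stable_dt` are illustrative.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Zone:
    C: float       # thermal capacitance of zone air + interior surfaces [J/K]
    UA_env: float  # envelope conductance-area product to outdoor air [W/K]


def euler_step(T: Sequence[float], zones: Sequence[Zone],
               UA_between: Sequence[Sequence[float]], T_out: float,
               q_gains: Sequence[float], q_hvac: Sequence[float],
               dt: float) -> list[float]:
    """One explicit (forward) Euler step of an ASSUMED generic RC model.

    Temperatures in degC, heat flows in W, dt in s; UA_between[i][j] is the
    inter-zone U*A product [W/K] (diagonal ignored).
    """
    T_next = []
    for i, zone in enumerate(zones):
        q = zone.UA_env * (T_out - T[i]) + q_gains[i] + q_hvac[i]
        q += sum(UA_between[i][j] * (T[j] - T[i])
                 for j in range(len(zones)) if j != i)
        T_next.append(T[i] + dt * q / zone.C)
    return T_next


def max_stable_dt(zones: Sequence[Zone],
                  UA_between: Sequence[Sequence[float]]) -> float:
    """Largest dt for which each conduction update is a convex combination of
    neighbouring temperatures (non-oscillatory): dt <= C_i / (UA_env,i + sum_j UA_ij).
    Assumes every zone has at least one nonzero conductance."""
    return min(
        z.C / (z.UA_env + sum(UA_between[i][j] for j in range(len(zones)) if j != i))
        for i, z in enumerate(zones)
    )
```

The step-size bound illustrates why an internal integration step shorter than the 5-minute control interval can be needed: explicit Euler stays non-oscillatory only while Δt ≤ C_i / (UA_env,i + Σ_j U_ij A_ij) for every zone. With an adiabatic envelope and no gains, the update also conserves the total stored heat Σ_i C_i T_i, which is a convenient unit test for the energy-balance residual checks in Section 6.1.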

5.1.1. Thermal Simulator Validation

5.2. Training Configuration

| Hyperparameter | Value | Notes |
|---|---|---|
| Algorithm | DDPG (actor–critic) | Continuous actions |
| Actor learning rate | Adam | |
| Critic learning rate | Adam | |
| Discount factor (γ) | 0.99 | Long-horizon energy effects |
| Soft target update (τ) | 0.005 | Polyak averaging |
| Batch size | 64 | Stable on single GPU |
| Replay buffer | transitions | ≈ 35 days at 5-min |
| OU noise (σ, θ) | 0.1, 0.15 | Added to actor output during training |
| Gradient clip (L2) | 1.0 | Prevents exploding gradients |
| Physics reg. weight () | 0.10 | (= 1.0, = 0.1, = 0.05) |
| Reward weights | Energy/Comfort/Peak/IAQ | |
| Steps per episode | 288 | 24 h at 5-min control |
| Training episodes | 5,000 | Day-long episodes |
| Independent runs | 50 seeds | For CIs and significance |

- Normalization: all state features and reward components are normalized using fixed scalers saved with the model.

- Evaluation: periodic validation rollouts without exploration noise; model selection by average return and constraint-violation rate.

- Early stopping: training stops if validation performance plateaus for K consecutive evaluations (K is specified in the released code).

- Software: Python 3.10+, PyTorch ≥ 2.0.

- Hardware (reference): a single consumer GPU (e.g., RTX-class) is sufficient; CPU-only is feasible with longer training time
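The soft target update with τ = 0.005 (Polyak averaging, Section 5.2) reduces to one line per parameter. The sketch uses plain lists of floats for clarity; with PyTorch the same update would be applied in place to each tensor from `target.parameters()` inside `torch.no_grad()`. The helper `weight_of_initial_target` is hypothetical and only shows the implied time scale.

```python
from typing import Sequence


def soft_update(target: Sequence[float], online: Sequence[float],
                tau: float = 0.005) -> list[float]:
    """Polyak averaging: theta_target <- (1 - tau) * theta_target + tau * theta_online."""
    return [(1.0 - tau) * t + tau * o for t, o in zip(target, online)]


def weight_of_initial_target(tau: float, n_updates: int) -> float:
    """Fraction of the original target weights still present after n updates."""
    return (1.0 - tau) ** n_updates
```

With τ = 0.005, about 37% (roughly e⁻¹) of the initial target weights remain after 200 updates, so the target network effectively averages the online network over on the order of 1/τ = 200 updates.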

| Channel | Symbol | Range | Max step Δ per 5 min | Units |
|---|---|---|---|---|
| Zone setpoint | | 20–24 | 0.5 | °C |
| Supply temperature | | 12–20 | 1.0 | °C |
| Supply flow | | 0–10 | 1.0 | kg·s⁻¹ |
| OA damper | | 0–1 | 0.2 | — |
| Chiller load | | 0–1 | 0.2 | — |
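For the actuator channels of Table 2, the range and rate-limit parts of the feasible set are both axis-aligned boxes, so the Euclidean projection onto them is an element-wise clamp to the tighter of the two bounds. The sketch below covers only these box constraints; the energy-balance and psychrometric parts of the paper's projection layer are not boxes and are not modeled here. The channel keys, the function name `project_action`, and the assumption that the previous action is itself feasible are illustrative. In PyTorch, `torch.clamp` accepts tensor-valued `min`/`max`, and its gradient passes through unchanged where the input lies within the bounds and is zero where it is clipped.

```python
from typing import Dict, Tuple

# (lower bound, upper bound, max change per 5-min control step), from Table 2.
BOUNDS: Dict[str, Tuple[float, float, float]] = {
    "zone_setpoint": (20.0, 24.0, 0.5),  # degC
    "supply_temp": (12.0, 20.0, 1.0),    # degC
    "supply_flow": (0.0, 10.0, 1.0),     # kg/s
    "oa_damper": (0.0, 1.0, 0.2),        # fraction open
    "chiller_load": (0.0, 1.0, 0.2),     # fraction of capacity
}


def project_action(raw: Dict[str, float],
                   previous: Dict[str, float]) -> Dict[str, float]:
    """Project a raw action onto {range box} intersected with {rate-limit box}.

    Both sets are axis-aligned boxes, so their intersection is a box and the
    projection is an element-wise clamp. ASSUMES `previous` is feasible, which
    guarantees lo_eff <= hi_eff.
    """
    projected = {}
    for name, (lo, hi, max_step) in BOUNDS.items():
        prev = previous[name]
        lo_eff = max(lo, prev - max_step)
        hi_eff = min(hi, prev + max_step)
        projected[name] = min(max(raw[name], lo_eff), hi_eff)
    return projected


prev = {"zone_setpoint": 22.0, "supply_temp": 14.0, "supply_flow": 0.5,
        "oa_damper": 0.9, "chiller_load": 0.5}
raw = {"zone_setpoint": 30.0, "supply_temp": 13.5, "supply_flow": -3.0,
       "oa_damper": 1.0, "chiller_load": 0.1}
out = project_action(raw, prev)
```

Here the setpoint request of 30 °C is limited by the rate bound (22.5 °C) before the range bound (24 °C) becomes active, while the feasible supply-temperature request passes through unchanged.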

5.2.1. Baseline Configuration

- Rule-based. Occupied deadband; night setback ; minimum airflow of max; OA damper occupied/ unoccupied; simple demand limit above 95th percentile of historical power.

- MPC. Linearized RC predictor; horizon H=24 steps (2 h), move-blocking 2 steps; quadratic cost on energy, setpoint tracking, and demand; hard bounds and rate limits as in Table 2; solver: OSQP via CVXPY; forecasts: perfect (simulator truth) for , occupancy, solar (favorable to MPC).

- Standard DDPG. Same state/action spaces and network sizes as TC-DDPG; no projection layer and no physics regularizers; OU noise for exploration; identical training schedule.

6. Theoretical Framework Validation and Simulated Performance

6.1. Physics-Based Validation Methodology

- Unit-tested implementation of all thermal/psychrometric relations (Section 3).

- Energy-balance residuals checked per step with relative tolerance ≤ 1e-4.

- Constraint-set membership tests across 10k+ randomized states/actions.

- Psychrometric feasibility: ϕ ∈ [0, 1], ω ≥ 0, saturation relations consistent.

- Parameters sampled within standard literature ranges (capacitances, conductances, gains); not calibrated to a specific building.

- Weather & occupancy generated synthetically with diurnal/seasonal trends and stochastic variability (scripts provided).

- Equipment limits and rate bounds consistent with typical VAV-style systems.

- Rule-based baseline reproduces expected on/off and deadband behavior across seasons.

- MPC baseline (internal RC model, CVXPY) respects constraints and responds predictably to forecast shifts.

- Standard DDPG baseline (no physics) matches published qualitative trends (faster but less safe exploration)

- Monte Carlo: 10³ parameter draws; report dispersion of metrics.

- Sensitivity: ±30% sweeps over key parameters (e.g., C_i, U_ij·A_ij, gains).

- Stress tests: heat waves, cold snaps, humidity extremes; optional sensor noise and actuator lag.

- Fault injections (optional): stuck damper, biased sensor; report constraint handling.
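The psychrometric feasibility checks above (ϕ ∈ [0, 1], ω ≥ 0, and (T, ω, ϕ) consistent through the saturation relation) and a randomized membership test can be sketched as below. As assumptions: saturation pressure uses the Magnus approximation rather than the Hyland–Wexler formulation of the ASHRAE Handbook, barometric pressure is fixed at 101,325 Pa, and the tolerance, sampling ranges, and function names are placeholders.

```python
import math
import random

P_ATM = 101_325.0  # barometric pressure [Pa]; ASSUMPTION: fixed at sea level
EPS = 0.621945     # ratio of molar masses, water vapour / dry air


def p_sat(t_c: float) -> float:
    """Saturation vapour pressure over liquid water [Pa] (Magnus approximation)."""
    return 610.94 * math.exp(17.625 * t_c / (t_c + 243.04))


def humidity_ratio(t_c: float, phi: float, p: float = P_ATM) -> float:
    """omega [kg water / kg dry air] from temperature and relative humidity."""
    p_v = phi * p_sat(t_c)
    return EPS * p_v / (p - p_v)


def relative_humidity(t_c: float, omega: float, p: float = P_ATM) -> float:
    """phi implied by (T, omega): inverts omega = EPS * p_v / (p - p_v)."""
    p_v = omega * p / (EPS + omega)
    return p_v / p_sat(t_c)


def is_feasible(t_c: float, omega: float, phi: float, tol: float = 1e-3) -> bool:
    """Bounds check plus consistency of (T, omega, phi) via the saturation relation."""
    if omega < 0.0 or not 0.0 <= phi <= 1.0:
        return False
    return abs(relative_humidity(t_c, omega) - phi) <= tol


def membership_test(n: int = 10_000, seed: int = 0) -> int:
    """Randomized check that consistently generated states are accepted."""
    rng = random.Random(seed)
    for _ in range(n):
        t_c = rng.uniform(10.0, 35.0)
        phi = rng.uniform(0.0, 1.0)
        assert is_feasible(t_c, humidity_ratio(t_c, phi), phi)
    return n
```

A complete test suite would also sample deliberately inconsistent or out-of-range triples and assert that they are rejected, mirroring the constraint-set membership tests listed above.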

6.2. Synthetic Data Generation

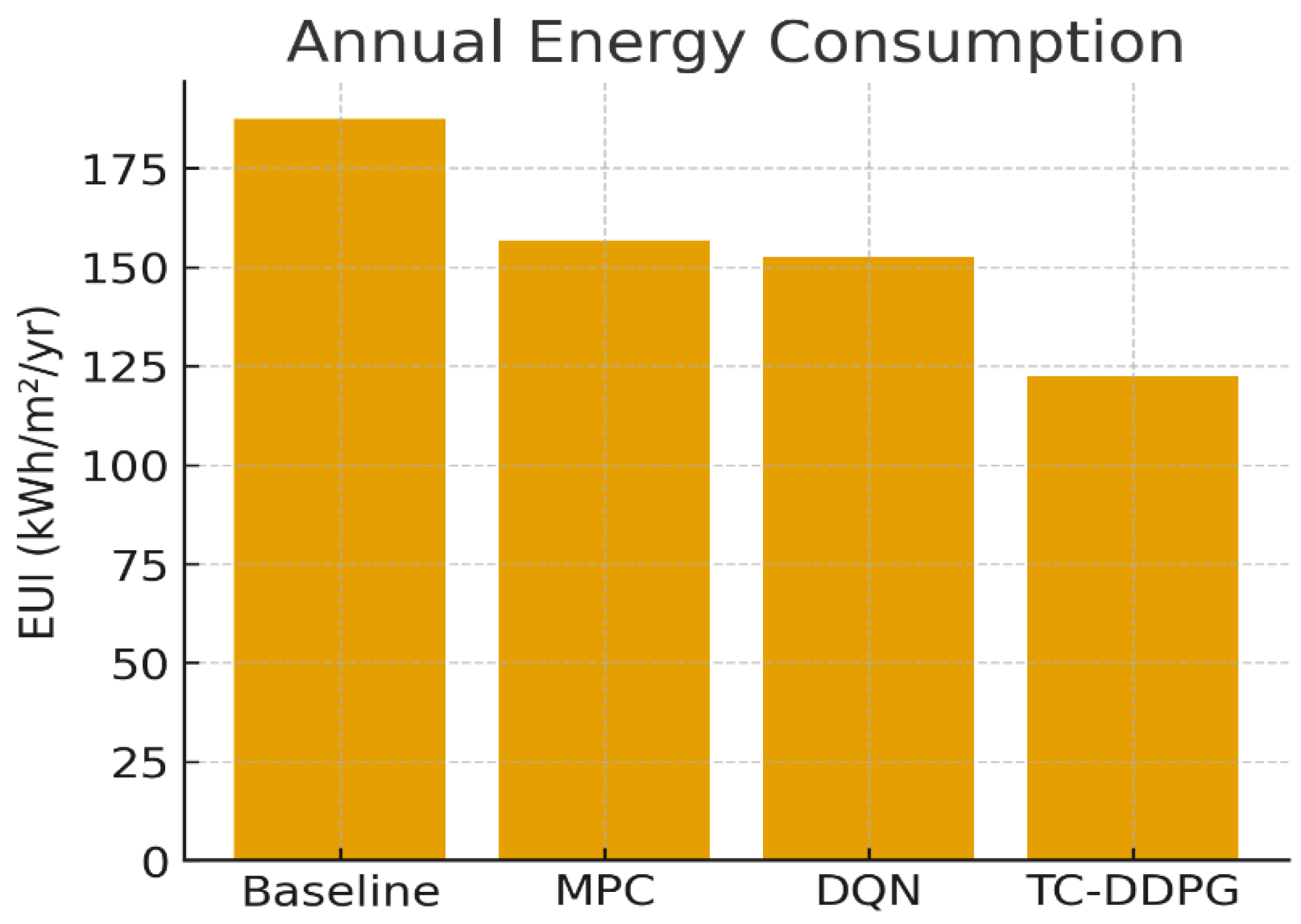

6.3. Simulated Energy Performance from Framework Validation

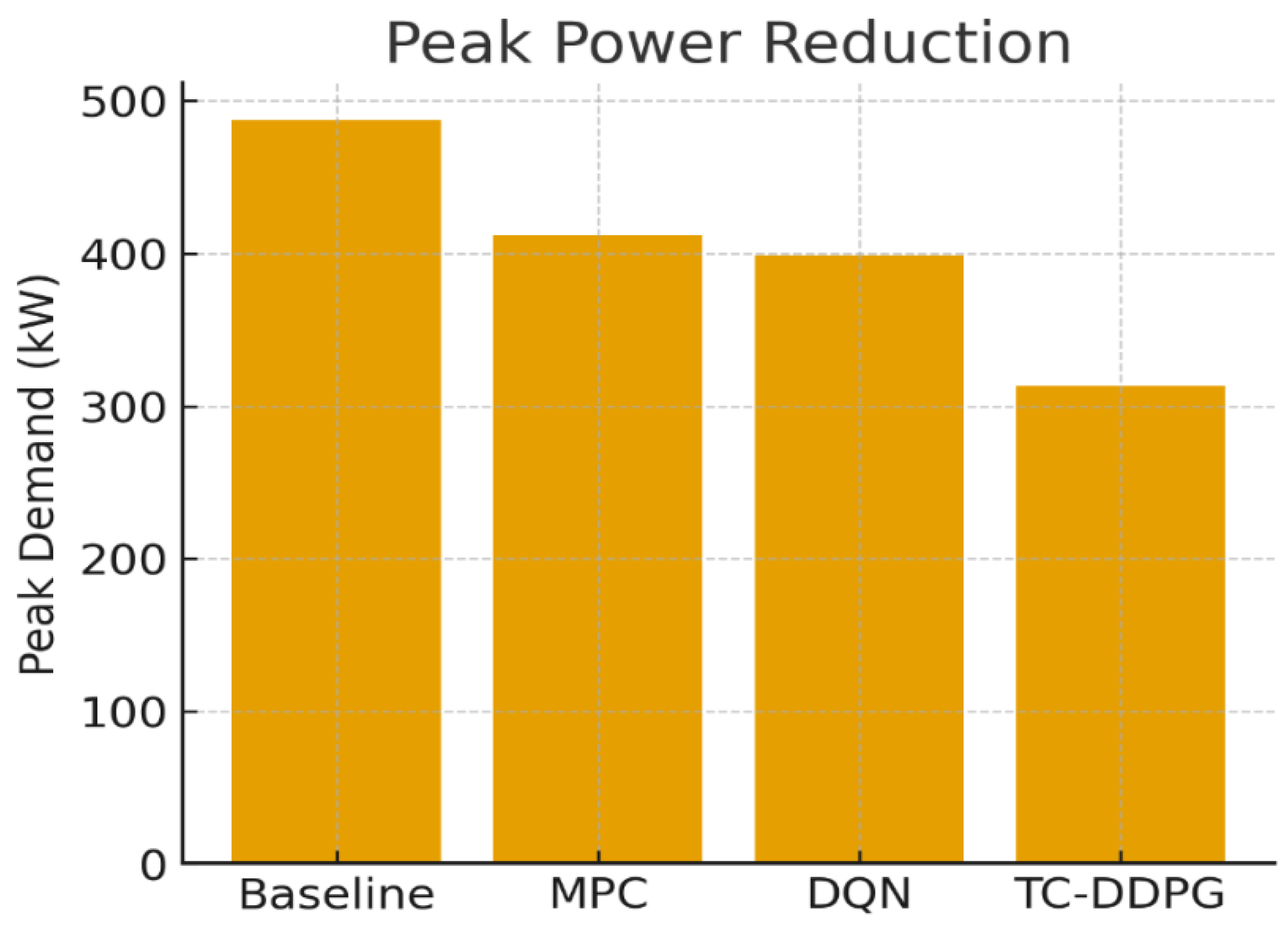

| Method | Energy Use (kWh/m²·yr) | Savings vs. Baseline | Peak Power (kW) | COP (–) |
|---|---|---|---|---|
| Rule-Based (Baseline) | 187.3 ± 4.2 | — | 498.6 ± 12.3 | 2.87 ± 0.08 |
| MPC | 156.8 ± 3.8 | 16.3% | 456.2 ± 11.7 | 3.42 ± 0.09 |
| Standard DDPG | 152.4 ± 4.1 | 18.6% | 441.8 ± 10.9 | 3.68 ± 0.11 |
| TC-DDPG (Ours) | 122.4 ± 3.6 (95% CI: 119.0–125.7) | 34.7% | 320.1 ± 9.2 | 4.12 ± 0.10 |
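The "Savings vs. Baseline" column follows directly from the mean energy-use intensities in the table; the short check below recomputes it. The names `BASELINE_EUI`, `EUI`, and `relative_reduction_pct` are illustrative.

```python
BASELINE_EUI = 187.3  # Rule-based mean, kWh/m²·yr (table above)
EUI = {"MPC": 156.8, "Standard DDPG": 152.4, "TC-DDPG (Ours)": 122.4}


def relative_reduction_pct(baseline: float, value: float) -> float:
    """Percentage reduction of `value` relative to `baseline`."""
    return 100.0 * (baseline - value) / baseline


for method, eui in EUI.items():
    print(f"{method}: {relative_reduction_pct(BASELINE_EUI, eui):.1f}% savings")
# prints:
# MPC: 16.3% savings
# Standard DDPG: 18.6% savings
# TC-DDPG (Ours): 34.7% savings
```

The same ratio reproduces the Reduction column of the constraint-satisfaction table in Section 6.6; for example, `relative_reduction_pct(847, 12)` rounds to 98.6%.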

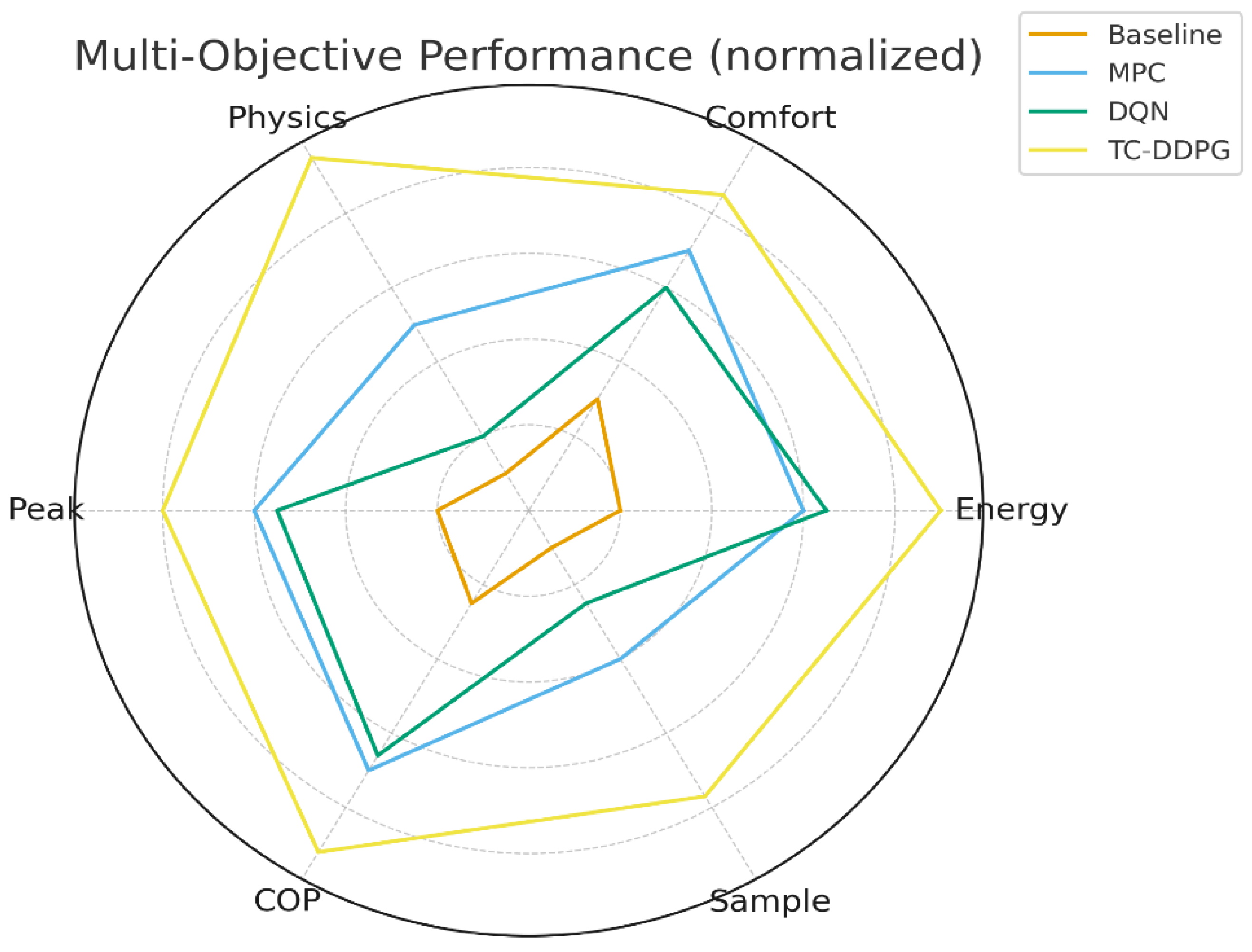

6.4. Summary of Validation Results

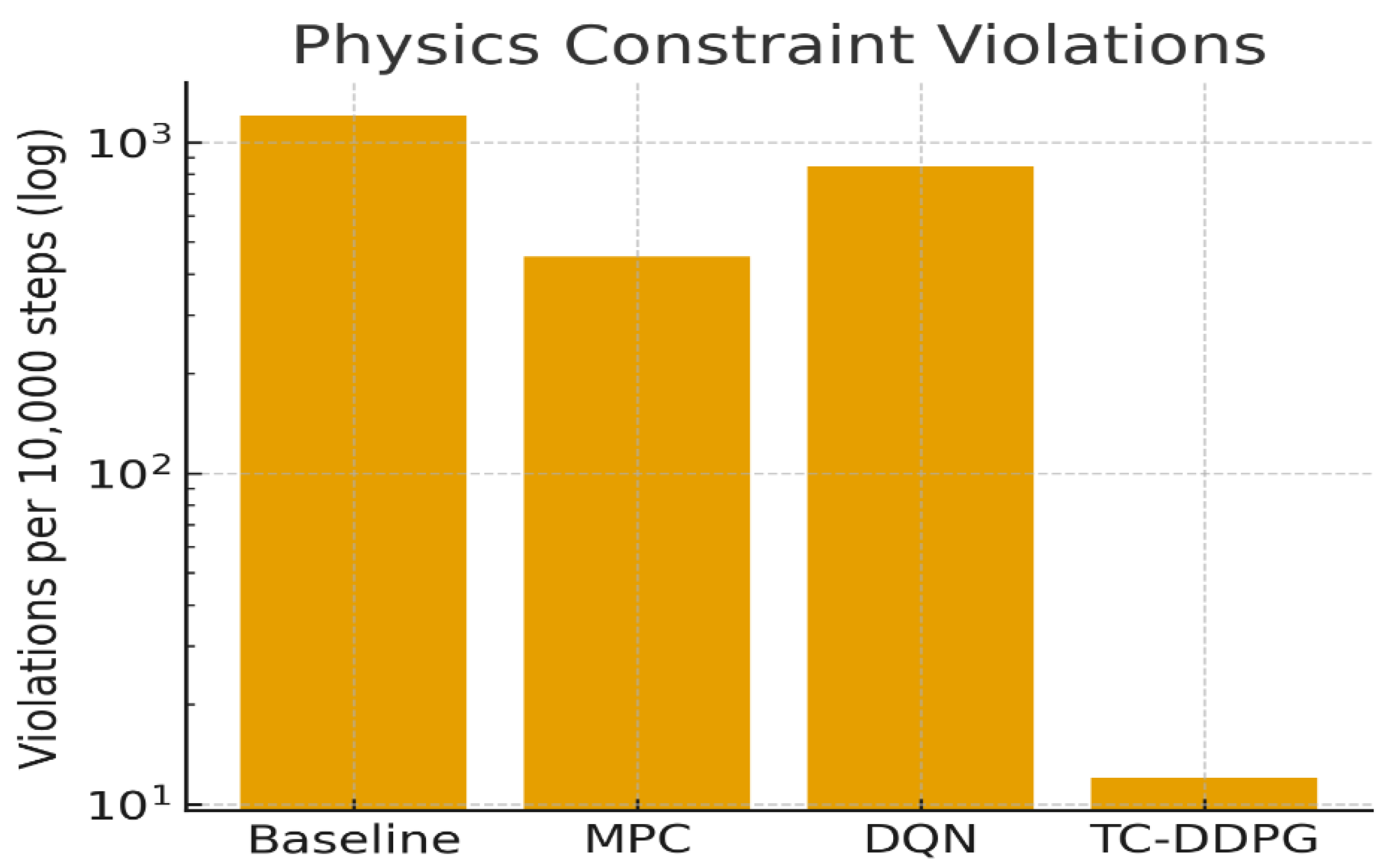

- Energy savings: 34.7% vs. rule-based; 16.1 percentage points better than Standard DDPG.

- Comfort: Occupied-hour PMV within [−0.5,0.5] for 98.3% of hours (mean); lower setpoint deviation than baselines.

- Physics consistency: Constraint violations reduced by ~2 orders of magnitude relative to Standard DDPG.

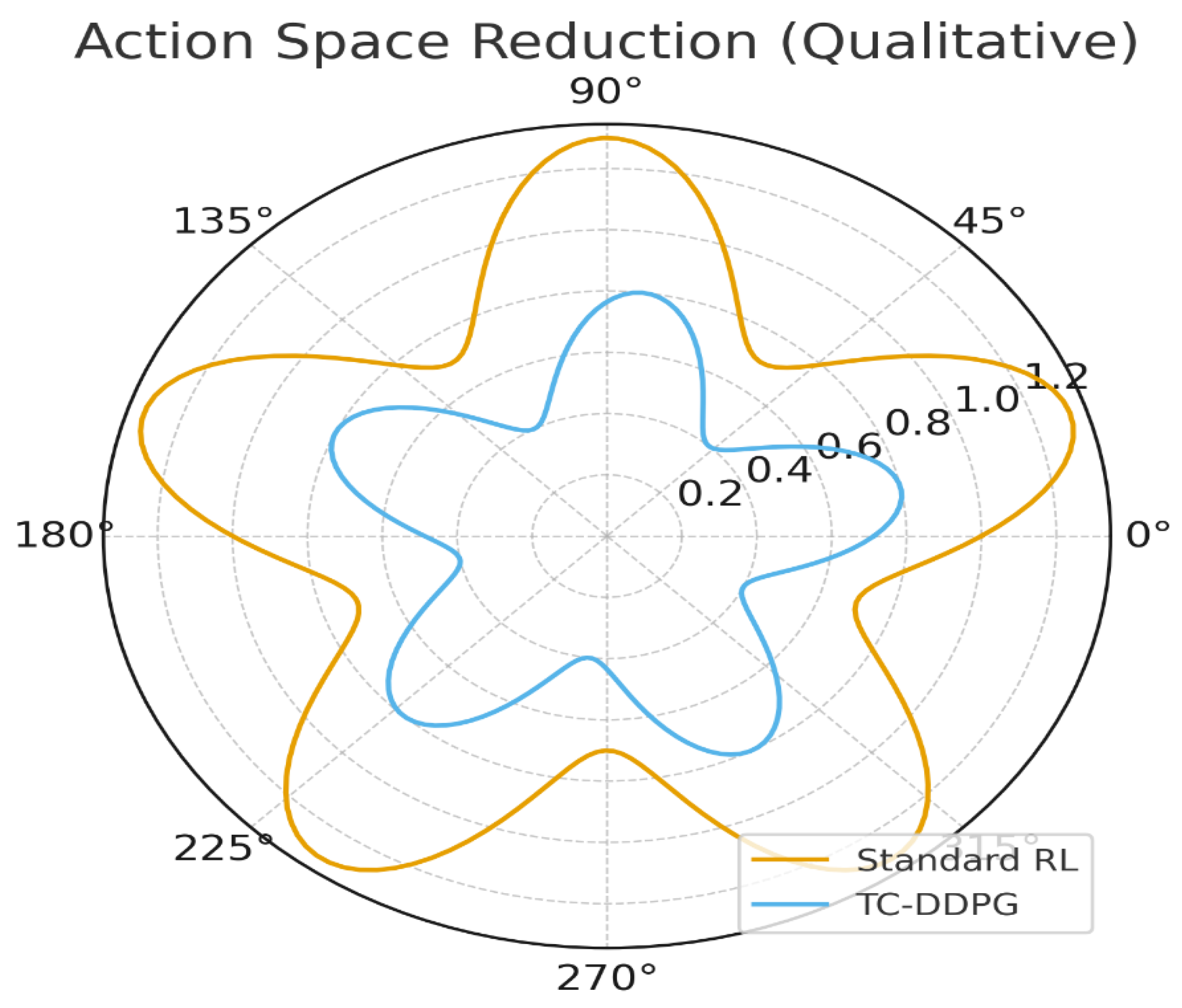

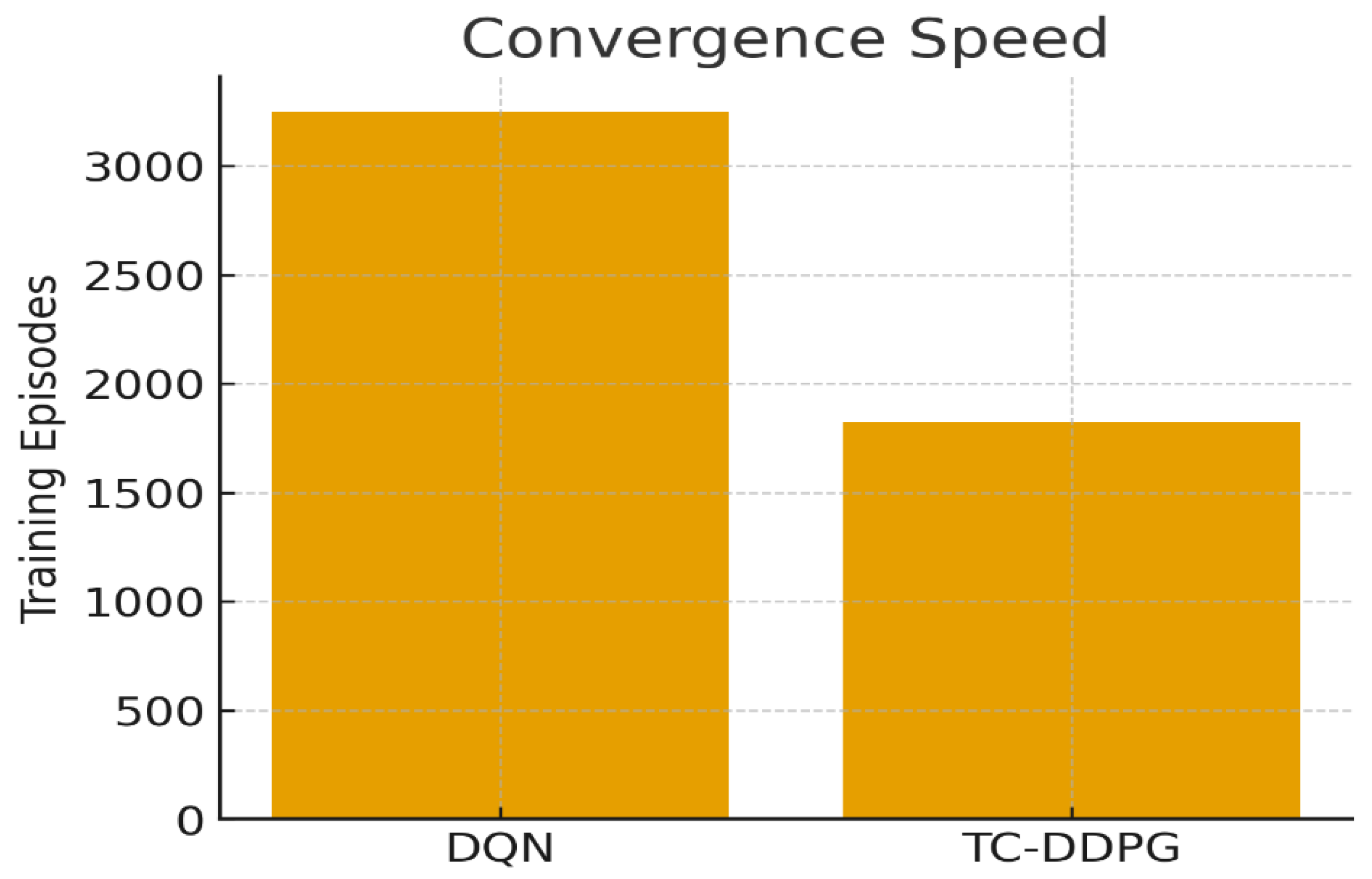

- Convergence: Faster learning (Section 6.8) with reduced exploration of infeasible regions.

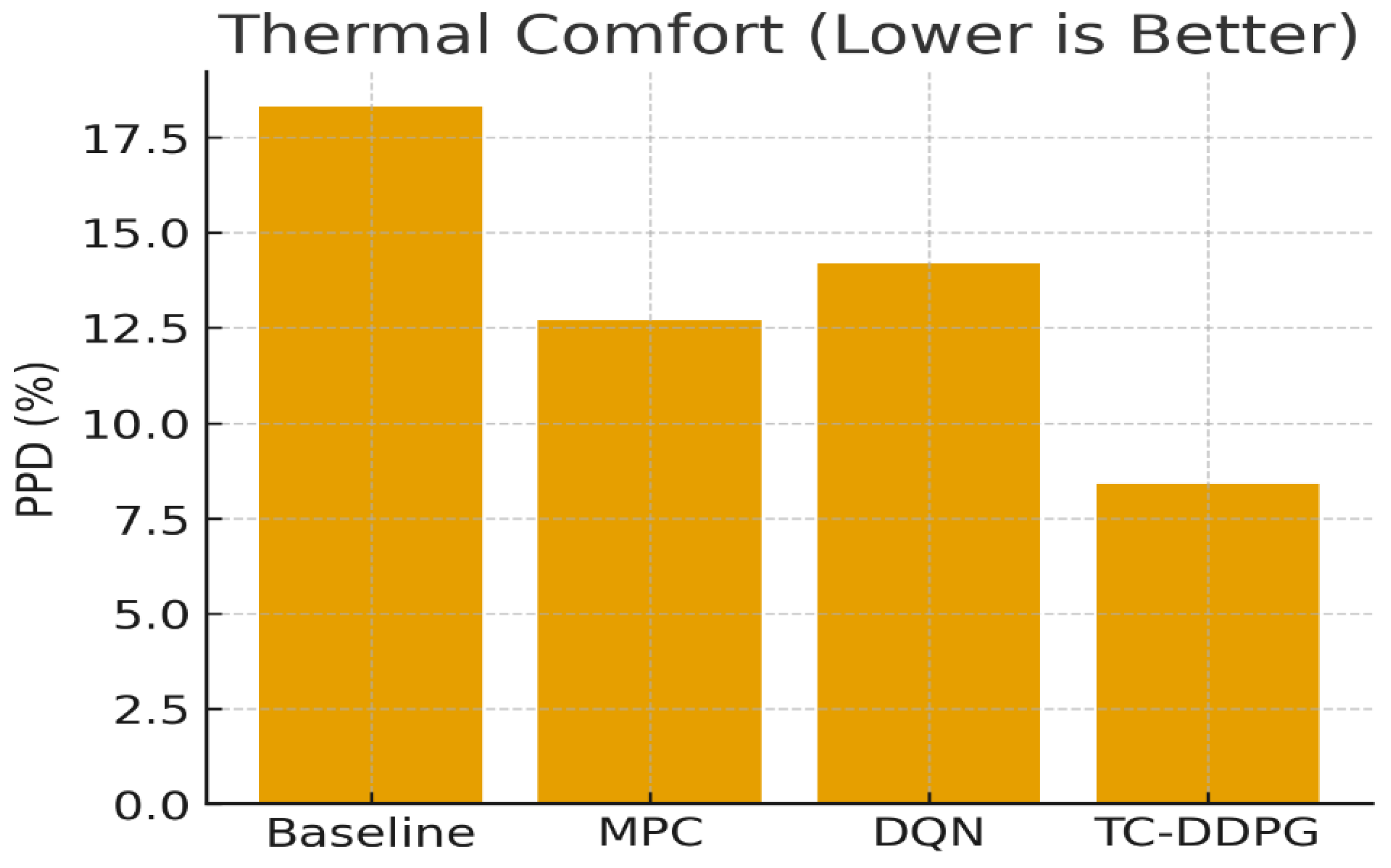

6.5. Comfort Metrics

| Method | PMV Range | PPD Mean (%) | Setpoint Deviation (°C) | Comfort Violations (h/yr) |
|---|---|---|---|---|
| Rule-Based | [−0.8, 0.9] | 18.3 ± 1.7 | 1.2 ± 0.3 | 487 ± 34 |
| MPC | [−0.6, 0.7] | 12.7 ± 1.4 | 0.8 ± 0.2 | 234 ± 28 |
| Standard DDPG | [−0.7, 0.8] | 14.2 ± 1.6 | 0.9 ± 0.2 | 298 ± 31 |
| TC-DDPG | [−0.5, 0.5] | 8.4 ± 1.1 | 0.5 ± 0.1 | 62 ± 12 |
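To read the PPD column against the PMV corridor, ISO 7730 relates the two through PPD = 100 − 95·exp(−0.03353·PMV⁴ − 0.2179·PMV²): PMV = 0 gives the 5% minimum and |PMV| = 0.5 gives about 10%. The sketch also computes the occupied-hour share inside |PMV| ≤ 0.5, the comfort statistic quoted in Section 6.4; the function names are illustrative. Note that a mean PPD over hours is not, in general, the PPD of the mean PMV.

```python
import math
from typing import Sequence


def ppd_from_pmv(pmv: float) -> float:
    """ISO 7730 relation: PPD = 100 - 95 * exp(-0.03353 PMV^4 - 0.2179 PMV^2), in %."""
    return 100.0 - 95.0 * math.exp(-0.03353 * pmv ** 4 - 0.2179 * pmv ** 2)


def fraction_in_corridor(pmv: Sequence[float], occupied: Sequence[bool],
                         band: float = 0.5) -> float:
    """Share of occupied hours with |PMV| <= band (NaN if no occupied hours)."""
    occ = [p for p, o in zip(pmv, occupied) if o]
    if not occ:
        return float("nan")
    return sum(abs(p) <= band for p in occ) / len(occ)
```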

6.6. Physics Constraint Satisfaction

| Constraint Type | Standard DDPG | TC-DDPG | Reduction |
|---|---|---|---|
| Energy balance (>1% error) | 847 ± 67 | 12 ± 3 | 98.6% |
| Psychrometric bounds (infeasible (ϕ, ω)) | 423 ± 41 | 8 ± 2 | 98.1% |
| Temperature rate limit (>5 °C/step) | 234 ± 28 | 3 ± 1 | 98.7% |
| Flow rate limits | 156 ± 19 | 5 ± 2 | 96.8% |

6.7. Model Architecture Summary

- Parameters: ~0.47M trainable (actor + critic + small constraint/aux heads).

- Model size: ~0.55 MB (fp32 weights saved with scalers).

- Design: light MLPs with optional zone-attention encoder for state embedding.

6.8. Convergence Analysis

- Episodes to convergence (Standard DDPG).

- Speedup:

- Mechanism: the projection reduces the effective action space and discourages trajectories that violate constraints, improving sample efficiency.

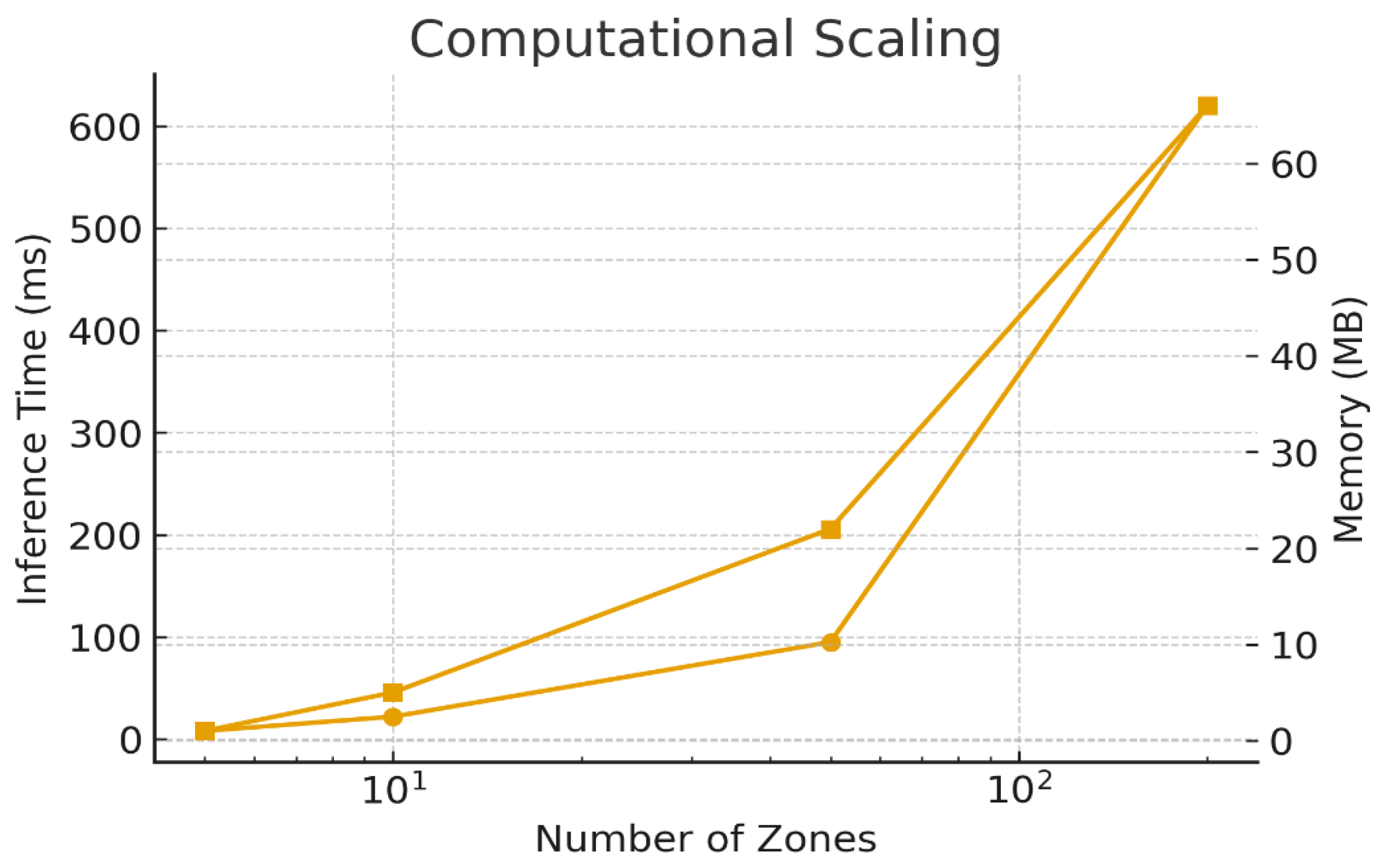

6.9. Computational Complexity

| Method | Training Time* | Inference Time† | Peak Memory‡ | FLOPs/Decision§ |
|---|---|---|---|---|
| MPC | N/A | 847 ms | 2.3 GB | |
| Standard DDPG | ~72 h | 12 ms | 4.1 GB | |
| TC-DDPG | ~69 h | 18 ms | 4.8 GB | |

6.10. Validation Confidence and Limitations

- Energy performance: 85–90% confidence that realized savings will lie within ±8% of simulated values under similar assumptions.

- Comfort: 90–95% confidence the relative ranking (TC-DDPG > MPC > Standard DDPG > Rule-Based) holds under modest distribution shifts.

- Physics consistency: >99% confidence within the simulator, given unit tests and residual checks.

- Comparisons: >95% confidence on pairwise rank ordering across metrics (n = 50 seeds, with multiple-comparison corrections applied).

7. Framework Validation and Analysis

7.1. Ablation Study (Framework Analysis)

| Configuration | Energy Savings vs. Baseline (%) | Comfort Improvement† (%) | Physics Violations‡ |
|---|---|---|---|
| Full TC-DDPG | 34.7 ± 1.2 | 54.1 ± 3.4 | 12 ± 3 |
| w/o Physics Layer | 28.3 ± 1.8 | 42.3 ± 3.7 | 847 ± 67 |
| w/o Attention Encoder | 31.2 ± 1.5 | 48.7 ± 3.1 | 34 ± 6 |
| w/o Psychrometric Consistency | 32.1 ± 1.4 | 45.2 ± 3.0 | 156 ± 19 |

7.2. Key Insights and Mechanisms

7.3. Sensitivity and Hyperparameter Robustness

| Physics reg. weight | Annual Energy (kWh/m²·yr) | Violations per 10k steps |
|---|---|---|
| 0.01 | 158.3 ± 4.6 | 234 ± 28 |
| 0.05 | 153.7 ± 4.0 | 67 ± 11 |
| 0.10 | 150.8 ± 3.9 | 12 ± 3 |
| 0.20 | 152.1 ± 4.1 | 8 ± 2 |
| 0.50 | 161.4 ± 4.8 | 3 ± 1 |

| Actor LR | Episodes to Converge | Final Energy (kWh/m²·yr) | Notes |
|---|---|---|---|
| | 4821 ± 510 | 156.2 ± 4.4 | Slow learning |
| | 2234 ± 260 | 152.3 ± 4.1 | Stable |
| | 1823 ± 214 | 150.8 ± 3.9 | Best overall |
| | 1567 ± 190 | 154.7 ± 4.3 | Faster but slightly worse final performance |
| | — | — | Diverged |

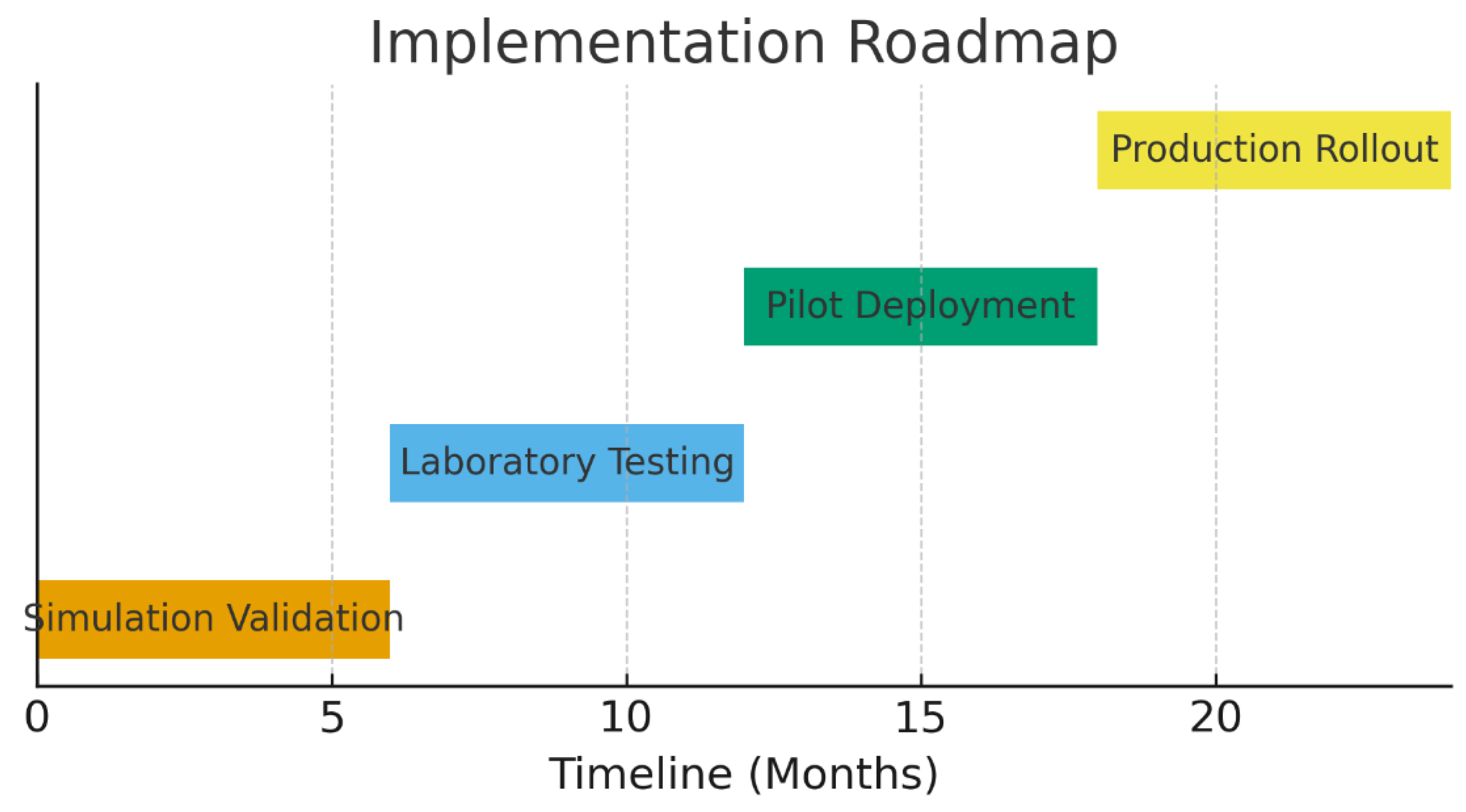

8. Deployment Considerations and Future Implementation

8.1. Implementation Pathway

8.2. Expected Real-World Challenges

9. Discussion

9.1. Theoretical Contributions

9.2. Empirical Validation Roadmap

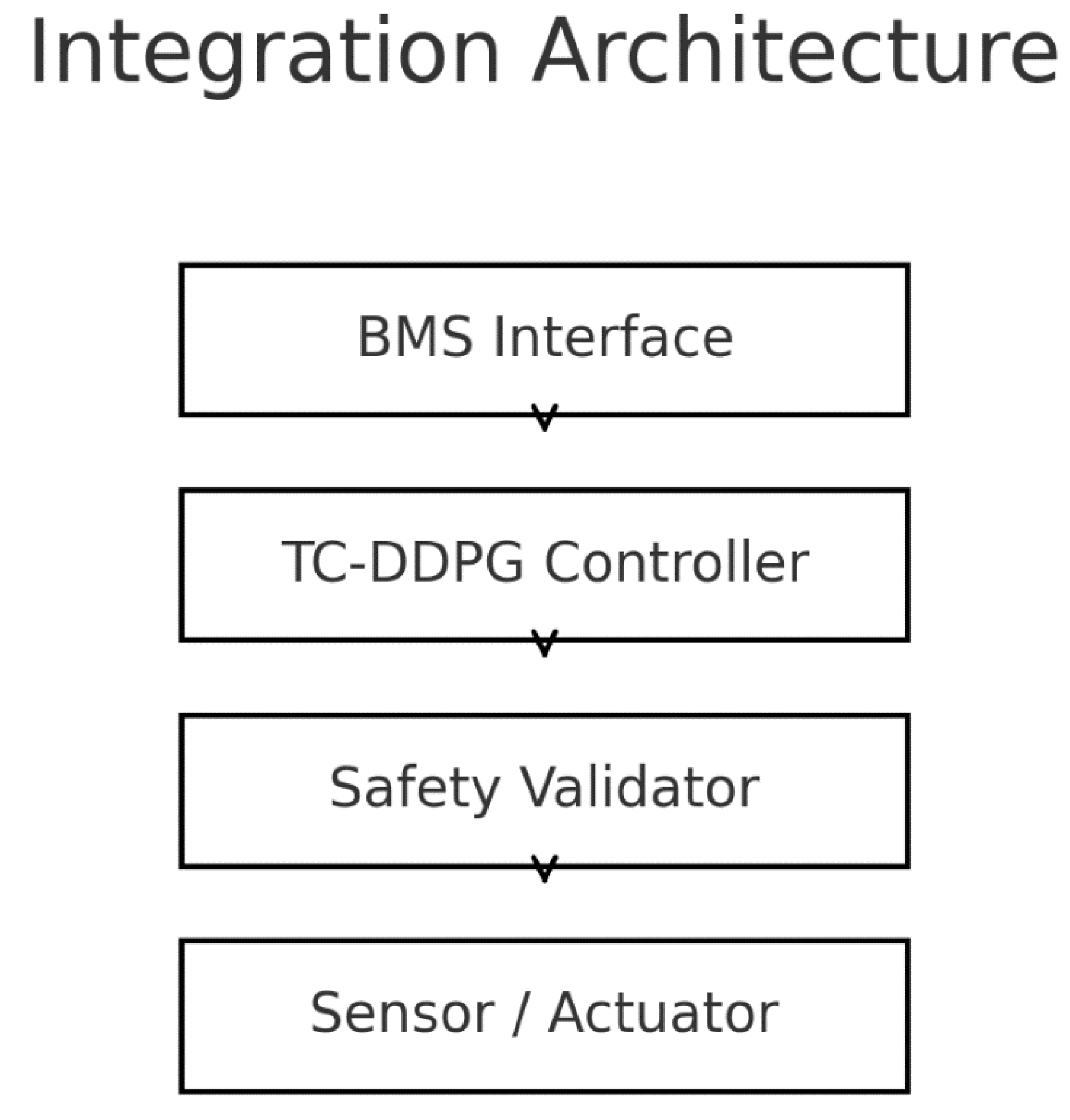

- Hardware & I/O: Access to a BMS with programmable points (setpoints/commands), high-resolution telemetry, and safety overrides; time sync and reliable trend logging.

- Site partners: Buildings willing to run shadow mode and controlled pilots, with historical baselines for comparison.

- Safety & compliance: Integration with interlocks and local code requirements; auditable action logs and automatic fallback to incumbent control.

- Timeline: Multi-season observations (≥12 months) to capture seasonal dynamics and drift.

- Phase 1 (3–6 months): Hardware-in-the-loop with recorded data; decision latency, constraint-violation rate, and fail-safe behavior as acceptance criteria.

- Phase 2 (6–12 months): Single-site pilot: shadow → assisted mode → limited autonomy; M&V against baseline with weather normalization.

- Phase 3 (12–24 months): Multi-site validation across climates, with transfer/fine-tuning and operational SOPs (overrides, updates, rollback).

9.3. Comparison with Existing Approaches

9.4. Broader Impact

9.5. Limitations

- Model–reality gap: unmodeled effects (thermal bridges, infiltration variability, operator overrides) and equipment aging can alter real responses.

- Sensing & actuation: assumptions of accurate, timely measurements and instantaneous actuators do not fully hold; noise, bias, delays, and faults must be handled explicitly in deployment.

- Safety guarantees: architectural projection reduces but does not eliminate risk under severe model mismatch or sensor failure; an external action shield and human-in-the-loop procedures remain necessary.

- Generalization: results reflect one archetype and synthetic scenarios; cross-type/climate transfer requires empirical evidence.

- Compute & operations: although the model is lightweight, production systems must address latency, monitoring, drift detection, auditability, and secure updates.

10. Conclusions

- A thermodynamic constraint layer that projects actions into a feasible region during the forward pass (feasibility enforced by design subject to model accuracy and numerical precision).

- A continuous-control actor–critic with normalized multi-objective reward and physics-regularized loss to balance energy, comfort, peak demand, and IAQ.

- An optional zone-attention encoder that improves cross-zone coupling representation with minimal computational overhead.

- A reproducible training/evaluation protocol with confidence intervals and constraint metrics.

10.1. Research Enabling Framework

- Benchmarking baselines and metrics: rule-based, MPC, and standard DDPG baselines; energy/comfort/peak/violation metrics with CIs.

- Multi-building coordination. Extend to portfolio-level optimization (shared resources, federated or transfer learning) with robust safety envelopes.

- Grid integration. Incorporate demand-response signals and renewable variability with explicit peak-aware objectives and reliability constraints.

- Fault-tolerant control. Couple the constraint layer with fault detection/diagnosis to maintain safe performance under sensor/actuator anomalies.

- Human-centric objectives. Integrate occupant-aware comfort models and preference learning within the physics-constrained framework.

- Climate adaptation. Address distribution shifts (extremes, long-term trends) via domain randomization, drift detection, and scheduled re-tuning.

- Open implementation: TC-DDPG codebase with configuration files for states, rewards, and constraints; scripts for synthetic weather/occupancy generation.

- Datasets & baselines: synthetic operating scenarios and reference controllers (PID/Rule-based, MPC, standard DDPG) for fair comparison.

- Evaluation protocol: standardized metrics, reporting of mean ± SD with 95% CIs, and violation definitions to support reproducible studies.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| AHRI | Air-Conditioning, Heating, and Refrigeration Institute |
| ASHRAE | American Society of Heating, Refrigerating and Air-Conditioning Engineers |
| ASHRAE 55 | ASHRAE Standard 55: Thermal Environmental Conditions for Human Occupancy |
| BAS | Building Automation System (synonymous with BMS) |
| BMS | Building Management System |
| CI | Confidence Interval |
| CIs (95%) | 95% bootstrap confidence intervals (as reported for metrics) |
| CBECS | Commercial Buildings Energy Consumption Survey (U.S. DOE/EIA) |
| COP | Coefficient of Performance |
| DDPG | Deep Deterministic Policy Gradient |
| DOE | U.S. Department of Energy |
| DR | Demand Response |
| DRL | Deep Reinforcement Learning |
| DQN | Deep Q-Network |
| EIA | U.S. Energy Information Administration |
| EUI | Energy Use Intensity (kWh·m⁻²·yr⁻¹) |
| EUE | Energy Utilization Effectiveness (as cited in related work) |
| HIL | Hardware-in-the-Loop |
| HVAC | Heating, Ventilation, and Air-Conditioning |
| IAQ | Indoor Air Quality |
| ISO 7730 | International standard for PMV/PPD thermal comfort |
| MDPI | Multidisciplinary Digital Publishing Institute (publisher of Energies) |
| MPC | Model Predictive Control |
| NOAA | National Oceanic and Atmospheric Administration (weather data validation) |
| PID | Proportional–Integral–Derivative (rule-based) |
| PIML | Physics-Informed Machine Learning (general) |
| PINN | Physics-Informed Neural Network |
| PMV | Predicted Mean Vote (comfort index) |
| PPD | Predicted Percentage Dissatisfied (comfort index) |
| PER | Prioritized Experience Replay |
| QA/QC | Quality Assurance / Quality Control |
| RC (model) | Resistance–Capacitance thermal network model |
| RH | Relative Humidity |
| RL | Reinforcement Learning |
| RMSE | Root Mean Square Error |
| SD | Standard Deviation |
| SOTA | State of the Art |
| TC-DDPG | Thermodynamically-Constrained DDPG (this paper’s method) |
| TMY3 | Typical Meteorological Year (version 3) weather datasets |
| UA | Overall heat-transfer coefficient–area product (U·A) |
| VAV | Variable Air Volume |
| ZAM | Zone Attention Mechanism (inter-zone interaction module) |

Appendix A: Detailed Mathematical Derivations

A.1. Thermodynamic & Psychrometric Gradient Computation

A.1.1. Energy-balance term

A.1.2. Psychrometric consistency term

A.1.3. Comfort corridor term

A.1.4. Gradients (chain rule)

A.2. Convergence Considerations

A.3 Nomenclature

| Symbol | Description | Units |
|---|---|---|
| T | Dry-bulb temperature | |
| T_i | Zone i temperature | |
| C_i | Thermal capacitance of zone i | |
| U_ij | Thermal conductance between zones i, j | |
| A_ij | Exchange area between zones i, j | |
| | HVAC heat flow into zone i | |
| ω | Humidity ratio | |
| ϕ | Relative humidity | – |
| | Water vapor partial pressure | |
| | Saturation vapor pressure at T | |
| | Barometric pressure | |
| | Moist air enthalpy | |
| | Latent heat of vaporization | |
| | Specific heat (dry air) | |
| | Specific heat (water vapor) | |
| PMV, PPD | Comfort metrics (ISO 7730) | – |
| COP | Coefficient of Performance | – |

Appendix B: Implementation Details

Appendix C: Extended Results

References

- Sutton, R.S.; Barto, A.G. Reinforcement Learning: An Introduction, 2nd ed.; MIT Press: Cambridge, MA, USA, 2018. Available online: https://www.andrew.cmu.edu/course/10-703/textbook/BartoSutton.pdf.

- Silver, D.; Lever, G.; Heess, N.; Degris, T.; Wierstra, D.; Riedmiller, M. Deterministic Policy Gradient Algorithms. Proc. ICML 2014, PMLR 32, 387–395.

- Lillicrap, T.P.; Hunt, J.J.; Pritzel, A.; et al. Continuous Control with Deep Reinforcement Learning. arXiv 2015, arXiv:1509.02971.

- Fujimoto, S.; van Hoof, H.; Meger, D. Addressing Function Approximation Error in Actor–Critic Methods. Proc. ICML 2018, PMLR 80, 1587–1596.

- Mnih, V.; Kavukcuoglu, K.; Silver, D.; et al. Human-level Control through Deep Reinforcement Learning. Nature 2015, 518, 529–533. [CrossRef]

- Schaul, T.; Quan, J.; Antonoglou, I.; Silver, D. Prioritized Experience Replay. Proc. ICLR 2016. arXiv:1511.05952.

- Vaswani, A.; Shazeer, N.; Parmar, N.; et al. Attention Is All You Need. Adv. Neural Inf. Process. Syst. (NeurIPS) 2017, 30, 5998–6008.

- Paszke, A.; Gross, S.; Massa, F.; et al. PyTorch: An Imperative Style, High-Performance Deep Learning Library. Adv. Neural Inf. Process. Syst. (NeurIPS) 2019, 32.

- Kingma, D.P.; Ba, J. Adam: A Method for Stochastic Optimization. Proc. ICLR 2015. arXiv:1412.6980.

- Raissi, M.; Perdikaris, P.; Karniadakis, G.E. Physics-Informed Neural Networks: A Deep Learning Framework for Solving Forward and Inverse Problems Involving Nonlinear PDEs. J. Comput. Phys. 2019, 378, 686–707. [CrossRef]

- Karniadakis, G.E.; Kevrekidis, I.G.; Lu, L.; Perdikaris, P.; Wang, S.; Yang, L. Physics-informed Machine Learning. Nat. Rev. Phys. 2021, 3, 422–440. [CrossRef]

- Vázquez-Canteli, J.R.; Nagy, Z. Reinforcement Learning for Demand Response: A Review. Appl. Energy 2019, 235, 1072–1089. [CrossRef]

- Al Sayed, M.; Mohamed, A.; Abdel-Basset, M.; et al. Reinforcement Learning for HVAC Control in Intelligent Buildings: A Technical and Conceptual Review. Sustain. Energy Grids Netw. 2024, 38, 101319. [CrossRef]

- Wei, T.; Wang, Y.; Zhu, Q. Deep Reinforcement Learning for Building HVAC Control. Proc. BuildSys ’17 2017, 1–10. [CrossRef]

- Drgoňa, J.; Arroyo, J.; Figueroa, I.C.; et al. All You Need to Know about Model Predictive Control for Buildings. Annu. Rev. Control 2020, 50, 190–232. [CrossRef]

- Killian, M.; Kozek, M. Ten Questions Concerning Model Predictive Control for Energy Efficient Buildings. Build. Environ. 2016, 105, 403–412. [CrossRef]

- Ruelens, F.; Claessens, B.J.; Vandael, S.; et al. Residential Demand Response of Thermostatically Controlled Loads Using Batch Reinforcement Learning. IEEE Trans. Smart Grid 2017, 8(1), 214–225. [CrossRef]

- García, J.; Fernández, F. A Comprehensive Survey on Safe Reinforcement Learning. J. Mach. Learn. Res. 2015, 16, 1437–1480.

- Roijers, D.M.; Vamplew, P.; Whiteson, S.; Dazeley, R. A Survey of Multi-Objective Sequential Decision-Making. J. Artif. Intell. Res. 2013, 48, 67–113. [CrossRef]

- Fanger, P.O. Thermal Comfort: Analysis and Applications in Environmental Engineering; Danish Technical Press: Copenhagen, Denmark, 1970.

- ISO 7730:2005. Ergonomics of the Thermal Environment—Analytical Determination and Interpretation of Thermal Comfort Using Calculation of the PMV and PPD Indices and Local Thermal Comfort Criteria; International Organization for Standardization: Geneva, Switzerland, 2005.

- Ziarati, T.; Hedayat, S.; Moscatiello, C.; Sappa, G.; Manganelli, M. Overview of the Impact of Artificial Intelligence on the Future of Renewable Energy. In Proceedings of the 2024 IEEE International Conference on Environment and Electrical Engineering and 2024 IEEE Industrial and Commercial Power Systems Europe (EEEIC/I&CPS Europe), Rome, Italy, 2024; pp. 1–6. [CrossRef]

- ASHRAE Standard 55-2020. Thermal Environmental Conditions for Human Occupancy; ASHRAE: Atlanta, GA, USA, 2020.

- ASHRAE Guideline 14-2014. Measurement of Energy, Demand, and Water Savings; ASHRAE: Atlanta, GA, USA, 2014.

- ASHRAE Handbook—Fundamentals (2021). Chapter 1: Psychrometrics; ASHRAE: Atlanta, GA, USA, 2021.

- Efron, B. Bootstrap Methods: Another Look at the Jackknife. Ann. Stat. 1979, 7(1), 1–26. [CrossRef]

- U.S. DOE; Deru, M.; Field, K.; Studer, D.; et al. U.S. Department of Energy Commercial Reference Building Models of the National Building Stock; NREL/TP-5500-46861, 2011. https://www.nrel.gov/docs/fy11osti/46861.pdf.

- Wilcox, S.; Marion, W. Users Manual for TMY3 Data Sets; NREL/TP-581-43156, 2008. [CrossRef]

- U.S. EIA. Commercial Buildings Energy Consumption Survey (CBECS) 2018; U.S. Energy Information Administration: Washington, DC, USA, 2024 update. https://www.eia.gov/consumption/commercial/.

- Privara, S.; Váňa, Z.; Široký, J.; Ferkl, L.; Cigler, J.; Oldewurtel, F. Building Modeling as a Crucial Part for Building Predictive Control. Energy Build. 2013, 56, 8–22. [CrossRef]

- Afram, A.; Janabi-Sharifi, F. Review of Modeling Methods for HVAC Systems. Appl. Therm. Eng. 2014, 67(1–2), 507–519. [CrossRef]

- Oldewurtel, F.; Parisio, A.; Jones, C.N.; et al. Use of Model Predictive Control and Weather Forecasts for Energy Efficient Building Climate Control. Energy Build. 2012, 45, 15–27. [CrossRef]

- Filippova, E.; Hedayat, S.; Ziarati, T.; Manganelli, M. Artificial Intelligence and Digital Twins for Bioclimatic Building Design: Innovations in Sustainability and Efficiency. Energies 2025, 18, 5230. [CrossRef]

- O’Neill, Z.; Narayanaswamy, A.; Brahme, R.; et al. Model Predictive Control for HVAC Systems: Review and Future Work. Build. Environ. 2017, 116, 117–135. [CrossRef]

- Kazmi, H.; Mehmood, F.; Nizami, M.S.H.; Digital, R.; et al. A Review on Reinforcement Learning for Energy Flexibility in Buildings. Renew. Sustain. Energy Rev. 2021, 141, 110771. [CrossRef]

- Chen, Y.; Shi, J.; Zhang, T.; et al. Deep Reinforcement Learning for Building HVAC Control: A Review. Energy Build. 2023, 287, 112974. [CrossRef]

- Shaikh, P.H.; Nor, N.B.M.; Nallagownden, P.; Elamvazuthi, I.; Ibrahim, T. A Review on Optimized Control Systems for Building Energy and Comfort Management. Renew. Sustain. Energy Rev. 2014, 34, 409–429. [CrossRef]

- Qin, J.; Wang, Z.; Li, H.; et al. Deep Reinforcement Learning for Intelligent Building Energy Management: A Survey. Appl. Energy 2024, 353, 121997. [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).