1. Introduction

AI ethics has become essential for managing algorithmic systems in society. Key principles, such as transparency, fairness, and accountability, guide responses to issues like data bias and social exclusion (Floridi et al., 2018). However, many AI ethics frameworks adopt a technocratic approach, viewing AI as merely a tool that requires technical fixes while overlooking its connection to existing power imbalances and historical injustices (Greene et al., 2019; Birhane, 2021).

While numerous ethics guidelines promote code disclosure, audits, and design constraints, these often overlook the political aspects of algorithmic systems and the role communities can play in challenging and reshaping AI power through collective action. This raises an important question: How can activist strategies redefine transparency, accountability, and bias mitigation as key areas of political engagement in AI governance?

To address this question, we begin by mapping mainstream AI ethics principles onto the tactical framework of Saul Alinsky's Rules for Radicals, identifying areas of both alignment and tension. We illustrate these dynamics through two illustrative case studies, the facial-recognition ban in San Francisco and the data strike by gig workers in New York City, showing how each inferred "rule" manifests in practice. Building on these insights, we propose ten tactical "Rules for Radical AI" intended for use by activists and scholars.

In concluding this analysis, we reflect on the methodological limitations and ethical risks involved while also outlining potential avenues for future research. This discussion culminates in a call to transform AI governance from passive compliance to active contestation.

This project draws heavily from Alinsky's grassroots organizing model, which moves beyond mere incremental policy reform to offer a robust method for challenging established systems of control (Alinsky, 1971). In the context of the algorithmic era, his approach provides a valuable framework for resisting AI systems that reinforce surveillance, exacerbate inequality, and undermine community empowerment.

Rather than settling for superficial ethical adjustments, we advocate for a more profound engagement with the political economies of algorithmic power. AI should not be viewed solely as a technological construct but as a critical arena for social struggle shaped by historical legacies of colonialism, capitalist forces, and institutional inequities (Noble, 2018; Zuboff, 2019; Tacheva & Ramasubramanian, 2023). The "Rules for Radical AI" that emerge from this analysis function as both an activist epistemology and a community-led resistance toolkit aimed at reframing governance to emphasize democratic accountability.

This reframing aligns with the calls from decolonial and feminist scholars who advocate for moving beyond narrow, instrumental approaches to prioritize justice, contestation, and the inclusion of diverse knowledge systems (Ayana et al., 2024; Toupin, 2023). By examining AI ethics through the lens of radical organizing, we uncover overlooked opportunities for civic engagement, algorithmic dissent, and collective action, thereby paving the way for new research agendas focused on reclaiming the socio-political contexts in which AI technologies operate.

2. What Alinsky Would Think of AI

If Saul Alinsky were alive today, he would likely see artificial intelligence as a key battleground for power and democratic control rather than a marvel to celebrate. In "Rules for Radicals," he wrote for the disenfranchised "Have-Nots," aiming to challenge established privilege. His emphasis was on practical confrontation tools: visibility, pressure, ridicule, coalition-building, and disruption.

2.1. Alinsky and the Logic of Systemic Power

Alinsky’s philosophy emphasizes that systems do not change on their own; they require organized pressure to do so. This idea remains highly relevant today in the AI landscape, where power is concentrated in a few multinational companies, black-box algorithms significantly impact public life, and the individuals affected often lack the means to challenge their treatment (Zuboff, 2019; Eubanks, 2018). Similar to the bureaucracies and political machines in Alinsky’s time, today’s AI systems often lack direct democratic accountability, operating behind a veil of technical expertise and complexity. Alinsky would likely ask:

Who builds these systems, and for what purpose?

Who is made invisible by them?

How can those most impacted by AI take action to regain control?

The parallels are evident. His idea of “rubbing raw the sores of discontent” to inspire action mirrors the need to expose algorithmic harms such as racial bias, surveillance, and exclusion. Dominant AI ethics frameworks, by contrast, often aim to manage these harms rather than confront them directly.

2.2. Related Work: Participatory AI and Civic Tech Scholarship

Recent work on participatory AI governance has focused on using civic technologies to enhance decision-making processes. The OECD (2025) argues that AI, along with tools such as blockchain and virtual reality, can enhance citizen engagement and increase government transparency, and it offers adaptable models drawn from experiences in countries such as the Netherlands, Portugal, and Spain. Taiwan’s vTaiwan initiative exemplifies this approach: it conducts large-scale digital consultations using AI-driven surveys and crowdsourced policy analysis to shape parliamentary reforms (Friedrich Naumann Stiftung, 2024).

Moreover, the ACM's “Participatory Turn in AI Design” outlines a framework for co-designing algorithms with communities, focusing on iterative feedback and equity metrics (Delgado et al., 2023). Research on participatory engineering emphasizes the balance between technical constraints and socio-political goals, advocating for flexible governance structures that ensure accountability while allowing adaptability (Venkatachalapathy, 2025).

Civic tech projects provide concrete examples of co-governance. A report from New America highlights how U.S. cities have established AI oversight bodies and citizen assemblies to oversee the deployment of algorithms in policing and public services (New America, 2023). These initiatives demonstrate both the feasibility and the challenges of integrating democratic processes into AI systems.

3. Framing and Previewing the Ten Rules

3.1. Critique of Compliance-Driven Ethics

Despite the growing focus on ethical AI, many governance frameworks still overlook the political and economic contexts affecting algorithm deployment. Instead of addressing deep-rooted issues, these frameworks tend to legitimize existing systems by offering superficial technical solutions (Greene et al., 2019; Tacheva & Ramasubramanian, 2023).

To change this approach, we propose a new framework called "Rules for Radical AI," inspired by Saul Alinsky’s grassroots activism. This framework emphasizes resistance, civic engagement, and strategic disruption over minor reforms. It encourages viewing AI not as a neutral technology in need of ethical tweaks but as a battlefield for social change.

Table 1 shows how standard AI ethics principles can be reinterpreted to foreground power, contestation, and accountability, connecting mainstream AI governance frameworks with radical interpretations that emphasize power dynamics, civic agency, and conflict.

3.2. Why Rules for Radicals Still Apply to AI

Although “Rules for Radicals” was not designed for the digital age, its tactical approach offers a valuable perspective on current debates about AI. Applying Alinsky’s principles frames AI as a form of political infrastructure that must be resisted, shaped, or reclaimed through political action.

Table 2 translates Alinsky’s organizing principles into actionable strategies for challenging and reshaping algorithmic systems.

These adaptations mirror practical strategies already employed by activists and civic technologists to address algorithmic bias, counter surveillance technologies, and foster public engagement in AI governance. Examples include city councils banning facial recognition, gig workers organizing data strikes, and community-led AI audits.

3.3. A Radical AI Is a Democratic AI

Alinsky emphasized that change comes not from moral appeals or dialogue with the powerful but from strategic disruption and community organizing. “Rules for Radical AI” accordingly calls for civic resistance and the expansion of democratic agency within algorithmic governance.

Instead of simply seeking fairness in AI, radical AI examines who benefits, who resists, and how power can be redistributed. This aligns with Alinsky’s vision: using tactics to challenge and fundamentally reshape power structures rather than merely softening them.

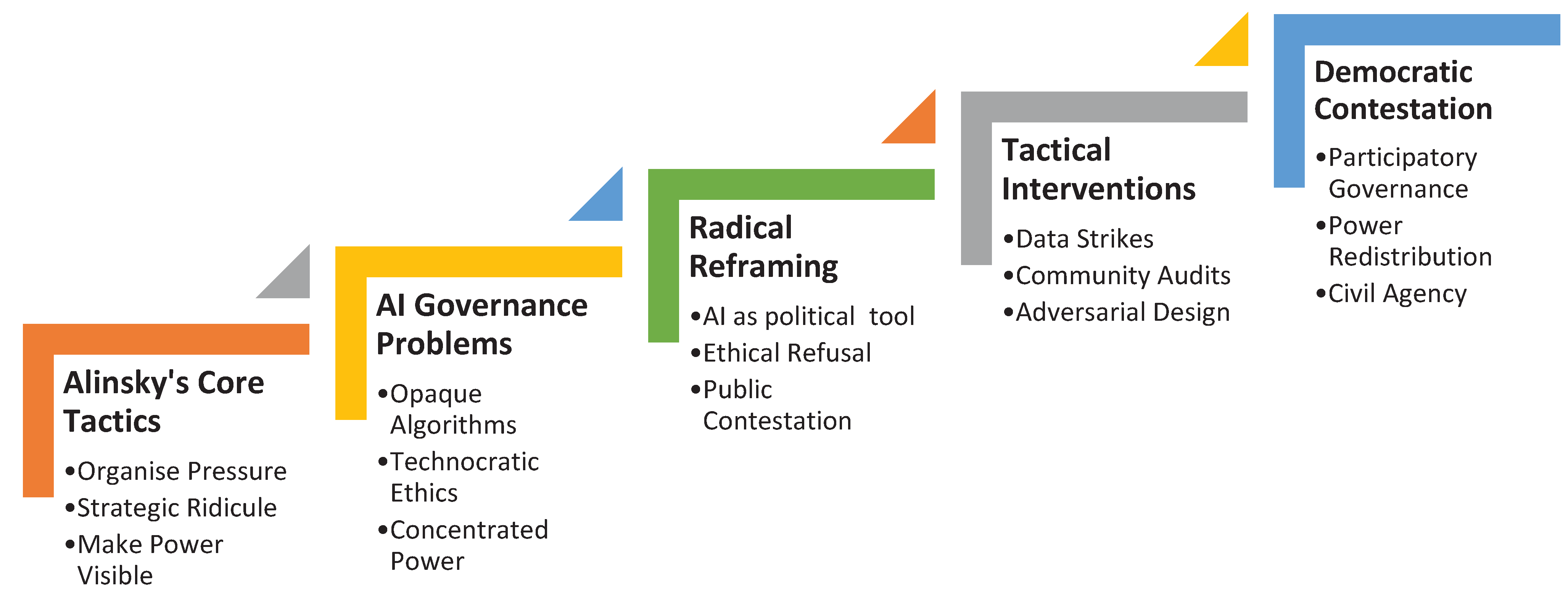

Figure 1 illustrates the counter-framework at the core of this paper: a progression from Alinsky’s foundational organizing principles, through strategies for resisting algorithmic power, to the civic actions required to democratize AI governance and rethink AI ethics through contestation, civic involvement, and democratic action.

4. Rules for Radical AI

The reinterpretations in Figure 2 yield ten tactical principles that counteract dominant AI logic and propose alternative resistance strategies. These principles are grouped into four key themes: Visibility, Contestation, Collective Action, and Embedding Change.

Visibility

1. Reveal the Code
2. Illuminate the Data

Contestation

3. Disrupt AI Neutrality
4. Organize a Data Strike
5. Invert Default Settings

Collective Action

6. Build Cross-Sector Alliances
7. Elevate Community Voices

Embedding Change

8. Practice Reflexive Critique
9. Seize Policy Windows
10. Institutionalize Civic Oversight

These rules serve as a toolkit for activists and scholars to rethink AI ethics beyond corporate self-regulation or minimal legal standards. From this perspective, AI is not merely a governance issue but a political project that requires a political response.

5. Methodological Approach: Mapping Alinsky’s Rules to AI

To derive the ten Rules for Radical AI, we conducted a systematic mapping process in three phases:

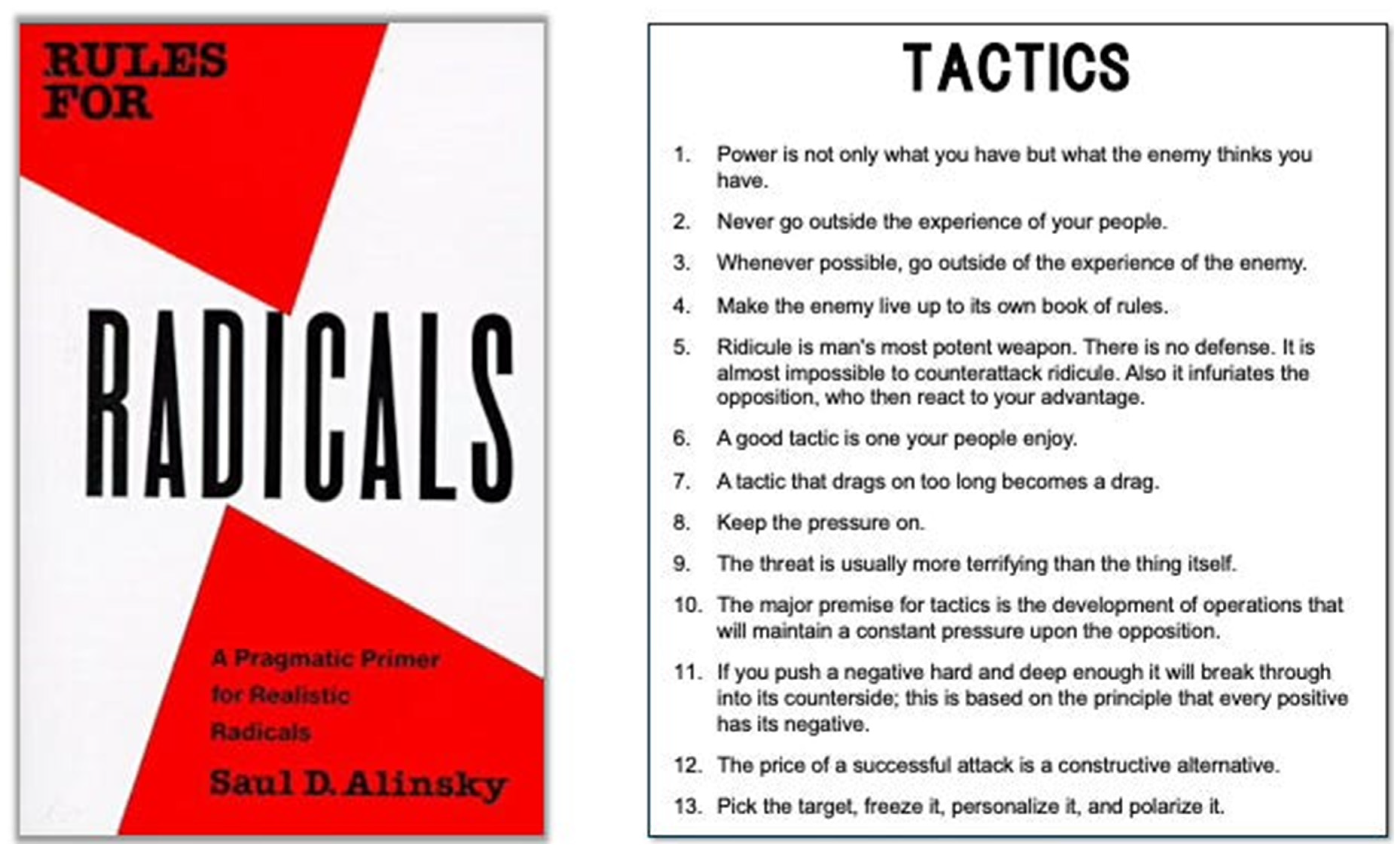

Textual Analysis: We analyzed Saul Alinsky’s Rules for Radicals, highlighting key tactics such as community organizing, pressure application, and symbolic actions (see Figure 2).

Contextual Translation: These principles were discussed in a session with experts in AI ethics and civic technology, as well as AI enthusiasts, who evaluated their relevance to algorithmic power dynamics and proposed adaptations aimed at improving digital infrastructures and reducing algorithmic opacity.

Validation and Refinement: We tested the draft rules against current AI cases, such as surveillance bans and data strikes (discussed in the next section), to assess their relevance. Feedback from practitioners helped us consolidate overlapping principles and refine rule definitions to address AI-specific issues such as data asymmetry and model explainability.

This approach grounded our framework in established activist tactics while adapting to the dynamics of AI systems.

6. Illustrative Public Cases

We present two detailed examples of how activists and affected communities have, in real-life situations, enacted tactics that correspond to the Rules for Radical AI.

6.1. Banning Facial Recognition in San Francisco

In 2019, community groups and civil liberties organizations in San Francisco used transparency tactics to push for a ban on the use of facial recognition software by the police and other city agencies. Activists filed public records requests to uncover contracts and agreements, revealing that vendors often exaggerated accuracy and concealed biases against people of color and women (Conger et al., 2019). By characterizing these devices as tools of surveillance capitalism rather than impartial security resources, advocates held both companies and elected officials accountable for their own ethical promises (Rule: Make the enemy live up to its own book of rules; Apply Pressure). They sustained public attention through regular press conferences, coalition-organized discussions, and artistic protests that mocked corporate claims of objectivity (Rule: Ridicule is man’s most potent weapon; Keep the pressure on). After six months of continuous civic advocacy, the Board of Supervisors voted 8 to 1 to pass an ordinance banning the use of facial recognition technology by all municipal departments.

6.2. Data Strike by Gig Workers in New York City

In 2022, ride-hailing and food delivery workers in New York City conducted an online “data strike” to regain control over opaque rating and dispatch algorithms. Using open-source tools, they gathered anonymized trip and delivery data, revealing consistent rating downgrades linked to traffic delays or to less profitable areas. This dataset was released under a Creative Commons license, encouraging civic technologists to develop counter-algorithms that anticipated unfair de-rating situations (Rule: Collaborate and Share; Empower Affected Communities). By disseminating these findings to local media and the Taxi and Limousine Commission, the coalition compelled public hearings on algorithmic fairness (Rule: Question Everything; Design for Disruption). The campaign produced a significant outcome: the city mandated that all platforms conduct quarterly fairness audits and give workers direct access to their algorithmic profiles, allowing them to challenge and correct erroneous ratings instantly.

7. Scope & Limits

The Rules for Radical AI present strong tactics for challenging algorithmic power; however, we must also acknowledge potential critiques and ethical issues.

Backlash and Escalation: Radical tactics, such as public shaming or direct disruption, can lead to legal retaliation or increased surveillance from powerful entities. Activists should assess risks, build broad coalitions, and establish exit strategies to minimize harm.

Ethical Boundaries: Data strikes and unauthorized data releases can compromise privacy and consent and can cause unintended harm to others. Practitioners must practice ethical reflexivity, consult with affected communities, and adhere to principles of transparency and accountability.

Reputation and Public Perception: Ridicule or pressure can erode public trust and be perceived as unfair. Activists should focus on clear messaging, seek neutral endorsements, and align their efforts with widely shared societal values.

Co-optation and Dilution: Institutional actors might use radical language or tactics to justify minor reforms. To maintain momentum, it is essential to continuously engage with grassroots groups, regularly assess outcomes, and be prepared to adjust strategies in response to any potential co-optation.

To address these challenges, activists should implement ongoing community discussions, formal ethical reviews, and contingency plans. This approach can help them navigate the limitations of radical tactics while maximizing their potential for change.

8. Implications and New Research Directions

The “Rules for Radical AI” framework redefines AI governance as a contested space of values, power, and resistance rather than just a technocratic problem. By prioritizing civic and political agency over compliance-based ethics, it highlights important implications for future research and institutional practices.

8.1. Challenging the Technocratic Ethos

Contemporary AI ethics frameworks are primarily created by multinational corporations, international organizations, and top academic institutions. While they focus on ideals like "trustworthy AI," they often overlook the underlying issues causing harm, such as surveillance capitalism, racial profiling, and digital exclusion (Greene et al., 2019; Eubanks, 2018). A radical perspective argues that technical excellence is irrelevant if the system is inherently unjust. Future research must address:

Who determines ethical priorities in AI development?

Whose harms are acknowledged, and whose are overlooked?

What forms of epistemic injustice go unaddressed in mainstream AI discussions?

8.2. Reclaiming Civic Agency in AI Systems

Radical AI views governance not just as a matter of legal regulation but also as a means of empowering communities. Because AI systems significantly shape crucial aspects of public life, such as credit, bail, employment, and healthcare, governance should prioritize public involvement and civic participation. This shift opens up new areas for research:

Participatory AI Design: How can communities collaboratively design or manage algorithmic systems?

Algorithmic Dissent: What methods (like reverse engineering, data strikes, and adversarial testing) can citizens use to challenge AI harms?

Tech Activism: How do artists, hackers, and organizers disrupt AI systems and narratives?

8.3. From Ethical AI to Algorithmic Resistance

The idea of ethical refusal, the right to abstain from designing or deploying specific systems, challenges the current push for constant innovation (Crawford, 2021). Radical AI urges researchers and practitioners to forgo, postpone, or modify AI development when the potential harms outweigh the benefits. This encourages investigation into:

Whistleblowing and Professional Ethics: What risks and moral duties do insiders face when opposing unethical AI practices?

Slow Tech and Degrowth: Can AI development be integrated within post-growth or anti-extractive frameworks?

Decolonizing AI: How can indigenous, feminist, or postcolonial perspectives reshape our understanding of "intelligence" and "governance"?

8.4. Institutional Realignment

Most AI governance remains concentrated in high-income countries and is largely shaped by corporate and technocratic elites. A more radical approach advocates for decentralization, pluralism, and cross-border accountability. Future research and practice should:

Build cross-border algorithmic governance coalitions in the Global South.

Establish community-led audit and redress mechanisms as alternatives to state or platform oversight.

Highlight successful resistance case studies, such as facial recognition bans, data justice movements, and algorithmic audits by non-state actors.

8.5. Emergent Research Questions from the Framework

This new perspective raises important research questions that challenge existing assumptions about the institutions, methods, and forms of knowledge underpinning AI governance.

How can grassroots organizations influence the design of algorithms and policy in local governance?

What strategies help communities resist algorithmic surveillance?

How do civic actors address power imbalances in AI use, particularly in low-resource settings?

What does practical algorithmic refusal entail, and how can it be legally and institutionally protected?

Is it possible to create a model of AI accountability that prioritizes counter-power over compliance?

This shift extends beyond critique; it creates a new path for scholars, practitioners, and organizers seeking to integrate AI systems into democratic, just, and participatory frameworks—ensuring that technology serves the people, not the other way around.

9. Conclusion

Radical AI requires moving beyond rules inscribed in code toward strategies formulated by communities, because genuine accountability is established through collective contestation.

This counter-framework paper argues that mainstream approaches to AI ethics, though well-intentioned, often focus too much on institutional legitimacy and technocratic logic. This focus can obscure the structural injustices, economic monopolies, and civic disempowerment present in the algorithmic age. By adopting the activist philosophy of Saul Alinsky, we propose a radical perspective that prioritizes resistance, disruption, and democratization of AI systems.

The Rules for Radical AI do not serve as an alternative ethical checklist but as a framework for understanding power, resistance, and civic agency in a world dominated by AI. Drawing on Alinsky’s strategic pragmatism, we shift the focus from compliance to confrontation, from transparency to strategic exposure, and from design principles to public contestation. This shift could reshape how AI research, policy, and activism are envisioned in the future.

Future work in AI governance must focus on power dynamics, not just technical accuracy. It should prioritize the right to refuse, the need for organization, and the validity of dissent as key elements of accountability. Researchers should investigate how grassroots movements influence the deployment of AI, how communities create oversight mechanisms, and how various interventions can disrupt harmful systems.

Revisiting Alinsky’s ideas helps us understand AI as a battleground for political struggle rather than just a technical field. Ethical AI should aim to redistribute voice, visibility, and agency. As decision-making becomes increasingly automated, adopting this radical approach is both timely and essential.

References

- Ayana, G.; Dese, K.; Daba Nemomssa, H.; Habtamu, B.; Mellado, B.; Badu, K.; Yamba, E.; Kong, J.D. Decolonizing global AI governance: assessment of the state of decolonized AI governance in Sub-Saharan Africa. Royal Society Open Science 2024, 11(8).

- Birhane, A. Algorithmic injustice: a Relational Ethics Approach. Patterns 2021, 2(2), 100205.

- Calzada, I. The Right to Have Digital Rights in Smart Cities. Sustainability 2021, 13(20), 11438.

- Conger, K.; Fausset, R.; Kovaleski, S. F. San Francisco Bans Facial Recognition Technology. The New York Times. 14 May 2019. Available online: https://www.nytimes.com/2019/05/14/us/facial-recognition-ban-san-francisco.html.

- Crawford, K. Atlas of AI: Power, Politics, and the Planetary Costs of Artificial Intelligence; Yale University Press, 2021.

- Delgado, F.; Yang, S.; Madaio, M.; Yang, Q. The Participatory Turn in AI Design: Theoretical Foundations and the Current State of Practice. In Proceedings of the 3rd ACM Conference on Equity and Access in Algorithms, Mechanisms, and Optimization (EAAMO ’23); 2023.

- Eubanks, V. Automating Inequality: How High-Tech Tools Profile, Police, and Punish the Poor; St. Martin’s Press, 2018.

- Floridi, L.; Cowls, J.; Beltrametti, M.; Chatila, R.; Chazerand, P.; Dignum, V.; Luetge, C.; Madelin, R.; Pagallo, U.; Rossi, F.; Schafer, B.; Valcke, P.; Vayena, E. An Ethical Framework for a Good AI Society: Opportunities, Risks, Principles, and Recommendations. Minds and Machines 2018, 28(4), 689–707.

- Friedrich Naumann Stiftung. How Public Participation Can Improve AI Governance: vTaiwan’s Initiatives. Friedrich Naumann Foundation. 22 October 2024. Available online: https://www.freiheit.org/taiwan/how-public-participation-can-improve-ai-governance-vtaiwans-initiatives.

- Greene, D.; Hoffmann, A. L.; Stark, L. Better, Nicer, Clearer, Fairer: A Critical Assessment of the Movement for Ethical Artificial Intelligence and Machine Learning. In Proceedings of the 52nd Hawaii International Conference on System Sciences; 2019.

- New America. AI for the People, By the People. 2023. Available online: https://www.newamerica.org/the-thread/artificial-intelligence-governance/.

- Noble, S. U. Algorithms of Oppression: How Search Engines Reinforce Racism; NYU Press, 2018.

- OECD. Tackling Civic Participation Challenges with Emerging Technologies; OECD Publishing, 2025; Available online: https://www.oecd.org/content/dam/oecd/en/publications/reports/2025/04/tackling-civic-participation-challenges-with-emerging-technologies_bbe2a7f5/ec2ca9a2-en.pdf?

- Alinsky, S. D. Rules for Radicals: A Practical Primer for Realistic Radicals; New York: Vintage, 1971.

- Tacheva, Z.; Ramasubramanian, S. AI Empire: Unraveling the interlocking systems of oppression in generative AI’s global order. Big Data & Society 2023, 10(2).

- Toupin, S. Shaping feminist artificial intelligence. New Media & Society 2023, 26(1).

- Venkatachalapathy, R. Participatory Engineering of Algorithmic Social Technologies: An Extended Book Review of David G. Robinson’s Voices in the Code. Harvard Data Science Review 2025, 7(2).

- Zuboff, S. The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power; PublicAffairs, 2019.


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).