3. Entropy in complex systems: an examination of solids

The connection between thermodynamic entropy and degrees of freedom has been established for the classical ideal gas [6] via a physical interpretation of the function $W$, which arises because the dimensionless change in entropy at constant volume in a system with constant-volume heat capacity $C_v$ is

$$\frac{dS}{k_B} = \frac{C_v}{k_B}\,\frac{dT}{T}. \tag{19}$$

Comparison with the Boltzmann entropy, $S = k_B \ln W$, yields $W = T^{C_v/k_B}$, which has been interpreted as the total number of ways of arranging the energy among the degrees of freedom in the system. This interpretation arises from the property of the Maxwellian distribution that it is the product of three independent Gaussian distributions of the velocity in each of the x, y and z directions. It was argued that each particle has access to, in effect, $T^{1/2}$ velocity states in each of the three directions, making a total of $T^{3N/2}$ states for $N$ particles across the three dimensions. This agrees with $W$, since $C_v/k_B = 3N/2$ for the monatomic ideal gas.

The restriction to constant volume is important because it means that none of the energy supplied to the system does work: all of it is distributed among the degrees of freedom. In a solid, the difference between the constant-volume and constant-pressure heat capacities is generally small, so in practice the restriction is less important. The change in entropy in equation (19) still holds, but with $C_v$ dependent on temperature and therefore included in the integration.
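As a brief worked form of this statement, the two cases can be written side by side. This sketch assumes that equation (19) is the standard constant-volume relation $dS/k_B = (C_v/k_B)\,dT/T$; the limits $T_1$ and $T_2$ are illustrative symbols introduced here.

```latex
\begin{align}
\frac{\Delta S}{k_B} &= \frac{C_v}{k_B}\int_{T_1}^{T_2}\frac{dT}{T}
  = \frac{C_v}{k_B}\,\ln\frac{T_2}{T_1}
  && \text{(constant } C_v\text{)} \\
\frac{\Delta S}{k_B} &= \int_{T_1}^{T_2}\frac{C_v(T)}{k_B}\,\frac{dT}{T}
  && \text{(temperature-dependent } C_v\text{)}
\end{align}
```

In the first case the result is the logarithm of a ratio of powers of the temperature. In the second, the heat capacity cannot be taken outside the integral, so no single power of $T$ emerges.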

The fact that the heat capacity varies with temperature means that the active degrees of freedom within the system are not so readily identifiable. In solids, it is generally accepted that the heat capacity is given by the Debye model, which accounts for a $T^3$ dependence at low temperatures, with the heat capacity tending to zero as $T$ tends to zero. At high temperatures, the heat capacity approaches the Dulong-Petit limit of $3Nk_B$, which corresponds to six degrees of freedom per atom, with the kinetic and potential energies each characterised by three degrees of freedom. As is well known, the basis of the Debye model of the heat capacity of solids is the existence of quantized vibrations. In a classical solid the energy of an oscillation can decrease steadily through a reduction in the amplitude, but the energy of a quantized oscillator cannot be reduced continuously. This means that at low temperatures some atoms cease to oscillate, implying that degrees of freedom are activated as the temperature is raised. However, the Debye theory of the heat capacity of a solid is formulated in terms of quantized oscillations rather than degrees of freedom, and translating one into the other is not straightforward.

The phonon spectrum of a crystalline solid always contains so-called “acoustic mode” vibrations, regardless of the solid. Despite the name, the frequency of these phonons can extend into the GHz range and beyond at the edge of the Brillouin zone, where the wave vector $k = \pi/a$, with $a$ being the lattice spacing. Close to the centre of the Brillouin zone the frequency tends to zero at very small wave vectors, and these acoustic mode phonons represent travelling waves with relatively long wavelengths moving at the velocity of sound. These are the modes that are excited at very low temperatures and for which the solid acts as a continuum [7]. Therefore, these vibrations do not correspond to the classical picture of atoms vibrating randomly relative to their neighbours but constitute a collective motion of a large number of atoms. The average energy per atom is probably very small and it is not clear just how many effective degrees of freedom are active. By contrast, at the edge of the Brillouin zone, $d\omega/dk \approx 0$, where $\omega$ is the angular frequency, meaning that the group velocity is close to, or could even be, zero. These are not travelling waves but isolated vibrations. Some materials also contain higher frequency phonon modes, called optical mode phonons because the frequency extends into the THz range and beyond and the phonons themselves can be excited by optical radiation.
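The contrast between the zone centre and the zone edge can be illustrated with the textbook one-dimensional monatomic chain with nearest-neighbour springs. This model is an assumption introduced here for illustration and is not taken from the text; the function names and the unit values of the lattice spacing, spring constant and mass are hypothetical.

```python
import math

def chain_dispersion(k, a=1.0, spring=1.0, mass=1.0):
    """Angular frequency of a 1D monatomic chain: 2*sqrt(K/m)*|sin(k*a/2)|."""
    return 2.0 * math.sqrt(spring / mass) * abs(math.sin(0.5 * k * a))

def chain_group_velocity(k, a=1.0, spring=1.0, mass=1.0):
    """Group velocity d(omega)/dk, valid for 0 <= k <= pi/a."""
    return a * math.sqrt(spring / mass) * math.cos(0.5 * k * a)

a = 1.0  # illustrative lattice spacing (arbitrary units)

# Long-wavelength limit: the group velocity equals the sound velocity.
sound_speed = chain_group_velocity(0.0, a)

# Zone edge, k = pi/a: the group velocity vanishes (standing wave).
zone_edge_speed = chain_group_velocity(math.pi / a, a)

for frac in (0.01, 0.25, 0.5, 0.75, 1.0):
    k = frac * math.pi / a
    print(f"k = {frac:4.2f} pi/a   omega = {chain_dispersion(k, a):.4f}   "
          f"v_g = {chain_group_velocity(k, a):.4f}")
```

In this simple model the frequency rises linearly with $k$ near the zone centre and flattens towards the zone edge, where the group velocity falls to zero.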

The detailed phonon structure for any given solid can be quite complex and the Debye theory, being general in nature, does not take this detail into account. Rather, it represents the internal energy as the integral over a continuous range of phonon frequencies with populations given by Bose-Einstein statistics and an arbitrary cut-off for the upper frequency [7]. The specific heat is then derived from the variation of internal energy with temperature. Despite the wide acceptance of the Debye theory, it is also recognised that it does not constitute a complete description of the specific heats. Einstein was the first to consider the specific heat of a solid as arising from quantized oscillations, but he used a single frequency rather than a spectrum of frequencies. The transition to a continuous spectrum of phonon frequencies changed the low temperature behaviour. Instead of the $T^{-2}e^{-\theta_E/T}$ dependence of the specific heat derived by Einstein, where $\theta_E$ is the Einstein temperature, Debye’s theory gives $T^3$ at very low temperatures. All this is well known and forms the staple of undergraduate courses in this topic, but what is perhaps not so well known is that, according to Blackman [ref 7, p24], “The experimental evidence for the existence of a $T^3$ region is, on the whole, weak…”. Moreover, the Debye theory gives rise to a single characteristic temperature for a given solid, the Debye temperature, which should define the heat capacity over the whole temperature range but in fact does not. Low temperature heat capacities generally require a different Debye temperature from high temperature values.
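The limiting behaviour of the two models can be checked numerically. The sketch below uses the standard textbook expressions for the Debye and Einstein heat capacities per atom in units of $k_B$, which are assumed here and not quoted from the text; the function names and the choice of Simpson's rule are illustrative.

```python
import math

def debye_integrand(x):
    """x^4 e^x / (e^x - 1)^2, rewritten with e^(-x) to avoid overflow."""
    if x == 0.0:
        return 0.0  # the integrand behaves as x^2 near the origin
    return x**4 * math.exp(-x) / math.expm1(-x) ** 2

def debye_reduced_cv(t_over_theta, n=2000):
    """Debye C_v/(N k_B) at T/theta_D, by composite Simpson's rule (n even)."""
    upper = 1.0 / t_over_theta
    h = upper / n
    total = debye_integrand(0.0) + debye_integrand(upper)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * debye_integrand(i * h)
    return 9.0 * t_over_theta**3 * total * h / 3.0

def einstein_reduced_cv(t_over_theta):
    """Einstein C_v/(N k_B) at T/theta_E: 3 x^2 e^x / (e^x - 1)^2, x = theta_E/T."""
    x = 1.0 / t_over_theta
    return 3.0 * x * x * math.exp(-x) / math.expm1(-x) ** 2

# Low-temperature Debye limit: C_v/(N k_B) -> (12 pi^4 / 5) (T/theta_D)^3.
t_low = 0.02
debye_low = debye_reduced_cv(t_low)
debye_t3_law = 12.0 * math.pi**4 / 5.0 * t_low**3

# High-temperature limit: both models approach the Dulong-Petit value of 3.
debye_high = debye_reduced_cv(10.0)
einstein_high = einstein_reduced_cv(10.0)

print(f"Debye at T/theta = {t_low}: {debye_low:.6e} (T^3 law: {debye_t3_law:.6e})")
print(f"Einstein at T/theta = {t_low}: {einstein_reduced_cv(t_low):.3e}")
print(f"High-temperature values: Debye {debye_high:.4f}, Einstein {einstein_high:.4f}")
```

At the same reduced temperature the Einstein value is many orders of magnitude below the Debye value, which reflects the exponential fall-off of a single-frequency model compared with the power law of a continuous spectrum.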

This discussion of the inadequacies of the Debye model is important because in the course of this work it has become apparent that the high temperature specific heats of the solids considered here, mainly metals, also deviate from the expected variation, which makes the association between the specific heat and the active degrees of freedom even more obscure. As discussed by Blackman [ref 7, p14], at high temperatures the Debye model should approach the classical limit of $3Nk_B$, and were it to do so the argument could be made that at any intermediate temperature the heat capacity represented the effective number of active degrees of freedom. However, in [5] the author presented data for the molar heat capacity of silicon over the whole temperature range from 0 K to the melting point [8]. Close to the melting point (1687 K) the constant pressure heat capacity is 28.93 $\mathrm{J\,mol^{-1}\,K^{-1}}$, which, assuming $\tfrac{1}{2}k_BT$ per degree of freedom, is equivalent to 7 degrees of freedom per atom. Even the constant volume heat capacity, $C_v$, for which data is available up to 800 K [9], is already 25 $\mathrm{J\,mol^{-1}\,K^{-1}}$ at 800 K, which equates to six degrees of freedom per atom ($3N_Ak_B$, $N_A$ being Avogadro’s number, corresponds to 24.9 $\mathrm{J\,mol^{-1}\,K^{-1}}$). Clearly, the heat capacity does not directly represent the active degrees of freedom because there is no classical theory which allows for 7 degrees of freedom per atom. This means that the function $W = T^{C_v/k_B}$, which gives the number of ways of distributing the energy among the degrees of freedom in an ideal gas, has no directly comparable meaning in a solid.
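The conversion from molar heat capacity to degrees of freedom per atom used above is simple arithmetic and can be reproduced as follows. The sketch assumes $\tfrac{1}{2}k_B$ of heat capacity per degree of freedom, as in the text; the function name `dof_per_atom` is hypothetical, and the value of the molar gas constant $R = N_Ak_B$ is the CODATA value.

```python
GAS_CONSTANT = 8.314462618  # R = N_A * k_B in J mol^-1 K^-1 (CODATA 2018)

def dof_per_atom(molar_heat_capacity):
    """Degrees of freedom per atom, assuming k_B/2 of heat capacity each."""
    return 2.0 * molar_heat_capacity / GAS_CONSTANT

# Silicon values quoted in the text, in J mol^-1 K^-1.
dof_cp_melting = dof_per_atom(28.93)  # constant pressure, near 1687 K
dof_cv_800 = dof_per_atom(25.0)       # constant volume, at 800 K

# Dulong-Petit limit: six degrees of freedom per atom.
dulong_petit = 3.0 * GAS_CONSTANT

print(f"C_p near melting: {dof_cp_melting:.2f} degrees of freedom per atom")
print(f"C_v at 800 K:     {dof_cv_800:.2f} degrees of freedom per atom")
print(f"Dulong-Petit:     {dulong_petit:.2f} J mol^-1 K^-1")
```

The two silicon values come out close to 7 and 6 respectively, in line with the figures quoted above.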

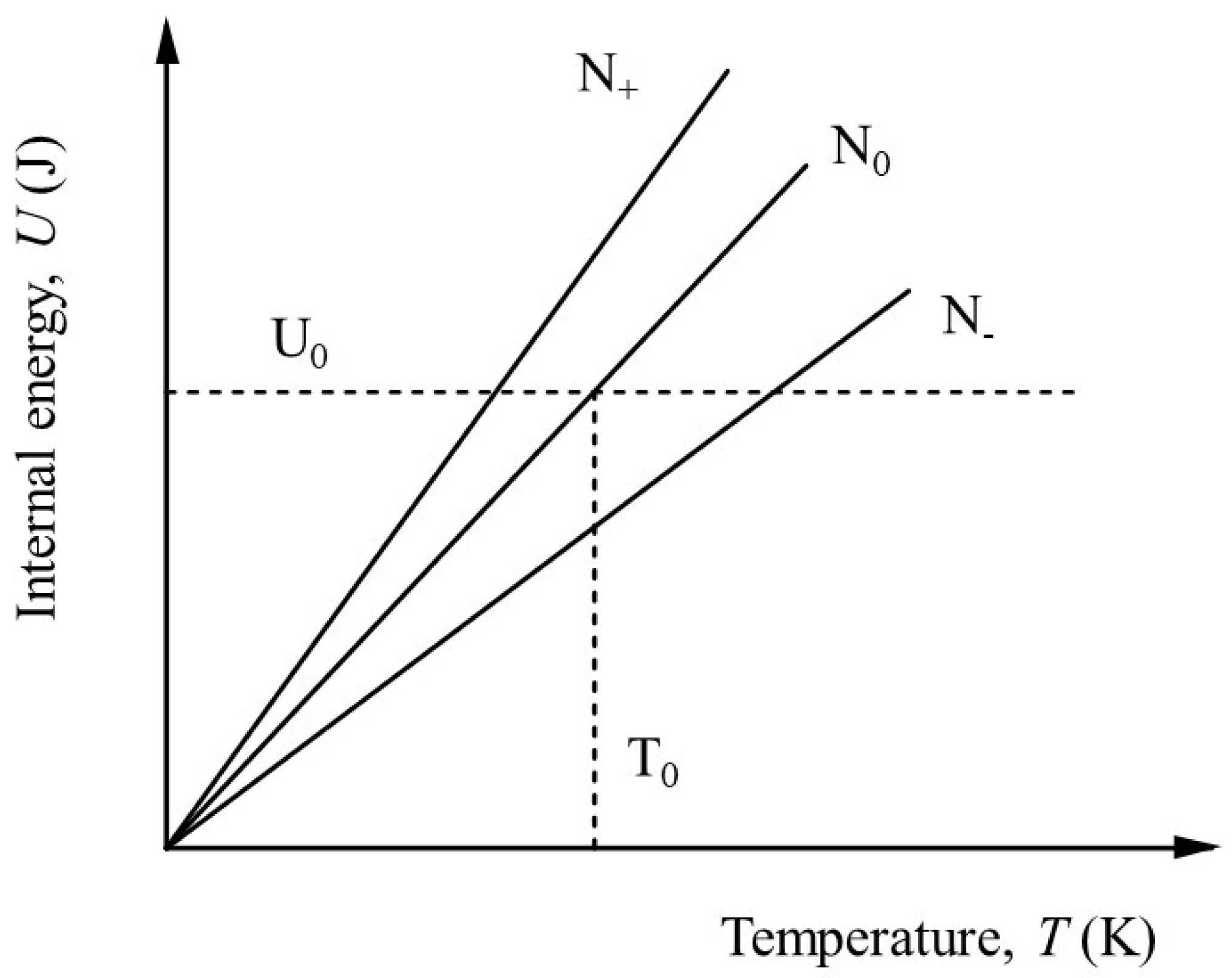

Nonetheless, we can suppose that there exist at any given temperature $\beta(T)$ active degrees of freedom, each associated with an average of $\tfrac{1}{2}k_BT$ of energy. Then, for one mole of substance, the internal energy can be written as,

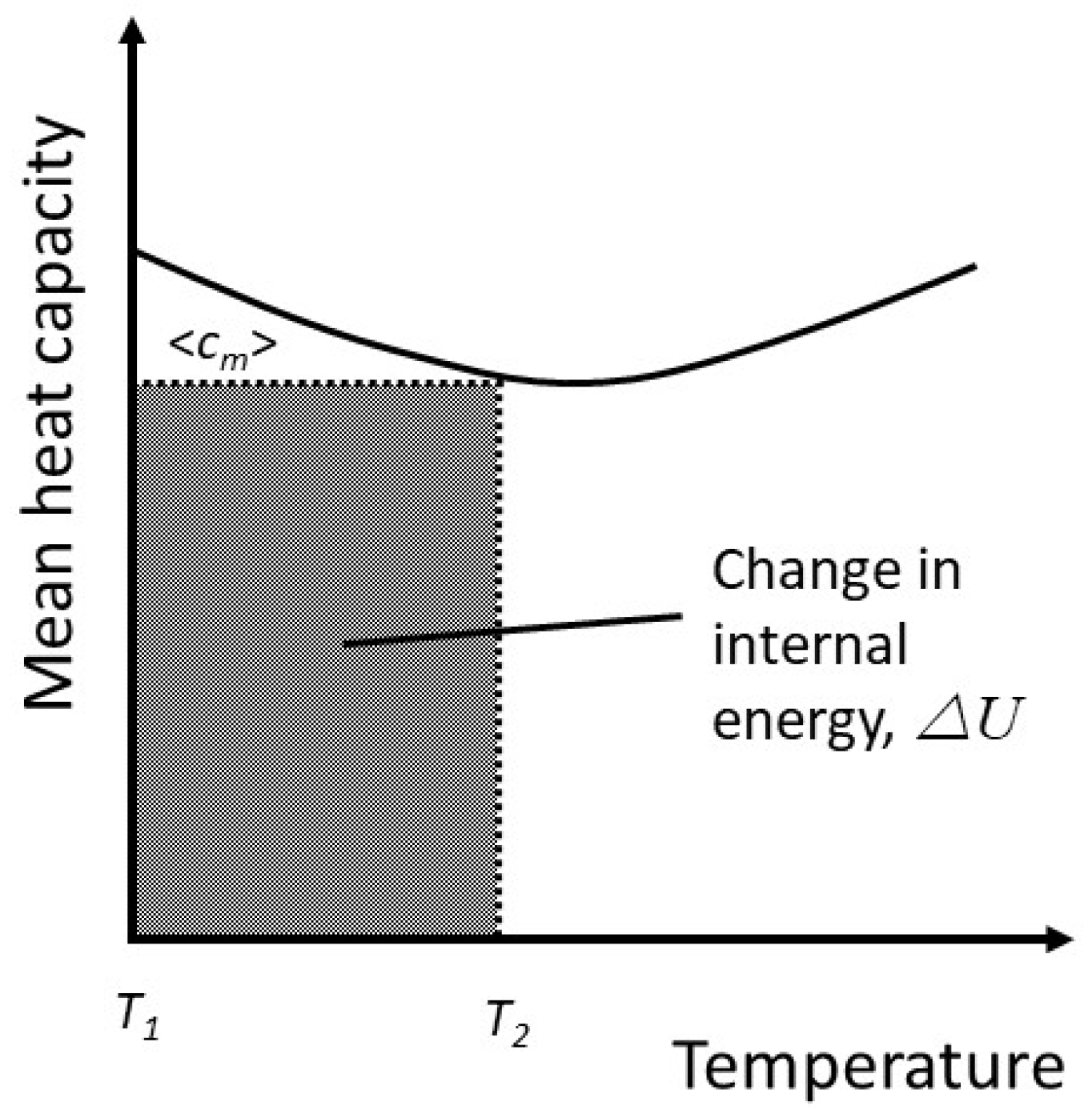

The meaning of this is perhaps not immediately apparent, but it is equivalent to taking an average heat capacity. For simplicity, let $T_B = k_BT$. The quantity $T_B$ has the units of joules, but it can be considered a temperature if we effectively rescale the absolute temperature so that $k_B = 1$. If the molar heat capacity at constant volume is $C_v$, then for 1 mole,

$$dU = C_v\,dT = \frac{C_v}{k_B}\,dT_B.$$

By definition, the internal energy at some value of $T_B$ is,

$$U(T_B) = \int_0^{T_B}\frac{C_v}{k_B}\,dT_B' = \left\langle\frac{C_v}{k_B}\right\rangle T_B.$$

The angular brackets denote an average. Therefore

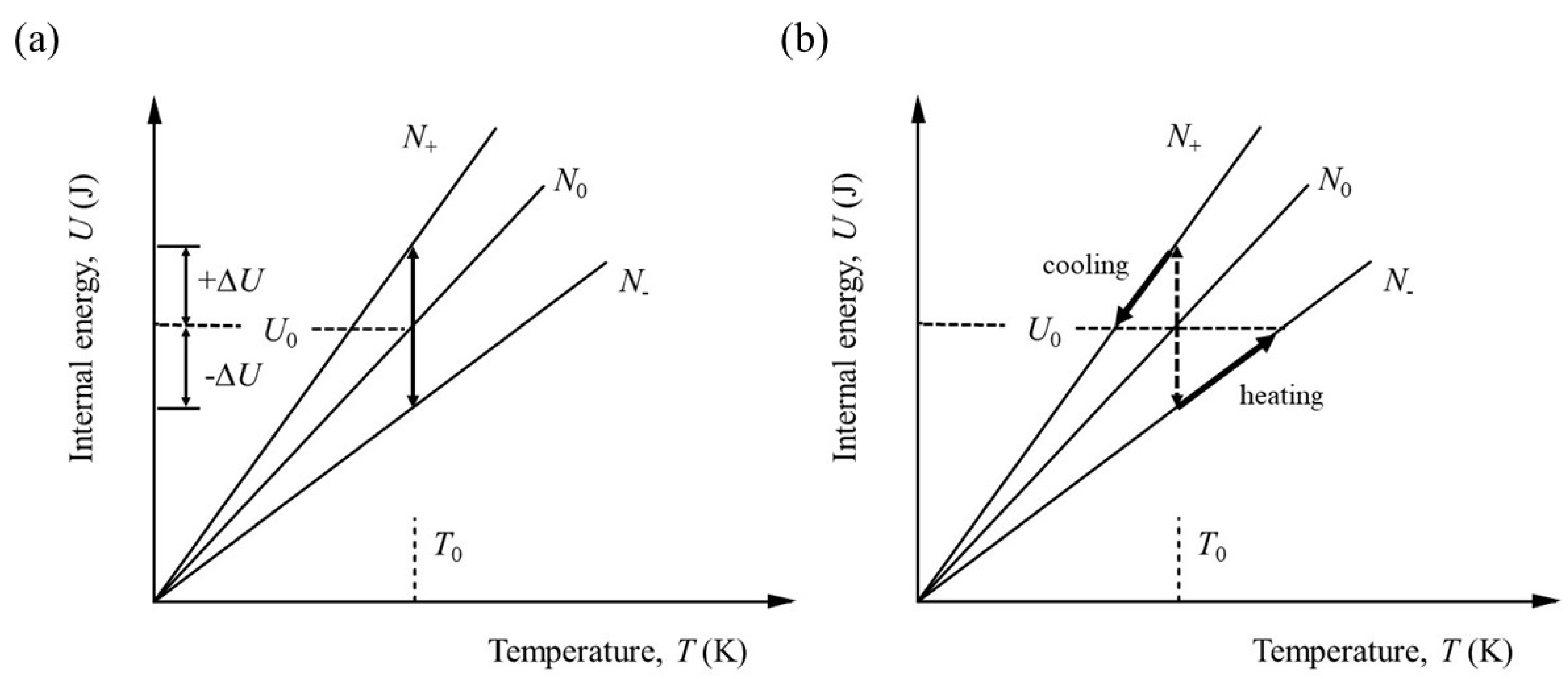

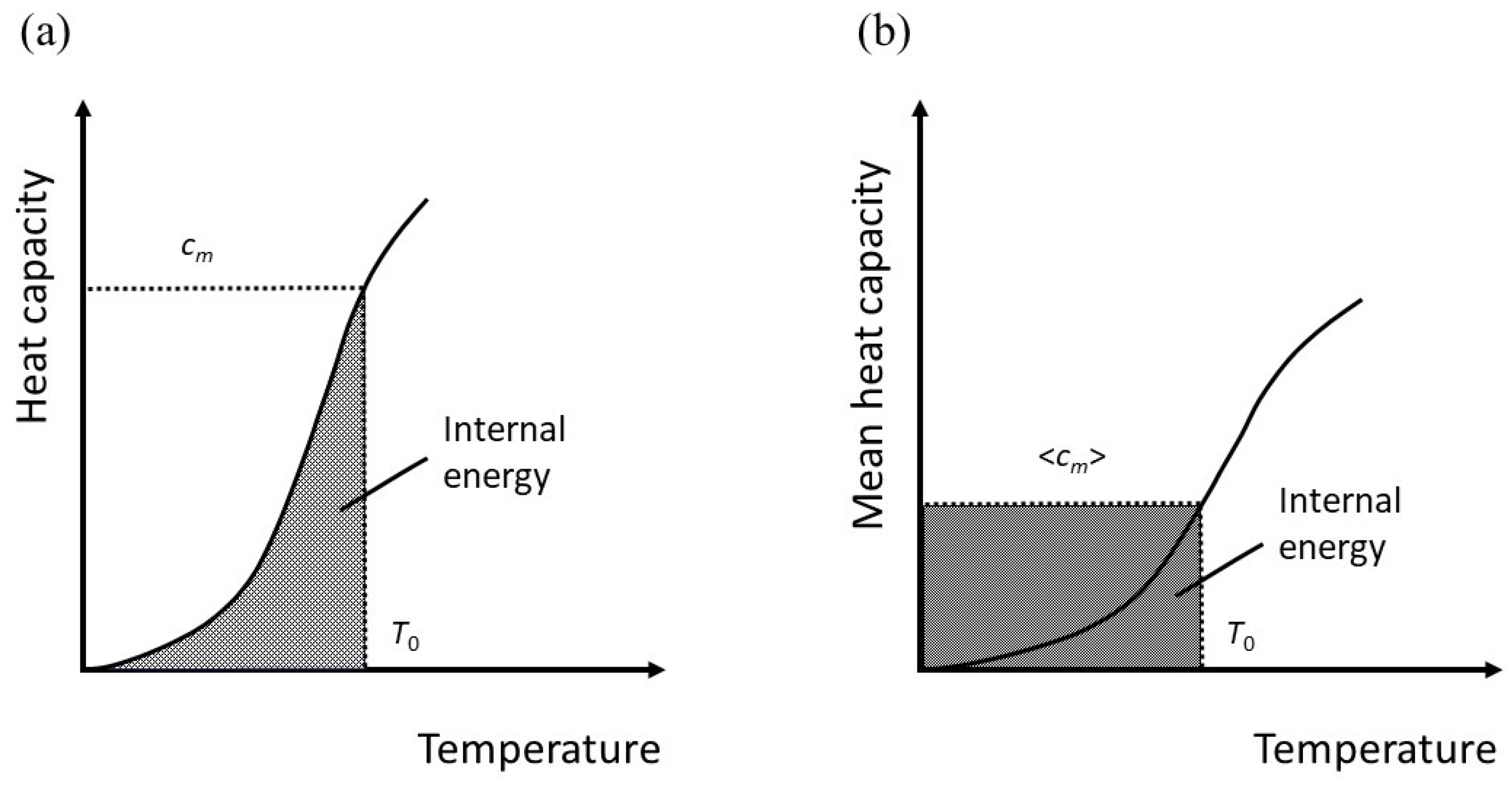

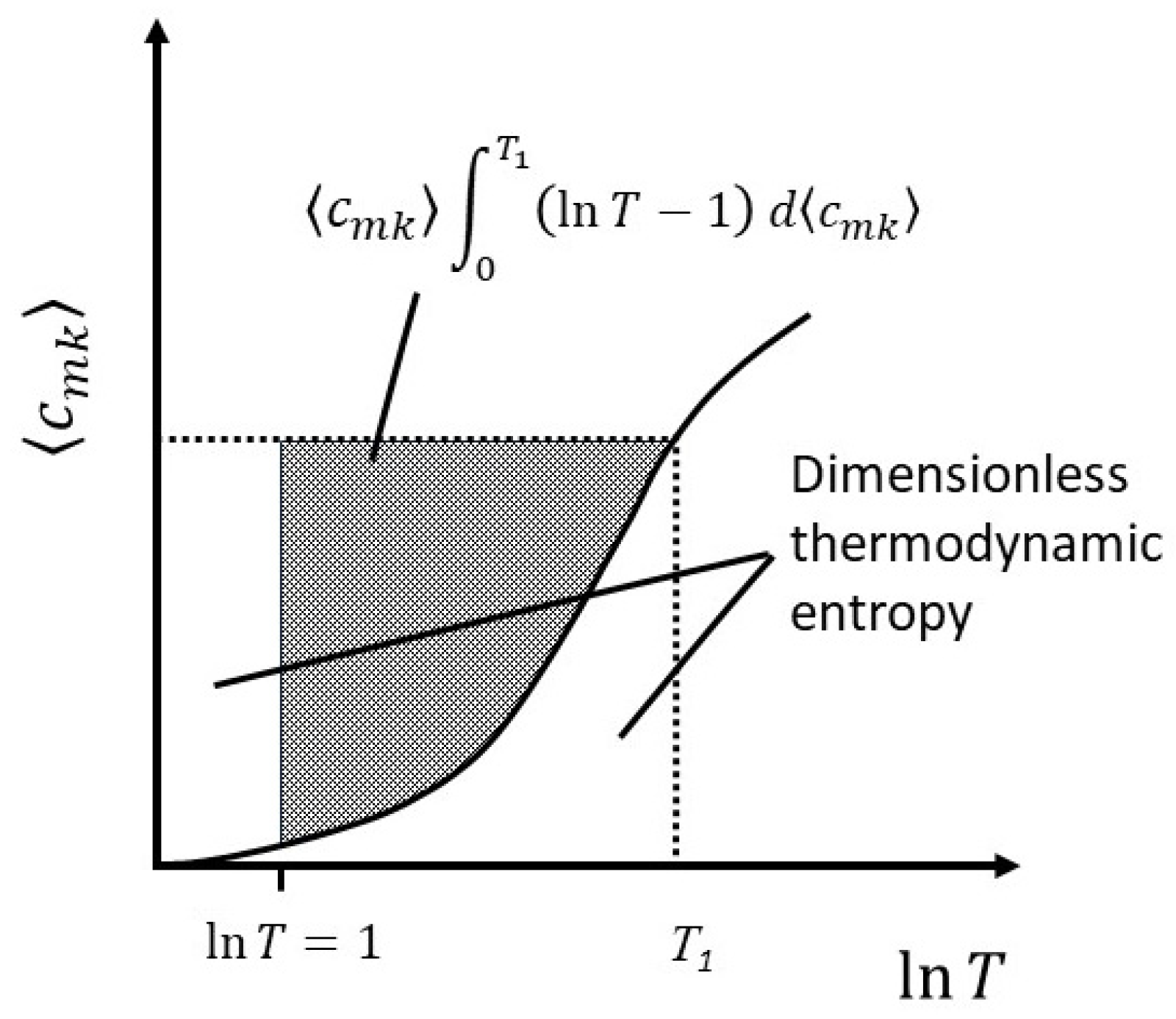

This is equivalent to the geometrical transformation indicated in Figure 3(a) and 3(b).

By comparison with equation (22),

$$\frac{\beta(T)}{2} = \left\langle\frac{C_v}{k_B}\right\rangle. \tag{26}$$

By definition, for simple solids for which the heat capacity increases with temperature, $\langle C_v\rangle < C_v$, so we can expect the number of active degrees of freedom to be less than would be indicated by the heat capacity alone. Making use of equations (22) and (26) together, we have,

$$\beta = \frac{2C_v}{k_B} - T\,\frac{d\beta}{dT}. \tag{27}$$

In other words, for a heat capacity that varies with temperature, the effective number of degrees of freedom is less than twice the dimensionless heat capacity, becoming equal only when all the degrees of freedom have been activated and $d\beta/dT = 0$. This is true regardless of the system, whether solid, liquid or gas. It should be noted, however, that equation (27) does not account for latent heat and therefore implies a restriction to the solid state or at least to ideal systems in which phase changes do not occur.
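A worked case may help to fix the size of the effect. The sketch below considers the low-temperature Debye regime, $C_v = aT^3$ for one mole, and assumes that the internal energy takes the form $U = \tfrac{1}{2}\beta k_BT$ with $\beta$ counting all active degrees of freedom in the mole; that form is inferred from the surrounding text, and the constant $a$ is an illustrative symbol.

```latex
\begin{align}
U &= \int_0^{T} aT'^3\,dT' = \tfrac{1}{4}aT^4,
  \qquad \langle C_v\rangle = \frac{U}{T} = \tfrac{1}{4}C_v, \\
\beta &= \frac{2U}{k_BT} = \frac{2\langle C_v\rangle}{k_B} = \frac{C_v}{2k_B}, \\
T\,\frac{d\beta}{dT} &= 3\beta
  \quad\Rightarrow\quad
  \beta + T\,\frac{d\beta}{dT} = 4\beta = \frac{2C_v}{k_B}.
\end{align}
```

Under these assumptions the effective number of degrees of freedom in the $T^3$ regime is one quarter of twice the dimensionless heat capacity, with the remaining three quarters accounted for by the activation term $T\,d\beta/dT$.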

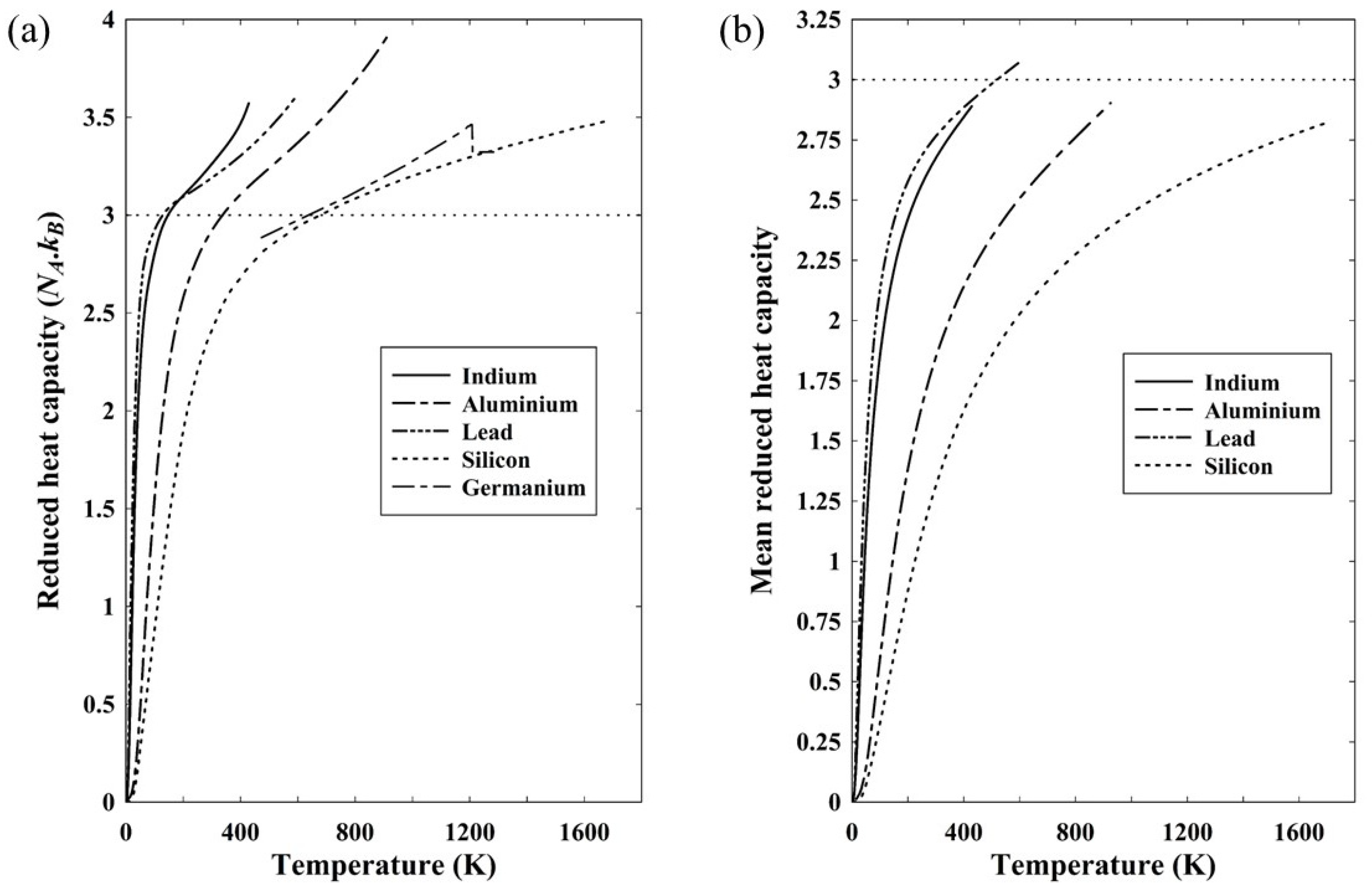

Figure 4(a) shows the reduced heat capacity of five solids based on the constant pressure heat capacity. As discussed in relation to equation (22), the heat capacity at constant volume should be used to give the internal energy, but it is assumed here that the difference between constant volume and constant pressure is small. Included in Figure 4(a) is data for silicon and, for comparison, germanium as a similar semiconducting material, though the available data is restricted in its temperature range [10]. The reduced heat capacity is the dimensionless heat capacity of equation (22) normalized to Avogadro’s number, and a reduced heat capacity of 3 would therefore correspond to the classical Dulong-Petit limit of a molar heat capacity of $3N_Ak_B$. In all cases, the reduced heat capacity exceeds 3 at a temperature close to the Debye temperature.

Figure 4(b) shows the mean reduced heat capacity of four of the solids for which data down to $T = 0$ is readily available.
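The mean reduced heat capacity is the running average of the reduced heat capacity from zero up to each temperature. A minimal sketch of that calculation is given below. It uses the Einstein heat capacity as a stand-in curve because its running mean is known in closed form, $3x/(e^x - 1)$ with $x = \theta_E/T$; this is not the experimental data of Figure 4, and the function names and the temperature grid are hypothetical.

```python
import math

def einstein_reduced_cv(t_over_theta):
    """Stand-in heat capacity curve C/(N k_B) from the Einstein model."""
    if t_over_theta <= 0.0:
        return 0.0
    x = 1.0 / t_over_theta
    return 3.0 * x * x * math.exp(-x) / math.expm1(-x) ** 2

def mean_reduced_cv(temps, cvs):
    """Running mean (1/T) * integral_0^T c dT' by the trapezoidal rule.

    temps must start at 0 and increase; the result is aligned with temps.
    """
    means = [cvs[0]]
    area = 0.0
    for i in range(1, len(temps)):
        area += 0.5 * (cvs[i] + cvs[i - 1]) * (temps[i] - temps[i - 1])
        means.append(area / temps[i])
    return means

# Reduced temperature grid T/theta from 0 to 2 (illustrative).
temps = [i * 0.001 for i in range(2001)]
cvs = [einstein_reduced_cv(t) for t in temps]
means = mean_reduced_cv(temps, cvs)

# Compare at T = theta with the closed-form mean for this stand-in curve.
index = 1000
exact_mean = 3.0 / (math.e - 1.0)
print(f"c(T = theta)    = {cvs[index]:.4f}")
print(f"<c>(T = theta)  = {means[index]:.4f} (closed form {exact_mean:.4f})")
print(f"2<c> per atom   = {2.0 * means[index]:.4f}")
```

For tabulated experimental data the same `mean_reduced_cv` routine could be applied directly to the measured temperatures and reduced heat capacities, provided the table extends down to $T = 0$.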

There is nothing particular about the elements represented in Figure 4 other than that they have moderate to low melting points. They were otherwise chosen because an internet search produced a complete range of data for each of the three metals, aluminium [11], indium [12] and lead [13]. It is noticeable that three of the four, lead being the exception, have a mean reduced heat capacity below three. Even for lead, the discrepancy is less than 3%, with the maximum value being 3.08. Arblaster claims an accuracy of 0.1% in his data for temperatures exceeding 210 K [13], but it should be noted that in all cases in Figure 4(a), the heat capacity relates to constant pressure rather than constant volume. Strictly, the latter is required to calculate the internal energy and hence the mean heat capacity, but, experimentally, measurement at constant pressure is much easier to undertake. Transformation to constant volume is straightforward given knowledge of the compressibility as well as the thermal expansivity [7] and reduces the heat capacity slightly, but without doing the calculations it is not possible to say for certain that the mean reduced heat capacity at constant volume will stay below 3.

Figure 4(a) shows very clearly, however, that the heat capacity is not a direct representation of the active degrees of freedom. By definition, from equations (20) and (26), the mean heat capacity is a direct representation of the number of effective degrees of freedom and it is clear that even up to the melting point, the small excess with lead notwithstanding, less than $3k_BT$ of energy is associated with each constituent atom.

The mean heat capacity can now be used to attach a physical meaning to the thermodynamic entropy. From equation (20), the change in internal energy is

$$dU = \frac{1}{2}\left(\beta\,dT_B + T_B\,d\beta\right). \tag{29}$$

Under the assumption that the internal energy is partitioned equally among active degrees of freedom, the change in internal energy comprises two components: the change in the average energy among the degrees of freedom already activated and the distribution of some energy into newly activated degrees of freedom, each of which contains an average energy $T_B/2$. Both of these terms contribute to the entropy. However, as the change in internal energy is written in terms of $T_B = k_BT$, it is necessary to divide the entropy by $k_B$ to give the dimensionless quantity,

$$\frac{dS}{k_B} = \frac{dU}{T_B}. \tag{30}$$

Upon substitution of equation (29), the dimensionless entropy change is,

$$\frac{dS}{k_B} = \frac{\beta}{2}\,\frac{dT}{T} + \frac{d\beta}{2}. \tag{31}$$

Here we make use of the fact that $dT_B/T_B = dT/T$. It is notable that the entropy is still given as a function of the absolute temperature in kelvin and the reason for this will become clear.

The first term on the right in equation (31) can be interpreted as the change in the function $W = T^{\beta/2}$, which has already been defined for a classical ideal gas as the number of arrangements by direct comparison of the thermodynamic entropy and Boltzmann’s entropy [6]. Straightforward differentiation of $\ln W$ with respect to $\ln T$, with $\beta$ held fixed, yields

$$\frac{d\ln W}{d\ln T} = \frac{\beta}{2}.$$

The change in thermodynamic entropy therefore represents the fractional change in the number of arrangements or, equivalently, the change in the Boltzmann entropy, plus the addition of new degrees of freedom. Integrating equation (31) by parts to get the total entropy at some temperature $T_1$, we find,

$$\frac{S(T_1)}{k_B} = \left[\frac{\beta}{2}\ln T\right]_{0}^{T_1} - \frac{1}{2}\int_{0}^{\beta(T_1)}\left(\ln T - 1\right)d\beta.$$

At $T = 0$, $\ln T \to -\infty$, but $\beta = 0$ and the lower limit is also zero. Therefore,

$$\frac{S(T_1)}{k_B} = \frac{\beta(T_1)}{2}\ln T_1 - \frac{1}{2}\int_{0}^{\beta(T_1)}\left(\ln T - 1\right)d\beta.$$

The first term on the RHS is recognizable as the Boltzmann entropy, $\ln W$. In the second term on the RHS, $\ln T$ is a positive number for $T > 1$, and greater than unity for $T > e$. Therefore, for systems in which the heat capacity varies with temperature, the thermodynamic entropy at any given temperature above approximately 3 K is less than the Boltzmann entropy, for the simple reason that the number of arrangements at any given temperature depends only on the number of degrees of freedom active at that temperature, whereas the thermodynamic entropy, being given by an integral over all temperatures, accounts for the fact that the number of degrees of freedom has changed over the temperature range. This relationship is illustrated in Figure 5.
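As a consistency check on this result, consider again the low-temperature Debye regime with $C_v = aT^3$ for one mole. The check assumes that $U = \tfrac{1}{2}\beta k_BT$ and that the dimensionless entropy can be written as $S/k_B = \tfrac{\beta}{2}\ln T - \tfrac{1}{2}\int(\ln T - 1)\,d\beta$; both forms are inferred from the surrounding text, and the constant $a$ is an illustrative symbol.

```latex
\begin{align}
\beta &= \frac{2U}{k_BT} = \frac{aT^3}{2k_B},
  \qquad d\beta = \frac{3a}{2k_B}\,T^2\,dT, \\
\frac{S}{k_B} &= \int_0^{T}\frac{aT'^3}{k_B}\,\frac{dT'}{T'} = \frac{aT^3}{3k_B}
  && \text{(direct integration)}, \\
\frac{\beta}{2}\ln T &= \frac{aT^3}{4k_B}\,\ln T
  && \text{(Boltzmann term)}, \\
\frac{1}{2}\int_0^{\beta(T)}\left(\ln T' - 1\right)d\beta
  &= \frac{3a}{4k_B}\int_0^{T}\left(\ln T' - 1\right)T'^2\,dT'
   = \frac{aT^3}{4k_B}\,\ln T - \frac{aT^3}{3k_B}.
\end{align}
```

Subtracting the last line from the Boltzmann term recovers the directly integrated entropy, so the two routes agree. In this idealised regime the thermodynamic entropy falls below the Boltzmann term once $\ln T > 4/3$, that is, for $T$ above roughly 3.8 K, which is of the same order as the threshold of approximately 3 K quoted above.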