Submitted: 25 April 2026

Posted: 28 April 2026

Abstract

Keywords:

1. Introduction

- Which AI-enabled career coaching use cases do experts identify as most relevant and feasible for women’s career development, particularly in male-dominated fields?

- What career challenges, transition points, and support needs should these use cases be designed to address?

- In what ways do existing career support models and practices fall short in meeting these needs?

- What design, risk, and governance requirements do experts identify for the responsible development and use of such tools?

2. Materials and Methods

3. Results

3.1. Theme 1. Integrated Expert Lenses & Theoretical Foundations

3.1.1. Multidisciplinary Career Frameworks (n=14)

3.1.2. Critique of the Androcentric Model (n=11)

3.2. Theme 2. Biopsychosocial Ecosystem of Women’s Careers

3.2.1. Gender-Specific Life Transitions (n=10)

3.2.2. Systemic-Psychological Interaction (n=13)

3.3. Theme 3. AI Architecture: Mechanics of Intervention

3.3.1. Adaptive Intervention Modalities (n=11)

3.3.2. System Intelligence and Coordination (n=5)

3.3.3. Affective Gating and Readiness (n=5)

3.4. Theme 4. The Human-AI Coaching Dialectic

3.4.1. Technological Affordances/Strengths (n=13)

3.4.2. Technological Limitations (n=15)

3.5. Theme 5. Ethical Governance & the Agency Risk

3.5.1. Reductionism Bias (n=7)

3.5.2. Safety, Privacy & Clinical Safeguards (n=11)

3.5.3. Dependency & Agency Erosion (n=8)

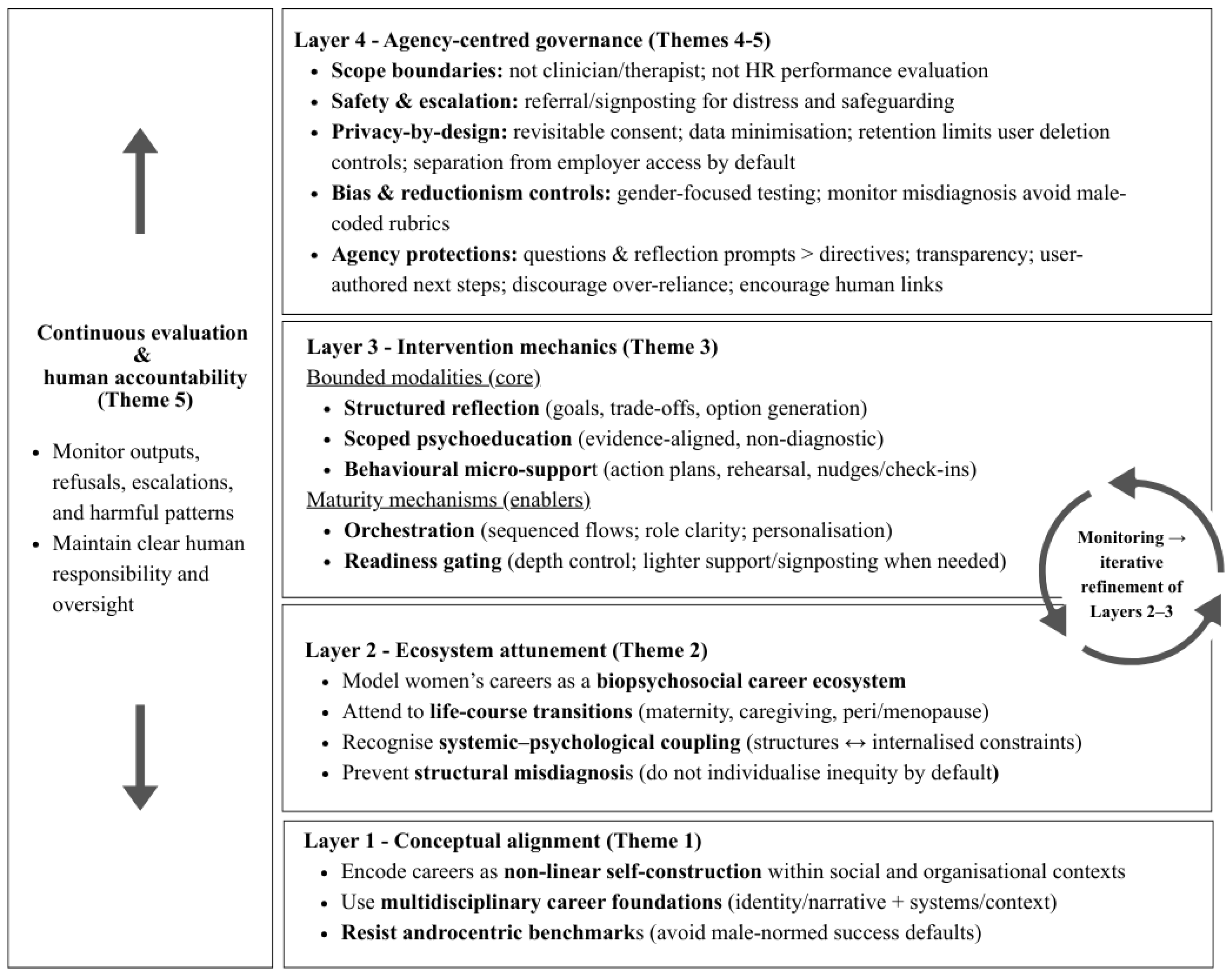

3.6. Cross-Theme Synthesis: Agency-Centred Framework

4. Discussion

4.1. Practical Implications

4.2. Limitations

4.3. Future Research

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Theme | Potential use cases |
| --- | --- |
| Life-work balance | Setting boundaries; reducing mental load; practicing conversations with managers to uphold balance without career penalty |
| Remote influence | Building visibility in virtual environments; navigating organisational politics remotely; growing careers without co-location |
| Promotion & self-advocacy | Negotiation skills for promotions; effective self-promotion; building a network of allies |
| Leadership & transitions | Coaching for leadership roles; supporting career returners (e.g., post-maternity leave); navigating career pivots |
| Bias response | Assertiveness training; responding to microaggressions; navigating salary negotiations through a bias-aware lens |
| Inclusive well-being | Preventing burnout; building resilience; managing emotional labour |
| Mentor stories | AI-curated inspirational journeys from diverse women leaders |
| AI literacy | Building confidence in using AI tools; developing skills for AI-integrated workplaces; understanding AI ethics |
| Women's physiological changes | Navigating career and leadership through physiological changes (menstrual cycle, maternity, pre-menopause, and menopause); strategies for managing emotional states, energy, and workload |

Appendix B

| # | Domain expertise | Title | Gender | Years of experience | Region |
| --- | --- | --- | --- | --- | --- |
| 1 | AI coaching | Founder/executive | M | 10-14 | EU |
| 2 | AI coaching | Founder/executive | M | 10-14 | EU |
| 3 | Executive and leadership coaching | Senior executive coach | F | 20+ | UK/I |
| 4 | Career development and HR | Senior executive coach | F | 20+ | NA |
| 5 | Mental health and women’s health | Psychiatrist and menopause specialist | F | 5-9 | NA |
| 6 | Executive and leadership coaching | Senior executive coach | F | 20+ | NA |
| 7 | AI ethics and coaching | Senior executive coach, AI ethics advisor | F | 10-14 | UK/I |
| 8 | Executive and leadership coaching | Senior executive coach | F | 20+ | UK/I |
| 9 | Career development practitioner | Senior executive coach | F | 15-19 | UK/I |
| 10 | Executive and leadership coaching and HR | Senior executive coach | F | 20+ | NA |
| 11 | General practice and women’s health | General practitioner and menopause specialist | F | 15-19 | UK/I |
| 12 | Career development and counselling | Career development academic | F | 10-14 | EU |
| 13 | Career development and counselling | Career development academic | F | 15-19 | EU |
| 14 | AI ethics and DEI (Diversity, equity, and inclusion) | AI strategy and ethics advisor | F | 15-19 | NA |
| 15 | DEI (Diversity, equity, and inclusion) | DEI consultant | F | 5-9 | EU |
| 16 | Organisational development and HR | Organisational transformation advisor | F | 20+ | EU |

Appendix C

Appendix C.1. Interview Guide

- Brief introduction and explanation of the purpose of the study.

- Remind the participant of confidentiality and the right to withdraw at any time.

- Confirm consent to audio and video recording.

- Outline the structure of the interview.

- Could you briefly describe your background and experience in career development, coaching, AI technologies, and/or supporting women?

- In your professional experience, what are the most common career challenges faced by women in their career journeys?

- Where do you see gaps or limitations in existing career development models or applications for women?

- Which career development or coaching frameworks or models do you typically draw on in your work (e.g., SCCT, Career Construction)?

- How do these frameworks inform the way you structure your coaching or assess the needs of women, particularly those navigating male-dominated fields like tech?

- Which elements of these models do you think could be meaningfully translated into an AI-based coaching tool to support women’s career development?

- How might AI systems support, if at all, coaching processes such as identity exploration, values clarification, goal attainment, or life-role integration for women in tech?

- Do you think AI can replicate a human coach – that is, do as well, underperform, or outperform a human coach?

  - If underperform: where do you see the limitations of AI in this area?

  - If as well or outperform: in your view, what enables AI to match or exceed the capabilities of human coaches? Do you see any limitations despite this?

- In your view, what role, if any, might AI play in addressing gender-specific barriers or advancing equity in career coaching / development?

- What limitations or risks do you foresee, if any, in using AI for career development, particularly in ways that could impact women’s wellbeing, autonomy, safety, or inclusion?

- Based on your clinical experience, what are some of the most significant physiological changes women typically experience throughout their working lives (e.g., menstrual cycle, pregnancy, post-partum, perimenopause, menopause)?

- How might these changes affect women’s energy levels, emotional states, cognitive focus, or general capacity to manage professional responsibilities?

- In your view, how well are these physiological experiences currently understood and supported in workplace environments?

- Are there any specific support strategies (clinical or behavioural) that could be adapted or translated into career coaching or workplace interventions?

  - Strategies to help manage biological changes, emotional states, energy/workload, and cognitive shifts

- What role, if any, could technology – particularly AI – play in supporting women to navigate their careers in light of these physiological changes?

- Are there any ethical considerations or risks that should be kept in mind when designing AI tools addressing women’s health in the context of professional life?

- Based on preliminary findings, here are some AI career coaching use cases identified in the literature [present short list].

  - Which of these use cases do you find most promising or relevant? Why?

  - Are there any that you would modify, remove, or further develop?

- Are there any additional use cases or innovative applications of AI for career coaching that come to mind based on your experience?

- Of the use cases discussed, which do you believe would have the greatest impact on supporting women in tech? Why?

- How feasible do you believe it is to implement these use cases in real-world coaching environments?

- What ethical considerations or risks should be taken into account when designing AI career coaching solutions for women in tech?

- Is there anything else you would like to add that you feel is important for this research?

- Would you be open to being contacted for follow-up clarification if necessary?

| Theme | Sub-theme | n/16 | % |
| --- | --- | --- | --- |
| Integrated expert lenses & theoretical foundations | Multidisciplinary career frameworks | 14 | 88% |
| Integrated expert lenses & theoretical foundations | Critique of androcentric model | 11 | 69% |
| Biopsychosocial ecosystem of women’s careers | Gender-specific life transitions | 10 | 63% |
| Biopsychosocial ecosystem of women’s careers | Systemic-psychological interaction | 13 | 81% |
| AI architecture: mechanics of intervention | Adaptive intervention modalities | 11 | 69% |
| AI architecture: mechanics of intervention | System intelligence & orchestration | 5 | 31% |
| AI architecture: mechanics of intervention | Affective gating & readiness | 5 | 31% |
| The Human-AI coaching dialectic | Technological affordances/strengths | 13 | 81% |
| The Human-AI coaching dialectic | Limitations | 15 | 94% |
| Ethical governance & the agency risk | Algorithmic bias & individualistic reductionism | 7 | 44% |
| Ethical governance & the agency risk | Safety, privacy & clinical safeguards | 11 | 69% |
| Ethical governance & the agency risk | Dependency & agency erosion | 8 | 50% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).