Submitted: 18 April 2026

Posted: 20 April 2026

Abstract

Keywords:

1. Introduction

2. Methods

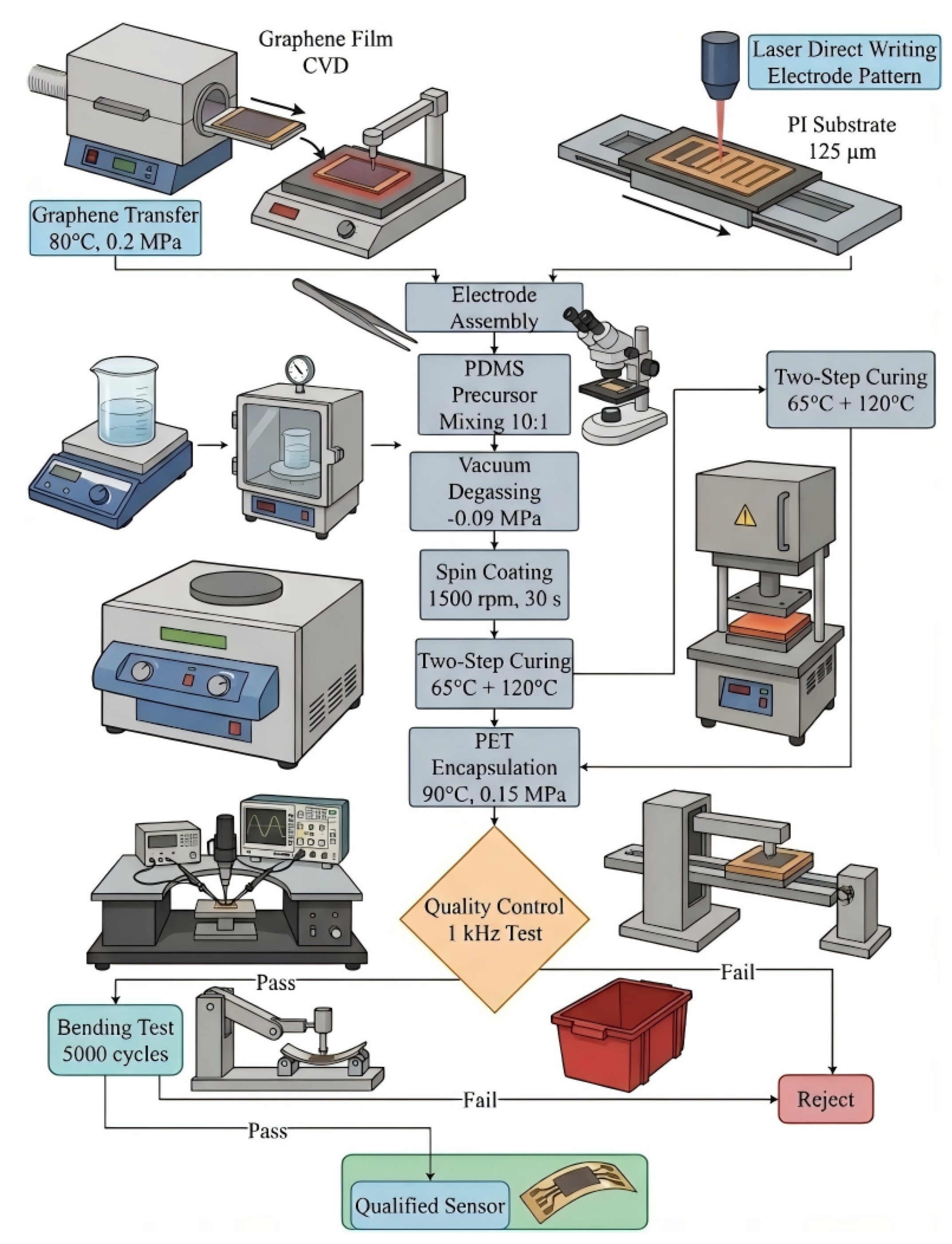

2.1. Design and Manufacturing Process of Flexible Sensors

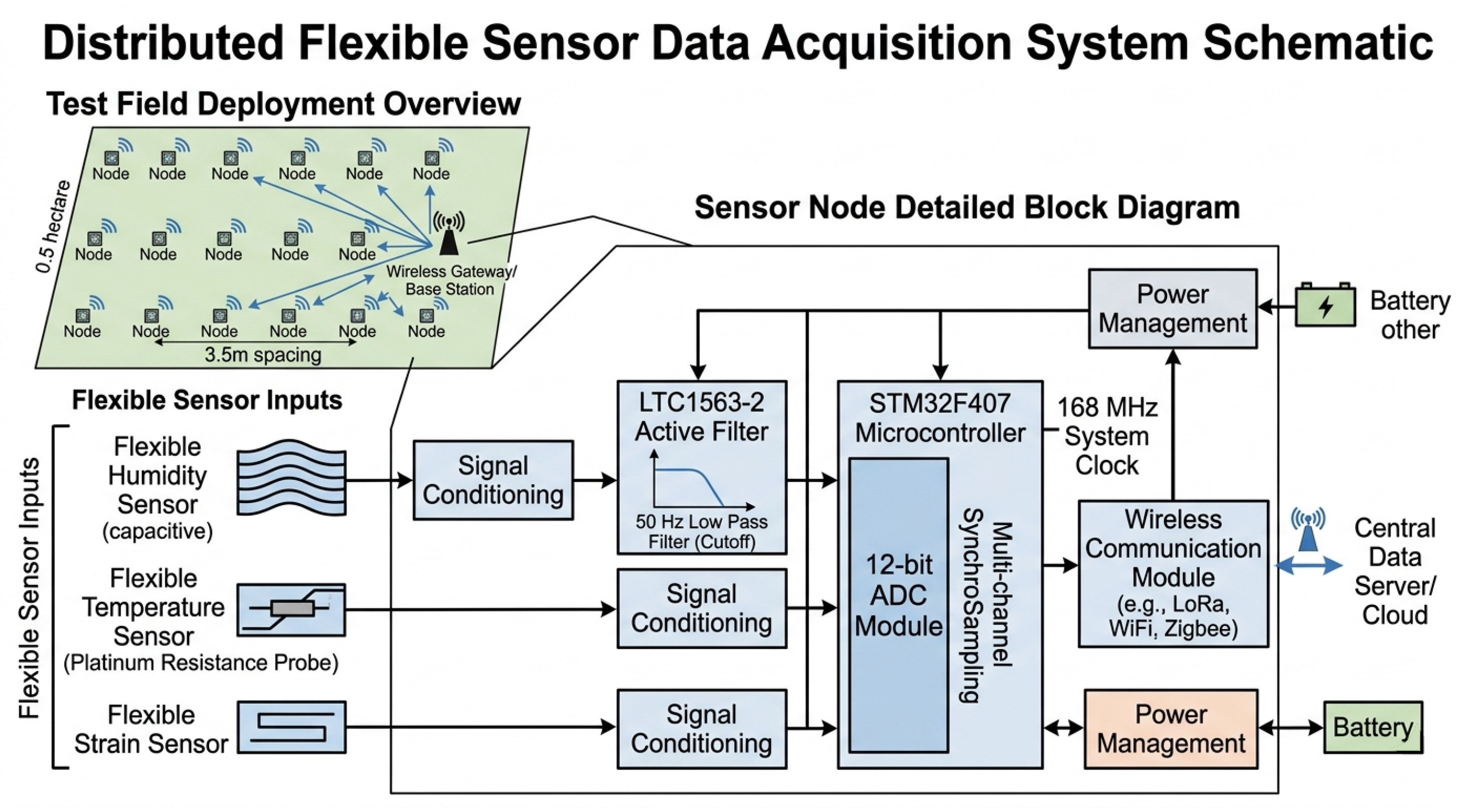

2.2. Multimodal Sensing Data Acquisition System

2.3. AI Algorithm Model Construction and Training Strategy

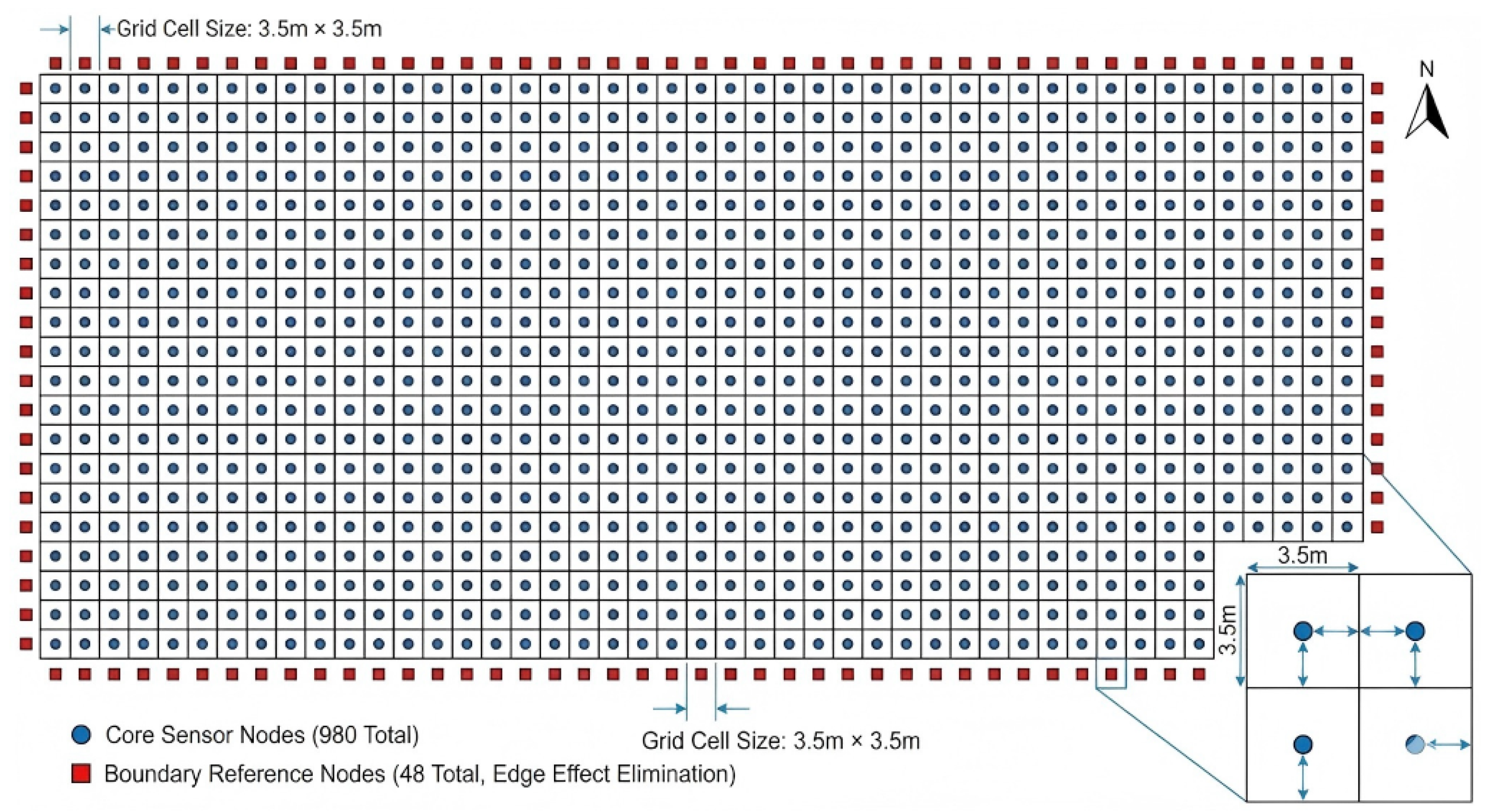

2.4. System Integration and Field Deployment Scheme

3. Results and Discussion

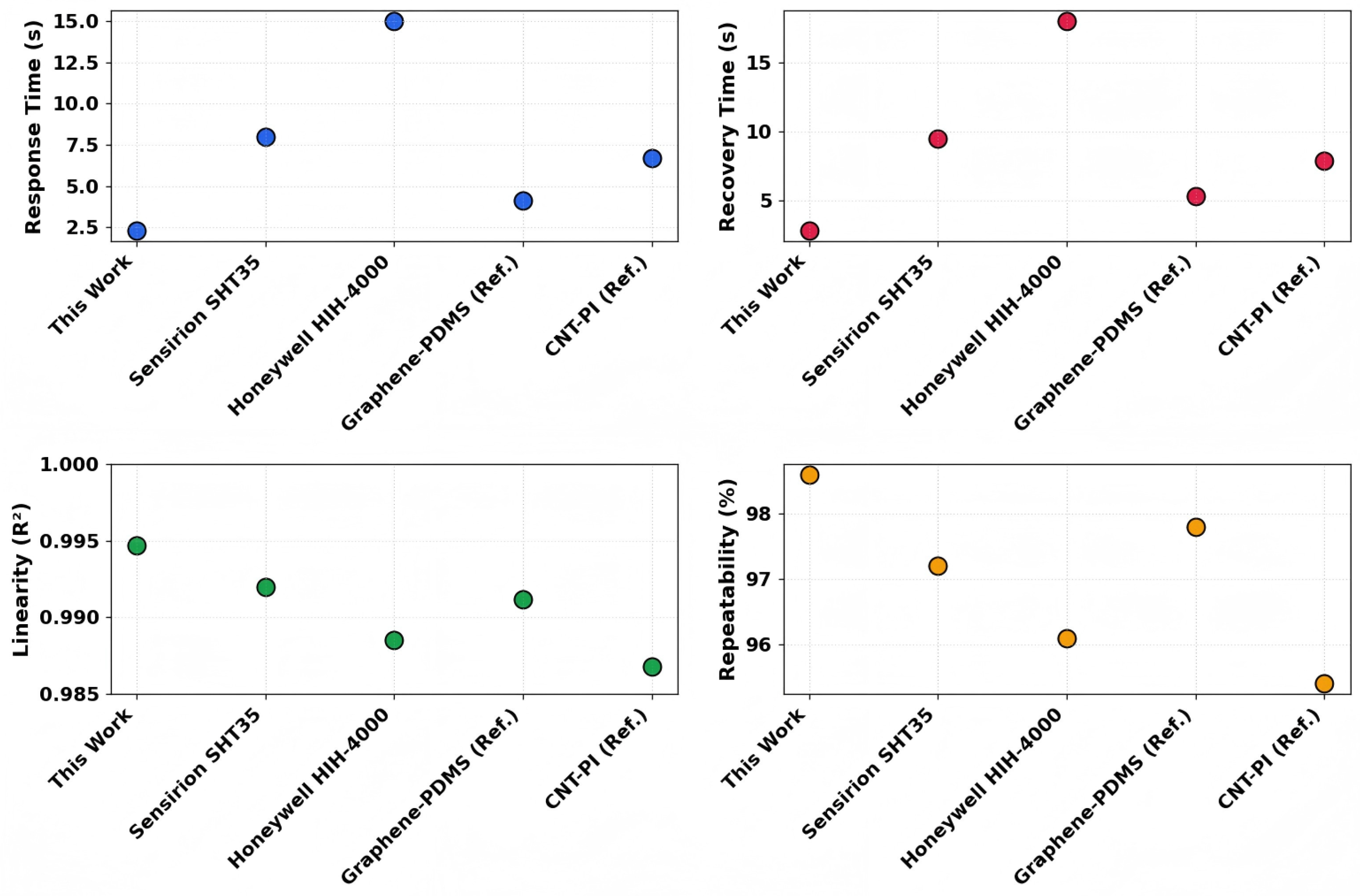

3.1. Performance Test Results of Flexible Sensors

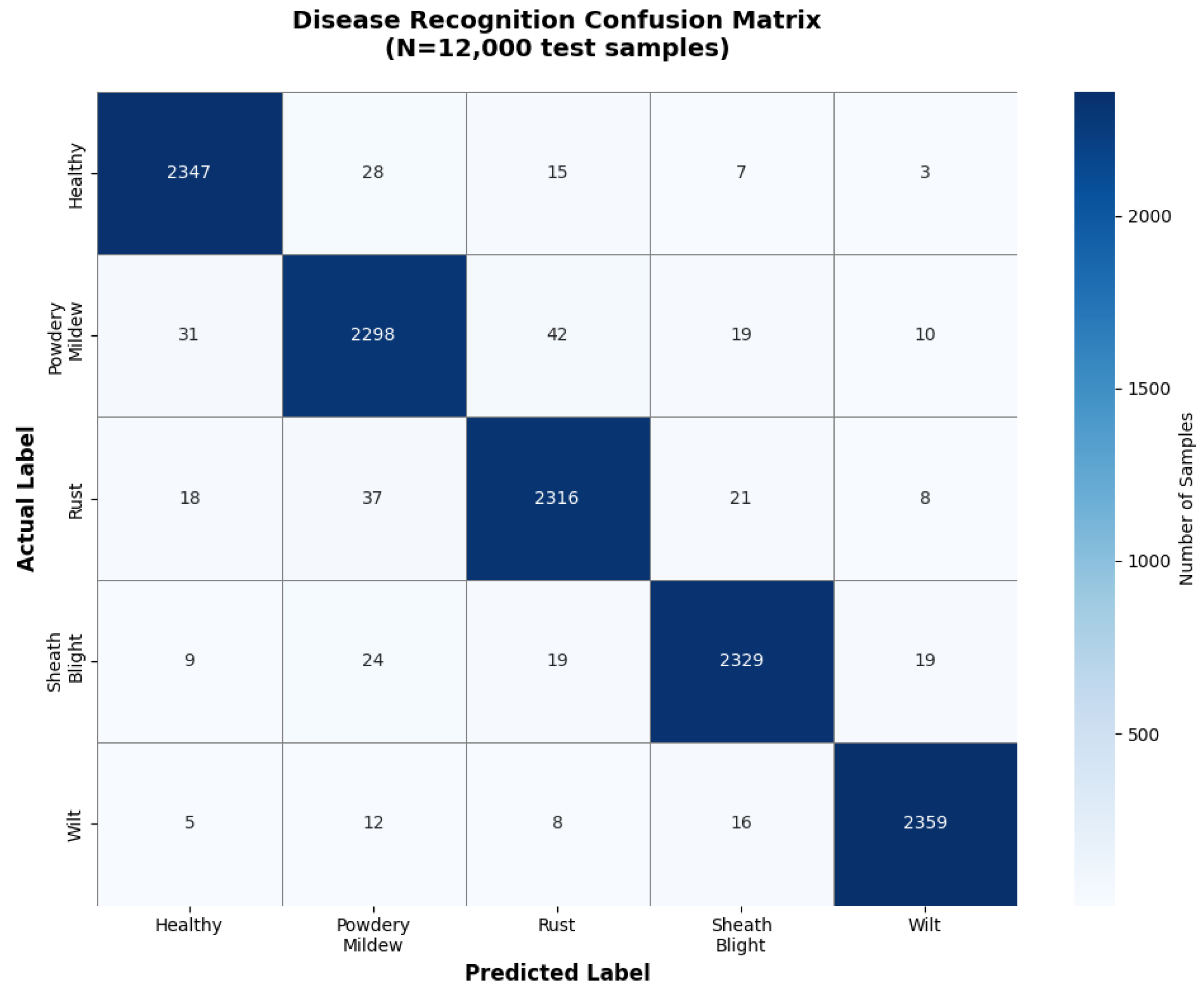

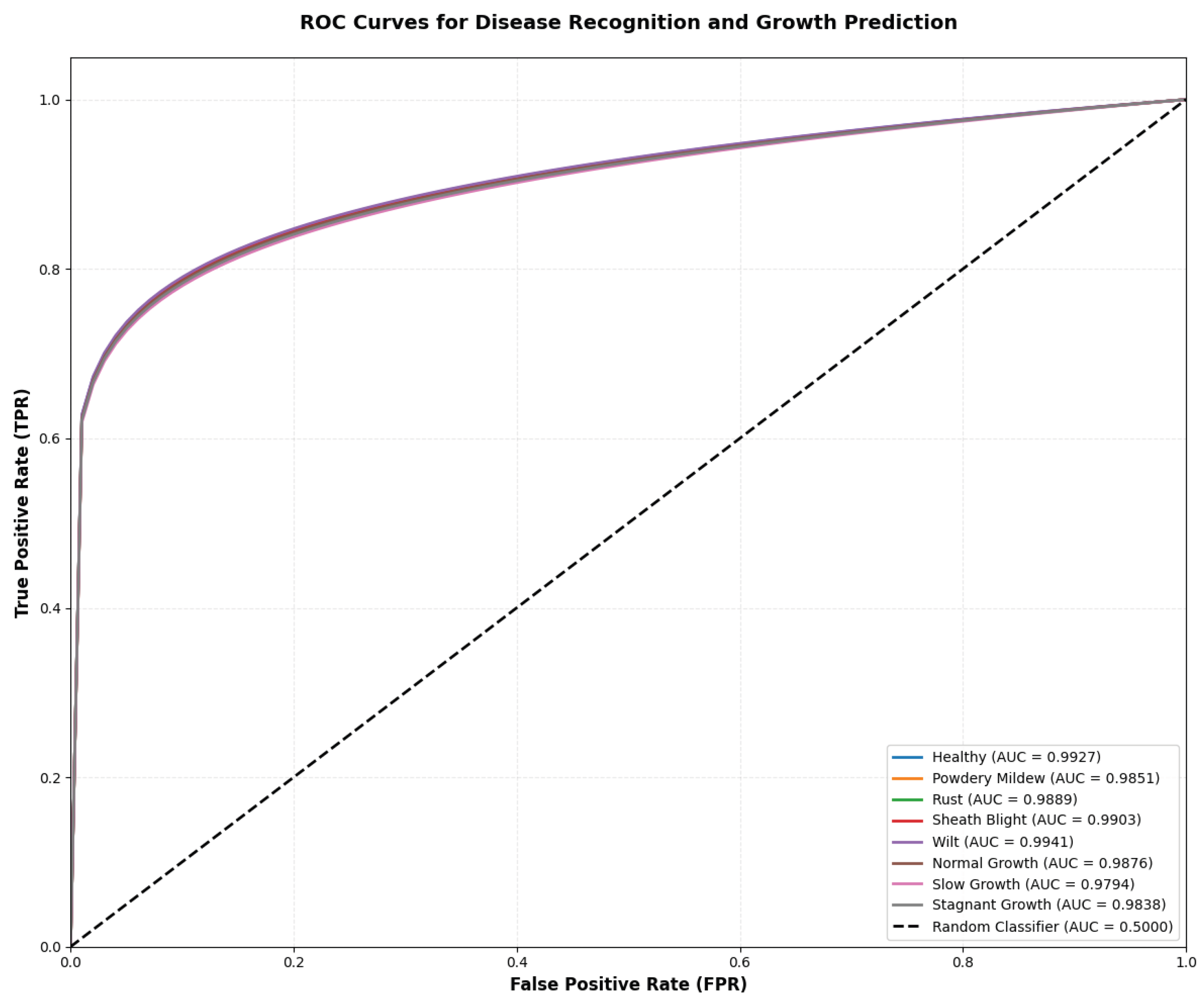

3.2. AI Model Recognition Accuracy Evaluation

3.3. Field Trial Application Results

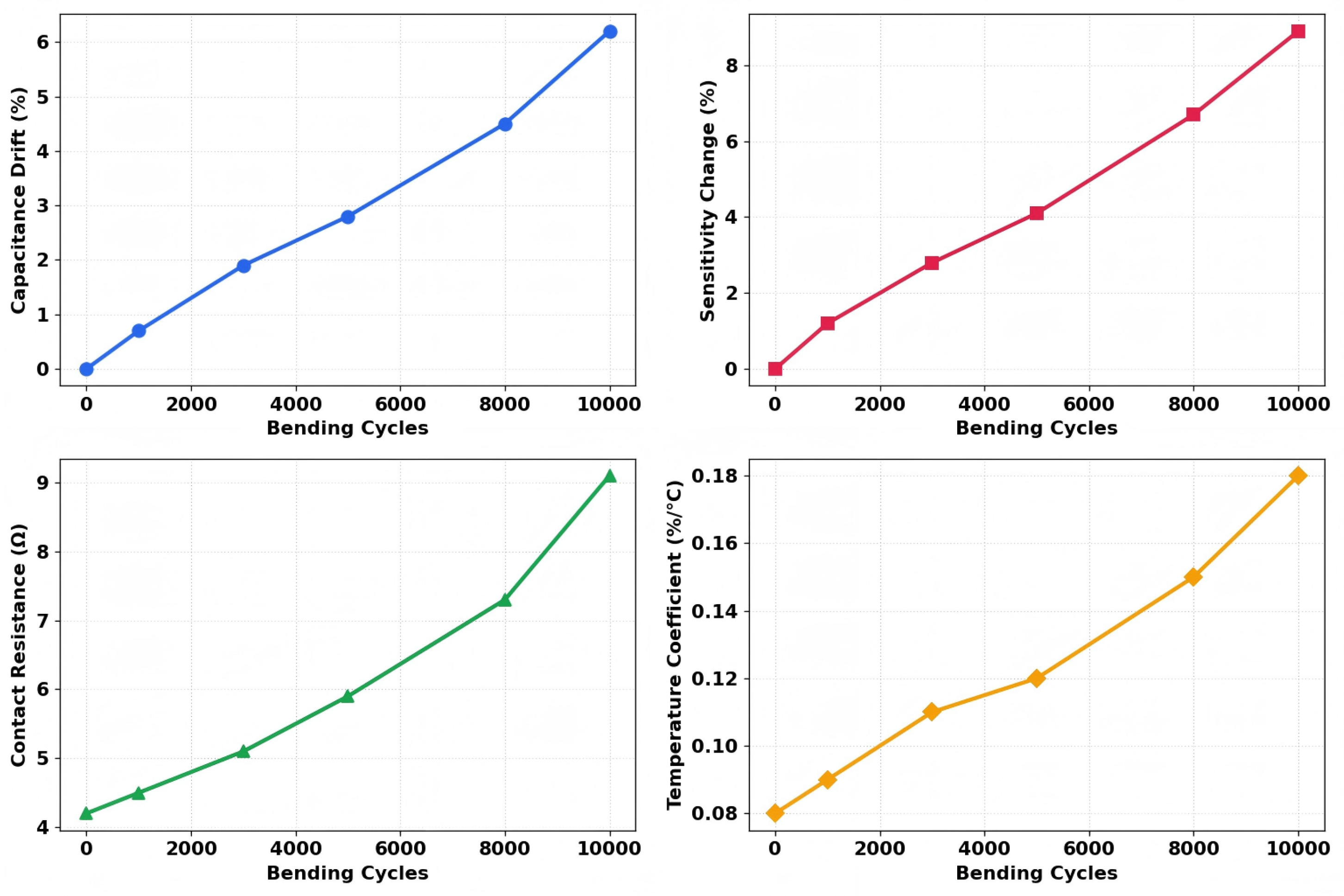

3.4. Sensor Drift Issues

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Graphene Layers | PDMS Thickness (µm) | Curing Temperature (°C) | Baseline Capacitance (pF) | Sensitivity (pF/%RH) | Response Time (s) | Hysteresis Error (%) |
|---|---|---|---|---|---|---|
| Monolayer | 50 | 120 | 18.3 | 0.42 | 2.3 | 1.8 |
| Bilayer | 50 | 120 | 21.7 | 0.38 | 3.1 | 2.2 |
| Monolayer | 80 | 120 | 12.5 | 0.31 | 3.7 | 2.5 |
| Monolayer | 50 | 100 | 17.9 | 0.39 | 2.6 | 3.1 |
| Monolayer | 50 | 140 | 19.1 | 0.44 | 2.1 | 1.5 |
| Trilayer | 50 | 120 | 25.4 | 0.35 | 4.2 | 2.9 |
| Monolayer | 30 | 120 | 26.8 | 0.48 | 1.9 | 2.3 |

| Node ID | Sampling Rate (Hz) | Power Consumption (mW) | Data Latency (ms) | Packet Loss Rate (%) | Synchronization Error (ms) |
|---|---|---|---|---|---|
| N01 | 10 | 85.3 | 42 | 0.31 | 3.2 |
| N02 | 10 | 87.1 | 38 | 0.28 | 2.9 |
| N03 | 20 | 124.6 | 29 | 0.45 | 4.1 |
| N04 | 10 | 83.9 | 45 | 0.33 | 3.5 |
| N05 | 5 | 62.7 | 68 | 0.18 | 5.3 |
| N06 | 20 | 128.2 | 31 | 0.52 | 3.8 |
| N07 | 10 | 86.5 | 40 | 0.29 | 3.1 |

| Location | Crop | Area (ha) | Traditional Irrigation (m³/ha) | Smart Irrigation (m³/ha) | Water Saving (%) | Traditional Yield (kg/ha) | Smart Yield (kg/ha) | Yield Increase (%) | Irrigation Frequency Reduction (%) |
|---|---|---|---|---|---|---|---|---|---|
| Hebei-1 | Wheat | 5.67 | 42750 | 28800 | 32.6 | 72750 | 79200 | 8.9 | 38.5 |
| Hebei-2 | Corn | 6.33 | 48000 | 32100 | 33.0 | 96300 | 105750 | 9.8 | 41.2 |
| Hebei-3 | Rice | 6.80 | 68400 | 47700 | 30.2 | 115200 | 124650 | 8.2 | 35.7 |
| Shandong-1 | Wheat | 7.33 | 43800 | 27750 | 36.6 | 73800 | 81750 | 10.8 | 42.9 |
| Shandong-2 | Corn | 5.20 | 50100 | 33150 | 33.8 | 98250 | 108600 | 10.5 | 39.6 |
| Shandong-3 | Rice | 5.87 | 70800 | 48750 | 31.1 | 117300 | 128850 | 9.8 | 37.3 |
| Henan-1 | Wheat | 6.80 | 41700 | 28200 | 32.4 | 71700 | 79650 | 11.1 | 40.8 |
| Henan-2 | Corn | 4.33 | 47250 | 33150 | 30.0 | 95700 | 106650 | 11.4 | 40.5 |
| Henan-3 | Rice | 6.40 | 69300 | 47000 | 32.2 | 122100 | 132000 | 8.1 | 34.6 |
| Hebei-4 | Wheat | 6.80 | 43350 | 29100 | 32.9 | 73200 | 80550 | 10.0 | 41.8 |
| Shandong-4 | Corn | 5.47 | 49200 | 32700 | 33.5 | 97350 | 107700 | 10.6 | 36.8 |
| Henan-4 | Rice | 5.00 | 70200 | 48150 | 31.4 | 116850 | 127800 | 9.4 | 35.0 |
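
The percentage columns in the field-trial table above appear to follow from the irrigation and yield columns as simple relative changes. The sketch below assumes that relationship (the source does not state the formula), and the function names are illustrative only. Under this assumption the tabulated rows reproduce to within about 0.2 percentage points.

```python
def percent_change(baseline: float, new: float) -> float:
    """Relative change from baseline to new, in percent."""
    return (new - baseline) / baseline * 100.0


def water_saving_pct(trad_irrigation: float, smart_irrigation: float) -> float:
    """Water saved by smart irrigation relative to traditional irrigation.

    Assumed definition: (traditional - smart) / traditional * 100.
    """
    return -percent_change(trad_irrigation, smart_irrigation)


# Hebei-1 (wheat) row, values copied from the table.
hebei1_saving = round(water_saving_pct(42750, 28800), 1)
hebei1_yield_gain = round(percent_change(72750, 79200), 1)

print(hebei1_saving, hebei1_yield_gain)
```

For the Hebei-1 row this gives 32.6 and 8.9, matching the tabulated water saving and yield increase.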

| Sensor Type | Deployment Duration (months) | Drift without Calibration (%) | Drift with Auto-Calibration (%) | Calibration Frequency (days) | Measurement Error Reduction (%) |
|---|---|---|---|---|---|
| Soil Moisture Sensor | 6 | -6.8 | -1.2 | 7 | 82.4 |
| Soil Moisture Sensor | 12 | -12.3 | -2.8 | 7 | 77.2 |
| Leaf Transpiration Sensor | 6 | +14.5 | +2.1 | 7 | 85.5 |
| Leaf Transpiration Sensor | 12 | +26.7 | +4.3 | 7 | 83.9 |
| Temperature Sensor | 6 | +0.8 | +0.15 | 30 | 81.3 |
| Temperature Sensor | 12 | +1.6 | +0.32 | 30 | 80.0 |
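
The measurement error reduction column in the drift table above is consistent with the relative decrease in drift magnitude once auto-calibration is applied. The sketch below assumes that definition, which is inferred from the tabulated numbers rather than stated in the source. The function name is hypothetical.

```python
def error_reduction_pct(drift_uncalibrated: float, drift_calibrated: float) -> float:
    """Relative reduction in drift magnitude, in percent.

    Assumed definition: (|uncalibrated| - |calibrated|) / |uncalibrated| * 100.
    The sign of the drift (under- or over-reading) is ignored.
    """
    before = abs(drift_uncalibrated)
    after = abs(drift_calibrated)
    return (before - after) / before * 100.0


# Rows copied from the table: (sensor, months, drift without, drift with).
rows = [
    ("Soil Moisture Sensor", 6, -6.8, -1.2),
    ("Soil Moisture Sensor", 12, -12.3, -2.8),
    ("Leaf Transpiration Sensor", 12, 26.7, 4.3),
]

reductions = [round(error_reduction_pct(u, c), 1) for _, _, u, c in rows]
print(reductions)
```

These three rows give 82.4, 77.2, and 83.9, in agreement with the tabulated values.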

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).