Submitted: 15 April 2026

Posted: 15 April 2026

Abstract

Keywords:

1. Introduction

2. Literature Reviews

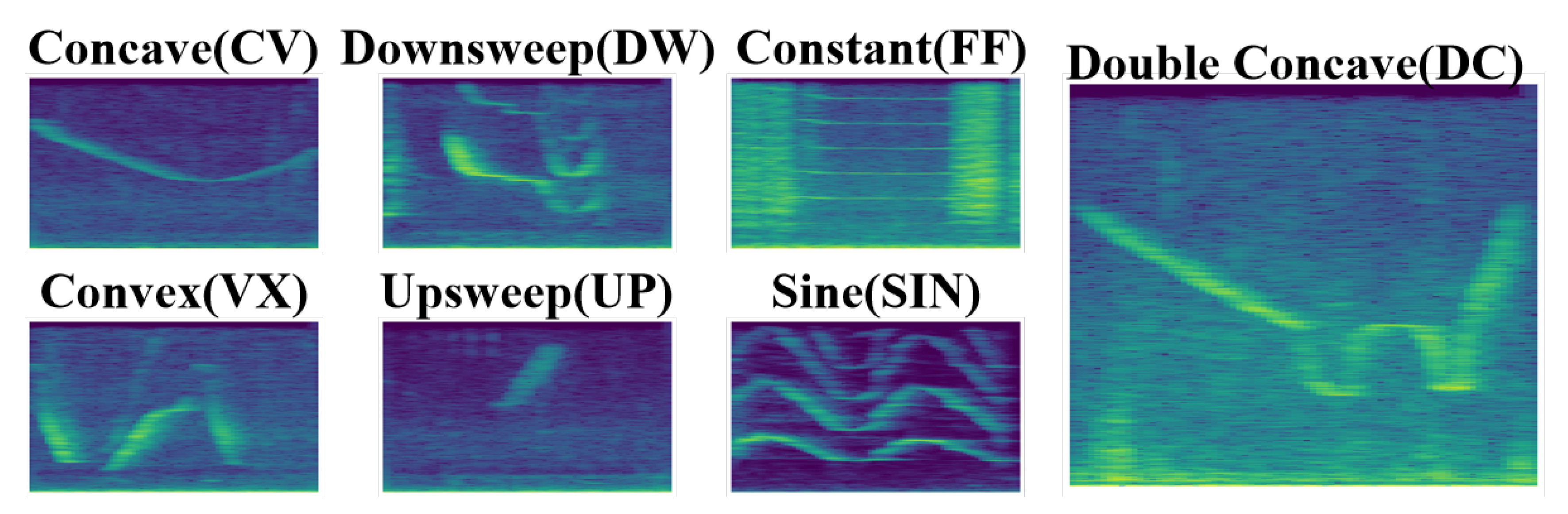

2.1. Dolphin Whistle Signal Types

2.2. Acoustic Signal Classification

2.3. Underwater Acoustic Propagation Modeling

3. Methodology

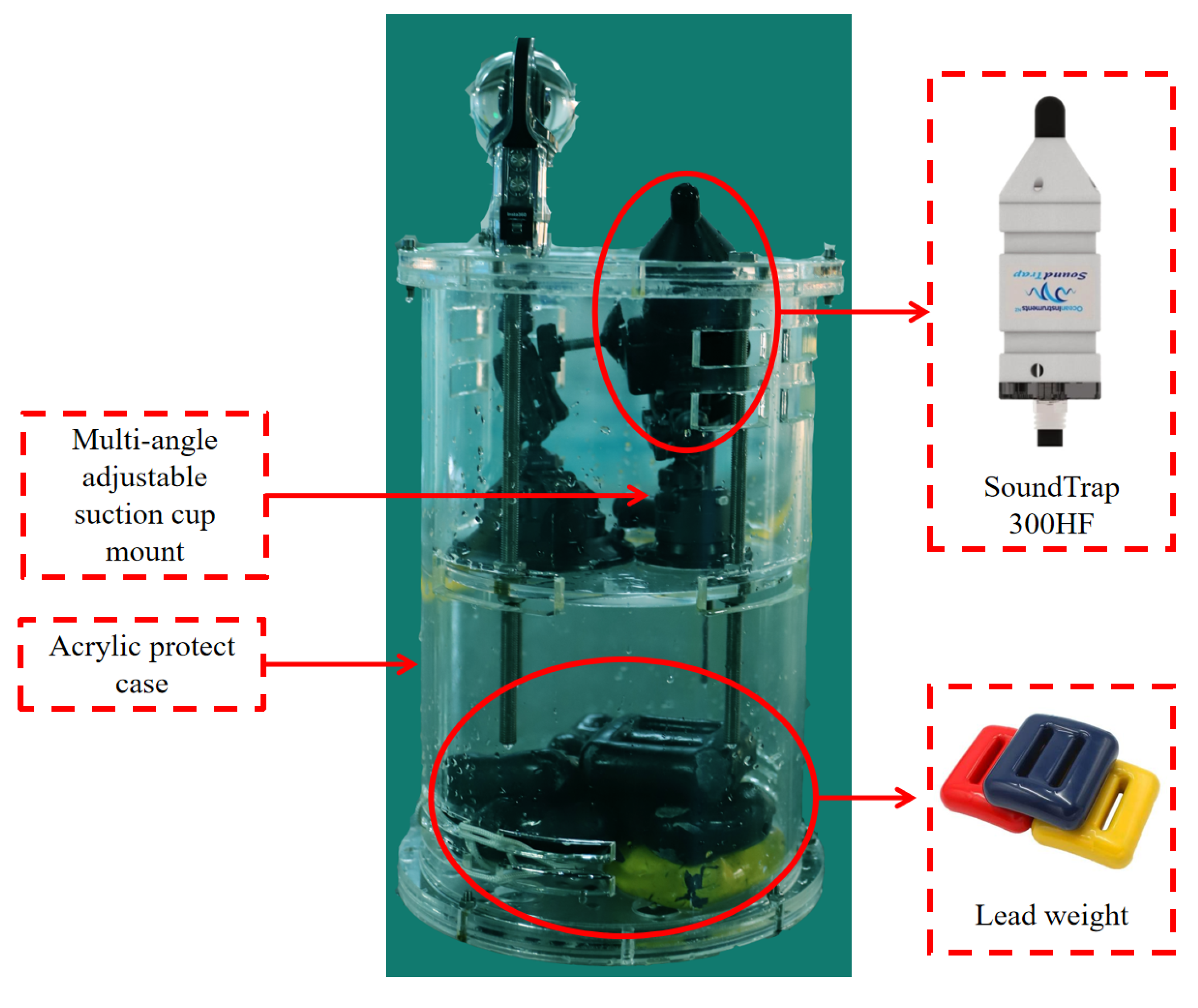

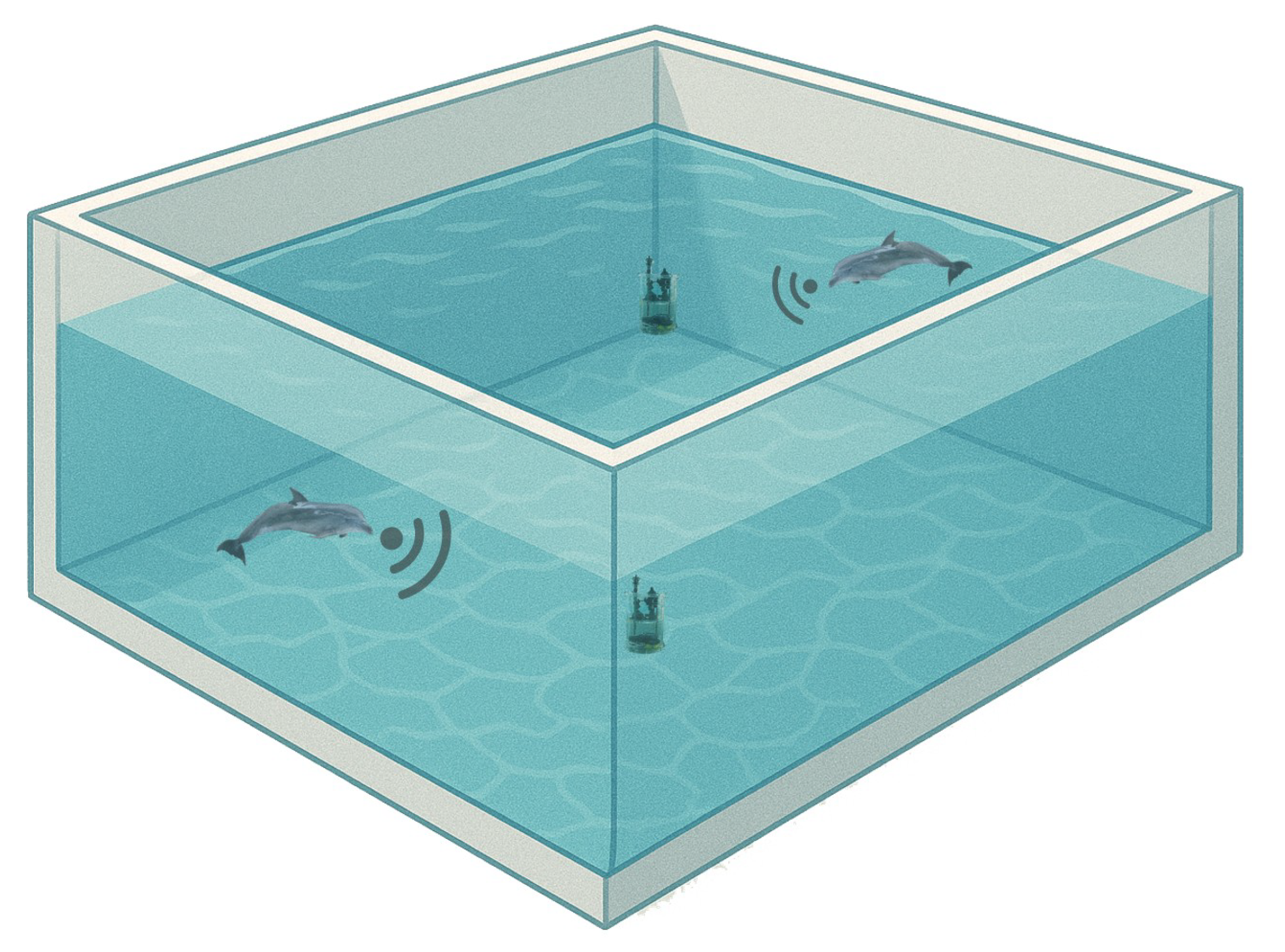

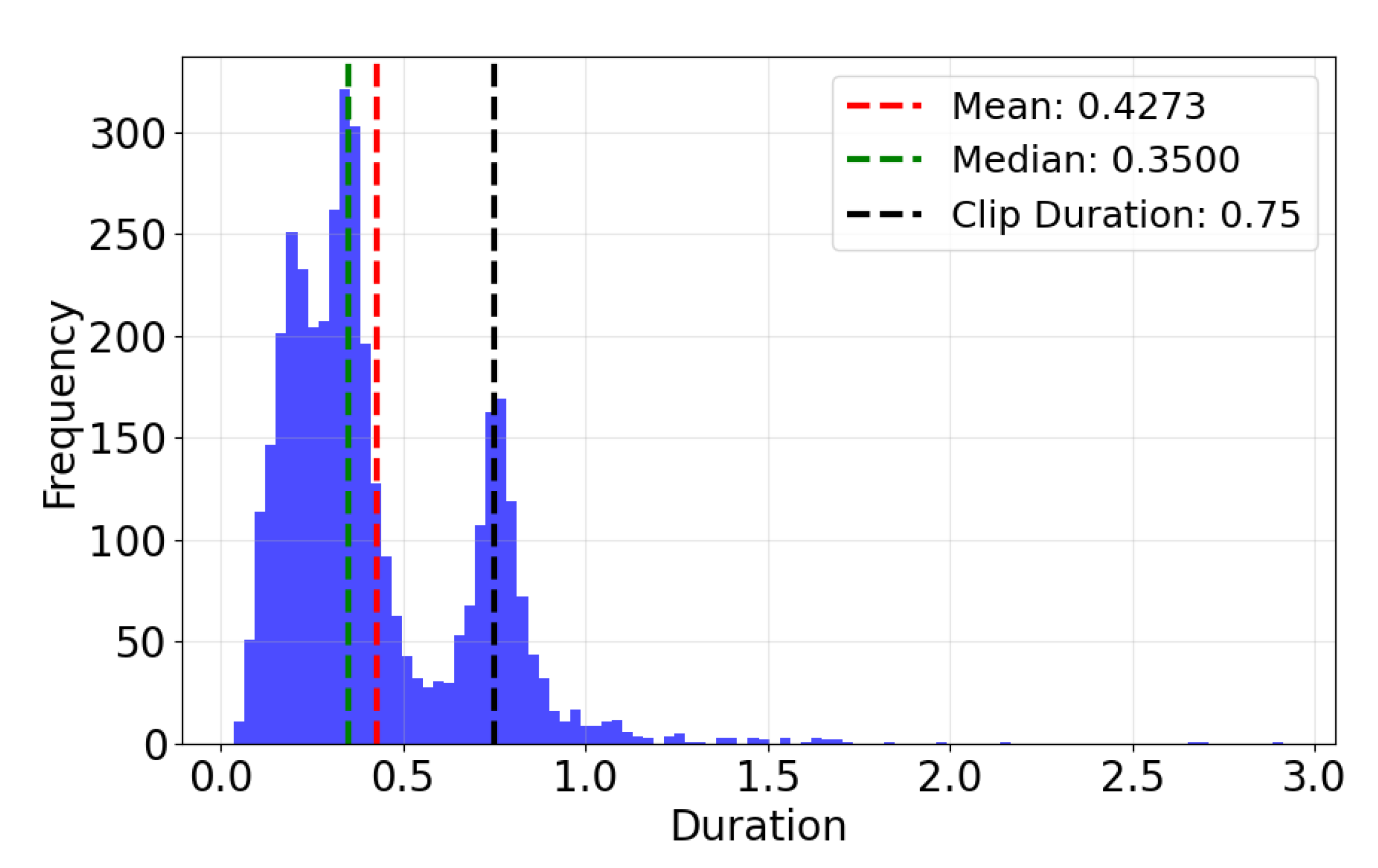

3.1. Data Collection and Annotation

- Constant: nearly flat contour with frequency variation under 1 kHz across the time span of the signal.

- Upsweep: the fundamental frequency increases over time.

- Downsweep: the fundamental frequency decreases over time.

- Concave: the fundamental frequency first decreases, then increases over time.

- Convex: the fundamental frequency first increases, then decreases over time.

- Sine: sinusoidal-like contour.

- Double concave: two consecutive concave contours.
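The seven contour definitions above can be illustrated with a small rule-based labeler. This is only a sketch under stated assumptions, not the annotation procedure used in the paper: the function names, the 50 Hz hysteresis threshold, and the choice to treat every remaining rise/fall pattern as "Sine" are all hypothetical. Only the 1 kHz flatness bound comes from the definitions.

```python
import math

def direction_runs(freqs_hz, min_step_hz=50.0):
    """Collapse a contour into alternating runs: +1 (rising) or -1 (falling).

    min_step_hz is a hypothetical hysteresis threshold that ignores jitter.
    """
    runs = []
    anchor = freqs_hz[0]
    for f in freqs_hz[1:]:
        delta = f - anchor
        if abs(delta) < min_step_hz:
            continue  # too small to count as a real frequency change
        sign = 1 if delta > 0 else -1
        if not runs or runs[-1] != sign:
            runs.append(sign)
        anchor = f
    return tuple(runs)

# Rise/fall patterns for the unambiguous definitions in the list above.
SHAPES = {
    (1,): "Upsweep",
    (-1,): "Downsweep",
    (-1, 1): "Concave",
    (1, -1): "Convex",
    (-1, 1, -1, 1): "Double concave",
}

def label_contour(freqs_hz, flat_range_hz=1000.0):
    """Label a fundamental-frequency contour given in Hz."""
    if max(freqs_hz) - min(freqs_hz) < flat_range_hz:
        return "Constant"  # variation under 1 kHz over the whole signal
    # Assumption: any other oscillating pattern is treated as "Sine".
    return SHAPES.get(direction_runs(freqs_hz), "Sine")

# Synthetic contours for illustration only.
up = [5000 + 100 * i for i in range(40)]
down = list(reversed(up))
sine = [8000 + 2000 * math.sin(2 * math.pi * i / 50) for i in range(100)]
print(label_contour(up), label_contour(down + up), label_contour(sine))
```

Real contours are noisier than these synthetic ones, so the thresholds would need tuning against annotated data before such rules could be trusted.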

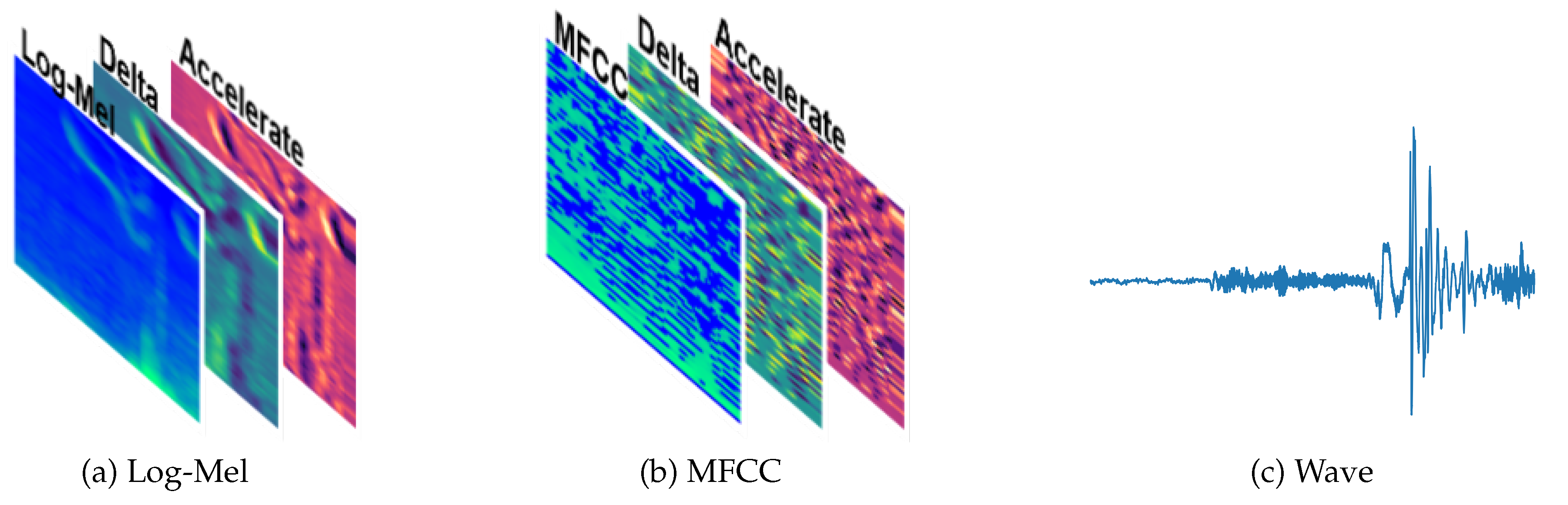

3.2. Deep Learning Classification Methods

- MobileNet [29]: a lightweight CNN that uses depthwise separable convolutions to reduce computational complexity while maintaining strong performance, making it suitable for mobile applications.

- Xception [30]: the “Extreme Inception” model that decouples spatial and channel-wise convolutions for more efficient feature extraction.

- ResNet (Residual Network) [31]: introduces residual connections (shortcuts) to enable effective training of very deep networks.

- ResNeXt [32]: extends ResNet with grouped convolutions and a cardinality parameter, capturing a broader range of feature interactions.

- SE-ResNeXt [33]: combines ResNeXt with Squeeze-and-Excitation blocks to model channel dependencies and enhance feature recalibration.
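The efficiency arguments in the list above can be made concrete by counting weights. The sketch below compares a standard 3×3 convolution with a depthwise separable one (MobileNet, Xception), a grouped one (ResNeXt), and the extra weights of a Squeeze-and-Excitation block. The channel width of 256, the cardinality of 32, and the reduction ratio of 16 are illustrative assumptions, not settings reported in this paper, and biases are ignored.

```python
def conv_params(k, c_in, c_out, groups=1):
    """Weights in a k x k convolution (bias omitted); groups splits the channels."""
    assert c_in % groups == 0 and c_out % groups == 0
    return k * k * (c_in // groups) * c_out

def depthwise_separable_params(k, c_in, c_out):
    """Depthwise k x k convolution followed by a 1 x 1 pointwise convolution."""
    depthwise = conv_params(k, c_in, c_in, groups=c_in)
    pointwise = conv_params(1, c_in, c_out)
    return depthwise + pointwise

def se_block_params(channels, reduction=16):
    """Two fully connected layers: channels -> channels/reduction -> channels."""
    hidden = channels // reduction
    return 2 * channels * hidden

channels = 256  # hypothetical layer width
standard = conv_params(3, channels, channels)
separable = depthwise_separable_params(3, channels, channels)
grouped = conv_params(3, channels, channels, groups=32)  # assumed cardinality
se_extra = se_block_params(channels)

print(f"standard 3x3:        {standard}")
print(f"depthwise separable: {separable}")
print(f"grouped (32 groups): {grouped}")
print(f"SE block overhead:   {se_extra}")
```

Under these assumptions the separable layer needs roughly a ninth of the weights of the standard one, which is the saving the MobileNet and Xception descriptions refer to.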

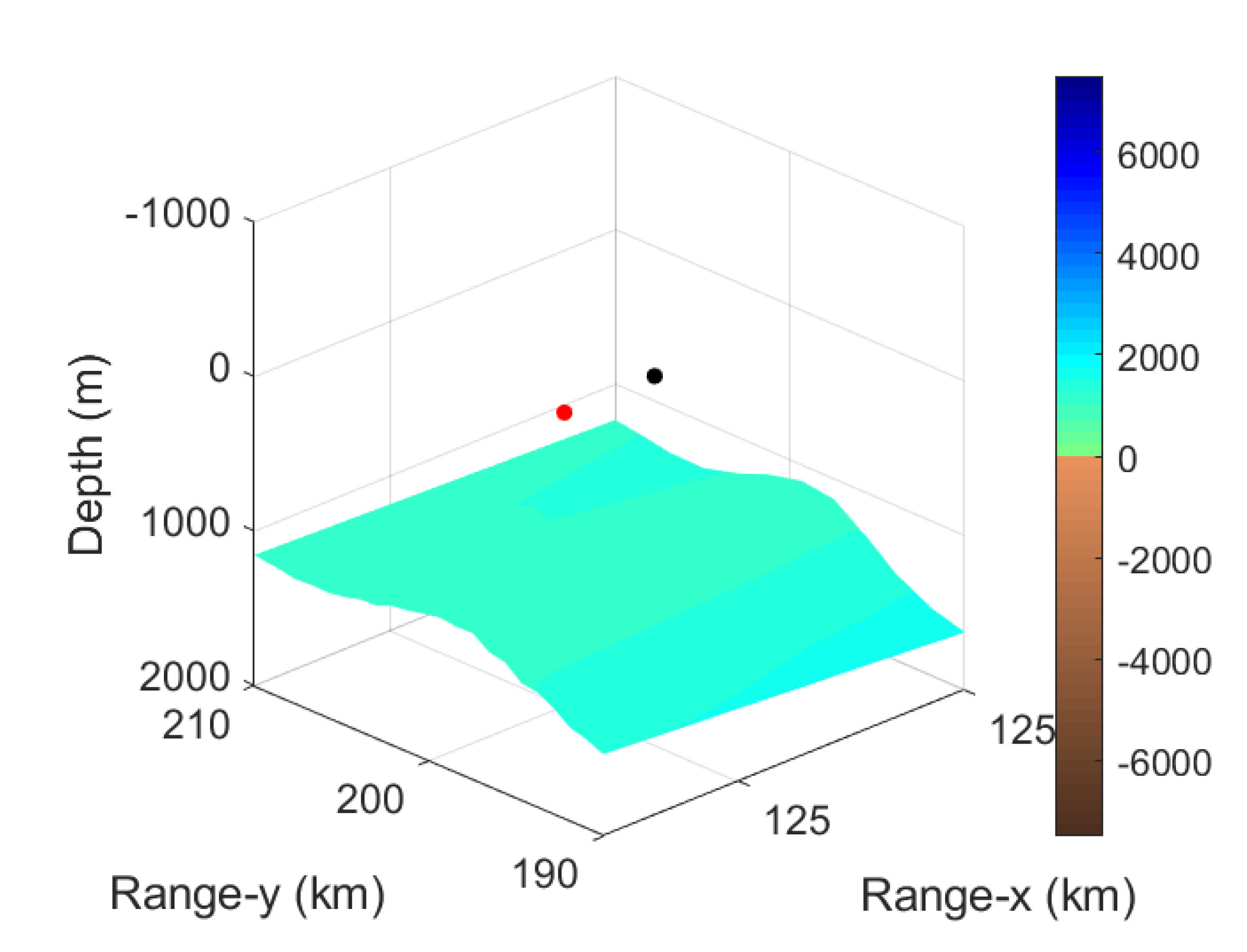

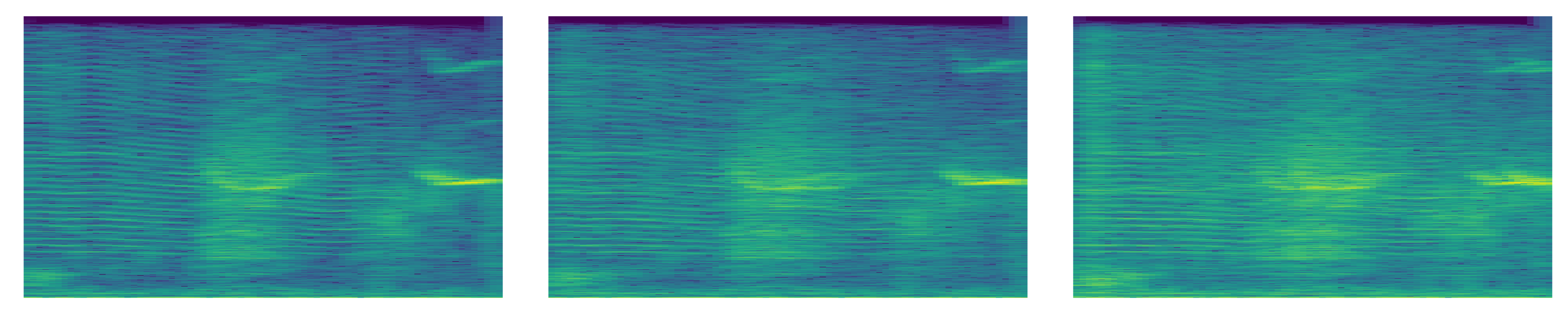

3.3. Simulated Marine Whistle Signals

4. Experiment and Results

4.1. Input Data Pre-Processing and Experiment Set Up

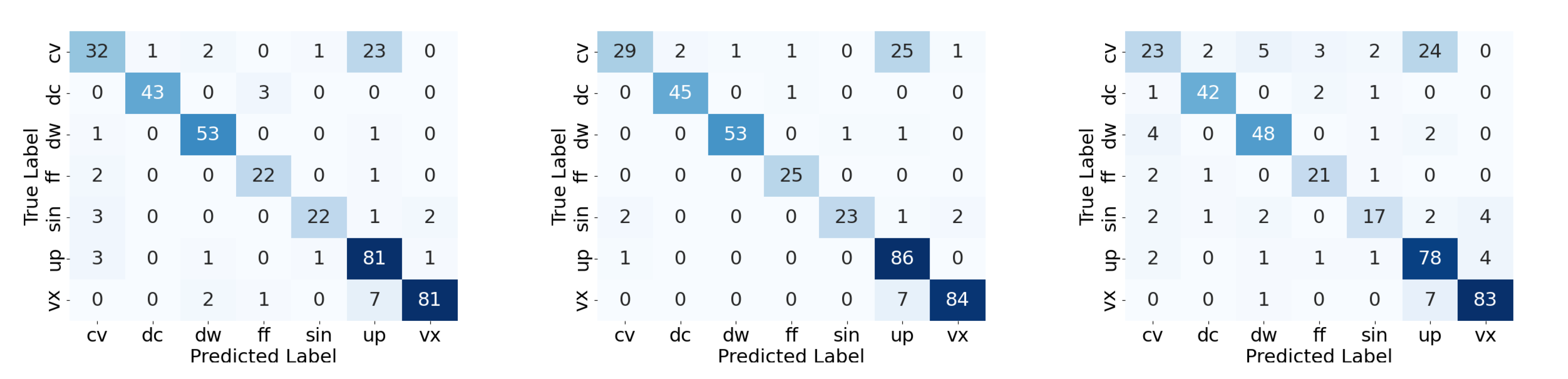

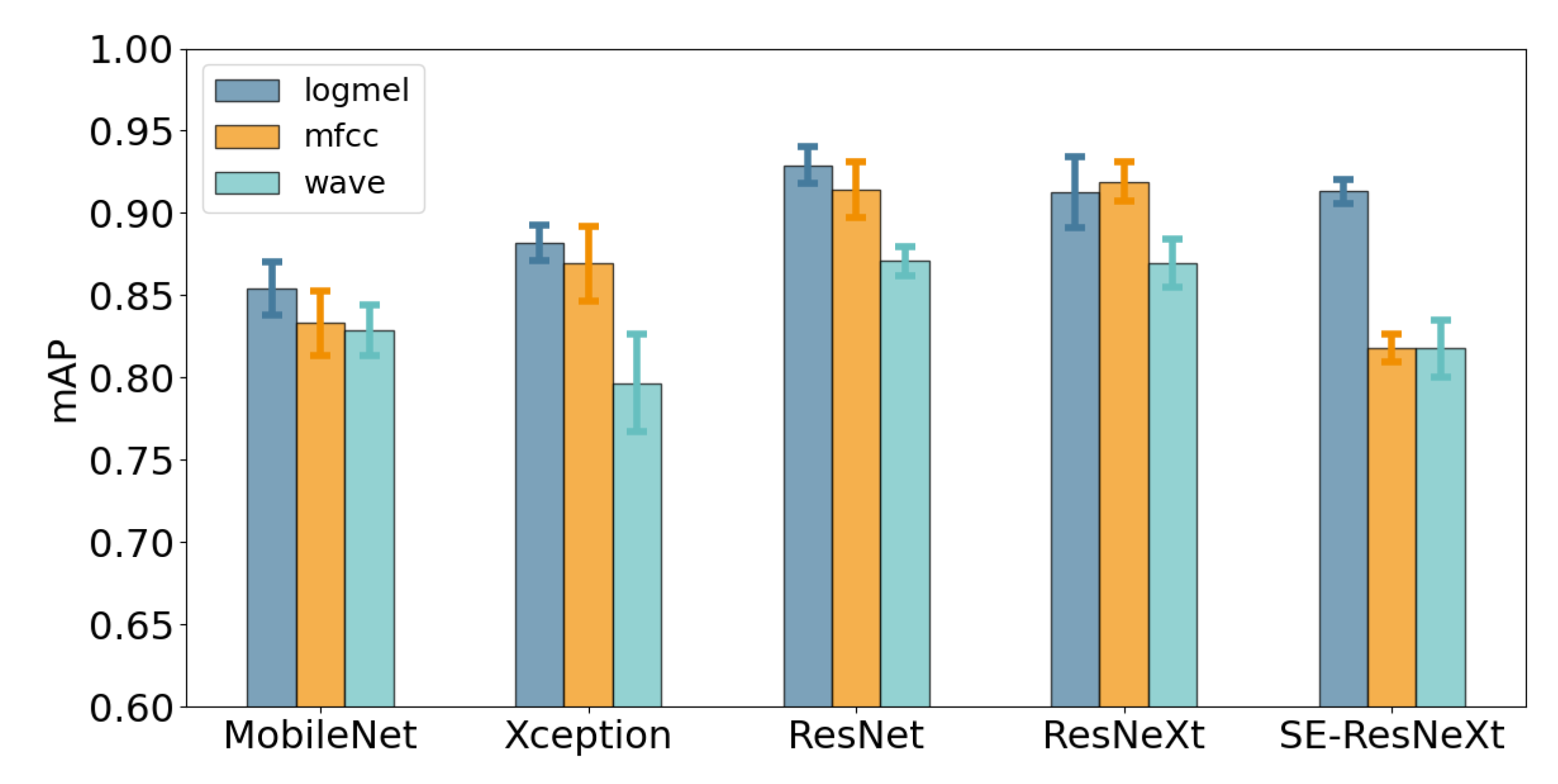

4.2. Results

5. Conclusions and Future Work

Acknowledgments

References

1. Au, W. Echolocation signals of wild dolphins. Acoustical Physics 2004, 50, 454–462.

2. Au, W.W. The sonar of dolphins; Springer Science & Business Media, 1993.

3. Janik, V.M.; Sayigh, L.S. Communication in bottlenose dolphins: 50 years of signature whistle research. Journal of Comparative Physiology A 2013, 199, 479–489.

4. Luís, A.R.; Couchinho, M.N.; Dos Santos, M.E. A quantitative analysis of pulsed signals emitted by wild bottlenose dolphins. PLOS ONE 2016, 11, e0157781.

5. Haughey, R.; Hunt, T.N.; Hanf, D.; Passadore, C.; Baring, R.; Parra, G.J. Distribution and habitat preferences of Indo-Pacific bottlenose dolphins (Tursiops aduncus) inhabiting coastal waters with mixed levels of protection. Frontiers in Marine Science 2021, 8, 617518.

6. Sayigh, L.S.; Janik, V.M.; Jensen, F.H.; Scott, M.D.; Tyack, P.L.; Wells, R.S. The Sarasota Dolphin Whistle Database: A unique long-term resource for understanding dolphin communication. Frontiers in Marine Science 2022, 9, 923046.

7. Di Nardo, F.; De Marco, R.; Lucchetti, A.; Scaradozzi, D. A WAV file dataset of bottlenose dolphin whistles, clicks, and pulse sounds during trawling interactions. Scientific Data 2023, 10, 650.

8. Wall, C.C.; Haver, S.M.; Hatch, L.T.; Miksis-Olds, J.; Bochenek, R.; Dziak, R.P.; Gedamke, J. The next wave of passive acoustic data management: How centralized access can enhance science. Frontiers in Marine Science 2021, 8, 703682.

9. Matthews, J.; Rendell, L.E.; Gordon, J.C.D.; Macdonald, D. A review of frequency and time parameters of cetacean tonal calls. Bioacoustics 1999, 10, 47–71.

10. McCowan, B. A new quantitative technique for categorizing whistles using simulated signals and whistles from captive bottlenose dolphins (Delphinidae, Tursiops truncatus). Ethology 1995, 100, 177–193.

11. Janik, V.M.; Slater, P.J. Context-specific use suggests that bottlenose dolphin signature whistles are cohesion calls. Animal Behaviour 1998, 56, 829–838.

12. Beeman, K. SIGNAL Sound Analysis System; Belmont, MA, 1996.

13. Hawkins, E.R.; Gartside, D.F. Whistle emissions of Indo-Pacific bottlenose dolphins (Tursiops aduncus) differ with group composition and surface behaviors. The Journal of the Acoustical Society of America 2010, 127, 2652–2663.

14. Azevedo, A.F.; Flach, L.; Bisi, T.L.; Andrade, L.G.; Dorneles, P.R.; Lailson-Brito, J. Whistles emitted by Atlantic spotted dolphins (Stenella frontalis) in southeastern Brazil. The Journal of the Acoustical Society of America 2010, 127, 2646–2651.

15. Rui-chao, X.; Fu-qiang, N.; Yan-ming, Y.; Yue-kun, H.; Wei, L. Study on automatic extraction of bottlenose dolphin whistles from the background of ocean noise. 2nd International Conference on Information, Communication and Engineering, 2019.

16. Harley, H.E. Whistle discrimination and categorization by the Atlantic bottlenose dolphin (Tursiops truncatus): A review of the signature whistle framework and a perceptual test. Behavioural Processes 2008, 77, 243–268.

17. Gillespie, D.; Caillat, M.; Gordon, J.; White, P. Automatic detection and classification of odontocete whistles. The Journal of the Acoustical Society of America 2013, 134, 2427–37.

18. Lopez-Otero, P.; Docio-Fernandez, L.; Cardenal-López, A. Using Discrete Wavelet Transform to Model Whistle Contours for Dolphin Species Classification. Proceedings 2018, 2.

19. Roch, M.; Soldevilla, M.; Burtenshaw, J.; Henderson, E.; Hildebrand, J. Gaussian mixture model classification of odontocetes in the Southern California Bight and the Gulf of California. The Journal of the Acoustical Society of America 2007, 121, 1737–48.

20. Yang, W.; Sun, X.; Zhang, Y.; Wei, C.; Yang, Y.; Niu, F. An automatic classification method for whistles of bottlenose dolphin (Tursiops truncates). Acta Acustica 2016, 41, 181–188.

21. Xu, K.; Zhu, B.; Kong, Q.; Mi, H.; Ding, B.; Wang, D.; Wang, H. General audio tagging with ensembling convolutional neural networks and statistical features. The Journal of the Acoustical Society of America 2019, 145, EL521–EL527.

22. Gao, D.; Gao, D.; Li, X. Deep learning-based recognition of click signals of typical marine mammals. Journal of Shaanxi Normal University, Natural Science Edition 2019, 47, 37–437.

23. Yang, W.; Luo, W.; Zhang, Y. Classification of odontocete echolocation clicks using convolutional neural network. The Journal of the Acoustical Society of America 2020, 147, 49–55.

24. Etter, P.C. Underwater acoustic modeling and simulation; CRC Press, 2018.

25. Wang, L.; Heaney, K.; Pangerc, T.; Theobald, P.; Robinson, S.P.; Ainslie, M. Review of underwater acoustic propagation models. 2014.

26. Porter, M.B.; Bucker, H.P. Gaussian beam tracing for computing ocean acoustic fields. The Journal of the Acoustical Society of America 1987, 82, 1349–1359.

27. Bowlin, J.B.; Spiesberger, J.L.; Duda, T.F.; Freitag, L.F. Ocean acoustical ray-tracing software RAY. Technical report. 1992.

28. Porter, M.B. The KRAKEN normal mode program. 1992.

29. Howard, A.G. Mobilenets: Efficient convolutional neural networks for mobile vision applications. arXiv 2017, arXiv:1704.04861.

30. Chollet, F. Xception: Deep learning with depthwise separable convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017; pp. 1251–1258.

31. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2016; pp. 770–778.

32. Xie, S.; Girshick, R.; Dollár, P.; Tu, Z.; He, K. Aggregated residual transformations for deep neural networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017; pp. 1492–1500.

33. Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018; pp. 7132–7141.

34. Santos-Domínguez, L.; Vázquez, M.; Llorca, J.M.; Carballo, A. ShipsEar: An underwater vessel noise database. Applied Acoustics 2016, 114, 155–163. (accessed on 2025-06-08).

| ID | Label | Mean Duration | Variance | Count |
|---|---|---|---|---|
| CV | Concave | 0.5103 | 0.0710 | 586 |
| DC | Double Concave | 0.7600 | 0.0069 | 462 |
| DW | Downsweep | 0.3430 | 0.0078 | 549 |
| FF | Constant | 0.3690 | 0.0291 | 256 |
| SIN | Sine | 0.8787 | 0.1064 | 278 |
| UP | Upsweep | 0.1909 | 0.0055 | 870 |
| VX | Convex | 0.3603 | 0.0205 | 912 |
| Total Whistle Count | | | | 3913 |

| waveform (bs, 1, ) | | |
|---|---|---|
| Conv1d out channels=32, kernel size=11, stride=1, padding=5 (bs, 32, ) | Conv1d out channels=32, kernel size=51, stride=5, padding=25 (bs, 32, ) | Conv1d out channels=32, kernel size=101, stride=10, padding=50 (bs, 32, ) |
| BatchNorm1d | BatchNorm1d | BatchNorm1d |
| ReLU | | |
| Conv1d out channels=32, kernel size=3, stride=1, padding=1 | Conv1d out channels=32, kernel size=3, stride=1, padding=1 | Conv1d out channels=32, kernel size=3, stride=1, padding=1 |
| BatchNorm1d | BatchNorm1d | BatchNorm1d |
| ReLU | | |
| MaxPool1d kernel size=150, stride=150 | MaxPool1d kernel size=30, stride=30 | MaxPool1d kernel size=10, stride=10 |
| unsqueeze (bs,1, 32, ) cat (bs,1, 96, ) | | |
| Conv2d kernel size=(7,7), stride=(2, 2), padding=(3, 3), bias=False (bs, 64, 48, ) | | |
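The time-axis lengths left blank in the table can be worked out with the usual output-length formula for convolution and pooling layers without dilation, floor((n + 2·padding − kernel) / stride) + 1. The sketch below applies it to the three branches using the values exactly as printed. The input length of 48000 samples is a hypothetical example and the helper names are illustrative.

```python
def conv_out_len(n, kernel, stride, padding=0):
    """Output length of a 1-D convolution or pooling layer (dilation 1)."""
    return (n + 2 * padding - kernel) // stride + 1

# (first Conv1d kernel, stride, padding, MaxPool1d size), as printed in the table.
BRANCHES = [
    (11, 1, 5, 150),
    (51, 5, 25, 30),
    (101, 10, 50, 10),
]

def branch_len(n, kernel, stride, padding, pool):
    n = conv_out_len(n, kernel, stride, padding)  # first Conv1d
    n = conv_out_len(n, 3, 1, 1)                  # second Conv1d keeps the length
    return conv_out_len(n, pool, pool)            # MaxPool1d, kernel == stride

num_samples = 48000  # hypothetical waveform length
lengths = [branch_len(num_samples, *branch) for branch in BRANCHES]
print("time steps per branch:", lengths)

# Stacking three 32-channel outputs gives a height of 96; the 7x7, stride-2,
# padding-3 Conv2d then halves it, matching the 48 shown in the last row.
stacked_height = conv_out_len(3 * 32, 7, 2, 3)
print("height after Conv2d:", stacked_height)
```

With the printed values, the first two branches reduce the waveform by a factor of 150 overall, while the third reduces it by a factor of 100, so its output is longer. Concatenating the branches along the channel axis requires equal lengths, and the table does not show how that is reconciled; the sketch only reports the numbers.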

| Model | Input | mAP | CV | DC | DW | FF | SIN | UP | VX |
|---|---|---|---|---|---|---|---|---|---|
| MobileNet | logmel | 0.8584 | 0.6348 | 0.9651 | 0.9531 | 0.8483 | 0.8059 | 0.8345 | 0.9672 |
| | mfcc | 0.8572 | 0.6529 | 0.9809 | 0.9600 | 0.8386 | 0.7816 | 0.8234 | 0.9626 |
| | wave | 0.8319 | 0.5290 | 0.9491 | 0.9510 | 0.8785 | 0.7554 | 0.8066 | 0.9539 |
| Xception | logmel | 0.9231 | 0.7643 | 0.9896 | 0.9866 | 0.9373 | 0.9105 | 0.8924 | 0.9812 |
| | mfcc | 0.9130 | 0.7345 | 0.9901 | 0.9901 | 0.9382 | 0.8751 | 0.8834 | 0.9797 |
| | wave | 0.8842 | 0.6709 | 0.9662 | 0.9598 | 0.9171 | 0.8322 | 0.8748 | 0.9684 |
| ResNet | logmel | 0.8341 | 0.5819 | 0.9237 | 0.9470 | 0.7960 | 0.7998 | 0.8303 | 0.9598 |
| | mfcc | 0.8086 | 0.5368 | 0.9359 | 0.9235 | 0.7902 | 0.7474 | 0.7696 | 0.9568 |
| | wave | 0.8073 | 0.5071 | 0.9246 | 0.9364 | 0.8233 | 0.7066 | 0.8136 | 0.9398 |
| ResNeXt | logmel | 0.8468 | 0.5994 | 0.9367 | 0.9616 | 0.8249 | 0.8010 | 0.8374 | 0.9663 |
| | mfcc | 0.8282 | 0.5608 | 0.9365 | 0.9576 | 0.7520 | 0.8287 | 0.8051 | 0.9570 |
| | wave | 0.8412 | 0.5545 | 0.9719 | 0.9595 | 0.8570 | 0.7561 | 0.8330 | 0.9563 |
| SE-ResNeXt | logmel | 0.8790 | 0.6605 | 0.9752 | 0.9711 | 0.8689 | 0.8469 | 0.8600 | 0.9705 |
| | mfcc | 0.8925 | 0.6954 | 0.9834 | 0.9833 | 0.8828 | 0.8950 | 0.8385 | 0.9687 |
| | wave | 0.8445 | 0.5758 | 0.9591 | 0.9410 | 0.8893 | 0.7762 | 0.8249 | 0.9453 |
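The mAP column in the table above is consistent with an unweighted mean of the seven per-class average precision (AP) values. The sketch below shows one common way to compute AP for a single class and then reproduces the mAP of the MobileNet logmel row from its class columns. The non-interpolated AP definition used here is an assumption, since the surviving text does not state which variant was used, and the function names are illustrative.

```python
def average_precision(labels, scores):
    """Non-interpolated AP: mean of the precision at the rank of each positive."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits, precisions = 0, []
    for rank, (_, is_positive) in enumerate(ranked, start=1):
        if is_positive:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions) if precisions else 0.0

def mean_average_precision(per_class_ap):
    """Macro average: every class counts equally, regardless of its size."""
    return sum(per_class_ap.values()) / len(per_class_ap)

# Per-class AP values copied from the MobileNet / logmel row of the table.
mobilenet_logmel = {
    "CV": 0.6348, "DC": 0.9651, "DW": 0.9531, "FF": 0.8483,
    "SIN": 0.8059, "UP": 0.8345, "VX": 0.9672,
}
macro_map = mean_average_precision(mobilenet_logmel)
print(round(macro_map, 4))

# Toy one-class example: positives ranked first and third out of four.
toy_ap = average_precision([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1])
print(toy_ap)
```

Because the average is unweighted, a weak class such as CV lowers mAP as much as any other class, even though the classes differ in size.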

| Model | Input | mAP | CV | DC | DW | FF | SIN | UP | VX |
|---|---|---|---|---|---|---|---|---|---|
| MobileNet | logmel | 0.8541 | 0.5812 | 0.9545 | 0.9563 | 0.8793 | 0.8351 | 0.8171 | 0.9555 |
| | mfcc | 0.8331 | 0.5371 | 0.9571 | 0.9588 | 0.8185 | 0.8217 | 0.7904 | 0.9481 |
| | wave | 0.8290 | 0.5040 | 0.9234 | 0.9256 | 0.8824 | 0.7948 | 0.8257 | 0.9471 |
| Xception | logmel | 0.8820 | 0.6667 | 0.9738 | 0.9659 | 0.8853 | 0.8769 | 0.8528 | 0.9530 |
| | mfcc | 0.8692 | 0.6218 | 0.9769 | 0.9664 | 0.9024 | 0.8569 | 0.7992 | 0.9608 |
| | wave | 0.7968 | 0.4716 | 0.8944 | 0.9329 | 0.8016 | 0.7563 | 0.7791 | 0.9417 |
| ResNet | logmel | 0.9291 | 0.7582 | 0.9855 | 0.9888 | 0.9398 | 0.9436 | 0.9058 | 0.9822 |
| | mfcc | 0.9142 | 0.7365 | 0.9829 | 0.9907 | 0.9392 | 0.8840 | 0.8883 | 0.9779 |
| | wave | 0.8709 | 0.6389 | 0.9558 | 0.9504 | 0.8616 | 0.8500 | 0.8658 | 0.9739 |
| ResNeXt | logmel | 0.9123 | 0.7342 | 0.9821 | 0.9894 | 0.9291 | 0.9099 | 0.8617 | 0.9800 |
| | mfcc | 0.9191 | 0.7614 | 0.9870 | 0.9879 | 0.9477 | 0.8962 | 0.8789 | 0.9748 |
| | wave | 0.8697 | 0.6102 | 0.9580 | 0.9511 | 0.8552 | 0.8658 | 0.8748 | 0.9729 |
| SE-ResNeXt | logmel | 0.9131 | 0.7166 | 0.9860 | 0.9734 | 0.9431 | 0.9223 | 0.8774 | 0.9731 |
| | mfcc | 0.8180 | 0.4824 | 0.9511 | 0.9329 | 0.8096 | 0.7952 | 0.7990 | 0.9558 |
| | wave | 0.8177 | 0.5190 | 0.9149 | 0.9096 | 0.8337 | 0.7780 | 0.8166 | 0.9524 |

| Model | Test on | Train on | Pure | SNR 40 | SNR 30 | SNR 20 | SNR 10 | SNR 0 |
|---|---|---|---|---|---|---|---|---|
| MobileNet | org | org | 0.8584 | 0.8567 | 0.8571 | 0.8341 | 0.7916 | 0.6091 |
| | | sim | 0.8351 | 0.8341 | 0.8297 | 0.8164 | 0.7497 | 0.5519 |
| | | all | 0.8527 | 0.8527 | 0.8506 | 0.8397 | 0.7962 | 0.6052 |
| | sim | org | 0.8450 | 0.8450 | 0.8440 | 0.8262 | 0.7767 | 0.5615 |
| | | sim | 0.8446 | 0.8442 | 0.8411 | 0.8279 | 0.7494 | 0.5377 |
| | | all | 0.8532 | 0.8538 | 0.8519 | 0.8366 | 0.7887 | 0.5798 |
| Xception | org | org | 0.9231 | 0.9226 | 0.9202 | 0.9109 | 0.8649 | 0.6987 |
| | | sim | 0.9055 | 0.9059 | 0.9048 | 0.8921 | 0.8368 | 0.6727 |
| | | all | 0.9124 | 0.9127 | 0.9103 | 0.9010 | 0.8450 | 0.6763 |
| | sim | org | 0.9148 | 0.9152 | 0.9139 | 0.8989 | 0.8394 | 0.6579 |
| | | sim | 0.9091 | 0.9090 | 0.9074 | 0.8936 | 0.8272 | 0.6530 |
| | | all | 0.9063 | 0.9063 | 0.9042 | 0.8965 | 0.8296 | 0.6448 |
| ResNet | org | org | 0.8341 | 0.8341 | 0.8287 | 0.8148 | 0.7263 | 0.5082 |
| | | sim | 0.8075 | 0.8078 | 0.8031 | 0.7841 | 0.6987 | 0.4925 |
| | | all | 0.8416 | 0.8407 | 0.8404 | 0.8255 | 0.7627 | 0.5427 |
| | sim | org | 0.8217 | 0.8214 | 0.8169 | 0.7944 | 0.6996 | 0.4949 |
| | | sim | 0.8158 | 0.8156 | 0.8112 | 0.7936 | 0.7057 | 0.4886 |
| | | all | 0.8444 | 0.8441 | 0.8425 | 0.8249 | 0.7514 | 0.5322 |
| ResNeXt | org | org | 0.8468 | 0.8463 | 0.8463 | 0.8369 | 0.7810 | 0.5579 |
| | | sim | 0.8378 | 0.8374 | 0.8333 | 0.8272 | 0.7549 | 0.5186 |
| | | all | 0.8554 | 0.8538 | 0.8521 | 0.8429 | 0.7812 | 0.5364 |
| | sim | org | 0.8292 | 0.8301 | 0.8254 | 0.8213 | 0.7495 | 0.5389 |
| | | sim | 0.8473 | 0.8480 | 0.8467 | 0.8286 | 0.7652 | 0.5155 |
| | | all | 0.8532 | 0.8539 | 0.8521 | 0.8395 | 0.7649 | 0.5304 |
| SE-ResNeXt | org | org | 0.8790 | 0.8785 | 0.8777 | 0.8628 | 0.7856 | 0.5532 |
| | | sim | 0.8596 | 0.8592 | 0.8605 | 0.8434 | 0.7498 | 0.5930 |
| | | all | 0.8862 | 0.8864 | 0.8863 | 0.8696 | 0.7967 | 0.5955 |
| | sim | org | 0.8693 | 0.8693 | 0.8637 | 0.8474 | 0.7687 | 0.5271 |
| | | sim | 0.8721 | 0.8715 | 0.8717 | 0.8500 | 0.7513 | 0.5880 |
| | | all | 0.8865 | 0.8873 | 0.8877 | 0.8676 | 0.7841 | 0.5745 |
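The SNR columns above imply that test signals were mixed with noise at fixed signal-to-noise ratios. A minimal sketch of such mixing follows, assuming the SNR values are in decibels and that noise is simply added to the whistle after scaling; neither detail is stated in the surviving text. The function names, the 48 kHz sample rate, the 8 kHz tone, and the Gaussian noise are all hypothetical stand-ins for real recordings.

```python
import math
import random

def mean_power(samples):
    """Average squared amplitude of a signal."""
    return sum(v * v for v in samples) / len(samples)

def mix_at_snr(signal, noise, snr_db):
    """Scale noise so that 10*log10(P_signal / P_noise) equals snr_db, then add it."""
    target_noise_power = mean_power(signal) / (10 ** (snr_db / 10))
    gain = math.sqrt(target_noise_power / mean_power(noise))
    scaled = [gain * n for n in noise]
    mixed = [s + n for s, n in zip(signal, scaled)]
    return mixed, scaled

random.seed(0)
sample_rate = 48000  # hypothetical
whistle = [math.sin(2 * math.pi * 8000 * t / sample_rate) for t in range(4800)]
noise = [random.gauss(0.0, 1.0) for _ in range(4800)]

achieved = {}
for snr_db in (40, 30, 20, 10, 0):
    mixed, scaled = mix_at_snr(whistle, noise, snr_db)
    achieved[snr_db] = 10 * math.log10(mean_power(whistle) / mean_power(scaled))
    print(snr_db, round(achieved[snr_db], 6))
```

At 0 dB the scaled noise carries as much power as the whistle itself, which is the condition where every model in the table degrades most.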

| Model | Test on | Train on | Pure | SNR 40 | SNR 30 | SNR 20 | SNR 10 | SNR 0 |
|---|---|---|---|---|---|---|---|---|
| MobileNet | org | org | 0.8572 | 0.8560 | 0.8564 | 0.8312 | 0.7571 | 0.5550 |
| | | sim | 0.6775 | 0.6762 | 0.6675 | 0.6266 | 0.5084 | 0.3511 |
| | | all | 0.8685 | 0.8688 | 0.8691 | 0.8485 | 0.7853 | 0.5469 |
| | sim | org | 0.8489 | 0.8484 | 0.8496 | 0.8187 | 0.7179 | 0.4989 |
| | | sim | 0.6852 | 0.6836 | 0.6748 | 0.6287 | 0.5168 | 0.3441 |
| | | all | 0.8629 | 0.8625 | 0.8590 | 0.8366 | 0.7656 | 0.5142 |
| Xception | org | org | 0.9130 | 0.9136 | 0.9135 | 0.9003 | 0.8463 | 0.6774 |
| | | sim | 0.8997 | 0.8992 | 0.8971 | 0.8799 | 0.8121 | 0.6520 |
| | | all | 0.9290 | 0.9292 | 0.9273 | 0.9073 | 0.8590 | 0.7059 |
| | sim | org | 0.9043 | 0.9040 | 0.9013 | 0.8825 | 0.8188 | 0.6262 |
| | | sim | 0.9106 | 0.9101 | 0.9049 | 0.8859 | 0.8107 | 0.6256 |
| | | all | 0.9261 | 0.9265 | 0.9232 | 0.9005 | 0.8376 | 0.6702 |
| ResNet | org | org | 0.8086 | 0.8083 | 0.8022 | 0.7709 | 0.6603 | 0.4151 |
| | | sim | 0.7704 | 0.7699 | 0.7700 | 0.7533 | 0.6511 | 0.4011 |
| | | all | 0.8415 | 0.8406 | 0.8357 | 0.8294 | 0.7266 | 0.4885 |
| | sim | org | 0.7835 | 0.7846 | 0.7803 | 0.7476 | 0.6338 | 0.4037 |
| | | sim | 0.7842 | 0.7832 | 0.7825 | 0.7651 | 0.6537 | 0.3975 |
| | | all | 0.8418 | 0.8422 | 0.8379 | 0.8290 | 0.7201 | 0.4843 |
| ResNeXt | org | org | 0.8282 | 0.8277 | 0.8284 | 0.8105 | 0.7276 | 0.5182 |
| | | sim | 0.7842 | 0.7829 | 0.7801 | 0.7585 | 0.6634 | 0.4478 |
| | | all | 0.8470 | 0.8470 | 0.8472 | 0.8299 | 0.7419 | 0.5198 |
| | sim | org | 0.8155 | 0.8151 | 0.8160 | 0.7930 | 0.7025 | 0.4737 |
| | | sim | 0.8046 | 0.8040 | 0.8022 | 0.7764 | 0.6756 | 0.4501 |
| | | all | 0.8328 | 0.8333 | 0.8349 | 0.8177 | 0.7247 | 0.5015 |
| SE-ResNeXt | org | org | 0.8925 | 0.8926 | 0.8919 | 0.8727 | 0.8013 | 0.5903 |
| | | sim | 0.8694 | 0.8707 | 0.8680 | 0.8584 | 0.7818 | 0.5858 |
| | | all | 0.9031 | 0.9030 | 0.9021 | 0.8908 | 0.8180 | 0.6100 |
| | sim | org | 0.8822 | 0.8823 | 0.8768 | 0.8530 | 0.7670 | 0.5355 |
| | | sim | 0.8829 | 0.8827 | 0.8824 | 0.8694 | 0.7873 | 0.5749 |
| | | all | 0.9006 | 0.9003 | 0.8958 | 0.8823 | 0.8009 | 0.5813 |

| Model | Test on | Train on | Pure | SNR 40 | SNR 30 | SNR 20 | SNR 10 | SNR 0 |
|---|---|---|---|---|---|---|---|---|
| MobileNet | org | org | 0.8319 | 0.8325 | 0.8312 | 0.8236 | 0.7878 | 0.5671 |
| | | sim | 0.7752 | 0.7751 | 0.7737 | 0.7688 | 0.7293 | 0.4885 |
| | | all | 0.8531 | 0.8530 | 0.8530 | 0.8497 | 0.8072 | 0.5968 |
| | sim | org | 0.7927 | 0.7928 | 0.7941 | 0.7896 | 0.7435 | 0.5272 |
| | | sim | 0.7804 | 0.7799 | 0.7790 | 0.7719 | 0.7196 | 0.4777 |
| | | all | 0.8473 | 0.8475 | 0.8484 | 0.8407 | 0.7936 | 0.5689 |
| Xception | org | org | 0.8842 | 0.8837 | 0.8855 | 0.8794 | 0.8428 | 0.6524 |
| | | sim | 0.8514 | 0.8518 | 0.8512 | 0.8503 | 0.8152 | 0.6325 |
| | | all | 0.8949 | 0.8945 | 0.8946 | 0.8897 | 0.8532 | 0.6877 |
| | sim | org | 0.8639 | 0.8638 | 0.8643 | 0.8553 | 0.8118 | 0.6085 |
| | | sim | 0.8620 | 0.8627 | 0.8616 | 0.8591 | 0.8102 | 0.6156 |
| | | all | 0.8898 | 0.8895 | 0.8901 | 0.8856 | 0.8377 | 0.6519 |
| ResNet | org | org | 0.8073 | 0.8074 | 0.8078 | 0.7992 | 0.7555 | 0.5308 |
| | | sim | 0.7479 | 0.7485 | 0.7490 | 0.7537 | 0.7096 | 0.4904 |
| | | all | 0.8610 | 0.8611 | 0.8605 | 0.8593 | 0.8198 | 0.6349 |
| | sim | org | 0.7834 | 0.7835 | 0.7838 | 0.7762 | 0.7171 | 0.4918 |
| | | sim | 0.7539 | 0.7540 | 0.7572 | 0.7573 | 0.6999 | 0.4799 |
| | | all | 0.8598 | 0.8600 | 0.8607 | 0.8590 | 0.8111 | 0.6193 |
| ResNeXt | org | org | 0.8412 | 0.8407 | 0.8426 | 0.8387 | 0.8083 | 0.6170 |
| | | sim | 0.8189 | 0.8190 | 0.8188 | 0.8176 | 0.7810 | 0.5695 |
| | | all | 0.8678 | 0.8679 | 0.8683 | 0.8647 | 0.8354 | 0.6616 |
| | sim | org | 0.8232 | 0.8235 | 0.8226 | 0.8172 | 0.7748 | 0.5544 |
| | | sim | 0.8276 | 0.8275 | 0.8260 | 0.8246 | 0.7794 | 0.5563 |
| | | all | 0.8619 | 0.8621 | 0.8611 | 0.8594 | 0.8266 | 0.6428 |
| SE-ResNeXt | org | org | 0.8445 | 0.8446 | 0.8443 | 0.8361 | 0.7856 | 0.5685 |
| | | sim | 0.7909 | 0.7910 | 0.7896 | 0.7839 | 0.7413 | 0.5734 |
| | | all | 0.8702 | 0.8704 | 0.8693 | 0.8621 | 0.8272 | 0.6070 |
| | sim | org | 0.8183 | 0.8177 | 0.8177 | 0.8063 | 0.7457 | 0.5179 |
| | | sim | 0.8076 | 0.8075 | 0.8050 | 0.7998 | 0.7474 | 0.5537 |
| | | all | 0.8688 | 0.8688 | 0.8684 | 0.8589 | 0.8069 | 0.5787 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.