Submitted: 13 April 2026

Posted: 14 April 2026

Abstract

Keywords:

1. Introduction

2. Scope, Search Strategy, and Positioning of This Review

2.1. Literature Search and Selection Strategy

2.2. Terminology Used in This Review

2.3. What Distinguishes This Review from Recent Reviews

| Period covered | Main scope | Environmental integration covered? | Phenomics integration covered? | Deployment/validation focus? | Distinctive focus relative to the present review |
|---|---|---|---|---|---|
| Broad methodological literature to 2022 [1] | Deep learning for crop genomic selection with environmental data | Yes | Indirect | Limited | Broad model survey; less emphasis on 2023-2026 comparative multimodal evidence and deployment framing |
| Historical genomic-selection literature to 2023 [2] | General genomic selection for crop improvement | Partial | Limited | Limited | Genomic-selection background; less specific emphasis on multi-environment prediction under explicit G×E and TPE logic |
| Historical drone-phenotyping literature to 2023 [5] | Drone imaging and phenotyping for breeding | Indirect | Yes | Limited | Sensor-platform overview; less emphasis on whether phenomics alters breeder-relevant prediction |
| Broad AI literature to 2023 [7] | AI methods across crop science | Partial | Partial | Limited | Broad AI coverage; less specific emphasis on multimodal prediction for breeding deployment |
| Historical field-phenotyping literature to 2024 [9] | Field crop phenotyping methods and trajectories | Indirect | Yes | Limited | Phenomics context; less explicit integration with genomic and environmental prediction |
| Historical genomic-selection literature to 2024 [10] | Applications and prospects of genomic selection | Partial | Limited | Partial | Breeding background; less emphasis on recent deployment scenarios, baseline choice, and reporting standards |

3. Why Multi-Environment Genomic Prediction Has Become a Bottleneck

3.1. Prediction Targets in Breeding Are Deployment Specific

3.2. Why Marker-Only Models Can Underperform for Environmentally Contingent Traits

3.3. The Target Population of Environments Is Not a Background Concept

4. Environmental Representation: Envirotyping, Enviromics, and Crop Context

4.1. What Counts as Useful Environmental Information

4.2. Envirotyping and Enviromics Should Not Be Conflated

4.3. Feature Engineering Versus Sequence-Based Environmental Encoding

4.4. Crop Growth Models and Ecophysiological Mediation

4.5. Evidence from Representative Recent Studies

| Crop/trait(s) | Study scale | Data layers | Model family | Comparator baseline | Best reported value / gain | Deployment stage |
|---|---|---|---|---|---|---|
| Sesame; 9 agronomic traits | Diversity panel; 2 seasons [93] | Markers + MET field data | GBLUP, Bayes, RKHS, marker×environment | Single-environment models | 15%-58% improvement in predictive ability, under multi-environment analyses relative to single-environment models | Early-to-mid stage MET support |
| Grain sorghum hybrids; hybrid performance | US sorghum production environments [32] | Markers + envirotype typologies | Hierarchical Bayesian reaction norms | Alternative envirotype and relationship structures in the same study | Study-specific qualitative improvement in new-environment prediction, relative to alternative envirotype and relationship structures in the same study; no single pooled number reported here | Sparse hybrid MET support |
| Maize; multi-trial performance | 4,402 varieties; 195 trials; 87.1% missing [37] | Markers + environmental covariates | MegaLMM with environmental regressions on latent factors | Univariate GBLUP | Study-specific qualitative improvement in new-environment prediction under extreme missingness, relative to univariate GBLUP; no single pooled number reported here | Large-network sparse testing |
| Field pea; seed protein and seed yield | 300 candidates; 3 contrasting environments [94] | Markers + multi-trait multi-environment phenotypes | MTME genomic prediction | Additive G-BLUP | Study-specific qualitative improvement in whole- and split-environment prediction, relative to additive G-BLUP; no single pooled number reported here | Preliminary MET support |
| Maize; grain yield | Large multi-environment trial dataset [34] | Markers + engineered environmental descriptors | Tree-based ML G+E and GEI models | Factor-analytic multiplicative mixed model | Up to 7% improvement in mean prediction accuracy, under the authors' study-specific CV settings relative to a factor-analytic multiplicative mixed model | Mid-to-late stage MET prediction |
| Maize hybrids; grain moisture and grain yield | 2,126 hybrids; 34 environments; 9,355 SNPs [35] | Markers + 19 climatic factors / reduced climate sets | GBLUP-GE variants | GBLUP and reduced-climate GBLUP-GE variants | Prediction accuracy of 0.731 for grain moisture and 0.331 for grain yield, under cross-region and 10-fold validation for the full GBLUP-GE19CF model | Regional MET recommendation |
| Maize, rice, and wheat; agronomic traits | Benchmark-scale multi-crop datasets [44] | Markers + daily environmental sequences | GEFormer with gMLP, dynamic convolution, and attention | 6 statistical and 4 ML comparators | Study-specific qualitative improvement in the hardest genotype/environment withholding settings, relative to six statistical and four ML comparators; no single pooled number reported here | Hard extrapolation benchmarking |
| Maize hybrids; plasticity, stability, and genomic prediction | Large multi-environment hybrid dataset [45] | Markers + reduced environmental parameters + trait-associated markers | AutoML framework | Marker-only genome-wide models | 14.02%-28.42% improvement in predictive ability, under the authors' study-specific genomic prediction settings relative to marker-only genome-wide models | Climate-adaptive hybrid selection |

| Crop/trait(s) | Study scale | Data layers | Model family | Comparator baseline | Best reported value / gain | Deployment stage |
|---|---|---|---|---|---|---|
| Winter wheat; grain yield | Winter wheat breeding dataset [95] | Genomic inputs + UAS-derived phenotypes | Genomic-only, phenotypic-only, and combined models | Genomic-only and phenotypic-only models | Study-specific qualitative improvement in combined-genomic-plus-UAS prediction, relative to genomic-only and phenotypic-only models; no single pooled number reported here | Advanced yield testing |
| Winter wheat; grain yield | 2,994 lines; 2 sites; 2 years [28] | Markers + multispectral, hyperspectral, and visual phenomics | Phenomic-only, genomic-only, and combined models | Genomic-only and best phenomic-only models | Phenomic-only R² about 0.39-0.47, with combined models improving 6%-12% over the best phenomic-only model in cross-location prediction | Advanced yield testing |
| Coffea canephora; yield | Diverse population; 2 locations; 4 harvest seasons [87] | Genomic markers + NIR-based phenomics | Genomic selection vs phenomic selection | Genomic-only and phenomic-only predictors | Study-specific qualitative competitive performance of NIR-based phenomic predictors, relative to genomic-only predictors in within- and across-location prediction; no single pooled number reported here | Perennial selection support |
| Eucalyptus; multiple agronomic traits | Tree breeding populations adapted to arid environments [78] | SNP markers + spectral phenomics | MLP, CNN, and Bayesian models | Bayesian alphabet models | Prediction accuracy of 0.13-0.80 for MLP and 0.16-0.82 for CNN, relative to 0.08-0.66 for Bayesian models across traits | Tree breeding trait support |
| Winter wheat; grain yield | 4,094 genotypes; 11,593 plots; 2019-2022 [59] | Markers + UAS spectral reflectance indices | Univariate and multivariate genomic prediction | Base genomic prediction control | At least 16% higher prediction accuracy than the genomic control, when test-year NDVI was available under leave-one-year-out validation; cross-year reliability remained limited | Late-stage seasonal decision support |
| Sesame; longitudinal traits and yield | Diversity panel over growing seasons [75] | Markers + temporal high-throughput phenotyping | Random regression, longitudinal GP, multi-trait GP | Single-trait longitudinal analysis | Study-specific qualitative improvement in future-phenotype forecasting and multi-trait prediction, relative to single-trait longitudinal analysis; no single pooled number reported here | Early repeated-phenotyping selection |

4.6. Environmental Extrapolation Remains Conditional

5. AI and Statistical Learning Architectures: What the Recent Evidence Actually Supports

5.1. Strong Baselines Still Define the Standard of Proof

5.2. When Machine Learning Adds Value

5.3. Where Deep Learning Is Most Credible

5.4. Interpretation, Uncertainty, and the Credibility of Model Choice

5.5. Benchmark Hygiene, Leakage, and Fair Comparison

| Issue | Typical manifestation | When most severe | Practical response |
|---|---|---|---|
| Kinship leakage [37] | Closely related genotypes occur in both training and test folds | Family-structured breeding populations | Use family-aware splits or pedigree/genomic relationship constraints |
| Environmental leakage [26] | Training and test sets share near-duplicate year-location contexts | Repeated trial networks and short time spans | Use leave-one-environment, leave-one-year, or site-withholding designs |
| Timing leakage [59,60,75] | Late-season phenomics or weather summaries are used for early-stage claims | Operationally compressed breeding timelines | State explicitly when each data layer becomes available |
| Misaligned environmental covariates [19,26,33,34,38] | Raw weather tables are added without stage alignment | Traits tied to developmental windows | Use stage-aware envirotyping or crop-model-informed summaries |
| Severe missing-data burden [37,80,81,94] | Sparse genotype-environment matrices distort apparent gains | Network trials and sparse testing | Report missingness pattern and compare against sparse-data-aware baselines |
| Weak baseline choice [34,37,42,43,44] | AI models are compared only with marker-only baselines | Method-comparison papers | Benchmark against strong factor-analytic, reaction-norm, or mixed-model baselines |
| Unclear decision framing [37,44,45,53,59] | Accuracy is reported without deployment stage, uncertainty, or cost context | Late-stage recommendation or expensive field validation | Report scenario, uncertainty, and deployment use-case together |
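The leave-one-environment and family-aware responses listed in the table above can be illustrated with a minimal sketch. It is not taken from any of the cited studies: the record layout, the field names (`env`, `family`), and the helper `leave_one_environment_out` are all hypothetical, and dropping every training row that shares a family with the held-out fold is only one simple way to impose a relationship constraint.

```python
from collections import defaultdict


def leave_one_environment_out(records, env_key="env", family_key=None):
    """Yield (held_out_env, train_idx, test_idx), one split per environment.

    records    : list of dicts, one per plot-level observation (hypothetical layout).
    env_key    : field naming the year-location environment.
    family_key : optional field naming the family; when given, training rows
                 whose family also occurs in the held-out fold are dropped so
                 that close relatives do not sit on both sides of the split.
    """
    # Group row indices by environment.
    by_env = defaultdict(list)
    for i, rec in enumerate(records):
        by_env[rec[env_key]].append(i)

    for env in sorted(by_env):
        test_idx = by_env[env]
        held_out = set(test_idx)
        # Environmental constraint: no row from the held-out environment in training.
        train_idx = [i for i in range(len(records)) if i not in held_out]
        if family_key is not None:
            # Kinship constraint: remove training rows from families seen in the test fold.
            test_families = {records[i][family_key] for i in test_idx}
            train_idx = [i for i in train_idx
                         if records[i][family_key] not in test_families]
        yield env, train_idx, test_idx


# Toy data (invented for illustration only).
records = [
    {"genotype": "G1", "family": "F1", "env": "SiteA_2021"},
    {"genotype": "G2", "family": "F1", "env": "SiteA_2021"},
    {"genotype": "G3", "family": "F2", "env": "SiteB_2021"},
    {"genotype": "G1", "family": "F1", "env": "SiteB_2021"},
    {"genotype": "G4", "family": "F3", "env": "SiteA_2022"},
    {"genotype": "G3", "family": "F2", "env": "SiteA_2022"},
]

for env, train_idx, test_idx in leave_one_environment_out(records, family_key="family"):
    print(env, "train:", train_idx, "test:", test_idx)
```

In this toy case the family constraint shrinks the training set noticeably, which is the trade-off such designs make: a harder and more honest test at the cost of fewer training rows. A leave-one-year or site-withholding variant would follow the same pattern with a different grouping field.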

5.6. Minimum Reporting Recommendations for Future Studies

6. Phenomics-Assisted and Multimodal Prediction

6.1. Why Phenomic Markers Are Not Redundant with Genomic Markers

6.2. Timing of Phenomic Acquisition Matters as Much as Sensor Quality

6.3. Temporal Phenotyping Changes the Prediction Problem

6.4. Multimodal Fusion Is Promising, But Not All Data Layers Earn Their Cost

6.5. Interpreting Cases Where Genomic and Phenomic Signals May Diverge

7. From Prediction Accuracy to Breeding Use

7.1. Validation Design Must Mirror the Breeding Question

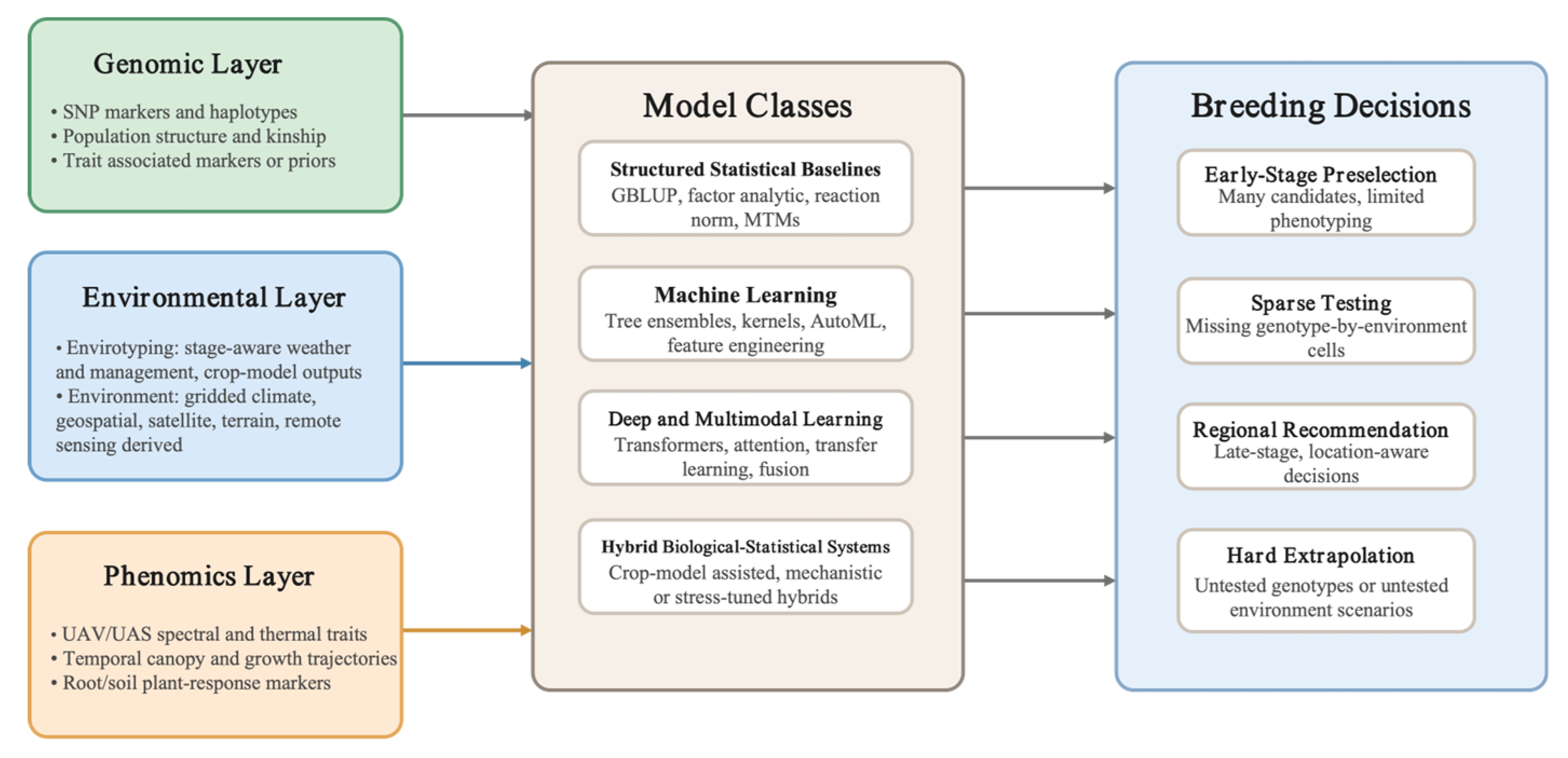

7.2. Breeding Stage Determines Which Model Family Is Realistic

7.3. Uncertainty and Economic Decision Value Should Be Reported Together

7.4. A Practical Framework for Stage-Specific Deployment

7.5. Practical Design Rules for Readers and Future Authors

8. Current Limitations and Priorities for the Next Phase

9. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Sheikh Jubair; Michael Domaratzki. Crop genomic selection with deep learning and environmental data: A survey. Frontiers in Artificial Intelligence 2023, 5, 1040295-1040295. [CrossRef]

- Rabiya Parveen; Mankesh Kumar; Swapnil Swapnil; Digvijay Singh; Monika Shahani; Zafar Imam; Jyoti Prakash Sahoo. Understanding the genomic selection for crop improvement: current progress and future prospects. Molecular Genetics and Genomics 2023, 298(4), 813-821. [CrossRef]

- Jason Walsh; Eleni Mangina; Sónia Negrão. Advancements in Imaging Sensors and AI for Plant Stress Detection: A Systematic Literature Review. Plant Phenomics 2024, 6, 0153-0153. [CrossRef]

- Andekelile Mwamahonje; Zamu Mdindikasi; Devotha Mchau; Emmanuel T. Mwenda; Daines Nicodem Sanga; Ana Luísa Garcia-Oliveira; Chris O. Ojiewo. Advances in Sorghum Improvement for Climate Resilience in the Global Arid and Semi-Arid Tropics: A Review. Agronomy 2024, 14(12), 3025-3025. [CrossRef]

- Boubacar Gano; Sourav Bhadra; Justin M. Vilbig; Nurzaman Ahmed; Vasit Sagan; Nadia Shakoor. Drone-based imaging sensors, techniques, and applications in plant phenotyping for crop breeding: A comprehensive review. The Plant Phenome Journal 2024, 7(1). [CrossRef]

- Lixia Sun; Mingyu Lai; Fozia Ghouri; Muhammad Amjad Nawaz; Fawad Ali; Faheem Shehzad Baloch; Muhammad Azhar Nadeem; Muhammad Aasım; Muhammad Qasim Shahid. Modern Plant Breeding Techniques in Crop Improvement and Genetic Diversity: From Molecular Markers and Gene Editing to Artificial Intelligence—A Critical Review. Plants 2024, 13(19), 2676-2676. [CrossRef]

- Suvojit Bose; Saptarshi Banerjee; Soumya Kumar; Akash Saha; Debalina Nandy; Soham Hazra. Review of applications of artificial intelligence (AI) methods in crop research. Journal of Applied Genetics 2024, 65(2), 225-240. [CrossRef]

- Guilong Lu; Purui Liu; Qibin Wu; Shuzhen Zhang; Peifang Zhao; Yuebin Zhang; Youxiong Que. Sugarcane breeding: a fantastic past and promising future driven by technology and methods. Frontiers in Plant Science 2024, 15, 1375934-1375934. [CrossRef]

- Lukas Roth; Afef Marzougui; Achim Walter. A review of the journey of field crop phenotyping: From trait stamp collections and fancy robots to phenomics-informed crop performance predictions. Journal of Plant Physiology 2025, 311, 154542-154542. [CrossRef]

- Diana M. Escamilla; Dongdong Li; Karlene L. Negus; Kiara L. Kappelmann; Aaron Kusmec; Adam Vanous; Patrick S. Schnable; Xianran Li; Jianming Yu. Genomic selection: Essence, applications, and prospects. The Plant Genome 2025, 18(2), e70053-e70053. [CrossRef]

- Ana Luísa Garcia-Oliveira; Sangam L. Dwivedi; Subhash Chander; Charles Nelimor; Diaa Abd El Moneim; Rodomiro Ortíz. Breeding Smarter: Artificial Intelligence and Machine Learning Tools in Modern Breeding—A Review. Agronomy 2026, 16(1), 137-137. [CrossRef]

- Shaoming Huang; Krishna Kishore Gali; Gene Arganosa; Bunyamin Tar'an; Rosalind Bueckert; Thomas D. Warkentin. Breeding indicators for high-yielding field pea under normal and heat stress environments. Canadian Journal of Plant Science 2023, 103(3), 259-269. [CrossRef]

- Carlos A. Robles-Zazueta; Leonardo Crespo-Herrera; Francisco J. Piñera-Chávez; Carolina Rivera-Amado; Guðbjörg I. Aradóttir. Climate change impacts on crop breeding: Targeting interacting biotic and abiotic stresses for wheat improvement. The Plant Genome 2023, 17(1), e20365-e20365. [CrossRef]

- Jacob van Etten; Kauê de Sousa; Jill E. Cairns; Matteo Dell’Acqua; Carlo Fadda; Davíd Güereña; Joost van Heerwaarden; Teshale Assefa; Rhys Manners; Anna Müller. Data-driven approaches can harness crop diversity to address heterogeneous needs for breeding products. Proceedings of the National Academy of Sciences 2023, 120(14), e2205771120-e2205771120. [CrossRef]

- Osval A. Montesinos-López; Leonardo Crespo-Herrera; Carolina Saint Pierre; Alison R. Bentley; Roberto de la Rosa-Santamaria; José Alejandro Ascencio-Laguna; Afolabi Agbona; Guillermo Gerard; Abelardo Montesinos-López; José Crossa. Do feature selection methods for selecting environmental covariables enhance genomic prediction accuracy? Frontiers in Genetics 2023, 14, 1209275-1209275. [CrossRef]

- J. R. Adams; Michiel E. de Vries; Fred A. van Eeuwijk. Efficient Genomic Prediction of Yield and Dry Matter in Hybrid Potato. Plants 2023, 12(14), 2617-2617. [CrossRef]

- Yoselin Benitez-Alfonso; Beth K Soanes; Sibongile Zimba; Besiana Sinanaj; Liam German; Vinay Sharma; Abhishek Bohra; Anastasia Kolesnikova; Jessica Dunn; Azahara C. Martín. Enhancing climate change resilience in agricultural crops. Current Biology 2023, 33(23), R1246-R1261. [CrossRef]

- Tingting Guo; Jialu Wei; Xianran Li; Jianming Yu. Environmental context of phenotypic plasticity in flowering time in sorghum and rice. Journal of Experimental Botany 2023, 75(3), 1004-1015. [CrossRef]

- Chloé Elmerich; Michel-Pierre Faucon; M. A. García; Patrice Jeanson; Guénolé Boulch; Bastien Lange. Envirotyping to control genotype x environment interactions for efficient soybean breeding. Field Crops Research 2023, 303, 109113-109113. [CrossRef]

- Artūrs Katamadze; Omar Vergara-Díaz; Estefanía Uberegui; Ander Yoldi-Achalandabaso; J. L. Araus; Rubén Vicente. Evolution of wheat architecture, physiology, and metabolism during domestication and further cultivation: Lessons for crop improvement. The Crop Journal 2023, 11(4), 1080-1096. [CrossRef]

- Hans-Peter Piepho; Justin Blancon. Extending Finlay–Wilkinson regression with environmental covariates. Plant Breeding 2023, 142(5), 621-631. [CrossRef]

- Mark Cooper; Owen Powell; Carla Gho; Tom Tang; Carlos D. Messina. Extending the breeder’s equation to take aim at the target population of environments. Frontiers in Plant Science 2023, 14, 1129591-1129591. [CrossRef]

- Jeffrey B. Endelman. Fully efficient, two-stage analysis of multi-environment trials with directional dominance and multi-trait genomic selection. Theoretical and Applied Genetics 2023, 136(4), 65-65. [CrossRef]

- Ashok Singamsetti; P.H. Zaidi; K. Seetharam; Madhumal Thayil Vinayan; Tiago Olivoto; Anima Mahato; Kartik Madankar; Munnesh Kumar; Kumari Shikha. Genetic gains in tropical maize hybrids across moisture regimes with multi-trait-based index selection. Frontiers in Plant Science 2023, 14, 1147424-1147424. [CrossRef]

- Paul Adunola; Maria Amélia Gava Ferrão; Romário Gava Ferrão; Aymbiré Francisco Almeida da Fonseca; P. S. Volpi; Marcone Comério; Abraão Carlos Verdin Filho; Patricio Muñoz; Luís Felipe V. Ferrão. Genomic selection for genotype performance and environmental stability in Coffea canephora. G3 Genes Genomes Genetics 2023, 13(6). [CrossRef]

- Marco Lopez-Cruz; Fernando Aguate; Jacob D. Washburn; Natalia de León; Shawn M. Kaeppler; Dayane Cristina Lima; Ruijuan Tan; Addie Thompson; Laurence Willard De La Bretonne; Gustavo de los Campos. Leveraging data from the Genomes-to-Fields Initiative to investigate genotype-by-environment interactions in maize in North America. Nature Communications 2023, 14(1), 6904-6904. [CrossRef]

- Văn Hiếu Nguyễn; Rose Imee Zhella Morantte; Vitaliano Lopena; Holden Verdeprado; Rosemary Murori; Alexis Ndayiragije; Sanjay Katiyar; Md Rafiqul Islam; Roselyne U. Juma; H. Flandez-Galvez. Multi-environment Genomic Selection in Rice Elite Breeding Lines. Rice 2023, 16(1). [CrossRef]

- Robert Jackson; Jaap B. Buntjer; Alison R. Bentley; Jacob Lage; Ed Byrne; Chris Burt; Peter Jack; Simon Berry; Edward Flatman; Bruno Poupard. Phenomic and genomic prediction of yield on multiple locations in winter wheat. Frontiers in Genetics 2023, 14, 1164935-1164935. [CrossRef]

- Carlos D. Messina; Carla Gho; Graeme Hammer; Tom Tang; Mark Cooper. Two decades of harnessing standing genetic variation for physiological traits to improve drought tolerance in maize. Journal of Experimental Botany 2023, 74(16), 4847-4861. [CrossRef]

- Diriba Tadese; Hans-Peter Piepho; Jens Hartung. Accuracy of prediction from multi-environment trials for new locations using pedigree information and environmental covariates: the case of sorghum (Sorghum bicolor (L.) Moench) breeding. Theoretical and Applied Genetics 2024, 137(8), 181-181. [CrossRef]

- Alper Adak; Seth C. Murray; Jacob D. Washburn. Deciphering temporal growth patterns in maize: integrative modeling of phenotype dynamics and underlying genomic variations. New Phytologist 2024, 242(1), 121-136. [CrossRef]

- Noah D. Winans; Jales M. O. Fonseca; Ramasamy Perumal; Patricia E. Klein; Robert R. Klein; William L. Rooney. Envirotyping can increase genomic prediction accuracy of new environments in grain sorghum trials depending on mega-environment. Crop Science 2024, 64(5), 2519-2533. [CrossRef]

- Rafael T Resende; Lee T. Hickey; Cibele Hummel do Amaral; Lucas Lemes de Souza Peixoto; Gustavo Eduardo Marcatti; Yunbi Xu. Satellite-enabled enviromics to enhance crop improvement. Molecular Plant 2024, 17(6), 848-866. [CrossRef]

- Igor Kuivjogi Fernandes; Caio Canella Vieira; Kaio Olímpio das Graças Dias; Samuel B. Fernandes. Using machine learning to combine genetic and environmental data for maize grain yield predictions across multi-environment trials. Theoretical and Applied Genetics 2024, 137(8), 189-189. [CrossRef]

- Jingxin Wang; Liwei Liu; Kunhui He; Takele Weldu Gebrewahid; Shang Gao; Qiu Tian; Zhanyi Li; Yiqun Song; Y. Y. Guo; Yanwei Li. Accurate genomic prediction for grain yield and grain moisture content of maize hybrids using multi-environment data. Journal of Integrative Plant Biology 2025, 67(5), 1379-1394. [CrossRef]

- Fatma Ozair; Alper Adak; Seth C. Murray; Ryan Timothy Alpers; Alejandro Castro Aviles; Dayane Cristina Lima; Jode W. Edwards; David Ertl; Michael A. Gore; Candice N. Hirsch. Phenotypic plasticity in maize grain yield: Genetic and environmental insights of response to environmental gradients. The Plant Genome 2025, 18(3), e70078-e70078. [CrossRef]

- Haixiao Hu; Renaud Rincent; Daniel E. Runcie. MegaLMM improves genomic predictions in new environments using environmental covariates. Genetics 2024, 229(1), 1-41. [CrossRef]

- Abdulqader Jighly; Anna Weeks; Brendan Christy; Garry J. O’Leary; Surya Kant; Rajat Aggarwal; David Hessel; Kerrie Forrest; Frank Technow; Josquin Tibbits. Integrating biophysical crop growth models and whole genome prediction for their mutual benefit: a case study in wheat phenology. Journal of Experimental Botany 2023, 74(15), 4415-4426. [CrossRef]

- Pratishtha Poudel; Bryan Naidenov; Charles Chen; Phillip D. Alderman; Stephen M. Welch. Integrating genomic prediction and genotype specific parameter estimation in ecophysiological models: overview and perspectives. in silico Plants 2023, 5(1). [CrossRef]

- Freddy Mora; Carlos Maldonado; Luma Alana Vieira Henrique; Renan Santos Uhdre; Carlos Alberto Scapim; Claudete Aparecida Mangolim. Multi-trait and multi-environment genomic prediction for flowering traits in maize: a deep learning approach. Frontiers in Plant Science 2023, 14, 1153040-1153040. [CrossRef]

- Abelardo Montesinos-López; Carolina Rivera; Francisco Pinto; Francisco Piñera; David S. González-González; Matthew Reynolds; Paulino Pérez-Rodríguez; Huihui Li; Osval A. Montesinos-López; José Crossa. Multimodal deep learning methods enhance genomic prediction of wheat breeding. G3 Genes Genomes Genetics 2023, 13(5). [CrossRef]

- Admas Alemu; Johanna Åstrand; Osval A. Montesinos-López; Julio Isidro y Sánchez; Javier Fernández-González; Wuletaw Tadesse; Ramesh R. Vetukuri; Anders S. Carlsson; Alf Ceplitis; José Crossa. Genomic selection in plant breeding: Key factors shaping two decades of progress. Molecular Plant 2024, 17(4), 552-578. [CrossRef]

- Qi-Xin Zhang; Tianneng Zhu; Lin Feng; Dunhuang Fang; Xuejun Chen; Xiang-Yang Lou; Zhijun Tong; Bingguang Xiao; Haiming Xu. mmGEBLUP: an advanced genomic prediction scheme for genetic improvement of complex traits in crops through integrative analysis of major genes, polygenes, and genotype–environment interactions. Briefings in Bioinformatics 2024, 26(1). [CrossRef]

- Yao Zhou; Ming Yao; Chuang Wang; Ke Li; Junhao Guo; Yingjie Xiao; Jianbing Yan; Jianxiao Liu. GEFormer: A genotype-environment interaction-based genomic prediction method that integrates the gating multilayer perceptron and linear attention mechanisms. Molecular Plant 2025, 18(3), 527-549. [CrossRef]

- Kunhui He; Tingxi Yu; Shang Gao; Shoukun Chen; Liang Li; Xuecai Zhang; Changling Huang; Yunbi Xu; Jiankang Wang; B. M. Prasanna. Leveraging Automated Machine Learning for Environmental Data-Driven Genetic Analysis and Genomic Prediction in Maize Hybrids. Advanced Science 2025, 12(17), e2412423-e2412423. [CrossRef]

- Cuiling Wu; Yiyi Zhang; Zhiwen Ying; Ling Li; Jun Wang; Hui Yu; Mengchen Zhang; Xianzhong Feng; Xinghua Wei; Xiaogang Xu. A transformer-based genomic prediction method fused with knowledge-guided module. Briefings in Bioinformatics 2023, 25(1). [CrossRef]

- Wanjie Feng; Pengfei Gao; Xutong Wang. AI breeder: Genomic predictions for crop breeding. New Crops 2023, 1, 100010-100010. [CrossRef]

- Mohsen Yoosefzadeh-Najafabadi; Sepideh Torabi; Dan Tulpan; Istvan Rajcan; Milad Eskandari. Application of SVR-Mediated GWAS for Identification of Durable Genetic Regions Associated with Soybean Seed Quality Traits. Plants 2023, 12(14), 2659-2659. [CrossRef]

- Dwaipayan Sinha; Arun Kumar Maurya; Gholamreza Abdi; Muhammad Majeed; Rachna Agarwal; Rashmi Mukherjee; Sharmistha Ganguly; Robina Aziz; Manika Bhatia; Aqsa Majgaonkar. Integrated Genomic Selection for Accelerating Breeding Programs of Climate-Smart Cereals. Genes 2023, 14(7), 1484-1484. [CrossRef]

- Wanchao Zhu; Rui Han; Xiaoyang Shang; Tao Zhou; Chengyong Liang; Xiaomeng Qin; Hong Chen; Zaiwen Feng; Hongwei Zhang; Xingming Fan. The CropGPT project: Call for a global, coordinated effort in precision design breeding driven by AI using biological big data. Molecular Plant 2023, 17(2), 215-218. [CrossRef]

- Xiaoding Ma; Hao Wang; Shengyang Wu; Bing Han; Di Cui; Jin Liu; Qiang Zhang; Xiuzhong Xia; Peng Song; Cuifeng Tang. DeepCCR: large-scale genomics-based deep learning method for improving rice breeding. Plant Biotechnology Journal 2024, 22(10), 2691-2693. [CrossRef]

- Hai Wang; Mengjiao Chen; Xin Wei; Rui Xia; Dong Pei; Xuehui Huang; Bin Han. Computational tools for plant genomics and breeding. Science China Life Sciences 2024, 67(8), 1579-1590. [CrossRef]

- Hao Wang; Shen Yan; Wenxi Wang; Yongming Cheng; Jingpeng Hong; Qiang He; Xianmin Diao; Yunan Lin; Yanqing Chen; Yongsheng Cao. Cropformer: An interpretable deep learning framework for crop genomic prediction. Plant Communications 2024, 6(3), 101223-101223. [CrossRef]

- Jinchen Li; Zikang He; Guomin Zhou; Shen Yan; Jianhua Zhang. DeepAT: A Deep Learning Wheat Phenotype Prediction Model Based on Genotype Data. Agronomy 2024, 14(12), 2756-2756. [CrossRef]

- Kai Tong; Xiaojing Chen; Shen Yan; Liangli Dai; Yuxue Liao; Zhaoling Li; Ting Wang. PlantMine: A Machine-Learning Framework to Detect Core SNPs in Rice Genomics. Genes 2024, 15(5), 603-603. [CrossRef]

- Jinlong Li; Dongfeng Zhang; Feng Yang; Qiusi Zhang; Shouhui Pan; Xiangyu Zhao; Qi Zhang; Yanyun Han; Jinliang Yang; Kaiyi Wang. TrG2P: A transfer-learning-based tool integrating multi-trait data for accurate prediction of crop yield. Plant Communications 2024, 5(7), 100975-100975. [CrossRef]

- Hao Wu; Rui Han; Liang Zhao; Mengyao Liu; Hong Chen; Weifu Li; Lin Li. AutoGP: An intelligent breeding platform for enhancing maize genomic selection. Plant Communications 2025, 6(4), 101240-101240. [CrossRef]

- Ran Li; Dongfeng Zhang; Yanyun Han; Zhongqiang Liu; Qiusi Zhang; Qi Zhang; Xiaofeng Wang; Shouhui Pan; Jiahao Sun; Kaiyi Wang. Hybrid Deep Learning Approaches for Improved Genomic Prediction in Crop Breeding. Agriculture 2025, 15(11), 1171-1171. [CrossRef]

- Andrew W. Herr; Peter Schmuker; Arron H. Carter. Large-scale breeding applications of unoccupied aircraft systems enabled genomic prediction. The Plant Phenome Journal 2024, 7(1). [CrossRef]

- David Hobby; Alain J Mbebi; Zoran Nikoloski. Towards genetic architecture and genomic prediction of crop traits from time-series data: Challenges and breakthroughs. Journal of Plant Physiology 2025, 312, 154566-154566. [CrossRef]

- Chenji Zhang; Sirong Jiang; Yangyang Tian; Xiaorui Dong; Jianjia Xiao; Yanjie Lu; Tiyun Liang; Hongmei Zhou; Dabin Xu; Han Zhang. Smart breeding driven by advances in sequencing technology. Modern Agriculture 2023, 1(1), 43-56. [CrossRef]

- Muhammad Ahtasham Mushtaq; Hafiz Ghulam Muhu-Din Ahmed; Yawen Zeng. Applications of Artificial Intelligence in Wheat Breeding for Sustainable Food Security. Sustainability 2024, 16(13), 5688-5688. [CrossRef]

- Jiayi Fu; S.Q. Zheng; Longjiang Fan; Xiaoming Zheng; Qian Qian. Breeding 5.0: Artificial intelligence (AI)-decoded germplasm for accelerated crop innovation. Journal of Integrative Plant Biology 2025. [CrossRef]

- Yukang Zeng; Xiaoming Xu; Jiale Jiang; Shaohang Lin; Zehui Fan; Yao Meng; Ailijiang Maimaiti; Penghao Wu; Jiaojiao Ren. Genome-wide association analysis and genomic selection for leaf-related traits of maize. PLoS ONE 2025, 20(5), e0323140-e0323140. [CrossRef]

- My Abdelmajid Kassem. Harnessing Artificial Intelligence and Machine Learning for Identifying Quantitative Trait Loci (QTL) Associated with Seed Quality Traits in Crops. Plants 2025, 14(11), 1727-1727. [CrossRef]

- Donghyun Jeon; Yuna Kang; Solji Lee; Se-Hyun Choi; Yeonjun Sung; Tae-Ho Lee; Changsoo Kim. Digitalizing breeding in plants: A new trend of next-generation breeding based on genomic prediction. Frontiers in Plant Science 2023, 14, 1092584-1092584. [CrossRef]

- Javier Mendoza-Revilla; Evan Trop; Liam Gonzalez; Maša Roller; Hugo Dalla-Torre; Bernardo P. de Almeida; Guillaume Richard; Jonathan Caton; Nicolás López Carranza; Marcin J. Skwark. A foundational large language model for edible plant genomes. Communications Biology 2024, 7(1), 835-835. [CrossRef]

- Andrew Callister; Germano Costa-Neto; Ben P. Bradshaw; Stephen Elms; José Crossa; Jeremy Brawner. Enviromic prediction enables the characterization and mapping of Eucalyptus globulus Labill breeding zones. Tree Genetics & Genomes 2024, 20(1). [CrossRef]

- Saulo Fabrício da Silva Chaves; Michelle B. Damacena; Kaio Olimpio G. Dias; Caio Varonill de Almada Oliveira; Leonardo Lopes Bhering. Factor analytic selection tools and environmental feature-integration enable holistic decision-making in Eucalyptus breeding. Scientific Reports 2024, 14(1), 18429-18429. [CrossRef]

- Rafael Tassinari Resende; Alencar Xavier; Pedro Italo T. Silva; Marcela Pedroso Mendes; Diego Jarquín; Gustavo Eduardo Marcatti. GIS-based G × E modeling of maize hybrids through enviromic markers engineering. New Phytologist 2024, 245(1), 102-116. [CrossRef]

- Parisa Sarzaeim; Francisco Muñoz-Arriola; Diego Jarquín; Hasnat Aslam; Natalia de León. CLIM4OMICS: a geospatially comprehensive climate and multi-OMICS database for maize phenotype predictability in the United States and Canada. Earth System Science Data 2023, 15(9), 3963-3990. [CrossRef]

- Francisco Pinto; Mainassara Zaman-Allah; Matthew Reynolds; Urs Schulthess. Satellite imagery for high-throughput phenotyping in breeding plots. Frontiers in Plant Science 2023, 14, 1114670-1114670. [CrossRef]

- Hoa Thi Nguyen; Md. Arifur Rahman Khan; Thuong Thi Nguyen; Nhi Thi Pham; Nguyen Thi Anh Thu; Touhidur Rahman Anik; Minh Nguyen; Mao Li; Kien Huu Nguyen; Uttam Kumar Ghosh. Advancing Crop Resilience Through High-Throughput Phenotyping for Crop Improvement in the Face of Climate Change. Plants 2025, 14(6), 907-907. [CrossRef]

- Pengpeng Zhang; Jingyao Huang; Yuntao Ma; Xiujuan Wang; Mengzhen Kang; Youhong Song. Crop/Plant Modeling Supports Plant Breeding: II. Guidance of Functional Plant Phenotyping for Trait Discovery. Plant Phenomics 2023, 5, 0091-0091. [CrossRef]

- Idan Sabag; Ye Bi; Maitreya Mohan Sahoo; Ittai Herrmann; Gota Morota; Zvi Peleg. Leveraging genomics and temporal high-throughput phenotyping to enhance association mapping and yield prediction in sesame. The Plant Genome 2024, 17(3), e20481-e20481. [CrossRef]

- Claudia Aviles Toledo; Melba M. Crawford; Mitchell R. Tuinstra. Integrating multi-modal remote sensing, deep learning, and attention mechanisms for yield prediction in plant breeding experiments. Frontiers in Plant Science 2024, 15, 1408047-1408047. [CrossRef]

- Q. Zou; Shuaishuai Tai; Qi Yuan; Yang Nie; Heping Gou; Longfei Wang; Chuanxiu Li; Jing Yi; Fangchun Dong; Zhen Yue. Large-scale crop dataset and deep learning-based multi-modal fusion framework for more accurate G×E genomic prediction. Computers and Electronics in Agriculture 2024, 230, 109833-109833. [CrossRef]

- Freddy Mora-Poblete; Daniel Mieres-Castro; Antônio Teixeira do Amaral Júnior; Matías Balach; Carlos Maldonado. Integrating deep learning for phenomic and genomic predictive modeling of Eucalyptus trees. Industrial Crops and Products 2024, 220, 119151. [CrossRef]

- Yang Xu; Wenyan Yang; Jiayong Qiu; Kai Zhou; Guangning Yu; Yu-Xiang Zhang; Xin Wang; Yuxin Jiao; Xinyi Wang; Shujun Hu. Metabolic marker-assisted genomic prediction improves hybrid breeding. Plant Communications 2024, 6(3), 101199. [CrossRef]

- Julián García-Abadillo; Paul Adunola; Fernando Silva Aguilar; Jhon Henry Trujillo-Montenegro; John J. Riascos; Reyna Persa; Julio Isidro y Sánchez; Diego Jarquín. Sparse testing designs for optimizing predictive ability in sugarcane populations. Frontiers in Plant Science 2024, 15, 1400000. [CrossRef]

- Nelson Lubanga; Beatrice Elohor Ifie; Reyna Persa; Ibnou Dieng; Ismail Rabbi; Diego Jarquín. Sparse testing designs for optimizing resource allocation in multi-environment cassava breeding trials. The Plant Genome 2025, 18(1), e20558. [CrossRef]

- Rahul Kumar; Sankar Prasad Das; Burhan U. Choudhury; Amit Kumar; Nitish Ranjan Prakash; Ramlakhan Verma; Mridul Chakraborti; Ayam Gangarani Devi; Bijoya Bhattacharjee; Rekha Das. Advances in genomic tools for plant breeding: harnessing DNA molecular markers, genomic selection, and genome editing. Biological Research 2024, 57(1), 80. [CrossRef]

- Daniel Crozier; Fabian Leon; Jales M. O. Fonseca; Patricia E. Klein; Robert R. Klein; William L. Rooney. Inbred phenotypic data and non-additive effects can enhance genomic prediction models for hybrid grain sorghum. Crop Science 2023, 63(3), 1183-1196. [CrossRef]

- Paolo Annicchiarico; Abco J. de Buck; Dimitrios N. Vlachostergios; Dennis Heupink; Avraam Koskosidis; Nelson Nazzicari; Margherita Crosta. White Lupin Adaptation to Moderately Calcareous Soils: Phenotypic Variation and Genome-Enabled Prediction. Plants 2023, 12(5), 1139. [CrossRef]

- Johanna Åstrand; Firuz Odilbekov; Ramesh R. Vetukuri; Alf Ceplitis; Aakash Chawade. Leveraging genomic prediction to surpass current yield gains in spring barley. Theoretical and Applied Genetics 2024, 137(12), 260. [CrossRef]

- Zitong Li; Qian-Hao Zhu; Philippe Moncuquet; Iain W. Wilson; Danny Llewellyn; Warwick N. Stiller; Shiming Liu. Quantitative genomics-enabled selection for simultaneous improvement of lint yield and seed traits in cotton (Gossypium hirsutum L.). Theoretical and Applied Genetics 2024, 137(6), 142. [CrossRef]

- Paul Adunola; E Flores; E. M. Riva-Souza; Maria Amélia Gava Ferrão; João Felipe de Brites Senra; Marcone Comério; Marcelo Curitiba Espíndula; Abraão Carlos Verdin Filho; P. S. Volpi; Aymbiré Francisco Almeida da Fonseca. A comparison of genomic and phenomic selection methods for yield prediction in Coffea canephora. The Plant Phenome Journal 2024, 7(1). [CrossRef]

- Alem Gebremedhin; Yongjun Li; Arun S. K. Shunmugam; Shimna Sudheesh; Hossein Valipour Kahrood; Matthew Hayden; Garry M. Rosewarne; Sukhjiwan Kaur. Genomic selection for target traits in the Australian lentil breeding program. Frontiers in Plant Science 2024, 14, 1284781. [CrossRef]

- Alper Adak; Seth C. Murray; José Ignacio Varela; Valentina Infante; Jennifer Wilker; Claudia Irene Calderón; Nithya Subramanian; Natalia de León; Jianming Yu; Matthew A. Stull. Photoperiod associated late flowering reaction norm: Dissecting loci and genomic-enviromic associated prediction in maize. Field Crops Research 2024, 311, 109380. [CrossRef]

- Ali Raza; Shanza Bashir; Tushar Khare; Benjamin Karikari; Rhys G. R. Copeland; Monica Jamla; Saghir Abbas; Sidra Charagh; Spurthi N. Nayak; Ivica Djalović. Temperature-smart plants: A new horizon with omics-driven plant breeding. Physiologia Plantarum 2024, 176(1). [CrossRef]

- Javaid Akhter Bhat; Xianzhong Feng; Zahoor Ahmad Mir; Aamir Raina; Kadambot H. M. Siddique. Recent advances in artificial intelligence, mechanistic models, and speed breeding offer exciting opportunities for precise and accelerated genomics-assisted breeding. Physiologia Plantarum 2023, 175(4), e13969. [CrossRef]

- Troels Mouritzen; Katharina Meurer; Elesandro Bornhofen; Luc Janss; Martin Weih; Stig Uggerhøj Andersen. Faba bean genetics and crop growth models – progress to date and opportunities for integration. Plant and Soil 2025, 514(1), 47-64. [CrossRef]

- Idan Sabag; Ye Bi; Zvi Peleg; Gota Morota. Multi-environment analysis enhances genomic prediction accuracy of agronomic traits in sesame. Frontiers in Genetics 2023, 14, 1108416. [CrossRef]

- Rica Amor Saludares; Sikiru Adeniyi Atanda; Lisa Piche; Hannah Worral; Françoise Dalprá Dariva; Kevin McPhee; Nonoy Bandillo. Multi-trait multi-environment genomic prediction of preliminary yield trial in pulse crop. The Plant Genome 2024, 17(3), e20496. [CrossRef]

- Osval A. Montesinos-López; Andrew W. Herr; José Crossa; Arron H. Carter. Genomics combined with UAS data enhances prediction of grain yield in winter wheat. Frontiers in Genetics 2023, 14, 1124218. [CrossRef]

- Hermann Gregor Dallinger; Franziska Löschenberger; Herbert Bistrich; Christian Ametz; Herbert Hetzendorfer; Laura Morales; Sebastian Michel; Hermann Buerstmayr. Predictor bias in genomic and phenomic selection. Theoretical and Applied Genetics 2023, 136(11), 235. [CrossRef]

- Mohsen Yoosefzadeh-Najafabadi; Mohsen Hesami; Milad Eskandari. Machine Learning-Assisted Approaches in Modernized Plant Breeding Programs. Genes 2023, 14(4), 777. [CrossRef]

- Pengfei Gao; Haonan Zhao; Zheng Luo; Y.-T. Lin; Wanjie Feng; Yaling Li; Fanjiang Kong; Xia Li; Chao Fang; Xutong Wang. SoyDNGP: a web-accessible deep learning framework for genomic prediction in soybean breeding. Briefings in Bioinformatics 2023, 24(6). [CrossRef]

- Elżbieta Wójcik-Gront; Bartłomiej Zieniuk; Magdalena Pawełkowicz. Harnessing AI-Powered Genomic Research for Sustainable Crop Improvement. Agriculture 2024, 14(12), 2299. [CrossRef]

- Chaokun Yan; Jiabao Li; Qi Feng; Junwei Luo; Huimin Luo. ResDeepGS: A deep learning-based method for crop phenotype prediction. Methods 2025, 244, 65-74. [CrossRef]

- Rita Dublino; Maria Raffaella Ercolano. Artificial intelligence redefines agricultural genetics by unlocking the enigma of genomic complexity. The Crop Journal 2025, 13(5), 1350-1362. [CrossRef]

- Abu Saleh Muhammad Saimon; Mohammad Moniruzzaman; Md Shafiqul Islam; M. Ahmed; Md. Mizanur Rahaman; Sazzat Hossain; Mia Md Tofayel Gonee Manik. Integrating Genomic Selection and Machine Learning: A Data-Driven Approach to Enhance Corn Yield Resilience Under Climate Change. Journal of Environmental and Agricultural Studies 2023, 4(2), 20-27. [CrossRef]

- Rajib Roychowdhury; Soumya Prakash Das; Amber Gupta; Parul Parihar; K. Chandrasekhar; Umakanta Sarker; Ajay Kumar; Devade Pandurang Ramrao; C. Sudhakar. Multi-Omics Pipeline and Omics-Integration Approach to Decipher Plant’s Abiotic Stress Tolerance Responses. Genes 2023, 14(6), 1281. [CrossRef]

- Xiaoming He; Danning Wang; Yong Jiang; Meng Li; Manuel Delgado-Baquerizo; Chloee M. McLaughlin; Caroline Marcon; Li Guo; Marcel Baer; Yudelsy Antonia Tandrón Moya. Heritable microbiome variation is correlated with source environment in locally adapted maize varieties. Nature Plants 2024, 10(4), 598-617. [CrossRef]

- Jon Bančič; Philip B. Greenspoon; R. Chris Gaynor; Gregor Gorjanc. Plant breeding simulations with AlphaSimR. Crop Science 2024, 65(1). [CrossRef]

- Nathan Fumia; Ramakrishnan M. Nair; Ya-Ping Lin; Cheng-Ruei Lee; Hung-Wei Chen; Eric von Wettberg; Michael B. Kantar; Roland Schafleitner. Leveraging genomics and phenomics to accelerate improvement in mungbean: A case study in how to go from GWAS to selection. The Plant Phenome Journal 2023, 6(1). [CrossRef]

- Danuta Cembrowska-Lech; Adrianna Krzemińska; Tymoteusz Miller; Anna Nowakowska; Cezary Adamski; Martyna Radaczyńska; Grzegorz Mikiciuk; Małgorzata Mikiciuk. An Integrated Multi-Omics and Artificial Intelligence Framework for Advance Plant Phenotyping in Horticulture. Biology 2023, 12(10), 1298. [CrossRef]

- Andrew W. Herr; Alper Adak; Matthew E. Carroll; Dinakaran Elango; Soumyashree Kar; Changying Li; Sarah E. Jones; Arron H. Carter; Seth C. Murray; Andrew H. Paterson. Unoccupied aerial systems imagery for phenotyping in cotton, maize, soybean, and wheat breeding. Crop Science 2023, 63(4), 1722-1749. [CrossRef]

- Sizhe Xu; Xingang Xu; Qingzhen Zhu; Meng Yang; Guijun Yang; Haikuan Feng; Min Yang; Qilei Zhu; Hanyu Xue; Binbin Wang. Monitoring leaf nitrogen content in rice based on information fusion of multi-sensor imagery from UAV. Precision Agriculture 2023, 24(6), 2327-2349. [CrossRef]

- David Hobby; Hao Tong; Marc C. Heuermann; Alain J Mbebi; Roosa A. E. Laitinen; Matteo Dell’Acqua; Thomas Altmann; Zoran Nikoloski. Predicting plant trait dynamics from genetic markers. Nature Plants 2025, 11(5), 1018-1027. [CrossRef]

- Adnan Amin; Wajid Zaman; SeonJoo Park. Harnessing Multi-Omics and Predictive Modeling for Climate-Resilient Crop Breeding: From Genomes to Fields. Genes 2025, 16(7), 809. [CrossRef]

- Shameela Mohamedikbal; Hawlader Abdullah Al-Mamun; Mitchell Bestry; Jacqueline Batley; David Edwards. Integrating multi-omics and machine learning for disease resistance prediction in legumes. Theoretical and Applied Genetics 2025, 138(7), 163. [CrossRef]

- Ce Liu; Shengli Du; Aimin Wei; Zhihui Cheng; Huanwen Meng; Yike Han. Hybrid Prediction in Horticulture Crop Breeding: Progress and Challenges. Plants 2024, 13(19), 2790. [CrossRef]

- Meng Geng; Søren K. Rasmussen; Cecilie S. L. Christensen; Weiyao Fan; Anna Maria Torp. Molecular breeding of barley for quality traits and resilience to climate change. Frontiers in Genetics 2023, 13, 1039996. [CrossRef]

- Cristiana Paina; Per L. Gregersen. Recent advances in the genetics underlying wheat grain protein content and grain protein deviation in hexaploid wheat. Plant Biology 2023, 25(5), 661-670. [CrossRef]

- Naveen Puppala; Spurthi N. Nayak; Álvaro Sanz-Sáez; Charles Chen; Mura Jyostna Devi; Nivedita Nivedita; Yin Bao; Guohao He; Sy M. Traore; David A. Wright. Sustaining yield and nutritional quality of peanuts in harsh environments: Physiological and molecular basis of drought and heat stress tolerance. Frontiers in Genetics 2023, 14, 1121462. [CrossRef]

- Marlon-Schylor L. le Roux; K. Kunert; Christopher A. Cullis; Anna-Maria Botha. Unlocking Wheat Drought Tolerance: The Synergy of Omics Data and Computational Intelligence. Food and Energy Security 2024, 13(6). [CrossRef]

- Keo Corak; Rue K. Genger; Philipp W. Simon; Julie C. Dawson. Comparison of genotypic and phenotypic selection of breeding parents in a carrot (Daucus carota) germplasm collection. Crop Science 2023, 63(4), 1998-2011. [CrossRef]

- Prabhu Govindasamy; Senthilkumar Muthusamy; Muthukumar Bagavathiannan; Jake Mowrer; Prasanth Tej Kumar Jagannadham; Aniruddha Maity; Hanamant M. Halli; G. K. Sujayananad; Rajagopal Vadivel; T. K. Das. Nitrogen use efficiency—a key to enhance crop productivity under a changing climate. Frontiers in Plant Science 2023, 14, 1121073. [CrossRef]

- Vishnu Ramasubramanian; Cleiton Antônio Wartha; Lovepreet Singh; Paolo Vitale; Sushan Ru; Siddhi J. Bhusal; Aaron J. Lorenz. GS4PB: An R Shiny application to facilitate a genomic selection pipeline for plant breeding. The Plant Genome 2025, 18(4), e70150. [CrossRef]

- Mohammad Muzahidur Rahman Bhuiyan; Inshad Rahman Noman; M. A. Aziz; Md Mizanur Rahaman; Md Rashedul Islam; Mia Md Tofayel Gonee Manik; Kallol Das. Transformation of Plant Breeding Using Data Analytics and Information Technology: Innovations, Applications, and Prospective Directions. Frontiers in Bioscience-Elite 2025, 17(1), 27936. [CrossRef]

- Aubrey Streit Krug; Emily B. M. Drummond; David L. Van Tassel; Emily Warschefsky. The next era of crop domestication starts now. Proceedings of the National Academy of Sciences 2023, 120(14). [CrossRef]

| Main modality / model type | Uncertainty reported? | Ranking stability reported? | Compute burden reported? | Sensing / data-acquisition burden discussed? | Deployment stage explicit? |
|---|---|---|---|---|---|
| Environmental covariates + MegaLMM [37] | No | Partial | No | No | Yes |
| Engineered envirotyping + tree-based ML [34] | No | No | Yes | No | Yes |
| Daily environmental sequences + deep learning [44] | No | No | Partial | No | Yes |
| AutoML with environmental feature reduction [45] | No | No | No | Partial | Partial |
| Bias analysis in genomic vs phenomic selection [96] | Partial | No | No | No | Partial |
| UAS phenomics + genomic prediction [59] | No | Partial | Yes | Partial | Yes |
| Temporal high-throughput phenotyping + longitudinal GP [75] | No | Partial | No | Partial | Yes |

| Breeding stage / operational use case | Typical candidate number | Data realistically available at decision time | Recommended validation split | Model families that are realistic for the stage | Main decision target |
|---|---|---|---|---|---|
| Early preselection: untested genotypes in mostly familiar contexts | 1,000–50,000+ | Markers, pedigree, family structure, coarse site-year labels, sometimes basic historical environment summaries | Family-aware CV or untested-genotype-in-tested-environment splits | GBLUP, simple G×E terms, reaction norms, tree models only when strong covariates already exist | Cull lines and prioritize retention |
| Sparse testing across METs: recovering missing G×E cells | 200–10,000 | Markers, historical environmental covariates, trial history, partial phenotype matrices, possibly stage-aware envirotyping summaries | Leave-site-year-out, leave-one-environment-out, or sparse-testing mask recovery | Factor-analytic models, reaction norms, MTME, engineered-feature ML, environment-aware mixed models | Fill missing trial cells and support advancement |
| Late-stage regional recommendation: placement and advancement decisions | 20–1,000 | Markers, site histories, richer environmental profiles, partial phenomics, management context, sometimes current-season UAS or sensor data | Leave-year-out or region holdout with explicit ranking-stability checks | Multimodal fusion, interpretable DL, hybrid biological-statistical models, phenomics-augmented GP when timing is honest | Placement, regional recommendation, product advancement |
| Untested genotype in untested environment: hard extrapolation | Case-specific | Markers plus dense environmental histories; phenomics only if available before the decision | Joint genotype-and-environment withholding with strict temporal and relatedness control | Reaction norms with strong envirotyping, sequence-based DL only when scale, diversity | Stress-test transportability and quantify decision risk |
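
The validation splits recommended in the table above can be illustrated with a small sketch. The Python example below is not taken from any of the cited studies: the record fields (`genotype`, `family`, `env`), the function names, and the toy trial layout are all assumptions introduced here for illustration. It shows one plausible way to build index sets for family-aware splits, leave-one-environment-out splits, and joint genotype-and-environment withholding; it does not implement temporal or relatedness control, which would need pedigree or marker data.

```python
"""Toy index-based validation splits for multi-environment prediction.

All field names and the trial layout are hypothetical.
"""


def leave_one_family_out(records):
    """Yield (family, train_idx, test_idx), holding out one whole family."""
    for fam in sorted({r["family"] for r in records}):
        test = [i for i, r in enumerate(records) if r["family"] == fam]
        train = [i for i, r in enumerate(records) if r["family"] != fam]
        yield fam, train, test


def leave_one_environment_out(records):
    """Yield (env, train_idx, test_idx), holding out one site-year."""
    for env in sorted({r["env"] for r in records}):
        test = [i for i, r in enumerate(records) if r["env"] == env]
        train = [i for i, r in enumerate(records) if r["env"] != env]
        yield env, train, test


def joint_withholding(records, held_genotypes, held_envs):
    """Untested genotype in untested environment.

    Test rows are held-out genotypes observed in held-out environments.
    Training rows share neither a genotype nor an environment with the
    test set; rows that share only one of the two are discarded.
    """
    held_genotypes, held_envs = set(held_genotypes), set(held_envs)
    test = [
        i for i, r in enumerate(records)
        if r["genotype"] in held_genotypes and r["env"] in held_envs
    ]
    train = [
        i for i, r in enumerate(records)
        if r["genotype"] not in held_genotypes and r["env"] not in held_envs
    ]
    return train, test


# Hypothetical balanced trial: 4 genotypes from 2 families in 3 site-years.
records = [
    {"genotype": g, "family": f, "env": e}
    for g, f in [("G1", "F1"), ("G2", "F1"), ("G3", "F2"), ("G4", "F2")]
    for e in ["E1", "E2", "E3"]
]

if __name__ == "__main__":
    for env, train, test in leave_one_environment_out(records):
        print(env, "train rows:", len(train), "test rows:", len(test))
    train, test = joint_withholding(records, {"G4"}, {"E3"})
    print("joint withholding train rows:", len(train), "test rows:", len(test))
```

Discarding the rows that share only a genotype or only an environment with the test set is what makes the joint split the strictest of the three, and it also shrinks the training set, which is one reason the table treats this scenario as a stress test rather than a routine benchmark.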

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.