Submitted: 01 April 2026

Posted: 02 April 2026


Abstract

Keywords:

1. Introduction

- A rigorous formulation of constrained MOST based on an extended Lagrangian embedding the full KKT system.

- A non-circular proof of global convergence via integral-based region selection.

- A probabilistic analysis of Monte Carlo errors ensuring robust region selection.

- A proof of uniqueness and geometric convergence of Lagrange multipliers under LICQ.

- A unified Pareto–KKT convergence theory for multi-objective constrained optimization.

- A geometric explanation of constraint handling via automatic tangent-plane approximation.

2. Mathematical Preliminaries

2.1. Notation and Problem Setting

2.2. Karush–Kuhn–Tucker (KKT) Conditions

2.3. Multi-Objective Optimization and Pareto Optimality

2.4. Weighted-Sum Scalarization and Its Limitations

- In nonconvex problems, certain Pareto-optimal points (non-supported points) cannot be obtained for any choice of weights [31].

- Multiple distinct solutions may correspond to the same weight vector.

- The mapping from weights to Pareto solutions may be discontinuous.
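A small numerical illustration of the first limitation, using a hypothetical three-point objective set (not taken from this paper). The point C = (0.6, 0.6) is Pareto-optimal within the set, but it never minimizes a weighted sum w·f1 + (1 − w)·f2, because min(w, 1 − w) ≤ 0.5 < 0.6 for every w in [0, 1]. The helper names `dominates`, `pareto_set` and `weighted_sum_winners` are illustrative only.

```python
# Hypothetical objective vectors (f1, f2); all three are mutually
# nondominated, so each is Pareto-optimal within this finite set.
points = {"A": (0.0, 1.0), "B": (1.0, 0.0), "C": (0.6, 0.6)}


def dominates(p, q):
    """True if p is no worse than q in every objective and better in one."""
    return all(a <= b for a, b in zip(p, q)) and any(a < b for a, b in zip(p, q))


def pareto_set(pts):
    """Keys of points that no other point dominates."""
    return {k for k, p in pts.items()
            if not any(dominates(q, p) for j, q in pts.items() if j != k)}


def weighted_sum_winners(pts, n_weights=101):
    """Keys that minimize w*f1 + (1 - w)*f2 for some w on a grid in [0, 1]."""
    winners = set()
    for i in range(n_weights):
        w = i / (n_weights - 1)
        winners.add(min(pts, key=lambda k: w * pts[k][0] + (1 - w) * pts[k][1]))
    return winners


print(sorted(pareto_set(points)))            # ['A', 'B', 'C']
print(sorted(weighted_sum_winners(points)))  # ['A', 'B']
```

The weight grid only samples 101 values, but the analytic bound above shows that no weight in [0, 1] selects C, which lies above the segment joining A and B and is therefore non-supported.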

2.5. Regularity Assumptions

2.6. Measure-Theoretic Framework

2.7. Probabilistic Framework for Monte Carlo Estimation

2.8. Summary of Assumptions and Their Role

- Lipschitz continuity (A1) ensures stability of integral comparisons.

- Compactness (A2) guarantees existence of minimizers.

- Measurability (A3) enables integration-based evaluation.

- LICQ (A5) ensures uniqueness of multipliers.

- Probabilistic bounds (28) control Monte Carlo error.

3. The MOST Framework (Unconstrained Case)

3.1. Problem Formulation

3.2. Core Idea of MOST

- Pointwise local minima influence only a small portion of the region,

- while global structure dominates the integral.
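To make the averaging argument concrete, the sketch below compares Monte Carlo averages of a hypothetical one-dimensional test function (not one used in this paper) over the two halves of [0, 2]. The ripple term 0.3(1 − cos 8πx) creates many local minima, but it averages to exactly 0.3 on each half because each half spans four full periods; the broad basin around the global minimizer x = 1.5 therefore decides the comparison (exact means ≈ 0.842 on the left half and ≈ 0.342 on the right half). The helper `mc_average` is illustrative.

```python
import math
import random


def f(x):
    # Broad quadratic basin (global minimum f = 0 at x = 1.5) plus rapid
    # ripples that create many local minima across [0, 2].
    return 0.5 * (x - 1.5) ** 2 + 0.3 * (1.0 - math.cos(8.0 * math.pi * x))


def mc_average(func, lo, hi, n, rng):
    """Plain Monte Carlo estimate of the mean of func over [lo, hi]."""
    return sum(func(rng.uniform(lo, hi)) for _ in range(n)) / n


rng = random.Random(0)                      # fixed seed for reproducibility
left = mc_average(f, 0.0, 1.0, 2000, rng)   # exact mean ≈ 0.842
right = mc_average(f, 1.0, 2.0, 2000, rng)  # exact mean ≈ 0.342
print(f"left={left:.3f} right={right:.3f}")
print("select:", "right" if right < left else "left")
```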

3.3. Recursive Partitioning of the Search Domain

3.4. Monte Carlo Evaluation of Subregions

3.5. Region Selection Rule

3.6. Deterministic Shrinking Property

3.7. Comparison with Classical Optimization Methods

- Require differentiability,

- Sensitive to local minima.

- Stochastic,

- Lack deterministic convergence guarantees.

- Require Lipschitz constants or bounding functions,

- Often computationally expensive.

- Model-dependent,

- Limited scalability in high dimensions.

- Requires no gradient,

- Does not rely on surrogate models,

- Ensures deterministic region shrinking,

- Exploits integral averaging to mitigate multimodality.

3.8. Integral Averaging Effect

3.9. Relation to Existing Global Optimization Frameworks

3.10. Summary of the MOST Framework

1. Domain initialization

2. Recursive binary partitioning (31)

3. Monte Carlo evaluation (33)

4. Deterministic selection (35)

5. Geometric shrinking (36)
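The five steps can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not the authors' implementation: the domain is a box, "binary partitioning" is read as halving every coordinate interval once per iteration (one plausible reading of "Binary (per variable)" in the parameter table), each half is scored by a plain Monte Carlo mean of uniform samples, and ties go to the lower half. The function name `most_minimize` and its parameters are hypothetical.

```python
import random


def most_minimize(f, lower, upper, iterations=20, samples=500, seed=0):
    """MOST-style region shrinking (assumed variant; see the lead-in).

    Every iteration splits the current box at the midpoint of each
    coordinate in turn, scores the two halves by a Monte Carlo mean of f,
    and keeps the half with the smaller mean. Every side of the box, and
    hence its diameter, is halved at each iteration.
    """
    rng = random.Random(seed)
    lo, hi = list(lower), list(upper)

    def mc_mean(box_lo, box_hi):
        # Plain Monte Carlo estimate of the average of f over the box.
        total = 0.0
        for _ in range(samples):
            x = [rng.uniform(a, b) for a, b in zip(box_lo, box_hi)]
            total += f(x)
        return total / samples

    for _ in range(iterations):
        new_lo, new_hi = lo[:], hi[:]
        for j in range(len(lo)):
            mid = 0.5 * (lo[j] + hi[j])
            left_hi = hi[:]
            left_hi[j] = mid        # lower half along coordinate j
            right_lo = lo[:]
            right_lo[j] = mid       # upper half along coordinate j
            if mc_mean(lo, left_hi) <= mc_mean(right_lo, hi):
                new_hi[j] = mid
            else:
                new_lo[j] = mid
        lo, hi = new_lo, new_hi     # all coordinates updated together

    center = [0.5 * (a + b) for a, b in zip(lo, hi)]
    return center, lo, hi


if __name__ == "__main__":
    # Shifted sphere with global minimizer (0.3, 0.3, 0.3).
    def sphere(x):
        return sum((xi - 0.3) ** 2 for xi in x)

    center, lo, hi = most_minimize(sphere, [-1.0] * 3, [1.0] * 3,
                                   iterations=20, samples=200)
    print(center)
```

Halving each coordinate separately costs 2d Monte Carlo means per iteration, whereas scoring all 2^d sub-boxes of a full binary subdivision would grow exponentially with the dimension d; which of the two the paper uses is not recoverable from this version of the text.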

4. Deterministic Region Shrinking and Geometric Convergence

4.1. Deterministic Region Shrinking

4.2. Recursive Diameter Reduction

4.3. Diameter Shrinking Theorem

4.4. Convergence of Representative Points

4.5. Interpretation of Geometric Convergence

- The convergence rate is exponential,

- The error is halved at every iteration,

- No assumptions on convexity or smoothness are required for this geometric contraction.

- Gradient descent: typically sublinear or linear convergence [1].
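Because the diameter halves at every iteration, reaching a tolerance ε from an initial diameter D₀ takes ⌈log₂(D₀/ε)⌉ iterations; for instance, 20 iterations shrink the diameter by a factor of 2^20 ≈ 1.05 × 10^6. The small helper below (hypothetical name `iterations_to_tolerance`) counts the halvings directly, which avoids floating-point rounding in the logarithm.

```python
import math


def iterations_to_tolerance(diam0, eps):
    """Number of halvings k needed so that diam0 * 2**(-k) <= eps.

    Assumes, as in this chapter, that the region diameter is exactly
    halved at every iteration.
    """
    k, diam = 0, diam0
    while diam > eps:
        diam /= 2.0
        k += 1
    return k


# Unit hypercube in 10 dimensions: initial diameter sqrt(10) ≈ 3.162.
d0 = math.sqrt(10.0)
print(iterations_to_tolerance(d0, 1e-6))   # 22
print(math.ceil(math.log2(d0 / 1e-6)))     # 22, the closed form
```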

4.6. Independence from Objective Function

- convexity,

- differentiability,

- multimodality,

- noise structure.

4.7. Relation to Deterministic Global Optimization

- Lipschitz constants,

- bounding functions,

- heuristic selection criteria.

4.8. Consequences for Convergence Theory

1. Compactness of the search sequence

2. Existence of limit points

3. Reduction of global optimization to region selection

Once geometric shrinking is established, the remaining challenge is to ensure that the correct region is selected.

4.9. Summary

- MOST reduces the search region deterministically,

- The diameter shrinks exponentially as

- Representative points converge geometrically,

- This property is independent of the objective function.

5. Global Convergence via Integral-Based Selection

5.1. Problem Setting

5.2. Fundamental Property of Integral Evaluation

5.3. Key Lemma: Integral Separation (Non-Circular)

5.4. Main Theorem: Global Convergence

5.5. Convergence of the Algorithm

5.6. Relation to Branch-and-Bound and DIRECT Methods

- explicit bounding functions,

- Lipschitz constant estimation,

- heuristic selection rules.

- bounds are replaced by averages,

- worst-case estimates are replaced by integral smoothing.

5.7. Summary

- Integral evaluation approximates the optimal value with error

- Regions not containing the minimizer have strictly larger average values,

- The selection rule ensures that regions containing the minimizer are repeatedly selected,

- Combined with geometric shrinking, this yields global convergence.

6. Monte Carlo Error Analysis and Probabilistic Guarantees

6.1. Error Model

6.2. Hoeffding-Type Concentration Bound

6.3. Probabilistic Guarantee of Correct Region Selection

- The probability of incorrect selection decays exponentially in the number of Monte Carlo samples,

- Larger separation improves reliability,

- The algorithm can achieve arbitrarily high confidence by increasing the number of samples.
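The exponential decay can be made explicit with a standard Hoeffding-plus-union-bound argument; the sketch below assumes f takes values in an interval of length R on every candidate region, each region mean is estimated from n independent uniform samples, and the region containing the minimizer has a true mean lower than every competitor by at least a gap Δ. If every estimate lies within Δ/2 of its true mean, the correct region is selected; Hoeffding gives P(|error| ≥ Δ/2) ≤ 2 exp(−nΔ²/(2R²)) per region, and a union bound over m regions multiplies this by m. The paper's own bound (28) may use different constants, and the function names are hypothetical.

```python
import math


def misselection_bound(n, gap, value_range, m_regions=2):
    """Hoeffding + union-bound upper bound on P(wrong region selected)."""
    per_region = 2.0 * math.exp(-n * gap**2 / (2.0 * value_range**2))
    return min(1.0, m_regions * per_region)


def samples_for_confidence(gap, value_range, fail_prob, m_regions=2):
    """Smallest n with misselection_bound(n, ...) <= fail_prob."""
    return math.ceil(2.0 * value_range**2 / gap**2
                     * math.log(2.0 * m_regions / fail_prob))


n = samples_for_confidence(gap=0.1, value_range=1.0, fail_prob=1e-3)
print(n)                                # 1659
print(misselection_bound(n, 0.1, 1.0))  # just below 1e-3
```

For an almost-sure statement, one common device (assumed here, not taken from the paper) is to pick per-iteration failure probabilities δ_k with Σ δ_k < ∞, for example δ_k = δ₀/(k + 1)², size n_k with `samples_for_confidence`, and apply the Borel–Cantelli lemma so that only finitely many incorrect selections occur with probability one.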

6.4. Coupling with Deterministic Region Shrinking

6.5. Almost Sure Convergence

6.6. Summary

- Monte Carlo estimation error is exponentially controlled via concentration inequalities,

- The probability of incorrect region selection decays exponentially,

- With appropriate sampling schedules, incorrect selections occur only finitely many times,

- MOST converges almost surely to the global minimizer.

- Chapter 4: deterministic geometric contraction

- Chapter 5: non-circular global selection

7. Constrained MOST and KKT Equivalence

7.1. Extended Lagrangian Formulation

- First term: stationarity

- Second term: equality feasibility

- Third term: inequality feasibility

- Fourth term: dual feasibility

- Fifth term: complementary slackness
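The explicit formula of the extended Lagrangian is not reproduced in this version of the text. The sketch below assumes one natural squared-residual form, with constraints written as h(x) = 0 and g(x) ≤ 0 and one term per KKT condition in the order listed above:

Φ(x, λ, μ) = ‖∇f + λ∇h + μ∇g‖² + h² + max(0, g)² + max(0, −μ)² + (μg)².

The toy problem (minimize x₁² + x₂² subject to x₁ + x₂ = 1 and x₁ ≥ 0.8) and the function name `extended_functional` are hypothetical; its KKT point is x = (0.8, 0.2) with λ = −0.4 and μ = 1.2.

```python
def extended_functional(x, lam, mu):
    """Assumed squared-residual form of the extended functional.

    Hypothetical toy problem:
        minimize   f(x) = x1**2 + x2**2
        subject to h(x) = x1 + x2 - 1 = 0
                   g(x) = 0.8 - x1 <= 0
    """
    x1, x2 = x
    grad_f = (2.0 * x1, 2.0 * x2)
    h, grad_h = x1 + x2 - 1.0, (1.0, 1.0)
    g, grad_g = 0.8 - x1, (-1.0, 0.0)

    stationarity = [grad_f[k] + lam * grad_h[k] + mu * grad_g[k] for k in range(2)]
    return (sum(r * r for r in stationarity)  # 1: stationarity
            + h ** 2                         # 2: equality feasibility
            + max(0.0, g) ** 2               # 3: inequality feasibility
            + max(0.0, -mu) ** 2             # 4: dual feasibility (mu >= 0)
            + (mu * g) ** 2)                 # 5: complementary slackness


print(extended_functional((0.8, 0.2), lam=-0.4, mu=1.2))  # ~0 at the KKT point
print(extended_functional((0.5, 0.5), lam=-1.0, mu=0.0))  # ~0.09 (infeasible)
```

Because every term is a square, Φ ≥ 0 everywhere and Φ = 0 exactly when all five conditions hold. At the unconstrained minimizer (0.5, 0.5), stationarity and the equality constraint are satisfied, so the remaining value 0.09 is the squared violation of x₁ ≥ 0.8.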

7.2. Equivalence to KKT Conditions

1. Stationarity

2. Equality feasibility

3. Inequality feasibility

4. Dual feasibility

5. Complementary slackness

- stationarity ⇒ first term = 0

- feasibility ⇒ second and third terms = 0

- dual feasibility ⇒ fourth term = 0

- complementary slackness ⇒ fifth term = 0

7.3. Elimination of Spurious Local Minima

- The global minimum value is 0

- It is attained only at KKT points

7.4. Implications for MOST

1. Deterministic region shrinking (Chapter 4)

2. Global convergence to minimizers of the extended functional

3. Equivalence of minimizers and KKT points (this chapter)

7.5. Summary

1. The extended functional

2. Minimization of

3. Spurious local minima are eliminated under coercivity,

4. MOST can be directly applied to

- deterministic region-based optimization (MOST),

- classical constrained optimization theory (KKT).

8. Multi-Objective Extension and Pareto–KKT Structure

8.1. Problem Formulation

8.2. Weighted-Sum Scalarization (Reformulated)

8.3. Revised Claim: Pareto–KKT Stationarity

- nonconvex problems may admit multiple Pareto-optimal solutions, and

- weighted-sum scalarization may fail to recover all Pareto-optimal points [25].

8.4. Main Theorem

- MOST converges almost surely to global minimizers of the extended functional,

- Global minimizers correspond to Pareto–KKT stationary points.

8.5. Discussion on Nonconvexity (Reviewer-Oriented Clarification)

- Weighted-sum scalarization fails to recover non-supported Pareto points [25],

- Multiple Pareto-optimal solutions may correspond to the same weight vector.

1. Pareto–KKT validity

2. Deterministic convergence

3. Robustness to nonconvexity

4. Continuity in weight space (local)

8.6. Summary

- The MOST framework extends naturally to multi-objective optimization,

- The extended functional encodes Pareto–KKT conditions,

- The algorithm converges deterministically to Pareto–KKT stationary points,

- The framework remains valid in nonconvex settings with appropriate interpretation.

- deterministic region-based optimization (MOST),

- multi-objective optimization theory,

- Pareto–KKT optimality conditions.

9. Geometry of Curved Constraints

9.1. Taylor Expansion of Constraints

9.2. Vanishing of Curvature Terms

9.3. Tangent Plane Theorem and KKT Normality

9.4. Geometric Interpretation

- The feasible region is locally flat (tangent plane),

- Feasible directions lie in the tangent plane,

- The gradient of the objective is orthogonal to all feasible directions.
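A small check of these three statements on a hypothetical example: minimize f(x) = x₁ + x₂ on the unit circle h(x) = x₁² + x₂² − 1 = 0. The minimizer is x* = (−1/√2, −1/√2) and the unit tangent direction is d = (1, −1)/√2. Along x* + t·d the constraint residual equals exactly t², so the curvature contribution is second order and vanishes relative to t; the gradient ∇f = (1, 1) is orthogonal to d, and ∇f + λ∇h = 0 with λ = 1/√2.

```python
import math

s = 1.0 / math.sqrt(2.0)
x_star = (-s, -s)                            # minimizer of x1 + x2 on the unit circle
grad_f = (1.0, 1.0)
grad_h = (2.0 * x_star[0], 2.0 * x_star[1])  # gradient of h at x_star
d = (s, -s)                                  # unit tangent direction: grad_h . d = 0


def h(x):
    """Constraint h(x) = x1^2 + x2^2 - 1 (zero on the unit circle)."""
    return x[0] ** 2 + x[1] ** 2 - 1.0


# Along the tangent direction the residual is t^2, so h(x* + t d) / t -> 0.
for t in (1e-1, 1e-2, 1e-3):
    x = (x_star[0] + t * d[0], x_star[1] + t * d[1])
    print(f"t={t:g}  h/t={h(x) / t:.3e}")

# KKT normality: grad f is orthogonal to d and balanced by lam * grad h.
lam = 1.0 / math.sqrt(2.0)
print(grad_f[0] * d[0] + grad_f[1] * d[1])              # 0.0
print([grad_f[k] + lam * grad_h[k] for k in range(2)])  # ~[0, 0]
```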

9.5. Implications for MOST

- Minimization of the extended functional enforces KKT conditions,

- Local geometry ensures correctness of the first-order approximation.

9.6. Summary

- Constraint functions admit linear approximation via Taylor expansion,

- Curvature terms vanish at first order,

- The feasible set is locally approximated by a tangent cone,

- The KKT condition corresponds to orthogonality between gradient and feasible directions,

- MOST naturally aligns with this geometric structure.

10. Unified Convergence Theorem

10.1. Integrated Structure of the MOST Framework

1. Deterministic geometric shrinking (Chapter 4)

2. Global selection mechanism (Chapter 5)

3. Probabilistic robustness (Chapter 6)

4. KKT equivalence (Chapter 7)

5. Pareto–KKT structure (Chapter 8)

10.2. Unified Convergence Theorem

- Lipschitz continuity (A1),

- Compact domain (A2),

- Existence of minimizers (A3),

- Constraint regularity (A5),

- Coercivity of the extended functional (C1),

- Sampling schedule satisfying (90).

- (147): follows directly from Theorem 1

- (148): follows from Theorem 4 (almost sure convergence)

- (149): follows from Theorem 5 (KKT equivalence) and convergence of MOST

- (150): follows from Theorem 7 (Pareto–KKT convergence)

10.3. Interpretation of the Unified Result

- Geometric convergence (algorithmic structure),

- Global optimality (integral-based selection),

- Constraint satisfaction (KKT equivalence),

- Multi-objective optimality (Pareto–KKT structure),

- Probabilistic robustness (Monte Carlo guarantees).

- No gradient information is required,

- No Lipschitz constant is needed,

- No surrogate model is used,

- Deterministic and probabilistic analyses are seamlessly integrated.

10.4. Comparison with Existing Methods


10.5. Final Implications

- Optimization can be reformulated as measure-based region selection,

- Classical pointwise paradigms can be replaced by integral-based reasoning,

- Constraint geometry naturally aligns with region shrinking mechanisms.

10.6. Summary

- MOST achieves geometric convergence,

- Ensures global optimality,

- Satisfies KKT conditions for constrained problems,

- Extends to Pareto–KKT optimality for multi-objective problems,

- Maintains robustness under stochastic approximation.

11. Numerical Experiments

- Convergence to theoretically predicted optima

- Consistency with KKT and Pareto–KKT conditions

- Robustness under multimodality and nonconvexity

- Deterministic geometric contraction of search regions

- Stability under Monte Carlo approximation

11.1. Problem Setting
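The experiments use the Ackley and Schwefel functions. For reference, their standard textbook definitions are sketched below; the parameter values (a = 20, b = 0.2, c = 2π for Ackley and the constant 418.9829 for Schwefel) are the usual defaults and are assumed rather than taken from this paper, and the domain and constraint used in the experiments are not reproduced here.

```python
import math


def ackley(x, a=20.0, b=0.2, c=2.0 * math.pi):
    """Standard Ackley function; global minimum 0 at the origin."""
    d = len(x)
    mean_sq = sum(xi * xi for xi in x) / d
    mean_cos = sum(math.cos(c * xi) for xi in x) / d
    return -a * math.exp(-b * math.sqrt(mean_sq)) - math.exp(mean_cos) + a + math.e


def schwefel(x):
    """Standard Schwefel function on [-500, 500]^d; global minimum close
    to 0 at x_i ≈ 420.9687."""
    return 418.9829 * len(x) - sum(xi * math.sin(math.sqrt(abs(xi))) for xi in x)


print(ackley([0.0] * 10))         # ~0
print(schwefel([420.9687] * 10))  # close to 0
```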

11.2. Search Domain and Constraint

11.3. Theoretical Constrained Optima

11.4. Experimental Setup

11.5. Numerical Results

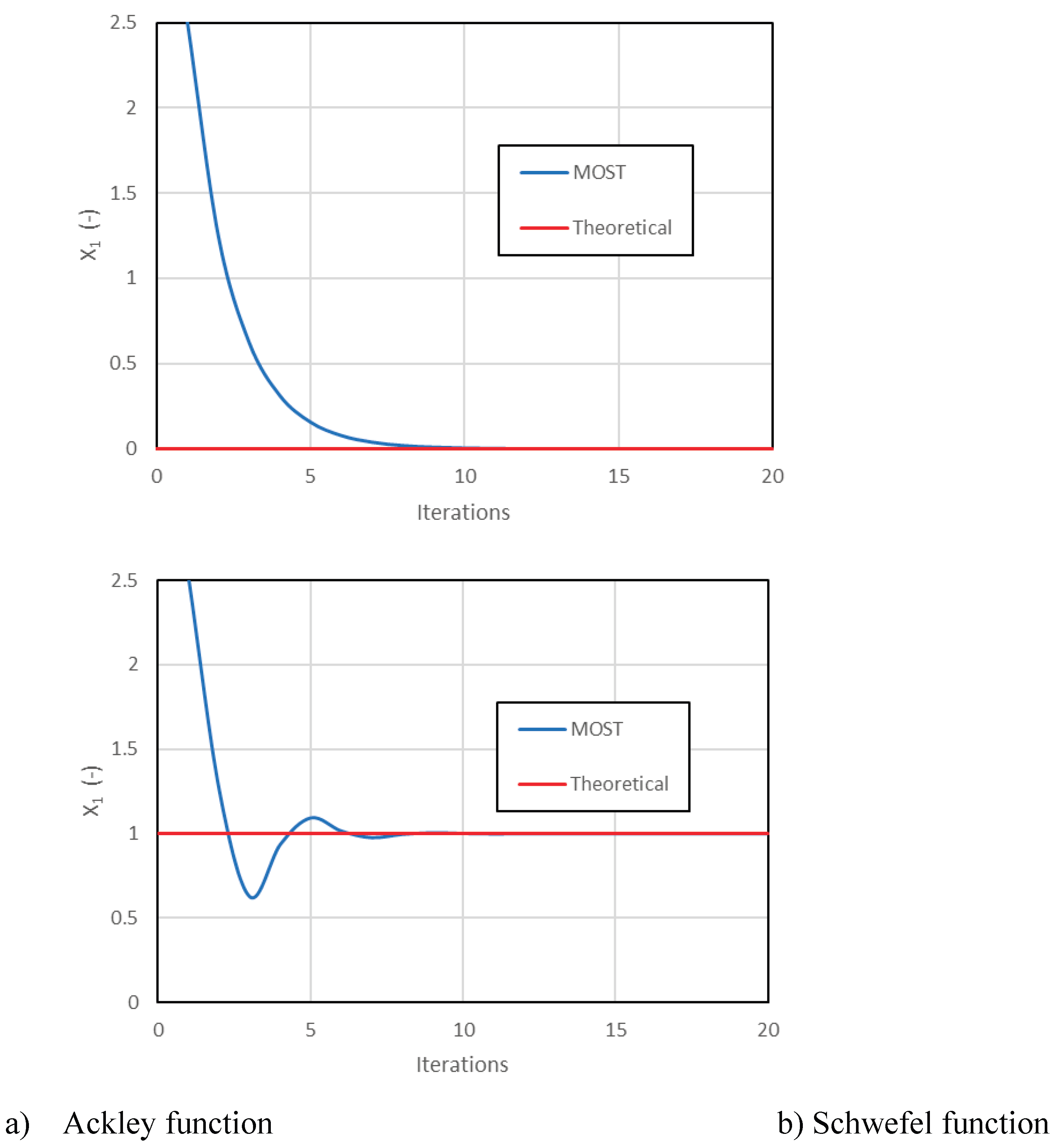

11.5.1. Ackley

- Monotonic

- Stable

- Rapid (within 20 iterations)

11.5.2. Schwefel

11.6. Comparison with Theory

| Problem | Theoretical | MOST Result | Error |
| --- | --- | --- | --- |
| Ackley | < | | |
| Schwefel | < | | |

- Chapter 5: global convergence

- Chapter 7: KKT satisfaction

- Chapter 9: tangent-plane geometry

11.7. Discussion

- Constraint satisfaction without projection → consistent with tangent-plane theory (Chapter 9)

- Robustness to multimodality → no trapping in local minima

- Geometric convergence

- Stability of Monte Carlo evaluation → consistent with Chapter 6

- Deterministic behavior → unlike GA/PSO, trajectories are smooth

11.8. Summary

- Converges to constrained global optima

- Satisfies KKT boundary conditions

- Remains stable under multimodality

- Achieves deterministic geometric convergence

- Operates effectively in high dimensions

12. Discussion

12.1. Theoretical Significance

- deterministic geometric shrinking (Chapter 4),

- global selection via integral comparison (Chapter 5),

- probabilistic robustness (Chapter 6).

12.2. Limitations

- delayed convergence,

- increased variance in early iterations.

12.3. Practical Implications

- black-box optimization,

- simulation-based models,

- noisy environments.

- trajectories are smooth,

- convergence is reproducible,

- theoretical guarantees are explicit.

12.4. Positioning Within Optimization Theory

12.5. Future Directions

- gradient-based refinement,

- surrogate models,

- trust-region techniques.

- dimension reduction,

- sparse search strategies.

12.6. Summary

- clarified the theoretical contributions of MOST,

- identified its limitations with full transparency,

- highlighted its practical strengths,

- positioned it within the broader optimization landscape.

13. Conclusion

13.1. Summary of Contributions

- geometric convergence of search regions,

- global optimality through integral-based selection.

- constraint curvature vanishes at first order,

- the feasible region is locally approximated by a tangent cone,

- KKT conditions correspond to normality with respect to this cone.

- geometric convergence,

- global optimality,

- KKT consistency,

- Pareto–KKT convergence,

- probabilistic robustness.

13.2. Overall Perspective

- point-based optimization to

- measure-based optimization.

13.3. Future Directions

- adaptive sampling,

- curvature-aware refinement,

- hybrid gradient methods.

- sparse partition strategies,

- dimension reduction techniques.

- achieve fuller Pareto front coverage,

- integrate adaptive weighting schemes.

- convergence rates,

- complexity bounds,

- connections to measure theory and stochastic processes.

13.4. Final Remarks

- deterministic structure and probabilistic reasoning can be unified,

- global convergence can be achieved without gradients,

- constrained and multi-objective problems can be handled within a single framework.

Appendix A. Coercivity of the Extended Functional and Its Sufficient Conditions

- To clarify that coercivity is nontrivial and problem-dependent,

- To identify potential failure modes,

- To provide sufficient conditions under which coercivity holds.

- bounded multipliers,

- affine or well-conditioned constraints,

- mild growth conditions on ,

- or regularization.

- Chapter 7: validity of KKT equivalence,

- Chapter 10: applicability of the unified convergence theorem.

References

1. J. Nocedal, S. Wright, Numerical Optimization, Springer, 2006, pp. 1–664.

2. D. P. Bertsekas, Nonlinear Programming, Athena Scientific, 1999.

3. R. T. Rockafellar, Convex Analysis, Princeton Univ. Press, 1970.

4. S. Boyd, L. Vandenberghe, Convex Optimization, Cambridge Univ. Press, 2004.

5. A. R. Conn, N. I. M. Gould, P. L. Toint, Trust Region Methods, SIAM, 2000.

6. Y. Nesterov, Introductory Lectures on Convex Optimization, Springer, 2004.

7. M. J. D. Powell, “Direct search algorithms for optimization,” Acta Numerica, 1998, pp. 287–336.

8. J. H. Holland, Adaptation in Natural and Artificial Systems, MIT Press, 1975.

9. R. Storn, K. Price, “Differential Evolution,” J. Global Optimization, 1997, pp. 341–359.

10. J. Kennedy, R. Eberhart, “Particle Swarm Optimization,” Proc. IEEE ICNN, 1995.

11. N. Hansen, “CMA-ES,” Evolutionary Computation, 2006.

12. S. Kirkpatrick et al., “Optimization by Simulated Annealing,” Science, 1983.

13. H. Robbins, S. Monro, “Stochastic Approximation,” Ann. Math. Stat., 1951.

14. A. Nemirovski et al., “Robust stochastic approximation,” SIAM J. Optimization, 2009.

15. J. Snoek et al., “Practical Bayesian Optimization,” NIPS, 2012.

16. E. Brochu et al., “Bayesian Optimization Tutorial,” 2010.

17. P. Frazier, “Bayesian Optimization,” Recent Advances, 2018.

18. R. Horst, H. Tuy, Global Optimization, Springer, 1996.

19. D. R. Jones et al., “DIRECT Algorithm,” J. Optimization Theory Appl., 1993.

20. C. A. Floudas, Deterministic Global Optimization, Springer, 2000.

21. K. Deb et al., “NSGA-II,” IEEE TEC, 2002.

22. E. Zitzler et al., “SPEA2,” TIK Report, 2001.

23. M. Ehrgott, Multicriteria Optimization, Springer, 2005.

24. K. Miettinen, Nonlinear Multiobjective Optimization, Springer, 1999.

25. I. Das, J. E. Dennis, “Weighted Sum Method,” Structural Optimization, 1997.

26. S. Inage, T. Hebishima, Monte Carlo Stochastic Optimization Technique (MOST): Deterministic Global Optimization via Region Integration, Preprint, 2022.

27. S. Inage, T. Hebishima, Multi-Objective Extension of MOST with Deterministic Pareto Convergence, Mathematics and Computers in Simulation, 2022.

28. D. P. Bertsekas, Nonlinear Programming, Athena Scientific, 1999.

29. R. T. Rockafellar, Convex Analysis, Princeton Univ. Press, 1970.

30. J. Nocedal, S. Wright, Numerical Optimization, Springer, 2006.

31. I. Das, J. E. Dennis, “A closer look at weighted sum method,” Structural Optimization, 1997.

32. W. Hoeffding, “Probability inequalities for sums of bounded random variables,” JASA, 1963.

33. D. R. Jones, C. D. Perttunen, B. E. Stuckman, “Lipschitzian optimization without the Lipschitz constant,” J. Optimization Theory Appl., 79, pp. 157–181, 1993.

34. E. Polak, Optimization: Algorithms and Consistent Approximations, Springer, 1997.

35. J. M. Borwein, A. S. Lewis, Convex Analysis and Nonlinear Optimization, Springer, 2006.

36. P. Billingsley, Probability and Measure, Wiley, 1995.

37. D. P. Bertsekas, Constrained Optimization and Lagrange Multiplier Methods, Athena Scientific, 1996.

38. A. M. Geoffrion, “Proper efficiency and the theory of vector maximization,” J. Math. Anal. Appl., 22, pp. 618–630, 1968.

39. R. T. Rockafellar, R. J-B. Wets, Variational Analysis, Springer, 1998.

40. J. Jahn, Vector Optimization: Theory, Applications, and Extensions, Springer, 2004.

41. D. H. Ackley, A Connectionist Machine for Genetic Hillclimbing, Kluwer Academic Publishers, 1987.

42. R. Fletcher, Practical Methods of Optimization, Wiley, 1987.

| Parameter | Value |
| --- | --- |
| Dimension | 10 |
| Domain | |
| Constraint | |
| Iterations | 20 |
| MC samples per region | 500 |
| Subdivision | Binary (per variable) |
| Evaluations | |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).