Submitted: 04 March 2026

Posted: 05 March 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Few-Shot Remote Sensing Scene Classification

2.2. Data Augmentation

3. Materials and Methods

3.1. Problem Definition

3.2. Method Overview

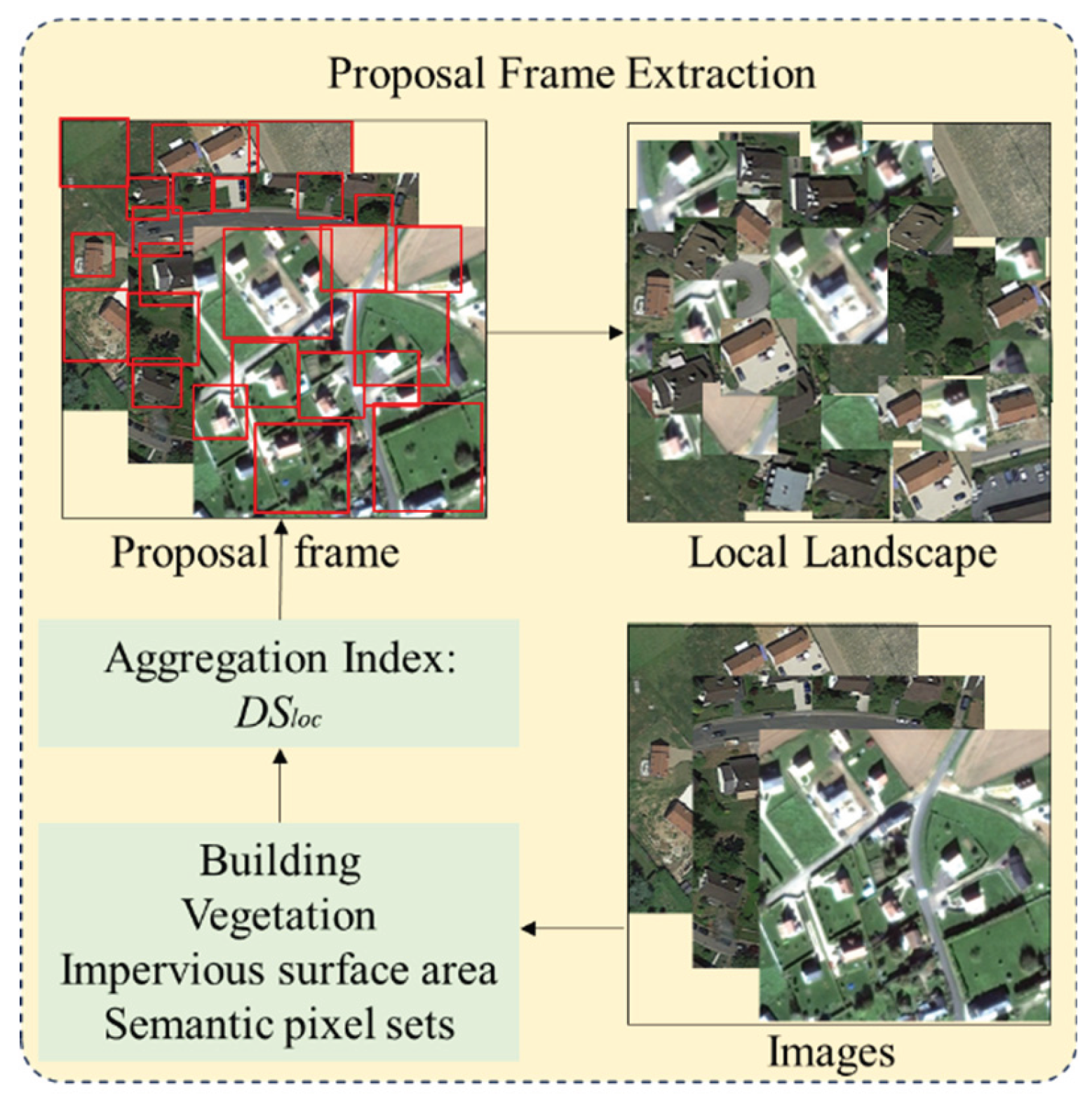

3.3. Diffusion Augmentation

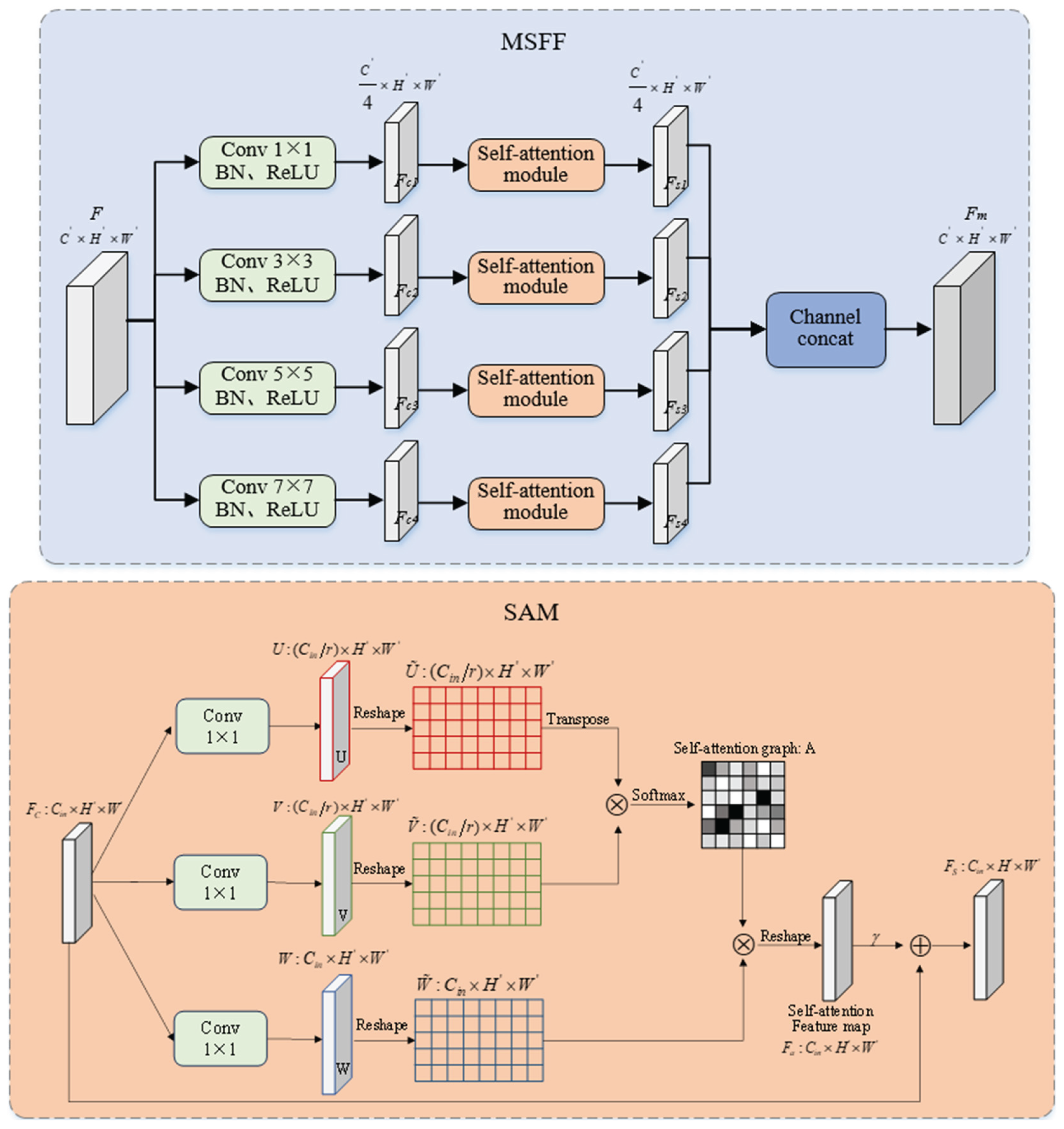

3.4. Dual Attention Fusion Module (DAFM)

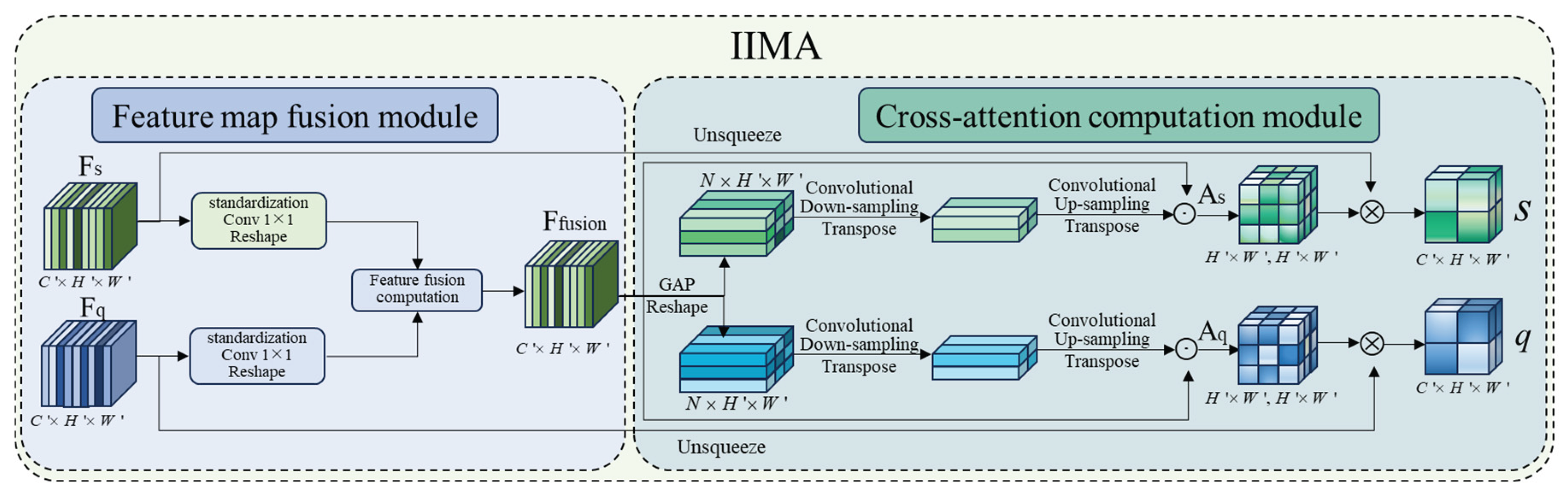

3.5. Information Interaction Mutual Attention (IIMA)

3.6. Loss Function

4. Results

4.1. Dataset Introduction

4.2. Implementation Details

4.3. Results Comparison of Multiple Models

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Full term |
|---|---|
| FSRSSC | Few-shot remote sensing scene classification |
| MMFF-Net | Multimodal feature fusion network |
| DA | Diffusion augmentation |
| MSFF | Multiscale feature fusion |
| DAFM | Dual attention fusion module |
| IIMA | Information interaction mutual attention |
| RSS | Remote sensing scene |
| SAM | Self-attention module |
| UCM | UC Merced |
| NWPU | Northwestern Polytechnical University |
| FLOPs | Floating-point operations |

References

- M. Zhai, H. Liu, and F. Sun, “Lifelong learning for scene recognition in remote sensing images,” IEEE Geosci. Remote Sens. Lett., vol. 16, no. 9, pp. 1472–1476, Sep. 2019. [CrossRef]

- L. Zhang and L. Zhang, “Artificial intelligence for remote sensing data analysis: A review of challenges and opportunities,” IEEE Geosci. Remote Sens. Mag., vol. 10, no. 2, pp. 270–294, Jun. 2022.

- Q. Zhao, S. Lyu, Y. Li, Y. Ma, and L. Chen, “MGML: Multigranularity multilevel feature ensemble network for remote sensing scene classification,” IEEE Trans. Neural Netw. Learn. Syst., vol. 34, no. 5, pp. 2308–2322, May 2023. [CrossRef]

- Z. Ji, L. Hou, X. Wang, G. Wang, and Y. Pang, “Dual contrastive network for few-shot remote sensing image scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 61, 2023, Art. no. 5605312. [CrossRef]

- J. Li, K. Zheng, J. Yao, L. Gao, and D. Hong, “Deep unsupervised blind hyperspectral and multispectral data fusion,” IEEE Geosci. Remote Sens. Lett., vol. 19, 2022, Art. no. 6007305. [CrossRef]

- L. Xing, S. Shao, Y. Ma, Y. Wang, W. Liu, and B. Liu, “Learning to cooperate: Decision fusion method for few-shot remote-sensing scene classification,” IEEE Geosci. Remote Sens. Lett., vol. 19, pp. 1–5, 2022. [CrossRef]

- M. Gong, J. Li, Y. Zhang, Y. Wu, and M. Zhang, “Two-path aggregation attention network with quad-patch data augmentation for few-shot scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 60, 2022, Art. no. 4511616. [CrossRef]

- H. Ji, Z. Gao, Y. Zhang, Y. Wan, C. Li, and T. Mei, “Few-shot scene classification of optical remote sensing images leveraging calibrated pretext tasks,” IEEE Trans. Geosci. Remote Sens., vol. 60, 2022, Art. no. 562551. [CrossRef]

- J. Li, M. Gong, H. Liu, Y. Zhang, M. Zhang, and Y. Wu, “Multi-form ensemble self-supervised learning for few-shot remote sensing scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 61, 2023, Art. no. 4500416. [CrossRef]

- H. Ji, H. Yang, Z. Gao, C. Li, Y. Wan, and J. Cui, “Few-shot scene classification using auxiliary objectives and transductive inference,” IEEE Geosci. Remote Sens. Lett., vol. 19, pp. 1–5, 2022. [CrossRef]

- X. Li, F. Pu, R. Yang, R. Gui, and X. Xu, “AMN: Attention metric network for one-shot remote sensing image scene classification,” Remote Sens., vol. 12, no. 24, Dec. 2020, Art. no. 4046. [CrossRef]

- L. Li, J. Han, X. Yao, G. Cheng, and L. Guo, “DLA-MatchNet for few-shot remote sensing image scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 59, no. 9, pp. 7844–7853, Sep. 2021. [CrossRef]

- X. Li, D. Shi, X. Diao, and H. Xu, “SCL-MLNet: Boosting few-shot remote sensing scene classification via self-supervised contrastive learning,” IEEE Trans. Geosci. Remote Sens., vol. 60, 2022, Art. no. 5801112. [CrossRef]

- Q. Zeng and J. Geng, “Task-specific contrastive learning for few-shot remote sensing image scene classification,” ISPRS J. Photogramm., vol. 191, pp. 143–154, Sep. 2022. [CrossRef]

- Y. Xu et al., “Attention-based contrastive learning for few-shot remote sensing image classification,” IEEE Trans. Geosci. Remote Sens., vol. 62, Apr. 2024, Art. no. 5620317. [CrossRef]

- H. Zhang, X. Zhang, G. Meng, C. Guo, and Z. Jiang, “Few-shot multi-class ship detection in remote sensing images using attention feature map and multi-relation detector,” Remote Sens., vol. 14, no. 12, 2022, Art. no. 2790. [CrossRef]

- Z. Cui, W. Yang, L. Chen, and H. Li, “MKN: Metakernel networks for few-shot remote sensing scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 60, 2022, Art. no. 4705611. [CrossRef]

- H. Li et al., “RS-MetaNet: Deep metametric learning for few-shot remote sensing scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 59, no. 8, pp. 6983–6994, Aug. 2021. [CrossRef]

- A. Qin et al., “Deep updated subspace networks for few-shot remote sensing scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 62, Jan. 2024, Art. no. 5606714. [CrossRef]

- L. Teng and S. Gao, “Multimodal feature calibration network for few-shot remote sensing image scene classification,” in Proc. 2025 IEEE 6th Int. Seminar Artif. Intell., Netw. Inf. Technol. (AINIT), 2025, doi: 10.1109/AINIT65432.2025.11035263.

- C. Shorten and T. M. Khoshgoftaar, “A survey on image data augmentation for deep learning,” J. Big Data, vol. 6, no. 1, pp. 1–48, 2019. [CrossRef]

- S. Gidaris, P. Singh, and N. Komodakis, “Unsupervised representation learning by predicting image rotations,” 2018, arXiv:1803.07728.

- H. Ji, Z. Gao, Y. Lu, et al., “Semi-supervised few-shot classification with multitask learning and iterative label correction,” IEEE Trans. Geosci. Remote Sens., vol. 62, pp. 1–15, 2024. [CrossRef]

- Y. Liu, J. Li, M. Gong, et al., “Collaborative self-supervised evolution for few-shot remote sensing scene classification,” IEEE Trans. Geosci. Remote Sens., 2024. [CrossRef]

- C. Zhang, Y. Cai, G. Lin, and C. Shen, “DeepEMD: Few-shot image classification with differentiable earth mover’s distance and structured classifiers,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit., Jun. 2020, pp. 12203–12213.

- J.-H. Kim, W. Choo, and H. O. Song, “Puzzle mix: Exploiting saliency and local statistics for optimal mixup,” in Proc. Int. Conf. Mach. Learn., 2020, pp. 5275–5285.

- S. Yun, D. Han, S. Chun, S. J. Oh, Y. Yoo, and J. Choe, “CutMix: Regularization strategy to train strong classifiers with localizable features,” in Proc. IEEE/CVF Int. Conf. Comput. Vis. (ICCV), Oct. 2019, pp. 6023–6032.

- K. Li, Y. Zhang, K. Li, and Y. Fu, “Adversarial feature hallucination networks for few-shot learning,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit. (CVPR), Jun. 2020, pp. 13470–13479.

- R. Ni, M. Goldblum, A. Sharaf, K. Kong, and T. Goldstein, “Data augmentation for meta-learning,” in Proc. 38th Int. Conf. Mach. Learn., 2021, pp. 8152–8161.

- S. Yang, L. Liu, and M. Xu, “Free lunch for few-shot learning: Distribution calibration,” 2021, arXiv:2101.06395.

- X. Chen and K. He, “Exploring simple Siamese representation learning,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit., 2021, pp. 15750–15758.

- L. Hou, Z. Ji, X. Wang, et al., “Diversity-infused network for unsupervised few-shot remote sensing scene classification,” IEEE Geosci. Remote Sens. Lett., vol. 21, pp. 1–5, 2024. [CrossRef]

- Y. Zhu, J. Han, B. Pan, et al., “DiffPR-Net: Few-shot remote sensing scene classification based on generative diffusion and prototype rectified model,” IEEE Trans. Geosci. Remote Sens., 2025. [CrossRef]

- T. Mikolov, K. Chen, G. S. Corrado, and J. Dean, “Efficient estimation of word representations in vector space,” in Proc. Int. Conf. Learn. Representations, 2013, pp. 1–12.

- A. Qin, F. Chen, Q. Li, et al., “Few-shot remote sensing scene classification via subspace based on multiscale feature learning,” IEEE J. Sel. Topics Appl. Earth Observ. Remote Sens., 2024. [CrossRef]

- C. Wang, J. Li, A. Tanvir, et al., “Zero-shot remote sensing scene classification method based on local-global feature fusion and weight mapping loss,” IEEE J. Sel. Topics Appl. Earth Observ. Remote Sens., vol. 17, pp. 2763–2776, 2023. [CrossRef]

- X. Huang and L. Zhang, “Multidirectional and multiscale morphological index for automatic building extraction from multispectral GeoEye-1 imagery,” Photogrammetric Eng. Remote Sens., vol. 77, pp. 721–732, 2011. [CrossRef]

- Y. Fu et al., “Winter wheat nitrogen status estimation using UAV-based RGB imagery and Gaussian processes regression,” Remote Sens., vol. 12, no. 22, 2020, Art. no. 3778. [CrossRef]

- C. Deng and C. Wu, “BCI: A biophysical composition index for remote sensing of urban environments,” Remote Sens. Environ., vol. 127, pp. 247–259, 2012. [CrossRef]

- R. Tao and J. Qiao, “Fast component tree computation for images of limited levels,” IEEE Trans. Pattern Anal. Mach. Intell., vol. 45, no. 3, pp. 3059–3071, Mar. 2023. [CrossRef]

- I. B. Türkşen, “Fuzzy functions with LSE,” Appl. Soft Comput., vol. 8, no. 3, pp. 1178–1188, 2008. [CrossRef]

- Y. Yang and S. Newsam, “Bag-of-visual-words and spatial extensions for land-use classification,” in Proc. 18th SIGSPATIAL Int. Conf. Adv. Geograph. Inf. Syst., Nov. 2010, pp. 270–279. [CrossRef]

- C. Zhang, Y. Cai, G. Lin, and C. Shen, “DeepEMD: Few-shot image classification with differentiable earth mover’s distance and structured classifiers,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit., 2020, pp. 12203–12213.

- G. Cheng, J. Han, and X. Lu, “Remote sensing image scene classification: Benchmark and state of the art,” Proc. IEEE, vol. 105, no. 10, pp. 1865–1883, Oct. 2017.

- G. Cheng et al., “SPNet: Siamese-prototype network for few-shot remote sensing image scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 60, pp. 1–11, 2022. [CrossRef]

- B. Zhang et al., “SGMNet: Scene graph matching network for few-shot remote sensing scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 60, pp. 1–15, 2022. [CrossRef]

- O. Vinyals, C. Blundell, T. Lillicrap, K. Kavukcuoglu, and D. Wierstra, “Matching networks for one shot learning,” in Proc. Annu. Conf. Neural Inf. Process. Syst., vol. 29, 2016, pp. 3630–3638.

- C. Finn, P. Abbeel, and S. Levine, “Model-agnostic meta-learning for fast adaptation of deep networks,” in Proc. Int. Conf. Mach. Learn. PMLR, 2017, pp. 1126–1135.

- J. Snell, K. Swersky, and R. Zemel, “Prototypical networks for few-shot learning,” in Proc. Annu. Conf. Neural Inf. Process. Syst., 2017, vol. 30, pp. 4080–4090.

- F. Sung, Y. Yang, L. Zhang, T. Xiang, P. H. Torr, and T. M. Hospedales, “Learning to compare: Relation network for few-shot learning,” in Proc. IEEE Conf. Comput. Vis. Pattern Recognit., 2018, pp. 1199–1208.

- M. Zhai, H. Liu, and F. Sun, “Lifelong learning for scene recognition in remote sensing images,” IEEE Geosci. Remote Sens. Lett., vol. 16, no. 9, pp. 1472–1476, Sep. 2019. [CrossRef]

- D. Wertheimer, L. Tang, and B. Hariharan, “Few-shot classification with feature map reconstruction networks,” in Proc. IEEE Conf. Comput. Vis. Pattern Recognit., 2021, pp. 8008–8017.

- Y. Liu, W. Zhang, C. Xiang, T. Zheng, D. Cai, and X. He, “Learning to affiliate: Mutual centralized learning for few-shot classification,” in Proc. IEEE Conf. Comput. Vis. Pattern Recognit., 2022, pp. 14391–14400.

- F. Hao, F. He, J. Cheng, and D. Tao, “Global-local interplay in semantic alignment for few-shot learning,” IEEE Trans. Circuits Syst. Video Technol., vol. 32, no. 7, pp. 4351–4363, Jul. 2022. [CrossRef]

- W. Chen, C. Si, Z. Zhang, L. Wang, Z. Wang, and T. Tan, “Semantic prompt for few-shot image recognition,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit., 2023, pp. 23581–23591.

- J. Ma, W. Lin, X. Tang, X. Zhang, F. Liu, and L. Jiao, “Multi-pretext-task prototypes guided dynamic contrastive learning network for few-shot remote sensing scene classification,” IEEE Trans. Geosci. Remote Sens., vol. 61, pp. 1–16, 2023. [CrossRef]

- M. Hamzaoui, L. Chapel, M.-T. Pham, and S. Lefèvre, “Hyperbolic prototypical network for few shot remote sensing scene classification,” Pattern Recognit. Lett., vol. 177, pp. 151–156, 2024. [CrossRef]

- J. Deng, Q. Wang, and N. Liu, “Masked second-order pooling for few-shot remote sensing scene classification,” IEEE Geosci. Remote Sens. Lett., vol. 21, pp. 1–5, 2024. [CrossRef]

| Dataset | Training classes | Validation classes | Testing classes |
|---|---|---|---|
| UCM | airplane, baseball diamond, chaparral, dense residential, freeway, golf course, harbor, mobile home park, overpass, parking lot | agricultural, tanks, forest, runway, sparse residential | beach, river, buildings, medium residential, intersection, storage, tennis court |
| AID | airport, park, bare land, center, desert, farmland, industrial, medium residential, parking, playground, pond, viaduct, railway station, resort, school, stadium | baseball field, bridge, church, commercial area, port, meadow, river, storage tanks | beach, commercial, dense residential, mountain, forest, sparse residential, square |
| NWPU-RESISC45 | airport, baseball diamond, bridge, chaparral, church, cloud, desert, forest, freeway, golf course, harbor, island, lake, meadow, mountain, overpass, palace, rectangular farmland, railway, roundabout, sea ice, sparse residential, stadium, wetland, thermal | beach, terrace, power station, industrial area, mobile home park, railway station, river, snowberg, storage tank, tennis court | airplane, basketball court, circular farmland, dense residential, ground track field, intersection, medium residential, parking lot, runway, ship |
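
The class splits above feed the 5-way 1-shot and 5-way 5-shot evaluation reported in the result tables. As a rough illustration of how one such episode could be drawn from a test-class pool, here is a minimal sketch. The function name `sample_episode`, the 15 query images per class, and the placeholder file names are assumptions made for illustration; they are not taken from the paper.

```python
import random

# Test-class pool copied from the UCM row of the split table.
UCM_TEST_CLASSES = [
    "beach", "river", "buildings", "medium residential",
    "intersection", "storage", "tennis court",
]


def sample_episode(images_by_class, n_way=5, k_shot=1, n_query=15, rng=None):
    """Draw one N-way K-shot episode.

    Returns (support, query), each a list of (image, label) pairs, where
    labels are episode-local indices 0..n_way-1.
    n_query is an assumed value; the source does not state it.
    """
    rng = rng or random.Random()
    # Pick N distinct classes for this episode.
    classes = rng.sample(sorted(images_by_class), n_way)
    support, query = [], []
    for label, name in enumerate(classes):
        # Draw K support + Q query images without replacement, so the two
        # sets never share an image.
        picks = rng.sample(images_by_class[name], k_shot + n_query)
        support += [(img, label) for img in picks[:k_shot]]
        query += [(img, label) for img in picks[k_shot:]]
    return support, query


# Hypothetical file names stand in for real images (illustration only).
pool = {c: [f"{c}_{i:02d}.tif" for i in range(100)] for c in UCM_TEST_CLASSES}
support, query = sample_episode(pool, n_way=5, k_shot=1, n_query=15,
                                rng=random.Random(0))
print(len(support), len(query))
```

Passing `k_shot=5` gives the 5-shot setting; seeding the generator keeps the sampled episodes reproducible across runs.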

| Method | Year | UCM (5-way 1-shot) | AID (5-way 1-shot) | NWPU-RESISC45 (5-way 1-shot) | UCM (5-way 5-shot) | AID (5-way 5-shot) | NWPU-RESISC45 (5-way 5-shot) |
|---|---|---|---|---|---|---|---|
| MatchingNet [47] | 2016 | 34.70 | 33.87 | 37.61 | 52.71 | 50.40 | 47.10 |
| MAML [48] | 2017 | 48.86±0.74 | 43.20±0.77 | 48.40±0.82 | 60.78±0.62 | 60.37±0.75 | 62.90±0.69 |
| ProtoNet [49] | 2017 | 52.27±0.20 | 54.32±0.86 | 40.33±0.18 | 69.86±0.15 | 67.80±0.64 | 63.82±0.56 |
| RelationNet [50] | 2018 | 48.08±1.67 | 54.62±0.80 | 66.43±0.73 | 61.88±0.50 | 68.80±0.66 | 78.35±0.51 |
| LLSR [51] | 2019 | 39.47 | 45.18 | 51.43 | 57.40 | 61.76 | 72.90 |
| DeepEMD [43] | 2020 | 58.47±0.76 | 61.04±0.77 | 64.39±0.84 | 70.42±0.58 | 74.51±0.55 | 78.01±0.56 |
| DLA-MatchNet [12] | 2021 | 53.76±0.62 | 61.99±0.94 | 68.80±0.70 | 63.01±0.51 | 75.03±0.67 | 81.63±0.46 |
| FRN [52] | 2021 | 50.89±0.37 | 62.29±0.37 | 64.98±0.42 | 68.34±0.30 | 79.33±0.24 | 81.65±0.25 |
| MCL-Katz [53] | 2022 | 50.73 | 55.28 | 63.30 | 68.95 | 75.66 | 80.78 |
| GLIML [54] | 2022 | 56.41±0.62 | 61.28±0.61 | 66.86±0.68 | 70.40±0.41 | 79.56±0.41 | 78.91±0.45 |
| SPNet [45] | 2022 | 57.64±0.73 | - | 67.84±0.87 | 73.52±0.51 | - | 83.94±0.50 |
| SCL-MLNet [13] | 2022 | 51.37±0.79 | 59.46±0.96 | 62.21±1.12 | 68.09±0.92 | 76.31±0.68 | 80.86±0.76 |
| SPFSR [55] | 2023 | 55.40±1.11 | 60.01±1.09 | 65.97±1.22 | 71.38±0.77 | 75.40±0.76 | 80.72±0.79 |
| MPCLNet [56] | 2023 | 56.46±0.21 | 60.61±0.43 | 55.94±0.04 | 76.57±0.07 | 76.78±0.08 | 76.24±0.12 |
| HProtoNet [57] | 2024 | 57.51±0.95 | 59.78±0.58 | 66.41±0.87 | 75.08±0.29 | 75.87±0.35 | 82.71±0.41 |
| MSoP-Net [58] | 2024 | 54.27±0.60 | - | 67.05±0.80 | 69.77±0.38 | - | 82.02±0.46 |
| FS-RSSCvS [35] | 2024 | 59.05±0.84 | 61.62±0.75 | 67.05±0.80 | 76.34±0.51 | 81.32±0.45 | 84.00±0.46 |
| Ours | | 60.23±0.75 | 61.78±0.92 | 69.58±0.36 | 77.65±0.48 | 82.76±0.64 | 84.82±0.51 |
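
To make the comparison easier to read, the margin between the "Ours" row and the strongest earlier method in each column can be worked out directly from the tabulated means. The sketch below does only that arithmetic. The numbers are transcribed from the table, entries reported as "-" are stored as `None`, and the helper name `margins_over_best_prior` is hypothetical. The ± intervals are ignored, so small margins should not be read as statistically significant.

```python
# Mean accuracies copied from the comparison table; "-" entries become None.
COLUMNS = ["UCM 1-shot", "AID 1-shot", "NWPU 1-shot",
           "UCM 5-shot", "AID 5-shot", "NWPU 5-shot"]
BASELINES = {
    "MatchingNet":  [34.70, 33.87, 37.61, 52.71, 50.40, 47.10],
    "MAML":         [48.86, 43.20, 48.40, 60.78, 60.37, 62.90],
    "ProtoNet":     [52.27, 54.32, 40.33, 69.86, 67.80, 63.82],
    "RelationNet":  [48.08, 54.62, 66.43, 61.88, 68.80, 78.35],
    "LLSR":         [39.47, 45.18, 51.43, 57.40, 61.76, 72.90],
    "DeepEMD":      [58.47, 61.04, 64.39, 70.42, 74.51, 78.01],
    "DLA-MatchNet": [53.76, 61.99, 68.80, 63.01, 75.03, 81.63],
    "FRN":          [50.89, 62.29, 64.98, 68.34, 79.33, 81.65],
    "MCL-Katz":     [50.73, 55.28, 63.30, 68.95, 75.66, 80.78],
    "GLIML":        [56.41, 61.28, 66.86, 70.40, 79.56, 78.91],
    "SPNet":        [57.64, None, 67.84, 73.52, None, 83.94],
    "SCL-MLNet":    [51.37, 59.46, 62.21, 68.09, 76.31, 80.86],
    "SPFSR":        [55.40, 60.01, 65.97, 71.38, 75.40, 80.72],
    "MPCLNet":      [56.46, 60.61, 55.94, 76.57, 76.78, 76.24],
    "HProtoNet":    [57.51, 59.78, 66.41, 75.08, 75.87, 82.71],
    "MSoP-Net":     [54.27, None, 67.05, 69.77, None, 82.02],
    "FS-RSSCvS":    [59.05, 61.62, 67.05, 76.34, 81.32, 84.00],
}
OURS = [60.23, 61.78, 69.58, 77.65, 82.76, 84.82]


def margins_over_best_prior(baselines, ours):
    """For each column, return (best earlier method, ours minus its mean)."""
    result = {}
    for j, col in enumerate(COLUMNS):
        # Skip methods that did not report this setting.
        reported = {m: accs[j] for m, accs in baselines.items()
                    if accs[j] is not None}
        best = max(reported, key=reported.get)
        result[col] = (best, round(ours[j] - reported[best], 2))
    return result


margins = margins_over_best_prior(BASELINES, OURS)
for col, (method, delta) in margins.items():
    print(f"{col}: best prior = {method}, margin = {delta:+.2f}")
```

On the numbers as transcribed, the margin is positive in five of the six settings. The exception is AID 5-way 1-shot, where FRN's reported 62.29 is 0.51 points above the 61.78 in the "Ours" row.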

| Method | Params (M) | Time (ms), 5-way 1-shot | FLOPs (G), 5-way 1-shot | Memory (G), 5-way 1-shot | Time (ms), 5-way 5-shot | FLOPs (G), 5-way 5-shot | Memory (G), 5-way 5-shot |
|---|---|---|---|---|---|---|---|
| FRN | 0.98 | 47 | 24.00 | 4.35 | 56 | 29.65 | 4.35 |
| GLIML | 12.63 | 63 | 282.05 | 6.24 | 76 | 352.57 | 6.55 |
| MCL-Katz | 0.98 | 31 | 22.59 | 7.26 | 39 | 28.24 | 8.10 |
| SPFSR | 10.32 | 542 | 104 | 5.37 | 608 | 130 | 6.19 |
| MPCLNet | 45.01 | - | - | - | - | - | - |
| MSoP-Net | 2.10 | - | - | - | - | - | - |
| FS-RSSCvS | 1.85 | 47 | 26.91 | 5.95 | 41 | 33.63 | 6.46 |
| Ours | 1.87 | 45 | 27.10 | 6.22 | 53 | 33.86 | 6.78 |

| DA | MSFF | DAFM | IIMA | UCM (5-way 1-shot) | UCM (5-way 5-shot) | AID (5-way 1-shot) | AID (5-way 5-shot) | NWPU-RESISC45 (5-way 1-shot) | NWPU-RESISC45 (5-way 5-shot) |
|---|---|---|---|---|---|---|---|---|---|
| ✓ | ✗ | ✗ | ✗ | 43.73±0.35 | 58.26±0.45 | 43.84±0.65 | 62.46±0.54 | 49.24±0.57 | 65.15±0.78 |
| ✗ | ✓ | ✗ | ✗ | 42.85±0.67 | 56.83±0.71 | 43.12±0.64 | 60.39±0.84 | 47.57±0.64 | 63.57±0.38 |
| ✗ | ✗ | ✓ | ✗ | 42.79±0.58 | 57.63±0.47 | 42.57±0.46 | 61.78±0.36 | 48.95±0.46 | 64.13±0.56 |
| ✗ | ✗ | ✗ | ✓ | 41.64±0.76 | 55.61±0.54 | 41.85±0.65 | 59.17±0.68 | 46.38±0.72 | 62.64±0.41 |
| ✓ | ✓ | ✓ | ✗ | 59.36±0.54 | 76.95±0.73 | 60.32±0.57 | 80.41±0.65 | 68.85±0.43 | 83.83±0.76 |
| ✓ | ✓ | ✗ | ✓ | 57.67±0.68 | 74.18±0.34 | 58.01±0.83 | 81.53±0.47 | 67.73±0.56 | 82.51±0.63 |
| ✓ | ✗ | ✓ | ✓ | 58.21±0.83 | 75.36±0.47 | 59.18±0.65 | 79.67±0.49 | 66.57±0.82 | 81.34±0.58 |
| ✓ | ✓ | ✓ | ✓ | 60.23±0.75 | 77.65±0.48 | 61.78±0.92 | 82.76±0.64 | 69.58±0.36 | 84.82±0.51 |
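
One way to read the ablation rows is as a leave-one-out comparison: subtract each three-module configuration from the full model and look at the accuracy lost. The sketch below does this with the tabulated means. Averaging the drop over the six settings is a choice made here for illustration, not a metric from the paper, and the helper name `mean_drop` is hypothetical. The table has no row that removes DA alone, so DA is not covered.

```python
# Mean accuracies copied from the ablation table, ordered as
# (UCM 1-shot, UCM 5-shot, AID 1-shot, AID 5-shot, NWPU 1-shot, NWPU 5-shot).
FULL = [60.23, 77.65, 61.78, 82.76, 69.58, 84.82]

# Rows in which exactly one module is switched off.
WITHOUT = {
    "IIMA": [59.36, 76.95, 60.32, 80.41, 68.85, 83.83],
    "DAFM": [57.67, 74.18, 58.01, 81.53, 67.73, 82.51],
    "MSFF": [58.21, 75.36, 59.18, 79.67, 66.57, 81.34],
}


def mean_drop(full, ablated):
    """Average accuracy lost, in points, when one module is removed."""
    return sum(f - a for f, a in zip(full, ablated)) / len(full)


drops = {module: mean_drop(FULL, accs) for module, accs in WITHOUT.items()}
# Print modules from largest to smallest average drop.
for module, drop in sorted(drops.items(), key=lambda kv: kv[1], reverse=True):
    print(f"without {module}: mean drop = {drop:.2f} points")
```

With the values as transcribed, removing MSFF costs the most on average (about 2.75 points), followed by DAFM (about 2.53) and IIMA (about 1.18). The ordering varies by setting, for example DAFM matters more than MSFF on UCM, so the average is only a coarse summary.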

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).