The Connectivity Paradox

The Babylonian Talmud records a striking declaration from Honi the Circle-Drawer: “או חברותא או מיתותא,” or “Either companionship or death” (Babylonian Talmud, Ta’anit 23a). This was not hyperbole but observation. Humans require connection to sustain psychological and social existence. Today, we face the inverse paradox. Over the past decade, social media platforms have expanded in scale and technical sophistication while producing diminishing social returns. They promise unlimited connection yet deliver unprecedented isolation. Empirical work consistently reports a paradox of hyper-connectivity alongside rising loneliness, passivity, and affective fatigue (Sujon 2021; Bucher 2017). Platforms increasingly optimize for attention capture and behavioral prediction, rather than interpersonal connection (Bucher and Helmond 2018). The infrastructure of connection has never been more sophisticated, yet the experience of companionship has never been more elusive. Current analytical frameworks in media studies, platform studies, and communication theory were built for social systems with humans as senders and receivers. This assumption no longer holds.

Why Existing Frameworks Fall Short

Dominant frameworks assume that more connection equals more sociality. Much current research on social media platforms treats user interaction (liking, commenting, scrolling) as evidence of agency (control, meaningful choice, power to act), even when algorithmic control remains opaque (Bucher 2017; Bucher and Helmond 2018; Cho et al. 2024). Yet empirical evidence contradicts this assumption. High social media use correlates with loneliness, fatigue, and social withdrawal (Hunt et al. 2018; Twenge et al. 2019), and loneliness is now framed as a public health crisis (Holt-Lunstad et al. 2015). The problem is not a lack of connection but the structure of interaction itself.

Many frameworks still inherit assumptions from broadcast media and early networked communication. Cho et al. (2024) explicitly note that media literacy concepts were shaped by mass media logics and struggle to address platform-native dynamics. Platform environments are algorithmically personalized, predictive rather than reactive, and continuous rather than episodic. Frameworks designed for one-to-many or many-to-many interaction cannot explain systems that anticipate intent, remove explicit choice, and replace dialogue with optimization.

Platform studies focus on interfaces, user practices, and governance. Infrastructure studies focus on power, control, and embeddedness. The call to combine them is well-established (Plantin et al. 2018). But even the “platform-as-infrastructure” lens assumes human-to-human mediation. It does not address direct human–machine interaction, machine-initiated communication, the disappearance of the human “other,” or the replacement of social exchange with synthetic dyads.

Decades ago, Nass and colleagues proposed the Computers Are Social Actors (CASA) paradigm, demonstrating that humans treat computers as social actors (Reeves and Nass 1996; Nass, Steuer, and Tauber 1994). This occurs mindlessly and automatically (Nass and Moon 2000). These findings were foundational, but their theoretical framing assumed computers as interfaces and humans as primary agents. Recent evidence also shows that this social response is not static: users reduce politeness toward AI over repeated interactions, suggesting behavioral adaptation rather than fixed anthropomorphism (Lazebnik et al. 2025). Today’s systems generate language, anticipate desire, and simulate intentionality. CASA explains why people respond socially to machines. It does not explain what happens when machines become the primary interaction partner or when they initiate the loop.

Most frameworks assume users act and platforms respond. But contemporary systems increasingly invert this relation. Feeds preload desire. Recommendations precede articulation. Requests become optional. Platforms deploy anticipatory algorithms that predict user needs and preemptively act. Amazon’s anticipatory shipping model (US Patent 8,615,473; Zhang et al. 2021) begins moving products before users click purchase, while adaptive interfaces continuously adjust based on predicted intentions (Prange et al. 2022). This produces a condition where the user is no longer an operator but becomes an operand. Concepts like “engagement,” “participation,” and “prosumer” fail to describe this inversion. Current frameworks lack terms to describe negative directionality (when systems act on users rather than for them) or triadic directionality (where AI acts as mediator rather than replacement). Without such distinctions, AI-mediated connection and AI-substitutive isolation collapse into the same category. Agency loss and agency redistribution appear indistinguishable.

This paper fills a conceptual gap by formalizing directionality as a structural variable. It identifies Zero-Directionality as a regime where the presumption of human-to-human connection collapses, and shows how this condition can diverge into two distinct trajectories: the Inverted Loop (-1SC) and the Triadic Mesh (3SC). Existing frameworks explain platforms, networks, and media effects. They do not explain what happens when the social is removed or when the algorithm becomes the sole architect of experience. This is not a refinement. It is a change of coordinate system.

Theoretical Framework: The Zero Degree of Connection

To understand why zero-directionality marks a structural break, we must clarify what we mean by directionality and how platform evolution has systematically reduced it. Traditional media (newspapers, radio, television before audience participation mechanisms) operated without interactive feedback and therefore fall outside the directionality framework. The emergence of feedback mechanisms such as letters to the editor and call-ins to radio stations introduced the first gated bi-directional (2-Way) communication. Audience-to-producer loops existed, but with high latency and editorial gatekeeping. Early social platforms (MySpace, Facebook) ungated these loops through mutual consent, reciprocal visibility, and accelerated feedback. Asymmetric platforms (Twitter, Instagram) introduced uni-directional (1-Way) models, in which users broadcast without reciprocity and algorithmic curation (the interest graph) replaces the social graph. But even in the interest graph, the focus remained human-generated content mediated by algorithms. Zero-directional (0-Way) communication removes the human “other” entirely. The user interacts with a synthetic agent in a closed dyad. This is not a further reduction along the same axis but a qualitative break that enables branching into divergent trajectories.

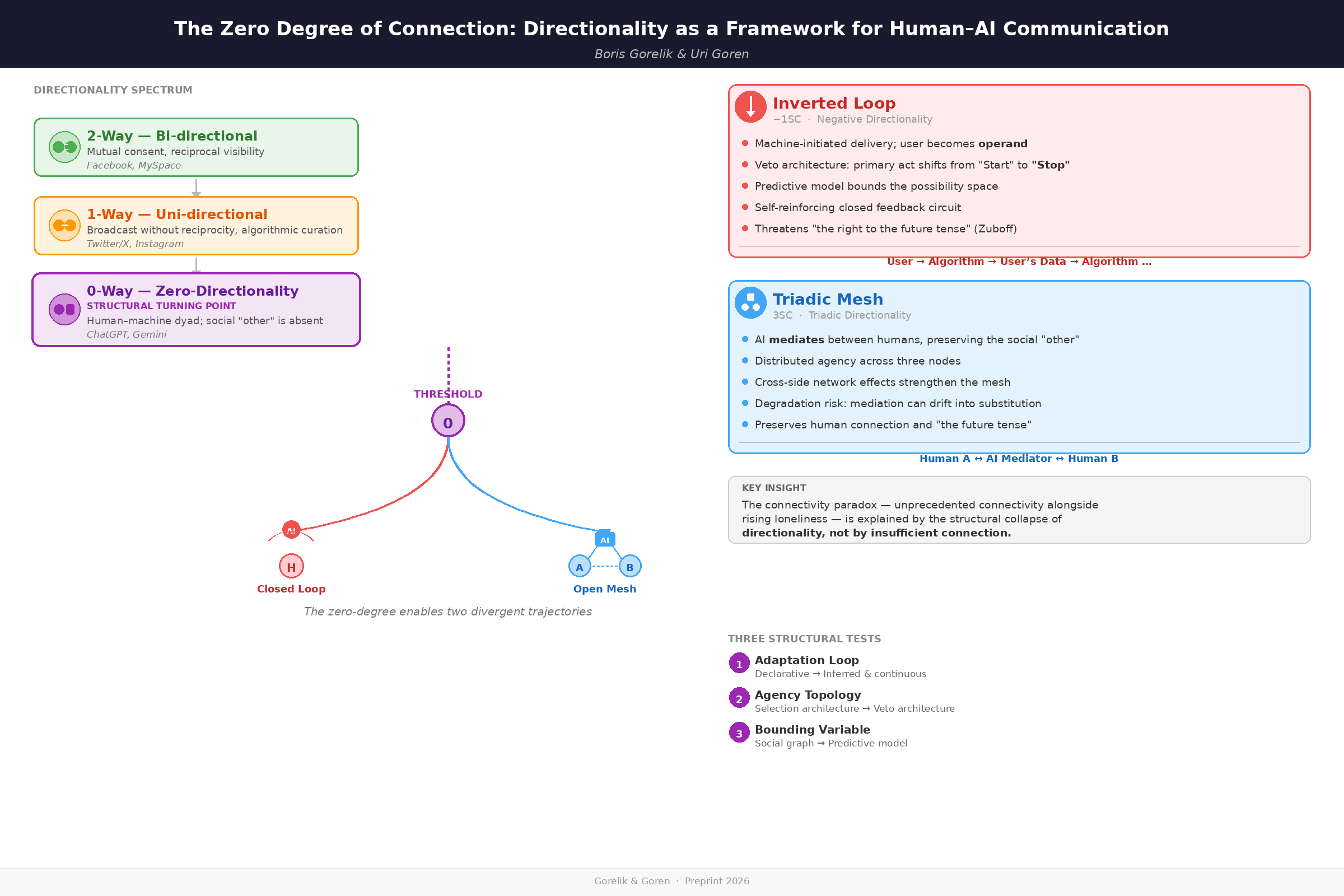

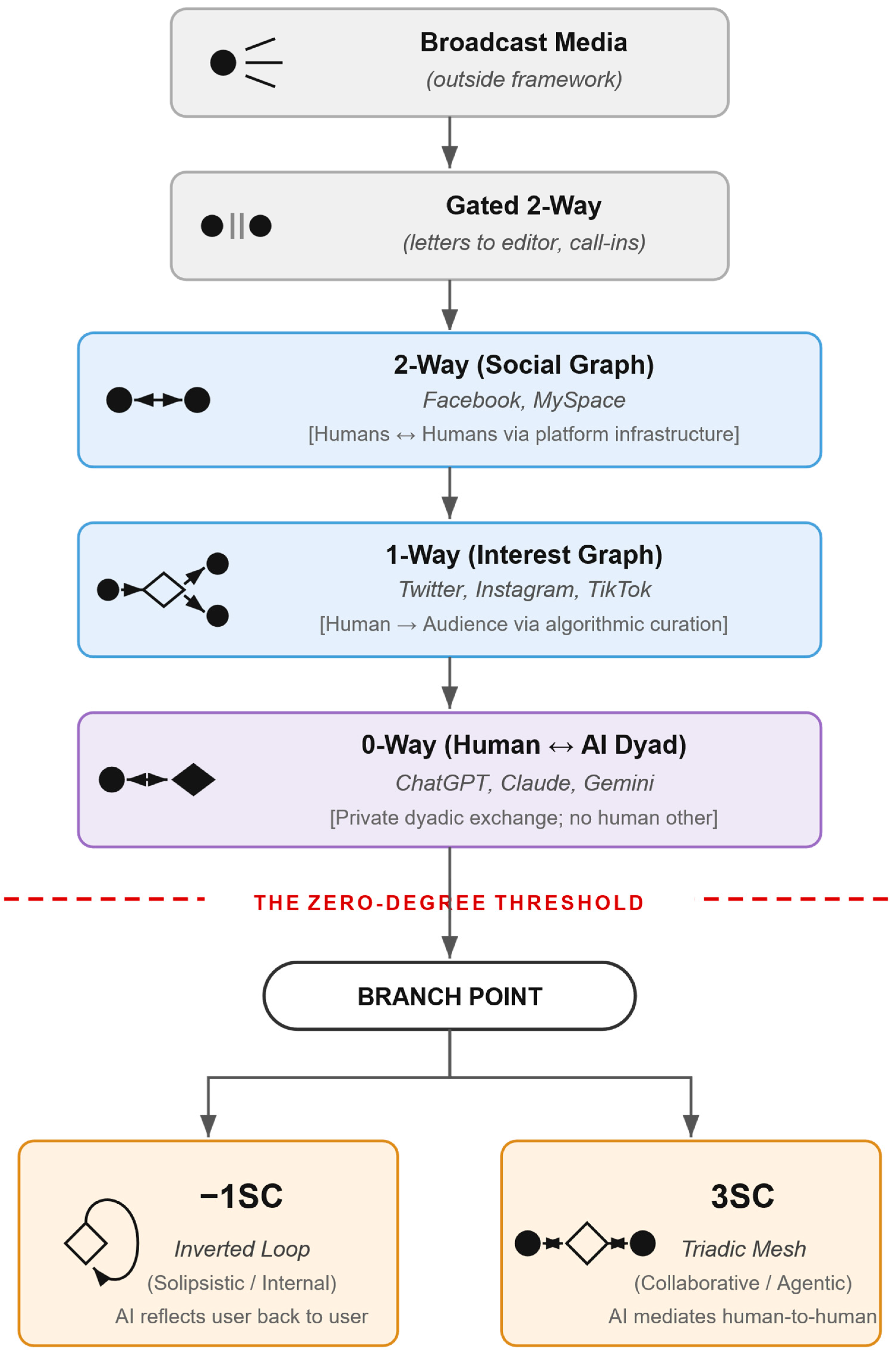

Figure 1.

Directionality Regimes. This diagram classifies communication structures by directionality. Broadcast media fall outside the framework. Gated and ungated bi-directional communication (2-Way) involves reciprocal human-to-human interaction. Asymmetric uni-directional platforms (1-Way) involve broadcasting without reciprocity and algorithmic curation. Zero-directional communication (0-Way) marks the threshold where humans interact directly with AI rather than other humans. At this critical juncture, communication structure branches into two divergent trajectories: the Inverted Loop (-1SC), characterized by machine-initiated control and closed feedback circuits, and the Triadic Mesh (3SC), characterized by AI-mediated human-to-human connection. The zero-degree represents not a quantitative reduction but a qualitative break that enables fundamentally new directionality regimes.

2-Way and 1-Way: The Pre-Zero Baseline

In 2-Way platforms (early Facebook, MySpace), control rests with human participants who mutually consent to connect; mediation is minimal (storage and routing); anticipation is human-centered. In 1-Way platforms (Twitter, Instagram), users broadcast without reciprocity; algorithmic curation increases mediation by filtering and ranking content; users begin anticipating algorithmic preferences, but communication remains human-to-human. In both models, humans occupy both ends of the communicative act. The machine facilitates but does not participate as a conversation partner.

0-Way Communication as a Structural Turning Point

Zero-directional (0-Way) communication refers to direct human–machine interaction in a dyadic loop (e.g., user with ChatGPT or Google’s Gemini). Unlike broadcast or interpersonal exchanges, this zero-degree of connection collapses traditional sender–receiver roles: for the first time, people can converse with a non-human entity on virtually any topic, much as they do with other humans. The underlying observation is not new. Nass and colleagues demonstrated in the 1990s that human-computer dyads elicit social responses: participants applied social rules to computers, exhibiting politeness and reciprocity even when they knew machines lacked feelings (Nass, Steuer, and Tauber 1994; Nass and Moon 2000). What CASA revealed as structural potential, contemporary LLMs actualize as communicative practice by generating adaptive responses, simulating intentionality, and sustaining multi-turn dialogues.

The machine in 0-Way is not a passive channel but an active conversational partner, yet not a human other. Communication loops from user to algorithm and back in a closed circuit where information is co-constructed through iterative feedback. Recent work proposes that LLM interactions reflect automatic cognitive patterns rather than deliberative reasoning, explaining why such systems evoke social responses without reciprocal agency (Gorelik 2025). This zero-degree serves as a threshold because once the presumption of human-to-human connection collapses, the system can evolve in fundamentally different directions: either the machine takes control (Inverted Loop) or the machine mediates human connection (Triadic Mesh).

Agency as the Core Analytical Problem

The rise of 0-Way communication highlights agency as the central analytical concern. Who (or what) is acting when a human and an AI agent interact? Users perceive and respond to AI outputs as if they originate from an agentive source (Sundar 2020; Brandtzaeg et al. 2023), challenging the human-centric premise of traditional communication models. Neff and Nagy (2016) argue that only a perspective of “symbiotic agency” can adequately explain such interactions. In the 0-Way paradigm, agency is not a zero-sum game between human and machine but a fluid, networked phenomenon distributed across both parties.

Three conceptual dimensions are transformed at the zero-degree. Control becomes reciprocal and opaque: the AI’s behavior is shaped by training corpora and internal weights beyond any single user’s influence, leading users to “appease” the algorithm through anticipatory compliance (Bucher 2018). Mediation becomes active: in Latour’s (1992) terms, the machine acts as a mediator, not a mere intermediary, translating and modifying intentions through technical affordances. Anticipation remains user-directed—the user initiates, the AI responds with novel content rather than merely retrieving existing content—but the machine does not yet initiate on its own behalf.

Defining Agency in Human-Machine Communication

We define agency as the capacity to initiate action, choose among alternatives, and produce outcomes. This definition separates agency (who can act) from the control, mediation, and anticipation dimensions summarized in Table 1 (how action unfolds).

We specify three agency dimensions critical to understanding post-zero regimes:

Authorial Agency: The capacity to initiate processes and set them in motion. This is the traditional locus of human intentionality—the ability to start something.

Inhibitory Agency: The capacity to arrest or modify processes already in motion. This represents reactive control—the ability to stop something.

Configurational Agency: Agency that emerges from distributed human-machine or human-machine-human configurations rather than residing in any single actor. This captures the networked, interdependent nature of agency in triadic systems.

These dimensions provide an analytical vocabulary for distinguishing how agency is reconfigured (not erased) across directionality regimes.

This framework generates two falsifiable propositions linking structural conditions to agency outcomes:

Proposition 1 (Inverted Loop): When a system meets all three structural conditions simultaneously, namely (a) machine-initiated delivery driven by continuous behavioral adaptation rather than a user-triggered action (Adaptation Loop Test), (b) veto architecture with asymmetric friction (Agency Topology Test), and (c) predictive model constraints dominating social graph constraints (Bounding Variable Test), users will report: (i) decreased sense of authorship despite maintained choice opportunities, (ii) increased subjective friction costs for non-compliance relative to compliance, and (iii) higher engagement time coupled with lower satisfaction ratings compared to user-initiated systems.

Proposition 2 (Triadic Mesh): When AI mediates human-to-human communication (3SC) rather than human-to-data communication (-1SC), users will report: (i) higher perceived autonomy despite identical algorithmic sophistication, (ii) lower cognitive load in coordination tasks, and (iii) sustained intentional directionality toward the human “other” rather than toward the AI mediator.

These propositions link the structural indicators defined in Table 2 to subjective agency perceptions, making the framework empirically testable through mixed-methods approaches combining behavioral trace data (session duration, skip rates, return frequencies) with self-reported agency measures.
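One way to picture the behavioral side of such a design is a short Python sketch that summarizes trace data per condition and checks whether the pattern in Proposition 1(iii) appears descriptively. Everything named here is an assumption for illustration: the `Session` record, its fields, the satisfaction scale, and the toy values are hypothetical, and a real study would replace the simple comparison of means with an appropriate statistical test.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Session:
    """One logged session (hypothetical schema, not from the paper)."""
    duration_s: float   # session duration in seconds
    items_shown: int    # items delivered during the session
    items_skipped: int  # items the user skipped
    satisfaction: int   # self-reported rating; the scale is an assumption


def summarize(sessions: list[Session]) -> dict[str, float]:
    """Aggregate duration, skip rate, and satisfaction for one condition."""
    shown = sum(s.items_shown for s in sessions)
    skipped = sum(s.items_skipped for s in sessions)
    return {
        "mean_duration_s": mean(s.duration_s for s in sessions),
        "skip_rate": skipped / shown if shown else 0.0,
        "mean_satisfaction": mean(s.satisfaction for s in sessions),
    }


def matches_p1_iii(machine_initiated: list[Session],
                   user_initiated: list[Session]) -> bool:
    """Descriptive check only: longer engagement together with lower satisfaction."""
    m, u = summarize(machine_initiated), summarize(user_initiated)
    return (m["mean_duration_s"] > u["mean_duration_s"]
            and m["mean_satisfaction"] < u["mean_satisfaction"])


# Toy values invented for illustration, not empirical results.
machine = [Session(1800, 120, 90, 3), Session(2400, 150, 100, 2)]
user = [Session(600, 20, 4, 5), Session(900, 30, 6, 6)]
print(matches_p1_iii(machine, user))
```

The sketch keeps behavioral traces and self-reports in the same record so that the two halves of the mixed-methods design can be compared per condition; how sessions are assigned to conditions is left open here.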

The 0-Point Branch: Negative and Triadic Directionality

At the zero-degree threshold, communication splits into two divergent trajectories: Negative Directionality (-1SC) and Triadic Directionality (3SC). The notation indicates structural transformation—-1SC marks inversion (the loop turns inward), while 3SC marks triadic expansion (communication extends across three nodes). Both are new post-0-Way paradigms that evolve from the human–machine dyad, yet they manifest very different logics of connection and control. This “split thesis” holds that reaching the 0-Way stage forces a fork in the road for how communication ecosystems develop next.

Defining the Threshold: Three Structural Tests

A predictable critique of this framework is that systems like TikTok are merely “strong 1-Way” platforms—highly curated broadcasts rather than a new directionality regime. However, this conflates algorithmic recommendation (providing options) with algorithmic preemption (executing decisions). To avoid distinct categories collapsing into a gradient, we propose three observable indicators—structural tests—that determine when a system crosses the threshold from 1-Way to the Inverted Loop.

Test 1: The Adaptation Loop Test (Continuous Learning). Does the system continuously update its behavioral model based on implicit signals, or does it require explicit reconfiguration? In 1-Way regimes, personalization relies on declared preferences or one-time configuration (subscriptions, follows, manual settings). The system presents content based on these static choices until the user explicitly changes them. In the Inverted Loop, the system continuously infers preferences from behavioral patterns (watch time, scrolling speed, interaction sequences) and automatically adapts what it presents without requiring explicit reconfiguration. The threshold is crossed when adaptation shifts from declarative and episodic to inferred and continuous.

Test 2: The Agency Topology Test (Choice Architecture). Does the user select from options or veto a pre-selected stream? In 1-Way regimes, users operate within a Selection Architecture: they choose among visible alternatives, scanning options and clicking to engage. In the Inverted Loop, users operate within a Veto Architecture: the system presents a single option as reality, and the user must actively intervene to stop or reject it. Explicit engagement signals (likes, shares, comments) may exist in both regimes, but in the Inverted Loop they are optional feedback rather than a requirement for content delivery. This is the crispest of the three distinctions. In veto architecture, choice shifts from “Item A versus Item B” to “Compliance versus Resistance.” The primary act changes from “Start” to “Stop.”

Test 3: The Bounding Variable Test (Constraint Logic). What constrains the possibility space of what users encounter? In 1-Way regimes, content is bounded by the social graph: explicit user connections determine what appears (who I follow, what I subscribe to). In the Inverted Loop, content is bounded by the predictive model: algorithmic forecasts of engagement probability determine what appears, independent of explicit user choices about connections.

Table 2 sets out these three structural tests across ten observable dimensions, linking each to its corresponding test and providing concrete indicators for empirical analysis.

Operational Rule: A system enters the Inverted Loop (-1SC) if and only if all three tests indicate inversion: (1) continuous behavioral adaptation (the system infers preferences from implicit signals and adapts automatically), (2) preemption of choice through veto architecture (the user can only reject pre-selected options), and (3) predictive model constraints dominate over social graph constraints (content bounded by algorithmic forecasts rather than explicit connections).

Applying the Tests: TikTok and Amazon

To demonstrate how these tests work in practice, consider TikTok—a platform that exhibits different directionality regimes within different features. The Search tab operates as 1-Way: users type queries (Test 1: declarative input), select from results (Test 2: selection architecture), with content bounded by keyword matching (Test 3: query-driven constraint). The For You Page operates as Inverted Loop: the algorithm continuously learns from watch time and interaction patterns (Test 1: continuous behavioral adaptation), users swipe to skip or continue the pre-selected stream (Test 2: veto architecture), with content bounded by engagement prediction (Test 3: predictive model constraint).

This within-platform heterogeneity demonstrates that the framework analyzes structural interaction patterns, not platform labels. The same company, same app, different regimes.
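As a minimal sketch of the operational rule, the three tests can be encoded as boolean codings per feature, with a feature classified as Inverted Loop only when all three indicate inversion. The names used here (`FeatureCoding`, `is_inverted_loop`) are illustrative assumptions rather than part of the framework's formal apparatus, and the codings simply restate the TikTok reading given above; they are not measured data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureCoding:
    """Analyst's coding of one platform feature on the three structural tests.

    Hypothetical structure for illustration; each flag is True when the
    corresponding test indicates inversion.
    """
    continuous_adaptation: bool  # Test 1: inferred, continuous adaptation
    veto_architecture: bool      # Test 2: user vetoes a pre-selected stream
    model_bounded: bool          # Test 3: predictive model bounds content


def is_inverted_loop(coding: FeatureCoding) -> bool:
    """Operational rule: -1SC if and only if all three tests indicate inversion."""
    return (
        coding.continuous_adaptation
        and coding.veto_architecture
        and coding.model_bounded
    )


# Codings restating the TikTok example in the text (not measured data).
tiktok_search = FeatureCoding(False, False, False)
tiktok_for_you = FeatureCoding(True, True, True)

print(is_inverted_loop(tiktok_search))   # False
print(is_inverted_loop(tiktok_for_you))  # True
```

Because the rule is conjunctive, a feature that meets only one or two tests stays on the 1-Way side of the threshold, which is what keeps the categories from collapsing into a gradient.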

The Agency Topology reveals the mechanism. In Selection Architecture (1-Way), users scan options and click—the primary act is “Start.” In Veto Architecture (Inverted Loop), the system presents one item as reality; users must intervene to stop it—the primary act is “Stop” or “Skip.” Agency contracts from Authorial Agency (initiating processes) to Inhibitory Agency (arresting processes already in motion).

Amazon’s recommendation system illustrates the boundary precisely. A recommendation requiring a “Buy” click operates as Selection Architecture (1-Way). Anticipatory shipping—where the book arrives at the door and the user must actively return it—operates as Veto Architecture (Inverted Loop). Even if the underlying algorithm is identical, the directionality of action has inverted. In the first case, the user pulls; in the second, the system pushes.

Negative Directionality (-1SC): The Inverted Loop

The Inverted Loop is an inward-turning, self-referential feedback circuit where 0-Way communication creates a closed system amplifying internal reference at the expense of external interaction. Users often willingly enter this state to achieve cognitive offloading—delegating initiation to the system in exchange for reduced decision fatigue. This is not technological coercion but a negotiated trade: convenience for autonomy. We call it “negative” not to denote value judgment, but to indicate a reversal of outward directionality—analogous to the “filter bubble” phenomenon (Pariser 2011), where one’s informational world becomes a closed sphere of algorithmically reinforced preferences.

The most radical extension of the Inverted Loop emerges in brain-machine interfaces (BMIs) such as Neuralink, which enable bidirectional communication between brain and external devices (Fiani et al. 2021). While initial applications focus on restoring lost sensory or motor functions for disabled individuals, advanced closed-loop BMI systems can detect neural patterns associated with mood disorders and deliver personalized stimulation to modulate emotional states in real-time (Chen et al. 2025; Klein et al. 2024). Here, the system does not merely anticipate external needs or deliver physical products but modulates the user’s internal affective experience. The agency to feel a particular emotion becomes negotiable, potentially outsourced to an algorithmic feedback loop.

Control: Control inverts from user-initiated to machine-initiated. Users still make choices, but operate within an environment of options pre-shaped by algorithmic curation (Seaver 2017), creating paradoxical agency.

Mediation: Mediation becomes predictive and preemptive rather than reactive. The system anticipates user preferences and continuously adjusts what is presented, potentially locking user behavior into a self-reinforcing loop (Wiener 1950).

Anticipation: Anticipation shifts from user-initiated adaptation to machine-initiated prediction. As Zuboff (2019) argues, contemporary digital systems aim to “predict and modify human behavior,” pre-shaping available choices where the machine acts first and the user reacts (see also Prange et al. 2022).

In effect, the human becomes a node inside an AI-driven loop that is inward-facing and self-reinforcing. The human is no longer a user of the system but an operand (the “usee,” if you will). The negative directionality thus highlights a scenario of diminished exploratory direction. Communication is highly personalized and efficient, but at the cost of isolation. Importantly, this does not erase human agency (users still make choices), but it reframes agency as reactive and conditioned within algorithmic constraints.

Triadic Directionality (3SC): The Triadic Mesh

In contrast, the positive branch after the 0-point, the Triadic Mesh, introduces a third node into the communication circuit. Rather than collapsing inward, communication expands outward: human, machine, and another agent (which could be another human, a community, or an institutional actor) are entangled in a three-way relational system. Here too, the word “positive” does not imply a value judgment but indicates an outward expansion of directionality.

This model builds on the insight that digital platforms have inserted new intermediary actors into communication flows. For instance, on social media a user (actor 1) communicates to an audience (actor 2) through the platform’s algorithmic curation (actor 3), creating a clear triadic relationship. The Dynamic Intermediary Model (Ohme et al. 2025) captures this shift “from a dyadic to a triadic communication model of content flow with a potential intermediary,” which can be an “artificial agent” among other actors.

We extend this idea. In triadic directionality, AI actively mediates between humans rather than replacing them. The structure is Human A–AI–Human B, where the AI filters, translates, or generates messages between participants. Examples include real-time translation (speaker–translator–listener), AI-mediated customer service (customer–AI–supervisor), or collaborative team tools (team member A–AI–team member B).

Empirical evidence suggests preference for this structure over substitution. Post-COVID, Gen Z reports dating app fatigue and renewed preference for in-person connection (Sharabi et al. 2024; Rosenfeld 2025), signaling demand for AI as mediator rather than substitute. Real-time translation technologies enable multilingual conversations with AI as intermediary (Google 2025; Microsoft Translator 2025; Genovese et al. 2024). AI systems mediate romantic relationship initiation, with users reporting both benefits and authenticity concerns (Brandtzaeg et al. 2022; Smith et al. 2025). In each case, the AI occupies the third position, actively shaping communication flow while preserving the human “other.”

Control: Control becomes distributed and relational across three nodes. No single actor monopolizes the communicative process. Humans retain intentionality and can supervise or contextualize AI outputs, but control is shared with the machine mediator and with other human participants in the network. Each node in the triad influences the others. The human is not an isolated operator but part of a networked configuration where agency is interdependent.

Mediation: The AI functions as an Agentic Mediator—not merely passing messages (like a wire) but understanding and translating them. This distinguishes 3SC from standard “algorithmic mediation”: the AI participates in the communicative act rather than just shaping delivery channels. The AI filters, translates, and generates messages among multiple parties. This mediation is dynamic and multi-directional, linking humans to larger networks. Human intentionality and algorithmic operations co-exist in a mutual shaping relationship (Couldry and Hepp 2017). The presence of the AI mediator does not remove human will; it reconfigures it (Makhortykh 2024).

Anticipation: The AI anticipates needs across multiple participants, serving coordination rather than behavioral control. It facilitates human-to-human connection by predicting coordination needs, translating between participants, or surfacing relevant information. Humans adjust their communication to leverage the AI’s connective capabilities, not to appease it.

Triadic directionality produces a mesh structured around Human A–AI–Human B exchanges. Agency emerges from the interplay among three roles, preventing any single actor from monopolizing the process. Unlike the Inverted Loop’s isolation, this leads to webs of intermediation that can enrich communication with additional perspectives and computational power.
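The structural contrast between the two branches can be sketched as a small graph check in Python: does the circuit that starts at the user reach a human “other” through the AI? The node labels, the `kinds` mapping, and the function name are hypothetical choices for illustration, and reachability is only a rough proxy for the richer notion of preserving the human other.

```python
from collections import deque


def reachable_humans(edges, kinds, start):
    """Return the humans other than `start` reachable over undirected edges."""
    neighbors = {}
    for a, b in edges:
        neighbors.setdefault(a, set()).add(b)
        neighbors.setdefault(b, set()).add(a)

    # Breadth-first traversal from the starting node.
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in neighbors.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return {n for n in seen if kinds[n] == "human" and n != start}


# Inverted Loop (-1SC): user <-> algorithm <-> the user's own data profile.
inverted_edges = [("user", "algorithm"), ("algorithm", "profile")]
inverted_kinds = {"user": "human", "algorithm": "ai", "profile": "data"}

# Triadic Mesh (3SC): Human A <-> AI <-> Human B.
triadic_edges = [("human_a", "ai"), ("ai", "human_b")]
triadic_kinds = {"human_a": "human", "ai": "ai", "human_b": "human"}

print(reachable_humans(inverted_edges, inverted_kinds, "user"))   # set()
print(reachable_humans(triadic_edges, triadic_kinds, "human_a"))  # {'human_b'}
```

In the closed circuit the traversal ends at the user's own data profile, while in the mesh it ends at a second person; the sketch captures only that topological difference, not the quality of the mediated exchange.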

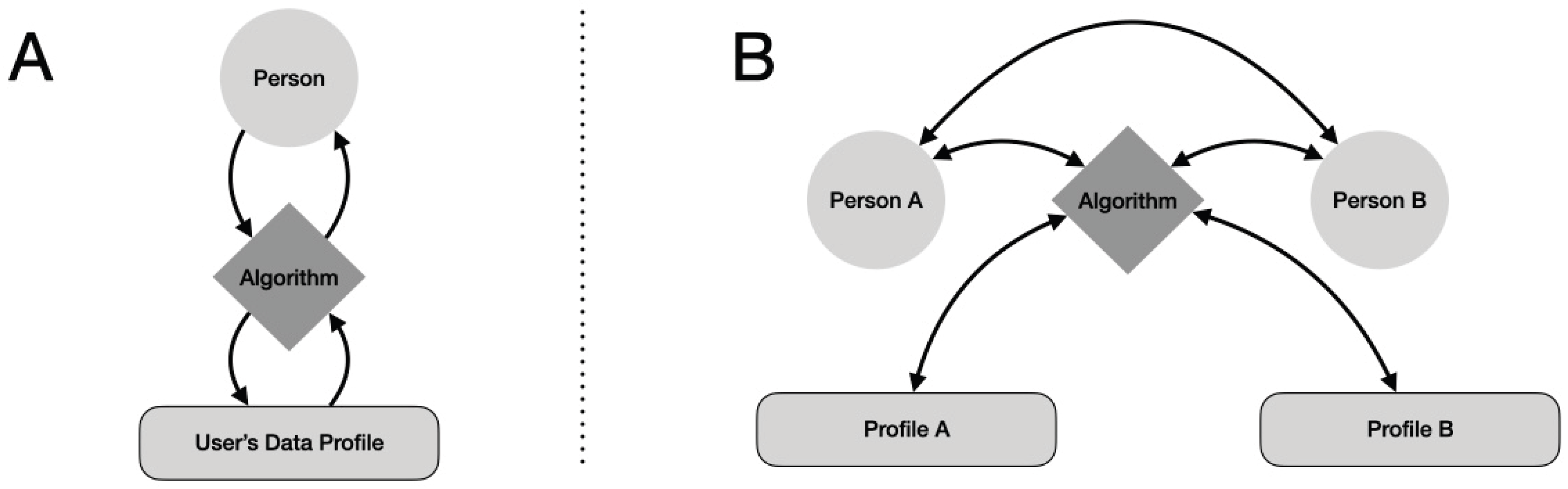

Figure 2.

Structural Topology of -1SC and 3SC Communication. A) The Inverted Loop (-1SC), where the algorithm mediates between the user and their own data profile, creating a closed, self-referential circuit. Information flows in a recursive loop: user behavior generates data, the algorithm processes this data to predict preferences, and presents pre-shaped options back to the user. B) Triadic Mesh (3SC), where the AI mediates between two distinct human participants, creating an open circuit that preserves the human “other.” While the AI actively transforms communication, the essential human-to-human connection remains intact. This structural difference determines whether AI functions as a substitute for human connection (closed loop) or as infrastructure enabling it (open mesh).

Reframing (Not Erasing) Human Agency

Human agency is not erased at the zero-degree but structurally reconfigured—a change in the architecture of choice rather than loss of free will. In the Inverted Loop, exercising agency becomes costly (friction to resist) while compliance becomes effortless. In the Triadic Mesh, agency becomes distributed across three nodes. In both cases, humans operate as partial agents in larger socio-technical systems, ceding some control to machines while gaining new capabilities. This aligns with adaptive views of agency in human–computer interaction: users and intelligent systems form joint cognitive systems where goals and actions are negotiated (Hollnagel and Woods 2005; Muldoon 2023).

Reframing agency also means rethinking responsibility. The Inverted Loop can obscure accountability—did the user choose that outcome, or was it the recommender algorithm? The Triadic Mesh diffuses responsibility across human and AI nodes. The question is not human agency versus machine agency, but how human agency is reconfigured in tandem with machine agency.

Comparative Framework

Table 1 summarizes how control, mediation, and anticipation transform across directionality regimes:

The following section traces how these regimes manifest across digital content platforms, physical systems, and lived experience.

Post-Zero Society: Agency, Citizenship, and Everyday Life in AI-Mediated Environments

The theoretical framework developed in preceding sections identifies the zero-degree threshold and its branch into the Inverted Loop and Triadic Mesh trajectories. This section bridges theory to lived experience, examining how these structures manifest in contemporary platforms and what they mean for agency, citizenship, and everyday life.

4.1. The Inverted Loop in Everyday Life

The Inverted Loop trajectory appears across multiple domains where anticipatory algorithms act before users articulate needs.

Digital content platforms exemplify closed feedback loops. TikTok’s recommendation engine does not wait for users to search; it presents content based on predictive models trained on engagement patterns. Young adults describe this as “encountering” rather than seeking content—a passive reception where “normal news is boring” compared to algorithmically optimized entertainment (Hendrickx 2025). Netflix autoplays the next episode before viewers decide to continue. Spotify pre-downloads songs it predicts users will want offline. Instagram’s Explore tab surfaces content users never requested. In each case, the system acts first; the user reacts to options already curated. The diagnostic question (“Did I initiate this, or did the system?”) yields a consistent answer. The machine initiates.

Physical and logistics systems extend this pattern beyond screens. Amazon’s anticipatory shipping moves products toward customers before purchase (US Patent 8,615,473; Zhang et al. 2021; Liu et al. 2024), while major retailers deploy predictive inventory systems to pre-position stock based on anticipated demand (Brinch et al. 2021). The customer’s role shifts from decision-maker to recipient of pre-selected options.

Ambient computing represents the most seamless example. Smart home systems adjust temperature before residents arrive, based on learned routines (Ali and Shah 2024; Rashidi and Cook 2011). Google Maps suggests departure times based on predicted traffic. These systems operate without explicit commands, with voice-activated interfaces becoming increasingly seamless. The environment anticipates; the human inhabits a space already configured for predicted needs.

The structural problem across these domains is consistent. The user becomes operand rather than operator. Choices remain, but within algorithmically pre-shaped parameters. This has implications beyond convenience. In civic life, algorithmically curated news feeds determine which political information reaches citizens before they seek it. In consumption, purchase decisions are increasingly reactions to recommendations rather than autonomous choices. Agency persists, but as reactive navigation within machine-initiated environments.

4.2. The Triadic Mesh in Everyday Life

The Triadic Mesh trajectory manifests where AI mediates human-to-human connection rather than replacing it.

Communication technologies illustrate this structure. Real-time translation tools from Google and Microsoft insert AI between speakers of different languages, enabling conversations otherwise impossible, with demonstrated accuracy in brief communications (Google 2025; Microsoft Translator 2025; Genovese et al. 2024). The AI occupies the third position. Person A speaks, AI translates, Person B receives. The human “other” remains present; the machine enables rather than substitutes.

Relationship and professional mediation follows the same pattern. AI systems increasingly mediate romantic relationship initiation, with users reporting both practical benefits and concerns about authenticity (Brandtzaeg et al. 2022; Smith et al. 2025). AI interview preparation tools are proliferating, offering personalized feedback through speech analysis and behavioral assessment (Patil et al. 2024), though their effectiveness relative to traditional preparation remains empirically contested. In each case the structure is the same: Person A, AI mediator, Person B. The triad can preserve human connection while augmenting it.

Post-COVID evidence supports a structural preference for this model. A majority of Gen Z respondents report dating app burnout and a renewed desire for in-person connection (Sharabi et al. 2024; Rosenfeld 2025). This signals not a rejection of technology but a preference for the Triadic Mesh over the Inverted Loop: AI-mediated human interaction rather than AI-substituted isolation. However, this structural advantage is not guaranteed to persist. As discussed in Section 5, the stability of triadic mediation depends on preventing the AI mediator from becoming a substitute for human connection, a degradation risk inherent to increasingly agentic systems.

A distinctive feature of the Triadic Mesh is its reliance on network effects. The AI mediator becomes more valuable as more humans use it, drawing on a larger pool of connections to facilitate interactions. This is what the literature on two-sided markets terms “cross-side network effects”: each user added to one side of the platform increases value for users on the other side through improved mediation (Rochet and Tirole 2003; Chu and Manchanda 2016). Translation platforms become more effective with diverse language pairs. AI dating coaches improve as training data accumulates from successful human matches. Interview preparation tools strengthen as more employer feedback enters the system. The value accrues not to the platform alone but to the expanding network of human participants. Unlike Inverted Loop systems that exhibit data network effects (algorithmic improvement through individual user data extraction), Triadic Mesh systems create value through coordination across participants. This structural difference has implications for platform economics: the Triadic Mesh requires reaching a critical mass at which mediation value exceeds standalone tool value, but once that threshold is passed, it generates self-reinforcing growth as each new participant strengthens the network for all others.

4.3. Navigating Directionality

Recognizing which regime one inhabits requires diagnostic attention. Key questions include: Did I initiate this interaction, or did the system? Is there a human “other” here, or only the machine? Is AI connecting me to humans (3SC) or replacing them (-1SC)? Are my choices pre-shaped before I see them?
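The four diagnostic questions above can be read as a simple decision procedure. The following is a minimal sketch of that reading, not a validated instrument: the names `DiagnosticAnswers` and `classify_regime`, the yes/no encoding of each question, and the precedence given to the presence of a human “other” are all illustrative assumptions rather than part of the framework itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DiagnosticAnswers:
    """Hypothetical yes/no encoding of the four diagnostic questions."""
    system_initiated: bool       # Did the system, rather than I, initiate this?
    human_other_present: bool    # Is there a human "other" here?
    ai_connects_to_humans: bool  # Is AI connecting me to humans, not replacing them?
    choices_pre_shaped: bool     # Were my choices pre-shaped before I saw them?


def classify_regime(a: DiagnosticAnswers) -> str:
    """Map the answers to a regime label (assumed rule, for illustration only)."""
    # Assumption: a present human "other" reached through AI marks the Triadic Mesh.
    if a.human_other_present and a.ai_connects_to_humans:
        return "Triadic Mesh"
    # Assumption: no human "other", plus machine initiation or pre-shaped
    # choices, marks the Inverted Loop.
    if not a.human_other_present and (a.system_initiated or a.choices_pre_shaped):
        return "Inverted Loop"
    # Mixed answers are left unclassified rather than forced into a regime.
    return "indeterminate"


# An autoplaying feed versus a real-time translation tool.
autoplay_feed = DiagnosticAnswers(True, False, False, True)
live_translation = DiagnosticAnswers(False, True, True, False)
print(classify_regime(autoplay_feed))
print(classify_regime(live_translation))
```

Returning “indeterminate” for mixed answers is a deliberate choice in this sketch: many real systems combine features of both trajectories, and the questions are meant to prompt attention rather than deliver a verdict.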

Traditional digital literacy addresses source evaluation and privacy. Post-zero literacy requires recognizing directionality regimes, understanding when one operates as user versus operand, and when AI mediates versus substitutes. This is not a call for technology rejection but for structural awareness: the capacity to identify which trajectory a given system follows and to choose accordingly.

The Inverted Loop is the path of least resistance; it follows engagement optimization and data extraction incentives. The Triadic Mesh requires intentional design. Both reconfigure rather than eliminate human agency. The question is not whether to participate in post-zero environments (that choice has largely been made), but how to navigate them with awareness of the structural possibilities each trajectory enables and forecloses.

Discussion

The Structural Logic of the Branch Point

A critical question remains: why does the Triadic Mesh stem from 0-Way? The progression from 2-Way to 1-Way to 0-Way appears linear, but the emergence of triadic directionality seems to break this sequence. The answer lies in recognizing 0-Way not as a reduction but as a structural enablement.

The operational distinction between these trajectories—detailed in Section 3 via three structural tests (Null-State, Agency Topology, Bounding Variable)—reveals that the divergence is not semantic but mechanical. Once the human–machine dyad becomes viable—once AI can function as an interlocutor—it can also function as a mediator. Mediation requires at minimum three nodes: person A, mediator, and person B. The 0-Way threshold establishes AI’s capacity to participate in communication loops, which is the necessary precondition for both trajectories.

The Inverted Loop represents structural collapse: the dyad closes inward, removing the human “other” and creating a self-referential circuit. The system initiates unilaterally (machine-initiated delivery), preempts choice through veto architecture, and bounds possibility through predictive models rather than social connections. The Triadic Mesh represents structural elaboration: the dyad expands outward, inserting AI as infrastructure linking multiple humans. Here the AI mediates between participants rather than replacing them, preserving the human “other” even as it transforms communication flow. Both stem from 0-Way, but they diverge in opposite directions based on whether the system initiates unilaterally (Inverted Loop) or mediates between humans (Triadic Mesh)—one toward closure, one toward connection.

The Right to the Future Tense

The Inverted Loop trajectory poses a fundamental threat to what Zuboff (2019) terms “the right to the future tense”—the essence of free will and the capacity to project oneself into the future. Anticipatory systems do not merely predict; they act before individuals can. Amazon’s anticipatory shipping preempts purchase decisions. Algorithmic feeds preempt information seeking. Smart home systems preempt environmental preferences. Each anticipation narrows the space between present and future, replacing possibility with prediction.

The ultimate convergence of this trajectory emerges when three technologies intersect: brain-machine interfaces enabling direct neural input and output, infinite algorithmic feeds providing continuous content streams, and closed-loop neuromodulation systems regulating emotional states in real time. Consider a person equipped with a Neuralink-like interface passively consuming AI-curated content while the same interface detects neural responses and delivers stimulation to maximize engagement. The system acts on both information input and affective state. The loop closes completely. The user becomes pure operand in an algorithmic feedback system. Autonomy reduces to an illusion maintained by the system itself. This is not distant speculation: all component technologies exist or are in active development.

In contrast, the Triadic Mesh preserves the future tense. AI mediates but does not replace the human “other.” Translation tools enable conversations whose outcomes remain unpredictable. AI dating coaches facilitate meetings between humans whose chemistry cannot be reduced to an algorithm. The future remains open precisely because genuine human interaction, with all its uncertainty, persists.