Submitted: 05 March 2026

Posted: 06 March 2026

Abstract

Keywords:

I. Introduction

II. Why Linux Seems Attractive for Avionics

A. Engineering Richness and Rapid Development

B. Advances in Real-Time Responsiveness

C. Cost, Reuse, and Availability of Expertise

III. What Airworthiness Really Requires

IV. Core Limitations of Linux in Safety-Critical Avionics

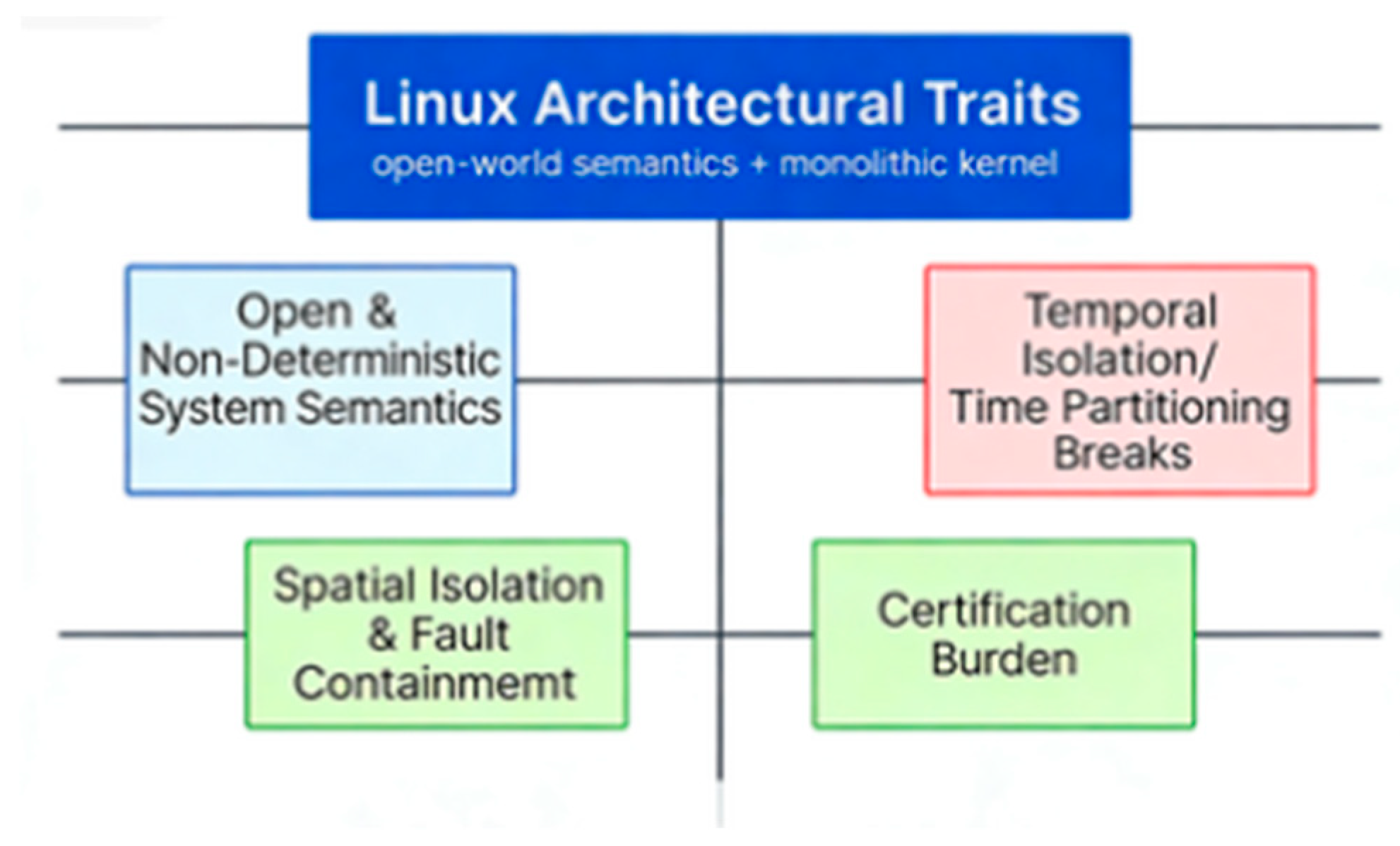

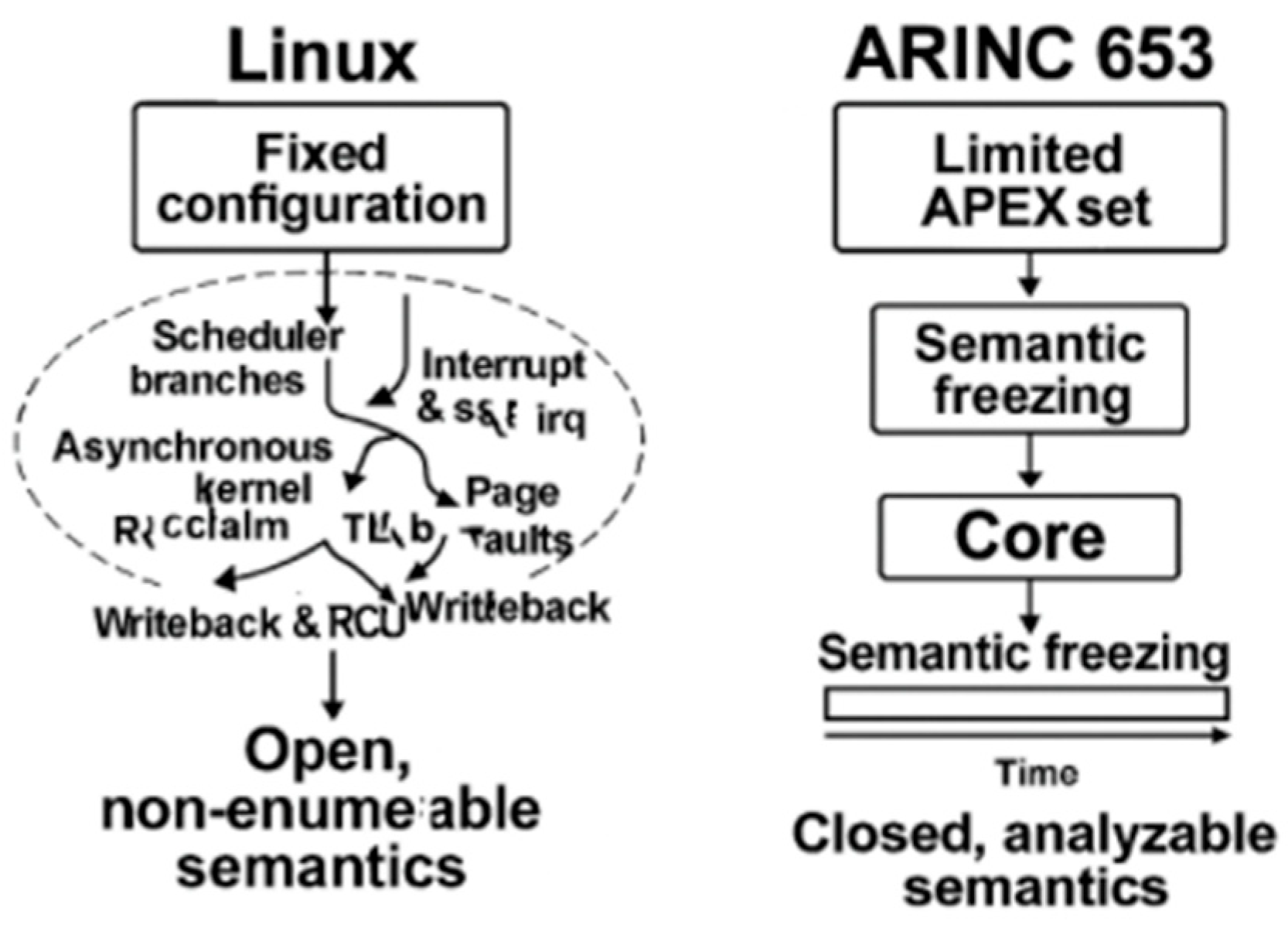

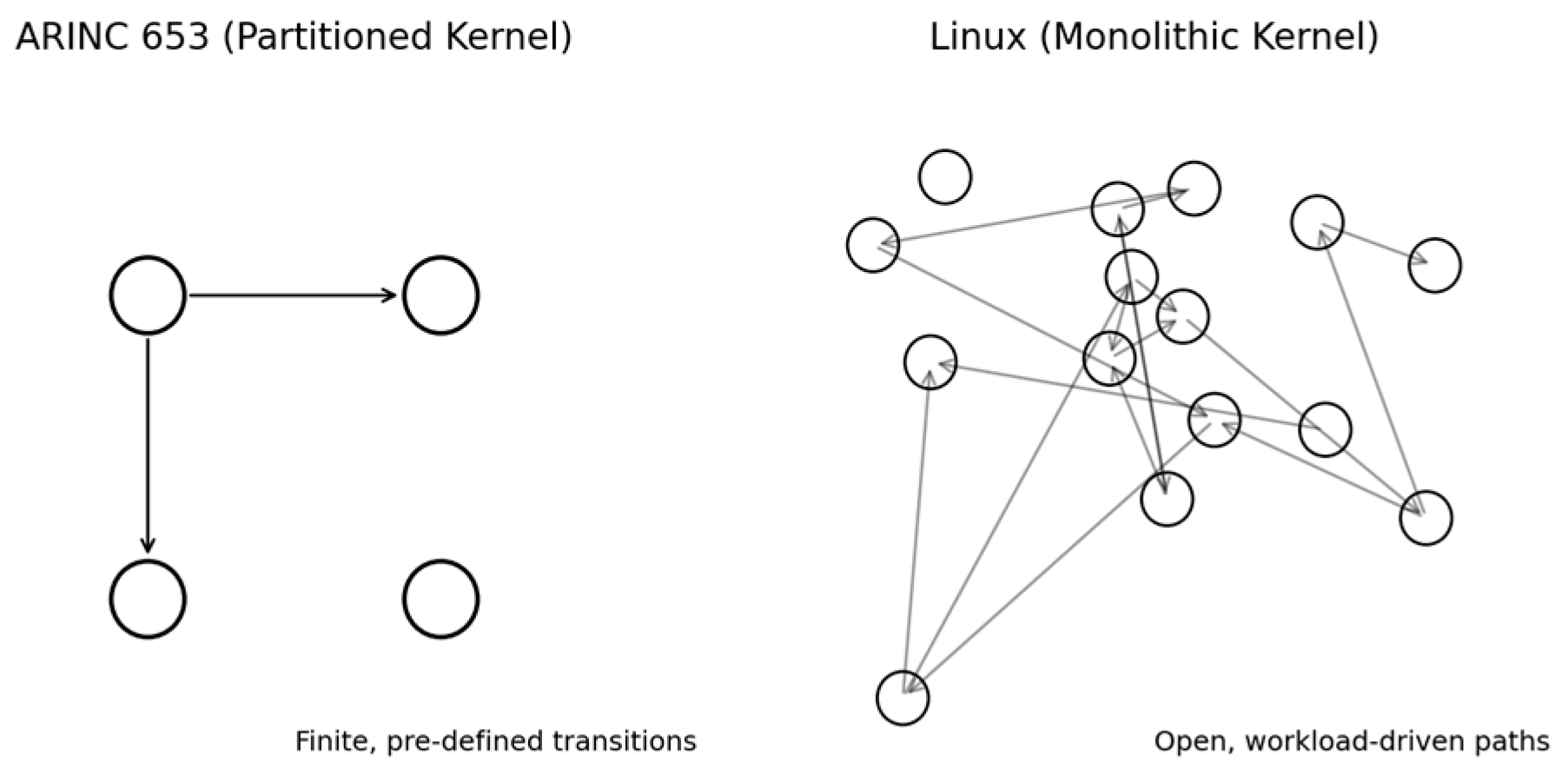

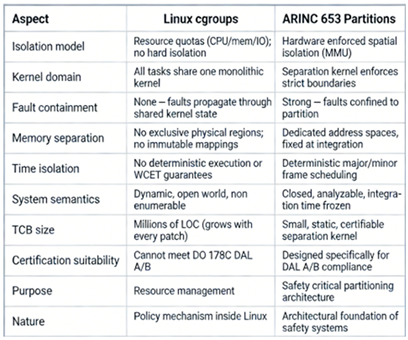

A. Open System Semantics with Unpredictable Behavior

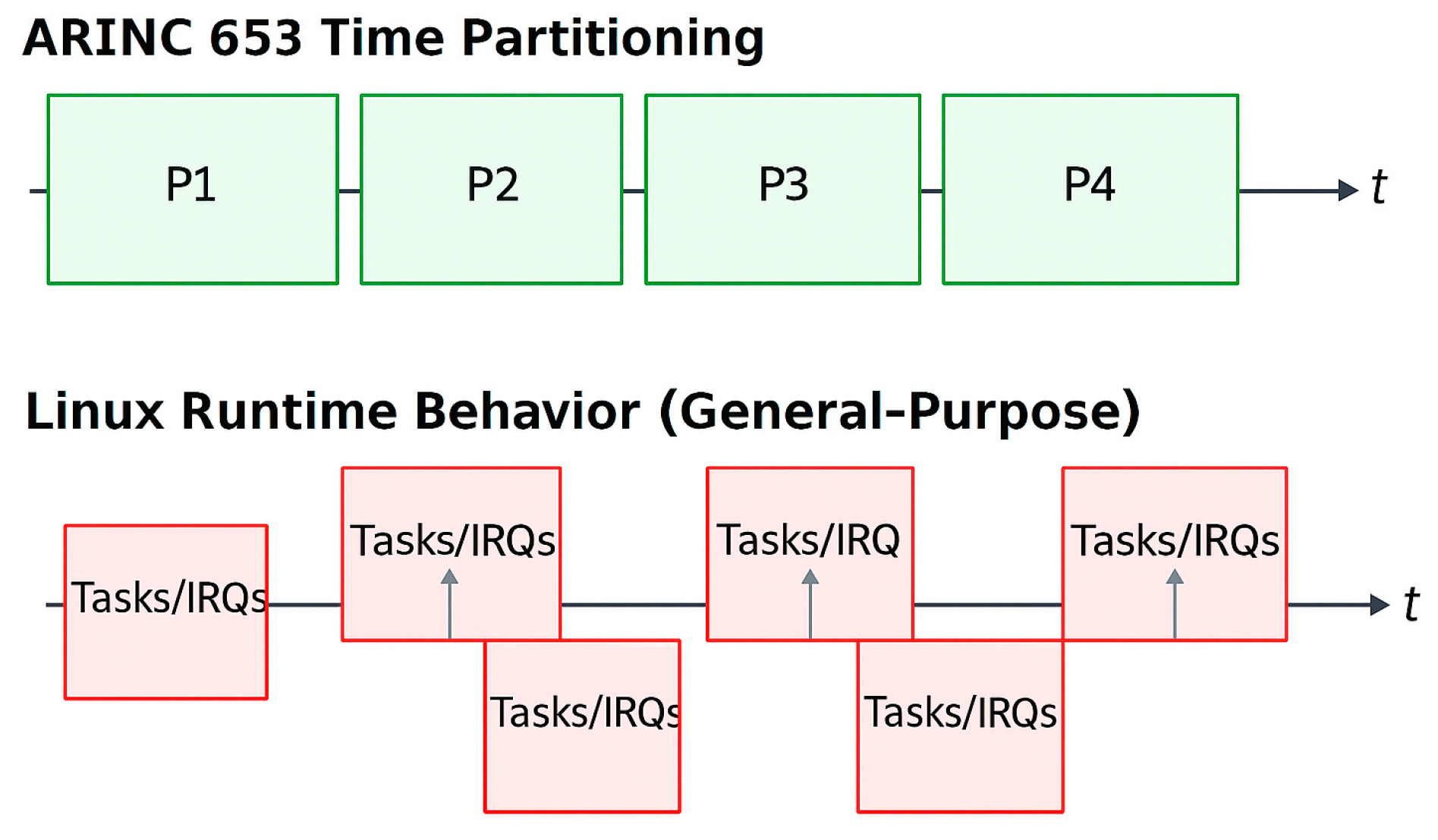

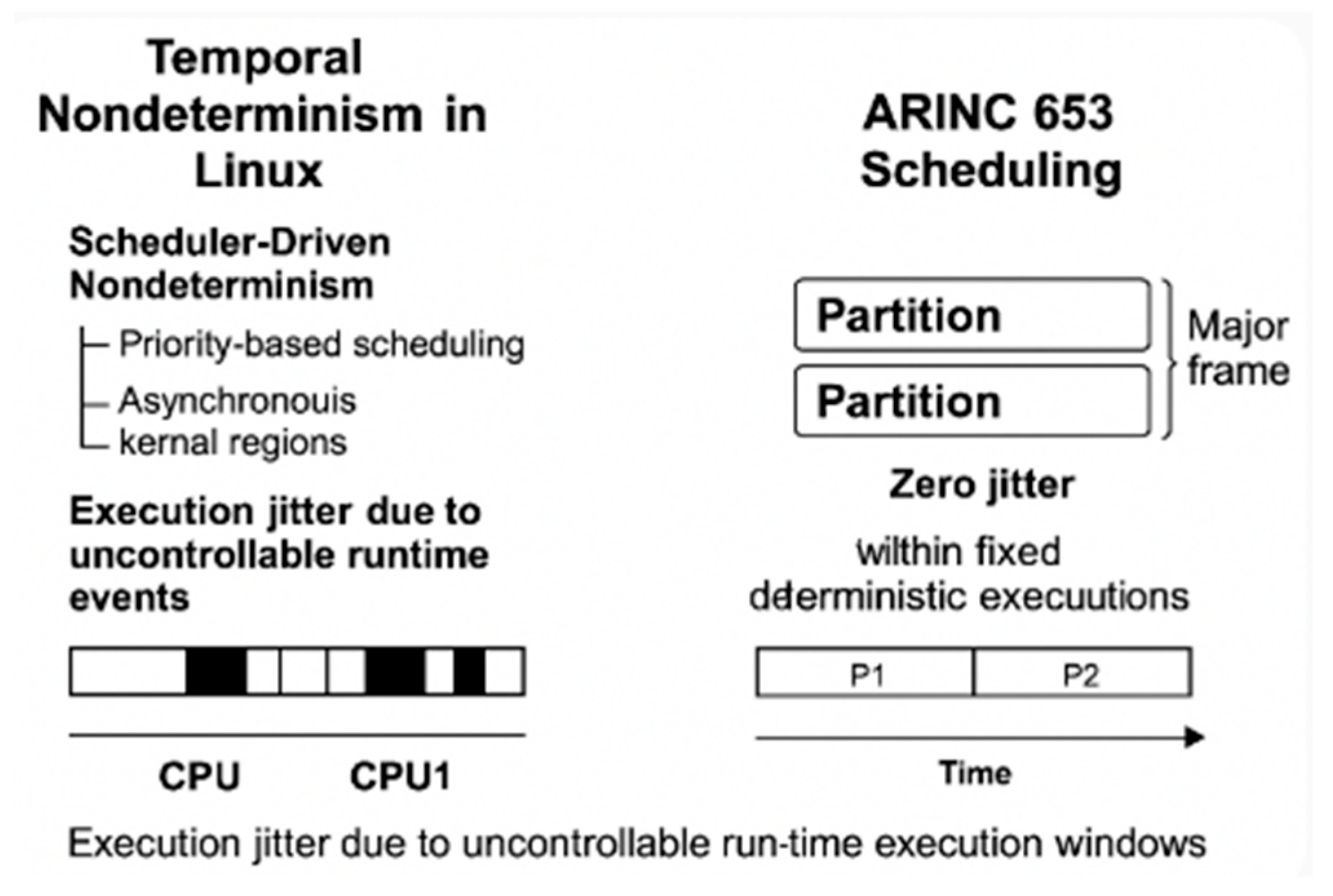

B. Lack of Temporal Determinism

- spinlock-protected critical sections,

- per-CPU data updates,

- scheduler state transitions, and

- low-level exception-handling paths.

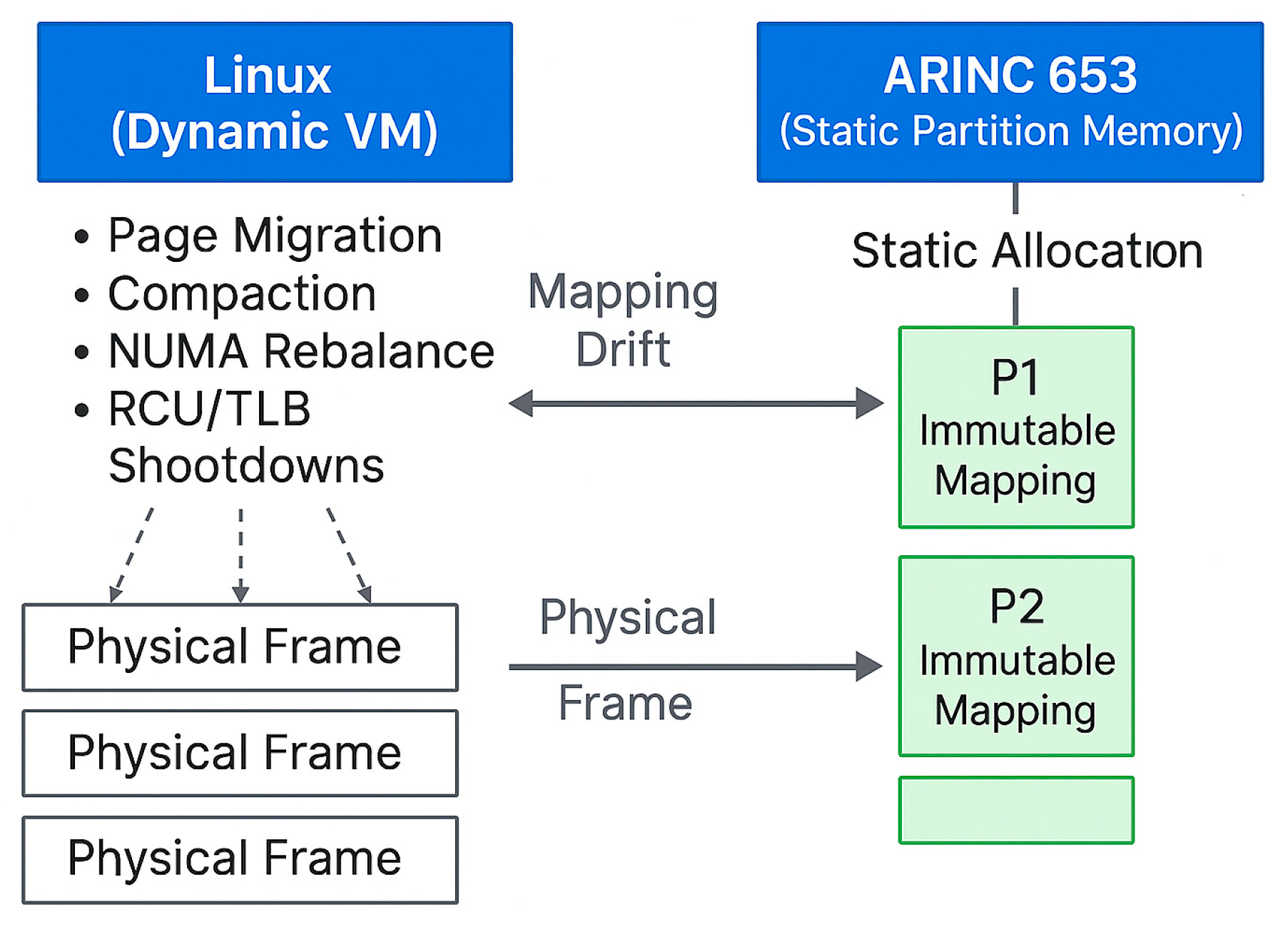

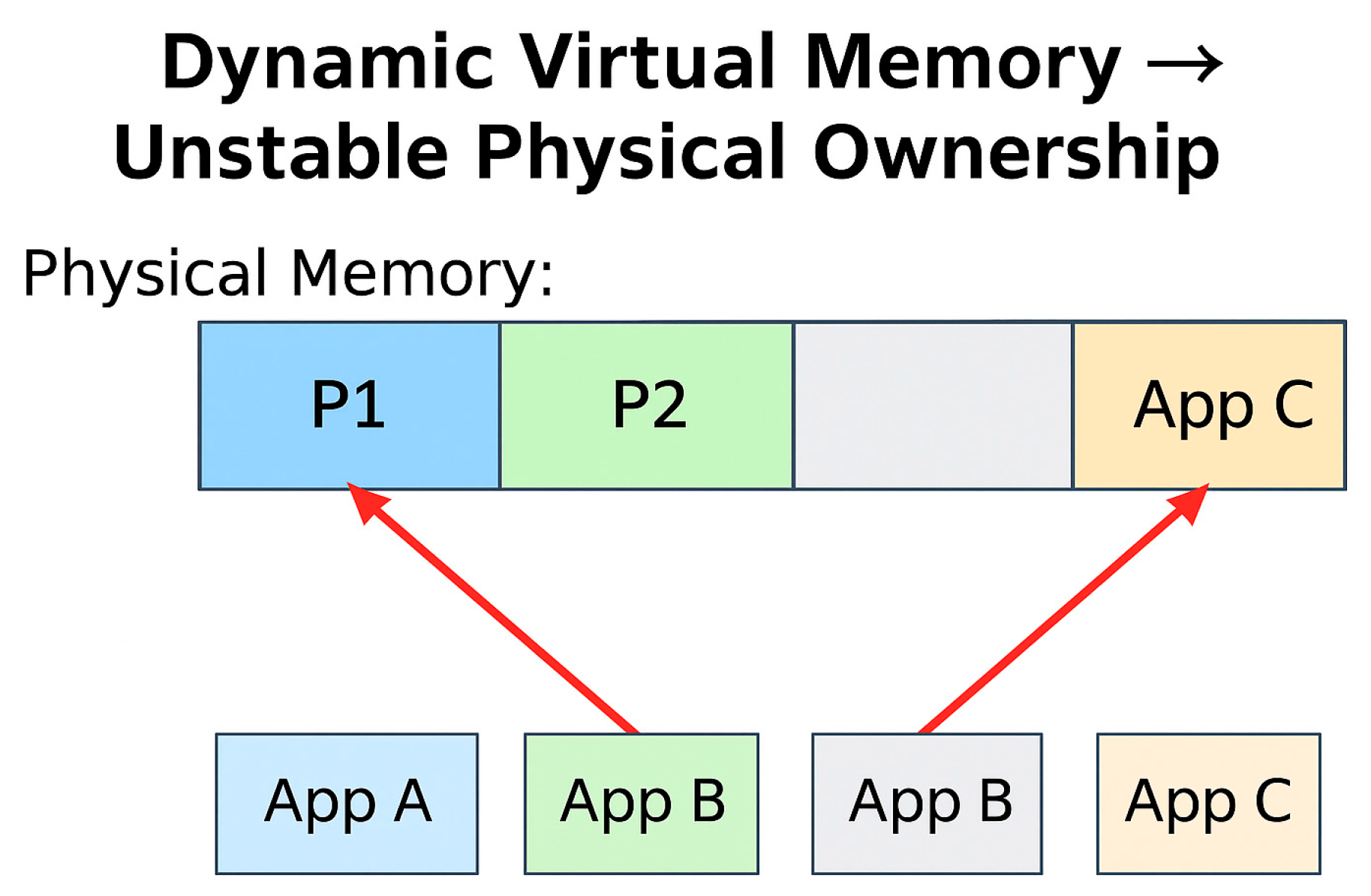

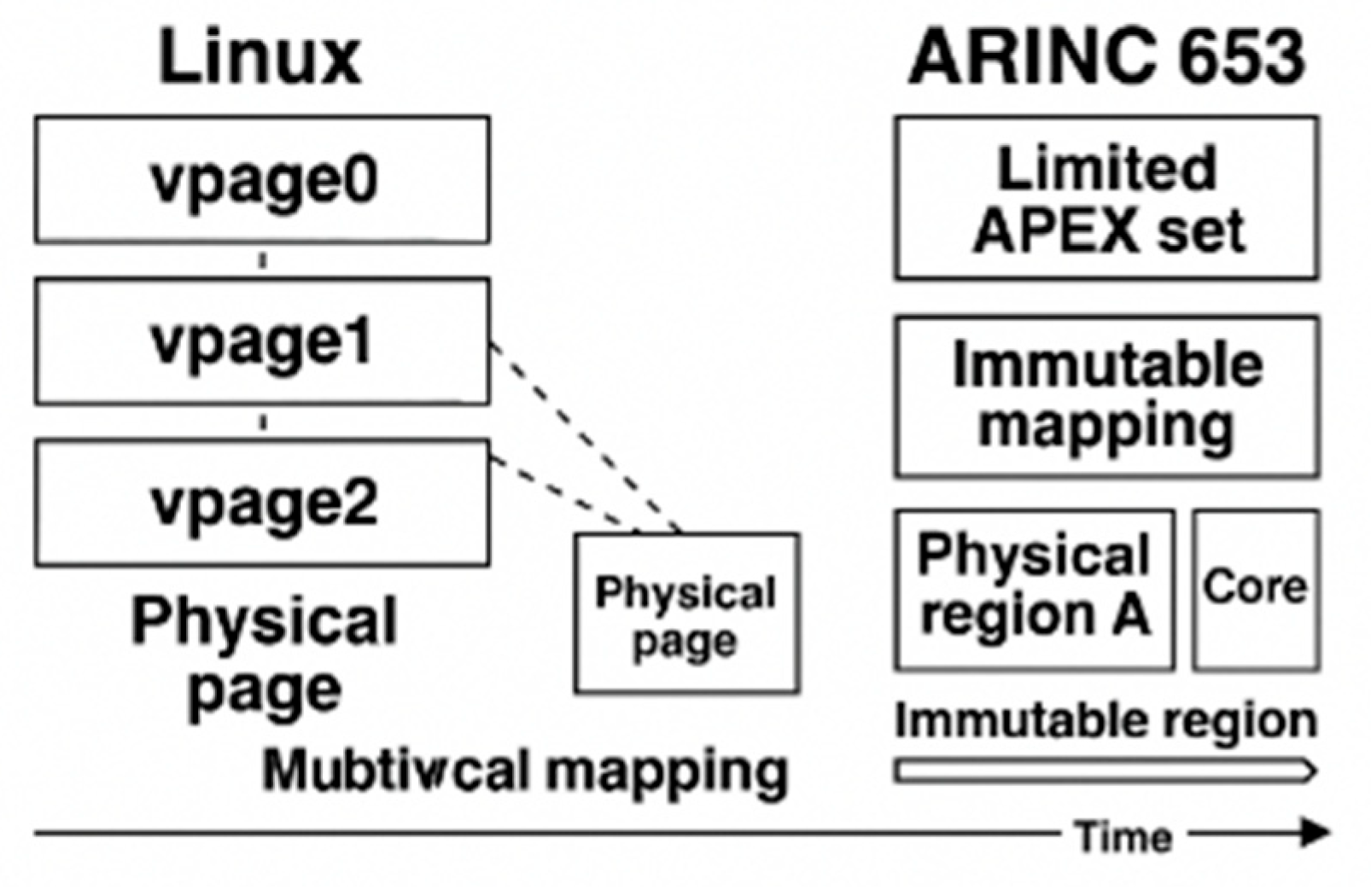

C. No Physical Memory Isolation

- Demand paging and on-demand allocation. Page tables are populated lazily, and physical pages may be allocated, remapped, or reclaimed during runtime based on memory pressure and process behavior.

- Page reclaim and compaction. Under memory pressure, the kernel evicts or relocates physical pages, invoking reclaim, compaction, or write-back paths that modify page-table mappings without application involvement.

- Dynamic page-table updates and TLB shootdowns. Linux frequently updates page attributes, permission bits, and mapping structures, triggering cross-CPU TLB invalidations and modifying the effective physical-memory layout during operation.

- Shared kernel-memory structures. The kernel’s slab allocators, per-CPU buffers, and driver subsystems allocate and free kernel memory dynamically; these regions are globally shared and not partition-scoped.
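The lazy population described in the first bullet can be observed from user space. The following is a minimal sketch, assuming a Linux host and CPython; the function names and the buffer size are illustrative and do not come from the paper. It maps an anonymous buffer and samples the process's minor-fault counter before and after the first write to each page.

```python
import mmap
import resource

PAGE = mmap.PAGESIZE


def minor_faults():
    """Minor (no-I/O) page faults taken so far by this process."""
    return resource.getrusage(resource.RUSAGE_SELF).ru_minflt


def faults_around_first_touch(n_pages=1024):
    """Map an anonymous buffer, then write one byte into every page.

    Returns the minor-fault counter sampled before and after the writes.
    n_pages is a hypothetical size chosen only for illustration.
    """
    # Creating the mapping reserves address space; physical frames are
    # typically supplied later, when a page is first touched.
    buf = mmap.mmap(-1, n_pages * PAGE)
    before = minor_faults()
    for offset in range(0, n_pages * PAGE, PAGE):
        buf[offset] = 1  # first write to this page
    after = minor_faults()
    buf.close()
    return before, after


if __name__ == "__main__":
    before, after = faults_around_first_touch()
    print("minor faults during first touch:", after - before)
```

On a typical Linux system the difference is expected to grow with `n_pages`, although huge pages, sandboxed kernels, or other configuration can change the count. The point is only that the kernel, not the application, decides when physical frames are attached.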

D. Driver Contamination of Kernel Global State

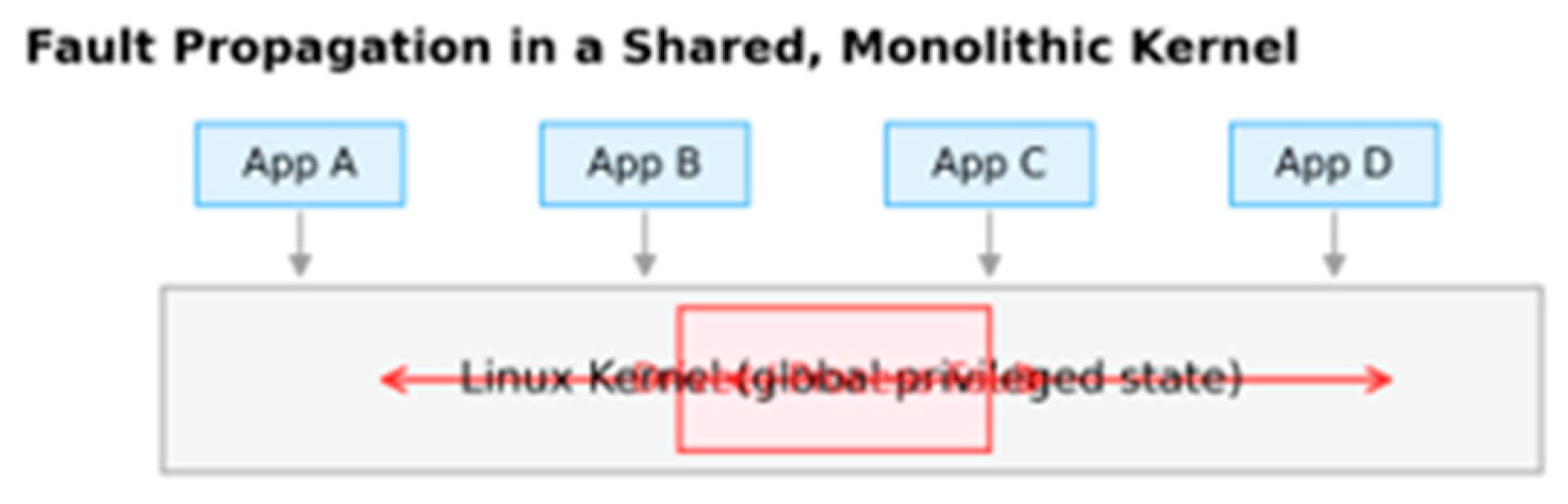

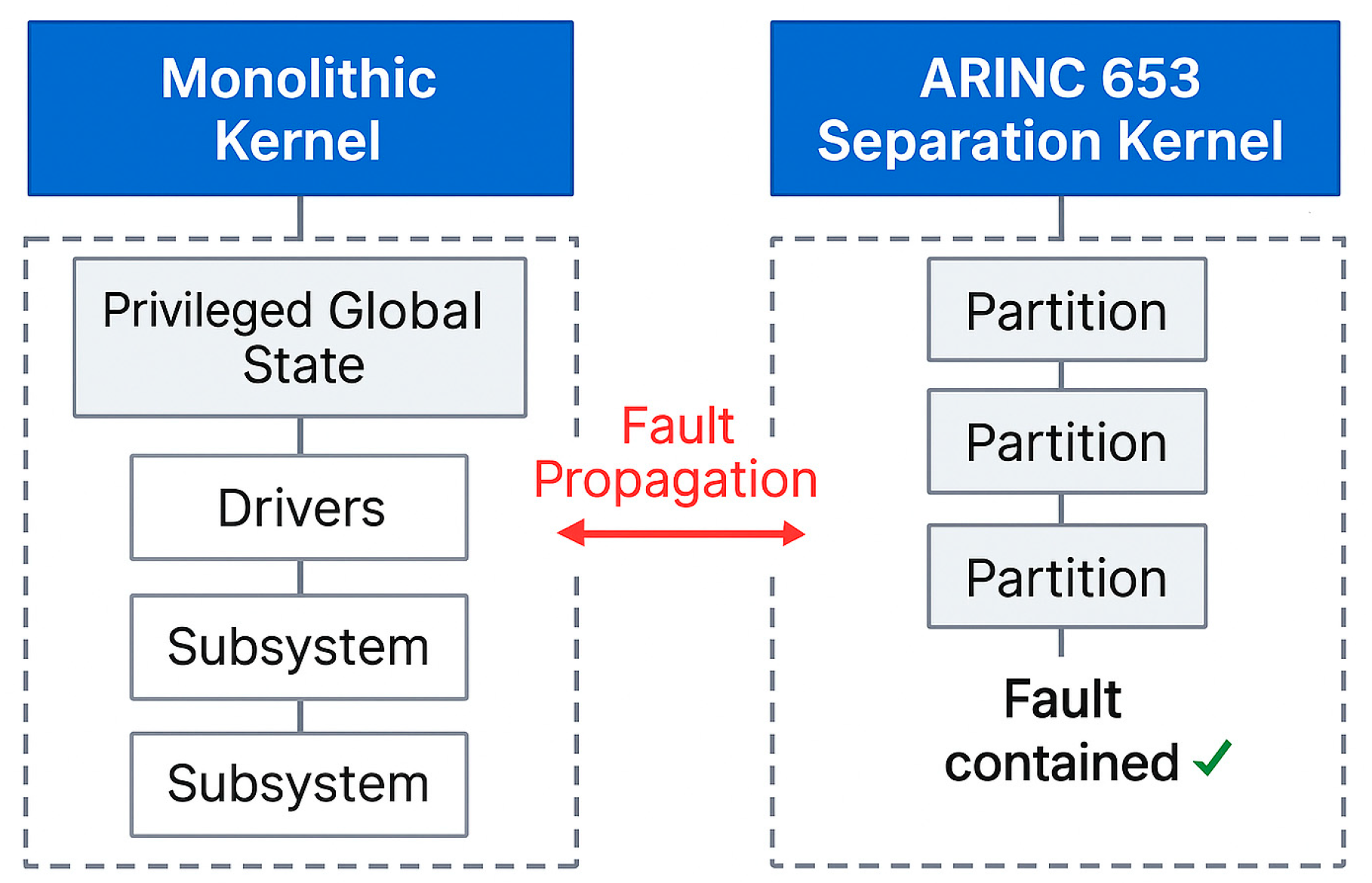

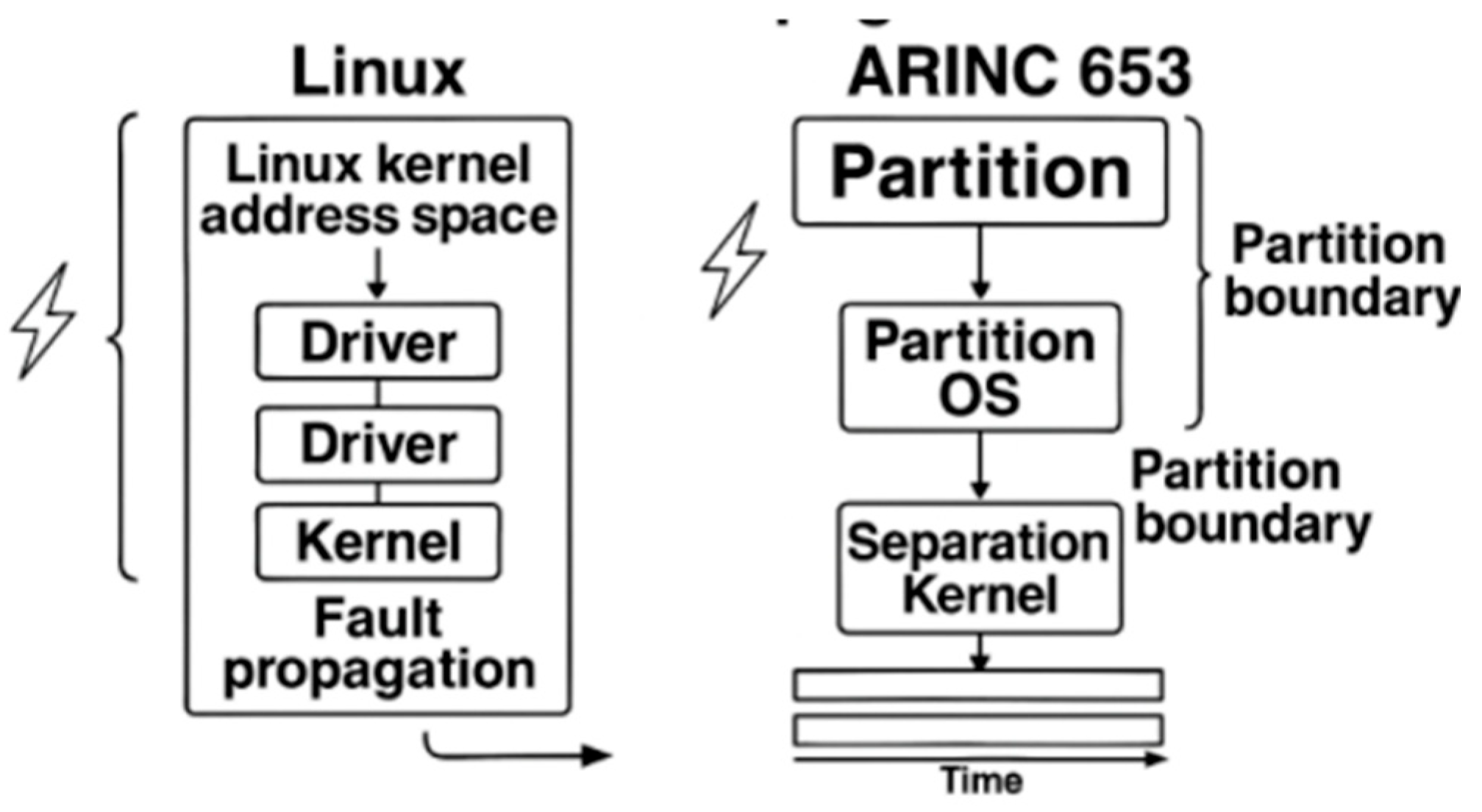

E. Lack of Fault Isolation

- shared kernel memory regions used by subsystems such as the scheduler, VFS, networking, and memory management;

- global locks and synchronization primitives that serialize access across unrelated components;

- reference counters and object life-cycle structures (e.g., file descriptors, network buffers, slab objects);

- interrupt-handling and softirq pathways, whose execution contexts are shared across all processes regardless of their criticality.

- hardware Memory Management Unit (MMU) isolation with statically defined, non-overlapping physical memory regions;

- a minimal, rigorously verified separation kernel responsible only for scheduling partitions and mediating controlled IPC;

- complete disallowance of shared kernel-writable global state between partitions;

- fault-containment boundaries that ensure a failure inside one partition cannot corrupt the separation kernel or any other partition.
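The first requirement in this list, statically defined and non-overlapping physical memory regions, lends itself to an offline configuration check. The sketch below is an assumption-laden illustration: the `Region` type, the partition names, and the addresses are hypothetical and are not taken from any real ARINC 653 configuration format.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Region:
    """One statically assigned physical memory range (hypothetical format)."""
    partition: str
    base: int   # first physical address of the range
    size: int   # length in bytes

    @property
    def end(self):
        # Exclusive end address of the range.
        return self.base + self.size


def find_overlaps(regions):
    """Return every pair of partitions whose physical ranges intersect."""
    return [
        (a.partition, b.partition)
        for a, b in combinations(regions, 2)
        if a.base < b.end and b.base < a.end
    ]


# Illustrative partition map; names and addresses are made up.
PARTITION_MAP = [
    Region("FMS", 0x8000_0000, 0x0010_0000),
    Region("DISPLAY", 0x8010_0000, 0x0010_0000),
    Region("MAINT", 0x8020_0000, 0x0008_0000),
]

if __name__ == "__main__":
    print(find_overlaps(PARTITION_MAP))
```

A check of this kind is only meaningful when the map is fixed at integration time and enforced by the MMU. It has no counterpart in a system whose physical layout changes at run time.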

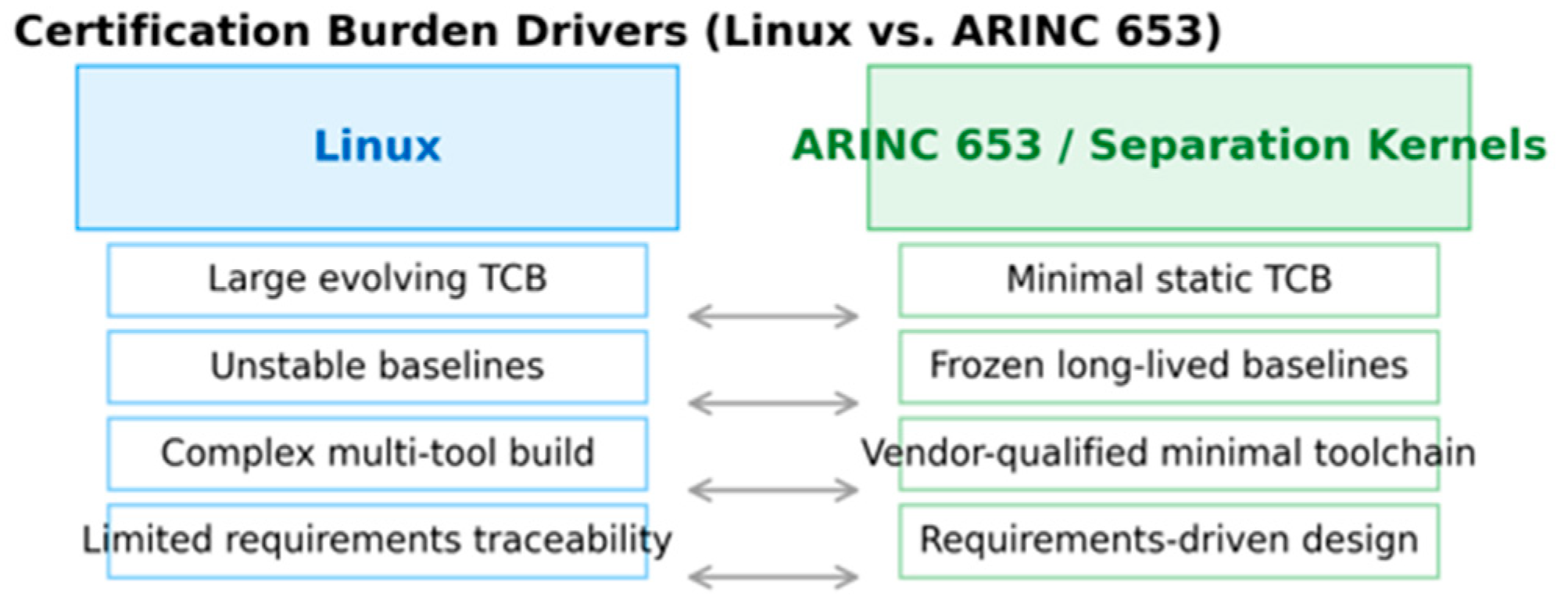

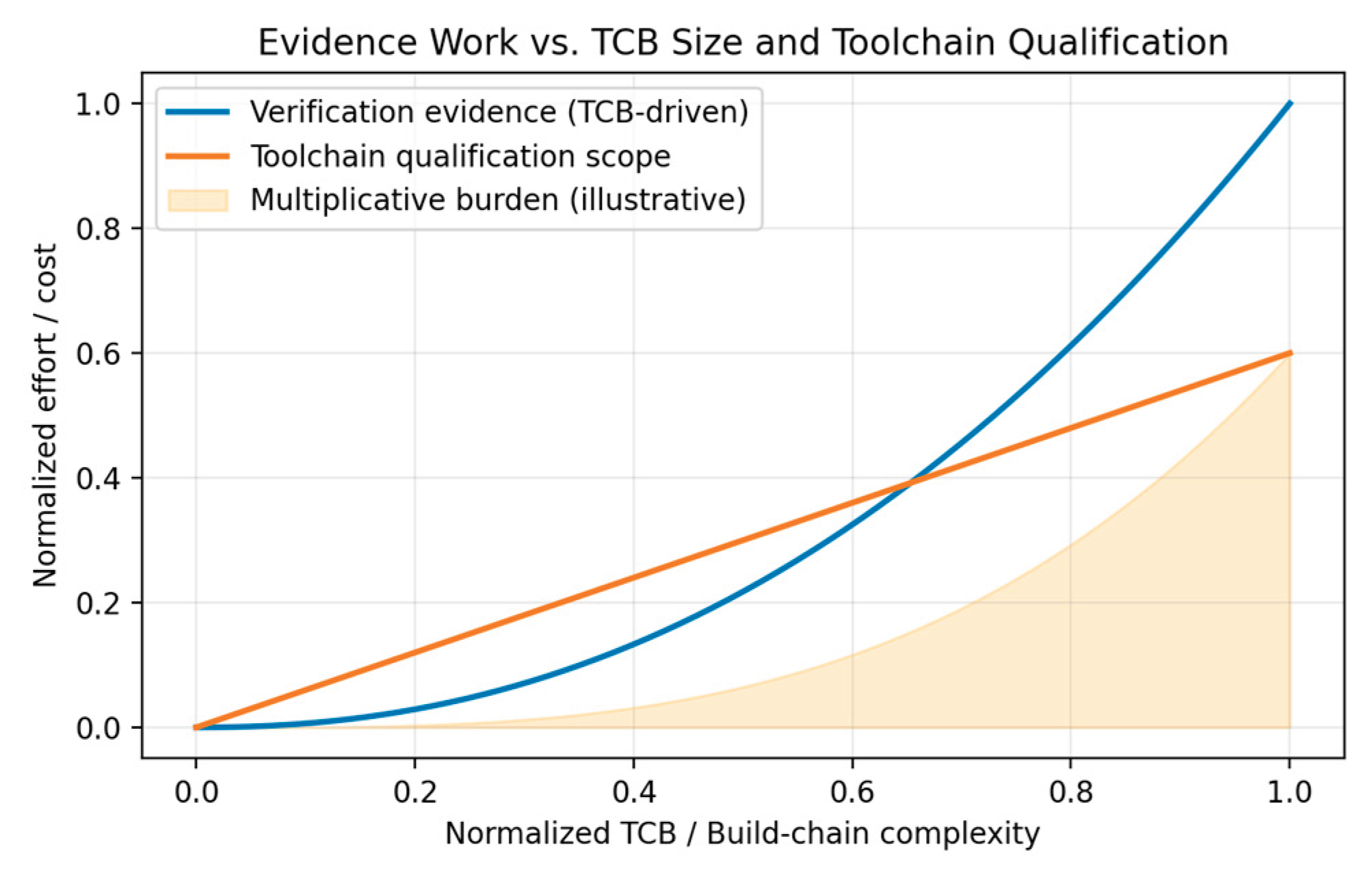

F. Overly Large Trusted Computing Base (TCB)

- Declaring all kernel subsystems, all bundled drivers, and all architecture-specific code paths as part of the certifiable airborne software item.

- Producing planning artifacts (PSAC, SDP, SVP, SCMP, SQAP) that must describe how each subsystem achieves determinism, verifiability, testability, and configuration stability.

- Defining an assurance strategy for kernel-wide concurrency, memory sharing, interrupt handling, scheduling, dynamic allocation, and toolchain behaviors.

- The kernel contains vast quantities of implementation-driven code, developed incrementally without DAL-style requirements decomposition.

- Core subsystems (scheduler, MM, VFS, network stack, timers, softirq) lack formalized HLR/LLR specifications, making traceability impossible.

- Architectural behavior depends on dynamic global state, hardware-dependent heuristics, and runtime conditions, violating DO-178C expectations of predictable and reviewable design behavior.

- Many kernel paths have implicit behavior (e.g., locking, RCU semantics, memory reclaim conditions) that cannot be fully captured in DAL-style requirements.

- Structural coverage analysis up to MC/DC at the source level

- Verification of all exception paths, error handlers, corner cases, and architecture-specific branches

- Robustness testing against abnormal inputs and worst-case conditions

- Review of all interfaces, data flows, and shared states

Linux’s scale makes these obligations infeasible:

- The kernel’s millions of lines of code require astronomical verification effort.

- Many kernel paths depend on hardware behavior, timing, interrupts, speculative execution, and concurrency, making complete test coverage impossible.

- Dynamic subsystems (e.g., memory reclaim, workqueues, softirq, RCU) make it impractical to achieve deterministic coverage closure, because behavior varies with load, timing, and configuration.

- MC/DC on the kernel would require analyzing tens of thousands of complex decision points, many interacting across subsystems.

- Every version, file, requirement, and tool must be baselined and traceable.

- Any change requires impact analysis, regression evidence, and re-verification for affected DAL objectives.

Linux’s characteristics conflict sharply with these requirements:

- The kernel evolves at a rapid pace with constant patch churn across all subsystems.

- Security fixes, driver updates, and architectural changes modify the kernel’s global behavior, invalidating prior baselines.

- Maintaining a stable, frozen Linux baseline contradicts the upstream model and imposes massive regression testing costs.
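To make the MC/DC obligation listed above more concrete, the following sketch enumerates unique-cause independence pairs for a single three-condition decision. It is an illustration under stated assumptions: the decision `a and (b or c)` and the helper names are hypothetical, and real structural coverage analysis relies on dedicated tooling rather than exhaustive enumeration.

```python
from itertools import product


def independence_pairs(decision, n_conditions):
    """Unique-cause MC/DC: for each condition, find pairs of input vectors
    that differ only in that condition and produce different outcomes."""
    pairs = {i: [] for i in range(n_conditions)}
    for vec in product([False, True], repeat=n_conditions):
        for i in range(n_conditions):
            if vec[i]:
                continue  # visit each pair once, starting from the False side
            flipped = vec[:i] + (True,) + vec[i + 1:]
            if decision(*vec) != decision(*flipped):
                pairs[i].append((vec, flipped))
    return pairs


def example_decision(a, b, c):
    # Hypothetical guard, similar in shape to many small kernel conditionals.
    return a and (b or c)


if __name__ == "__main__":
    for index, found in independence_pairs(example_decision, 3).items():
        print("condition", index, "->", found)
```

Every condition needs at least one such pair to be exercised by the test suite. Repeating this for each decision in a multi-million-line code base, where many conditions depend on timing and on the state of other subsystems, is what drives the verification effort described above.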

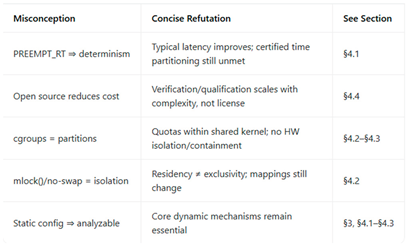

- The Linux toolchain (kbuild, gcc/llvm, binutils, scripts) forms a complex, multi-tool configuration that must itself be DO-330 qualified for use in DAL A/B—effectively infeasible.

- Its development is distributed across thousands of contributors with no DAL-style independence.

- No QA organization can audit or ensure compliance of upstream kernel changes.

- The kernel’s enormous TCB size makes independent reviews impractical, as QA must assess the entire lifecycle—from requirements to design to code—for millions of lines and hundreds of contributors.

- The separation kernel is purpose-designed to be extremely small, static, and analyzable.

- Device drivers and applications run outside the certified kernel, in isolated partitions.

- The separation kernel’s TCB is on the order of tens of thousands of lines, not millions, making DO-178C planning, verification, configuration control, and QA processes achievable at DAL A.

G. Continuous Patch Stream Destabilizes Certified Baselines

- locking primitives

- interrupt and softirq threading

- scheduling heuristics

- preemption and concurrency models

H. Complex Toolchain Imposes Prohibitive DO-330 Qualification Burden

V. Common Misunderstandings and Why They Fail

A. Linux + RT Patches Provide Temporal Isolation

B. Open Source Reduces Cost

C. Cgroups Provide Partition-Equivalent Isolation

- run-queue state

- wakeup timing

- load balancing

- kthread activity

- interrupt/softirq interference

- interrupts

- softirq execution

- RCU callbacks

- memory reclaim

- kworkers

- driver activity

- is not guaranteed a specific minimum amount of available memory,

- does not receive memory pre-allocated at system initialization, and

- cannot rely on invariant memory availability during execution.

- specific virtual address regions,

- exclusive physical memory ranges, or

- hardware-protected, partition-specific address mappings.

- allocated from any NUMA node,

- reclaimed under pressure,

- compacted or migrated by the kernel,

- remapped due to page-table updates, or

- affected by global MM subsystem activity.

- MMU/MPU protections

- a minimal, static separation kernel

- no shared kernel-writable global state

- no driver execution inside the kernel

- fault containment boundaries

D. Using mlock() and Disabling Swap

- physical page allocation,

- page migration,

- reclaim under memory pressure,

- compaction,

- NUMA balancing,

- page-table updates,

- kernel-side memory sharing.

- reserve a guaranteed amount of memory for an application,

- ensure that locked pages come from a specific physical memory range,

- prevent the kernel from compacting, migrating, or remapping those pages,

- isolate the locked pages from interference caused by other processes,

- protect the physical region with MMU hardware boundaries.

Linux may still:

- migrate mlocked pages during compaction,

- modify their page-table entries,

- trigger TLB shootdowns,

- reclaim adjacent pages and cause cache/TLB side effects,

- allocate kernel memory into nearby physical ranges.
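The limits listed above are visible in the shape of the interface itself. The sketch below, which assumes a Linux or other POSIX host and CPython's `ctypes`, calls the C library's `mlock(addr, len)` on an anonymous buffer; the wrapper name `try_mlock` is hypothetical. The call takes only a virtual address and a length, so there is no argument through which a caller could request a particular physical range or forbid later migration.

```python
import ctypes
import mmap


def try_mlock(n_bytes):
    """Attempt to lock an anonymous buffer into RAM.

    Returns (rc, errno). rc is 0 on success and -1 on failure, for example
    when RLIMIT_MEMLOCK is too small for the request.
    """
    libc = ctypes.CDLL(None, use_errno=True)  # symbols of the running process
    libc.mlock.argtypes = [ctypes.c_void_p, ctypes.c_size_t]
    libc.mlock.restype = ctypes.c_int
    libc.munlock.argtypes = [ctypes.c_void_p, ctypes.c_size_t]
    libc.munlock.restype = ctypes.c_int

    buf = mmap.mmap(-1, n_bytes)
    view = ctypes.c_char.from_buffer(buf)
    addr = ctypes.addressof(view)  # a virtual address; no physical placement is implied

    rc = libc.mlock(addr, n_bytes)
    err = ctypes.get_errno() if rc != 0 else 0
    if rc == 0:
        libc.munlock(addr, n_bytes)

    del view     # release the exported buffer before closing the mapping
    buf.close()
    return rc, err


if __name__ == "__main__":
    print(try_mlock(mmap.PAGESIZE))
```

A successful return says only that the pages are resident. Whether the call succeeds at all depends on process limits and privileges, which is a further source of variability.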

E. Static Configuration Can Make Linux Deterministic

F. Abundant Linux Ecosystem

- the number of tools involved in the build pipeline,

- the number of transformations applied to source artifacts,

- the number of scripts and generators that must be controlled or assessed, and

- the number of potential interactions requiring justification or qualification under DO-330.

VI. Recommendations

- Partitioning Kernels / Type-1 Hypervisors: XtratuM + LithOS, POK, JetOS

- Separation Kernels + User-Space ARINC Services: seL4 (MCS), Muen SK

- Commercial Certifiable Platforms: VxWorks 653, INTEGRITY-178B, LynxOS-178, PikeOS, DeOS

VII. Conclusions

References

- ARINC Industry Activities, ARINC Specification 653P1-3: Avionics Application Software Standard Interface, Part 1. ARINC: Annapolis, MD, USA, 2015.

- ARINC Industry Activities, ARINC Specification 653P3-2: Avionics Application Software Standard Interface, Part 3. ARINC: Annapolis, MD, USA, 2014.

- RTCA Inc. DO-178C: Software Considerations in Airborne Systems and Equipment Certification. RTCA: Washington, DC, USA, 2011.

- EUROCAE, ED-12C: Software Considerations in Airborne Systems and Equipment Certification; EUROCAE: Paris, France, 2011.

- RTCA Inc. DO-297: Integrated Modular Avionics (IMA) Design Guidance and Certification Considerations. RTCA: Washington, DC, USA, 2005.

- RTCA Inc. DO-330: Software Tool Qualification Considerations. RTCA: Washington, DC, USA, 2011.

- He, F.; Xiong, H.; Zhou, X. Overview of key technologies for ARINC 653 partitioned operating systems. Acta Aeronaut. Astronaut. Sin. 2014, 35(7), 1777–1796.

- Huang, R. Research on ARINC 653 partition isolation mechanism for IMA. Ph.D. dissertation, Sch. Electr. Eng., Northwestern Polytech. Univ., Xi’an, China, 2020.

- Lopez, I.; Parra, P.; Urueña, M. XtratuM: a hypervisor for partitioned embedded real-time systems. Proc. 18th Int. Conf. Real-Time Netw. Syst. (RTNS), Paris, France, 2010; pp. 1–6.

- Delange, J.; Pautet, L.; Faucou, S. POK: an ARINC 653 compliant operating system for high-integrity systems. In Reliable Software Technologies – Ada-Europe 2010; Springer: Berlin, Germany, 2010; pp. 172–185.

- Huber, B.; Lackorzynski, A.; Warg, A. seL4: formal verification of a high-assurance microkernel. Commun. ACM 2014, 57(3), 107–115.

- Kuz, I.; Elphinstone, K.; Heiser, G. MCS: temporal isolation in the seL4 microkernel. Proc. 11th Oper. Syst. Platforms Embedded Real-Time Appl. (OSPERT), New York, NY, USA, 2015; IEEE; pp. 1–6.

- Härtig, H.; Lackorzynski, A.; Warg, A. The Muen Separation Kernel: Design and Formal Verification; Tech. Univ. Dresden: Dresden, Germany, 2018.

- Bovet, D.; Cesati, M. Understanding the Linux Kernel; O’Reilly Media: Sebastopol, CA, USA, 2005; pp. 1–986.

- Gleixner, T. PREEMPT_RT Patch Overview and Design Philosophy; Linux Foundation: San Francisco, CA, USA, 2019; Available online: https://wiki.linuxfoundation.org/realtime/start (accessed on 2 March 2026).

- Wind River Systems Inc., VxWorks 653 Platform Datasheet, 2022. Available online: https://www.windriver.com (accessed on 2 March 2026).

- Green Hills Software Inc., INTEGRITY-178B RTOS for Avionics. 2021. Available online: https://www.ghs.com (accessed on 2 March 2026).

- SYSGO AG. PikeOS Safety-Certifiable RTOS and Hypervisor. 2024. Available online: https://www.sysgo.com (accessed on 2 March 2026).

- DDC-I Inc. DeOS Safety-Critical RTOS. 2024. Available online: https://www.ddci.com (accessed on 2 March 2026).

Biography

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.