1. Introduction

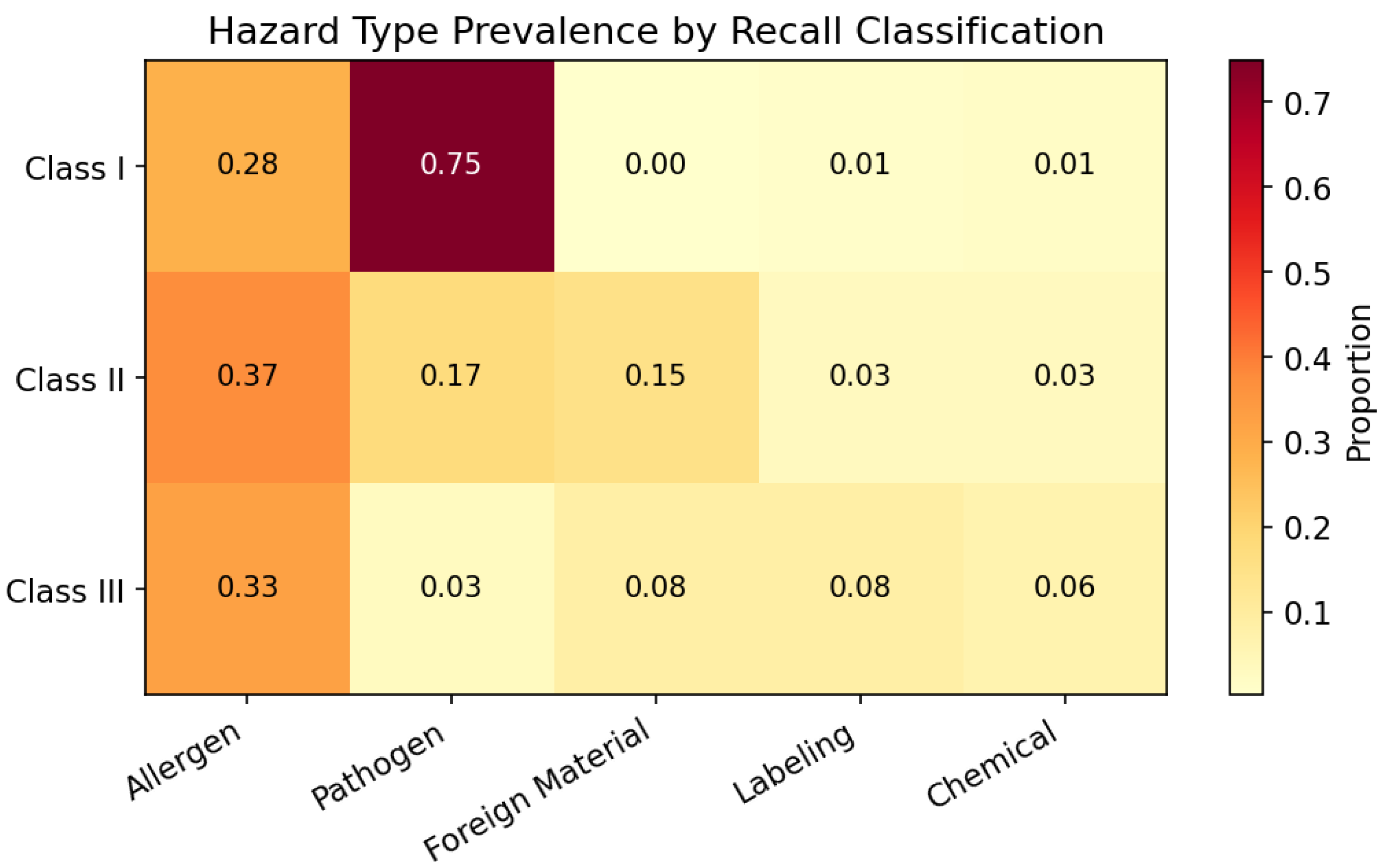

The U.S. Food and Drug Administration (FDA) classifies food recalls into three severity tiers: Class I denotes situations where exposure to a violative product carries a reasonable probability of serious adverse health consequences or death; Class II covers cases where exposure may cause temporary or medically reversible health effects; and Class III applies when the product is unlikely to cause any adverse health consequence [1]. This tiered system is central to regulatory decision-making, guiding resource allocation, public notification urgency, and follow-up monitoring intensity. The classification process currently relies on expert evaluation, which, although thorough, faces growing time pressure as the volume of recall events continues to rise. Between 2012 and 2025, the openFDA Food Enforcement database accumulated over 28,000 recall records spanning a diverse array of product categories, hazard types, and reporting firms [2]. The public health stakes are considerable: the most recent U.S. estimates attribute approximately 9.4 million illnesses, 55,961 hospitalisations, and 1,351 deaths annually to major foodborne pathogens alone [3], imposing an economic burden exceeding $75 billion per year [4]. At the firm level, severe recall events can erode over $100 million in shareholder value within days [5,6], with Class I events in the low-moisture food sector alone associated with median market capitalisation losses of $243 million [7]. Moreover, the economic disruption propagates along the entire supply chain [8], underscoring the need for accurate, automated severity triage. The FDA itself has recognised this imperative in its New Era of Smarter Food Safety blueprint, which identifies predictive analytics as a core element of modernised food safety [9]. This strategic direction builds on the foundation laid by the Food Safety Modernization Act (FSMA) [10], which shifted the U.S. regulatory paradigm from reactive response to preventive control. FSMA grants the FDA mandatory recall authority and requires firms to maintain hazard analysis and preventive control plans, creating a regulatory environment in which predictive severity triage tools could directly support compliance and enforcement workflows.

Machine learning (ML) has emerged as a promising tool for automating risk assessment in food safety [11,12]. A growing body of literature has demonstrated the potential of supervised classifiers on the European Rapid Alert System for Food and Feed (RASFF) database, achieving accuracies ranging from 74% to 97.8% across various formulations [13,14,15]. Natural language processing (NLP) techniques have further expanded the feature space available for food-safety models, with recent work applying Transformer-based architectures to hazard detection tasks [16]. Importantly, recent large-scale empirical studies have demonstrated that gradient-boosted tree models remain competitive with or superior to deep learning on medium-scale tabular data [17,18], providing a strong empirical basis for the model family employed in this study.

Despite this progress, almost all existing studies target the EU RASFF database or other non-FDA sources. To date, no peer-reviewed study has attempted systematic Class I/II/III severity prediction on openFDA food recall data. Two tangentially related works exist: an unpublished preprint that analyses openFDA recall patterns but predicts termination status rather than severity [19], and a study of FDA drug (not food) recalls limited to 235 records [20]. This leaves a significant gap in the literature for a rigorous ML benchmark on the largest publicly available food recall database in the United States.

Beyond the absence of FDA-specific benchmarks, a more fundamental concern pervades the food-safety ML literature: evaluation methodology. The prevailing practice is to split data randomly into training and test sets, implicitly assuming that test-time instances are drawn from the same distribution as training data. This assumption is violated when the same reporting entity (e.g., a food manufacturer) contributes multiple records, because recalls from the same firm share product types, hazard profiles, and distribution patterns. Recent econometric work has formally demonstrated that naively applying standard cross-validation to panel data (data with repeated observations of the same entities over time) leads to severely inflated out-of-sample performance [21]. Food recall databases, where the same firms contribute multiple records across years, constitute precisely such panel structures. Bouzembrak and Marvin [22] provided early evidence of this issue in their Bayesian network study on RASFF food fraud, observing that accuracy dropped from 80% on previously seen country–product combinations to 52% on unseen ones. However, their observation remained incidental rather than systematic.
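The difference between a record-level random split and an entity-aware split can be made concrete with a minimal sketch. It is an illustration under stated assumptions, not the pipeline used in this study: the record keys `firm` and `label`, the toy data, and the helper names `random_split`, `group_split`, and `shared_firms` are all hypothetical.

```python
import random


def random_split(records, test_frac=0.2, seed=0):
    """Record-level random split: a firm can land on both sides."""
    rng = random.Random(seed)
    shuffled = records[:]
    rng.shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    return shuffled[n_test:], shuffled[:n_test]


def group_split(records, test_frac=0.2, seed=0):
    """Firm-level split: all records of a firm go to exactly one side."""
    rng = random.Random(seed)
    firms = sorted({r["firm"] for r in records})
    rng.shuffle(firms)
    n_test = max(1, int(len(firms) * test_frac))
    test_firms = set(firms[:n_test])
    train = [r for r in records if r["firm"] not in test_firms]
    test = [r for r in records if r["firm"] in test_firms]
    return train, test


def shared_firms(train, test):
    """Firms that contribute records to both the training and the test set."""
    return {r["firm"] for r in train} & {r["firm"] for r in test}


# Hypothetical toy data: 10 firms with 5 recalls each.
records = [
    {"firm": f"firm_{i}", "label": "Class II"}
    for i in range(10)
    for _ in range(5)
]

r_train, r_test = random_split(records)
g_train, g_test = group_split(records)

print("firms shared under random split:", len(shared_firms(r_train, r_test)))
print("firms shared under group split: ", len(shared_firms(g_train, g_test)))
```

By construction the group split leaves no firm on both sides, whereas the random split generally does. In practice, scikit-learn's `GroupShuffleSplit` and `GroupKFold` offer the same guarantee with cross-validation support.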

In clinical ML, the analogous problem, patient-level data leakage, has received extensive attention. Studies on histopathological image classification [23] and ECG analysis [24] have demonstrated that failing to segregate data by patient identity inflates reported performance substantially. Kapoor and Narayanan [25] further provided a comprehensive taxonomy of leakage scenarios across ML-based science. Yet food-safety research has largely not internalised these lessons: none of the recent high-performing RASFF studies [13,14] report group-aware or temporal evaluation protocols.

This study addresses the above gaps through four objectives. First, we construct the first comprehensive ML benchmark for FDA food recall severity classification, comprising 28,448 records, 1,437 engineered features, five tuned classifiers, and a rule-based baseline. Second, we design a multi-layer leakage audit with four splitting strategies (Random, Group-by-firm, Temporal, Group+Temporal), a firm-mode baseline, and identity-masking experiments to quantify entity-level autocorrelation. Third, we apply a factorial design (firm overlap × time overlap) to decompose the contributions of firm-level memorisation and temporal concept drift. Fourth, based on our findings, we propose concrete evaluation protocol recommendations for food-safety ML research.
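To illustrate what an entity-prior baseline of this kind involves, the sketch below assumes that a firm-mode baseline predicts each firm's most frequent class in the training data and falls back to the overall most frequent class for unseen firms. The definition used in this study may differ in detail; the record keys `firm` and `label` and the function names are hypothetical.

```python
from collections import Counter, defaultdict


def fit_firm_mode(train):
    """Learn each firm's most frequent class plus a global fallback class."""
    per_firm = defaultdict(Counter)
    overall = Counter()
    for r in train:
        per_firm[r["firm"]][r["label"]] += 1
        overall[r["label"]] += 1
    firm_mode = {f: c.most_common(1)[0][0] for f, c in per_firm.items()}
    global_mode = overall.most_common(1)[0][0]
    return firm_mode, global_mode


def predict_firm_mode(model, records):
    """Predict the stored firm mode; unseen firms get the global mode."""
    firm_mode, global_mode = model
    return [firm_mode.get(r["firm"], global_mode) for r in records]


# Hypothetical toy data.
train = [
    {"firm": "A", "label": "Class I"},
    {"firm": "A", "label": "Class I"},
    {"firm": "A", "label": "Class II"},
    {"firm": "B", "label": "Class II"},
    {"firm": "B", "label": "Class II"},
    {"firm": "C", "label": "Class II"},
]
test = [{"firm": "A"}, {"firm": "B"}, {"firm": "Z"}]

model = fit_firm_mode(train)
preds = predict_firm_mode(model, test)
print(preds)  # ['Class I', 'Class II', 'Class II']
```

Because such a baseline uses no product or hazard information, any score it achieves under a random split reflects firm identity alone. Under a group-aware split it reduces to the global-mode prediction.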

The remainder of this paper is organised as follows. Section 2 reviews related work on food-safety ML and data leakage. Section 3 describes the data, feature engineering, models, and evaluation protocols. Section 4 presents results across all evaluation dimensions. Section 5 discusses implications, practical value, and limitations. Section 6 concludes with recommendations.

6. Conclusions

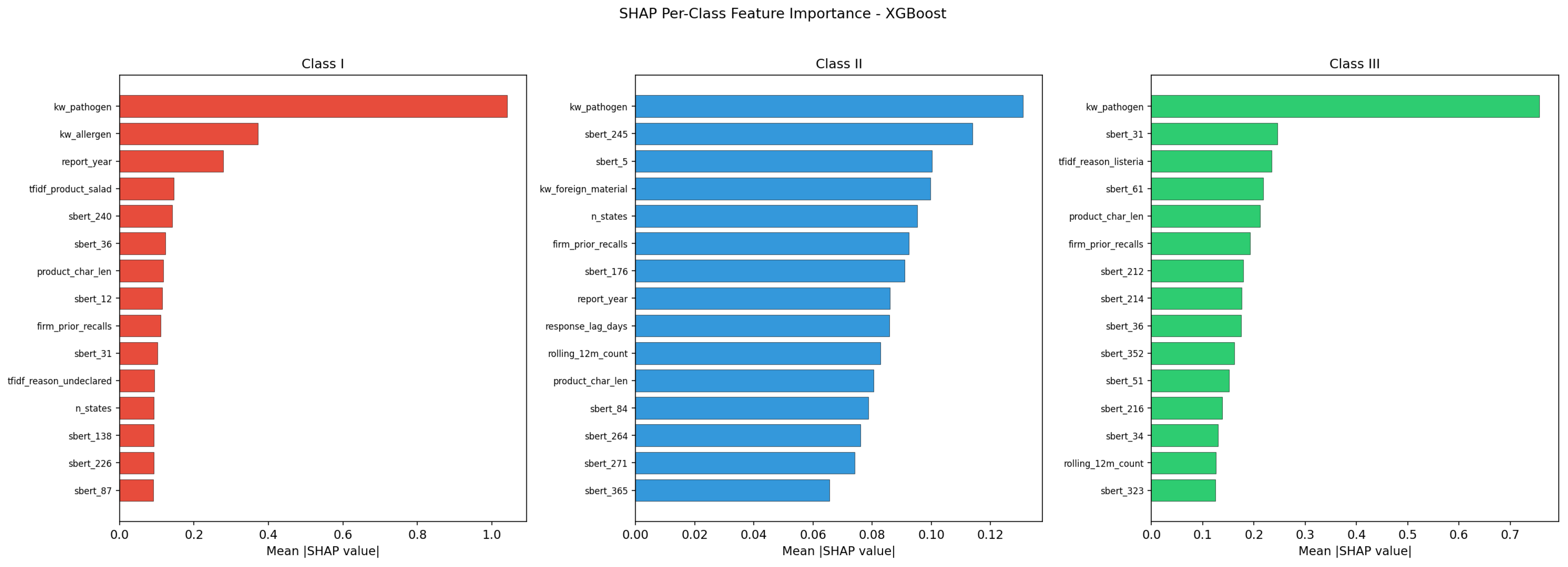

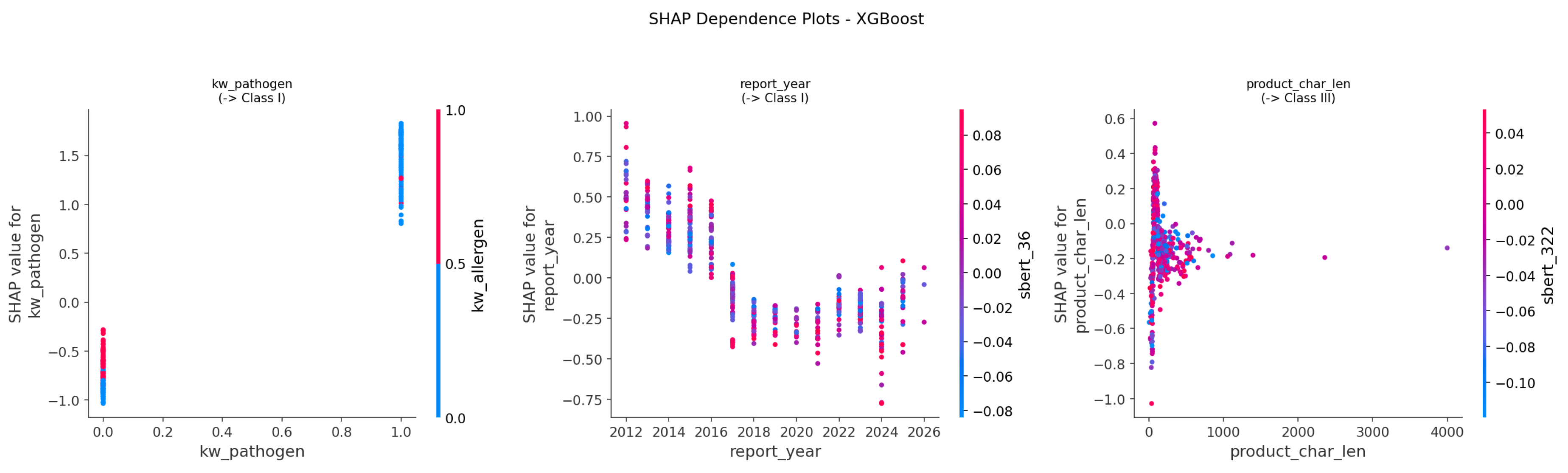

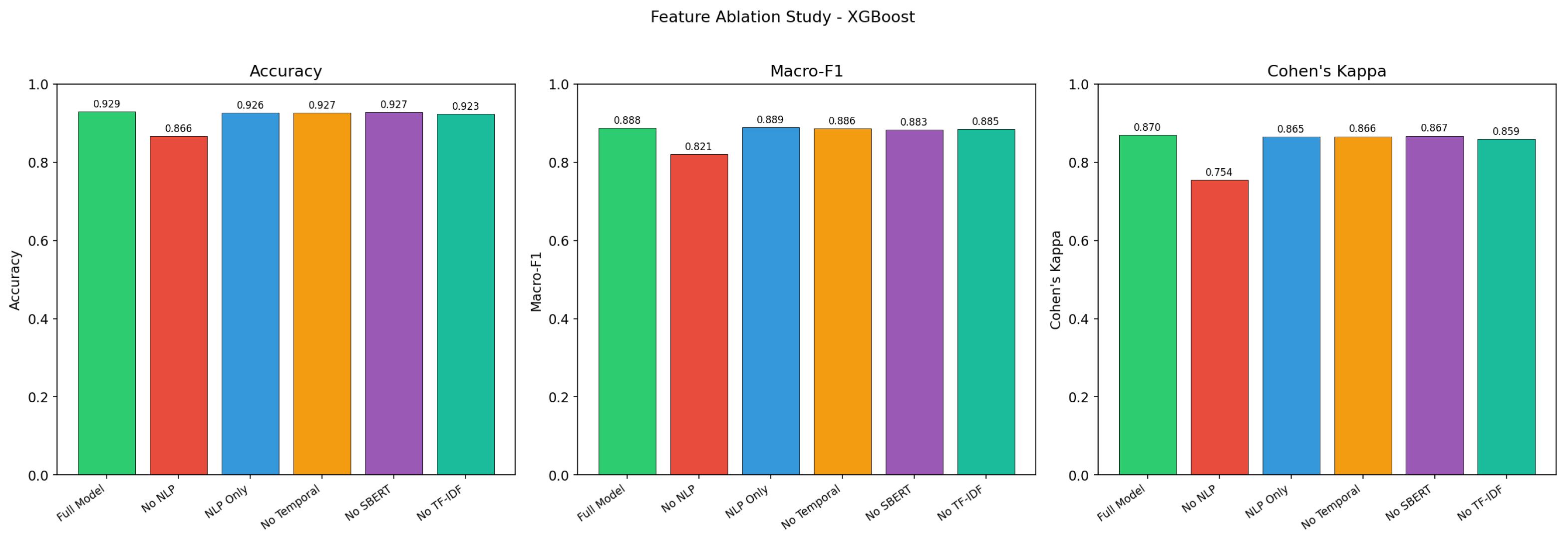

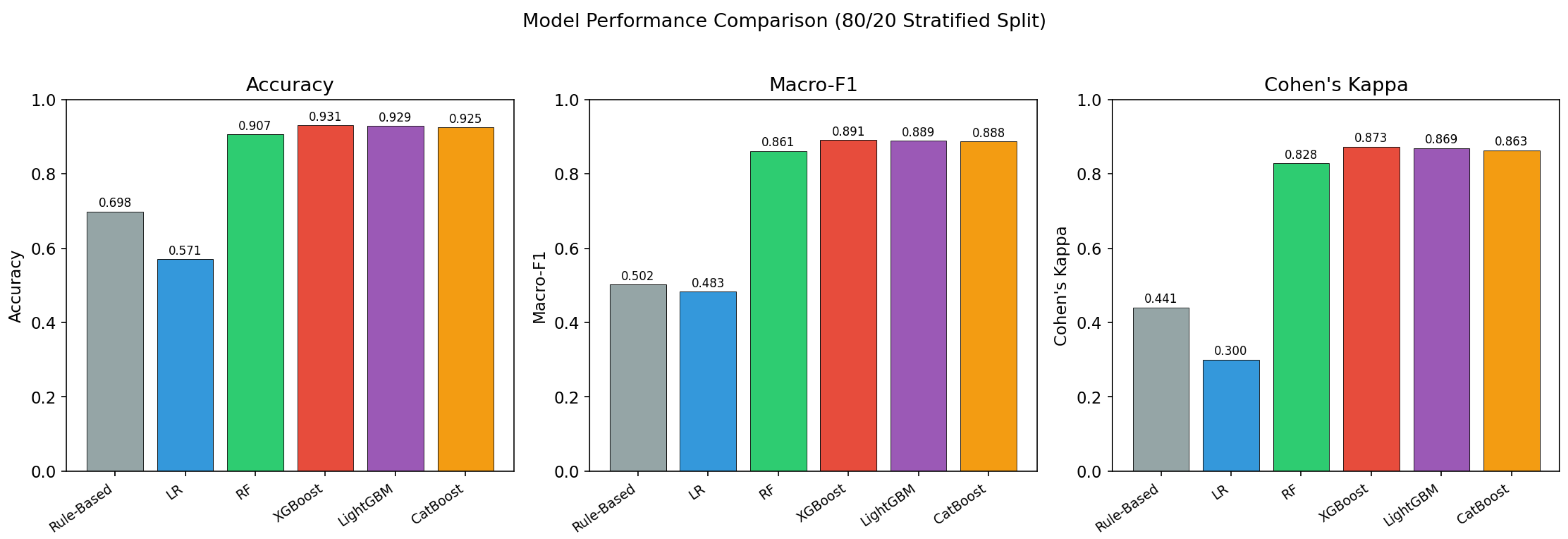

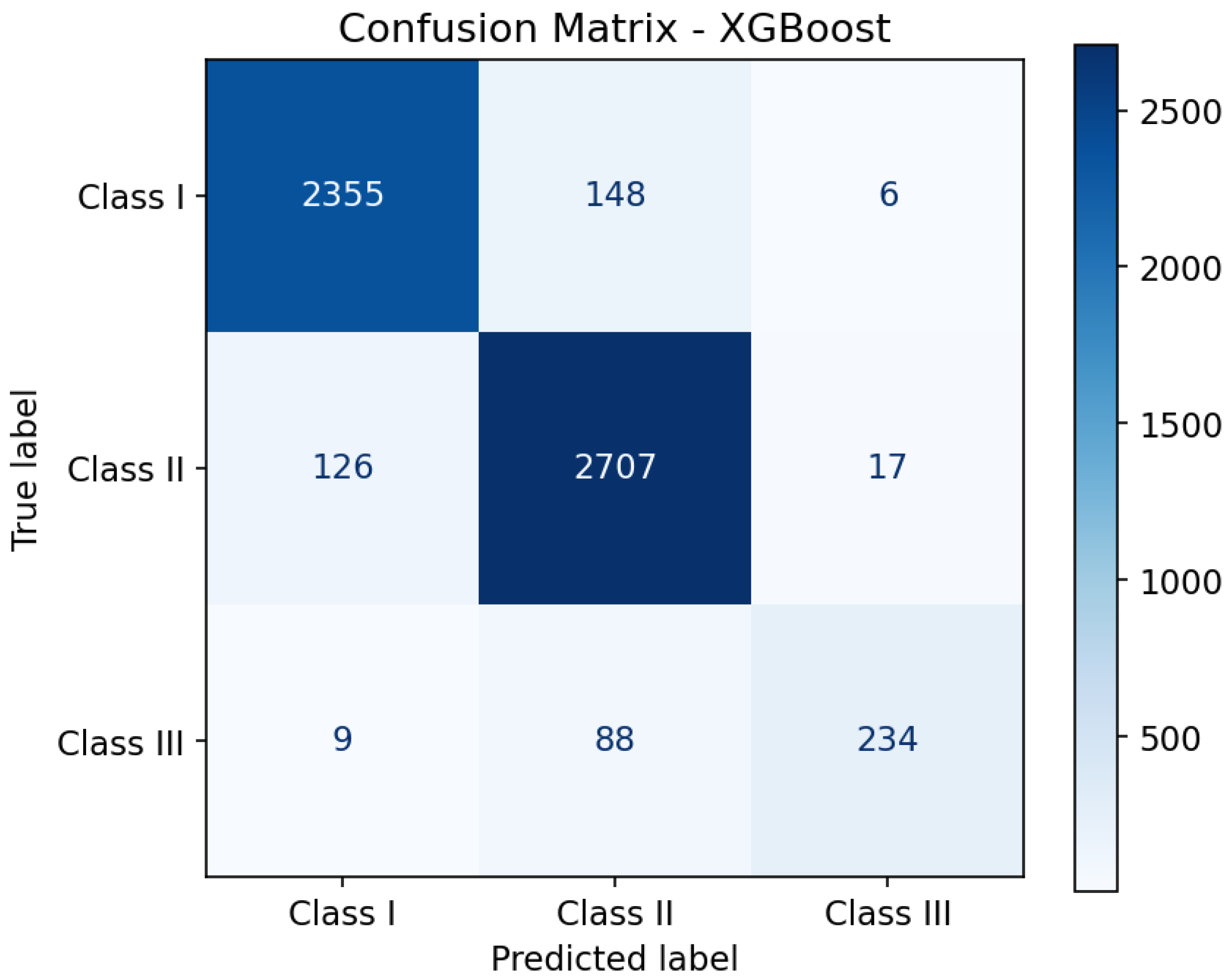

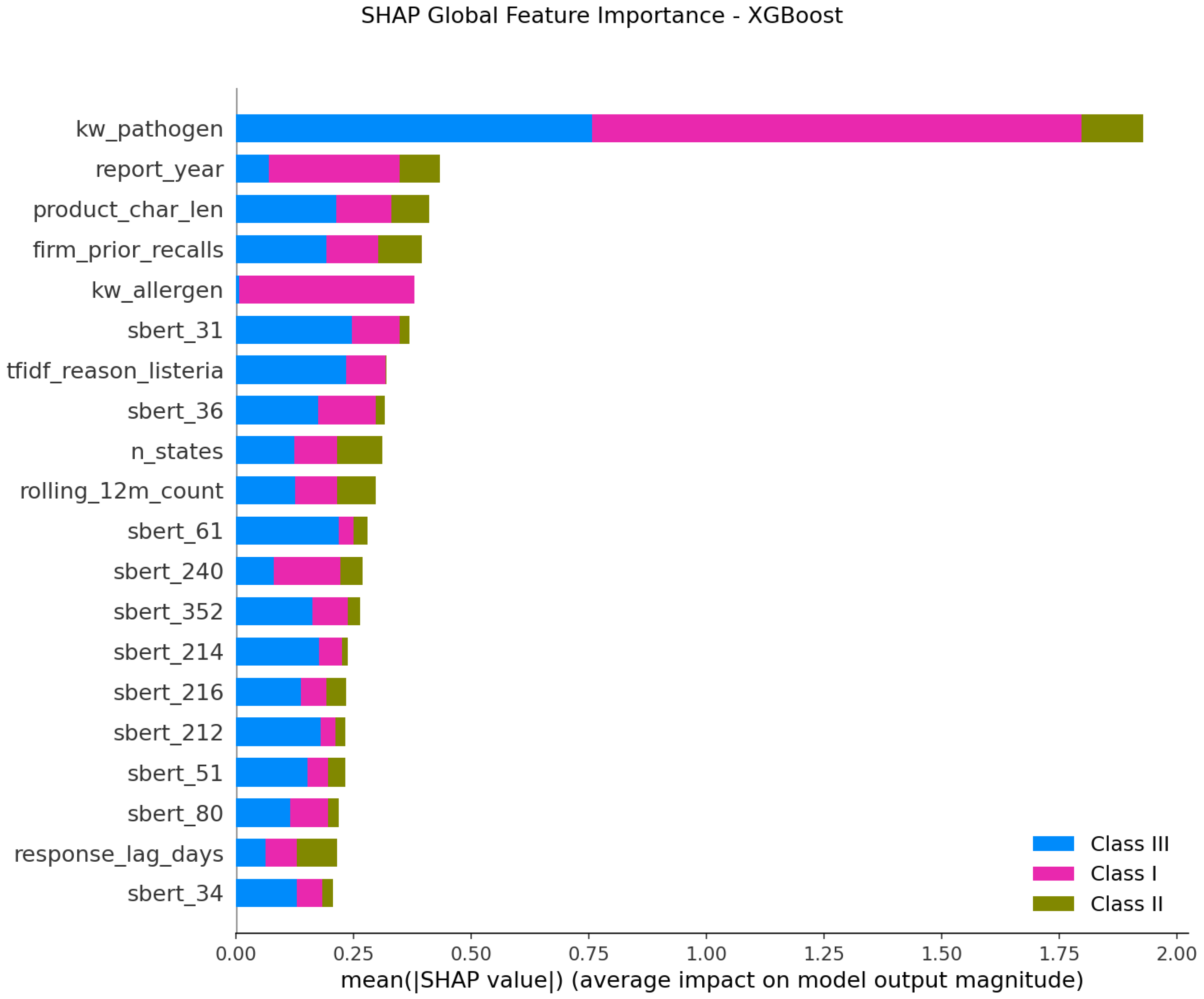

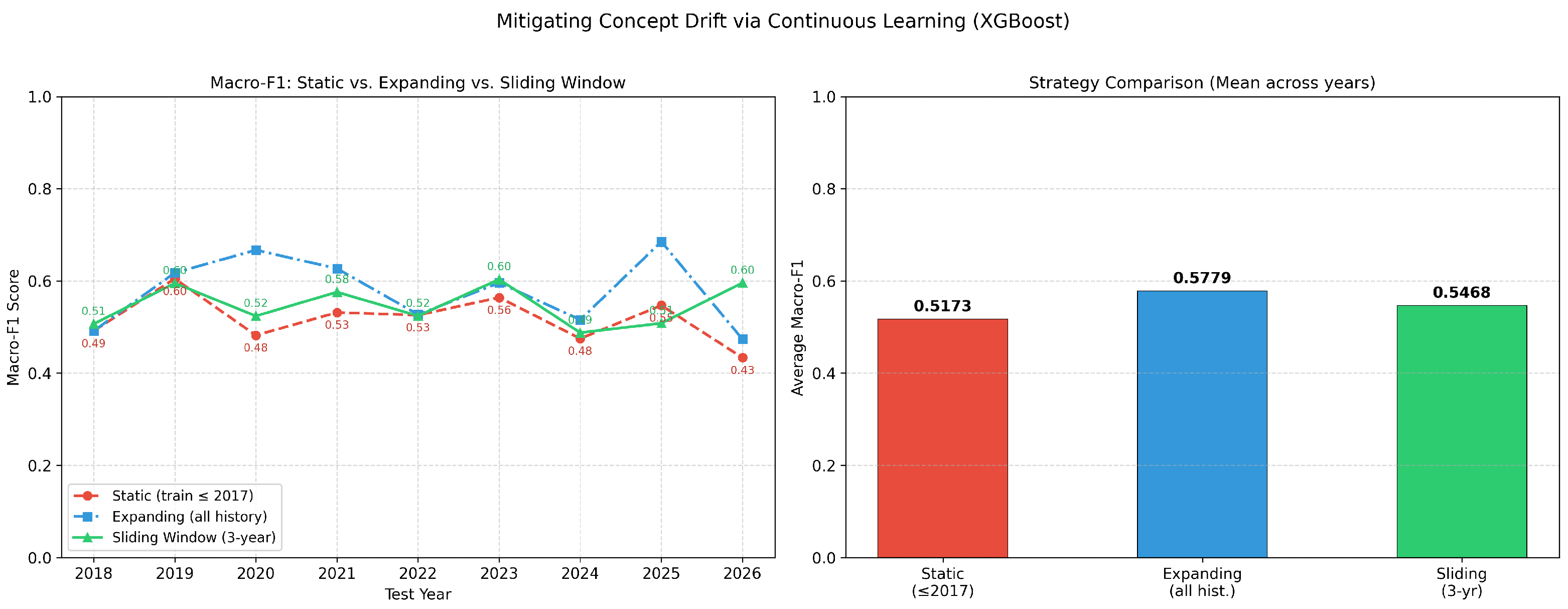

This study constructed the first comprehensive machine learning benchmark for FDA food recall severity classification (Class I/II/III), comprising 28,448 openFDA enforcement records (2012–2025), a 1,437-dimensional feature space combining TF-IDF, Sentence-BERT, structured, and temporal features, and five tuned classifiers evaluated under four distinct splitting protocols.

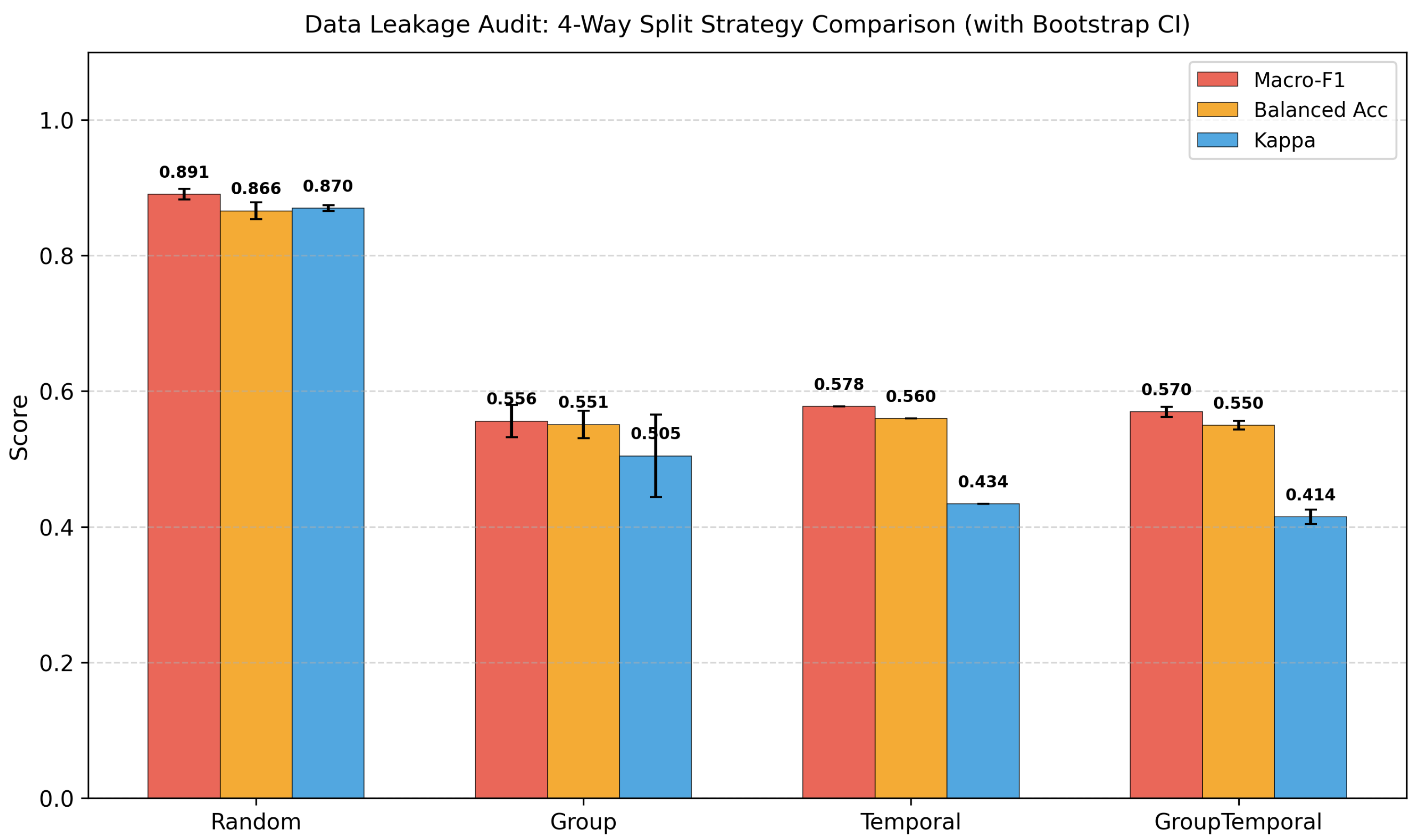

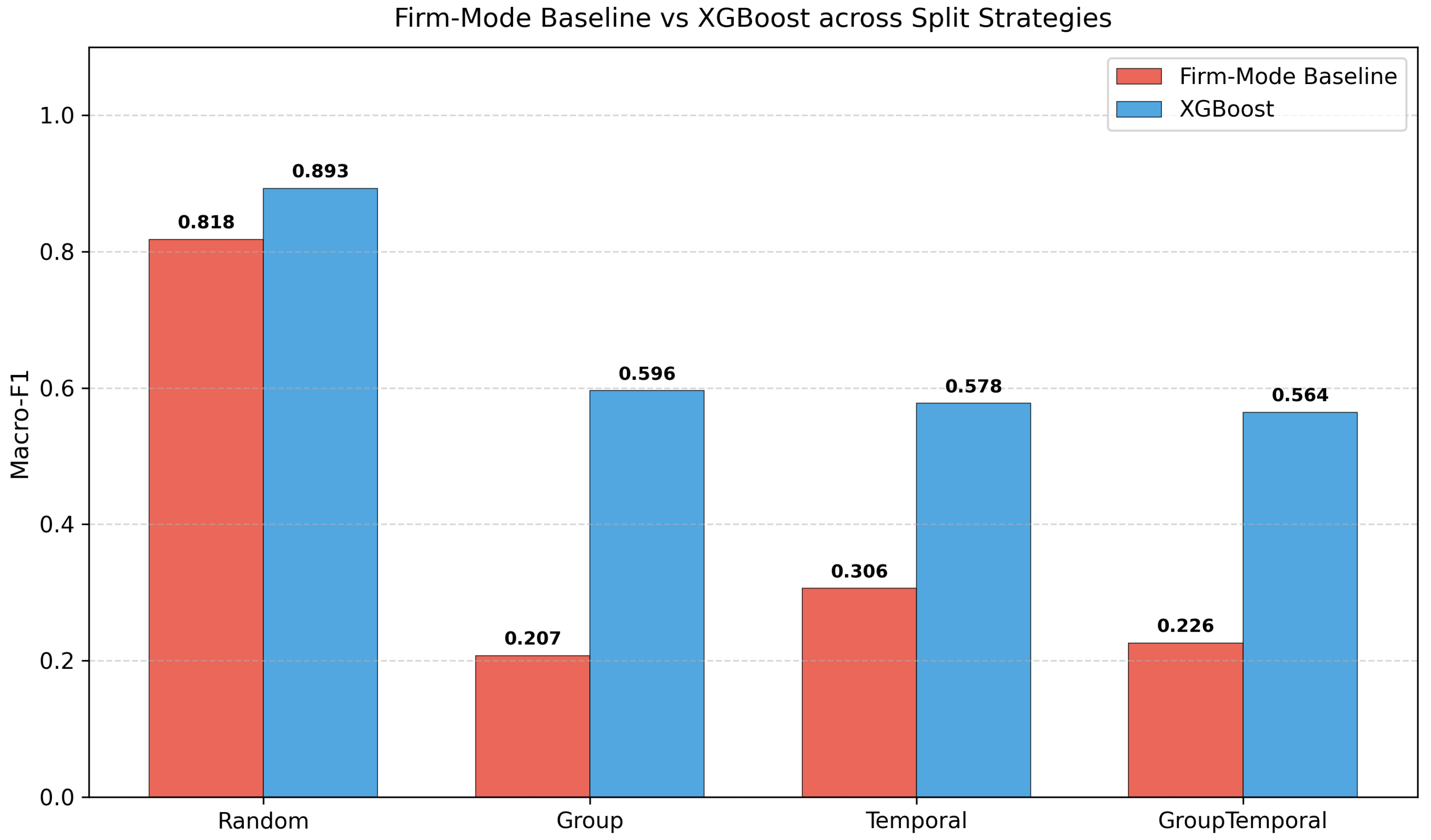

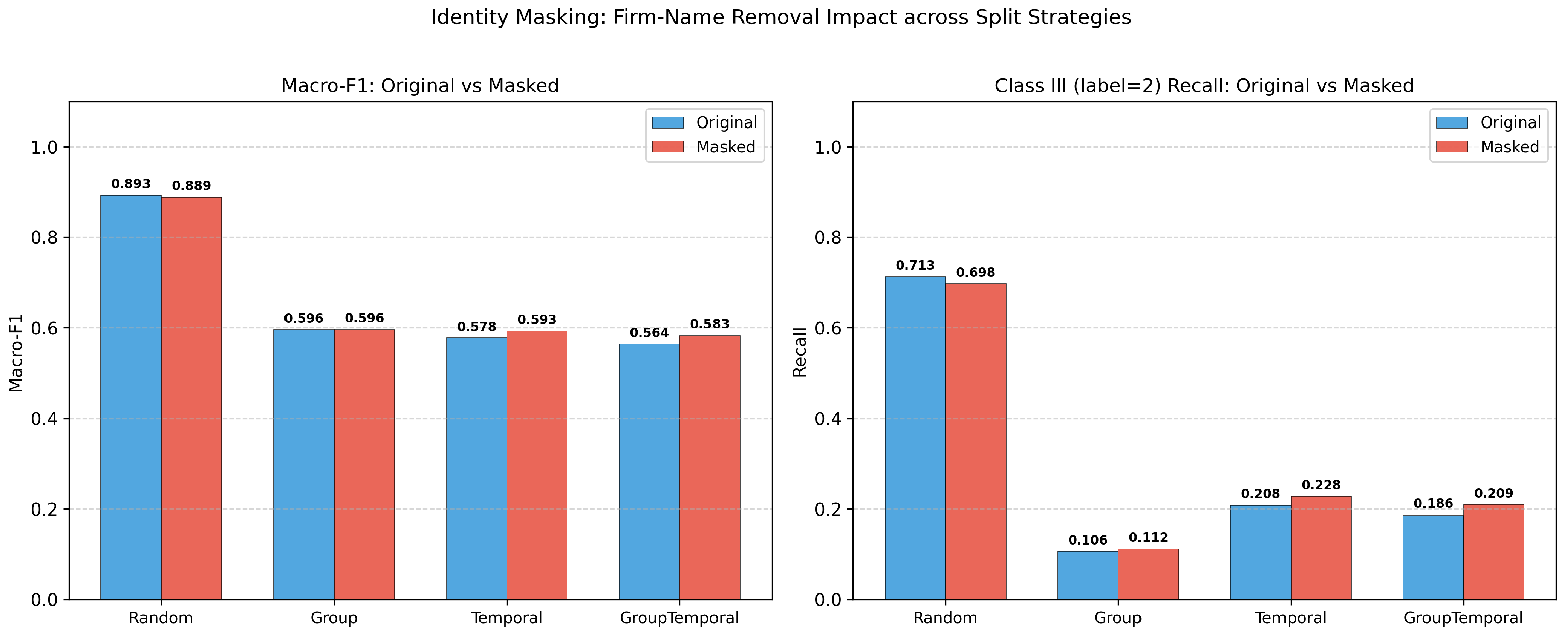

Our multi-layer leakage audit reveals that the standard random-split Macro-F1 of 0.89 is inflated by approximately 0.32 points due to entity-level autocorrelation. Under group-aware, temporal, and combined splitting, true generalisation performance converges to approximately 0.57. A firm-mode baseline achieves 0.82 under random evaluation, demonstrating that 92% of apparent performance stems from entity memorisation. A factorial decomposition shows that firm overlap and temporal continuity are highly collinear—removing either suffices to expose the true performance floor. Identity-masking experiments confirm that the leakage is structural rather than attributable to explicit name tokens. Despite these sobering findings, XGBoost still exceeds the firm-mode baseline by 0.339 under the strictest evaluation, indicating genuine learned patterns beyond entity identity.
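The headline figures can be cross-checked with simple arithmetic. The first line follows directly from the reported scores. The second line assumes that the 92% figure is the ratio of the firm-mode baseline's Macro-F1 to the full model's Macro-F1 under random splitting, an interpretation that the text does not state explicitly:

$$
\begin{aligned}
0.89 - 0.57 &= 0.32 \\
0.82 / 0.89 &\approx 0.92
\end{aligned}
$$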

We recommend that food-safety ML studies (1) report results under both random and group-aware or temporal evaluation protocols, (2) disclose entity-overlap statistics between training and test sets, and (3) include entity-prior baselines to contextualise model performance. These measures are computationally inexpensive and can be adopted with minimal disruption to existing research workflows. Future work should extend the leakage audit to other food-safety databases (e.g., EU RASFF), conduct feature ablation under group-aware splits, and explore domain-adapted language models for food-safety text classification.
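Recommendation (2) could be implemented along the following lines. This is a minimal sketch rather than a prescribed reporting format: the function name `overlap_stats`, the record key `firm`, and the two ratios reported are illustrative assumptions.

```python
def overlap_stats(train, test, key="firm"):
    """Summarise how many test entities and test records are seen in training."""
    train_entities = {r[key] for r in train}
    test_entities = {r[key] for r in test}
    shared = train_entities & test_entities
    seen_records = sum(1 for r in test if r[key] in train_entities)
    return {
        "test_entities": len(test_entities),
        "shared_entities": len(shared),
        # Share of distinct test entities that also occur in training.
        "entity_overlap": len(shared) / len(test_entities) if test_entities else 0.0,
        # Share of test records whose entity also occurs in training.
        "record_overlap": seen_records / len(test) if test else 0.0,
    }


# Hypothetical toy data.
train = [{"firm": "A"}, {"firm": "A"}, {"firm": "B"}]
test = [{"firm": "A"}, {"firm": "A"}, {"firm": "A"}, {"firm": "C"}]

stats = overlap_stats(train, test)
print(stats)
```

Reporting both ratios is useful because a few prolific firms can account for most test records even when only a minority of test firms appear in training.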