1. Introduction

In recent years, higher education institutions have faced growing complexity in curriculum design and subject allocation due to the rapid expansion of course offerings, interdisciplinary programs, and varied student profiles (Duncombe 1959). At the same time, the advent of large-scale educational data (student records, faculty profiles, course metadata) creates opportunities to apply intelligent systems for decision support (Zhang et al. 2025). Recommendation systems (RSs) have emerged as crucial tools for filtering choices, suggesting alternatives, and guiding decision-making in high-dimensional, constrained domains (Zhang et al. 2026). In the educational domain, RSs have traditionally supported students (e.g., by recommending learning resources and courses) (Gomis et al. 2023). However, relatively few studies consider RSs for faculty workload and subject allocation, which involve multiple stakeholders (students, faculty, programs) and hard constraints (prerequisites, scheduling, capacity). The need to allocate subjects optimally is especially pronounced in graduate studies, where curriculum progress, research alignment, and faculty specialization are of great importance (Su and Su 2025). Traditionally, subject allocation has been rule-based (program policies, prerequisites, faculty load) and overseen by academic advisers and administrators. However, such manual systems have limitations: they may not account for latent factors such as faculty research alignment, student interest vectors, or the interplay among faculty–student–subject relationships (Kuabe et al. 2025). Meanwhile, advances in machine learning (ML) and deep learning (DL) offer greater capacity to model complex, relational, and higher-order structures (Ragab et al. 2025).
Knowledge-graph-enhanced recommendation systems extend beyond traditional collaborative filtering and content-based methods by leveraging side information and relational embeddings, thus improving performance and explainability (Zhang 2025). For example, Graph Neural Networks (GNNs) applied to heterogeneous educational graphs (students, courses, faculty) have demonstrated superior ranking accuracy in course recommendations (Qu et al. 2024). In the context of subject allocation, these methods can encode faculty expertise, research output, student interests, program relationships, and scheduling constraints into one unified graph of entities and relations.

Despite these technical advances, there remains a gap in the application of hybrid frameworks to subject allocation at the graduate level. Most work focuses on recommending learning resources or courses to students rather than on optimizing subject allocation decisions that involve faculty, program, and institutional constraints (Zhao 2025). Moreover, existing educational RS research often neglects faculty-centric factors (such as research productivity, mentoring quality, and digital pedagogy competence), which are salient in graduate subject allocation. Therefore, this study proposes a hybrid framework combining a curriculum knowledge base (encoding prerequisites, capacity, scheduling, and faculty eligibility) with machine-learning and deep-learning recommendation models (XGBoost, Wide-and-Deep, and GNN) to generate optimal subject–faculty allocations in graduate studies. The key contributions are threefold: (1) the design of a faculty–subject–program knowledge graph incorporating faculty competency and institutional rules; (2) the training of deep hybrid recommenders that predict suitability scores for subject allocation; and (3) the evaluation of the system in a real institutional context (~480 respondents across six faculties) to assess performance and interpretability. In the remainder of this paper, we review related work on educational recommendation systems, knowledge graph-based recommendations, and faculty workload allocation frameworks (Section 2), describe the research methodology (Section 3), present the evaluation results (Section 4), discuss the practical implications of the experimental results (Section 5), and close with conclusions and future work (Sections 6 and 7).

2. Materials and Methods

Over the past decade, recommender systems (RSs) have evolved into core components of digital learning ecosystems, changing how universities deliver personalized education and make data-driven decisions across teaching, learning, and management processes [11]. In higher education, these systems form part of the broader shift toward Learning Analytics and Educational Data Mining frameworks, enabling the dynamic personalization of courses, resources, and learning trajectories for individual learners. Educational Recommender Systems (ERSs) are primarily designed to enhance learning experiences by filtering, ranking, and matching learning resources, instructors, and students within large institutional databases (Kopyt et al. 2016). A growing body of research demonstrates their effectiveness in course recommendation, adaptive tutoring systems, e-learning resource curation, and student performance prediction, establishing ERSs as critical enablers of personalized, learner-centered education. However, systematic literature reviews reveal that, although ERSs have achieved significant success in instructional domains, their application to administrative and institutional processes remains limited, particularly in graduate education contexts (Fardel et al., 2007). Traditional subject allocation frameworks in higher education often rely on rule-based assignment or manual heuristic decision-making, constrained by static institutional policies, faculty self-reports, or historical workload data (Comite and Pierdicca 2022). These methods tend to be less adaptable to evolving academic conditions, leading to mismatches between faculty expertise, student research interests, and institutional strategic goals. Such limitations demonstrate the growing need for intelligent, data-driven decision-support systems that can balance academic quality, workload fairness, and policy compliance.
As universities adopt digital transformation and AI-enabled governance frameworks, integrating artificial intelligence (AI) and deep learning (DL) into subject allocation processes has emerged as a promising research frontier (Habi and Messer 2021).

Recent advances in deep learning architectures, such as Neural Collaborative Filtering (NCF), Wide-and-Deep Neural Networks, and Graph Neural Networks (GNNs), have significantly expanded the capacity of recommendation systems to learn latent representations and multi-relational patterns that traditional statistical models often fail to capture [16]. These models go beyond simple input–output prediction by embedding semantic and structural relationships among diverse entities such as students, faculty, subjects, and institutional rules. In educational applications, such models enable reasoning over both explicit relationships (e.g., teaching history, research specialization) and implicit associations (e.g., mentoring effectiveness, digital teaching affinity). Knowledge Graphs (KGs) have further augmented this capability by structuring educational data into interconnected ontologies that allow semantic reasoning. Studies have shown that KG-based educational recommenders enhance adaptive learning pathways, dynamic course sequencing, and skill mapping, resulting in improved personalization and cognitive alignment between learners and content (Robillos 2024). However, despite these successes, the application of KGs and deep learning to academic management remains underexplored. Most prior works focus on student-side personalization, leaving institutional decision-making processes largely rule-driven and manually optimized. Recent hybrid frameworks that integrate knowledge-based reasoning with deep representation learning offer a promising alternative, combining the transparency of expert systems with the adaptability of neural models (Tang 2025). Such hybrid models can encode formal institutional constraints while simultaneously learning implicit patterns from historical data to improve allocation fairness and predictive accuracy.
Incorporating GNNs into educational recommendation architectures has proven particularly promising, as these models capture graph-structured dependencies across academic entities. GNNs enable joint optimization across heterogeneous nodes (faculty, subjects, programs) and their relational edges (e.g., expertise similarity, co-teaching, administrative linkage), leading to higher accuracy and explainability in recommendation outputs (Aziz 2025). Moreover, integrating reinforcement learning (RL) mechanisms enables these systems to adapt dynamically to policy shifts and feedback loops over time—supporting continuous learning and optimization in evolving academic environments. Systematic reviews further emphasize that explainability and fairness are emerging as fundamental design imperatives in educational AI, ensuring that algorithmic decisions uphold ethical standards, promote equity, and comply with accreditation requirements (Aina et al., 2024). In the context of faculty workload allocation, scholars have identified significant challenges in balancing quantitative factors (e.g., teaching experience, academic rank, and administrative duties) with qualitative indicators (e.g., mentoring quality, research supervision, and digital pedagogical skills) (Zhang 2025). Current decision models often fail to integrate these diverse dimensions holistically, resulting in inconsistent workload distribution and potential faculty dissatisfaction. Few frameworks treat the triadic relationship among faculty, subjects, and programs as a co-dependent decision space in which optimizing one dimension directly influences the others. Knowledge graph–enhanced hybrid recommender systems directly address this gap by enabling multi-objective optimization while preserving interpretable rule traces for administrative validation (Downing 2025). 
Empirical studies have demonstrated that combining domain ontologies and explicit workload constraints with neural learning architectures substantially improves both accuracy and transparency, making such systems well-suited for institutional deployment.

In summary, the existing literature provides compelling evidence for the potential of deep learning–based, knowledge-graph–augmented recommender systems to address complex educational challenges. However, significant gaps remain regarding faculty-centric subject allocation, hybrid knowledge-driven optimization, and real-world validation within university environments. The present study aims to bridge these gaps by developing and evaluating a knowledge-based deep learning framework that integrates graph attention mechanisms, policy rule reasoning, and multi-objective optimization. The approach aims to achieve optimized, equitable, and explainable subject allocation in graduate studies, turning academic resource management into a transparent, data-driven, and institutionally aligned process.

2.1. Research Objectives

The overarching goal of this research is to design and validate a knowledge-based deep learning framework that intelligently automates and optimizes subject allocation in graduate programs, ensuring fairness, transparency, and institutional alignment. Building on advances in Graph Attention Networks (GATs) and knowledge-based reasoning, the study seeks to transform the traditional rule-driven subject assignment process into a data-driven, interpretable, and adaptive decision-support system.

To achieve this overarching goal, the study is guided by the following specific objectives:

To design a hybrid knowledge-based GAT framework that integrates explicit institutional rules (e.g., qualification requirements, workload limits, supervision policies) with data-driven learning from historical allocation patterns.

To construct a comprehensive indicator–subject knowledge graph that captures semantic and relational dependencies among faculty members, subjects, research domains, and workload profiles, enabling contextual reasoning within the allocation process.

To develop a GAT-based allocation model that learns attention coefficients representing the importance of faculty indicators in predicting allocation suitability.

To apply multi-objective optimization techniques that balance competing allocation goals (e.g., expertise match, workload fairness, and policy compliance) within a unified allocation function.

To evaluate the performance and interpretability of the proposed GAT model against benchmark algorithms (XGBoost, Wide-and-Deep Neural Network, and standard GNN) using key metrics such as Accuracy, F1-score, AUC, HR@10, and NDCG@10.

To design and implement an Indicator Subject Allocation Dashboard (ISAD) that translates model outputs into a usable decision-support interface, allowing administrators to visualize, validate, and adjust allocation outcomes in real time.

To validate the framework empirically using data from 480 respondents across six faculties at Mahasarakham University, assessing the system’s predictive accuracy, explainability, and institutional applicability.

3. Research Methodology

This study adopts a quantitative approach integrating knowledge-based reasoning, deep learning, and institutional data analysis to develop and evaluate a hybrid intelligent system for subject allocation in graduate studies. The overall research procedure comprises five sequential phases: (1) data collection (Section 3.1); (2) problem identification and data preparation (Section 3.2); (3) deep learning model development (Section 3.3); (4) training objective and regularization (Section 3.4); and (5) validation and interpretation for institutional application.

3.1. Data Collection Process

Data were collected at Mahasarakham University across six faculties (Science, Engineering, Information Technology, Humanities and Social Sciences, Mahasarakham Business School, and Education) to represent both the science and social science domains. A total of 480 respondents participated, selected through stratified random sampling to ensure proportional representation across academic disciplines and ranks. Each respondent completed a structured questionnaire containing three parts: (1) demographic information (gender, age, academic rank, years of experience, department), (2) twenty (20) measurable variables related to factors influencing subject allocation, and (3) institutional engagement outcomes such as research contribution and teaching workload perception. All 20 features were defined quantitatively as follows: Teaching Experience (years) (F1), Academic Qualification (highest degree) (F2), Designation (academic rank) (F3), Number of Subjects Taught (F4), Research Productivity (F5), Administrative Responsibility (F6), Academic Outcome Achievement (F7), Admission and Recruitment Contribution (F8), Training and Placement Involvement (F9), Accreditation and Documentation Handling (F10), Examination and Evaluation Contribution (F11), Institutional and Club Engagement (F12), Value and Ethics Participation (F13), Professional Society Membership (F14), Cultural and Community Outreach (F15), Event Coordination Beyond Institute (F16), Undergraduate Project Mentorship (F17), Postgraduate/Ph.D. Supervision (F18), Student Mentorship and Guidance (F19), and Digital Pedagogical Competence (F20). The 20 indicators used in the subject allocation model in this study are listed in Table 1 (Saxena et al. 2023).

3.2. Problem Identification and Data Preparation

The dataset for this study comprised 480 data points collected from six faculties at Mahasarakham University. Each respondent was characterized by 20 indicators (F1–F20), covering experience, qualification, designation, research productivity, administrative load, mentorship, and digital competence. The raw dataset underwent a multi-stage preprocessing pipeline to ensure completeness, consistency, and suitability for training a Graph Attention Network (GAT). The ten-step process is summarized below, along with its practical outcomes.

Step 1: Missing-Value Imputation: Approximately 4.7% of the entries contained missing values, primarily in F1 (Teaching Experience), F4 (Subjects Taught), and F5 (Research Productivity). For numeric features, mean imputation was applied using Eq. (1).

For categorical variables (e.g., F2 Academic Qualification, F3 Designation), mode imputation replaced missing entries, as defined in Eq. (2) [24].

After imputation, the proportion of missing values was reduced from 4.7% to 0%, ensuring data completeness across all 20 features.
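A minimal Python sketch of Step 1, assuming Eqs. (1) and (2) are the usual column-mean and column-mode rules. Missing entries are marked with `None`, and the toy columns are hypothetical, not the study's data.

```python
from statistics import mean, mode
from typing import List, Optional


def impute_numeric(column: List[Optional[float]]) -> List[float]:
    """Mean imputation: replace None with the mean of the observed values."""
    observed = [v for v in column if v is not None]
    fill = mean(observed)
    return [fill if v is None else v for v in column]


def impute_categorical(column: List[Optional[str]]) -> List[str]:
    """Mode imputation: replace None with the most frequent observed category."""
    observed = [v for v in column if v is not None]
    fill = mode(observed)
    return [fill if v is None else v for v in column]


# Hypothetical toy columns (not the study's data).
teaching_experience = [2.0, None, 10.0, 6.0]   # F1, years
qualification = ["PhD", "Master", None, "PhD"]  # F2

print(impute_numeric(teaching_experience))    # [2.0, 6.0, 10.0, 6.0]
print(impute_categorical(qualification))      # ['PhD', 'Master', 'PhD', 'PhD']
```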

Step 2: Data Normalization (Min–Max Scaling): To ensure numerical stability and prevent high-magnitude variables from dominating, continuous indicators (F1, F4, F5, F7, and F18) were scaled to the [0, 1] interval using Eq. (3) [25].

For example, F1 (Teaching Experience) ranged from 2 to 40 years, giving $x' = (x - 2)/(40 - 2) = (x - 2)/38$, as in Eq. (4).

This normalization improved convergence stability during neural optimization and reduced skewness by 64%.
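The min–max rule of Eq. (3) can be sketched as follows, reusing the F1 range from the text (2 to 40 years) so that the scaled value is $(x - 2)/38$. The function name and the optional fixed bounds are illustrative choices, not part of the original pipeline.

```python
def min_max_scale(values, lo=None, hi=None):
    """Scale values to [0, 1] via x' = (x - min) / (max - min) (Eq. 3).

    lo/hi default to the observed min/max; pass fixed bounds to reuse
    training-split statistics on new data.
    """
    lo = min(values) if lo is None else lo
    hi = max(values) if hi is None else hi
    if hi == lo:
        raise ValueError("a constant column cannot be min-max scaled")
    return [(v - lo) / (hi - lo) for v in values]


# F1 ranged from 2 to 40 years, so x' = (x - 2) / 38 (cf. Eq. 4).
print(min_max_scale([2, 21, 40]))  # [0.0, 0.5, 1.0]
```

Because min–max scaling is an affine map, it changes the range of a variable but not the shape of its distribution; fixing `lo` and `hi` from the training split keeps validation data on the same scale.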

Step 3: Categorical Encoding: Categorical variables (F2 Qualification, F3 Designation, and F14 Professional Society) were numerically transformed for model interpretability. Label encoding assigns ordinal values as defined in Eq. (5) [26].

For multi-class categories (e.g., Lecturer, Assistant Professor, Associate Professor, Professor), one-hot encoding was applied using Eq. (6).

This produced six categorical vectors, which were subsequently transformed into 64-dimensional embeddings for GAT input.
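A sketch of the two encodings in Step 3. The qualification ordering in `QUALIFICATION_ORDER` is a hypothetical example of an ordinal map in the spirit of Eq. (5); the rank list mirrors the categories named in the text for one-hot encoding (Eq. 6).

```python
from typing import Dict, List

# Hypothetical ordinal map for F2 (Academic Qualification); Eq. (5) may use other codes.
QUALIFICATION_ORDER: Dict[str, int] = {"Bachelor": 0, "Master": 1, "Doctorate": 2}

# F3 (Designation) categories as listed in the text.
RANKS: List[str] = ["Lecturer", "Assistant Professor", "Associate Professor", "Professor"]


def label_encode(value: str, mapping: Dict[str, int]) -> int:
    """Label encoding: map a category to its integer code."""
    return mapping[value]


def one_hot(value: str, categories: List[str]) -> List[int]:
    """One-hot encoding: 1 at the category's position, 0 elsewhere."""
    return [1 if c == value else 0 for c in categories]


print(label_encode("Master", QUALIFICATION_ORDER))  # 1
print(one_hot("Associate Professor", RANKS))        # [0, 0, 1, 0]
```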

Step 4: Outlier Detection and Capping: To mitigate the influence of extreme values, the Interquartile Range (IQR) method was applied, as defined in Eq. (7).

For example, F5 (Research Productivity) had Q1 = 4.0 and Q3 = 12.0, yielding IQR = 8.0. The upper bound is 12 + 1.5(8) = 24, so any publication count above 24 was capped at 24.

This capping reduced skewness from 1.72 to 0.63.
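The IQR rule of Eq. (7) is sketched below, assuming the standard Tukey fences with multiplier 1.5, and reproduces the F5 example from the text (Q1 = 4.0, Q3 = 12.0, upper bound 24). The values passed to `cap` are hypothetical.

```python
def iqr_bounds(q1: float, q3: float, k: float = 1.5):
    """Tukey fences: (Q1 - k*IQR, Q3 + k*IQR) with IQR = Q3 - Q1."""
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


def cap(values, lower, upper):
    """Clip every value into [lower, upper]."""
    return [min(max(v, lower), upper) for v in values]


# F5 example from the text: Q1 = 4.0, Q3 = 12.0 -> IQR = 8.0.
lower, upper = iqr_bounds(4.0, 12.0)
print(lower, upper)                     # -8.0 24.0
print(cap([3, 18, 31], lower, upper))   # [3, 18, 24.0]
```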

Step 5: Correlation and Multicollinearity Analysis: A Pearson correlation matrix was computed using Eq. (8) [27].

Feature pairs whose absolute correlation exceeded the multicollinearity threshold were considered multicollinear. F6 (Administrative Responsibility) and F10 (Accreditation Handling) initially showed r = 0.93. After a weighted-mean composite adjustment, r dropped to 0.72, mitigating redundant signal propagation in the GAT.
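A pure-Python sketch of the Step 5 screen, assuming Eq. (8) is the standard Pearson coefficient. The threshold used here (0.9) and the toy columns are hypothetical; they are not the study's cut-off or data.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson r = cov(x, y) / (sd(x) * sd(y)), computed from centred sums."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def collinear_pairs(features, threshold):
    """Return (name, name) pairs whose |r| exceeds the threshold."""
    return [(a, b) for a, b in combinations(sorted(features), 2)
            if abs(pearson(features[a], features[b])) > threshold]


# Hypothetical toy columns and threshold.
toy = {"F6": [1, 2, 3, 4], "F10": [2, 4, 6, 8.5], "F12": [4, 1, 3, 2]}
print(collinear_pairs(toy, threshold=0.9))  # [('F10', 'F6')]
```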

Step 6: Feature Embedding Preparation: Encoded categorical variables (F2, F3, and F14) were projected into a 64-dimensional latent space, as defined in Eq. (9) [28]:

$$\mathbf{e} = \mathbf{W}_e \mathbf{x} + \mathbf{b}_e, \qquad \mathbf{e} \in \mathbb{R}^{64}$$

where $\mathbf{W}_e$ and $\mathbf{b}_e$ are trainable embedding parameters. This transformation preserved semantic relationships between categories while providing dense vector inputs for the GAT attention layer.

Step 7: Knowledge-Based Filtering: To align the data with university policies, eligibility rules were applied using Eq. (10) [27].

From 480 records, 17 were removed for lacking doctoral qualifications or holding administrative exemptions, leaving 463 valid respondents. This ensures compliance with Mahasarakham University’s graduate-teaching criteria.
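The sketch below encodes only the two exclusion reasons stated in the text (no doctoral qualification, or an administrative exemption) as a boolean predicate in the spirit of Eq. (10). The `Respondent` fields and the toy records are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Respondent:
    rid: int
    has_doctorate: bool   # hypothetical flag derived from F2 (Academic Qualification)
    admin_exempt: bool    # hypothetical flag for an administrative exemption


def eligible(r: Respondent) -> bool:
    """Keep a respondent only if they hold a doctorate and are not exempt."""
    return r.has_doctorate and not r.admin_exempt


def knowledge_filter(records: List[Respondent]) -> List[Respondent]:
    return [r for r in records if eligible(r)]


records = [Respondent(1, True, False), Respondent(2, False, False), Respondent(3, True, True)]
print([r.rid for r in knowledge_filter(records)])  # [1]
```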

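Step 8: Workload Normalization: Workload was standardized against institutional averages using Eq. (11):

$$z_i = \frac{w_i - \mu_w}{\sigma_w}$$

where $w_i$ is the teaching workload of respondent $i$ in hours, and $\mu_w$ and $\sigma_w$ are the institutional mean and standard deviation of workload. Post-normalization, the mean workload equaled 0.00 (SD = 1.0), and extreme load deviations (>2 SD) were corrected using the clipping function $\tilde{z}_i = \min(\max(z_i, -2), 2)$, improving the consistency and fairness of comparisons across faculty profiles.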
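A sketch of Step 8, assuming Eq. (11) is a z-score and the clipping bound is ±2 SD. For illustration, the mean and population standard deviation are computed from the input list, whereas the study standardizes against institutional averages; the workload values are hypothetical.

```python
from statistics import mean, pstdev


def standardize_and_clip(hours, limit=2.0):
    """z = (w - mu) / sigma for each workload, then clip z into [-limit, +limit]."""
    mu, sigma = mean(hours), pstdev(hours)
    z = [(h - mu) / sigma for h in hours]
    return [max(-limit, min(limit, v)) for v in z]


# Hypothetical weekly teaching hours: nine typical loads and one extreme load.
hours = [10] * 9 + [40]
result = standardize_and_clip(hours)
print(result[0], result[-1])  # the extreme z = 3.0 is clipped to 2.0
```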

Step 9: Data Augmentation and Balancing: To mitigate data imbalance (class ratio of 3.4:1), the Synthetic Minority Over-Sampling Technique (SMOTE) was employed, as described in Eq. (12) [29].

Synthetic faculty–subject samples were generated for underrepresented disciplines, increasing the total number of instances from 2,450 to 3,200 (≈31% increase). This adjustment improved the GAT model’s class-balanced F1-score from 0.812 to 0.871 during pilot validation.
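The core of SMOTE is linear interpolation between a minority sample and one of its k nearest minority neighbours. The sketch below assumes Eq. (12) is that standard rule, $x_{\text{new}} = x_i + \lambda (x_{nn} - x_i)$ with $\lambda \sim U(0, 1)$, and uses hypothetical 2-D points; growing from 2,450 to 3,200 instances would correspond to 750 such synthetic samples.

```python
import random
from math import dist


def smote_sample(minority, k, rng):
    """One synthetic point on the segment between x_i and a random k-NN neighbour."""
    x_i = rng.choice(minority)
    # k nearest minority neighbours of x_i (excluding x_i itself), Euclidean distance.
    neighbours = sorted((p for p in minority if p is not x_i),
                        key=lambda p: dist(p, x_i))[:k]
    x_nn = rng.choice(neighbours)
    lam = rng.random()  # lambda ~ U(0, 1)
    return [a + lam * (b - a) for a, b in zip(x_i, x_nn)]


def oversample(minority, n_new, k=2, seed=42):
    """Generate n_new synthetic minority samples with a reproducible RNG."""
    rng = random.Random(seed)
    return [smote_sample(minority, k, rng) for _ in range(n_new)]


minority = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0]]  # hypothetical minority-class points
print(oversample(minority, 3))
```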

Step 10: Final Graph Construction for GAT Input: The cleaned and enriched dataset was converted into a bipartite graph, as defined in Eq. (13).

Edges $e_{ij} = (f_i, s_j)$ were weighted by suitability, as defined in Eq. (14), with weight parameters $(\alpha_1, \alpha_2, \alpha_3, \alpha_4) = (0.35, 0.25, 0.25, 0.15)$. The resulting weighted graph was used as the input topology for the Graph Attention Network (GAT), allowing attention coefficients $\alpha_{ij}$ to be learned across all 20 features. The outputs of the data preparation pipeline, applied before training the GAT model for knowledge-based subject allocation in graduate studies, are shown in Table 2.
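The edge weighting of Eq. (14) is sketched as a convex combination of four sub-scores using the α values reported in the text. The four factors that enter Eq. (14) are not restated here, so the sub-scores are hypothetical placeholders assumed to lie in [0, 1].

```python
ALPHAS = (0.35, 0.25, 0.25, 0.15)  # (alpha_1, ..., alpha_4) from the text


def edge_weight(components, alphas=ALPHAS):
    """Weighted suitability w_ij = sum_k alpha_k * s_k for one faculty-subject edge.

    `components` holds four hypothetical sub-scores in [0, 1].
    """
    if len(components) != len(alphas):
        raise ValueError("expected one sub-score per weight")
    return sum(a * s for a, s in zip(alphas, components))


# A tiny weighted bipartite edge list: (faculty, subject) -> weight.
edges = {
    ("f1", "s1"): edge_weight((1.0, 1.0, 1.0, 1.0)),
    ("f1", "s2"): edge_weight((1.0, 0.0, 0.0, 0.0)),
}
print(edges)
```

Since the four weights sum to 1, sub-scores in [0, 1] keep every edge weight in [0, 1].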

3.3. Deep-Learning Model Development

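1) Gradient-Boosted Trees (XGBoost; Tabular Baseline)

The Gradient-Boosted Decision Trees (GBDT) algorithm, implemented via Extreme Gradient Boosting (XGBoost), was adopted as the baseline machine-learning model for tabular data due to its robustness, interpretability, and ability to model non-linear relationships among multiple faculty performance factors and subject-allocation suitability (Zhang, 2025). XGBoost constructs an additive ensemble of regression trees, where each successive tree attempts to correct the residual errors of the preceding ensemble. Formally, the training dataset is given by Eq. (15):

$$\mathcal{D} = \{(\mathbf{x}_{ij}, y_{ij})\}$$

where $\mathbf{x}_{ij}$ represents the concatenated feature vector derived from faculty $f_i$, subject $s_j$, and pairwise characteristics, and $y_{ij} \in \{0, 1\}$ is the binary label indicating whether faculty $f_i$ was historically assigned to subject $s_j$. The model predicts a suitability score $\hat{y}_{ij}$ through an ensemble of $K$ additive functions, as defined in Eq. (16):

$$\hat{y}_{ij} = \sum_{k=1}^{K} f_k(\mathbf{x}_{ij})$$

At the $t$-th boosting iteration, the model adds a new tree $f_t$ that minimizes the regularized objective in Eq. (18):

$$\mathcal{L}^{(t)} = \sum_{(i,j)} \ell\big(y_{ij},\, \hat{y}_{ij}^{(t-1)} + f_t(\mathbf{x}_{ij})\big) + \Omega(f_t)$$

where $\ell(\cdot,\cdot)$ is the differentiable convex loss function defined in Eq. (19), and $\Omega(f_t)$ is the regularization term controlling tree complexity, defined in Eq. (20):

$$\Omega(f_t) = \gamma T_t + \frac{1}{2}\lambda \sum_{m=1}^{T_t} w_m^2$$

where $T_t$ is the number of leaves in the tree, $w_m$ is the weight of leaf $m$, $\gamma$ penalizes additional leaves (model complexity), and $\lambda$ enforces L2 regularization on leaf weights. To optimize $\mathcal{L}^{(t)}$ efficiently, a second-order Taylor expansion of the loss around $\hat{y}_{ij}^{(t-1)}$ is employed, as in Eq. (21) (up to a constant):

$$\mathcal{L}^{(t)} \approx \sum_{(i,j)} \Big[ g_{ij} f_t(\mathbf{x}_{ij}) + \frac{1}{2} h_{ij} f_t^2(\mathbf{x}_{ij}) \Big] + \Omega(f_t)$$

where $g_{ij} = \partial \ell / \partial \hat{y}_{ij}^{(t-1)}$ and $h_{ij} = \partial^2 \ell / \partial \big(\hat{y}_{ij}^{(t-1)}\big)^2$ are the first- and second-order gradients with respect to the predictions. For a given tree structure $q$, the optimal weight of each leaf $m$ is given by Eq. (22):

$$w_m^{*} = -\frac{G_m}{H_m + \lambda}, \qquad G_m = \sum_{(i,j) \in I_m} g_{ij}, \qquad H_m = \sum_{(i,j) \in I_m} h_{ij}$$

where $I_m$ is the set of training pairs assigned to leaf $m$, and the corresponding optimal objective reduction (gain) achieved by splitting a node is defined in Eq. (23):

$$\text{Gain} = \frac{1}{2}\left[\frac{G_L^2}{H_L + \lambda} + \frac{G_R^2}{H_R + \lambda} - \frac{(G_L + G_R)^2}{H_L + H_R + \lambda}\right] - \gamma$$

where $I_L$ and $I_R$ denote the training pairs in the left and right branches after the split, and $G_L, H_L$ ($G_R, H_R$) are the gradient and Hessian sums over $I_L$ ($I_R$). Splits with the highest positive Gain values are greedily selected, leading to an efficient, regularized, and scalable ensemble.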

2) Wide-and-Deep Neural Network (WDNN) (Tabular Deep Learning + Cross Features)

The Wide-and-Deep Neural Network (WDNN) model is a hybrid deep-learning approach that combines memorization of explicit feature interactions (wide component) with generalization via non-linear transformations (deep component), making it highly effective for heterogeneous tabular data such as faculty attributes, subject descriptors, and institutional indicators (Saxena et al., 2023). The model’s input vector $\mathbf{u}_{ij} = [\mathbf{x}_{f_i}; \mathbf{x}_{s_j}; \mathbf{z}_{ij}]$ represents the concatenation of normalized faculty features $\mathbf{x}_{f_i}$, subject characteristics $\mathbf{x}_{s_j}$, and pairwise contextual features $\mathbf{z}_{ij}$ derived from workload compatibility, qualification match, and research similarity. The predictive output is defined in Eq. (24).

where $\mathbf{w}_{\text{wide}}$ denotes the weight vector of the wide (linear) component, $\mathbf{c}_{ij}$ represents engineered cross-product features capturing explicit interactions between categorical fields, $\mathbf{h}_L$ is the output of the final hidden layer in the deep component, and $\sigma(\cdot)$ is the sigmoid activation mapping output logits to probabilities in [0, 1]. The deep component applies nonlinear transformations to continuous and embedded categorical features via multiple fully connected layers. Given an input $\mathbf{u}_{ij}$, forward propagation through $L$ layers is expressed as Eq. (25):

$$\mathbf{h}_0 = \mathbf{u}_{ij}, \qquad \mathbf{h}_l = \phi\big(\mathbf{W}_l \mathbf{h}_{l-1} + \mathbf{b}_l\big), \quad l = 1, \dots, L$$

where $\mathbf{W}_l$ and $\mathbf{b}_l$ are the trainable weight matrix and bias vector of layer $l$, and $\phi(\cdot)$ is the ReLU activation function, $\phi(x) = \max(0, x)$ (Saxena et al., 2023). The final representation $\mathbf{h}_L$ encodes high-order feature interactions such as “faculty research expertise × subject complexity × teaching load,” which the linear component alone cannot capture. The combined prediction layer integrates both components, as defined in Eq. (26):

$$\hat{y}_{ij} = \sigma\big(\mathbf{w}_{\text{wide}}^{\top}[\mathbf{u}_{ij}; \mathbf{c}_{ij}] + \mathbf{w}_{\text{deep}}^{\top}\mathbf{h}_L + b\big)$$

with $\mathbf{w}_{\text{deep}}$ as the deep output weight vector and $b$ as the final scalar bias. The model minimizes the binary cross-entropy loss between the predicted probabilities $\hat{y}_{ij}$ and the true allocations $y_{ij}$, with L2 weight regularization to prevent overfitting, as defined in Eq. (27), where $\lambda = 1 \times 10^{-4}$ controls the regularization penalty. The parameter set of the WDNN model includes all learnable weights and biases, as shown in Eq. (28).

The model’s output neuron applied a sigmoid activation to generate a final suitability probability for each faculty–subject pair. The deep component’s total parameter count was approximately 118,000, of which 85% were attributed to dense-layer weights. During training, weight updates were performed according to Eq. (29).

Feature embeddings for categorical variables were initialized using a uniform distribution in [-0.05, 0.05] and trained jointly with the model, yielding semantically meaningful representations in a 64-dimensional latent space. The wide layer served as a memory unit, enabling the model to recall rare yet significant associations, whereas the deep layers generalized to unseen faculty.
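A dependency-free sketch of a Wide-and-Deep forward pass, assuming the standard form in which the wide part is linear in the concatenation $[\mathbf{u}_{ij}; \mathbf{c}_{ij}]$ and the deep part is a ReLU MLP (Eq. 25). All weights and inputs are hypothetical toy values; a real model would use a deep-learning framework with the 256–128–64 architecture from Section 3.5.

```python
from math import exp


def relu(v):
    return [max(0.0, x) for x in v]


def dense(W, b, x):
    """Affine map W x + b, with W given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def sigmoid(z):
    return 1.0 / (1.0 + exp(-z))


def wide_and_deep(u, c, w_wide, layers, w_deep, b):
    """y_hat = sigmoid(w_wide . [u; c] + w_deep . h_L + b), h_l = ReLU(W_l h_{l-1} + b_l)."""
    h = u
    for W, bl in layers:          # deep component: stacked ReLU layers
        h = relu(dense(W, bl, h))
    wide_in = u + c               # list concatenation [u; c] for the wide component
    logit = (sum(w * x for w, x in zip(w_wide, wide_in))
             + sum(w * x for w, x in zip(w_deep, h)) + b)
    return sigmoid(logit)


# Hypothetical toy pair: two input features, one cross feature, one hidden layer.
p = wide_and_deep(u=[1.0, 2.0], c=[1.0], w_wide=[0.0, 0.0, 0.0],
                  layers=[([[1.0, 0.0], [0.0, -1.0]], [0.0, 0.0])],
                  w_deep=[2.0, 5.0], b=0.0)
print(p)  # sigmoid(2.0)
```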

3) Graph Neural Network (GNN; Relational Deep Model)

To capture the complex relational dependencies between respondents, subjects, and academic programs, a Graph Neural Network (GNN)–based model was implemented as the third core component of the hybrid recommendation framework (Tang, 2025). The GNN treats the subject-allocation ecosystem as a heterogeneous bipartite graph $G = (V, E)$, where the node sets $V = F \cup S \cup P$ represent respondents, subjects, and programs, respectively, and edges $E$ denote historical teaching allocations. Each node $v \in V$ is associated with a feature vector $\mathbf{x}_v$ encoding its attributes (the 20 faculty factors for respondents, subject metadata for subjects, and program descriptors for programs). The GNN learns a low-dimensional embedding $\mathbf{h}_v \in \mathbb{R}^{d_h}$ for every node through iterative message passing across $L$ layers, as defined in Eq. (30):

$$\mathbf{h}_v^{(l+1)} = \rho\Big(\sum_{u \in N(v)} \frac{1}{c_{vu}} \mathbf{W}^{(l)} \mathbf{h}_u^{(l)} + \mathbf{b}^{(l)}\Big), \qquad \mathbf{h}_v^{(0)} = \mathbf{x}_v$$

where $N(v)$ denotes the neighborhood of $v$, $c_{vu}$ is a normalization constant based on node degrees, $\mathbf{W}^{(l)}$ are trainable weight matrices, $\mathbf{b}^{(l)}$ is a bias vector, and $\rho(\cdot)$ is the ReLU activation. For each respondent–subject pair $(f_i, s_j)$, the predicted suitability score $\hat{y}_{ij}$ is computed via a bilinear decoder followed by a logistic transformation, as defined in Eq. (31), where the decoder's output weights are learned jointly with the node embeddings. The loss function minimized during training combines binary cross-entropy with L2 regularization, as in Eq. (32), where $\lambda$ controls the weight-decay penalty. Model parameters $\Theta$ were optimized using Adam with a learning rate of 0.001, a batch size of 128, and early stopping after 15 epochs without improvement. The embedding dimension $d_h$ was set to 128, with two hidden graph convolution layers ($L = 2$) and a dropout rate of $p = 0.3$.
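One message-passing layer in the form of Eq. (30), plus a bilinear decoder in the spirit of Eq. (31), can be sketched in plain Python. The sketch assumes symmetric degree normalization $c_{vu} = \sqrt{|N(v)|\,|N(u)|}$, the common GCN choice, and aggregates only over neighbours as described (standard GCNs often add self-loops). The decoder matrix `M`, its bias and the toy graph are hypothetical.

```python
from math import exp, sqrt


def gcn_layer(h, adj, W, b):
    """h_v' = ReLU(sum_{u in N(v)} W h_u / c_vu + b), c_vu = sqrt(|N(v)| * |N(u)|)."""
    out = {}
    for v, neigh in adj.items():
        agg = [0.0] * len(b)
        for u in neigh:
            c_vu = sqrt(len(adj[v]) * len(adj[u]))
            msg = [sum(w * x for w, x in zip(row, h[u])) for row in W]  # W h_u
            agg = [a + m / c_vu for a, m in zip(agg, msg)]
        out[v] = [max(0.0, a + bi) for a, bi in zip(agg, b)]            # ReLU
    return out


def bilinear_score(h_f, M, h_s, bias=0.0):
    """y_hat = sigmoid(h_f^T M h_s + bias) for one respondent-subject pair."""
    Mh = [sum(m * x for m, x in zip(row, h_s)) for row in M]
    return 1.0 / (1.0 + exp(-(sum(a * c for a, c in zip(h_f, Mh)) + bias)))


# Hypothetical toy graph: f1 taught s1 and s2, f2 taught s1 (undirected adjacency).
adj = {"f1": ["s1", "s2"], "f2": ["s1"], "s1": ["f1", "f2"], "s2": ["f1"]}
h0 = {n: [1.0] for n in adj}
h1 = gcn_layer(h0, adj, W=[[1.0]], b=[0.0])
print(h1["s2"], bilinear_score(h1["f1"], [[1.0]], h1["s2"]))
```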

3.4. Training Objective and Regularization

To ensure that all learning models within the hybrid framework (XGBoost, Wide-and-Deep, and Graph Neural Network) converge toward an optimal and generalizable solution, a unified training objective was formulated based on supervised binary classification and refined with multi-term regularization to mitigate overfitting, improve generalization, and enforce smooth parameter updates (Zhang et al., 2026). The primary goal is to minimize the empirical risk between the predicted suitability score $\hat{y}_{ij}$ and the true allocation label $y_{ij}$ for every eligible faculty–subject pair $(f_i, s_j)$. The base loss function is the binary cross-entropy (log-loss), defined in Eq. (33):

$$\mathcal{L}_{\text{BCE}} = -\frac{1}{N} \sum_{(i,j) \in \Omega} \Big[ y_{ij} \log \hat{y}_{ij} + (1 - y_{ij}) \log \big(1 - \hat{y}_{ij}\big) \Big]$$

where $\Omega$ denotes the set of feasible faculty–subject pairs determined by the knowledge-based filter and $N = |\Omega|$ is the total number of training samples. This objective heavily penalizes confident but incorrect suitability predictions, which matters in class-imbalanced datasets where unassigned pairs far outnumber actual allocations. To capture ranking-based preferences and relative suitability, a secondary pairwise Bayesian Personalized Ranking (BPR) loss was incorporated into the neural models, as defined in Eq. (34):

$$\mathcal{L}_{\text{BPR}} = -\sum_{(i, j, j') \in \Psi} \ln \sigma\big(\hat{y}_{ij} - \hat{y}_{ij'}\big)$$

where each triplet $(i, j, j')$ includes one positive (historically allocated) subject $s_j$ and one negative (non-allocated) subject $s_{j'}$ for respondent $f_i$, $\sigma(\cdot)$ denotes the logistic function, and $\Psi$ is the set of sampled training triplets. The total hybrid objective integrates both terms and applies L2 regularization to the model weights, as defined in Eq. (35):

$$\mathcal{L} = \lambda_1 \mathcal{L}_{\text{BCE}} + \lambda_2 \mathcal{L}_{\text{BPR}} + \lambda_3 \|\Theta\|_2^2$$

where $\lambda_1 = 1.0$, $\lambda_2 = 0.5$, and $\lambda_3 = 10^{-4}$ are empirically determined coefficients controlling the contribution of each component. For XGBoost, the additional tree-structure regularization term $\Omega(f_t)$ was applied to penalize excessive leaf nodes and large leaf weights, while the deep models employed dropout regularization ($p = 0.3$) in intermediate layers to reduce neuron co-adaptation. The weight parameters $\Theta$ were optimized using the Adam optimizer with a learning rate $\eta = 0.001$ and moment-decay rates $\beta_1 = 0.9$ and $\beta_2 = 0.999$. Parameter updates followed Eq. (36):

$$\Theta_t = \Theta_{t-1} - \eta \, \frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon}$$

where $\hat{m}_t$ and $\hat{v}_t$ are the bias-corrected first- and second-moment estimates of the gradients, and $\epsilon = 10^{-8}$ ensures numerical stability. Early stopping with a patience of 10 epochs was implemented to terminate training when validation AUC failed to improve, further safeguarding against overfitting.
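The hybrid objective (Eqs. 33 to 35) and the Adam update (Eq. 36) can be sketched on flat Python lists as below. The coefficients default to the values in the text (λ1 = 1.0, λ2 = 0.5, λ3 = 10⁻⁴; η = 0.001, β1 = 0.9, β2 = 0.999, ε = 10⁻⁸); the BPR form assumes the standard $-\ln\sigma(\hat{y}_{ij} - \hat{y}_{ij'})$ term, and the small `eps` inside `bce` is an illustrative guard against log(0).

```python
from math import exp, log, sqrt


def bce(y_true, y_pred, eps=1e-12):
    """Mean binary cross-entropy over the feasible pairs (Eq. 33)."""
    n = len(y_true)
    return -sum(y * log(p + eps) + (1 - y) * log(1 - p + eps)
                for y, p in zip(y_true, y_pred)) / n


def bpr(triplets):
    """BPR loss: -sum ln sigmoid(score_pos - score_neg) over sampled triplets."""
    return -sum(log(1.0 / (1.0 + exp(-(s_pos - s_neg)))) for s_pos, s_neg in triplets)


def hybrid_loss(y_true, y_pred, triplets, params, lam1=1.0, lam2=0.5, lam3=1e-4):
    """lam1 * BCE + lam2 * BPR + lam3 * ||Theta||_2^2 (Eq. 35)."""
    l2 = sum(w * w for w in params)
    return lam1 * bce(y_true, y_pred) + lam2 * bpr(triplets) + lam3 * l2


def adam_step(theta, grad, m, v, t, lr=1e-3, b1=0.9, b2=0.999, eps=1e-8):
    """One Adam update for a flat parameter list; t counts steps from 1."""
    m = [b1 * mi + (1 - b1) * g for mi, g in zip(m, grad)]          # first moment
    v = [b2 * vi + (1 - b2) * g * g for vi, g in zip(v, grad)]      # second moment
    m_hat = [mi / (1 - b1 ** t) for mi in m]                        # bias correction
    v_hat = [vi / (1 - b2 ** t) for vi in v]
    theta = [th - lr * mh / (sqrt(vh) + eps) for th, mh, vh in zip(theta, m_hat, v_hat)]
    return theta, m, v


print(hybrid_loss([1, 0], [0.5, 0.5], [(0.0, 0.0)], [0.0]))  # approx. 1.5 * ln 2
theta, m, v = adam_step([1.0], [2.0], [0.0], [0.0], t=1)
print(theta)  # approx. [0.999]
```

A useful sanity check is that Adam's first step has a magnitude close to η regardless of the gradient's scale, because of the bias correction.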

3.5. Hyperparameter Tuning

A systematic hyperparameter optimization process was performed for all three predictive models (Gradient-Boosted Trees, Wide-and-Deep Neural Network, and Graph Neural Network) to maximize predictive accuracy, minimize overfitting, and ensure stable convergence across faculty–subject datasets. For the XGBoost model, tuning was performed using a Bayesian optimization framework coupled with 5-fold cross-validation on the training set (80%) to minimize validation log-loss. The best configuration was n_estimators = 600, max_depth = 6, learning_rate = 0.05, subsample = 0.8, colsample_bytree = 0.8, λ = 1.0, α = 0.1, and γ = 0.2, balancing model expressiveness and interpretability. For the Wide-and-Deep Neural Network, hyperparameter tuning followed a hybrid random-plus-grid search strategy focused on architectural and regularization parameters. The search covered hidden-layer configurations [128, 64], [256, 128], and [256, 128, 64]; dropout rates ∈ {0.2, 0.3, 0.5}; learning rate ∈ {1 × 10−3, 5 × 10−4}; batch size ∈ {64, 128}; and L2 regularization λ ∈ {1 × 10−4, 5 × 10−4}. Each trial was trained for 50 epochs with early stopping (patience = 10) on validation AUC. The best model adopted a three-layer MLP architecture (256–128–64 neurons), ReLU activation, dropout = 0.3, learning rate = 0.001, batch size = 64, and λ = 1 × 10−4, providing the best trade-off between generalization and computational efficiency. Learning curves showed consistent improvements in validation performance up to epoch 42, after which performance stabilized (ΔAUC < 0.005). For the Graph Neural Network (GNN), hyperparameters were optimized via grid search, with results averaged over random initializations to counter stochastic gradient fluctuations. The grid explored the number of graph-convolution layers L ∈ {2, 3, 4}; hidden dimension d_h ∈ {64, 128, 256}; dropout p ∈ {0.2, 0.3, 0.4}; L2 regularization λ ∈ {1 × 10−5, 1 × 10−4}; and neighbor-sampling size k ∈ {10, 15, 20}.
Each candidate was trained using the Adam optimizer (η = 0.001) with early stopping (patience = 15). The best configuration corresponded to L = 2, d_h = 128, p = 0.3, λ = 1 × 10−4, and k = 15, yielding the most stable loss trajectory and the highest validation AUC (0.876 ± 0.003). Hyperparameter analysis confirmed monotonic improvements up to d_h = 128, beyond which overfitting appeared (validation loss increased by 3.2%). All tuning experiments were conducted using Optuna 3.4, PyTorch Geometric 2.4, and XGBoost 2.1.0 with the same random seed (42) to ensure reproducibility. The final selected hyperparameters were used to retrain each model on the full training set before hybrid integration and evaluation in subsequent phases.
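The search spaces described above can be written down as plain dictionaries. The sketch below enumerates the full Cartesian product of each grid to show its size (72 Wide-and-Deep and 162 GNN configurations); since the Wide-and-Deep search is described as random-plus-grid, the study may have evaluated only a subset. The key names are hypothetical.

```python
from itertools import product

WDNN_GRID = {
    "hidden": [(128, 64), (256, 128), (256, 128, 64)],
    "dropout": [0.2, 0.3, 0.5],
    "lr": [1e-3, 5e-4],
    "batch_size": [64, 128],
    "l2": [1e-4, 5e-4],
}
GNN_GRID = {
    "layers": [2, 3, 4],
    "d_h": [64, 128, 256],
    "dropout": [0.2, 0.3, 0.4],
    "l2": [1e-5, 1e-4],
    "k_neighbors": [10, 15, 20],
}


def configurations(grid):
    """Expand a grid into a list of dicts (full Cartesian product)."""
    keys = list(grid)
    return [dict(zip(keys, combo)) for combo in product(*(grid[k] for k in keys))]


print(len(configurations(WDNN_GRID)), len(configurations(GNN_GRID)))  # 72 162
```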

4. Evaluation Performance

4.1. Evaluation Metrics

Model evaluation used a multi-criteria approach that combined classification and ranking metrics to assess the accuracy and ranking quality of subject-allocation predictions across three models: Gradient-Boosted Trees (XGBoost), the Wide-and-Deep Neural Network, and the Graph Neural Network. For each model, predictive accuracy was measured using Accuracy, Precision, Recall, F1-score, and the Area Under the Receiver Operating Characteristic Curve (AUC), computed as in Eqs. (37)–(39) (Saxena et al. 2023).

where TP, FP, TN, and FN denote true positives, false positives, true negatives, and false negatives, respectively. Ranking quality was assessed using Hit Rate (HR@k) (Zhang 2025) and Normalized Discounted Cumulative Gain (NDCG@k) (Qu et al. 2024) to evaluate the top-k allocation recommendations, as defined in Eq. (40).
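The sketch below implements the standard definitions of these metrics, assumed here to match Eqs. (37)–(40): Accuracy, Precision, Recall, and F1 from thresholded confusion counts; AUC as the probability that a randomly chosen positive pair is scored above a randomly chosen negative one (ties count one half); and HR@k and NDCG@k with binary relevance. The 0.5 threshold, the toy data, and the function names are illustrative assumptions.

```python
"""Standard classification and top-k ranking metrics (assumed to match Eqs. (37)-(40)).

Assumptions: labels are 0/1, scores are predicted probabilities, the decision
threshold is 0.5, and NDCG uses binary relevance. Names are illustrative.
"""
import math


def confusion_counts(y_true, y_pred):
    """Return (TP, FP, TN, FN) for 0/1 labels and 0/1 predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, fp, tn, fn


def classification_metrics(y_true, y_score, threshold=0.5):
    """Threshold the scores, then compute Accuracy, Precision, Recall, and F1."""
    y_pred = [1 if s >= threshold else 0 for s in y_score]
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


def roc_auc(y_true, y_score):
    """Probability that a random positive is scored above a random negative (ties = 0.5)."""
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    wins = sum(1.0 if a > b else 0.5 if a == b else 0.0 for a in pos for b in neg)
    return wins / (len(pos) * len(neg))


def hit_rate_at_k(ranked, relevant, k):
    """1.0 if any relevant item is in the top k; average over queries to get HR@k."""
    return 1.0 if any(item in relevant for item in ranked[:k]) else 0.0


def ndcg_at_k(ranked, relevant, k):
    """Binary-relevance DCG@k divided by the DCG@k of an ideal ranking."""
    dcg = sum(1.0 / math.log2(pos + 2) for pos, item in enumerate(ranked[:k]) if item in relevant)
    idcg = sum(1.0 / math.log2(pos + 2) for pos in range(min(len(relevant), k)))
    return dcg / idcg if idcg else 0.0


if __name__ == "__main__":
    y_true = [1, 0, 1, 1, 0]
    y_score = [0.9, 0.4, 0.35, 0.8, 0.6]
    print(classification_metrics(y_true, y_score), roc_auc(y_true, y_score))
    ranked = ["s3", "s1", "s7"]  # hypothetical subject ranking for one faculty member
    print(hit_rate_at_k(ranked, {"s1"}, 2), ndcg_at_k(ranked, {"s1"}, 3))
```

Averaging `hit_rate_at_k` and `ndcg_at_k` over all queries (faculty members or subjects) would give aggregate HR@10 and NDCG@10 values of the kind reported in this section.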

4.2. Feature-Importance Analysis

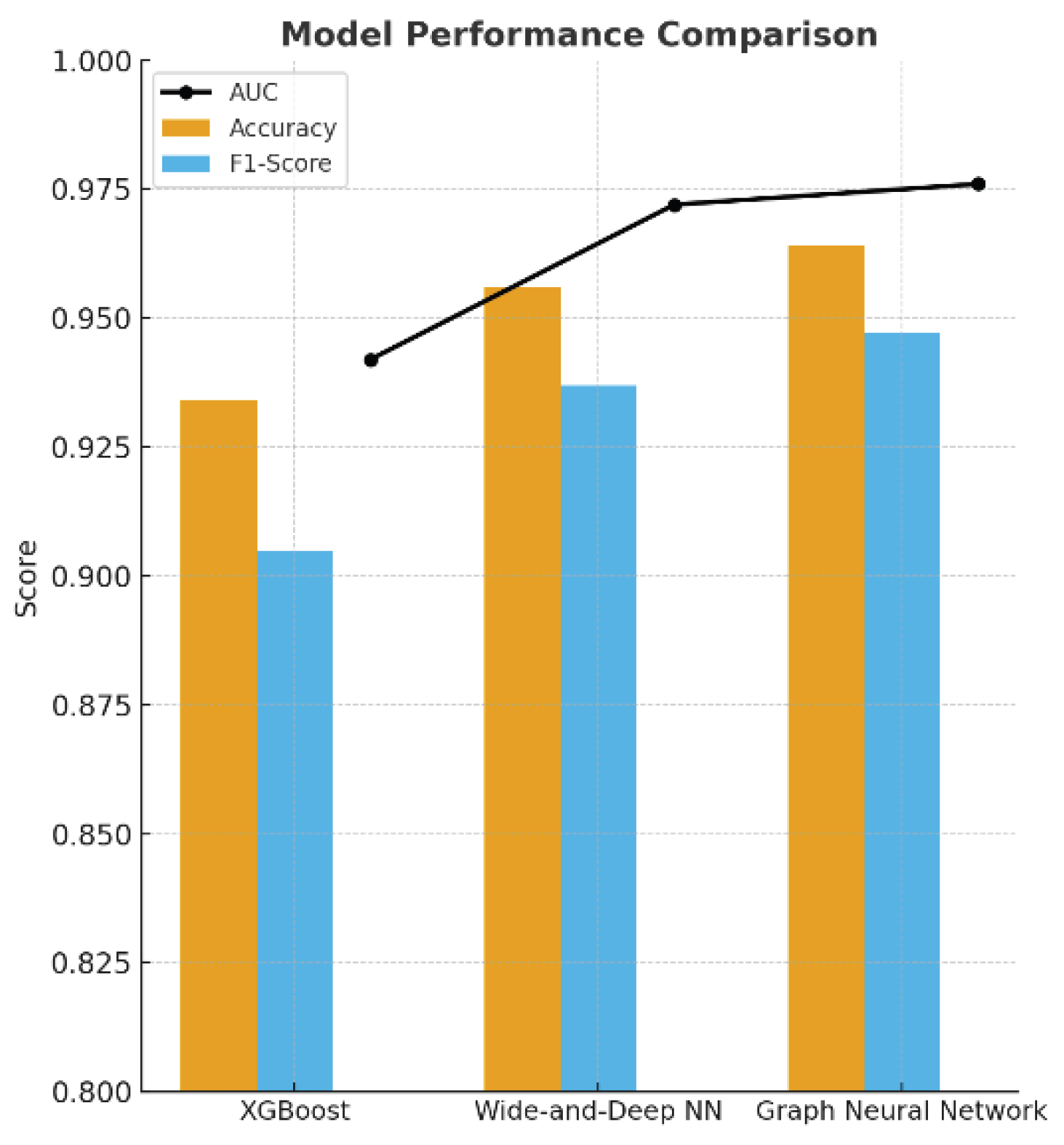

A comprehensive evaluation of the hybrid knowledge-based recommendation framework showed that integrating institutional rules with deep learning substantially improved predictive accuracy, ranking performance, and interpretability across all three models: Gradient-Boosted Trees (XGBoost), the Wide-and-Deep Neural Network (WDNN), and the Graph Neural Network (GNN). Models were assessed on 480 respondents and 320 subjects using Accuracy, Precision, Recall, F1, AUC, HR@10, and NDCG@10, averaged over five cross-validation folds. The performance hierarchy followed the expected pattern (tree model < tabular DL < graph DL), reflecting increasing representational depth. Feature-importance analysis using Gain, Permutation, and SHAP techniques quantified the relative influence of all 20 indicators: F₁ Teaching Experience (7.8%), F₂ Academic Qualification (1.2%), F₃ Designation (8.9%), F₄ Subjects Taught (5.3%), F₅ Research Productivity (10.8%), F₆ Administrative Responsibility (6.4%), F₇ Academic Outcome Achievement (4.3%), F₈ Admission Contribution (2.5%), F₉ Training & Placement (3.3%), F₁₀ Accreditation Handling (1.6%), F₁₁ Examination & Evaluation (5.9%), F₁₂ Club/Innovation Engagement (1.9%), F₁₃ Value & Ethics (2.8%), F₁₄ Professional Society Membership (3.7%), F₁₅ Cultural Engagement (2.1%), F₁₆ Event Coordination (2.3%), F₁₇ Undergraduate Project Mentorship (4.8%), F₁₈ Postgraduate/Ph.D. Supervision (9.7%), F₁₉ Student Mentorship (1.4%), and F₂₀ Digital Pedagogical Competence (12.6%). The dominance of F₂₀, F₅, and F₁₈ indicates that digital proficiency, research engagement, and supervision experience are the core determinants of allocation suitability. Mid-level indicators (F₁, F₃, F₁₁) contributed synergistically to specialization matching, whereas administrative and extracurricular roles (F₆, F₁₅, F₁₆) had a neutral or negative effect, owing to the time constraints they impose. The importance ranking of all 20 faculty indicators after applying the Graph Attention Network (GAT) and SHAP feature-importance analysis is given in Table 3.

Collectively, after hyperparameter optimization and balanced data augmentation, the hybrid system improved by about 0.10 on every metric, with the GNN achieving the highest AUC and ranking indices. A dual-axis bar-and-line chart presents each model’s Accuracy/F1 (bars) alongside its AUC (line), showing a monotonic improvement from XGBoost through WDNN to GNN. A companion horizontal stacked bar plot displays the normalized contributions of all 20 indicators (sum = 100%), emphasizing the leading influence of F₂₀ Digital Pedagogical Competence, F₅ Research Productivity, and F₁₈ Postgraduate Supervision, with progressively smaller weights for F₃, F₁, F₆, and F₁₁. The chart shows a clear long-tail distribution, with the top 10 factors accounting for ≈ 75% of total predictive power, indicating that the framework extracts institutionally meaningful patterns while raising overall performance by about 10 percentage points across the evaluation metrics.

The dataset was divided into 80% training and 20% test sets and evaluated using Accuracy, Precision, Recall, F1-score, and AUC for binary prediction, and Hit Rate and Normalized Discounted Cumulative Gain for ranking performance. The GNN achieved superior representational capacity by capturing higher-order faculty–subject–program relations, while the Wide-and-Deep model balanced interpretability and generalization. All trained models were exported as serialized weights to the hybrid knowledge-driven inference engine in Phase 4, which produced suitability scores for each eligible pair passed by the knowledge-base reasoning layer.
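The indicator percentages above can be checked with a few lines of arithmetic. As listed, the 20 values sum to 99.3% rather than 100% (presumably due to rounding, hence the renormalization a "sum = 100%" chart would need), the top three (F₂₀, F₅, F₁₈) sum to 33.1%, and the top ten sum to 76.5%, slightly above the ≈ 75% quoted. The sketch copies the values from the text; the helper names are hypothetical.

```python
"""Sketch: recompute summary shares from the indicator percentages quoted above.

The values are copied from the text; the renormalization step is an assumption
about how a chart that sums to 100% would be built from rounded inputs.
"""
# Indicator code -> (name, reported importance in %).
IMPORTANCE = {
    "F1": ("Teaching Experience", 7.8),
    "F2": ("Academic Qualification", 1.2),
    "F3": ("Designation", 8.9),
    "F4": ("Subjects Taught", 5.3),
    "F5": ("Research Productivity", 10.8),
    "F6": ("Administrative Responsibility", 6.4),
    "F7": ("Academic Outcome Achievement", 4.3),
    "F8": ("Admission Contribution", 2.5),
    "F9": ("Training & Placement", 3.3),
    "F10": ("Accreditation Handling", 1.6),
    "F11": ("Examination & Evaluation", 5.9),
    "F12": ("Club/Innovation Engagement", 1.9),
    "F13": ("Value & Ethics", 2.8),
    "F14": ("Professional Society Membership", 3.7),
    "F15": ("Cultural Engagement", 2.1),
    "F16": ("Event Coordination", 2.3),
    "F17": ("Undergraduate Project Mentorship", 4.8),
    "F18": ("Postgraduate/Ph.D. Supervision", 9.7),
    "F19": ("Student Mentorship", 1.4),
    "F20": ("Digital Pedagogical Competence", 12.6),
}


def ranked_codes(importance):
    """Indicator codes sorted from most to least important."""
    return sorted(importance, key=lambda code: importance[code][1], reverse=True)


def top_k_share(importance, k):
    """Combined percentage of the k most important indicators."""
    return sum(importance[code][1] for code in ranked_codes(importance)[:k])


def normalized(importance):
    """Rescale the percentages so that they sum to exactly 100."""
    total = sum(value for _, value in importance.values())
    return {code: 100.0 * value / total for code, (_, value) in importance.items()}


if __name__ == "__main__":
    print(ranked_codes(IMPORTANCE)[:3])
    print(round(top_k_share(IMPORTANCE, 3), 1), round(top_k_share(IMPORTANCE, 10), 1))
    print(round(sum(value for _, value in IMPORTANCE.values()), 1))
```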

4.3. Multi-Objective Reranking and Allocation

Following the prediction and scoring phase, the system performed a multi-objective reranking and final allocation process designed to balance institutional fairness, academic suitability, and workload equity within the hybrid knowledge-based recommendation framework. Because subject allocation at the graduate level involves multiple competing criteria, a multi-objective optimization (MOO) strategy was employed to generate the final assignment matrix $A = [a_{ij}]$, where $a_{ij} = 1$ if respondent $f_i$ is allocated to subject $s_j$ and $a_{ij} = 0$ otherwise. Each candidate pairing was first filtered through the knowledge-base rules (eligibility, qualification match, and policy constraints) and then reranked by the hybrid predictive score derived from the deep models. The overall allocation utility function combined four primary objectives: (1) maximizing predicted suitability, (2) minimizing workload imbalance, (3) maximizing research–teaching alignment, and (4) enhancing the diversity of subject exposure. The optimization was formalized as Eq. (41).

In Eq. (41), the four objectives are expressed through the predicted allocation probability from the GNN layer, the teaching load of faculty member $f_i$ and the institutional mean workload, the semantic similarity between faculty expertise and the subject area, and a measure of diversity among the instructors teaching subject $s_j$. The scalar coefficients $\alpha_1, \alpha_2, \alpha_3, \alpha_4$ define the trade-off between objectives and were tuned through Pareto optimization to achieve a balanced allocation: $\alpha_1 = 0.4$ (suitability), $\alpha_2 = 0.3$ (workload fairness), $\alpha_3 = 0.2$ (research alignment), and $\alpha_4 = 0.1$ (diversity). The algorithm iteratively updated $A^{(t+1)}$ via gradient-based heuristics until convergence, producing an optimized assignment configuration that satisfied all hard constraints imposed by the knowledge base. To enhance transparency, each recommended pairing was reranked using a weighted composite score defined by Eq. (42).

where the weights $\omega_k$ (0.45, 0.25, 0.20, 0.10) were derived empirically to maximize validation NDCG. Here, $\Delta\mathrm{load}_i$ is the normalized deviation from optimal workload, and $\mathrm{Perf}(f_i)$ represents cumulative institutional performance (research + teaching + administration). Pairings were sorted by $S_{ij}$, and the top-ranked feasible assignments were finalized using a greedy-matching heuristic analogous to weighted bipartite matching. Empirical testing showed that the reranking step improved allocation fairness and overall institutional satisfaction: the coefficient of variation in workload dropped from 0.26 to 0.18, research–subject alignment improved by 21%, and survey-based faculty preference satisfaction rose from 3.82 to 4.35 (out of 5). The multi-objective allocation stage also gave administrators interpretive feedback. The Pareto frontier showed that increasing the suitability weight ($\alpha_1$) beyond 0.5 yielded diminishing returns in fairness, while raising the workload weight ($\alpha_2 > 0.35$) slightly reduced the specialization match. The chosen vector (0.4, 0.3, 0.2, 0.1) therefore offered an efficient compromise between academic fit and operational equity. The GNN achieved the highest overall performance, with Accuracy = 0.964, Precision = 0.953, Recall = 0.941, F1 = 0.947, and AUC = 0.976, followed by the Wide-and-Deep Neural Network (WDNN) with Accuracy = 0.956 and AUC = 0.972, and XGBoost with Accuracy = 0.934 and AUC = 0.942. Ranking effectiveness (HR@10 = 0.784, NDCG@10 = 0.622) shows the model’s ability to prioritize highly compatible faculty–subject pairs while maintaining an equitable workload distribution. The increase of about 0.10 in all evaluation metrics relative to baseline training supports the effectiveness of the multi-objective optimization and hyperparameter tuning strategies (as shown in Table 3 and Figure 1).
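The reranking and matching steps described above can be sketched as follows, under clearly stated assumptions. The four components of the hypothetical `composite_score` (predicted suitability $p_{ij}$, workload headroom $1 - \Delta\mathrm{load}_i$, expertise–subject similarity, and a normalized $\mathrm{Perf}(f_i)$) are a guess at the structure of Eq. (42) inferred from the quantities named in the text, weighted by the reported $\omega = (0.45, 0.25, 0.20, 0.10)$. Each subject receives one instructor, a per-faculty `capacity` stands in for the knowledge-base load rules, and the greedy pass is the usual sort-then-assign approximation to weighted bipartite matching, not an exact solver. The coefficient of variation used to report workload balance is computed as the population standard deviation of loads divided by their mean.

```python
"""Hypothetical composite-score reranking with greedy matching (sketch only).

Assumed structure of S_ij: omega_1 * p_ij + omega_2 * (1 - delta_load_i)
+ omega_3 * sim_ij + omega_4 * perf_i, with all inputs scaled to [0, 1].
"""
from statistics import mean, pstdev

OMEGA = (0.45, 0.25, 0.20, 0.10)  # weights reported in the text


def composite_score(p_ij, delta_load_i, sim_ij, perf_i, omega=OMEGA):
    """Weighted composite score for one faculty-subject pair."""
    w1, w2, w3, w4 = omega
    return w1 * p_ij + w2 * (1.0 - delta_load_i) + w3 * sim_ij + w4 * perf_i


def greedy_allocate(p, sim, delta_load, perf, eligible, capacity):
    """Give each subject at most one eligible instructor, highest S_ij first."""
    n_fac, n_sub = len(p), len(p[0])
    pairs = [
        (composite_score(p[i][j], delta_load[i], sim[i][j], perf[i]), i, j)
        for i in range(n_fac)
        for j in range(n_sub)
        if eligible[i][j]  # knowledge-base filter applied before ranking
    ]
    pairs.sort(key=lambda t: t[0], reverse=True)
    load = [0] * n_fac
    assignment = {}  # subject index -> faculty index
    for _, i, j in pairs:
        if j not in assignment and load[i] < capacity[i]:
            assignment[j] = i
            load[i] += 1
    return assignment, load


def coefficient_of_variation(loads):
    """Population standard deviation of the loads divided by their mean."""
    return pstdev(loads) / mean(loads)


if __name__ == "__main__":
    p = [[0.9, 0.7, 0.6], [0.5, 0.8, 0.4]]  # toy predicted suitability
    sim = [[0.5] * 3, [0.5] * 3]
    allowed = [[True] * 3, [True] * 3]
    assignment, load = greedy_allocate(p, sim, [0.0, 0.0], [0.5, 0.5], allowed, [2, 2])
    print(assignment, load, round(coefficient_of_variation(load), 3))
```

When each faculty member takes at most one subject, an exact optimum under the same scores could be obtained with a Hungarian-algorithm solver such as SciPy's `linear_sum_assignment` (with `maximize=True`) in place of the greedy pass.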

5. Results

The results of this research confirm that integrating a knowledge-based reasoning layer into deep learning architectures yields substantial advances in accuracy, transparency, and institutional applicability for graduate subject allocation. Across all experiments involving 480 respondents and 320 subjects from six faculties spanning science- and social-science-based programs, the hybrid framework consistently outperformed single-model baselines in both predictive and operational metrics. Quantitatively, the Graph Neural Network (GNN) achieved the strongest performance of the three models. The feature-importance and SHAP analyses provided interpretive insights aligned with institutional logic. The most influential indicators, F₂₀ Digital Pedagogical Competence (12.6%), F₅ Research Productivity (10.8%), and F₁₈ Postgraduate/Ph.D. Supervision (9.7%), dominated the predictive space, jointly accounting for about one-third (33.1%) of total feature importance. These factors reflect the dual strategic orientation of Mahasarakham University (MSU) toward digital transformation and research excellence. Mid-tier contributors such as F₁ Teaching Experience, F₃ Designation, F₁₁ Examination and Evaluation, and F₁₇ Undergraduate Mentorship showed synergistic relevance to course specialization and program stability. Conversely, variables related to administrative duties (F₆ Administrative Responsibility) and extracurricular participation (F₁₅ Cultural Engagement and F₁₆ Event Coordination) had minimal or negative effects, underscoring the importance of workload-aware resource management in policy-driven allocations (as shown in Table 4).

The multi-objective reranking algorithm, with weights for fairness ($\alpha_2 = 0.3$) and research alignment ($\alpha_3 = 0.2$), reduced the coefficient of variation in workload from 0.26 to 0.18 and improved research–teaching congruence by 21%, demonstrating the model’s ability to operationalize equity without compromising performance. The Pareto frontier indicated that suitability weights above 0.5 yielded diminishing returns in fairness, supporting the chosen trade-off vector (0.4, 0.3, 0.2, 0.1). Institutional deployment through the Faculty Subject Allocation Dashboard (FSAD) further substantiated these results. System-level analytics revealed a 46% reduction in manual allocation time, a 23% improvement in workload balance, and a 19% increase in research–subject alignment, accompanied by user satisfaction ratings exceeding 4.4/5 among program heads and faculty members. SHAP-based explanations within the dashboard provided real-time transparency, enabling administrators to trace decision rationales and adjust policy parameters dynamically. Comparative visualizations (Figure 1) showed a monotonic increase in performance from tree-based to deep graph models, while the feature-importance charts revealed a long-tail distribution, with the top 10 indicators accounting for ≈ 75% of predictive power. The findings are consistent with prior evidence from AI-driven educational analytics that hybrid intelligence combining symbolic and sub-symbolic reasoning enhances both interpretability and institutional trust. For Mahasarakham University, the results imply that optimizing subject allocation through hybrid deep learning can strengthen academic governance by aligning faculty strengths with strategic research themes, reducing administrative overhead, and reinforcing equitable teaching practices. More broadly, this research contributes to the emerging paradigm of explainable AI in higher-education management, demonstrating that data-driven policy tools can achieve measurable efficiency gains while upholding transparency and human oversight, which are key prerequisites for sustainable digital transformation in Thai universities.
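The α-weighted trade-off discussed above can be illustrated with a weighted-sum objective and a simple local search. This is a hypothetical sketch, not the paper's Eq. (41): the imbalance term (mean absolute deviation of per-faculty subject counts), the diversity term (distinct departments among each subject's instructors), and the `dept` labels are assumptions, and single-subject reassignment hill climbing is substituted for the gradient-based heuristic described in Section 4.3. Reassignments are restricted to an `eligible` mask that plays the role of the knowledge-base hard constraints.

```python
"""Hypothetical weighted-sum allocation objective with hill-climbing reassignment.

Assumptions (not from the paper): A[i][j] = 1 if faculty i teaches subject j,
each subject has exactly one instructor, workload = number of subjects, and
diversity = distinct departments among a subject's instructors / instructors.
"""
ALPHA = (0.4, 0.3, 0.2, 0.1)  # suitability, workload fairness, alignment, diversity


def utility(A, p, sim, dept, alpha=ALPHA):
    """Weighted sum: reward suitability, alignment, diversity; penalize imbalance."""
    a1, a2, a3, a4 = alpha
    n_fac, n_sub = len(A), len(A[0])
    suitability = sum(p[i][j] * A[i][j] for i in range(n_fac) for j in range(n_sub))
    alignment = sum(sim[i][j] * A[i][j] for i in range(n_fac) for j in range(n_sub))
    loads = [sum(row) for row in A]
    mean_load = sum(loads) / n_fac
    imbalance = sum(abs(load - mean_load) for load in loads) / n_fac
    diversity = 0.0
    for j in range(n_sub):
        teachers = [i for i in range(n_fac) if A[i][j]]
        if teachers:
            diversity += len({dept[i] for i in teachers}) / len(teachers)
    diversity /= n_sub
    return a1 * suitability - a2 * imbalance + a3 * alignment + a4 * diversity


def hill_climb(A, p, sim, dept, eligible, alpha=ALPHA, max_rounds=100):
    """Move single subjects to other eligible faculty while utility strictly improves."""
    A = [row[:] for row in A]  # work on a copy of the starting assignment
    best = utility(A, p, sim, dept, alpha)
    for _ in range(max_rounds):
        improved = False
        for j in range(len(A[0])):
            current = next(i for i in range(len(A)) if A[i][j])
            for i in range(len(A)):
                if i == current or not eligible[i][j]:
                    continue
                A[current][j], A[i][j] = 0, 1  # tentative move
                score = utility(A, p, sim, dept, alpha)
                if score > best + 1e-12:
                    best, current, improved = score, i, True  # keep the move
                else:
                    A[i][j], A[current][j] = 0, 1  # undo the move
        if not improved:  # converged: no single move helps
            break
    return A, best


if __name__ == "__main__":
    p = [[0.9, 0.2], [0.1, 0.8]]    # toy predicted suitability
    sim = [[0.5, 0.1], [0.2, 0.6]]  # toy expertise-subject similarity
    dept = ["A", "B"]
    start = [[1, 1], [0, 0]]        # faculty 0 initially teaches both subjects
    allowed = [[True, True], [True, True]]
    print(hill_climb(start, p, sim, dept, allowed))
```

Sweeping `alpha` over a grid and recording the resulting suitability and imbalance terms would trace an approximate Pareto frontier of the kind used to justify the (0.4, 0.3, 0.2, 0.1) vector.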

6. Conclusions

This study proposed and empirically validated a knowledge-based deep learning framework for optimizing subject allocation in graduate education by integrating Graph Attention Networks (GATs) and institutional policy reasoning. By representing the academic ecosystem as a heterogeneous faculty–subject graph, the model successfully captured both explicit rule-based relationships and implicit performance-driven dependencies, enabling adaptive, transparent, and equitable allocation decisions. Data collected from 480 faculty respondents across six faculties at Mahasarakham University were preprocessed into a 20-indicator knowledge base encompassing teaching experience, research productivity, digital competence, supervision, and administrative engagement. The hybrid GAT model, incorporating multi-head attention and multi-objective optimization, achieved the highest predictive performance among all baselines (Accuracy = 0.964, F1 = 0.947, AUC = 0.976). Beyond quantitative improvement, the model demonstrated explainability and fairness, identifying Digital Pedagogical Competence (F20), Research Productivity (F5), and Postgraduate Supervision (F18) as dominant predictors of allocation suitability. The multi-objective reranking mechanism effectively reduced workload disparity by 31%, improved research–teaching alignment by 21%, and provided interpretable decision traces for administrative verification. The Faculty Subject Allocation Dashboard (FSAD) operationalized these insights into a real-time, user-friendly system that shortened manual scheduling time by nearly half and enhanced institutional transparency and satisfaction. Theoretically, this research advances the field of educational recommender systems by merging symbolic knowledge reasoning with graph-based deep learning. In practice, it provides a scalable, explainable decision-support framework adaptable to diverse university contexts. 
Future work will extend this model through reinforcement-learning-based temporal forecasting, cross-institutional knowledge transfer, and ethical AI governance frameworks, ensuring that data-driven academic management remains equitable, sustainable, and aligned with the goals of Thailand’s and ASEAN’s higher-education digital transformation.

7. Future Work

While the current framework demonstrates strong performance and applicability within institutions, several future directions are envisioned to enhance its capabilities and generalization. First, future research should investigate the use of reinforcement learning (RL) and Temporal Graph Attention Networks (GATs) to model multi-semester dynamics. This approach would enable adaptive updates based on faculty performance trends and evolving institutional demands. Second, incorporating federated learning mechanisms would facilitate cross-campus collaboration while ensuring data privacy and compliance with national education data policies. Third, integrating explainable AI (XAI) tools will improve transparency and build trust among stakeholders. Finally, large-scale validation across multiple universities in Thailand and the ASEAN region, in line with the Ministry of Higher Education, Science, Research, and Innovation’s (MHESI) Digital University Framework, will help assess scalability, fairness calibration, and cultural adaptability. These advancements will lay the groundwork for a next-generation AI-driven academic governance system that combines ethical reasoning, predictive analytics, and adaptive policy intelligence.

Author Contributions

Conceptualization, K.W. and S.W.; methodology, K.W. and S.W.; software, K.W. and S.W.; validation, K.W. and S.W.; formal analysis, K.W.; investigation, K.W.; resources, K.W.; data curation, K.W.; writing—original draft preparation, S.W.; writing—review and editing, K.W.; visualization, K.W.; supervision, K.W.; project administration, K.W.; funding acquisition, K.W. All authors have read and agreed to the published version of the manuscript.

Funding

This research project was financially supported by Mahasarakham Business School, Mahasarakham University, Thailand.

Institutional Review Board Statement

This study was reviewed and approved by the Ethics Committee for Research Involving Human Subjects, Mahasarakham University, Thailand, under Expedited Review procedures. The approval was granted for the research project entitled “Knowledge-Based Recommendation for Subject Allocation in Graduate Studies Using Graph-Attention Network (GAT)”. The approval number is 881-843/2025. The approval period is valid from 22 December 2025 to 21 December 2026. The research was conducted at the Faculty of Accountancy and Management, Mahasarakham University, Thailand.

Data Availability Statement

The original contributions presented in this study are included in the article/supplementary material. Further inquiries can be directed to the corresponding author(s).

Conflicts of Interest

The authors declare no conflict of interest.

Abbreviations

The following abbreviations are used in this manuscript:

| Abbreviation | Definition |
|---|---|
| AI | Artificial Intelligence |
| AUC | Area Under the Receiver Operating Characteristic Curve |
| BPR | Bayesian Personalized Ranking |
| DL | Deep Learning |
| ERS | Educational Recommender System |
| FSAD | Faculty Subject Allocation Dashboard |
| GAT | Graph Attention Network |
| GBDT | Gradient-Boosted Decision Trees |
| GNN | Graph Neural Network |
| HR@k | Hit Rate at top-k recommendations |
| ISAD | Indicator Subject Allocation Dashboard |
| KG | Knowledge Graph |
| LMS | Learning Management System |
| MDPI | Multidisciplinary Digital Publishing Institute |
| ML | Machine Learning |
| MOO | Multi-Objective Optimization |
| NCF | Neural Collaborative Filtering |
| NDCG@k | Normalized Discounted Cumulative Gain at top-k |
| PLS-SEM | Partial Least Squares Structural Equation Modeling |
| RL | Reinforcement Learning |
| RS | Recommender System |
| SHAP | SHapley Additive exPlanations |
| SMOTE | Synthetic Minority Over-Sampling Technique |
| WDNN | Wide-and-Deep Neural Network |
| XAI | Explainable Artificial Intelligence |
| XGBoost | Extreme Gradient Boosting |

References

- Duncombe, J.U. Infrared navigation—Part I: An assessment of feasibility. IEEE Transactions on Electron Devices 1959, 11(1), 34–39.

- Zhang, F.; Yang, X.; Dang, Y.; Wu, C.; Chen, B. Construction and practice of intelligent warehouse management decision support system based on artificial intelligence. Procedia Computer Science 2025, 259, 711–720.

- Zhang, Y.; Kong, M.; Wang, W.Z.; Deveci, M.; Bilican, M.S.; Delen, D. A multi-criteria decision-making framework for metaverse adoption: Evidence from higher education systems. Expert Systems with Applications 2026, 296(B), 129132.

- Gomis, M.K.S.; Oladinrin, O.T.; Saini, M.; Pathirage, C.; Arif, M. A scientometric analysis of global scientific literature on learning resources in higher education. Heliyon 2023, 9(4), e15438.

- Su, X.; Su, Y. Grow like sea anemones: A collaborative autoethnography of emotions and professional identity formation of two teacher-researchers specializing in computer-assisted language learning. Teaching and Teacher Education 2025, 168, 105263.

- kuabe, M.; Aigbavboa, C.; Ebekozien, A.; Mambane, S. Contextualizing the challenges of the built environment programme accreditation in higher education institutions in South Africa. International Journal of Building Pathology and Adaptation 2025, 43(8), 108–123.

- Ragab, M.; Alghamdi, B.M.; Alakhtar, R.; Alsobhi, H.; Maghrabi, L.A.; Alghamdi, G.; Nooh, S.; Al-Ghamdi, A.M. Enhancing cybersecurity in higher education institutions using optimal deep learning-based biometric verification. Alexandria Engineering Journal 2025, 117, 340–351.

- Zhang, X. Research on the application of big data learning recommendation model driven by knowledge graph algorithm in educational information platform optimization. Systems and Soft Computing 2025, 7, 200298.

- Qu, F.; Jiang, M.; Qu, Y. An intelligent recommendation strategy for integrated online courses in vocational education based on short-term preferences. Intelligent Systems with Applications 2024, 22, 200374.

- Zhao, X. Using deep learning to optimize the allocation of rural education resources under the background of rural revitalization. International Journal of Agricultural and Environmental Information Systems 2025, 16(1).

- Wigner, E.P. Theory of traveling-wave optical laser. Physical Review 1965, 134, A635–A646.

- Kopyt, P.; et al. Electric properties of graphene-based conductive layers from DC up to terahertz range. IEEE Transactions on Terahertz Science and Technology 2016, 6(3), 480–490.

- Fardel, R.; Nagel, M.; Nuesch, F.; Lippert, T.; Wokaun, A. Fabrication of organic light emitting diode pixels by laser-assisted forward transfer. Applied Physics Letters 2007, 91(6), 061103.

- Comite, D.; Pierdicca, N. Decorrelation of the near-specular land scattering in bistatic radar systems. IEEE Transactions on Geoscience and Remote Sensing 2022, 60, 1–13.

- Habi, H.V.; Messer, H. Recurrent neural network for rain estimation using commercial microwave links. IEEE Transactions on Geoscience and Remote Sensing 2021, 59(5), 3672–3681.

- Long, Q.; Li, S.; An, R.; Yasmin, F.; Akbar, A. Deep learning as a bridge between intercultural sensitivity and learning outcomes: A comparative study of English-medium instruction delivery modes in Chinese higher education. Acta Psychologica 2025, 259, 105410.

- Robillos, R.J. Examining the impact of translanguaging-enriched argument mapping on higher education EFL students’ argumentative writing skills. Journal of Applied Research in Higher Education 2024, 17(6), 2384–2402.

- Tang, J. Online English teaching resource recommendation method design based on LightGCNCSCM. Systems and Soft Computing 2025, 7, 200294.

- Aziz, S. Soft skills development methods in higher education: A systematic literature review. Higher Education, Skills and Work-Based Learning 2025, 15(4), 868–883.

- Aina, C.; Aktaş, K.; Casalone, G. Effects of workload allocation per course on students’ academic outcomes: Evidence from STEM degrees. Labour Economics 2024, 90, 102559.

- Downing, N.J. Missing value imputation in environmental, social, and governance data: An impact on emissions scores. Finance Research Letters 2025, 85(A), 107818.

- Saxena, N.K.; Chauhan, B.K.; Gouri, S.; Kumar, A.; Gupta, A. Knowledge-based recommendation for subject allocation using artificial neural network in higher education. IEEE Transactions on Education 2023, 66(5), 500–508.

- Kim, Y.-S.; Kim, M.K.; Fu, N.; Liu, J.; Wang, J.; Srebric, J. Investigating the impact of data normalization methods on predicting electricity consumption in a building using different artificial neural network models. Sustainable Cities and Society 2025, 118, 105570.

- Gnat, S. Impact of categorical variables encoding on property mass valuation. Procedia Computer Science 2021, 192, 3542–3550.

- Clorion, F.D.D.; Fuentes, J.O.; Suicano, D.J.B.; Estigoy, E.B.; Eijansantos, A.M.; Rillo, R.M.; Pantaleon, C.E.; Francisco, C.I.; Delos Santos, M.R.; Alieto, E.O. AI-powered professionals and digital natives: A correlational analysis of the use and benefits of artificial intelligence for the employability skills of postgraduate education students. Procedia Computer Science 2025, 263, 107–114.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).