Submitted:

28 February 2026

Posted:

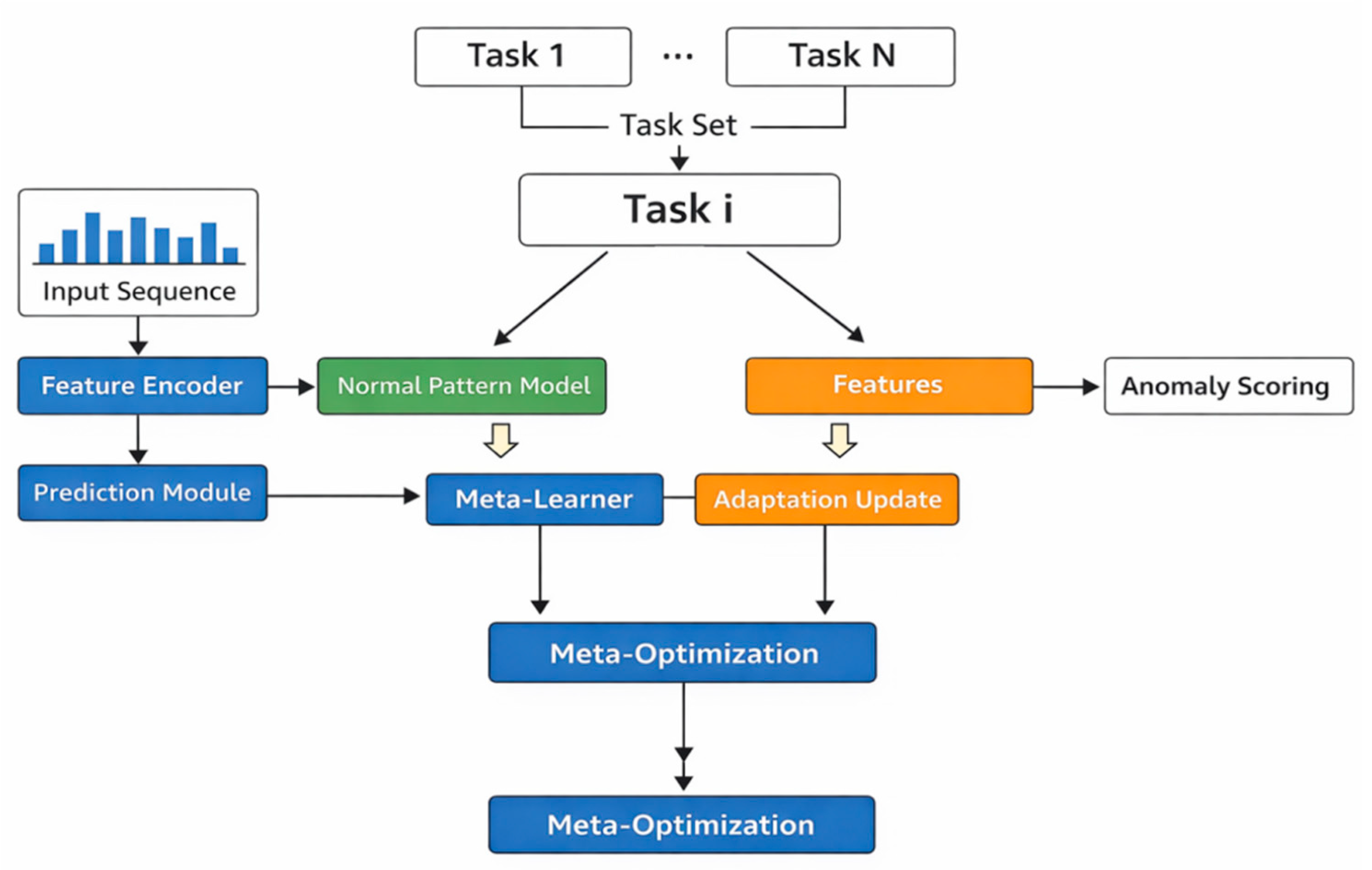

02 March 2026

Abstract

Keywords:

1. Introduction

2. Methodology Foundation

3. Method

4. Implementation

Dataset

5. Evaluation

5.1. Evaluation Metrics

5.2. Experimental Results

6. Conclusions

References

[1] V. Ramamoorthi, “Machine learning models for anomaly detection in microservices,” Quarterly Journal of Emerging Technologies and Innovations, vol. 5, no. 1, pp. 41-56, 2020.

[2] J. Nobre, E. J. S. Pires and A. Reis, “Anomaly detection in microservice-based systems,” Applied Sciences, vol. 13, no. 13, p. 7891, 2023.

[3] P. P. Naikade, “Automated anomaly detection and localization system for a microservices based cloud system,” Ph.D. dissertation, The University of Western Ontario, Canada, 2020.

[4] Q. Du, T. Xie and Y. He, “Anomaly detection and diagnosis for container-based microservices with performance monitoring,” in Proceedings of the International Conference on Algorithms and Architectures for Parallel Processing, pp. 560-572, 2018.

[5] A. Hrusto, E. Engström and P. Runeson, “Optimization of anomaly detection in a microservice system through continuous feedback from development,” in Proceedings of the 10th IEEE/ACM International Workshop on Software Engineering for Systems-of-Systems and Software Ecosystems, pp. 13-20, 2022.

[6] Z. Xie, C. Pei, W. Li et al., “From point-wise to group-wise: A fast and accurate microservice trace anomaly detection approach,” in Proceedings of the 31st ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering, pp. 1739-1749, 2023.

[7] M. Khanahmadi, A. Shameli-Sendi, M. Jabbarifar et al., “Detection of microservice-based software anomalies based on OpenTracing in cloud,” Software: Practice and Experience, vol. 53, no. 8, pp. 1681-1699, 2023.

[8] R. Ying, Q. Liu, Y. Wang and Y. Xiao, “AI-based causal reasoning over knowledge graphs for data-driven and intervention-oriented enterprise performance analysis,” 2025.

[9] X. Zhang, Q. Wang and X. Wang, “Joint cross-modal representation learning of ECG waveforms and clinical reports for diagnostic classification,” Transactions on Computational and Scientific Methods, vol. 6, no. 2, 2026.

[10] X. Yang, Y. Wang, Y. Li and S. Sun, “Semantics-aware denoising: A PLM-guided sample reweighting strategy for robust recommendation,” arXiv preprint arXiv:2602.15359, 2026.

[11] J. Yang, S. Sun, Y. Wang, Y. Wang, X. Yang and C. Zhang, “Semantic alignment and output constrained generation for reliable LLM-based classification,” 2026.

[12] F. Wang, Y. Ma, T. Guan, Y. Wang and J. Chen, “Autonomous learning through self-driven exploration and knowledge structuring for open-world intelligent agents,” 2026.

[13] Y. Ou, S. Huang, F. Wang, K. Zhou and Y. Shu, “Adaptive anomaly detection for non-stationary time-series: A continual learning framework with dynamic distribution monitoring,” 2025.

[14] J. Li, Q. Gan, R. Wu, C. Chen, R. Fang and J. Lai, “Causal representation learning for robust and interpretable audit risk identification in financial systems,” 2025.

[15] X. T. Li, X. P. Zhang, D. P. Mao and J. H. Sun, “Adaptive robust control over high-performance VCM-FSM,” Optics and Precision Engineering, vol. 25, pp. 2428-2436, 2017.

[16] R. Liu, L. Yang, R. Zhang and S. Wang, “Generative modeling of human-computer interfaces with diffusion processes and conditional control,” arXiv preprint arXiv:2601.06823, 2026.

[17] K. Cao, Y. Zhao, H. Chen, X. Liang, Y. Zheng and S. Huang, “Multi-hop relational modeling for credit fraud detection via graph neural networks,” 2025.

[18] C. Hu, Z. Cheng, D. Wu, Y. Wang, F. Liu and Z. Qiu, “Structural generalization for microservice routing using graph neural networks,” in Proceedings of the 2025 3rd International Conference on Artificial Intelligence and Automation Control (AIAC), pp. 278-282, 2025.

[19] X. Hu, Y. Kang, G. Yao, T. Kang, M. Wang and H. Liu, “Dynamic prompt fusion for multi-task and cross-domain adaptation in LLMs,” in Proceedings of the 2025 10th International Conference on Computer and Information Processing Technology (ISCIPT), pp. 483-487, 2025.

[20] X. Song, Y. Liu, Y. Luan, J. Guo and X. Guo, “Controllable abstraction in summary generation for large language models via prompt engineering,” arXiv preprint arXiv:2510.15436, 2025.

[21] S. Pan and D. Wu, “Trustworthy summarization via uncertainty quantification and risk awareness in large language models,” in Proceedings of the 2025 6th International Conference on Computer Vision and Data Mining (ICCVDM), pp. 523-527, 2025.

[22] N. Lyu, J. Jiang, L. Chang, C. Shao, F. Chen and C. Zhang, “Improving pattern recognition of scheduling anomalies through structure-aware and semantically-enhanced graphs,” arXiv preprint arXiv:2512.18673, 2025.

[23] B. Chen, “FlashServe: Cost-efficient serverless inference scheduling for large language models via tiered memory management and predictive autoscaling,” 2025.

[24] J. Chen, F. Liu, J. Jiang et al., “TraceGra: A trace-based anomaly detection for microservice using graph deep learning,” Computer Communications, vol. 204, pp. 109-117, 2023.

[25] M. Panahandeh, A. Hamou-Lhadj, M. Hamdaqa et al., “ServiceAnomaly: An anomaly detection approach in microservices using distributed traces and profiling metrics,” Journal of Systems and Software, vol. 209, p. 111917, 2024.

[26] Z. Zhang, J. Wang, B. Li et al., “ReconRCA: Root cause analysis in microservices with incomplete metrics,” in Proceedings of the 2025 IEEE International Conference on Web Services (ICWS), pp. 116-126, 2025.

[27] J. Tian, M. Li, L. Chen et al., “iADCPS: Time series anomaly detection for evolving cyber-physical systems via incremental meta-learning,” arXiv preprint arXiv:2504.04374, 2025.

[28] Q. Liu, S. Lee and J. Paparrizos, “EasyAD: A demonstration of automated solutions for time-series anomaly detection,” Proceedings of the VLDB Endowment, vol. 18, no. 12, pp. 5431-5434, 2025.

| Method | Accuracy | Precision | Recall | F1-Score |
| --- | --- | --- | --- | --- |
| TraceGra [24] | 0.7321 | 0.7214 | 0.7098 | 0.7155 |
| ServiceAnomaly [25] | 0.7546 | 0.7423 | 0.7351 | 0.7387 |
| ReconRCA [26] | 0.7684 | 0.7565 | 0.7482 | 0.7523 |
| iADCPS [27] | 0.7819 | 0.7701 | 0.7627 | 0.7663 |
| EasyAD [28] | 0.7957 | 0.7844 | 0.7736 | 0.7789 |
| Ours | 0.8342 | 0.8217 | 0.8153 | 0.8185 |
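
The metric definitions are not included in this extract, so the following is only a minimal sketch that assumes the F1-Score column uses the standard definition, the harmonic mean of precision and recall. It recomputes F1 from the precision and recall values in the results table and compares it with the reported figure. The names `REPORTED`, `f1_from`, and `max_f1_gap` are hypothetical helpers introduced for illustration; they are not part of the authors' implementation. If the reported scores are macro-averaged over classes, small deviations from this recomputation would be expected.

```python
# Reported (precision, recall, F1) triples copied from the results table.
REPORTED = {
    "TraceGra": (0.7214, 0.7098, 0.7155),
    "ServiceAnomaly": (0.7423, 0.7351, 0.7387),
    "ReconRCA": (0.7565, 0.7482, 0.7523),
    "iADCPS": (0.7701, 0.7627, 0.7663),
    "EasyAD": (0.7844, 0.7736, 0.7789),
    "Ours": (0.8217, 0.8153, 0.8185),
}


def f1_from(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (assumed standard F1)."""
    if precision + recall == 0:
        return 0.0  # convention: F1 is 0 when both inputs are 0
    return 2 * precision * recall / (precision + recall)


def max_f1_gap(rows=REPORTED) -> float:
    """Largest absolute gap between reported and recomputed F1."""
    return max(abs(f1_from(p, r) - f1) for p, r, f1 in rows.values())


if __name__ == "__main__":
    for name, (p, r, f1) in REPORTED.items():
        print(f"{name:15s} reported={f1:.4f} recomputed={f1_from(p, r):.4f}")
    print(f"largest gap: {max_f1_gap():.6f}")
```

Under this assumption the recomputed values should match the reported ones up to rounding in the fourth decimal place, which is a quick consistency check on the table rather than a reproduction of the experiments.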

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).