Submitted: 26 February 2026

Posted: 27 February 2026


Abstract

Keywords:

1. Introduction and Motivation

1.1. The Measurement Error Challenge in Quantum Computing

1.2. Gap in Existing Characterization Methodologies

1.3. Our Contribution: A Comprehensive Framework

2. Theoretical Framework for Quantum Measurement Noise

2.1. Probabilistic Models of Measurement Noise

2.1.1. Response Matrices and Conditional Error Rates

2.1.2. Correlated Noise Models

2.2. Impact of Measurement Noise on Quantum Algorithms

2.2.1. Effect on NISQ Applications

2.2.2. Implications for Fault-Tolerant Computing

2.3. Limitations of Current Noise Models

2.3.1. Assumption of Statistical Independence

2.3.2. Neglect of Temporal Variations

2.3.3. Uniformity Assumptions

3. Historical Development of Qubit Classification Methodologies

3.1. Foundations and the Experimental Era (2000–2010)

3.1.1. From Demonstrations to Quantum Standards

3.1.2. The Emergence of Multidimensional Measurements

3.2. The Era of Scaling and Complexity (2010–2018)

3.2.1. Classification Based on Individual Performance Metrics

3.2.2. Cluster-Based Methodologies

3.2.3. Spatial Classification Methods

3.2.4. Algorithm-Conscious Classification

3.2.5. Dynamic Classification Methodologies

3.3. The Contemporary Era (2022–Present): Towards Comprehensive Performance Metrics

3.3.1. From Individual Physical Measures to Integrated Standards

- Quantum Volume (QV): A holistic metric that combines usable qubit count, gate fidelity, and executable circuit depth to benchmark a processor’s ability to run general, random circuits [22].

- Circuit Layer Operations Per Second (CLOPS): A metric focusing on computational throughput, measuring the execution rate of quantum circuit layers while accounting for classical control, reset, and readout operations [23].

- Algorithmic Qubits (AQ): An application-oriented metric that evaluates processor performance on specific, commercially relevant algorithms rather than random circuits, reflecting the industry’s shift towards benchmarking for practical utility [24].
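As a rough illustration of how the first two metrics are computed, the sketch below follows our reading of the heavy-output test in [22] and the CLOPS formula in [23]. Heavy outputs are the bitstrings whose ideal probability exceeds the median. A width passes if the mean heavy-output probability over circuits exceeds 2/3 with two-sigma confidence. CLOPS is taken as M·K·S·D divided by the elapsed time, with D = log2 QV. The default values M = 100, K = 10, S = 100 and all function names are assumptions for illustration. See [22,23] for the authoritative protocols.

```python
import math
import statistics

def heavy_outputs(ideal_probs):
    """Bitstrings whose ideal probability exceeds the median of the ideal distribution."""
    median = statistics.median(ideal_probs.values())
    return {bits for bits, p in ideal_probs.items() if p > median}

def heavy_output_probability(ideal_probs, counts):
    """Fraction of measured shots that landed on heavy outputs."""
    heavy = heavy_outputs(ideal_probs)
    shots = sum(counts.values())
    return sum(n for bits, n in counts.items() if bits in heavy) / shots

def width_passes(hops):
    """Two-sigma test: mean heavy-output probability over circuits must exceed 2/3."""
    n = len(hops)
    mean = sum(hops) / n
    sigma = math.sqrt(mean * (1 - mean) / n)
    return mean - 2 * sigma > 2 / 3

def quantum_volume(passing_widths):
    """QV = 2**m for the largest passing width m (1 if no width passes)."""
    return 2 ** max(passing_widths, default=0)

def clops(qv, elapsed_s, m=100, k=10, s=100):
    """M templates x K parameter updates x S shots x D layers, per second; D = log2(QV)."""
    d = int(math.log2(qv))
    return m * k * s * d / elapsed_s
```

For example, the ideal distribution {00: 0.4, 01: 0.3, 10: 0.2, 11: 0.1} has median 0.25, so its heavy set is {00, 01}.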

3.3.2. Comprehensive Performance Classification

- Architectural characteristics, such as qubit connectivity topology and crosstalk profiles.

- Explicit correlations between low-level physical metrics (noise, coherence) and application-level algorithmic outcomes.

- Integration of performance temporal stability into the evaluation framework, acknowledging the observed fluctuations in NISQ device parameters over time [12].

| Methodology | Advantages | Limitations | Suitable Domains |
|---|---|---|---|
| Binary Classification | Simplicity of implementation; fast evaluation | Oversimplification; ignores inter-qubit variance | Early-stage prototypes |
| Multi-class Classification | Higher accuracy; better characterization of variance | Computational complexity; sensitivity to threshold selection | Medium-scale systems |
| Dynamic Classification | Adapts to time-dependent performance drifts | Requires continuous calibration data and monitoring infrastructure | Long-term operational systems |
| Proposed Framework | Comprehensive, quantitative, and application-aware | Requires accurate calibration and real-time monitoring | Advanced QC; scalable systems |

4. Our Category-Based Error Budgeting Framework

4.1. Contributions to Classification Methodology

4.1.1. Methodological Innovations

4.1.2. Integration with Previous Methodologies

- Integrating with IBM’s metric-based individual characterization

- Extending spatial classification with quantitative impact analysis

- Adding temporal dimension to hybrid classification approaches

- Bridging classification outputs and error-correction requirements

4.2. Theoretical Foundations

4.2.1. Core Theoretical Claim

Claim: Incorporating category-resolved and correlation-weighted measurement errors into decoder weights provides a strictly more informative noise representation for fault-tolerant decoders than uniform or spatially averaged error models, given fixed calibration information.

4.2.2. Qubit-Specific Measurement Error Rates

4.2.3. Total Expected Measurement Error

4.2.4. Category-Based Partitioning

| Criteria | Definition Method | Typical Applications |
|---|---|---|
| Spatial Layout | Physical location on processor chip | Thermal gradient analysis, crosstalk mapping |
| Coherence Properties | $T_1$, $T_2$, dephasing rates | Coherence-limited algorithm mapping |
| Hardware Zones | Shared control/readout electronics | Resource contention optimization |
| Gate Fidelity Classes | CNOT error rates, single-qubit gate errors | Gate-aware compilation |
| Error Rate Thresholds | Empirical error rate bins | Error mitigation strategy selection |

4.2.5. Category Error Sums and Fractional Contributions

4.2.6. New Classification Metrics

5. Advanced Extensions and Generalizations

5.1. From Relative to Absolute Error Rates

5.2. Correlated Noise Modeling: Covariance Matrix Extension

5.3. Temporal Dynamics and Non-Stationary Errors

5.4. Algorithm-Specific Category Analysis

- Algorithm A (Circuit-Heavy): A deep random circuit benchmark (e.g., Quantum Volume circuit) with high two-qubit gate density.

- Algorithm B (Measurement-Heavy): A variational quantum eigensolver (VQE) for a small molecule, with repeated state preparation and measurement.

- Algorithm C (Memory-Dominated): A dynamical decoupling or idle benchmarking sequence.

- Analyze the circuit to compute the usage frequency of each qubit and the frequency of each gate type.

- Calculate algorithm-specific category contributions using:

- Compare these algorithm-specific rankings with the static category contributions to identify cases where qubit importance shifts significantly based on algorithmic context.
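The formula for algorithm-specific contributions is not reproduced here. The sketch below therefore assumes a simple usage-weighted form, $r_k^{(A)} = \sum_{i \in C_k} f_i \epsilon_i \,/\, \sum_i f_i \epsilon_i$, where $f_i$ is the fraction of gate operands in circuit $A$ that act on qubit $i$. Gate-type frequencies are ignored for brevity. The circuit encoding as `(gate, qubits)` tuples and all function names are hypothetical. Setting every weight to 1 recovers the static contributions, so the two rankings can be compared directly.

```python
from collections import Counter

def usage_frequencies(circuit):
    """Fraction of gate operands acting on each qubit; circuit is a list of (gate, qubits)."""
    counts = Counter(q for _, qubits in circuit for q in qubits)
    total = sum(counts.values())
    return {q: n / total for q, n in counts.items()}

def weighted_contributions(eps, categories, weight):
    """Hypothetical r_k = sum_{i in C_k} w_i*eps_i / sum_i w_i*eps_i (unused qubits get w=0)."""
    sums = {k: sum(weight.get(i, 0.0) * eps[i] for i in members)
            for k, members in categories.items()}
    total = sum(sums.values())
    return {k: s / total for k, s in sums.items()}

def ranking(contrib):
    """Category names ordered from largest to smallest contribution."""
    return sorted(contrib, key=contrib.get, reverse=True)
```

In the example below, the "edge" category dominates the static budget. However, a circuit that mostly uses the center qubits reverses the ranking.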

6. Decoder-Aware Formulation and Fault-Tolerance Integration

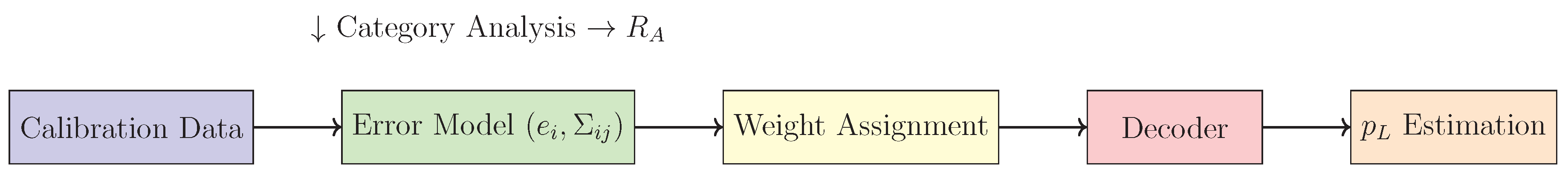

6.1. Position in the Fault-Tolerance Pipeline

6.2. Weight Assignment for Minimum-Weight Perfect Matching Decoders

6.3. Logical Error Rate Estimation with Heterogeneous Noise

6.4. Integration with Union-Find and Other Decoders

7. Experimental Procedures: Proposed Implementation of Theoretical Framework

7.1. Experimental Setup and Initial Data Acquisition

Platform Selection and Access: Gain programmable access to a suitable superconducting, trapped-ion, or other quantum processing unit (QPU). Ensure the platform provides:

- Standard calibration data (readout error, $T_1$, $T_2$, gate errors).

- The ability to define and execute custom calibration circuits.

- (Ideally) access to time-series calibration data to test temporal extensions.

Baseline Calibration Data Collection: Execute standard characterization experiments for all N qubits to estimate:

- Individual qubit readout error rates, $\epsilon_i$, via repeated preparation and measurement in the $|0\rangle$ and $|1\rangle$ states.

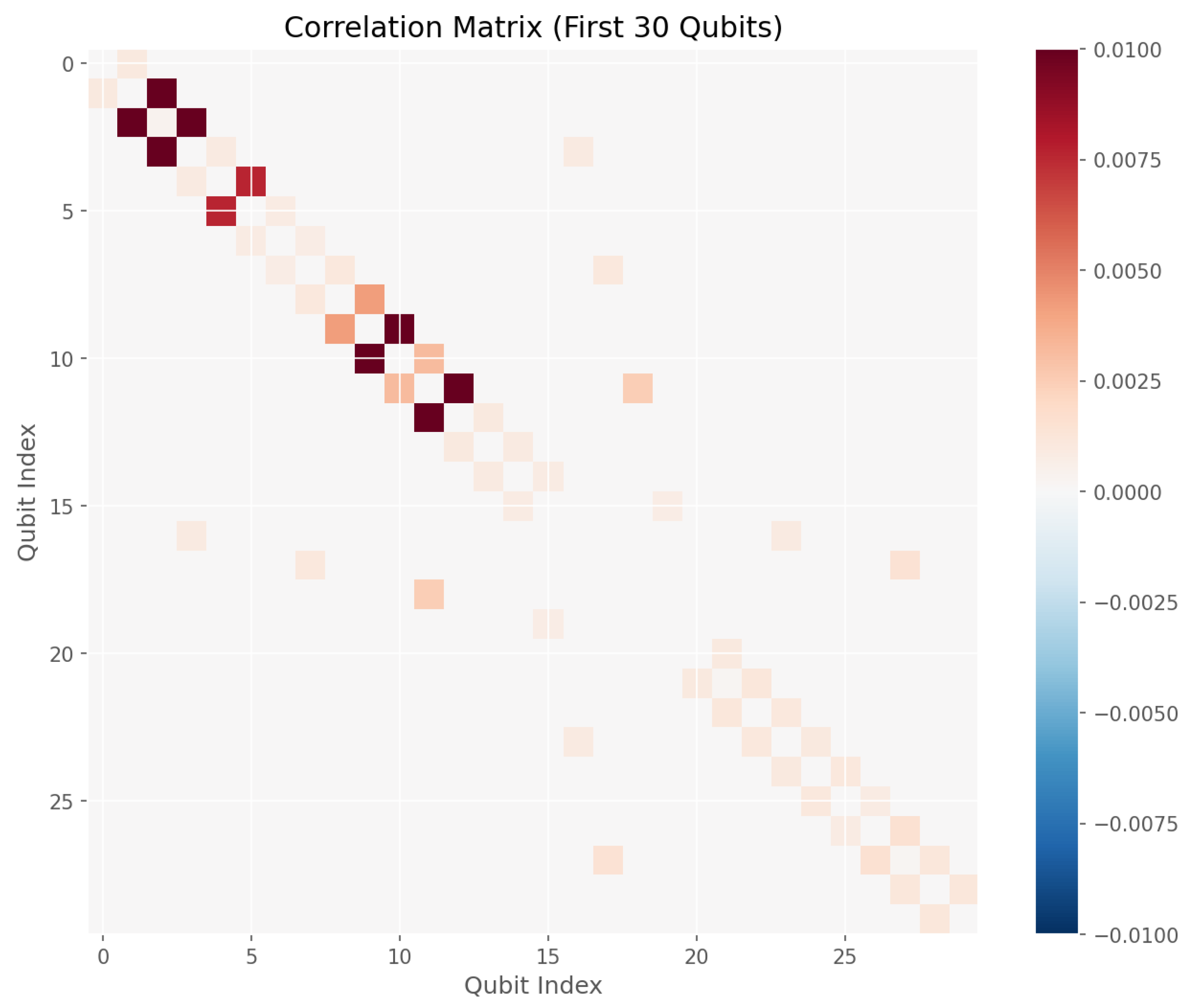

- For a subset of qubit pairs (especially those sharing readout resonators or control lines), perform correlated readout characterization. This involves preparing and jointly measuring two-qubit states to estimate the covariance matrix elements $C_{ij}$ of the readout errors.
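The two estimates above can be sketched from raw shot records. We assume here that the per-qubit error rate is the average of the two conditional error rates $P(1 \mid 0)$ and $P(0 \mid 1)$. This is one common convention, not necessarily the one used in this work. The pair covariance is computed for per-shot error indicators $x_i = [m_i \neq b_i]$ as $C_{ij} = \langle x_i x_j \rangle - \langle x_i \rangle \langle x_j \rangle$. The function names and shot formats are hypothetical.

```python
def readout_error(shots_prep0, shots_prep1):
    """Average assignment error from shots (lists of 0/1) taken after preparing |0> and |1>."""
    p1_given_0 = sum(shots_prep0) / len(shots_prep0)
    p0_given_1 = 1 - sum(shots_prep1) / len(shots_prep1)
    return (p1_given_0 + p0_given_1) / 2

def error_covariance(prepared, outcomes):
    """Covariance of the error indicators of a qubit pair.

    prepared: tuple (b_i, b_j) of prepared bits.
    outcomes: list of measured (m_i, m_j) tuples, one per shot.
    """
    n = len(outcomes)
    xi = [int(m[0] != prepared[0]) for m in outcomes]
    xj = [int(m[1] != prepared[1]) for m in outcomes]
    mean_i = sum(xi) / n
    mean_j = sum(xj) / n
    joint = sum(a * b for a, b in zip(xi, xj)) / n
    return joint - mean_i * mean_j
```

A positive $C_{ij}$ means the two qubits tend to fail on the same shots, which is the signature of shared readout hardware this step is meant to detect.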

Definition of Theoretical Categories: Based on the hypothesis to be tested, define disjoint qubit categories using the processor’s metadata. Examples include:

- Spatial Classification: Group qubits by physical location on the chip (e.g., edge vs. center).

- Resource-Based Classification: Group qubits that share a common readout line, multiplexing resonator, or control channel.

- Error-Rate Classification: Partition qubits into low, medium, and high error rate bins based on initial measurements.

Record the category membership of each qubit.
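As a concrete instance of error-rate classification, the sketch below bins qubits using the thresholds reported in Section 8.2 (0.005, 0.01, 0.02). Whether a value lying exactly on a bin edge belongs to the upper or lower bin is not stated, so assigning it to the upper bin is an assumption. Each qubit lands in exactly one bin, so the resulting categories are disjoint as required. The function names are hypothetical.

```python
import bisect

# Bin edges and labels follow the error-rate classification of Section 8.2.
# Values exactly on an edge go to the upper bin (assumption).
EDGES = [0.005, 0.01, 0.02]
LABELS = ["Low_Error", "Medium_Error", "High_Error", "Very_High_Error"]

def error_rate_category(eps):
    """Label of the bin containing error rate eps."""
    return LABELS[bisect.bisect_right(EDGES, eps)]

def partition(error_rates):
    """Map each category label to the sorted list of qubit indices it contains."""
    groups = {label: [] for label in LABELS}
    for qubit, eps in sorted(error_rates.items()):
        groups[error_rate_category(eps)].append(qubit)
    return groups
```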

7.2. Implementation and Evaluation of Category-Based Budgeting

Calculation of Category Contributions: Apply the core theoretical formulas to the aggregated calibration data.

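- Compute the total measurement error $E_{\text{total}} = \sum_{i=1}^{N} \epsilon_i$, where $\epsilon_i$ is the measurement error rate of qubit $i$.

- For each category $C_k$, calculate its error sum $E_k = \sum_{i \in C_k} \epsilon_i$ and its relative contribution rate $r_k = E_k / E_{\text{total}}$.

- Compute the disproportionality factor $D_k = r_k / (n_k / N)$, where $n_k$ is the number of qubits in $C_k$.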
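The three quantities above can be computed in a few lines. The sketch assumes $D_k$ is the contribution share divided by the population share, $r_k / (n_k/N)$. This form reproduces the factors reported in Section 8. For example, the Very_High_Error row gives $(0.6330 / 1.505362) \times 156/6 \approx 10.93$, matching the reported 10.934 within the rounding of $E_k$. The function names are hypothetical.

```python
def category_budget(error_rates, categories):
    """Return {k: (E_k, r_k, D_k)} for a disjoint partition of the qubits.

    E_k = sum of error rates of the qubits in category k
    r_k = E_k / E_total
    D_k = r_k / (n_k / N): contribution share divided by population share
    """
    n_total = len(error_rates)
    e_total = sum(error_rates.values())
    budget = {}
    for k, members in categories.items():
        e_k = sum(error_rates[i] for i in members)
        r_k = e_k / e_total
        d_k = r_k / (len(members) / n_total)
        budget[k] = (e_k, r_k, d_k)
    return budget

def disproportionality(e_k, n_k, e_total, n_total):
    """D_k from aggregate numbers, such as the rows of a results table."""
    return (e_k / e_total) * n_total / n_k
```

A value $D_k > 1$ means the category holds more of the error budget than its share of qubits. Equivalently, its mean error rate exceeds the chip-wide mean.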

Correlation-Aware Extension: Integrate the covariance data.

- Compute the correlation-corrected total error from the individual error rates and the covariance matrix.

- Recalculate the category sums, separating internal and cross-category correlation contributions: .

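- Recompute the contribution rates and disproportionality factors using the correlation-corrected category sums and total error.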
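The exact correlation-corrected expression is not reproduced in this section. The sketch below therefore assumes the simplest additive form, $E_{\text{corr}} = \sum_i \epsilon_i + \sum_{i \neq j} C_{ij}$, with $C$ symmetric. Internal pairs are credited to their own category. Cross-category pairs are split equally between the two categories, so the corrected category sums add up to $E_{\text{corr}}$. Both the model and the equal-split rule are assumptions for illustration, and the function name is hypothetical.

```python
def correlation_budget(error_rates, categories, pair_cov):
    """Correlation-corrected (E_k, r_k, D_k) under an assumed additive model.

    pair_cov maps unordered pairs (i, j) to C_ij; each pair appears twice in
    sum_{i != j} C_ij, so it contributes 2 * C_ij in total.
    Returns (E_corr, {k: (E_k_corr, r_k, D_k)}).
    """
    owner = {i: k for k, members in categories.items() for i in members}
    extra = {k: 0.0 for k in categories}
    for (i, j), c in pair_cov.items():
        ki, kj = owner[i], owner[j]
        if ki == kj:
            extra[ki] += 2 * c   # internal correlation stays in the category
        else:
            extra[ki] += c       # cross-category correlation split equally
            extra[kj] += c
    n = len(error_rates)
    sums = {k: sum(error_rates[i] for i in members) + extra[k]
            for k, members in categories.items()}
    e_corr = sum(sums.values())
    result = {}
    for k, members in categories.items():
        r_k = sums[k] / e_corr
        result[k] = (sums[k], r_k, r_k / (len(members) / n))
    return e_corr, result
```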

Benchmarking Against Prior Classification Methods: Evaluate the differences in identifying critical qubits using:

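- Our proposed category-budgeting method (ranking categories by their disproportionality factor or by their contribution to the correlation-corrected total error).

- A simple threshold-based method (flagging all qubits whose error rate exceeds a fixed threshold).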

- A pure spatial analysis (comparing average error rates of pre-defined zones).
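A minimal way to compare the methods is to turn each one into a set of flagged qubits and measure the overlap between sets. In the sketch below, a category is flagged when its disproportionality factor exceeds a cutoff. The cutoff `d_min` and the use of Jaccard similarity as the overlap measure are assumptions, and the function names are hypothetical. Because $D_k > 1$ holds exactly when a group's mean error rate exceeds the chip-wide mean, applying `category_critical` with `d_min=1` to a spatial partition reproduces the pure spatial analysis.

```python
def threshold_critical(error_rates, tau):
    """Qubits whose individual error rate exceeds the threshold tau."""
    return {i for i, e in error_rates.items() if e > tau}

def category_critical(error_rates, categories, d_min=1.0):
    """All qubits in categories whose disproportionality factor D_k exceeds d_min."""
    n = len(error_rates)
    e_total = sum(error_rates.values())
    flagged = set()
    for members in categories.values():
        r_k = sum(error_rates[i] for i in members) / e_total
        if r_k / (len(members) / n) > d_min:
            flagged.update(members)
    return flagged

def jaccard(a, b):
    """Overlap of two flagged sets: |A & B| / |A | B| (1.0 if both are empty)."""
    return len(a & b) / len(a | b) if a | b else 1.0
```

In the example used for testing, the threshold method flags two isolated bad qubits, while the category method flags a whole zone. Their overlap of 0.25 shows how differently the two methods can identify critical qubits.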

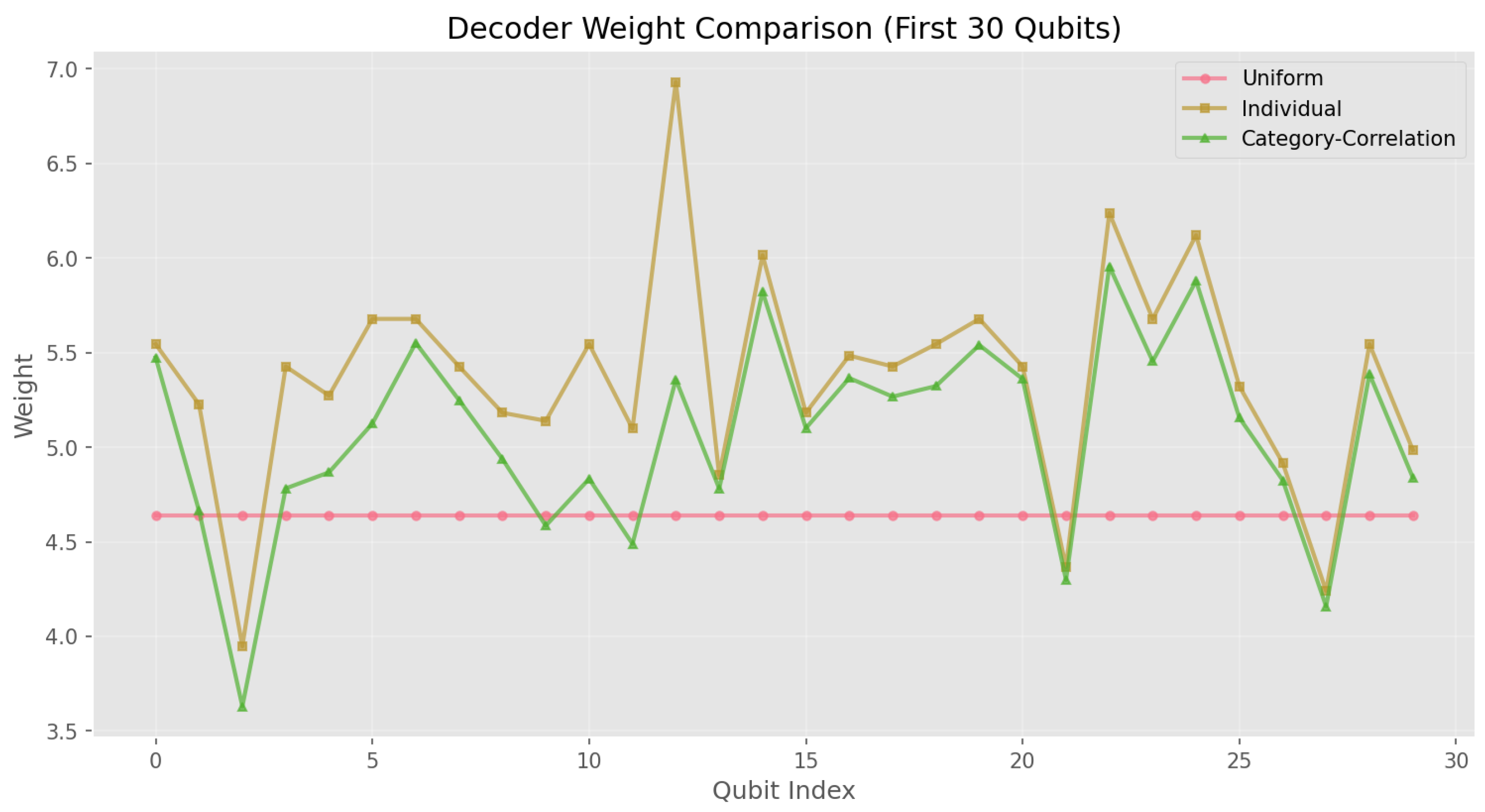

7.3. Integration and Testing with Decoder-Aware Formulation

Construction of Weighted Error Models: For a target error-correcting code (e.g., a distance-3 surface code), generate a corresponding decoding graph. Assign weights to graph edges based on the underlying physical qubit error data.

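- Uniform Model: Assign every edge a constant weight derived from the global average error rate.

- Individual Model: For an edge associated with qubit $i$, assign a weight derived from that qubit's own error rate.

- Category-Correlation Model: Assign a weight in which the error rate of qubit $i$ is augmented by correlation terms summed over the neighborhood of qubit $i$ in the decoding graph, scaled by a tunable parameter (initially set to 1).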
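The three weighting schemes can be sketched with the log-likelihood weight $w = \log\frac{1-p}{p}$, the usual choice for minimum-weight matching with independent errors. For the category-correlation model, we assume the hypothetical effective rate $p_{\text{eff}} = \epsilon_i + \lambda \sum_{j \in \mathcal{N}(i)} C_{ij}$, clipped to $(0, 1/2)$ so the weight stays finite and positive. This is an illustrative stand-in, not the paper's exact formula. The names `neighbors`, `cov`, `lam`, `p_min` and `p_max` are assumptions.

```python
import math

def llr_weight(p):
    """Log-likelihood edge weight commonly used with MWPM decoders: log((1-p)/p)."""
    return math.log((1 - p) / p)

def edge_weights(error_rates, neighbors, cov, lam=1.0, p_min=1e-12, p_max=0.499):
    """Return (uniform, individual, correlated) weight dicts keyed by qubit.

    neighbors[i]: qubits adjacent to i in the decoding graph.
    cov[(i, j)]: covariance C_ij for an unordered pair, looked up in either order.
    """
    p_bar = sum(error_rates.values()) / len(error_rates)
    uniform = {i: llr_weight(p_bar) for i in error_rates}
    individual = {i: llr_weight(p) for i, p in error_rates.items()}
    correlated = {}
    for i, p in error_rates.items():
        extra = sum(cov.get((i, j), cov.get((j, i), 0.0)) for j in neighbors.get(i, ()))
        # Hypothetical effective rate, clipped to keep the weight finite and positive.
        p_eff = min(max(p + lam * extra, p_min), p_max)
        correlated[i] = llr_weight(p_eff)
    return uniform, individual, correlated
```

Lower weights make an edge cheaper for the decoder to include. As a result, correlated or error-prone qubits become more likely explanations for a given syndrome.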

Decoder Performance Comparison: Conduct simulated (or small-scale hardware) syndrome extraction experiments.

- Simulate the generation of syndrome data for the chosen code under a phenomenological noise model that incorporates the measured per-qubit error rates and, optionally, correlated jump events based on the measured covariance matrix.

- Run the decoder (e.g., Minimum-Weight Perfect Matching or Union-Find) using the three different weight assignments defined above.

- Record the decoder’s success rate in identifying the correct error chain for each instance.

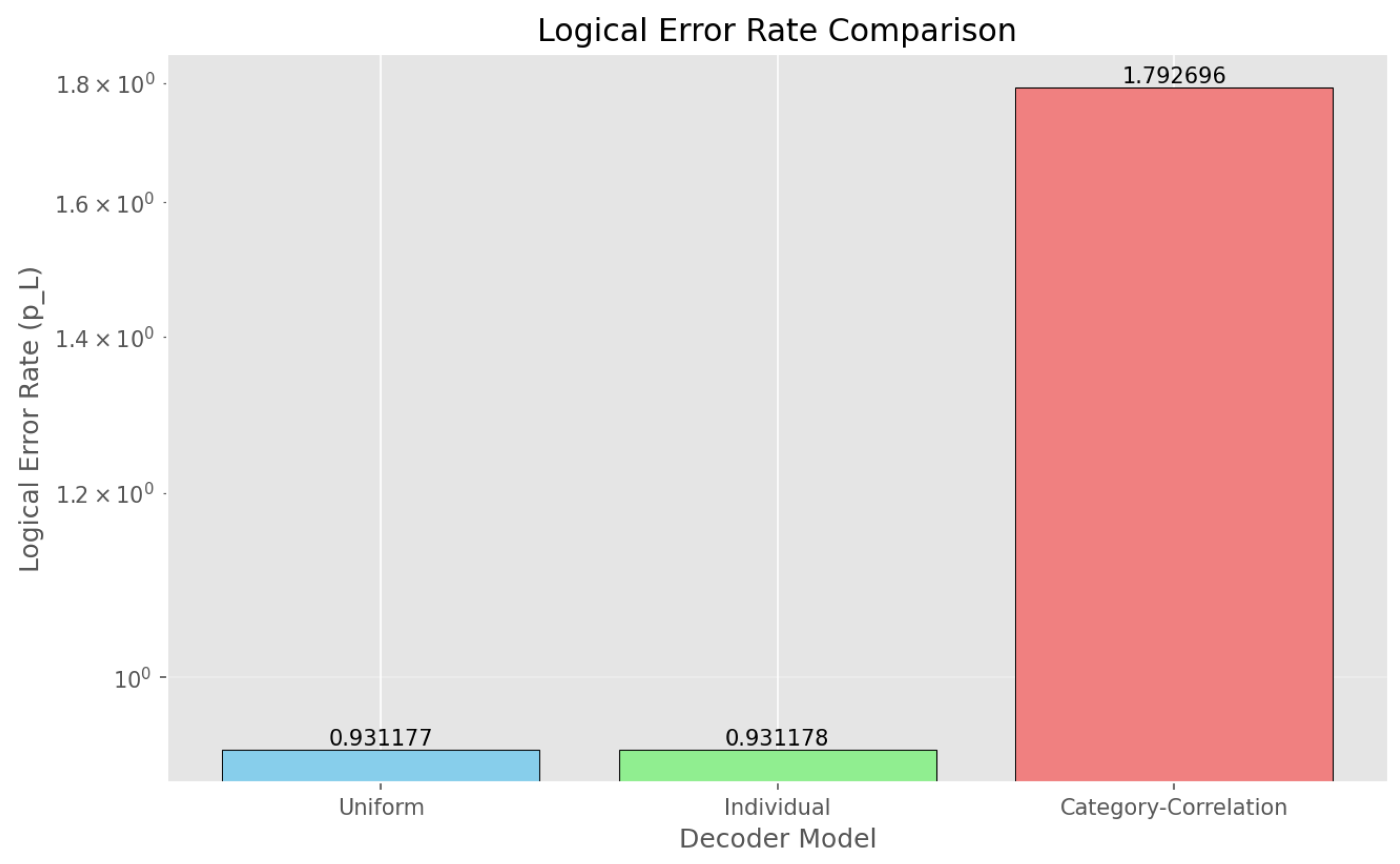

Logical Error Rate Estimation: Use the simulation outcomes to infer logical performance.

- For each weighting scheme, estimate the logical error rate by tallying the frequency of logical errors after correction.

- Compare the logical error rates obtained under the three weighting schemes.
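To show why the weighting matters before running a full surface-code simulation, the sketch below uses a much smaller stand-in: a bit-flip repetition code with heterogeneous per-qubit error rates. The surface code, decoder library and noise model of this procedure are not used. For this code, the syndrome fixes the error up to a global flip, so a minimum-weight decoder only has to choose between two candidates. Instead of tallying sampled failures, the sketch enumerates every error pattern, so the logical error rate is exact. Individual log-likelihood weights make the decoder maximum-likelihood, so they can never do worse than uniform weights. All names are hypothetical.

```python
import itertools
import math

def llr(p):
    """Log-likelihood weight log((1-p)/p)."""
    return math.log((1 - p) / p)

def decode(syndrome, weights):
    """Minimum-weight correction for a bit-flip repetition code."""
    cand = [0]
    for s in syndrome:
        cand.append(cand[-1] ^ s)     # error pattern consistent with the syndrome
    comp = [1 - b for b in cand]      # the only other consistent pattern

    def cost(c):
        return sum(w for w, b in zip(weights, c) if b)

    return cand if cost(cand) <= cost(comp) else comp

def exact_logical_error_rate(p_true, weights):
    """Sum of P(e) over all error patterns e that the decoder fails to correct."""
    total = 0.0
    for e in itertools.product((0, 1), repeat=len(p_true)):
        prob = math.prod(p if b else 1 - p for p, b in zip(p_true, e))
        syndrome = [e[k] ^ e[k + 1] for k in range(len(e) - 1)]
        if decode(syndrome, weights) != list(e):  # residual is a logical flip
            total += prob
    return total
```

For example, with error rates [0.05, 0.4, 0.4], uniform weights give a logical error rate of 0.184, while individual weights give 0.05.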

7.4. Validation of Theoretical Extensions

Temporal Dynamics Analysis: Collect time-series calibration data from the same QPU over an extended period (e.g., 24–48 hours, taking snapshots every 1–2 hours).

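- For each time snapshot $t$, recompute the total error, the category contribution rates, and the disproportionality factors.

- Calculate the time-averaged category contribution $\bar{r}_k = \frac{1}{T}\sum_{t=1}^{T} r_k(t)$ and the Temporal Stability Index.

- Compute the temporal correlation function of the category contributions for different time lags between snapshots.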
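The time-series quantities can be sketched as follows for one category's contribution series $r_k(t)$. The Temporal Stability Index formula is not given in this section. Here it is assumed, hypothetically, to be one minus the coefficient of variation, so a perfectly stable series scores 1. The correlation function is the standard normalized autocorrelation at an integer lag measured in snapshots. The function names are hypothetical.

```python
import statistics

def time_average(series):
    """Time-averaged contribution over all snapshots."""
    return statistics.fmean(series)

def temporal_stability_index(series):
    """Hypothetical TSI: 1 - (population std / mean); 1 means perfectly stable."""
    mu = statistics.fmean(series)
    return 1 - statistics.pstdev(series) / mu

def autocorrelation(series, lag):
    """Normalized autocorrelation of a snapshot series at an integer lag."""
    n = len(series)
    mu = statistics.fmean(series)
    var = sum((x - mu) ** 2 for x in series)
    cov = sum((series[t] - mu) * (series[t + lag] - mu) for t in range(n - lag))
    return cov / var
```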

Context-Sensitivity Testing: Execute calibration routines under different operational contexts.

- Context 1: Standard idle calibration.

- Context 2: Calibration preceded by high-intensity two-qubit gate operations on neighboring qubits (to induce potential crosstalk or heating).

- Context 3: Calibration using different readout pulse amplitudes or durations.

- For each context $c$, compute the context-dependent category contributions. Calculate the Context Sensitivity Metric for the qubits whose category ranking changes across contexts.
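The procedure above can be sketched at the qubit level. First, rank the qubits within each context. Then keep only those whose rank changes, and score each kept qubit by a sensitivity value. The metric used here is a hypothetical stand-in for the Context Sensitivity Metric, whose formula is not given in this section: the spread of the qubit's error rate across contexts divided by its mean. The context names and function names are assumptions.

```python
def ranks(error_rates):
    """Rank position of each qubit within one context (0 = highest error)."""
    order = sorted(error_rates, key=error_rates.get, reverse=True)
    return {q: pos for pos, q in enumerate(order)}

def context_sensitivity(per_context):
    """Hypothetical CSM: (max - min) / mean of a qubit's error rate across contexts.

    per_context maps context name -> {qubit: error rate}. Only qubits whose
    rank differs between at least two contexts are reported.
    """
    contexts = list(per_context)
    qubits = per_context[contexts[0]].keys()
    rank_maps = [ranks(per_context[c]) for c in contexts]
    result = {}
    for q in qubits:
        if len({rm[q] for rm in rank_maps}) > 1:
            values = [per_context[c][q] for c in contexts]
            mean = sum(values) / len(values)
            result[q] = (max(values) - min(values)) / mean
    return result
```

A qubit can change rank only because a neighbor in the ranking moved. Its own sensitivity value is then zero, which separates qubits that actually drift from qubits that are merely displaced in the ranking.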

7.5. Summary of Deliverables

- Category-to-Qubit Mapping Dataset: A comprehensive dataset mapping each qubit to its assigned category, accompanied by the calculated category contribution rates $r_k$ and disproportionality factors $D_k$. This validates the core budget-based classification methodology.

- Classification Method Comparison Matrix: A comparative matrix showing critical qubit sets identified by our proposed method versus baseline approaches (threshold-based, spatial-only, and uniform methods), demonstrating enhanced discriminative power.

- Decoder Performance Benchmarks: Quantitative decoder success rates and logical error probability estimates under different noise models (uniform, individual, and category-correlated), establishing the practical utility of our framework for fault-tolerant protocols.

- Temporal Evolution Visualizations: Time-series plots tracking the category contributions over extended periods, along with their temporal correlation functions, validating the framework's ability to capture non-stationary device behavior.

- Context Sensitivity Quantification: Numerical values of the Context Sensitivity Metric for qubits exhibiting significant performance variation across different operational contexts, demonstrating the necessity of context-aware classification.

8. Analysis of Qubit Calibration Results for IBM Boston – 19 February 2026

Performance Analysis Report of the IBM Boston Quantum Processor

8.1. Executive Summary

Key Findings:

- Total error budget ($E_{\text{total}}$): 1.505362

- Correlation-aware total error ($E_{\text{corr}}$): 3.449852 (129% increase relative to $E_{\text{total}}$)

- Number of two-qubit gates: 352

- Most disproportionate categories:
  - Very_High_Error ($D_k = 10.934$)
  - Very_Low_Coherence ($D_k = 7.520$)

8.2. Applied Methodology

Step 7.1 – Data Collection

- Single-qubit parameters: $T_1$, $T_2$, readout error, single-qubit gate errors

- Two-qubit gate data: CZ errors, RZZ errors, gate durations

- Connectivity information: 352 coupled qubit pairs

Step 7.2 – Qubit Classification

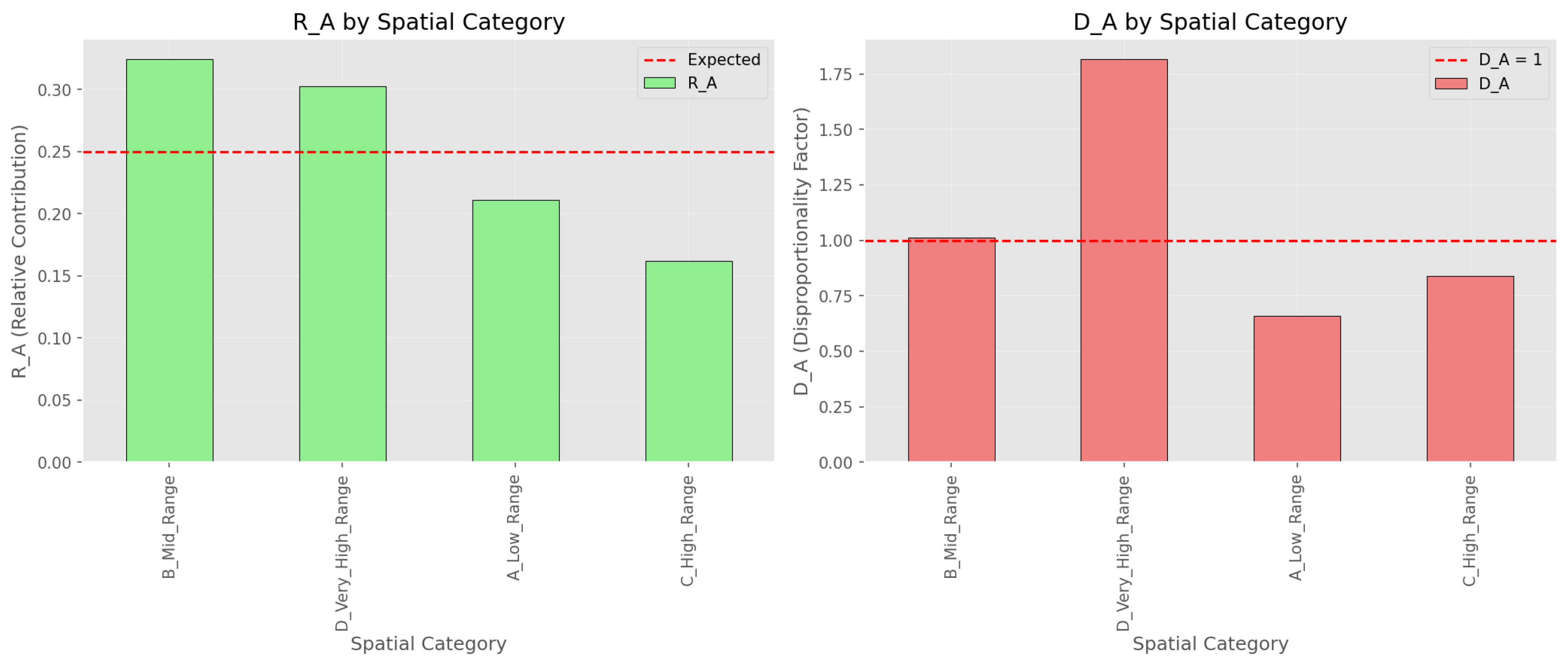

(A) Spatial Classification

- A_Low_Range (0–49): 50 qubits

- B_Mid_Range (50–99): 50 qubits

- C_High_Range (100–129): 30 qubits

- D_Very_High_Range (130–155): 26 qubits

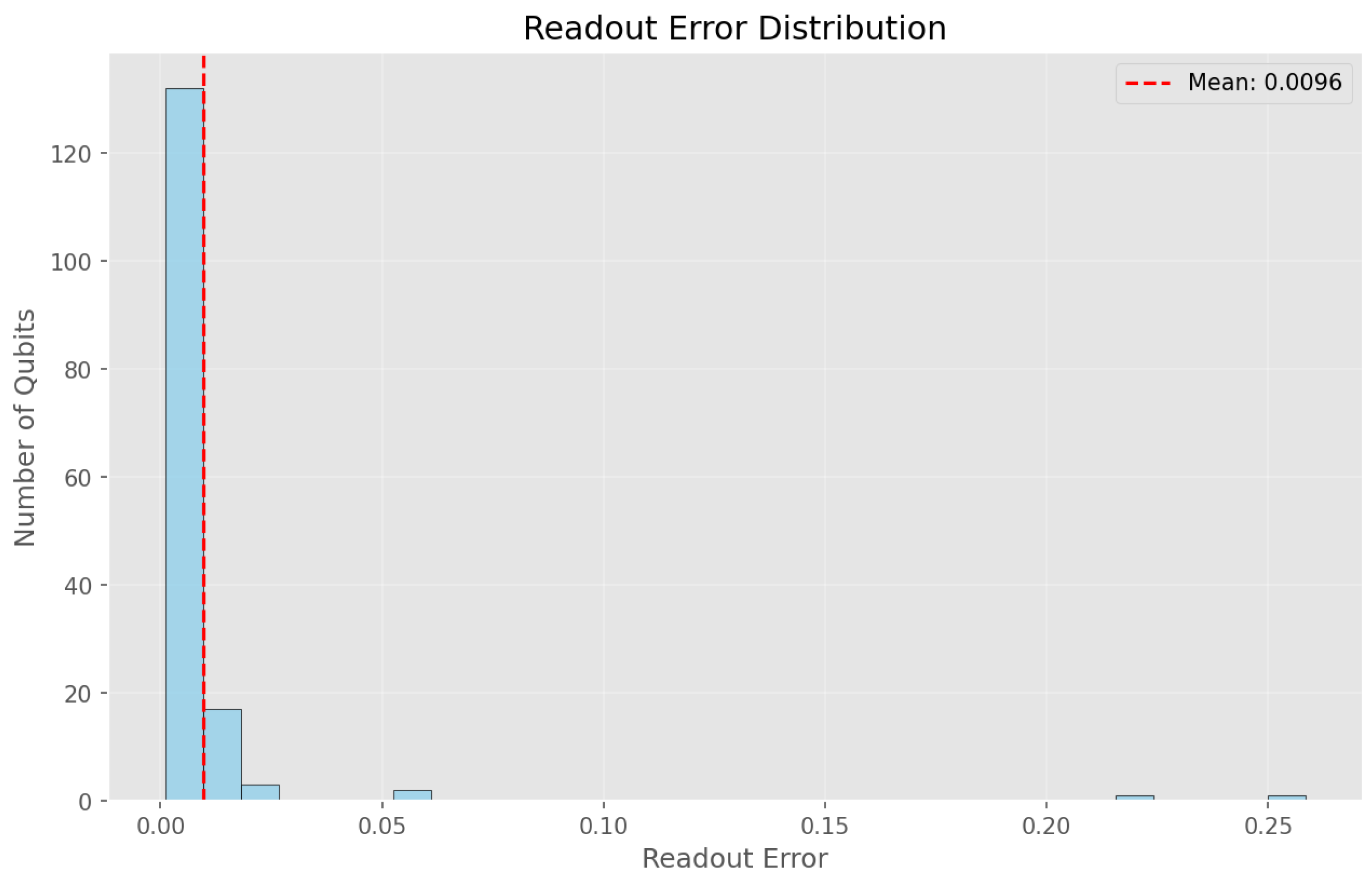

(B) Error-Rate Classification

- Low_Error (< 0.005): 75 qubits (48%)

- Medium_Error (0.005–0.01): 57 qubits (36.5%)

- High_Error (0.01–0.02): 18 qubits (11.5%)

- Very_High_Error (> 0.02): 6 qubits (4%)

(C) Coherence Classification

- High_Coherence (> 300 μs): 84 qubits (54%)

- Medium_Coherence (200–300 μs): 55 qubits (35%)

- Low_Coherence (100–200 μs): 9 qubits (6%)

- Very_Low_Coherence (< 100 μs): 8 qubits (5%)

8.3. Category-Based Budget Analysis

- $E_k$: total error within the category

- $r_k$: relative contribution to the total error budget

- $D_k$: disproportion factor relative to the global average

Spatial Classification Results

| Category | Count | Error sum $E_k$ | Contribution $r_k$ | Disproportion $D_k$ |
|---|---|---|---|---|
| B_Mid_Range | 50 | 0.4883 | 0.324 | 1.012 |
| D_Very_High_Range | 26 | 0.4558 | 0.303 | 1.817 |
| A_Low_Range | 50 | 0.3179 | 0.211 | 0.659 |
| C_High_Range | 30 | 0.2434 | 0.162 | 0.841 |

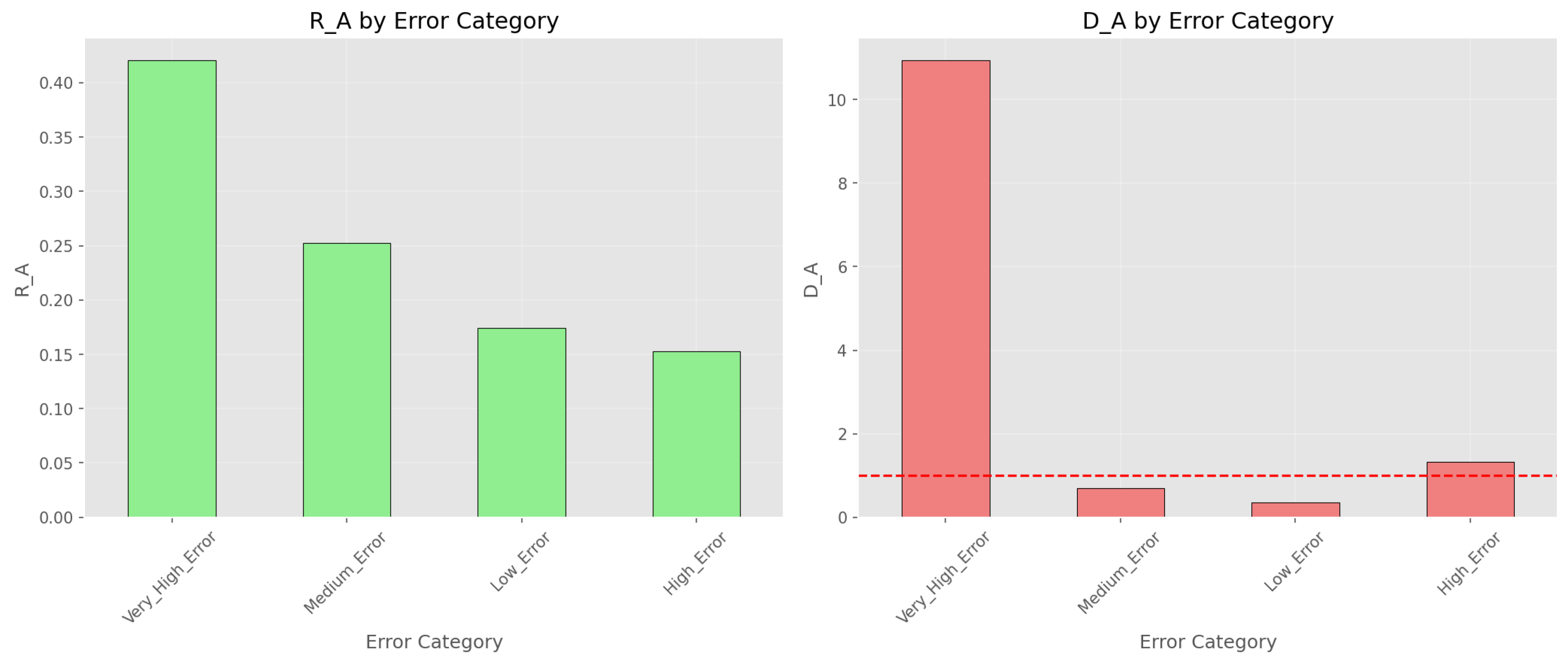

Error-Rate Classification Results

| Category | Count | Error sum $E_k$ | Contribution $r_k$ | Disproportion $D_k$ |
|---|---|---|---|---|
| Very_High_Error | 6 | 0.6330 | 0.421 | 10.934 |
| Medium_Error | 57 | 0.3804 | 0.253 | 0.692 |
| Low_Error | 75 | 0.2622 | 0.174 | 0.362 |
| High_Error | 18 | 0.2297 | 0.153 | 1.323 |

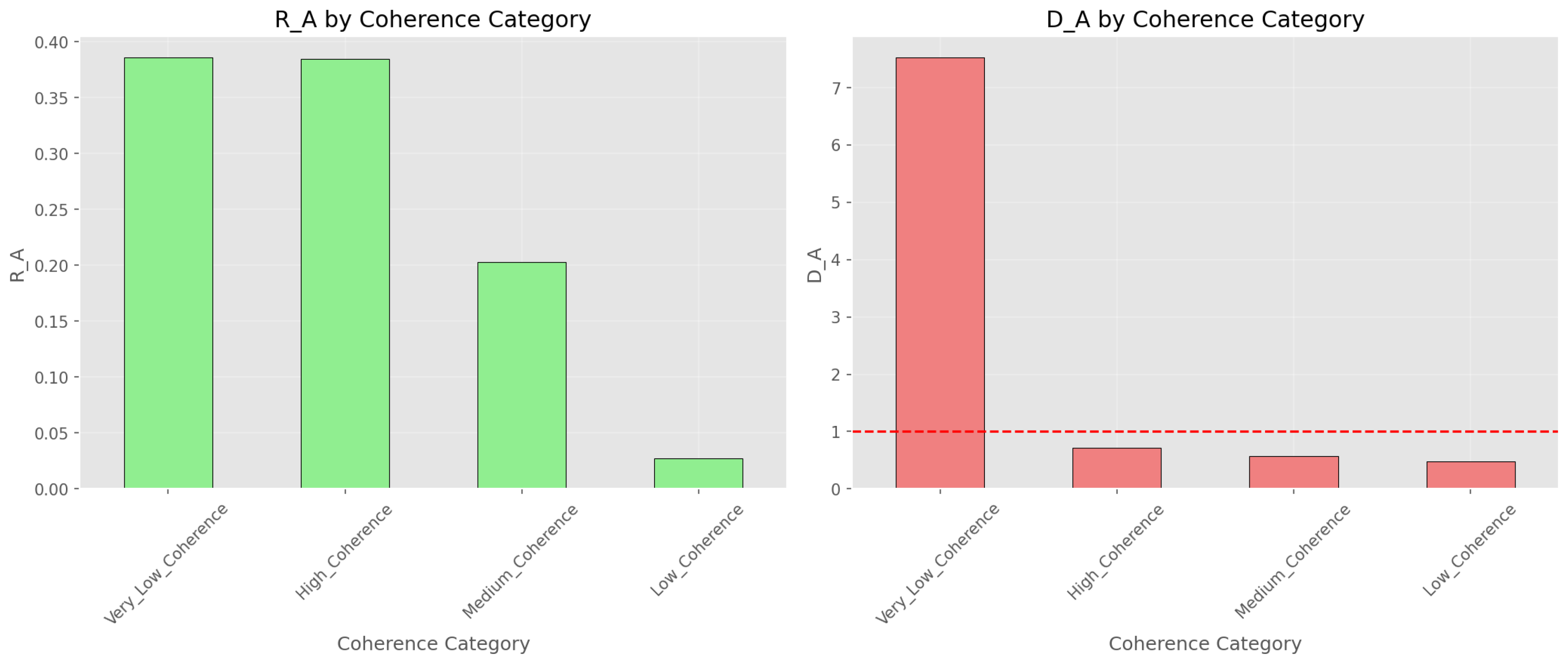

Coherence Classification Results

| Category | Count | Error sum $E_k$ | Contribution $r_k$ | Disproportion $D_k$ |
|---|---|---|---|---|
| Very_Low_Coherence | 8 | 0.5805 | 0.386 | 7.520 |
| High_Coherence | 84 | 0.5786 | 0.384 | 0.714 |
| Medium_Coherence | 55 | 0.3049 | 0.203 | 0.575 |
| Low_Coherence | 9 | 0.0413 | 0.027 | 0.475 |

8.4. Visual Analysis of Qubit Performance

8.5. Correlation-Aware Extension

- Correlation-induced increase: 129%
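For reference, the 129% figure follows directly from the two totals in the executive summary. Here $E_{\text{total}}$ is the independent-error budget and $E_{\text{corr}}$ is the correlation-aware total. The same numbers also give the fraction of the correlation-aware total that an independent-error budget misses:

```latex
\frac{E_{\text{corr}} - E_{\text{total}}}{E_{\text{total}}}
  = \frac{3.449852 - 1.505362}{1.505362}
  = \frac{1.944490}{1.505362} \approx 1.292 \quad (\approx 129\%),
\qquad
1 - \frac{E_{\text{total}}}{E_{\text{corr}}}
  = 1 - \frac{1.505362}{3.449852} \approx 0.564 \quad (\approx 56\%).
```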

8.6. Step 7.3 – Decoder Integration

- Uniform weighting

- Individual weighting

- Category-Correlation weighting

Logical Error Rate Estimates

| Model | Logical error estimate | Description |
|---|---|---|
| Uniform | 0.9312 | Global average error |
| Individual | 0.9312 | Per-qubit errors |
| Category-Correlation | 1.7927 | Category and correlation aware |

8.7. Identification of Critical Qubits

- Readout error: 0.2178

- Appears in multiple high-error couplings (see Figure 8)

- Readout error: 0.2585 (highest in system, see Figure 2 tail)

8.8. Conclusions and Recommendations

- Strong heterogeneity exists: 4% of qubits contribute 42% of total error (Figure 4).

- Ignoring correlations underestimates the total error: the correlation-aware total (3.449852) is 129% larger than the independent-error budget (1.505362) (Figure 8).

- Critical categories (Figure 6):
  - Very_High_Error ($D_k = 10.934$)
  - Very_Low_Coherence ($D_k = 7.520$)
  - D_Very_High_Range (spatial, $D_k = 1.817$)

- Further investigation of the strongly correlated pairs (e.g., 84–85, 85–86, 146–147) is necessary.

- Longitudinal monitoring of the Very_Low_Coherence group is advised.

- Consider circuit remapping to avoid high-error qubits in critical logical paths.

8.9. Final Remarks

Data Availability Statement

Acknowledgments

References

- Bravyi, S.; et al. Mitigating measurement errors in multi-qubit experiments. Phys. Rev. A 2021, 103, 042605.

- Endo, S.; Cai, Z.; Benjamin, S. C.; Yuan, X. Hybrid quantum-classical algorithms and quantum error mitigation. J. Phys. Soc. Jpn. 2021, 90, 032001.

- Fowler, A. G.; Mariantoni, M.; Martinis, J. M.; Cleland, A. N. Surface codes: Towards practical large-scale quantum computation. Phys. Rev. A 2012, 86, 032324.

- Dennis, E.; Kitaev, A.; Landahl, A.; Preskill, J. Topological quantum memory. J. Math. Phys. 2002, 43, 4452–4505.

- Temme, K.; Bravyi, S.; Gambetta, J. M. Error mitigation for short-depth quantum circuits. Phys. Rev. Lett. 2017, 119, 180509.

- Gambetta, J. M.; et al. Characterization of addressability by simultaneous randomized benchmarking. Phys. Rev. Lett. 2012, 109, 240504.

- Chubb, C. T.; Flammia, S. T. Statistical mechanical models for quantum codes with correlated noise. Ann. Inst. Henri Poincaré D 2021, 8, 269–321.

- Google Quantum AI. Exponential suppression of bit or phase flip errors with repetitive error correction. Nature 2021, 595, 383–387.

- Arute, F.; et al. Quantum supremacy using a programmable superconducting processor. Nature 2019, 574, 505–510.

- Kelly, J.; et al. State preservation by repetitive error detection in a superconducting quantum circuit. Nature 2015, 519, 66–69.

- Delfosse, N.; Nickerson, N. H. Almost-linear time decoding algorithm for topological codes. Quantum 2021, 5, 595.

- Proctor, T.; Rudinger, K.; Young, K.; Nielsen, E.; Blume-Kohout, R. Measuring the capabilities of quantum computers. Phys. Rev. Lett. 2022, 128, 230502.

- Chuang, I. L.; Gershenfeld, N.; Kubinec, M. Experimental implementation of fast quantum searching. Phys. Rev. Lett. 1998, 80, 3408–3411.

- Vandersypen, L. M. K.; et al. Experimental realization of Shor's quantum factoring algorithm using nuclear magnetic resonance. Nature 2001, 414, 883–887.

- Clarke, J.; Wilhelm, F. K. Superconducting quantum bits. Nature 2008, 453, 1031–1042.

- DiCarlo, L.; et al. Demonstration of two-qubit algorithms with a superconducting quantum processor. Nature 2009, 460, 240–244.

- Kandala, A.; et al. Hardware-efficient variational quantum eigensolver. Nature 2017, 549, 242–246.

- Murali, P.; et al. Noise-adaptive compiler mappings for NISQ computers. ASPLOS 2019, 1015–1029.

- Linke, N. M.; et al. Experimental comparison of two quantum computing architectures. Proc. Natl. Acad. Sci. USA 2017, 114, 3305–3310.

- Klimov, P. V.; et al. Fluctuations of energy-relaxation times in superconducting qubits. Phys. Rev. Lett. 2018, 121, 090502.

- Havlíček, V.; et al. Supervised learning with quantum-enhanced feature spaces. Nature 2019, 567, 209–212.

- Cross, A. W.; et al. Validating quantum computers using randomized model circuits. Phys. Rev. A 2019, 100, 032328.

- Wack, A.; et al. Quality, Speed, and Scale: Measuring performance of near-term quantum computers. arXiv 2021, arXiv:2110.14108.

- Cerezo, M.; et al. Variational quantum algorithms. Nat. Rev. Phys. 2021, 3, 625–644.

Disclaimer/Publisher's Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).