1. Introduction

The UL plays a fundamental role in human autonomy, as its joints enable the execution of essential movements required for daily activities. Due to its continuous use, the UL is highly susceptible to injuries and pathologies that may compromise motor function, significantly impacting an individual’s independence. Motor disability of the UL primarily arises from musculoskeletal disorders (such as fractures, carpal tunnel syndrome, arthritis, and epicondylitis) and neurological conditions (including stroke, cerebral palsy, spinal cord injury, multiple sclerosis, and Parkinson’s disease) [1,2].

According to the World Health Organization’s Rehabilitation Need Estimator, as of 2021 approximately 440 million prevalent cases of fractures had been reported globally, with nearly 38 million occurring in the Americas. In the case of cerebral palsy, an estimated 63 million individuals were affected worldwide, including 3.7 million in the Americas. Stroke exhibited a global prevalence of 51 million cases, with 1.9 million in the same region. Regarding osteoarthritis, 370 million cases were documented globally, of which 31 million were located in the Americas [3].

Neurodegenerative diseases also represent a significant healthcare burden. Parkinson’s disease affects approximately 5.4 million individuals globally, including 300,000 in the Americas. Similarly, multiple sclerosis accounts for 1.5 million cases worldwide, with 82,000 cases reported in the Americas [3]. Although the WHO Estimator does not provide specific details regarding impairments of the upper limb, several studies have reported that fractures in this region are common across different demographic groups, with the radius and ulna being the most frequently affected bones [4,5,6,7]. Stroke, in turn, is one of the leading causes of functional impairment of the upper limb, with approximately 35% of patients experiencing weakness during the acute phase and 22% presenting with mild to moderate weakness [8]. In the case of pediatric cerebral palsy, 83% of cases involve upper limb impairment, and 69% of those present limitations in manual control [9].

Given the impact of these conditions on the upper limb, rehabilitation plays a crucial role in restoring patients’ independence and quality of life [10]. Therapeutic interventions focus on recovering movement, coordination, and strength through guided repetitive exercises during physical therapy sessions [11]. However, conventional rehabilitation faces challenges such as the lack of patient engagement and motivation due to the monotonous nature of therapy sessions [12], highlighting the need for appropriate strategies that promote active participation and foster patient interest in the rehabilitation process.

In the medical field, Virtual Reality (VR) is used to reduce costs and enhance quality across various applications, including diagnosis [13], education [14,15,16,17,18], rehabilitation [19,20], and telemedicine [21]. Among these, rehabilitation has emerged as one of the most prominent areas of application, second only to education. Within the context of rehabilitation, VR has proven to be a valuable tool in motor rehabilitation, offering interactive environments that promote the repetitive execution of therapeutic exercises in a more engaging and motivating manner. In particular, the integration of SG into VR-based rehabilitation has proven especially effective. By incorporating gamification elements that stimulate interest and commitment, SG not only enhances the overall user experience but also improves treatment adherence. For instance, their implementation has been associated with improved UL function and increased patient participation [22].

On the other hand, the design of SG aimed at UL rehabilitation must consider the specific movements and motor skills targeted during therapy. In this regard, Kai-Lun Liao et al. [23] developed three mini-games, each focused on a particular type of UL movement. The purpose of this system is to encourage patient engagement through the performance of interactive tasks that promote treatment adherence. Moreover, the authors implemented a user-centered design approach, which is essential to identify and address the specific requirements of the target population. This strategy enables the customization of virtual environment (VE) content, the adaptation of game mechanics, and the delivery of meaningful feedback, thereby meeting therapeutic needs without compromising the quality of the user experience [24,25].

In addition, natural interaction devices serve as key tools for integrating VR and SG into upper limb rehabilitation. Natural User Interfaces enable intuitive interpretation of human body movements through depth sensors and specialized cameras, as found in devices such as the Kinect and LMC [26]. These technologies capture the user’s natural motion, facilitating smoother interaction with VE specifically designed for therapeutic rehabilitation.

The integration of the LMC into rehabilitation systems has shown multiple benefits, particularly in improving the accuracy of upper limb gesture acquisition and enhancing user immersion during motor tasks [27,28]. For example, Ángela Aguilera-Rubio et al. [27] developed a virtual reality intervention protocol based on activities of daily living, supplemented with conventional rehabilitation exercises. In this context, the LMC was employed as the primary interaction device to promote motor function recovery in patients with post-stroke sequelae. The results of these studies underscore the feasibility and therapeutic potential of the LMC as a tool for VR-based rehabilitation.

In recent years, multiple SG have been specifically designed for upper limb rehabilitation using the LMC. These developments aim to improve motor skills through the controlled execution of targeted movements, offering an engaging and complementary alternative to traditional physical therapy. For example, Cuesta-Gómez et al. [29] developed a game composed of six interactive scenarios to enhance coordination and dexterity in patients with multiple sclerosis. These scenarios replicate conventional exercises (such as wrist flexion and extension) within an immersive VE that fosters patient engagement.

Similarly, Cabrera Hidalgo et al. [30] developed a video game using the Unity graphics engine, focused on enhancing hand–eye coordination and fine motor skills in children. The high precision of the LMC sensor enabled detailed tracking of phalangeal movements, as well as palm and wrist positioning, thus providing enriched motor feedback. Another work is the RehabHand system, proposed by A. de los Reyes-Guzmán et al. [28], which comprises seven VR applications designed to stimulate manipulative skills in patients with spinal cord injuries. These applications enable the execution of motor tasks through precise, real-time tracking of hand movements captured by the LMC.

On the other hand, adopting a playful-musical approach, Shah et al. [31] developed a VE inspired by the video game Guitar Hero, aimed at improving fine motor skills of the upper limb. The game engages patients through music playback, during which musical notes appear and must be reached using specific gestures. The system recognizes movements such as wrist extension and hand opening, using the initial position of the fist as a reference point. Additionally, it incorporates rhythmic auditory feedback to reinforce the association between stimuli and motor responses. Prior to each session, an individualized calibration process is conducted to adapt the system to the patient’s range of motion, thereby ensuring a personalized and therapeutically effective experience.

For their part, Mukhopadhyay et al. [32] proposed a series of interactive games combining the use of Kinect and Leap Motion Controller to enhance upper limb movement coordination and precision. These games aim to transform repetitive exercises into engaging activities, reducing monotony and promoting treatment adherence. Finally, Maldonado et al. [33] developed a serious game using Oculus Rift and Leap Motion Controller, designed for the rehabilitation of children with cerebral palsy. The game consists of a VE in which patients must identify and hit objects by launching virtual balls from their hands. During each session, the system records detailed performance metrics, including the number of throws, movement accuracy, and total activity time. These data allow therapists to individually monitor patient progress and dynamically adjust therapeutic strategies.

These studies highlight the effectiveness of the LMC as a natural interaction device in the design of serious games for upper limb rehabilitation. Its ability to accurately capture hand movements, combined with its integration into virtual environments, enables the development of innovative, personalized, and engaging therapeutic experiences.

Although some of the aforementioned developments incorporate immersive VR, most opt for desktop VR systems, where interaction primarily occurs through a monitor. This choice aims to mitigate side effects commonly associated with immersive VR, such as fatigue, nausea, and dizziness, which may arise during or after use and, in some cases, persist for days or even weeks, potentially hindering the adoption of this technology [34,35].

However, desktop VR lacks the ability to provide peripheral imagery, as visualization is restricted to the screen width. As a result, this limitation can affect spatial correspondence, i.e., the relationship between the user’s physical movements and their representation within the VE. This correspondence is essential to ensure that real-world actions are accurately reflected in the virtual world, enabling more natural and effective interaction.

It has been demonstrated that haptic feedback enhances the sense of immersion in virtual environments. This type of feedback is generally classified into two main categories: kinesthetic and tactile. Kinesthetic feedback provides information regarding the user’s body position and movement, with force feedback being one of its most common applications [36].

Tactile feedback, on the other hand, can be divided into three subcategories: electrotactile, thermal, and vibrotactile. Vibrotactile feedback employs vibratory actuators or motors in contact with the user’s skin to deliver sensory information. Since electrotactile and thermal feedback can potentially cause irritation or discomfort, vibrotactile feedback is the most widely used in VR applications [37].

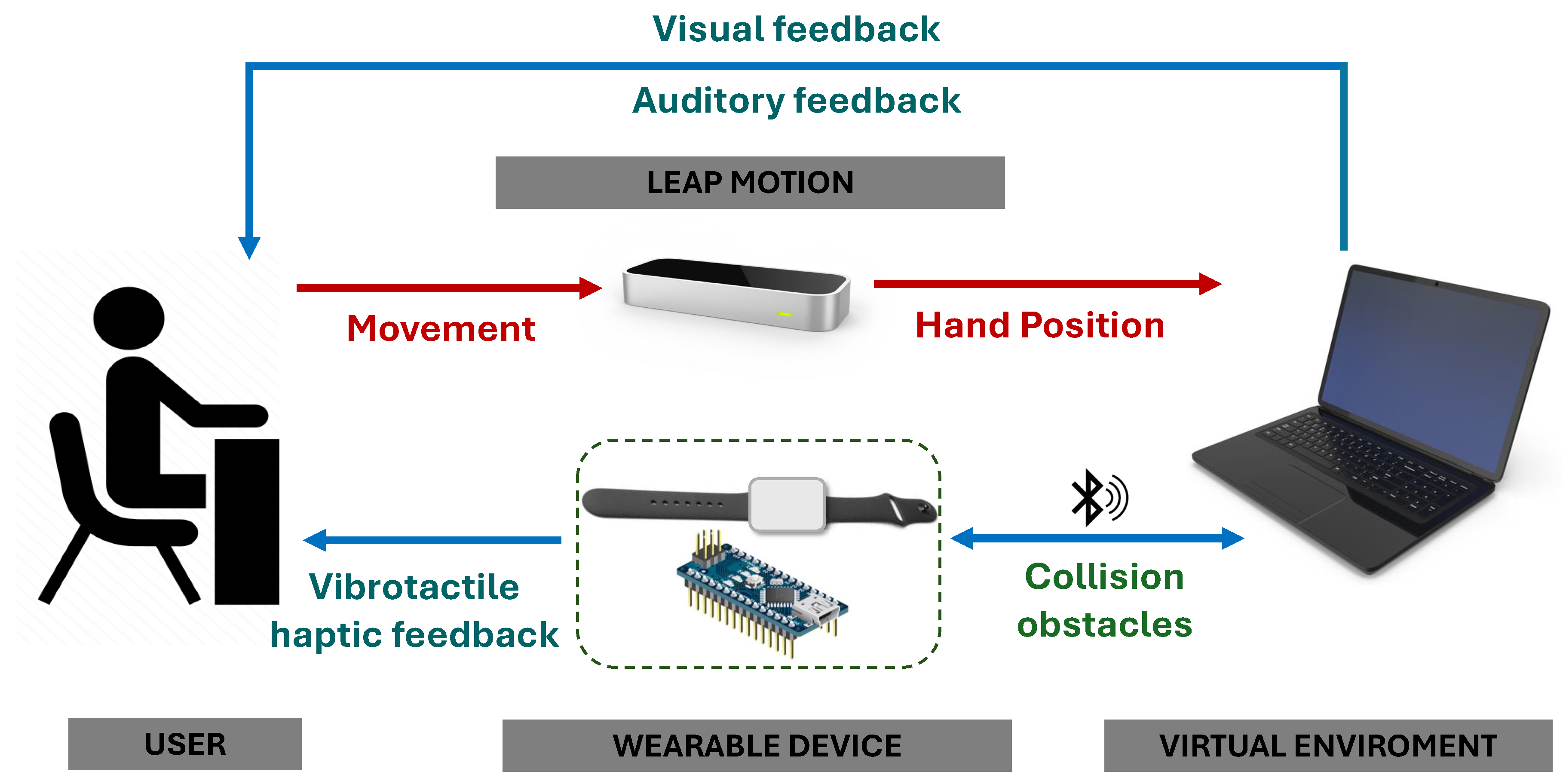

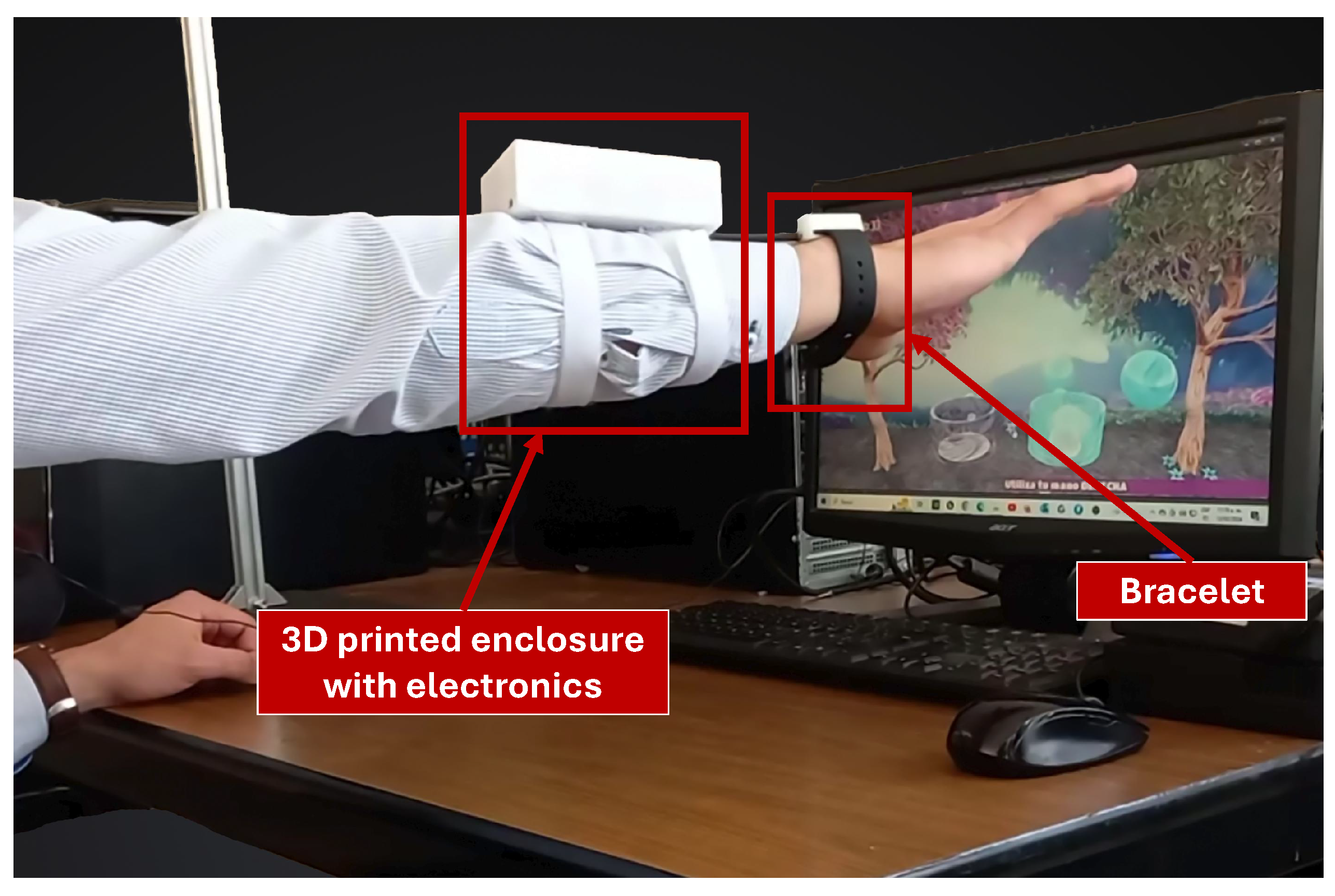

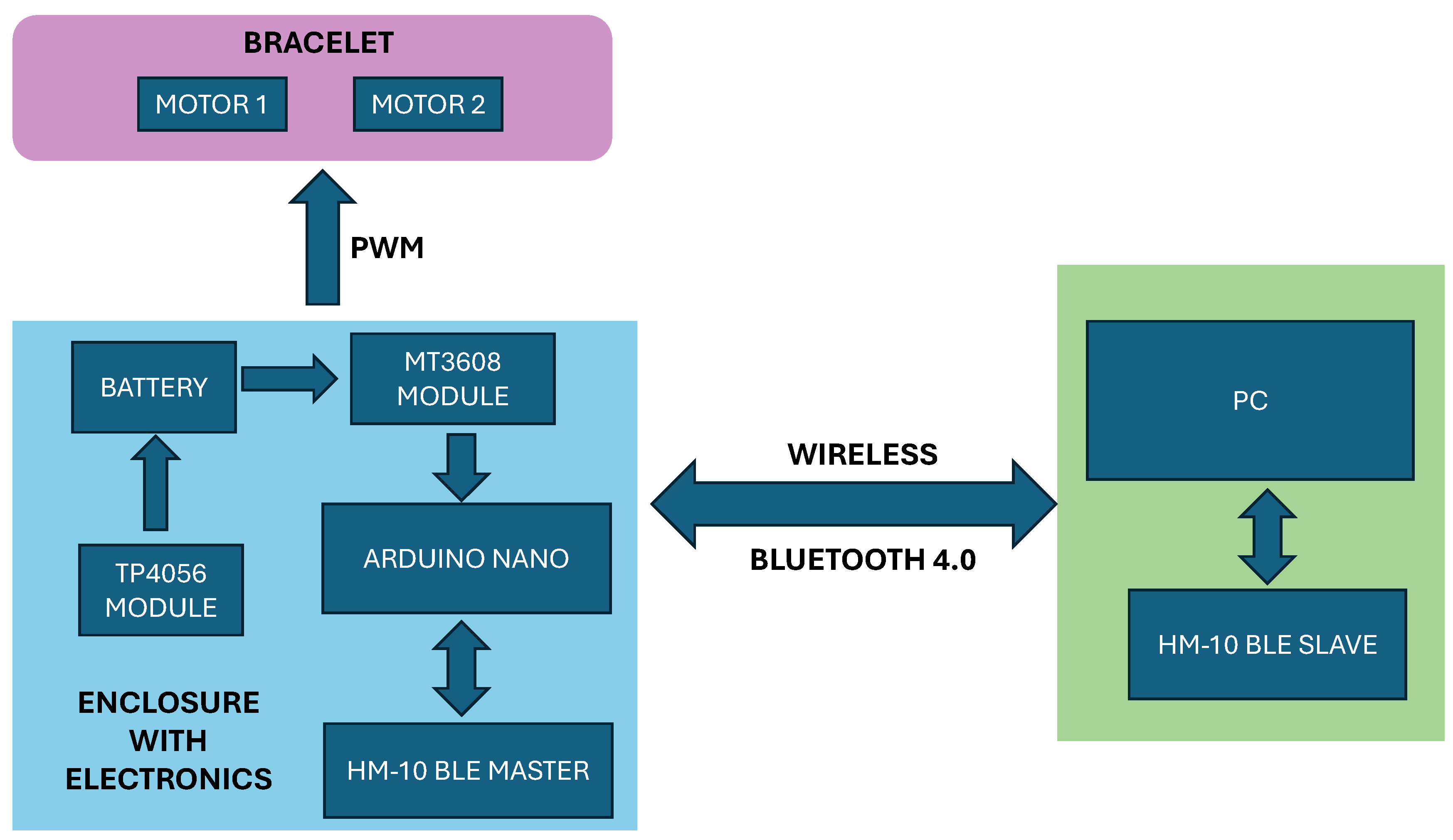

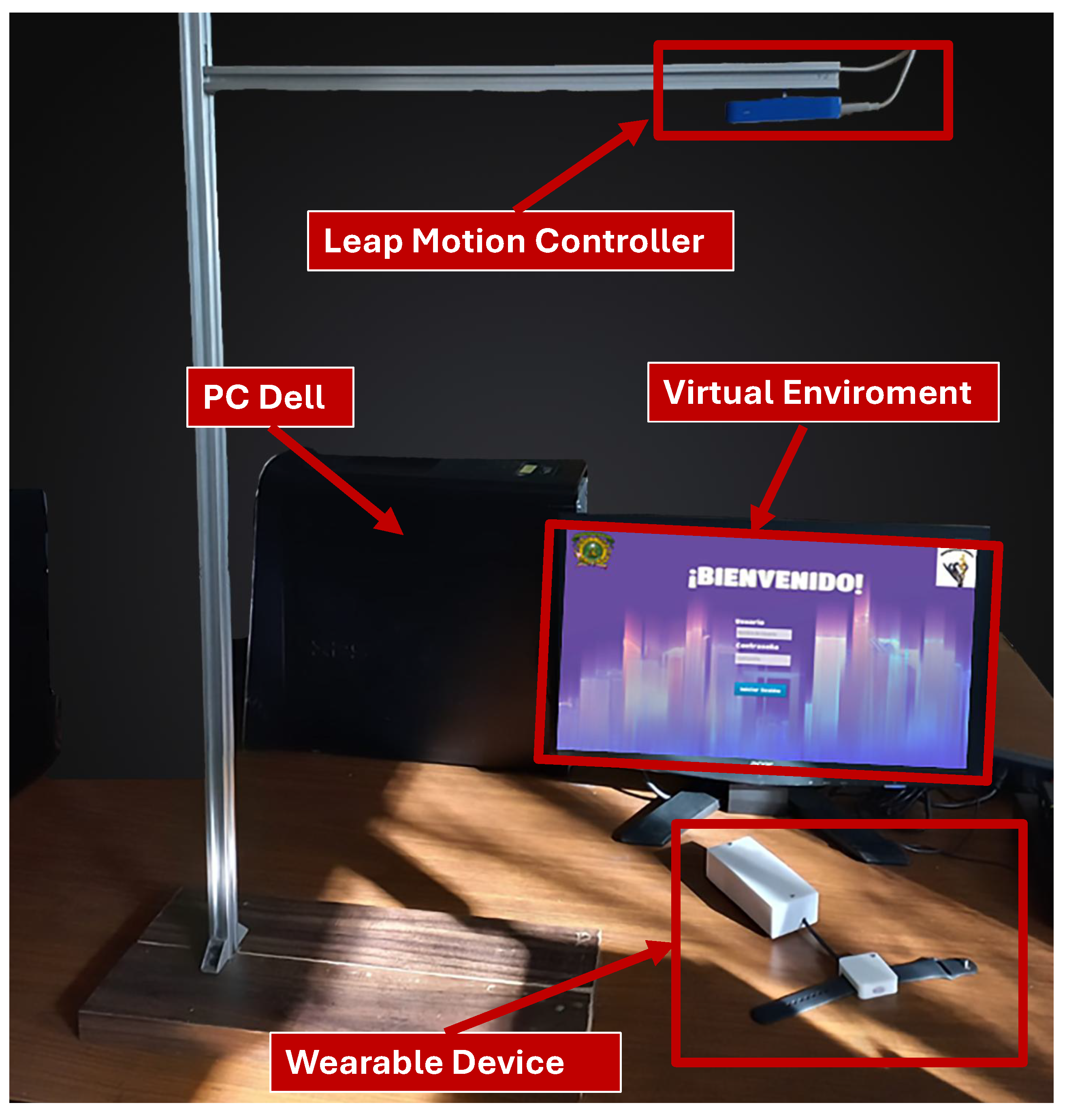

This work presents the development of an upper limb rehabilitation system that integrates a virtual reality tool based on SG, an optical hand-tracking device (the LMC), and a custom wearable device with vibrotactile feedback. This integration enables users to perform closed kinematic chain movements, which contributes to improving spatial correspondence within the VE. Moreover, the environment was designed following User-Centered Design principles, allowing for the creation of a first functional prototype of the system, including both the VE and the vibrotactile device. Subsequently, a preliminary evaluation was conducted with healthy users, focusing on functionality, usability, and mental workload.

It is worth mentioning that, unlike previous developments lacking haptic feedback, such as those proposed by Aguilera-Rubio et al. [27], De los Reyes-Guzmán et al. [28], Shah et al. [31], and Mukhopadhyay et al. [32], this work implements vibrotactile haptic feedback with the aim of enhancing user interaction with the VE by enabling the execution of closed kinematic chain movements and improving the spatial correspondence between real and virtual actions.

This article is organized as follows:

Section 2 presents the materials and methods used for the development of the VR system, including the collection of user requirements, the design of virtual scenarios, and the development and integration of the custom wearable device.

Section 3 describes the system evaluation protocol with preliminary experiments.

Section 4 reports the results related to user experience, system usability, and mental workload.

Section 5 discusses the findings and conclusions of the study.

3. Preliminary Experiments

To evaluate the system’s performance, 26 healthy participants aged 18 to 45 years were recruited and divided into two groups. In Group 1, 15 participants used the system without the wearable device; however, only 13 assessments were considered valid after two were discarded due to factors that affected performance during testing, such as technical errors. Similarly, in Group 2, where the complete system (Leap Motion Controller with wearable device) was used, two of the 11 assessments were excluded, leaving 9 valid assessments.

3.1. Evaluation Protocol Description

Since this project required evaluating the user experience of healthy participants, this stage was classified as minimal-risk research and followed the principles established in the Declaration of Helsinki and the Regulations of the General Health Law on Health Research (Title Two: Ethical Aspects of Research in Human Subjects, Chapter I, Article 17). Moreover, the authors submitted the protocol, which was approved by the Research Ethics Committee of the Faculty of Medicine, Autonomous University of the State of Mexico, under registration number 008.2025 (see Appendix A).

It is worth mentioning that participants with a history of upper limb injuries were excluded. The entire session, from the initial explanation to the completion of the final part of the questionnaire, lasted approximately 40 minutes. Moreover, the session was divided into two parts: one using the dominant hand and the other using the non-dominant hand. In the first part, which lasted about 19 minutes, the participant played all levels of each scenario. In the second part, which lasted approximately 2 minutes, the participant played only the final level of the Fish scenario.

Prior to participation, all individuals were first informed about the duration of the test, the study’s objectives, and the content of each session. They received a comprehensive informed consent form detailing the objectives of the research project, the interaction process with the VE, the testing procedure, and the confidentiality of data, which would be used exclusively for research purposes. If they agreed to participate, they signed the form.

To ensure clear understanding, an explanatory video (available via the video link) was provided, illustrating the system’s functionality and demonstrating user interaction with the VE, including a general overview of the scenarios, levels, exercises, and mechanics involved.

Afterwards, a questionnaire consisting of three sections was designed to collect participant feedback. Prior to starting the first experimental session, participants were asked to complete the first section of the questionnaire. The remaining sections were administered during the experiment, as described below. The three sections of the questionnaire are described as follows:

The first section (given before starting the test) collects demographic information from the participant, including their level of computer proficiency, experience with video games and VR, as well as prior use of optical motion trackers. This data enables an analysis of whether these variables are associated with user performance in the VE.

The second section (given after each scenario during the test) consists of a table with six questions to assess the mental workload of each scenario using the NASA Task Load Index (NASA TLX). The dimensions evaluated are: mental demand, physical demand, temporal demand, performance, effort, and frustration level. The first three relate to the task’s imposed workload, while the latter three pertain to the user’s interaction with the task. Each dimension is rated on a scale from 1 to 100, where 1 indicates a very low level and 100 a very high level. The Raw NASA TLX version [40] was used, as it allows for comparison of individual dimensions without assigning weights, thus avoiding the loss of relevant information. Additionally, as supported in [41], this version facilitates simpler application and contributes to more accurate responses.


The final section (administered at the end of the test) explores the user’s experience with the system. It comprises four subsections: the first assesses the user’s interest in the system as well as its aesthetic and pragmatic qualities through the short version of the AttrakDiff questionnaire, consisting of 10 pairs of opposing adjectives selected according to the user’s perception.

The second subsection includes 10 questions based on the SUS scale [42] to assess system usability. Additionally, the authors included questions and statements (Table 5) to evaluate aspects such as physical discomfort, enjoyment of the scenarios, and the information provided by the VE.

The remaining two subsections aim to identify specific errors encountered during the tests, whether within the VE or while using the wearable device, and to recognize opportunities for improvement.
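As an illustration of how the Raw NASA TLX ratings described in the second section can be aggregated, the following minimal sketch computes an unweighted overall workload as the plain mean of the six dimension ratings. The function name, dictionary keys, and example ratings are hypothetical and do not come from the study; only the six dimensions and the 1 to 100 scale are taken from the text.

```python
from statistics import mean

# The six NASA TLX dimensions named in the text (key names are illustrative).
DIMENSIONS = (
    "mental", "physical", "temporal",
    "performance", "effort", "frustration",
)


def raw_tlx(ratings: dict) -> float:
    """Return the unweighted (Raw TLX) workload: the mean of the six ratings."""
    missing = [d for d in DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for d in DIMENSIONS:
        # The questionnaire described in the text uses a 1-100 scale.
        if not 1 <= ratings[d] <= 100:
            raise ValueError(f"{d} rating must be between 1 and 100")
    return mean(ratings[d] for d in DIMENSIONS)


# Hypothetical ratings for one participant after one scenario.
example = {
    "mental": 40, "physical": 60, "temporal": 50,
    "performance": 55, "effort": 65, "frustration": 30,
}
overall = raw_tlx(example)
print(overall)
```

Because no pairwise weighting step is involved, each dimension can also be reported and compared on its own, which is the property the text attributes to the Raw version.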

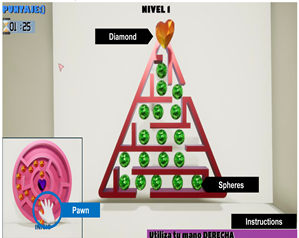

Before starting the session, participants were given time to familiarize themselves with the system using the Bubbles scenario. The duration of this familiarization phase depended on each participant. The goal was to ensure proper hand placement under the LMC device, help them identify the interaction area, and observe how their movements were represented in the virtual environment. This phase was not timed to create a relaxed, pressure-free atmosphere.

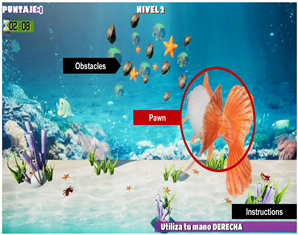

Next, the first part of the session began, using the participant’s dominant hand with the Bubbles, Maze, and Fish scenarios, in that order. After completing the third level of each scenario, a brief pause was taken to complete the second section of the questionnaire. Then, the tutorial for the next scenario was shown before continuing.

For the second part, the researcher reconfigured the system to enable control with the non-dominant hand and selected the “By game and level” mode, choosing the third level of the Fish scenario. Upon completion, participants were asked to complete the third and final section of the questionnaire.

3.2. Experimental Testing

Functionality tests were conducted using two groups. The first group used the system without the integration of the wearable device, while the second group tested the complete system. Furthermore, computer proficiency was assessed using a self-reported scale ranging from 0 (low experience) to 10 (high experience). This information was used to characterize participants’ technical background in both experimental groups.

1. Group 1 (System without Wearable Device): This group consisted of 6 men and 7 women. Regarding educational background, 8 participants were undergraduate students, 4 were master’s students, and 1 was a PhD student.

Based on the data collected in the first section of the demographic questionnaire, participants rated their computer proficiency as follows: two rated themselves as 9, one as 8.5, six as 8, three as 7, and one as 6. Weekly computer usage was reported as follows: four users between 1 and 10 hours, two between 11 and 20 hours, four between 21 and 30 hours, and three for more than 40 hours per week.

Regarding video game usage, six participants reported playing seldom, three occasionally, and four frequently. Finally, eight participants were familiar with VR and had previously used a VR system. One participant was familiar with the LMC; in contrast, five participants reported knowledge of the Kinect sensor.

2. Group 2 (Complete System): This group included 4 men and 5 women. Regarding educational background, six participants had completed high school, two held a bachelor’s degree, and one held a master’s degree.

Based on the data collected in the first section of the demographic questionnaire, participants rated their computer proficiency as follows: two rated themselves as 9, two as 8, one as 7, and four as 6. Weekly computer usage was distributed as follows: two participants reported between 10 and 20 hours, three between 21 and 30 hours, three between 31 and 40 hours, one between 41 and 50 hours, and one for 60 hours per week.

Regarding video game usage, one participant did not play video games, three played seldom, two played occasionally, and three played always. Lastly, eight participants were familiar with VR, and five of them had previously used a VR system. No participants were aware of the LMC or had previously used it; in contrast, six had interacted with the Kinect sensor.

4. Analysis of Results

To assess the subjective perception of the VR system, participants completed the short AttrakDiff questionnaire, which evaluates both pragmatic and hedonic qualities of interactive products. Also, to evaluate the cognitive and physical demands associated with each VR scenario, NASA-TLX was employed, which captures perceived workload across six dimensions. Finally, system usability was evaluated with the System Usability Scale, providing a global score of ease of use, learnability, and user satisfaction associated with the system.

4.1. User Experience with the AttrakDiff-Short Questionnaire

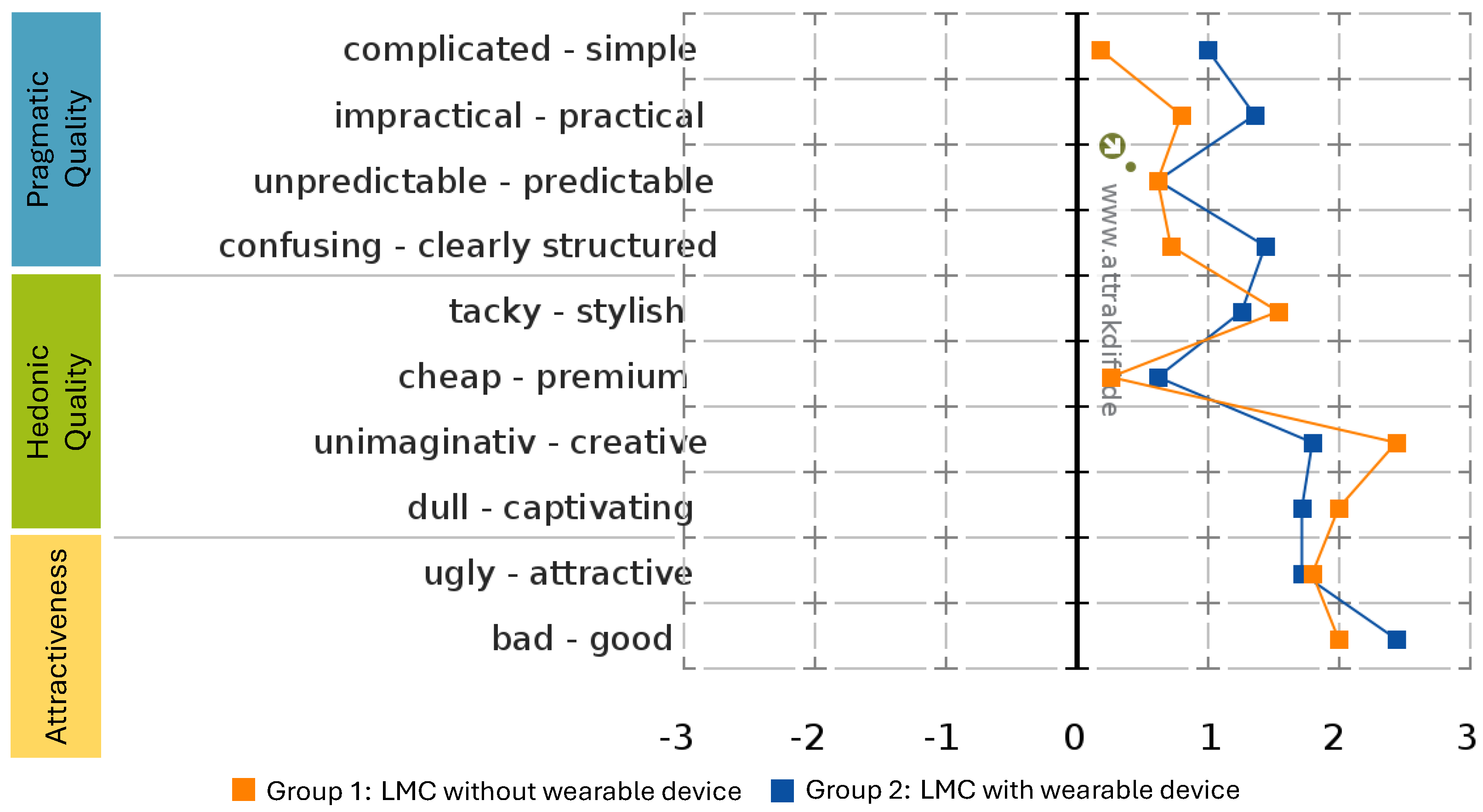

Figure 5 shows the results of the AttrakDiff questionnaire, comparing user perceptions of the VR system with and without the wearable device. Overall, both system configurations obtained positive values across most word pairs, indicating that the VR system was generally perceived as usable and acceptable, even without the wearable device. This suggests that the Leap Motion–based interaction alone provides a functional and understandable interface for upper limb rehabilitation tasks.

However, the system with the wearable device (Group 2) consistently achieved higher positive scores across all evaluated dimensions. In particular, it was perceived as simpler, more practical, and more clearly structured, reflecting strong pragmatic quality. Additionally, it stood out in hedonic aspects, being described as more premium. In terms of overall appeal, users rated it closer to the good pole than the version without the device.

In contrast, the system without the wearable device (Group 1), although positively evaluated, received comparatively lower ratings and occasionally approached negative perceptions such as cheap, uncreative, or complicated. These findings indicate that, while both systems are functional, the integration of the wearable device not only enhances system functionality but also significantly enriches the user’s subjective experience by improving engagement, perceived quality, spatial correspondence, and interaction realism, making the system more intuitive and attractive.

Based on the results shown in

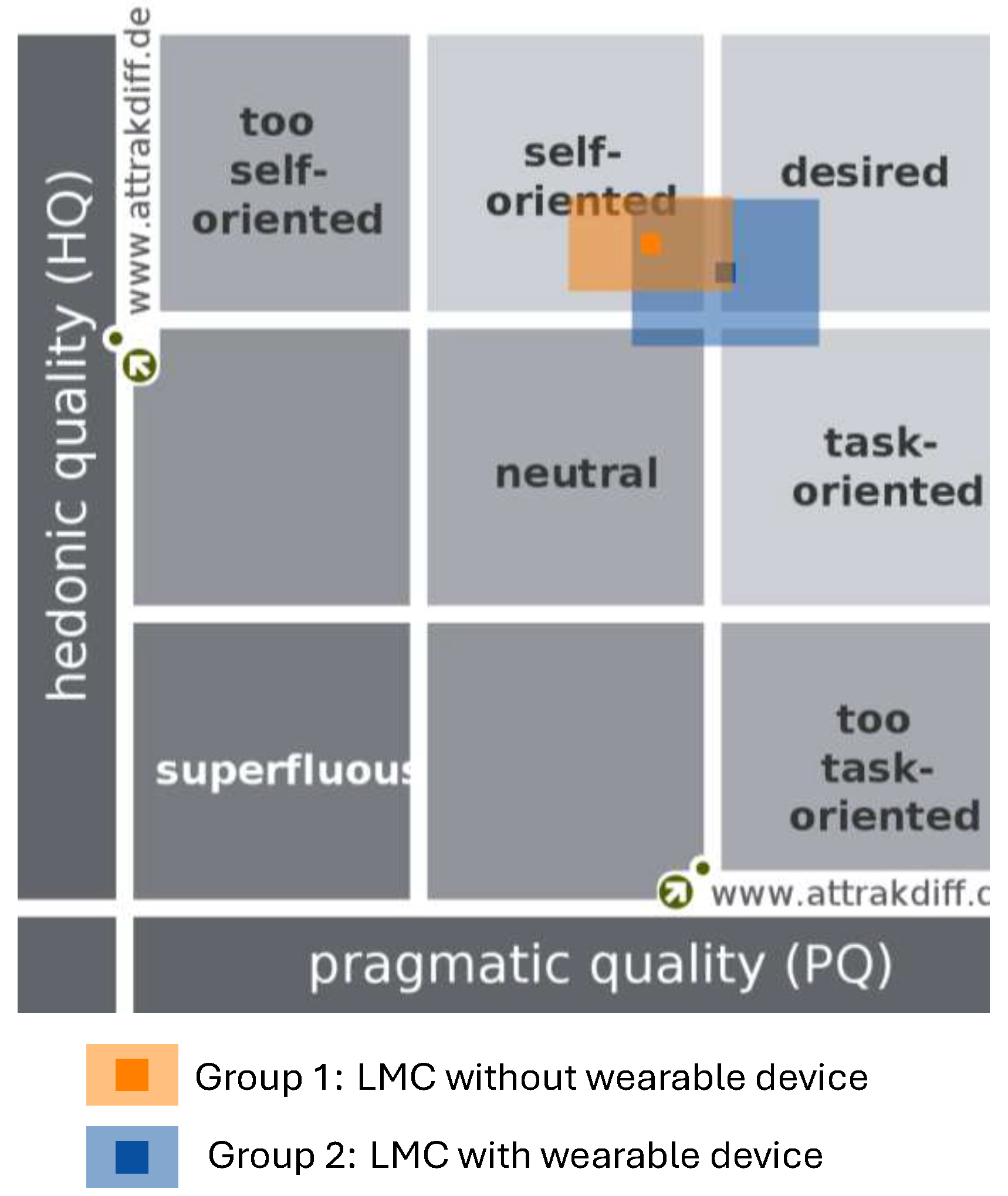

Figure 5, the system type representation presented in

Figure 6 was obtained. The VR system with the wearable device (represented in blue) was positioned within the

desired region, indicating that users perceived it as both highly functional and attractive. This positioning reflects an optimal balance between pragmatic quality (task effectiveness) and hedonic quality (user engagement), which characterizes an ideal user experience.

In contrast, the VR system without the wearable device (represented in orange) was located in the self-oriented region. Although this system was generally perceived as functional, its position suggests a less engaging experience, mainly limited by reduced pragmatic quality. Consequently, further improvements in task support and interaction effectiveness are required for the system to reach the desired region, which, according to the results, can be achieved through the integration of the wearable device.
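The pragmatic and hedonic qualities discussed above are obtained by averaging the ratings of the word pairs that belong to each scale. The sketch below illustrates that averaging step only. It assumes the usual short-form layout of four pragmatic items, four hedonic items, and two attractiveness items rated from -3 to +3; the item keys, the grouping, and the example ratings are illustrative assumptions, not values or definitions taken from this study.

```python
from statistics import mean

# Assumed grouping of the 10 word pairs (keyed by their positive pole).
SCALES = {
    "PQ": ("simple", "practical", "predictable", "clearly_structured"),
    "HQ": ("stylish", "premium", "creative", "captivating"),
    "ATT": ("attractive", "good"),
}


def attrakdiff_short(ratings: dict) -> dict:
    """Average the item ratings of each scale (assumed range: -3 to +3)."""
    for item, value in ratings.items():
        if not -3 <= value <= 3:
            raise ValueError(f"{item} rating must be between -3 and 3")
    return {
        scale: mean(ratings[item] for item in items)
        for scale, items in SCALES.items()
    }


# Hypothetical ratings from a single participant.
example = {
    "simple": 2, "practical": 2, "predictable": 1, "clearly_structured": 3,
    "stylish": 1, "premium": 2, "creative": 1, "captivating": 0,
    "attractive": 2, "good": 3,
}
scores = attrakdiff_short(example)
print(scores)
```

Plotting the group-level PQ mean against the HQ mean gives the kind of two-axis placement that Figure 6 reports; the region boundaries themselves are not reproduced here.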

4.2. NASA-TLX Workload

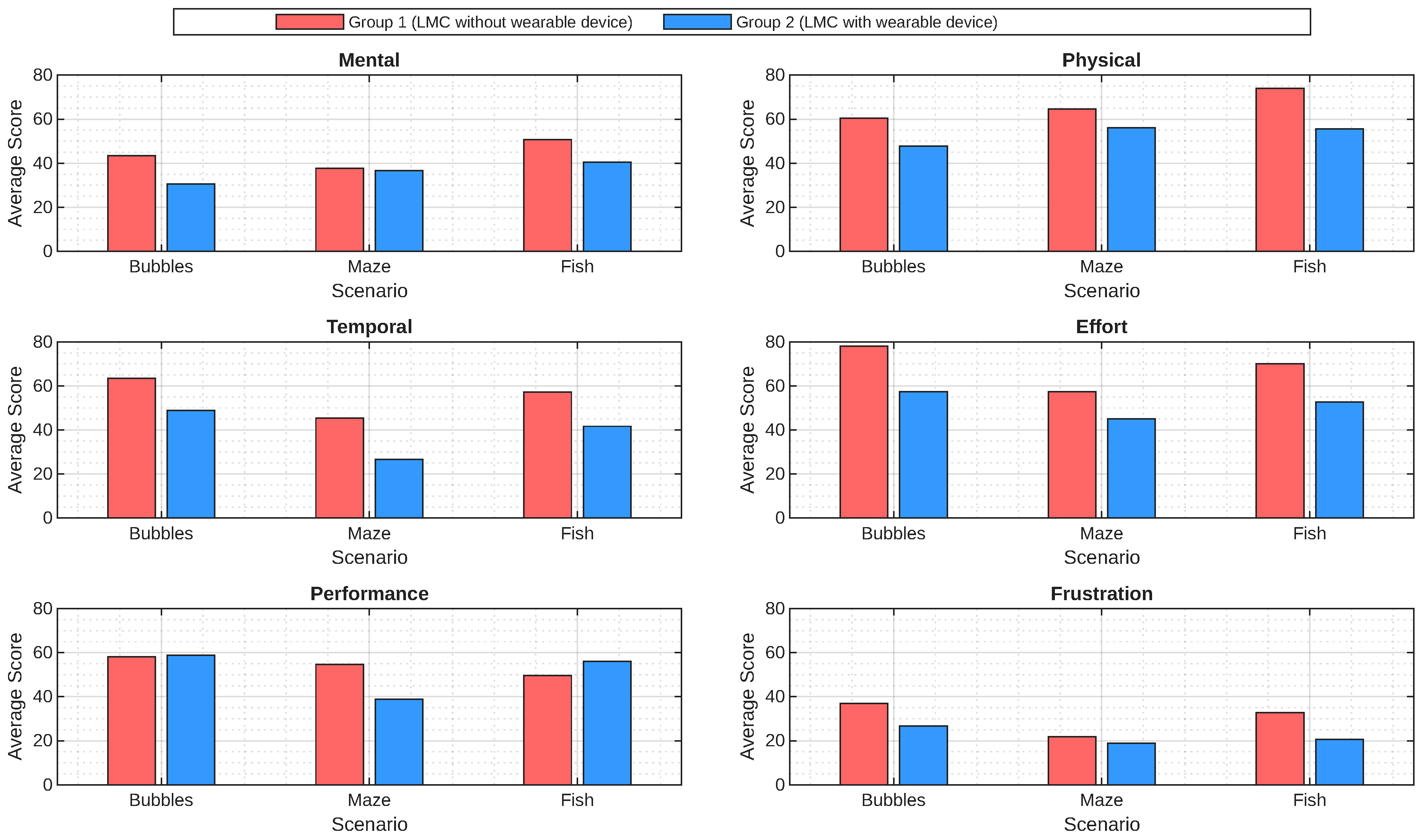

Figure 7 presents the average scores obtained for each dimension across the three virtual environment (VE) scenarios for both Group 1 and Group 2. These results allow for the evaluation of the cognitive and physical demands associated with each VR scenario.

Regarding the Mental demand dimension, Group 1 reported average scores of 43.46, 37.69, and 50.76 in the Bubbles, Maze, and Fish scenarios, respectively, whereas Group 2 obtained values of 30.55 in Bubbles, 36.66 in Maze, and 40.55 in Fish. Group 1 therefore reported a clearly higher mental workload in the first and third scenarios, while only a slight decrease is observed in the Maze scenario when the wearable device is used. According to previous studies, scores between 30 and 50 are considered suitable for medium-complexity tasks that require sustained attention while avoiding mental fatigue [43,44,45].

It is worth mentioning that keeping a low mental demand is particularly important in rehabilitation tasks, especially during the early learning phase, as high mental demand in VR applications can compromise motor learning [46].

In the Physical demand dimension, Group 1 reported average scores of 60.38 in Bubbles, 64.61 in Maze, and 74.07 in Fish, whereas Group 2 showed lower scores across all scenarios, with values of 47.77, 56.11, and 55.55 for the Bubbles, Maze, and Fish scenarios, respectively. These results indicate a higher perceived level of physical exertion in Group 1 compared with Group 2. The values obtained in the Maze scenario indicate higher physical demand, associated with the involvement of multiple joints (shoulder, elbow, and phalanges), as well as with the wide range of the interaction area, which allows the execution of movements with greater amplitude. Similarly, the Fish scenario, which demands precise and controlled movements, was associated with higher perceived physical demand in Group 1 when controlling the pawn (represented by the fish). In contrast, Group 2 reported lower physical demand in this scenario when using the wearable device with vibrotactile feedback, which was associated with fewer compensatory movement patterns related to physical fatigue.

Regarding Temporal demand, Group 1 showed average scores of 63.53 in Bubbles, 45.38 in Maze, and 57.15 in Fish, whereas Group 2 obtained values of 48.88, 26.66, and 41.66 in the Bubbles, Maze, and Fish scenarios, respectively.

Lower temporal demand values were consistently observed in Group 2 across all three scenarios. It is worth noting that the average scores obtained in the Maze scenario were lower for both groups compared with the other two scenarios, as level progression time depends on the speed at which the user performs the task. In contrast, in the Bubbles and Fish scenarios, level progression time is predetermined. According to [47], Temporal demand typically ranges from 14 to 61.5 and increases with task speed and resistance. Lower levels of urgency or time pressure have been shown to facilitate sustained engagement with the activity, which is particularly beneficial in rehabilitation contexts, as it reduces the likelihood of disengagement and supports task completion.

The results for the Effort dimension indicate that Group 1 consistently perceived a higher level of effort than Group 2 across all scenarios. In the Fish and Bubbles scenarios, Group 1 reported high effort levels (70 and 78.07, respectively), whereas Group 2 reported moderate values (45 and 52.77, respectively). A similar trend was observed in the Maze scenario, where Group 1 reported higher effort (57.46) than Group 2 (45.55), although both groups remained within a moderate range. Overall, these findings suggest that the use of the wearable device was associated with a reduction in perceived effort, potentially indicating a more efficient interaction with the virtual environment.
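To make the comparisons for the Mental, Physical, Temporal, and Effort dimensions easier to check, the following sketch tabulates the group means quoted in the text and computes the Group 1 minus Group 2 difference for each scenario. The data structure and function name are illustrative; the numbers are the averages reported above, and no statistical test is implied.

```python
# Mean ratings as quoted in the text, ordered (Bubbles, Maze, Fish).
SCENARIOS = ("Bubbles", "Maze", "Fish")
REPORTED = {
    "Mental": {"Group 1": (43.46, 37.69, 50.76), "Group 2": (30.55, 36.66, 40.55)},
    "Physical": {"Group 1": (60.38, 64.61, 74.07), "Group 2": (47.77, 56.11, 55.55)},
    "Temporal": {"Group 1": (63.53, 45.38, 57.15), "Group 2": (48.88, 26.66, 41.66)},
    "Effort": {"Group 1": (78.07, 57.46, 70.0), "Group 2": (52.77, 45.55, 45.0)},
}


def group_differences(reported: dict) -> dict:
    """Return Group 1 minus Group 2 for every dimension and scenario."""
    diffs = {}
    for dimension, groups in reported.items():
        diffs[dimension] = {
            scenario: round(g1 - g2, 2)
            for scenario, g1, g2 in zip(
                SCENARIOS, groups["Group 1"], groups["Group 2"]
            )
        }
    return diffs


DIFFS = group_differences(REPORTED)
for dimension, per_scenario in DIFFS.items():
    print(dimension, per_scenario)
```

A positive difference means that Group 1 (without the wearable device) reported the higher average rating for that dimension and scenario.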

For the Performance dimension, the results indicate comparable perceived performance between both groups in the Bubbles and Fish scenarios. In Bubbles, Group 1 obtained an average score of 58.07, while Group 2 scored 58.88, showing nearly identical perceptions of task success. Similarly, in the Fish scenario, both groups reported close values, with Group 1 scoring 49.61 and Group 2 scoring 56.11. In contrast, a more pronounced difference was observed in the Maze scenario, where Group 1 reported an average score of 54.61 and Group 2 obtained a lower score of 38.88. This dimension reflects how successful participants felt when completing the tasks; values closer to zero indicate a higher perception of performance, whereas values approaching 100 represent poorer perceived performance. These results therefore suggest that participants in Group 2 perceived their task execution in the Maze scenario as more efficient. According to [41], intermediate scores such as those observed in this study indicate that participants did not perceive complete success, but also did not experience a strong sense of failure.

In this context, the relatively higher Performance scores observed in Group 2 in the Bubbles and Fish scenarios indicate a less favorable perception of task performance, despite successful task completion. This outcome may be related to the subjective nature of the Performance dimension, as well as to the time-constrained execution characterizing these scenarios, which may increase participants’ sensitivity to minor errors or deviations and influence their self-assessment of performance.

Finally, for the Frustration dimension, the results reveal a differentiated pattern across scenarios. Lower levels of frustration were observed in Group 2 for the Bubbles (26.66 vs. 36.92 in Group 1) and Fish (20.55 vs. 32.84 in Group 1) scenarios. These results suggest that the integration of the wearable device contributed to reducing negative emotional responses during interaction, likely by improving movement guidance, spatial correspondence, and feedback clarity. In contrast, in the Maze scenario, Group 1 reported lower frustration levels (21.92) compared to Group 2 (30.9). This difference may be associated with the higher cognitive and motor coordination demands imposed by the Maze task, where the additional vibrotactile feedback could have increased attentional load or task awareness in Group 2, leading to a higher perception of frustration despite improved performance.

Overall, the results indicate that participants in Group 2 experienced lower frustration in two out of the three evaluated scenarios, even in cases where perceived performance was not consistently higher. This pattern suggests greater tolerance to task difficulty and reduced self-imposed pressure when interacting with the complete system. Conversely, Group 1, although reporting comparable or better perceived performance in some scenarios, exhibited higher frustration levels, which may be related to increased expectations, reduced sensory feedback, or greater sensitivity to errors during task execution.

4.3. System Usability Scale (SUS)

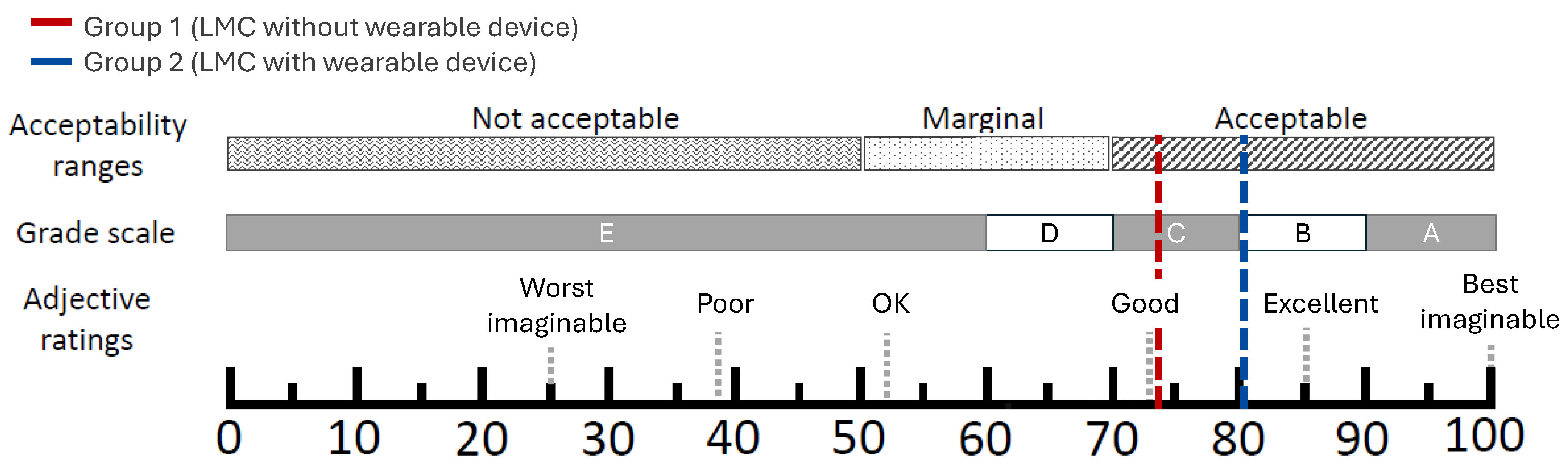

Table 6 and Table 7 report the individual SUS scores obtained by participants in Group 1 and Group 2, respectively, and Figure 8 summarizes the corresponding group averages within the standard SUS acceptability and adjective rating scales. Group 1 (system without the wearable device) achieved an average SUS score of 74.42, which is above the widely used benchmark value of 68 and is therefore classified as good usability [48]. When the wearable device was integrated, Group 2 reached a higher average SUS score of 80.83. According to the interpretation shown in Figure 8, both averages fall within the acceptable range; however, Group 2 shifts toward a higher adjective rating (from good toward excellent), indicating a clear improvement in perceived usability when using the complete system.
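For readers unfamiliar with how SUS scores such as those above are obtained, the following minimal sketch applies the standard SUS scoring rule: odd-numbered items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the sum is multiplied by 2.5. It assumes the ten standard SUS items rated from 1 to 5 and excludes any additional questions. The function names `sus_score` and `group_summary` and the example responses are hypothetical and are not taken from the study data.

```python
from statistics import mean

SUS_BENCHMARK = 68.0  # benchmark value cited in the text


def sus_score(responses):
    """Return the 0-100 SUS score for one participant.

    `responses` holds the ten standard SUS item ratings in
    questionnaire order, each an integer from 1 to 5.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each SUS rating must be between 1 and 5")

    total = 0
    for index, rating in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: rating - 1
            total += rating - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: 5 - rating
            total += 5 - rating
    return total * 2.5


def group_summary(all_responses):
    """Return (average SUS score, whether it exceeds the benchmark)."""
    scores = [sus_score(r) for r in all_responses]
    average = mean(scores)
    return average, average > SUS_BENCHMARK


if __name__ == "__main__":
    # Hypothetical responses for two participants (illustration only)
    example_group = [
        [5, 1, 5, 1, 5, 1, 5, 1, 5, 1],
        [3, 3, 3, 3, 3, 3, 3, 3, 3, 3],
    ]
    print(group_summary(example_group))
```

Averaging the per-participant scores, rather than averaging item ratings first, matches the usual way group-level SUS values are reported.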

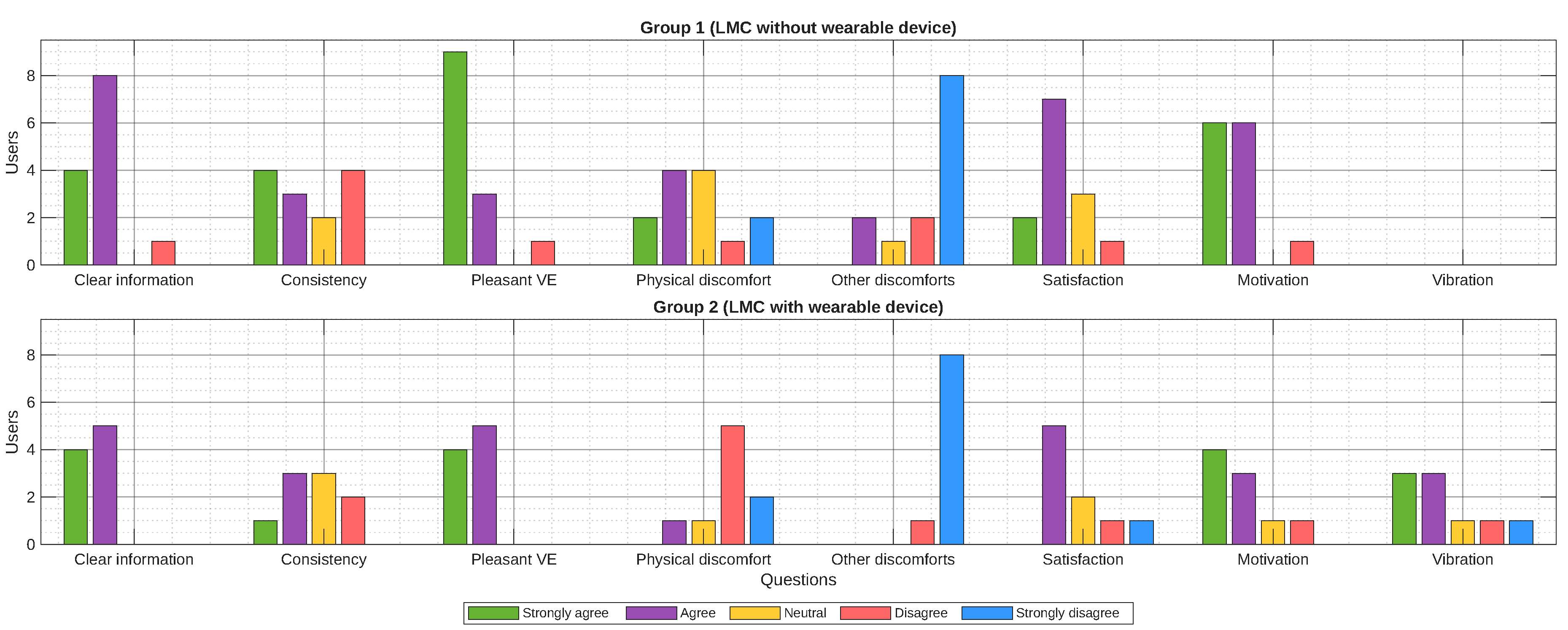

Regarding the statements and additional questions included in the SUS questionnaire, the results are presented in Figure 9. Group 2 exhibits a higher concentration of positive responses, particularly in the categories Clear information, Consistency, and Pleasant VE, where ratings of Strongly agree predominate. These results indicate that participants perceived the information presented in the virtual environment as clear and coherent, and that the movements performed were consistent with those displayed on screen. Overall, Group 2 shows fewer negative responses compared to Group 1.

In contrast, Group 1 displays a more dispersed response pattern, with a greater tendency toward negative ratings such as Disagree and Strongly disagree, especially in aspects related to Satisfaction, Motivation, and the perception of discomfort. It is worth noting that although some participants in Group 2 reported experiencing physical discomfort, this factor did not significantly affect the overall evaluation of the system. Taken together, these findings suggest that the integration of the wearable device contributes to a more positive and consistent user experience.

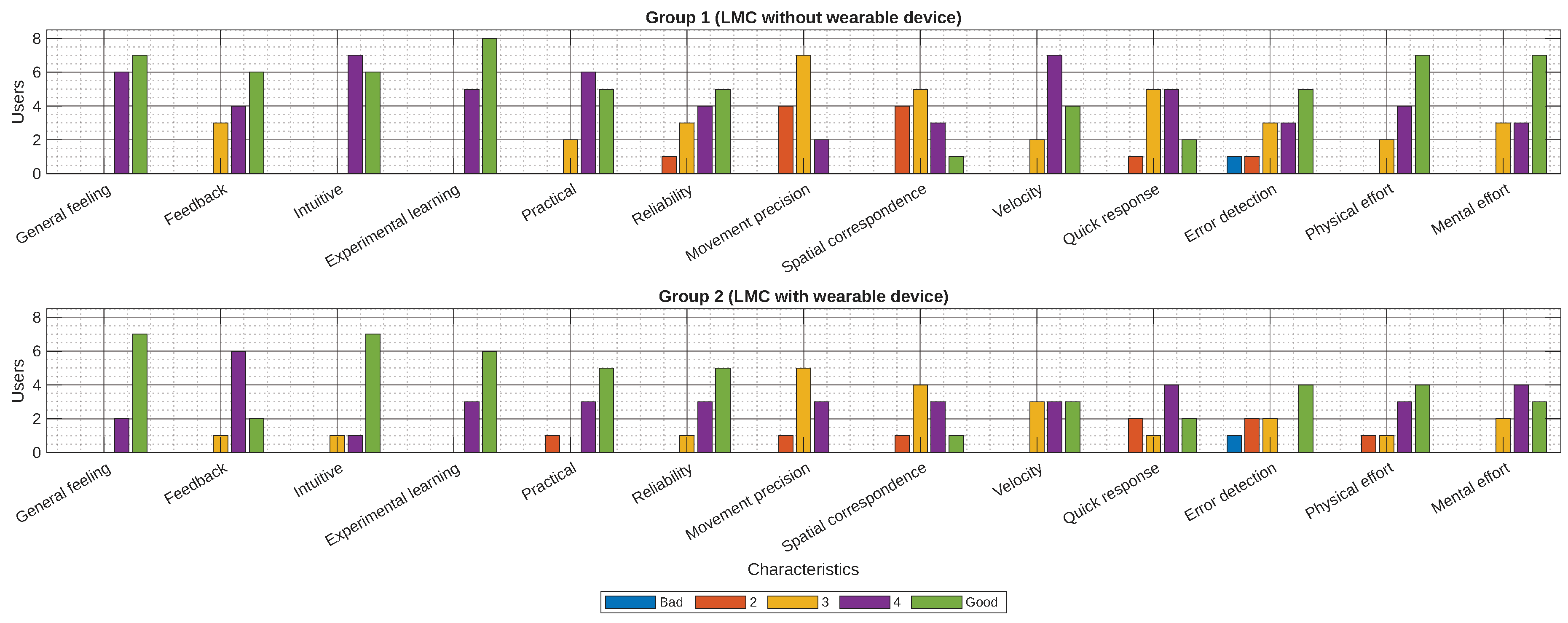

Figure 10 presents the results of the general system evaluation based on participant perception. When using the complete system (Group 2), none of the evaluated features received the lowest rating (1, poor), whereas Group 1 recorded poor ratings in 9 out of the 13 assessed features. This contrast indicates a more consistently positive perception of the system when the wearable device was integrated. The most relevant characteristics are discussed below.

In Group 2, the feature Experimental learning received the highest ratings, followed by General feeling, with most scores ranging between 4 and 5. Similarly, participants in Group 1 also assigned high scores to Experimental learning; however, ratings for General feeling were more variable, ranging from 2 to 5. Experimental learning reflects the system’s ability to support learning through practice, whereas General feeling describes participants’ emotional state after completing the tasks, with lower ratings indicating stress or frustration and higher ratings reflecting curiosity or calmness.

For the Feedback feature, which evaluates the quality of information provided to the user, Group 2 obtained more positive ratings. This improvement can be attributed to the inclusion of vibrotactile feedback, which complemented the visual and auditory feedback available in Group 1.

Regarding Movement precision, defined as the perceived similarity between users’ physical gestures and their visual representation on screen, Group 2 received higher ratings than Group 1, indicating a clearer correspondence between action and visual feedback.

In terms of Spatial correspondence, which refers to how effectively feedback supports the identification of the relative position of virtual objects within the manipulation metaphor, Group 2 demonstrated a notable improvement. The vibrotactile feedback enhanced spatial perception within the virtual environment, allowing participants to identify object locations with greater accuracy.

Finally, for the Quick response feature, understood as the ability of both the virtual environment and the wearable device to respond promptly to user actions, participants in Group 2 reported a clearer sense of immediacy, which contributed to smoother and more fluid interaction.

Overall, the results obtained from the SUS, AttrakDiff, and NASA-TLX evaluations provide a consistent and complementary assessment of the proposed system. The SUS results indicate that both configurations achieved acceptable usability levels, with a clear improvement when the wearable device was integrated. This improvement is further supported by the AttrakDiff analysis, where the complete system was positioned in the desired region, reflecting a balanced combination of pragmatic quality and hedonic appeal, while the system without the wearable device remained in a more self-oriented region. Finally, the NASA-TLX results reveal that the inclusion of the wearable device generally reduced perceived workload, frustration, and temporal pressure, while improving perceived performance in key scenarios. Together, these findings suggest that the wearable device not only enhances usability but also positively impacts user experience and interaction quality, resulting in a more efficient, engaging, and user-centered virtual rehabilitation system.

5. Discussion and Conclusions

The results obtained in this study allow a comprehensive discussion of the proposed system from the perspectives of usability, user experience, and perceived workload. The evaluation outcomes directly address the objectives established in Section 1, particularly the assessment of whether the integration of a wearable vibrotactile device improves interaction quality and user experience in a virtual environment for upper-limb rehabilitation.

From a usability standpoint, the SUS results indicate that both system configurations achieved acceptable usability levels. However, the system incorporating the wearable device consistently outperformed the baseline configuration, reaching higher usability ratings and a more favorable adjective classification. This suggests that while Leap Motion–based interaction alone is sufficient to support task execution, the addition of vibrotactile feedback provides a clearer and more efficient interaction paradigm.

The AttrakDiff evaluation further supports this observation. Participants interacting with the complete system positioned it within the desired region, reflecting a balanced combination of pragmatic quality and hedonic quality. In contrast, the system without the wearable device was perceived as more self-oriented, indicating that although it was functional, it lacked elements that promote engagement and emotional involvement. These findings highlight the relevance of hedonic factors in rehabilitation-oriented virtual environments, where sustained motivation and engagement are essential.

Workload-related results obtained through the NASA-TLX questionnaire provide additional insight into user interaction. In general, participants using the wearable-assisted system reported lower levels of frustration, temporal demand, and perceived effort across most scenarios. Even in tasks where performance differences were not pronounced, reduced frustration suggests greater tolerance to task difficulty and a more comfortable interaction experience. This is particularly relevant in rehabilitation contexts, where excessive cognitive or temporal pressure may negatively affect adherence.

A key factor underlying these improvements is spatial correspondence. The results indicate that vibrotactile feedback enhances the perception of alignment between real and virtual actions, allowing users to identify the relative position of virtual objects with greater accuracy. Improved spatial correspondence not only contributes to better task execution but also strengthens immersion and confidence during interaction. These findings reinforce the role of multimodal feedback, especially haptic cues, in improving interaction quality within desktop-based virtual rehabilitation systems.

Despite these advantages, some limitations were identified. In certain scenarios, such as the Maze task, the addition of vibrotactile feedback may increase attentional demands, leading to slightly higher perceived frustration. This suggests that feedback intensity and task complexity must be carefully balanced to avoid cognitive overload, particularly in more constrained or precision-oriented tasks.

This work presented the design and evaluation of a wearable-assisted virtual system for upper-limb rehabilitation that integrates Leap Motion-based interaction with vibrotactile feedback. The experimental results demonstrate that the inclusion of the wearable device leads to consistent improvements in usability, user experience, and perceived workload, fulfilling the primary objectives of the study.

The combined analysis of SUS, AttrakDiff, and NASA-TLX evaluations confirms that the wearable device enhances interaction quality by enriching feedback, improving spatial correspondence, and reducing frustration and temporal pressure during task execution. While the baseline system already provides acceptable usability, the complete system achieves higher usability ratings and a more favorable balance between pragmatic and hedonic qualities, resulting in a more engaging and intuitive user experience.

From a broader perspective, these findings underline the importance of incorporating haptic feedback into virtual rehabilitation environments, particularly in desktop-based systems where immersion is otherwise limited. The proposed system demonstrates the potential of wearable vibrotactile devices to strengthen the perceived alignment between real and virtual actions and to support more natural and effective interaction.

Nevertheless, this study represents an initial validation of the proposed approach. Future work will focus on further miniaturization of the wearable device to reduce weight and visual impact, as well as on refining feedback strategies to better adapt to task complexity. In addition, closer involvement of clinical specialists and future clinical validation studies will be essential to assess the system’s therapeutic effectiveness and its impact on motor rehabilitation outcomes.

Finally, it is important to emphasize that the proposed system is intended as a complementary tool to conventional physical therapy rather than a replacement for healthcare professionals. It supports rehabilitation processes by improving engagement, interaction quality, and user experience in virtual environments designed for motor rehabilitation.