B. Experimental Results

This paper first conducts a comparative experiment; the results are shown in Table 1.

As shown in Table 1, the models differ significantly in performance on the backend load prediction task. Traditional time-series models, such as LSTM and BiLSTM, are effective at capturing the temporal dependencies of single nodes. However, because they cannot model structural relationships between services, their prediction accuracy is limited in complex topological environments. The MLP model can statically fit multidimensional features, but it fails to represent temporal evolution effectively. This results in higher errors, especially in the MAPE metric. These observations indicate that single-dimensional modeling based solely on time or feature variables cannot fully capture the dynamic coupling characteristics and nonlinear load variations of backend systems.

Transformer and BERT models, which are based on attention mechanisms, achieve higher prediction accuracy than traditional methods. This suggests that global dependency modeling contributes positively to capturing backend load variations. However, these models still focus mainly on temporal sequence correlations and do not fully exploit structural information among services. The Informer model performs more stably in long-sequence prediction, with further reductions in MSE and RMSE metrics. This demonstrates that the sparse attention mechanism improves adaptability to long-term dependencies. Nevertheless, when facing dynamic topologies and parallel multi-node loads, its ability to generalize structural relationships remains limited.

The model proposed in this paper achieves the best results across all four evaluation metrics, showing significant improvements in MSE and MAE compared with other methods. This demonstrates the effectiveness of graph-structured temporal dynamic learning in complex backend systems. By jointly modeling time-varying topologies and temporal node features, the model captures load propagation and structural dependencies with fine granularity, maintaining stable prediction performance in dynamic environments. The results confirm that a modeling framework integrating spatial structural constraints with temporal dynamics can better represent the real operational patterns of backend systems, providing a reliable predictive foundation for resource scheduling and performance optimization in cloud platforms.
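For reference, the four evaluation metrics named above can be computed as in the following minimal sketch. It uses the standard textbook definitions; the function name `regression_metrics`, the `eps` guard, and the percent scaling of MAPE are assumptions made for illustration, not details confirmed by this paper.

```python
import math


def regression_metrics(y_true, y_pred, eps=1e-8):
    """Return MSE, RMSE, MAE, and MAPE (in percent) for two equal-length sequences.

    Hypothetical helper: the paper does not state its exact metric conventions.
    """
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    errors = [p - t for t, p in zip(y_true, y_pred)]
    n = len(errors)
    mse = sum(e * e for e in errors) / n
    mae = sum(abs(e) for e in errors) / n
    # eps avoids division by zero when a true load value is exactly 0 (assumption).
    mape = 100.0 * sum(abs(e) / max(abs(t), eps) for e, t in zip(errors, y_true)) / n
    return {"MSE": mse, "RMSE": math.sqrt(mse), "MAE": mae, "MAPE": mape}


# Toy load values, invented purely to exercise the function.
print(regression_metrics([100.0, 200.0, 400.0], [110.0, 190.0, 400.0]))
```

Because MAPE divides each error by the true value, it penalizes errors on lightly loaded nodes more heavily than MSE or MAE do, which is one reason the metrics can rank models differently.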

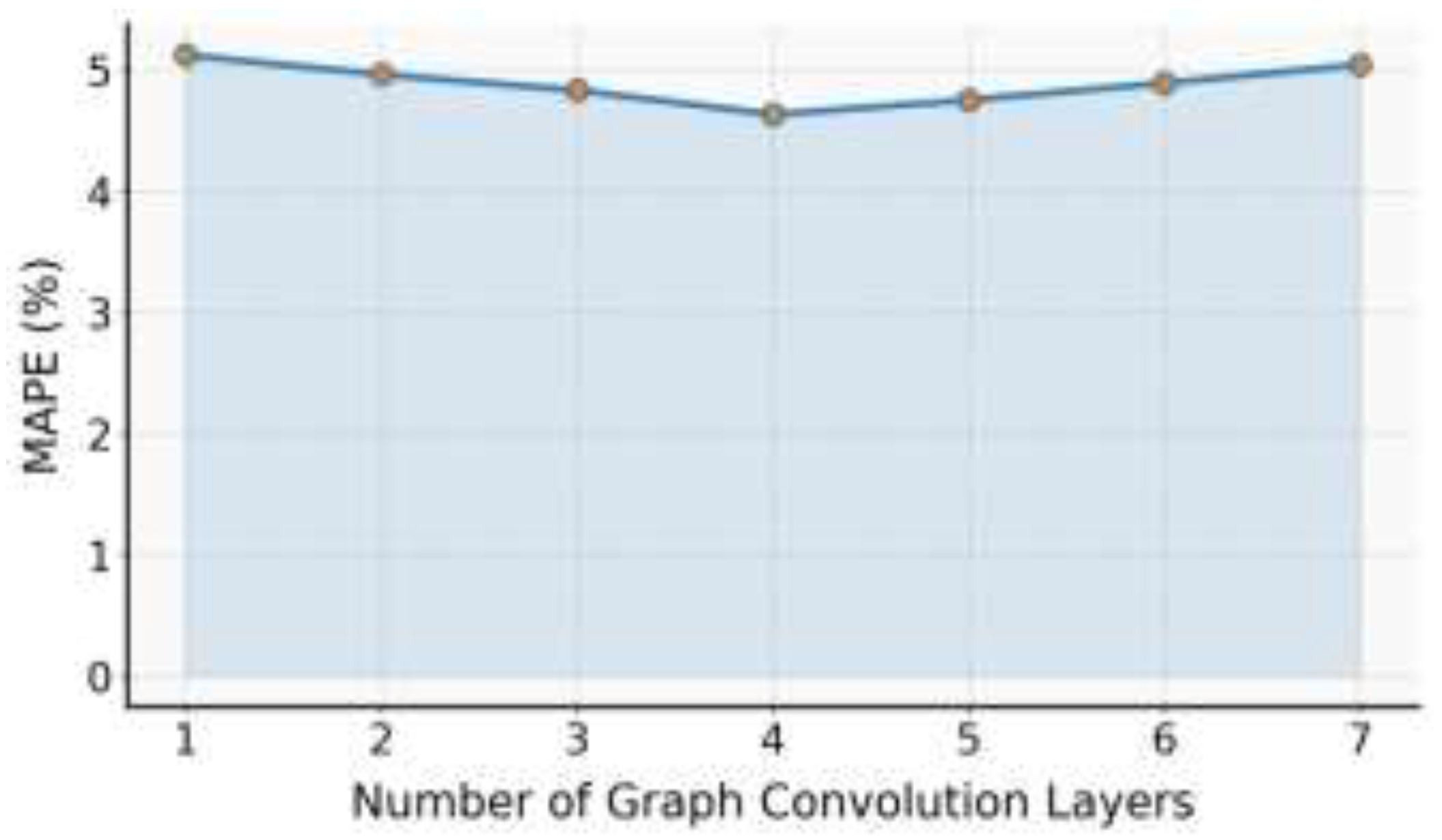

This paper also examines the sensitivity of the model’s MAPE to the number of graph convolution layers; the results are shown in Figure 2.

As shown in Figure 2, the number of graph convolution layers has a noticeable impact on the model’s MAPE. As the number of layers increases from one to four, the MAPE decreases. This indicates that adding convolution layers enhances the model’s ability to represent structural dependencies among nodes. Deeper graph convolution layers aggregate information from a wider range of neighboring nodes, allowing the model to capture more complex features of the backend service topology. As a result, the model performs better in global structure perception and load variation modeling. This improvement suggests that a moderate increase in depth strengthens the model’s spatial feature extraction capability.

However, when the number of graph convolution layers exceeds four, the MAPE value rises slightly. This suggests that an overly deep structure may introduce excessive feature smoothing or noise accumulation. As the number of layers increases, node features may lose distinctiveness through repeated propagation, which weakens the model’s sensitivity to local features and dynamic dependencies. This effect is more pronounced under dynamic topologies, since the relationships among nodes change over time. In such cases, deeper convolutions may cause temporal and spatial features to mix improperly, reducing the model’s generalization performance.
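The loss of feature distinctiveness under repeated propagation can be illustrated with a toy sketch. It replaces the paper's graph convolution with plain weight-free mean aggregation (each node averages itself and its neighbors) on a hypothetical six-node chain, so it shows only the smoothing tendency, not the behavior of the actual model. All function names are illustrative.

```python
def path_graph(n):
    """Adjacency matrix of an undirected chain of n nodes (toy topology)."""
    adj = [[0] * n for _ in range(n)]
    for i in range(n - 1):
        adj[i][i + 1] = adj[i + 1][i] = 1
    return adj


def mean_aggregate(adj, feats):
    """One propagation step: every node averages itself and its neighbors.

    This is a simplification: no learned weights and no nonlinearity.
    """
    n = len(adj)
    out = []
    for i in range(n):
        group = [j for j in range(n) if adj[i][j] or j == i]
        out.append(sum(feats[j] for j in group) / len(group))
    return out


def spread_by_depth(adj, feats, max_layers):
    """Track max-min of the node features after 0..max_layers propagation steps."""
    spreads = [max(feats) - min(feats)]
    for _ in range(max_layers):
        feats = mean_aggregate(adj, feats)
        spreads.append(max(feats) - min(feats))
    return spreads


# One node carries a load spike; the others are idle.
for depth, spread in enumerate(spread_by_depth(path_graph(6), [1.0, 0, 0, 0, 0, 0], 8)):
    print(depth, round(spread, 4))
```

The spread between the largest and smallest node feature never grows under this averaging, so stacking more steps pushes node representations toward one another, which is consistent with the over-smoothing explanation given above.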

Overall, the number of graph convolution layers plays a balancing role in spatiotemporal joint modeling. A shallow structure cannot capture global dependencies effectively, while an overly deep one may lose fine-grained dynamic features. Experimental results show that a four-layer convolution structure achieves a good balance between spatial structure perception and temporal dynamic learning. This demonstrates the adaptive capability of graph-structured temporal dynamic learning algorithms in complex backend load prediction scenarios.

The sensitivity analysis further confirms the importance of hierarchical design in the model architecture. A reasonable convolution depth not only enhances the stability of spatial feature aggregation but also maintains robustness in dynamic environments. This finding provides valuable guidance for optimizing graph model architectures in cloud system environments. It shows that hierarchical modeling mechanisms that consider topological changes have significant practical value and theoretical importance in load prediction.

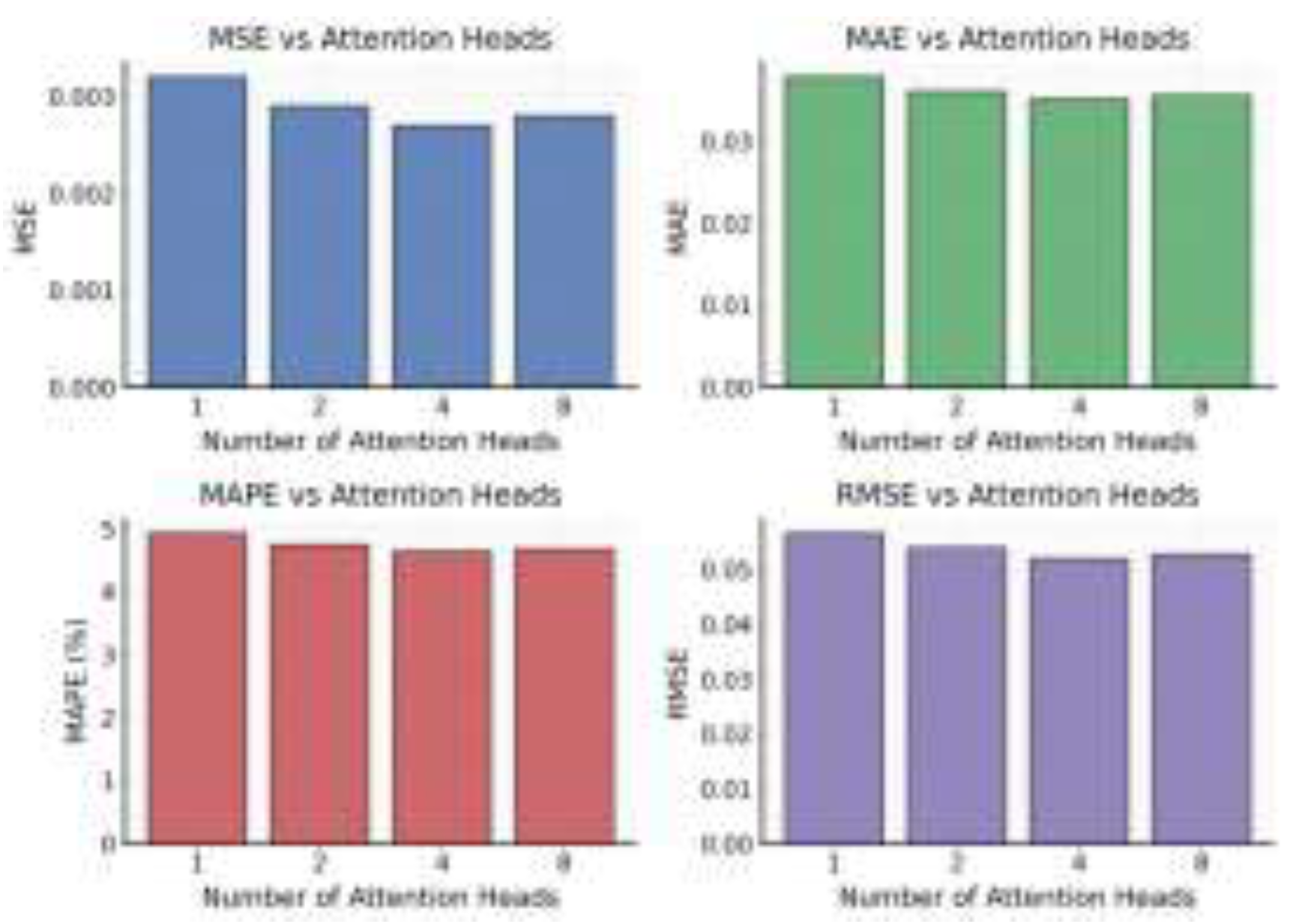

This paper also examines the impact of the number of attention heads on model performance, as shown in Figure 3.

As shown in Figure 3, the number of attention heads has a clear effect on the overall prediction performance of the model. As the number of attention heads increases from one to four, the MSE, MAE, MAPE, and RMSE all decrease. This indicates that the multi-head mechanism allows the model to capture more comprehensive multidimensional dependencies within the input sequence. With more attention heads, the model gains stronger abilities in feature decomposition and multi-perspective information aggregation, which improves its global perception when modeling multisource monitoring data from backend systems. This mechanism enhances the resolution of spatiotemporal features and helps the model maintain stable predictive performance under complex topological structures and dynamic dependency conditions.

When the number of attention heads continues to increase to eight, the model’s performance slightly declines. This suggests that too many attention heads can lead to feature redundancy and information interference. Since the multi-head mechanism requires competition among attention weights across different dimensions, an excessive number of heads may introduce noise. As a result, key features can become weakened or averaged, reducing prediction accuracy. This effect is more pronounced when dealing with high-dimensional load metrics and multi-node dependencies, showing that the attention head configuration must balance representational capacity and computational complexity.
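To make the head-count trade-off concrete, the sketch below implements a stripped-down multi-head self-attention. It assumes the standard formulation in which a fixed model width `d_model` is divided evenly among the heads, and it omits the learned query, key, value, and output projections; the paper does not specify its attention layer, so this is an illustration rather than the authors' implementation.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention over sequences of equal-width vectors."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        weights = softmax(scores)
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) for c in range(len(v[0]))])
    return out


def multi_head_self_attention(x, num_heads):
    """Split each vector into num_heads slices, attend per slice, concatenate.

    Learned projections are omitted (treated as identity) to keep the sketch short.
    """
    d_model = len(x[0])
    if d_model % num_heads != 0:
        raise ValueError("d_model must be divisible by num_heads")
    d_head = d_model // num_heads
    heads = []
    for h in range(num_heads):
        part = [row[h * d_head:(h + 1) * d_head] for row in x]
        heads.append(attention(part, part, part))
    # Concatenate the per-head outputs back to width d_model for every time step.
    return [sum((heads[h][t] for h in range(num_heads)), []) for t in range(len(x))]


# Three time steps of an 8-dimensional toy feature vector.
x = [[float((t + 1) * (c + 1) % 5) for c in range(8)] for t in range(3)]
for num_heads in (1, 4, 8):
    print(num_heads, "heads ->", 8 // num_heads, "dims per head")
    multi_head_self_attention(x, num_heads)
```

Under this assumption, going from four to eight heads halves the width each head sees (here from two dimensions to one), so additional heads add views of the data while making each view narrower.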

From the perspective of joint spatial-temporal modeling, an appropriate number of attention heads strengthens the model’s sensitivity to both local anomalies and global trends in backend systems. A moderate number of heads ensures effective information fusion across multi-node topologies and enhances robustness in capturing temporal features. Especially under dynamic topology conditions, the attention head mechanism helps the model identify critical relational patterns among key nodes, preventing over-smoothing or loss of feature information. This leads to improved generalization performance in overall prediction.

In summary, the model achieves its best performance with four attention heads, indicating that this configuration provides an optimal balance between global dependency modeling and feature diversity. The experimental results further confirm the essential role of the multi-head attention mechanism in complex system load prediction. They also provide interpretable and effective guidance for parameter optimization in dynamic modeling of backend services. This finding offers theoretical and practical support for future adaptive structural adjustment and lightweight deployment of such models.

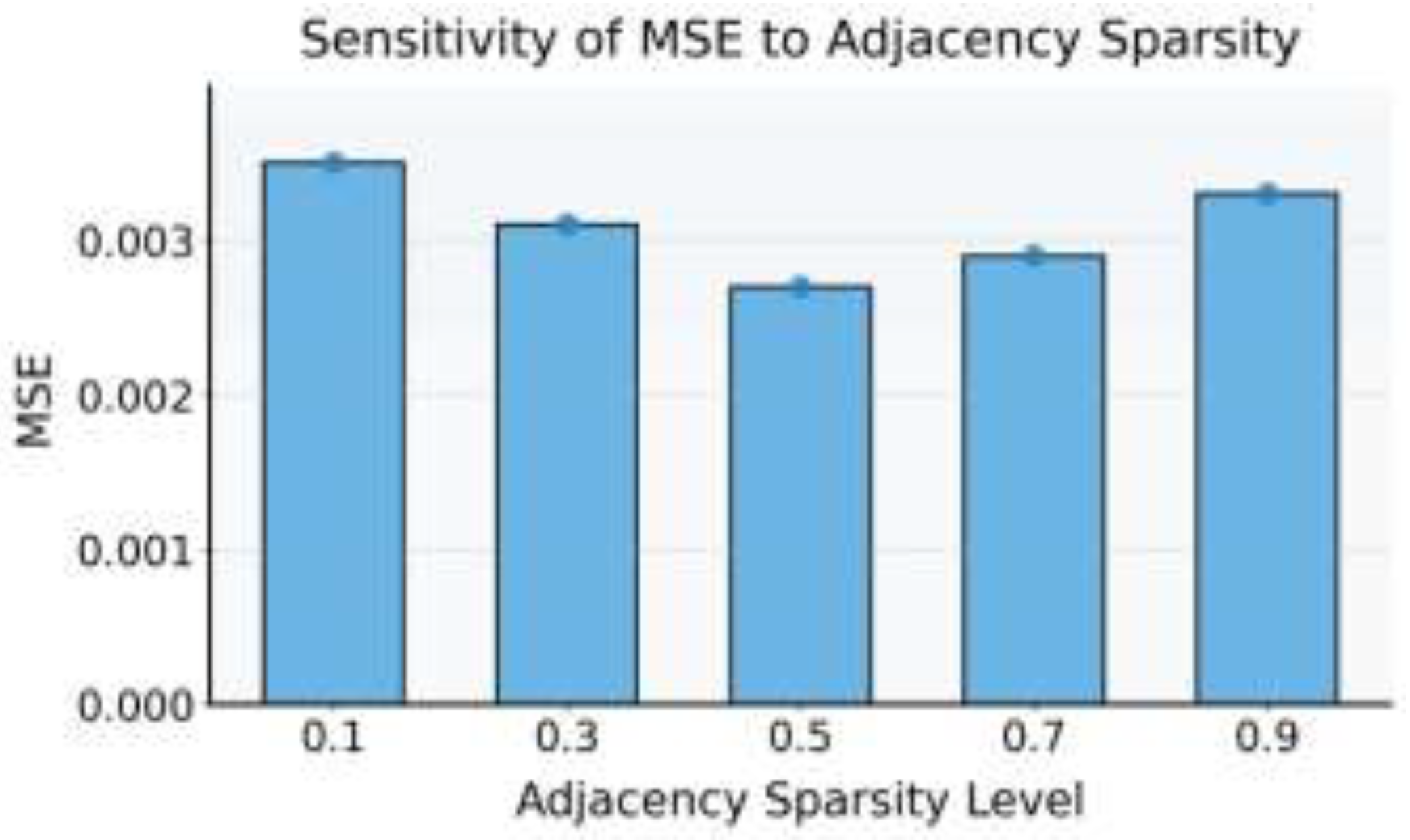

This paper also examines the sensitivity of the model’s MSE to the sparsity of the adjacency matrix; the results are shown in Figure 4.

As shown in Figure 4, the sparsity of the adjacency matrix has a significant impact on the model’s MSE. As the sparsity increases from 0.1 to 0.5, the MSE gradually decreases, indicating that moderately increasing the sparsity of the adjacency matrix improves prediction accuracy. The trend suggests that an overly dense graph structure may introduce redundant edge information, causing noise to accumulate during feature propagation and reducing the model’s ability to identify key structural relationships. By controlling the sparsity of the adjacency matrix, the model can focus more effectively on information exchange between highly correlated nodes, thereby strengthening the expression of structural dependencies during feature propagation.

When the sparsity exceeds 0.5, the MSE shows a slight increase. This indicates that an overly sparse adjacency matrix weakens the structural integrity of the system, making it difficult for the graph convolution layers to capture sufficient contextual information. As a result, the model’s global perception ability becomes limited, and long-range dependencies between nodes cannot be fully modeled, leading to prediction deviations. In dynamic topology environments, excessive sparsity means that the model cannot capture multilayer relational information under changing topologies, which affects the continuity and stability of spatiotemporal feature fusion.

Overall, the sparsity of the adjacency matrix plays a crucial balancing role in graph-structured temporal dynamic learning. A reasonable sparsity setting achieves an optimal trade-off between feature redundancy reduction and structural preservation. It allows the model to focus on high-value dependencies while maintaining global topological consistency. Experimental results show that when the sparsity is around 0.5, the model achieves the best MSE performance. This further confirms the effectiveness of the proposed method in graph structure optimization and dynamic relationship modeling.
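One way to control adjacency sparsity is sketched below. The paper does not define how sparsity is measured or enforced, so this sketch assumes that sparsity is the fraction of existing undirected edges removed and that the weakest edges are dropped first. The function name `sparsify_adjacency` and the toy weights are hypothetical.

```python
def sparsify_adjacency(weights, sparsity):
    """Drop the weakest fraction `sparsity` of undirected edges.

    `weights` is a symmetric matrix of non-negative edge weights with a zero
    diagonal. Both the definition of sparsity and the keep-strongest rule are
    assumptions made for illustration.
    """
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n = len(weights)
    edges = sorted(
        ((weights[i][j], i, j) for i in range(n) for j in range(i + 1, n) if weights[i][j] > 0),
        reverse=True,
    )
    keep = len(edges) - int(sparsity * len(edges))
    out = [[0.0] * n for _ in range(n)]
    for w, i, j in edges[:keep]:
        out[i][j] = out[j][i] = w
    return out


# Toy service-correlation weights for four nodes, invented for the example.
w = [
    [0.0, 0.9, 0.1, 0.4],
    [0.9, 0.0, 0.8, 0.2],
    [0.1, 0.8, 0.0, 0.7],
    [0.4, 0.2, 0.7, 0.0],
]
for row in sparsify_adjacency(w, 0.5):
    print(row)
```

In this toy case a sparsity of 0.5 keeps the three strongest of six edges, which still connect all four nodes. Pushing the value higher would start to disconnect the graph, matching the structural-integrity concern raised above.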

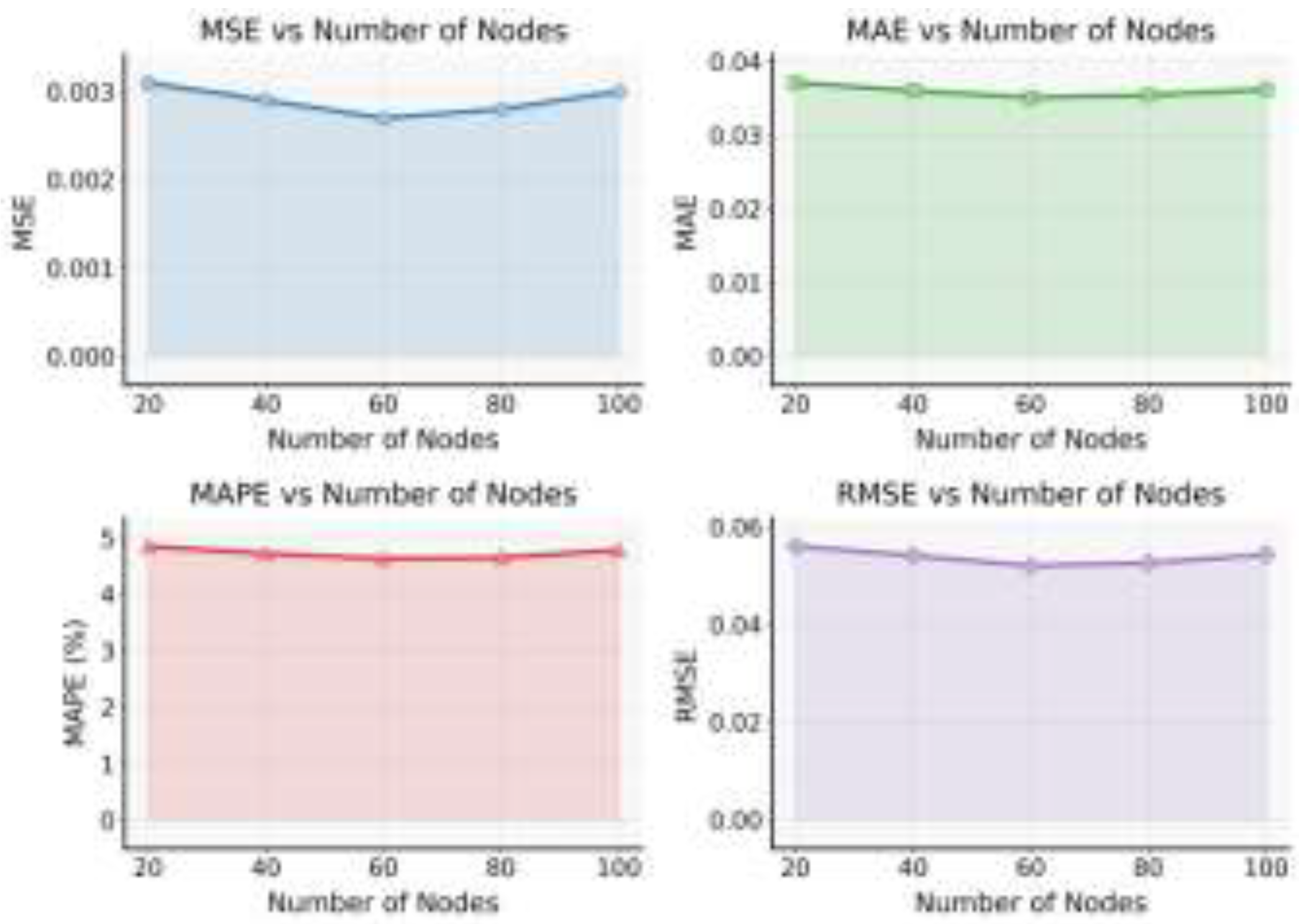

This paper also examines the impact of the number of nodes on model performance; the results are shown in Figure 5.

As shown in Figure 5, the number of nodes has a clear impact on the overall performance of the model. As the number of nodes increases from 20 to 60, the MSE, MAE, MAPE, and RMSE all decrease. This indicates that as the network scale expands, the model can learn more structural features and interaction patterns among services. A larger number of nodes provides richer topological information for the graph structure, allowing the model to develop stronger structural perception during spatial feature propagation. As a result, the model achieves higher accuracy in dynamic load prediction tasks. This improvement shows that increasing the number of nodes strengthens the model’s ability to model global dependencies and helps capture the multi-level characteristics of complex backend systems.

When the number of nodes continues to increase to 100, all four metrics rise slightly. This suggests that an excessively large graph structure introduces computational complexity and feature redundancy. With more nodes, noise diffusion and gradient smoothing can occur during feature propagation and aggregation, which reduces the distinctiveness of feature representations. In addition, some nodes carry light loads or weak correlations in the system. Their excessive participation can weaken the relationships among high-impact nodes, leading to information dilution during spatiotemporal feature fusion. Therefore, the increase in node count does not monotonically improve model performance and must be controlled according to system scale and dependency density.

From the perspective of spatiotemporal modeling, the number of nodes determines the density of the graph and the complexity of propagation paths. When the number of nodes is small, the model mainly relies on local features for prediction, which may cause it to overlook global topological information. When the number of nodes is too large, the propagation paths become excessively long, and the model may face time-delay accumulation and association drift during temporal dynamic modeling. A reasonable node scale achieves a balance between local sensitivity and global consistency, enabling the model to maintain structural stability and predictive robustness under dynamic topology variations.
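The link between node count, graph density, and propagation path length can be checked on a toy topology. The sketch below uses a hypothetical chain of services, chosen only because its path lengths are easy to verify by hand; real backend topologies are not chains, and the helper names are illustrative.

```python
from collections import deque


def chain_graph(n):
    """Adjacency list of an undirected chain of n services (toy topology)."""
    return {i: [j for j in (i - 1, i + 1) if 0 <= j < n] for i in range(n)}


def graph_density(adj_list):
    """Fraction of possible undirected edges that are present."""
    n = len(adj_list)
    if n < 2:
        return 0.0
    edges = sum(len(neighbors) for neighbors in adj_list.values()) / 2
    return edges / (n * (n - 1) / 2)


def longest_shortest_path(adj_list):
    """Diameter in hops of a connected undirected graph, via BFS from every node."""
    best = 0
    for src in adj_list:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj_list[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        best = max(best, max(dist.values()))
    return best


for n in (20, 60, 100):
    g = chain_graph(n)
    print(n, graph_density(g), longest_shortest_path(g))
```

For this chain, the longest propagation path grows linearly with the node count while the density shrinks, so information from distant nodes needs more propagation steps to arrive. How strongly this applies to a given system depends on its actual topology.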

Overall, the model achieves the best performance when the number of nodes is moderate, around 60. This verifies the adaptive capability of the graph-structured temporal dynamic learning algorithm in modeling complex backend systems. The result shows that the model maintains good generalization and stability across different node scales, providing valuable insights for dynamic scheduling and resource allocation in backend services. This finding further highlights the importance of structural scale design in load prediction and provides both theoretical and practical guidance for efficient modeling in large-scale cloud systems.