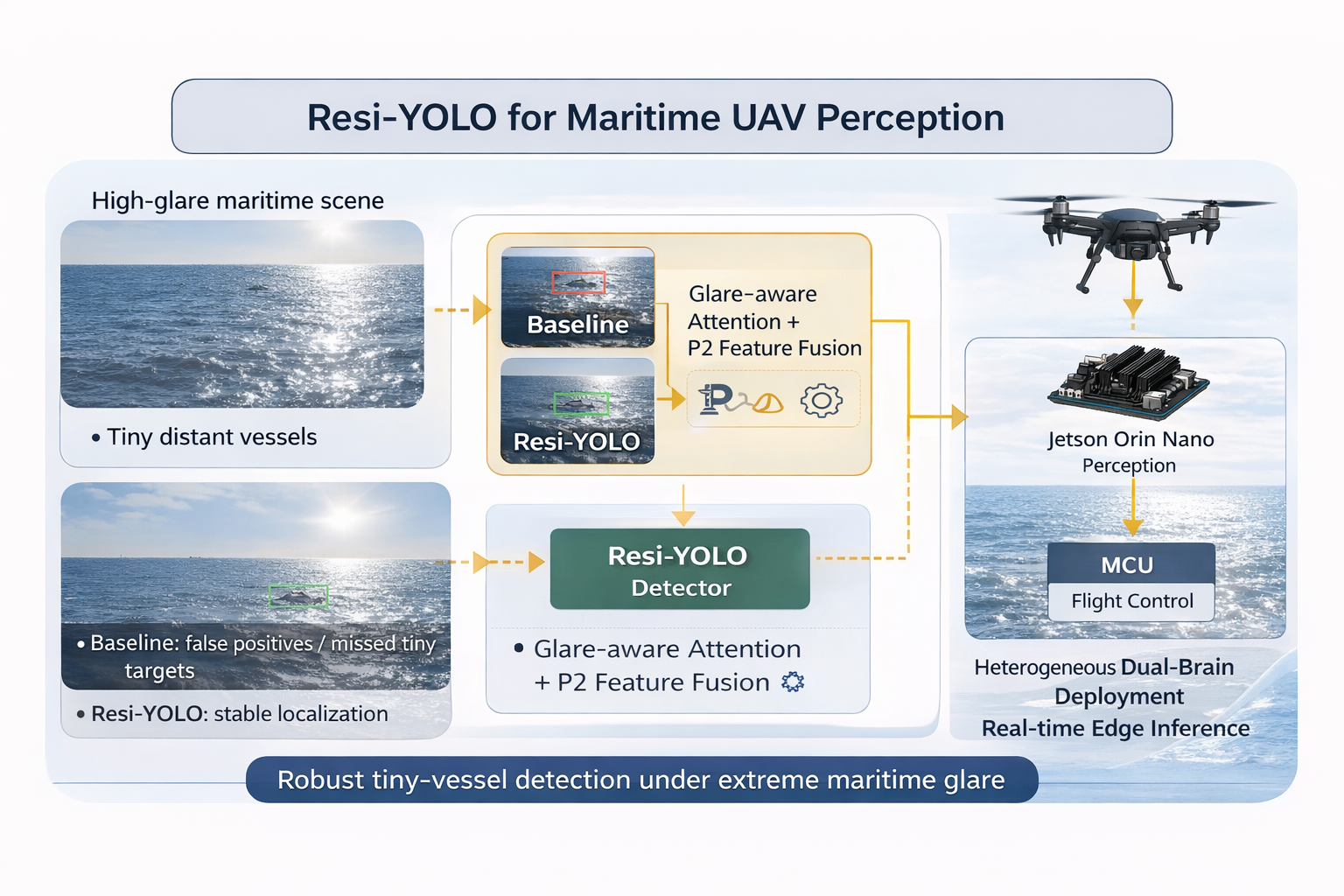

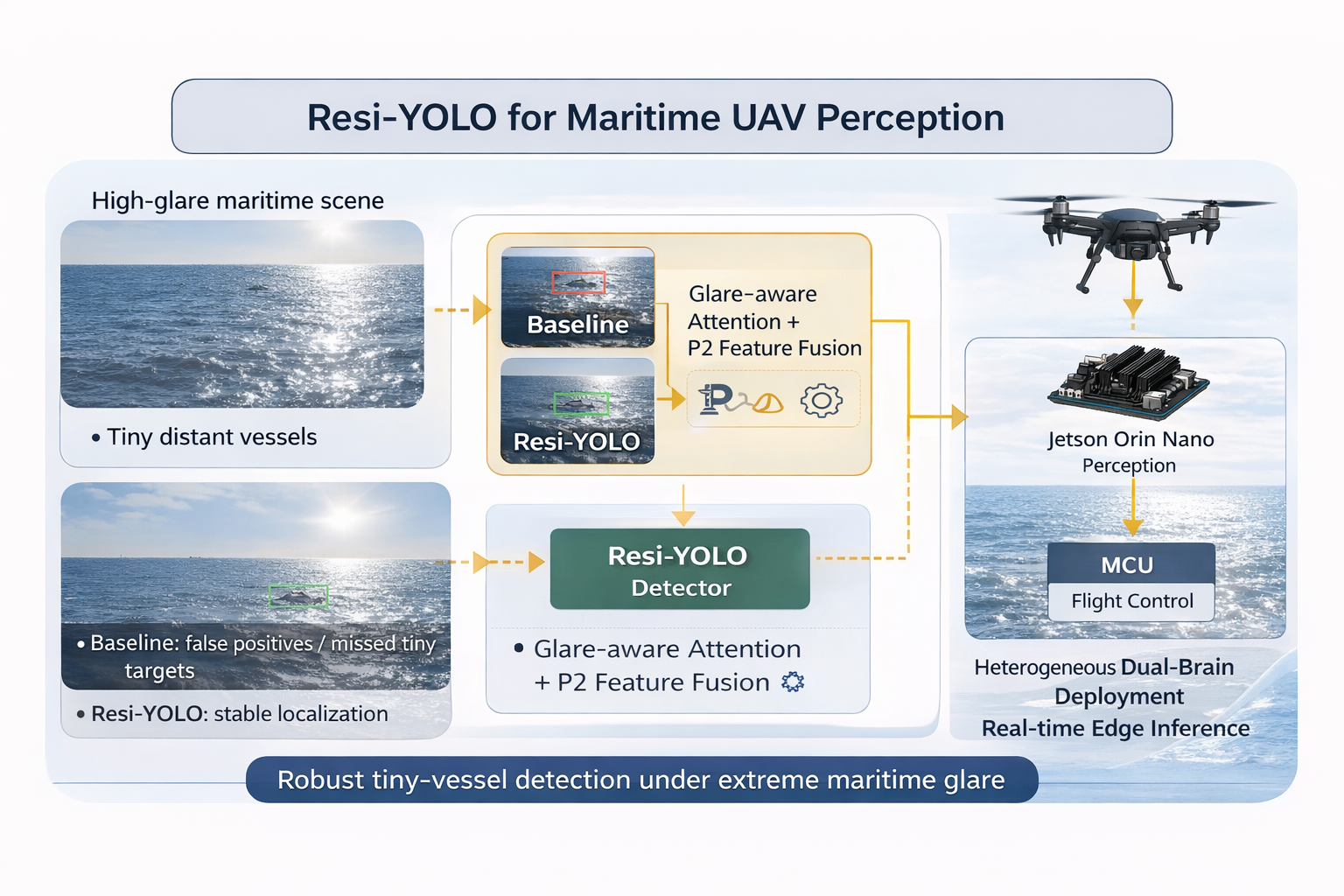

Maritime UAV perception must reliably detect and track tiny vessels under harsh specular glare. In practice, detection failures are dominated by two coupled factors: (i) vessels often occupy only a few pixels, which causes small-object recall to collapse, and (ii) sun glint and sea-surface reflections produce over-exposed regions that trigger false positives and destabilize track association. This paper presents Resi-YOLO, a system-level pipeline that improves tiny-vessel sensitivity while preserving embedded throughput on a Jetson Orin Nano. At the model level, Resi-YOLO combines a P2-enhanced feature path with an attention-based glare suppression module to strengthen high-resolution semantics and suppress glare-induced artifacts; optional SAHI-style slicing is supported for ultra-high-resolution scenes. At the system level, we adopt a heterogeneous dual-brain deployment in which the Orin Nano performs primary inference and an MCU-based safety-island tracker mitigates delay and jitter through time-stamped measurement replay and IMM-UKF updates. We further define a Glare Severity Score (GSS) that stratifies evaluation by illumination intensity, so that robustness is reported transparently per glare level rather than only as average mAP. Experiments on maritime detection and tracking sequences show consistent improvements over YOLO baselines in tiny-object regimes and high-glare conditions, while sustaining real-time operation at approximately 100 ms end-to-end latency on the Orin Nano under TensorRT FP16 deployment.
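To make the optional SAHI-style slicing concrete, the following is a minimal sketch of how an ultra-high-resolution frame might be divided into overlapping tiles before detection, and how a tile-local box could be mapped back to full-frame coordinates. It is an illustration under stated assumptions, not the Resi-YOLO implementation: the function names `slice_windows` and `to_full_frame`, the 640-pixel tile size, and the 0.2 overlap ratio are all hypothetical choices, and the merging of duplicate detections across tiles (for example by NMS) is omitted.

```python
from typing import List, Tuple

# (x_min, y_min, x_max, y_max) in pixels
Box = Tuple[int, int, int, int]


def slice_windows(width: int, height: int,
                  tile: int = 640, overlap: float = 0.2) -> List[Box]:
    """Return overlapping tile windows that cover a width x height frame.

    tile and overlap are illustrative defaults (assumptions), not values
    taken from the paper.
    """
    if width <= 0 or height <= 0 or tile <= 0:
        raise ValueError("width, height and tile must be positive")
    if not 0.0 <= overlap < 1.0:
        raise ValueError("overlap must be in [0, 1)")

    # Distance between the origins of neighbouring tiles.
    stride = max(1, round(tile * (1.0 - overlap)))

    def starts(length: int) -> List[int]:
        # A frame smaller than one tile is covered by a single window.
        if length <= tile:
            return [0]
        positions = list(range(0, length - tile, stride))
        # Place the last tile flush with the border so no pixels are missed.
        positions.append(length - tile)
        return positions

    return [
        (x, y, min(x + tile, width), min(y + tile, height))
        for y in starts(height)
        for x in starts(width)
    ]


def to_full_frame(box: Box, window: Box) -> Box:
    """Shift a tile-local box into full-frame coordinates."""
    x0, y0 = window[0], window[1]
    return (box[0] + x0, box[1] + y0, box[2] + x0, box[3] + y0)


if __name__ == "__main__":
    windows = slice_windows(1920, 1080)
    print(len(windows), "tiles; first:", windows[0], "last:", windows[-1])
```

The overlap between neighbouring tiles is what lets a vessel that straddles a tile boundary appear whole in at least one tile; the price is that the detector runs once per tile, which has to be weighed against the embedded latency budget.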