1. Introduction

Target detection in resource-constrained environments is a major challenge. Progress on detection algorithms for such environments can directly benefit civilian applications such as personnel search and rescue and traffic management. The battlefield is a representative resource-constrained environment: it spans desert, jungle, mountain, urban, and maritime terrain, and this diversity makes it a useful testbed. Techniques developed for military target recognition on the battlefield can therefore be transferred to search and rescue, traffic management, and related tasks. The battlefield is also a complex, information-rich data space, so detection algorithms must be highly responsive and accurate, and must adapt to a continuously changing scene to support intelligence gathering and situation assessment [1, 2, 3]. Developing efficient and robust target detection strategies has consequently become an active research direction for resource-constrained environments.

Target detection algorithms for resource-constrained environments have been refined continuously. The earliest approaches relied on segmentation techniques that separated candidate targets from background elements [4]. Fuzzy inference systems were later added to handle environmental uncertainty and classification ambiguity [5]. Charge-coupled device (CCD) image processing then improved detection under variable lighting [6], and fusing synthetic aperture radar (SAR) data into early detection schemes enabled all-weather detection of submarines [7, 8].

Independent component analysis (ICA) was a key milestone in the evolution of military target detection. Twari et al. [9] applied ICA to hyperspectral images to detect military targets, improving the separation between camouflaged objects and their surroundings. Spectral-model algorithms combined with ICA subsequently improved detection accuracy in complex resource-constrained environments.

However, traditional methods proved difficult to deploy in cluttered, unpredictable operational settings. They were highly sensitive to variations in target appearance and orientation and to environmental factors, and they depended on manually engineered features, so they could not be used effectively in real resource-constrained scenarios.

The emergence of deep learning has revolutionized object detection, marking a paradigm shift. Advances in convolutional neural networks, such as SqueezeNet [10], set the stage for more effective detection frameworks. The subsequent region-based CNN (R-CNN) family, including Fast R-CNN [11], Faster R-CNN [12], and Mask R-CNN [13], resolved some shortcomings of the early designs and paved the way for more robust detection systems.

At the same time, single-stage detection frameworks such as the Single Shot MultiBox Detector (SSD) [14] and the YOLO family of detectors [15] appeared, offering computationally efficient solutions that favored fast detection at some cost in precision. The challenge of balancing computational efficiency and precision continues to shape research in the field.

Target detection in resource-constrained environments is considerably more challenging than conventional object detection. Targets are typically small within the sensor's field of view and are often partially occluded. In addition, complex conditions such as changing weather, together with limited computational resources and the need for real-time processing, make it difficult to develop powerful detection systems.

Despite this progress in civilian object detection with deep learning frameworks, their applicability to resource-constrained environments remains limited. Many general-purpose detection algorithms have been developed, but their performance in complex environments is not ideal. Two challenges remain: (1) algorithms must adapt to complex and diverse environments; (2) the computational burden of state-of-the-art models exceeds the resources available in tactical settings, which hinders practical use.

This paper presents a novel approach to target detection in resource-constrained environments that specifically addresses these challenges through a hierarchical feature fusion architecture optimized for multiscale, camouflaged target detection while maintaining computational efficiency. Our key contributions include:

A lightweight MSCDNet (Multi-Scale Context Detail Network) architecture that addresses the computational limits of resource-constrained environments.

A Multi-Scale Fusion (MSF) module that addresses the challenge of detecting targets with significant dimensional variations, camouflage, and partial occlusion.

A Context Merge Module (CMM) that overcomes the difficulty of integrating features from different scales for comprehensive target representation.

A Detail Enhance Module (DEM) that preserves critical edge and texture details essential for distinguishing camouflaged targets in complex environments.

The remainder of this paper is organized as follows:

Section 2 reviews related work in object detection and military target recognition, which serves as a reference for target recognition in resource-limited civilian settings.

Section 3 details our proposed methodology, including the YOLO11n architecture, Multi-Scale Fusion module, Context Merge Module, and Detail Enhance Module.

Section 4 presents experimental results and comparative analysis.

Section 5 concludes with a summary of findings and directions for future research.

2. Related Work

2.1. Traditional Military Target Detection Methods

Early military target detection relied on distinctive visual characteristics through edge and contour features. Sun et al. [16] proposed adaptive boosting for SAR automatic target recognition, demonstrating how ensemble learning could improve feature-based detection in radar imagery. The application of texture-based features such as Histogram of Oriented Gradients (HOG) [17] and Local Binary Patterns (LBP) improved discrimination between military targets and background elements. Zhang et al. [18] developed face detection based on multi-block LBP representation, a technique that was later adapted for military personnel detection. For non-rigid targets like soldiers, frameworks based on Deformable Part Models (DPM) offered flexibility in handling diverse postures and equipment configurations.

In sensor technology, Pei et al. [19] further explored multiview SAR automatic target recognition optimization, demonstrating how multiple perspectives can enhance detection reliability. Che et al. [20] developed multi-spectral fusion techniques that combine infrared and visible-light images to address illumination variation, while Li et al. [21] specifically studied target classification in low-light night-vision conditions.

Motion characteristics of military targets also serve as important cues in traditional methods. Salmon et al. [22] studied the effects of motion on in-vehicle system operation in battle management systems, highlighting practical usability challenges in tactical environments. Bajracharya et al. [23] developed a fast stereo-based system for detecting and tracking pedestrians from moving vehicles, techniques later adapted for soldier tracking. Chaves [24] investigated Kalman filtering for low-cost GPS-based collision warning systems in vehicle convoys, demonstrating practical tracking applications.

Despite achieving success in specific controlled environments, these traditional methods faced numerous challenges: sensitivity to environmental changes, insufficient robustness against camouflaged targets, dependence on expert experience for feature engineering, and lack of end-to-end learning capabilities. Boult et al. [25] addressed some of these limitations through specialized visual surveillance systems for non-cooperative and camouflaged targets in complex settings, but fundamental challenges remained that motivated researchers to transition toward deep learning methods.

2.2. General Deep Learning Methods for Military Target Detection

The emergence of deep learning, particularly Convolutional Neural Networks (CNNs), has fundamentally transformed military target detection research. Zhao et al. [26] applied the Faster R-CNN framework to storage tank detection using high-resolution aerial imagery, significantly improving detection accuracy. Peng et al. [27] utilized Faster R-CNN for multi-object extraction in complex backgrounds under artificial intelligence contexts, addressing background interference issues. Naz et al. [28] explored soldier detection using unattended acoustic and seismic sensors, optimizing detection systems for various battlefield conditions.

Single-stage detectors such as YOLO and SSD have also been widely applied to military scenarios due to their efficiency. Xu and Wu [29] improved the YOLOv3 model with DenseNet for multi-scale remote sensing target detection, achieving a balance between speed and accuracy. Wang et al. [30] developed a lightweight detector based on SSD with depthwise separable convolution, balancing computational efficiency and detection performance. These general object detection frameworks provide fundamental solutions for military target detection but require domain-specific adaptations to address unique military challenges.

With advancements in deep learning technology, emerging network architectures have been continuously applied to military target detection. Feature Pyramid Networks (FPN) have been widely used to address scale variations in military targets. Wang et al. [31] developed a multi-scale infrared military target detection system based on a 3X-FPN feature fusion network, capable of processing targets at different scales. RetinaNet and its Focal Loss have been applied to address the foreground-background class imbalance in detection tasks. Liu et al. [32] improved RetinaNet for high-precision detection of transmission line defects, effectively enhancing detection sensitivity.

Transformer architectures have recently been introduced to military target detection with promising results. Pushkarenko and Zaslavskyi [33] researched areas in Ukraine affected by military actions using remote sensing data and deep learning architectures. Li et al. [34] developed a military target detection framework based on Swin Transformer, utilizing SwinF with feature fusion for enhanced target detection. These advanced architectures demonstrate superior performance in modeling long-range dependencies and contextual relationships, which is crucial for understanding complex battlefield scenarios.

To address specific requirements of military applications, researchers have developed specialized techniques. Zhuang et al. [35] proposed military target detection methods based on EfficientDet and Generative Adversarial Networks, simulating conditions such as smoke, dust, and partial occlusion. Sun et al. [36] introduced YOLO-E, a lightweight object detection algorithm specifically designed for military targets. Jani et al. [37] reviewed model compression methods for YOLOv5, addressing deployment constraints in resource-limited tactical environments. Zhang et al. [38] investigated multi-scale feature fusion networks for object detection in very high resolution optical remote sensing images, lowering deployment thresholds for field operations.

2.3. Deep Learning Methods for Specific Target Detection

Deep learning methods can be tailored to the characteristics of particular target types. Tanks and soldiers are taken as examples in the following analysis.

For tank detection, Fan et al. [39] developed a fast detection and reconstruction method for tank barrels based on component prior and deep neural networks in the terahertz regime, demonstrating how component-level detection can substantially improve performance. Ma et al. [40] proposed an end-to-end method with transformers for 3-D detection of oil tanks from single SAR images, not only detecting overall position but also identifying key components, providing richer feature information for model recognition.

Addressing camouflage issues in target detection, Song et al. [41] developed a multi-granularity context perception network for open set recognition of camouflaged objects, incorporating spatial and channel attention modules while utilizing contextual information to determine actual target contours against complex terrain backgrounds. Naeem et al. [42] explored multi-sensor fusion technologies, combining data with integrated multi-sensor data fusion algorithms that dynamically adjust sensor importance according to environmental conditions. Lv et al. [43] researched recognition of deformation military targets in complex scenes via MiniSAR submeter images, highlighting the importance of integrating different sensor modalities.

Soldier detection presents different challenges due to non-rigid characteristics, multiple postures, and group behaviors. Wu and Zhou [44] designed video-based martial arts combat action recognition and position detection using deep learning, incorporating modules capable of adapting to morphological changes in different tactical postures. For camouflage detection, Choudhary [45] developed real-time pixelated camouflage texture generation techniques combining multi-scale texture descriptors to capture minute differences between camouflage equipment and natural environments. Barnawi et al. [46] provided a comprehensive review of landmine detection using deep learning techniques, enhancing detection through preprocessing techniques and designing feature extraction networks adapted to challenging environments.

Group behavior analysis for soldiers has also received significant attention. Anzer et al. [47] proposed frameworks incorporating semi-supervised graph neural networks that model spatial relationships for detecting tactical patterns. Wang et al. [48] developed free-walking pedestrian inertial navigation systems based on dual foot-mounted IMU, capable of understanding complex movements. These specialized approaches have significantly advanced target detection technology by addressing the unique challenges of each target type.

2.4. Key Challenges of Target Detection in Resource-Constrained Environments

Although deep-learning-based target detection in resource-constrained environments has progressed, several challenges remain. Camouflage and concealment reduce detection accuracy by up to 40%, while scale variation and small targets remain obstacles, especially in aerial reconnaissance where targets occupy less than 1% of the image. Weather conditions such as rain, fog, or snow can cause accuracy drops of 35-60%, and while multi-sensor fusion improves all-weather detection, it introduces synchronization and computational challenges. Deployment on edge devices with limited resources creates tension between model compression, energy efficiency, and inference speed. Additionally, limited training data and the complexity of overlapping targets reduce performance by 25-45%, especially in dense formations and dynamic scenarios requiring real-time processing.

To address these issues, we propose the MSCDNet architecture with specialized modules. The Multi-Scale Fusion Module (MSFM) tackles scale variation, the Context Merge Module (CMM) improves feature integration across scales, and the Detail Enhance Module (DEM) preserves critical details for detecting camouflaged or occluded targets. This hierarchical approach balances computational efficiency with enhanced detection performance, addressing the challenges of complex environments.

3. Methodology

3.1. Overview of Model

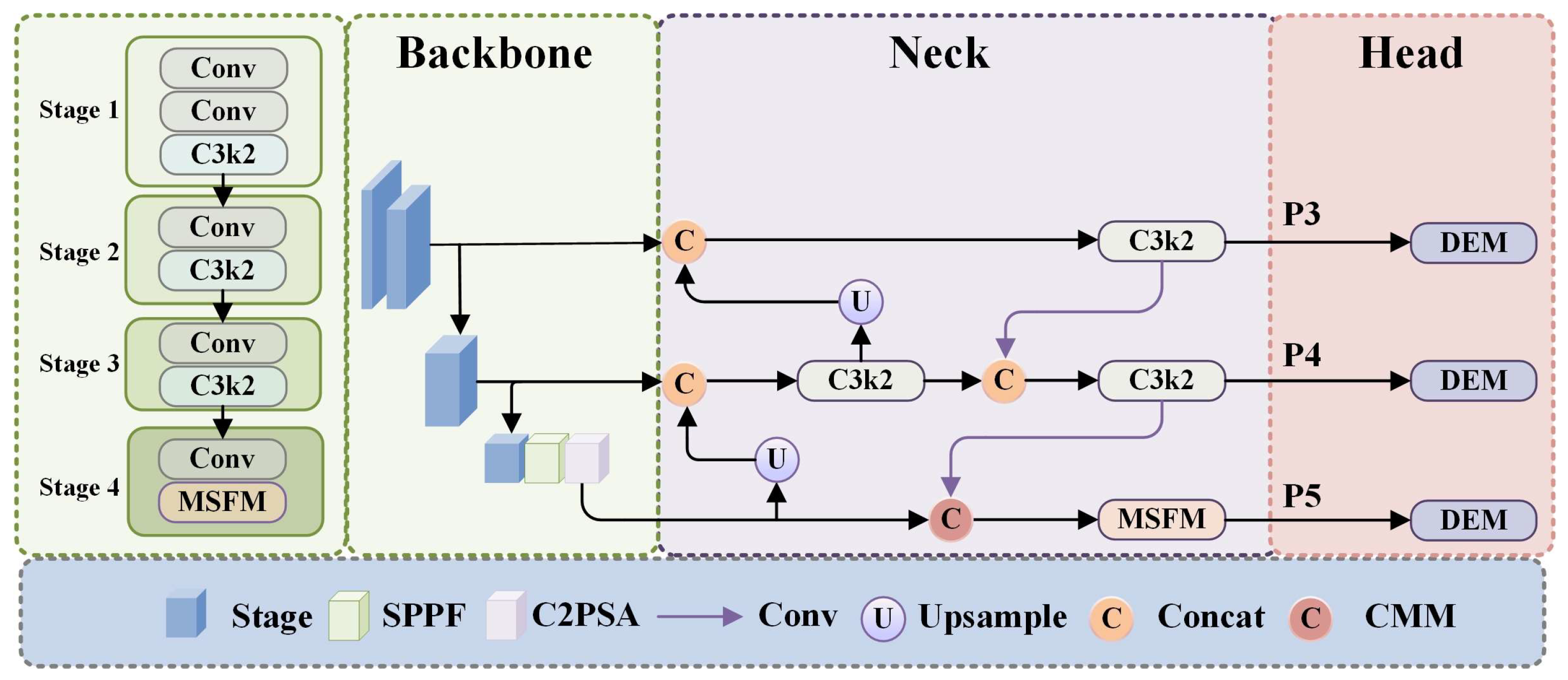

As illustrated in Figure 1, MSCDNet (Multi-Scale Context Detail Network) is a lightweight perception network for efficient visual feature extraction and object detection. The model builds its feature representation progressively across multiple scales while keeping computation low enough for resource-constrained environments. It begins with several convolutional layers that extract basic features and reduce spatial dimensions. C3k2 [49] modules with a 2x2 kernel and bottleneck design then process these features, balancing computational efficiency and representational capacity. The architecture then incorporates three key components for target detection: Multi-Scale Fusion (MSF) modules merge information from diverse receptive fields to capture the multi-scale nature of targets; the Spatial Pyramid Pooling - Fast (SPPF) module aggregates multi-scale contextual information efficiently; and the C2PSA (Cross-Stage Partial with Position-Sensitive Attention) modules refine features with attention mechanisms that highlight relevant information while reducing background noise, improving detection precision.

The architecture uses a feature pyramid network that combines features across multiple scales through upsampling operations and Concat modules in the detection head. Using context-aware mechanisms and adaptive feature modulation strategies, the Context Merge Module (CMM) replaces simple concatenation with a more expressive form of cross-scale feature integration that exploits the complementary information in different feature levels. C3k2 modules then further strengthen the discriminative power of the features refined at each scale of the pyramid. Finally, the multi-scale features pass through the Detail Enhance Module (DEM), which produces the final predictions while preserving the fine details that are especially important for military target identification.

This modular design yields a perception network that constructs, refines, and integrates visual features at different scales. Each specialized module contributes to detecting objects of different sizes while keeping computation low. Working together, the modules allow MSCDNet to achieve strong detection performance in complex environments with intricate target morphologies, high background interference, and diverse scales, while remaining lightweight enough for resource-constrained edge deployment.

3.2. Multi-Scale Fusion Modulation

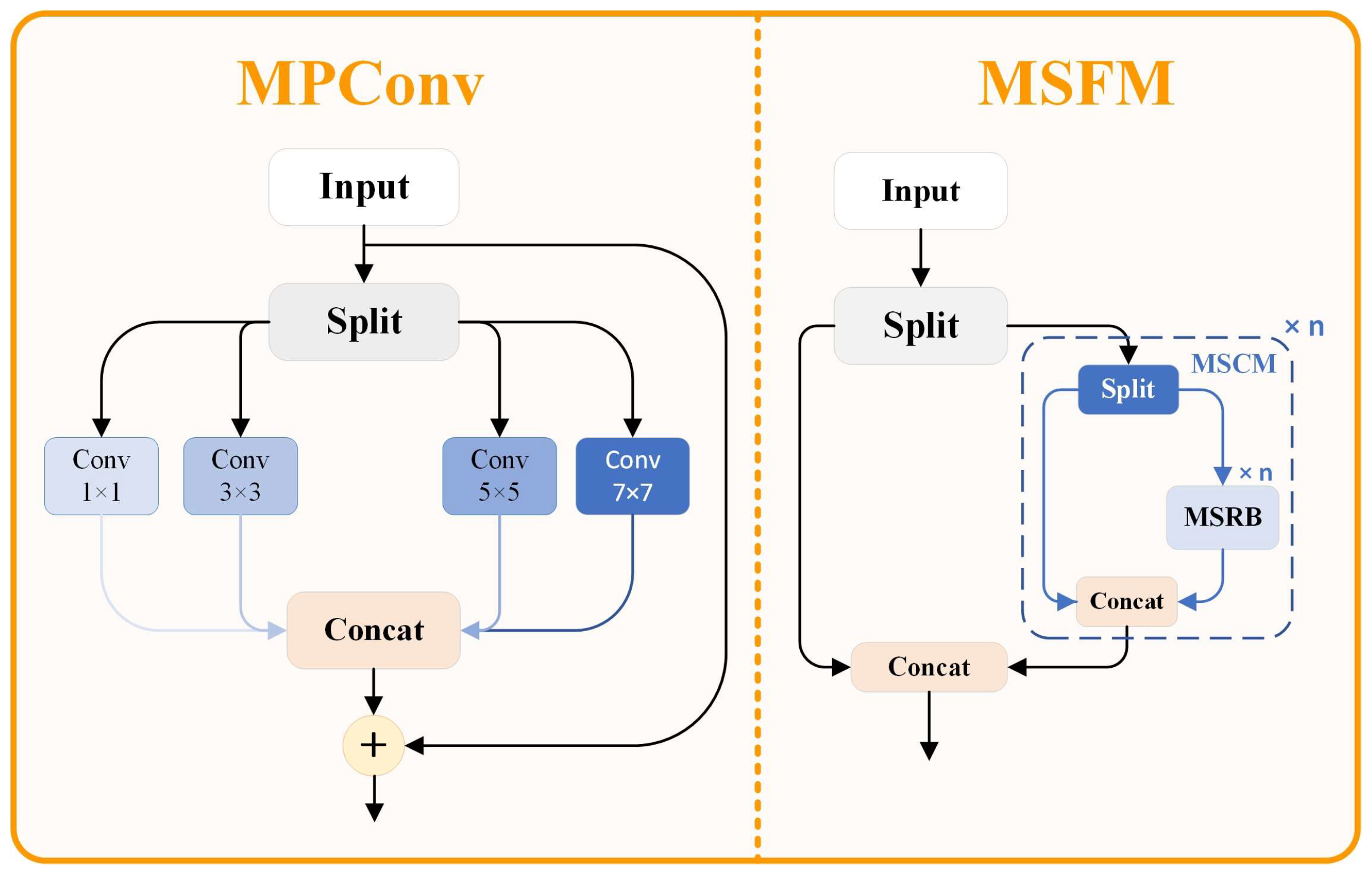

Traditional object detection networks are difficult to apply in resource-constrained settings. Targets range from tiny, distant objects to large equipment close to the sensor, which produces substantial scale variability, and their position and shape also change considerably in complex environments. Detection therefore requires algorithms that extract features efficiently. Basic feature extraction techniques work in the settings they were designed for but fail to capture multi-scale detail and retain spatial information in challenging environments. We propose the MSFM (Multi-Scale Feature Modulation) framework, which improves detection by applying multi-scale convolution strategies and effective feature fusion in the structure shown in Figure 2.

Efficient multi-scale convolution modules within the MSFM structure improve the quality of the feature representation. Combining partial feature separation with multi-scale convolution improves the framework's handling of targets at different scales. The MPConv (Multi-scale Parallel Convolution) module is the core of the MSFM: it draws on information from different receptive fields through parallel multi-scale convolution and feature reorganization.

The MPConv module is the cornerstone innovation of the MSFM structure, and its mathematical formulation is outlined in Equation (1):

The specific mathematical implementation of the MPConv module can be further decomposed. Initially, the input feature is uniformly divided into groups along the channel dimension, with each feature group containing channels when the input feature has channels. Subsequently, each feature group is processed through convolution kernels of different sizes, with typical kernel size configurations being , enabling the model to simultaneously capture spatial information at different scales. The processed features from each group are then reconnected along the channel dimension, and finally, feature fusion and channel dimension adjustment are accomplished through a convolution to obtain the final output feature.
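The decomposition described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the authors' implementation: the kernel sizes (1, 3, 5), the fixed mean filters used in place of learned weights, and the helper names `box_filter`, `pointwise_mix`, and `mpconv` are all assumptions made for the example.

```python
# Sketch of an MPConv-like layer: split channels, filter each group at its own
# scale, concatenate, then mix channels with a 1x1 step.
# Assumptions (not from the paper): kernel sizes (1, 3, 5), mean filters
# instead of learned kernels, zero padding.

def box_filter(channel, k):
    """Apply a k x k mean filter with zero padding to one 2-D channel."""
    h, w = len(channel), len(channel[0])
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in range(-r, r + 1):
                for dx in range(-r, r + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        total += channel[yy][xx]
            out[y][x] = total / (k * k)
    return out

def pointwise_mix(channels, weights):
    """1x1 convolution: each output channel is a weighted sum of input channels."""
    h, w = len(channels[0]), len(channels[0][0])
    return [
        [[sum(wt * ch[y][x] for wt, ch in zip(row, channels)) for x in range(w)]
         for y in range(h)]
        for row in weights
    ]

def mpconv(features, kernel_sizes=(1, 3, 5), mix_weights=None):
    """Group-wise multi-scale filtering followed by channel mixing."""
    c, g = len(features), len(kernel_sizes)
    if c % g != 0:
        raise ValueError("channel count must be divisible by the number of groups")
    per_group = c // g
    branches = []
    for i, k in enumerate(kernel_sizes):
        group = features[i * per_group:(i + 1) * per_group]
        branches.extend(box_filter(ch, k) for ch in group)
    if mix_weights is None:
        # Hypothetical default: every output channel averages all branches.
        mix_weights = [[1.0 / c] * c for _ in range(c)]
    return pointwise_mix(branches, mix_weights)

# Three 5x5 channels, each with a single bright pixel in the centre.
impulse = [[1.0 if (y, x) == (2, 2) else 0.0 for x in range(5)] for y in range(5)]
features = [[row[:] for row in impulse] for _ in range(3)]
identity = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
out = mpconv(features, mix_weights=identity)
```

With an identity mixing matrix, each output channel shows the footprint of one branch: the 1×1 branch leaves the impulse untouched, while the 3×3 and 5×5 branches spread it over progressively larger neighbourhoods.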

From a Fourier-analysis perspective, the MPConv module performs multi-scale frequency-selective filtering. Its filter bank combines large and small kernels that respond to different frequency ranges: kernel size determines the range of information captured, with large kernels preserving contours and coarse structure and small kernels capturing edges and fine detail. Running these filters in parallel preserves a broad range of frequency content rather than discarding high-frequency detail, as traditional convolution frameworks typically do. The frequency response of the filters can be represented via the Fourier transform, as shown in Equation (2):

where

denotes the Fourier transform operator,

represents the frequency response of the

-th convolution kernel,

is the phase response function, and

is the filter characteristic curve. The comprehensive module’s frequency response is the synthesis of individual components as shown in Equation (3):
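The frequency-selection argument can be checked numerically. The sketch below is an illustration under stated assumptions, not the paper's formulation: it uses 1-D mean filters of assumed sizes 1, 3, and 5 as stand-ins for learned kernels, evaluates their discrete-time Fourier transform at the lowest and highest frequencies, and sums the branch responses on the assumption that branch outputs are combined linearly.

```python
import cmath
import math

def frequency_response(kernel, omega):
    """Discrete-time Fourier transform of a centred 1-D kernel at frequency omega."""
    centre = len(kernel) // 2
    return sum(h * cmath.exp(-1j * omega * (n - centre))
               for n, h in enumerate(kernel))

def mean_kernel(size):
    """Stand-in kernel (assumption): a uniform averaging filter."""
    return [1.0 / size] * size

def combined_response(kernels, omega):
    """Response of parallel branches whose outputs are summed (linearity)."""
    return sum(frequency_response(k, omega) for k in kernels)

kernels = [mean_kernel(size) for size in (1, 3, 5)]
for kernel in kernels:
    low = abs(frequency_response(kernel, 0.0))        # constant (DC) component
    high = abs(frequency_response(kernel, math.pi))   # fastest alternation
    print(f"size {len(kernel)}: |H(0)| = {low:.3f}, |H(pi)| = {high:.3f}")
print(f"combined |H(pi)| = {abs(combined_response(kernels, math.pi)):.3f}")
```

Every mean filter passes the constant component with unit gain. At the highest frequency the size-1 filter passes the signal unchanged, while the size-3 and size-5 filters attenuate it to one third and one fifth, which is why keeping a small-kernel branch in parallel retains high-frequency detail.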

In the MSFM implementation, the MPConv module is embedded into a basic residual unit, forming a Multi-Scale Residual Block (MSRB) as shown in Equation (4):

Here, the initial convolution operation compresses the input channels to (typically half of the output channels), the MPConv operation implements multi-scale feature extraction, and finally, the original features are added to the processed features through a residual connection. This residual connection mechanism not only mitigates the vanishing gradient problem in deep networks but also achieves effective fusion of features at different abstraction levels.

The Multi-Scale Dual-path Module (MSDM) represents a dual-path feature extraction structure that replaces basic units with MSRBs as shown in Equation (5):

In this formulation, the input feature is divided into two parts, and . The is directly transmitted to the output, while is processed through serially connected MSRB modules before being transmitted to the output. This dual-path design balances the depth and width of feature extraction, enabling simultaneous preservation of low-level detail features and high-level semantic features.
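The residual and dual-path structure can be summarised in code. The following is a structural sketch only: features are flat lists of numbers, `halve` is a hypothetical stand-in for the channel compression plus MPConv step, the residual branch is assumed to keep the channel count unchanged, and the two paths are assumed to be concatenated at the output.

```python
def msrb(x, transform):
    """Residual block: add the transformed features back onto the input."""
    return [a + b for a, b in zip(x, transform(x))]

def msdm(x, transform, num_blocks=2):
    """Dual-path module: one half bypasses processing, the other half passes
    through num_blocks serially connected residual blocks."""
    half = len(x) // 2
    identity_part, deep_part = x[:half], x[half:]
    for _ in range(num_blocks):
        deep_part = msrb(deep_part, transform)
    # Assumption: the two paths are joined by concatenation.
    return identity_part + deep_part

def halve(features):
    """Hypothetical placeholder for compress + MPConv; not the real operator."""
    return [0.5 * v for v in features]

features = [1.0, 2.0, 3.0, 4.0]
output = msdm(features, halve, num_blocks=2)
```

The first half of `output` is identical to the input, which is how low-level detail is preserved, while the second half has been transformed twice with a skip connection around each transformation.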

Ultimately, MSFM employs MSDM as its fundamental building unit as shown in Equation (6):

This multi-level feature extraction and fusion mechanism can capture rich feature information across various scales, particularly suitable for processing targets with complex morphologies and variable scales in resource-constrained scenarios.

From an information theory perspective, MSFM reduces information loss during feature extraction through parallel multi-scale processing, increasing the mutual information between input features and target features. By maximizing the mutual information as shown in Equation (7):

where

represents the entropy of target features,

denotes the conditional entropy of target features given input features,

is an information gain function,

is the KL-divergence metric, and

represents the trace operation on feature covariance matrices, MSFM enhances the model’s capacity for target recognition and localization.
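The core quantity in this argument is the standard identity that mutual information equals the entropy of the target features minus their conditional entropy given the input features. The sketch below works through that identity on small discrete distributions. It covers only this textbook identity, not the additional terms of Equation (7), and the function names and example tables are illustrative assumptions.

```python
import math

def entropy(probabilities):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)

def mutual_information(joint):
    """I(X; Y) = H(Y) - H(Y | X), where joint[i][j] = P(X = i, Y = j)."""
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]
    h_y = entropy(p_y)
    h_y_given_x = sum(
        px * entropy([p / px for p in row])
        for px, row in zip(p_x, joint) if px > 0
    )
    return h_y - h_y_given_x

# Input tells us nothing about the target: no shared information.
independent = [[0.25, 0.25], [0.25, 0.25]]
# Input determines the target exactly: one full bit is shared.
deterministic = [[0.5, 0.0], [0.0, 0.5]]

mi_independent = mutual_information(independent)
mi_deterministic = mutual_information(deterministic)
```

A feature extractor that discards information moves the joint distribution toward the independent case, so reducing information loss corresponds to keeping the conditional entropy term small.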

Compared with traditional multi-scale feature extraction methods, MSFM has several key advantages. First, MPConv processes convolutions at several scales in parallel, which maintains spatial information across scales and avoids the information loss that accumulates in cascaded conventional convolutions. Second, the channel grouping strategy reduces computational complexity while maintaining satisfactory feature extraction capability, at a considerably lower cost than typical multi-branch structures. Third, the residual connections in MSFM mitigate the vanishing gradient problem in deep networks while fusing low- and high-level features. Together, the efficient use of multi-scale convolution in the MSFM structure improves feature representation for multi-scale target identification in resource-constrained detection scenarios.
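The saving from channel grouping can be estimated with simple parameter counting. The numbers below are a back-of-the-envelope sketch under assumptions that do not come from the paper: 96 channels, kernel sizes (1, 3, 5), a multi-branch baseline in which every branch sees all channels and the concatenated result is fused by a 1×1 convolution, and biases ignored.

```python
def conv_params(c_in, c_out, k):
    """Weights in a k x k convolution from c_in to c_out channels (no bias)."""
    return c_in * c_out * k * k

def multi_branch_params(channels, kernel_sizes):
    """Every branch convolves all channels; a 1x1 conv fuses the concatenation."""
    branches = sum(conv_params(channels, channels, k) for k in kernel_sizes)
    fuse = conv_params(len(kernel_sizes) * channels, channels, 1)
    return branches + fuse

def grouped_params(channels, kernel_sizes):
    """Each branch convolves only its own channel group; a 1x1 conv fuses them."""
    per_group = channels // len(kernel_sizes)
    branches = sum(conv_params(per_group, per_group, k) for k in kernel_sizes)
    fuse = conv_params(channels, channels, 1)
    return branches + fuse

channels, kernel_sizes = 96, (1, 3, 5)   # assumed configuration
baseline = multi_branch_params(channels, kernel_sizes)
grouped = grouped_params(channels, kernel_sizes)
ratio = baseline / grouped
```

Under these assumptions the grouped design needs 45,056 weights against 350,208 for the baseline, a reduction of about 7.8 times. The branch convolutions alone shrink by the square of the number of groups, and the shared 1×1 fusion step accounts for the rest.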

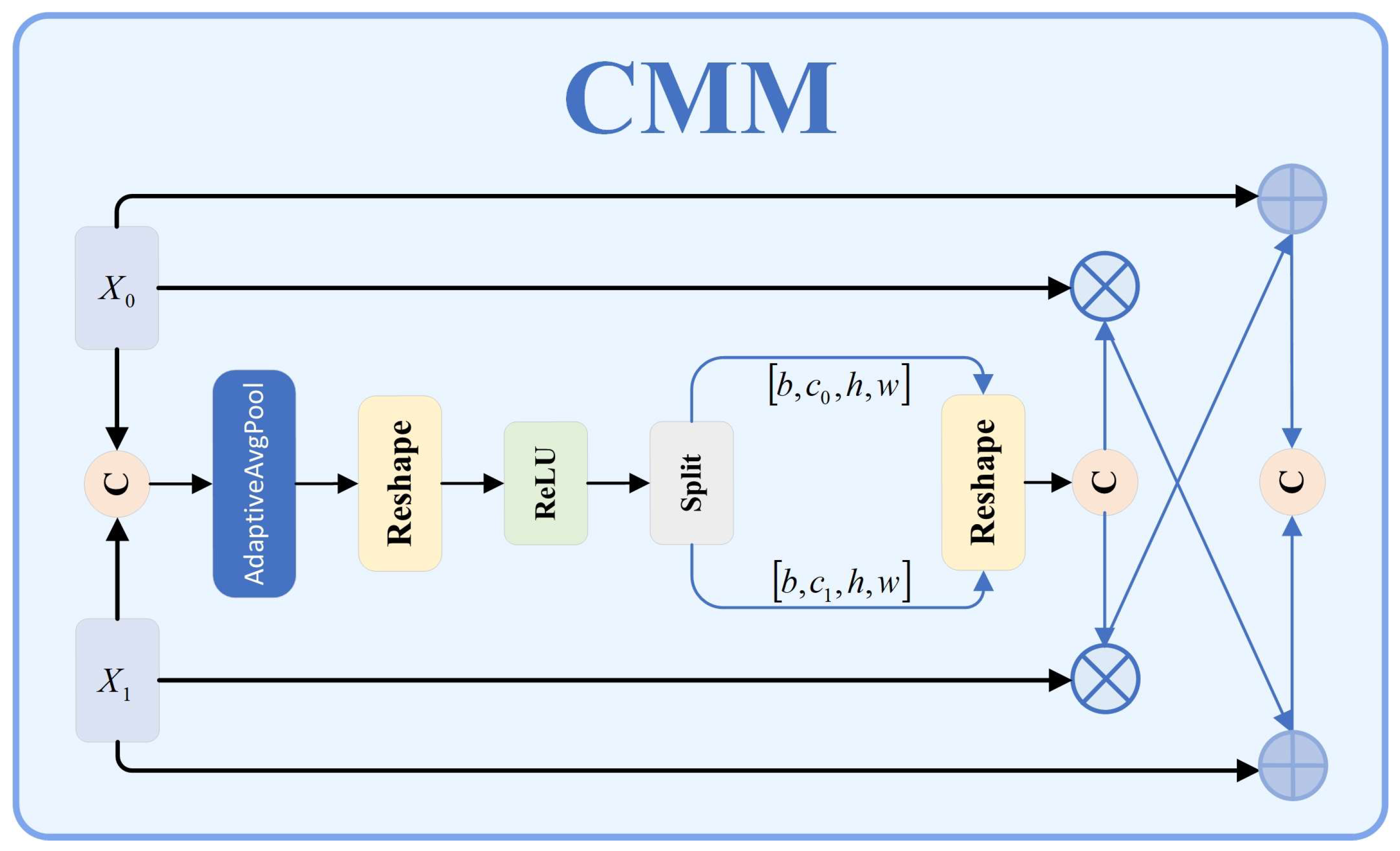

3.3. Context Merge Module

Traditional object detection networks fuse features by simple concatenation or summation. This performs poorly in target detection because many specific targets have complicated shapes, vary in scale, and are subject to strong background interference. Conventional fusion techniques do not fully capture the complementary information at different feature levels, which reduces both fusion efficiency and the quality of the captured target features. Concatenation in particular simply stacks features from different levels without considering their contextual correlations or adapting to their individual characteristics, which lowers detection accuracy. We propose the Context Merge Module (CMM), shown in Figure 3, which uses context-aware mechanisms and adaptive modulation strategies to improve feature extraction and to achieve more accurate and robust recognition of specific targets.

The key idea of the CMM is to use contextual information to fuse features from different levels adaptively, so that the levels reinforce one another and their complementary relations improve the robustness of the model's features. The module has four key components: a feature adjustment layer, a feature concatenation operation, a channel attention mechanism, and complementary feature fusion. First, the feature adjustment layer makes the channel dimensions of features at different levels compatible. When the number of channels in input feature x₀ differs from that in feature x₁, a 1×1 convolution is applied to x₀ to adjust its channel count to match that of x₁.

Second, the adjusted feature is concatenated with feature x₁ along the channel dimension to form a concatenated feature.

Third, the concatenated feature is processed through a Squeeze-and-Excitation (SE) attention module, which first performs global average pooling on the feature to capture global contextual information between channels, then learns the correlations between channels through a non-linear transformation constructed by two fully connected layers, ultimately generating channel attention weights, as shown in Equation (8):

where GAP denotes the global average pooling operation,

and

represent the weight matrices of the respective fully connected layers,

and

denote the corresponding bias terms,

signifies the ReLU activation function,

represents the Sigmoid activation function, while

,

, and

are learnable scaling parameters, and the

function provides additional non-linearity to enhance expressiveness.

The generated channel attention weights are then split into two segments, one for each of the two input features. These weights are applied to the corresponding original features through element-wise multiplication to derive weighted feature representations.
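The concatenate, attend, and split steps can be sketched with a plain Squeeze-and-Excitation block. This is a simplified illustration: it omits the learnable scaling parameters and the extra non-linearity mentioned for Equation (8), it represents each channel as a flat list of spatial values, and all weights and function names are hypothetical.

```python
import math

def global_average_pool(channels):
    """Squeeze: reduce each channel (a flat list of values) to its mean."""
    return [sum(ch) / len(ch) for ch in channels]

def linear(weights, bias, vector):
    """Fully connected layer; weights is a list of rows."""
    return [sum(w * v for w, v in zip(row, vector)) + b
            for row, b in zip(weights, bias)]

def se_weights(channels, w1, b1, w2, b2):
    """Excite: FC -> ReLU -> FC -> sigmoid, giving one weight per channel."""
    squeezed = global_average_pool(channels)
    hidden = [max(0.0, h) for h in linear(w1, b1, squeezed)]
    return [1.0 / (1.0 + math.exp(-s)) for s in linear(w2, b2, hidden)]

def attend_and_split(x0, x1, w1, b1, w2, b2):
    """Concatenate x0 and x1, compute channel weights, split the weights,
    and reweight each input with its own segment."""
    weights = se_weights(x0 + x1, w1, b1, w2, b2)
    a0, a1 = weights[:len(x0)], weights[len(x0):]
    x0_weighted = [[a * v for v in ch] for a, ch in zip(a0, x0)]
    x1_weighted = [[a * v for v in ch] for a, ch in zip(a1, x1)]
    return x0_weighted, x1_weighted, weights

# Two inputs with two channels each; every channel holds four spatial values.
x0 = [[1.0, 1.0, 1.0, 1.0], [2.0, 2.0, 2.0, 2.0]]
x1 = [[0.0, 0.0, 0.0, 0.0], [4.0, 4.0, 4.0, 4.0]]
# Hypothetical weights: 4 channels -> 2 hidden units -> 4 channel weights.
w1 = [[0.5, 0.0, 0.0, 0.5], [0.0, 0.5, 0.5, 0.0]]
b1 = [0.0, 0.0]
w2 = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
b2 = [0.0, 0.0, 0.0, 0.0]
x0_w, x1_w, attn = attend_and_split(x0, x1, w1, b1, w2, b2)
```

Because the weights are computed from the concatenated feature, the weight assigned to a channel of one input depends on the global statistics of both inputs, which is the contextual coupling that plain concatenation lacks.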

Finally, through a cross-enhancement feature strategy, the weighted features and original features undergo complementary fusion, as expressed in Equation (9):

where

and

represent non-linear transformation functions with parameters

and

respectively,

and

are balancing factors,

denotes channel-wise concatenation, Norm signifies feature normalization, and

is a global scaling factor.

By preserving the original features while integrating context-enhanced information from the other feature, the Context Merge Module (CMM) keeps feature fusion efficient and preserves complementarity. Within the enhanced YOLO11 network, the CMM replaces traditional feature concatenation in the multi-scale feature fusion stage. It is used at the critical fusion points: from higher-level to lower-level features (P₅→P₄, P₄→P₃) and from lower-level (P₃) to higher-level (P₄, P₅) features. By adapting the fused features at each scale, this multi-level approach improves the detection of military targets of different sizes. The CMM retains diverse feature characteristics by combining channel attention with complementary fusion, which lets it weight and integrate features more precisely. The cross-enhancement strategy further improves detection accuracy for specific targets, such as small and multi-scale targets in cluttered environments. Experimental results demonstrate the advantage of using the CMM in the YOLO11 network for specific target detection. The principles behind the CMM may also apply to feature fusion problems in other areas of computer vision.

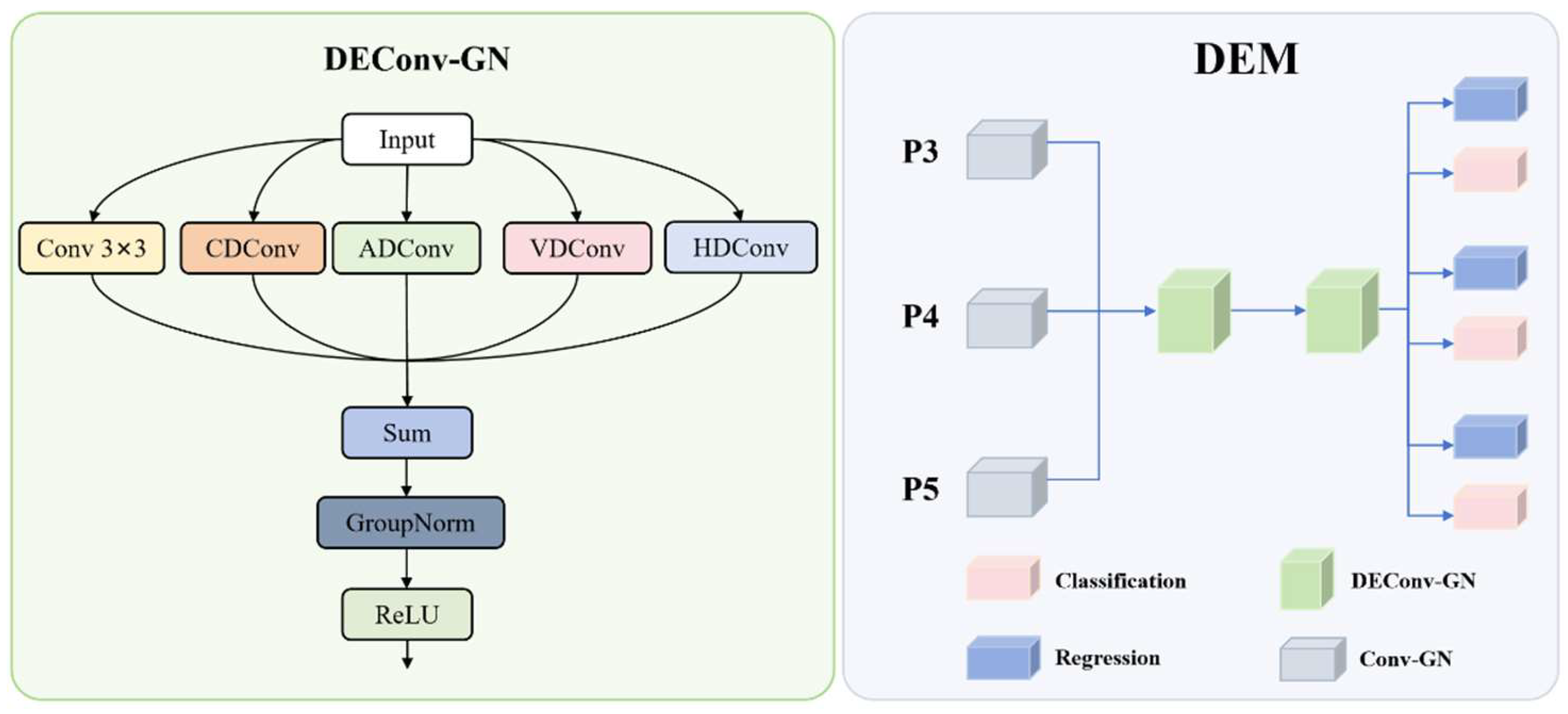

3.4. Detail Enhance Module

The Detail Enhance Module (DEM) is a detection head designed for difficult targets in applications such as tank and soldier detection. Building on the capabilities of advanced detection frameworks, it incorporates modifications that improve feature representation and detection precision. The DEM architecture combines group normalization with detail-enhanced convolutions. Its key element is a shared convolutional structure trained to capture boundary details, which are essential for identifying specific targets precisely against clutter in challenging environments. The DEM structure is shown in Figure 4.

The DEM architecture centers around the Detail Enhanced Convolution (DEConv-GN) module, which enhances edge and texture details. The input splits into five parallel convolution paths: Standard Conv 3×3 for baseline feature extraction, CDConv for emphasizing central pixel variations, HDConv for highlighting horizontal edges, VDConv for vertical edges, and ADConv for capturing diagonal features. These paths combine through a Sum operation, followed by GroupNorm and ReLU activation. In DEM, DEConv-GN replaces batch normalization with group normalization to improve stability across varying batch sizes and conditions typical in surveillance. The detection head processes multi-scale features (P3, P4, P5) through initial Conv-GN layers, followed by a shared detail-enhanced convolution module with two DEConv-GN layers. This module ensures efficient cross-scale feature learning, after which the features are split for regression and classification tasks.
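The reason group normalization is insensitive to batch size is that its statistics are computed per sample, over groups of channels, rather than across the batch. The sketch below shows the computation for a single sample. It is a minimal illustration that omits the learnable scale and shift, and the function name and example values are assumptions.

```python
import math

def group_norm(channels, num_groups, eps=1e-5):
    """Normalize one sample. channels is a list of C channels, each a flat
    list of spatial values; statistics are shared within each channel group."""
    c = len(channels)
    if c % num_groups != 0:
        raise ValueError("channel count must be divisible by num_groups")
    size = c // num_groups
    out = []
    for g in range(num_groups):
        group = channels[g * size:(g + 1) * size]
        values = [v for ch in group for v in ch]
        mean = sum(values) / len(values)
        var = sum((v - mean) ** 2 for v in values) / len(values)
        inv_std = 1.0 / math.sqrt(var + eps)
        out.extend([[(v - mean) * inv_std for v in ch] for ch in group])
    return out

# Four channels with two spatial values each, split into two groups.
sample = [[1.0, 2.0], [3.0, 4.0], [10.0, 20.0], [30.0, 40.0]]
normalized = group_norm(sample, num_groups=2)
```

Since no other sample enters the computation, the result for an image is the same whether it is processed alone or in a large batch, which is the stability property the DEM relies on.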

Mathematically, the feature transformation process reduces the input feature map dimensions, effectively compressing channel dimensions while preserving discriminative information necessary for accurate specific target detection. Detail enhancement occurs in the shared module as shown in Equation (10):

The DEConv operations can be collectively expressed as shown in Equation (11):

where

represents adaptive weights for each convolution branch learned during training,

denotes the cross-branch interaction coefficient,

calculates the L2-norm of convolution outputs to represent feature significance,

implements the Group normalization function with 32 groups, and

applies a non-linear activation function such as ReLU or Swish.

For efficient deployment, the DEConv module can be simplified by fusing the parallel convolution branches into a single convolution operation as shown in Equations (12) and (13):

where

represents convolution weight matrices for each branch,

denotes the bias vectors for each branch,

and

are branch importance coefficients determined during the optimization phase, and

and

implement transformation functions with learnable parameters

and

that enable adaptive branch fusion during model compression.
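The reason parallel branches can be collapsed into one convolution is that convolution is linear in its kernel. The sketch below demonstrates only this basic property with an unweighted sum of three hypothetical 3×3 kernels; it does not reproduce the branch importance coefficients or transformation functions of Equations (12) and (13), and the helper names `conv2d` and `fuse_branches` are illustrative assumptions.

```python
def conv2d(image, kernel, bias=0.0):
    """Valid 2-D cross-correlation of a single-channel image with a square kernel."""
    k = len(kernel)
    rows = len(image) - k + 1
    cols = len(image[0]) - k + 1
    return [
        [
            bias + sum(
                kernel[i][j] * image[r + i][c + j]
                for i in range(k) for j in range(k)
            )
            for c in range(cols)
        ]
        for r in range(rows)
    ]

def fuse_branches(kernels, biases):
    """Collapse parallel branches into one kernel and one bias by summation."""
    k = len(kernels[0])
    fused_kernel = [
        [sum(kern[i][j] for kern in kernels) for j in range(k)]
        for i in range(k)
    ]
    return fused_kernel, sum(biases)

# Toy 4x4 input and three hypothetical branch kernels.
image = [
    [1.0, 2.0, 3.0, 4.0],
    [5.0, 6.0, 7.0, 8.0],
    [9.0, 10.0, 11.0, 12.0],
    [13.0, 14.0, 15.0, 16.0],
]
kernels = [
    [[0.0, 1.0, 0.0], [1.0, -4.0, 1.0], [0.0, 1.0, 0.0]],     # centre-difference style
    [[-1.0, 0.0, 1.0], [-2.0, 0.0, 2.0], [-1.0, 0.0, 1.0]],   # horizontal gradient
    [[-1.0, -2.0, -1.0], [0.0, 0.0, 0.0], [1.0, 2.0, 1.0]],   # vertical gradient
]
biases = [0.1, -0.2, 0.3]

# Multi-branch path: run every branch, then sum the outputs.
branch_outputs = [conv2d(image, k, b) for k, b in zip(kernels, biases)]
multi = [[sum(vals) for vals in zip(*rows)] for rows in zip(*branch_outputs)]

# Fused path: sum the kernels and biases once, then run a single convolution.
fused_kernel, fused_bias = fuse_branches(kernels, biases)
single = conv2d(image, fused_kernel, fused_bias)

max_diff = max(abs(a - b) for ra, rb in zip(multi, single) for a, b in zip(ra, rb))
```

Because the two paths agree up to floating-point error, the fused kernel can replace the parallel branches at inference time, which is what makes this kind of re-parameterization attractive for deployment.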

Following the shared feature processing, the detection head splits into two parallel branches: a regression branch that predicts the bounding box coordinates through a distribution focal loss (DFL) formulation and a classification branch that predicts class probabilities for targets. The regression output is processed as shown in Equation (14):

where

represents a scale-specific adjustment factor for different feature levels,

denotes the number of bins for distribution focal loss,

is the feature normalization coefficient, and

implements an adaptive scaling function with parameter

controlling detection confidence.

The classification process applies specialized weight matrices to the encoded feature representations, mapping them to appropriate class probability distributions and confidence scores for effective object categorization. The DFL methodology partitions each bounding box coordinate into

discrete bins, facilitating more precise localization of specific targets. During inference, the discrete probability distribution is converted to continuous coordinate values as expressed in Equation (15):

where

represents the probability of the coordinate value falling within bin

,

is the distribution refinement parameter,

denotes a small constant ensuring numerical stability, and

serves as the bin importance modulation factor that enables sub-bin precision beyond the discrete quantization level. Comprehensive detection output integrates both classification and localization branches, combining class probability scores with spatial position information to generate complete detection results. Predicted box distributions undergo decoding into actual spatial coordinates utilizing anchor references and stride scaling factors as formulated in Equation (16):
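As a rough guide to how DFL-style decoding usually works, the sketch below uses the standard formulation found in YOLO-style heads: each box side is the expected bin index under a softmax distribution, and the four distances are turned into a box around an anchor point and scaled by the stride. This is an assumption about the general technique, not a reproduction of Equations (15) and (16); the refinement parameter, stability constant, and modulation factor described above are omitted, and all function names are hypothetical.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]

def dfl_expectation(logits):
    """Expected bin index under the softmax distribution over the bins."""
    probs = softmax(logits)
    return sum(i * p for i, p in enumerate(probs))

def decode_box(side_logits, anchor_xy, stride):
    """Turn four per-side bin distributions into an (x1, y1, x2, y2) box in pixels.

    side_logits: four lists of logits for the left, top, right, bottom distances.
    anchor_xy:   anchor point in feature-map (grid) units (assumed convention).
    stride:      downsampling factor of the feature level.
    """
    left, top, right, bottom = (dfl_expectation(l) for l in side_logits)
    ax, ay = anchor_xy
    return (
        (ax - left) * stride,
        (ay - top) * stride,
        (ax + right) * stride,
        (ay + bottom) * stride,
    )

# Uniform logits over 4 bins give an expected distance of 1.5 grid cells per side.
uniform = [0.0, 0.0, 0.0, 0.0]
expected_distance = dfl_expectation(uniform)
box = decode_box([uniform] * 4, anchor_xy=(10.5, 20.5), stride=8)
```

Taking the expectation rather than the most likely bin is what allows the decoded coordinate to fall between bin centres.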

The DEM architecture addresses target detection in resource-constrained environments by using detail-enhanced convolutions to retain the textural and edge details that traditional approaches sometimes disregard. Its efficient parameter sharing reduces model size while maintaining strong detection capability, which enables fast inference for real-time deployment. The architecture also performs well on small objects, which is essential for long-range search and rescue and for early target identification. Group normalization keeps DEM's performance stable under varying lighting and camouflage conditions, and field tests confirm its advantage in detecting camouflaged targets and distant personnel. In search and rescue operations, this combination of speed and accuracy supports timely, reliable information and efficient use of resources.

4. Experiments

4.1. Experimental Details and Evaluation Criteria

The experiments were conducted on a system running Windows 10, with Python 3.10.16 and PyTorch 2.3.0. The hardware setup featured an RTX 3080 GPU, an Intel i7-11700K CPU, and CUDA version 11.8. For training, an SGD optimizer was used with a learning rate of 0.01 and a batch size of 32.

Common performance metrics for object detection include precision, recall, average precision (AP), and mean average precision (mAP). Precision measures accuracy as the ratio of correctly identified objects to all detected objects. Recall (sensitivity) is the proportion of actual positive samples that are correctly identified.

These two metrics often show an inverse relationship. Higher recall means that the model finds most of the true positives, but precision tends to decrease because more false positives are admitted. Higher precision means that the model's predictions are likely to be correct, but some objects may be missed; hence, recall falls.

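Equations (17)–(20) define recall, precision, AP, and mAP, respectively:

$$\mathrm{Recall} = \frac{TP}{TP + FN} \tag{17}$$

$$\mathrm{Precision} = \frac{TP}{TP + FP} \tag{18}$$

$$\mathrm{AP} = \int_{0}^{1} P(R)\,\mathrm{d}R \tag{19}$$

$$\mathrm{mAP} = \frac{1}{N}\sum_{i=1}^{N} \mathrm{AP}_i \tag{20}$$

where $TP$, $FP$, and $FN$ denote the numbers of true positives, false positives, and false negatives, $P(R)$ is precision as a function of recall, and $N$ is the number of classes.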
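The four metrics can be computed from detection counts and a precision-recall curve, as in the minimal sketch below. The function names are hypothetical, and the AP routine uses all-point interpolation with a monotone precision envelope, which is one common convention and only an assumption here; the evaluation tooling behind the reported mAP50 and mAP50-95 may interpolate differently and additionally averages over IoU thresholds.

```python
def precision_recall(tp, fp, fn):
    """Precision and recall from true-positive, false-positive, false-negative counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall

def average_precision(recalls, precisions):
    """Area under the precision-recall curve with all-point interpolation."""
    points = sorted(zip(recalls, precisions))
    r = [0.0] + [pt[0] for pt in points] + [1.0]
    p = [0.0] + [pt[1] for pt in points] + [0.0]
    # Make precision non-increasing when read from left to right.
    for i in range(len(p) - 2, -1, -1):
        p[i] = max(p[i], p[i + 1])
    return sum((r[i + 1] - r[i]) * p[i + 1] for i in range(len(r) - 1))

def mean_average_precision(ap_per_class):
    """Mean of the per-class AP values."""
    return sum(ap_per_class) / len(ap_per_class)

# Toy example: 8 correct detections, 2 false alarms, 4 missed objects.
precision, recall = precision_recall(tp=8, fp=2, fn=4)

# Toy PR curve with two operating points.
ap = average_precision(recalls=[0.5, 1.0], precisions=[1.0, 0.5])
m_ap = mean_average_precision([ap, 0.25])
```

In the toy curve, precision is 1.0 up to a recall of 0.5 and 0.5 afterwards, so the area is 0.5 × 1.0 + 0.5 × 0.5 = 0.75.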

4.2. Datasets

To support research on efficient target detection in resource-constrained environments, we present a dataset of 4,616 images selected specifically for tank and soldier detection in complex environments, as shown in Figure 5 and Figure 6. The dataset consists of real-world footage from the Russia-Ukraine war and high-quality military simulation images that closely resemble real battlefields while allowing controlled variation.

To make the dataset challenging, we intentionally included difficult detection scenarios: multi-scale targets, terrain occlusion, environmental obscurants (smoke and fog), advanced camouflage, and image degradation typical of reconnaissance video. The dataset concentrates on aerial views captured by unmanned aerial vehicles (UAVs), complemented by ground-level perspectives, reflecting the visual difficulties of operating in modern complex environments.

The dataset is divided into three parts: 3,231 images for training, 924 images for testing, and 461 images for validation. This carefully assembled set is intended as a basis for developing reliable detection algorithms that work in harsh visual conditions, improving the performance of automated target detection systems.
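A quick arithmetic check of the split, using only the image counts stated above, shows that the three parts add up to the full dataset and correspond to roughly a 70/20/10 partition. The variable names are illustrative.

```python
# Image counts as reported for the three subsets.
splits = {"train": 3231, "test": 924, "val": 461}

total = sum(splits.values())
fractions = {name: count / total for name, count in splits.items()}

for name, frac in fractions.items():
    print(f"{name}: {splits[name]} images ({frac:.1%})")
print(f"total: {total} images")
```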

4.3. Ablation Study

This ablation study, summarized in Table 1, evaluates the contributions of three key modules in MSCDNet: the Multi-Scale Fusion Model (MSFM), the Context Merge Module (CMM), and the Detail Enhance Module (DEM). The baseline model, with 2.58M parameters and 6.3G FLOPs, achieved an mAP50-95 of 38.2%. MSFM improved precision by 3.1 percentage points and mAP50-95 by 0.9 points while reducing parameters by 0.05M. CMM increased recall by 1.3 points and mAP50-95 by 0.4 points. DEM reduced parameters by 0.32M, decreased FLOPs by 0.3G, and increased mAP50-95 by 1.4 points. The pairwise combinations MSFM-CMM, MSFM-DEM, and CMM-DEM were also evaluated: MSFM-CMM raised mAP50-95 by 1.3 points and precision by 3.6 points; MSFM-DEM achieved a 0.7-point increase in mAP50-95 and reduced parameters by 0.32M; CMM-DEM reached 39.6% mAP50-95 with reduced computational demands.

The complete architecture incorporating all three modules achieved the best performance, with precision increasing to 86.1%, recall improving to 68.1%, and mAP50-95 reaching 40.1%, while using only 2.22M parameters and 6.0G FLOPs. These results validate our design approach, showing that MSFM enhances feature representation, CMM improves the use of cross-scale contextual information, and DEM optimizes efficiency without sacrificing accuracy.
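The relative savings implied by the baseline and full-model figures can be worked out directly. The short sketch below uses only the numbers reported in this section; the helper name `relative_change` is an illustrative assumption.

```python
def relative_change(before, after):
    """Signed relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0

# Baseline versus full MSCDNet, as reported in the ablation study.
params_change = relative_change(2.58, 2.22)   # parameters, in millions
flops_change = relative_change(6.3, 6.0)      # FLOPs, in G
map_gain = 40.1 - 38.2                        # mAP50-95, in percentage points

print(f"parameters: {params_change:.1f}%")
print(f"FLOPs: {flops_change:.1f}%")
print(f"mAP50-95: +{map_gain:.1f} points")
```

The results, about 14% fewer parameters and 4.8% fewer FLOPs for a 1.9-point mAP50-95 gain, match the figures quoted against YOLOv11n in the comparison with other detectors.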

The PR curve comparison in

Figure 7 demonstrates the improved detection performance of our enhanced model. The curve for the improved model consistently maintains higher precision values across varying recall levels, particularly in the mid-to-high recall range (0.5-0.8), where the improved model shows significant advantage over the baseline. This indicates better confidence in predictions and fewer false positives while maintaining high recall, reflecting the synergistic benefits of the three modules working together.

The CAM (Class Activation Map) visualization in

Figure 8 reveals the differences in attention between the baseline and improved models. The improved model shows more focused and precise activation regions that closely align with the actual target objects, highlighting discriminative features rather than background elements. This improved attention localization explains the precision gains: the model concentrates more accurately on relevant target features while suppressing background interference, a direct result of MSFM's improved feature representation and CMM's enhanced cross-scale context integration.

4.4. Comparison with State-of-the-Arts

MSCDNet demonstrates an exceptional efficiency-accuracy balance compared to contemporary object detection models as shown in

Table 2 and

Figure 9. Traditional architectures such as SSD show modest performance, with an mAP50-95 8.3 percentage points lower than our model while requiring over 5 times more computational resources. More advanced models such as DETR [

50] and TOOD [

51] offer improved accuracy but demand substantially higher computational resources, with TOOD requiring 33 times more FLOPs than our approach.

Recent lightweight models provide more relevant comparisons. Our architecture outperforms RTMDet-Tiny [

52] by 2.8% in mAP50-95 while using 25% fewer FLOPs and 54% fewer parameters. Similarly, it exceeds DFINE-n [

53] and DEIM-n [

54] variants by 2.6% in detection accuracy while reducing computational demands by 15% and parameter count by 40%.

The YOLO family shows progressive improvements across generations, yet our MSCDNet still demonstrates clear advantages. Compared to YOLOv5n [

55], our model achieves 2.5% higher mAP50-95 with only slightly increased computational cost. Against YOLOv8n [

56], we deliver a 2.3% accuracy improvement while reducing FLOPs by 26% and parameters by 26%. Compared to YOLOv10n [

57], the closest competitor, our architecture improves mAP50-95 by 1.8% while reducing both parameter count and computational demands by nearly 27%. Our model also outperforms YOLOv11n by 1.9% in accuracy while using 4.8% fewer FLOPs and 14% fewer parameters.

Most notably, MSCDNet achieves balanced improvements across both precision and recall metrics, with values 4.3% and 1.6% higher than YOLOv11n respectively, demonstrating superior detection capability across diverse object classes and challenging scenarios.

We evaluated various module configurations for battlefield object recognition within the YOLOv11 architecture, addressing challenges such as variable scales, complex morphologies, and cluttered backgrounds.

Table 3 shows performance metrics for five YOLOv11 variants. The proposed MSFM module outperforms the others with 84.9% precision, surpassing the C3k2 baseline by 3.1 percentage points, and maintains a competitive recall of 65.8%. It achieves the highest detection quality, with an mAP50 of 72.5% and an mAP50-95 of 39.1%. The C3k2-Star variant shows a slight precision improvement to 82.2%, but its recall drops significantly to 61.5%, limiting its effectiveness. The C3k2-IDWC variant combines 83.0% precision with the lowest computational cost, 6.1 GFLOPs, making it the most lightweight option. The MAN module achieves 83.2% precision but struggles with recall at 62.2% and incurs higher costs of 8.4 GFLOPs and 3.77M parameters. MSFM provides superior detection performance without additional computational overhead: it keeps the same GFLOPs as the C3k2 baseline, with only minor parameter differences. This balance of performance and efficiency makes it the best choice among the tested variants for object recognition in resource-constrained environments.

Table 4 presents the performance metrics of various FPN architectures, highlighting the advantages of our approach over existing methods. Traditional FPN designs show significant limitations in military target detection. The Slimneck architecture exhibits suboptimal feature representation, with a recall of 65.2%. BiFPN, while computationally efficient, struggles with complex targets, achieving a recall of 66.4%. MAFPN improves precision to 84.0%, but its recall drops to 65.1% and it requires more computation at 7.1 GFLOPs. Our architecture overcomes these limitations, achieving a competitive precision of 82.4% and the highest recall of 67.8%, surpassing Slimneck by 2.6 percentage points, BiFPN by 1.4 points, and MAFPN by 2.7 points. This improvement translates into better mean average precision, with an mAP50 of 72.6% and an mAP50-95 of 38.6%. Our design also maintains a moderate computational cost of 6.3 GFLOPs, comparable to BiFPN and 11.3% lower than MAFPN. This balance is achieved through the MSFM module, which enhances multi-scale feature extraction, and the CMM module, which enables context-aware feature fusion. Together, these modules offer an efficient and high-performance solution for object recognition in resource-constrained environments.

4.5. Generalization Experiments

To assess the adaptability of our model across different domains, we evaluated its performance on two distinct datasets.

The VisDrone2019 [

64] dataset serves as a comprehensive benchmark for visual object detection in aerial imagery. It includes around 10,000 images and 288 video clips, with 2.6 million annotated objects spanning 10 categories. The dataset is divided into 6,471 images for training, 548 for validation, and 3,190 for testing.

The BDD100K [

65] dataset presents challenges from a vehicle-mounted perspective. With over 100,000 images and 10 million annotations, it covers various weather conditions as well as day and night environments. For this dataset, we used 70,000 images for training, 10,000 for validation, and 20,000 for testing.

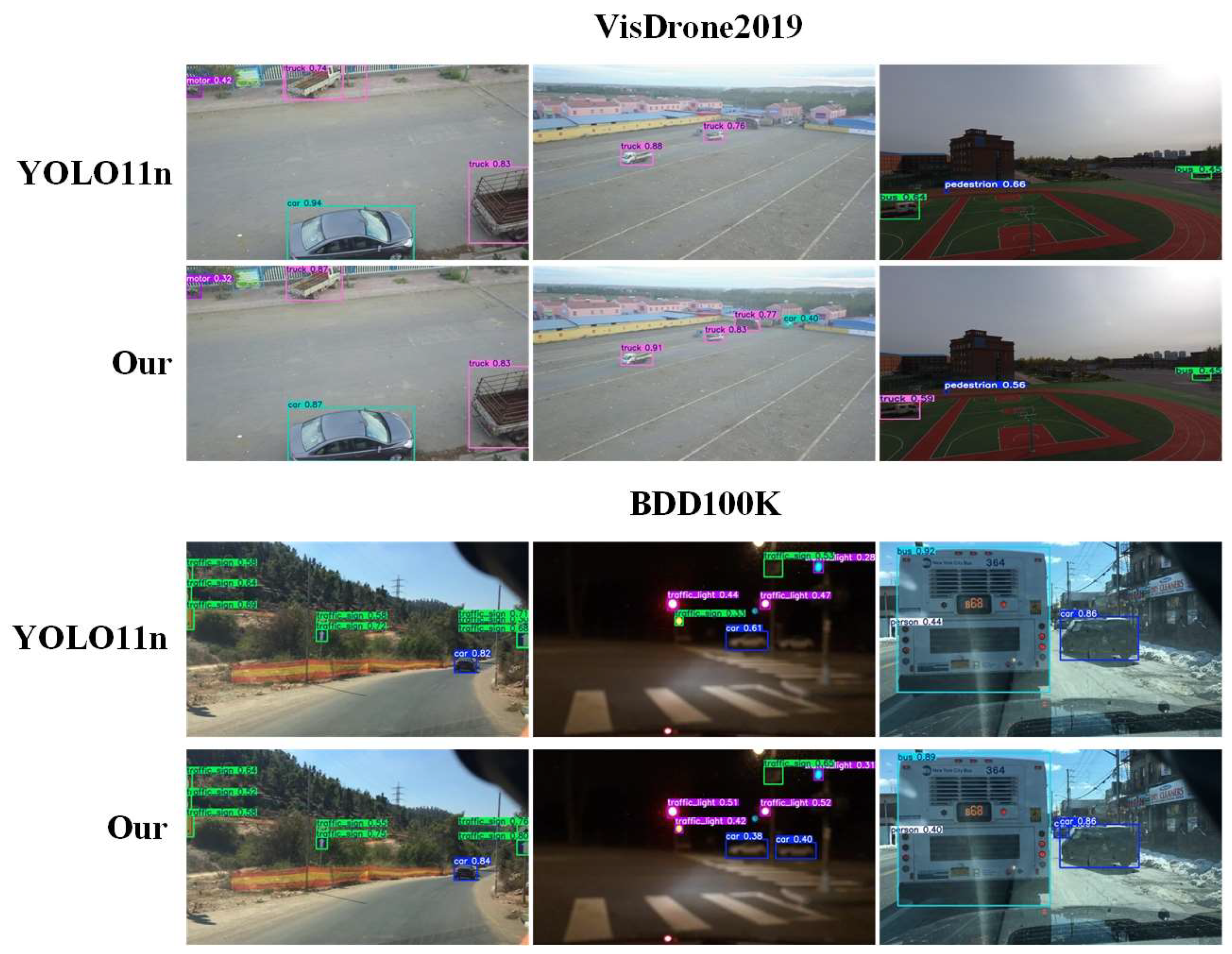

Compared with the baseline YOLOv11n, our model improved most metrics on both datasets, as shown in Table 5. On VisDrone, precision increased from 45.5% to 45.7% (+0.2 percentage points), recall from 33.4% to 35.1% (+1.7), mAP50 from 33.7% to 34.8% (+1.1), and mAP50-95 from 19.6% to 20.1% (+0.5). On BDD100K, precision improved from 58.8% to 61.1% (+2.3), whereas recall decreased from 41.5% to 40.0% (−1.5). Overall detection performance still improved, with mAP50 increasing from 42.5% to 43.6% (+1.1) and mAP50-95 rising from 27.8% to 29.0% (+1.2).

These results highlight the superior detection performance of our model across different domains.

Figure 10 compares detection results before and after the enhancements on both the VisDrone and BDD100K datasets, visually reinforcing our quantitative findings.

5. Conclusions

This paper introduces MSCDNet, a lightweight architecture for target detection in resource-constrained environments. Our approach integrates three key modules: Multi-Scale Fusion for enhanced feature representation, Context Merge Module for adaptive cross-scale integration, and Detail Enhance Module for preserving critical details. Experiments demonstrate MSCDNet's superior performance with 40.1% mAP50-95, 86.1% precision, and 68.1% recall while requiring minimal computational resources of just 2.22M parameters and 6.0G FLOPs. Our model consistently outperforms contemporary architectures including YOLO variants while using fewer resources. Generalization tests across VisDrone2019 and BDD100K datasets confirm its effectiveness in diverse scenarios.

Despite these achievements, limitations remain under extreme weather conditions and severe occlusion. Future work should explore cross-modal fusion for all-weather capability, adaptive computation mechanisms, self-supervised learning approaches, and hardware-aware optimizations to further enhance MSCDNet's applicability in resource-constrained environments, where detection reliability directly affects its value in civilian fields such as personnel search and rescue and traffic management.

Author Contributions

Conceptualization, K.W. and G.H.; methodology, K.W.; software, K.W.; validation, G.H., X.L.; formal analysis, K.W., X.L.; investigation, K.W., X.L.; resources, G.H.; data curation, G.H.; writing—original draft preparation, K.W.; writing—review and editing, K.W., G.H., X.L.; visualization, K.W.; supervision, G.H.; project administration, G.H.; funding acquisition, G.H. All authors have read and agreed to the published version of the manuscript.

Funding

This research received no external funding.

Data Availability Statement

The data presented in this study are available on request from the corresponding author.

Conflicts of Interest

The authors declare no conflicts of interest.

Statement

The current research is limited to lightweight computer vision algorithms for object detection. Its main value is to promote the application of computer vision technology in resource-constrained environments, which benefits many civil fields such as disaster relief and traffic safety, and it does not pose a threat to public health or national security. The authors acknowledge the dual-use potential of research involving target detection technology and confirm that all necessary precautions have been taken to prevent potential misuse. As an ethical responsibility, the authors strictly adhere to relevant national and international laws on dual-use research of concern (DURC). The authors advocate for responsible deployment, ethical considerations, regulatory compliance, and transparent reporting to mitigate misuse risks and foster beneficial outcomes.

References

- Jia, L.; Pang, W. Overview of Battlefield Debris Data Fusion Technology for Situation Awareness. In Proceedings of the 2024 Asia-Pacific Conference on Software Engineering, Social Network Analysis and Intelligent Computing (SSAIC); 2024; pp. 474–478.

- Dehghan, M.; Khosravian, E. A Review of Cognitive UAVs: AI-Driven Situation Awareness for Enhanced Operations. 2024, 2, 54–65.

- Tiwari, K.; Arora, M.; Singh, D. An assessment of independent component analysis for detection of military targets from hyperspectral images. Int. J. Appl. Earth Obs. Geoinformation 2011, 13, 730–740.

- Palm, H.C.; Ajer, H.; Haavardsholm, T.V. Detection of military objects in LADAR images. 2008.

- Deveci, M.; Kuvvetli, Y.; Akyurt, İ.Z. Survey on military operations of fuzzy set theory and its applications. J. Nav. Sci. Eng. 2020, 16, 117–141.

- Riedl, J.L. CCD Sensor Array and Microprocessor Application to Military Missile Tracking. In Modern Utilization of Infrared Technology II; SPIE, 1976; Volume 95, pp. 148–154.

- Schaber, G.G. SAR Studies in the Yuma Desert, Arizona Sand Penetration, Geology, and the Detection of Military Ordnance Debris. Remote Sens. Environ. 1999, 67, 320–347.

- Lv, J.; Zhu, D.; Geng, Z.; Han, S.; Wang, Y.; Yang, W.; Ye, Z.; Zhou, T. Recognition of Deformation Military Targets in the Complex Scenes via MiniSAR Submeter Images With FASAR-Net. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1–19.

- Tiwari, K.C.; Arora, M.K.; Singh, D.P.; Yadav, D.S. Military target detection using spectrally modeled algorithms and independent component analysis. Opt. Eng. 2013, 52, 026402.

- Iandola, F.N.; Han, S.; Moskewicz, M.W.; Ashraf, K.; Dally, W.J.; Keutzer, K. SqueezeNet: AlexNet-level accuracy with 50x fewer parameters and <0.5 MB model size. arXiv 2016, arXiv:1602.07360.

- Girshick, R. Fast R-CNN. In Proceedings of the IEEE International Conference on Computer Vision; 2015; pp. 1440–1448.

- Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

- He, K.; Gkioxari, G.; Dollár, P.; Girshick, R. Mask R-CNN. In Proceedings of the IEEE International Conference on Computer Vision; 2017; pp. 2961–2969.

- Liu, W.; Anguelov, D.; Erhan, D.; Szegedy, C.; Reed, S.; Fu, C.Y.; Berg, A.C. SSD: Single shot multibox detector. In Proceedings of the Computer Vision–ECCV 2016: 14th European Conference, Amsterdam, The Netherlands, 11–14 October 2016; Proceedings, Part I 14. Springer International Publishing, 2016; pp. 21–37.

- Hussain, M. YOLO-v1 to YOLO-v8, the Rise of YOLO and Its Complementary Nature toward Digital Manufacturing and Industrial Defect Detection. Machines 2023, 11, 677.

- Sun, Y.; Liu, Z.; Todorovic, S.; Li, J. Adaptive boosting for SAR automatic target recognition. IEEE Trans. Aerosp. Electron. Syst. 2007, 43, 112–125.

- Zhang, L.; Shi, Z.; Wu, J. A Hierarchical Oil Tank Detector With Deep Surrounding Features for High-Resolution Optical Satellite Imagery. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2015, 8, 4895–4909.

- Zhang, L.; Chu, R.; Xiang, S.; Liao, S.; Li, S.Z. Face detection based on multi-block LBP representation. In Proceedings of the Advances in Biometrics: International Conference, ICB 2007, Seoul, Korea, 27–29 August 2007; Proceedings, Part I 14. Springer: Berlin/Heidelberg, 2007; pp. 11–18.

- Pei, J.; Huang, Y.; Sun, Z.; Zhang, Y.; Yang, J.; Yeo, T.-S. Multiview Synthetic Aperture Radar Automatic Target Recognition Optimization: Modeling and Implementation. IEEE Trans. Geosci. Remote Sens. 2018, 56, 6425–6439.

- Che, J.; Fang, L.; Zhong, Z.; Su, X.; Ma, Q.; Yu, G. A survey of automatic target recognition technology based on multi-source data fusion. IET Conf. Proc. 2025, 2024, 1592–1599.

- Li, Y.; Luo, Y.; Zheng, Y.; Liu, G.; Gong, J. Research on Target Image Classification in Low-Light Night Vision. Entropy 2024, 26, 882.

- Salmon, P.M.; Lenné, M.G.; Triggs, T.; Goode, N.; Cornelissen, M.; Demczuk, V. The effects of motion on in-vehicle touch screen system operation: A battle management system case study. Transp. Res. Part F: Traffic Psychol. Behav. 2011, 14, 494–503.

- Bajracharya, M.; Moghaddam, B.; Howard, A.; Brennan, S.; Matthies, L.H. A Fast Stereo-based System for Detecting and Tracking Pedestrians from a Moving Vehicle. Int. J. Robot. Res. 2009, 28, 1466–1485.

- Chaves, S.M. Using Kalman filtering to improve a low-cost GPS-based collision warning system for vehicle convoys. 2010.

- Boult, T.; Micheals, R.; Gao, X.; Eckmann, M. Into the woods: visual surveillance of noncooperative and camouflaged targets in complex outdoor settings. Proc. IEEE 2001, 89, 1382–1402.

- Zhao, Q. Aboveground Storage Tank Detection Using Faster R-CNN and High-Resolution Aerial Imagery. Master's thesis, Duke University, 2021.

- Peng, S. Multi-object extraction technology for complex background based on faster regions-CNN algorithm in the context of artificial intelligence. Serv. Oriented Comput. Appl. 2024, 19, 15–27.

- Naz, P.; Hengy, S.; Hamery, P. Soldier detection using unattended acoustic and seismic sensors. In Ground/Air Multisensor Interoperability, Integration, and Networking for Persistent ISR III; SPIE, 2012; Volume 8389, pp. 183–194.

- Xu, D.; Wu, Y. Improved YOLO-V3 with DenseNet for Multi-Scale Remote Sensing Target Detection. Sensors 2020, 20, 4276.

- Wang, H.; Qian, H.; Feng, S.; Wang, W. L-SSD: lightweight SSD target detection based on depth-separable convolution. J. Real-Time Image Process. 2024, 21, 1–15.

- Wang, S.; Du, Y.; Zhao, S.; Gan, L. Multi-Scale Infrared Military Target Detection Based on 3X-FPN Feature Fusion Network. IEEE Access 2023, 11, 141585–141597.

- Liu, J.; Jia, R.; Li, W.; Ma, F.; Abdullah, H.M.; Ma, H.; Mohamed, M.A. High precision detection algorithm based on improved RetinaNet for defect recognition of transmission lines. Energy Rep. 2020, 6, 2430–2440.

- Pushkarenko, Y.; Zaslavskyi, V. Research on the state of areas in Ukraine affected by military actions based on remote sensing data and deep learning architectures. Radioelectron. Comput. Syst. 2024, 2024, 5–18.

- Li, T.; Wang, H.; Li, G.; Liu, S.; Tang, L. SwinF: Swin Transformer with feature fusion in target detection. J. Phys. Conf. Ser. 2022, 2284, 012027.

- Zhuang, X.; Li, D.; Wang, Y.; Li, K. Military target detection method based on EfficientDet and Generative Adversarial Network. Eng. Appl. Artif. Intell. 2024, 132.

- Sun, Y.; Wang, J.; You, Y.; Yu, Z.; Bian, S.; Wang, E.; Wu, W. YOLO-E: a lightweight object detection algorithm for military targets. Signal Image Video Process. 2025, 19, 1–12.

- Jani, M.; Fayyad, J.; Al-Younes, Y.; Najjaran, H. Model compression methods for YOLOv5: A review. arXiv 2023, arXiv:2307.11904.

- Zhang, W.; Jiao, L.; Liu, X.; Liu, J. Multi-scale feature fusion network for object detection in VHR optical remote sensing images. In IGARSS 2019-2019 IEEE International Geoscience and Remote Sensing Symposium; IEEE, 2019; pp. 330–333.

- Fan, L.; Wang, H.; Yang, Q.; Chen, X.; Deng, B.; Zeng, Y. Fast Detection and Reconstruction of Tank Barrels Based on Component Prior and Deep Neural Network in the Terahertz Regime. IEEE Trans. Geosci. Remote Sens. 2022, 60, 1–17.

- Ma, C.; Zhang, Y.; Guo, J.; Hu, Y.; Geng, X.; Li, F.; Lei, B.; Ding, C. End-to-End Method With Transformer for 3-D Detection of Oil Tank From Single SAR Image. IEEE Trans. Geosci. Remote Sens. 2021, 60, 1–19.

- Song, Z.; Kang, X.; Wei, X.; Dian, R.; Liu, J.; Li, S. Multi-granularity Context Perception Network for Open Set Recognition of Camouflaged Objects. IEEE Trans. Multimedia 2024, PP, 1–14.

- Naeem, W.; Sutton, R.; Xu, T. An integrated multi-sensor data fusion algorithm and autopilot implementation in an uninhabited surface craft. Ocean Eng. 2012, 39, 43–52.

- Lv, J.; Zhu, D.; Geng, Z.; Han, S.; Wang, Y.; Yang, W.; Ye, Z.; Zhou, T. Recognition of Deformation Military Targets in the Complex Scenes via MiniSAR Submeter Images With FASAR-Net. IEEE Trans. Geosci. Remote Sens. 2023, 61, 1–19.

- Wu, B.; Zhou, J. Video-Based Martial Arts Combat Action Recognition and Position Detection Using Deep Learning. IEEE Access 2024, 12, 161357–161374.

- Choudhary, S. Real time pixelated camouflage texture generation. Doctoral dissertation, School of Computer Science, UPES, Dehradun, 2023.

- Barnawi, A.; Budhiraja, I.; Kumar, K.; Kumar, N.; Alzahrani, B.; Almansour, A.; Noor, A. A comprehensive review on landmine detection using deep learning techniques in 5G environment: open issues and challenges. Neural Comput. Appl. 2022, 34, 21657–21676.

- Anzer, G.; Bauer, P.; Brefeld, U.; Faßmeyer, D. Detection of tactical patterns using semi-supervised graph neural networks. In 16th MIT Sloan Sports Analytics Conference; 2022; pp. 1–15.

- Wang, Q.; Fu, M.; Wang, J.; Sun, L.; Huang, R.; Li, X.; Jiang, Z.; Huang, Y.; Jiang, C. Free-walking: Pedestrian inertial navigation based on dual foot-mounted IMU. Def. Technol. 2023, 33, 573–587.

- Jocher, G. YOLO11. 2024. Available online: https://github.com/ultralytics/ultralytics/tree/main.

- Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-end object detection with transformers. In Computer Vision – ECCV 2020; Springer International Publishing: Cham, Switzerland, 2020; pp. 213–229.

- Feng, C.; Zhong, Y.; Gao, Y.; Scott, M.R.; Huang, W. TOOD: Task-aligned one-stage object detection. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 3490–3499.

- Lyu, C.; Zhang, W.; Huang, H.; Zhou, Y.; Wang, Y.; Liu, Y.; Zhang, S.; Chen, K. RTMDet: An empirical study of designing real-time object detectors. arXiv 2022, arXiv:2212.07784.

- Peng, Y.; Li, H.; Wu, P.; Zhang, Y.; Sun, X.; Wu, F. D-FINE: Redefine regression task in DETRs as fine-grained distribution refinement. arXiv 2024, arXiv:2410.13842.

- Huang, S.; Lu, Z.; Cun, X.; Yu, Y.; Zhou, X.; Shen, X. DEIM: DETR with Improved Matching for Fast Convergence. arXiv 2024, arXiv:2412.04234.

- Jocher, G.; Stoken, A.; Borovec, J.; Changyu, L.; Hogan, A.; Diaconu, L.; Dave, P. ultralytics/yolov5: v3.0. Zenodo, 2020.

- Jocher, G. YOLOv8. 2023. Available online: https://github.com/ultralytics/ultralytics/tree/main.

- Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. YOLOv10: Real-time end-to-end object detection. arXiv 2024, arXiv:2405.14458.

- Ma, X.; Dai, X.; Bai, Y.; Wang, Y.; Fu, Y. Rewrite the stars. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2024; pp. 5694–5703.

- Yu, W.; Zhou, P.; Yan, S.; Wang, X. InceptionNeXt: When Inception meets ConvNeXt. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2024; pp. 5672–5683.

- Feng, Y.; Huang, J.; Du, S.; Ying, S.; Yong, J.-H.; Li, Y.; Ding, G.; Ji, R.; Gao, Y. Hyper-YOLO: When Visual Object Detection Meets Hypergraph Computation. IEEE Trans. Pattern Anal. Mach. Intell. 2024, 1–14.

- Li, H.; Li, J.; Wei, H.; Liu, Z.; Zhan, Z.; Ren, Q. Slim-neck by GSConv: A better design paradigm of detector architectures for autonomous vehicles. arXiv 2022, arXiv:2206.02424.

- Chen, J.; Mai, H.; Luo, L.; Chen, X.; Wu, K. Effective feature fusion network in BIFPN for small object detection. In 2021 IEEE International Conference on Image Processing (ICIP); IEEE, 2021; pp. 699–703.

- Yang, Z.; Guan, Q.; Zhao, K.; Yang, J.; Xu, X.; Long, H.; Tang, Y. Multi-branch Auxiliary Fusion YOLO with Re-parameterization Heterogeneous Convolutional for Accurate Object Detection. In Chinese Conference on Pattern Recognition and Computer Vision (PRCV); Springer Nature Singapore: Singapore, 2024; pp. 492–505.

- Du, D.; Zhu, P.; Wen, L.; Bian, X.; Lin, H.; Hu, Q.; Zhang, L. VisDrone-DET2019: The vision meets drone object detection in image challenge results. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops; 2019; pp. 0–0.

- Yu, F.; Chen, H.; Wang, X.; Xian, W.; Chen, Y.; Liu, F.; Darrell, T. BDD100K: A diverse driving dataset for heterogeneous multitask learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2020; pp. 2636–2645.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).