Submitted: 05 February 2026

Posted: 06 February 2026


Abstract

Keywords:

1. Introduction

2. Background and Related Work

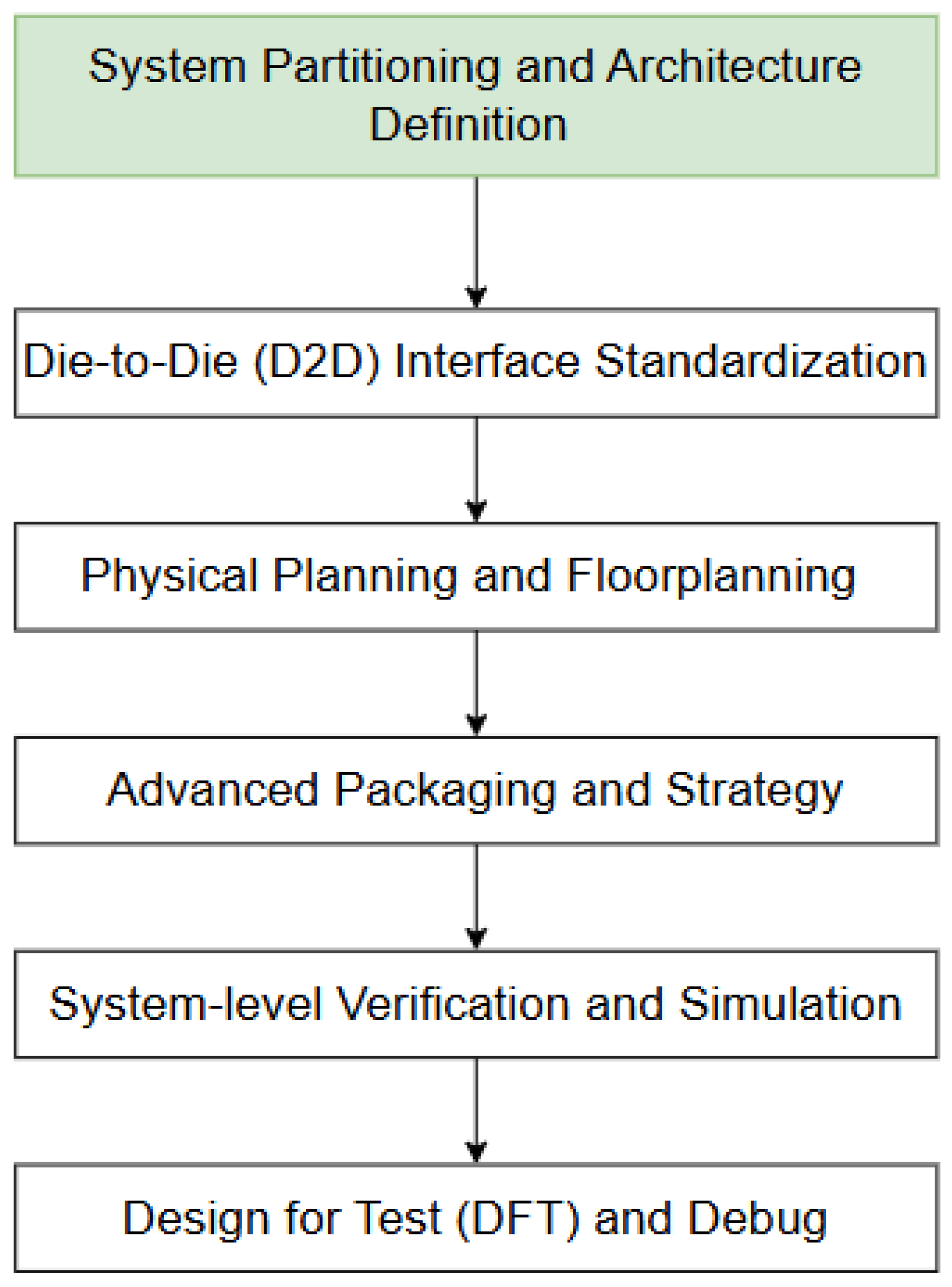

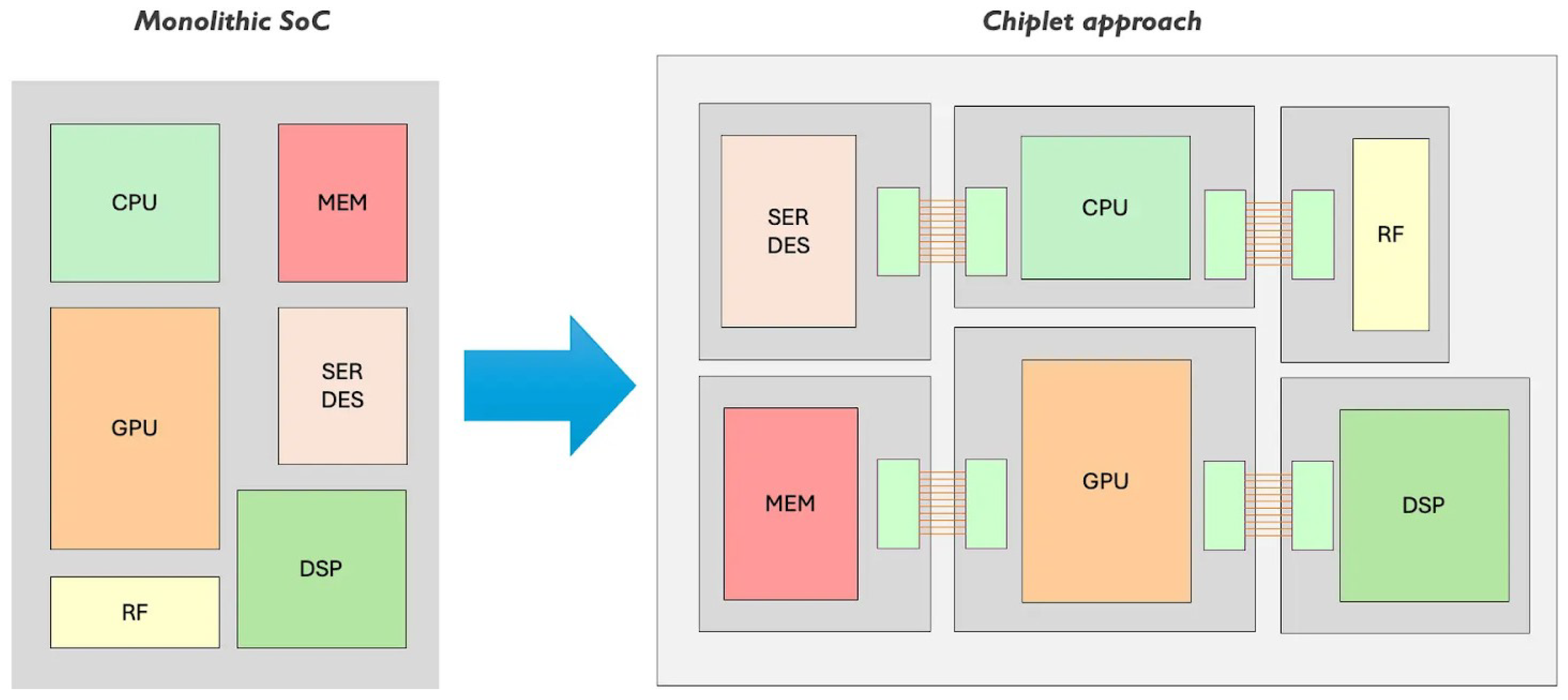

2.1. Chiplet-Based System Architecture

2.2. Communication Challenges in Multi-Chiplet Systems

2.3. Task Mapping and Workload Partitioning

2.4. Interconnect Network Design and Dataflow Mapping

2.5. Problem Statement and Research Gaps

3. Experimental Methodology

3.1. System Model and Simulation Framework

3.2. Benchmark Workloads

3.3. Performance Metrics and Evaluation Methodology

- Inter-chiplet Communication Latency: Average latency of data crossing chiplet boundaries, reported across the full range of partitioning quality from worst case to optimal

- Inter-chiplet Traffic Percentage: Percentage of total memory and computation traffic that must traverse inter-chiplet links

- Network Congestion: Utilization percentage of inter-chiplet links, where utilization above 70% is treated as unsustainable operation

- System Throughput: Images per second for DNN inference (images/s), scaled relative to throughput on a single high-performance monolithic chip

- Energy Efficiency: Tera-operations per Watt (TOPS/W) including both computation and communication overhead

- Network Communication Overhead: Percentage of total execution time consumed by inter-chiplet communication
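
The traffic, congestion, and overhead metrics above can be illustrated with a minimal sketch. It assumes a workload given as `(source_task, destination_task, bytes)` edges, a `mapping` from task to chiplet, and one link per chiplet pair with a fixed byte budget per measurement window. These names and the single-link-per-pair model are illustrative assumptions, not the simulation framework used in this study.

```python
from collections import defaultdict

def traffic_metrics(edges, mapping, link_capacity_bytes):
    """Return (inter-chiplet traffic %, {link: utilization %}).

    edges: iterable of (src_task, dst_task, nbytes)  -- hypothetical workload format
    mapping: dict task -> chiplet id                  -- hypothetical placement
    link_capacity_bytes: bytes one link can carry per window (assumed uniform)
    """
    total = 0
    cross = 0
    per_link = defaultdict(int)
    for src, dst, nbytes in edges:
        total += nbytes
        a, b = mapping[src], mapping[dst]
        if a != b:  # traffic leaves the chiplet
            cross += nbytes
            per_link[tuple(sorted((a, b)))] += nbytes
    traffic_pct = 100.0 * cross / total if total else 0.0
    utilization = {link: 100.0 * load / link_capacity_bytes
                   for link, load in per_link.items()}
    return traffic_pct, utilization

def communication_overhead_pct(comm_time, total_time):
    """Share of execution time spent on inter-chiplet communication."""
    return 100.0 * comm_time / total_time

edges = [("a", "b", 100), ("b", "c", 300), ("c", "d", 100)]
good = {"a": 0, "b": 0, "c": 0, "d": 1}   # only the c -> d edge crosses
poor = {"a": 0, "b": 1, "c": 0, "d": 1}   # every edge crosses

traffic_pct_good, util_good = traffic_metrics(edges, good, link_capacity_bytes=200)
traffic_pct_poor, util_poor = traffic_metrics(edges, poor, link_capacity_bytes=200)
print(traffic_pct_good, util_good)
print(traffic_pct_poor, util_poor)
```

In this toy case the second placement pushes the single link to 250% of its budget, the kind of over-subscription that the 70% congestion threshold is meant to flag.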

4. Experimental Results and Characterization

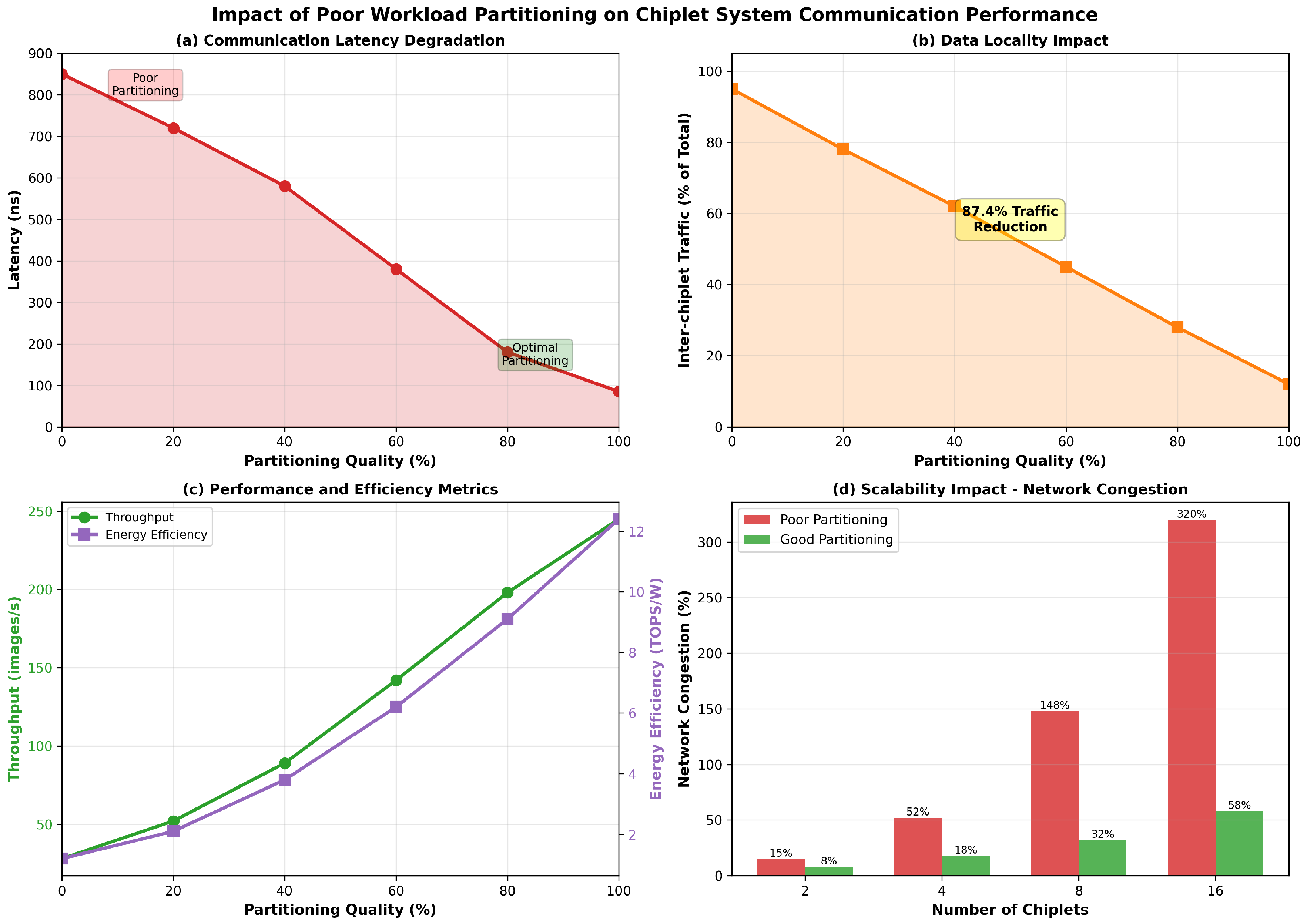

4.1. Communication Latency Degradation

- At 0% partitioning quality (completely random task allocation), inter-chiplet communication latency reaches 850 nanoseconds, representing a 10× increase over optimal placement (85 ns).

- Even modest partitioning improvements (20% quality) reduce latency to 720 ns, a 15% improvement over the worst case.

- The relationship is highly non-linear in relative terms: the first 20% of optimization effort (0% → 20%) reduces latency by 15% (850 → 720 ns), while the final 20% (80% → 100%) reduces it by more than 50% (185 → 85 ns).

- The returns are uneven rather than diminishing: optimal partitioning is necessary to reach the lowest latency, yet even conservative optimizations yield substantial latency reductions.
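
The step-by-step reductions can be checked directly from the latency column of Appendix A.1. The short script below is only an arithmetic check on the reported values; the helper name `step_reductions` is introduced here for illustration.

```python
quality = [0, 20, 40, 60, 80, 100]
latency_ns = [850, 720, 580, 380, 185, 85]  # Appendix A.1

def step_reductions(values):
    """Return (absolute drop, drop as % of the step's starting value) per step."""
    return [(prev - cur, 100.0 * (prev - cur) / prev)
            for prev, cur in zip(values, values[1:])]

steps = step_reductions(latency_ns)
for q0, q1, (drop_ns, rel_pct) in zip(quality, quality[1:], steps):
    print(f"{q0:>3}% -> {q1:>3}%: -{drop_ns} ns ({rel_pct:.1f}% of the starting latency)")

print(f"worst / best = {latency_ns[0] / latency_ns[-1]:.0f}x")
```

The relative reduction rises with every step (about 15%, 19%, 34%, 51%, 54%), while the largest absolute drops (200 ns and 195 ns) fall in the 40% to 80% range.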

4.2. Data Locality and Inter-Chiplet Traffic Reduction

- Poor partitioning (0% quality) forces 95% of all data movement to traverse inter-chiplet links.

- Optimal partitioning reduces inter-chiplet traffic to merely 12% of total traffic.

- This represents an 87.4% reduction in inter-chiplet traffic, reflecting the critical importance of data locality.

- The traffic reduction is monotonic and close to linear (16 to 17 percentage points per 20% step in quality), indicating that partitioning optimizations consistently improve data locality.
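
As a worked check on these figures, write $T_{0}$ and $T_{100}$ for the inter-chiplet traffic share at 0% and 100% partitioning quality (notation introduced here, not taken from the original text):

$$
\frac{T_{0} - T_{100}}{T_{0}} = \frac{95 - 12}{95} \approx 0.874,
\qquad
\frac{T_{0} - T_{100}}{100 - 0} = \frac{83}{100} = 0.83 \ \text{percentage points per quality point}
$$

The first ratio is the 87.4% relative reduction; the second is the average slope, consistent with the 16 to 17 point drop per 20% step in the Appendix A.1 data.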

4.3. System Performance and Energy Efficiency

- System throughput increases from 28 images/second (poor partitioning) to 245 images/second (optimal partitioning), an 8.75× improvement.

- Energy efficiency improves from 1.2 TOPS/W to 12.4 TOPS/W, a 10.3× improvement.

- Both metrics improve monotonically with partitioning quality, though with some non-linearity.

- The relationship suggests that even moderately optimized partitioning can capture substantial performance gains: moving from 0% to 60% quality improves throughput by about 5.1× (28 → 142 images/s) and energy efficiency by 5.17× (1.2 → 6.2 TOPS/W).
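
The improvement factors can be recomputed from the throughput and energy-efficiency columns of Appendix A.1. The snippet below is a plain arithmetic check; `gain_over_baseline` is a helper name introduced for illustration.

```python
quality = [0, 20, 40, 60, 80, 100]
throughput = [28, 52, 89, 142, 198, 245]       # images/s, Appendix A.1
efficiency = [1.2, 2.1, 3.8, 6.2, 9.1, 12.4]   # TOPS/W, Appendix A.1

def gain_over_baseline(values):
    """Ratio of each value to the 0%-quality baseline."""
    base = values[0]
    return [v / base for v in values]

tp_gain = gain_over_baseline(throughput)
ee_gain = gain_over_baseline(efficiency)
for q, t, e in zip(quality, tp_gain, ee_gain):
    print(f"quality {q:>3}%: throughput x{t:.2f}, energy efficiency x{e:.2f}")
```

In the appendix data, the 142 images/s and 6.2 TOPS/W values sit in the 60% quality row, where the gains over the baseline are roughly 5.07× and 5.17×.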

4.4. Network Congestion and System Scalability

- Poor partitioning creates severe congestion that scales superlinearly with chiplet count.

- For a 2-chiplet system, poor partitioning results in 15% link utilization; for 16 chiplets, this escalates to 320% (indicating traffic exceeding available bandwidth, requiring queuing and retransmissions).

- Optimized partitioning maintains congestion below 60% even for 16 chiplets.

- The congestion difference grows dramatically with scale: for 16 chiplets, poor partitioning exhibits 320% utilization, while optimized partitioning maintains only 58%, a 5.5× difference.
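
One way to quantify "superlinear" is an end-to-end scaling exponent: if utilization grows roughly as $N^{k}$ with chiplet count $N$, then $k > 1$ means superlinear growth. The sketch below estimates $k$ from the first and last rows of the link-utilization data in Appendix A.1. The power-law form is an assumption made here for illustration, and the 70% threshold is the one stated in the metric definitions.

```python
import math

chiplets = [2, 4, 8, 16]
poor = [15, 52, 148, 320]   # link utilization (%), poor partitioning
good = [8, 18, 32, 58]      # link utilization (%), optimized partitioning

def scaling_exponent(counts, utilization):
    """End-to-end log-log slope k, assuming utilization ~ counts ** k."""
    return (math.log(utilization[-1] / utilization[0])
            / math.log(counts[-1] / counts[0]))

def first_count_over(counts, utilization, threshold=70):
    """Smallest chiplet count whose utilization exceeds the threshold, else None."""
    for n, u in zip(counts, utilization):
        if u > threshold:
            return n
    return None

k_poor = scaling_exponent(chiplets, poor)
k_good = scaling_exponent(chiplets, good)
print(f"poor: k = {k_poor:.2f}, first over 70% at {first_count_over(chiplets, poor)} chiplets")
print(f"good: k = {k_good:.2f}, first over 70% at {first_count_over(chiplets, good)} chiplets")
```

Under this simple fit the poorly partitioned case has an exponent of about 1.47, while the optimized case stays slightly below 1 and never crosses the 70% threshold in the measured range.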

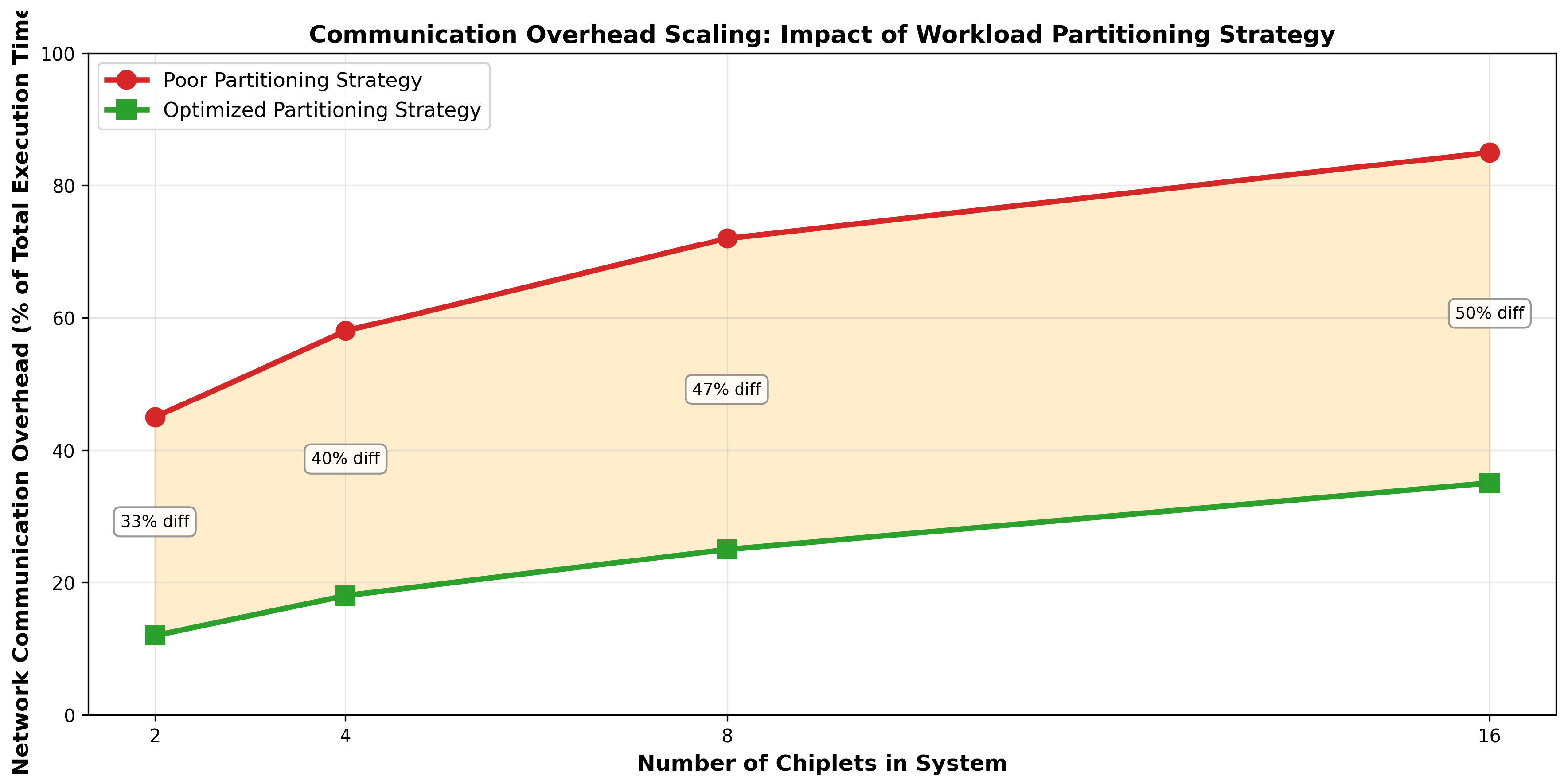

4.5. Communication Overhead Scaling and Execution Time Impact

- In poorly partitioned 2-chiplet systems, communication overhead consumes 45% of execution time.

- This escalates dramatically with scale: in poorly partitioned 16-chiplet systems, communication overhead reaches 85% of execution time.

- In contrast, optimized partitioning maintains communication overhead at 12% for 2-chiplet systems and 35% for 16-chiplet systems.

- The gap between poor and optimized partitioning grows with scale: a difference of 33 percentage points for 2 chiplets increases to 50 percentage points for 16 chiplets.
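
To see what these overhead fractions imply, an Amdahl-style bound is useful. Assume, as a simplification not stated in the source, that communication and computation do not overlap. If a fraction $f$ of execution time is communication, then removing all communication speeds execution up by at most

$$
S_{\max} = \frac{1}{1 - f}
$$

For the reported overheads:

$$
f = 0.85 \Rightarrow S_{\max} \approx 6.7, \qquad
f = 0.45 \Rightarrow S_{\max} \approx 1.8, \qquad
f = 0.35 \Rightarrow S_{\max} \approx 1.5, \qquad
f = 0.12 \Rightarrow S_{\max} \approx 1.1
$$

Under this assumption, a poorly partitioned 16-chiplet system leaves up to roughly 6.7× of performance locked in communication, compared with about 1.5× for the optimized case.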

5. Analysis and Discussion

5.1. Root Causes of Performance Degradation

- Excessive inter-chiplet traffic: Random task allocation distributes communicating tasks across chiplets with uniform probability, forcing 95% of data movement through inter-chiplet links. This overwhelming majority of traffic is concentrated on limited-bandwidth interconnects, causing immediate congestion.

- Communication latency amplification: As congestion accumulates, queuing delays compound the inherent latency of inter-chiplet communication. A single poorly placed task pair might incur only 85 ns of additional latency; millions of such pairs across thousands of tasks can create multiplicative delays.

- Memory system inefficiency: Chiplet systems implement cache coherence and memory consistency across multiple independent memory hierarchies. Poor partitioning breaks data locality, forcing coherence traffic and remote memory accesses that multiply the communication burden.

- Network deadlock and flow control: As congestion exceeds available bandwidth, network flow control mechanisms activate, introducing additional stalls and inefficiencies. Beyond certain congestion thresholds (typically 70-80%), networks enter pathological regimes where further load increases paradoxically reduce throughput.
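
Two of these mechanisms can be sketched with back-of-the-envelope models. The first assumes each task is placed independently and uniformly at random, so a communicating pair is split with probability $1 - 1/N$ for $N$ chiplets. The second uses a textbook M/M/1 queue as a stand-in for a congested link. Both models are illustrative assumptions introduced here, not the simulator or analysis used in this study.

```python
def cross_chiplet_probability(num_chiplets):
    """P(two tasks land on different chiplets) under independent uniform placement."""
    return 1.0 - 1.0 / num_chiplets

def mm1_delay_factor(utilization):
    """Mean time in an M/M/1 system divided by the unloaded service time.

    utilization is the offered load as a fraction of link capacity.
    """
    if not 0.0 <= utilization < 1.0:
        raise ValueError("M/M/1 is only stable for utilization in [0, 1)")
    return 1.0 / (1.0 - utilization)

for n in (2, 4, 8, 16):
    print(f"{n:>2} chiplets: {100 * cross_chiplet_probability(n):.2f}% of pairs split")

for rho in (0.3, 0.5, 0.7, 0.8, 0.9):
    print(f"utilization {rho:.0%}: delay x{mm1_delay_factor(rho):.1f}")
```

For 16 chiplets the placement model gives 93.75% of pairs split, which is in the same range as the 95% figure above, although this model is not claimed to be how that figure was obtained. The queueing model shows delay growing from about 3.3× at 70% utilization to 10× at 90%, one simple reading of why the 70-80% range marks a regime change.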

5.2. Comparison with Prior Work and Validation

5.3. Implications for System Design

- Partitioning quality is critical: The gap between poor and optimized partitioning amounts to an 8.75× difference in throughput. For systems where performance is commoditized (as in many data center deployments), this difference translates directly into capacity and cost.

- Scaling is constrained by partitioning quality: Superlinear congestion scaling shows that naive partitioning strategies become unworkable beyond 8 chiplets. Systems aspiring to 16+ chiplets must employ sophisticated communication-aware partitioning or face fundamental performance walls.

- Communication overhead dominates in poorly partitioned systems: With 16 chiplets, it reaches 85% of execution time. This implies that, for large systems, partitioning optimization is more important than improvements in processor frequency, cache size, or memory bandwidth.

- Optimization effort is well invested: The non-linear improvement with partitioning quality suggests that even heuristic solutions achieving 60-80% optimization quality capture most of the benefit. This makes practical optimization algorithms feasible for system deployment.
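
To make "heuristic solutions" concrete, the sketch below shows one simple communication-aware heuristic: greedy region growing, which fills one chiplet at a time with the unplaced task most strongly connected to the tasks already on it. This is a hypothetical illustration, not the partitioner evaluated in this study. The edge format, function names, and fixed per-chiplet `capacity` are assumptions, and only tasks that appear in at least one edge are placed.

```python
from collections import defaultdict

def greedy_grow_partition(edges, num_chiplets, capacity):
    """Greedy region growing: fill chiplets one by one with tightly coupled tasks."""
    weight = defaultdict(dict)
    for a, b, w in edges:
        weight[a][b] = weight[a].get(b, 0) + w
        weight[b][a] = weight[b].get(a, 0) + w
    unplaced = set(weight)
    if len(unplaced) > num_chiplets * capacity:
        raise ValueError("not enough chiplet capacity for all tasks")
    mapping = {}
    for chiplet in range(num_chiplets):
        members = []
        while unplaced and len(members) < capacity:
            # Prefer the strongest attraction to tasks already on this chiplet;
            # break ties by total traffic, then by name for determinism.
            pick = max(
                sorted(unplaced, key=str),
                key=lambda t: (sum(weight[t].get(m, 0) for m in members),
                               sum(weight[t].values())),
            )
            mapping[pick] = chiplet
            members.append(pick)
            unplaced.remove(pick)
    return mapping

def cut_traffic_pct(edges, mapping):
    """Percentage of traffic whose endpoints sit on different chiplets."""
    total = sum(w for _, _, w in edges)
    cross = sum(w for a, b, w in edges if mapping[a] != mapping[b])
    return 100.0 * cross / total

# Two tightly coupled groups of four tasks joined by one light edge.
group_a = [f"a{i}" for i in range(4)]
group_b = [f"b{i}" for i in range(4)]
edges = [(g[i], g[j], 10) for g in (group_a, group_b)
         for i in range(4) for j in range(i + 1, 4)]
edges.append(("a0", "b0", 1))

greedy = greedy_grow_partition(edges, num_chiplets=2, capacity=4)
round_robin = {t: i % 2 for i, t in enumerate(group_a + group_b)}
print(f"greedy:      {cut_traffic_pct(edges, greedy):.1f}% inter-chiplet traffic")
print(f"round-robin: {cut_traffic_pct(edges, round_robin):.1f}% inter-chiplet traffic")
```

On this toy graph the greedy placement keeps each group on its own chiplet, so only the light edge crosses, whereas the round-robin placement splits both groups. The heuristic fills chiplets to capacity in order, so it does not balance load when spare capacity exists; a practical partitioner would need to account for that.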

5.4. Limitations and Future Work

6. Related Work and Design Alternatives

6.1. Optimization and Scheduling Approaches

6.2. Communication Architecture Innovations

6.3. Co-Optimization Frameworks

7. Conclusions

- Communication latency scales with partitioning quality: Poor partitioning incurs 10× higher inter-chiplet latency (850 ns vs. 85 ns) compared to optimal partitioning, with highly non-linear improvements.

- Data locality is critical: Optimized partitioning reduces inter-chiplet traffic from 95% to 12%, an 87.4% reduction that reflects the fundamental importance of task co-location.

- Performance improvements are substantial: System throughput improves 8.75× (28 → 245 images/s), and energy efficiency improves 10.3× (1.2 → 12.4 TOPS/W) with optimal partitioning.

- Scaling is severely constrained by partitioning quality: Network congestion scales superlinearly with system size in poorly partitioned systems, reaching 320% utilization in 16-chiplet systems while remaining below 60% in optimized designs.

- Communication overhead dominates poorly partitioned systems: At 16 chiplets, poor partitioning creates 85% communication overhead versus 35% with optimized partitioning, rendering computation nearly irrelevant to performance.

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| SiP | System-in-Package |

Appendix A

Appendix A.1. Experimental Data Summary

| Partitioning Quality (%) | Inter-chiplet Latency (ns) | Inter-chiplet Traffic (%) |
|---|---|---|
| 0 | 850 | 95 |
| 20 | 720 | 78 |
| 40 | 580 | 62 |
| 60 | 380 | 45 |
| 80 | 185 | 28 |
| 100 | 85 | 12 |

| Partitioning Quality (%) | Throughput (images/s) | Energy Efficiency (TOPS/W) |
|---|---|---|
| 0 | 28 | 1.2 |
| 20 | 52 | 2.1 |
| 40 | 89 | 3.8 |
| 60 | 142 | 6.2 |
| 80 | 198 | 9.1 |
| 100 | 245 | 12.4 |

| Chiplet Count | Link Utilization, Poor Partitioning (%) | Link Utilization, Optimized Partitioning (%) |
|---|---|---|
| 2 | 15 | 8 |
| 4 | 52 | 18 |
| 8 | 148 | 32 |
| 16 | 320 | 58 |


Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).