Submitted: 30 January 2026

Posted: 02 February 2026

Abstract

Keywords:

Introduction

Boltzmann Statistical Entropy

‘If we apply these to the second law, the quantity that we usually refer to as entropy can be identified with the probability of a respective state. ... The system of bodies we are talking about is in some state at the beginning of time; through the interaction of the bodies this state changes; according to the second law this change must always occur in such a way that the total entropy of all the bodies increases; according to our present interpretation this means nothing other than the probability of the overall state of all these bodies becomes ever greater; the system of bodies always passes from some less probable to some more probable state.’
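Boltzmann's identification of entropy with the probability of a state can be made concrete with a standard toy model. The model is not part of the quoted text. Take N distinguishable particles, each sitting in either the left or the right half of a container, and let the macrostate be the number n of particles in the left half. That macrostate comprises W(n) = C(N, n) microstates. If all 2^N microstates are assumed equally probable, the probability of the macrostate is W(n)/2^N, and S = k ln W with k set to 1. The function names below are illustrative only.

```python
import math

def macrostate_weights(n_particles: int) -> list[int]:
    """W(n): number of microstates with n of the particles in the left half."""
    return [math.comb(n_particles, n) for n in range(n_particles + 1)]

def boltzmann_entropy(weight: int, k: float = 1.0) -> float:
    """S = k ln W; with k = 1 the entropy is in units of Boltzmann's constant."""
    return k * math.log(weight)

N = 100                      # number of particles (illustrative choice)
weights = macrostate_weights(N)
total = 2 ** N               # all microstates, assumed equally probable

for n in (0, 10, 25, 50):
    p = weights[n] / total   # probability of the macrostate
    print(f"n={n:3d}  W={weights[n]:.3e}  P={p:.3e}  S/k={boltzmann_entropy(weights[n]):.2f}")

# The most probable macrostate is also the one of maximal entropy.
most_probable = max(range(N + 1), key=lambda n: weights[n])
print("most probable macrostate: n =", most_probable)
```

The evenly divided macrostate n = N/2 is both the most probable and the one of greatest entropy. This is the sense in which, in the quotation, the system "passes from some less probable to some more probable state".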

Gibbs Statistical Entropy

‘The merit of having systematized this system, described it in a sizable book and given it a characteristic name belongs to one of the greatest of American scientists, perhaps the greatest as regards pure abstract thought and theoretical research, namely Willard Gibbs, until his recent death professor at Yale College. He called this science statistical mechanics.’

‘In every theoretical study of transport processes, it is necessary to clearly understand where irreversibility is introduced. If it is not introduced, the theory is incorrect. An approach that preserves time-reversal symmetry inevitably leads to zero or infinite values for transport coefficients. If we do not see where irreversibility has been introduced, we do not understand what we are doing.’

Phase Space Mixing

‘Mixing systems formed the basis of the pioneering work on the foundations of statistical mechanics by Nikolai Sergeevich Krylov, a very talented student of V. A. Fock who died young. ... According to Krylov, “... the laws of statistics and thermodynamics exist because for statistical systems (which are mixing-type systems), the uniform law of distribution of initial microscopic states within the empirically determined region of phase space ΔΓ₀ is valid. ... In this work, the concept of ergodicity is not considered. We reject the acceptance of the ergodic hypothesis. We proceed from the notion of motions of the mixing type. … Such a mixing is due to the fact that in the n-dimensional configuration space, trajectories that are initially close diverge very quickly, so that their normal distance increases exponentially.”’

‘Krylov’s idea is expressed quite clearly. At the heart is the concept of mixing, which can be used to describe the physical process of relaxation — the transition of a system to a stationary state, regardless of its initial state. Since Gibbs, the idea of the need for mixing in statistical mechanics has been repeatedly proposed, but it is probably Krylov who first connected mixing with the local characteristic of motion in such systems — exponential instability.’

‘In physics, it has been common to think that in systems with a large number of degrees of freedom, such as systems of statistical mechanics, the transitive case and mixing are of primary importance, while systems with a small number of degrees of freedom exhibit regular behavior. Kolmogorov notes that this idea appears to be based on a predominant focus on linear systems and a small set of integrable classical problems, and that these ideas have limited significance. Kolmogorov’s key idea is that there is no gap between the two types of behavior, regular and complex (irregular): multidimensional systems can demonstrate regular motion, and systems with a small number of degrees of freedom can be chaotic.’
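Krylov's exponential instability and Kolmogorov's point that a system with few degrees of freedom can be chaotic can both be seen in Arnold's cat map. The cat map is a standard textbook example of a mixing map on a two-dimensional torus. The map and the parameter values below are our own choices for illustration and are not taken from the quoted sources. Two points that start 10^-10 apart separate roughly as λ^t, where λ = (3 + √5)/2 is the expanding eigenvalue of the map's matrix. This growth continues until the separation becomes comparable to the size of the torus.

```python
import math

def cat_map(x: float, y: float) -> tuple[float, float]:
    """Arnold's cat map on the unit torus: area-preserving and mixing."""
    return (2 * x + y) % 1.0, (x + y) % 1.0

def torus_distance(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Distance on the unit torus, using the shortest wrap-around displacement."""
    dx = (a[0] - b[0]) - round(a[0] - b[0])
    dy = (a[1] - b[1]) - round(a[1] - b[1])
    return math.hypot(dx, dy)

# Expanding eigenvalue of the matrix [[2, 1], [1, 1]] that defines the map.
lam = (3 + math.sqrt(5)) / 2
print(f"Lyapunov exponent ln(lambda) = {math.log(lam):.4f} per step")

p = (0.3, 0.2)
q = (0.3 + 1e-10, 0.2)       # a neighboring initial condition
d0 = torus_distance(p, q)
for step in range(1, 21):
    p, q = cat_map(*p), cat_map(*q)
    if step % 4 == 0:
        d = torus_distance(p, q)
        # If growth is exponential, d / (d0 * lam**step) stays roughly constant.
        print(f"step {step:2d}: distance {d:.3e}, d/(d0*lam^step) = {d / (d0 * lam**step):.3f}")
```

The printed ratio stays roughly constant, because it is fixed by the projection of the initial displacement onto the expanding direction. The distance therefore grows exponentially, at a rate of ln λ per step.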

From Gibbs Entropy to Subjective Entropy

‘We may imagine a great number of systems of the same nature, but differing in the configurations and velocities which they have at a given instant, and differing not merely infinitesimally, but it may be so as to embrace every conceivable combination of configuration and velocities. And here we may set the problem, not to follow a particular system through its succession of configurations, but to determine how the whole number of systems will be distributed among the various conceivable configurations and velocities at any required time, when the distribution has been given for some one time.’
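Gibbs's programme of following the distribution of an ensemble, rather than a single system, can be sketched with toy dynamics. In the hypothetical example below, an ensemble of 20,000 points starts inside one cell of a 10 × 10 partition of the unit torus. The ensemble is then evolved with Arnold's cat map, which is an illustrative choice and does not come from the source. The map preserves area, so the fine-grained Gibbs entropy of the ensemble does not change. Only the coarse-grained entropy, computed from cell occupations, grows towards its maximum of ln 100. In this sketch the increase enters through the coarse-graining, which bears on the question raised above of where irreversibility is introduced.

```python
import math
import random

def cat_map(x: float, y: float) -> tuple[float, float]:
    """Arnold's cat map on the unit torus (determinant 1, so area-preserving)."""
    return (2 * x + y) % 1.0, (x + y) % 1.0

def coarse_grained_entropy(points: list[tuple[float, float]], cells: int) -> float:
    """Gibbs-type entropy -sum p_i ln p_i (k = 1) of cell occupations on a cells x cells grid."""
    counts: dict[tuple[int, int], int] = {}
    for x, y in points:
        cell = (min(int(x * cells), cells - 1), min(int(y * cells), cells - 1))
        counts[cell] = counts.get(cell, 0) + 1
    n = len(points)
    # p * ln(1/p) equals -p * ln p and avoids printing -0.000 for a single occupied cell.
    return sum((c / n) * math.log(n / c) for c in counts.values())

K = 10                        # coarse-graining: 10 x 10 = 100 cells
rng = random.Random(0)        # fixed seed for reproducibility
# Ensemble uniformly filling the single cell [0, 0.1) x [0, 0.1).
ensemble = [(0.1 * rng.random(), 0.1 * rng.random()) for _ in range(20_000)]

history = []
for step in range(9):
    history.append(coarse_grained_entropy(ensemble, K))
    print(f"step {step}: coarse-grained S/k = {history[-1]:.3f} (maximum ln {K * K} = {math.log(K * K):.3f})")
    ensemble = [cat_map(x, y) for x, y in ensemble]
```

The map is invertible, so applying its inverse would carry the ensemble back into its initial cell. The rise of the coarse-grained entropy is therefore not a property of the microscopic dynamics alone.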

Carnap for Objective Entropy

‘I had some talks separately with John von Neumann, Wolfgang Pauli, and some specialists in statistical mechanics on some questions of theoretical physics with which I was concerned. I certainly learned very much from these conversations; but for my problems in the logical and methodological analysis of physics, I gained less help than I had hoped for. … My main object was not the physical concept, but the use of the abstract concept for the purposes of inductive logic. Nevertheless, I also examined the nature of the physical concept of entropy in its classical statistical form, as developed by Boltzmann and Gibbs, and I arrived at certain objections against the customary definitions, not from a factual-experimental, but from a logical point of view. It seemed to me that the customary way in which the statistical concept of entropy is defined or interpreted makes it, perhaps against the intention of the physicists, a purely logical instead of physical concept; if so, it can no longer be, as it was intended to be, a counterpart to the classical macro-concept of entropy introduced by Clausius, which is obviously a physical and not a logical concept. The same objection holds in my opinion against the recent view that entropy may be regarded as identical with the negative amount of information. I had expected that in the conversations with the physicists on these problems, we would reach, if not an agreement, then at least a clear mutual understanding. In this, however, we did not succeed, in spite of our serious efforts, chiefly, it seemed, because of great differences in point of view and in language.’

‘The concept of entropy in thermodynamics (S_th) had the same general character as the other concepts in the same field, e.g., temperature, heat, energy, pressure, etc. It served, just like these other concepts, for the quantitative characterization of some objective property of a state of a physical system, say, the gas g in the container in the laboratory at the time t.’

‘Dear Mr. Carnap! I have studied your manuscript a bit; however I must unfortunately report that I am quite opposed to the position you take. Rather, I would throughout take as physically most transparent what you call “Method II”. In this connection I am not at all influenced by recent information theory (…) Since I am indeed concerned that the confusion in the area of foundations of statistical mechanics not grow further (and I fear very much that a publication of your work in this present form would have this effect).’

Back to Boltzmann

‘it is also clear that Gibbs’s measure of entropy is unable to replace Boltzmann’s measure of entropy in the treatment of irreversible phenomena in isolated systems, since it indiscriminately includes the initial nonequilibrium states with the final equilibrium.’

‘The Gibbs entropy is an efficient tool for computing entropy values in thermal equilibrium when applied to the Gibbsian equilibrium ensembles, but the fundamental definition of entropy is the Boltzmann entropy. We have discussed the status of the two notions of entropy and of the corresponding two notions of thermal equilibrium, the “ensemblist” and the “individualist” view. Gibbs’s ensembles are very useful, in particular as they allow the efficient computation of thermodynamic functions, but their role can only be understood in Boltzmann’s individualist framework.’
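The contrast drawn in these quotations can be summarised in the usual textbook notation. The notation is ours, not that of the quoted authors. Here ρ is an ensemble density on phase space Γ, with volumes measured in units that make ρ dimensionless, and Γ_M is the region of microstates compatible with macrostate M. For a uniform density on Γ_M the two entropies coincide. Under Hamiltonian evolution, however, only the Boltzmann entropy can change, which bears on the objection quoted above that Gibbs's measure "indiscriminately includes the initial nonequilibrium states with the final equilibrium".

```latex
% Gibbs (fine-grained) entropy of an ensemble with phase-space density \rho
S_G[\rho] = -k \int_{\Gamma} \rho(x)\,\ln \rho(x)\,\mathrm{d}x

% Boltzmann entropy of the microstate x, through the volume of its macrostate region
S_B(x) = k \ln \lvert \Gamma_{M(x)} \rvert

% Uniform density on \Gamma_M: \rho = 1/\lvert\Gamma_M\rvert inside \Gamma_M, 0 outside
S_G = -k \int_{\Gamma_M} \frac{1}{\lvert \Gamma_M \rvert}
      \ln \frac{1}{\lvert \Gamma_M \rvert}\,\mathrm{d}x
    = k \ln \lvert \Gamma_M \rvert = S_B

% Liouville's theorem: for Hamiltonian evolution \rho_t, S_G[\rho_t] = S_G[\rho_0] for all t,
% whereas S_B(x_t) increases whenever x_t enters a larger macrostate region.
```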

‘philosophical discussions in statistical mechanics face an immediate difficulty because unlike other theories, statistical mechanics has not yet found a generally accepted theoretical framework or a canonical formalism.’

‘Finally, there is no way around recognising that BSM is mostly used in foundational debates, but it is GSM that is the practitioner’s workhorse. When physicists have to carry out calculations and solve problems, they usually turn to GSM which offers user-friendly strategies that are absent in BSM. So either BSM has to be extended with practical prescriptions, or it has to be connected to GSM so that it can benefit from its computational methods.’

‘What, then, is this “measure of entropy”? Roughly speaking, what we do is to count all the different possible submicroscopic states that could form a particular macroscopic state, and the number of these states N is a measure of the entropy of the macroscopic state. The larger N is, the greater the entropy.’

‘This is, indeed, essentially the famous definition of entropy given by the great Austrian physicist Ludwig Boltzmann in 1872.’

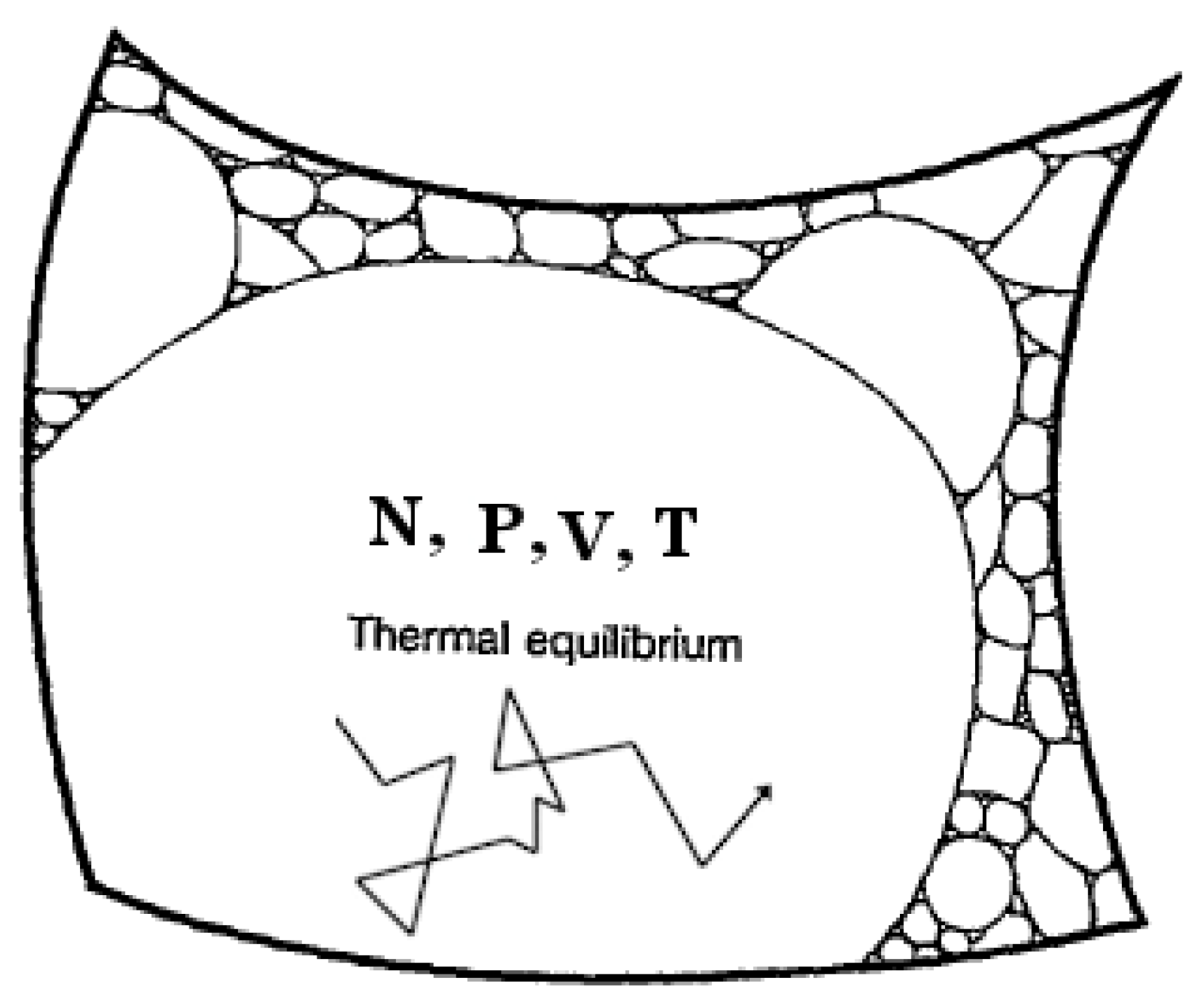

‘it is best that we return to the notion of phase space … the phase space P, of some physical system, is a conceptual space, normally of a very large number of dimensions, each of whose points represents a complete description of the submicroscopic state of the (say, classical) physical system being considered.’

‘Now, in order to define the entropy, we need to collect together - into a single region called a coarse-grained region - all those points in P which are considered to have the same values for their macroscopic parameters. In this way, the whole of P will be divided into such coarse-graining regions. … Thus, the phase space P will be divided up into these regions, and we can think of the volume V of such a region as providing a measure of the number of different ways that different submicroscopic states can go to make up the particular macroscopic state defined by its coarse-graining region.’

Macrostate Entropy

‘In order to see how this helps in our understanding of the 2nd Law, it is important to appreciate how stupendously different in size the various coarse-graining regions are likely to be, at least in the kind of situation that is normally encountered in practice. The logarithm in Boltzmann’s formula, together with the smallness of k in commonplace terms, tends to disguise the vastness of these volume differences, so that it is easy to overlook the fact that tiny entropy differences actually correspond to absolutely enormous differences in coarse-graining volumes. … Since the (vastly) larger volume corresponds to an (albeit usually only slightly) larger entropy, we see, in general rough terms why the expression for the entropy increases unstoppably over time. This is exactly what we would expect according to the second law.’
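Penrose's remark about the smallness of k can be checked with a few lines of arithmetic. From S = k ln V, two coarse-graining regions whose entropies differ by ΔS have volumes in the ratio V₂/V₁ = exp(ΔS/k). The sketch below reports log₁₀ of that ratio for a few entropy differences chosen purely for illustration. It uses the logarithm because the ratio itself overflows floating-point arithmetic.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact by definition in the SI since 2019)

def log10_volume_ratio(delta_s: float) -> float:
    """log10(V2/V1) for an entropy difference delta_s in J/K, using S = k ln V.

    math.exp(delta_s / K_B) already raises OverflowError at delta_s = 1e-20 J/K,
    so the ratio is reported through its decimal logarithm.
    """
    return delta_s / (K_B * math.log(10))

for delta_s in (1e-20, 1e-6, 1e-3):   # illustrative entropy differences in J/K
    print(f"dS = {delta_s:.0e} J/K  ->  log10(V2/V1) = {log10_volume_ratio(delta_s):.4g}")
```

Even ΔS = 10^-20 J/K corresponds to a volume ratio of about 10^315. A difference of 10^-3 J/K corresponds to a ratio whose decimal exponent is itself of order 10^19.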

Non-Equilibrium Macrostates and Microstates

Entropy of Non-Equilibrium States and Kinetics

Discussion

References

- Cercignani, C. Ludwig Boltzmann: The Man Who Trusted Atoms; 1998.

- Uffink, J. Boltzmann’s Work in Statistical Physics. In The Stanford Encyclopedia of Philosophy; Zalta, E. N., Ed.; first published 2004, substantive revision 2014.

- Klein, M. J. Max Planck and the beginnings of the quantum theory. Archive for History of Exact Sciences 1962, 1, 459–479.

- Brush, S. The Kind of Motion We Call Heat; 1976.

- Gibbs, J. W. Elementary Principles in Statistical Mechanics: Developed with Especial Reference to the Rational Foundation of Thermodynamics; 1902.

- Ehrenfest, P.; Ehrenfest, T. The Conceptual Foundations of the Statistical Approach in Mechanics (1911); 1959.

- Boltzmann, L. Über die Beziehung zwischen dem zweiten Hauptsatze der mechanischen Wärmetheorie und der Wahrscheinlichkeitsrechnung respective den Sätzen über das Wärmegleichgewicht (1877). In Wissenschaftliche Abhandlungen, Vols. I–III; 1909; Vol. II, paper 42, pp. 164–223.

- Rudnyi, E. Erwin Schrödinger and Negative Entropy; 2025; preprint.

- Atkins, P.; de Paula, J. Atkins’ Physical Chemistry; 2002.

- Borshchevsky, A. Ya. Physical Chemistry. Volume 2: Statistical Thermodynamics; 2023. (In Russian)

- Garberoglio, G.; Gaiser, C.; Gavioso, R. M.; et al. Ab initio calculation of fluid properties for precision metrology. Journal of Physical and Chemical Reference Data 2023, 52(3).

- Zubarev, D. N.; Morozov, V.; Röpke, G. Statistical Mechanics of Nonequilibrium Processes; 1996.

- Mukhin, R. R. Development of the Concept of Dynamic Chaos in the USSR, 1950–1980s; 2010. (In Russian)

- Frigg, R.; Werndl, C. Can somebody please say what Gibbsian statistical mechanics says? The British Journal for the Philosophy of Science 2021, 72(1), 105–129.

- Carnap, R. Two Essays on Entropy; University of California Press, 1977.

- Anta Pulido, J. Historical and Conceptual Foundations of Information Physics. PhD Thesis, 2021.

- Goldstein, S.; Lebowitz, J. L.; Tumulka, R.; Zanghì, N. Gibbs and Boltzmann entropy in classical and quantum mechanics. In Statistical Mechanics and Scientific Explanation: Determinism, Indeterminism and Laws of Nature; 2020; pp. 519–581.

- Frigg, R.; Werndl, C. Philosophy of Statistical Mechanics. In The Stanford Encyclopedia of Philosophy; 2023.

- Review of the Universe. Thermodynamics, Entropy. universe-review.ca, accessed 16 November 2025.

- Penrose, R. Fashion, Faith, and Fantasy in the New Physics of the Universe; 2016. Chapter 3 (Fantasy), Section 3.3 (The second law of thermodynamics).

- Rudnyi, E. Clausius Inequality in Philosophy and History of Physics; 2025; preprint.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).