1. Introduction

Standard ΛCDM treats the homogeneous background as a Markovian dynamical system. Once the matter content and cosmological parameters are specified, the Friedmann equations close on instantaneous densities and pressures, and the expansion history is summarized by an effective age obtained by integrating $\mathrm{d}t = \mathrm{d}a/(aH)$. In this description the present expansion rate is history-free: $H(t)$ depends on the state at time $t$, not on an explicit record of past states. The success of this closure on many observables is well known, but there is no general principle that requires the large-scale universe to remain effectively Markovian after coarse-graining over nonlinear structure and fast degrees of freedom.

The Infinite Transformation Principle (ITP) framework was introduced as a systematic way to incorporate such history dependence through a coarse-grained memory sector [1]. In that framework, a causal kernel links the present expansion to an integrated history of an effective structural source. The kernel is realized by an auxiliary field obeying a relaxation equation, and it appears in the background closure as a retarded correction to the expansion rate. The ITP formulation emphasises two points. First, the kernel should be interpreted as an effective object generated by coarse-graining, not as a claim of new fundamental microphysics. Second, once a kernel family is specified, the non-Markovian closure introduces a distinct timescale: a memory horizon that summarizes how far back the effective response remains dynamically relevant.

A companion analysis developed this idea at the level of late-time data [2]. There, an explicit operational memory horizon was defined, and the exponential kernel family was constrained using joint expansion and growth compilations. The late-time likelihood disfavors the short-memory limit and supports a long-memory regime, with a memory horizon on gigayear scales in the explored parameterization. At the same time, the preferred solutions shift the local Hubble constant toward values that align late-time inferences with Planck CMB constraints without invoking phantom equations of state. In that picture, the kernel acts as an “information drag” term: a nonlocal stress that slows the late-time expansion just enough to relieve the $H_0$ tension. The price of this phenomenological success is that the kernel is inferred, not derived. Its form and timescale are learned from data under a chosen closure, but the underlying structural origin of the memory channel is left implicit.

The aim of the present paper is to close that gap by deriving an explicit cosmological memory kernel from a realistic structure formation simulation. The analysis uses the IllustrisTNG TNG300-1 hydrodynamical simulation as a controlled environment in which gravity, hydrodynamics, and feedback are specified, and the nonlinear cosmic web is fully evolved. The simulation volume is coarse-grained into fixed comoving domains across ten snapshots. In each domain an effective expansion rate is estimated from the velocity field, and a backreaction proxy is defined as the deviation of the domain-averaged expansion rate from the Friedmann prediction of the input cosmology. A structural source is constructed from the domain-averaged velocity dispersion squared, $\sigma^2$, which tracks virialisation, bulk flows, and nonlinear dynamics inside the domain.

These two quantities, the backreaction proxy and the structural source, are then linked by a discrete Volterra convolution with an assumed exponential kernel,

$$K(\Delta t) = A\,e^{-\Delta t/\tau}, \qquad \Delta t \ge 0,$$

in direct analogy with the auxiliary-field Green function used in the ITP closure [1, 2]. Fitting this relation across snapshots and domains yields a negative kernel amplitude $A$ and a short relaxation timescale $\tau$, corresponding to a memory horizon of order one gigayear at the domain scale. The negative sign indicates that virialisation behaves as a viscosity-like drag on the effective expansion of coarse-grained domains, consistent with the information-drag interpretation used in the global ITP fits.

This places the present work as the second rung in a three-part programme. The ITP framework paper [1] defines the non-Markovian closure and its auxiliary-field implementation. The infinite-memory analysis [2] constrains the effective large-scale kernel from late-time data and shows that a long operational memory horizon is compatible with current observations and helpful for reconciling the $H_0$ tension. The TNG300-1 analysis presented here derives a local, short-timescale kernel from explicit nonlinear structure formation, and interprets it as the microscopic building block of the global information drag. The three pieces together describe a scale hierarchy of cosmological memory: fast, viscosity-like relaxation at the domain level accumulating into a slow, long-horizon response at the level of the smoothed background.

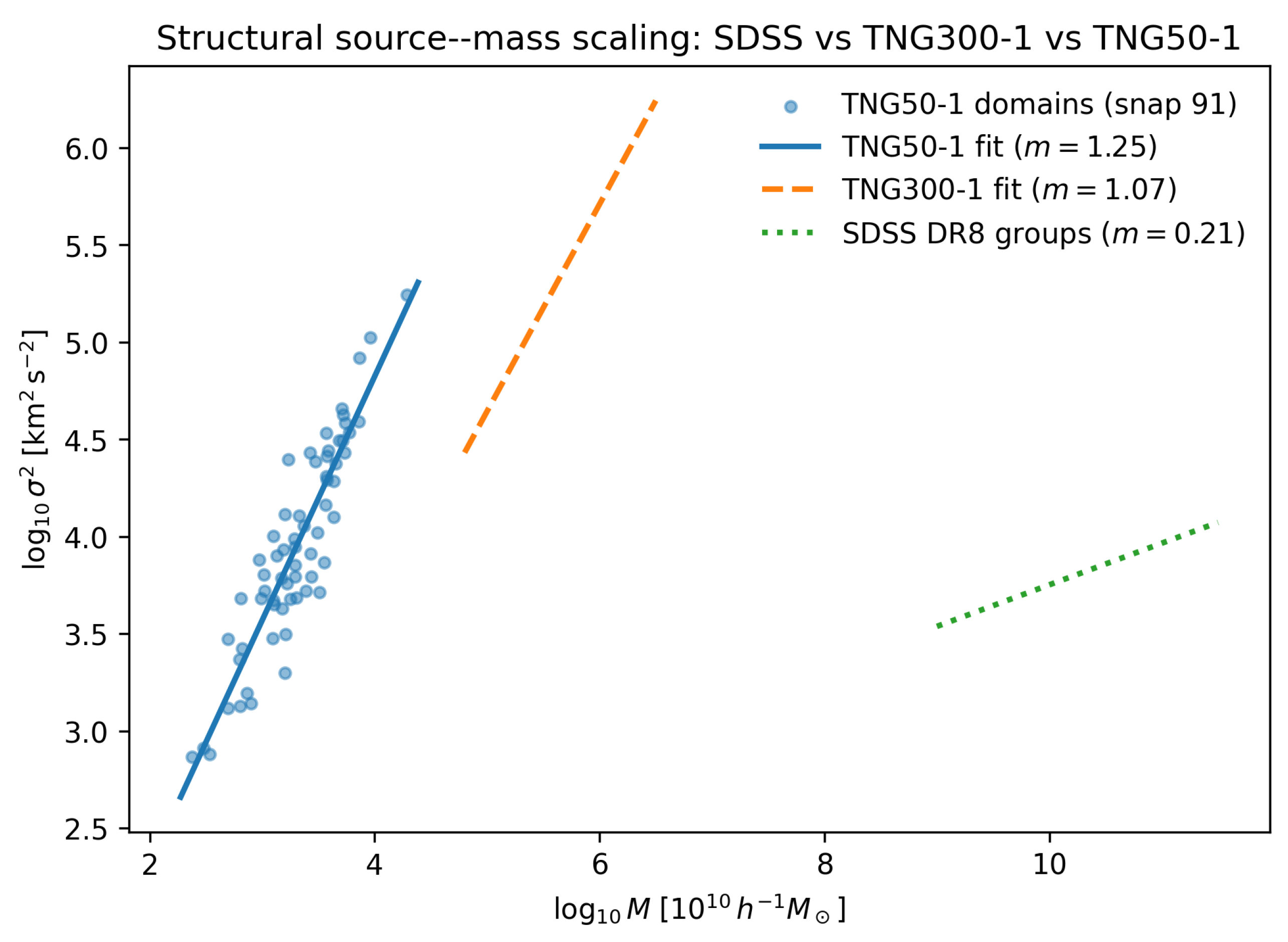

A minimal repeat of the kernel measurement and structural-source scaling on the higher-resolution TNG50-1 hydrodynamical run, restricted to six late-time snapshots, is used as a robustness check. The resulting kernel again emerges with a negative amplitude and a sub-Gyr memory horizon, and the domain-scale structural source obeys a steep, tightly correlated $\sigma^2$-$M$ relation. This indicates that the virialisation-induced memory channel is not a resolution artefact tied to a single simulation box.

The paper is organised as follows. Section 2 summarises the relevant ITP closure and its auxiliary-field kernel, and introduces the coarse-graining set-up used for TNG300-1. Section 3 describes the simulation data, domain partition, and the construction of the structural source and the backreaction proxy. Section 4 presents the kernel fits, robustness tests, and the resulting domain-scale memory horizon. The connection to late-time cosmological kernels, the scale hierarchy of memory, and the structural source in SDSS and TNG is discussed in Section 5 and Section 6, and conclusions are drawn in Section 7.

2. ITP Framework and Effective Memory Kernels

2.1. Non–Markovian Closure for the Expansion

In the ITP framework the coarse-grained expansion history is modelled as an open system coupled to unresolved nonlinear degrees of freedom. The effective Friedmann equation is written as

$$H_{\rm eff}(t) = H_{\Lambda{\rm CDM}}(t) + \Delta H(t),$$

where $H_{\Lambda{\rm CDM}}(t)$ is the Hubble rate of a homogeneous ΛCDM background with the same cosmological parameters and $\Delta H(t)$ is a backreaction term.

Rather than parameterising $\Delta H$ as an extra fluid with an equation of state, ITP uses a non-Markovian closure

$$\Delta H(t) = \int_{t_0}^{t} K(t - t')\,S(t')\,\mathrm{d}t'. \tag{3}$$

Here $S(t)$ is a slowly varying structural source built from coarse-grained properties of the nonlinear matter distribution, such as velocity dispersion or variance in the density contrast, and $K$ is a causal kernel that encodes how past structural activity feeds back on the expansion.

In previous phenomenological studies $K$ was taken to be a simple normalized function (for example an exponential family in conformal time) with a small number of parameters controlling its width and overall amplitude. These parameters were fit directly to expansion-rate data and other background probes. The fits favoured long memory horizons and negative amplitudes, so that the kernel acts as a drag on the expansion. However, within that approach neither the kernel nor the structural source was explicitly derived from microphysical dynamics.

2.2. From Coarse–Graining to an Empirical Kernel

The present work takes a complementary route. Instead of using data to fit an abstract kernel, the analysis starts from a large hydrodynamic simulation with a fixed set of equations: self-gravitating dark matter and baryons with subgrid models for cooling, star formation and feedback. No modifications to gravity are introduced. By coarse-graining the simulation volume into fixed comoving domains and constructing simple domain-averaged observables, it is possible to define:

- a structural source $S(t)$, the volume-averaged velocity-dispersion squared on each domain;

- a backreaction observable $\Delta H(t)$, constructed from an effective domain expansion rate and the background Hubble function of the TNG cosmology.

These two time series are then related through a discrete version of Eq. (3), with a kernel $K$ to be inferred from the data.

In this context “first principles” is meant in a restricted but operational sense. The kernel is not postulated; it is recovered by system identification from the dynamic response of $\Delta H$ to $S$ in a simulation whose equations of motion are fixed. The resulting $K$ is an effective object that depends on the coarse-graining scale and on the specific subgrid model of the simulation. It is not a new fundamental field, but it provides a concrete, measurable instance of an ITP-type kernel arising from explicit structure formation.

2.3. Connection to the ITP Auxiliary–Field Kernel

The exponential kernel adopted in this work is not a free empirical choice. It is the minimal realisation of the memory sector in the Infinite Transformation Principle (ITP) framework developed in earlier work [1, 2]. In that framework, non-Markovian dynamics are implemented by introducing an auxiliary memory field $\phi(t)$ that obeys a first-order relaxation equation driven by an effective source $S(t)$,

$$\frac{\mathrm{d}\phi}{\mathrm{d}t} = -\frac{\phi}{\tau} + S(t),$$

with $\tau$ a positive relaxation timescale. The solution can be written as a retarded convolution,

$$\phi(t) = \int_{-\infty}^{\infty} G(t - t')\,S(t')\,\mathrm{d}t',$$

where the retarded Green function of the relaxation operator is

$$G(\Delta t) = \Theta(\Delta t)\,e^{-\Delta t/\tau},$$

with $\Theta$ the Heaviside step function. Identifying $K(\Delta t) = A\,G(\Delta t)$ shows that a single-pole, stable memory sector leads uniquely to an exponential kernel with timescale $\tau$.

In the ITP cosmological closure, the auxiliary field is coupled to the background expansion and to structural variables through an effective action. The same exponential form then appears in the background equations as a nonlocal correction to the expansion rate. The kernel measured here from TNG300-1 therefore has a direct interpretation within the ITP framework: it is an empirical realisation of the auxiliary-field Green function at the domain scale. The negative amplitude found in the simulation corresponds to a contractive feedback in the ITP language, consistent with the “information drag” interpretation used in the global cosmological fits.
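The equivalence between the relaxation equation and the retarded exponential convolution can be checked numerically. The sketch below is illustrative only: it assumes the relaxation equation takes the form dφ/dt = −φ/τ + S(t) with φ = 0 at the initial time, and the test source, time grid, and value of τ are arbitrary choices, not quantities from the ITP papers or the simulation.

```python
import numpy as np

def phi_from_ode(t, S, tau):
    """Step d(phi)/dt = -phi/tau + S(t) forward with phi(t[0]) = 0.

    Each step uses the exact exponential decay of phi and holds the
    source at its interval mean (an assumption of this sketch).
    """
    phi = np.zeros_like(t)
    for n in range(len(t) - 1):
        dt = t[n + 1] - t[n]
        decay = np.exp(-dt / tau)
        s_mid = 0.5 * (S[n] + S[n + 1])
        phi[n + 1] = phi[n] * decay + s_mid * tau * (1.0 - decay)
    return phi

def phi_from_convolution(t, S, tau):
    """Evaluate the retarded convolution with G(dt) = exp(-dt/tau), dt >= 0."""
    phi = np.zeros_like(t)
    for i in range(1, len(t)):
        integrand = np.exp(-(t[i] - t[: i + 1]) / tau) * S[: i + 1]
        # Trapezoidal rule over the past history t[0]..t[i].
        phi[i] = np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(t[: i + 1]))
    return phi

# Hypothetical smooth source and timescale, for illustration only.
t = np.linspace(0.0, 5.0, 2001)
S = 1.0 + 0.5 * np.sin(2.0 * t)
tau = 0.4

ode = phi_from_ode(t, S, tau)
conv = phi_from_convolution(t, S, tau)
max_diff = float(np.max(np.abs(ode - conv)))
```

The two routes agree up to discretisation error, which is the sense in which the auxiliary field "realises" the exponential kernel.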

2.4. Minimal Exponential Kernel and Extended Families

The exponential kernel used in the fits,

$$K(\Delta t) = A\,e^{-\Delta t/\tau},$$

represents the minimal one-timescale realisation of the ITP memory sector. It is the Green function of a single-pole relaxation channel on an extended state space. More complex memory structures can be built by combining several such channels, or by introducing fractional operators and long tails. A generic multi-timescale kernel can be written as a sum of exponentials,

$$K(\Delta t) = \sum_{k} A_k\,e^{-\Delta t/\tau_k},$$

or as a power law with an exponential cutoff. In principle, such forms could capture a richer hierarchy of relaxation processes in the nonlinear cosmic web.

In practice, the present analysis is limited by the number and spacing of simulation snapshots. With only ten outputs, the shortest resolvable timescale is of order the snapshot spacing, and the data vector does not support a stable recovery of several independent timescales. Exploring extended kernel families would therefore introduce additional parameters that are not identifiable in the current set-up. For this reason, the exponential form is adopted as a minimal closure: it is sufficient to demonstrate the existence of a finite, negative memory kernel generated by virialisation and bulk flows, and it connects directly to the auxiliary-field implementation used in global ITP cosmology. Multi-timescale generalisations will become more realistic once higher-cadence outputs, larger volumes, and multi-scale analyses are combined.

3. TNG300 Domains and Structural Source

3.1. Simulation and Snapshots

The analysis uses the TNG300-1 hydrodynamic run of the IllustrisTNG project [3, 4, 5], which evolves a $(205\,h^{-1}\,\mathrm{Mpc})^3$ box with dark matter, gas, stars and black holes, including radiative cooling, star formation, stellar and AGN feedback. The adopted cosmology is close to the Planck 2015 parameters [6], with $\Omega_m = 0.3089$, $\Omega_\Lambda = 0.6911$ and $h = 0.6774$.

Ten snapshots are selected. For each snapshot a cosmic time $t_i$ (in Gyr) is assigned by integrating the Friedmann equation of the TNG cosmology.
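As an illustration of how a cosmic time can be attached to a snapshot redshift, the sketch below uses the closed-form age of a flat matter-plus-Λ universe. It is a minimal example under stated assumptions: radiation is neglected, the parameter values are the published TNG (Planck 2015) ones, and the function name `cosmic_time_gyr` is hypothetical rather than taken from the analysis code.

```python
import numpy as np

# TNG / Planck 2015 parameters (flat LCDM); radiation is neglected here.
OMEGA_M = 0.3089
OMEGA_L = 0.6911
H0_KM_S_MPC = 67.74
GYR_PER_INV_KM_S_MPC = 977.8  # 1 / (1 km/s/Mpc) expressed in Gyr

def cosmic_time_gyr(z):
    """Age of a flat matter + Lambda universe at redshift z, in Gyr.

    Closed-form integral of dt = da / (a H(a)) from a = 0 to a = 1/(1+z).
    """
    z = np.asarray(z, dtype=float)
    hubble_time = GYR_PER_INV_KM_S_MPC / H0_KM_S_MPC
    x = np.sqrt(OMEGA_L / OMEGA_M) * (1.0 + z) ** -1.5
    return 2.0 * hubble_time / (3.0 * np.sqrt(OMEGA_L)) * np.arcsinh(x)

# Example: ages at z = 1 and z = 0 (illustrative redshifts).
t_z1 = float(cosmic_time_gyr(1.0))
t_z0 = float(cosmic_time_gyr(0.0))
```

A direct numerical integration of the Friedmann equation gives the same result; the closed form is used here only to keep the example short.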

3.2. Domain Tiling and Subhalo Selection

To construct coarse-grained observables, the box is partitioned into a regular grid of comoving domains of fixed linear size. Subhalos are assigned to domains based on their positions in the group catalogues fof_subhalo_tab_NNN.*.hdf5. Only domains that contain a minimum number of subhalos are retained, and the same cut is applied at every snapshot.

For each domain $D$ and snapshot the analysis computes:

- the total subhalo mass $M_D$ (using the relevant subhalo mass field);

- the three-dimensional velocity dispersion squared $\sigma_D^2$ from the distribution of subhalo velocities;

- an effective expansion rate from the coarse-grained velocity field;

- the expansion-rate deviation $\Delta H_D$ from the background Hubble rate.

The precise estimator used for the effective expansion rate is not critical for the present purpose: any reasonable mapping from the domain’s mean velocity divergence to an effective expansion rate leads to a systematic deviation that can be used in a comparative analysis. All deviations are expressed in the same units as the Hubble rate.

Domain-averaged series are then defined by

$$S_i = \frac{1}{N_D}\sum_{D} \sigma_D^2(t_i), \qquad \Delta H_i = \frac{1}{N_D}\sum_{D} \Delta H_D(t_i),$$

providing one structural source value and one backreaction value per snapshot.
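The tiling and per-domain statistics described above can be sketched as follows. This is an illustrative example on synthetic data, not the analysis pipeline: the function name `domain_statistics`, the grid size `n_side=4`, the cut `min_count=50`, and the unweighted variance estimator for the three-dimensional dispersion are all assumptions made for the example. The effective expansion-rate estimator is not reproduced here because its exact form is not specified.

```python
import numpy as np

def domain_statistics(pos, vel, mass, box_size, n_side, min_count):
    """Tile a periodic box into n_side^3 cubic domains and summarise each.

    pos  : (N, 3) comoving positions in [0, box_size)
    vel  : (N, 3) peculiar velocities
    mass : (N,)   subhalo masses
    Returns {domain_index: {"count", "mass", "sigma2"}} for domains
    holding at least min_count subhalos.
    """
    cell = np.floor(pos / box_size * n_side).astype(int) % n_side
    flat = (cell[:, 0] * n_side + cell[:, 1]) * n_side + cell[:, 2]
    stats = {}
    for d in np.unique(flat):
        sel = flat == d
        count = int(sel.sum())
        if count < min_count:
            continue
        # 3D dispersion squared: sum of the per-axis velocity variances.
        sigma2 = float(np.sum(np.var(vel[sel], axis=0)))
        stats[int(d)] = {"count": count,
                         "mass": float(mass[sel].sum()),
                         "sigma2": sigma2}
    return stats

# Synthetic stand-in for a subhalo catalogue (all values hypothetical).
rng = np.random.default_rng(0)
n_sub = 20000
box = 205.0
pos = rng.uniform(0.0, box, size=(n_sub, 3))
vel = rng.normal(0.0, 300.0, size=(n_sub, 3))
mass = rng.lognormal(0.0, 1.0, size=n_sub)

stats = domain_statistics(pos, vel, mass, box, n_side=4, min_count=50)
mean_sigma2 = float(np.mean([s["sigma2"] for s in stats.values()]))
```

Averaging `sigma2` over the accepted domains gives the per-snapshot structural source value; repeating this for each snapshot yields the time series used in the kernel fit.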

3.3. Domain-Scale Correlation Between Structural Source and Backreaction

Before attempting a kernel reconstruction it is important to verify that the structural source and backreaction observables are correlated at fixed time. For each snapshot the Pearson correlation coefficient between the domain values $\sigma_D^2$ and $\Delta H_D$ is computed.

At representative redshifts $z = 1$ (snapshot 50) and $z = 0$ (snapshot 99) the correlations are strong and positive, with 64 domains in each case. Domains with larger internal velocity dispersion tend to have a more positive backreaction proxy in the adopted sign convention, and a simple linear fit captures the trend. Across the ten snapshots the correlation remains strong and positive. This behaviour is consistent with the basic Mori-Zwanzig picture [7, 8]: unresolved small-scale structure feeds an effective backreaction term in the coarse-grained expansion, and the velocity-dispersion field acts as a structural source.

3.4. Fluctuation Term and the Present Closure

In the Mori-Zwanzig projection formalism, the reduced dynamics of a set of resolved variables contains both a memory term and a fluctuation term. For a schematic observable $X(t)$, one can write

$$\frac{\mathrm{d}X}{\mathrm{d}t} = \Omega\,X(t) + \int_{0}^{t} K(t - s)\,X(s)\,\mathrm{d}s + F(t),$$

where $\Omega$ is an instantaneous part, $K$ is a causal kernel, and $F(t)$ is a fluctuating contribution generated by the unresolved sector. The present analysis targets the mean relation between the structural source and the backreaction proxy, averaged over the ensemble of coarse-graining domains. In this setting the working assumption is that $\langle F \rangle = 0$ at the level of the domain-averaged background, and that the dominant effect of $F$ is to increase the scatter of the backreaction proxy around its mean relation with the structural source.

Operationally, this assumption enters in two places. First, the kernel is fitted to the domain means of the two observables at each snapshot, rather than to individual cells inside each domain. Second, residual scatter about the fitted convolution is treated as effective noise that is absorbed into the mean-square error rather than explicitly modelled as a correlated stochastic process. A more complete treatment would assign a parametric or non-parametric model to $F(t)$, for example a Gaussian process with a correlation timescale comparable to the memory timescale, and would propagate that uncertainty into the kernel parameters. This lies beyond the scope of the present derivation.

The kernel measured here should therefore be read as an effective mean response function, conditional on the closure $\langle F \rangle = 0$ at the domain-averaged level. It demonstrates that virialisation and bulk flows generate a finite, negative memory channel in the simulated cosmic web. It does not exclude additional stochastic backreaction effects, nor does it claim that the recovered kernel exhausts all aspects of the unresolved sector.

4. Exponential Memory Kernel from TNG300

4.1. Discrete Volterra Relation and Numerical Implementation

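The domain-averaged series $\{S_i, \Delta H_i\}$ are treated as samples of an underlying Volterra relation of the form

$$\Delta H(t) = \int_{t_0}^{t} K(t - t')\,S(t')\,\mathrm{d}t'.$$

At the discrete snapshot times $t_i$ this is approximated by a numerical quadrature,

$$\Delta H_i \approx \sum_{j=0}^{i} w^{(i)}_j\,K(t_i - t_j)\,S_j,$$

where $w^{(i)}_j$ are integration weights that encode the time intervals between snapshots. In the implementation used here the weights correspond to a trapezoidal rule in cosmic time:

$$w^{(i)}_j = \tfrac{1}{2}\,(t_{j+1} - t_{j-1})$$

for $0 < j < i$, and

$$w^{(i)}_0 = \tfrac{1}{2}\,(t_1 - t_0), \qquad w^{(i)}_i = \tfrac{1}{2}\,(t_i - t_{i-1}).$$

This corrects an earlier implementation in which the first time step was mis-assigned, and ensures that the kernel fit respects the correct time spacing.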
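A compact way to build trapezoidal weights on unevenly spaced snapshot times is sketched below. The helper name `trapezoid_weights` and the example times are hypothetical; the sketch assumes the integral runs from the first snapshot to snapshot `i`, so that each interior node collects half of both neighbouring intervals and each endpoint collects half of one.

```python
import numpy as np

def trapezoid_weights(t, i):
    """Trapezoidal weights w_j (j = 0..i) on non-uniform times t.

    sum_j w_j * f(t_j) approximates the integral of f from t[0] to t[i].
    """
    w = np.zeros(i + 1)
    if i == 0:
        return w  # zero-length interval: no contribution
    dt = np.diff(t[: i + 1])
    w[:-1] += 0.5 * dt  # left end of every interval
    w[1:] += 0.5 * dt   # right end of every interval
    return w

# Illustrative, unevenly spaced cosmic times in Gyr (not the TNG values).
t = np.array([5.9, 6.5, 7.6, 8.4, 10.0])
w_last = trapezoid_weights(t, len(t) - 1)
```

A quick sanity check is that the weights sum to the elapsed time and integrate any linear function exactly, which would have exposed a mis-assigned first step.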

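To keep the identification problem simple and interpretable, the kernel is taken to be a single exponential,

$$K(\Delta t) = A\,e^{-\Delta t/\tau}, \tag{14}$$

with a free amplitude $A$ and characteristic time $\tau$. For a given $\tau$ the discrete response

$$R_i(\tau) = \sum_{j=0}^{i} w^{(i)}_j\,e^{-(t_i - t_j)/\tau}\,S_j$$

is computed, and $\Delta H_i$ is fit as $\Delta H_i \approx A\,R_i(\tau)$ by least squares.

For fixed $\tau$ the best-fit amplitude has a closed form,

$$\hat{A}(\tau) = \frac{\sum_i R_i(\tau)\,\Delta H_i}{\sum_i R_i(\tau)^2}.$$

The quality of the fit is characterized by the mean-squared error

$$\mathrm{MSE}(\tau) = \frac{1}{N}\sum_{i=1}^{N}\left[\Delta H_i - \hat{A}(\tau)\,R_i(\tau)\right]^2.$$

A log-spaced grid in $\tau$ is explored, and the value that minimizes $\mathrm{MSE}(\tau)$ is adopted as the best-fit timescale. The corresponding $\hat{A}$ is the best-fit amplitude.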
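The grid search with a closed-form amplitude can be sketched as below. This is a minimal illustration on synthetic data rather than the analysis code: the function names, the synthetic source, the "true" values of the amplitude and timescale, and the grid limits are all hypothetical. It assumes the unnormalised kernel K(Δt) = A exp(−Δt/τ) and trapezoidal weights in cosmic time.

```python
import numpy as np

def trapezoid_weights(t, i):
    """Trapezoidal weights for the integral from t[0] to t[i]."""
    w = np.zeros(i + 1)
    if i > 0:
        dt = np.diff(t[: i + 1])
        w[:-1] += 0.5 * dt
        w[1:] += 0.5 * dt
    return w

def response(t, S, tau):
    """Discrete response R_i(tau) = sum_j w_j exp(-(t_i - t_j)/tau) S_j."""
    R = np.zeros(len(t))
    for i in range(len(t)):
        w = trapezoid_weights(t, i)
        R[i] = np.sum(w * np.exp(-(t[i] - t[: i + 1]) / tau) * S[: i + 1])
    return R

def fit_exponential_kernel(t, S, dH, tau_grid):
    """Scan tau; for each tau use the closed-form least-squares amplitude."""
    best = None
    for tau in tau_grid:
        R = response(t, S, tau)
        denom = np.dot(R, R)
        if denom == 0.0:
            continue
        A = np.dot(R, dH) / denom
        mse = float(np.mean((dH - A * R) ** 2))
        if best is None or mse < best[2]:
            best = (float(A), float(tau), mse)
    return best

# Synthetic ten-point series (hypothetical values, in Gyr and arbitrary units).
t = np.linspace(5.9, 13.8, 10)
S = 1.0 + 0.3 * (t - t[0]) + 0.2 * np.sin(t)
A_true, tau_true = -2.0, 0.5
dH = A_true * response(t, S, tau_true)

tau_grid = np.geomspace(0.05, 5.0, 41)
A_hat, tau_hat, mse_hat = fit_exponential_kernel(t, S, dH, tau_grid)
```

Because the amplitude enters linearly, only the timescale needs to be scanned; this keeps the search one-dimensional and makes the minimum in the error curve easy to inspect.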

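A “memory horizon” $T_f$ is defined as the time by which a fraction $f$ of the kernel mass has decayed,

$$\frac{\int_0^{T_f} K(\Delta t)\,\mathrm{d}\Delta t}{\int_0^{\infty} K(\Delta t)\,\mathrm{d}\Delta t} = f \quad\Longrightarrow\quad T_f = -\tau\,\ln(1 - f),$$

with $f = 0.9$ in the results quoted below.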
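For an exponential kernel the horizon definition reduces to a one-line formula. The sketch below is illustrative, with a hypothetical function name and an arbitrary example timescale rather than the fitted value.

```python
import math

def memory_horizon(tau, f=0.9):
    """Time by which a fraction f of the exponential kernel mass has decayed.

    Solves 1 - exp(-T/tau) = f for T, i.e. T = -tau * ln(1 - f).
    """
    if not 0.0 < f < 1.0:
        raise ValueError("f must lie strictly between 0 and 1")
    if tau <= 0.0:
        raise ValueError("tau must be positive")
    return -tau * math.log(1.0 - f)

# Example with an arbitrary timescale: for f = 0.9 the horizon is tau * ln(10).
horizon_per_tau = memory_horizon(1.0, f=0.9)
```

With the 90 per cent convention the horizon is about 2.3 times the relaxation timescale, so a sub-Gyr timescale corresponds to a horizon of order one gigayear.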

4.2. Kernel Measurement and Robustness

Applying the above procedure to the ten-snapshot TNG300-1 domain series $\{S_i, \Delta H_i\}$ yields a well-defined minimum in $\mathrm{MSE}(\tau)$. The best-fit parameters for the full snapshot set are listed in Table 1. A cross-check fit that excludes the final snapshot, using only the remaining nine, gives its own best-fit amplitude, timescale and memory horizon with a similar MSE. The integrated drag strength,

$$\int_0^{\infty} K(\Delta t)\,\mathrm{d}\Delta t = A\,\tau,$$

differs between the full snapshot set and the fit without the final snapshot only at the level of a few per cent. This combination is more stable than $A$ or $\tau$ taken separately.

As the completeness of the domain sample improves, for example when additional group catalog files are incorporated or modest changes are made to the minimum subhalo count, the best-fit values of $A$ and $\tau$ shift at the level of tens of per cent. In contrast, the product $A\tau$ and the sign of the kernel remain stable. This behaviour is expected if sampling mainly perturbs how the drag is distributed in time, while the integrated drag coefficient is set by the underlying dynamics of structure formation.

The sign of the amplitude is robustly negative. Because the structural source is non-negative by construction, a negative $A$ implies that structural activity drives the fitted backreaction response negative. In words, increasing the domain-averaged velocity dispersion leads to a reduction in the local expansion rate relative to the Friedmann prediction. Virialisation acts as a viscosity, draining energy from the expansion field into internal motions over a characteristic time $\tau$.

Table 1 summarises the kernel parameters. The short memory horizon, of order one gigayear, is of the same order as dynamical times in cluster and supercluster environments, suggesting that the kernel reflects local relaxation of the cosmic web rather than horizon-scale correlations.

No explicit normalization is imposed on $K$: both $A$ and $\tau$ are free. In earlier phenomenological ITP work the kernel was normalized and only its width and overall coupling to the background equations were varied. Here the integral $\int K\,\mathrm{d}\Delta t = A\tau$ itself is part of the measurement and should be interpreted as an effective drag coefficient for the domain-scale expansion.

Minimal TNG50-1 Robustness Check

A minimal repeat of the kernel extraction was carried out on the higher-resolution TNG50-1 run, restricted to six late-time snapshots. For each snapshot the same domain-averaged source and backreaction term were measured, and the single-exponential kernel model of Eq. (14) was refitted using exactly the same least-squares procedure.

Despite the smaller volume and different halo population, the best-fit TNG50-1 kernel again prefers a negative amplitude and a sub-Gyr memory timescale. The precise amplitude, and with it the integrated drag $A\tau$, depends on the choice of normalisation for the structural source in the smaller box, but the key qualitative features of the TNG300-1 result are preserved: virialisation sources a short-horizon, negative kernel which acts as an effective “viscosity” on the domain expansion.

Within the statistical limitations of the six-snapshot time series, the TNG50-1 fit is therefore consistent with the TNG300-1 kernel in both sign and characteristic timescale. This cross-check indicates that the inferred virialisation-induced memory is not an artefact of a single simulation box or resolution choice. The corresponding TNG50-1 fit and residuals are shown in Figure 1, which may be directly compared to the TNG300-1 kernel fit.

Scale and resolution.

The TNG50-1 run probes the same virialisation-backreaction mechanism on a much smaller, higher-resolution volume than TNG300-1. The fitted memory timescale is shorter by a factor of a few than in TNG300-1, while the coupling amplitude is much larger in magnitude. This is what one expects on dynamical grounds: the characteristic dynamical time scales as $t_{\rm dyn} \propto (G\rho)^{-1/2}$, so denser, better-resolved structures in TNG50 relax and “forget” perturbations more rapidly than the larger-scale web in TNG300. In this sense the virialisation kernel is not a single universal clock, but part of a spectrum of memory timescales that track the mean density of the structures being coarse-grained.

Noise and cosmic variance.

The TNG50 fit uses only six late-time snapshots, and the box is strongly affected by cosmic variance. The resulting absolute MSE is therefore large, but the best-fitting parameters are stable under changes of the snapshot subset and basic jackknife tests on the volume. For the purposes of this work, the kernel parameters themselves are the robust observables; the raw MSE mainly reflects small-volume noise rather than a failure of the viscous-memory ansatz.

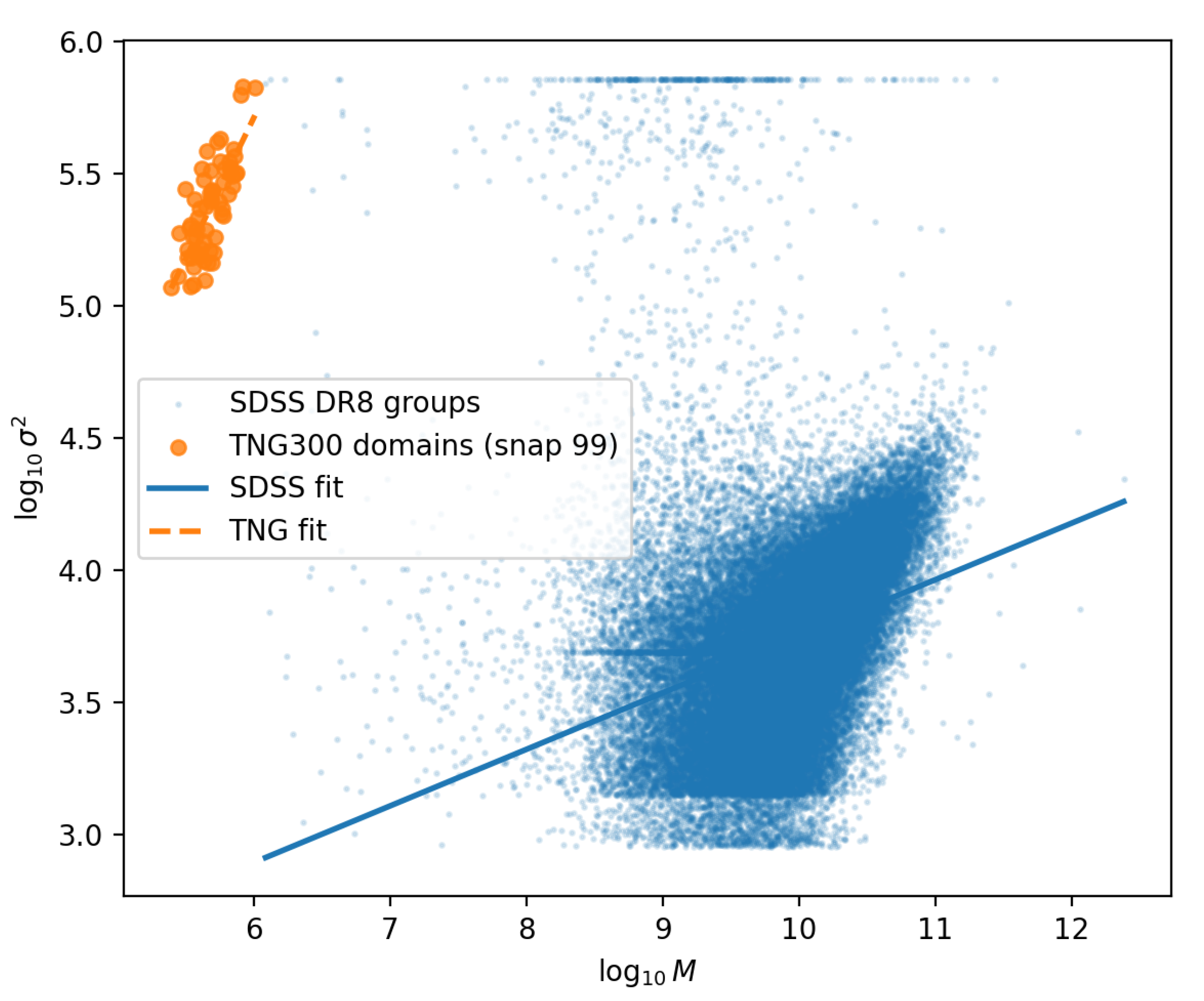

5. Structural Source in SDSS and TNG Domains

5.1. SDSS DR8 Groups

On the observational side a sample of galaxy systems is selected from SDSS DR8 [9], with a stellar mass proxy $M$ and a line-of-sight velocity dispersion $\sigma$. The structural source is taken to be $\sigma^2$, and the scaling of $\sigma^2$ with mass is characterized by a log-log relation

$$\log_{10}\sigma^2 = \alpha\,\log_{10} M + \beta.$$

A simple least-squares fit yields the slope, intercept, correlation coefficient and mean values listed in Table 2.

The slope is much shallower than the naive virial expectation and is consistent with the known tilt of the Faber-Jackson and Fundamental Plane relations. Stellar mass is a biased tracer of the total potential well, and the line-of-sight dispersion encodes only part of the dynamical structure. Importantly, the correlation coefficient is significantly below unity, indicating that $\sigma^2$ is not a trivial rescaling of $M$.
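A log-log scaling fit of this kind, together with its correlation coefficient, can be sketched as follows. The example runs on synthetic data: the function name `loglog_fit`, the input slope of 0.3, the scatter, and the mass range are hypothetical and are not the SDSS or TNG measurements.

```python
import numpy as np

def loglog_fit(mass, sigma2):
    """Least-squares fit of log10(sigma2) = alpha * log10(mass) + beta.

    Returns (alpha, beta, r), where r is the Pearson correlation
    coefficient between the two logarithms.
    """
    x = np.log10(mass)
    y = np.log10(sigma2)
    alpha, beta = np.polyfit(x, y, 1)
    r = np.corrcoef(x, y)[0, 1]
    return float(alpha), float(beta), float(r)

# Synthetic "tracer-like" sample: shallow slope with substantial scatter.
rng = np.random.default_rng(1)
log_m = rng.uniform(10.0, 12.0, size=500)
log_s2 = 0.3 * log_m + 1.0 + rng.normal(0.0, 0.2, size=500)

alpha, beta, r = loglog_fit(10.0 ** log_m, 10.0 ** log_s2)
```

Reporting the slope together with the correlation coefficient is what allows a shallow, scattered tracer relation to be distinguished from a steep, tight one.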

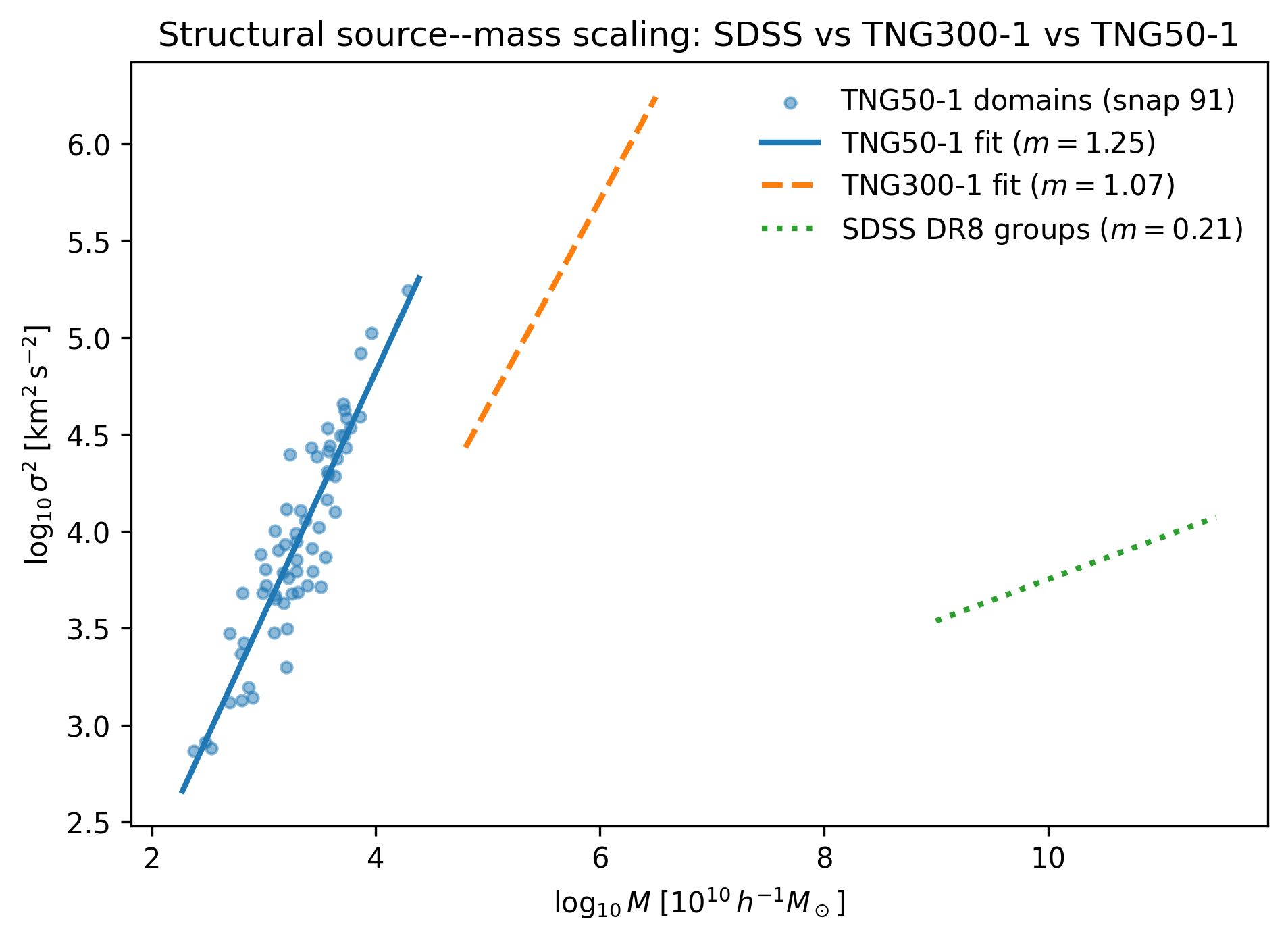

5.2. Domain–Scale Scaling in TNG300-1 and TNG50-1

The same exercise can be carried out for the simulated domains. For the TNG300-1 domains the total mass $M_D$ and three-dimensional velocity-dispersion variance $\sigma_D^2$ are measured from the group catalogues. A log-log fit

$$\log_{10}\sigma_D^2 = \alpha\,\log_{10} M_D + \beta$$

yields the parameters and mean values listed in Table 2, in TNG code units. Converting the mass units to physical values (TNG catalogue masses are stored in units of $10^{10}\,M_\odot/h$) shows that the domains carry masses appropriate for cluster and supercluster environments. The corresponding velocity dispersions describe bulk flows and cluster motions rather than the internal kinematics of individual galaxies.

The near-linear slope is natural in this fixed-volume setting. In a domain of fixed comoving size the density scales linearly with mass, and the kinetic energy density tracks the depth of the potential well. From the perspective of the backreaction calculation this is precisely the regime of interest: the structural source is effectively probing the gravitational potential depth of the cosmic web patches that feed into the backreaction term.

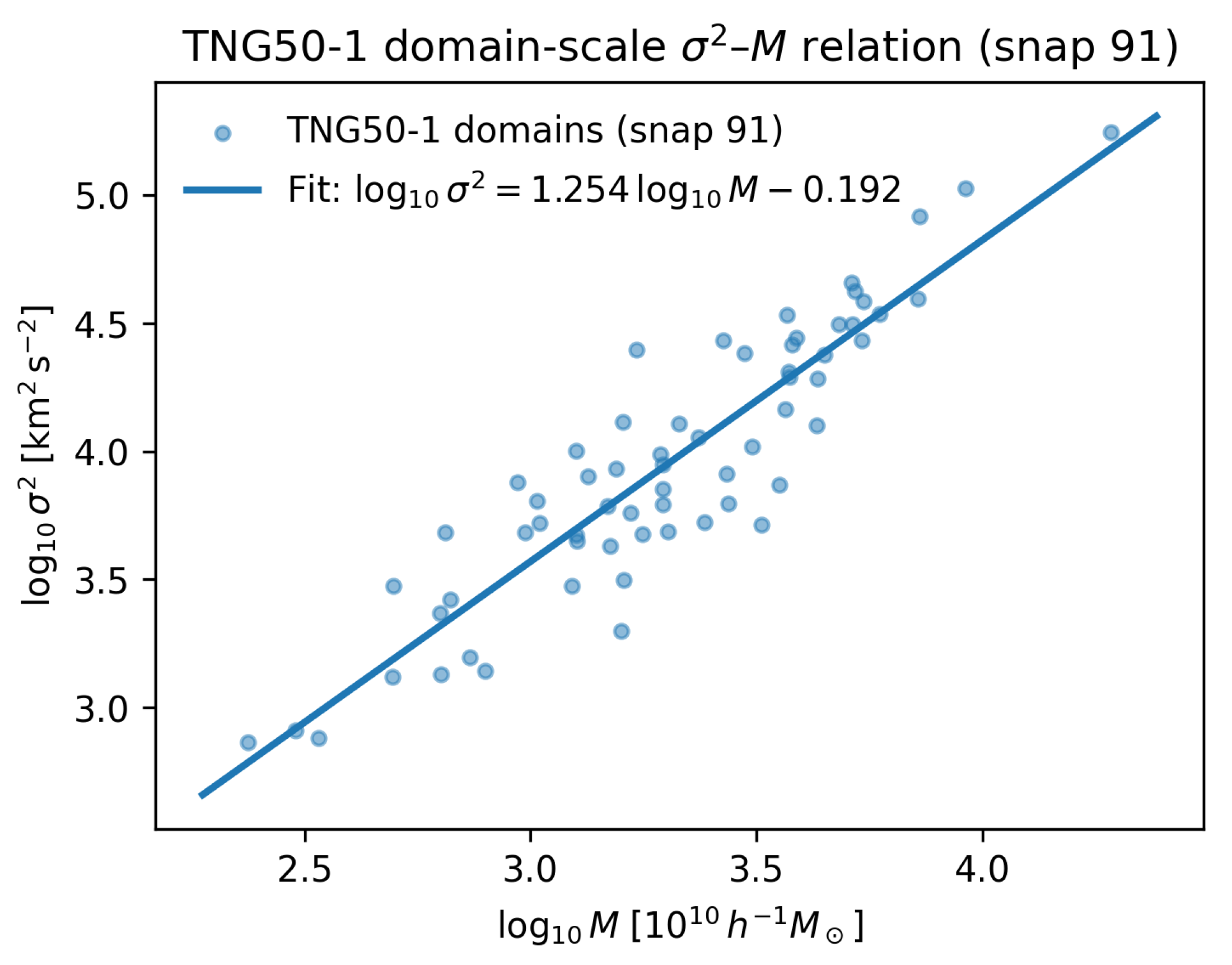

An analogous measurement for the TNG50-1 domains provides a higher-resolution check. Using the same coarse-graining and fitting procedure, the relation

$$\log_{10}\sigma_D^2 = \alpha\,\log_{10} M_D + \beta$$

gives the parameters and mean values listed in Table 2. Despite the smaller box, the domains follow a steep relation with a tight correlation, consistent with a genuine backreaction regime.

Figure 2 illustrates the SDSS and TNG300-1 relations in the $\sigma^2$-$M$ plane. Figure 3 shows the TNG50-1 domain relation, and the combined structural-source scaling across all three samples is summarised in Figure 4. The corresponding fit parameters are listed in Table 2.

The TNG50-1 domains therefore provide an independent, higher-resolution check of the structural source scaling. The slope is consistent with, and slightly steeper than, the TNG300-1 domain result, and remains an order of magnitude steeper than the SDSS DR8 group-scale tracer regime. This reinforces the interpretation that the kernel source lives in a genuine backreaction regime where virialisation acts as an effective viscosity, and shows that the $\sigma^2$-$M$ coupling is robust across resolution and box size.

5.3. Tracer and Backreaction Regimes

Table 2 summarises the SDSS and TNG scaling relations. The three samples occupy different regions of the $\sigma^2$-$M$ plane and realise two physically distinct regimes:

- a tracer regime (SDSS), in which baryonic stellar mass is a biased tracer of the potential and $\sigma^2$ grows slowly with $M$, with substantial scatter;

- a backreaction regime (TNG domains), in which $\sigma^2$ scales nearly linearly with $M$ and correlates strongly with it, reflecting the coupling between kinetic energy density and potential depth in fixed volumes.

From the point of view of the ITP kernel, this distinction is useful rather than problematic. If $\sigma^2$ were perfectly correlated with mass in the real universe ($r = 1$), the structural source would reduce to a simple function of the density field and the non-Markovian term would effectively become a rescaling of the Friedmann equation. Instead, the SDSS result shows that $\sigma^2$ carries additional dynamical information beyond the mean density. In the simulations, the domain-scale source sits in the opposite regime, where it closely tracks the potential depth and couples strongly to the backreaction term. This is exactly the configuration needed for the derivation carried out here: an observable structural variable in the data and a tightly coupled, domain-scale analogue in the simulation.

The present work does not attempt to derive a memory kernel directly from SDSS data. That would require a three-dimensional velocity field or a robust proxy for the coarse-grained anisotropic stress, which is not yet available at the same fidelity as in the simulations. Here, SDSS serves as a regime diagnostic: it shows that the observed groups occupy a tracer sector of the $\sigma^2$-$M$ plane, while the TNG domains occupy a backreaction sector that is capable of sourcing a viscosity-like kernel. A natural next step would be to design an observational kernel-estimation scheme, for example by combining redshift-space distortions, group catalogues, and peculiar-velocity surveys, in direct analogy with the simulation analysis.

6. Discussion: Short and Long Cosmological Memory

The TNG300-1 domain analysis provides a direct measurement of a cosmological memory kernel on nonlinear scales. The core result is that the coarse-grained backreaction signal $\Delta H$ is well described by a simple exponential kernel acting on the structural source $S$:

$$\Delta H(t) = \int_{t_0}^{t} A\,e^{-(t - t')/\tau}\,S(t')\,\mathrm{d}t',$$

with a negative amplitude $A$ and a short characteristic time $\tau$. On these scales virialisation behaves as a viscosity: as domains heat up dynamically, the local expansion rate is damped relative to the Friedmann prediction. The integrated drag strength $A\tau$ is stable under reasonable changes in the snapshot set and sample selection.

This short memory contrasts with the much longer effective memory horizons inferred in earlier ITP analyses of the background expansion, where the kernel width preferred by expansion and growth data is on gigayear scales. The two results probe different regimes:

- the present kernel is measured on fixed domains and reflects nonlinear relaxation and bulk flows in the cosmic web;

- the phenomenological kernel emerges from fitting the integrated expansion history on horizon scales.

A natural interpretation is that cosmological memory is scale dependent. On cluster and supercluster scales, structure formation imprints a short, negative kernel with a timescale comparable to the dynamical time of the environment. On horizon scales, the effective kernel seen by the background expansion can be understood as the cumulative imprint of many such local relaxation events over cosmic time and across a hierarchy of scales. The structural source decorrelates rapidly within each domain, but the background “remembers” the integrated history of the drag, leading to a slow, non-Markovian drift in the effective Friedmann law.

The minimal TNG50-1 repeat reinforces this picture. In a box with a much smaller volume but substantially higher mass resolution, the best-fit kernel again emerges with a negative amplitude and a sub-Gyr memory horizon. The precise value of the integrated drag depends on the chosen normalisation of the structural source in the smaller box, but the sign and characteristic timescale are robust. Together with the TNG300-1 result, this supports the interpretation of virialisation as an effective viscosity that damps local expansion, and suggests that the short-memory kernels in individual domains may sum coherently to the longer effective memory horizons previously inferred on cosmological scales.

The SDSS-TNG structural comparison supports the same picture. In TNG domains the source probes the potential depth directly, and the strong scaling with mass and the tight correlation identify the regime where backreaction is sourced. In SDSS the same structural variable exists but sits in a tracer regime with a shallow scaling and modest correlation. This is exactly what is required if $\sigma^2$ is to serve both as an observable structural degree of freedom in the data and as a dynamical source for the backreaction term in the simulations.

From the perspective of the ITP framework, the present result closes part of the conceptual loop. The non-Markovian closure used in Eq. (3) was originally motivated on general grounds: open systems with unresolved degrees of freedom generically obey equations with memory kernels. The phenomenological ITP analyses showed that a negative, long-range kernel can ease the $H_0$ tension by acting as a cosmic drag on the expansion. The TNG300 measurement demonstrates that, on smaller scales, virialising structure does indeed feed an effective drag term into the coarse-grained expansion, with a kernel that is negative and causal. The discrepancy in timescales is a feature of the hierarchy of coarse-graining scales, not a failure of the idea. In all cases studied so far the fitted kernel amplitude is negative, $A < 0$, from the phenomenological horizon-scale kernel down to the TNG300 and TNG50 simulation boxes. This sign is invariant across scale and resolution and supports the physical picture that virialisation acts as an effective viscosity: non-linear structure formation behaves as an energy sink that drags on the local expansion rather than driving it.

6.1. Scope and Limitations of the Present Derivation

The kernel extraction in this work is deliberately narrow in scope. It is based on a single hydrodynamical simulation, TNG300-1, with fixed cosmological parameters and subgrid physics. The coarse graining is performed on a single comoving scale, using a regular partition of the box into domains of fixed linear size. The effective expansion rate in each domain is estimated from the velocity field in the Newtonian simulation frame, and the backreaction proxy is defined as the deviation of the domain-averaged expansion rate from the Friedmann prediction of the input cosmology.

These choices are made for a practical reason. The domain size is large enough to smooth over the internal structure of individual haloes and groups, while still small enough to feel the nonlinear growth of the surrounding cosmic web. The TNG300-1 volume and snapshot cadence (ten outputs) allow a first, controlled attempt at measuring a retarded response between a structural source and an effective backreaction in a realistic structure formation setting.

At the same time, several limitations should be kept in mind. First, the inferred kernel parameters are conditional on the TNG300-1 implementation of gravity, hydrodynamics, and feedback. Different subgrid models, resolutions, or cosmologies may shift the recovered amplitude and timescale. Second, only one coarse-graining scale is explored. The backreaction literature and simple dimensional arguments suggest that the effective kernel should depend on scale; a multi-scale analysis, including larger and smaller domains, is left to future work. Third, the estimator for the domain expansion rate is Newtonian and kinematic. A fully relativistic spacetime averaging scheme (e.g., [10]) is not implemented here. On the scales and redshifts considered here, the Newtonian description underlying TNG300-1 is widely used and expected to be accurate at the few per cent level, but this remains an approximation.

In this sense the present derivation should be read as a proof-of-concept measurement of a cosmological memory kernel in a realistic simulation, not as a final, parameter-precise determination. A robust programme would repeat the analysis across different simulations and coarse-graining scales, and would embed the kernel in a fully relativistic averaging framework. This first step establishes that a causal, negative, short-timescale kernel does exist in a standard simulation, and that it behaves as a viscosity-like drag on the effective expansion of coarse-grained domains.

6.2. Scale Hierarchy of Memory: From Local Viscosity to Global Horizons

The kernel extracted from TNG300-1 has two striking features. Its amplitude is negative, so virialisation and bulk flows behave as a viscosity-like drag on the effective expansion of coarse-grained domains. Its characteristic timescale is short, so the 90% memory horizon of the domain-scale kernel is of order one gigayear. At first sight this appears to sit uneasily beside the much longer memory horizons inferred in global ITP fits to late-time expansion and growth data [2]. In that global analysis the kernel acts as a very slow, integrated response of the background to its own past.
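The link between a relaxation timescale and a 90% memory horizon can be made explicit for an exponential kernel. The sketch below assumes that the horizon is defined as the lag enclosing a given fraction of the integrated kernel weight, which for K ∝ exp(−Δt/τ) gives t = −τ ln(1 − f), or about 2.30 τ for f = 0.9. The function name and the example timescale are hypothetical, and the operational definition used in the companion analysis may differ in detail.

```python
import math

def memory_horizon(tau, fraction=0.9):
    """Lag enclosing `fraction` of the integrated weight of exp(-dt / tau).

    Assumes the horizon is defined through the cumulative kernel weight,
    1 - exp(-t / tau) = fraction.
    """
    if not 0.0 < fraction < 1.0:
        raise ValueError("fraction must lie strictly between 0 and 1")
    return -tau * math.log(1.0 - fraction)

# Hypothetical example: a 0.4 Gyr relaxation time.
horizon_gyr = memory_horizon(0.4)
```

Under this definition a sub-Gyr relaxation time maps onto a horizon of order one gigayear, since the conversion factor is ln 10.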

This tension is only apparent. The TNG300-1 result probes a single coarse-graining scale in a finite volume, and it isolates the response of an effective domain expansion rate to a local structural source on that scale. The global ITP kernel, by contrast, is an effective summary of many hierarchical processes acting across a wide range of scales and times: halo formation, group relaxation, cluster assembly, and the dynamical coupling between the nonlinear cosmic web and the homogeneous background. In a multi-scale system of this kind, it is natural for short local relaxation processes to accumulate into a long global response. Many independent, or weakly coupled, Gyr-scale relaxation events can sum to a broad, heavy-tailed effective kernel when viewed from the perspective of the smoothed background.

Within this picture the negative, short-timescale kernel measured here plays the role of a local “viscosity” in the ITP hierarchy. It shows that coarse-grained virialisation does in fact produce a contractive, retarded response with the same sign and qualitative role as the memory channel invoked in the global closure. The long memory horizons inferred in the global fits should not be read as literally single-scale relaxation times. They summarise the net effect of many local drag processes, acting over cosmological time in a structured universe. Deriving that full hierarchy from simulations would require a programme that measures kernels across several domain sizes, combines volumes beyond the one analysed here, and follows the evolution of the cosmic web over a wider redshift range. This first measurement establishes the local building block: virialisation behaves as viscosity at the domain scale.

Outlook: Coupling the Kernel to Einstein–Boltzmann Solvers

In this work the non–Markovian kernel is calibrated directly on simulation boxes. The next step is to propagate the same closure through the full cosmological observable pipeline. This can be done by promoting the background expansion to its kernel-corrected form and feeding the modified expansion history into an Einstein–Boltzmann solver such as CLASS [11] or CAMB [12].

A convenient strategy is to reinterpret the drag contribution as an effective fluid with its own effective density. Given a tabulated kernel and source history (from TNG300-1 or TNG50-1), one can precompute the drag contribution on a fine grid in scale factor, convert this to the effective density, and then infer an effective equation–of–state parameter from the discretised continuity equation.
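The effective-fluid step can be sketched numerically. The example below is an assumption-laden illustration and not the paper's own definition: it takes the effective density to be the excess of the modified Friedmann equation over a reference one, in units of 3/(8πG), and it uses the standard continuity relation w = −1 − (1/3) d ln ρ / d ln a evaluated by finite differences. The function name, the grid, and the toy drag term are hypothetical.

```python
import numpy as np

def effective_fluid(a, h_mod, h_ref):
    """Effective density and equation of state from two tabulated expansion rates.

    Assumes rho_eff = h_mod**2 - h_ref**2 (units of 3 / (8 pi G)) and
    w = -1 - (1/3) * dln(rho_eff)/dln(a). The ratio is well defined for a
    negative (drag-like) density, but not where rho_eff crosses zero.
    """
    a = np.asarray(a, dtype=float)
    rho = np.asarray(h_mod, dtype=float) ** 2 - np.asarray(h_ref, dtype=float) ** 2
    dlnrho_dlna = np.gradient(rho, np.log(a)) / rho
    w = -1.0 - dlnrho_dlna / 3.0
    return rho, w

# Toy check: a negative component scaling as a**(-3 * (1 + w0)) with w0 = -0.8.
a = np.geomspace(0.1, 1.0, 2001)
h_ref = np.sqrt(0.3 * a ** -3 + 0.7)           # reference expansion, H0 = 1
rho_toy = -0.05 * a ** (-3.0 * (1.0 - 0.8))    # hypothetical drag-like density
h_mod = np.sqrt(h_ref ** 2 + rho_toy)
rho_eff, w_eff = effective_fluid(a, h_mod, h_ref)
```

Working with the derivative of ρ divided by ρ, instead of the logarithm of ρ, keeps the estimate usable when the drag makes the effective density negative.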

Modern Boltzmann codes [11, 12] allow for a fluid with an arbitrary equation of state or, equivalently, for a direct tabulation of the background expansion. In CLASS, for example, the effective density and equation of state can be supplied as an additional dark–energy component, or one can bypass the fluid description and inject the modified expansion rate from Eq. (33) through the background_external_h interface. A similar strategy applies to CAMB via its general dark–energy module.

A full parameter estimation exercise, in which the kernel parameters are sampled jointly with the standard cosmological parameters against CMB, BAO, and supernova datasets, is beyond the scope of this simulation–focused paper. The calibration presented here, together with the simple exponential kernel, is however explicitly designed to make this next step straightforward: once the kernel amplitude and timescale are fixed from simulations, the time–domain integral in Eq. (33) can be evaluated cheaply and tabulated, enabling a direct test of the ITP “information drag” in Einstein–Boltzmann codes.
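One reason an exponential kernel is cheap to tabulate is that its convolution obeys a one-step recursion, so the full history never needs to be re-summed. The sketch below assumes the normalisation K(Δt) = (A/τ) exp(−Δt/τ), for which the memory integral M satisfies the relaxation equation τ dM/dt = −M + A S, and it assumes a source that is linear between grid points. It is an illustration with hypothetical names and does not reproduce Eq. (33) itself.

```python
import numpy as np

def memory_integral(t, source, amp, tau):
    """Recursive evaluation of M(t) = int_0^t (amp/tau) exp(-(t - s)/tau) S(s) ds.

    Each step decays the previous value and adds the exact contribution of a
    source that is linearly interpolated across the step.
    """
    t = np.asarray(t, dtype=float)
    s = np.asarray(source, dtype=float)
    m = np.zeros_like(t)
    for n in range(len(t) - 1):
        x = (t[n + 1] - t[n]) / tau
        decay = np.exp(-x)
        gain = -np.expm1(-x)            # 1 - exp(-x), accurate for small x
        slope_weight = 1.0 - gain / x   # weight of the linear ramp in the source
        m[n + 1] = decay * m[n] + amp * (s[n] * gain + (s[n + 1] - s[n]) * slope_weight)
    return m

# Check against the closed form for S(t) = t with hypothetical parameters.
t = np.linspace(0.0, 5.0, 11)
m_rec = memory_integral(t, t, amp=-0.3, tau=0.4)
m_exact = -0.3 * (t - 0.4 * (1.0 - np.exp(-t / 0.4)))
```

The cost is linear in the number of grid points, against quadratic for a direct discrete convolution, which matters when the integral is re-evaluated inside a sampler.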

A hierarchy of memory timescales

Taken together, the phenomenological horizon-scale kernel of the ITP model, the TNG300-1 domain analysis, and the new TNG50-1 check point to a simple hierarchy of memory. On small, galaxy–group scales (TNG50) the effective kernel has a fast clock, tracing rapid virialisation in dense environments. On intermediate, supercluster scales (TNG300) the clock slows, as the relevant density field is smoother and flows are more coherent. On horizon scales, the phenomenological information-drag kernel inferred in previous ITP work corresponds to an even slower, integrated response with a correspondingly longer effective memory horizon. In this sense the cosmological memory kernel is best viewed as a spectrum: fast “local” modes associated with the non-linear cosmic web, slower modes associated with large-scale structure, and a very slow mode that encodes the cumulative backreaction of all of these on the background expansion.
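The notion of a kernel spectrum can be illustrated with a toy mixture of exponentials. The sketch below is purely schematic: it assumes a kernel built from a few exponential modes with positive fractional weights (the common negative sign factored out), and it finds the lag enclosing 90% of the total weight by bisection. The timescales and weights are hypothetical placeholders and are not the fitted TNG50, TNG300, or horizon-scale values.

```python
import math

def mixture_horizon(weights, taus, fraction=0.9):
    """Lag enclosing `fraction` of the weight of sum_i (w_i / tau_i) exp(-t / tau_i).

    Assumes positive weights, so the enclosed weight grows monotonically with lag.
    """
    total = sum(weights)

    def enclosed(t):
        return sum(w * (1.0 - math.exp(-t / tau)) for w, tau in zip(weights, taus)) / total

    lo, hi = 0.0, max(taus)
    while enclosed(hi) < fraction:   # expand the bracket until it contains the root
        hi *= 2.0
    for _ in range(200):             # plain bisection
        mid = 0.5 * (lo + hi)
        if enclosed(mid) < fraction:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

# Hypothetical three-mode spectrum (Gyr): fast, intermediate, and slow clocks.
taus = [0.1, 0.4, 3.0]
single = mixture_horizon([1.0], [0.4])
mixed = mixture_horizon([1.0, 1.0, 1.0], taus)
```

In this toy example the horizon of the mixture is set mainly by the slowest mode carrying appreciable weight, which is the sense in which a broad spectrum yields a longer effective memory than any fast component alone.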

7. Conclusions

This paper has derived an explicit cosmological memory kernel from a realistic structure formation simulation and placed it within the broader Infinite Transformation Principle programme. By coarse-graining the TNG300-1 volume into domains and tracking the relation between a structural source and a backreaction proxy, an exponential kernel has been fitted via a discrete Volterra convolution. The inferred kernel is negative, corresponding to a viscosity-like drag of virialisation and bulk flows on the effective expansion of coarse-grained domains, and it has a short relaxation timescale, giving a 90% memory horizon of order one gigayear at the domain scale.

Several points follow from this result.

First, the existence of a finite, negative memory kernel in a standard hydrodynamical simulation addresses a central conceptual criticism of the ITP framework. In the purely phenomenological implementation [1, 2], the memory kernel is introduced as an effective closure motivated by coarse-graining, but not derived from an explicit structural calculation. The TNG300-1 analysis shows that, once nonlinear structure is evolved self-consistently, virialisation and bulk flows do in fact generate a causal, viscosity-like kernel linking an effective expansion rate to a structural source. The sign and qualitative role of this kernel match the “information drag” channel invoked in the global ITP closure. A minimal cross–check on the higher–resolution TNG50-1 simulation recovers a kernel with the same sign and a comparable sub–Gyr memory horizon, indicating that the virialisation–induced drag is not an artefact of a single simulation box.

Second, the short domain-scale memory horizon found here is not in conflict with the long operational horizons inferred in late-time data fits [2]. The TNG300-1 kernel is measured on a single coarse-graining scale in a finite box and probes the response of an effective domain expansion rate to local structural dynamics. The global memory horizon constrained from late-time expansion and growth compilations summarizes the net effect of many such local drag processes acting across a hierarchy of scales and over cosmological time. In a multi-scale system, the accumulation of short, local relaxation events into a broad, slow effective response is natural. The present result should therefore be read as identifying the local building block of the global kernel, rather than as a competing timescale.

Third, the derivation tightens the conceptual link between the auxiliary-field implementation of ITP and the physics of structure formation. In the ITP formulation, an auxiliary memory field obeys a relaxation equation whose retarded Green function is an exponential kernel [1]. The kernel measured here from TNG300-1 has precisely that form. It can be interpreted as an empirical determination of the auxiliary-field Green function at the domain scale, with the structural source playing the role of the driving term. This supports the use of an exponential kernel in the infinite-memory analysis, and it clarifies how the phenomenological parameters in the late-time fits relate to coarse-grained quantities in simulations.

Fourth, the TNG300-1 analysis highlights the need for a scale hierarchy of memory. A complete programme would measure kernels at several domain sizes, combine multiple simulations with different volumes and subgrid models, and embed the results in a relativistic averaging framework. On the observational side, the comparison with SDSS DR8 demonstrates that present group catalogues occupy a tracer regime, while the TNG domains probe a backreaction regime capable of sourcing a viscosity-like kernel. Designing an observational analogue of the simulation analysis, for example by combining group catalogues, peculiar-velocity surveys, and redshift-space distortions, would allow a direct empirical test of the kernel derived here.

Taken together with the ITP parameter-space formulation [1] and the global infinite-memory constraints [2], the present work completes a three-step picture. The ITP framework specifies how a memory sector enters the background closure through an auxiliary field. The infinite-memory analysis constrains the large-scale kernel from late-time data and shows that a long operational memory horizon and a lower preferred Hubble constant are compatible with current observations. The TNG300-1 derivation identifies a negative, short-timescale kernel generated by virialisation and bulk flows at the domain scale, providing a structural origin for the information-drag channel used in the global closure. The result is a coherent non-Markovian cosmology in which local viscosity and global memory are two faces of the same coarse-grained process.

Future work can proceed in three directions. On the simulation side, multi-scale and multi-simulation analyses can map out the hierarchy of kernels across volume, resolution, and subgrid physics, and explore extended kernel families beyond a single exponential. On the theoretical side, the simulation-derived kernel can be embedded in a relativistic averaging scheme and in the full ITP action, clarifying how the memory sector interacts with curvature and perturbations. On the observational side, parameterised versions of the TNG-derived kernel can be incorporated into Boltzmann solvers and confronted directly with expansion, growth, and lensing data, closing the loop between local virialisation, global information drag, and the late-time universe inferred from observations.

Funding

This research received no external funding.

Data Availability Statement

The domain–level catalogues, structural–source time series, and analysis scripts used in this work are available in the public GitHub repository https://github.com/Atalebe/itp_memory_kernel. The repository includes all configuration files, processed TNG300–1 domain tables, SDSS DR8 structural–source catalogues, and plotting scripts required to reproduce the figures and kernel fits presented here. The underlying TNG300–1 simulation data are part of the IllustrisTNG public data release and can be obtained from the IllustrisTNG data portal. Only derived products (domain averages and time series) are redistributed in the GitHub repository. In addition, the minimal TNG50-1 robustness run is provided as the file tng50_timeseries_minimal.csv in the same repository, together with the scripts tng50_build_timeseries_minimal_v1.py and fit_tng50_kernel_minimal_v1.py. The SDSS DR8 galaxy and group data used to construct the observational structural–source catalogue are drawn from the publicly available SDSS Data Release 8 products [9]. The value–added catalogue used here is provided in processed form (sdss_group_structural_source.csv) in the GitHub repository, together with the scripts that generate it from the original SDSS tables.

Acknowledgments

This work made use of the public IllustrisTNG simulations and SDSS DR8 survey data. The author thanks the IllustrisTNG and SDSS collaborations for making their data products publicly available, and colleagues whose comments helped to clarify the scale dependence of the memory kernel.

Conflicts of Interest

The author declares no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

References

- Atalebe, S. The Infinite Transformation Principle: A Non–Markovian Closure for Cosmological Expansion. Preprint 2025.

- Atalebe, S. Infinite Memory Cosmology: Information Drag and the Late–Time Expansion History. Preprint 2025.

- Pillepich, A.; Springel, V.; Nelson, D.; Hernquist, L.; Naiman, J.; Pakmor, R.; et al. Simulating galaxy formation with the IllustrisTNG model. Monthly Notices of the Royal Astronomical Society 2018, 475, 648–675.

- Springel, V.; Pakmor, R.; Pillepich, A.; Weinberger, R.; Nelson, D.; Hernquist, L.; et al. First results from the IllustrisTNG simulations: matter and galaxy clustering. Monthly Notices of the Royal Astronomical Society 2018, 475, 676–698.

- Nelson, D.; Pillepich, A.; Springel, V.; Weinberger, R.; Pakmor, R.; Hernquist, L.; et al. The IllustrisTNG simulations: Public data release. Computational Astrophysics and Cosmology 2019, 6.

- Planck Collaboration; Ade, P.A.R.; Aghanim, N.; et al. Planck 2015 results. XIII. Cosmological parameters. Astronomy & Astrophysics 2016, 594, A13.

- Mori, H. Transport, collective motion, and Brownian motion. Progress of Theoretical Physics 1965, 33, 423–455.

- Zwanzig, R. Nonlinear generalized Langevin equations. Journal of Statistical Physics 1973, 9, 215–220.

- Aihara, H.; Allende Prieto, C.; An, D.; et al. The Eighth Data Release of the Sloan Digital Sky Survey: First Data from SDSS-III. The Astrophysical Journal Supplement Series 2011, 193, 29.

- Buchert, T. On average properties of inhomogeneous fluids in general relativity: Dust cosmologies. General Relativity and Gravitation 2000, 32, 105–125.

- Blas, D.; Lesgourgues, J.; Tram, T. The Cosmic Linear Anisotropy Solving System (CLASS). Part II: Approximation schemes. Journal of Cosmology and Astroparticle Physics 2011, 2011, 034.

- Lewis, A.; Challinor, A.; Lasenby, A. Efficient computation of CMB anisotropies in closed FRW models. The Astrophysical Journal 2000, 538, 473–476.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).