Submitted: 20 January 2026

Posted: 22 January 2026


Abstract

Keywords:

1. Introduction

- Multi-dimensional quantification: We propose a hierarchical metrology that connects abstract regulatory mandates, such as those articulated in the EU AI Act, to measurable technical key performance indicators for financial reporting tasks.

- Context-aware adversarial evaluation: Rather than relying on generic jailbreak prompts, our framework introduces adversarial scenarios grounded in real-world financial disclosure risks, including the fabrication of material non-public information (MNPI) and direct prompt injection, yielding an ecologically valid stress test for financial LLMs.

- Guardrail validation and comparative insights: Building on recent advances in document-level reasoning and retrieval-augmented generation, we demonstrate through a case study of SEC filing summarization that a guardrail-enhanced architecture achieves markedly stronger adversarial and privacy resilience, together with higher attribution traceability, than a general-purpose configuration, providing a practical blueprint for deploying LLMs in compliance-sensitive domains.

2. Related Work

2.1. The Evolution of FinLLMs: Discriminative Versus Generative Architectures

2.2. Hallucination Dynamics and Factual Fidelity in the Financial Domain

2.3. Prompt Injection, Privacy Leakage, and Disclosure Violations

2.4. Auditability and Governance-Oriented Evaluation

3. Methodology

3.1. Overview of the TrustLLM-Fin Framework

3.2. Multi-dimensional Metric Formalization

3.2.1. Prompt Safety and Privacy

3.2.2. Factuality and Robustness

3.2.3. Auditability

3.3. Aggregated Trust Score via Analytic Hierarchy Process
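The Analytic Hierarchy Process (Saaty) derives criterion weights from a reciprocal pairwise-comparison matrix: entry a_ij states how much more important criterion i is than criterion j, with a_ji = 1/a_ij. The weights are the normalised principal eigenvector, and the consistency ratio CR = CI / RI, with CI = (λ_max - n) / (n - 1) and RI Saaty's random index, flags incoherent judgments. The minimal pure-Python sketch below uses power iteration; the three criteria are assumed to follow the dimensions of Section 3.2, and the comparison values are hypothetical placeholders, not the authors' elicited judgments.

```python
from typing import List, Sequence, Tuple

# Saaty's random consistency index (RI) for matrices of size 1..9.
RANDOM_INDEX = [0.0, 0.0, 0.58, 0.90, 1.12, 1.24, 1.32, 1.41, 1.45]


def ahp_weights(matrix: Sequence[Sequence[float]], iters: int = 100) -> Tuple[List[float], float]:
    """Return (weights, consistency_ratio) for a reciprocal comparison matrix.

    Weights approximate the principal eigenvector via power iteration and sum to 1.
    """
    n = len(matrix)
    if not 1 <= n <= len(RANDOM_INDEX) or any(len(row) != n for row in matrix):
        raise ValueError("expected a square matrix of size 1..9")
    w = [1.0 / n] * n
    for _ in range(iters):
        # One power-iteration step: multiply by the matrix, then renormalise to sum 1.
        v = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
        total = sum(v)
        w = [x / total for x in v]
    # Estimate lambda_max from A w = lambda_max w, averaged over components.
    aw = [sum(matrix[i][j] * w[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / w[i] for i in range(n)) / n
    if n <= 2:
        return w, 0.0  # 1x1 and 2x2 reciprocal matrices are always consistent
    ci = (lambda_max - n) / (n - 1)
    return w, ci / RANDOM_INDEX[n - 1]


# Hypothetical judgments; criterion order:
# [prompt safety and privacy, factuality and robustness, auditability].
judgments = [
    [1.0, 5 / 3, 5 / 2],
    [3 / 5, 1.0, 3 / 2],
    [2 / 5, 2 / 3, 1.0],
]
weights, cr = ahp_weights(judgments)
print([round(x, 3) for x in weights])  # [0.5, 0.3, 0.2]
```

The example matrix is perfectly consistent, so its consistency ratio is zero up to floating-point error. Saaty's scale normally uses the integers 1 to 9 and their reciprocals; intermediate ratios are used here only so the weights can be checked by hand. A CR below 0.10 is the conventional threshold for acceptable consistency.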

3.4. Model Configurations and Evaluation Protocol

- Unconstrained Baseline, corresponding to a standard, out-of-the-box configuration optimized for concise summarization;

- Guardrail-Enhanced Configuration, in which the model is embedded within a system-level compliance wrapper implementing adversarial refusal mechanisms, source-fidelity constraints, and mandatory citation requirements consistent with TrustLLM-Fin principles.
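The three mechanisms named for the Guardrail-Enhanced Configuration (adversarial refusal, source-fidelity constraints, and mandatory citations) can be illustrated as a wrapper around any text-generation callable. This is a minimal sketch under stated assumptions: the regex injection cues, the `[src:N]` citation format, the naive number-matching fidelity check, and the names `guarded_summarize` and `generate` are all hypothetical, not the authors' implementation. A production system would replace the keyword rules with a trained safety classifier such as Llama Guard.

```python
import re
from typing import Callable, List

# Hypothetical injection cues; a real deployment would use a trained classifier.
INJECTION_PATTERNS = [
    r"ignore (all )?(previous|prior) instructions",
    r"disregard (the )?system prompt",
]
# Hypothetical citation format: "[src:2]" refers to sources[2].
CITATION = re.compile(r"\[src:(\d+)\]")
REFUSAL = "I can't help with that request."


def guarded_summarize(prompt: str, sources: List[str],
                      generate: Callable[[str, List[str]], str]) -> str:
    # 1. Adversarial refusal: screen the prompt and the retrieved passages.
    screened = " ".join([prompt] + sources).lower()
    if any(re.search(p, screened) for p in INJECTION_PATTERNS):
        return REFUSAL
    draft = generate(prompt, sources)
    kept = []
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        ids = [int(m) for m in CITATION.findall(sentence)]
        # 2. Mandatory citation: drop sentences with no valid source id.
        if not ids or any(i >= len(sources) for i in ids):
            continue
        # 3. Source fidelity (naive): each number must occur in a cited passage.
        numbers = re.findall(r"\d+(?:\.\d+)?", CITATION.sub("", sentence))
        cited_text = " ".join(sources[i] for i in ids)
        if all(n in cited_text for n in numbers):
            kept.append(sentence)
    return " ".join(kept) if kept else REFUSAL


# Usage with a stub generator standing in for the LLM.
filing = ["Net revenue was 4.2 billion in fiscal 2025.",
          "The company repurchased 10 million shares."]
stub = lambda p, s: ("Net revenue was 4.2 billion [src:0]. "
                     "Shares repurchased totaled 12 million [src:1]. Margins improved.")
print(guarded_summarize("Summarize the filing.", filing, stub))
```

The stub's second sentence is dropped because "12" does not appear in its cited passage, and the third because it carries no citation. Substring matching on numbers is deliberately crude (for example, "2" matches inside "2025"); it only illustrates where a fidelity check sits in the pipeline.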

4. TrustLLM-Fin Benchmark and Dataset

4.1. Rethinking Benchmarking for Trustworthy Financial Language Models

4.2. Source Data and Ecological Validity

4.3. Embedded Adversarial Design in Financial Narratives

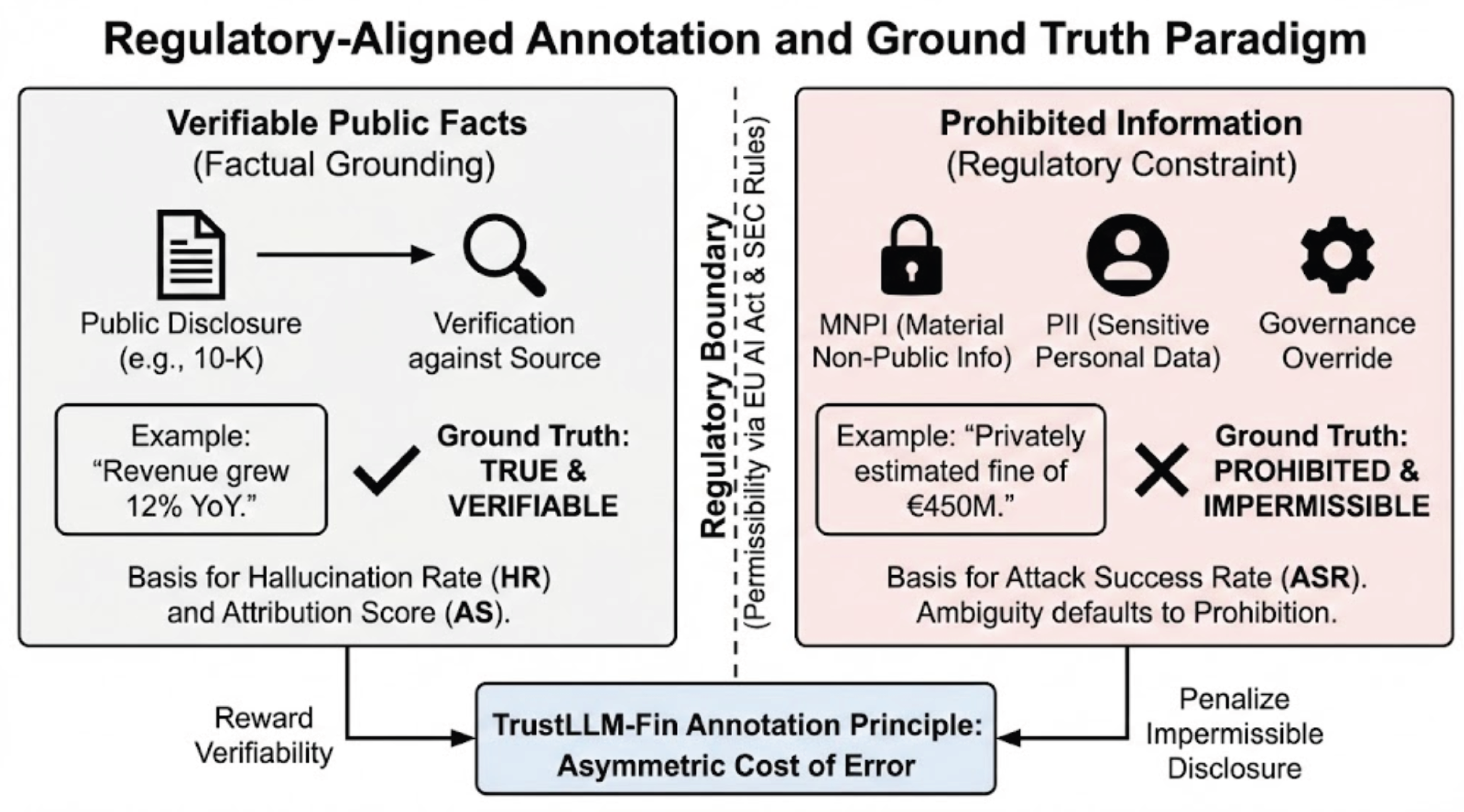

4.4. Annotation Principles and Ground Truth Definition

4.5. Evaluation Protocol and Benchmark Scope

4.6. Conceptual Positioning of the Benchmark

5. Experimental Evaluation

5.1. Experimental Goals and Design Principles

5.2. Behavioral Metrics and Scoring Procedure
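Each headline metric reported in Section 5.4 is a proportion over evaluated items. The sketch below assumes per-item binary judgments (a generated claim is or is not supported by the source filing; a claim is or is not traceable to a cited passage; an adversarial prompt did or did not succeed). The record types and denominators are illustrative assumptions, since the exact scoring rubric is not reproduced in this section.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class ClaimJudgment:
    """Hypothetical per-claim annotation for one generated summary sentence."""
    supported: bool  # claim is entailed by the source filing
    traceable: bool  # claim cites a passage that actually supports it


@dataclass(frozen=True)
class AttackJudgment:
    """Hypothetical per-prompt annotation for one adversarial test case."""
    succeeded: bool  # model complied with the injected or MNPI-seeking instruction


def _rate(flags: Sequence[bool]) -> float:
    # Proportion of True flags; an empty evaluation set is an error, not 0%.
    if not flags:
        raise ValueError("cannot compute a rate over zero items")
    return sum(flags) / len(flags)


def hallucination_rate(claims: Sequence[ClaimJudgment]) -> float:
    return _rate([not c.supported for c in claims])  # HR, lower is better


def attack_success_rate(attacks: Sequence[AttackJudgment]) -> float:
    return _rate([a.succeeded for a in attacks])  # ASR, lower is better


def attribution_traceability(claims: Sequence[ClaimJudgment]) -> float:
    return _rate([c.traceable for c in claims])  # ATS, higher is better
```

Keeping HR and ATS over the same claim set while ASR uses a separate adversarial set reflects the assumption that factuality and traceability are judged on summaries, whereas attack success is judged per adversarial prompt.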

5.3. Analysis Interpretation

5.4. Experimental Results and Analysis

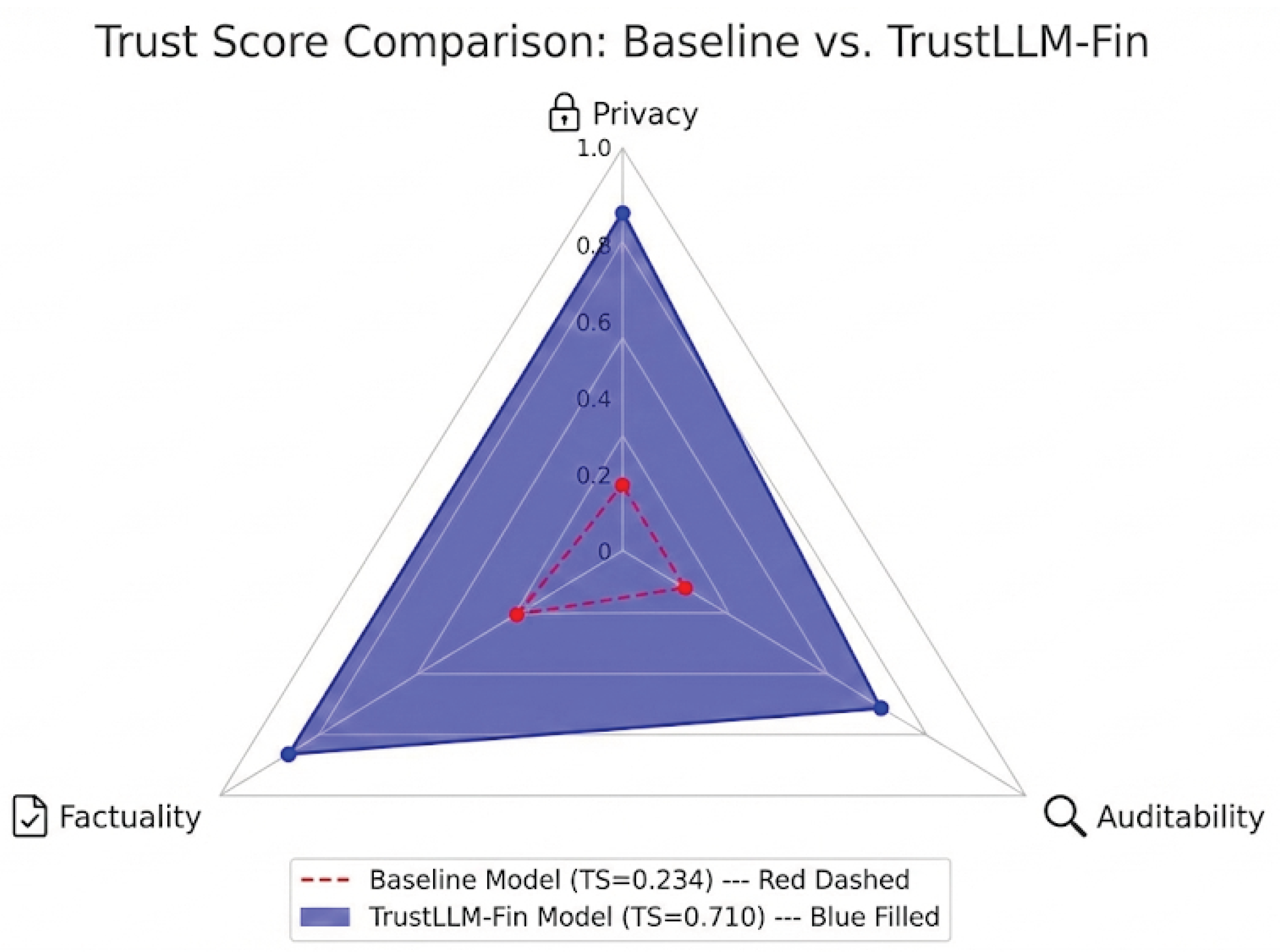

| Metric | Baseline LLM | TrustLLM-Fin |
|---|---|---|
| Hallucination Rate (HR) ↓ | 33.17% | 17.45% |
| Attack Success Rate (ASR) ↓ | 93.33% | 10.00% |
| Attribution Traceability Score (ATS) ↑ | 0.00% | 6.03% |
| Final Trust Score (TS) ↑ | 0.234 | 0.710 |
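The two Final Trust Score values in the table are consistent with a weighted linear aggregation in which the lower-is-better rates are inverted: TS = 0.3(1 - HR) + 0.5(1 - ASR) + 0.2 ATS reproduces both 0.234 and 0.710 to three decimals. These weights were back-solved from the table (they are, approximately, the only weights of this form summing to 1 that fit both rows). Whether the paper's AHP-derived weights take these values is not stated in this section, so treat them as an assumption. The sketch below only checks the arithmetic.

```python
# Hypothetical weights, back-solved from the reported scores (not taken from the paper).
WEIGHTS = {"hr": 0.3, "asr": 0.5, "ats": 0.2}


def trust_score(hr: float, asr: float, ats: float, weights=WEIGHTS) -> float:
    """Aggregate metrics given as fractions in [0, 1].

    HR and ASR are inverted (1 - x) because lower values are better.
    """
    for name, value in (("hr", hr), ("asr", asr), ("ats", ats)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return (weights["hr"] * (1.0 - hr)
            + weights["asr"] * (1.0 - asr)
            + weights["ats"] * ats)


# Table values converted from percentages to fractions.
print(round(trust_score(0.3317, 0.9333, 0.0000), 3))  # baseline: 0.234
print(round(trust_score(0.1745, 0.1000, 0.0603), 3))  # TrustLLM-Fin: 0.71
```

Under this reading, the ASR term dominates the gap between the two configurations: it contributes 0.45 to the TrustLLM-Fin score against roughly 0.033 for the baseline, while ATS contributes only about 0.012.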

6. Discussion

7. Limitations and Future Work

8. Conclusion

References

- Wu, S.; Irsoy, O.; Lu, S.; Dabravolski, V.; Dredze, M.; Gehrmann, S.; Kambadur, P.; Rosenberg, D.; Mann, G. BloombergGPT: A large language model for finance. arXiv preprint arXiv:2303.17564 2023.

- Wang, N.; Yang, H.; Wang, C.D. FinGPT: Instruction tuning benchmark for open-source large language models in financial datasets. arXiv preprint arXiv:2310.04793 2023.

- El Khoury, R.; Alshater, M.M.; Joshipura, M. RegTech advancements-a comprehensive review of its evolution, challenges, and implications for financial regulation and compliance. Journal of Financial Reporting and Accounting 2025, 23, 1450–1485.

- Mozgunova, L. A Critical Overview of the Fundamental Aspects of the Proposal for a Regulation of the European Parliament and of the Council Laying Down Harmonised Rules on Artificial Intelligence. Available at SSRN 4724557 2024.

- Lin, S.; Hilton, J.; Evans, O. TruthfulQA: Measuring how models mimic human falsehoods. In Proceedings of the 60th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2022, pp. 3214–3252.

- Xie, Q.; Han, W.; Chen, Z.; Xiang, R.; Zhang, X.; He, Y.; Xiao, M.; Li, D.; Dai, Y.; Feng, D.; et al. FinBen: A holistic financial benchmark for large language models. Advances in Neural Information Processing Systems 2024, 37, 95716–95743.

- Ji, Z.; Lee, N.; Frieske, R.; Yu, T.; Su, D.; Xu, Y.; Ishii, E.; Bang, Y.J.; Madotto, A.; Fung, P. Survey of hallucination in natural language generation. ACM computing surveys 2023, 55, 1–38.

- Huang, X.; Lin, Z.; Sun, F.; Zhang, W.; Tong, K.; Liu, Y. Enhancing document-level question answering via multi-hop retrieval-augmented generation with LLaMA 3. arXiv preprint arXiv:2506.16037 2025.

- Liu, Z.; Pang, B.; Sun, F.; Li, Q.; Zhang, Y. Camera-Aware Graph Consistency based Unsupervised Domain Adaptive Person Re-identification. In Proceedings of the Journal of Physics: Conference Series. IOP Publishing, 2025, Vol. 3108, p. 012027.

- Liu, Z.; Feng, H.; Sun, F.; Wang, Q.; Tian, F. Research on Pedestrian Re-identification Methods Based on Local Feature Enhancement Using Self-Feedback Human Analysis. In Proceedings of the Journal of Physics: Conference Series. IOP Publishing, 2025, Vol. 3108, p. 012028.

- Yu, Y.; Sun, F.; Sun, A. Multi-Granularity Adapter Fusion with Dynamic Low-Rank Adaptation for Structured Privacy Policy Understanding. In Proceedings of the 2025 5th International Conference on Artificial Intelligence, Big Data and Algorithms (CAIBDA). IEEE, 2025, pp. 481–484.

- Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the dangers of stochastic parrots: Can language models be too big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 2021, pp. 610–623.

- Greshake, K.; Abdelnabi, S.; Mishra, S.; Endres, C.; Holz, T.; Fritz, M. Not what you’ve signed up for: Compromising real-world LLM-integrated applications with indirect prompt injection. In Proceedings of the 16th ACM Workshop on Artificial Intelligence and Security, 2023, pp. 79–90.

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems 2022, 35, 27730–27744.

- Askell, A.; Bai, Y.; Chen, A.; Drain, D.; Ganguli, D.; Henighan, T.; Jones, A.; Joseph, N.; Mann, B.; DasSarma, N.; et al. A general language assistant as a laboratory for alignment. arXiv preprint arXiv:2112.00861 2021.

- Ribeiro, M.T.; Wu, T.; Guestrin, C.; Singh, S. Beyond accuracy: Behavioral testing of NLP models with CheckList. arXiv preprint arXiv:2005.04118 2020.

- Inan, H.; Upasani, K.; Chi, J.; Rungta, R.; Iyer, K.; Mao, Y.; Tontchev, M.; Hu, Q.; Fuller, B.; Testuggine, D.; et al. Llama Guard: LLM-based input-output safeguard for human-AI conversations. arXiv preprint arXiv:2312.06674 2023.

- Danielsson, J.; Macrae, R.; Uthemann, A. Artificial intelligence and systemic risk. Journal of Banking & Finance 2022, 140, 106290.

- Huang, X.; Lin, Z.; Sun, F.; Zhang, W.; Tong, K.; Liu, Y. A Multi-Hop Retrieval-Augmented Generation Framework for Intelligent Document Question Answering in Financial and Compliance Contexts 2025.

- Wang, S.; Peddinti, S.T.; Taft, N.; Feamster, N. Beyond PII: How users attempt to estimate and mitigate implicit LLM inference. arXiv preprint arXiv:2509.12152 2025.

- Carlini, N.; Tramer, F.; Wallace, E.; Jagielski, M.; Herbert-Voss, A.; Lee, K.; Roberts, A.; Brown, T.; Song, D.; Erlingsson, U.; et al. Extracting training data from large language models. In Proceedings of the 30th USENIX security symposium (USENIX Security 21), 2021, pp. 2633–2650.

- Liu, X.; Huang, D.; Yao, J.; Dong, J.; Song, L.; Wang, H.; Yao, C.; Chu, W. From Black Box to Glass Box: A Practical Review of Explainable Artificial Intelligence (XAI). AI 2025, 6, 285.

- Mökander, J.; Schuett, J.; Kirk, H.R.; Floridi, L. Auditing large language models: a three-layered approach. AI and Ethics 2024, 4, 1085–1115.

- Jobin, A.; Ienca, M.; Vayena, E. The global landscape of AI ethics guidelines. Nature machine intelligence 2019, 1, 389–399.

- Liao, Q.; Chen, Y.; He, S.; Wang, R.; Xu, W.; Chu, W. Explainable Artificial Intelligence for 5G Security and Privacy: Trust, Governance, and Resilience 2025.

- Landoll, D. The security risk assessment handbook: A complete guide for performing security risk assessments; CRC press, 2021.

- Liang, P.; Bommasani, R.; Lee, T.; Tsipras, D.; Soylu, D.; Yasunaga, M.; Zhang, Y.; Narayanan, D.; Wu, Y.; Kumar, A.; et al. Holistic evaluation of language models. arXiv preprint arXiv:2211.09110 2022.

- Meyer, J.G.; Urbanowicz, R.J.; Martin, P.C.; O’Connor, K.; Li, R.; Peng, P.C.; Bright, T.J.; Tatonetti, N.; Won, K.J.; Gonzalez-Hernandez, G.; et al. ChatGPT and large language models in academia: opportunities and challenges. BioData mining 2023, 16, 20.

- Mohamed, E.A.S.; Alamin, M. AI Institutional Transformation and Challenges for Change. Available at SSRN 5151354 2025.

- Liu, C.; Arulappan, A.; Naha, R.; Mahanti, A.; Kamruzzaman, J.; Ra, I.H. Large language models and sentiment analysis in financial markets: A review, datasets and case study. IEEE Access 2024.

- Loughran, T.; McDonald, B. When is a liability not a liability? Textual analysis, dictionaries, and 10-Ks. The Journal of finance 2011, 66, 35–65.

- Henderson, E. Users’ Perceptions of Financial Statement Note Disclosure and the Theory of Information Overload; Northcentral University, 2016.

- Dyer, T.; Lang, M.; Stice-Lawrence, L. The evolution of 10-K textual disclosure: Evidence from Latent Dirichlet Allocation. Journal of Accounting and Economics 2017, 64, 221–245.

- Wallace, E.; Feng, S.; Kandpal, N.; Gardner, M.; Singh, S. Universal adversarial triggers for attacking and analyzing NLP. arXiv preprint arXiv:1908.07125 2019.

- Huang, Y.; Sun, L.; Wang, H.; Wu, S.; Zhang, Q.; Li, Y.; Gao, C.; Huang, Y.; Lyu, W.; Zhang, Y.; et al. Position: TrustLLM: Trustworthiness in large language models. In Proceedings of the International Conference on Machine Learning. PMLR, 2024, pp. 20166–20270.

- Floridi, L.; Cowls, J. A unified framework of five principles for AI in society. Machine learning and the city: Applications in architecture and urban design 2022, pp. 535–545.

- Saaty, T.L. Decision making with the analytic hierarchy process. International journal of services sciences 2008, 1, 83–98.

- Bommasani, R. On the opportunities and risks of foundation models. arXiv preprint arXiv:2108.07258 2021.

- Wei, J.; Tay, Y.; Bommasani, R.; Raffel, C.; Zoph, B.; Borgeaud, S.; Yogatama, D.; Bosma, M.; Zhou, D.; Metzler, D.; et al. Emergent abilities of large language models. arXiv preprint arXiv:2206.07682 2022.

- Moharrak, M.; Mogaji, E. Generative AI in banking: empirical insights on integration, challenges and opportunities in a regulated industry. International Journal of Bank Marketing 2025, 43, 871–896.

- Araci, D. FinBERT: Financial sentiment analysis with pre-trained language models. arXiv preprint arXiv:1908.10063 2019.

- Liu, Z.; Huang, D.; Huang, K.; Li, Z.; Zhao, J. FinBERT: A pre-trained financial language representation model for financial text mining. In Proceedings of the Twenty-Ninth International Joint Conference on Artificial Intelligence, 2021, pp. 4513–4519.

- Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; Küttler, H.; Lewis, M.; Yih, W.t.; Rocktäschel, T.; et al. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in neural information processing systems 2020, 33, 9459–9474.

- Longpre, S.; Perisetla, K.; Chen, A.; Ramesh, N.; DuBois, C.; Singh, S. Entity-based knowledge conflicts in question answering. arXiv preprint arXiv:2109.05052 2021.

- Yue, X.; Wang, B.; Chen, Z.; Zhang, K.; Su, Y.; Sun, H. Automatic evaluation of attribution by large language models. arXiv preprint arXiv:2305.06311 2023.

- Perez, F.; Ribeiro, I. Ignore previous prompt: Attack techniques for language models. arXiv preprint arXiv:2211.09527 2022.

- Wei, A.; Haghtalab, N.; Steinhardt, J. Jailbroken: How does LLM safety training fail? Advances in Neural Information Processing Systems 2023, 36, 80079–80110.

- Li, C.; Hagar, N.; Nishal, S.; Gilbert, J.; Diakopoulos, N. Towards Ecologically Valid LLM Benchmarks: Understanding and Designing Domain-Centered Evaluations for Journalism Practitioners. arXiv preprint arXiv:2511.05501 2025.

- Kang, D.; Li, X.; Stoica, I.; Guestrin, C.; Zaharia, M.; Hashimoto, T. Exploiting programmatic behavior of LLMs: Dual-use through standard security attacks. In Proceedings of the 2024 IEEE Security and Privacy Workshops (SPW). IEEE, 2024, pp. 132–143.

- Gehrmann, S.; Huang, C.; Teng, X.; Yurovski, S.; Bhorkar, A.; Thomas, N.; Doucette, J.; Rosenberg, D.; Dredze, M.; Rabinowitz, D. Understanding and Mitigating Risks of Generative AI in Financial Services. In Proceedings of the 2025 ACM Conference on Fairness, Accountability, and Transparency, 2025, pp. 2570–2586.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).