Submitted: 24 December 2025

Posted: 25 December 2025


Abstract

Keywords:

1. Introduction

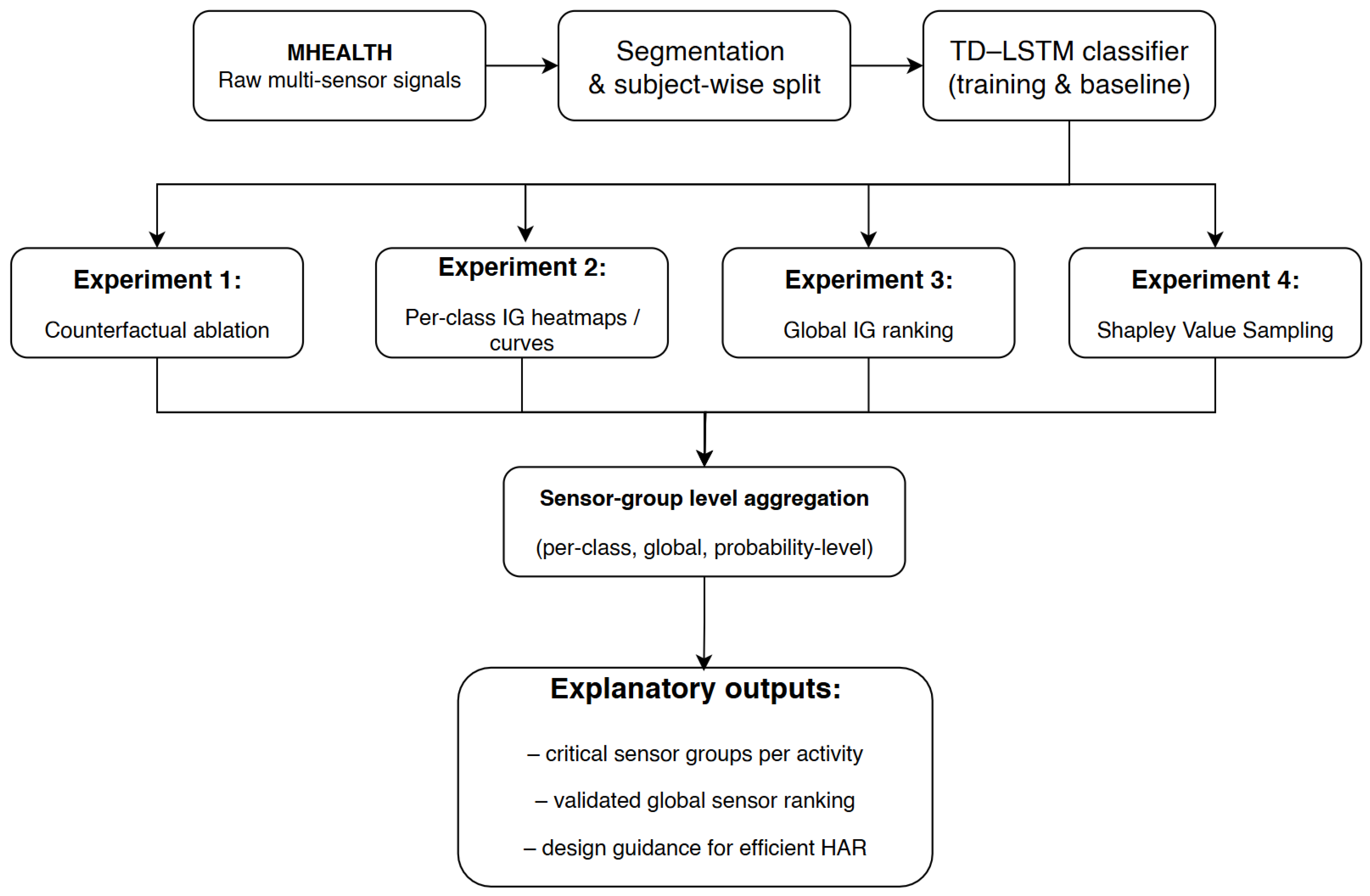

- Experiment 1: Multi-level counterfactual ablation. We remove each of the eight sensor groups in turn and quantify (i) global accuracy degradation, (ii) per-class accuracy changes, and (iii) probability shifts for correctly classified samples. This experiment provides causal evidence of sensor importance and reveals activity-specific and confidence-level effects.

- Experiment 2: Per-class Integrated Gradients attributions. We compute Integrated Gradients (IG) for all 12 activities, yielding (i) channel-level time–feature heatmaps and (ii) temporal sensor-group attribution curves. These visualisations expose which sensor groups and time segments drive predictions for each activity, enabling biomechanically grounded interpretation.

- Experiment 3: Global IG sensor-group ranking. We aggregate IG attributions across the test set to obtain a dataset-wide ranking of sensor groups, providing a global view of how the TD–LSTM distributes importance across modalities and body locations.

- Experiment 4: Shapley Value validation. We apply Shapley Value Sampling to derive an independent, model-agnostic global importance ranking. Comparing Shapley and IG rankings allows us to validate the stability of conclusions across gradient-based and game-theoretic perspectives.

- We introduce, to the best of our knowledge, the first multi-method XAI framework for wearable HAR that jointly employs counterfactual ablation, IG, and Shapley Value Sampling on a common TD–LSTM backbone. This design enables systematic cross-validation of explanations across complementary theoretical paradigms.

- We provide a sensor-group-level analysis that connects XAI outputs directly to hardware components. Our multi-level counterfactual study quantifies not only global accuracy drops but also per-class and probability-level degradation when individual sensor groups are removed, yielding actionable guidance for sensor selection and pruning.

- We deliver a comprehensive per-class interpretability study on the MHEALTH dataset, combining IG heatmaps and temporal sensor-group curves with global IG and Shapley rankings. The resulting, biomechanically plausible patterns support concrete design recommendations for resource-constrained HAR deployments and illustrate how multi-method XAI can bridge the gap between model internals and domain knowledge.
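
Experiments 2 and 3 both rest on Integrated Gradients, which attributes a prediction by integrating the model's gradient along a straight path from a baseline input to the actual input. The following is a minimal sketch of that computation, not the implementation applied to the TD–LSTM: the logistic toy model, its weights `w` and `b`, the input `x`, and the all-zero baseline are hypothetical, and only the step count of 50 follows the XAI configuration reported for this study. The last line checks the completeness property, i.e. that the attributions sum to the difference between the model output at the input and at the baseline.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def model(x, w, b):
    """Toy differentiable model: one logistic unit standing in for a class score."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def model_grad(x, w, b):
    """Analytic gradient of the toy model with respect to the input x."""
    p = model(x, w, b)
    return [p * (1.0 - p) * wi for wi in w]


def integrated_gradients(x, baseline, w, b, steps=50):
    """Midpoint Riemann approximation of IG along the straight path baseline -> x."""
    grad_sum = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [bi + alpha * (xi - bi) for xi, bi in zip(x, baseline)]
        grad = model_grad(point, w, b)
        grad_sum = [s + g for s, g in zip(grad_sum, grad)]
    # Scale the average gradient by the input-baseline difference per feature.
    return [(xi - bi) * s / steps for xi, bi, s in zip(x, baseline, grad_sum)]


# Hypothetical weights and input, chosen only for illustration.
w = [1.5, -2.0, 0.5]
b = 0.1
x = [0.8, -0.4, 1.2]
baseline = [0.0, 0.0, 0.0]

attr = integrated_gradients(x, baseline, w, b, steps=50)
# Completeness: attributions should sum to f(x) - f(baseline).
gap = abs(sum(attr) - (model(x, w, b) - model(baseline, w, b)))
```

For a deep model the analytic gradient would be replaced by automatic differentiation, and the per-channel attributions would then be summed over the channels of each sensor group to obtain group-level curves and rankings.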

2. Related Work

| Study | XAI Method(s) | Model | Dataset | Sensor Types | Classes | Analysis Level | Ablation | Per-Class |
|---|---|---|---|---|---|---|---|---|
| Harris et al. [38] | PCA + Ablation | SVM | Custom | IMU, sEMG | 4 | Sensor placement | ✓ | ✗ |
| Ronao & Cho [39] | None | CNN | WISDM | ACC | 6 | - | ✗ | ✗ |
| Yin et al. [5] | Attention weights | CNN-BiLSTM | UCI-HAR | ACC, GYRO | 6 | Feature-level | ✗ | ✗ |
| Khatun et al. [6] | Self-attention | CNN-LSTM | MHEALTH, UCI-HAR | ACC, GYRO, MAG | 12, 6 | Feature-level | ✗ | ✗ |
| Khan et al. [10] | LIME | Random Forest | Custom EEG | EEG | 4 | Feature-level | ✗ | ✗ |
| Arrotta et al. [18] | LIME, SHAP | Various ML | KU-HAR, PAMAP2 | ACC, GYRO | 18, 12 | Feature-level | ✓ | ✗ |
| Borella et al. [19] | SHAP | Ensemble | Custom IMU | IMU (5 locations) | Material handling | Sensor-level | ✗ | ✗ |
| Pellano et al. [20] | CAM, Grad-CAM | EfficientGCN | NTU-RGB+D | Skeleton | 60 | Spatial-temporal | ✗ | ✓ |
| Jeyakumar et al. [40] | Concept Bottleneck | CNN-LSTM | Custom | ACC, GYRO | 12 complex | Concept-level | ✗ | ✓ |
| Wang et al. [41] | Ablation study | Het-CNN | OPPORTUNITY, PAMAP2 | Multi-sensor | 18, 12 | Architecture | ✓ | ✗ |
| El-Adawi et al. [42] | None | DenseNet+GAF | MHEALTH | ACC, GYRO, MAG | 12 | - | ✗ | ✗ |
| Wei & Wang [17] | Attention weights | TCN-Attention | Custom | ACC, GYRO | 6 | Temporal | ✗ | ✗ |
| Vijayvargiya et al. [4] | LIME | LSTM | Custom sEMG | sEMG | Lower limb acts | Feature-level | ✗ | ✗ |
| Kim et al. [43] | Grad-CAM | CNN | Custom audio | Audio | 7 daily sounds | Spectrogram | ✗ | ✓ |
| Arrotta et al. [44] | LIME, LLM eval | Various | CASAS | Smart home | 10 | Event-level | ✗ | ✗ |
| Our Work | Ablation, IG, SHAP + Counterfactual | TD-LSTM + MaxPool | MHEALTH | ACC, ECG, GYRO, MAG (8 sensor groups) | 12 | Multi-level (Global, per-class, probability-level) | ✓ | ✓ |

3. Methodology

3.1. Dataset and Preprocessing

3.2. Model Architecture

3.3. Sensor Grouping for Interpretability

3.4. Counterfactual Sensor Ablation Analysis

3.5. Temporal Attributions with Integrated Gradients

3.6. Global Feature Importance via Shapley Value Sampling

3.7. Sensor-Level Attribution Aggregation

4. Results

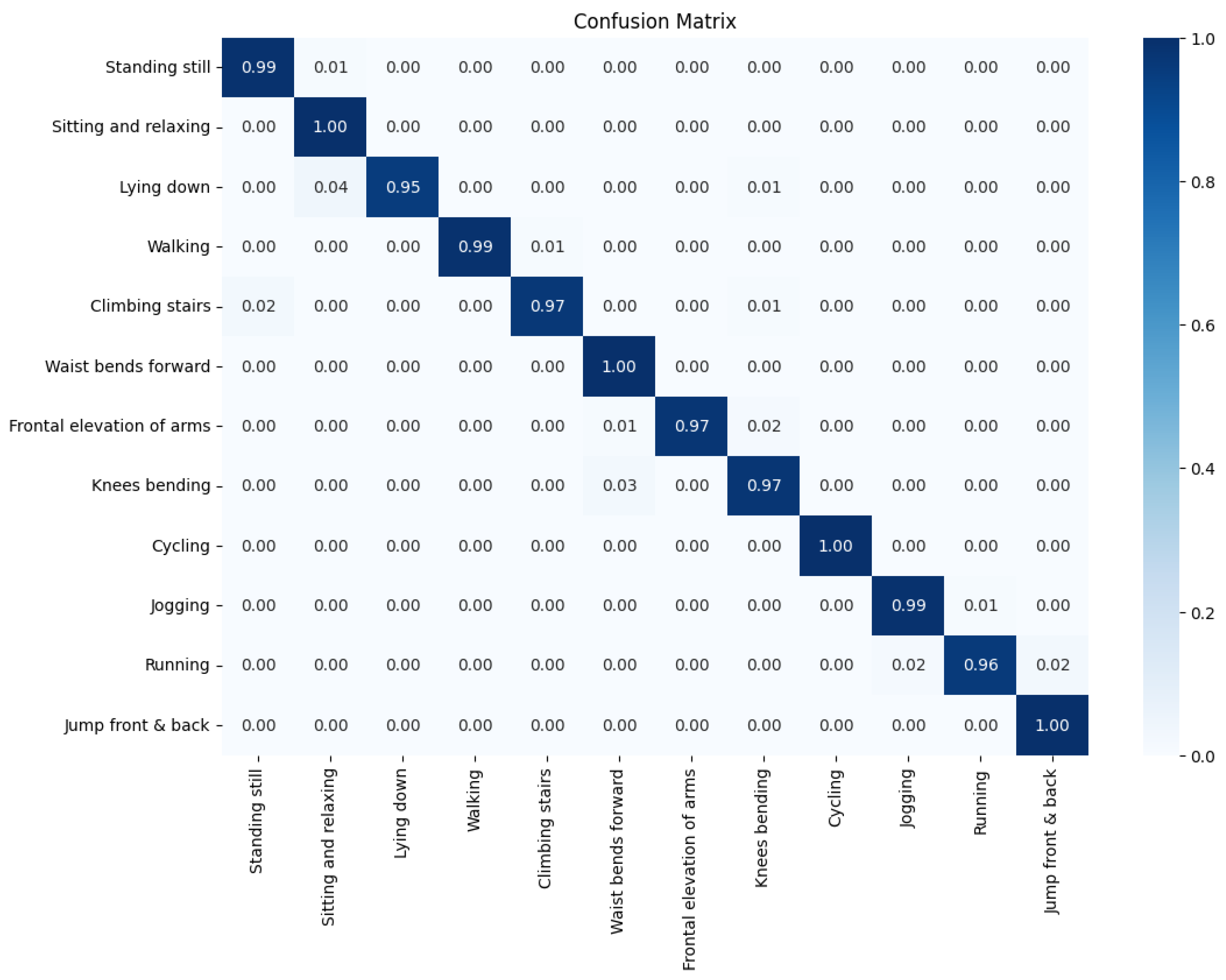

4.1. Baseline Classification Performance

4.2. Experiment 1: Counterfactual Sensor-Group Ablation

4.2.1. Global Impact of Removing Individual Sensor Groups

4.2.2. Class-Wise and Probability-Level Sensitivity

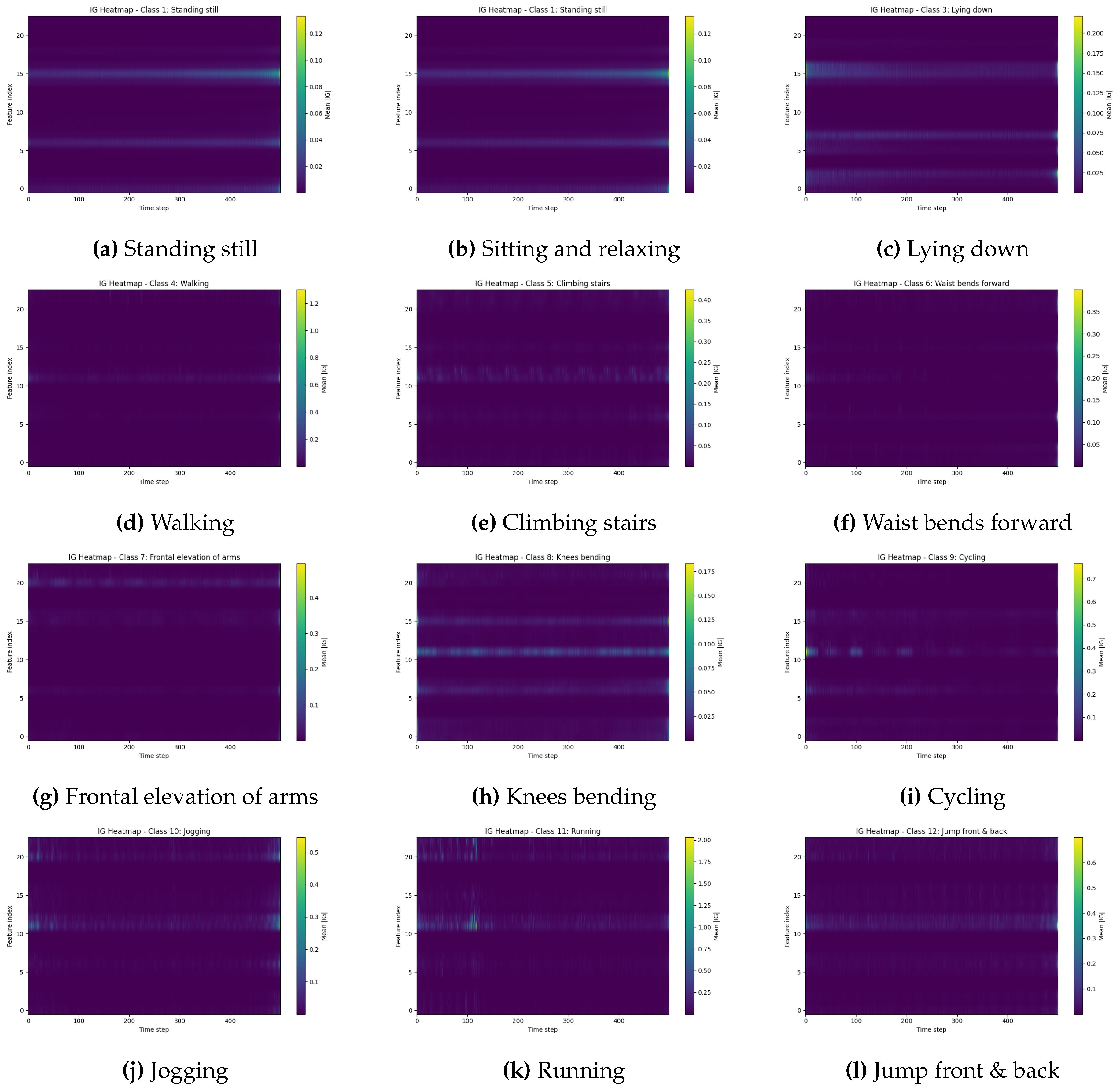

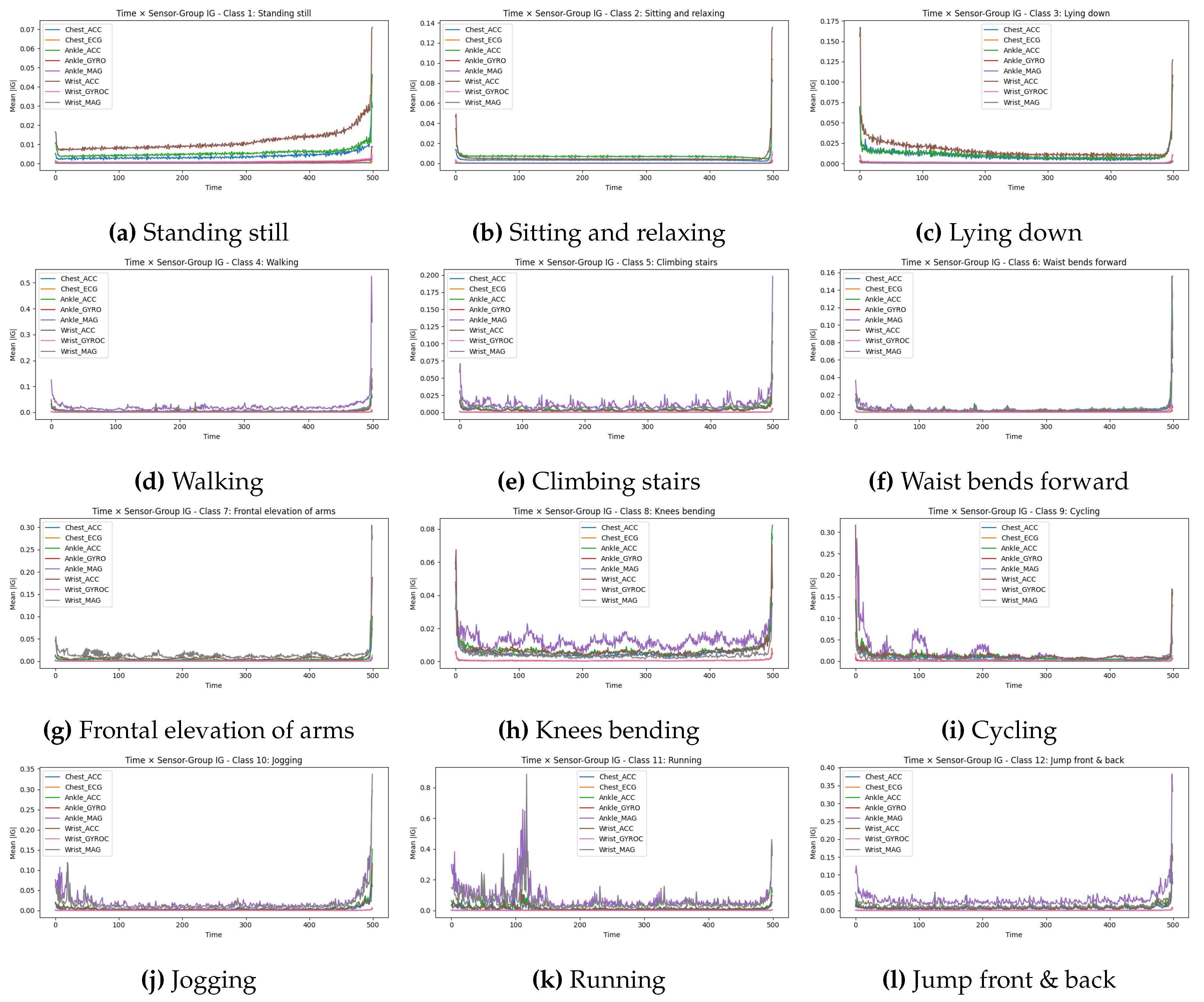

4.3. Experiment 2: Class-Specific Integrated Gradients

4.3.1. Time–Feature IG Heatmaps

4.3.2. Temporal Sensor-Group Attribution Curves

4.4. Experiment 3: Global Integrated Gradients Ranking

4.5. Experiment 4: Shapley Value Validation

4.6. Statistical Considerations and Ranking Stability

5. Discussion

5.1. Biomechanically Plausible Explanations

5.2. Implications for Sensor Selection and Deployment

5.3. Value of Combining Multiple XAI Methods

5.4. Trust, Debugging, and Clinical/Industrial Relevance

5.5. Practical Implications for System Design

5.6. Limitations and Future Work

6. Conclusion

Author Contributions

References

1. Zhang, S.; Li, Y.; Zhang, S.; Shahabi, F.; Xia, S.; Deng, Y.; Alshurafa, N. Deep Learning in Human Activity Recognition with Wearable Sensors: A Review on Advances. Sensors 2022, 22, 1476.

2. Chen, L.; Nugent, C.D.; Wang, H. A knowledge-driven approach to activity recognition in smart homes. IEEE Transactions on Knowledge and Data Engineering 2011, 24, 961–974.

3. Kwapisz, J.R.; Weiss, G.M.; Moore, S.A. Activity Recognition using Cell Phone Accelerometers. ACM SIGKDD Explorations Newsletter 2011, 12, 74–82.

4. Vijayvargiya, A.; Singh, P.; Kumar, R.; Dey, N. Hardware Implementation for Lower Limb Surface EMG Measurement and Analysis using Explainable AI for Activity Recognition. IEEE Transactions on Instrumentation and Measurement 2022, 71, 1–9.

5. Yin, X.; Liu, Z.; Liu, D.; Ren, X. A Novel CNN-based Bi-LSTM Parallel Model with Attention Mechanism for Human Activity Recognition with Noisy Data. Scientific Reports 2022, 12, 7878.

6. Khatun, M.A.; Yousuf, M.A.; Ahmed, S.; Uddin, M.Z.; Alyami, S.A.; Al-Ashhab, S.; Akhdar, H.F.; Khan, A.; Aziz, A.A.; Moni, M.A. Deep CNN-LSTM With Self-Attention Model for Human Activity Recognition Using Wearable Sensor. IEEE Journal of Translational Engineering in Health and Medicine 2022, 10, 1–16.

7. Qi, W.; Lin, C.; Qian, K. Multimodel Lightweight Transformer Framework for Human Activity Recognition. In Proceedings of the 2024 46th Annual International Conference of the IEEE Engineering in Medicine and Biology Society (EMBC), 2024; IEEE; pp. 1–4.

8. Liang, Q.; Jitpattanakul, A.; Mekruksavanich, S. Robust Human Activity Recognition Using a Transformer-Based Model for Aging Society. In Proceedings of the 2025 6th International Conference on Big Data Analytics and Practices (IBDAP), 2025; IEEE; pp. 272–277.

9. Mennella, C.; Esposito, M.; De Pietro, G.; Maniscalco, U. Multiscale activity recognition algorithms to improve cross-subjects performance resilience in rehabilitation monitoring systems. Computer Methods and Programs in Biomedicine 2025, 108792.

10. Khan, A.u.H.; Hussain, S.; Alromema, N.; Iqbal, S.; Mustafa, G.; Khattak, M.A.; Nasim, A.; Rizwan, A. An Explainable EEG-Based Human Activity Recognition Model Using Machine-Learning Approach and LIME. Sensors 2023, 23, 7452.

11. Qureshi, T.S.; Shahid, M.H.; Farhan, A.A.; Alamri, S. A systematic literature review on human activity recognition using smart devices: advances, challenges, and future directions. Artificial Intelligence Review 2025, 58, 276.

12. Huang, Y.; Zhou, Y.; Zhao, H.; Riedel, T.; Beigl, M. Explainable Deep Learning Framework for Human Activity Recognition. arXiv 2024, arXiv:2408.11552.

13. Ribeiro, M.T.; Singh, S.; Guestrin, C. Why Should I Trust You? Explaining the Predictions of Any Classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. ACM, 2016; pp. 1135–1144.

14. Lundberg, S.M.; Lee, S.I. A unified approach to interpreting model predictions. Advances in Neural Information Processing Systems 2017, 30.

15. Sundararajan, M.; Taly, A.; Yan, Q. Axiomatic Attribution for Deep Networks. In Proceedings of the International Conference on Machine Learning. PMLR, 2017; pp. 3319–3328.

16. Ma, H.; Li, W.; Zhang, X.; Gao, S.; Lu, S. AttnSense: Multi-level attention mechanism for multimodal human activity recognition. In Proceedings of the IJCAI, 2019; pp. 3109–3115.

17. Wei, X.; Wang, Z. TCN-attention-HAR: Human Activity Recognition Based on Attention Mechanism Time Convolutional Network. Scientific Reports 2024, 14, 7414.

18. Arrotta, L.; Barsocchi, P.; Calabrò, A.; Crivello, A.; La Morgia, M. Comparing LIME and SHAP Global Explanations for Human Activity Recognition. In Proceedings of the Springer Series in Bio-/Neuroinformatics, 2024; Springer; pp. 207–218.

19. Borella, E.; Çakmakçı, U.B.; Gottardis, E.; Buratto, A.; Marchioro, T.; Badia, L. Effective Sensor Selection for Human Activity Recognition via Shapley Value. In Proceedings of the 2024 IEEE International Workshop on Metrology for Living Environment. IEEE, 2024; pp. 22–27.

20. Pellano, K.N.; Strümke, I.; Ihlen, E.A.F. From Movements to Metrics: Evaluating Explainable AI Methods in Skeleton-Based Human Activity Recognition. Sensors 2024, 24, 1940.

21. Banos, O.; Garcia, R.; Holgado-Terriza, J.A.; Damas, M.; Pomares, H.; Rojas, I.; Saez, A.; Villalonga, C. mHealthDroid: A Novel Framework for Agile Development of Mobile Health Applications. In Proceedings of the Ambient Assisted Living and Daily Activities; Cham, Pecchia, L., Chen, L.L., Nugent, C., Bravo, J., Eds.; 2014; pp. 91–98.

22. Ullah, N.; Khan, J.A.; De Falco, I.; Sannino, G. Explainable artificial intelligence: importance, use domains, stages, output shapes, and challenges. ACM Computing Surveys 2024, 57, 1–36.

23. Adesina, A.; Atoyeshe, A. MSANet: A Hybrid Deep Learning Framework with Self-Attention for Human Activity Recognition. Available at SSRN 5573238 2025.

24. Cleland, I.; Nugent, L.; Cruciani, F.; Nugent, C. Leveraging large language models for activity recognition in smart environments. In Proceedings of the 2024 International Conference on Activity and Behavior Computing (ABC). IEEE, 2024; pp. 1–8.

25. Ullah, N.; Guzmán-Aroca, F.; Martínez-Álvarez, F.; De Falco, I.; Sannino, G. A novel explainable AI framework for medical image classification integrating statistical, visual, and rule-based methods. Medical Image Analysis 2025, 103665.

26. Ullah, N.; Hassan, M.; Khan, J.A.; Anwar, M.S.; Aurangzeb, K. Enhancing explainability in brain tumor detection: A novel DeepEBTDNet model with LIME on MRI images. International Journal of Imaging Systems and Technology 2024, 34, e23012.

27. Ullah, N.; Khan, J.A.; De Falco, I.; Sannino, G. Bridging clinical gaps: multi-dataset integration for reliable multi-class lung disease classification with deepcrinet and occlusion sensitivity. In Proceedings of the 2024 IEEE Symposium on Computers and Communications (ISCC). IEEE, 2024; pp. 1–6.

28. Mekruksavanich, S.; Jitpattanakul, A. Efficient and Explainable Human Activity Recognition Using Deep Residual Network with Squeeze-and-Excitation Mechanism. Applied System Innovation 2025, 8, 57.

29. Tempel, F.; Ihlen, E.A.F.; Adde, L.; Strümke, I. Explaining Human Activity Recognition with SHAP: Validating insights with perturbation and quantitative measures. Computers in Biology and Medicine 2025, 188, 109838.

30. Abdelaal, Y.; Aupetit, M.; Baggag, A.; Al-Thani, D. Exploring the Applications of Explainability in Wearable Data Analytics: Systematic Literature Review. Journal of Medical Internet Research 2024, 26, e53863.

31. Mankodiya, H.; Jadav, D.; Gupta, R.; Tanwar, S.; Alharbi, A.; Tolba, A.; et al. XAI-Fall: Explainable AI for fall detection on wearable devices using sequence models and XAI techniques. Mathematics 2022, 10, 1990.

32. Sundararajan, M.; Taly, A.; Yan, Q. Axiomatic attribution for deep networks. In Proceedings of the International Conference on Machine Learning. PMLR, 2017; pp. 3319–3328.

33. Tzanetis, G.; Toumpas, A.; Vasileiou, Z.; Meditskos, G.; Vrochidis, S.; Kompatsiaris, I. Enhancing Health Risk Monitoring: An Explainable Human-Centric Approach Using Wearable Data and Sensor Integration. In Proceedings of the International Conference on Pattern Recognition, 2024; Springer; pp. 407–419.

34. Troncoso, Á.; Ortega, J.A.; Seepold, R.; Madrid, N.M. Non-invasive devices for respiratory sound monitoring. Procedia Computer Science 2021, 192, 3040–3048.

35. Troncoso-García, A.R.; Martínez-Ballesteros, M.; Martínez-Álvarez, F.; Troncoso, A. Explainable machine learning for sleep apnea prediction. Procedia Computer Science 2022, 207, 2930–2939.

36. Vanhaeren, T.; Troncoso-García, A.; Maldonado, J.F.T.; Divina, F.; Martínez-García, P.M. Application of XAI to the prediction of CTCF binding sites. Results in Engineering 2025, 25, 103776.

37. Fiori, M.; Mor, D.; Civitarese, G.; Bettini, C. GNN-XAR: A Graph Neural Network for Explainable Activity Recognition in Smart Homes. arXiv 2025, arXiv:2502.17999.

38. Harris, C.R.; Rouse, E.J.; Prost, L.E.; Kilgas, M.A.; Nakamura-Pereira, M.; Macaluso, R.L.; Gregg, R.D. Ablation Analysis to Select Wearable Sensors for Classifying Standing, Walking, and Running. Sensors 2021, 21, 194.

39. Ronao, C.A.; Cho, S.B. Human Activity Recognition with Smartphone Sensors using Deep Learning Neural Networks. Expert Systems with Applications 2016, 59, 235–244.

40. Jeyakumar, J.V.; Sarker, A.; Garcia, L.A.; Srivastava, M. X-CHAR: A Concept-based Explainable Complex Human Activity Recognition Model. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies 2023, 7, 1–28.

41. Wang, H.; Zhao, J.; Li, J.; Tian, L.; Tu, P.; Cao, T.; An, Y.; Wang, K.; Li, S. Human Activity Recognition using Wearable Sensors by Heterogeneous Convolutional Neural Networks. Engineering Applications of Artificial Intelligence 2022, 112, 104867.

42. El-Adawi, E.; Essa, E.; Handosa, M.; Fahmy, D.; Attallah, O. Wireless Body Area Sensor Networks based Human Activity Recognition using Deep Learning. Scientific Reports 2024, 14, 2702.

43. Kim, K.; Jeong, W.; Park, S.; Kim, H. Granular and Explainable Human Activity Recognition through Sound Segmentation and Deep Learning. Journal of Computational Design and Engineering 2025, 12, 252–268.

44. Arrotta, L.; Civitarese, G.; Bettini, C. Using Large Language Models to Compare Explainable Models for Smart Home Human Activity Recognition. In Proceedings of the Companion of the 2024 ACM International Joint Conference on Pervasive and Ubiquitous Computing. ACM, 2024; pp. 704–709.

45. Bulling, A.; Blanke, U.; Schiele, B. A tutorial on human activity recognition using body-worn inertial sensors. ACM Computing Surveys (CSUR) 2014, 46, 1–33.

46. Ordóñez, F.J.; Roggen, D. Deep convolutional and LSTM recurrent neural networks for multimodal wearable activity recognition. Sensors 2016, 16, 115.

47. Jeyakumar, J.V.; Sarker, A.; Garcia, L.A.; Srivastava, M. X-CHAR: A concept-based explainable complex human activity recognition model. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies 2023, 7, 1–28.

48. Ellis, C.A.; Sendi, M.S.; Zhang, R.; Carbajal, D.A.; Wang, M.D.; Miller, R.L.; Calhoun, V.D. Novel methods for elucidating modality importance in multimodal electrophysiology classifiers. Frontiers in Neuroinformatics 2023, 17, 1123376.

49. Strumbelj, E.; Kononenko, I. An efficient explanation of individual classifications using game theory. The Journal of Machine Learning Research 2010, 11, 1–18.

| Parameter | Value |
|---|---|
| Data Configuration | |
| Window size (T) | 500 samples (10 s) |
| Stride | 50 samples (1 s) |
| Overlap | 90% |
| Training subjects | 1–8 |
| Test subjects | 9, 10 |
| Input features (F) | 23 channels |
| Number of classes | 12 |
| Preprocessing | |
| Normalization | Z-score (per-channel) |
| Data augmentation | None |
| Model Architecture | |
| Time-distributed dense 1 | 128 units, ReLU, BatchNorm |
| Time-distributed dense 2 | 128 units, ReLU, BatchNorm |
| Temporal pooling | Max-pooling |
| LSTM hidden units | 256 |
| Output layer | Dense, softmax (12 classes) |
| Training Configuration | |
| Optimizer | Adam |
| Learning rate | |
| Batch size | 32 |
| Epochs | 100 |
| Early stopping patience | 15 epochs |
| Loss function | Cross-entropy |
| Weight decay | |
| Random seed | 42 |
| XAI Configuration | |
| IG integration steps | 50 |
| IG samples per class | 32 |
| Shapley permutations | 20 |
| Shapley test samples | 32 |
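
The window size, stride, and overlap in the data configuration are tied together by simple arithmetic, which the following sketch makes explicit. It is an illustration rather than the preprocessing code of this study: the function names are hypothetical, and the 50 Hz sampling rate is an assumption implied by 500 samples spanning 10 s.

```python
SAMPLING_RATE_HZ = 50  # assumption: implied by 500 samples = 10 s


def sliding_windows(n_samples, window=500, stride=50):
    """Return (start, end) index pairs of every full window in a recording."""
    if n_samples < window:
        return []
    return [(start, start + window)
            for start in range(0, n_samples - window + 1, stride)]


def overlap_fraction(window=500, stride=50):
    """Fraction of samples shared by two consecutive windows."""
    return 1.0 - stride / window


# A hypothetical 20 s recording (1000 samples) yields 11 full windows.
spans = sliding_windows(1000)
window_seconds = 500 / SAMPLING_RATE_HZ
overlap = overlap_fraction()
```

Because consecutive windows share 90% of their samples, windows from the same recording are strongly correlated, which is one reason the split is made by subject (1–8 for training, 9 and 10 for testing) rather than by window.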

| Sensor Group | Description | Channel Indices |
|---|---|---|
| Chest_ACC | Chest accelerometer (3-axis) | 0, 1, 2 |
| Chest_ECG | Chest ECG (2-lead) | 3, 4 |
| Ankle_ACC | Ankle accelerometer (3-axis) | 5, 6, 7 |
| Ankle_GYRO | Ankle gyroscope (3-axis) | 8, 9, 10 |
| Ankle_MAG | Ankle magnetometer (3-axis) | 11, 12, 13 |
| Wrist_ACC | Wrist accelerometer (3-axis) | 14, 15, 16 |
| Wrist_GYRO | Wrist gyroscope (3-axis) | 17, 18, 19 |
| Wrist_MAG | Wrist magnetometer (3-axis) | 20, 21, 22 |
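
The grouping above can serve directly as the set of players in Shapley Value Sampling, where each sensor group's importance is estimated as its average marginal contribution over random orderings. The sketch below shows the permutation estimator under stated assumptions: `toy_value` is a hypothetical stand-in for the model score obtained with only a subset of groups present, and the seed is arbitrary. Only the group-to-channel mapping and the 20 permutations come from the tables in this paper.

```python
import random

# Channel indices per sensor group, as listed in the grouping table.
SENSOR_GROUPS = {
    "Chest_ACC": [0, 1, 2],
    "Chest_ECG": [3, 4],
    "Ankle_ACC": [5, 6, 7],
    "Ankle_GYRO": [8, 9, 10],
    "Ankle_MAG": [11, 12, 13],
    "Wrist_ACC": [14, 15, 16],
    "Wrist_GYRO": [17, 18, 19],
    "Wrist_MAG": [20, 21, 22],
}


def toy_value(present):
    """Hypothetical score: a small per-channel gain plus an interaction bonus
    when both the wrist and ankle accelerometers are available."""
    score = sum(len(SENSOR_GROUPS[g]) for g in present) * 0.01
    if {"Wrist_ACC", "Ankle_ACC"} <= present:
        score += 0.3
    return score


def shapley_sampling(players, value_fn, n_permutations=20, seed=42):
    """Estimate Shapley values by averaging marginal gains over random orderings."""
    rng = random.Random(seed)
    totals = {p: 0.0 for p in players}
    for _ in range(n_permutations):
        order = list(players)
        rng.shuffle(order)
        present = set()
        previous = value_fn(present)
        for p in order:
            present.add(p)
            current = value_fn(present)
            totals[p] += current - previous  # marginal contribution of p
            previous = current
    return {p: t / n_permutations for p, t in totals.items()}


phi = shapley_sampling(list(SENSOR_GROUPS), toy_value, n_permutations=20)
```

Within each permutation the marginal gains telescope, so the estimates always sum to the value of the full group set minus the value of the empty set, regardless of how few permutations are drawn. What a small permutation budget does affect is how the interaction bonus is split between the interacting groups.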

| Class | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| Standing still | 0.97 | 0.99 | 0.98 | 118 |
| Sitting and relaxing | 0.95 | 1.00 | 0.98 | 123 |
| Lying down | 1.00 | 0.95 | 0.97 | 123 |
| Walking | 1.00 | 0.99 | 1.00 | 123 |
| Climbing stairs | 0.99 | 0.97 | 0.98 | 123 |
| Waist bends forward | 0.96 | 1.00 | 0.98 | 107 |
| Frontal elevation of arms | 1.00 | 0.97 | 0.99 | 112 |
| Knees bending | 0.97 | 0.97 | 0.97 | 117 |
| Cycling | 1.00 | 1.00 | 1.00 | 123 |
| Jogging | 0.98 | 0.99 | 0.99 | 122 |
| Running | 0.99 | 0.96 | 0.98 | 124 |
| Jump front & back | 0.92 | 1.00 | 0.96 | 36 |
| Accuracy | | | 0.98 | 1351 |
| Macro avg | 0.98 | 0.98 | 0.98 | 1351 |
| Weighted avg | 0.98 | 0.98 | 0.98 | 1351 |

| Sensor group | Abl. Acc | ΔAcc | Abl. F1 | |
|---|---|---|---|---|
| Ankle_MAG | 0.511 | 0.471 | 0.42 | 0.50 |
| Wrist_ACC | 0.824 | 0.158 | 0.81 | 0.28 |
| Ankle_ACC | 0.830 | 0.152 | 0.82 | 0.25 |
| Wrist_MAG | 0.877 | 0.105 | 0.86 | 0.18 |
| Chest_ACC | 0.881 | 0.101 | 0.87 | 0.22 |
| Wrist_GYRO | 0.945 | 0.038 | 0.94 | 0.08 |
| Chest_ECG | 0.982 | 0.000 | 0.98 | 0.00 |
| Ankle_GYRO | 0.984 | | 0.98 | |
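
The ablated accuracies in this table come from re-evaluating the trained model with one sensor group removed at a time. The sketch below illustrates one way such a counterfactual evaluation could be set up; it is not the procedure used in this study. Replacing the removed channels with zero is an assumption (after per-channel z-scoring, zero corresponds to the channel mean), and `toy_predict`, `make_window`, and the four toy windows are hypothetical.

```python
ANKLE_ACC = [5, 6, 7]   # channel indices from the sensor grouping table
CHEST_ECG = [3, 4]


def ablate(window, channels, fill=0.0):
    """Return a copy of a (T x F) window with the given channels set to fill."""
    removed = set(channels)
    return [[fill if j in removed else v for j, v in enumerate(row)]
            for row in window]


def accuracy(predict, windows, labels):
    hits = sum(predict(w) == y for w, y in zip(windows, labels))
    return hits / len(labels)


def ablation_drop(predict, windows, labels, channels):
    """Baseline accuracy, ablated accuracy, and their difference."""
    base = accuracy(predict, windows, labels)
    ablated = accuracy(predict, [ablate(w, channels) for w in windows], labels)
    return base, ablated, base - ablated


def toy_predict(window):
    """Hypothetical classifier that looks only at the mean of channel 5."""
    mean_ch5 = sum(row[5] for row in window) / len(window)
    return 1 if mean_ch5 > 0 else 0


def make_window(level, n_steps=4, n_features=23):
    """Toy window whose only non-zero channel is channel 5."""
    return [[level if j == 5 else 0.0 for j in range(n_features)]
            for _ in range(n_steps)]


windows = [make_window(1.0), make_window(-1.0), make_window(2.0), make_window(-2.0)]
labels = [1, 0, 1, 0]

base, ablated, drop = ablation_drop(toy_predict, windows, labels, ANKLE_ACC)
_, _, ecg_drop = ablation_drop(toy_predict, windows, labels, CHEST_ECG)
```

The same difference appears to underlie the first change column of the table: its values are consistent with a baseline accuracy of about 0.982 minus the ablated accuracy (for example, 0.982 − 0.511 = 0.471), although that baseline is inferred from the rows rather than stated here.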

| Sensor group | | % Total |
|---|---|---|
| Wrist_ACC | 2.07 | 48.6 |
| Chest_ACC | 0.95 | 22.2 |
| Ankle_ACC | 0.78 | 18.2 |
| Ankle_GYRO | 0.18 | 4.3 |
| Wrist_GYRO | 0.15 | 3.6 |
| Ankle_MAG | 0.06 | 1.4 |
| Wrist_MAG | 0.05 | 1.1 |
| Chest_ECG | 0.03 | 0.6 |
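
The percentage column of this ranking can be read as each group's score divided by the sum of all group scores. The short sketch below recomputes the shares from the rounded scores in the table; the normalisation by the sum of absolute scores is an assumption about how the column was derived, and the function name is hypothetical.

```python
# Rounded group scores and reported percentages, copied from the table.
SCORES = {
    "Wrist_ACC": 2.07, "Chest_ACC": 0.95, "Ankle_ACC": 0.78, "Ankle_GYRO": 0.18,
    "Wrist_GYRO": 0.15, "Ankle_MAG": 0.06, "Wrist_MAG": 0.05, "Chest_ECG": 0.03,
}
REPORTED_PERCENT = {
    "Wrist_ACC": 48.6, "Chest_ACC": 22.2, "Ankle_ACC": 18.2, "Ankle_GYRO": 4.3,
    "Wrist_GYRO": 3.6, "Ankle_MAG": 1.4, "Wrist_MAG": 1.1, "Chest_ECG": 0.6,
}


def percent_share(scores):
    """Share of each group in the total absolute score, in percent."""
    total = sum(abs(v) for v in scores.values())
    return {name: 100.0 * abs(v) / total for name, v in scores.items()}


shares = percent_share(SCORES)
# Largest disagreement between recomputed and reported percentages.
max_gap = max(abs(shares[g] - REPORTED_PERCENT[g]) for g in SCORES)
ranking = sorted(SCORES, key=SCORES.get, reverse=True)
```

The recomputed shares agree with the reported column to within a fraction of a percentage point. The residual is what one would expect if the reported percentages were computed from unrounded scores.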

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).