Submitted: 23 December 2025

Posted: 24 December 2025

Abstract

Keywords:

1. Introduction

2. Background and Preliminaries

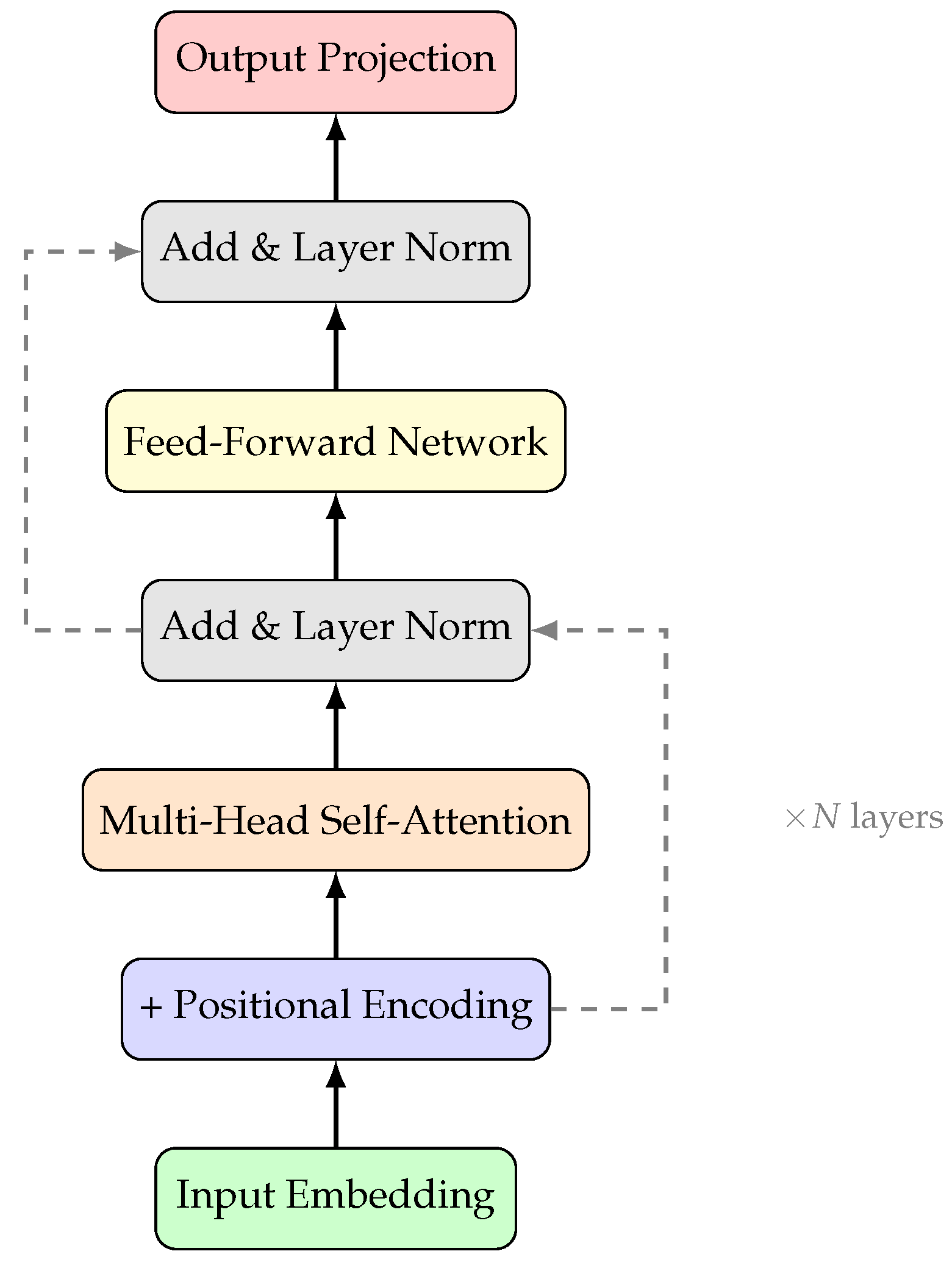

2.1. Large Language Models: Foundations

2.2. Defining Reasoning in LLMs

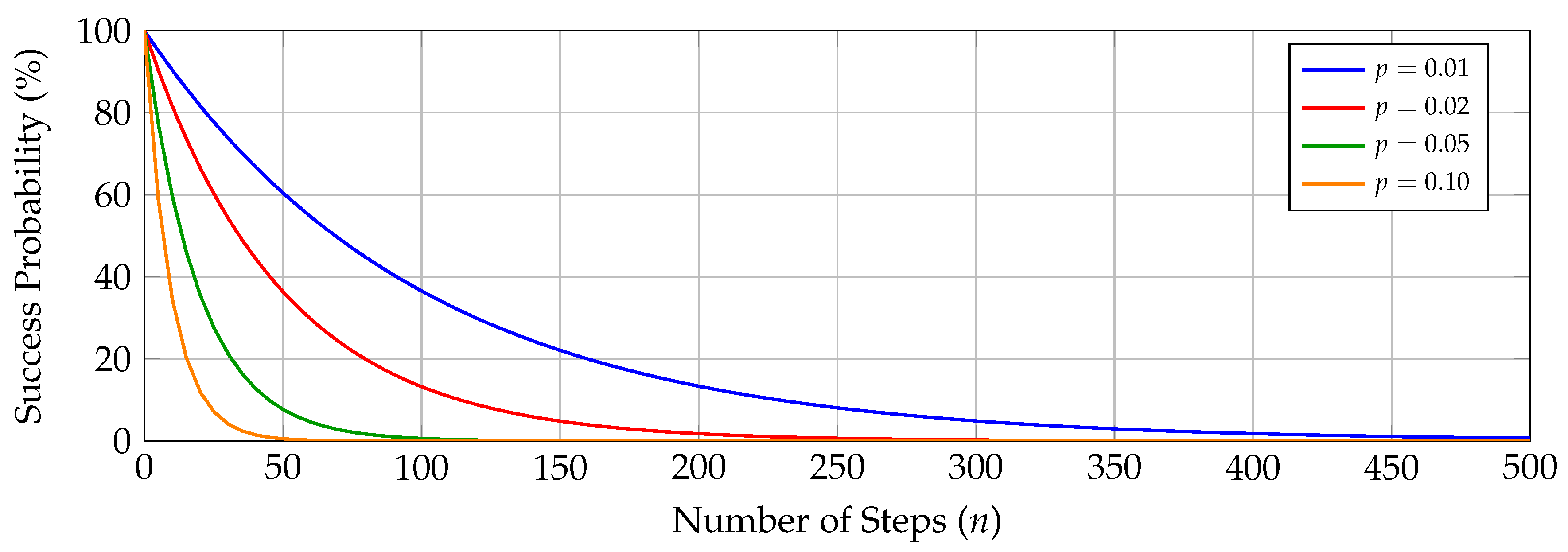

2.3. The Error Propagation Problem

2.4. Evaluation Metrics and Benchmarks

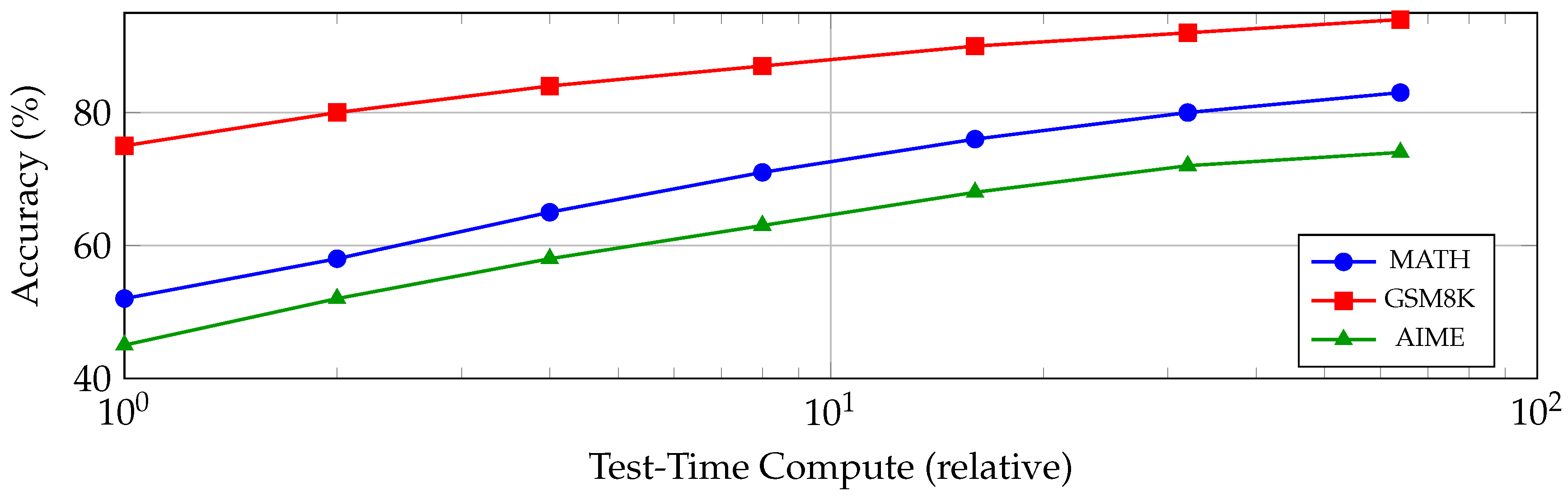

2.5. Test-Time Compute and Inference Scaling

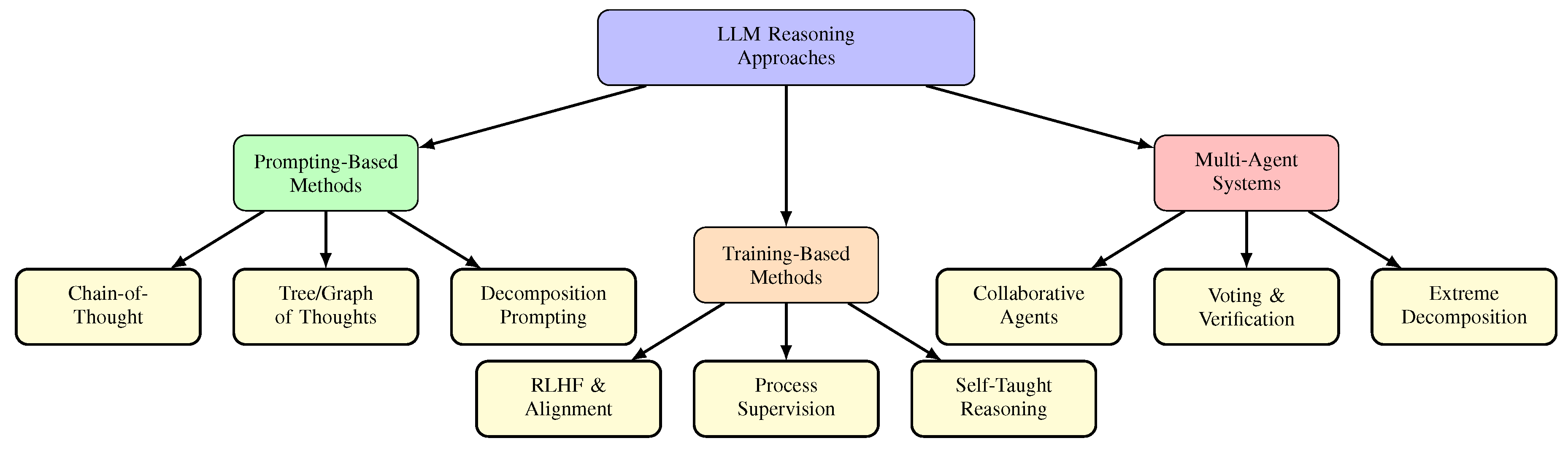

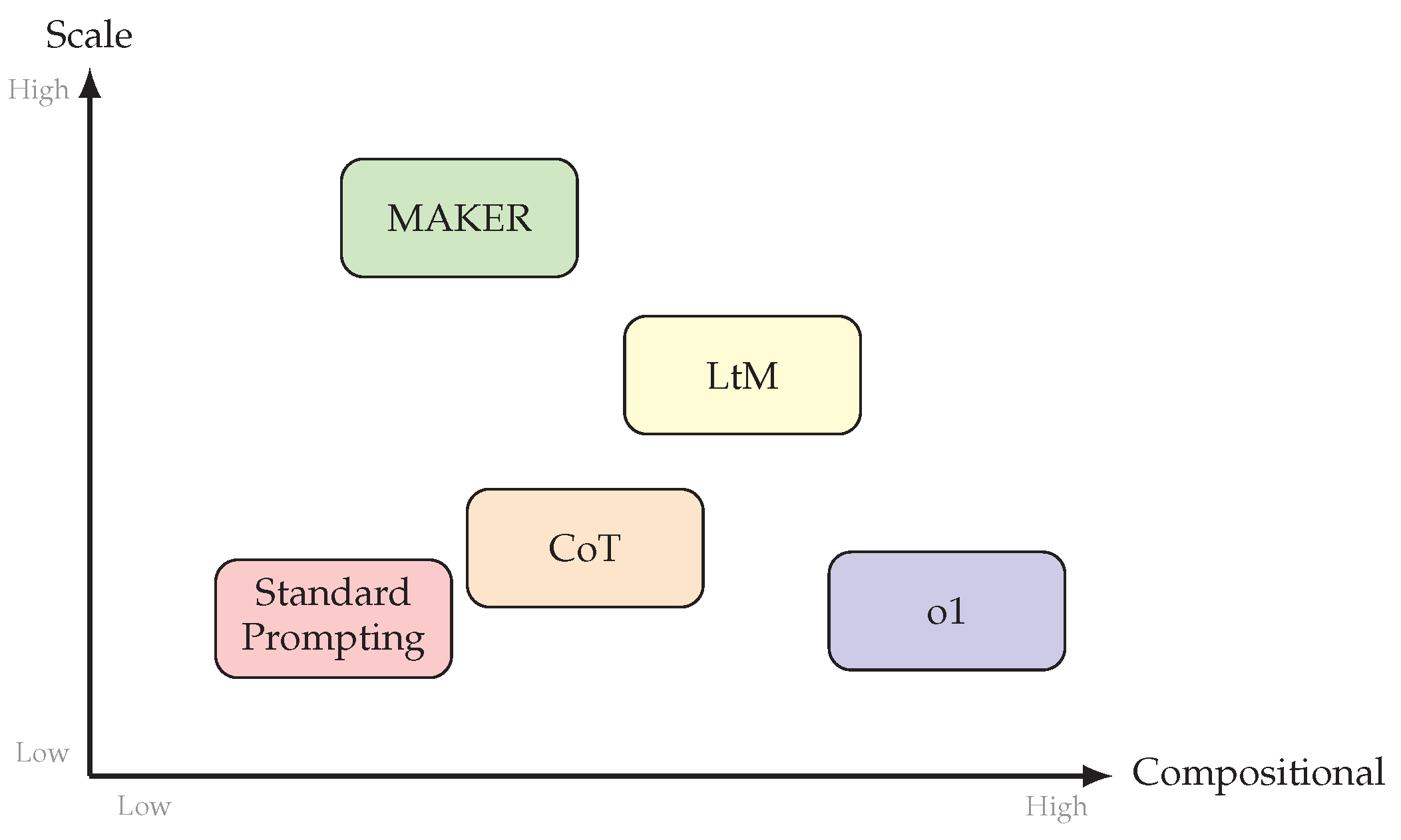

3. Taxonomy of LLM Reasoning Approaches

3.1. Taxonomy Overview

3.2. Prompting-Based Methods

3.3. Training-Based Methods

3.4. Multi-Agent Systems

3.5. Relationships Among Paradigms

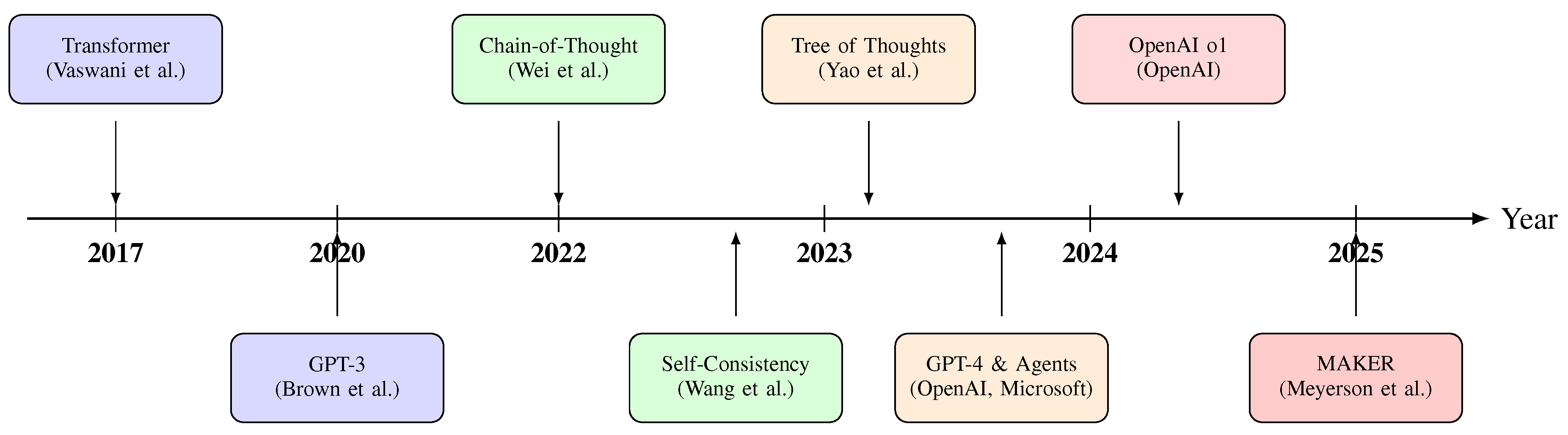

3.6. Evolution Timeline

4. Prompting-Based Reasoning Methods

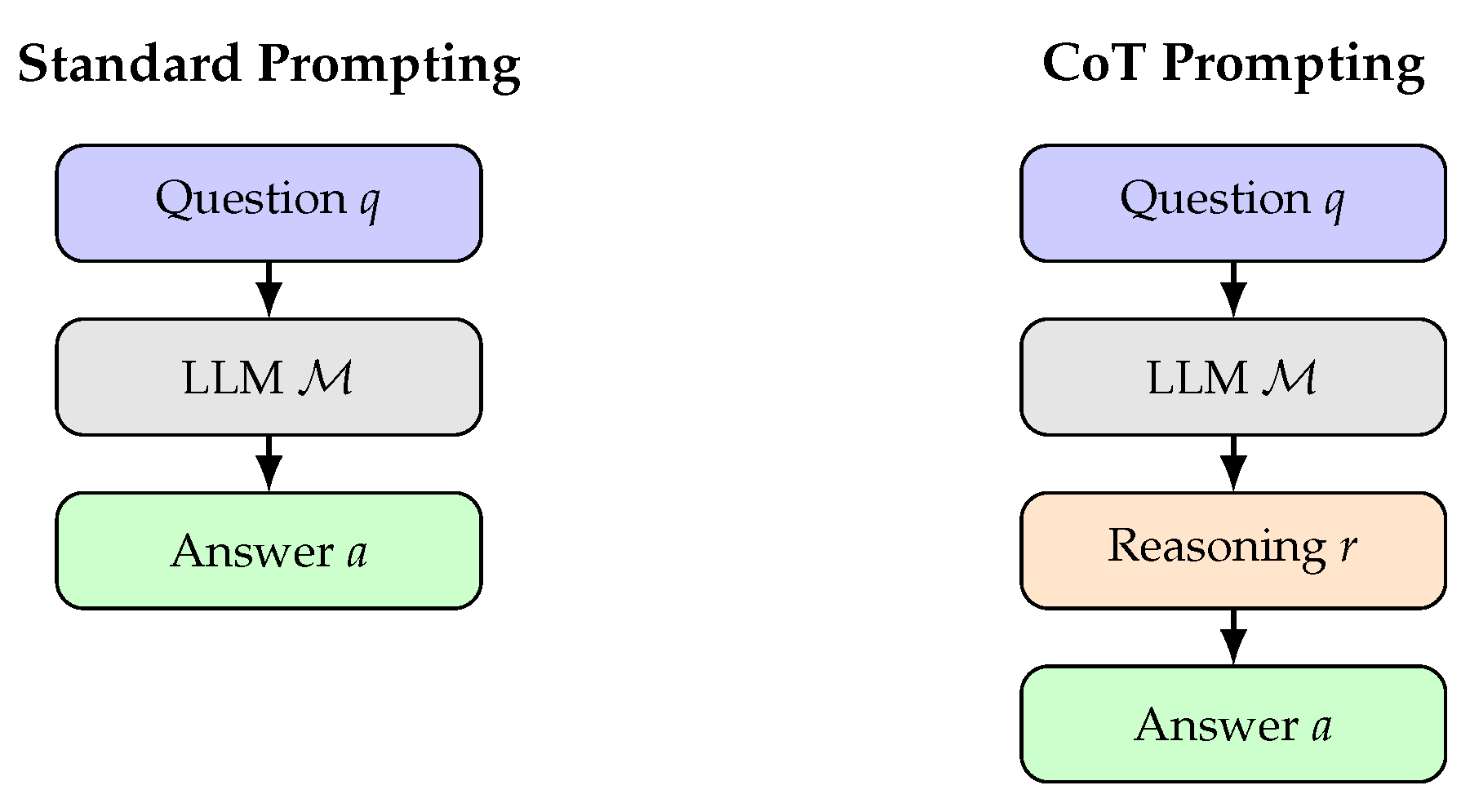

4.1. Chain-of-Thought Prompting

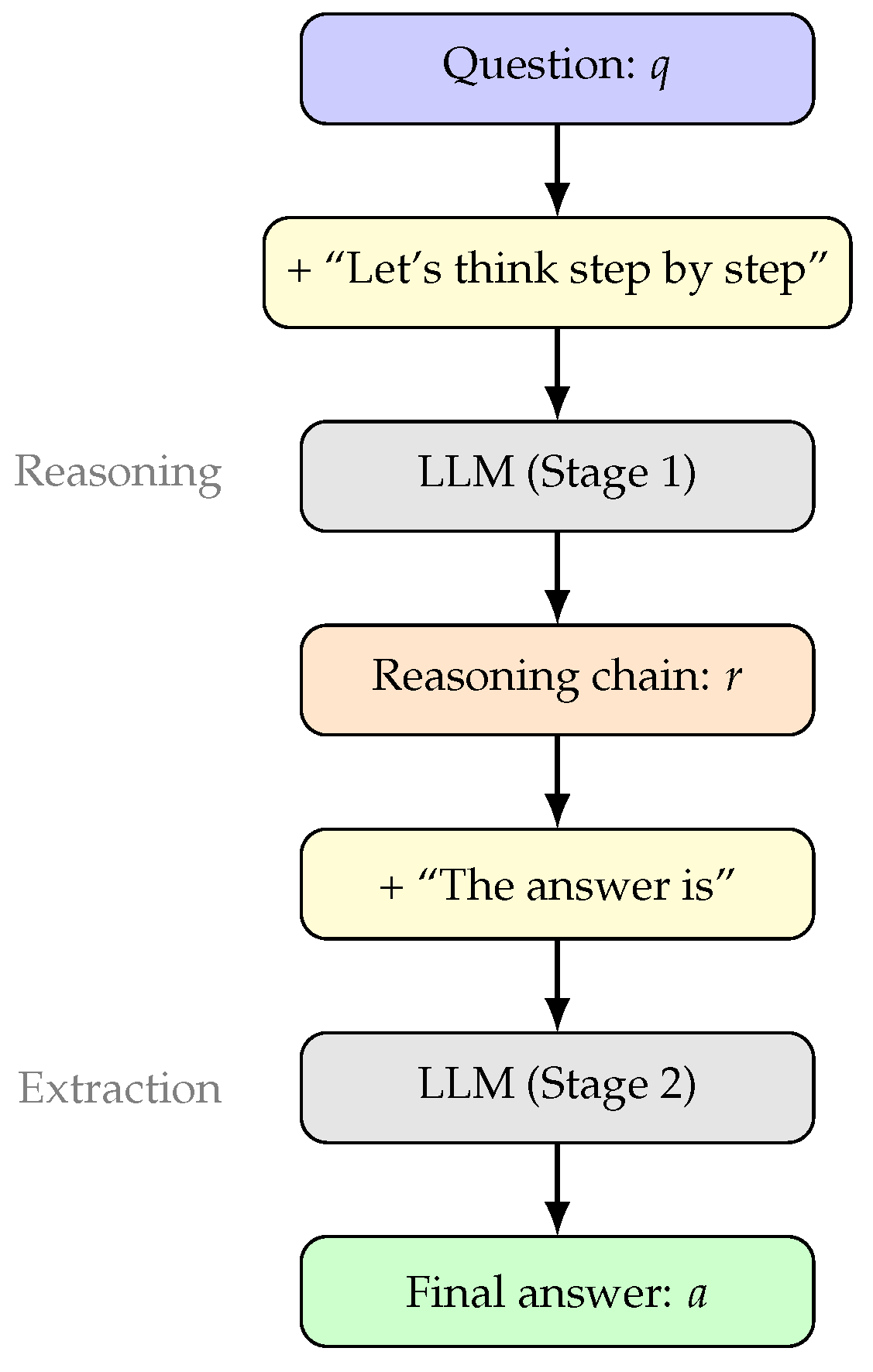

4.2. Zero-Shot Chain-of-Thought

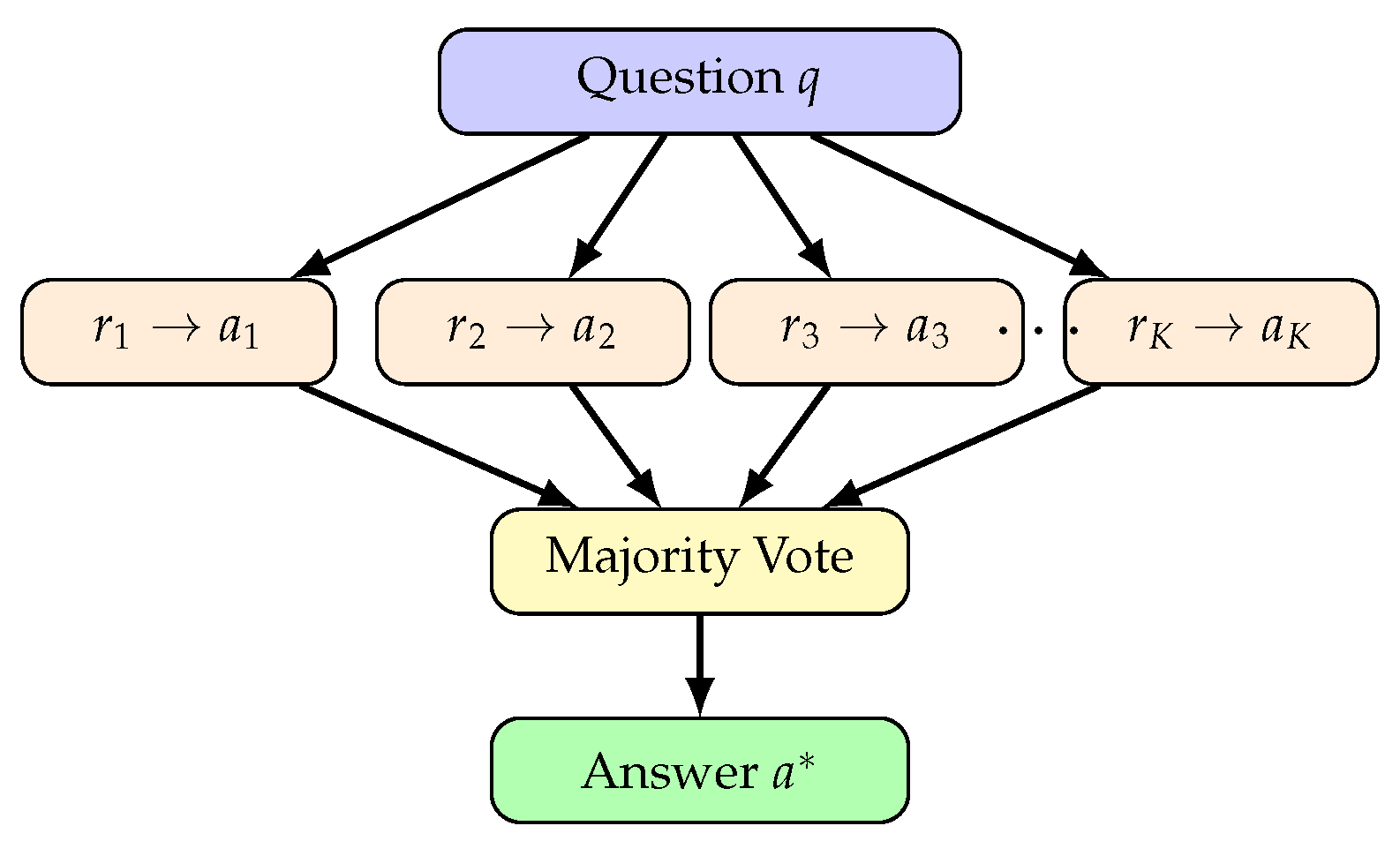

4.3. Self-Consistency

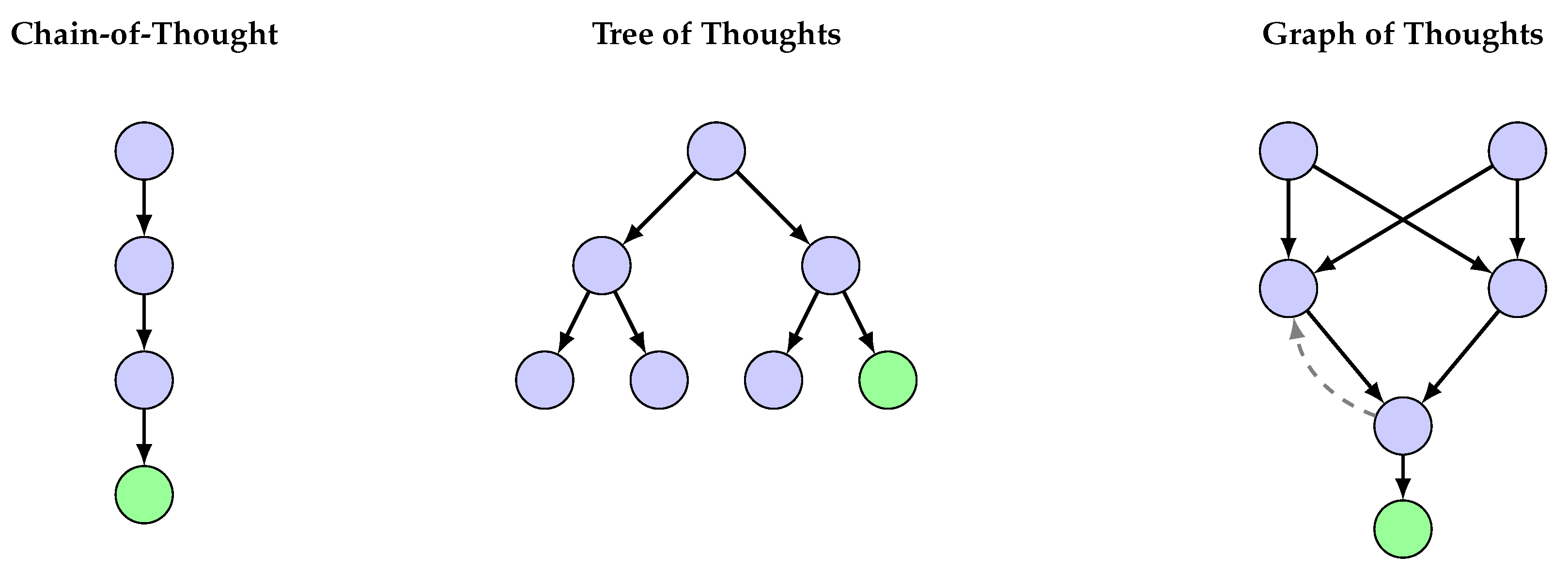

4.4. Tree and Graph of Thoughts

4.5. Decomposition-Based Prompting

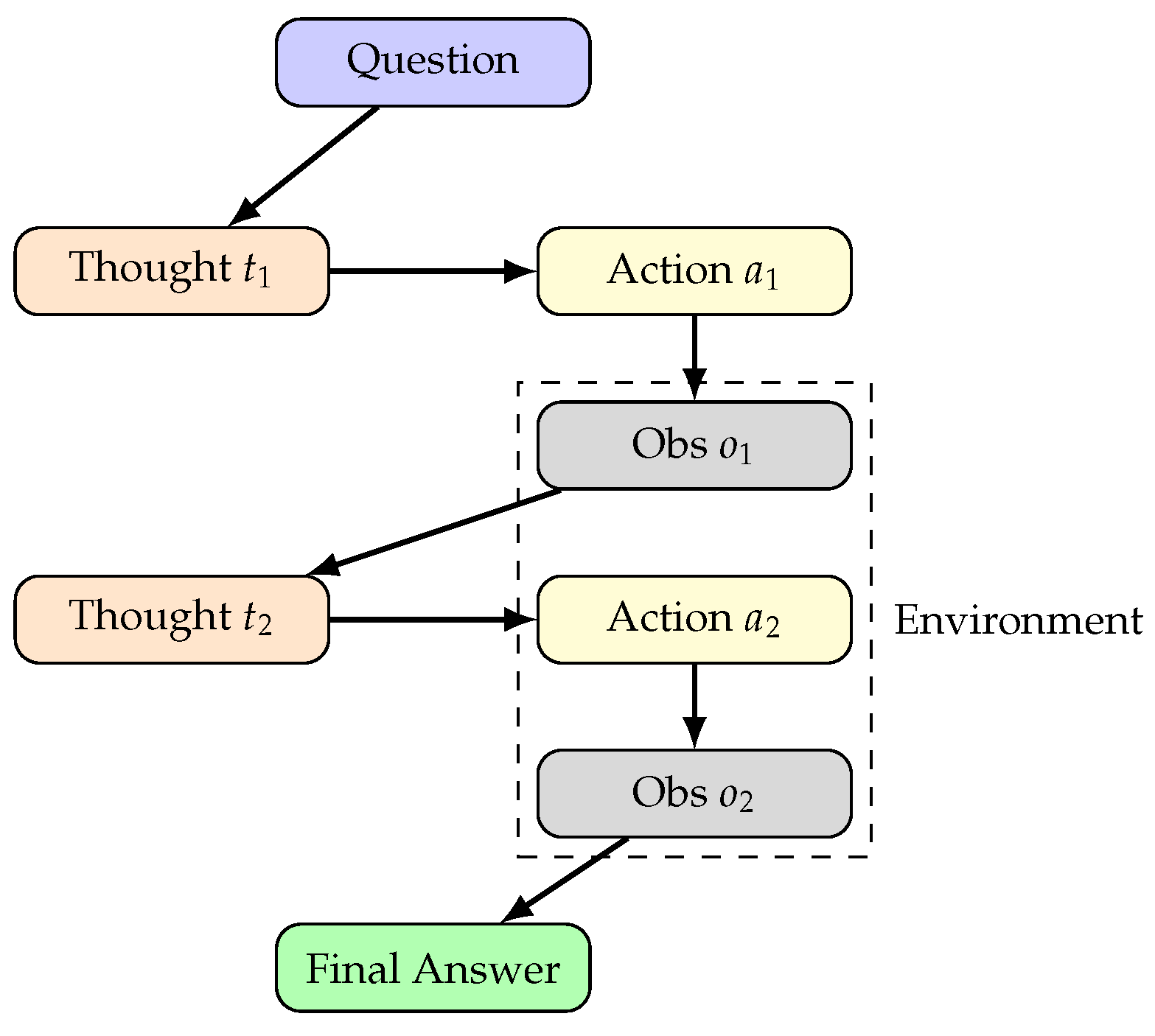

4.6. Reasoning with Tool Use

5. Training-Based Reasoning Methods

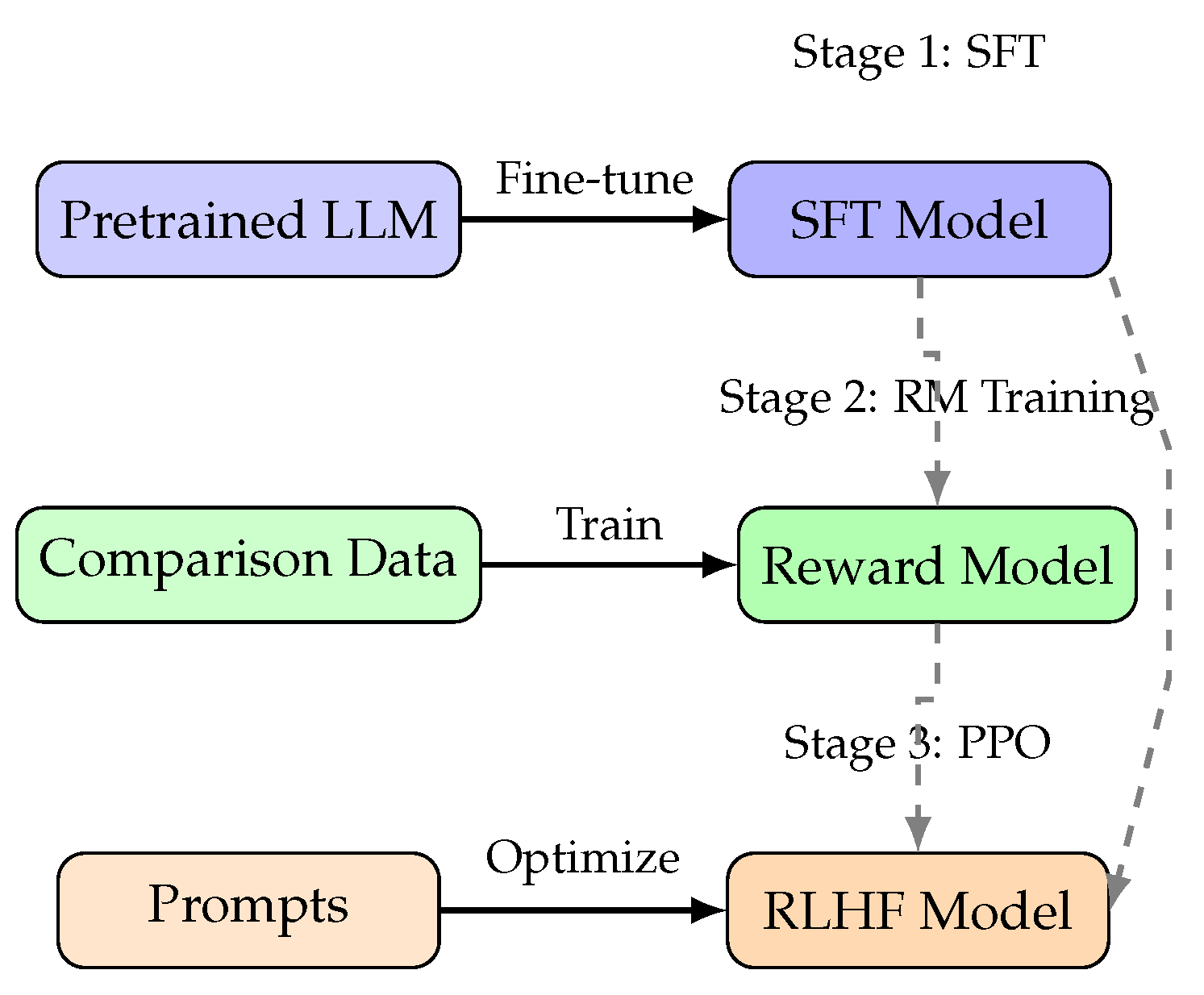

5.1. Reinforcement Learning from Human Feedback

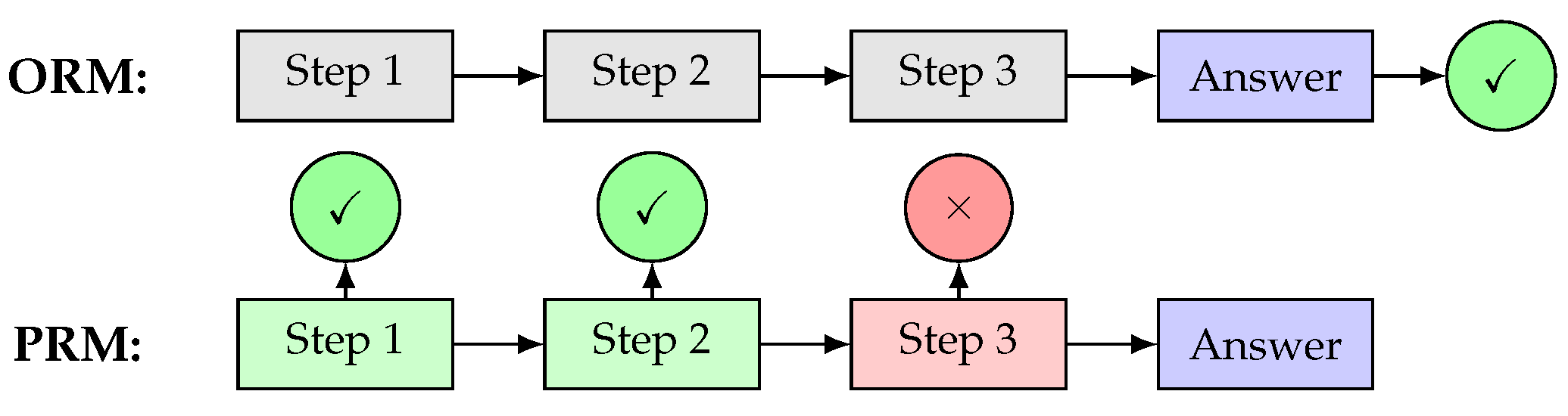

5.2. Process Reward Models

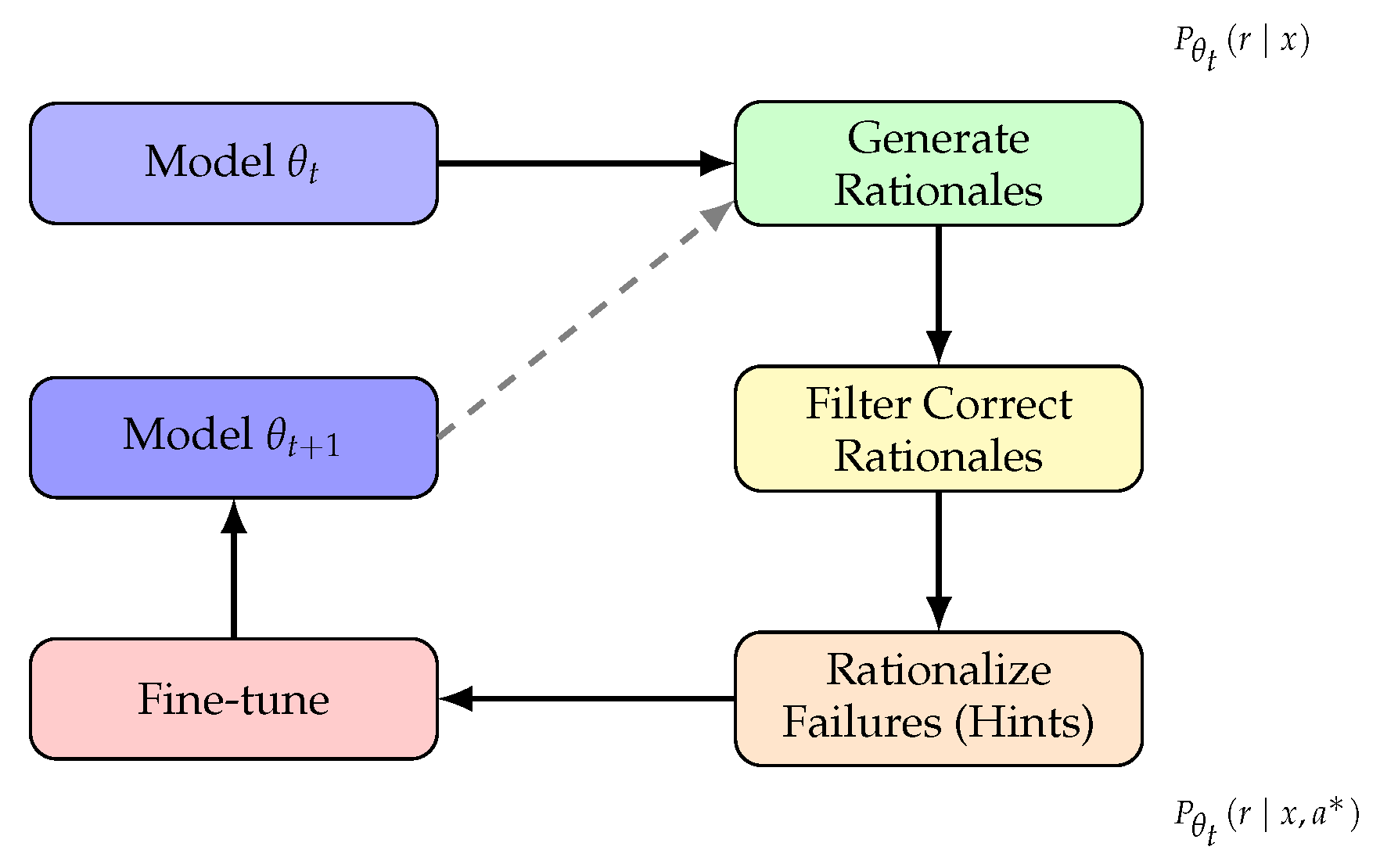

5.3. Self-Taught Reasoning

5.4. Constitutional AI and RLAIF

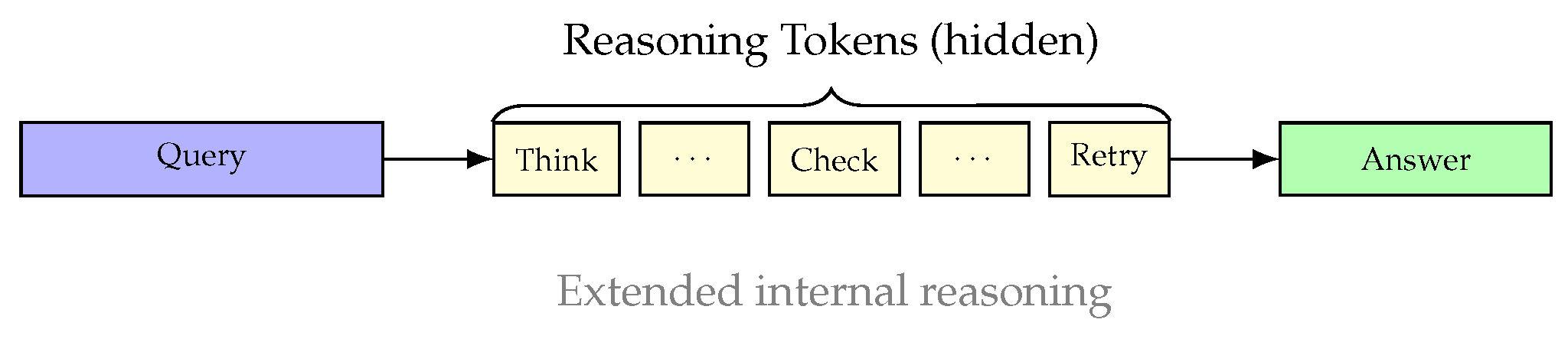

5.5. Reasoning-Specialized Models

5.6. Comparative Analysis

6. Multi-Agent Systems for Reasoning

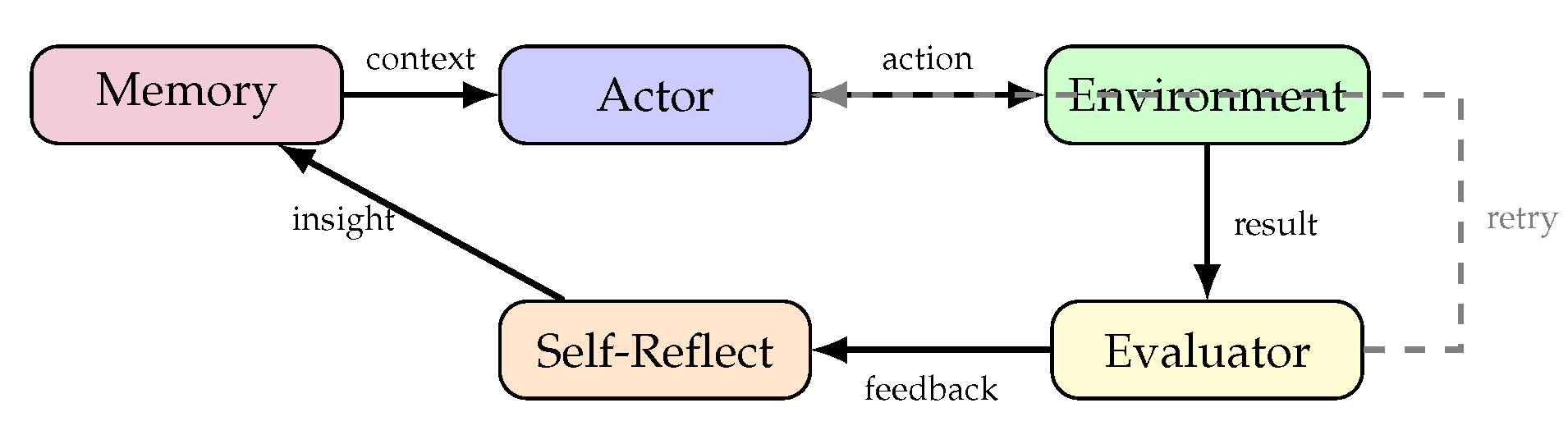

6.1. Foundations of Multi-Agent LLM Systems

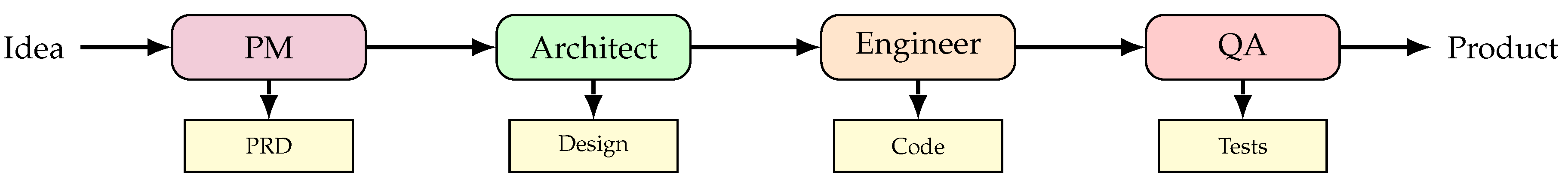

6.2. Collaborative Agent Frameworks

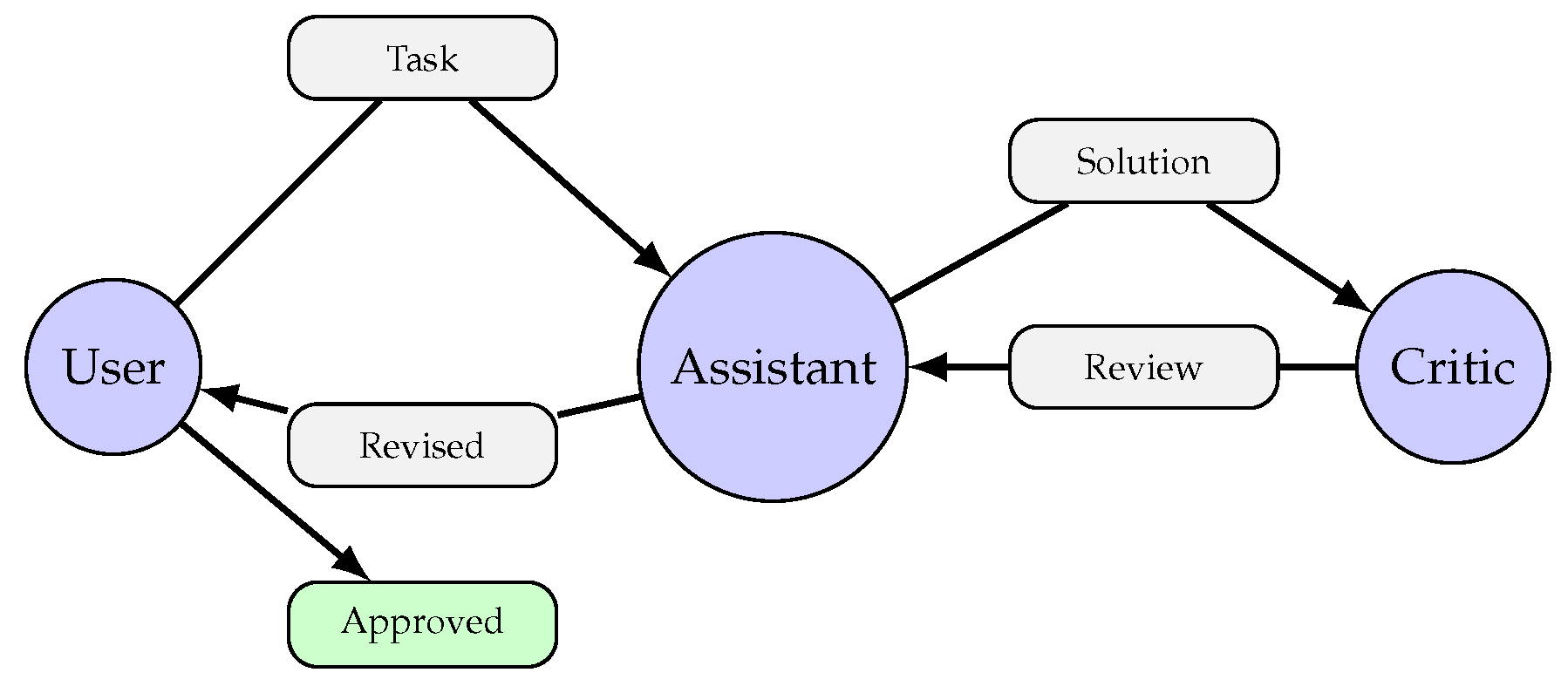

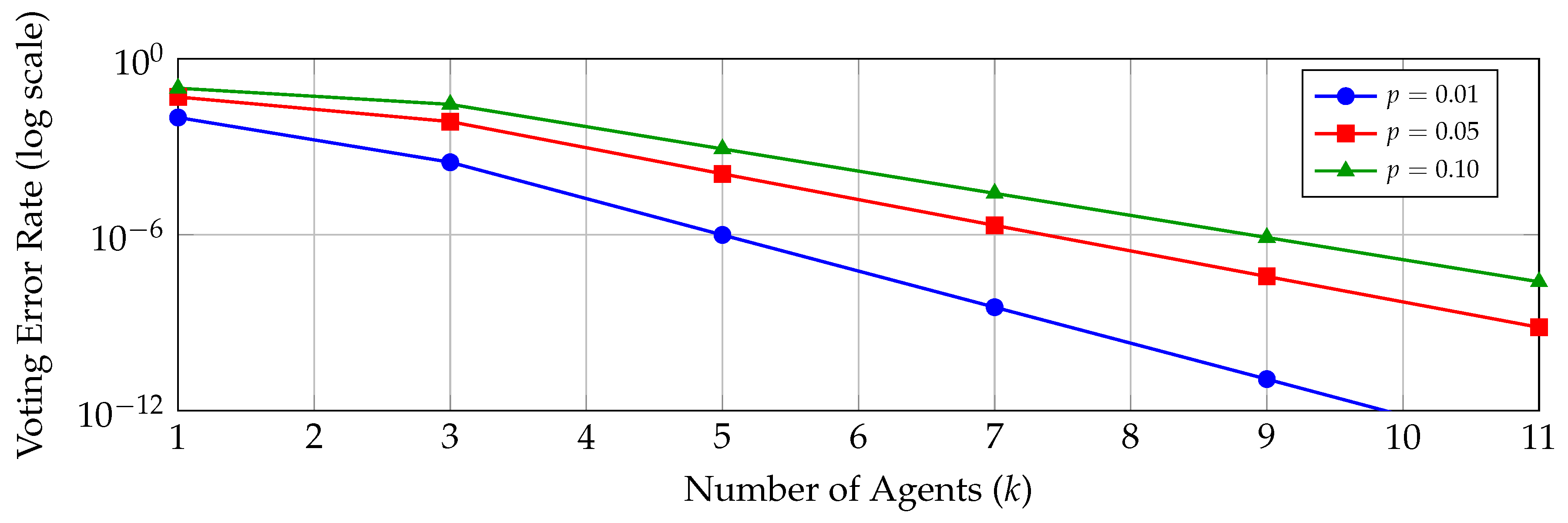

6.3. Debate and Verification Mechanisms

6.4. MAKER: Massively Decomposed Agentic Processes

6.5. Theoretical Analysis of Error Correction

6.6. Implications for Organizational-Scale Problems

7. Experimental Analysis

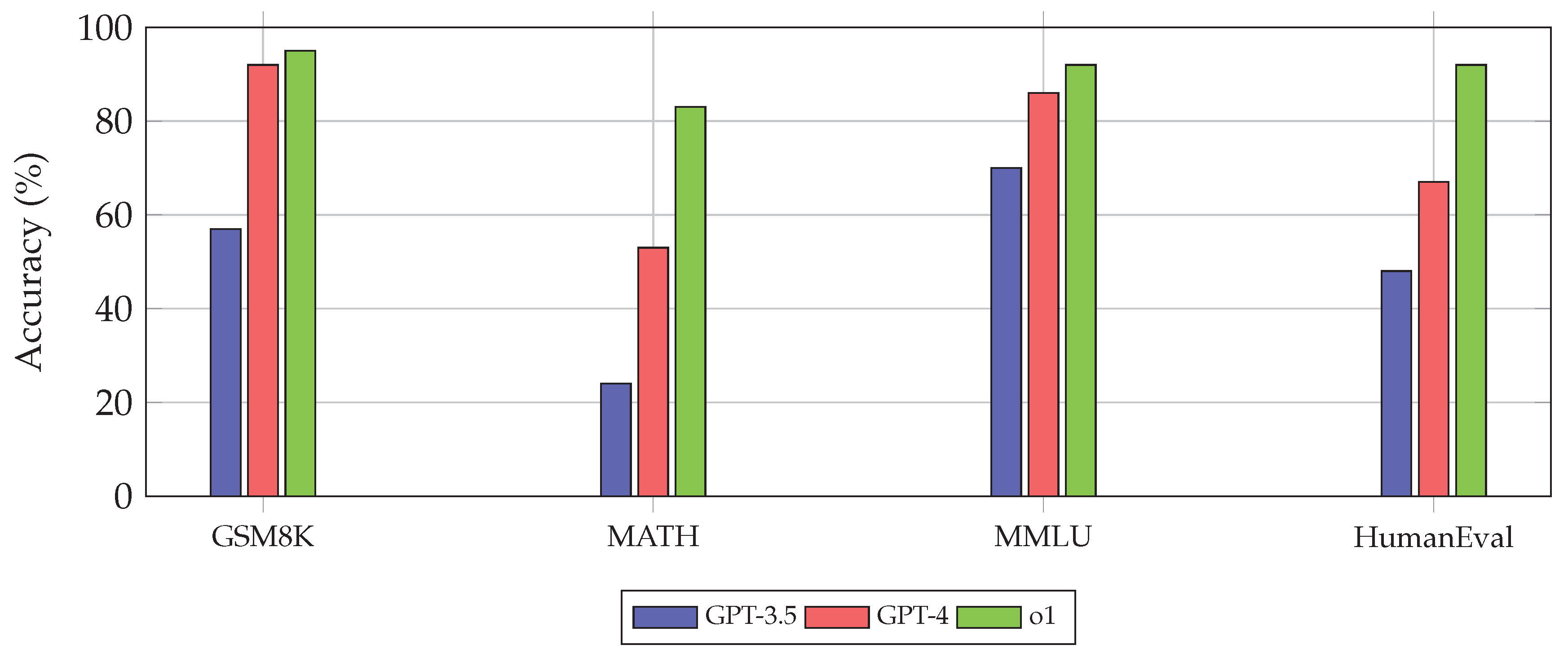

7.1. Benchmark Performance Overview

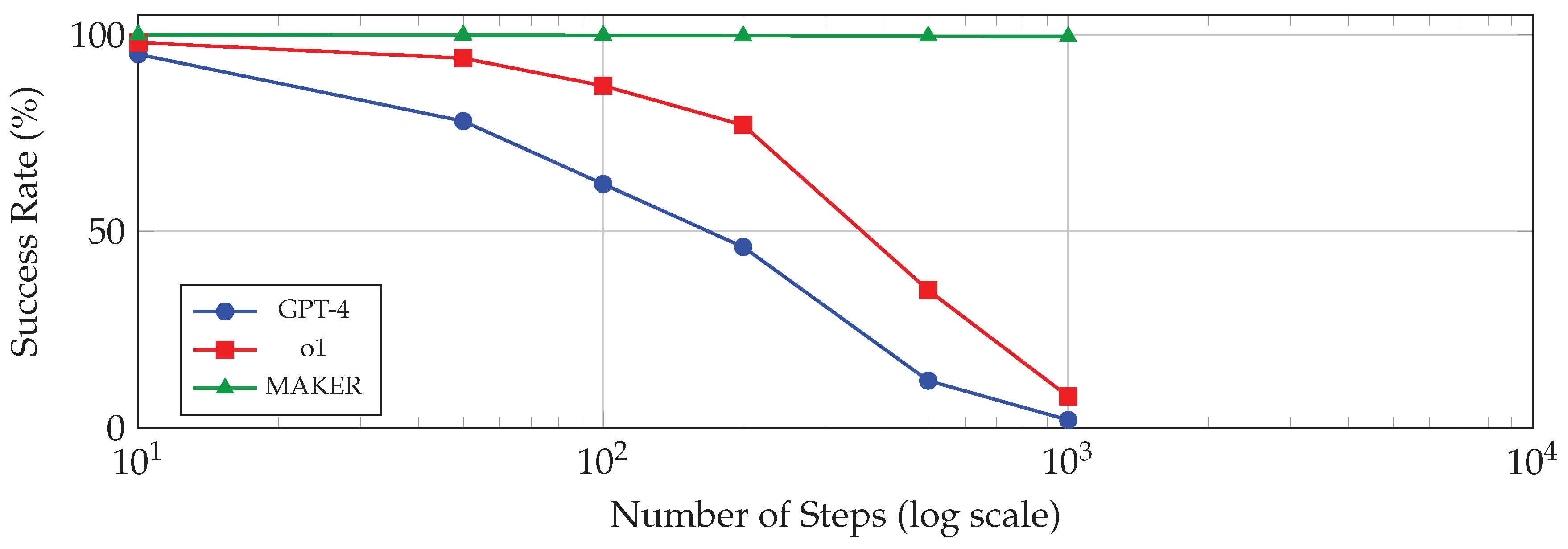

7.2. Performance on Long-Horizon Tasks

7.3. Analysis of Reasoning Errors

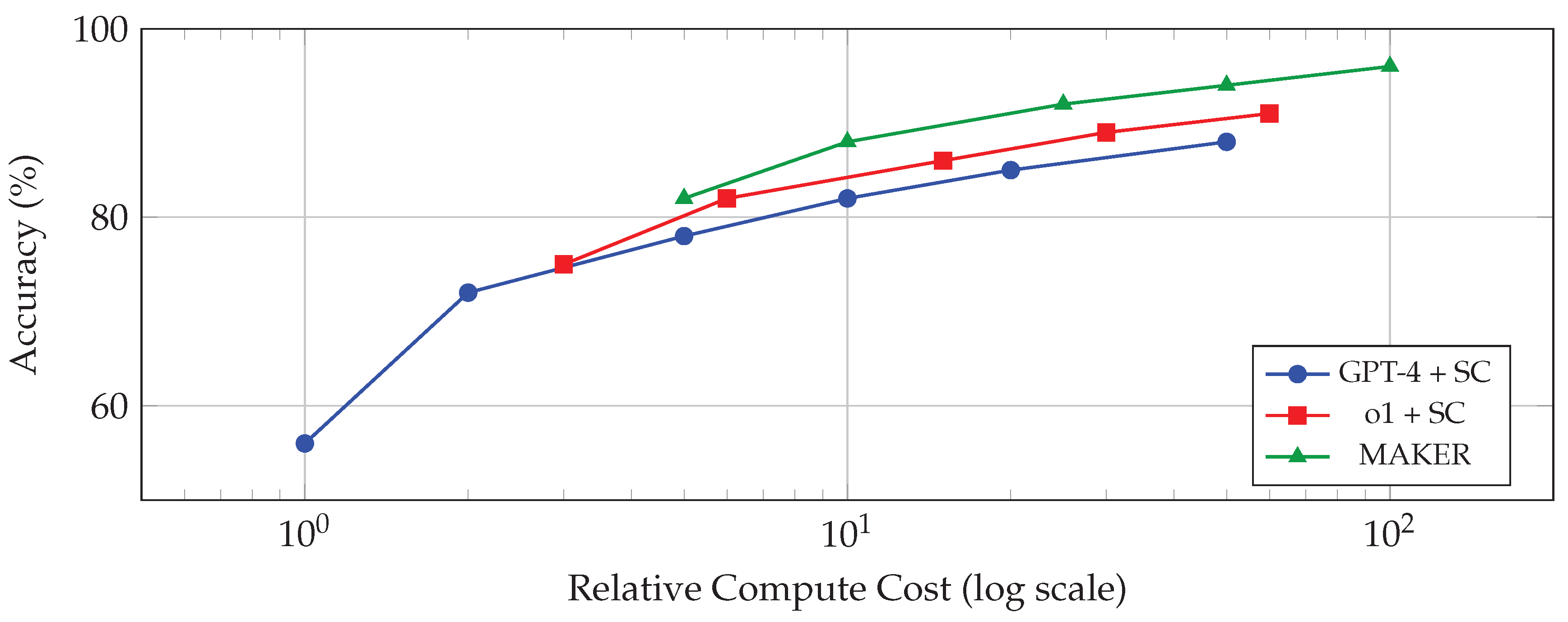

7.4. Cost-Accuracy Trade-Offs

7.5. Generalization Analysis

8. Discussion

8.1. Key Insights and Lessons Learned

8.2. The Error Propagation Barrier

8.3. Relationship to Human Reasoning

8.4. Safety and Alignment Considerations

8.5. Computational Economics

9. Future Research Directions

9.1. Compositional Generalization

9.2. Hybrid Neurosymbolic Reasoning

9.3. Scaling Multi-Agent Architectures

9.4. Continual Learning and Adaptation

9.5. Interpretability and Transparency

9.6. Multimodal Reasoning

9.7. Theoretical Foundations

9.8. Real-World Deployment Challenges

10. Conclusion

References

- Brown, T.B.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language Models are Few-Shot Learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901. [Google Scholar]

- OpenAI. GPT-4 Technical Report. arXiv 2023, arXiv:2303.08774. [Google Scholar] [CrossRef]

- Ruan, Y.; et al. Emergent Abilities in Large Language Models: A Survey. arXiv 2025, arXiv:2503.05788. [Google Scholar]

- Yu, Z.; Idris, M.Y.I.; Wang, P. Visualizing our changing Earth: A creative AI framework for democratizing environmental storytelling through satellite imagery. In Proceedings of the NeurIPS Creative AI Track, 2025. [Google Scholar]

- Yu, Z.; Idris, M.Y.I.; Wang, P.; Qureshi, R. CoTextor: Training-free modular multilingual text editing via layered disentanglement and depth-aware fusion. In Proceedings of the NeurIPS Creative AI Track, 2025. [Google Scholar]

- Yu, Z. AI for science: A comprehensive review on innovations, challenges, and future directions. International Journal of Artificial Intelligence for Science (IJAI4S) 2025, 1. [Google Scholar] [CrossRef]

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. Advances in Neural Information Processing Systems 2017, 30. [Google Scholar] [CrossRef]

- Wei, J.; Tay, Y.; Bommasani, R.; Raffel, C.; Zoph, B.; Borgeaud, S.; Yogatama, D.; Bosma, M.; Zhou, D.; Metzler, D.; et al. Emergent Abilities of Large Language Models. arXiv 2022, arXiv:2206.07682. [Google Scholar] [CrossRef]

- Snell, C.; Lee, J.; Xu, K.; Kumar, A. Scaling LLM Test-Time Compute Optimally can be More Effective than Scaling Model Parameters. arXiv 2024, arXiv:2408.03314. [Google Scholar] [CrossRef]

- Lin, S. LLM-Driven Adaptive Source-Sink Identification and False Positive Mitigation for Static Analysis. arXiv 2025, arXiv:2511.04023. [Google Scholar]

- Xin, Y.; Yan, J.; Qin, Q.; Li, Z.; Liu, D.; Li, S.; Huang, V.S.J.; Zhou, Y.; Zhang, R.; Zhuo, L.; et al. Lumina-mgpt 2.0: Stand-alone autoregressive image modeling. arXiv 2025, arXiv:2507.17801. [Google Scholar]

- Xin, Y.; Du, J.; Wang, Q.; Yan, K.; Ding, S. Mmap: Multi-modal alignment prompt for cross-domain multi-task learning. Proceedings of the AAAI Conference on Artificial Intelligence 2024, 38, 16076–16084. [Google Scholar] [CrossRef]

- Huang, J.; Chang, K.C.C. Towards Reasoning in Large Language Models: A Survey. In Proceedings of the Findings of the Association for Computational Linguistics: ACL 2023, 2023; pp. 1049–1065. [Google Scholar]

- Wang, J.; He, Y.; Zhong, Y.; Song, X.; Su, J.; Feng, Y.; Wang, R.; He, H.; Zhu, W.; Yuan, X.; et al. Twin co-adaptive dialogue for progressive image generation. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 3645–3653. [Google Scholar]

- Wu, X.; Zhang, Y.T.; Lai, K.W.; Yang, M.Z.; Yang, G.L.; Wang, H.H. A novel centralized federated deep fuzzy neural network with multi-objectives neural architecture search for epistatic detection. IEEE Transactions on Fuzzy Systems 2024, 33, 94–107. [Google Scholar] [CrossRef]

- Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; Chi, E.; Le, Q.V.; Zhou, D. Chain-of-Thought Prompting Elicits Reasoning in Large Language Models. Advances in Neural Information Processing Systems 2022, 35, 24824–24837. [Google Scholar]

- Xin, Y.; Luo, S.; Liu, X.; Zhou, H.; Cheng, X.; Lee, C.E.; Du, J.; Wang, H.; Chen, M.; Liu, T.; et al. V-petl bench: A unified visual parameter-efficient transfer learning benchmark. Advances in neural information processing systems 2024, 37, 80522–80535. [Google Scholar]

- Wang, H.; Zhang, X.; Xia, Y.; Wu, X. An intelligent blockchain-based access control framework with federated learning for genome-wide association studies. Computer Standards & Interfaces 2023, 84, 103694. [Google Scholar]

- Wang, X.; Wei, J.; Schuurmans, D.; Le, Q.V.; Chi, E.H.; Narang, S.; Chowdhery, A.; Zhou, D. Self-Consistency Improves Chain of Thought Reasoning in Language Models. In Proceedings of the International Conference on Learning Representations, 2023. [Google Scholar]

- Chen, X.; Aksitov, R.; Alon, U.; Ren, J.; Xiao, K.; Yin, P.; Prakash, S.; Sutton, C.; Wang, X.; Zhou, D. Universal Self-Consistency for Large Language Model Generation. arXiv 2024, arXiv:2311.17311. [Google Scholar]

- Xin, Y.; Zhuo, L.; Qin, Q.; Luo, S.; Cao, Y.; Fu, B.; He, Y.; Li, H.; Zhai, G.; Liu, X.; et al. Resurrect mask autoregressive modeling for efficient and scalable image generation. arXiv 2025, arXiv:2507.13032. [Google Scholar] [CrossRef]

- Tian, Y.; Yang, Z.; Liu, C.; Su, Y.; Hong, Z.; Gong, Z.; Xu, J. CenterMamba-SAM: Center-Prioritized Scanning and Temporal Prototypes for Brain Lesion Segmentation. arXiv 2025, arXiv:2511.01243. [Google Scholar]

- Xin, Y.; Luo, S.; Jin, P.; Du, Y.; Wang, C. Self-training with label-feature-consistency for domain adaptation. In Proceedings of the International Conference on Database Systems for Advanced Applications, 2023; Springer; pp. 84–99. [Google Scholar]

- Cao, Z.; He, Y.; Liu, A.; Xie, J.; Wang, Z.; Chen, F. PurifyGen: A Risk-Discrimination and Semantic-Purification Model for Safe Text-to-Image Generation. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 816–825. [Google Scholar]

- Kojima, T.; Gu, S.S.; Reid, M.; Matsuo, Y.; Iwasawa, Y. Large Language Models are Zero-Shot Reasoners. Advances in Neural Information Processing Systems 2022, 35, 22199–22213. [Google Scholar]

- Xin, Y.; Du, J.; Wang, Q.; Lin, Z.; Yan, K. Vmt-adapter: Parameter-efficient transfer learning for multi-task dense scene understanding. Proceedings of the AAAI Conference on Artificial Intelligence 2024, 38, 16085–16093. [Google Scholar] [CrossRef]

- Gao, B.; Wang, J.; Song, X.; He, Y.; Xing, F.; Shi, T. Free-Mask: A Novel Paradigm of Integration Between the Segmentation Diffusion Model and Image Editing. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 9881–9890. [Google Scholar]

- Zhou, D.; Schärli, N.; Hou, L.; Wei, J.; Scales, N.; Wang, X.; Schuurmans, D.; Cui, C.; Bousquet, O.; Le, Q.V.; et al. Least-to-Most Prompting Enables Complex Reasoning in Large Language Models. In Proceedings of the International Conference on Learning Representations, 2023. [Google Scholar]

- Yao, S.; Yu, D.; Zhao, J.; Shafran, I.; Griffiths, T.L.; Cao, Y.; Narasimhan, K. Tree of Thoughts: Deliberate Problem Solving with Large Language Models. In Proceedings of the Advances in Neural Information Processing Systems, 2023; Vol. 36. [Google Scholar]

- Yao, S.; et al. Tree of Thoughts: Deliberate Problem Solving with Large Language Models. In Proceedings of the NeurIPS, 2024. [Google Scholar]

- Yang, C.; He, Y.; Tian, A.X.; Chen, D.; Wang, J.; Shi, T.; Heydarian, A.; Liu, P. Wcdt: World-centric diffusion transformer for traffic scene generation. In Proceedings of the 2025 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2025; pp. 6566–6572. [Google Scholar]

- He, Y.; Li, S.; Li, K.; Wang, J.; Li, B.; Shi, T.; Xin, Y.; Li, K.; Yin, J.; Zhang, M.; et al. GE-Adapter: A General and Efficient Adapter for Enhanced Video Editing with Pretrained Text-to-Image Diffusion Models. Expert Systems with Applications 2025, 129649. [Google Scholar] [CrossRef]

- Besta, M.; Blach, N.; Kubicek, A.; Gerstenberger, R.; Podstawski, M.; Gianinazzi, L.; Gajda, J.; Lehmann, T.; Nyczyk, H.; Muller, P.; et al. Graph of Thoughts: Solving Elaborate Problems with Large Language Models. Proceedings of the AAAI Conference on Artificial Intelligence 2024, 38, 17682–17690. [Google Scholar] [CrossRef]

- Yao, Y.; et al. Beyond Chain-of-Thought, Effective Graph-of-Thought Reasoning in Language Models. arXiv 2024, arXiv:2305.16582. [Google Scholar]

- Liang, X.; Tao, M.; Xia, Y.; Wang, J.; Li, K.; Wang, Y.; He, Y.; Yang, J.; Shi, T.; Wang, Y.; et al. SAGE: Self-evolving Agents with Reflective and Memory-augmented Abilities. Neurocomputing 2025, 130470. [Google Scholar] [CrossRef]

- Xia, Y.; Wang, R.; Liu, X.; Li, M.; Yu, T.; Chen, X.; McAuley, J.; Li, S. Beyond Chain-of-Thought: A Survey of Chain-of-X Paradigms for LLMs. Proceedings of COLING, 2025; pp. 10795–10809. [Google Scholar]

- Chen, Q.; Qin, L.; Liu, J.; Peng, D.; Guan, J.; Wang, P.; Hu, M.; Zhou, Y.; Gao, T.; Che, W. Towards Reasoning Era: A Survey of Long Chain-of-Thought for Reasoning Large Language Models. arXiv 2025, arXiv:2503.09567. [Google Scholar]

- Xin, Y.; Luo, S.; Zhou, H.; Du, J.; Liu, X.; Fan, Y.; Li, Q.; Du, Y. Parameter-efficient fine-tuning for pre-trained vision models: A survey. arXiv e-prints 2024, arXiv–2402. [Google Scholar]

- Wu, X.; Wang, H.; Zhang, Y.; Zou, B.; Hong, H. A tutorial-generating method for autonomous online learning. IEEE Transactions on Learning Technologies 2024, 17, 1532–1541. [Google Scholar] [CrossRef]

- Ouyang, L.; Wu, J.; Jiang, X.; Almeida, D.; Wainwright, C.; Mishkin, P.; Zhang, C.; Agarwal, S.; Slama, K.; Ray, A.; et al. Training Language Models to Follow Instructions with Human Feedback. Advances in Neural Information Processing Systems 2022, 35, 27730–27744. [Google Scholar]

- Wu, X.; Zhang, Y.; Shi, M.; Li, P.; Li, R.; Xiong, N.N. An adaptive federated learning scheme with differential privacy preserving. Future Generation Computer Systems 2022, 127, 362–372. [Google Scholar] [CrossRef]

- Wu, X.; Dong, J.; Bao, W.; Zou, B.; Wang, L.; Wang, H. Augmented intelligence of things for emergency vehicle secure trajectory prediction and task offloading. IEEE Internet of Things Journal 2024, 11, 36030–36043. [Google Scholar] [CrossRef]

- Lightman, H.; Kosaraju, V.; Burda, Y.; Edwards, H.; Baker, B.; Lee, T.; Leike, J.; Schulman, J.; Sutskever, I.; Cobbe, K. Let’s Verify Step by Step. arXiv 2023, arXiv:2305.20050. [Google Scholar]

- Zhang, J.N.; et al. The Lessons of Developing Process Reward Models in Mathematical Reasoning. arXiv 2025, arXiv:2501.07301. [Google Scholar] [CrossRef]

- Wang, X.; et al. Improve Mathematical Reasoning in Language Models by Automated Process Supervision. arXiv 2024, arXiv:2406.06592. [Google Scholar]

- Wu, X.; Wang, H.; Tan, W.; Wei, D.; Shi, M. Dynamic allocation strategy of VM resources with fuzzy transfer learning method. Peer-to-Peer Networking and Applications 2020, 13, 2201–2213. [Google Scholar] [CrossRef]

- Valmeekam, K.; Marquez, M.; Olmo, A.; Sreedharan, S.; Kambhampati, S. PlanBench: An Extensible Benchmark for Evaluating Large Language Models on Planning and Reasoning about Change. In Proceedings of the Advances in Neural Information Processing Systems, 2023; Vol. 36. [Google Scholar]

- Kambhampati, S.; Valmeekam, K.; Guan, L.; Stechly, K.; Verma, M.; Bhambri, S.; Saldanha, L.; Murthy, A. LLMs Can’t Plan, But Can Help Planning in LLM-Modulo Frameworks. arXiv 2024, arXiv:2402.01817. [Google Scholar]

- Qi, H.; Hu, Z.; Yang, Z.; Zhang, J.; Wu, J.J.; Cheng, C.; Wang, C.; Zheng, L. Capacitive aptasensor coupled with microfluidic enrichment for real-time detection of trace SARS-CoV-2 nucleocapsid protein. Analytical chemistry 2022, 94, 2812–2819. [Google Scholar] [CrossRef]

- Xin, Y.; Qin, Q.; Luo, S.; Zhu, K.; Yan, J.; Tai, Y.; Lei, J.; Cao, Y.; Wang, K.; Wang, Y.; et al. Lumina-dimoo: An omni diffusion large language model for multi-modal generation and understanding. arXiv 2025, arXiv:2510.06308. [Google Scholar]

- Cao, Z.; He, Y.; Liu, A.; Xie, J.; Wang, Z.; Chen, F. CoFi-Dec: Hallucination-Resistant Decoding via Coarse-to-Fine Generative Feedback in Large Vision-Language Models. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 10709–10718. [Google Scholar]

- Meyerson, E.; Nelson, M.J.; Lehman, J.; Clune, J.; Stanley, K.O. Solving a Million-Step LLM Task with Zero Errors. arXiv 2025, arXiv:2511.09030. [Google Scholar] [CrossRef]

- Zhou, Y.; He, Y.; Su, Y.; Han, S.; Jang, J.; Bertasius, G.; Bansal, M.; Yao, H. ReAgent-V: A Reward-Driven Multi-Agent Framework for Video Understanding. arXiv 2025, arXiv:2506.01300. [Google Scholar]

- Lin, S. Abductive Inference in Retrieval-Augmented Language Models: Generating and Validating Missing Premises. arXiv 2025, arXiv:2511.04020. [Google Scholar] [CrossRef]

- Touvron, H.; Lavril, T.; Izacard, G.; Martinet, X.; Lachaux, M.A.; Lacroix, T.; Rozière, B.; Goyal, N.; Hambro, E.; Azhar, F.; et al. LLaMA: Open and Efficient Foundation Language Models. arXiv 2023, arXiv:2302.13971. [Google Scholar] [CrossRef]

- Cao, Z.; He, Y.; Liu, A.; Xie, J.; Chen, F.; Wang, Z. TV-RAG: A Temporal-aware and Semantic Entropy-Weighted Framework for Long Video Retrieval and Understanding. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 9071–9079. [Google Scholar]

- Zhang, G.; Chen, K.; Wan, G.; Chang, H.; Cheng, H.; Wang, K.; Hu, S.; Bai, L. Evoflow: Evolving diverse agentic workflows on the fly. arXiv 2025, arXiv:2502.07373. [Google Scholar] [CrossRef]

- Chen, K.; Lin, Z.; Xu, Z.; Shen, Y.; Yao, Y.; Rimchala, J.; Zhang, J.; Huang, L. R2I-Bench: Benchmarking Reasoning-Driven Text-to-Image Generation. arXiv 2025, arXiv:2505.23493. [Google Scholar]

- Chen, H.; Peng, J.; Min, D.; Sun, C.; Chen, K.; Yan, Y.; Yang, X.; Cheng, L. MVI-Bench: A Comprehensive Benchmark for Evaluating Robustness to Misleading Visual Inputs in LVLMs. arXiv 2025, arXiv:2511.14159. [Google Scholar] [CrossRef]

- Lin, S. Hybrid Fuzzing with LLM-Guided Input Mutation and Semantic Feedback. arXiv 2025, arXiv:2511.03995. [Google Scholar] [CrossRef]

- Song, X.; Chen, K.; Bi, Z.; Niu, Q.; Liu, J.; Peng, B.; Zhang, S.; Yuan, Z.; Liu, M.; Li, M.; et al. Transformer: A Survey and Application. 2025. [Google Scholar]

- Cobbe, K.; Kosaraju, V.; Bavarian, M.; Chen, M.; Jun, H.; Kaiser, L.; Plappert, M.; Tworek, J.; Hilton, J.; Nakano, R.; et al. Training Verifiers to Solve Math Word Problems. arXiv 2021, arXiv:2110.14168. [Google Scholar] [CrossRef]

- Hendrycks, D.; Burns, C.; Kadavath, S.; Arora, A.; Basart, S.; Tang, E.; Song, D.; Steinhardt, J. Measuring Mathematical Problem Solving With the MATH Dataset. In Proceedings of the Thirty-fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track, 2021. [Google Scholar]

- Hendrycks, D.; Burns, C.; Basart, S.; Zou, A.; Mazeika, M.; Song, D.; Steinhardt, J. Measuring Massive Multitask Language Understanding. In Proceedings of the International Conference on Learning Representations, 2021. [Google Scholar]

- Srivastava, A.; Rastogi, A.; Rao, A.; Shoeb, A.A.M.; Abid, A.; Fisch, A.; Brown, A.R.; Santoro, A.; Gupta, A.; Garriga-Alonso, A.; et al. Beyond the Imitation Game: Quantifying and Extrapolating the Capabilities of Language Models. Transactions on Machine Learning Research; 2023. [Google Scholar]

- Yang, Z.; Qi, P.; Zhang, S.; Bengio, Y.; Cohen, W.W.; Salakhutdinov, R.; Manning, C.D. HotpotQA: A Dataset for Diverse, Explainable Multi-hop Question Answering. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, 2018; pp. 2369–2380.

- Chen, M.; Tworek, J.; Jun, H.; Yuan, Q.; Pinto, H.P.d.O.; Kaplan, J.; Edwards, H.; Burda, Y.; Joseph, N.; Brockman, G.; et al. Evaluating Large Language Models Trained on Code. arXiv 2021, arXiv:2107.03374. [Google Scholar] [CrossRef]

- OpenAI. Learning to Reason with LLMs. 2024. Available online: https://openai.com/index/learning-to-reason-with-llms/.

- Bi, Z.; Chen, L.; Song, J.; Luo, H.; Ge, E.; Huang, J.; Wang, T.; Chen, K.; Liang, C.X.; Wei, Z.; et al. Exploring efficiency frontiers of thinking budget in medical reasoning: Scaling laws between computational resources and reasoning quality. arXiv 2025, arXiv:2508.12140. [Google Scholar] [CrossRef]

- Dong, Q.; Li, L.; Dai, D.; Zheng, C.; Wu, Z.; Chang, B.; Sun, X.; Xu, J.; Sui, Z. A Survey on In-context Learning. arXiv 2023, arXiv:2301.00234. [Google Scholar] [CrossRef]

- Agarwal, R.; et al. Many-Shot In-Context Learning. NeurIPS Spotlight; 2024. [Google Scholar]

- Qin, B.; et al. More Samples or More Prompts? Exploring Effective Few-Shot In-Context Learning for LLMs with In-Context Sampling. Findings of NAACL; 2024. [Google Scholar]

- Li, W.; et al. Process Reward Model with Q-Value Rankings. arXiv 2024, arXiv:2410.11287. [Google Scholar] [CrossRef]

- Zelikman, E.; Wu, Y.; Mu, J.; Goodman, N.D. STaR: Bootstrapping Reasoning With Reasoning. Advances in Neural Information Processing Systems 2022, 35, 15476–15488. [Google Scholar]

- DeepSeek-AI. DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. arXiv 2025, arXiv:2501.12948. [Google Scholar]

- Wang, L.; Ma, C.; Feng, X.; Zhang, Z.; Yang, H.; Zhang, J.; Chen, Z.; Tang, J.; Chen, X.; Lin, Y.; et al. A Survey on Large Language Model Based Autonomous Agents. Frontiers of Computer Science 2024, 18, 186345. [Google Scholar] [CrossRef]

- Wu, Q.; Bansal, G.; Zhang, J.; Wu, Y.; Zhang, S.; Zhu, E.; Li, B.; Jiang, L.; Zhang, X.; Wang, C. AutoGen: Enabling Next-Gen LLM Applications via Multi-Agent Conversation. arXiv 2023, arXiv:2308.08155. [Google Scholar]

- Tran, K.T.; Dao, D.; Nguyen, M.D.; Pham, Q.V.; O’Sullivan, B.; Nguyen, H.D. Multi-Agent Collaboration Mechanisms: A Survey of LLMs. arXiv 2025, arXiv:2501.06322. [Google Scholar] [CrossRef]

- Zhang, Y.; et al. Chain of Agents: Large Language Models Collaborating on Long-context Tasks. In Proceedings of the NeurIPS, 2024. [Google Scholar]

- Chen, S.; et al. Reflective Multi-Agent Collaboration based on Large Language Models. In Proceedings of the NeurIPS, 2024. [Google Scholar]

- Hong, S.; Zhuge, M.; Chen, J.; Zheng, X.; Cheng, Y.; Zhang, C.; Wang, J.; Wang, Z.; Yau, S.K.S.; Lin, Z.; et al. MetaGPT: Meta Programming for A Multi-Agent Collaborative Framework. In Proceedings of the International Conference on Learning Representations, 2024. [Google Scholar]

- Bi, Z.; Chen, K.; Wang, T.; Hao, J.; Song, X. CoT-X: An Adaptive Framework for Cross-Model Chain-of-Thought Transfer and Optimization. arXiv 2025, arXiv:2511.05747. [Google Scholar]

- Xiong, L.; et al. Confidence Improves Self-Consistency in LLMs. Findings of ACL 2025; 2025. [Google Scholar]

- Weng, Y.; et al. Large Language Models are Better Reasoners with Self-Verification. Findings of EMNLP; 2024. [Google Scholar]

- Chen, W.; Ma, X.; Wang, X.; Cohen, W.W. Program of Thoughts Prompting: Disentangling Computation from Reasoning for Numerical Reasoning Tasks. Transactions on Machine Learning Research; 2023. [Google Scholar]

- Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; Cao, Y. ReAct: Synergizing Reasoning and Acting in Language Models. In Proceedings of the International Conference on Learning Representations, 2023. [Google Scholar]

- Bai, Y.; Kadavath, S.; Kundu, S.; Askell, A.; Kernion, J.; Jones, A.; Chen, A.; Goldie, A.; Mirhoseini, A.; McKinnon, C.; et al. Constitutional AI: Harmlessness from AI Feedback. arXiv 2022, arXiv:2212.08073. [Google Scholar] [CrossRef]

- Gao, Y.; et al. Retrieval-Augmented Generation for Large Language Models: A Survey. arXiv 2024, arXiv:2312.10997. [Google Scholar] [CrossRef]

- Edge, D.; et al. GraphRAG: Unlocking LLM Discovery on Narrative Private Data. In Microsoft Research; 2024. [Google Scholar]

- Shinn, N.; Cassano, F.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: Language Agents with Verbal Reinforcement Learning. In Proceedings of the Advances in Neural Information Processing Systems, 2023; Vol. 36. [Google Scholar]

- Wang, T.; Wang, Y.; Zhou, J.; Peng, B.; Song, X.; Zhang, C.; Sun, X.; Niu, Q.; Liu, J.; Chen, S.; et al. From aleatoric to epistemic: Exploring uncertainty quantification techniques in artificial intelligence. arXiv 2025, arXiv:2501.03282. [Google Scholar] [CrossRef]

- Wang, X.; et al. Chain-of-Thought Reasoning Without Prompting. In Proceedings of the NeurIPS, 2024. [Google Scholar]

- Fang, M.; et al. Large Language Models Are Neurosymbolic Reasoners. Proceedings of AAAI; 2024. [Google Scholar]

- Sarker, M.K.; et al. Neuro-Symbolic Artificial Intelligence: Towards Improving the Reasoning Abilities of Large Language Models. arXiv 2025, arXiv:2508.13678. [Google Scholar]

- Chen, S.; Wang, T.; Jing, B.; Yang, J.; Song, J.; Chen, K.; Li, M.; Niu, Q.; Liu, J.; Peng, B.; et al. Ethics and Social Implications of Large Models. 2024. [Google Scholar]

- Press, O.; Zhang, M.; Min, S.; Schmidt, L.; Smith, N.A.; Lewis, M. Measuring and Narrowing the Compositionality Gap in Language Models. In Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2023, 2023; pp. 5687–5711. [Google Scholar]

- Dziri, N.; Lu, X.; Sclar, M.; Li, X.L.; Jiang, L.; Lin, B.Y.; West, P.; Bhagavatula, C.; Le Bras, R.; Hwang, J.D.; et al. Faith and Fate: Limits of Transformers on Compositionality. Advances in Neural Information Processing Systems 2024, 36.

- Pan, R.; et al. NeuroSymbolic LLM for Mathematical Reasoning and Software Engineering. In Proceedings of the IJCAI, 2024. [Google Scholar]

- Liu, Y.; et al. Code to Think, Think to Code: A Survey on Code-Enhanced Reasoning and Reasoning-Driven Code Intelligence in LLMs. arXiv 2025, arXiv:2502.19411. [Google Scholar]

- Yang, L.; et al. Buffer of Thoughts: Thought-Augmented Reasoning with Large Language Models. In Proceedings of the NeurIPS, 2024. [Google Scholar]

- Gu, Y.; et al. MiniLLM: Knowledge Distillation of Large Language Models. Proceedings of ICLR; 2024. [Google Scholar]

- Xu, X.; et al. A Survey on Knowledge Distillation of Large Language Models. arXiv 2024, arXiv:2402.13116. [Google Scholar] [PubMed]

- Ho, T.; et al. Beyond Answers: Transferring Reasoning Capabilities to Smaller LLMs Using Multi-Teacher Knowledge Distillation. Proceedings of WSDM; 2024. [Google Scholar]

| Model | Parameters | Year | Type |
|---|---|---|---|
| GPT-3 | 175B | 2020 | Dense |
| GPT-4 | ∼1.8T | 2023 | MoE |
| LLaMA | 7-65B | 2023 | Dense |
| LLaMA 2 | 7-70B | 2023 | Dense |
| Claude 3 | – | 2024 | Dense |
| DeepSeek-R1 | 671B | 2025 | MoE |
| OpenAI o1 | – | 2024 | Dense |

| Type | Description |
|---|---|
| Arithmetic | Mathematical calculations and word problems |
| Commonsense | Everyday knowledge inference |
| Symbolic | Rule-based symbol manipulation |
| Multi-hop | Combining multiple information sources |
| Causal | Cause-effect relationship inference |
| Analogical | Pattern-based similarity reasoning |

| Benchmark | Domain | Size | Steps | Metric | SOTA |
|---|---|---|---|---|---|
| GSM8K [62] | Math (Grade School) | 8.5K | 2-8 | Accuracy | 97.3% |
| MATH [63] | Math (Competition) | 12.5K | 5-20 | Accuracy | 85.5% |
| MMLU [64] | Multitask | 15.9K | 1-3 | Accuracy | 92.3% |
| BIG-Bench [65] | Diverse | 200+ tasks | Varies | Varies | – |
| HotpotQA [66] | Multi-hop QA | 113K | 2-4 | F1/EM | 72.0% |
| PlanBench [47] | Planning | 2K | 10-50 | Validity | 35.2% |
| HumanEval [67] | Code | 164 | Varies | Pass@1 | 92.4% |
| AIME | Math (Competition) | 30/year | 10-30 | Accuracy | 83.0% |
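
The Pass@1 metric listed for HumanEval is commonly computed with the unbiased pass@k estimator introduced alongside that benchmark [67]. The following is a minimal sketch, assuming `n` samples are generated per problem and `c` of them pass the unit tests; the function name `pass_at_k` and the example counts are illustrative and not taken from this article.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: 1 - C(n - c, k) / C(n, k)."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) is 0 when fewer than k samples fail, so the estimate is 1.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical counts: 10 samples for one problem, 3 of which pass.
print(pass_at_k(n=10, c=3, k=1))
```

For k = 1 the estimator reduces to the fraction of passing samples; a benchmark score would average this value over all problems.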

| Benchmark | Standard | CoT | Gain (pp) |
|---|---|---|---|
| GSM8K | 17.9% | 56.6% | +38.7 |
| SVAMP | 38.9% | 79.0% | +40.1 |
| ASDiv | 49.0% | 74.0% | +25.0 |
| AQuA | 25.5% | 48.3% | +22.8 |
| MAWPS | 63.3% | 93.3% | +30.0 |

| Samples | 1 | 5 | 10 | 20 | 40 | 64 |
|---|---|---|---|---|---|---|
| Accuracy (%) | 56.5 | 68.2 | 72.1 | 74.0 | 74.4 | 74.5 |
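
Self-consistency [19] samples several reasoning paths and returns the most frequent final answer. The sketch below covers only the voting step, assuming the final answers have already been extracted from each sampled chain; `majority_answer` and the sample answers are hypothetical and not taken from this article.

```python
from collections import Counter


def majority_answer(answers: list[str]) -> str:
    """Return the most frequent final answer among sampled reasoning paths."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    # Counter.most_common orders equal counts by first occurrence in the input.
    return Counter(answers).most_common(1)[0][0]


# Hypothetical final answers extracted from five sampled chains.
sampled = ["18", "18", "26", "18", "17"]
print(majority_answer(sampled))  # prints 18
```

In the table above, accuracy moves only from 74.4 at 40 samples to 74.5 at 64, which suggests that extra votes add little once the majority answer has stabilized.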

| Task | CoT | ToT | GoT |
|---|---|---|---|
| Game of 24 | 4% | 74% | – |
| Sorting | 62% | 76% | 95% |
| Crosswords | 16% | 60% | – |
| Set Operations | – | 82% | 91% |

| Method | Accuracy | Step format |
|---|---|---|
| Standard CoT | 63.1% | Natural language |
| Least-to-Most | 68.4% | Subquestions |
| PoT | 71.6% | Code execution |
| PAL | 72.0% | Code execution |

| Method | Structure | Samples | Tools | Search | Key Strength |
|---|---|---|---|---|---|
| CoT [16] | Linear | 1 | No | None | Simplicity |
| Zero-Shot CoT [25] | Linear | 1 | No | None | No demonstrations needed |
| Self-Consistency [19] | Parallel | K | No | Voting | Error reduction |
| ToT [29] | Tree | Variable | No | BFS/DFS | Exploration |
| GoT [33] | Graph | Variable | No | Custom | Aggregation |
| Least-to-Most [28] | Sequential | 1 | No | None | Compositional |
| PoT [85] | Linear | 1 | Code | None | Precise computation |
| ReAct [86] | Interleaved | 1 | Yes | None | Grounded reasoning |

| Model | MATH | AIME | Codeforces |
|---|---|---|---|
| GPT-4 | 52.9% | 12% | 11% |
| Claude 3.5 | 71.1% | – | – |
| o1-preview | 74.6% | 44% | 62% |
| o1-mini | 70.0% | 56% | 73% |
| o1 | 83.3% | 74% | 89% |
| DeepSeek-R1 | 79.8% | 79.8% | 96.3% |

| Method | Supervision | Data Cost | Focus |
|---|---|---|---|
| RLHF | Human | High | Alignment |
| PRM | Step-level | Very High | Reasoning |
| STaR | Self-generated | Low | Reasoning |
| RLAIF | AI-generated | Medium | Alignment |
| o1/R1 | RL-based | Very High | Reasoning |

| Framework | Agents | Communication | Coordination | Verification | Application |
|---|---|---|---|---|---|
| AutoGen | Dynamic | Conversational | Turn-based | Self-check | General |
| MetaGPT | Role-based | Structured SOP | Sequential | Cross-role | Software Dev |
| Debate | 2+ | Adversarial | Rounds | Judge | Truthfulness |
| Reflexion | Single | Self-reflection | Episodic | Memory | Decision-making |
| MAKER | Many | Minimal | Parallel | Voting | Long-horizon |
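
The "Voting" entry for MAKER can be related to a standard independence argument: if each of k agents answers a step correctly with probability p, a strict majority is correct with the binomial tail probability computed below. This is a simplified illustration under an independence assumption, not the voting rule actually used by MAKER [52]; the function name `majority_correct` and the example values are hypothetical.

```python
from math import comb


def majority_correct(p: float, k: int) -> float:
    """Probability that a strict majority of k independent voters is correct."""
    if k < 1 or k % 2 == 0:
        raise ValueError("k must be a positive odd integer")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    need = k // 2 + 1  # smallest number of correct votes that forms a majority
    return sum(comb(k, i) * p**i * (1 - p) ** (k - i) for i in range(need, k + 1))


# Hypothetical per-agent step accuracy of 0.9 with 1, 3, and 5 voters.
for voters in (1, 3, 5):
    print(voters, round(majority_correct(0.9, voters), 4))
```

Under these assumptions, voting helps only when p exceeds 0.5, and correlated errors across agents would reduce the benefit.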

| Model/Method | GSM8K | MATH | MMLU | HumanEval | AIME |
|---|---|---|---|---|---|
| GPT-3.5 (Standard) | 57.1 | 23.5 | 70.0 | 48.1 | – |
| GPT-4 (Standard) | 92.0 | 52.9 | 86.4 | 67.0 | 12.0 |
| GPT-4 + CoT | 94.2 | 58.4 | 87.1 | 70.2 | 14.0 |
| Claude 3 Opus | 95.0 | 60.1 | 86.8 | 84.9 | – |
| Llama 2 70B | 56.8 | 13.5 | 68.9 | 29.9 | – |
| DeepSeek-R1 | 97.3 | 79.8 | 90.8 | 71.5 | 79.8 |
| OpenAI o1 | 94.8 | 83.3 | 92.3 | 92.4 | 74.0 |
| o1 + SC@64 | 95.8 | 85.5 | – | 93.4 | 83.0 |
| Human Expert | 95+ | 90+ | 89.8 | – | 80+ |

| Model | 50 Steps | 200 Steps | 500 Steps |
|---|---|---|---|
| GPT-4 | 78.2% | 45.6% | 12.3% |
| Claude 3 Opus | 81.5% | 52.1% | 18.7% |
| OpenAI o1 | 94.3% | 76.8% | 35.2% |
| MAKER | 99.8% | 99.7% | 99.6% |
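
The drop in accuracy with task length is the pattern one would expect if per-step errors compound. The sketch below assumes each step succeeds independently with the same probability, which is an idealization and not a claim made in this article; it backs out the per-step accuracy implied by one table entry and projects it to a longer chain. The function names are hypothetical.

```python
def chain_success(p_step: float, n_steps: int) -> float:
    """Probability that all n_steps succeed when steps are independent."""
    return p_step**n_steps


def implied_step_accuracy(task_accuracy: float, n_steps: int) -> float:
    """Per-step accuracy that would yield task_accuracy over n_steps."""
    return task_accuracy ** (1.0 / n_steps)


# GPT-4 row of the table above: 78.2% at 50 steps.
p = implied_step_accuracy(0.782, 50)
print(f"implied per-step accuracy: {p:.4f}")
print(f"projected success at 200 steps: {chain_success(p, 200):.3f}")
```

Under this idealization the GPT-4 row projects to roughly 37% at 200 steps, against the 45.6% reported, so the independence assumption is only a rough guide to how errors accumulate.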

| Error Type | Frequency | Mitigation |
|---|---|---|
| Calculation | 25% | PoT/PAL |
| Logical | 30% | CoT/Verification |
| Comprehension | 20% | Prompt refinement |
| Hallucination | 15% | Grounding |
| Attention | 10% | Decomposition |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).