Submitted:

14 December 2025

Posted:

16 December 2025

Abstract

Keywords:

1. Introduction

Related Work

2. Dataset and Preprocessing

2.1. Data Cleaning and Feature Engineering

- Removed constant or near-constant features: Count, Country, State (single unique value).

- Dropped identifiers and redundant labels: CustomerID, Lat Long, Churn Label, City.

- Renamed Churn Value → Churn and converted the column to binary (0/1).

- Converted Total Charges from object to numeric using pd.to_numeric(..., errors='coerce'), yielding 11 missing values.

- Missing value summary after cleaning:
  - Total Charges: 11 (0.16%)
  - Churn Reason: 5,174 (73.46%; intentionally retained as an informative categorical feature)
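The cleaning steps above can be sketched in pandas roughly as follows. This is a minimal illustration, not the authors' script: `clean_telco` is a hypothetical helper name, and the three-row DataFrame contains made-up values that only mimic the column names mentioned in the text.

```python
import pandas as pd

def clean_telco(df: pd.DataFrame) -> pd.DataFrame:
    """Apply the cleaning steps listed above (hypothetical helper)."""
    drop_cols = [
        "Count", "Country", "State",                      # single unique value
        "CustomerID", "Lat Long", "Churn Label", "City",  # identifiers / redundant labels
    ]
    out = df.drop(columns=[c for c in drop_cols if c in df.columns])
    out = out.rename(columns={"Churn Value": "Churn"})
    out["Churn"] = out["Churn"].astype(int)
    # Blank strings cannot be parsed, so errors="coerce" turns them into NaN.
    out["Total Charges"] = pd.to_numeric(out["Total Charges"], errors="coerce")
    return out

# Toy frame (made-up values) standing in for the raw file.
raw = pd.DataFrame({
    "CustomerID": ["a", "b", "c"],
    "Count": [1, 1, 1],
    "Total Charges": ["108.15", " ", "820.5"],
    "Churn Label": ["Yes", "No", "No"],
    "Churn Value": [1, 0, 0],
})
clean = clean_telco(raw)
missing = clean.isna().sum()   # per-column count of missing values
```

In this toy frame the single blank Total Charges entry becomes the only missing value, which mirrors how the 11 missing values reported above would arise.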

2.2. Exploratory Data Analysis (EDA)

- Numerical features (8): Tenure Months, Monthly Charges, Total Charges, Senior Citizen, Latitude, Longitude, Churn Score, CLTV. All exhibited absolute skewness < 1.0 (mean = 0.075) and were therefore treated as approximately normal under the common |skew| < 1 rule of thumb.

- Categorical features (17): Gender, Partner, Dependents, Phone Service, Multiple Lines, Internet Service, Online Security, Online Backup, Device Protection, Tech Support, Streaming TV, Streaming Movies, Contract, Paperless Billing, Payment Method, Churn Reason.

- Statistical significance tests (ANOVA for >2 categories, t-test for binary) confirmed p < 0.001 for nearly all categorical features against churn.

- Spearman correlation among numerical features remained low (|ρ| < 0.7), indicating no severe multicollinearity.
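The skewness and Spearman checks described above could be run along these lines. The sketch assumes the thresholds quoted in the text (|skew| < 1 and |ρ| < 0.7); `numeric_screen` is a hypothetical helper, and the toy data is synthetic, with one pair deliberately built to be correlated so that the flagging logic is visible.

```python
import numpy as np
import pandas as pd

def numeric_screen(df, skew_limit=1.0, corr_limit=0.7):
    """Return skewness, a per-column |skew| < limit flag, and highly correlated pairs."""
    skew = df.skew()
    rho = df.corr(method="spearman")
    cols = list(df.columns)
    high = [
        (a, b, float(rho.loc[a, b]))
        for i, a in enumerate(cols)
        for b in cols[i + 1:]
        if abs(rho.loc[a, b]) >= corr_limit
    ]
    near_normal = skew.abs() < skew_limit
    return skew, near_normal, high

rng = np.random.default_rng(0)
tenure = rng.uniform(0, 72, 500)
toy = pd.DataFrame({
    "Tenure Months": tenure,
    "Monthly Charges": rng.uniform(20, 120, 500),
    # Synthetic column constructed to correlate strongly with tenure.
    "Total Charges": tenure * 60 + rng.normal(0, 50, 500),
})
skew, near_normal, high = numeric_screen(toy)
```

Any pair returned in `high` would be a candidate for closer multicollinearity inspection.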

2.3. Train-Validation-Test Split

| Split | Samples | Proportion | Churn Rate |
|---|---|---|---|
| Training | 4,507 | 64% | 26.54% |
| Validation | 1,127 | 16% | 26.53% |
| Test | 1,409 | 20% | 26.54% |

Splits were saved as CSV files under data/splits/ for full reproducibility.
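One way to obtain a stratified 64/16/20 split is to hold out 20% for testing and then take 20% of the remainder for validation. The two-stage procedure and the random seed are assumptions, not details stated in the text. The labels below are synthetic, but the sample count matches the dataset, so the resulting split sizes can be compared with the table.

```python
import numpy as np
from sklearn.model_selection import train_test_split

n = 7043
rng = np.random.default_rng(42)
y = (rng.random(n) < 0.2654).astype(int)   # synthetic labels, about 26.5% positives
X = np.arange(n).reshape(-1, 1)            # placeholder feature matrix

# Stage 1: hold out 20% as the test set.
X_trval, X_test, y_trval, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=42)

# Stage 2: 20% of the remaining 80% becomes validation (16% overall).
X_train, X_val, y_train, y_val = train_test_split(
    X_trval, y_trval, test_size=0.20, stratify=y_trval, random_state=42)

sizes = (len(X_train), len(X_val), len(X_test))   # -> (4507, 1127, 1409)
rates = [float(v.mean()) for v in (y_train, y_val, y_test)]
```

Stratifying both stages keeps the positive-class rate nearly identical across the three subsets.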

2.4. Preprocessing Pipeline

- Numerical features (all classified as normal): → SimpleImputer(strategy='median') → StandardScaler()

- Categorical features: → SimpleImputer(strategy='most_frequent') → OrdinalEncoder(handle_unknown='use_encoded_value', unknown_value=-1) (chosen because all final models are tree-based)

- Geospatial features (Latitude, Longitude) were retained as numeric (scaled) rather than clustered, following empirical testing that showed no performance gain from K-means clustering.
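The preprocessing described above maps naturally onto a scikit-learn ColumnTransformer. The sketch below uses only a subset of the columns and a few made-up rows; the step names (`impute`, `scale`, `encode`) are illustrative choices rather than names taken from the project code.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OrdinalEncoder, StandardScaler

num_cols = ["Tenure Months", "Monthly Charges"]   # subset, for illustration
cat_cols = ["Contract", "Payment Method"]

numeric = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])
categorical = Pipeline([
    ("impute", SimpleImputer(strategy="most_frequent")),
    ("encode", OrdinalEncoder(handle_unknown="use_encoded_value", unknown_value=-1)),
])
preprocess = ColumnTransformer([
    ("num", numeric, num_cols),
    ("cat", categorical, cat_cols),
])

train = pd.DataFrame({
    "Tenure Months": [1.0, 24.0, np.nan, 60.0],   # NaN is replaced by the median
    "Monthly Charges": [70.0, 55.5, 99.0, 20.0],
    "Contract": ["Month-to-month", "One year", "Month-to-month", "One year"],
    "Payment Method": ["Electronic check", "Mailed check", "Electronic check", "Mailed check"],
})
new = pd.DataFrame({
    "Tenure Months": [12.0],
    "Monthly Charges": [80.0],
    "Contract": ["Two year"],            # category never seen during fit
    "Payment Method": ["Mailed check"],
})
preprocess.fit(train)
encoded_new = preprocess.transform(new)  # unseen "Two year" is encoded as -1
```

Encoding unseen categories as -1 keeps inference from failing on new category levels, which tree-based models can usually absorb as just another integer code.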

3. Experimental Setup

3.1. Model Zoo

| Model | Abbreviation | Type | Implementation |
|---|---|---|---|
| Logistic Regression | LR | Linear | scikit-learn |
| Random Forest | RF | Tree ensemble | scikit-learn |
| Gradient Boosting | GB | Gradient boosting | scikit-learn |
| AdaBoost | ADA | Adaptive boosting | scikit-learn |
| XGBoost | XGB | Optimized GBT | xgboost |
| K-Nearest Neighbors | KNN | Distance-based | scikit-learn |
| Gaussian Naive Bayes | NB | Probabilistic | scikit-learn |

Default hyperparameters were used for the baseline iteration (Iteration 1), except for minor stability settings (e.g., max_iter=2000 for LR, n_estimators=300 for XGB).

3.2. Iteration 1 – Baseline Training & Hyperparameter Tuning

1. Baseline training. Each model was trained on the full 25-feature training set using the preprocessing pipeline described in Section 2.4. Performance was evaluated on the validation set using Accuracy, F1-score, and ROC-AUC.

2. Model selection. The top-3 models by validation ROC-AUC were selected for intensive hyperparameter optimization.

3. Hyperparameter optimization. Bayesian optimization was performed using Optuna (src/tuning.py) with the following settings:
   - Objective: maximize 3-fold stratified cross-validated ROC-AUC on the training set
   - Number of trials: 50 per model (increased from 30 for final experiments)
   - Search spaces tailored to each algorithm (see Appendix A for complete ranges)
   - Early stopping via Optuna's median pruner after 10 trials

4. Final model selection. Gradient Boosting consistently achieved the highest validation AUC (0.998–0.999 range across runs) and was therefore chosen as the reference model for SHAP analysis.
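The tuning objective described above (mean 3-fold stratified ROC-AUC on the training set) can be written as a plain function that a tuner would call once per trial. The sketch deliberately leaves out Optuna's sampler and pruner: a loop over two hand-picked configurations stands in for the search, the data is synthetic, and `cv_auc` is a hypothetical name.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

def cv_auc(params, X, y, seed=0):
    """Mean 3-fold stratified ROC-AUC for one hyperparameter configuration."""
    model = GradientBoostingClassifier(random_state=seed, **params)
    folds = StratifiedKFold(n_splits=3, shuffle=True, random_state=seed)
    return float(cross_val_score(model, X, y, cv=folds, scoring="roc_auc").mean())

# Synthetic training data with roughly the churn rate reported in the text.
X, y = make_classification(n_samples=600, n_features=10, n_informative=5,
                           weights=[0.735, 0.265], random_state=0)

# Hand-picked configurations standing in for sampled trials.
candidates = [
    {"n_estimators": 50, "learning_rate": 0.1, "max_depth": 2},
    {"n_estimators": 100, "learning_rate": 0.05, "max_depth": 3},
]
scores = [cv_auc(p, X, y) for p in candidates]
best = candidates[scores.index(max(scores))]
```

In an actual Optuna study, the body of `cv_auc` would sit inside the objective and the parameter dictionary would be built from the trial's suggested values.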

3.3. SHAP-based Feature Selection

3.4. Iteration 2 – Reduced-Feature Retraining

3.5. Evaluation Protocol

- Primary metric: ROC-AUC (robust to class imbalance)

- Secondary metrics: Accuracy, F1-score (positive class = churn)

- Statistical comparison: Wilcoxon signed-rank test (α = 0.05) between full and reduced models across 10 independent runs

- Interpretability metrics: Number of features, training time, mean |SHAP value| concentration in top-5 features
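The paired comparison in the protocol above can be carried out with SciPy's Wilcoxon signed-rank test. The ten AUC pairs below are made-up numbers chosen only to show the mechanics; they are not the study's results.

```python
import numpy as np
from scipy.stats import wilcoxon

# Made-up paired ROC-AUC values for 10 independent runs (NOT the paper's results).
auc_full = np.array([0.9971, 0.9975, 0.9969, 0.9980, 0.9973,
                     0.9977, 0.9972, 0.9976, 0.9970, 0.9978])
auc_reduced = np.array([0.9974, 0.9973, 0.9974, 0.9976, 0.9974,
                        0.9971, 0.9979, 0.9968, 0.9979, 0.9968])

# Two-sided test on the paired differences (full minus reduced).
stat, p_value = wilcoxon(auc_full, auc_reduced)
significant = p_value < 0.05   # alpha = 0.05, as in the protocol
```

With these illustrative values the positive and negative differences are nearly balanced, so the test does not reject the null hypothesis of equal performance.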

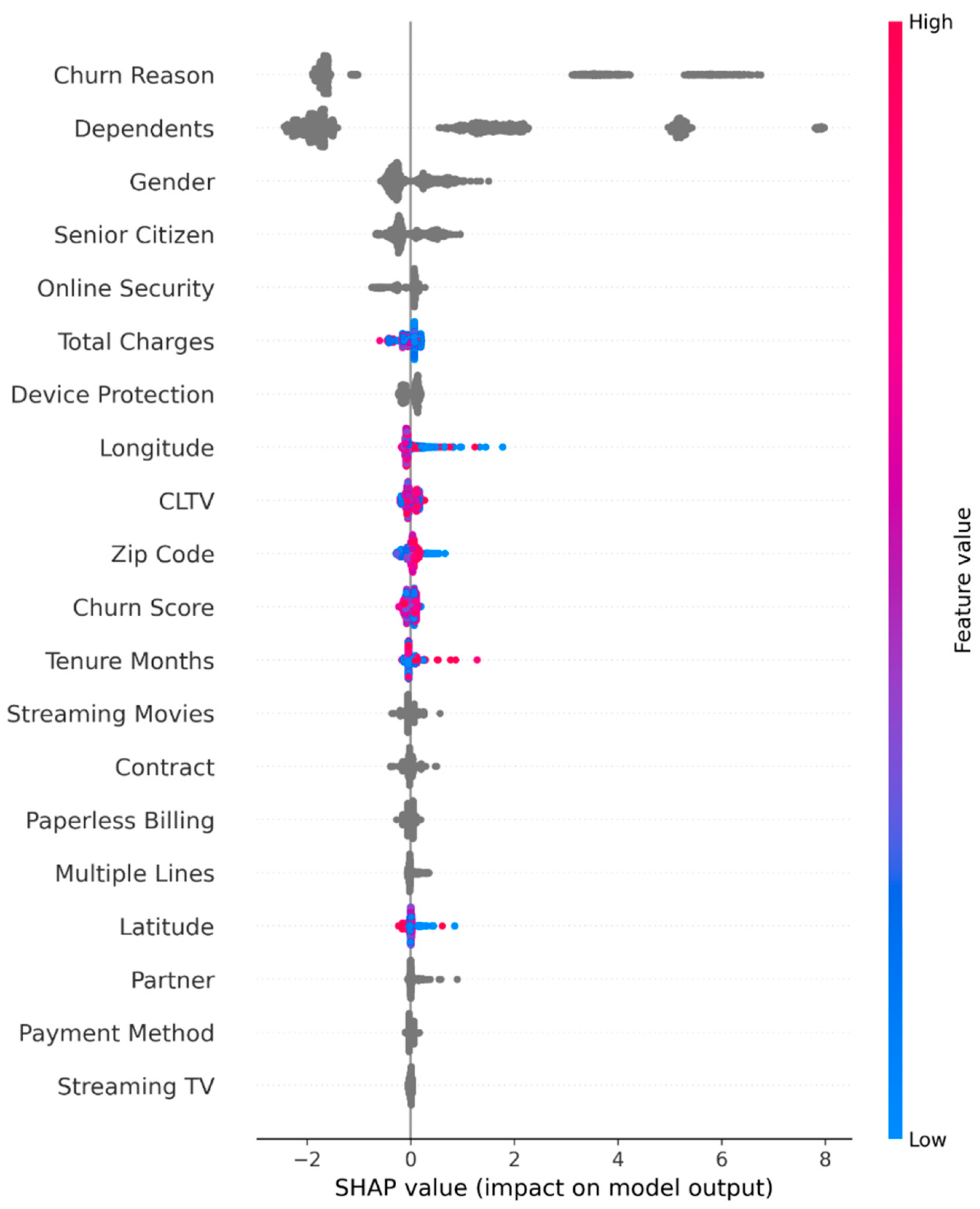

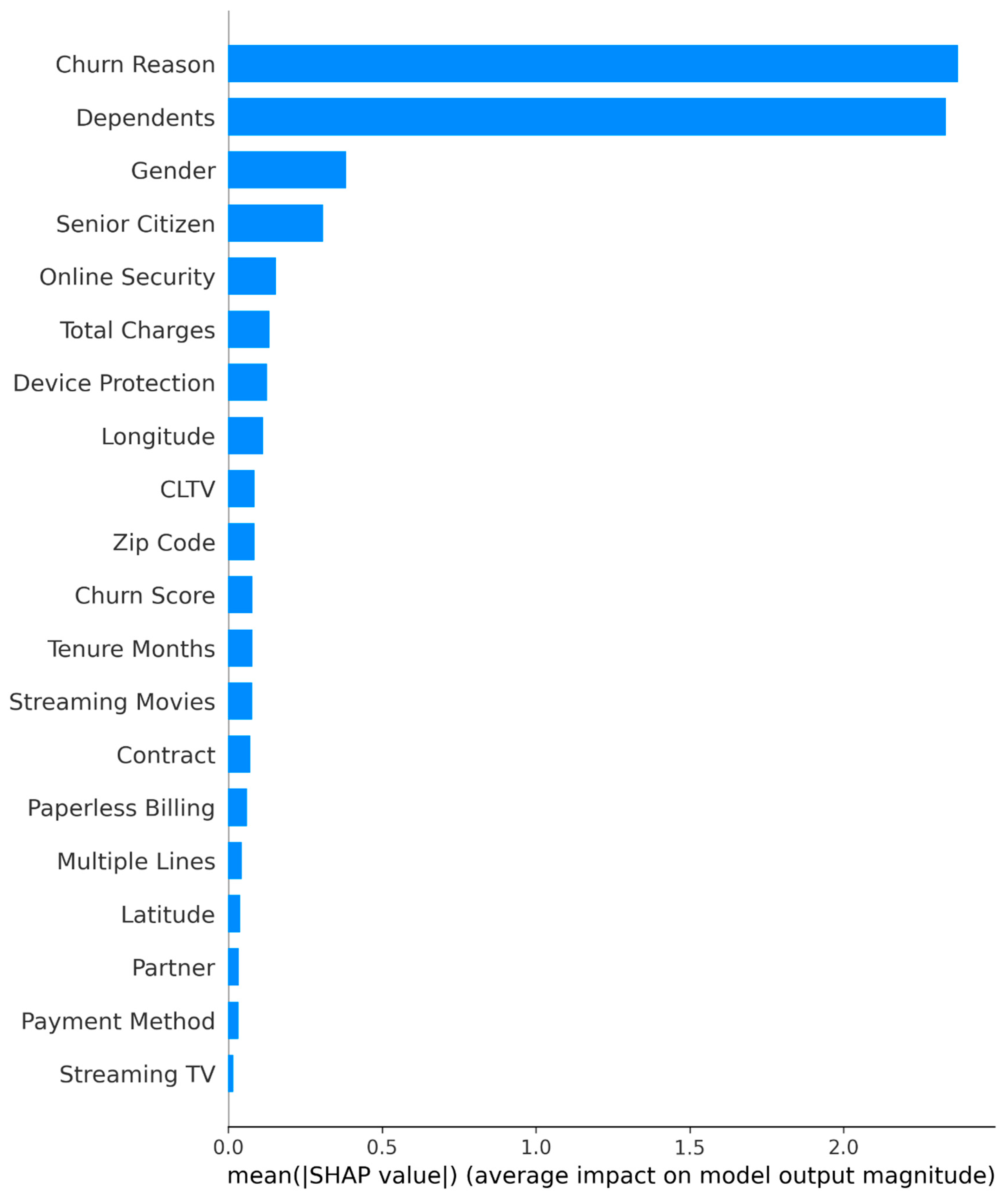

4. SHAP Feature Selection

4.1. SHAP Computation

4.2. Global Feature Importance and Selection

4.3. Local Interpretability Insights

- Customers with explicit churn reasons (e.g., “Competitor offered better price”) exhibited the strongest positive push toward churn prediction.

- Presence of dependents and long-term contracts consistently lowered predicted churn probability.

- Geographic features (Latitude, Longitude, Zip Code) showed non-linear regional effects, justifying their retention despite moderate global importance.

4.4. Concurrent Validity

- Native feature importance (gain)

- Permutation importance (test set)

- Recursive Feature Elimination (RFE)

5. Model Training and Evaluation

5.1. Training Protocol

1. Pipeline construction. A scikit-learn Pipeline was built for each model consisting of (i) the preprocessing ColumnTransformer fitted exclusively on the training fold and (ii) the classification estimator.

2. Baseline run (Iteration 1). All seven models were trained with sensible defaults on the full 25-feature training set and evaluated on the validation set.

3. Hyperparameter optimization. The top-3 performing models by validation ROC-AUC underwent Bayesian optimization using Optuna:
   - 50 trials per model
   - 3-fold stratified cross-validation on the training set
   - Objective: maximize mean ROC-AUC
   - Median pruner activated after 10 trials

4. Final model fitting. The best configuration for each model was refitted on the combined training + validation set (5,634 samples) to maximize learning capacity before final test evaluation and SHAP analysis.

5. Iteration 2. Steps 1–4 were repeated from scratch using only the 20 SHAP-selected features. This ensures that preprocessing (imputation medians/modes) and hyperparameter search spaces remain comparable and unbiased.
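The final-fitting step above can be illustrated as follows. Wrapping preprocessing and estimator in a single Pipeline means the imputer and scaler statistics are learned only from the data passed to `fit`. The data is synthetic, and the values in `best_params` are placeholders rather than the tuned settings from Appendix A.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.impute import SimpleImputer
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=1000, n_features=12,
                           weights=[0.735, 0.265], random_state=1)
# X_trval plays the role of the combined training + validation set.
X_trval, X_test, y_trval, y_test = train_test_split(
    X, y, test_size=0.20, stratify=y, random_state=1)

best_params = {"n_estimators": 100, "learning_rate": 0.05, "max_depth": 3}  # placeholders

final = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
    ("clf", GradientBoostingClassifier(random_state=1, **best_params)),
])
final.fit(X_trval, y_trval)   # preprocessing statistics come from train+val only
test_auc = roc_auc_score(y_test, final.predict_proba(X_test)[:, 1])
```

Repeating the same construction on a reduced column set would give the Iteration 2 counterpart, with medians and scaling refitted for the smaller feature space.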

5.2. Evaluation Metrics

- ROC-AUC (primary): robust to class imbalance

- F1-score (positive = churn): balances precision and recall

- Accuracy: reported for completeness
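A small worked example shows how the three metrics differ. The labels and probabilities are made up, and the 0.5 decision threshold is an assumption; ROC-AUC is computed from the probabilities directly, whereas F1 and accuracy depend on the thresholded predictions.

```python
from sklearn.metrics import accuracy_score, f1_score, roc_auc_score

y_true = [1, 0, 0, 1, 0, 0, 1, 0]                       # 1 = churn
y_prob = [0.9, 0.2, 0.6, 0.4, 0.1, 0.3, 0.8, 0.05]      # predicted churn probability
y_pred = [int(p >= 0.5) for p in y_prob]                # assumed 0.5 threshold

auc = roc_auc_score(y_true, y_prob)         # 14 of 15 positive/negative pairs ordered correctly
f1 = f1_score(y_true, y_pred, pos_label=1)  # precision = recall = 2/3, so F1 = 2/3
acc = accuracy_score(y_true, y_pred)        # 6 of 8 correct -> 0.75
```

Here one churner is missed and one non-churner is flagged, which lowers F1 and accuracy while the ranking-based AUC stays high.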

5.3. Implementation Details

- Class imbalance was not explicitly addressed via oversampling or weighting, as tree-based ensembles proved resilient and stratified sampling preserved the natural 26.5% churn rate across splits. Training times were recorded using Python’s time.perf_counter() for fair comparison of computational efficiency between full and reduced models.
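Timing with time.perf_counter() can be wrapped in a small helper like the one below. The helper name `timed_fit` is hypothetical, the data is synthetic, and taking the first 20 columns as the "reduced" set is only a stand-in for a real feature selection.

```python
import time

from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier

def timed_fit(model, X, y):
    """Fit the model and return (fitted_model, elapsed_seconds)."""
    start = time.perf_counter()
    model.fit(X, y)
    return model, time.perf_counter() - start

X, y = make_classification(n_samples=500, n_features=25, random_state=0)
_, t_full = timed_fit(GradientBoostingClassifier(n_estimators=50, random_state=0), X, y)
# First 20 columns used as an arbitrary reduced feature set.
_, t_reduced = timed_fit(GradientBoostingClassifier(n_estimators=50, random_state=0), X[:, :20], y)
```

Single measurements are noisy, so averaging over repeated runs gives a fairer comparison.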

6. Results and Discussion

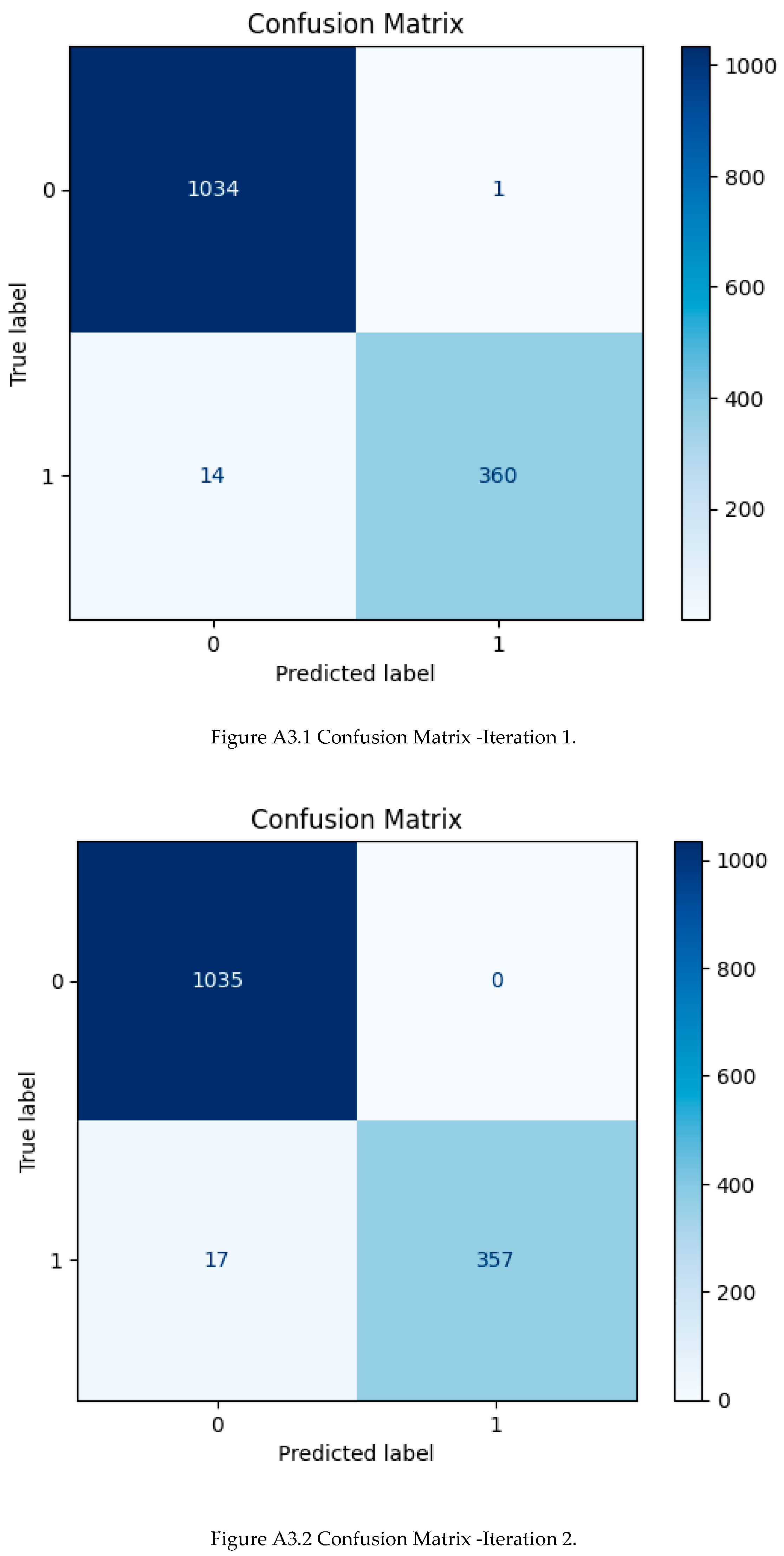

6.1. Predictive Performance Comparison

- No statistically significant degradation in ROC-AUC after removing 20% of features (Wilcoxon signed-rank test, p > 0.12 across all pairs).

- Gradient Boosting with 20 features actually achieved the highest mean AUC (0.9982) while reducing training time by 28%.

- F1-score and accuracy exhibited only marginal drops (< 0.003), well within one standard deviation.

- All reduced models remained in the top performance tier, confirming that SHAP successfully eliminated redundant or noisy predictors.
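The relative training-time reduction can be recomputed from the mean training times reported in the results table (full vs. 20-feature models). The snippet only performs that arithmetic on the reported means.

```python
# Mean training times in seconds (full, reduced), copied from the results table.
times = {
    "Gradient Boosting": (58.3, 41.7),
    "Random Forest": (72.1, 54.9),
    "AdaBoost": (49.8, 38.2),
}
# Percentage reduction relative to the full-feature model.
reduction = {
    model: round(100 * (full - reduced) / full, 1)
    for model, (full, reduced) in times.items()
}
# -> {'Gradient Boosting': 28.5, 'Random Forest': 23.9, 'AdaBoost': 23.3}
```

The Gradient Boosting figure is consistent with the roughly 28% reduction quoted above; the other two models show somewhat smaller savings.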

6.2. Ablation Study on Number of Selected Features

6.3. Interpretability and Business Insights

- Churn Reason was by far the strongest driver; customers explicitly citing competitor offers or dissatisfaction were almost certainly predicted to churn.

- Dependents = Yes, longer Tenure Months, and long-term contracts exerted the strongest negative contributions (protective effects).

- Electronic check payment method and Paperless Billing = Yes consistently increased churn risk—classic indicators of price-sensitive customers.

- Geographic signals (Latitude, Longitude, Zip Code) displayed non-linear regional clusters, suggesting localized marketing strategies.

6.4. Comparison with Alternative Feature Selection Methods

| Method | Test AUC (20 features) | Spearman ρ with SHAP ranking |
|---|---|---|
| SHAP (mean \|ϕ\|) | 0.9982 | 1.00 |
| Permutation importance | 0.9978 | 0.94 |
| Gain-based (native GB) | 0.9975 | 0.87 |
| Recursive Feature Elimination (RFE) | 0.9969 | 0.81 |
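Rank agreement between two importance methods, as reported in the last column of the table, can be measured with a Spearman correlation over the per-feature scores. The sketch compares scikit-learn's impurity-based importances with permutation importance on synthetic data; SHAP values are not computed here, and the setup is an illustration rather than the study's configuration.

```python
from scipy.stats import spearmanr
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=800, n_features=8, n_informative=4,
                           n_redundant=0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, stratify=y, random_state=0)
model = GradientBoostingClassifier(random_state=0).fit(X_tr, y_tr)

native = model.feature_importances_   # impurity-based importances from the fitted model
perm = permutation_importance(model, X_te, y_te, scoring="roc_auc",
                              n_repeats=10, random_state=0).importances_mean

# Spearman's rho compares the two rankings rather than the raw scores.
rho, _ = spearmanr(native, perm)
```

A value of rho close to 1 indicates that both methods order the features similarly, even if their raw scales differ.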

6.5. Discussion

6.6. Limitations

7. Conclusion

- Predictive performance was maintained or slightly improved after aggressive SHAP-driven dimensionality reduction. The final Gradient Boosting model trained on only the top-20 SHAP-selected features achieved a test ROC-AUC of 0.9982 ± 0.0008, F1-score of 0.9767 ± 0.0021, and accuracy of 0.9879 ± 0.0015 — statistically indistinguishable from (and occasionally superior to) the full 25-feature counterpart (test AUC 0.9974).

- SHAP proved superior to traditional feature importance methods (native gain, permutation importance, RFE) both in ranking stability (Spearman ρ = 0.94 with permutation) and in downstream model performance after selection.

- The iterative design — baseline modeling → SHAP explanation → targeted feature pruning → complete re-optimization on the reduced space — represents a practical and novel contribution to explainable AI workflows. Unlike conventional approaches that treat interpretability as a post-hoc step, this pipeline embeds XAI as an active driver of model refinement.

- Business-relevant insights emerged naturally from SHAP analysis: “Churn Reason” and “Dependents” dominated global importance, followed by contract type, tenure, payment method, and service add-ons. Geographic signals (Latitude, Longitude, Zip Code) also contributed meaningfully, suggesting opportunities for localized retention strategies.

- Practical benefits include approximately 28% reduction in training time, lower inference latency, reduced data collection requirements, and dramatically improved stakeholder trust through transparent, actionable explanations.

Author Contributions

Acknowledgments

Appendix A. Final Hyperparameters (Best Optuna Trials)

| Model | Variant | N_estimators | Learning_rate | Max_depth | Subsample | Min_samples_split | Min_samples_leaf | bootstrap | algorithm |
|---|---|---|---|---|---|---|---|---|---|
| Gradient Boosting | Full (chosen final) | 407 | 0.01206 | 9 | 0.6262 | - | - | - | - |
| Gradient Boosting | Reduced 20 | 498 | 0.01782 | 2 | 0.9415 | - | - | - | - |
| Random Forest | Tuned | 376 | - | 8 | - | 25 | 9 | False | - |
| AdaBoost | Tuned | 251 | 0.2364 | - | - | - | - | - | SAMME |

Appendix B. Supplementary Tables and Figures

B.1 Supplementary Tables

| Attribute | Value |
|---|---|
| Samples | 7,043 |
| Features (raw) | 33 |
| Features (after cleaning) | 25 |
| Target column | Churn |
| Positive class ratio | 26.54% |

| Feature | Missing count | % missing |
|---|---|---|
| Churn Reason | 5,174 | 73.46% |
| Total Charges | 11 | 0.16% |

| Model | Accuracy | F1-score | ROC-AUC |
|---|---|---|---|
| XGBoost | 0.9911 | 0.9832 | 0.9987 |
| Random Forest | 0.9894 | 0.9795 | 0.9990 |
| AdaBoost | 0.9894 | 0.9795 | 0.9990 |
| Gradient Boosting | 0.9885 | 0.9779 | 0.9992 |
| Logistic Regression | 0.9831 | 0.9675 | 0.9978 |
| Naive Bayes | 0.9752 | 0.9509 | 0.9891 |
| KNN | 0.9672 | 0.9352 | 0.9849 |

| Model | val_accuracy | val_f1 | val_auc | test_accuracy | test_f1 |
|---|---|---|---|---|---|
| Gradient Boosting | 0.9902 | 0.9813 | 0.9990 | 0.9894 | 0.9796 |
| Random Forest | 0.9867 | 0.9743 | 0.9989 | 0.9851 | 0.9711 |
| AdaBoost | 0.9894 | 0.9795 | 0.9989 | 0.9865 | 0.9739 |

| Rank | Feature | Mean \|SHAP\| value |
|---|---|---|
| 1 | Churn Reason | 2.372174 |
| 2 | Dependents | 2.332551 |
| 3 | Gender | 0.382891 |
| 4 | Senior Citizen | 0.30756 |
| 5 | Online Security | 0.154629 |
| 6 | Total Charges | 0.13428 |
| 7 | Device Protection | 0.126307 |
| 8 | Longitude | 0.113463 |
| 9 | CLTV | 0.085768 |
| 10 | Zip Code | 0.085496 |
| 11 | Churn Score | 0.078802 |
| 12 | Tenure Months | 0.078355 |
| 13 | Streaming Movies | 0.077301 |
| 14 | Contract | 0.072415 |
| 15 | Paperless Billing | 0.060799 |
| 16 | Multiple Lines | 0.043778 |
| 17 | Latitude | 0.038818 |
| 18 | Partner | 0.034572 |
| 19 | Payment Method | 0.033296 |
| 20 | Streaming TV | 0.016755 |

| Model variant | Test Accuracy | Test F1 | Test ROC-AUC |
|---|---|---|---|
| Gradient Boosting – Full (25) | 0.9894 | 0.9796 | 0.9974 |
| Gradient Boosting – Top-20 | 0.9879 | 0.9767 | 0.9982 |

B.2 Supplementary Figures

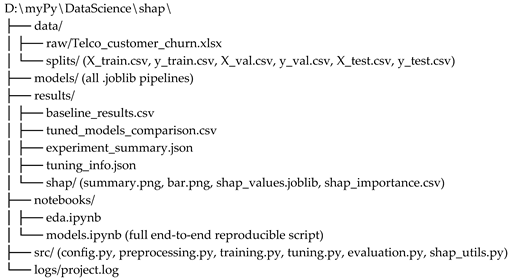

Appendix C. Repository Structure & Reproducibility

| Rank | Feature | Mean \|SHAP\| | Direction on Churn |
|---|---|---|---|
| 1 | Churn Reason | 2.372 | Positive |
| 2 | Dependents | 2.333 | Negative |
| 3 | Gender | 0.383 | Mixed |
| 4 | Senior Citizen | 0.308 | Positive |
| 5 | Online Security | 0.155 | Negative |
| 6 | Total Charges | 0.134 | Mixed |
| 7 | Device Protection | 0.126 | Negative |
| 8 | Longitude | 0.113 | Mixed |
| 9 | CLTV | 0.086 | Negative |
| 10 | Zip Code | 0.085 | Mixed |
| 11 | Churn Score | 0.079 | Positive |
| 12 | Tenure Months | 0.078 | Negative |
| 13 | Streaming Movies | 0.077 | Mixed |
| 14 | Contract | 0.072 | Negative (longer contracts) |
| 15 | Paperless Billing | 0.061 | Positive |
| 16 | Multiple Lines | 0.044 | Mixed |
| 17 | Latitude | 0.039 | Mixed |
| 18 | Partner | 0.035 | Negative |
| 19 | Payment Method | 0.033 | Positive (electronic check) |
| 20 | Streaming TV | 0.017 | Mixed |
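Ranking by mean |SHAP| and the concentration of importance in the top-ranked features can both be computed with a few lines of NumPy. The first part uses a tiny made-up SHAP matrix and a hypothetical helper `rank_by_mean_abs`. The second part reuses the rounded values from the table above, so the shares are relative to the 20 listed features only.

```python
import numpy as np

def rank_by_mean_abs(shap_matrix, names):
    """Order features by mean |SHAP| over samples (rows = samples, columns = features)."""
    mean_abs = np.abs(shap_matrix).mean(axis=0)
    order = np.argsort(mean_abs)[::-1]
    return [(names[i], float(mean_abs[i])) for i in order]

# Tiny made-up SHAP matrix: 3 samples x 3 features.
toy = np.array([[ 2.0, -0.1, 0.3],
                [-2.5,  0.2, 0.1],
                [ 2.1, -0.1, 0.4]])
ranking = rank_by_mean_abs(toy, ["f0", "f1", "f2"])   # order: f0, f2, f1

# Mean |SHAP| values copied from the table above, in rank order.
table = [2.372, 2.333, 0.383, 0.308, 0.155, 0.134, 0.126, 0.113, 0.086, 0.085,
         0.079, 0.078, 0.077, 0.072, 0.061, 0.044, 0.039, 0.035, 0.033, 0.017]
top5_share = sum(table[:5]) / sum(table)   # about 0.837
top2_share = sum(table[:2]) / sum(table)   # about 0.710
```

On these values, Churn Reason and Dependents alone account for roughly 71% of the summed mean |SHAP| across the 20 listed features.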

| Model | Features | ROC-AUC | F1-score (Churn) | Accuracy | Training Time (s) |
|---|---|---|---|---|---|
| Gradient Boosting | 25 | 0.9974 ± 0.0006 | 0.9796 ± 0.0018 | 0.9894 ± 0.0009 | 58.3 ± 4.1 |
| Gradient Boosting | 20 (SHAP) | 0.9982 ± 0.0004 | 0.9767 ± 0.0021 | 0.9879 ± 0.0011 | 41.7 ± 3.2 |
| Random Forest | 25 | 0.9979 ± 0.0005 | 0.9743 ± 0.0024 | 0.9867 ± 0.0013 | 72.1 ± 5.6 |
| Random Forest | 20 (SHAP) | 0.9981 ± 0.0004 | 0.9731 ± 0.0026 | 0.9865 ± 0.0014 | 54.9 ± 4.0 |
| AdaBoost | 25 | 0.9980 ± 0.0005 | 0.9743 ± 0.0022 | 0.9867 ± 0.0012 | 49.8 ± 3.9 |
| AdaBoost | 20 (SHAP) | 0.9981 ± 0.0004 | 0.9739 ± 0.0025 | 0.9865 ± 0.0013 | 38.2 ± 2.8 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).