Submitted: 10 December 2025

Posted: 14 December 2025

Abstract

Keywords:

1. Introduction

2. Related Works

2.1. LLM for Robot Control

2.2. Visual Target Navigation

3. Proposed Method

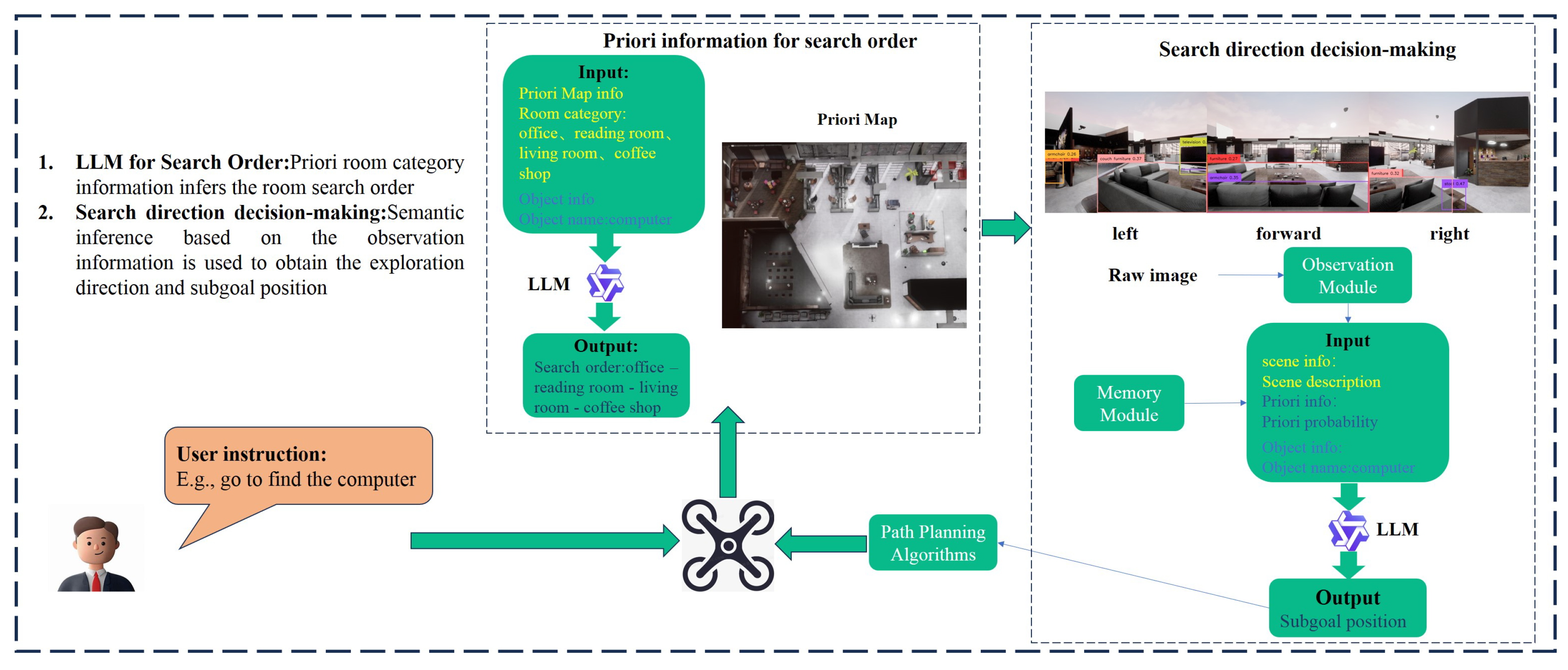

3.1. Overview

3.2. Prior Information for Search Order

3.3. Search Direction Decision-Making

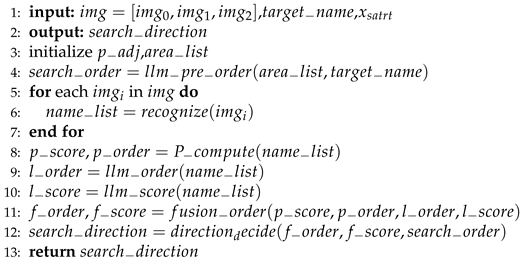

Algorithm 1: Search Direction Decision-Making

3.3.1. Prior Probabilities for Search Direction

3.3.2. LLM for Search Direction

3.3.3. Fusing Prior Probabilities with LLM for Search Direction

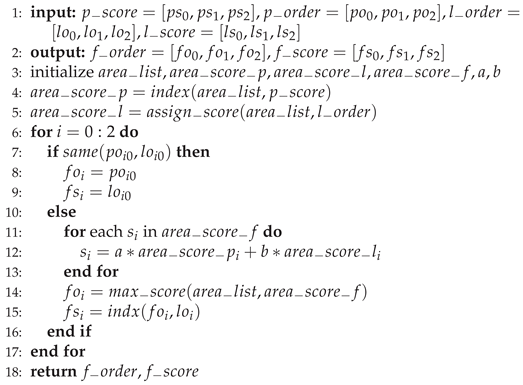

Algorithm 2: Fusion Strategy

4. Experiments

4.1. AirSim-Based Simulation Experiments

4.2. Physical Flight Experiments

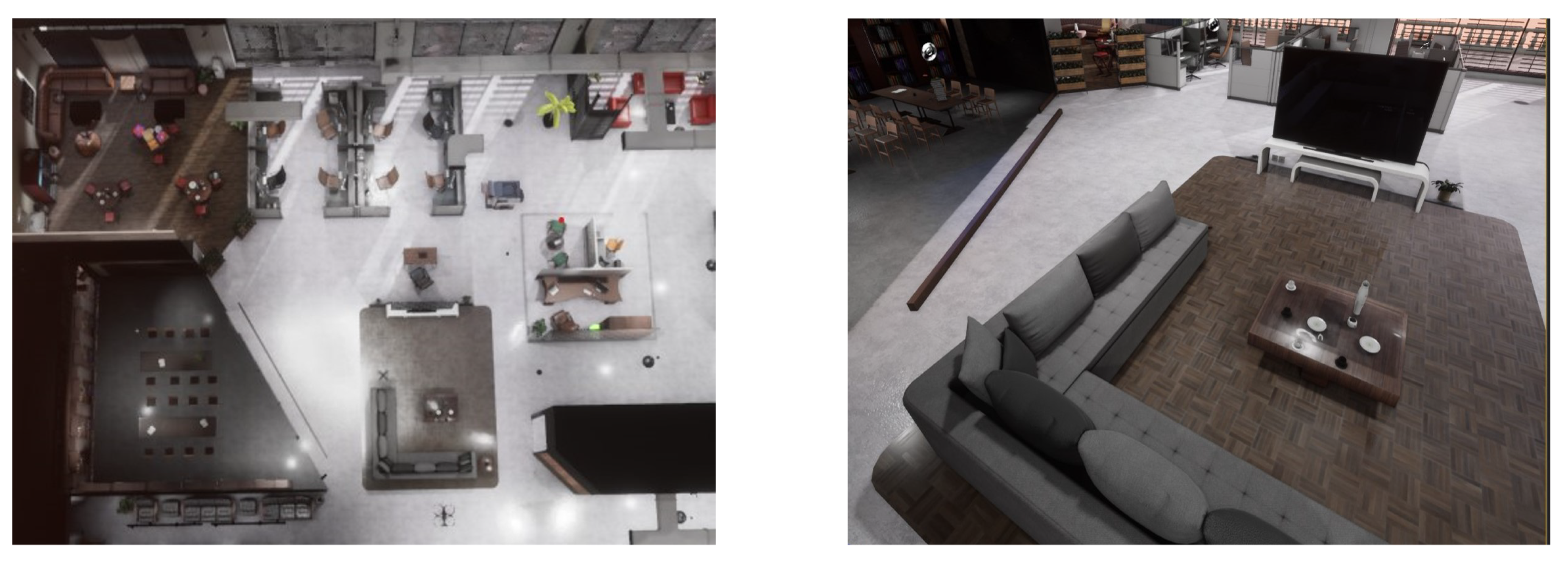

4.2.1. Customized Indoor Scenes

4.2.2. Indoor Structured Environment

5. Conclusions

References

- Chaplot, D.S.; Gandhi, D.P.; Gupta, A.; Salakhutdinov, R.R. Object goal navigation using goal-oriented semantic exploration. Advances in Neural Information Processing Systems 2020, 33, 4247–4258.

- Gervet, T.; Chintala, S.; Batra, D.; Malik, J.; Chaplot, D.S. Navigating to objects in the real world. Science Robotics 2023, 8, eadf6991.

- Dharmadhikari, M.; Dang, T.; Solanka, L.; Loje, J.; Nguyen, H.; Khedekar, N.; Alexis, K. Motion primitives-based path planning for fast and agile exploration using aerial robots. In Proceedings of the 2020 IEEE International Conference on Robotics and Automation (ICRA), 2020; IEEE; pp. 179–185.

- Yang, W.; Wang, X.; Farhadi, A.; Gupta, A.; Mottaghi, R. Visual semantic navigation using scene priors. arXiv preprint 2018, arXiv:1810.06543.

- Devlin, J.; Chang, M.W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2019; pp. 4171–4186.

- Brown, T.; Mann, B.; Ryder, N.; Subbiah, M.; Kaplan, J.D.; Dhariwal, P.; Neelakantan, A.; Shyam, P.; Sastry, G.; Askell, A.; et al. Language models are few-shot learners. Advances in Neural Information Processing Systems 2020, 33, 1877–1901.

- Achiam, J.; Adler, S.; Agarwal, S.; Ahmad, L.; Akkaya, I.; Aleman, F.L.; Almeida, D.; Altenschmidt, J.; Altman, S.; Anadkat, S.; et al. GPT-4 technical report. arXiv preprint 2023, arXiv:2303.08774.

- Liu, B.; Jiang, Y.; Zhang, X.; Liu, Q.; Zhang, S.; Biswas, J.; Stone, P. LLM+P: Empowering large language models with optimal planning proficiency. arXiv preprint 2023, arXiv:2304.11477.

- Silver, T.; Hariprasad, V.; Shuttleworth, R.S.; Kumar, N.; Lozano-Pérez, T.; Kaelbling, L.P. PDDL planning with pretrained large language models. In Proceedings of the NeurIPS 2022 Foundation Models for Decision Making Workshop, 2022.

- Xie, Y.; Yu, C.; Zhu, T.; Bai, J.; Gong, Z.; Soh, H. Translating natural language to planning goals with large-language models. arXiv preprint 2023, arXiv:2302.05128.

- Kim, B.; Kim, J.; Kim, Y.; Min, C.; Choi, J. Context-aware planning and environment-aware memory for instruction following embodied agents. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023; pp. 10936–10946.

- Hu, Y.; Lin, F.; Zhang, T.; Yi, L.; Gao, Y. Look before you leap: Unveiling the power of GPT-4V in robotic vision-language planning. arXiv preprint 2023, arXiv:2311.17842.

- Zhu, M.; Zhu, Y.; Li, J.; Wen, J.; Xu, Z.; Che, Z.; Shen, C.; Peng, Y.; Liu, D.; Feng, F.; et al. Language-conditioned robotic manipulation with fast and slow thinking. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation (ICRA), 2024; IEEE; pp. 4333–4339.

- Yang, Y.; Neary, C.; Topcu, U. Multimodal pretrained models for verifiable sequential decision-making: Planning, grounding, and perception. arXiv preprint 2023, arXiv:2308.05295.

- Zhou, Z.; Song, J.; Yao, K.; Shu, Z.; Ma, L. ISR-LLM: Iterative self-refined large language model for long-horizon sequential task planning. In Proceedings of the 2024 IEEE International Conference on Robotics and Automation (ICRA), 2024; IEEE; pp. 2081–2088.

- Majumdar, A.; Shrivastava, A.; Lee, S.; Anderson, P.; Parikh, D.; Batra, D. Improving vision-and-language navigation with image-text pairs from the web. In Proceedings of the Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part VI 16, 2020; Springer; pp. 259–274.

- Shah, D.; Osiński, B.; Levine, S.; et al. LM-Nav: Robotic navigation with large pre-trained models of language, vision, and action. In Proceedings of the Conference on Robot Learning, 2023; PMLR; pp. 492–504.

- Xie, Q.; Zhang, T.; Xu, K.; Johnson-Roberson, M.; Bisk, Y. Reasoning about the unseen for efficient outdoor object navigation. arXiv preprint 2023, arXiv:2309.10103.

- Chen, W.; Hu, S.; Talak, R.; Carlone, L. Leveraging large (visual) language models for robot 3D scene understanding. arXiv preprint 2022, arXiv:2209.05629.

- Yu, B.; Kasaei, H.; Cao, M. L3MVN: Leveraging large language models for visual target navigation. In Proceedings of the 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2023; IEEE; pp. 3554–3560.

- Zhang, Y.; Huang, X.; Ma, J.; Li, Z.; Luo, Z.; Xie, Y.; Qin, Y.; Luo, T.; Li, Y.; Liu, S.; et al. Recognize anything: A strong image tagging model. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 1724–1732.

- Liu, S.; Zeng, Z.; Ren, T.; Li, F.; Zhang, H.; Yang, J.; Jiang, Q.; Li, C.; Yang, J.; Su, H.; et al. Grounding DINO: Marrying DINO with grounded pre-training for open-set object detection. In Proceedings of the European Conference on Computer Vision, 2025; Springer; pp. 38–55.

- Zhou, B.; Zhao, H.; Puig, X.; Fidler, S.; Barriuso, A.; Torralba, A. Scene parsing through ADE20K dataset. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2017; pp. 633–641.

- Anderson, P.; Chang, A.; Chaplot, D.S.; Dosovitskiy, A.; Gupta, S.; Koltun, V.; Kosecka, J.; Malik, J.; Mottaghi, R.; Savva, M.; et al. On evaluation of embodied navigation agents. arXiv preprint 2018, arXiv:1807.06757.

- He, Y.; Zhou, K.; Tian, T.L. Multi-modal scene graph inspired policy for visual navigation. The Journal of Supercomputing 2025, 81, 1–22.

- Xu, W.; Zhang, F. FAST-LIO: A fast, robust LiDAR-inertial odometry package by tightly-coupled iterated Kalman filter. IEEE Robotics and Automation Letters 2021, 6, 3317–3324.

| Method | Success Rate | SPL | DTG (m) |
|---|---|---|---|
| Qwen2 + prior probabilities (proposed) | 60% | 0.3816 | 2.4618 |
| Qwen2 | 44% | 0.2750 | 2.9901 |
| Prior probabilities | 36% | 0.2196 | 2.7473 |
| Ref. [25] | 40% | 0.2752 | 2.8949 |
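
The three metrics reported in the result tables can be computed along the following lines. This is a minimal sketch, not the authors' evaluation code: it assumes the standard SPL definition from Anderson et al. (On evaluation of embodied navigation agents) and assumes DTG is the mean final distance to the goal over all episodes. The `Episode` record and its field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Episode:
    """One navigation trial (hypothetical record, not from the paper)."""
    success: bool                  # did the agent reach the target?
    shortest_path_m: float         # shortest start-to-goal path length, in metres
    actual_path_m: float           # path length the agent actually flew, in metres
    final_distance_to_goal_m: float  # distance to the goal when the episode ended


def success_rate(episodes: Sequence[Episode]) -> float:
    """Fraction of episodes that ended in success."""
    if not episodes:
        raise ValueError("at least one episode is required")
    return sum(ep.success for ep in episodes) / len(episodes)


def spl(episodes: Sequence[Episode]) -> float:
    """Success weighted by Path Length: mean of S_i * l_i / max(p_i, l_i)."""
    if not episodes:
        raise ValueError("at least one episode is required")
    total = 0.0
    for ep in episodes:
        denom = max(ep.actual_path_m, ep.shortest_path_m)
        # Failed episodes contribute 0; guard against a zero-length path.
        if ep.success and denom > 0:
            total += ep.shortest_path_m / denom
    return total / len(episodes)


def mean_dtg(episodes: Sequence[Episode]) -> float:
    """Mean final distance to goal (assumed definition of DTG)."""
    if not episodes:
        raise ValueError("at least one episode is required")
    return sum(ep.final_distance_to_goal_m for ep in episodes) / len(episodes)


if __name__ == "__main__":
    # Illustrative values only; these are not results from the paper.
    trials = [
        Episode(True, 5.0, 10.0, 0.5),
        Episode(False, 4.0, 8.0, 3.5),
    ]
    print(success_rate(trials), spl(trials), mean_dtg(trials))
```

Because SPL scales each success by the ratio of shortest to actual path length, a method can have a higher success rate and still a lower SPL if it reaches the target by longer routes.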

| Model | Success Rate | SPL | DTG (m) |
|---|---|---|---|
| Qwen2 | 60% | 0.3816 | 2.4618 |
| Qwen2.5-VL | 64% | 0.3859 | 2.2545 |
| ERNIE-3.5 | 52% | 0.3064 | 2.6239 |

| Layout | Success Rate | SPL | DTG |
|---|---|---|---|
| Cross | 56% | 0.3069 | 1.7741 |
| T-shaped | 48% | 0.3782 | 1.6327 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).