1. Introduction

The design of neuromorphic systems is traditionally framed as a trade-off between biological plausibility and computational efficiency [1]. Biophysically detailed neuron models, such as Hodgkin–Huxley [2], reproduce the ionic and membrane dynamics of real neurons with high fidelity, but at the cost of substantial computational and hardware complexity [2, 3]. At the opposite extreme, the abstract neurons used in second-generation artificial neural networks (ANNs), rooted in the McCulloch–Pitts model [5] and later refined through nonlinear activation functions [6] and backpropagation [4], favor mathematical simplicity and have enabled the success of deep learning, but discard the temporal and event-driven nature of neural computation.

Spiking neural networks (SNNs) occupy an intermediate position by encoding information in the timing of discrete spike events [5]. This event-driven paradigm supports sparse communication, massive parallelism, and in-memory computation, which are key to the remarkable energy efficiency of biological nervous systems and to neuromorphic hardware platforms [6]. However, most widely used spiking neuron models, including Izhikevich’s [7] and Hodgkin–Huxley–based formulations, still rely internally on high-precision continuous-state variables and arithmetic-intensive operations, particularly multiplications. While effective in software, this reliance on complex arithmetic severely limits their scalability and efficiency in digital hardware [8].

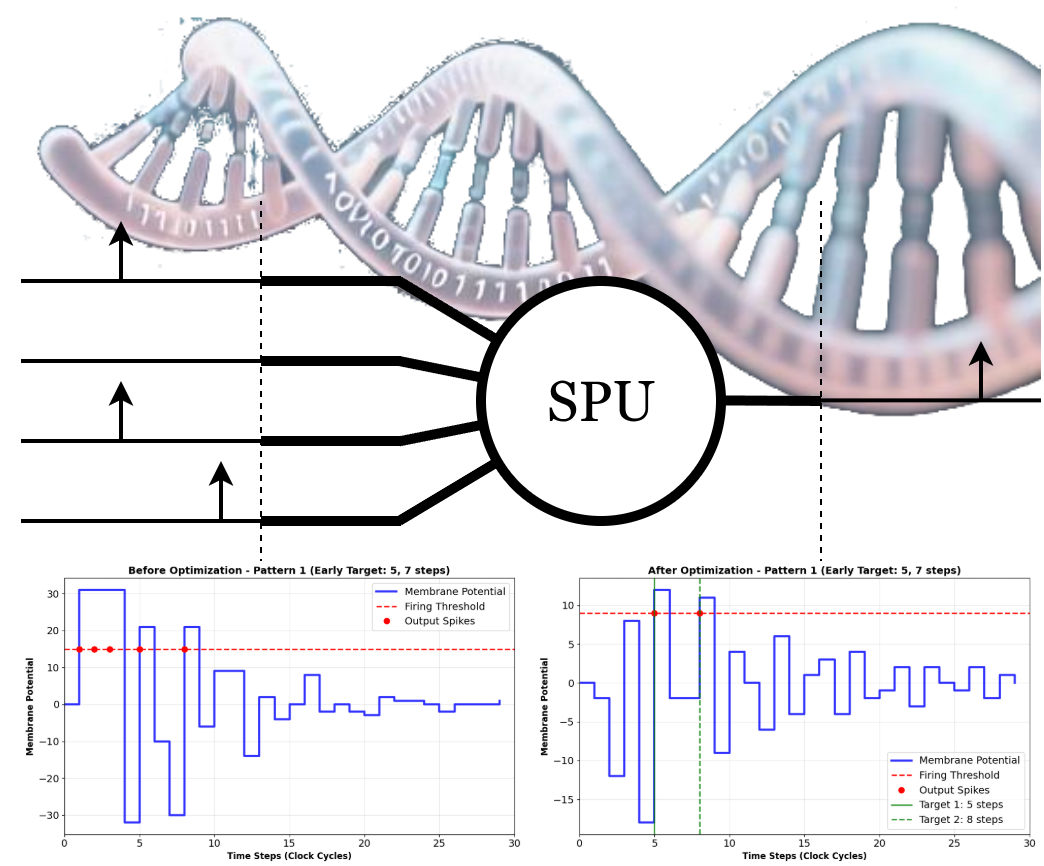

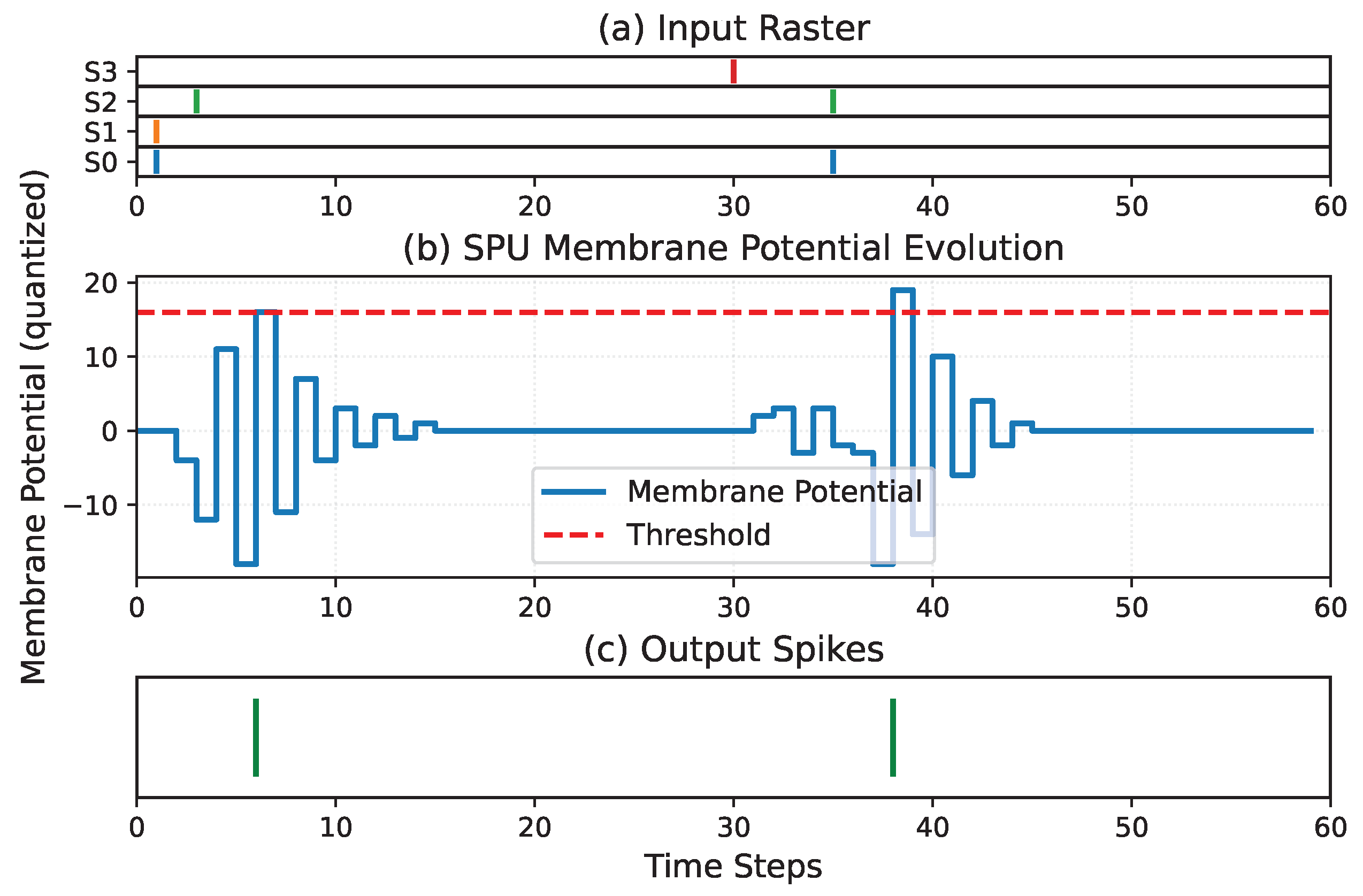

Rather than viewing spiking computation primarily through the lens of biological realism, an alternative perspective is to treat spikes as a computational format for representing and processing temporal information. In this view, the fundamental variable is not a continuous membrane voltage but the timing of discrete events. A particularly efficient temporal coding scheme is the inter-spike interval (ISI), in which information is represented by the time difference

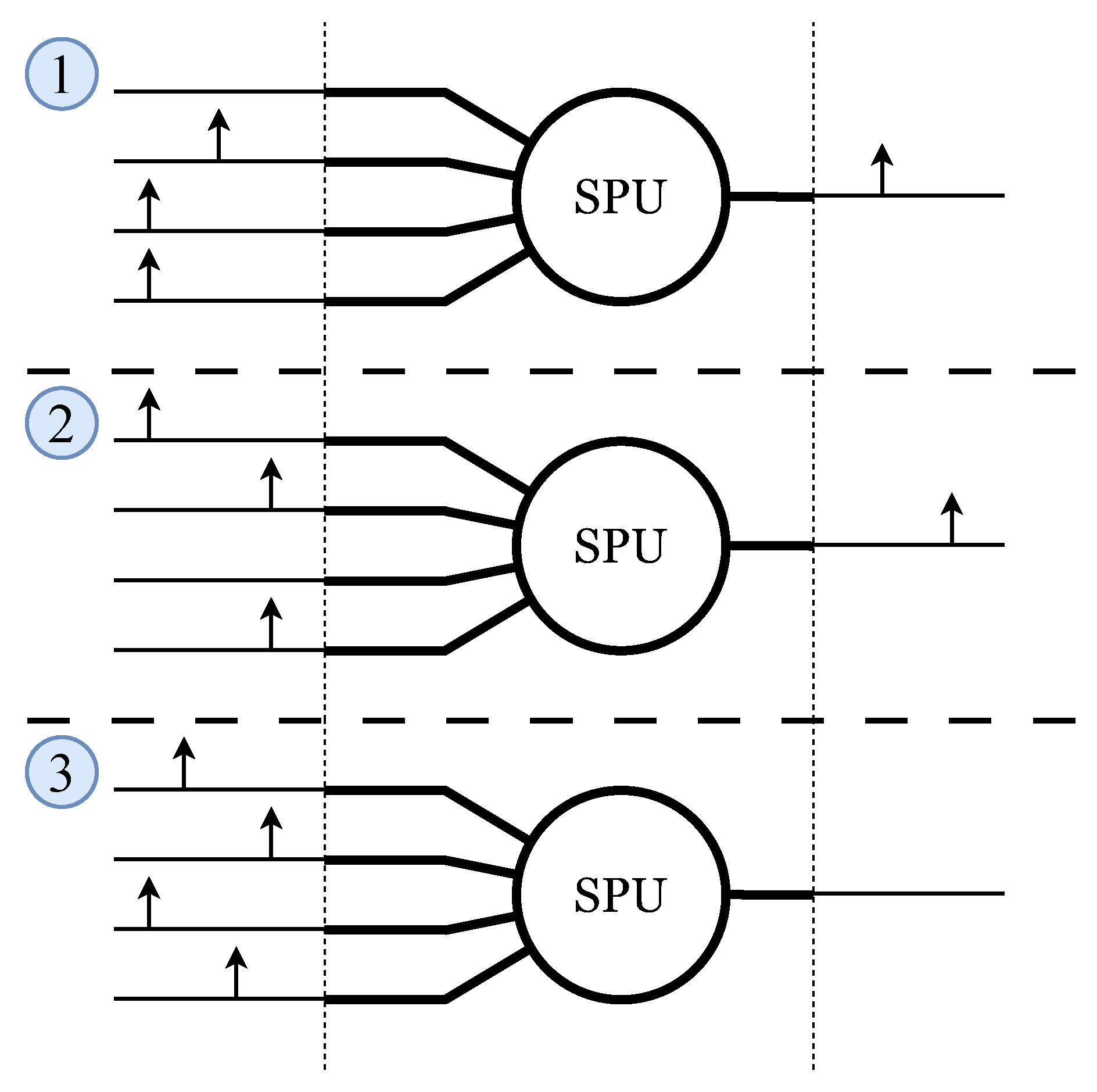

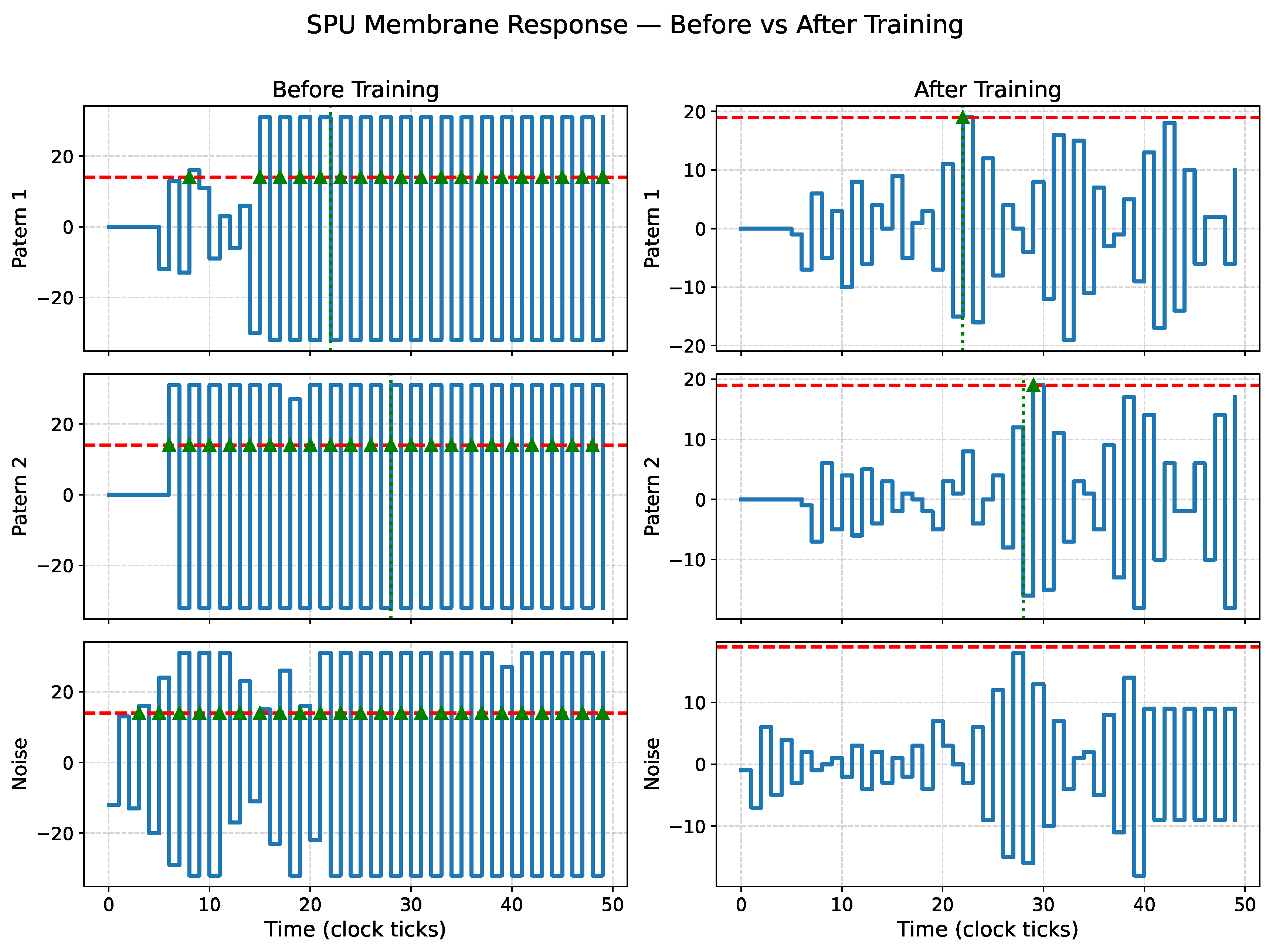

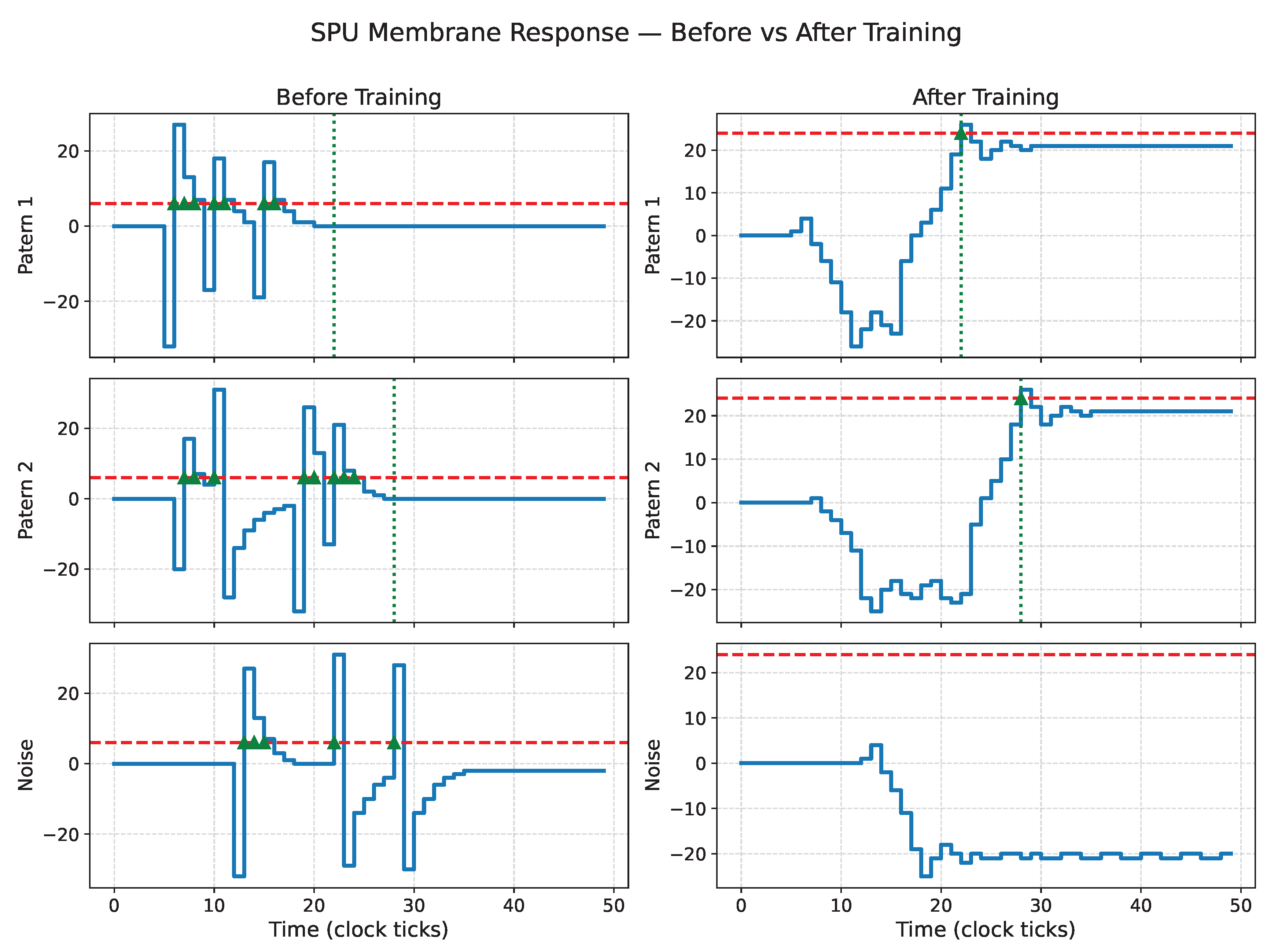

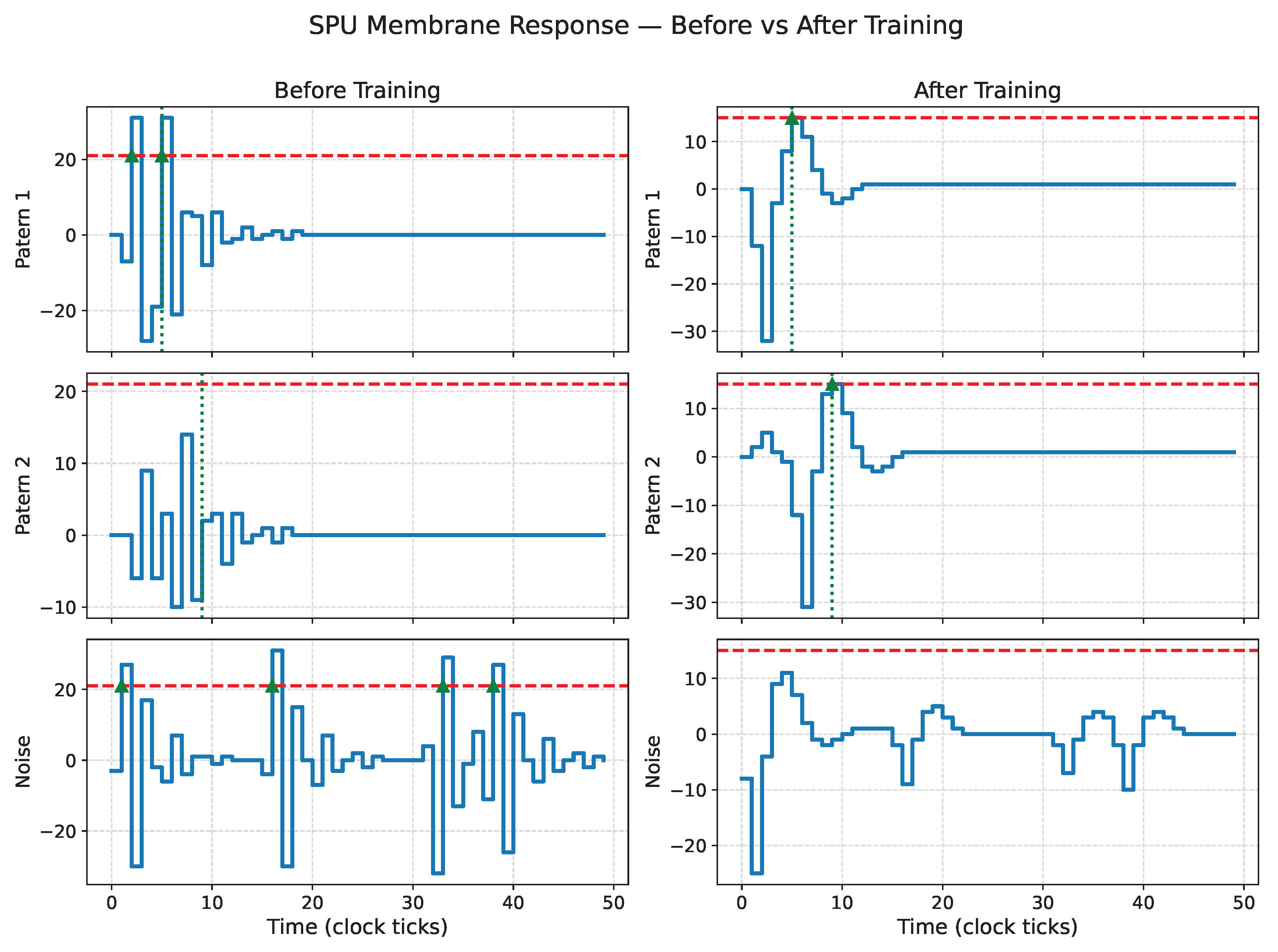

between consecutive spikes. As illustrated in

Figure 1, ISI coding can be implemented either within a single neuron or across a population, enabling both compact and distributed temporal representations.
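To make the single-neuron case concrete, the following is a minimal sketch of ISI encoding and decoding on an integer clock. The function names `isi_encode` and `isi_decode`, the tick-based time base, and the `t0` parameter are assumptions introduced here for illustration; they are not taken from the paper.

```python
from itertools import accumulate


def isi_encode(intervals, t0=0):
    """Turn a list of positive intervals (in clock ticks) into spike times.

    The first spike is placed at tick t0 (hypothetical reference spike);
    each later spike follows the previous one by the encoded interval.
    """
    if any(dt <= 0 for dt in intervals):
        raise ValueError("intervals must be positive tick counts")
    return list(accumulate(intervals, initial=t0))


def isi_decode(spike_times):
    """Recover the encoded values as differences between consecutive spikes."""
    return [later - earlier for earlier, later in zip(spike_times, spike_times[1:])]


spikes = isi_encode([3, 5, 2], t0=10)
print(spikes)              # [10, 13, 18, 20]
print(isi_decode(spikes))  # [3, 5, 2]
```

Note that the decoded values do not depend on `t0`: only the relative timing of spikes carries information, which is the property the ISI format relies on.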

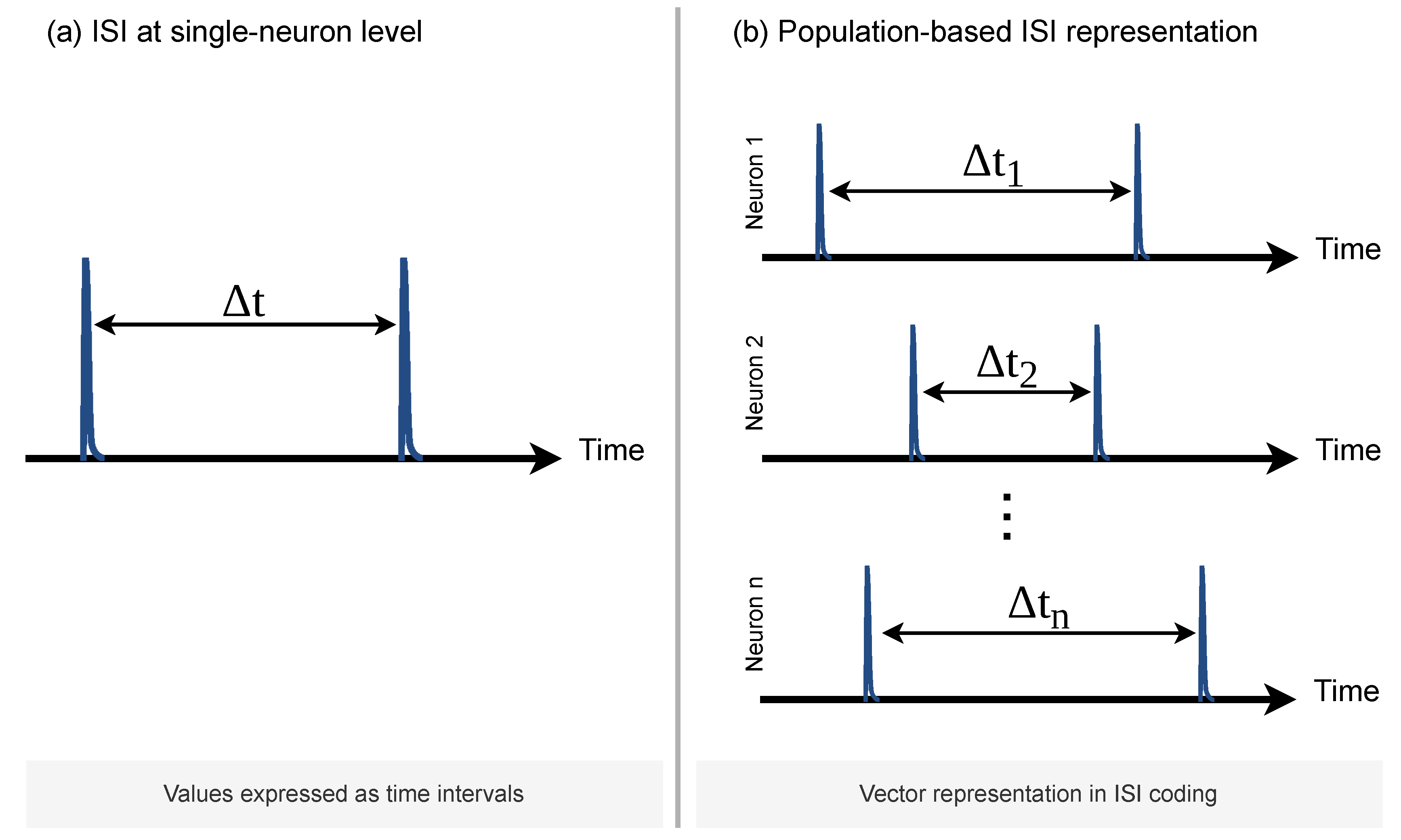

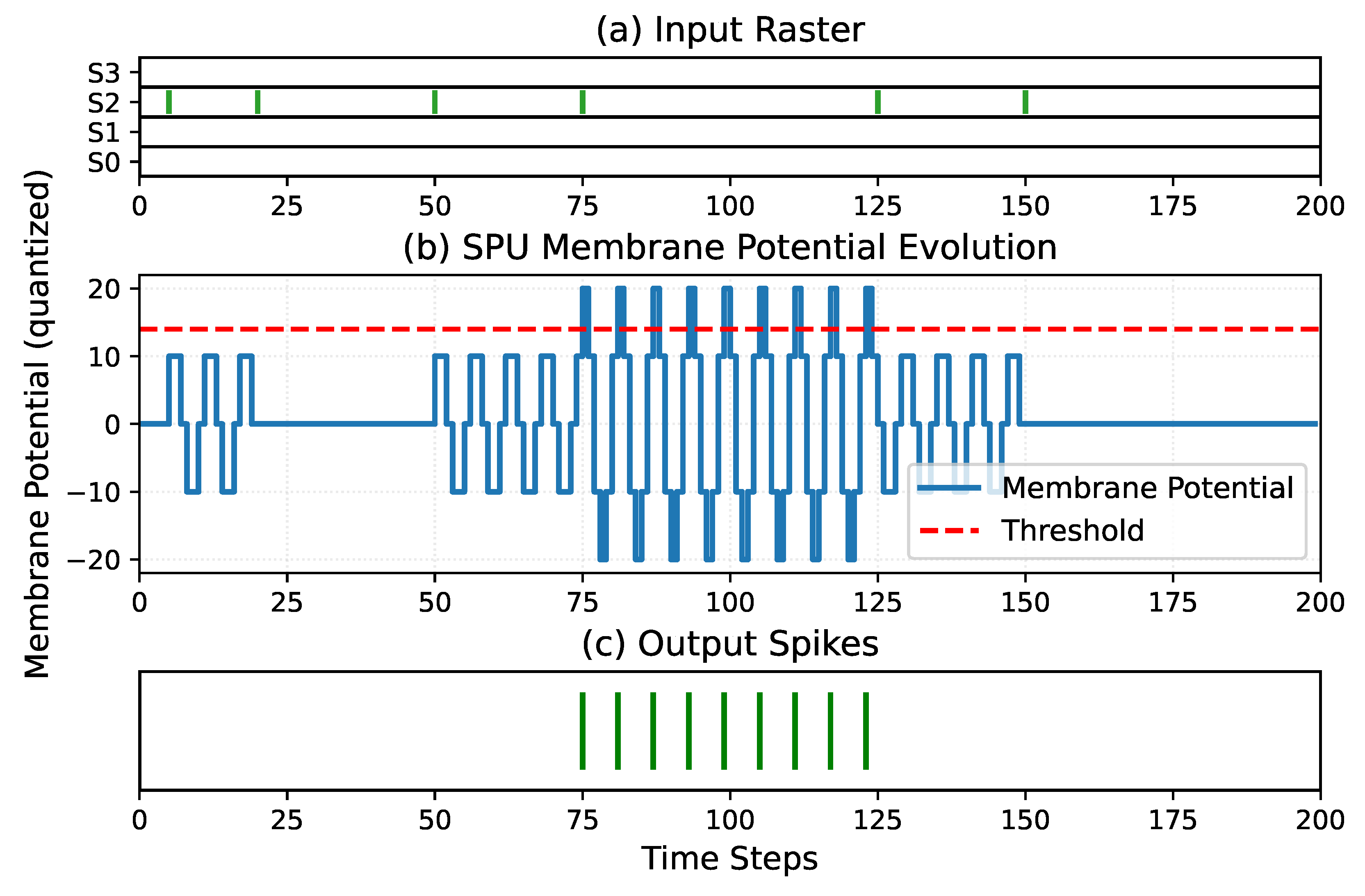

In conventional spiking neuron models, spike timing emerges from the evolution of an analog or high-precision state variable that crosses a threshold. In such systems, small numerical errors in the internal state can translate into large timing errors in the output spike train. An alternative is to generate spike timing directly from the dynamics of a discrete-time system. When spike timing is governed by a quantized IIR-like state evolution, temporal precision becomes primarily a function of the system clock and filter dynamics rather than of numerical resolution, as illustrated in Figure 2.

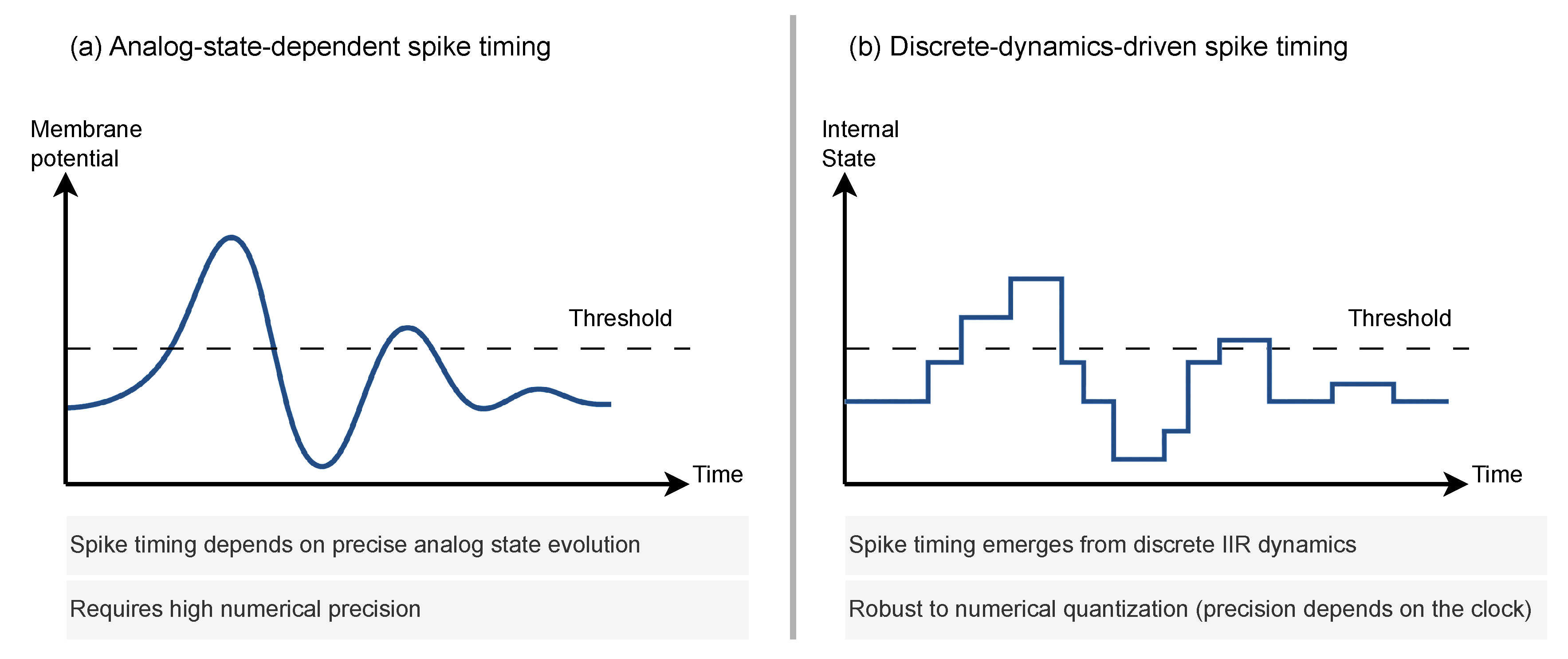

The ISI format is advantageous not only for its sparsity (fewer spike events mean lower power consumption) but also for its compatibility with digital hardware and control systems. At the network output, ISI-encoded signals can be used directly for classification via time-to-first-spike decoding, or converted into pulse-width modulation (PWM) signals for continuous control, as shown in Figure 3. This enables end-to-end spike-based pipelines that interface naturally with sensors and actuators without requiring expensive analog-to-digital or floating-point processing stages.
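As a rough illustration of the ISI-to-PWM conversion mentioned above, the sketch below maps an interval measured in clock ticks to one PWM period whose high time equals that interval. The mapping itself (high time equal to the ISI, clamped to the period), the function name `isi_to_pwm`, and the `period_ticks` parameter are assumptions made for this example; the paper does not specify the conversion at this point.

```python
def isi_to_pwm(isi_ticks, period_ticks):
    """Return one PWM period as a list of 0/1 samples, one per clock tick.

    Assumed mapping: the output stays high for `isi_ticks` ticks and low for
    the rest of the period. Intervals longer than the period saturate at
    100% duty cycle; negative intervals are treated as 0%.
    """
    if period_ticks <= 0:
        raise ValueError("period_ticks must be positive")
    high = max(0, min(isi_ticks, period_ticks))
    return [1] * high + [0] * (period_ticks - high)


wave = isi_to_pwm(3, 8)
print(wave)                   # [1, 1, 1, 0, 0, 0, 0, 0]
print(sum(wave) / len(wave))  # 0.375 duty cycle
```

Only integer comparison and counting are involved, which is consistent with the claim that no floating-point stage is needed at the output.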

In many spiking systems, decision making can be naturally expressed in terms of time-to-first-spike: in a population of output neurons, the neuron that fires first represents the selected class. This temporal winner-takes-all mechanism is well suited to event-driven hardware, as it avoids the need for explicit normalization or high-precision accumulation.
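The temporal winner-takes-all rule described above can be sketched in a few lines. Here each output neuron is represented only by the tick of its first spike, or `None` if it stayed silent. The function name `first_spike_winner` and the tie-breaking rule (lowest index wins) are assumptions for illustration.

```python
def first_spike_winner(first_spike_ticks):
    """Return the index of the neuron that fired first, or None if none fired.

    `first_spike_ticks[i]` is the tick of neuron i's first spike, or None
    when the neuron never fired. Ties go to the lowest index (an arbitrary
    choice made for this sketch).
    """
    fired = [(tick, idx) for idx, tick in enumerate(first_spike_ticks) if tick is not None]
    if not fired:
        return None
    return min(fired)[1]


print(first_spike_winner([12, 7, None, 9]))  # 1
print(first_spike_winner([None, None]))      # None
```

The decision needs only a comparison of arrival times, with no normalization or accumulation of magnitudes.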

More generally, SNNs can be interpreted as cascaded timing systems. Neurons in intermediate layers are not required to fire first, but to fire at the appropriate time so as to activate downstream neurons. The final layer then implements a temporal competition, transforming distributed spike timing into a discrete decision.

Conventional ANNs and many SNN models propagate information between layers in the form of numerical intensities, whether as real-valued activations, spike counts, or firing rates. Even when spikes are used, neurons typically integrate these signals to reconstruct an analog quantity before deciding whether to emit a new spike.

In contrast, the paradigm explored in this work is fundamentally temporal. Neurons do not attempt to transmit intensities to downstream layers. Instead, they aim to emit spikes at the correct time so as to trigger subsequent neurons at their own appropriate times. Computation emerges from the interaction between internal dynamics and the relative timing of incoming spikes, rather than from the accumulation of signal magnitude.

From this perspective, spikes and ISI codes form a computational abstraction for temporal signal processing rather than merely a biological metaphor. This view opens the possibility of designing spiking neurons as discrete-time dynamical systems optimized directly for digital hardware.

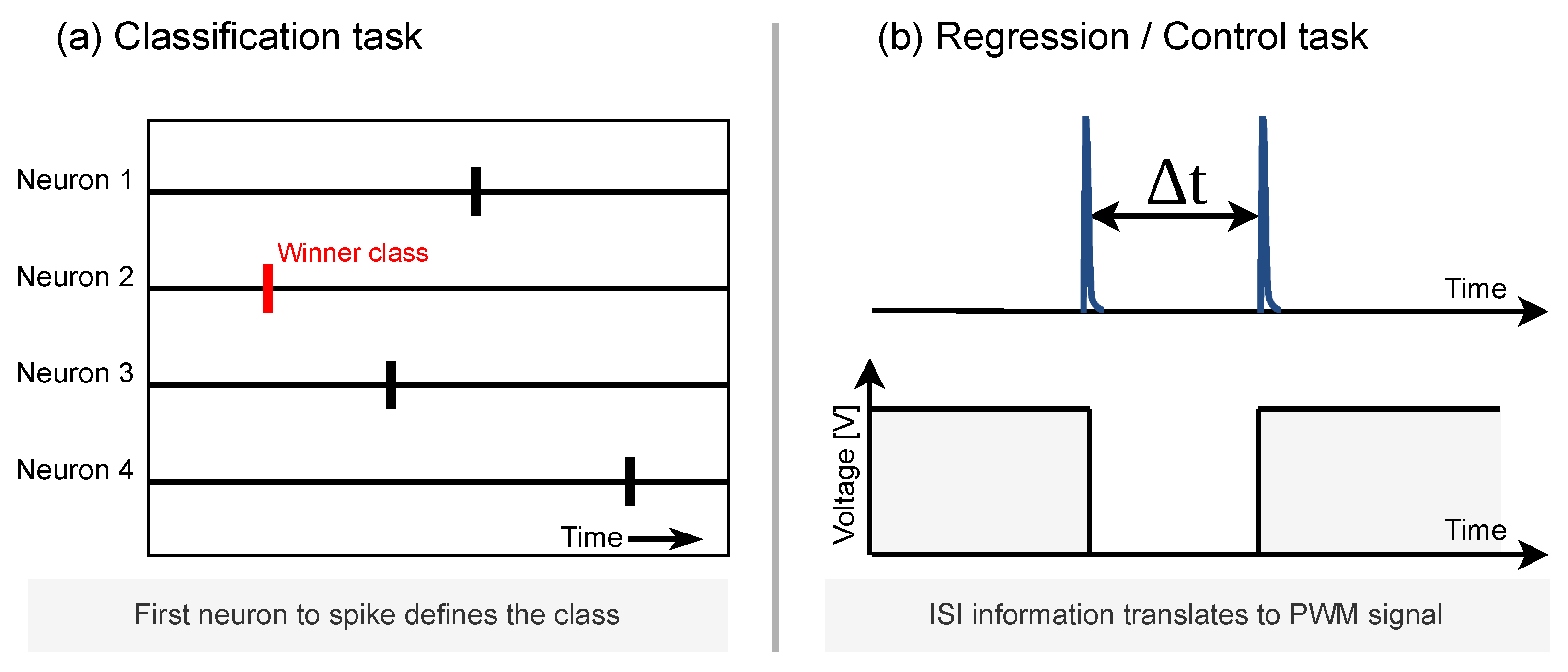

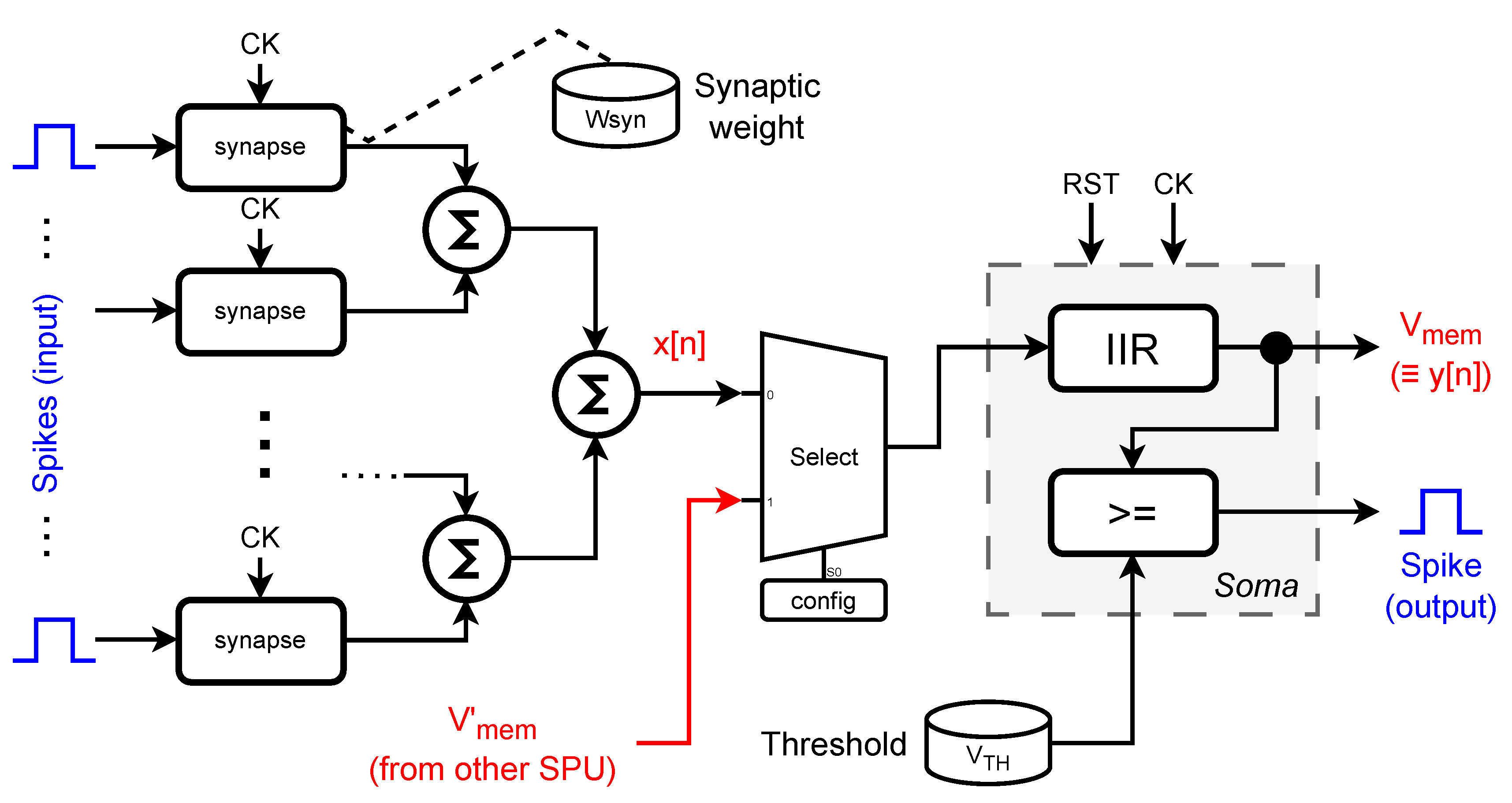

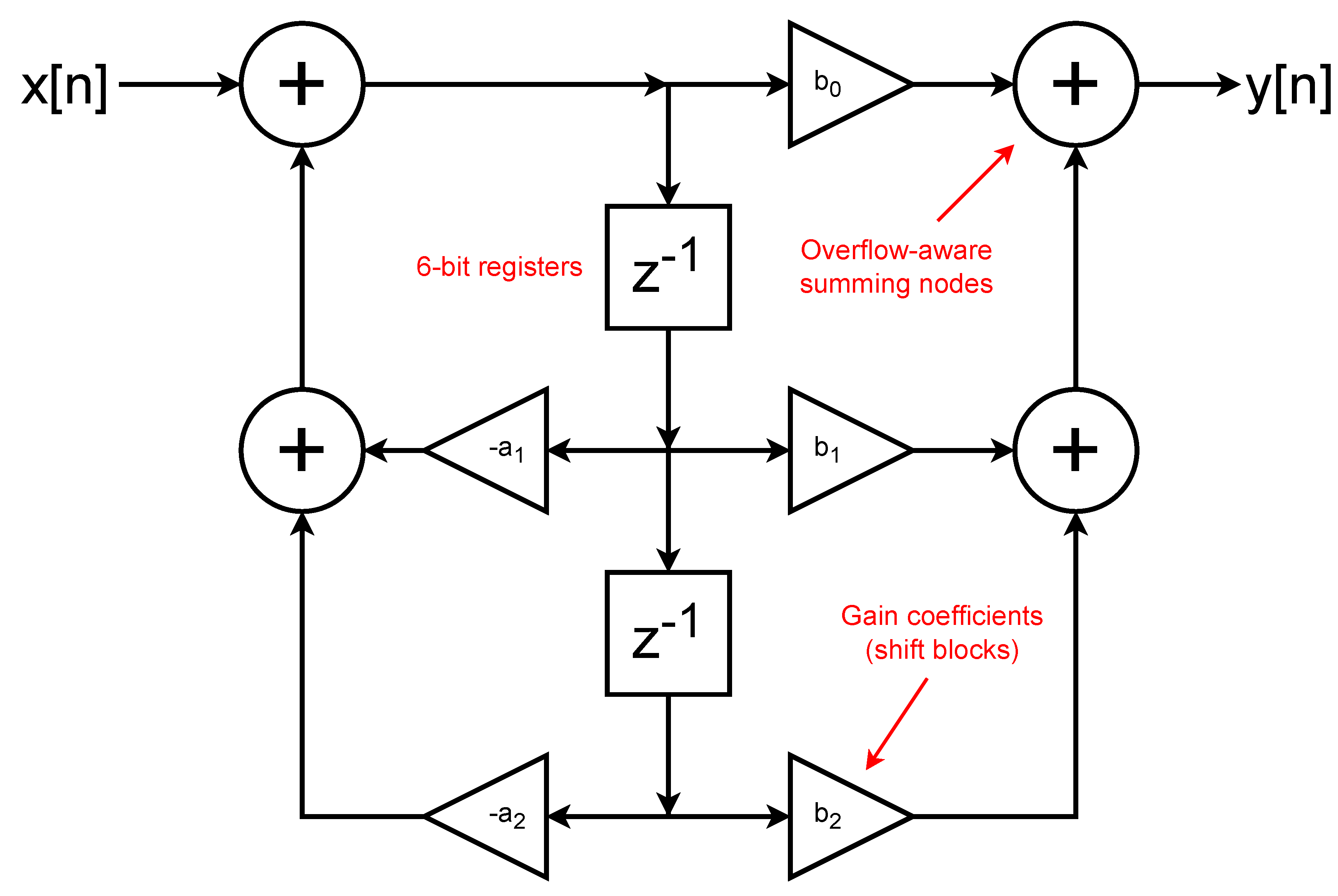

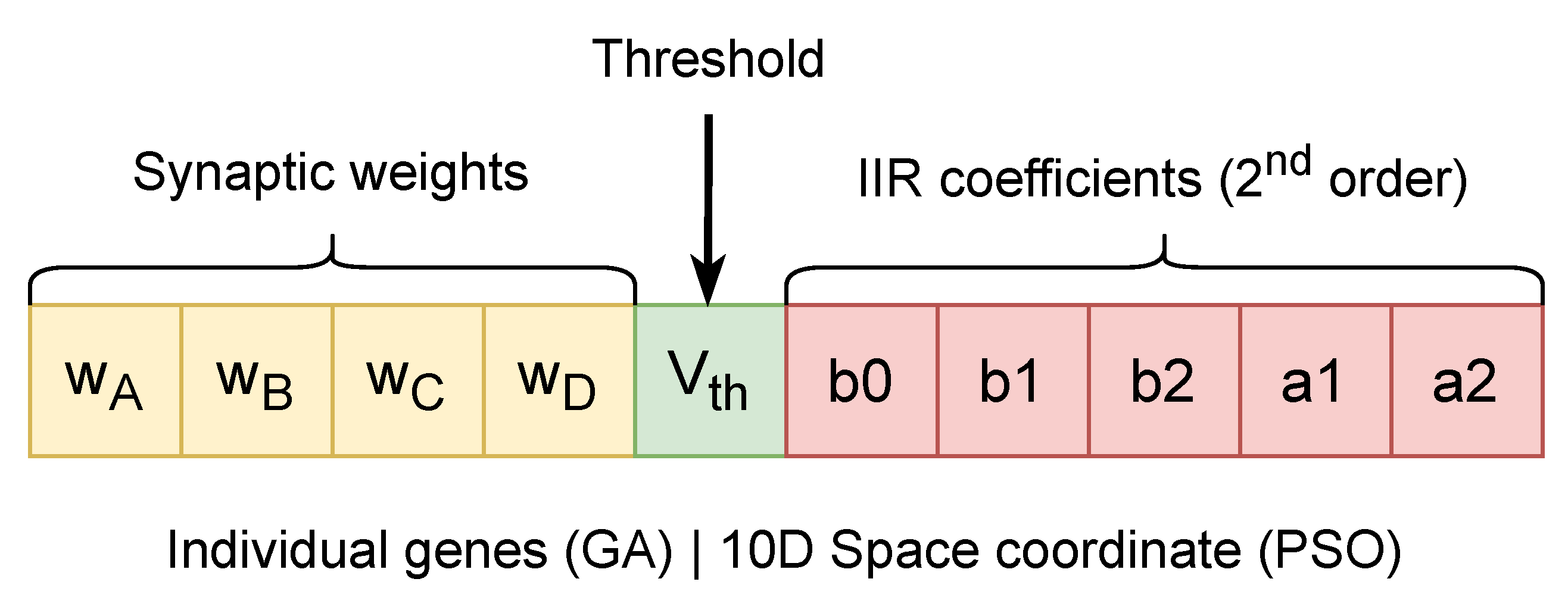

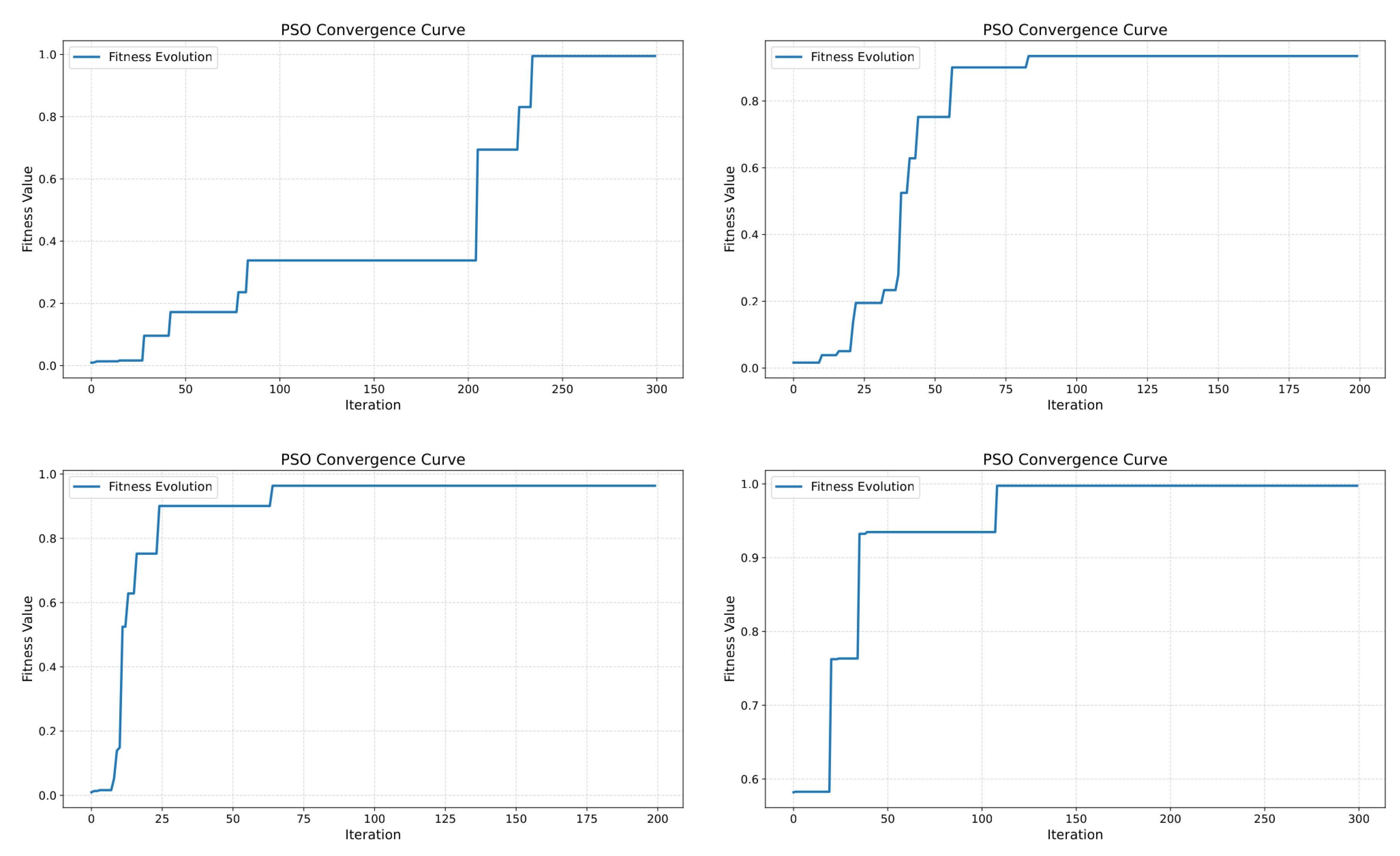

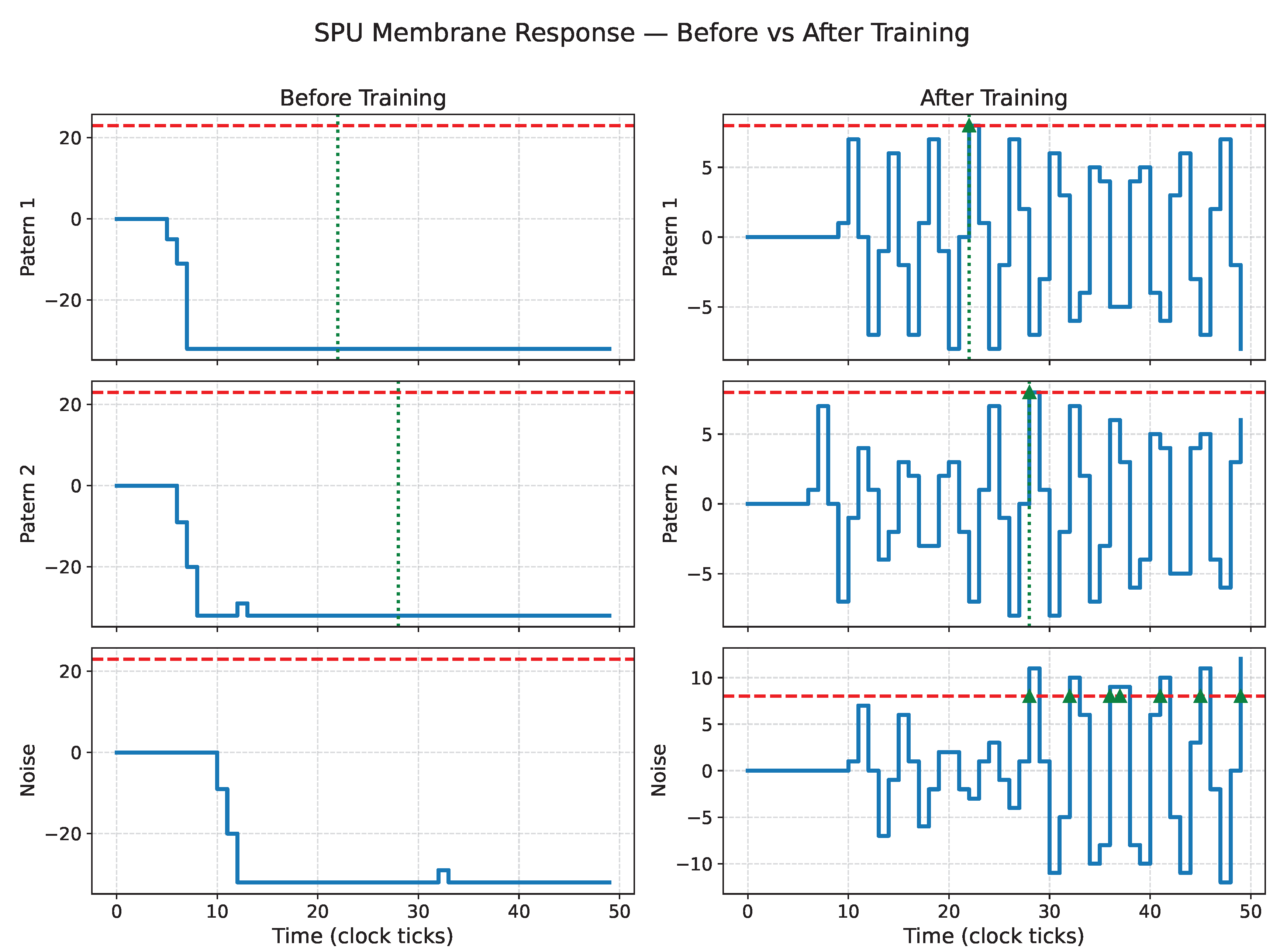

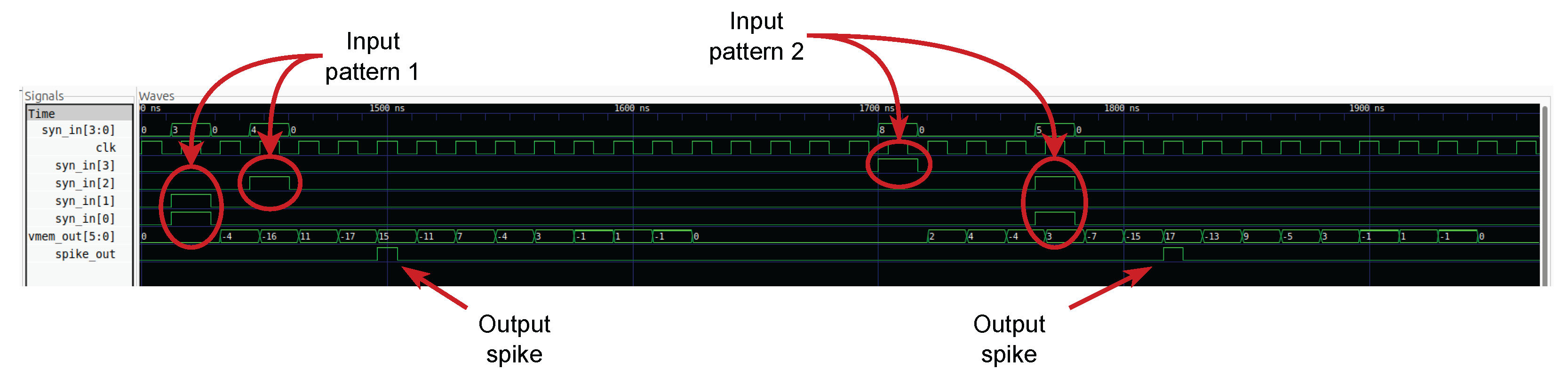

This work introduces the Spike Processing Unit (SPU), a spiking neuron model designed from the outset as a low-precision, multiplier-free, discrete-time system. The SPU implements second-order IIR dynamics on 6-bit signed state variables and generates output spikes when its internal state crosses a threshold. Temporal selectivity and spike timing arise from filter dynamics rather than from analog state precision.
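To give a feel for what a multiplier-free second-order IIR neuron on 6-bit signed state might look like, here is a minimal sketch. It is not the SPU's actual update rule: the coefficients (1.5 and -0.75, built from shifts and adds), the input weight, the threshold, the reset-to-zero behaviour, and all names are hypothetical choices made for this example.

```python
STATE_MIN, STATE_MAX = -32, 31  # range of a 6-bit signed integer


def saturate(value):
    """Clamp a value to the 6-bit signed range."""
    return max(STATE_MIN, min(STATE_MAX, value))


def iir_step(x, y1, y2):
    """One step of y[n] = x[n] + 1.5*y[n-1] - 0.75*y[n-2] using only shifts and adds.

    Python's >> on negative ints is an arithmetic (floor) shift, matching
    typical two's-complement hardware. Coefficients are assumed, not the SPU's.
    """
    y = x + y1 + (y1 >> 1) - ((y2 >> 1) + (y2 >> 2))
    return saturate(y)


def run_neuron(input_ticks, n_ticks, weight=8, threshold=20):
    """Simulate the sketch neuron; return the ticks at which it emits a spike."""
    y1 = y2 = 0
    out = []
    for n in range(n_ticks):
        x = weight if n in input_ticks else 0
        y = iir_step(x, y1, y2)
        if y >= threshold:
            out.append(n)
            y1 = y2 = 0  # assumed reset after firing
        else:
            y2, y1 = y1, y
    return out


print(run_neuron({0, 2}, 16))  # [2]  second spike arrives while the state is high
print(run_neuron({0, 8}, 16))  # []   second spike arrives during the undershoot
```

With these assumed parameters, two input spikes fire the neuron only when their spacing matches the filter's response, which illustrates how temporal selectivity can come from the filter dynamics rather than from state precision.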

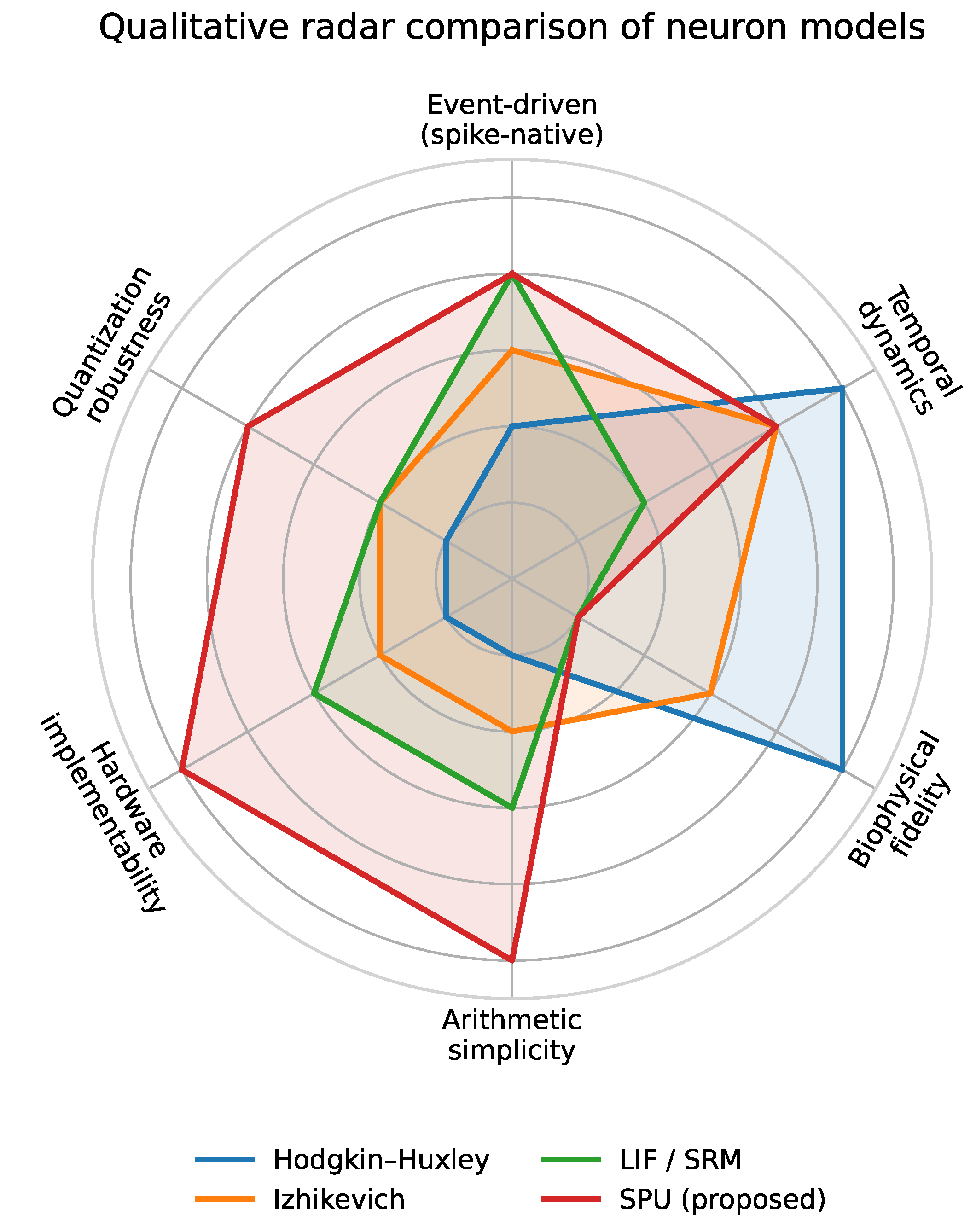

Figure 4 provides a qualitative comparison of representative neuron models from this hardware-oriented viewpoint. Whereas biophysically detailed and simplified spiking models emphasize biological fidelity and rich internal dynamics, the SPU prioritizes arithmetic simplicity, quantization robustness, and hardware implementability, yet retains sufficient temporal dynamics for meaningful spike-based computation.

The remainder of this paper presents the SPU architecture, its discrete-time operational principles, and its training for temporal pattern discrimination. Through simulation and hardware synthesis, we show that meaningful spiking computation can be achieved with extremely low numerical precision and without multipliers, enabling a new class of temporally expressive yet highly efficient neuromorphic building blocks.