Introduction

Population ageing is accelerating worldwide, shifting the balance between those needing care and the working-age population available to provide it. Globally, dementia imposes substantial health-system and societal costs, estimated at US$1.3 trillion in 2019 and projected to rise sharply in coming decades, while informal carers already deliver roughly half of total care hours [1,2,3,4]. In Europe, older adults (≥65 years) accounted for 21.6% of the EU population on 1 January 2024, and projections indicate a sustained increase in the old-age dependency ratio through mid-century [2]. United Nations projections similarly forecast a rapid expansion of the ≥65 population over the next three decades [3]. Against this backdrop, enabling safe, scalable home-based care for people living with dementia is a pressing priority, both to preserve independence and to mitigate escalating institutional and family costs [1,4]. In many cases, in-home care is provided by workers with limited or no formal training; services can be expensive, and reliable, high-quality support is not readily available on demand. In this work, we explore the integration of audio-based signal processing with the new possibilities offered by artificial intelligence (AI) tools within the care pipeline for elderly patients with dementia. Our aim is to leverage recent advances in AI, and in large language models (LLMs) in particular, to deliver an affordable service designed primarily for people on low incomes and/or in remote locations, where full-time human care is not practically feasible. Our approach also applies to the important and pressing case of childless elderly people who live alone and develop mobility and mental health conditions.

Ambient audio sensing is an attractive modality for home care: microphones are inexpensive, unobtrusive, and easily installed; they can capture both speech and non-speech events (e.g., coughs, thuds, alarms, cane taps, expressions of pain or distress) without the visual privacy trade-offs of cameras and without the adherence burden of wearables. Prior research has demonstrated that speech carries information relevant to cognitive status. Early work using automatic speech analysis differentiated healthy controls, mild cognitive impairment (MCI), and Alzheimer’s disease (AD), establishing the feasibility of acoustic biomarkers [5]. Subsequent reviews and empirical studies have evaluated acoustic (paralinguistic) features (prosody, timing, pauses), alone and alongside linguistic variables, for AD screening and monitoring, including remote collection paradigms [6,7,8,9]. Recent studies have further explored non-semantic, acoustic-only features and the feasibility and test–retest reliability of multi-day, remote assessments of speech acoustics, including associations with amyloid status and deep-learning approaches to voice recordings [10,11,12,13,14,15]. Collectively, this body of work supports speech-based digital biomarkers as a noninvasive window into cognitive health, but translation to day-to-day home-care workflows remains limited [8].

Beyond speech, audio has also been investigated for characterizing behavioral and psychological symptoms of dementia and care environments. Persistent or inappropriate vocalizations are a common and burdensome symptom in advanced dementia; integrative reviews synthesize their phenomenology and implications for care [16]. Soundscape interventions in nursing homes show that targeted monitoring and staff feedback can improve acoustic environments and staff evaluations, suggesting that environmental audio is a modifiable determinant of wellbeing [17]. Concurrently, a growing literature examines audio-based detection of safety-critical events such as falls [18,19], spanning classical machine-learning methods and transformer-based approaches and complemented by reviews of fall-detection methods and wearable sensing for older adults [20,21]; related work has demonstrated real-time acoustic detection of critical incidents on edge devices, underscoring feasibility for low-latency deployment [22]. However, most prior studies focus on single tasks (e.g., diagnostic screening or environmental monitoring), use longer or highly structured recordings, or are conducted in clinical or institutional settings rather than ordinary homes [23,24,25,26,27,28]. Integration of heterogeneous audio evidence into actionable, caregiver-facing summaries is rarely addressed.

Smart-home and telecare research for older adults has advanced through successive projects that illustrate different sensing and inference paradigms. An adaptive framework for activity recognition in domestic environments incorporated user feedback to refine models, thereby embedding personalization into resident-centered automation [29,30]. Practical issues of scaling were examined in multi-country deployments of assistive equipment for people with dementia, where installation procedures and technical support were identified as critical for sustained operation [31]. Inference methods progressed with plan-recognition models capable of interpreting incomplete observations to detect disorientation and atypical behaviors in Alzheimer’s patients [32]. Multimodal capture of daily living activities was enabled through wearable audio–video devices, with indexing strategies that allow efficient clinical review of extended recordings [33]. For nocturnal monitoring, unobtrusive load-cell systems placed under beds were developed to quantify movement and posture changes, supporting analysis of sleep patterns and risk states [34]. Activity recognition in naturalistic home settings using simple state-change sensors demonstrated that ubiquitous instrumentation could yield meaningful behavior classification without requiring cameras [35]. Within clinical contexts, models were proposed for quantifying patient activity in hospital suites, producing indicators such as mobility levels and displacement distributions that could be tracked continuously [36]. Earlier, lifestyle-monitoring systems established the feasibility of using ambient data streams to identify deviations from typical behavior and support independent living [37]. Finally, integrated e-healthcare prototypes in experimental “techno houses” [38] combined physiological and environmental sensors to enable continuous monitoring across multiple aspects of daily life, including personal hygiene and rest [39,40].

This work targets that translational gap by proposing and evaluating a home-based pipeline that couples short-duration audio sensing (15 s snippets) with an Audio Spectrogram Transformer (AST) [41] trained on the AudioSet database [42] for non-speech event detection, and with an LLM (ChatGPT-5) constrained to emit auditable, structured reports. Speech and non-speech events salient for home care, namely coughs (including chronic cough burden), cane-tap sequences (a mobility proxy via cadence variability), thuds, appliance beeps, and alarms, are time-stamped by the AST and exported as a JSON record. The LLM receives as text input the application context, dialogue transcripts, acoustic features, and event metadata, together with a small, consented household knowledge base, and returns reports covering orientation/disorientation (person/place/time), delusion themes and their persistence (e.g., fixed beliefs about “another home”), agitation likelihood, comprehension difficulty, instruction follow-through, and safety flags (e.g., possible fall). Unlike diagnostic-only approaches [43,44,45,46,47,48,49,50], the outputs are designed to guide daily care (e.g., de-escalation prompts, check-ins) and to populate longitudinal dashboards for trend analysis.
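The text states that AST events are exported as a JSON record and that the LLM returns structured reports, but it gives neither schema. The sketch below assumes a hypothetical schema: one record per 15 s snippet holding its node, start time, and scored events, plus a check that an LLM reply is valid JSON containing the six report fields listed above (the snake_case key names are illustrative, not the authors').

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List

# Hypothetical report keys paraphrasing the report contents listed in the text
REPORT_KEYS = {
    "orientation", "delusion_themes", "agitation_likelihood",
    "comprehension_difficulty", "instruction_follow_through", "safety_flags",
}

@dataclass
class AudioEvent:
    label: str      # AudioSet class name predicted by the AST
    score: float    # classifier confidence in [0, 1]
    onset_s: float  # offset of the event within the snippet, in seconds

@dataclass
class SnippetRecord:
    node_id: str
    start_utc: str  # ISO-8601 start time of the 15 s snippet
    events: List[AudioEvent] = field(default_factory=list)

def to_json(record: SnippetRecord) -> str:
    # asdict() recurses into the nested AudioEvent dataclasses
    return json.dumps(asdict(record), ensure_ascii=False)

def missing_report_keys(llm_output: str) -> set:
    """Required keys absent from an LLM reply (raises ValueError if it is not JSON)."""
    report = json.loads(llm_output)
    if not isinstance(report, dict):
        return set(REPORT_KEYS)
    return REPORT_KEYS - set(report)
```

Rejecting replies with missing keys before they reach a dashboard is one simple way to keep the LLM output auditable.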

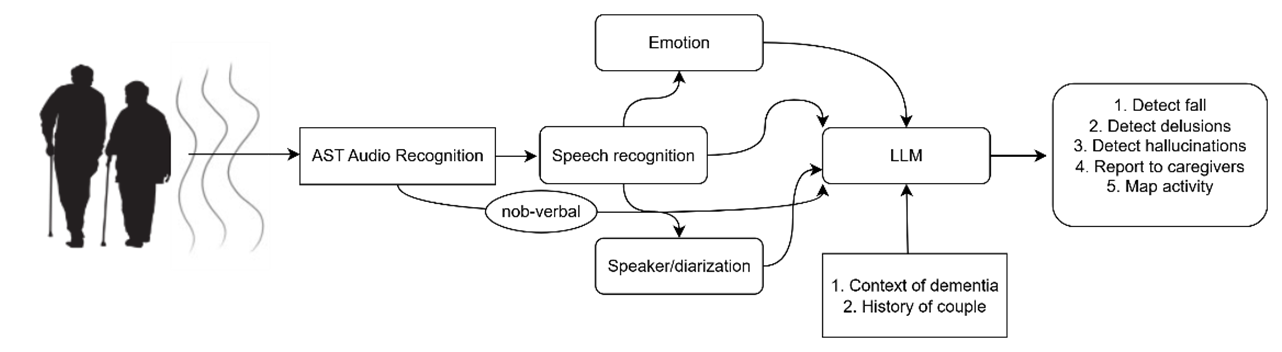

Our contributions are threefold. First, we integrate speech and non-speech audio within a home pipeline, moving beyond single-task, speech-only screening to multi-task monitoring that reflects real-world caregiving priorities (safety, agitation, communication efficacy). We use recently developed AI tools that were not available ten years ago (i.e., transformers, large databases, and LLMs). Second, we develop a real-time, affordable hardware solution and release its code as open source. Third, we use an LLM to explicitly integrate separate acoustic observations and reach a higher level of interpretation than transcription alone can provide, for example, detecting delusional themes. In aggregate, the combination of audio processing, transformer classifiers, and an LLM receiving input from real patients reframes ambient home audio from a raw signal into validated, longitudinal indicators of safety and wellbeing, complementing, not replacing, clinical assessment while addressing pressing needs in dementia care. Figure 1 depicts our approach.

Materials & Methods

The study participants are a couple living in their own home: a woman aged eighty-seven and a man aged ninety (as of 2025), both formally diagnosed with stage-2 dementia. The main symptoms are increasing difficulty recalling recent events, forgetting the names of familiar people, word-finding problems, short sentences, confusion about time and place, and repetitive questioning. The female subject has developed delusions and an increased risk of falls. Both subjects have additional mobility problems and use canes and other mobility aids.

The subjects are attended by two caregivers in two 8-hour shifts per day and occasionally receive additional care from practitioners. The residence has in-house cameras with audio in all rooms, monitored at night by professional security personnel. Owing to their low mobility, the couple stays on one floor and moves only between the bedroom, the bathroom, and the living room.

This study is a proof of concept demonstrating the feasibility of an AI-based audio-processing pipeline for home dementia care. Our goal is methodological and systems-oriented: to show that ambient audio can be captured on very low-cost hardware, transformed into text labels corresponding to audio events recognized among 527 audio classes, and summarized into auditable, caregiver-facing outputs by an LLM (in this work, ChatGPT-5 in thinking mode) that receives the labels together with the application context. We do not claim population-level diagnostic performance; instead, we establish engineering viability, safety guardrails, and a structured scheme for longitudinal monitoring.

Although the cohort is small, the dataset is longitudinal and dense (thousands of 15 s snippets across weeks), enabling robust within-subject demonstrations of trend tracking (e.g., cough burden, exit-seeking themes, cane-tap variability). For personalization-centric care, repeated measures from the same individuals are more informative than sparse cross-sectional samples.

We acknowledge that two participants do not support population-level inference. However, we believe the evidence reported in this work supports the position that LLMs and AI bots can add significant value to this problem, first by monitoring and, in the near future, by acting on what they observe. Accordingly, we: (i) report per-subject results and descriptive statistics rather than pooled significance tests; (ii) release code and de-identified audio to enable replication; and (iii) outline a prospective, multi-site study with power considerations for clinical endpoints (e.g., sensitivity to agitation/exit-seeking, caregiver workload impact). This sequence (proof of concept → pilot → powered trial) is standard for translational systems entering safety-critical care.

Hardware

The approach is built on the ESP32 microcontroller and a MEMS microphone, both of which are very low cost. We implemented a distributed architecture that supports cloud, on-premises, and edge deployments. A network of ESP32-S3-DevKitC-1-N8R8 nodes with SPH0645LM4H digital microphones transmits MP3-compressed 15 s audio snippets over Wi-Fi to a secure remote server for classification.

The SPH0645LM4H provides a low-power, bottom-port I2S output, eliminating the need for an external codec. Its flat 0.1–8 kHz response matches the 8 kHz Nyquist limit of our 16 kHz sampling rate for AST-based analysis. The ESP32-S3, a dual-core Xtensa LX7 MCU with Wi-Fi/BLE, vector instructions, ample GPIO, and I2S, was selected to handle audio capture and MQTT transport. The total cost of the device is on the order of 20 euros (as of 14/8/2025). A more detailed description of the hardware can be found in [22]. Alternatively, the same service can be provided by a smartphone that captures audio snippets with a voice activity detector (VAD) and streams them over Wi-Fi.

Embedded Software in ESP32

Using the Espressif IoT Development Framework version 5.3 (ESP-IDF 5.3), the embedded nodes, written in C, capture audio from the microphone via the Inter-IC Sound (I2S) digital audio interface in non-overlapping 1024-sample windows at 16 kHz. They then perform basic level checks using the root mean square (RMS) value, encode the audio into MP3 format with an embedded MP3 encoder library (LAME), and publish the compressed data using the Message Queuing Telemetry Transport (MQTT) protocol secured with Transport Layer Security (TLS). Processing is divided between the two cores of the ESP32-S3 microcontroller (Core 0 handles capture and processing, while Core 1 handles encoding and transmission), with a ring buffer used for inter-task communication. Configuration is stored in Non-Volatile Storage (NVS) and supports remote updates via MQTT. Pseudo-Static Random Access Memory (PSRAM) is used for efficient buffering. Networking is provided over Wi-Fi by default.
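The level check is described only as RMS-based. The Python sketch below mirrors, rather than reproduces, the C firmware and shows one plausible form: each non-overlapping 1024-sample window (64 ms at 16 kHz) of signed 16-bit PCM is reduced to its RMS level in dBFS, and a snippet is triggered once enough windows exceed a threshold. The -45 dBFS threshold and the three-window trigger rule are hypothetical.

```python
import math
from typing import List, Sequence

FRAME_LEN = 1024  # samples per window, as in the firmware description (64 ms at 16 kHz)

def frame_rms_dbfs(samples: Sequence[int], frame_len: int = FRAME_LEN) -> List[float]:
    """RMS level in dBFS of each complete, non-overlapping window of 16-bit PCM."""
    levels = []
    # A trailing partial window is ignored
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(s * s for s in frame) / frame_len)
        # 32768 is full scale for signed 16-bit audio; silent frames are floored at -120 dBFS
        levels.append(20 * math.log10(rms / 32768) if rms > 0 else -120.0)
    return levels

def should_record(levels: Sequence[float], threshold_dbfs: float = -45.0, min_active: int = 3) -> bool:
    """Hypothetical trigger: start a 15 s snippet once min_active windows exceed the threshold."""
    return sum(level > threshold_dbfs for level in levels) >= min_active
```

On the device the same computation would run on integer buffers in C; the Python form is only meant to make the decision rule explicit.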

MQTT was chosen for its reliability under constrained bandwidth and variable link quality. Streams are handled by a multithreaded MQTT client with Quality of Service (QoS) tuning, buffering, error detection, and fast reconnection protocols. Latency is reduced through optimized packetization, I2S Direct Memory Access (DMA) buffering, and adaptive send intervals; a User Datagram Protocol (UDP) fallback is enabled if MQTT delays increase. Local brokers operating over 2.4 GHz Wi-Fi minimize Wide Area Network (WAN) hops, while server-side asynchronous processing and Network Time Protocol (NTP) synchronization maintain throughput and temporal alignment across nodes.
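The packet layout is not specified. As a hypothetical illustration of packetization with NTP-aligned timestamps, the sketch below splits an MP3 snippet into fixed-size chunks, each prefixed with a small binary header (node id, snippet sequence number, chunk index, chunk count, capture time in milliseconds), so that the server can reassemble snippets and align them across nodes. The header fields, their sizes, and the 1 KiB chunk size are assumptions.

```python
import struct
from typing import Dict, List, Tuple

# Hypothetical big-endian header: node id (u16), snippet seq (u32),
# chunk index (u16), chunk count (u16), capture time in ms since the epoch (u64)
HEADER = struct.Struct(">HIHHQ")
CHUNK = 1024  # payload bytes per packet (assumption)

def packetize(node_id: int, seq: int, t_ms: int, mp3: bytes, chunk: int = CHUNK) -> List[bytes]:
    parts = [mp3[i:i + chunk] for i in range(0, len(mp3), chunk)] or [b""]
    return [HEADER.pack(node_id, seq, idx, len(parts), t_ms) + part for idx, part in enumerate(parts)]

def reassemble(packets: List[bytes]) -> Dict[Tuple[int, int], bytes]:
    """Group packets by (node, seq); return only snippets whose chunks all arrived."""
    buckets: Dict[Tuple[int, int], Dict[int, bytes]] = {}
    totals: Dict[Tuple[int, int], int] = {}
    for pkt in packets:
        node, seq, idx, count, _t_ms = HEADER.unpack_from(pkt)
        buckets.setdefault((node, seq), {})[idx] = pkt[HEADER.size:]
        totals[(node, seq)] = count
    return {
        key: b"".join(parts[i] for i in range(totals[key]))
        for key, parts in buckets.items()
        if all(i in parts for i in range(totals[key]))
    }
```

Because chunks are keyed by index, the server tolerates out-of-order arrival; incomplete snippets are simply withheld until their missing chunks arrive.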

A Python 3.11 wrapper (see Appendix) manages audio acquisition, preprocessing, and model execution. At the server, MP3 clips are decoded with FFmpeg, converted into spectrograms via the Short-Time Fourier Transform (STFT), and rendered as time–frequency images for the AST. The AST, which follows a Vision Transformer (ViT) architecture, operates directly on spectrogram patches for audio event classification. Spectrograms of spoken snippets and environmental events (e.g., discussions, coughs, cane taps) are processed to produce labeled, timestamped outputs suitable for downstream integration.
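A minimal server-side sketch, assuming the `ffmpeg` command-line tool is on the PATH and that the publicly released AudioSet checkpoint `MIT/ast-finetuned-audioset-10-10-0.4593` is loaded through the Hugging Face `transformers` library (the text does not name the exact checkpoint or wrapper code). FFmpeg decodes the MP3 to 16 kHz mono float32 samples on stdout, the feature extractor computes the log-Mel filterbank, and the model returns one logit per AudioSet class. Because AudioSet is multi-label, the scores go through a sigmoid rather than a softmax; the top-k and minimum-score values are illustrative.

```python
import subprocess
from typing import List, Tuple

import numpy as np

def ffmpeg_decode_cmd(path: str, sr: int = 16000) -> List[str]:
    # Decode to raw little-endian float32, mono, resampled to `sr`, written to stdout
    return ["ffmpeg", "-v", "error", "-i", path, "-f", "f32le", "-ac", "1", "-ar", str(sr), "pipe:1"]

def decode_mp3(path: str, sr: int = 16000) -> np.ndarray:
    out = subprocess.run(ffmpeg_decode_cmd(path, sr), capture_output=True, check=True).stdout
    return np.frombuffer(out, dtype=np.float32).copy()  # copy: frombuffer arrays are read-only

def top_labels(logits: np.ndarray, id2label: dict, k: int = 5, min_score: float = 0.1) -> List[Tuple[str, float]]:
    """Sigmoid (multi-label) scores for the k best classes that reach min_score."""
    scores = 1.0 / (1.0 + np.exp(-logits))
    best = np.argsort(scores)[::-1][:k]
    return [(id2label[int(i)], float(scores[i])) for i in best if scores[i] >= min_score]

def classify_snippet(path: str, model_id: str = "MIT/ast-finetuned-audioset-10-10-0.4593"):
    # Lazy imports so the helpers above work without torch/transformers installed
    import torch
    from transformers import ASTFeatureExtractor, ASTForAudioClassification

    extractor = ASTFeatureExtractor.from_pretrained(model_id)
    model = ASTForAudioClassification.from_pretrained(model_id).eval()
    inputs = extractor(decode_mp3(path), sampling_rate=16000, return_tensors="pt")
    with torch.no_grad():
        logits = model(**inputs).logits[0].numpy()
    return top_labels(logits, model.config.id2label)
```

In a long-running service the extractor and model would be loaded once and reused across snippets rather than reloaded per call.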

The Elders with Dementia Dataset

The ESP devices operate 24/7 and are activated by voice; once triggered, they record the subsequent 15 seconds at a 16 kHz sampling rate. No audio recordings are kept on the device. Depending on the activity in the home, several hundred recordings can be produced per day. Recording started on 4/8/2025 and is ongoing, with several thousand recordings collected so far. The recordings contain mainly speech, non-verbal human sounds, cane hits, and impact sounds of various objects (e.g., drawers, closets, doors, switches). The resulting database is the subject of this study.

The Audio Event Recognizer

The Audio Spectrogram Transformer (AST) [41] formulates audio tagging as patch-based transformer classification on log-Mel spectrograms and is commonly pre-trained or fine-tuned on AudioSet. Concretely, a waveform is converted to 128-dimensional log-Mel filterbanks using a 25 ms window with a 10 ms hop; the 2-D time–frequency map is then split into overlapping 16×16 patches that are linearly projected to tokens, augmented with positional embeddings, and processed by a ViT-style encoder (12 layers, 12 heads, 768-dim embeddings). The model is initialized from ImageNet ViT weights via patch and positional-embedding adaptation and optimized with binary cross-entropy using standard audio augmentations (mixup, SpecAugment) and weight-averaged checkpoints. Evaluated on AudioSet (the 527-class collection of about 2.1 M human-labeled 10-second YouTube excerpts, totalling 5.8 k hours), AST reports a mean average precision (mAP) of 0.4590 for a single weight-averaged model and up to 0.4850 with model ensembling on the full training split, indicating that transformer attention over spectrogram patches is competitive with or superior to CNN/attention hybrids for weakly labeled sound event recognition. In this configuration, AST leverages AudioSet’s breadth to learn general sound representations that transfer to downstream tagging tasks without architectural changes.
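The frame and patch counts implied by these settings can be checked with a little arithmetic. The sketch assumes Kaldi-style framing (a 400-sample window and 160-sample hop at 16 kHz, counting only frames that fit entirely inside the signal) and a patch stride of 10 in both axes, which gives the overlap of 6 used by AST; these conventions are assumptions about the reference implementation rather than details stated above.

```python
def n_frames(n_samples: int, sr: int = 16000, win_ms: float = 25.0, hop_ms: float = 10.0) -> int:
    """Number of complete analysis frames (no edge padding)."""
    win, hop = int(sr * win_ms / 1000), int(sr * hop_ms / 1000)
    return 0 if n_samples < win else 1 + (n_samples - win) // hop

def n_patches(frames: int, n_mels: int = 128, patch: int = 16, stride: int = 10) -> int:
    """16x16 patches with stride 10 (overlap 6) along frequency and time."""
    per_freq = (n_mels - patch) // stride + 1
    per_time = (frames - patch) // stride + 1
    return per_freq * per_time

print(n_patches(1024))        # 1024 frames (about 10.24 s): 12 x 101 = 1212 patch tokens
print(n_frames(15 * 16000))   # a 15 s snippet at 16 kHz yields 1498 frames
```

If the 1024-frame input length of the released AudioSet configuration is kept, a 15 s snippet exceeds it, so the extra frames are either truncated or the snippet has to be classified as overlapping shorter segments.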

AudioSet [42] is a large-scale, ontology-driven dataset of human-labeled sound events released by Google, comprising over 2 million 10-second audio clips drawn from YouTube videos. Its ontology includes 527 distinct classes spanning speech, environmental sounds, animal vocalizations, and human activities, providing broad coverage for training and evaluating audio event recognition models. The AudioSet ontology covers the sounds produced during daily care activities. Impact, collision, and knock labels, which serve as proxies for falls and accidents, include Knock, Tap, Bang, Thump/thud, Slam, Smash, crash, Clatter, and Shatter (glass). Labels for human vocal distress, pain, and agitation that can be associated with elderly activity and accidents include the semantically related Screaming, Yell, Shout, Wail/moan, Whimper, Groan, Gasp, and Sigh. For monitoring chronic respiratory and other health conditions, the available labels include Cough, Sneeze, Breathing, Snoring, and Throat clearing, and Wheeze also appears under respiratory sounds in the ontology. Mobility and movement (activity and restlessness cues) are covered by the labels Footsteps, bouncing, wood, Stomp, stamp, Surface contact (e.g., scrape, scratch), Creak (e.g., floorboards/chairs), and Door (open/close). Safety and alerting signals (hazard or attention) are also included: Smoke detector, smoke alarm / Fire alarm, Alarm clock, Siren, Buzzer, Beep, bleep, Doorbell, and Telephone ringing. Conversation and caregiver interaction (dialogue-state cues) are handled by the Speech, Conversation, and Whispering categories and by the loudness-related Shout/Yell categories.
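The grouping above can be applied directly to the AST output. The sketch below uses a hypothetical mapping that copies a subset of the label names given in the text (the exact AudioSet display strings may differ, so they should be checked against the model's `id2label` table) and counts, per care category, how many labels in a snippet reach a confidence threshold. As in the text, Shout and Yell belong to two categories.

```python
from collections import Counter
from typing import Dict, Iterable, Set, Tuple

# Hypothetical care categories built from label names mentioned in the text
CARE_CATEGORIES: Dict[str, Set[str]] = {
    "impact_fall_proxy": {"Knock", "Tap", "Bang", "Thump/thud", "Slam", "Smash, crash", "Clatter", "Shatter"},
    "vocal_distress": {"Screaming", "Yell", "Shout", "Wail/moan", "Whimper", "Groan", "Gasp", "Sigh"},
    "respiratory": {"Cough", "Sneeze", "Breathing", "Snoring", "Throat clearing", "Wheeze"},
    "mobility": {"Footsteps", "Stomp, stamp", "Scrape", "Scratch", "Creak", "Door"},
    "alarm": {"Smoke detector, smoke alarm", "Fire alarm", "Alarm clock", "Siren",
              "Buzzer", "Beep, bleep", "Doorbell", "Telephone ringing"},
    "conversation": {"Speech", "Conversation", "Whispering", "Shout", "Yell"},
}

def categorize(scored: Iterable[Tuple[str, float]], threshold: float = 0.3) -> Counter:
    """Count confident labels per care category; a label in several categories counts in each."""
    counts: Counter = Counter()
    for label, score in scored:
        if score < threshold:
            continue
        for category, labels in CARE_CATEGORIES.items():
            if label in labels:
                counts[category] += 1
    return counts
```

Keeping the mapping as data rather than code makes it easy to adjust per household without retraining anything.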

The breadth of the AudioSet ontology covers practically all the sound events that arise in the context of our work.

Ordinary Automatic Speech Recognition Models Do Not Currently Work Properly for People with Dementia

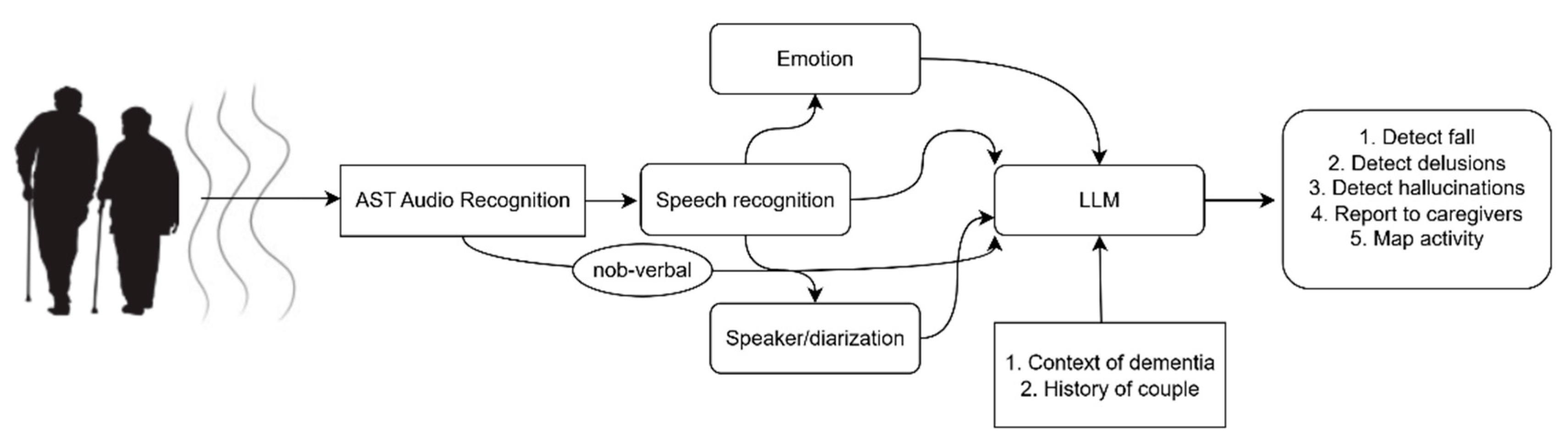

Whisper large-v3 is an open, transformer-based encoder–decoder automatic speech recognition (ASR) model that delivers strong accuracy across many languages with competitive inference speed. Nevertheless, ASR with Whisper large-v3 (see Appendix) underperformed on conversational Greek from older adults with dementia, because multiple interacting factors degrade the signal and violate the model’s assumptions. We analyze the reasons for this failure below. First, speaker physiology changes with age: presbyphonia, breathy or tremulous phonation, reduced articulatory precision due to dentures or dry mouth, and frequent comorbid dysarthria alter formant structure and sibilants and reduce the effective signal-to-noise ratio, making phonetic cues less distinct. Second, cognitive–linguistic patterns characteristic of dementia (see also Figure 2), such as long hesitations, false starts, repetitions, elliptical utterances, and frequent repair sequences, disrupt the fluent, clause-level structures that end-to-end ASR models implicitly expect from their training data, increasing deletions and punctuation errors. Third, the home acoustic environment adds nonstationary interference: televisions, radios, and hard-surface reverberation produce cross-talk and spectral interference that front-end feature extraction cannot discriminate from the target speech. Fourth, Greek-specific variability (regional accents, intonation patterns, and rich inflectional morphology with clitics and enclitics) is underrepresented in common web-scale corpora, so systems tuned on broadcast or YouTube speech from younger speakers generalize poorly to elderly, informal Greek dialogue. Fifth, pipeline constraints compound errors: tight voice activity detection and diarization cuts fragment utterances, and 10–15 s windows limit the language-model context available for disfluency recovery. In combination, these factors yield low confidence scores, insertion/deletion spikes, and semantic drift in transcripts, which in turn mislead the downstream LLM components tasked with behavioral inference. Adapting transformer models to the speech of older adults is feasible but nontrivial: since systems like Whisper are trained on paired audio–text annotations, effective domain adaptation would require a sizable, consented corpus of elderly Greek conversational speech with accurate transcripts spanning dialects, acoustic conditions, and symptom severities, resources that are rarely available and costly to curate (see [51] for an adaptation of ASR models to older speakers using a 12-hour adaptation corpus).
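The insertion/deletion spikes mentioned above can be quantified against a small set of manually corrected transcripts. The sketch below is a standard word-level Levenshtein alignment that reports substitutions (S), deletions (D), and insertions (I) separately, with WER = (S + D + I)/N for a reference of N words. It is offered as an illustration and is not part of the pipeline described here.

```python
from typing import Dict

def wer_breakdown(reference: str, hypothesis: str) -> Dict[str, float]:
    ref, hyp = reference.split(), hypothesis.split()
    # cost[i][j] = (edits, S, D, I) for the best alignment of ref[:i] with hyp[:j]
    cost = [[(0, 0, 0, 0)] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(1, len(ref) + 1):
        cost[i][0] = (i, 0, i, 0)  # delete every reference word
    for j in range(1, len(hyp) + 1):
        cost[0][j] = (j, 0, 0, j)  # insert every hypothesis word
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            e, s, d, n = cost[i - 1][j - 1]
            diag = (e, s, d, n) if ref[i - 1] == hyp[j - 1] else (e + 1, s + 1, d, n)
            e, s, d, n = cost[i - 1][j]
            up = (e + 1, s, d + 1, n)    # reference word deleted
            e, s, d, n = cost[i][j - 1]
            left = (e + 1, s, d, n + 1)  # extra hypothesis word inserted
            cost[i][j] = min(diag, up, left)  # fewest edits first
    edits, s, d, ins = cost[len(ref)][len(hyp)]
    return {"S": s, "D": d, "I": ins, "WER": edits / max(len(ref), 1)}
```

Tracking D and I separately per speaker and per week shows whether transcript quality, and therefore the LLM's input, is drifting.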

Voice Activity Detection

A VAD marks the portions of a recording that contain speech. A vast number of algorithms and implementations are available. We chose TEN-VAD, a recent approach that has demonstrated better results than other state-of-the-art algorithms (see Appendix). It is a small hybrid VAD: a digital signal processing (DSP) front end followed by a recurrent neural network. It is lightweight, supports streaming, and combines classic signal-processing features with a small LSTM-style ONNX model for sequence modeling. It computes a 16 kHz STFT with a Hann window, applies a 40-band Mel filterbank, log-compresses and normalizes the features, and appends a pitch feature. These per-frame features are stacked over a short temporal context. The model emits a frame-level speech probability and a binary VAD flag after thresholding.
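The front end and post-processing described above can be sketched in NumPy. This is not TEN-VAD's code: the 512-point FFT, 256-sample (16 ms) hop, per-clip mean/variance normalization, and 0.5 threshold are assumptions, the pitch feature and context stacking are omitted, and the recurrent model itself is replaced by whatever frame probabilities are passed to `probs_to_segments`.

```python
from typing import List, Sequence, Tuple

import numpy as np

def hz_to_mel(f):
    return 2595.0 * np.log10(1.0 + f / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)

def mel_filterbank(n_mels: int = 40, n_fft: int = 512, sr: int = 16000) -> np.ndarray:
    # Triangular filters equally spaced on the mel scale
    mel_pts = np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2), n_mels + 2)
    bins = np.floor((n_fft + 1) * mel_to_hz(mel_pts) / sr).astype(int)
    fb = np.zeros((n_mels, n_fft // 2 + 1))
    for m in range(1, n_mels + 1):
        left, centre, right = bins[m - 1], bins[m], bins[m + 1]
        for k in range(left, centre):
            fb[m - 1, k] = (k - left) / max(centre - left, 1)
        for k in range(centre, right):
            fb[m - 1, k] = (right - k) / max(right - centre, 1)
    return fb

def log_mel_features(x: np.ndarray, sr: int = 16000, n_fft: int = 512, hop: int = 256, n_mels: int = 40) -> np.ndarray:
    window = np.hanning(n_fft)  # Hann window
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[i * hop:i * hop + n_fft] * window for i in range(n_frames)])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    logmel = np.log(power @ mel_filterbank(n_mels, n_fft, sr).T + 1e-10)
    # Per-clip mean/variance normalization (an assumption)
    return (logmel - logmel.mean(axis=0)) / (logmel.std(axis=0) + 1e-8)

def probs_to_segments(probs: Sequence[float], threshold: float = 0.5, hop_s: float = 0.016) -> List[Tuple[float, float]]:
    """Threshold frame probabilities into binary flags and merge runs into (start, end) segments."""
    flags = np.asarray(probs) >= threshold
    segments, start = [], None
    for i, active in enumerate(flags):
        if active and start is None:
            start = i
        elif not active and start is not None:
            segments.append((start * hop_s, i * hop_s))
            start = None
    if start is not None:
        segments.append((start * hop_s, len(flags) * hop_s))
    return segments
```

The resulting segments are what a downstream diarizer or ASR model would receive instead of the full 15 s snippet.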

Speech Diarization and Speaker Recognition

Speaker diarization splits a clip into speaker-homogeneous time segments and can flag overlaps, but it does not know the identities of the people talking. Speaker recognition is a different task that answers “who is this speaker?” by matching a segment, assumed to contain a single speaker, to an enrolled voiceprint (verification/identification). Determining who spoke when is essential for monitoring elderly people with dementia, because it attributes each utterance to the correct person (e.g., patient, caregiver, visitor). Many approaches and implementations address this task, but in our case few-shot learning appears to be the appropriate solution: it builds a model for each speaker from only a few samples (~20 recordings of 15 seconds each), and an in-home application is expected to deal with a small number of speakers. In our setting, speaker tagging is convenient, since the AST isolates speech that is subsequently tagged with one of a few speaker labels (six in our case: two patients, two caregivers, and two relatives) or a combination of them. By segmenting audio into speaker-specific streams before ASR, diarization reduces attribution errors (such as mistaking a caregiver prompt for a patient belief) and yields cleaner, per-speaker transcripts for the LLM. This improves the LLM’s reasoning about orientation, agitation, and instruction follow-through, enables role-aware prompts and alerts, and supports longitudinal tracking of patient speech while preserving context about overlapping talk and background voices. Speaker diarization has been quite successful in our experiments because, as is usually the case, only a limited number of people were present in the elderly couple’s home. This allows the voices to be modeled from a few examples and speech cues to be attributed reliably.
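A minimal sketch of the few-shot enrollment idea, assuming that each 15 s enrollment recording has already been turned into a fixed-length speaker embedding by some pretrained extractor (the extractor is not specified here). Each speaker is represented by the normalized mean of their enrollment embeddings, and a new segment is assigned to the speaker with the highest cosine similarity, or to `unknown` below a hypothetical threshold of 0.6. Speaker names such as `patient_f` are illustrative.

```python
from typing import Dict, Sequence, Tuple

import numpy as np

def _unit(v: np.ndarray) -> np.ndarray:
    return v / (np.linalg.norm(v) + 1e-12)

def enroll(samples: Dict[str, Sequence[np.ndarray]]) -> Dict[str, np.ndarray]:
    """One L2-normalized centroid per speaker from their (~20) enrollment embeddings."""
    return {name: _unit(np.mean([_unit(e) for e in embs], axis=0)) for name, embs in samples.items()}

def identify(embedding: np.ndarray, centroids: Dict[str, np.ndarray], threshold: float = 0.6) -> Tuple[str, float]:
    """Return (speaker, cosine similarity), or ('unknown', best score) below the threshold."""
    query = _unit(embedding)
    name, score = max(((n, float(query @ c)) for n, c in centroids.items()), key=lambda t: t[1])
    return (name, score) if score >= threshold else ("unknown", score)
```

With only six enrolled voices, a nearest-centroid rule of this kind is cheap to update when a new caregiver joins: only that person's enrollment embeddings need to be added.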
In this work, we adopt the pyannote approach, which implements diarization as a three-stage neural pipeline (local speaker segmentation, speaker embedding, and global clustering) that operates directly on mono 16 kHz audio and returns a time-stamped “who-spoke-when” annotation (see Appendix). In version 2.1, the default pipeline applies a neural speaker-segmentation model to short sliding windows (≈5 s with a 0.5 s hop), producing frame-level (≈16 ms) posteriors for the activity of up to Kmax speakers; a related “powerset” segmentation variant explicitly models single-speaker and two/three-speaker overlap classes to handle simultaneous talkers. Local speaker traces are then converted to speaker embeddings and aggregated globally via agglomerative hierarchical clustering (AHC) to obtain a consistent speaker inventory for the whole recording; the number of speakers can be estimated automatically or constrained by the user when known. The released embedding model is an x-vector TDNN architecture augmented with SincNet learnable filterbanks (trainable sinc convolutions replacing fixed Mel filters), yielding compact speaker representations suited to clustering. The toolkit also exposes overlapped-speech detection that can be combined with the pipeline to better account for concurrent speech. Collectively, this design frames diarization as end-to-end neural segmentation on overlapping windows, representation learning for speakers, and global AHC on embeddings, rather than K-means on spectra or hand-engineered MFCCs, to robustly infer speaker turns.
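A sketch of how the pipeline can be invoked and its output reduced to per-speaker talk time, a simple longitudinal indicator. It assumes the gated Hugging Face checkpoint `pyannote/speaker-diarization@2.1` and a user access token passed as `hf_token`; the `use_auth_token` argument follows the 2.x/3.x model cards and may be named differently in later pyannote releases. Capping `max_speakers` at six reflects the household described above.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Turn = Tuple[float, float, str]  # (start s, end s, speaker label)

def diarize(wav_path: str, hf_token: str, max_speakers: int = 6) -> List[Turn]:
    # Lazy import: pyannote.audio is only needed when diarization is actually run
    from pyannote.audio import Pipeline

    pipeline = Pipeline.from_pretrained("pyannote/speaker-diarization@2.1", use_auth_token=hf_token)
    annotation = pipeline(wav_path, max_speakers=max_speakers)
    return [(turn.start, turn.end, speaker) for turn, _, speaker in annotation.itertracks(yield_label=True)]

def talk_time(turns: List[Turn]) -> Dict[str, float]:
    """Total seconds attributed to each diarized speaker label."""
    totals: Dict[str, float] = defaultdict(float)
    for start, end, speaker in turns:
        totals[speaker] += end - start
    return dict(totals)
```

Diarization labels are anonymous and only consistent within one recording, so mapping them to enrolled people requires a separate speaker-identification step.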

Emotion Recognition

In elderly people with dementia, depending on age, health problems, and dementia stage, emotional expression often differs significantly from that of younger populations and may be subtle, context-dependent, and shaped by the progression of cognitive decline. Neutral or baseline states, which can blend into apathy, are common and characterized by long silences, flat prosody, and reduced motivation or expressivity. Moments of happiness or contentment do occur, often triggered by familiar people, music, or reminiscence, and may be marked by brief laughter or a brighter tone. Sadness and depressive states manifest as softer speech, a slower pace, sighing, or tearfulness, and are often accompanied by social withdrawal. Anger, irritability, and agitation are also possible, presenting as raised or shaky pitch, louder vocal bursts, or interruptions, particularly during care tasks, refusals, or episodes of confusion. Fear and anxiety are typically expressed through a tense or pressed voice, vocal tremor, and repetitive questioning, and are especially prevalent during sundowning. Apathy, a low-arousal negative state distinct from simple neutrality, can be pervasive, with minimal speech initiation and muted affect.

Emotion recognition algorithms extract low-level acoustic descriptors (pitch, loudness, speech rate and rhythm, etc.) from the audio and then use statistical models to classify them into emotions (e.g., Neutral, Happy, Sad, Angry, Afraid). Since they rely on acoustic–prosodic features (i.e., paralinguistic cues) rather than speech content, they can be largely language-independent: they can be applied to any language, although accuracy may be somewhat lower if the prosody differs from that of the languages they were trained on (typically English). Because these algorithms are not ASR-based, unclear or slurred speech does not cause the same errors as in transcription. Note, however, that speech melody and expression vary across languages and cultures, which can affect accuracy. For example, Greek has naturally higher pitch variation than English, which some models might misread as “excited”. Many speech emotion recognition datasets use young-to-middle-aged actors, so age-related changes in the voice (pitch lowering in women, reduced clarity, slower speech) can cause misclassification. Accuracy also drops in noisy, reverberant environments.

In this work, we use the HuBERT-Large backbone fine-tuned for the SUPERB Emotion Speech Recognition (ESR) task. The base encoder is hubert-large-ll60k, a self-supervised model trained on approximately 60,000 hours of 16 kHz speech (LibriLight). During pretraining, HuBERT learns robust speech representations by masking spans of audio and predicting discrete pseudo-labels obtained by clustering acoustic features. For ESR, a lightweight classification head is attached to the frozen or partially fine-tuned encoder. The resulting checkpoint accepts raw 16 kHz mono waveforms and outputs posterior probabilities over four emotions. We did not fine-tune the model on our data.

In the SUPERB benchmark, the ESR task is framed as four-class utterance-level classification with the categories angry, happy, sad, and neutral. The widely used setup draws on the Interactive Emotional Dyadic Motion Capture (IEMOCAP) database and follows the common practice of focusing on these four balanced classes (other minority labels are omitted). The superb/hubert-large-superb-er checkpoint is fine-tuned to predict the label set angry, happy, sad, and neutral.

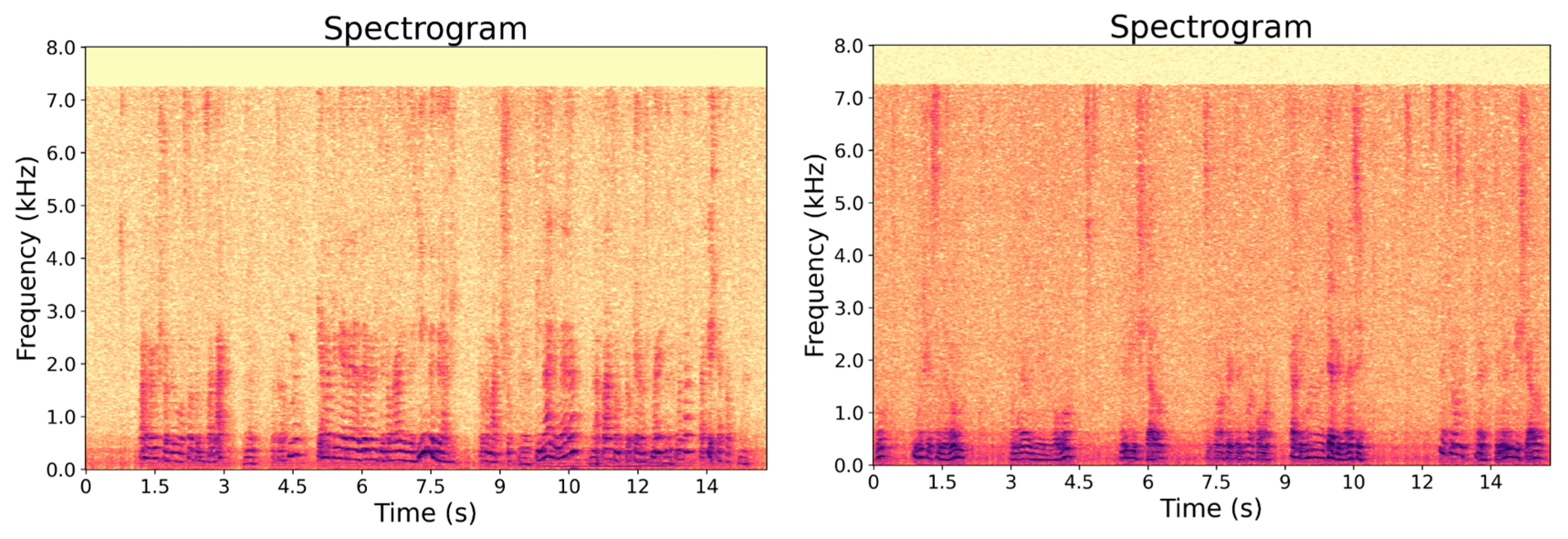

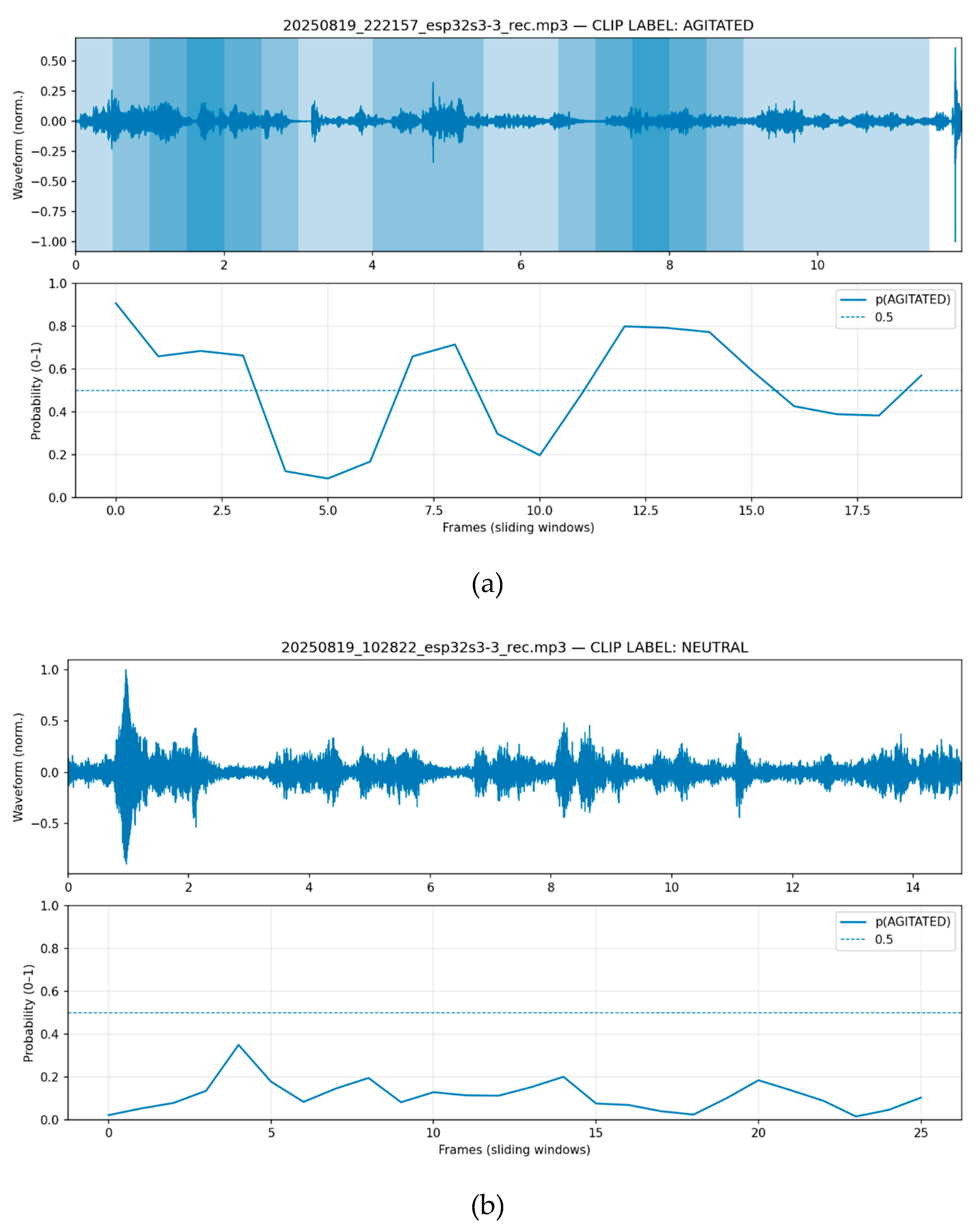

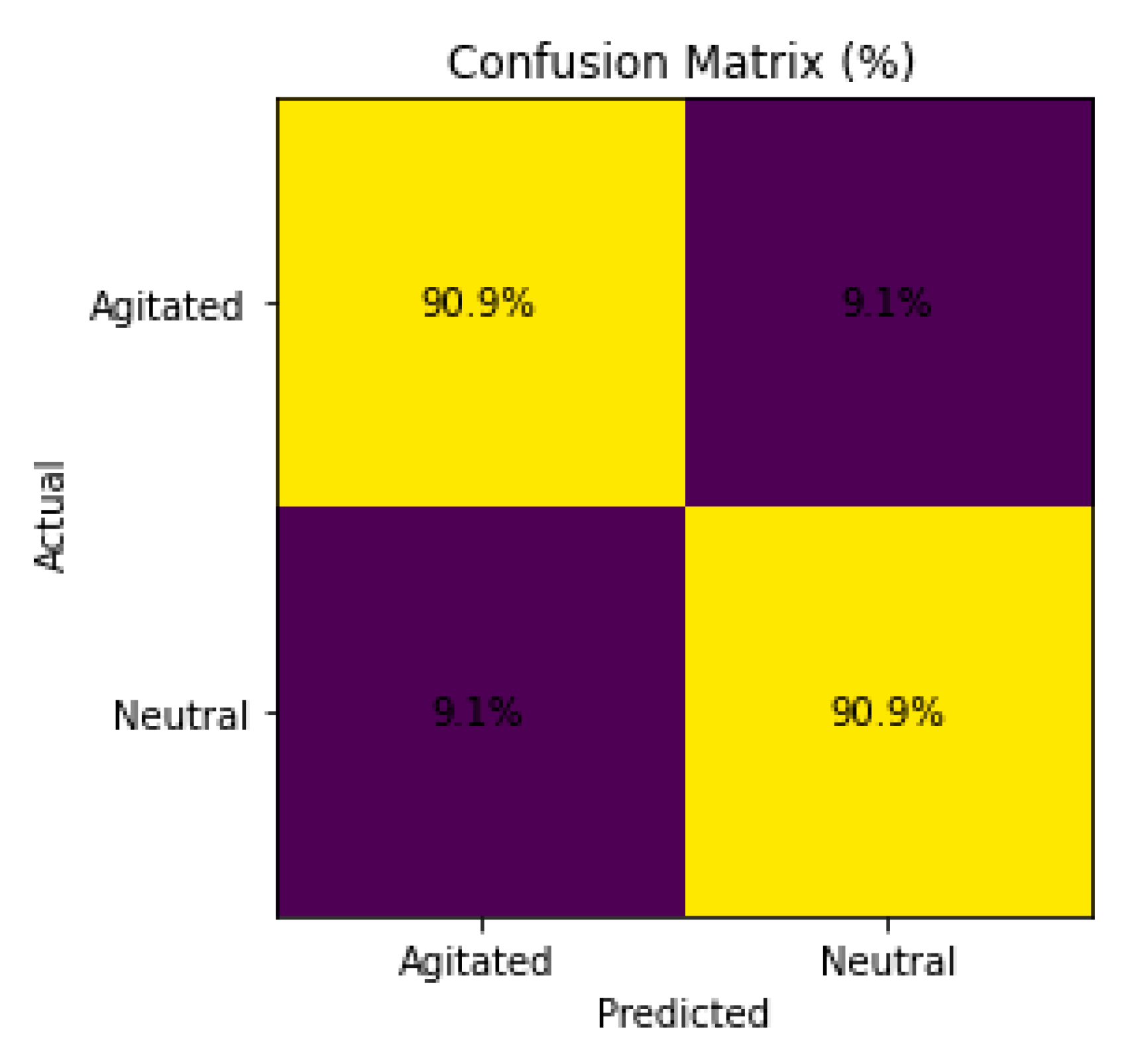

Older adults with dementia do not show the expressivity of younger speakers. Therefore, we merge the original labels to improve robustness in this task. To meet the binary requirement of the application, we map the categories into two states: AGITATED comprises angry and happy, while NEUTRAL comprises neutral and sad. To handle long, real-world recordings, the decision procedure applies sliding-window inference. Each input file is segmented into fixed windows (default 2.0 s) with a 0.5 s hop. For every window, the model produces a probability distribution over the four emotions; we compute p(agitated) as the sum of the angry and happy probabilities and mark the window as AGITATED when p(agitated) ≥ 0.5. A clip-level decision then uses a simple fraction rule: if at least 20% of the windows are AGITATED, the entire clip is labeled AGITATED (see Figure 3a); otherwise, it is labeled NEUTRAL (see Figure 3b). Window length, hop size, and the fraction threshold can be tuned to trade off sensitivity (catching brief shouts) against specificity (avoiding false alarms on quiet, neutral speech).
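The window-and-vote rule above can be written compactly. In the sketch, `classify` is any callable that maps a window of 16 kHz samples to a dictionary of emotion probabilities, and `make_hubert_classifier` shows one way to build it from the `superb/hubert-large-superb-er` checkpoint with the Hugging Face `transformers` library, following that model card's usage. The public checkpoint appears to use the short labels `ang`, `hap`, `neu`, and `sad`; the merge set below also accepts full names. For a 15 s clip, 2.0 s windows with a 0.5 s hop give 1 + (15 − 2)/0.5 = 27 windows, so at least 6 of them (20% of 27 = 5.4, rounded up) must be AGITATED for the clip to be flagged.

```python
from typing import Callable, Dict, List, Sequence, Tuple

AGITATED = {"ang", "angry", "hap", "happy"}  # merged AGITATED classes; all others count as NEUTRAL
Classifier = Callable[[Sequence[float]], Dict[str, float]]

def window_starts(n_samples: int, sr: int, win_s: float = 2.0, hop_s: float = 0.5) -> List[int]:
    win, hop = int(round(win_s * sr)), int(round(hop_s * sr))
    if n_samples <= win:
        return [0]  # short clip: a single (possibly shorter) window
    return list(range(0, n_samples - win + 1, hop))

def clip_label(wave: Sequence[float], sr: int, classify: Classifier, win_s: float = 2.0,
               hop_s: float = 0.5, p_thr: float = 0.5, frac_thr: float = 0.2) -> Tuple[str, float]:
    win = int(round(win_s * sr))
    flags = []
    for start in window_starts(len(wave), sr, win_s, hop_s):
        probs = classify(wave[start:start + win])
        p_agitated = sum(p for label, p in probs.items() if label.lower() in AGITATED)
        flags.append(p_agitated >= p_thr)
    fraction = sum(flags) / len(flags)
    return ("AGITATED" if fraction >= frac_thr else "NEUTRAL"), fraction

def make_hubert_classifier(sr: int = 16000) -> Classifier:
    # Lazy imports; mirrors the usage shown on the superb/hubert-large-superb-er model card
    import numpy as np
    import torch
    from transformers import HubertForSequenceClassification, Wav2Vec2FeatureExtractor

    name = "superb/hubert-large-superb-er"
    extractor = Wav2Vec2FeatureExtractor.from_pretrained(name)
    model = HubertForSequenceClassification.from_pretrained(name).eval()

    def classify(chunk: Sequence[float]) -> Dict[str, float]:
        inputs = extractor(np.asarray(chunk, dtype=np.float32), sampling_rate=sr, return_tensors="pt")
        with torch.no_grad():
            probs = torch.softmax(model(**inputs).logits[0], dim=-1)
        return {model.config.id2label[i]: float(p) for i, p in enumerate(probs)}

    return classify
```

Passing the classifier in as a parameter keeps the voting rule testable without the model and lets the emotion backbone be swapped later.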

Discussion

Practical Achievements so Far

Our proposal is an affordable plug-and-play device whose data are classified at multiple levels, with the results jointly interpreted by an LLM. Caregiving is typically arranged under budget constraints, and the configuration studied here, without loudspeakers or actuators, targets the practical gains of passive audio monitoring. Below, we summarize the practical gains observed after using the system continuously for over two months: a) Our approach to overnight surveillance lowers staffing needs for night shifts, which are costly and often inefficient, and provides continuous fall monitoring at night, reducing family anxiety given how difficult it is for the patients to call for help after a fall. b) The recognized audio events and their hourly distribution revealed that, after the caregivers left, the subjects frequently rose, walked, and conversed, leading to daytime exhaustion that had been misattributed; inspection of the system’s “speech” and “conversation” labels between 00:00 and 07:00 prompted a medication adjustment by a doctor that resolved this pattern. c) The pipeline quantifies delusional episodes over time, enabling trend tracking, and measures cough burden before and after treatment to evaluate its efficacy. d) Systematic analysis of conversational content provided actionable insight into psychological status and stressors, enabling individualized care plans that target minor yet recurring practical stressors (e.g., arrangement of objects, room temperature, lighting conditions) that degrade the well-being of dementia patients and commonly go unreported the following day due to memory impairment. Finally, e) we valued the system’s uninterrupted (24/7) reliability, which ensured steady monitoring and augmented care during staffing constraints or caregiver absence, easing the burden on adult children with legal guardianship responsibilities.
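The hourly analysis in b) and the cough-burden tracking in c) reduce to counting time-stamped labels. A minimal sketch, assuming the AST events have been flattened into a list of `(ISO timestamp, label)` pairs (a hypothetical export format): it builds a 24-bin hourly histogram per label, the share of a label's events falling in the 00:00–07:00 window, and a per-day count that can be compared before and after treatment.

```python
from collections import Counter
from datetime import datetime
from typing import List, Sequence, Tuple

Event = Tuple[str, str]  # (ISO-8601 local timestamp, AudioSet label)

def hourly_histogram(events: Sequence[Event], label: str) -> List[int]:
    hist = [0] * 24
    for ts, lab in events:
        if lab == label:
            hist[datetime.fromisoformat(ts).hour] += 1
    return hist

def night_share(events: Sequence[Event], label: str, start_h: int = 0, end_h: int = 7) -> float:
    """Fraction of a label's events whose hour lies in [start_h, end_h)."""
    hist = hourly_histogram(events, label)
    total = sum(hist)
    return sum(hist[start_h:end_h]) / total if total else 0.0

def daily_counts(events: Sequence[Event], label: str) -> Counter:
    """Events of one label per calendar day, e.g. cough burden per day."""
    return Counter(datetime.fromisoformat(ts).date().isoformat() for ts, lab in events if lab == label)
```

Comparing `daily_counts` over the weeks before and after a treatment change gives the kind of descriptive, per-subject evidence the study reports, without pooled significance tests.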

Scaling Up the Service

Although our primary goal is to deliver affordable, automated in-home services, the architecture scales directly to facility-wide deployments. Field visits to elderly care facilities in the city of Patras, Greece, revealed multi-floor layouts with consecutive rooms housing 2–4 residents in heterogeneous clinical states; often only a subset of institutions admit people with dementia or Alzheimer’s disease, and service level and cost vary widely. These characteristics (repeatable room topology, diverse care needs, and operational heterogeneity) make a centralized, multi-room deployment both feasible and valuable, enabling consistent monitoring policies across wards while preserving room-level personalization. Combining audio signal processing and AST classifiers with an LLM stack yields a live “situational awareness board” that could monitor all rooms simultaneously and surface deviations (e.g., sudden spikes in cough burden, impact-like thuds, delusional dialogues), enabling oversight across an entire ward. The required infrastructure is low-cost and can be deployed unobtrusively, and a single software session running on a laptop can process all nodes. Bedside units could become conversational endpoints where the LLM engages residents and staff through brief, goal-directed dialogues and concise on-screen summaries: probing orientation, interpreting confusion or fixed false beliefs, and continuously “listening” for accidents, pain, distress, and alarms, while emitting auditable, structured assessments and suggested de-escalation or check-in scripts. Cameras with audio are viable, but an audio-only modality is more discreet, less invasive, and lower in cost, and it requires no infrastructure changes. In large facilities, processing can run on an on-premises server for security, whereas in home deployments inference can be hosted on a remote server.
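One way such a board could surface sudden spikes is a per-room rolling baseline with a z-score rule. This is a sketch under stated assumptions: hourly counts per room and label are already available, and the 24-hour baseline, the threshold of 3, and the standard-deviation floor are hypothetical values, not tuned ones.

```python
from statistics import mean, pstdev
from typing import Dict, List


def flag_spikes(hourly_counts: List[int], baseline_hours: int = 24,
                z_threshold: float = 3.0, min_std: float = 1.0) -> List[int]:
    """Indices of hours whose count exceeds the room's rolling baseline
    by at least `z_threshold` (floored) standard deviations."""
    spikes = []
    for i in range(baseline_hours, len(hourly_counts)):
        history = hourly_counts[i - baseline_hours:i]
        mu = mean(history)
        # Floor the spread so a perfectly flat history does not divide by zero.
        sigma = max(pstdev(history), min_std)
        if (hourly_counts[i] - mu) / sigma >= z_threshold:
            spikes.append(i)
    return spikes


def awareness_board(rooms: Dict[str, List[int]], **kwargs) -> Dict[str, List[int]]:
    """Room id -> flagged hour indices, for a ward-level overview."""
    return {room: flag_spikes(counts, **kwargs) for room, counts in rooms.items()}
```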

In the envisioned deployment, LLMs coordinate with assistive robots and screens (both mobile platforms and bedside devices) within a closed-loop control framework. The LLM translates detected needs into verifiable action primitives, monitors execution via on-board sensor feedback, and hands off to human staff when uncertainty or risk thresholds are exceeded. Continuous monitoring and self-adaptation target conservative escalation policies that prioritize precision for non-urgent events and issue immediate alerts for hazards. Over time, federated learning enables room- and resident-specific personalization while preserving central governance and auditability. This approach is intended to augment, not replace, human care by converting ambient sound into continuously updated, clinically relevant signals and delegating low-risk, routine assistance to tireless machines, thereby reserving clinician and caregiver effort for the difficult judgment and empathy that remain beyond what a machine can provide.
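The conservative escalation policy can be expressed as a small decision rule. The sketch below is hypothetical: the hazard label set, the three thresholds, and the four outcomes ("alert", "notify", "handoff", "log") are assumptions chosen to illustrate the trade-off between precision for routine events and immediate alerts for hazards.

```python
from dataclasses import dataclass

# Hypothetical label set; a deployment would define its own hazard list.
HAZARDS = {"fall", "screaming", "smoke_alarm"}


@dataclass
class Detection:
    label: str
    confidence: float  # in [0, 1], from upstream classifiers or the LLM


def escalate(d: Detection, hazard_threshold: float = 0.3,
             notify_threshold: float = 0.9, review_threshold: float = 0.5) -> str:
    """Return 'alert', 'notify', 'handoff', or 'log' for one detection."""
    if d.label in HAZARDS:
        # Hazards favour recall: alert on moderate evidence, otherwise ask a human.
        return "alert" if d.confidence >= hazard_threshold else "handoff"
    if d.confidence >= notify_threshold:
        return "notify"   # non-urgent events reach staff only when confident
    if d.confidence >= review_threshold:
        return "handoff"  # uncertain: route to a human for review
    return "log"          # keep for trends, no interruption
```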

Retrieval-Augmented Generation

LLMs can also hallucinate; to reduce this risk, future work will rely on retrieval-augmented generation (RAG) to keep the LLM factual, personalized, and auditable. RAG pairs a generator (e.g., an LLM) with a retrieval step that pulls relevant, up-to-date documents or records at query time; the model then grounds its answer in those sources. This can reduce hallucinations, inject patient and home context (logs, protocols, prior episodes), and enable auditable, citation-backed outputs for care summaries, alerts, and decision support.

The pipeline outputs, namely AST event tags (e.g., cough, cane taps), diarization spans, N-best ASR hypotheses with word confidences, keyword-spotting hits, and per-snippet acoustic features, can be serialized into time-stamped “episode” documents and stored locally in a hybrid vector + BM25 index. A second index can hold a consented household knowledge base (names, address, routines, safety rules), caregiver playbooks (de-escalation scripts, orientation prompts), and facility protocols (alert thresholds, fall procedures). For each new snippet, a compact “query document” (current features plus an intent, e.g., “assess exit-seeking/place disorientation”) retrieves the top-k episodes and relevant playbook passages, applying time decay and speaker filters; the retrieval score weights high-confidence words, repeated themes, and corroborating non-speech events. The LLM is then constrained to emit a structured JSON record (observations vs. inferences, confidence, and references to the retrieved snippets) and must not reason beyond the retrieved evidence.
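The retrieval scoring described above can be sketched with the lexical half of the hybrid index only. This minimal example assumes episodes are bags of lower-cased ASR words and AST tags with per-token confidences; it uses a confidence-weighted BM25 variant multiplied by an exponential time decay, and filters by diarized speaker. The `Episode` fields, the 72-hour decay constant, and the weighting scheme are assumptions; a full hybrid setup would combine this with a vector-similarity score.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass
class Episode:
    episode_id: str
    t_hours: float            # hours since monitoring began (assumed unit)
    speaker: str              # diarization label, e.g. "F", "M", or "none"
    tokens: List[str]         # lower-cased ASR words and AST tags
    confidences: List[float]  # one confidence per token, in [0, 1]


def retrieve(query: Iterable[str], episodes: List[Episode], now_hours: float,
             k: int = 3, speakers: Optional[Set[str]] = None,
             tau_hours: float = 72.0, k1: float = 1.5, b: float = 0.75) -> List[str]:
    """Top-k episode ids by confidence-weighted BM25 times exponential time decay."""
    pool = [e for e in episodes if speakers is None or e.speaker in speakers]
    if not pool:
        return []
    n = len(pool)
    avgdl = sum(len(e.tokens) for e in pool) / n
    df = defaultdict(int)  # document frequency per term
    for e in pool:
        for tok in set(e.tokens):
            df[tok] += 1
    terms = set(query)
    scored = []
    for e in pool:
        # Confidence-weighted term frequency: uncertain ASR words count less.
        tf = defaultdict(float)
        for tok, conf in zip(e.tokens, e.confidences):
            tf[tok] += conf
        score = 0.0
        for term in terms:
            f = tf.get(term, 0.0)
            if f <= 0.0:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = k1 * (1 - b + b * len(e.tokens) / avgdl)
            score += idf * f * (k1 + 1) / (f + norm)
        if score > 0.0:
            decay = math.exp(-(now_hours - e.t_hours) / tau_hours)
            scored.append((score * decay, e.episode_id))
    scored.sort(reverse=True)
    return [eid for _, eid in scored[:k]]
```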

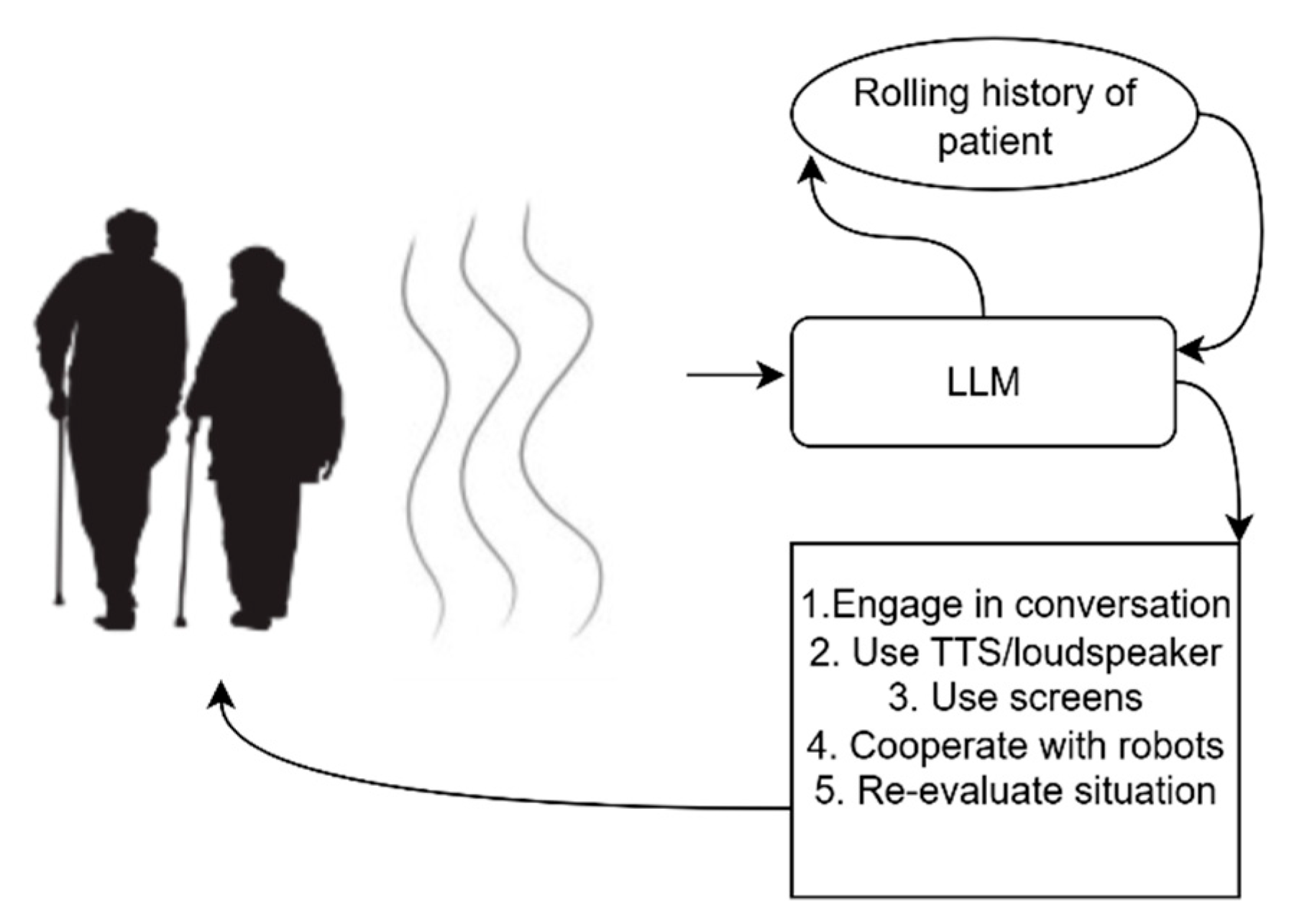

Conversation & Cognitive Support Using Actuators

We envision that an LLM will eventually engage autonomously in conversations (as an AI bot or agent) that stimulate patients’ memory, monitor for hazardous situations, and mitigate loneliness. Our aim is to provide this service under the strict constraint of financial affordability.

In dementia care, an effective agent must integrate six capabilities within explicit safety and ethics guardrails. First, reasoning is bounded and evidence-driven: the agent fuses multimodal inputs (e.g., short audio snippets, ASR key phrases, affect tags, time of day, and a consented personal profile) to draw low-risk inferences and select pre-approved utterances rather than generate open-ended advice. Second, acting is constrained and assistive, not clinical: the agent performs digital actions such as brief TTS prompts via Wi-Fi loudspeakers, on-screen cues, and caregiver notifications. This is especially useful for older adults who live alone and would otherwise speak very little. The agent operates under supervised autonomy and generates speech only from curated templates. Third, observing relies on privacy-preserving sensing (on-device preprocessing where feasible, minimal metadata upstream) to maintain situational awareness while protecting dignity. Fourth, planning converts near-term goals (hydration, toileting, calm bedtime, orientation) into simple, time-appropriate steps, with escalation paths to humans when uncertainty or risk is detected. Fifth, collaborating treats caregivers as partners, exposing transparent logs, rationales, and easy overrides so that human judgment remains central. Finally, self-refining allows personalization via supervised updates: the system adapts speaking rate, prosody, and linguistic complexity to the user’s current cognitive state; maintains a consented personal knowledge store to tailor references and improve recognition; and learns daily routines so that reminders and prompts are context- and time-appropriate. The LLM will adapt its form of interaction to each patient’s history and behavioral patterns, and will also perform preliminary diagnostic tests by gathering evidence from the dialogue and ESR (see Figure 9). This presupposes a speech recognizer already adapted to elderly speech.

Because LLMs and TTS are available for most languages, the service can be multilingual and globally available. The agent would conduct brief, socially engaging exchanges and deliver multimodal memory prompts (spoken and on-screen) that cue names, relationships, and upcoming events. When agitation or perseverative questioning is detected by upstream classifiers or conversational signals, the agent will resort to gentle redirection to de-escalate. It will also elicit autobiographical reminiscence to stimulate memory. Technical challenges to fully autonomous operation remain, but they are tractable with appropriate safeguards. Key risks include erroneous guidance, automatic speech recognition (ASR) bias against older adults’ speech, propagation of false positives from upstream classifiers, and the need to enforce strict topic boundaries for the LLM (e.g., no medical diagnosis or medication advice). Accordingly, the system will remain assistive: it will generate caregiver-facing reports, monitor safety hazards, reduce loneliness, and support memory, all under supervised autonomy with curated message templates, rate limits, and explicit human override.
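The guardrails listed above (curated templates, topic boundaries, rate limits) can be illustrated with a small selector. Everything here is hypothetical: the template texts, the `BLOCKED_TOPICS` keywords, the hourly limit, and the `TemplateResponder` class are illustrative and would need clinical review before any use.

```python
import time
from typing import Dict, List, Optional

# Hypothetical curated templates; real wording would be approved by care staff.
TEMPLATES: Dict[str, str] = {
    "orientation": "Good {part_of_day}, {name}. You are at home, and today is {weekday}.",
    "hydration": "{name}, how about a glass of water?",
    "redirect": "{name}, shall we look at the family photos together?",
}
# Assumed topic boundary: intents touching these stems are never answered.
BLOCKED_TOPICS = ("diagnos", "medication", "dose")


class TemplateResponder:
    """Select pre-approved utterances under a per-hour rate limit."""

    def __init__(self, max_per_hour: int = 4):
        self.max_per_hour = max_per_hour
        self.sent: List[float] = []  # delivery times in seconds

    def choose(self, intent: str, slots: Dict[str, str],
               now: Optional[float] = None) -> Optional[str]:
        now = time.time() if now is None else now
        if any(topic in intent.lower() for topic in BLOCKED_TOPICS):
            return None  # out of scope: leave it to a human
        template = TEMPLATES.get(intent)
        if template is None:
            return None  # never improvise text outside the curated set
        self.sent = [t for t in self.sent if now - t < 3600.0]
        if len(self.sent) >= self.max_per_hour:
            return None  # rate limit reached for the past hour
        self.sent.append(now)
        return template.format(**slots)
```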

Adapting Speech Processing Modules

As the older-to-younger demographic imbalance is likely to persist in the Western world—driven by cultural factors and advances in medicine—older adults present a significant opportunity for advanced AI applications. Existing ASR, emotion-recognition, and diarization systems should be adapted to this population.

Conclusions

Affordable, commodity sensors and AI can supplement (and, in underserved settings, partially substitute for) in-home caregiving for older adults with dementia when dependable services are unaffordable or unavailable. We integrated recent advances in audio processing with LLM-based reasoning and highlighted the limitations of ASR under age-related voice changes, motivating a pipeline that leverages non-speech acoustic events alongside transcripts. This manuscript is a proof of concept, not a definitive clinical study. We prototyped an ESP32-S3 node with an onboard MEMS microphone and note that commodity smartphones and smart TVs are viable, low-cost recorders. Short audio snippets from daily activity are streamed in near real time to a recognizer with 527 acoustic classes, and speech transcription and emotion inference produce structured cues that are passed to an LLM for situation assessment. We demonstrate end-to-end feasibility in a private home with real patients, introduce reusable tooling (open code/data schemas, structured outputs, safety policies), and provide early longitudinal evidence that clinically relevant signals can be tracked. The range of services under the same concept can be significantly expanded by the future incorporation of loudspeakers, household robots, and screens.

Appendix

The links of all toolboxes used can be found below.

General Audio Recognition

Audio Spectrogram Transformer (AST) applies a Vision Transformer to audio by converting waveforms to log-Mel spectrograms, tokenizing them into patches, and feeding them to a transformer encoder for classification. The library provides ASTFeatureExtractor to compute and normalize Mel features (AudioSet mean/std by default) and ASTForAudioClassification/ASTModel for inference or fine-tuning, with configuration parameters like patch size, time/frequency strides, and number of Mel bins exposed via ASTConfig. The docs highlight practical tips and show ready-to-run examples with the pretrained MIT/ast-finetuned-audioset-10-10-0.4593 checkpoint.
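A minimal sketch of scoring one snippet with the pretrained checkpoint named above and keeping its three highest-ranked labels. It assumes 16 kHz mono input, applies a per-class sigmoid because AudioSet is multi-label (an assumption about how scores are read, not a documented requirement), and produces a record whose layout is hypothetical. Only `top_k_record` runs without `transformers` and `torch` installed.

```python
from typing import Dict, List, Sequence


def top_k_record(probs: Sequence[float], id2label: Dict[int, str],
                 timestamp: str, k: int = 3) -> Dict[str, object]:
    """Keep the k highest-scoring AudioSet labels for one snippet.
    The record layout is a hypothetical example, not a published schema."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    return {
        "timestamp": timestamp,
        "labels": [id2label[i] for i in order],
        "probs": [round(float(probs[i]), 2) for i in order],
    }


def ast_scores(waveform, sampling_rate: int = 16000):
    """Score one 16 kHz mono snippet with the pretrained AST checkpoint.
    Requires `transformers` and `torch`; loads the model on every call for brevity."""
    import torch
    from transformers import ASTFeatureExtractor, ASTForAudioClassification

    name = "MIT/ast-finetuned-audioset-10-10-0.4593"
    extractor = ASTFeatureExtractor.from_pretrained(name)
    model = ASTForAudioClassification.from_pretrained(name).eval()
    inputs = extractor(waveform, sampling_rate=sampling_rate, return_tensors="pt")
    with torch.no_grad():
        logits = model(**inputs).logits[0]
    # AudioSet is multi-label, so independent sigmoid scores are used here.
    probs: List[float] = torch.sigmoid(logits).tolist()
    return probs, model.config.id2label
```

For example, `probs, id2label = ast_scores(snippet)` followed by `top_k_record(probs, id2label, "2025-09-10T04:32:10")` yields one event record.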

VAD

TEN is an open-source ecosystem for creating, customizing, and deploying real-time conversational AI agents with multimodal capabilities including voice, vision, and avatar interactions.
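The segmentation shown in Figure 6 (shaded vocal segments, their RMS value, and the event count) can be approximated with a plain energy detector. To be clear, this is not TEN's VAD: it is a stand-in that frames the signal, thresholds frame RMS, and merges runs separated by short gaps. The 20 ms frame, 0.05 threshold, and 100 ms gap are assumed values.

```python
import math
from typing import List, Sequence, Tuple


def energy_segments(samples: Sequence[float], sr: int, frame_ms: float = 20.0,
                    threshold: float = 0.05, min_gap_ms: float = 100.0
                    ) -> List[Tuple[float, float, float]]:
    """(start_s, end_s, rms) for runs of frames whose RMS reaches `threshold`.
    A plain energy detector standing in for a trained VAD."""
    hop = max(1, int(sr * frame_ms / 1000))
    rms_vals = []
    for start in range(0, len(samples), hop):
        frame = samples[start:start + hop]
        rms_vals.append(math.sqrt(sum(x * x for x in frame) / len(frame)))
    # Collect [first_frame, end_frame) runs of active frames.
    runs: List[List[int]] = []
    for i, r in enumerate(rms_vals):
        if r >= threshold:
            if runs and runs[-1][1] == i:
                runs[-1][1] = i + 1
            else:
                runs.append([i, i + 1])
    # Merge runs separated by short gaps (e.g., bursts within one cough).
    max_gap = int(min_gap_ms / frame_ms)
    merged: List[List[int]] = []
    for run in runs:
        if merged and run[0] - merged[-1][1] <= max_gap:
            merged[-1][1] = run[1]
        else:
            merged.append(list(run))
    out = []
    for a, b in merged:
        seg_rms = math.sqrt(sum(v * v for v in rms_vals[a:b]) / (b - a))
        out.append((a * hop / sr, min(b * hop, len(samples)) / sr, seg_rms))
    return out
```

The number of returned tuples gives the event count, and the third field gives each event's RMS.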

ASR

Whisper is a state-of-the-art model for automatic speech recognition (ASR) and speech translation. The Whisper large-v3 model was trained on 1 million hours of weakly labeled audio and 4 million hours of pseudo-labeled audio collected using Whisper large-v2, for 2.0 epochs over this mixture dataset. Across a wide variety of languages, large-v3 shows a 10% to 20% reduction in errors compared with Whisper large-v2.

ESR

superb/hubert-large-superb-er is a HuBERT-Large (hubert-large-ll60k) model fine-tuned for the SUPERB Emotion Recognition task. It takes 16 kHz mono audio and predicts one of four utterance-level emotions (angry, happy, sad, or neutral), following the standard SUPERB/IEMOCAP setup that drops minority classes to balance the dataset. The checkpoint is a port of the S3PRL implementation, providing a simple sequence-classification head on top of HuBERT’s self-supervised speech representations. It can be used directly via the Transformers audio-classification pipeline or through the underlying model and feature extractor; the model card reports accuracy on the SUPERB demo split and includes example code. In practice, it is a compact, well-documented baseline for categorical speech emotion recognition that can be adapted or folded into application-specific labels (e.g., “agitated” vs. “neutral”).
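Folding the four SUPERB emotions into the two classes used in this work can be done with a threshold on the "angry" score. This is a hypothetical rule: the label codes (expected to be `ang`, `neu`, `hap`, `sad`) should be checked against the checkpoint's `config.id2label`, and both the mapping and the 0.5 threshold are assumptions, not the mapping behind Table 4.

```python
from typing import Dict, List, Sequence, Union

Prediction = Dict[str, Union[str, float]]  # {"label": ..., "score": ...}


def fold_emotions(preds: List[Prediction], threshold: float = 0.5,
                  agitated_labels: Sequence[str] = ("ang",)) -> str:
    """Collapse four-way SUPERB ER scores into 'agitated' or 'neutral'.
    Label codes and the threshold rule are assumptions to verify against
    the checkpoint's config.id2label."""
    agitated = sum(float(p["score"]) for p in preds if p["label"] in agitated_labels)
    return "agitated" if agitated >= threshold else "neutral"


def classify_file(path: str) -> str:
    """Requires `transformers`; downloads the checkpoint on first use."""
    from transformers import pipeline

    clf = pipeline("audio-classification", model="superb/hubert-large-superb-er")
    return fold_emotions(clf(path, top_k=4))
```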

Diarization

pyannote.audio is an open-source Python toolkit for speaker diarization. Built on the PyTorch machine learning framework, it provides state-of-the-art pretrained models and pipelines that can be further fine-tuned on our data for better performance.
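To produce speaker-tagged lines like those used in the prompts, each timestamped ASR chunk (for example, from Whisper with timestamps enabled) can be assigned to the diarized speaker whose turn overlaps it most. The sketch assumes pyannote.audio 3.x and the `pyannote/speaker-diarization-3.1` pipeline; mapping pyannote's anonymous labels (e.g., `SPEAKER_00`) to [M]/[F] is a manual step not shown, and the `[A]` tagging format is an assumption.

```python
from typing import List, Optional, Tuple

Span = Tuple[float, float, str]  # (start_s, end_s, speaker-or-text)


def overlap(a0: float, a1: float, b0: float, b1: float) -> float:
    """Length of the intersection of [a0, a1] and [b0, b1], in seconds."""
    return max(0.0, min(a1, b1) - max(a0, b0))


def label_transcript(chunks: List[Span], turns: List[Span],
                     agitated: Optional[List[bool]] = None) -> List[str]:
    """Prefix each ASR chunk with the speaker whose turn overlaps it most,
    plus an [A] tag when the matching ESR flag is set."""
    lines = []
    for idx, (c0, c1, text) in enumerate(chunks):
        best, best_ov = "?", 0.0
        for t0, t1, spk in turns:
            ov = overlap(c0, c1, t0, t1)
            if ov > best_ov:
                best, best_ov = spk, ov
        tag = "[A]" if agitated and agitated[idx] else ""
        lines.append(f"[{best}]{tag} {text.strip()}")
    return lines


def diarize(path: str, hf_token: str) -> List[Span]:
    """Speaker turns from pyannote.audio 3.x (needs an accepted model licence)."""
    from pyannote.audio import Pipeline

    pipe = Pipeline.from_pretrained("pyannote/speaker-diarization-3.1",
                                    use_auth_token=hf_token)
    result = pipe(path)
    return [(seg.start, seg.end, spk)
            for seg, _, spk in result.itertracks(yield_label=True)]
```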

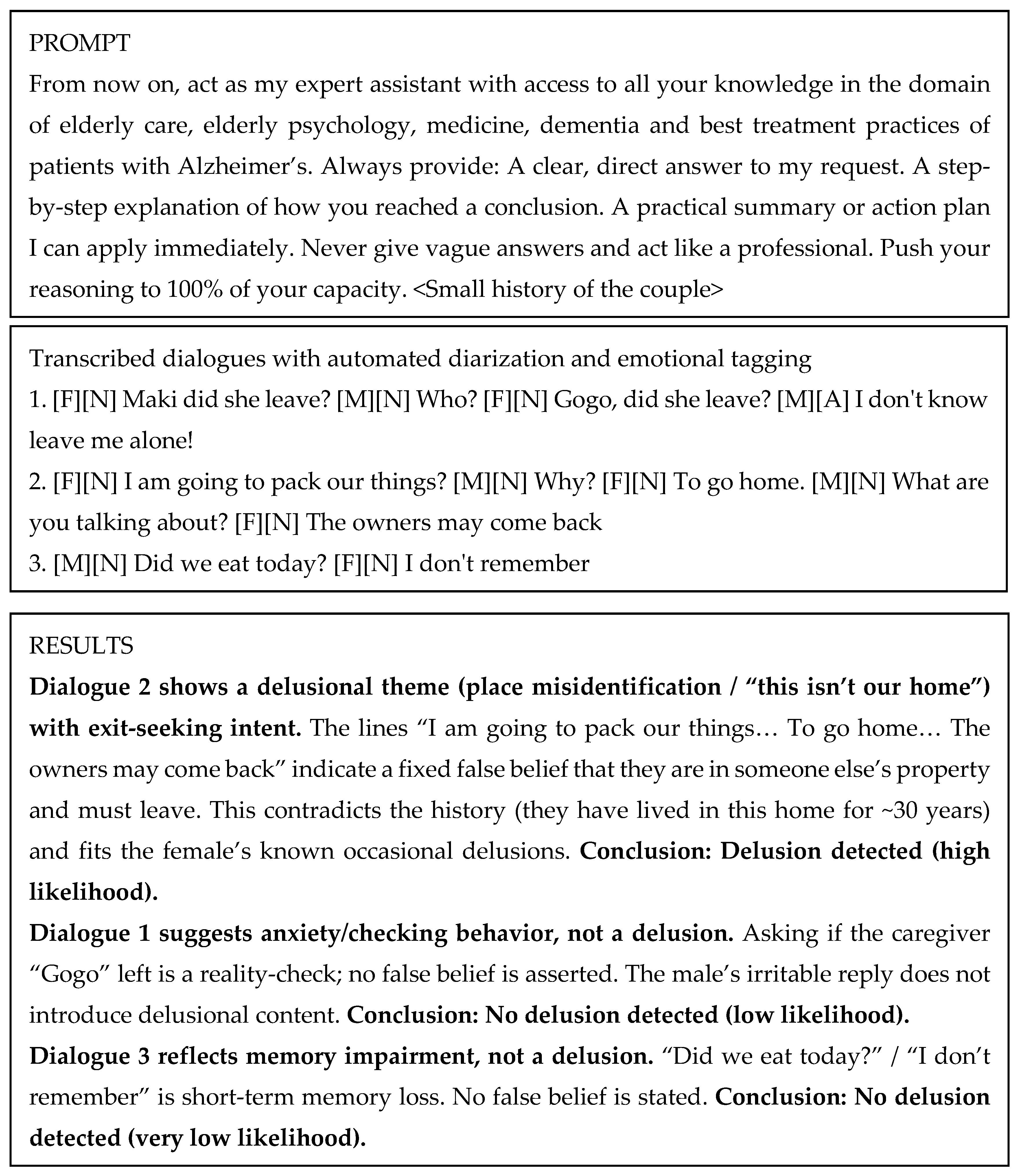

Delusion Detection

GPT-5 was used in ‘thinking’ mode. The prompt, the couple’s history, and the dialogues should suffice to reproduce the delusion-detection results.

PROMPT: From now on, function as my expert assistant with access to all your knowledge in the domain of elderly care, elderly psychology, medicine, dementia and best treatment practices of patients with Alzheimer’s. Always provide: A clear, direct answer to my request. A step-by-step explanation of how you reached a conclusion. A practical summary or action plan I can apply immediately. Never give vague answers and act like a professional. Push your reasoning to 100% of your capacity.

TASK: We have in-house recordings of speech/conversations of a couple with dementia stage 2. I will provide their discussions, and you need to answer queries based on these discussions and the small history of the couple.

SMALL HISTORY OF THE COUPLE

a) Female: 87; name variants: Vagelia / Vagelio / Vago / Vangelitsa. Male: 90+; name variants: Maki / Makis / Mak.

b) Diagnosis: both have dementia (stage 2); only the female has occasional delusions.

c) Mobility: both have mobility impairments; use canes; no capability to leave the home; they can’t access the garden due to a steep staircase; they can’t cook or make hot beverages.

d) Memory: acute short-term memory loss/forgetfulness.

e) Care context: they live in their own home; two daytime caregivers (Eve and Gogo) cover 8-hour shifts; caregivers have been working with the couple for two years; one adult daughter and one other relative visit occasionally; no other people are in the home; Their parents died fifty years ago.

f) Residency & property: they have lived in the current home for ~30 years; before that, only their youth home (>30 years ago); they do not own any other house.

Query #1: Detect delusional expressions in dialogues. [M]; Male, [F] Female, [A] Agitated emotional state. In absence of [A], emotional state Neutral

DIALOGUES

The dialogues and the results for Query #1 are provided as supplementary material to the manuscript.

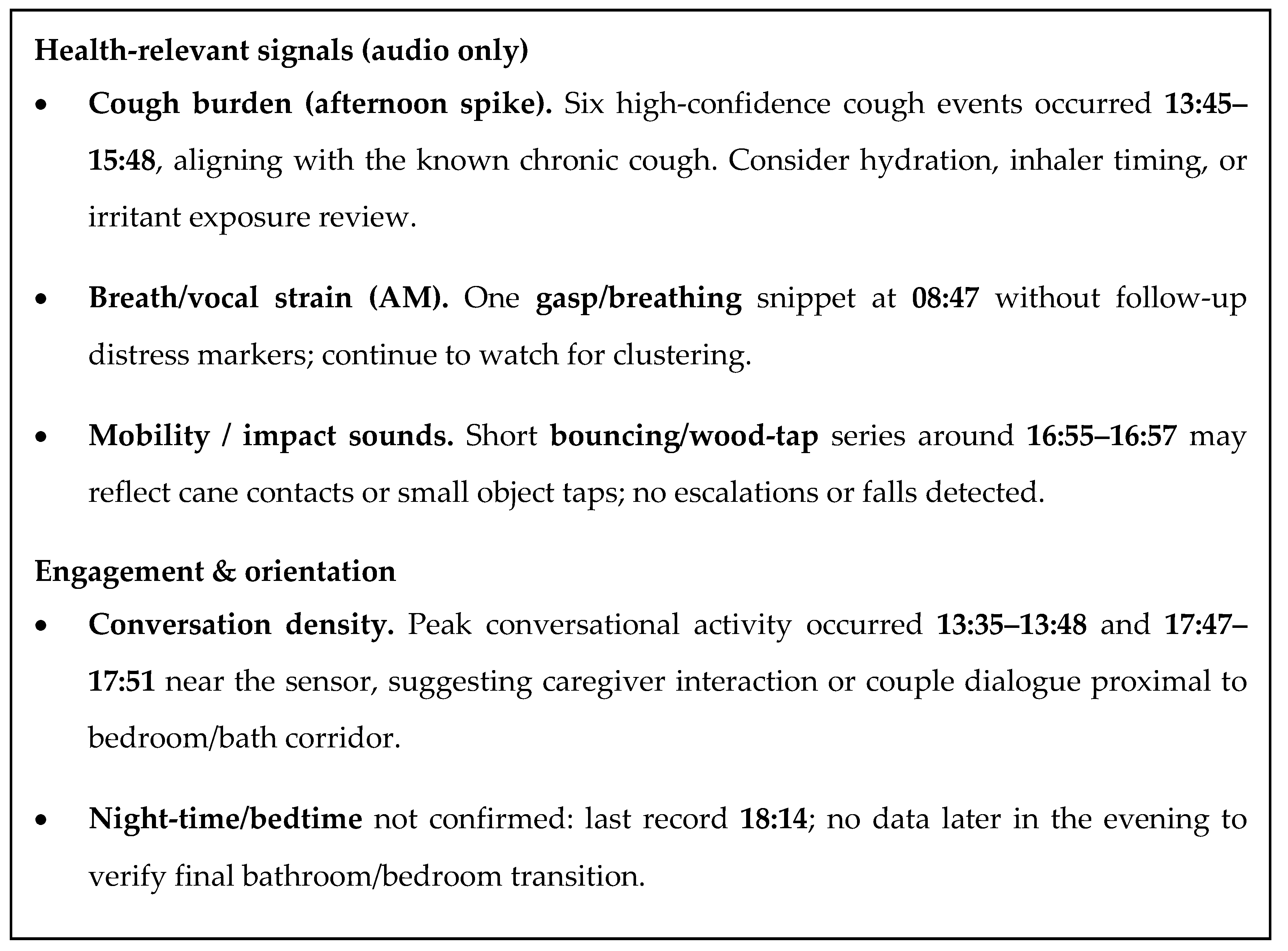

Caregivers’ daily summary

Query #2: Prepare a text-only report summarizing the main events of the previous day by parsing the attached JSON file containing tagged audio events of the previous day <day>. Each event receives the three higher rank labels and their corresponding probabilities and a timestamp. Consider all three classes as possible valid classes. Be brief, prioritize events that can be related to health, nocturnal activity and movement patterns.

ChatGPT-5 response: Here’s your concise daily audio report for Wednesday, Sep 10, 2025 (Europe/Athens)—focused on health, nocturnal activity, and movement patterns.

1) Direct answer—What mattered yesterday

Nocturnal cough episode from 04:32–05:34 with multiple high-confidence cough events (peaks up to 0.81). Likely disturbed sleep and airway irritation.

Evening respiratory activity around 21:26–22:36, including a strong cough at 22:35:48 (0.82).

Door/movement events at typical shift/transition times: 09:17, 10:25, 12:20, 18:12, 18:47, 20:45, 21:30–21:32. No clear fall pattern.

Single low-confidence impact (“thump/thud”) at 11:25:53—not suggestive of a fall given low probability and surrounding context.

Overall counts: 133 events total. Health-related: 47 (of which 18 nocturnal). Door/movement: 9. No high-confidence alarms/falls.

2) Practical action plan (apply today)

Health (coughs & sleep):

Log this as a significant nocturnal cough episode (04:32–05:34). Ask caregivers to note any triggers (dry air, reflux after late meal, recent cold).

Interventions today: ensure hydration, warm fluids (prepared by caregiver), avoid late heavy meals, keep head elevated during rest, check bedroom humidity (~40–50%).

If cough clusters repeat ≥3 nights/week or intensify, consider GP review (rule out GERD, post-nasal drip, infection).

Night routine:

Given repeated late-evening/early-morning events, consider a calmer wind-down from 20:30 (reduced TV volume, warm drink, bathroom check, brief reassurance).

Movement & safety:

Door activity matches shift changes; no fall signature detected. Keep current fall-prevention setup (clear pathways, canes nearby, night lights). Caregivers to quickly check after any future “thud/door-slam” timestamps
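Before prompting, the day's JSON can be pre-aggregated so that the LLM's counts can be cross-checked. The sketch treats all three labels of an event as valid, as Query #2 requests; the record layout (`timestamp`, `labels`, `probs`), the label subsets, and the 00:00 to 07:00 night window are assumptions.

```python
import json
from datetime import datetime
from typing import Dict, Tuple

# Assumed AudioSet label subsets for this sketch.
HEALTH = {"Cough", "Sneeze", "Throat clearing", "Wheeze", "Groan"}
MOVEMENT = {"Door", "Slam", "Thump, thud", "Walk, footsteps"}


def summarize(events_json: str, night: Tuple[int, int] = (0, 7)) -> Dict[str, int]:
    """Count health-related and movement events, treating all three labels as valid."""
    events = json.loads(events_json)
    counts = {"total": len(events), "health": 0, "health_night": 0, "movement": 0}
    for ev in events:
        labels = set(ev["labels"])  # the three top-ranked labels of this event
        hour = datetime.fromisoformat(ev["timestamp"]).hour
        if labels & HEALTH:
            counts["health"] += 1
            if night[0] <= hour < night[1]:
                counts["health_night"] += 1
        if labels & MOVEMENT:
            counts["movement"] += 1
    return counts
```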

Query #3: At night, the couple is unattended by caregivers and there is no one other than the couple in home. There are no pets, no music, no tv set on. Pay attention to transcribed audio patterns in the attached JSON file that may involve a hit, fall, followed by vocal expressions of distress, pain and/or anxiety.
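The pattern Query #3 asks for (an impact followed by vocal distress) can also be screened deterministically, which offers a cross-check on the LLM. This is a hypothetical sketch: the AudioSet label subsets, the English keywords (which would need translating into the residents' language), the 60 s window, and the 0.2 probability floor are all assumptions.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Tuple

# Assumed AudioSet label subsets and untranslated keywords.
IMPACT = {"Thump, thud", "Slam", "Bang"}
DISTRESS = {"Screaming", "Crying, sobbing", "Groan", "Whimper"}
DISTRESS_WORDS = ("help", "ouch", "it hurts")


def _has(event: Dict, labels: set, min_prob: float) -> bool:
    return any(lab in labels and p >= min_prob
               for lab, p in zip(event["labels"], event["probs"]))


def impact_then_distress(events: List[Dict], window_s: float = 60.0,
                         min_prob: float = 0.2) -> List[Tuple[str, str]]:
    """Pair each impact with the first distress cue (label or transcript
    keyword) occurring within `window_s` seconds after it."""
    ordered = sorted(events, key=lambda e: datetime.fromisoformat(e["timestamp"]))
    pairs = []
    for i, ev in enumerate(ordered):
        if not _has(ev, IMPACT, min_prob):
            continue
        t0 = datetime.fromisoformat(ev["timestamp"])
        for later in ordered[i + 1:]:
            if datetime.fromisoformat(later["timestamp"]) - t0 > timedelta(seconds=window_s):
                break
            text = later.get("text", "").lower()
            if _has(later, DISTRESS, min_prob) or any(w in text for w in DISTRESS_WORDS):
                pairs.append((ev["timestamp"], later["timestamp"]))
                break
    return pairs
```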

Figure 1.

Audio-based care of older adults with dementia through the cooperation of audio processing and AI. An AST transformer classifies audio events as verbal or non-verbal. Verbal cues are routed to an emotion recognizer, a speaker diarization module, and a speech recognizer. All audio events, transcribed to text, are fed to an LLM that detects events of interest (falls, delusions, pain), integrates this information, and reasons over it to prepare reports for the caregivers.

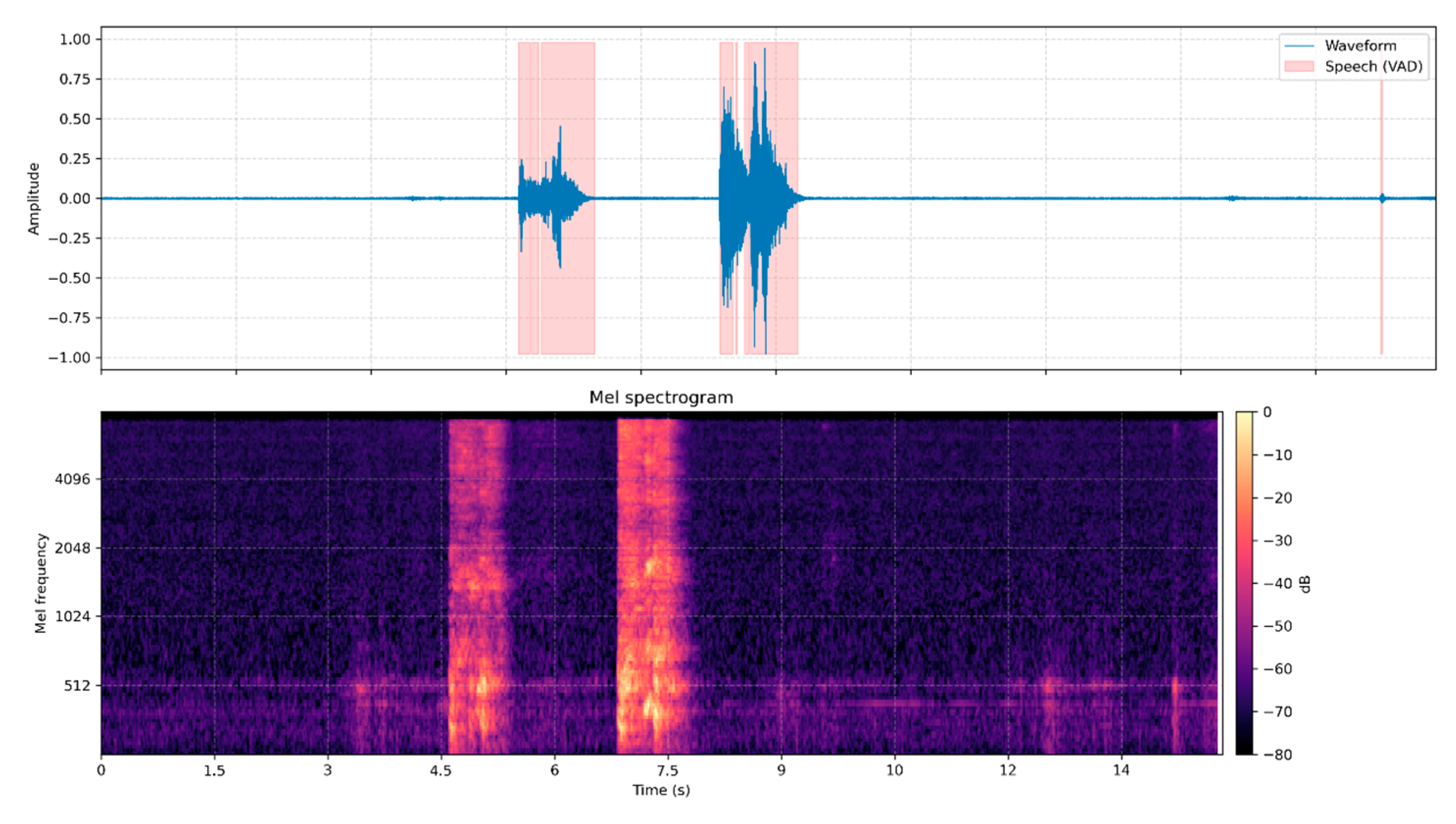

Figure 2.

(Left) An example of female elder speech (Event_id: 172751). (Right) Male elder speech (Event_id: 172738). Both speakers were 1–1.5 m from the microphone. Note the lack of frequencies above 2 kHz and the pauses.

Figure 3.

(a) ESR predicts an emotional state for each speech frame, because agitation sometimes manifests itself in bursts; (b) a calm conversation.

Figure 4.

Asking the system to report the audio incidents of ‘cough’, ‘sneeze’, and ‘throat clearing’ of an elderly couple in an in-house setting.

Figure 5.

(Top) Hourly coughing activity from 17/08/2025 to 01/09/2025; (Bottom) time-series visualization assessing the rate and trend of cough incidents. The audio category is configurable, so any of the 527 AudioSet categories recognized by the AST can be visualized.

Figure 6.

Voice activity detection on a cough recording. (Top) The vocal segments are identified and shaded, and the RMS value of the event and the number of events can be measured precisely. (Bottom) Spectrogram of a typical cough event.

Figure 7.

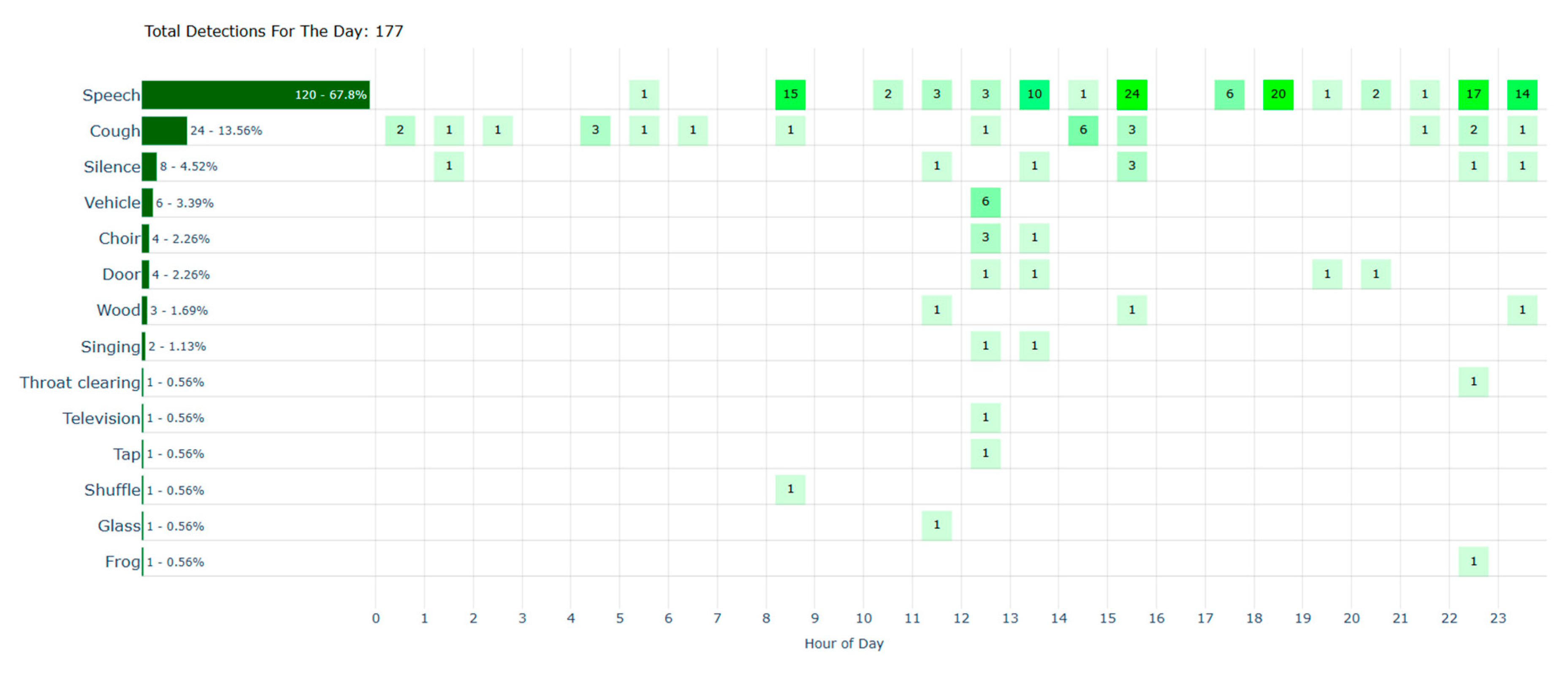

Audio event classification and hourly distribution (n=177) of an elderly couple with dementia in home. The Y-axis shows the detected classes and green boxes their relative rates, while the X-axis localizes their occurrence over time.

Figure 8.

Confusion Matrix of emotion recognition between the ‘agitated’ and ‘neutral’ class.

Figure 9.

Envisioned audio-based care for older adults with dementia using audio processing and AI. The LLM converses with the subjects through a TTS module and loudspeakers and re-evaluates the situation as it evolves over time. It cooperates with actuators (screens, robots, telephone) and answers a caregiver’s queries about the patients based on historical audiovisual data.

Table 1.

Multilabel, Multiclass Metrics.

| Metric | Value |
|---|---|
| Subset accuracy | 0.66 |
| Hamming loss | 0.07 |
| Jaccard (samples) | 0.80 |
| Jaccard (micro) | 0.73 |
| Jaccard (macro) | 0.80 |
| F1 (samples) | 0.86 |
| F1 (micro) | 0.84 |
| F1 (macro) | 0.88 |
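For reference, the main multilabel metrics in Table 1 follow standard definitions. The pure-Python sketch below computes subset accuracy, Hamming loss, and micro/macro F1 from 0/1 label matrices; to our understanding these match scikit-learn's `accuracy_score`, `hamming_loss`, and `f1_score` on multilabel input, but the function is illustrative only, and the Jaccard and sample-averaged scores are omitted for brevity.

```python
from typing import Dict, List, Sequence


def multilabel_metrics(y_true: List[Sequence[int]],
                       y_pred: List[Sequence[int]]) -> Dict[str, float]:
    """Subset accuracy, Hamming loss, and micro/macro F1 for 0/1 label rows."""
    n, m = len(y_true), len(y_true[0])
    # A row counts for subset accuracy only if every label matches.
    subset = sum(list(t) == list(p) for t, p in zip(y_true, y_pred)) / n
    # Hamming loss: fraction of individual label slots that disagree.
    hamming = sum(a != b for t, p in zip(y_true, y_pred)
                  for a, b in zip(t, p)) / (n * m)
    tp, fp, fn = [0] * m, [0] * m, [0] * m
    for t, p in zip(y_true, y_pred):
        for j in range(m):
            tp[j] += int(t[j] == 1 and p[j] == 1)
            fp[j] += int(t[j] == 0 and p[j] == 1)
            fn[j] += int(t[j] == 1 and p[j] == 0)

    def f1(tp_: int, fp_: int, fn_: int) -> float:
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    return {
        "subset_accuracy": subset,
        "hamming_loss": hamming,
        "f1_micro": f1(sum(tp), sum(fp), sum(fn)),            # pooled counts
        "f1_macro": sum(f1(tp[j], fp[j], fn[j]) for j in range(m)) / m,  # per-label mean
    }
```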

Table 2.

Single class Metrics.

| Speaker | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| A | 1.00 | 0.92 | 0.96 | 12 |
| B | 0.98 | 0.77 | 0.86 | 57 |
| C | 0.97 | 0.60 | 0.74 | 53 |
| D | 1.00 | 0.89 | 0.94 | 9 |
| E | 0.78 | 0.95 | 0.86 | 19 |
| F | 0.89 | 1.00 | 0.94 | 8 |

Table 3.

Micro and macro-averaging Metrics.

| Average type | Precision | Recall | F1-score | Support |
|---|---|---|---|---|
| Micro avg | 0.94 | 0.77 | 0.84 | 158 |
| Macro avg | 0.94 | 0.85 | 0.88 | 158 |
| Weighted avg | 0.95 | 0.77 | 0.84 | 158 |
| Samples avg | 0.95 | 0.82 | 0.86 | 158 |

Table 4.

Metrics of the emotion recognition task. There are two classes: ‘agitated’ and ‘neutral’.

| Class | Precision | Recall | F1 |
|---|---|---|---|
| Neutral | 0.91 | 0.91 | 0.91 |
| Agitated | 0.91 | 0.91 | 0.91 |
| Accuracy | | | 0.91 |
| Macro avg | 0.91 | 0.91 | 0.91 |
| Weighted avg | 0.91 | 0.91 | 0.91 |

Table 5.

ChatGPT-5 prepares a report that summarizes health-relevant points to support the caregiver, based on a daily file of recognized, time-stamped audio events, the application context, and a short history of the couple. At this stage of the analysis, speech and conversations are not transcribed.

Table 6.

Prompting ChatGPT-5 with (a) a short history of the couple, (b) the application context, and (c) the discussions with audio labels, diarization (Male, Female), and emotion tags (Neutral, Agitated). Diarization and emotion tags appear in brackets.
