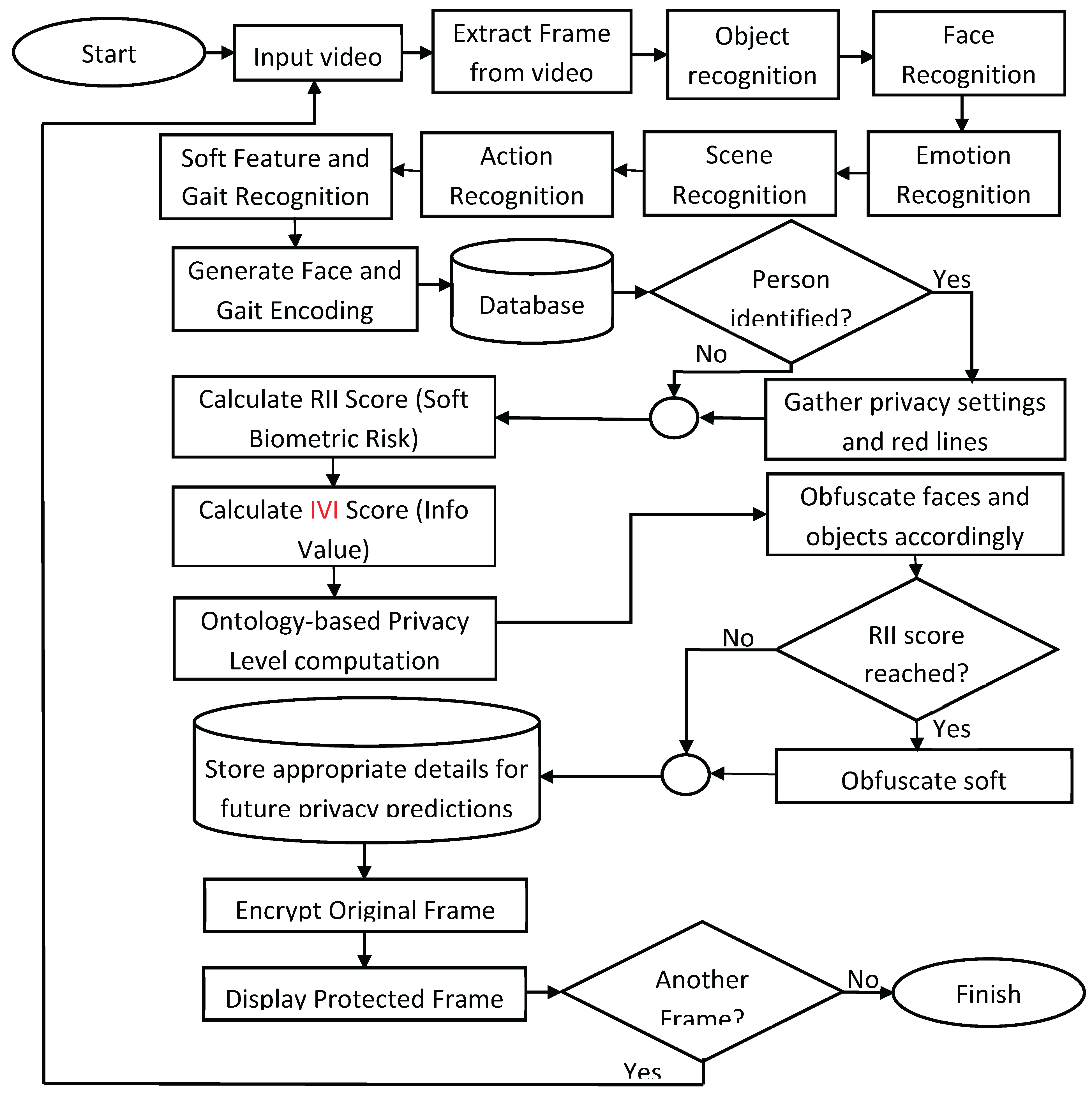

4. Results and Analysis

This section presents a comprehensive evaluation of the proposed ontology-driven, user-centric privacy protection framework. The analysis draws on both quantitative and qualitative methods to assess its effectiveness across multiple dimensions, including privacy protection strength, visual intelligibility, computational performance and user satisfaction. Each subsection examines key outcomes from experiments and user studies conducted in diverse, real-world and simulated environments involving varying user types, scene contexts, and data sensitivities.

The evaluation is designed to test how well the framework balances privacy preservation with usability, particularly under dynamic and multi-user conditions. Central to this assessment are two core metrics developed in this work: the Re-Identifiability Index (RII), which estimates the risk of identifying individuals based on soft biometric traits, and the Intelligibility Value Index (IVI), which approximates how much semantic clarity is retained after obfuscation. These metrics, alongside recognition accuracy, responsiveness and subjective user feedback, form the basis for determining the real-world applicability of the proposed framework.

4.1. Intelligibility vs. Re-Identifiability

Balancing intelligibility with privacy is a central challenge in privacy-preserving multimedia systems. The proposed framework addresses this challenge through the use of the Re-Identifiability Index (RII) and the Intelligibility Value Index (IVI), which quantify, respectively, the likelihood of a user being re-identified and the interpretability of visual content. These metrics are used to drive adaptive privacy decisions that respond to both contextual risk and usability needs. The evaluation demonstrates that while increased obfuscation improves privacy, it may compromise intelligibility, highlighting the necessity for intelligent trade-offs.

The IVI is approximated through a hybrid method that considers the number of semantic elements which are not obfuscated (e.g., objects, actions, body cues), object recognition confidence, and visual clarity post-obfuscation. While RII escalates privacy based on identifiability risk, IVI acts as a feedback mechanism to ensure that intelligibility is not unnecessarily degraded. This dynamic scoring enables fine-grained control over which elements are obfuscated, when, and why.
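To make the hybrid IVI approximation concrete, the following is a minimal sketch under stated assumptions. It blends the three signals named above: the share of semantic elements left unobfuscated, the recognition confidence of those elements, and a post-obfuscation clarity measure. The `SemanticElement` structure, the linear blend and the default weights (0.4, 0.3, 0.3) are hypothetical choices for illustration. They are not the framework's actual formula.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass
class SemanticElement:
    """A detected scene element (hypothetical structure for illustration)."""
    name: str          # e.g. "face", "laptop", "walking"
    obfuscated: bool   # True if the element was masked, blurred or pixelated
    confidence: float  # recognition confidence in [0, 1]


def approximate_ivi(
    elements: Sequence[SemanticElement],
    clarity: float,
    weights: Tuple[float, float, float] = (0.4, 0.3, 0.3),  # hypothetical weights
) -> float:
    """Blend three signals in [0, 1] into an IVI-style score: the share of
    semantic elements left unobfuscated, the mean recognition confidence of
    those visible elements, and a post-obfuscation clarity measure."""
    if not 0.0 <= clarity <= 1.0:
        raise ValueError("clarity must lie in [0, 1]")
    visible = [e for e in elements if not e.obfuscated]
    visible_fraction = len(visible) / len(elements) if elements else 0.0
    mean_confidence = (
        sum(e.confidence for e in visible) / len(visible) if visible else 0.0
    )
    w_visible, w_conf, w_clarity = weights
    return w_visible * visible_fraction + w_conf * mean_confidence + w_clarity * clarity
```

Because the weights sum to 1, the result stays in [0, 1]. Masking more elements lowers both the visible fraction and, typically, the clarity term. This mirrors the feedback role IVI plays against RII-driven escalation.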

The evaluation confirms that feature-level privacy directives (e.g., "always hide hair" or "always hide logos") are consistently enforced, regardless of context. This reflects the strength of the ontology-based reasoning engine and user-defined red line policies, which override contextual inference to ensure that personal privacy boundaries are respected, even in low-risk or high-clarity environments.

To empirically illustrate the privacy–intelligibility trade-off, Table 8 presents example scenarios with varying Re-Identifiability Index (RII) and Intelligibility Value Index (IVI) scores, system-inferred privacy levels and the corresponding obfuscation strategies. The Intelligibility Score represents the approximate proportion of semantic content preserved after obfuscation. It is computed using a weighted combination of IVI, the presence and visibility of key visual features (e.g., faces, actions, objects) and their semantic weights, outlined in Table 5. These weights reflect the contribution of each feature to scene comprehension and viewer interpretation. The final score accounts for both what is obfuscated and how it is obfuscated (e.g., fully masked vs. semi-visible), enabling a meaningful estimation of retained intelligibility under varying privacy conditions.
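The weighted combination can be sketched as follows. The sketch assumes each key feature carries a semantic weight and an obfuscation mode. The `RETENTION` factors (visible 1.0, semi-visible 0.5, masked 0.0) and the blending factor `alpha` are hypothetical placeholders. They are not the Table 5 values.

```python
from typing import Sequence, Tuple

# Hypothetical retention factor per obfuscation mode: how much of a feature's
# semantic contribution survives (not the values used in the framework).
RETENTION = {"visible": 1.0, "semi_visible": 0.5, "masked": 0.0}


def intelligibility_score(
    features: Sequence[Tuple[float, str]], ivi: float, alpha: float = 0.5
) -> float:
    """features: (semantic_weight, obfuscation_mode) per key visual feature.
    Returns alpha * IVI + (1 - alpha) * weighted share of retained content."""
    total_weight = sum(weight for weight, _ in features)
    if total_weight <= 0:
        retained = 0.0  # no weighted features: nothing to retain
    else:
        retained = sum(w * RETENTION[mode] for w, mode in features) / total_weight
    return alpha * ivi + (1 - alpha) * retained
```

For example, a masked face (weight 0.5), a visible action (0.3) and a semi-visible object (0.2) retain 40% of weighted content. With an IVI of 0.6, the score is 0.5, which reads as roughly half of the semantic content preserved.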

In cases where an unregistered individual is captured in a public setting, the framework detects soft features and calculates an RII score. If the RII exceeds the threshold, the framework automatically applies obfuscation to the individual’s face and associated soft features, even in the absence of predefined privacy preferences or user-defined red lines. This scenario illustrates the GDPR-aligned default protection strategy, which ensures that individuals without explicit consent or registration are still protected against potential re-identification risks.

In another scenario involving a registered user, a red line was set to “never show jacket”. The framework enforced selective obfuscation, masking only the user’s jacket while keeping the face and the rest of the body visible. Although the RII was moderate (0.4), this user-defined rule took precedence over contextual inference, validating the ability of the framework to enforce user autonomy through red lines.
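The two scenarios above can be summarised as a small decision rule. This is a hypothetical sketch, not the framework's ontology reasoner. The `Person` structure, the 0.5 RII threshold and the default soft-feature set are assumptions, and context-driven rules for registered users are deliberately omitted.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Set

# Hypothetical RII threshold; the calibrated value is not stated in this section.
RII_THRESHOLD = 0.5


@dataclass
class Person:
    registered: bool
    rii: float                                        # Re-Identifiability Index in [0, 1]
    red_lines: Set[str] = field(default_factory=set)  # e.g. {"jacket"}
    soft_features: FrozenSet[str] = frozenset({"face", "hair", "clothing", "gait"})


def features_to_obfuscate(person: Person, threshold: float = RII_THRESHOLD) -> Set[str]:
    """Return the features to obfuscate for one detected person."""
    # Red lines are enforced unconditionally, whatever the contextual inference says.
    selected = set(person.red_lines)
    # Default protection: unregistered people above the RII threshold have their
    # face and soft biometric features obfuscated without any configuration.
    if not person.registered and person.rii > threshold:
        selected |= person.soft_features
    # Context-driven rules for registered users (scene, privacy level) are omitted here.
    return selected
```

With these assumptions, the registered user with a “never show jacket” red line and an RII of 0.4 yields only `{"jacket"}`. An unregistered person with an RII of 0.7 yields the full soft-feature set.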

Compared to prior efforts such as Hasan, Shaffer, Crandall and Kapadia [5], who achieved only 5% object masking accuracy using cartoonisation and reported a 95% identifiability rate among users, the proposed ontology-driven framework demonstrates a significant performance advantage. Across 7,410 evaluated frames, the proposed framework achieved 77.8% privacy protection accuracy in real-time video streams. Although 22.2% of participants were still able to recognise at least one individual, this identifiability was mainly attributed to the low resolution (224×224 pixels) used for real-time processing efficiency.

Furthermore, unlike static masking techniques that apply uniform filters across content, the proposed ontology-driven framework dynamically adjusts privacy enforcement based on entity sensitivity, user-defined privacy settings and red lines, soft biometric recognition, and RII–IVI trade-off scoring. This enables fine-grained, transparent, explainable and user-centric privacy protection aligned with the principles of Contextual Integrity and with the accountability and data minimisation requirements of the GDPR.

In conclusion, balancing intelligibility and re-identifiability requires more than masking; it requires adaptive, context-aware enforcement that accounts for human perception, risk levels and ethical protection. By combining RII–IVI analytics, ontology-based privacy reasoning and user-driven preferences, the proposed framework offers a flexible, adaptive approach to privacy in real-world multimedia settings.

4.2. Privacy Protection Effectiveness

The evaluation of the proposed privacy-preserving methods combined user studies and quantitative measurements to assess their effectiveness in data protection, alongside user convenience and framework transparency. The ontology-driven privacy protection, supported by user-defined red lines, ensures that personal preferences are always respected, regardless of contextual inference or predicted privacy level (see Table 4). These red lines, such as “always hide hair” or “always hide logos”, override framework decisions and are enforced consistently across all frames. Results indicate that 77.8% of participants were unable to recognise any individual within the obfuscated videos. The remaining 22.2% identified at least one user at some point in an obfuscated video, owing to false negatives from the face recognition module. This demonstrates the strong anonymisation capability of the framework. It also highlights scope for improving frame-level recognition reliability for entities that carry re-identifiability risk, such as objects, faces, clothing and accessories. The obfuscation techniques were rated highly effective, with 85.2% of participants describing them as very effective and 14.8% as somewhat effective. Regarding overall privacy protection, 74.1% of participants strongly agreed and 22.2% agreed that the framework offered sufficient privacy protection, which further supports the robustness of its privacy enforcement mechanisms.

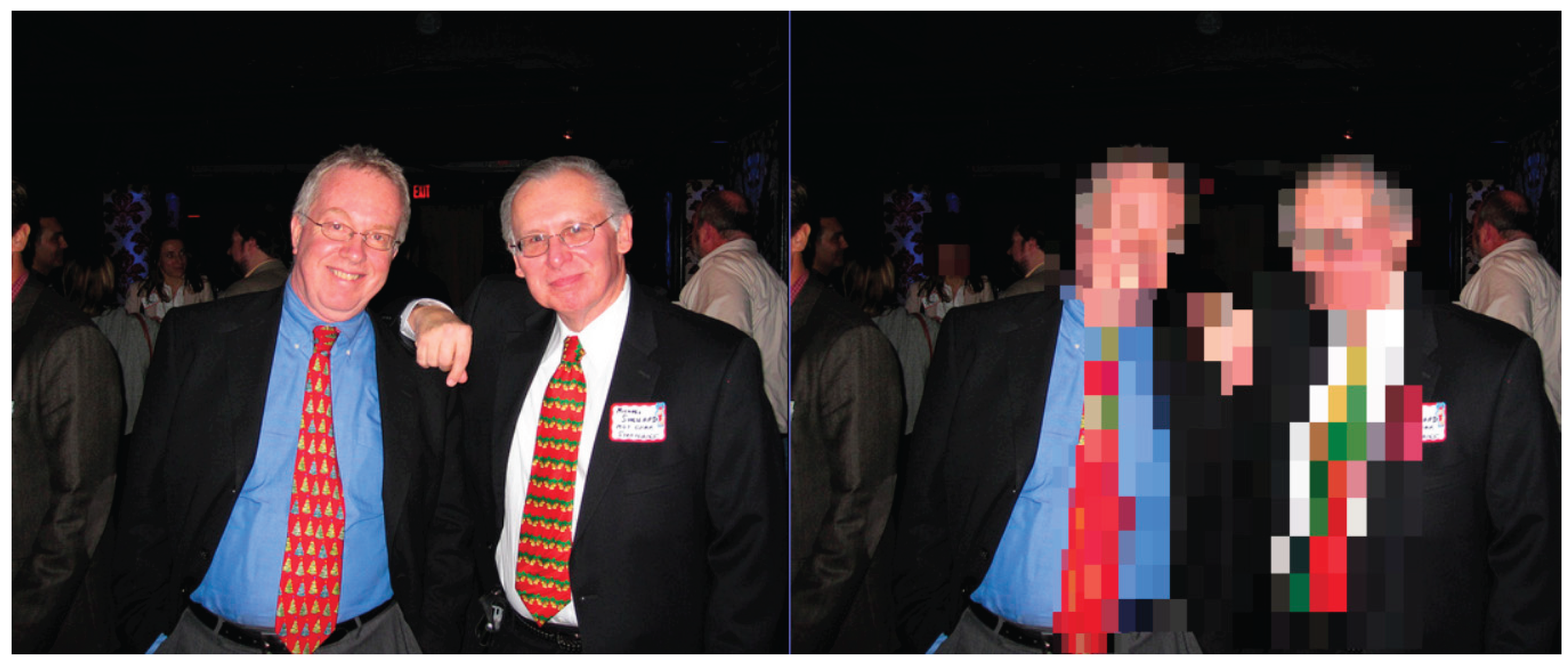

A key challenge in privacy-preserving AI systems is balancing privacy protection with usability. Users nevertheless evaluated the proposed framework positively: 71.4% of participants rated it as well balanced and 28.6% as somewhat balanced in terms of privacy protection versus intelligibility. Notably, 34% of participants reported reduced video clarity, particularly at higher privacy levels or when subjects appeared close-up, as illustrated in Figure 2. These results reinforce the need to optimise privacy-preserving techniques so that they maintain intelligibility while ensuring robust privacy protection.

User confidence in the data protection protocols was also high. When asked to rate the data protection capabilities, 53.6% of participants reported feeling “Very Confident” and 39.3% “Confident”. Overall, user feedback confirms that the privacy control mechanisms, security protocols and user-centric design of the framework are well received. To contextualise the effectiveness of the proposed method, Table 9 compares its performance with state-of-the-art privacy-preserving techniques.

Table 9. Comparison of Privacy Protection Methods.


| Method | Privacy Mechanism | Anonymisation Accuracy | Intelligibility Retained | Re-identification Risk | Real-time Capable |
|---|---|---|---|---|---|
| Hasan, Shaffer, Crandall and Kapadia [5] | Cartoonisation | 5% (object masking) | High | High | No |
| Zhou, Pun and Tong [67] | Face pixelation | 60% (face only) | Medium | Moderate | Partially |
| Proposed Framework | Ontology + RII + Obfuscation | 77.8% | Medium–High (60–85%) | Low (RII-based masking) | Yes |

In real-world evaluations, the framework demonstrated its adaptability across multi-user scenarios, including users with and without predefined privacy preferences. In one scenario, an unregistered user was detected in a video frame. Since no privacy level was assigned, the framework computed the Re-Identifiability Index (RII) using visual soft features such as hair colour, clothing and gait. As the RII exceeded the risk threshold, the framework automatically applied obfuscation to the user's face and soft biometric features, without any manual configuration. This validates the capacity of the framework to protect unregistered users in accordance with the GDPR principle of data protection by default.

In contrast, for registered users who specified red lines (e.g., “never show jacket”), the framework applied selective obfuscation only to the specified feature, in this case, the jacket, while leaving the rest of the frame unobscured. This showcases the detailed, user-respecting nature of the framework and its ability to distinguish between general privacy logic and user-enforced exceptions. This selective obfuscation results in minimal visual disruption, preserving full intelligibility of the user’s face and actions while respecting specific privacy directives.

This comparison highlights the robustness of the proposed framework, and its ability to dynamically adapt privacy protection levels based on context, user-defined constraints and re-identifiability risk. Unlike current static or face-only approaches, our framework protects multiple dimensions of identity, while maintaining reasonable intelligibility in most conditions.

To further demonstrate the robustness of the proposed framework, Table 10 provides a comparative evaluation against current state-of-the-art privacy protection frameworks. It highlights the significant improvements introduced by our ontology-driven framework in adaptability, contextual reasoning and handling of soft biometric re-identification risk.

Table 10. Comparative Evaluation of Current Data Protection Methods and the Proposed Ontology-Driven Framework.


| Feature/Aspect | Static data protection [4,5,7,67,68] | Partially dynamic protection [3] | Context-Aware Privacy Filters [8,9,10] | Proposed Ontology-Driven Privacy Framework |
|---|---|---|---|---|
| Context-Awareness | No | Partial (fixed rules) | Medium (scene elements) | Full (scene, exposure, user settings) |
| Adaptability to User Preferences | No | Limited (static settings) | No | High (dynamic + user-defined red lines) |
| Privacy Adaptation (Sensitivity) | Low | Medium | Medium (face, skin and body) | High (hierarchical, context-driven) |
| Intelligibility Preservation | Poor | Medium | High (pleasantness and intelligibility) | High (selective obfuscation) |
| Soft Biometric Risk Handling | No | No | No | Yes (gait, hair, clothing, etc.) |
| Auto Privacy Prediction | No | No | No | Yes (Random Forest prediction) |
| GDPR Compliance Alignment | No | Partial | Not explicitly addressed | Strong (adaptive + user control) |
| Real-time Performance | Limited (still images) | Moderate (basic rule engines) | Partial (MediaEval real-time filters) | High (GPU-accelerated, real-time video) |
| Overall User Satisfaction | N/A | N/A | Subjective evaluation on pleasantness only | 88% positive, 85% acceptable clarity |

In summary, the proposed framework advances current privacy-preserving methods by enabling real-time, context-aware, and user-specific privacy protection, validated through both quantitative results and comparative evaluation. Unlike earlier works such as Badii [8,9,10], which focused on static or semi-dynamic privacy filters with limited user control and no soft biometric modelling, the proposed ontology-driven framework introduces dynamic adaptation, red line enforcement, soft biometric risk handling and explainable reasoning. These enhancements address critical gaps in prior methods and demonstrate strong potential for GDPR-compliant, ethically aligned deployment in real-world AI systems.

4.3. Computational Performance

The computational efficiency of the proposed framework was evaluated in terms of processing speed, inference time and scalability across different privacy levels. Real-time performance was tested by measuring running times on CPU and GPU-accelerated setups under different privacy settings. The framework achieves real-time execution at 163ms per frame under the Low Privacy setting. Stricter privacy settings, in which multiple faces, objects, emotions and actions must be identified and obfuscated, increased the execution time to 735ms per frame. GPU-accelerated computing reduces latency at every level of scene complexity, making processing considerably more efficient.
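As a point of reference, per-frame latency can be converted into sequential throughput. This assumes frames are processed one after another without pipelining or batching. That assumption is made here and is not a detail stated in the evaluation.

$$
\text{fps} = \frac{1000\ \text{ms/s}}{t_{\text{frame}}\ [\text{ms}]}, \qquad
\frac{1000}{163} \approx 6.1\ \text{fps}\ (\text{Low Privacy}), \qquad
\frac{1000}{735} \approx 1.4\ \text{fps}\ (\text{High Privacy}).
$$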

GPU acceleration had a clear impact across the recognition modules. For example, face recognition time fell from 440ms per frame on the CPU to 92.73ms on the GPU. Action recognition time fell from 15,880ms on the CPU to 193ms on the GPU, enabling real-time recognition.
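The CPU-to-GPU gains can be expressed either as a speed-up factor or as a relative reduction in latency. The short helper below computes both from the per-frame timings reported above. It is purely arithmetic and does not reproduce the framework's benchmarking code.

```python
from typing import Tuple


def latency_gain(cpu_ms: float, gpu_ms: float) -> Tuple[float, float]:
    """Return (speed-up factor, fractional latency reduction) from CPU to GPU."""
    if cpu_ms <= 0 or gpu_ms <= 0:
        raise ValueError("timings must be positive")
    return cpu_ms / gpu_ms, (cpu_ms - gpu_ms) / cpu_ms


# Per-frame timings (ms) reported for two recognition modules.
TIMINGS = {
    "face recognition": (440.0, 92.73),
    "action recognition": (15_880.0, 193.0),
}

for module, (cpu, gpu) in TIMINGS.items():
    speedup, reduction = latency_gain(cpu, gpu)
    print(f"{module}: {speedup:.1f}x faster, {reduction:.1%} lower latency")
```

This gives roughly 4.7x (78.9% lower latency) for face recognition and 82.3x (98.8% lower latency) for action recognition. The latter is the source of the “up to 98.8%” figure quoted in the summary.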

Table 11 illustrates how the different recognition modules are optimised to reduce execution time and enable real-time privacy protection by offloading specific model components to the GPU.

By comparison, Frome, Cheung and Abdulkader [4] report processing times of 7–10 seconds per image, which severely limits their applicability to real-time video processing. The proposed framework, in contrast, achieves low-latency, frame-level privacy protection suitable for real-time applications.

In addition to recognition speed, the evaluation considered the privacy reasoning time. This is the time the ontology-driven reasoning engine needs to compute an obfuscation decision from the detected entities, user-defined red lines, scene context and calculated RII. Obfuscation execution time was also measured, covering the application of pixelation, blurring and GAN-based anonymisation.
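Pixelation was the least expensive of the obfuscation methods benchmarked in this section. For readers unfamiliar with it, the following is a minimal NumPy sketch of the standard mosaic technique: every tile inside a bounding box is replaced by its mean colour. The function name, the `(x0, y0, x1, y1)` box convention and the 16-pixel block size are illustrative assumptions, not the framework's implementation.

```python
import numpy as np


def pixelate_region(frame: np.ndarray, box, block: int = 16) -> np.ndarray:
    """Pixelate frame[y0:y1, x0:x1] in place, replacing each block x block tile
    with its mean colour. box = (x0, y0, x1, y1) in pixel coordinates."""
    if block < 1:
        raise ValueError("block must be a positive integer")
    x0, y0, x1, y1 = box
    region = frame[y0:y1, x0:x1]  # a view, so writes go back into `frame`
    height, width = region.shape[:2]
    for ty in range(0, height, block):
        for tx in range(0, width, block):
            tile = region[ty:ty + block, tx:tx + block]
            # Average over the spatial axes (keeps any channel axis) and broadcast back.
            tile[...] = tile.mean(axis=(0, 1), keepdims=True).astype(frame.dtype)
    return frame
```

Larger blocks remove more detail and therefore give stronger protection. The cost remains a single pass over the region, which helps explain why pixelation is far cheaper than blurring or GAN-based anonymisation.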

Table 12. Privacy Engine Decision and Obfuscation Latency.


| Step | Description | Average Time (ms) |
|---|---|---|
| RII computation | Compute Re-Identifiability Index from soft biometrics | 1.0–3.0 |
| Scene sensitivity classification | Determine highest scene privacy level | 0.02–0.2 |
| Highest privacy aggregation | Combine privacy levels from users, scene, emotion, action | 0.02–0.1 |
| Soft feature obfuscation | Pixelate features (logo, clothes, gait, hair, accessory) | 0.3–2.5 |
| Total decision + obfuscation latency | Privacy engine reasoning + obfuscation application | 1.4–5.8 |
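The “highest privacy aggregation” step in Table 12 can be read as taking the most restrictive level reported by any source. The following is a minimal sketch under that reading. The three-level `PrivacyLevel` scale and the source names are hypothetical.

```python
from enum import IntEnum
from typing import Mapping


class PrivacyLevel(IntEnum):
    # Hypothetical ordinal levels: a larger value means stricter protection.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def aggregate_privacy(levels: Mapping[str, PrivacyLevel]) -> PrivacyLevel:
    """Return the most restrictive level reported by any source
    (users, scene, emotion, action); LOW if no source reports one."""
    return max(levels.values(), default=PrivacyLevel.LOW)


frame_levels = {
    "user_1": PrivacyLevel.LOW,
    "scene": PrivacyLevel.MEDIUM,
    "emotion": PrivacyLevel.LOW,
    "action": PrivacyLevel.HIGH,
}
print(aggregate_privacy(frame_levels).name)  # HIGH
```

Because the step is a single maximum over a handful of values, its sub-millisecond cost in Table 12 is unsurprising.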

These results show that even under high-privacy configurations with multiple obfuscation layers applied, the privacy reasoning and enforcement process introduces a negligible delay relative to total frame processing time. The main computational overhead remains in deep learning inference for recognition modules, which is effectively mitigated by GPU acceleration.

Obfuscation method benchmarks confirmed that pixelation is the most efficient method, averaging 3.06ms per frame, while blurring required 540.73ms and GAN-based anonymisation 2,138.65ms. This illustrates the trade-off between privacy strength and computational cost, with GANs offering the strongest anonymisation at the highest processing expense.

The scalability of the framework was tested with different parallelisation strategies. Multiprocessing introduced significant delays, averaging 74,436.24ms per frame due to process management overhead. Threading reduced execution time to 23,860.48ms per frame, which remained well above acceptable real-time limits. Instead, the framework implements a module-on-demand strategy, executing each recognition module individually on the GPU only when it is required. This avoids unnecessary processing. For example, if no faces are detected, the face authentication and face obfuscation tasks are skipped, conserving resources.
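The module-on-demand strategy amounts to gating downstream tasks on what was actually detected. The sketch below illustrates that gating with a simple registry that maps entity types to tasks. The registry layout and the stand-in module functions are hypothetical; in the framework, these tasks would be GPU-backed recognition and obfuscation modules.

```python
from typing import Callable, Dict, List, Tuple

# A module takes (frame, detected instances) and returns the (possibly modified) frame.
Module = Callable[[object, list], object]


def process_frame(
    frame: object,
    detections: Dict[str, list],
    modules: Dict[str, List[Module]],
) -> Tuple[object, List[str]]:
    """Run the downstream modules registered for an entity type only when that
    entity type was actually detected in the frame."""
    ran: List[str] = []
    for entity, tasks in modules.items():
        instances = detections.get(entity, [])
        if not instances:
            continue  # e.g. no faces: skip face authentication and face obfuscation
        for task in tasks:
            frame = task(frame, instances)
            ran.append(task.__name__)
    return frame, ran


# Hypothetical stand-ins for GPU-backed modules.
def authenticate_faces(frame, faces):
    return frame


def obfuscate_faces(frame, faces):
    return frame


def recognise_actions(frame, people):
    return frame


registry = {
    "face": [authenticate_faces, obfuscate_faces],
    "person": [recognise_actions],
}
_, executed = process_frame("frame-0", {"face": [], "person": [(10, 20, 60, 120)]}, registry)
print(executed)  # ['recognise_actions']
```

Unlike multiprocessing or threading, this approach adds no scheduling overhead. It simply avoids invoking modules whose inputs are absent.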

Overall, the evaluation confirms that GPU acceleration, ontology-driven reasoning and context-aware module execution are crucial for achieving real-time privacy protection without sacrificing accuracy. The framework maintains a balance between performance, adaptability and privacy robustness while remaining scalable and efficient across a variety of contexts.

4.4. User Satisfaction and Usability

Usability was evaluated through user surveys and direct interaction tests that measured usability, privacy assurance and responsiveness. The feedback collected focused on the balance between intelligibility and privacy, the protection of user-specific preferences and the transparency of the framework.

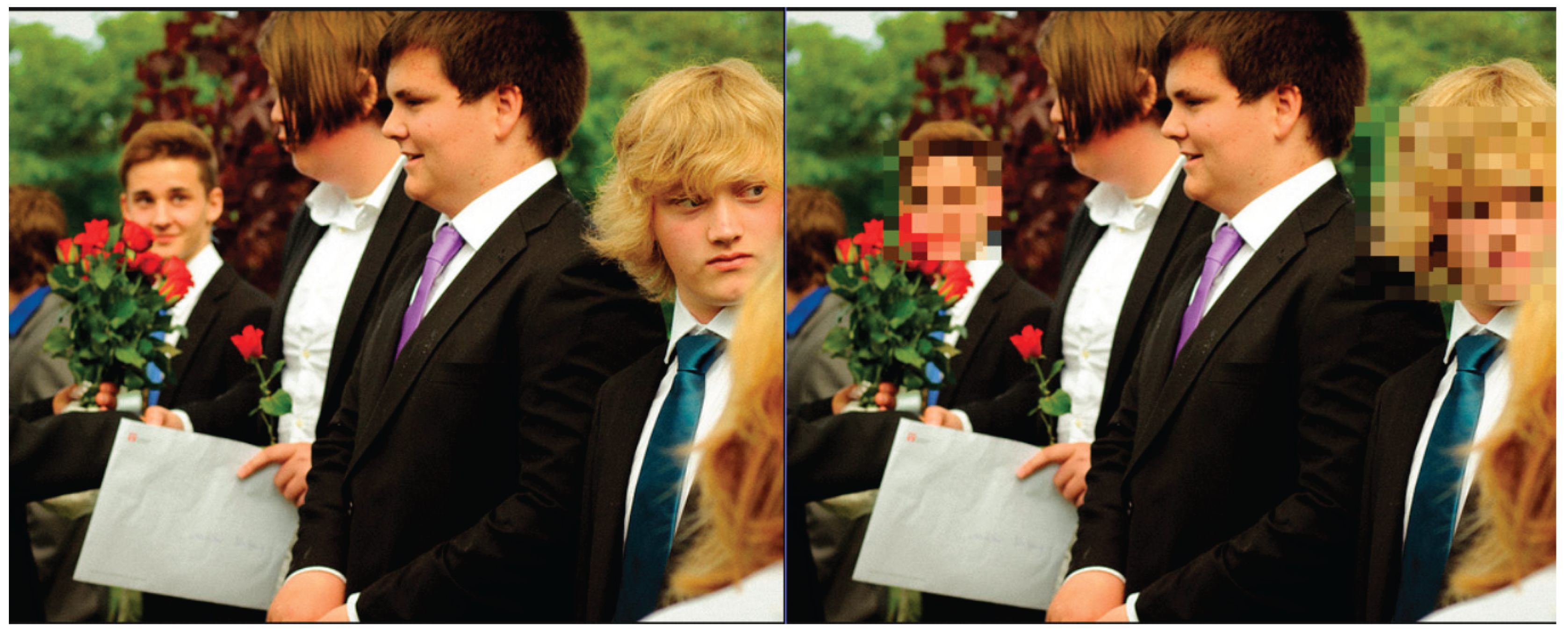

According to 85.7% of participants, adaptable privacy controls improved their ability to control their data. In addition, 88% of participants reported that the applied privacy protection was maintained while content remained intelligible. A further 85% affirmed that the framework remained responsive, even under multi-user and dynamic privacy adaptations. In terms of privacy effectiveness, 92.9% of participants expressed trust in how well the framework protects confidential data. However, 34% of participants stated that extensive obfuscation affected scene intelligibility under high privacy settings or when users were close to the camera. This confirms that while stronger privacy protection ensures confidentiality, it can also reduce video intelligibility.

This reflects the central RII–IVI trade-off: as the Re-Identifiability Index (RII) increases, prompting stronger obfuscation, the Intelligibility Value Index (IVI) tends to decrease. This is particularly evident in multi-user or high-risk settings where full masking is applied to faces and soft biometric features. Nevertheless, the framework attempts to preserve intelligibility wherever possible through targeted obfuscation, leaving content unmasked when privacy risks are low. Importantly, user-defined red lines, such as “always hide hair” or “always hide jacket” (Figure 2), were honoured across many different scenarios. This enforcement of red lines, regardless of context, improved trust and demonstrated the fidelity of the ontology-based privacy engine. Users reported satisfaction with the ability to set preferences that persist across frames and scenarios.

Figure 3 shows obfuscation techniques applied without a noticeable loss of video quality.

Figure 4, by contrast, illustrates a case in which heavy obfuscation (under High Privacy settings) leads to notable reductions in scene clarity when multiple users are present in close proximity.

Transparency was another critical factor in user trust. While 71.4% of users agreed that the framework offered a good balance between privacy and usability, the remaining 28.6% found the balance only “somewhat” acceptable, pointing to a need for clearer explainability mechanisms. Users expressed interest in greater insight into how and why particular privacy decisions are made, especially when red lines and contextual obfuscations intersect. This could be addressed through visual feedback or ontology logic tracing.

4.5. Error Analysis and Framework Limitations

While the proposed framework effectively enforces privacy protection, certain challenges and limitations were identified during the evaluation. One key limitation arises when the face and object recognisers fail to identify targets, specifically in low-light conditions, when objects are partially obscured or in low-resolution video frames. In some instances, incorrect identification resulted in incomplete obfuscation, leading to potential privacy risks. For example, face recognition failures occurred when users were partially occluded. They also occurred when image processing steps (e.g., resolution downscaling for efficiency) made faces within a frame too small for reliable recognition of PII features. A clear deficiency appeared when faces were in non-frontal orientations: the face detection module failed and, as a result, those faces were not obfuscated, as shown in Figure 5.

In addition to facial recognition issues, limitations were observed in soft biometric recognition and RII-based privacy enforcement. In certain frames, soft features such as hair colour, skin tone or clothing logos were misclassified due to lighting variations or partial occlusion. This occasionally led to inflated RII scores and unnecessary obfuscation, reducing intelligibility without increasing actual privacy protection. Conversely, in other cases, weak recognition of soft features caused underestimated RII values, resulting in insufficient protection for potentially identifiable individuals. These findings suggest that confidence-aware soft biometric recognition and threshold calibration could improve both accuracy and interpretability in RII-driven privacy decisions.
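One possible form of the confidence-aware recognition and threshold calibration suggested above is sketched below. It is a hypothetical illustration: the RII formula used by the framework is not reproduced in this section. The confidence-weighted average, the 0.5 minimum confidence, the 0.5 threshold and the 0.1 margin are all assumptions.

```python
from typing import Sequence, Tuple


def confidence_aware_rii(
    features: Sequence[Tuple[float, float]], min_confidence: float = 0.5
) -> Tuple[float, bool]:
    """features: (identifiability_weight, recognition_confidence) per soft feature.
    Returns (score, uncertain): a confidence-weighted, normalised RII-style score
    and a flag raised when any feature was recognised with low confidence."""
    total_weight = sum(w for w, _ in features)
    if total_weight <= 0:
        return 0.0, False
    # Low-confidence (possibly misclassified) features contribute less,
    # which damps the inflated scores caused by misclassification.
    score = sum(w * c for w, c in features) / total_weight
    uncertain = any(c < min_confidence for _, c in features)
    return score, uncertain


def should_obfuscate(score: float, uncertain: bool,
                     threshold: float = 0.5, margin: float = 0.1) -> bool:
    """Calibrated decision: lower the threshold when recognition was uncertain,
    so weak detections err towards protection rather than exposure."""
    effective = threshold - margin if uncertain else threshold
    return score > effective
```

The two functions target the two failure modes separately. Confidence weighting limits over-obfuscation from spurious detections, while the lowered threshold under uncertainty guards against under-protection when soft features are only weakly recognised.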

Another limitation is the misclassification of entity sensitivity, such as classifying semi-private environments as public areas, which produces incorrect overall privacy settings. This issue was identified in indoor environments, such as an office area, where distinguishing between private and public contexts proved difficult. To improve such scene classification, the framework integrates ontology rules and context interpretation that increase consistency.

Real-time performance degraded as privacy settings increased, owing to higher computational overheads. While Low Privacy settings maintain an average processing time of 163ms per frame, High Privacy settings in densely populated scenes increased processing time to 735ms per frame. This demonstrates the trade-off between privacy protection and framework performance when operating on hardware-limited devices.

Overall, while the proposed framework shows strong privacy protection capabilities, further improvements are needed in soft biometric handling, recognition accuracy, context classification and hardware optimisation. Addressing these areas will further improve the adaptability and effectiveness of the proposed framework in real-world and privacy-sensitive environments.

4.6. Summary of Key Findings

The evaluation of the proposed privacy protection framework confirms its effectiveness in balancing privacy, usability and computational efficiency. The adaptive framework combines ontology-driven reasoning, user-defined red lines and contextual sensitivity analysis to dynamically adjust privacy levels while retaining semantic clarity. Key innovations, such as the Re-Identifiability Index (RII) and user-defined red lines such as “always hide logos” or “never show jacket”, enabled fine-grained, persistent privacy control, even across changing scenes and user contexts. These features proved critical in multi-user environments and when handling unregistered users, whose privacy levels were inferred from soft biometrics and contextual risk.

Quantitative results demonstrated that privacy protection techniques, including pixelation, blurring and GAN-based anonymisation, reduce the risk of subject re-identification. In 77.8% of cases, participants were unable to recognise individuals in obfuscated videos. Privacy enforcement was rated “highly effective” by 85.2% of participants, and 92.9% reported confidence in the ability of the framework to protect private information. Although 34% of participants reported reduced clarity under High Privacy settings, 71.4% viewed the framework as achieving a “good balance” between privacy protection and scene intelligibility. These findings highlight the effectiveness of the proposed framework relative to current methods.

Compared to prior methods [4,5,7,29,67,68], which offered static privacy enforcement with limited adaptability, the proposed framework achieves a 96.3% protection success rate. It does so while dynamically adapting to user-centric requirements and context, and while supporting real-time video processing. As shown in Table 10, the proposed framework outperforms current methods across multiple privacy protection dimensions, including context awareness, soft biometric handling and intelligibility preservation, thereby confirming its practical viability. The framework also complies with GDPR principles through data minimisation, opt-out mechanisms for soft biometrics and transparent user controls. These requirements are not only legal obligations but also guided the design of the framework, ensuring efficient, real-time processing without compromising privacy.

Performance evaluations confirmed that GPU acceleration enabled real-time processing, with acceptable execution times of 163ms per frame under Low Privacy and 735ms under High Privacy settings. Recognition module optimisations delivered up to a 98.8% reduction in inference time, making the framework scalable and suitable for real-time deployment.

Overall, the findings establish that the proposed framework meets scalability and usability requirements while remaining GDPR-compliant, demonstrating its potential for deployment in a variety of AI applications, including social media, surveillance and smart environments. Limitations remain for low-resolution frames and complex scene classification. These offer promising directions for future work, including improving the recognition reliability of PII features, expanding explainability and refining the intelligibility–privacy trade-off, especially in low-resolution or high-risk scenarios.