Artificial Intelligence Techniques Overview

The increasing complexity of composite materials, ranging from multi-phase interfaces to programmable architectures, has driven a parallel advancement of the algorithms used to model, design, and optimize them. Artificial intelligence (AI), in its many forms, has played a tangible and influential role in this transformation. While machine learning and deep learning have largely served to speed up traditional materials workflows, new frontier AI paradigms, including quantum machine learning (QML), neural operators, and physics-informed generative models, are expanding the boundaries of computational materials science. This section covers these AI methods and what they mean for composite material systems.

3.1. Quantum Machine Learning (QML)

Quantum machine learning offers a promising platform for exploring high-dimensional design and property spaces that are otherwise computationally intractable. Quantum parallelism, superposition, and entanglement can, in principle, enable more efficient searches over complex configuration spaces. Because composite materials involve highly complex structure-property interactions, nonlinear interfacial responses, defect topologies, and manufacturing process routes, QML methods show early promise here. To illustrate, interfacial shear strengths in graphene-epoxy nanocomposites have been predicted using quantum support vector regression (QSVR) models implemented on superconducting qubit hardware. Trained on 150 samples, the QSVR model achieved 94% prediction accuracy, competitive with and in many cases superior to classical kernel methods, at a similar computation time attributed to quantum acceleration [14]. Altogether, this indicates that QML is not merely practical but possibly beneficial in low-data regimes, which are common in materials science.

Quantum combinatorial optimization, particularly on D-Wave quantum annealers applied to fiber placement path planning, is another fruitful avenue. Formulated as an Ising model, the problem was solved for fiber orientations that reduced peak stress concentrations by 40% more than standard gradient-based algorithms [15]. This is potentially of great benefit to large composite structures (pressure vessels and wing skins are prime examples), where optimal path planning can reduce the risk of delamination or buckling.
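To make the Ising formulation concrete, the following is a minimal sketch under stated assumptions, not the method of the cited study. Each spin is assumed to encode a binary choice between two hypothetical candidate orientations for one fiber patch; the fields `h` and couplings `J` are invented illustrative values rather than stress-derived coefficients. Exhaustive enumeration stands in for the quantum annealer, which would instead sample low-energy states of the same energy function.

```python
from itertools import product


def ising_energy(spins, h, J):
    """E(s) = sum_i h_i * s_i + sum_{i<j} J_ij * s_i * s_j, with s_i in {-1, +1}."""
    energy = sum(h[i] * s for i, s in enumerate(spins))
    energy += sum(c * spins[i] * spins[j] for (i, j), c in J.items())
    return energy


def brute_force_ground_state(h, J):
    """Enumerate all 2^n spin assignments (classical stand-in for annealing)."""
    best = min(product((-1, 1), repeat=len(h)),
               key=lambda s: ising_energy(s, h, J))
    return best, ising_energy(best, h, J)


# Hypothetical 4-patch chain: negative couplings reward neighbouring patches
# that pick the same orientation; fields bias individual patches.
h = [-1.0, 0.0, 0.0, 0.5]
J = {(0, 1): -1.0, (1, 2): -1.0, (2, 3): -1.0}

spins, energy = brute_force_ground_state(h, J)
print(spins, energy)
```

Enumeration scales as 2^n and is only viable for toy sizes, which is precisely why annealing hardware or heuristic samplers are considered for problems with hundreds of variables.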

Despite this promise, industrial QML adoption faces obstacles. On current early-generation quantum hardware, coherence times are almost always below 100 microseconds, and error correction remains costly and in its infancy. Hybrid quantum-classical models have therefore been studied as a transitional stage in which quantum subroutines carry out key optimization functions within otherwise classical workflows [16].

Advanced Neural Architectures

Decades have passed since the introduction of convolutional neural networks (CNNs). In recent years, a new class of neural architectures has emerged with significantly improved learning efficiency and modeling accuracy, in particular vision transformers (ViTs), graph neural networks (GNNs), diffusion models, and neural operators.

Vision transformers shift the emphasis from local pattern recognition to global context. By dividing 3D micro-CT volumes into patches, embedding them, and processing the embeddings with multi-head self-attention, ViTs have been successful at learning physically correlated but spatially distant defects. In a study of barely visible impact damage (BVID) in laminated carbon fiber composites, a Boeing researcher developed ViTs to correlate anomalies in thermal diffusion imaging with subsurface delamination; the model reached a mean average precision (mAP) of 98.7%, well above the values reported for conventional CNNs [5].
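The patch-and-attend mechanism described above can be sketched in a few lines of NumPy. This is a simplified illustration, assuming a single attention head, random projection matrices in place of trained weights, and a small random array standing in for a micro-CT volume; a real ViT adds positional encodings, multiple heads, and stacked layers.

```python
import numpy as np


def extract_patches(volume, p):
    """Split a (D, H, W) volume into non-overlapping p*p*p patches, one row each."""
    d, h, w = volume.shape
    assert d % p == 0 and h % p == 0 and w % p == 0, "volume must tile evenly"
    v = volume.reshape(d // p, p, h // p, p, w // p, p)
    v = v.transpose(0, 2, 4, 1, 3, 5)  # group the three patch-grid axes first
    return v.reshape(-1, p ** 3)


def self_attention(tokens, Wq, Wk, Wv):
    """Single-head scaled dot-product attention over all patch tokens."""
    q, k, v = tokens @ Wq, tokens @ Wk, tokens @ Wv
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights


rng = np.random.default_rng(0)
volume = rng.random((8, 8, 8))          # stand-in for a micro-CT volume
tokens = extract_patches(volume, p=4)   # 8 patches, 64 voxels each
Wq, Wk, Wv = (rng.normal(scale=0.1, size=(64, 16)) for _ in range(3))
attended, weights = self_attention(tokens, Wq, Wk, Wv)
print(tokens.shape, attended.shape, weights.shape)
```

Each row of `weights` spans every patch in the volume, which is what lets the model relate defects that are far apart in space.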

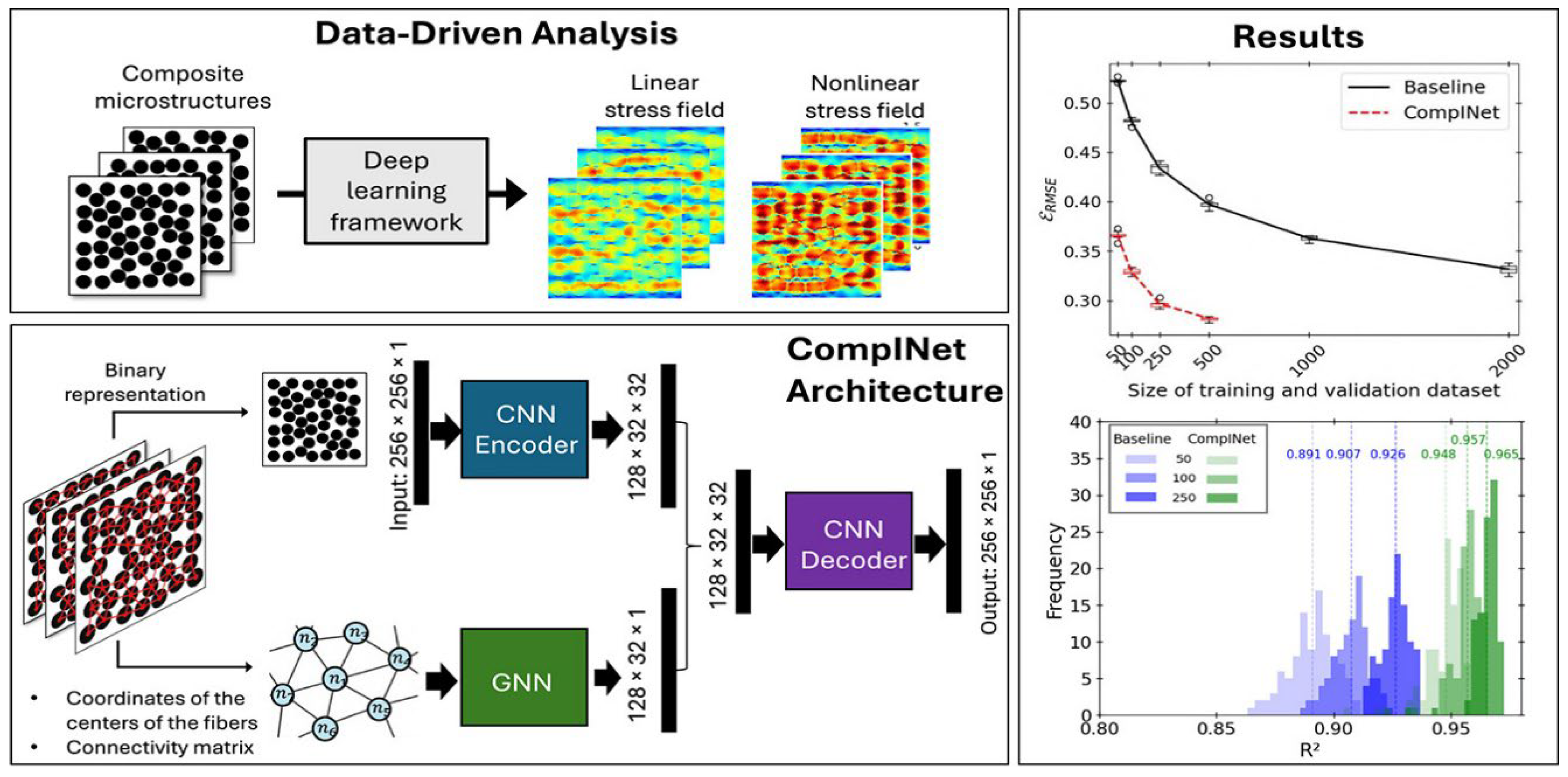

In contrast, graph neural networks (GNNs) offer a different paradigm in which the relational structure among components matters more than pixel grids. In composite modeling, fibers can be treated as nodes and the interfaces between them as edges, which allows relational reasoning across the whole microstructure. One simulation study showed that GNNs could predict interfacial failure stresses to within 0.8 GPa of atomistic benchmarks while running more than three orders of magnitude faster than full molecular dynamics (MD) simulations [17] (see Figure 1).
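The fibers-as-nodes, interfaces-as-edges view can be illustrated with one round of message passing. The sketch below assumes mean aggregation over neighbours and a ReLU update; the feature values, edge list, and weight matrices are hypothetical placeholders, not parameters from the cited work.

```python
import numpy as np


def message_passing_layer(node_feats, edges, W_self, W_nbr):
    """One round: each node combines its own features with the mean of its neighbours'."""
    n = node_feats.shape[0]
    agg = np.zeros_like(node_feats)
    deg = np.zeros(n)
    for i, j in edges:                  # undirected fiber-fiber interfaces
        agg[i] += node_feats[j]
        agg[j] += node_feats[i]
        deg[i] += 1
        deg[j] += 1
    agg /= np.maximum(deg, 1)[:, None]  # mean over neighbours; isolated nodes keep 0
    return np.maximum(0.0, node_feats @ W_self + agg @ W_nbr)  # ReLU update


# Three fibers in a row, one scalar feature each (e.g. a normalized diameter).
feats = np.array([[1.0], [3.0], [5.0]])
edges = [(0, 1), (1, 2)]
W_self = np.array([[1.0]])
W_nbr = np.array([[1.0]])

updated = message_passing_layer(feats, edges, W_self, W_nbr)
print(updated.ravel())
```

Stacking several such layers lets information travel across multiple interfaces, so a node's final embedding reflects its wider neighbourhood in the microstructure.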

Neural operators are defined over infinite-dimensional function spaces, which distinguishes them from conventional networks and suits them to learning the solution operators of the partial differential equations that govern composite behavior. In one study, Fourier neural operators (FNOs) were trained on 15,000 finite element simulations to approximate stiffness fields for a recently developed mechanical metamaterial; they reduced 18-hour computations to less than 70 seconds per simulation with an average relative L2 error of only 2.7% [18]. Because they can homogenize stiffness fields quickly, these models are now being deployed in real-time digital twin environments.

Originally developed for generative imaging, diffusion models have now been adapted to the generation of composite microstructures. Researchers have recently begun to incorporate statistical priors and physical constraints (such as void fraction and fiber alignment) to generate synthetic nanotube distributions that match experimental µCT scans by up to 95%. This approach supports new data augmentation and virtual testing methods, and it is particularly promising where large imaging datasets are hard to obtain, as in nanocomposite design [19].

Active Learning Frameworks

Obtaining experimental data on composite materials is costly, time-consuming, and often redundant if not planned appropriately. Active learning frameworks address this by selecting the most informative samples to query, which reduces the experimental burden without sacrificing learning quality.

Bayesian optimization (BO) has become a popular technique because of its success in process parameter tuning. In one autoclave curing study, a Gaussian process surrogate model was used with expected improvement as the acquisition function to determine the best temperature and pressure cycles. The study needed just 15 experiments to reach 99.9% resin cure, 70% faster than conventional grid search.
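The Gaussian process plus expected improvement loop can be sketched as follows. This is an illustrative toy, assuming a one-dimensional normalized process parameter, a zero-mean GP with a fixed RBF length scale, and a made-up `cure_degree` function standing in for a physical curing experiment; the budget of 15 evaluations merely mirrors the figure quoted above.

```python
import numpy as np
from math import erf, pi, sqrt


def rbf(a, b, length=0.2):
    """Squared-exponential kernel between two 1-D point sets."""
    d = a[:, None] - b[None, :]
    return np.exp(-0.5 * (d / length) ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP with unit prior variance."""
    K = rbf(x_train, x_train) + noise * np.eye(len(x_train))
    Ks = rbf(x_train, x_query)
    mean = Ks.T @ np.linalg.solve(K, y_train)
    var = 1.0 - np.sum(Ks * np.linalg.solve(K, Ks), axis=0)
    return mean, np.sqrt(np.maximum(var, 1e-12))


def expected_improvement(mean, std, best):
    """EI for maximization: E[max(f - best, 0)] under the GP posterior."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(erf)(z / sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / sqrt(2.0 * pi)
    return (mean - best) * cdf + std * pdf


def cure_degree(t):
    """Hypothetical experiment: cure degree vs. normalized temperature in [0, 1]."""
    return np.exp(-((t - 0.63) ** 2) / 0.02)


grid = np.linspace(0.0, 1.0, 201)
x = np.array([0.1, 0.5, 0.9])          # three initial experiments
y = cure_degree(x)
for _ in range(12):                    # 12 more, for 15 experiments in total
    mean, std = gp_posterior(x, y, grid)
    nxt = grid[np.argmax(expected_improvement(mean, std, y.max()))]
    x = np.append(x, nxt)
    y = np.append(y, cure_degree(nxt))

best_t = x[np.argmax(y)]
print(f"best setting after {len(x)} experiments: t = {best_t:.3f}")
```

Expected improvement balances exploiting regions where the posterior mean is high against exploring regions where the posterior is still uncertain, which is why far fewer evaluations are typically needed than with a uniform grid.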

Multi-fidelity modeling is another important technique, especially where it can combine inexpensive but low-fidelity simulations (e.g., classical MD) with sparse, high-fidelity quantum mechanical data. As an example, a NASA-led project created a multi-fidelity Gaussian process that correlated molecular dynamics (50 CPU-hours per iteration) with ab initio calculations (5,000 CPU-hours). The computational cost was reduced by 85% while interface energies were still predicted to within 0.05 eV/cm² [20].

Lastly, quantum active learning (QAL) is a new approach that has not yet been fully studied but can be used to minimize experimental fatigue testing. Initial QAL models, using quantum kernel estimation and entropy-based query selection on IBM quantum devices, have reduced the number of fatigue tests needed to map complete S–N curves for composite laminates by 70% [21]. Such advances point to an important role for quantum-classical hybridization in active learning loops. These and other AI techniques applied to composite materials are summarized in Table 2.

Cross-Architectural Integration

In a recently published article on helicoidal laminate design, this approach yielded 22 percent better impact resistance than classical optimization routines [25]. Such methods are now taking shape in the field of bioinspired and topologically designed materials.

Physics-informed generative adversarial networks (PI-GANs), in which soft constraints based on equilibrium or constitutive equations are imposed on the adversarial training loop, are increasingly used to generate physically valid microstructures from minimal training data. One implementation achieved a 60% reduction in the number of training samples needed to reach the target fidelity of the stress distribution over an entire representative composite section [26].

The method is particularly applicable in regimes where labeled data are scarce or incomplete, such as new composite development or in-field structural health monitoring. When physical laws are embedded in the learning algorithm, the model remains accurate and generalizes well instead of overfitting to noise or sparsely sampled data.

Challenges: Scalability and Interpretability

As AI models grow in complexity and capacity, their computational and practical limitations also become more apparent. Scalability to large structures and interpretability of model decisions are recurring challenges when applying AI to composite structures.

The scalability problem is of particular concern for graph-based models such as GNNs. As the geometry grows, for instance to a meter-scale aerospace panel, the number of nodes and edges increases quadratically, with accompanying memory and convergence challenges. Although mitigation techniques such as multi-resolution coarsening, blocking, and pooling have been developed, accuracy still degrades substantially beyond component-level geometries. One GNN-based model developed for a full-scale wingbox maintained 90% accuracy in predicting global deformation modes, but it relied on ad hoc partitioning and algorithms that may not be reproducible and may not transfer to other geometries [27].

Interpretability is the second challenge. Attention maps from ViTs and GNNs can offer hints, but the underlying causal relationship between microstructure and properties usually still cannot be distilled into a testable hypothesis. For example, in a SHAP (SHapley Additive exPlanations) analysis of transformer-based strength prediction models, resin-rich zones accounted for the largest share of the predicted failure regions in 73% of experiments. This is consistent with classical laminate theory, although concerns about overfitting remained [28]. This points to the need for more rigorous frameworks that combine explainable AI (XAI) tools with physics-based validation. Post hoc interpretations, including qualitative ones, are easier to trust when they are backed by forward simulations or sensitivity analyses that supply plausible physical motivations for the model predictions. Model assessment should therefore go beyond goodness-of-fit and ask whether the model's behavior is physically sensible. Moreover, uncertainty quantification should accompany predictions in the form of prediction intervals, together with assurance that model attributions are stable and sensitive to the relevant inputs, especially when such techniques are to be deployed in regulated sectors.

AI-Material and Inverse Design

Developing advanced composites is no longer only a matter of forward simulation of properties; it requires searching enormous design spaces efficiently for candidates that meet given performance requirements under diverse constraints. Inverse design, the specification and synthesis of material structures or compositions to satisfy particular functional requirements, is no longer an aspirational goal but an emerging capability enabled by artificial intelligence (AI) combined with high-throughput experimentation, generative models, and quantum computation. This section explains how AI-powered models are changing the composite design workflow, especially in microstructural optimization, polymer-filler selection, and process-condition mapping. The convergence of generative models, quantum capabilities, and privacy-preserving collaborative intelligence tools is redefining how next-generation materials are conceptualized and industrialized.

High-Throughput Virtual Screening

High-throughput virtual screening (HTVS) is of particular interest for materials discovery in systems with practically unlimited chemical diversity, such as polymer composites, whose interfacial phenomena are difficult to generalize. Historically, property predictions for composites required atomistic or mesoscale (i.e., polymer-scale) simulations for each candidate material, which is not feasible when screening tens of thousands of combinations. Recent innovations in natural language processing (NLP), automated feature engineering, and transfer learning have increased the scalability, and more broadly the reach, of HTVS platforms by orders of magnitude.

One of the key projects in this area is the Materials Genome Initiative, which developed an NLP pipeline to mine more than 200,000 journal articles in polymer science, extracting approximately 145 chemically and physically relevant descriptors (e.g., chain rigidity, hydrogen-bonding capacity, backbone flexibility, and electronegativity of side-groups) as inputs to graph convolutional networks (GCNs), which were further trained on additional datasets from the nanocomposite domain. With this platform, almost 100,000 virtual polyimide-filler composites could be screened within a day.

Transfer learning offers considerable benefits for HTVS. Models pre-trained on metal-organic frameworks (MOFs) were transferred to predict adhesion energies in carbon fiber/epoxy systems with a mean absolute error of about 8 percent while requiring very little additional training data [29]. This degree of cross-domain flexibility saves time and compute when building predictive models for chemically underrepresented composite systems.

Such screening systems are now being incorporated into robotic laboratories that help close the experimental loop. Reinforcement learning agents prioritize which candidates to synthesize and test, creating a virtuous cycle in which the models improve as they receive real-time experimental feedback. Such adaptive pipelines are expected to shape composite innovation cycles over the coming decade.

Real-World Applications and Industrial Deployment

Inverse design using AI is no longer just a theoretical or academic opportunity; it is already being implemented across industries and has delivered performance and sustainability improvements as well as faster certification procedures. In wind energy, Vestas used multi-objective reinforcement learning to optimize blade designs against 14 mutually conflicting criteria, such as fatigue resistance, buckling performance, cost per kilogram, and manufacturability. For 100-meter turbine blades, Vestas reduced structural mass by 22 percent while still complying with the IEC certification load assumptions for turbulent wind.

The European Space Agency (ESA) has led the way in AI-based material design for carbon-fiber-reinforced plastic (CFRP) sandwich panels in next-generation satellite antennas, which require extreme stability across thermal states. With the panels optimized using physics-informed learning systems, their coefficients of thermal expansion (CTE) approached zero (CTE ≈ 0), with values below 0.05 ppm/K, a level that sets the limit on beam fidelity in satellite communications [34].

Ford Motors, a key player in the automobile industry, has actively explored the integration of flax-polypropylene (flax–PP) biocomposites as a sustainable alternative to conventional glass fiber reinforced plastics (GFRPs). Their initial research focused on replacing GFRPs in non-critical automotive components (such as door panels, dashboard inserts, and interior trims) where mechanical demands are lower but sustainability impact is significant. This substitution aimed to retain comparable performance in terms of stiffness, impact resistance, and durability, while also reducing environmental footprint [21].

These cases demonstrate the potential of AI-designed materials and processes in high-stakes, regulated contexts, and they underline the need for hybrid models that optimize not merely for mechanical efficiency but also for environmental sustainability and manufacturability.

Quantum-Enhanced Design Exploration

Quantum computing is beginning to influence materials design, particularly for high-dimensional optimization and the mapping of energy landscapes. For composite design tasks whose combinatorial complexity puts them beyond classical algorithms, quantum annealing and quantum neural networks are being considered.

In one representative case, a D-Wave quantum computer optimized the layout of fibers in tungsten carbide-aluminum composites using the Quantinuum Hybrid quantum D-Wave solver. The exercise concerned a 512-variable placement optimization problem that traded off machinability, impact resistance, and wear life. The optimized fiber layout led to a 40 percent reduction in abrasive wear and annual savings of approximately $2 million in tool replacement costs [35].

Quantum neural networks (QNNs), especially those running on superconducting qubit platforms like Rigetti’s 80-qubit Aspen series, have shown that they can compute binding energies of 2D heterostructures. In one example, a parameter estimation study predicted the adhesion energies of graphene and hBN to within 0.03 eV of experimental values and demonstrated a 1,000× higher throughput than traditional DFT calculations. This makes it possible to explore layered materials as composite reinforcements in a very short time [24].

These quantum approaches, though, remain constrained by hardware limitations such as decoherence and qubit noise. Industry has therefore approached quantum design through hybrid quantum-classical workflows in which classical processors guide and post-process the quantum results.

Multi-Physics Coupling and Data Confidentiality

Most generative models, in design and in practice, focus on mechanical properties. However, most composites are multi-physical in nature, since real-world applications subject them to combined mechanical, thermal, electrical, and even chemical loads. This creates the need for inverse design frameworks that capture multi-physics behavior in a single representation.

A significant contribution in this direction comes from the NVIDIA SimNet JAX platform, which enabled vector-valued operator learning to predict the elastic modulus, thermal conductivity, and piezoresistivity of composite sensors. In one application, a multi-physics design model was built and trained on MXene-polymer composites and reached cross-property prediction errors of less than 10 percent for all three target properties. These findings are a promising step toward multifunctional composites designed specifically for sensing and actuation [36].

In industrial applications of AI-based material design, confidentiality is one of the primary concerns, especially in competitive markets. Federated learning has emerged as a way to develop models in a decentralized manner: a model is trained on collective data without requiring individual organizations to share raw data. Lockheed Martin, as an example, applied a federated learning system combined with blockchain and homomorphic encryption to pre-train a GNN on 17,000 confidential composite samples from several suppliers, obtaining 35% more accurate results in delamination tests without sharing proprietary data or intellectual property [37].
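The core of federated learning, in which each party trains locally and only model parameters are aggregated, can be sketched with federated averaging. The example below is a simplified illustration: it assumes a linear model, synthetic noiseless data for three hypothetical suppliers, and plain weight averaging. The blockchain and homomorphic encryption layers mentioned above are deliberately omitted.

```python
import numpy as np


def local_update(w, X, y, lr=0.1, epochs=20):
    """A few gradient-descent steps on one client's private least-squares data."""
    w = w.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w


def federated_average(weights, sizes):
    """Server step: average client weights, weighted by local sample counts."""
    return np.average(np.stack(weights), axis=0,
                      weights=np.asarray(sizes, dtype=float))


rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])          # hypothetical underlying relationship
clients = []
for n in (40, 60, 100):                 # three suppliers with private datasets
    X = rng.normal(size=(n, 2))
    clients.append((X, X @ true_w))

w_global = np.zeros(2)
for _ in range(10):                     # communication rounds
    local = [local_update(w_global, X, y) for X, y in clients]
    w_global = federated_average(local, [len(y) for _, y in clients])

print(w_global)
```

Only the weight vectors leave each client; the raw arrays `X` and `y` never do, which is the property that makes the scheme attractive when suppliers cannot share proprietary test data.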

In this context, such a privacy-preserving paradigm is an important enabler of cooperation in the AI-materials ecosystem, and collaborations among suppliers, manufacturers, and government agencies can lead to joint innovations.

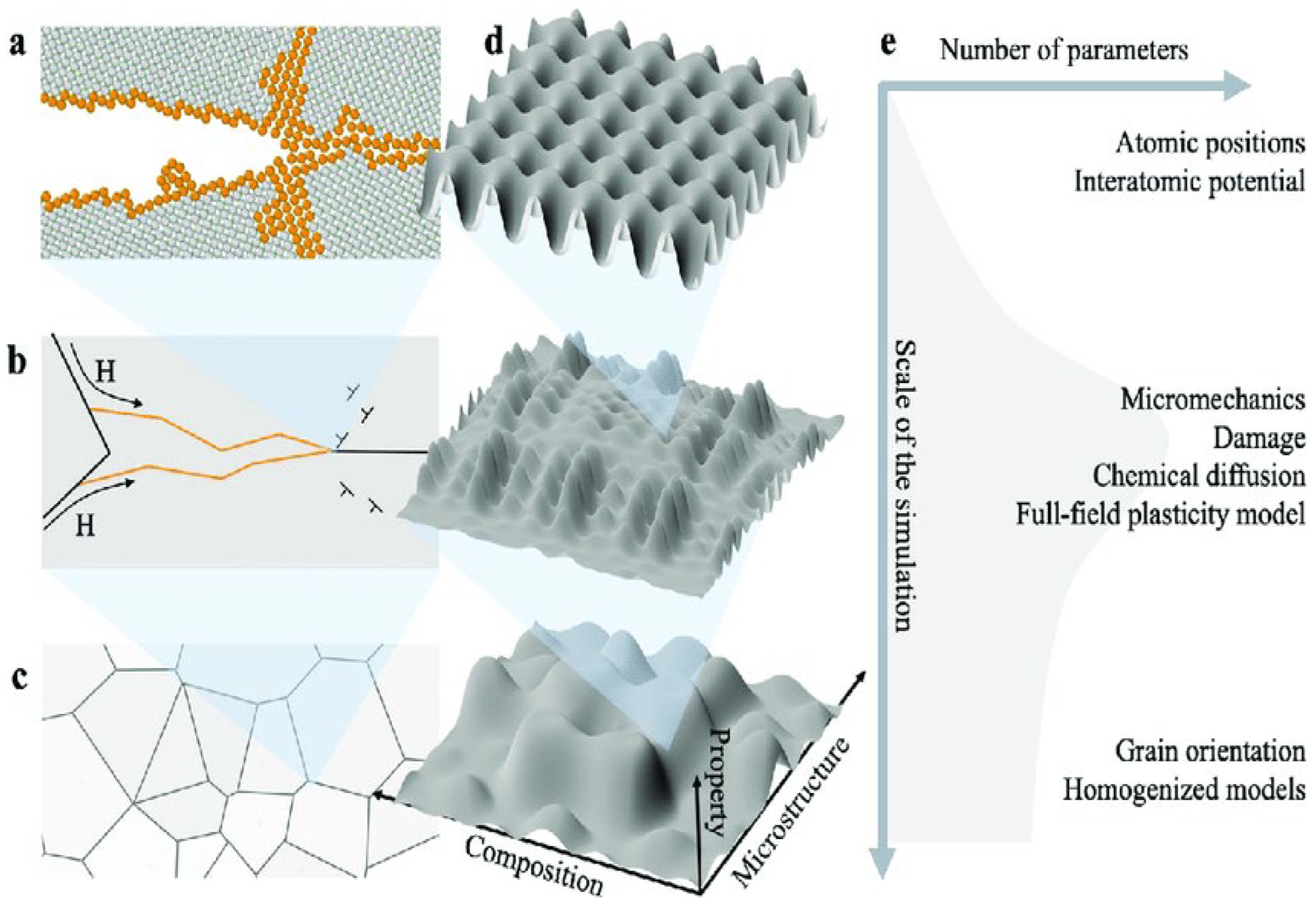

Surrogate Modelling and Multi-Scale Simulation

Composite materials are frequently developed, analyzed, and optimized across length and time scales using high-fidelity simulations such as finite element analysis (FEA), molecular dynamics (MD), and density functional theory (DFT), which are often computationally prohibitive. Such tools are highly effective and precise, but they have always carried a heavy computational cost, particularly for parameter-rich design spaces or large-scale systems. Surrogate modeling and AI-based approximators have changed this situation: they make it possible to simulate material systems across scales, typically at the cost of only a modest loss of accuracy.

Surrogate models, usually built with supervised learning, are intended to reproduce the input-output behavior of a high-fidelity solver. They are useful for repeated simulations, design space exploration, inverse problems, and uncertainty quantification. Operator learning, physics-informed neural networks, and hierarchical integration strategies have made it possible to bridge scales from atomistic to continuum and to perform analyses of millions of cases within hours rather than years.

AI-Based Operator Learning and Surrogate Solvers

Among the most promising surrogate models for materials simulation are Fourier neural operators (FNOs). FNOs learn the functional mapping between input and output function spaces by parameterizing global convolution kernels in Fourier space. Having learned these kernels, an FNO can approximate the solution operator of a partial differential equation (PDE) directly, largely independent of the mesh resolution used to discretize the physical domain.
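The Fourier-space kernel idea can be sketched as a single spectral convolution in one dimension. This is a minimal illustration, assuming a single channel and a hand-picked complex multiplier vector `w` in place of learned weights; a full FNO layer would add a pointwise linear path, a nonlinearity, and multiple channels.

```python
import numpy as np


def spectral_conv_1d(u, weights):
    """Multiply the lowest Fourier modes of u by complex weights; drop the rest."""
    modes = len(weights)
    u_hat = np.fft.rfft(u)
    out_hat = np.zeros_like(u_hat)
    out_hat[:modes] = u_hat[:modes] * weights
    return np.fft.irfft(out_hat, n=len(u))


def sample(n):
    """Sample one period of sin(2*pi*x) on an n-point uniform grid."""
    x = np.arange(n) / n
    return np.sin(2.0 * np.pi * x)


# Hypothetical kernel: keep 4 modes, double the first harmonic, zero the others.
w = np.array([0.0, 2.0, 0.0, 0.0], dtype=complex)

u64, u128 = sample(64), sample(128)
v64, v128 = spectral_conv_1d(u64, w), spectral_conv_1d(u128, w)
print(np.allclose(v64, 2.0 * u64), np.allclose(v128, 2.0 * u128))
```

The same four weights act identically on the 64-point and 128-point grids, which illustrates why the learned operator is tied to Fourier modes rather than to a particular mesh.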

A recent study trained FNOs on around 20,000 high-fidelity finite element analysis (FEA) simulations of twill-weave carbon/epoxy laminates [38]. The surrogate achieved a relative L2 error of 2.8 percent on displacement fields and cut the time per simulation from approximately 14 hours to less than 1 minute. The resulting 1,200x speed-up enabled Airbus to evaluate on the order of 5.7 million laminate configurations in 3 days, instead of the hundreds of years a classical solver would require.

Bayesian physics-informed neural networks (PINNs) are another area of surrogate modeling that has attracted growing interest. These frameworks build physical laws (e.g., conservation laws, constitutive models) into the training loss and quantify uncertainty through Bayesian inference. Recently, resin transfer molding (RTM) was simulated with PINNs enriched by Darcy and cohesive-zone equations, reproducing the process with less than 4 percent error from about 80 pressure sensor measurements (pressure versus time) [39]. Conformal quantile regression additionally provided a 95% prediction interval of ±1.3 mm, giving tight and reliable bounds without the need for more training samples [39].
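How a conformal method turns held-out errors into an interval can be sketched with split conformal prediction. Note the substitution: this is the simpler absolute-residual variant, not conformal quantile regression itself, and the residual values are invented for illustration. The half-width is the calibration residual at rank ceil((n + 1)(1 - alpha)).

```python
import math


def conformal_halfwidth(residuals, alpha=0.05):
    """Split-conformal half-width from held-out calibration residuals.

    Returns the ceil((n + 1) * (1 - alpha))-th smallest absolute residual,
    or infinity when the calibration set is too small for the requested level.
    """
    scores = sorted(abs(r) for r in residuals)
    n = len(scores)
    # The small offset guards against floating-point round-up at exact integers.
    k = math.ceil((n + 1) * (1 - alpha) - 1e-9)
    if k > n:
        return float("inf")
    return scores[k - 1]


# Hypothetical calibration residuals (prediction minus measurement, in mm).
calibration = [(-1) ** i * i for i in range(1, 20)]   # n = 19, |r| = 1..19
half = conformal_halfwidth(calibration, alpha=0.1)

prediction = 42.0                                      # new surrogate output, mm
interval = (prediction - half, prediction + half)
print(half, interval)
```

The coverage guarantee rests on the calibration and test points being exchangeable, so the calibration residuals should come from data the surrogate was not trained on.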

Along the same lines, the Deep Operator Network (DeepONet) provides an alternative approach to learning nonlinear operators between function spaces, a task that is difficult or computationally infeasible with classical methods. DeepONets have been particularly useful for predicting full-field stress distributions directly from material property maps acquired by micro-computed tomography (micro-CT). As an example, a recent DeepONet combined with LSTM (Long Short-Term Memory) recurrent layers to track crack-tip propagation under cyclic loading achieved an error of 3% in predicted stress distributions. With this hybrid model, the authors forecast crack growth over 10,000 fatigue cycles and predicted crack propagation paths with 89 percent accuracy relative to conventional cohesive zone simulations, while running 40 percent faster than the classical simulations.
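The DeepONet structure can be sketched as two small networks whose outputs are combined by a dot product: a branch net encodes the input function sampled at fixed sensor points, and a trunk net encodes the query location. The sketch below shows only an untrained forward pass with random weights; the layer sizes, sensor count, and the omission of the LSTM component are all simplifying assumptions.

```python
import numpy as np


def init_mlp(sizes, rng):
    """Random weights and zero biases for a fully connected network."""
    return [(rng.normal(scale=1.0 / np.sqrt(m), size=(m, n)), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]


def mlp(x, layers):
    """Forward pass with tanh on every layer except the last."""
    for i, (W, b) in enumerate(layers):
        x = x @ W + b
        if i < len(layers) - 1:
            x = np.tanh(x)
    return x


def deeponet_forward(u_sensors, y_query, branch, trunk):
    """G(u)(y) ~ sum_k branch_k(u) * trunk_k(y)."""
    b = mlp(u_sensors, branch)   # (batch, p): encodes each input function
    t = mlp(y_query, trunk)      # (n_query, p): encodes each query location
    return b @ t.T               # (batch, n_query)


rng = np.random.default_rng(0)
branch = init_mlp([10, 16, 8], rng)   # 10 sensor values -> p = 8 coefficients
trunk = init_mlp([1, 16, 8], rng)     # 1-D query coordinate -> p = 8 basis values

u = rng.random((3, 10))               # 3 input property profiles at 10 sensors
y = np.linspace(0.0, 1.0, 5)[:, None] # 5 query locations
out = deeponet_forward(u, y, branch, trunk)
print(out.shape)
```

Because the trunk net takes the query coordinate as input, the trained operator can be evaluated at arbitrary locations rather than only on the training grid.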

Such operator-learning methods are not limited to static problems. They have been extended to transient and coupled-physics systems, including thermal-mechanical behavior of printed circuit board assemblies and transient piezoresistive responses in MXene-based sensors. This generality is part of the reason they are being adopted quickly in industrial systems.

Multiscale Integration Frameworks

Surrogate modeling becomes considerably more powerful when embedded in a coupled multiscale pipeline that links atomistic, microstructural, and macroscopic phenomena. To ensure that behavior characterized at lower scales can inform predictions of large-scale performance, hierarchical AI systems are being developed so that full-scale simulators need not be invoked at every level.

At the atomic scale, message-passing neural networks trained on DFT and MD simulations have been used to predict interfacial energies. These quantities govern fiber-matrix adhesion in polymer nanocomposites and hybrid laminates. Once extracted, they are passed to the microscale, where graph attention networks are trained to predict cohesive zone model parameters (fracture toughness, critical opening displacements) from the fiber distribution and interfacial geometry.

At the macroscale, these local properties are aggregated into so-called super nodes, which represent mesoscopic regions in the finite element mesh. The AI model can then build full-field stiffness maps and displacement solutions. In one case study, this hierarchical surrogate halved the error in predicted stiffness (relative to experimental data) to 4.2%, and it quantified prediction uncertainty using Monte Carlo dropout and Bayesian posterior sampling.
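Monte Carlo dropout, one of the two uncertainty methods named above, can be sketched as repeated stochastic forward passes with dropout left active at prediction time. The tiny two-layer network, its all-ones weights, and the dropout rate are illustrative assumptions only.

```python
import numpy as np


def mc_dropout_predict(x, W1, W2, p_drop=0.2, n_samples=200, seed=0):
    """Mean and spread of predictions over random dropout masks on the hidden layer."""
    rng = np.random.default_rng(seed)
    hidden = np.maximum(0.0, x @ W1)                 # ReLU hidden activations
    preds = []
    for _ in range(n_samples):
        mask = rng.random(hidden.shape) >= p_drop    # keep with prob. 1 - p_drop
        preds.append((hidden * mask / (1.0 - p_drop)) @ W2)  # inverted dropout
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)


x = np.ones((1, 3))          # one hypothetical feature vector
W1 = np.ones((3, 4))
W2 = np.ones((4, 1))

mean_det, std_det = mc_dropout_predict(x, W1, W2, p_drop=0.0)
mean_mc, std_mc = mc_dropout_predict(x, W1, W2, p_drop=0.2)
print(mean_det.item(), std_det.item(), mean_mc.item(), std_mc.item())
```

The standard deviation across passes serves as an inexpensive uncertainty estimate; it collapses to zero when dropout is disabled, as the first call shows.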

Quantifying uncertainty throughout the model hierarchy enables a form of adaptive modeling in which computational resources are channeled to regions of high predictive uncertainty. This flexibility is particularly beneficial in safety-critical applications, such as aircraft wing spars or wind turbine spars, where design margins must be justified.

Cloud-Native Surrogate Deployment

To be effective at industrial scale, a surrogate model must be not only accurate and fast but also easy to deploy. The flexibility and availability of cloud-native implementations allow companies to integrate AI surrogates with their existing simulation and design workflows.

NVIDIA SimNet is one of the most mature platforms in this area. It combines symbolic differentiation with physics-regularized loss functions to construct surrogate models for a variety of multi-physics problems. Applied to composite warpage prediction, SimNet reduced the training data required by more than 90 percent compared with conventional surrogate modeling practices. Automatic differentiation, parallel training, and native GPU support make the platform attractive for real-time use in design loops.

Similarly, ANSYS Sherlock AI is a service that uses Gaussian process surrogate models integrated directly into existing simulation frameworks such as Abaqus. In a reliability analysis of printed circuit board assemblies (PCBAs) with composite underfill materials, Sherlock AI cut the time needed to compute composite reliability from days to less than 30 minutes (in a typical workflow, an implicit FEA analysis is run through Sherlock, and the Gaussian process surrogate is then wrapped around it and embedded within the legacy PCBA analysis). Being able to wrap surrogate models around commercial FEA solvers delivers an immediate productivity gain to an engineering team.

These platforms also support federated model deployment, in which models are trained collaboratively across teams. Surrogates can be updated as soon as new information becomes available, which suits field-monitored structures and continuous production lines.

Coupling Surrogates with Optimization and Design

Surrogate models are useful not only for emulating physics but also for searching design spaces and performing inverse optimization. Because surrogates are differentiable and computationally cheap, optimization algorithms can use them to steer the search toward the most efficient material structures or processing parameters.

Surrogate models have also been used to cut the computation time of laminate layup sequence optimization, for example minimizing interlaminar shear stresses under multi-axial loading, where thousands of layups can be evaluated per second. This evaluation throughput opens the door to global optimization strategies (e.g., Bayesian optimization, reinforcement learning).

In design-of-experiments (DoE) work, uncertainty-guided surrogate learning loops can be used to determine the next set of microstructures or parameters to test. The approach is well suited to processes where physical experimentation is slow and costly, such as resin transfer molding (RTM), autoclave curing, or automated fiber placement (AFP) [40].

More sophisticated methodologies use multi-fidelity surrogates, in which cheaper, lower-fidelity models pre-screen configurations before the more expensive models are applied. This enables computational pipelines that complete in a matter of hours, compared with several months for conventional approaches.
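The pre-screening pattern can be sketched as a two-stage filter. Everything in this example is hypothetical: the candidates are ply angles, and `cheap_score` and `expensive_score` are toy stand-ins for a low-fidelity surrogate and a high-fidelity solver (lower is better for both).

```python
def multi_fidelity_screen(candidates, cheap_model, expensive_model,
                          keep_fraction=0.1):
    """Rank all candidates with the cheap model, then refine only the shortlist."""
    ranked = sorted(candidates, key=cheap_model)
    n_keep = max(1, int(len(ranked) * keep_fraction))
    shortlist = ranked[:n_keep]
    best = min(shortlist, key=expensive_model)
    return best, len(shortlist)


def cheap_score(angle):
    """Toy low-fidelity objective with a slightly biased optimum."""
    return abs(angle - 40)


def expensive_score(angle):
    """Toy high-fidelity objective with the true optimum at 45 degrees."""
    return (angle - 45) ** 2


angles = list(range(0, 91, 5))   # 19 candidate ply angles
best_angle, n_expensive_calls = multi_fidelity_screen(
    angles, cheap_score, expensive_score, keep_fraction=0.2)
print(best_angle, n_expensive_calls)
```

The expensive model runs on 3 of the 19 candidates here. The saving depends on the cheap model ranking well enough that the true optimum survives the cut, which is the main design risk of this approach.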

AI-Enabled Predictive Maintenance for the Property Prediction of Composites

As composite materials grow in structural complexity and multifunctionality, performance assessment is shifting from static, design-factor approaches to dynamic, data-driven predictive maintenance (PdM) systems. PdM anticipates when a material will degrade and how its in-service performance will change over the life of a component, which enables real-time decisions that prevent failure and maximize service life. Older diagnostic systems are prescriptive and reactive: they respond only after a failure has been initiated [40]. An AI-powered predictive maintenance system instead uses machine learning and deep learning models to estimate remaining useful life (RUL), retention of material properties, and probability of failure over time, drawing on sensor data, simulation data, and physics-based patterns.

By linking initial material design with field monitoring of performance, AI brings data consistency to a digital infrastructure in which material selection, in-service feedback, and lifetime assessment form a closed loop. This section illustrates how different AI models, namely vision transformers, multitask networks, and graph-based surrogates, are redefining PdM of advanced composites across their mechanical, interfacial, fatigue, and multifunctional properties.

Static Mechanical Properties

Static mechanical properties (stiffness, tensile strength, and elastic modulus) have largely been established through destructive testing or micromechanical approximation. Recent advances in high-resolution micro-computed tomography (micro-CT) and deep-learning models now make it possible to predict these properties non-destructively from the microstructure [41]. Vision transformers (ViTs) are a recent architecture that has been adopted quickly because they learn global structural relations across an image. This is the key distinction between ViTs and convolutional neural networks (CNNs): CNNs work with local receptive fields, whereas ViTs represent the micro-CT data as a sequence of image patches and use self-attention to learn long-range associations between microstructural components, with no restriction on the spatial distance covered.
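To make the patch-and-attend idea concrete, the following sketch splits a toy 2D grid into patches and applies scaled dot-product self-attention across them. It is a simplification for illustration only: the query, key, and value projections are taken to be the identity (a real ViT learns them), there is no positional encoding, and the 8x8 `scan` is synthetic rather than micro-CT data.

```python
import math

def to_patches(image, size):
    """Split a 2D grid into flattened, non-overlapping size x size patches.

    Assumes both image dimensions are multiples of `size`.
    """
    patches = []
    for r in range(0, len(image), size):
        for c in range(0, len(image[0]), size):
            patches.append([image[r + i][c + j] for i in range(size) for j in range(size)])
    return patches

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Scaled dot-product self-attention with identity Q/K/V projections."""
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wi * v[j] for wi, v in zip(w, tokens)) for j in range(d)])
    return outputs, weights

scan = [[((r * 8 + c) % 5) / 5.0 for c in range(8)] for r in range(8)]  # toy 8x8 slice
patches = to_patches(scan, size=4)     # four patches of 16 values each
mixed, attn = self_attention(patches)  # every patch attends to every other patch
```

Each row of `attn` holds one patch's weights over all patches, so a patch in one corner can draw directly on a patch in the opposite corner. A convolution would need several stacked layers to connect them.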

In a recent paper [6], a ViT trained on 45,000 scans of short-fiber carbon/epoxy composites predicted tensile strength with a mean absolute error of 0.17 GPa and a coefficient of determination R² of about 0.93, outperforming ResNet-152 by more than 30 percent. The ViT could identify global defect-stress correlations, such as void clusters and fiber waviness, that are important for modeling anisotropic mechanical response [40]. This ability to distinguish the degradation mechanisms of concern makes ViTs especially useful in PdM applications, where early detection of failure and degradation informs safety-critical decisions.

Atomic Scale Interfaces

The bonding energy between the reinforcement (fiber, flake, or particulate) and the matrix material primarily determines composite behavior at the interfacial level. Interfacial adhesion is most accurately modeled with density functional theory (DFT), which, although extremely accurate, is too computationally expensive for exploratory or large-scale studies. Graph isomorphism networks (GINs) have been shown to be computationally efficient surrogates for predicting bonding energy at the atomic level [41]. In a GIN model, atoms or clusters are the graph nodes, and the edges encode bond types and interatomic distances.
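As a rough sketch of how such a graph is processed, the snippet below implements one GIN-style update, in which each node combines its own feature vector with the sum of its neighbors' vectors and passes the result through a small network, followed by a sum readout over the graph. Several things here are assumptions for illustration: the three-atom fragment and its one-hot features are invented, `relu_mlp` is a placeholder for a trained multilayer perceptron, and edge attributes such as bond type and distance are omitted for brevity.

```python
def gin_layer(features, neighbors, mlp, eps=0.0):
    """One GIN update: h_v <- MLP((1 + eps) * h_v + sum of neighbor features)."""
    updated = {}
    for node, h in features.items():
        agg = [(1.0 + eps) * x for x in h]
        for nb in neighbors[node]:
            agg = [a + b for a, b in zip(agg, features[nb])]
        updated[node] = mlp(agg)
    return updated

def relu_mlp(x):
    """Placeholder for a trained MLP: an element-wise ReLU with no learned weights."""
    return [max(0.0, v) for v in x]

def readout(features):
    """Sum pooling over nodes gives a fixed-size graph-level vector."""
    dim = len(next(iter(features.values())))
    return [sum(h[j] for h in features.values()) for j in range(dim)]

# Hypothetical interface fragment: a C-O-Si chain with one-hot element features.
features = {"C1": [1.0, 0.0, 0.0], "O1": [0.0, 1.0, 0.0], "Si1": [0.0, 0.0, 1.0]}
neighbors = {"C1": ["O1"], "O1": ["C1", "Si1"], "Si1": ["O1"]}

h1 = gin_layer(features, neighbors, relu_mlp)
graph_vector = readout(h1)  # a regression head would map this to a bonding energy
```

After one layer the oxygen node already carries information from both of its neighbors. Stacking layers widens the neighborhood each atom can see.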

For interfacial adhesion energies, GINs trained on a database of 1.2 million DFT simulations spanning a variety of chemistries and surface terminations reached an MAE of 0.03 J m−2 while running three orders of magnitude faster than DFT calculations [42]. This speed-up allows rapid screening of coupling agents, surface modifications, or nanofiller-matrix interactions. It also opens the way to interface-aware prediction and maintenance, in which bond breakdown is inferred indirectly from deviations in measured properties.

Fatigue Life Prediction

Fatigue is one of the most significant failure modes in composites, and the applications of greatest interest are those subject to fatigue loading, such as aircraft skins, wind turbine blades, and automotive leaf springs. A conventional fatigue test typically requires millions of cycles, which is both time-consuming and expensive. AI-based surrogates have proven capable of tracking and forecasting fatigue damage, particularly hybrid architectures that combine convolutional neural networks (CNNs) with long short-term memory (LSTM) units.

In one application, acoustic emission signals recorded during cyclic loading of wind turbine blades were fed to a CNN-LSTM architecture, which extracted spatial features and then modeled how those features changed over time. The model was trained to identify six fatigue failure modes, including matrix cracking, delamination, and fiber breakage, across 10^7 cycles, and it predicted the remaining strength of specimens within a 7 percent average error. It could therefore be used in the field to predict remaining strength and to trigger maintenance actions [43].

Such hybrid models offer a dual benefit. The CNN component identifies the spatial signatures of fatigue onset (e.g., crack nucleation sites), and the LSTM module tracks how those signatures evolve over time under load. Hybrid models may also suit embedded systems: they can run indefinitely on low-power hardware mounted on the structure, so RUL planning can be done in real time.

Multifunctional Property Forecasting

Beyond their structural role, modern composites are increasingly expected to perform additional functions such as heat dissipation, electromagnetic shielding, and dielectric insulation. Multifunctional composites such as MXene-polymer hybrids are particularly complex because they are nonlinear systems whose properties are interdependent. Predictive maintenance for this type of material must not only predict a single property but also model the deterioration of all functions simultaneously.

Shared-encoder multitask transformers have been proposed for this purpose. These models use a single common backbone to process microstructural inputs (such as micro-CT or SEM images) and several parallel branches to predict the individual property targets. On a corpus of 15,000 MXene-polymer composites, a multitask transformer achieved an R² above 0.91 on each target variable: Young's modulus, thermal conductivity, and dielectric breakdown strength [44]. It matched or outperformed single-task networks while using fewer parameters and converging faster.

Multitask models are well suited to predictive maintenance because they can identify coupled degradation pathways. For example, a drop in thermal conductivity can indicate thermal fatigue, delamination, or microcrack development, each of which in turn lowers stiffness and dielectric performance.

Data Fusion and Transfer Learning

The effectiveness of predictive maintenance systems usually depends on heterogeneous data sources: analytical models, experimental data, and in-situ sensor data. To combine these modalities, AI systems must learn across domains, fidelities, and data formats. Multi-fidelity Gaussian processes (MF-GPs) are one example: a Bayesian framework that blends cheap, simple simulations with sparse but trustworthy experimental data.

In a carbon-carbon composite case study, MF-GPs combined inexpensive analytical micromechanics predictions with a small set of laboratory toughness experiments, requiring only 15 percent of the usual experiments to make predictions. The final model had an MAE in crack toughness of about 0.21 MPa. Such techniques are efficient at low cost and with small datasets, and because they are probabilistic, they also provide confidence bounds around predictions that can be used to set inspection intervals or safety margins.

Industrial Deployment of Predictive AI Models

Industrial adoption of AI-based predictive maintenance has accelerated, especially in the aerospace, automotive, and defense industries. A prominent example is GE Aviation, where graph neural networks are applied to simulate creep deformation of the ceramic matrix composites used in turbine blades. These models replaced more than 80 percent of costly full-scale coupon testing and were accepted under FAA regulation, shortening the certification process from years to months.

BMW AG has applied a multimodal predictive maintenance system to carbon fiber-reinforced plastic (CFRP) auto body panels. The system uses dynamic Bayesian networks to continuously update noise-vibration-harshness (NVH) models in-house during prototype testing by merging vibrometry and strain gauge data. It improved NVH prediction by 42 percent and allowed more design iterations.

In the defense sector, Northrop Grumman has developed transformer-based dielectric spectroscopy models to forecast the permittivity and loss tangent of radar-absorbing composite materials. The model achieved better than 96% accuracy in material selection for stealth applications, where dielectric response is key to controlling radar cross-section.

These applications show that AI-based predictive maintenance is viable and practical for physical composites in real-world service, including in regulated settings.

Ongoing Challenges and Future Outlook in Predictive Maintenance

Although significant progress has been made towards integrating AI systems that coordinate predictive maintenance, a number of barriers remain. One is microstructural anisotropy, particularly in fiber-reinforced systems. The performance of these systems depends on the distribution of fiber orientations, so spatial variation in anisotropy within a part makes its mechanical properties difficult to predict with conventional machine learning models. Such models take in the material geometry as a single global input and carry no prior knowledge that the geometry may vary spatially, so predicting the mechanical properties of a part whose microstructure changes from region to region is fundamentally challenging for them. Multiple-instance learning (MIL) frameworks have been developed to address this weakness. In a MIL framework, each sub-volume of the microstructure is treated as an “instance”; instances are processed in a spatially invariant way and then composed globally to form a prediction. In one study, for example, researchers reduced the stiffness prediction error for sheet molding compounds to less than one-third of its original value (from 23% to 6.7%).

The second issue, which is becoming more pronounced, is the sparse-data regime for biocomposites, particularly those based on agro-waste fibers. These systems vary naturally because of the heterogeneity of the source biomass, which can make standard supervised learning impractical or even inapplicable. Prototypical networks have been shown to predict properties successfully from as few as five samples. The researchers reported needing about 15 times fewer screening experiments when developing new biocomposites, which allowed environmentally friendly materials to be considered earlier in the product design cycle [45].
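The core of a prototypical network is simple enough to sketch: each class is represented by the mean of its few support embeddings, and a new sample is assigned to the nearest prototype. The example below is illustrative only. It assumes the property has been binned into classes, uses five hand-written 2D vectors per class in place of embeddings from a trained encoder, and the class names are hypothetical.

```python
import math

def mean_vector(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(v[j] for v in vectors) / n for j in range(len(vectors[0]))]

def build_prototypes(support):
    """One prototype per class: the mean of that class's support embeddings."""
    return {label: mean_vector(vecs) for label, vecs in support.items()}

def classify(query, prototypes):
    """Assign the query embedding to the class with the nearest prototype."""
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))

# Five support embeddings per class (a five-shot setting); values are made up.
support = {
    "high_stiffness": [[0.9, 0.1], [0.8, 0.2], [1.0, 0.0], [0.85, 0.15], [0.95, 0.05]],
    "low_stiffness": [[0.1, 0.9], [0.2, 0.8], [0.0, 1.0], [0.15, 0.85], [0.05, 0.95]],
}
prototypes = build_prototypes(support)
label = classify([0.7, 0.3], prototypes)
```

Because no per-class weights are trained, adding a new fiber source only requires computing one more prototype from a handful of measurements.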

In the future, predictive maintenance systems will become more flexible, more privacy-preserving, and better connected. This will involve integrating real-time feedback, continual-learning pipelines, and explainable machine learning pipelines into predictive maintenance platforms within digitally enabled environments.

AI-Assisted Manufacturing Process Modeling

As the composites move beyond the design phase and digital models to the actual aircraft, cars, wind generators, and consumer products manufacturing process, the ability to make it at scale, repeatable,

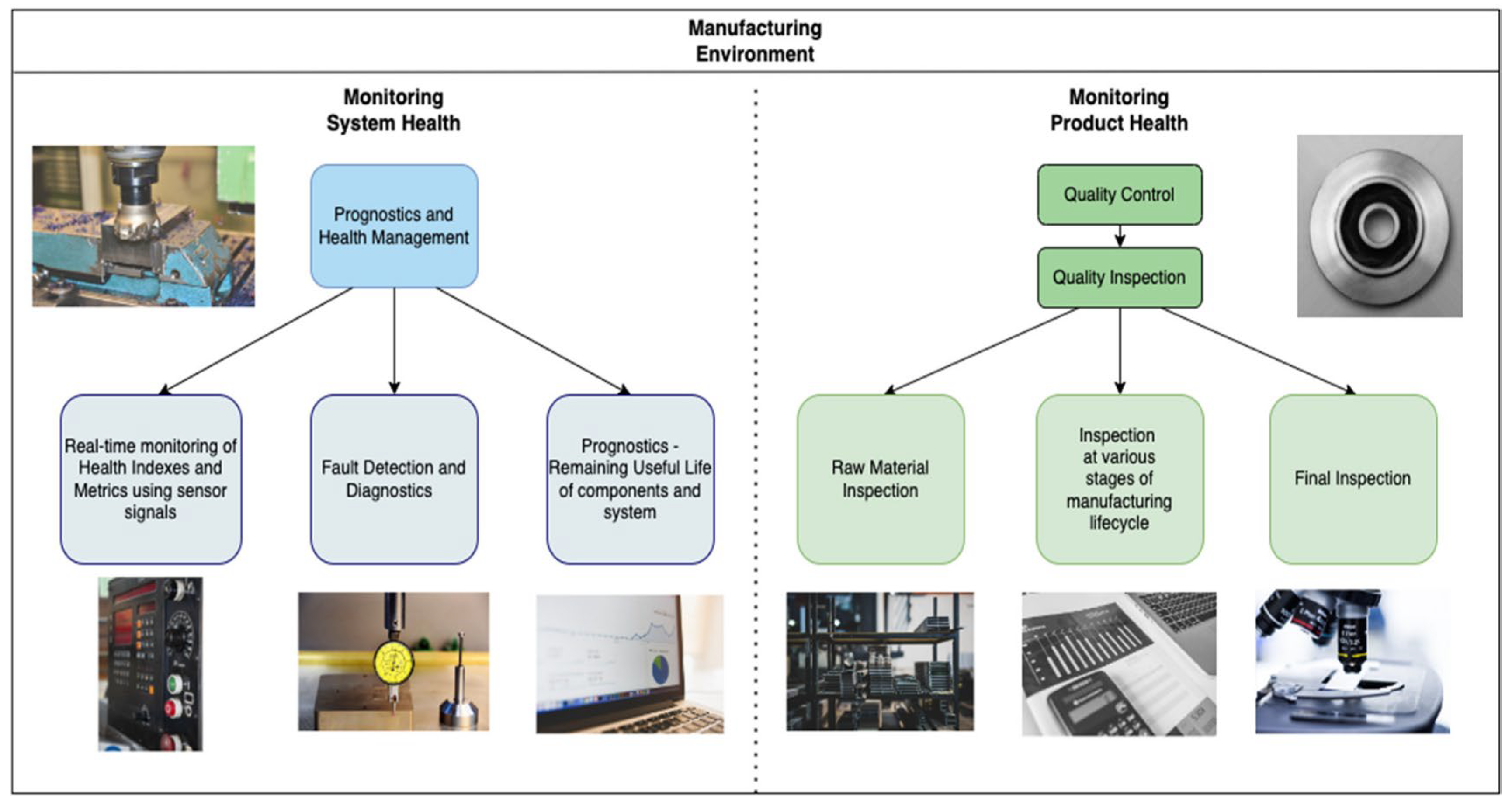

and at a low cost becomes more important. The manufacturing processes of a composite usually consume the highest percentage of the resources used during the life cycle of a composite, considering the strict measures on cure, placement, compaction, resin infusion, and thermal gradient control. Despite many decades of investment in research and development, there are still many challenges both in general terms of getting to high levels of throughput and in defect-free output (voids, dry spots, misaligned fibers). Among possible solutions, the introduction of artificial intelligence (AI) systems can be cited, offering functionality in the following terms: real-time control, defect mitigation capability, predictive parameter tuning, and closed-loop process improvement (see

Figure 2).

The conventional methods of control presuppose either that heuristics are known or that there is no objection to assuming that the relationship is linear. On the other hand, the AI-based models of processes will bring in the physics, sensor data, and past knowledge of the process, permitting the adaptation and intelligent decision-making that goes on throughout the processing of a composite. This section provides a review of the application of numerous AI techniques in multiple new composite manufacturing settings (reinforcement learning, Bayesian optimization, physics-informed neural networks, federated learning, etc.). In this section, the following automation processes will be described and reviewed in a circle, including an automated fiber placement (AFP), an autoclave curing, a resin transfer molding (RTM), and outlining a conclusion and company case studies that reflect their advantages regarding existing costs and performance.

Intelligent Process Optimization

One of the earliest and most natural applications of AI in composite manufacturing is the optimization of multi-parameter processes such as AFP. Automated fiber placement deposits fiber onto a curved mold using robot arms. The quality of an AFP layup depends on several interdependent parameters, including deposition speed, roller force, fiber tension, local curvature, and the heat supplied by heat guns. An incorrect setting for any one of these parameters can cause a compaction defect, bridging, or a void.

Reinforcement learning (RL), in particular an agent based on a deep deterministic policy gradient (DDPG) trained with a time-based reward, has been able to optimize AFP process parameters autonomously. Defining a reward function that minimizes defects without sacrificing speed is essential, because it drives the RL agent to learn the best settings for fiber deposition speed (0.1-5 m/s), roller force (50-400 N), heat gun temperature (300-600 °C), and fiber tension (5-40 N). On one project with Northrop Grumman, these agents halved the void content of wing skins, to about 0.45 ± 0.12%, and achieved a 28% improvement in layup throughput [46]. The AI could respond to rapid changes in local geometry, material batch properties, and environmental conditions, and it controlled the process more consistently and faster than manual tuning.
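Two pieces of such an RL setup are easy to illustrate: mapping the agent's normalized actions onto the physical parameter ranges quoted above, and scoring an outcome with a reward that trades void content against speed. The sketch below is an assumption-laden illustration, not the reward used in the cited project: the function names, the weights, and the linear form of the reward are all hypothetical, and the actor is assumed to emit values in [-1, 1], as is common for DDPG.

```python
# Physical ranges taken from the text; the dictionary keys are illustrative names.
BOUNDS = {
    "speed_m_s": (0.1, 5.0),
    "roller_force_N": (50.0, 400.0),
    "heat_gun_temp_C": (300.0, 600.0),
    "fiber_tension_N": (5.0, 40.0),
}

def scale_action(raw):
    """Map actor outputs in [-1, 1] to physical set-points, clipping out-of-range values."""
    setpoints = {}
    for (name, (low, high)), a in zip(BOUNDS.items(), raw):
        a = max(-1.0, min(1.0, a))
        setpoints[name] = low + (a + 1.0) * 0.5 * (high - low)
    return setpoints

def reward(void_pct, speed_m_s, void_weight=10.0, speed_weight=1.0):
    """Hypothetical reward: penalize void content (%), reward deposition speed (m/s)."""
    return speed_weight * speed_m_s - void_weight * void_pct

setpoints = scale_action([-1.0, 0.0, 1.0, 2.0])  # last value is clipped to 1.0
r = reward(void_pct=0.45, speed_m_s=2.0)
```

The relative size of `void_weight` and `speed_weight` decides whether the agent favors quality or throughput, so in practice these weights would need tuning against measured defect data.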

Autoclave curing, in which composite parts are exposed to heat and pressure to consolidate the resin and reach the intended degree of cure, has similarly been improved by Bayesian optimization frameworks. The models relate ramp rates (0.5-3 °C/min) and pressure profiles (0-100 psi) to the resulting degree of cure, exotherm temperatures, and residual stresses. According to Hexcel, AI-optimized cure cycles reached 99.5 percent cure in 73 percent of the standard cycle time. The optimization also lowered peak exothermic temperatures by 18 °C and final warpage by 0.12 mm/m, improving both safety and performance.

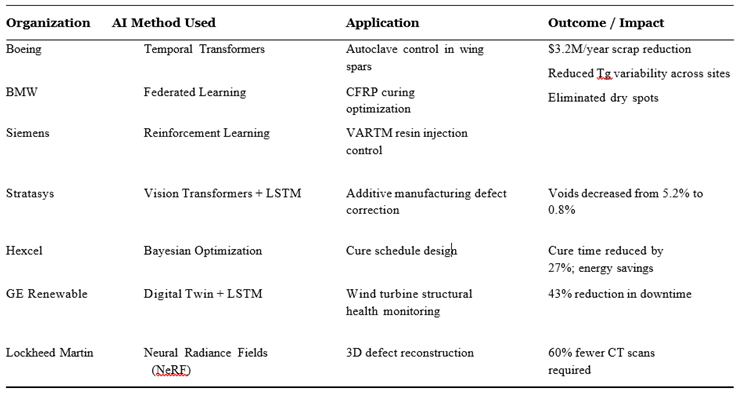

Industrial Case Studies in Intelligent Manufacturing

Several organizations have moved past prototyping and now use AI-based process models on production lines. Boeing, for example, has built a predictive model into the autoclave control system used to cure the 787 wing spar. A temporal fusion transformer uses temperature readings from 47 thermocouple channels inside the mold to predict the mold's temperature distribution up to 8 minutes ahead. An adaptive PID controller uses these predictions to keep through-thickness thermal gradients to within 1.5. This precision has reduced cure-induced flaws and saved over $3.2 million per annum in scrap costs.

BMW's CFRP chassis production posed a different challenge: components were produced worldwide across several manufacturing sites, each using different materials and equipment to make the same part. To harmonize quality assurance without sharing proprietary process data, BMW implemented a federated learning strategy in which two facilities trained local models on their own curing data and distilled them into a global model. With the global neural network, the variation in predicted glass transition temperature between sites fell from 14 to 2.3 degrees Celsius, without any raw data being shared [47]. The federated architecture ensured consistent quality across the worldwide facilities while safeguarding intellectual property and complying with data sovereignty policies.

Siemens adopted an edge-computing solution to monitor resin infusion during vacuum-assisted resin transfer molding (VARTM) of 42-meter-long wind turbine blades. U-Net architectures running on embedded devices analyzed 25-channel ultrasonic data streams at 100 Hz and identified flow anomalies with an accuracy of 98.7%. As a result, dry spots, which are common in large infusions, were eliminated, along with the associated rework and material waste.
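The aggregation step at the heart of a federated strategy can be sketched as a sample-weighted average of the locally trained parameters, the scheme usually called federated averaging (FedAvg). The source does not say which aggregation rule was used in the BMW deployment, so FedAvg is an assumption here, and the weight vectors and sample counts below are invented.

```python
def federated_average(local_weights, sample_counts):
    """Sample-weighted average of per-site parameter vectors (FedAvg aggregation)."""
    total = sum(sample_counts)
    n_params = len(local_weights[0])
    return [
        sum(w[i] * n for w, n in zip(local_weights, sample_counts)) / total
        for i in range(n_params)
    ]

# Hypothetical parameters from two sites; only these vectors leave each facility.
site_a = [0.20, 1.10, -0.50]   # trained on 300 local cure records (assumed)
site_b = [0.40, 0.90, -0.30]   # trained on 100 local cure records (assumed)

global_weights = federated_average([site_a, site_b], sample_counts=[300, 100])
```

Raw curing data never appear in the exchange. Each site contributes only its parameter vector and a record count, which is what allows quality models to be harmonized without disclosing process data.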

Converging Modalities and Digital Integration

The application of AI in manufacturing is expected to shift quickly from isolated applications to full digital twins: virtual replicas of the manufacturing system that allow the process to be simulated, monitored, and optimized in real time. AI exploits such twins to coordinate manufacturing actions dynamically, drawing on historical process data, real-time sensor inputs, and predictive models.

The difficulty lies in determining how different data modalities (thermal, mechanical, acoustic, and visual) can be combined across spatial and temporal dimensions. For example, in additive manufacturing pipelines, Stratasys has deployed a system that combines vision transformers and LSTM networks. By fusing visual information with thermal camera data and applying it to the deposition process through the digital twin, the system reduced void content in printed parts by 4.6 percentage points (from 5.3 percent to 0.7 percent), presumably by readjusting tool paths and deposition settings. Multimodal AI of this kind enables not only predictive action to reduce the risk of failure but also the repair of faults before they become part of the structure.

GE Renewable has launched a digital twin of a wind turbine blade manufacturing line based on sensor data, physical modeling, and AI forecasts. LSTMs trained on historical structural health monitoring (SHM) data produced anomaly forecasts that triggered pre-emptive maintenance, cutting turbine downtime by 43 percent and making operation more efficient overall. Lockheed Martin uses neural radiance fields (NeRFs) to reconstruct 3D defect morphologies from 2D CT projections, reducing by 83 percent the number of CT scans needed for quality control of aerospace-grade composites. The reconstructed 3D models have been incorporated into digital twins used during design and post-manufacturing validation.

These examples point towards the convergence of AI, cloud computing, and physics modeling into integrated, intelligent manufacturing systems. Such systems may not only reduce defect formation but also support predictive scheduling, automated inspection, and efficient use of resources.

Challenges and Outlook in AI Assisted Process Modelling

Although AI-assisted composite manufacturing offers numerous benefits, several challenges must be addressed to realize them fully. One is the explainability of AI decisions. In safety-critical applications such as aerospace, where an AI-based control action could lead to catastrophic failure and, at worst, loss of life, the actions of the AI must be interpretable by end-user engineers and regulatory bodies. These concerns have motivated explainable reinforcement learning and symbolic regression overlays, which expose the link between parameter changes and outcomes such as void content or cure quality.

Data sparsity and domain transferability are further problems. Most AI models require large amounts of labelled data, which can be hard to acquire in an industrial setting. Researchers are exploring synthetic data generated through digital twins and sensor emulation in augmented reality to work around this. Domain transferability is the main challenge at the operational level, and transfer learning strategies will be needed to adapt pre-trained models to new materials, machines, or factories with minimal or no re-training.

Cybersecurity and data governance are growing in importance. As AI systems are adopted across global supply chains, process data must remain confidential even while models are shared. Emerging approaches pair federated learning with homomorphic encryption, differential privacy, and secure multi-party computation to guard against such threats.
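Of the privacy techniques named above, differential privacy is the simplest to illustrate. A common recipe is to clip each model update to a maximum L2 norm and then add Gaussian noise before the update leaves the site. The sketch below shows only that mechanical step; the function name and parameter values are hypothetical, and it does not compute a formal privacy budget, which depends on the noise scale, the clipping bound, and the number of training rounds.

```python
import math
import random

def privatize_update(update, clip_norm, noise_std, rng):
    """Clip an update to L2 norm <= clip_norm, then add zero-mean Gaussian noise."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale + rng.gauss(0.0, noise_std) for x in update]

rng = random.Random(0)  # seeded only so the example is repeatable
local_update = [3.0, 4.0]  # L2 norm of 5 before clipping

clipped_only = privatize_update(local_update, clip_norm=1.0, noise_std=0.0, rng=rng)
shared = privatize_update(local_update, clip_norm=1.0, noise_std=0.1, rng=rng)
```

Clipping bounds how much any single site's data can move the shared model, and the noise masks what remains. Larger noise gives stronger privacy at the cost of slower or less accurate training.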

Looking ahead, the use of AI in composite production is unlikely to remain restricted to isolated models; it will more likely lead to increasingly autonomous factories in which machines, process controllers, and digital twins collaborate under a common AI control layer. The emergence of generalist multimodal models, the rapid development of real-time simulation, and self-correcting feedback loops will probably transform manufacturing.

Non-Destructive Inspection and Structural Health Monitoring (SHM)

As composite materials spread into safety-critical roles (e.g., aerospace, renewable energy, civil infrastructure, and transportation), dependable long-term structural integrity becomes a requirement. Non-destructive testing (NDT) and SHM have therefore become the basis of composite reliability: they provide context-rich, in-situ, and continuous information, a departure from traditional inspection approaches.

AI, in the form of deep learning and real-time edge intelligence, is fundamentally transforming SHM. Sensor outputs no longer remain localized signals; they are integrated, interpreted, and placed in context within digital twins, making it possible to assess condition, classify damage, and even forecast life. This section discusses how new cross-modal sensor architectures, deep neural networks, federated learning, and new sensors based on quantum and nanotechnologies can be deployed for composite monitoring and inspection. It also considers companies whose use of machine learning has resulted in less downtime, longer service life, and lower upkeep costs.

Multimodal Fusion Frameworks for Defect Characterization

Recent composite inspection systems make increasing use of multimodal data fusion, in which the outputs of several NDT techniques are combined in a single machine learning model. Data from different NDT sensors, e.g., ultrasonic scans and thermography, have been fused with cross-attention transformers, which support multimodal diagnostics by building a joint representation of composite defects. The MERLIN system launched by Airbus is one example. Its fault classification accuracy exceeded 95% across 17 defect types, and its spatial resolution was more than 15× better than that of conventional NDT. Multimodal architectures also improve first-pass inspection accuracy and permit more automated interpretation, which reduces dependence on operators and speeds up maintenance decisions.

Predictive Maintenance Systems and Digital Twins

Although finding defects is vital, the final aim of SHM is prevention: maintenance that stops failure before it happens. This requires models that can interpret sensor readings in terms of physical degradation mechanisms and predict the remaining useful life in operation. Hybrid digital twins are a recent advance that makes this vision a reality. Perhaps the best-known example is the SHM system developed by GE Aviation to diagnose wind turbine blade cracks, in which mechanistic crack-growth models are coupled with recurrent neural networks. The system integrates strain and acoustic emission data with environmental factors including temperature, humidity, and ultraviolet exposure. An LSTM network analyzes temporal patterns in these combined data to detect early-stage crack propagation and forecast the remaining useful life of each turbine blade before damage becomes critical.

These forecasts are then combined with previously validated degradation models to predict RUL with an error of 8% over more than 12,000 operational hours, which reduced unplanned maintenance downtime by 43% (see Figure 3).
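To show what the mechanistic half of such a hybrid twin might compute, the sketch below integrates a Paris-type crack-growth law, da/dN = C (ΔK)^m with ΔK = Y Δσ sqrt(π a), to estimate the number of load cycles remaining before a crack reaches a critical length. The choice of a Paris-type law is an assumption for illustration, since the source does not name the crack-growth model used, and all parameter values and units below are arbitrary. In a hybrid scheme, a data-driven model could supply or correct inputs such as the current crack length from sensor data.

```python
import math

def cycles_to_critical(a0, a_crit, delta_sigma, C, m, Y=1.0, steps=10000):
    """Cycles for a crack to grow from a0 to a_crit under a Paris-type law.

    Integrates dN = da / (C * dK**m) with the midpoint rule, where
    dK = Y * delta_sigma * sqrt(pi * a). Units are illustrative only.
    """
    da = (a_crit - a0) / steps
    cycles = 0.0
    for i in range(steps):
        a = a0 + (i + 0.5) * da                       # midpoint of the sub-interval
        delta_k = Y * delta_sigma * math.sqrt(math.pi * a)
        cycles += da / (C * delta_k ** m)
    return cycles

# Hypothetical inputs: the initial crack length would come from inspection or sensing.
cycles = cycles_to_critical(a0=0.001, a_crit=0.01, delta_sigma=100.0, C=1e-10, m=2.0)
later = cycles_to_critical(a0=0.005, a_crit=0.01, delta_sigma=100.0, C=1e-10, m=2.0)
```

Dividing the remaining cycles by the expected loading rate would turn this into a remaining-useful-life estimate in hours. The second call shows that a longer detected crack leaves fewer cycles.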

To achieve real-time responsiveness, Boeing has deployed embedded edge-AI systems that process distributed piezoelectric sensor arrays embedded in the control surfaces and fuselage of aircraft. The system runs a quantized MobileNetV3 on an ARM Cortex-M7 microcontroller and detects impacts above 5 J in less than 12 ms. When such events are identified, load redistribution algorithms dynamically reallocate actuator forces to protect the damaged regions. These edge-AI solutions operate under tight power and compute constraints and demonstrate that SHM intelligence is possible in flight.

Emerging Sensor Technologies

The hardware foundation of SHM is also changing rapidly: advances in energy harvesting, quantum sensing, and optical methods are pushing the limits of sensitivity, resolution, and lifetime. Because these new-generation sensors can be miniaturized and decentralized, they promise to make SHM systems scalable to large composite structures.

This potential has been demonstrated by self-powered IoT strain sensor systems. These systems employ triboelectric nanogenerators (TENGs), which harvest the mechanical vibration of the structure itself, e.g., the wing surfaces of an aircraft. The harvested energy powers strain sensors and LoRa-based transmitters, enabling long-range data transmission without external power. One field study on Airbus wing structures reported that TENG-based systems could operate continuously for over ten years, transmitting data without external power or interruption. TinyML classifiers built on resonance frequency shifts identified impacts with 92% accuracy, which makes the system well suited to long-duration SHM in inaccessible locations.

Quantum defect sensing with nitrogen-vacancy (NV) centers in diamond nanopillars is another field that has made significant progress. These quantum sensors detect local magnetic field variations that shift the photoluminescence spectrum of the NV centers through the Zeeman effect, which makes them sensitive to subsurface stress concentrations. Quantum convolutional neural networks (QCNNs) were used to interpret these shifts and determine stress patterns. Recent experiments with NV-based sensor configurations have resolved stress anomalies at spatial resolutions as fine as 10 µm, approximately 50 times finer than ultrasound systems, and have detected early-stage delaminations under compressive loads of up to 50 MPa.

Laser ultrasonics has also seen renewed interest, particularly for inspecting turbine blades and rotating parts. Plasma produced by femtosecond lasers generates broadband Lamb waves (0.1-20 MHz) that travel through the structure and reflect at internal discontinuities. The returning wavefield is measured with interferometric sensors, and vision transformers trained on spatio-temporal wave behavior classify porosity levels according to ASTM standards. These systems have also been used effectively for non-contact, standoff inspection at ranges of up to 3 meters, providing safe and reliable testing of high-speed rotating parts such as wind blade joints and jet engine rotors.

Synthesis: Toward Autonomous Structural Integrity

With the development of SHM system, the idea of the complete autonomous structural integrity system becomes closer to the reality. Such a paradigm involves sensor networks embedded in the process of creation inputting real-time data into AI models that go beyond the ability to sense out damage, but place it in the history of the materials together with loading conditions and environmental exposures. Such models are used to update digital twins and then pass the information onto actuators, warning systems and even factories (via manufacturing loops) to use in designs moving forward.

At the core of this vision is closed-loop learning: damage identified in the field can drive retraining of predictive models, inform inspections of similar structures, and feed back into design tools to prevent recurrence. For example, a delamination identified in a composite aileron may lead not only to a local repair at the time of the finding but also to recommended modifications of reinforcement patterns in future design generations.

Scalability is an important factor. Future SHM systems are expected to ingest data from tens of thousands of sensors spread over fleets of aircraft, kilometers of bridges, or hundreds of wind turbines. Distributed AI inference, federated model training, and edge computing architectures will enable this scale. Standards for model validation, interpretability, and interoperability must also evolve along with the technical capacity.
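To make the idea of federated model training concrete, the following is a minimal sketch of sample-weighted federated averaging (FedAvg) over three sites, assuming a simple linear model and synthetic data. The names `local_update`, `federated_average`, and `true_w`, as well as the site sizes, are illustrative assumptions and are not taken from any SHM deployment described here.

```python
import numpy as np

def local_update(weights, X, y, lr=0.1, epochs=20):
    """Run a few gradient-descent steps of linear regression on one site's data."""
    w = weights.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w

def federated_average(client_weights, client_sizes):
    """Combine client models, weighting each by its number of samples."""
    sizes = np.asarray(client_sizes, dtype=float)
    stacked = np.stack(client_weights)
    return (stacked * sizes[:, None]).sum(axis=0) / sizes.sum()

rng = np.random.default_rng(0)
true_w = np.array([1.5, -0.7, 0.3])  # hypothetical sensor-to-damage-index mapping

# Three hypothetical sites with different amounts of local data.
clients = []
for n in (200, 500, 80):
    X = rng.normal(size=(n, 3))
    y = X @ true_w + rng.normal(scale=0.05, size=n)
    clients.append((X, y))

global_w = np.zeros(3)
for _ in range(10):  # communication rounds
    # Each site trains locally; only model weights leave the site, not raw data.
    updates = [local_update(global_w, X, y) for X, y in clients]
    global_w = federated_average(updates, [len(y) for _, y in clients])

print(global_w)
```

Only the weight vectors are exchanged in each round, which is the property that makes this pattern attractive when raw sensor data cannot be centralized.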

Regulatory and ethical implications are equally important. In a global, distributed supply chain, who owns SHM data? How should AI-generated damage assessments be certified? How might adversaries manipulate sensor readings, and how can the readings be protected? Although these questions lie outside the technical realm of SHM per se, they will determine how well AI-enabled inspection systems work in practice and whether they can be trusted.

Finally, non-destructive inspection and structural health monitoring are no longer side players in the deployment of composites; they are integral to smart materials systems. As high-resolution sensors, real-time inference, and predictive modeling converge, SHM is becoming a predictive, preventive, and prescriptive discipline that will define the next chapter of composite reliability.

Challenges and Future Directions

Although AI for composite materials has developed rapidly, a variety of challenges remain at the crossroads of scalability, interpretability, infrastructure, regulation, and sustainable design. As composites expand into high-hazard areas such as aerospace, automotive, and infrastructure, the margin for error shrinks, which calls for frameworks that balance innovation with integrity. This section examines key obstacles to the next wave of AI implementation in composites, including data scarcity, the explainability of black-box models, and regulatory readiness, and identifies strategic options to overcome them.

Data-Centric Bottlenecks

The performance of AI tools for composites, especially in supervised and deep learning paradigms, depends largely on the quality, diversity, and annotation of the data. However, several data bottlenecks persist in the field, including very large data volumes and missing metadata, which hamper both model performance and reproducibility and act as a key barrier to success.

High-resolution micro-computed tomography (micro-CT), the fundamental analysis instrument for composite microstructures, may require up to 15 terabytes of data per cubic millimeter when scanning at 0.41 microCT resolutions. Such volumes easily overwhelm conventional storage and network infrastructures, which makes centralized learning difficult and limits the scalability of vision-based AI designs [48]. Similarly, fatigue tests lasting 10^7 cycles produce petabyte-scale acoustic emission data, which exceeds the throughput of current machine learning pipelines and calls for new compression, sampling, or online learning methods [49].
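As one illustration of the sampling methods mentioned above, reservoir sampling keeps a fixed-size, uniformly random subset of a data stream without ever storing the full stream. The sketch below uses the standard single-pass algorithm; the `acoustic_hits` generator and its record fields are hypothetical stand-ins for an acoustic emission feed and do not describe any pipeline in the cited work.

```python
import random

def reservoir_sample(stream, k, seed=0):
    """Keep a uniform random sample of k items from a stream of unknown length."""
    rng = random.Random(seed)
    reservoir = []
    for i, item in enumerate(stream):
        if i < k:
            reservoir.append(item)      # fill the reservoir first
        else:
            j = rng.randint(0, i)       # inclusive on both ends
            if j < k:
                reservoir[j] = item     # replace with probability k / (i + 1)
    return reservoir

def acoustic_hits(n):
    """Hypothetical stand-in for a stream of acoustic emission hit records."""
    for i in range(n):
        yield {"hit_id": i, "amplitude_db": 40 + (i * 7) % 60}

sample = reservoir_sample(acoustic_hits(100_000), k=1000)
print(len(sample))
```

Memory use depends only on `k`, not on the length of the test, which is why this family of methods suits long fatigue campaigns. It does not by itself preserve rare events, so it would need to be combined with event-triggered capture where rare defect signatures matter.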

Beyond raw data volume, metadata gaps compound the problem. A systematic literature review of published composite datasets showed that 68% lacked essential processing conditions, including cure temperature control, resin batch numbers, or fiber surface treatments [50]. This compromises model validation and the training of supervised learning algorithms, which depend on reliable ground-truth data.
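A lightweight completeness check at ingestion time is one way to surface such gaps before a dataset is used for training. The sketch below assumes records are stored as dictionaries; the field names in `REQUIRED_FIELDS` and the sample records are hypothetical and only mirror the kinds of processing conditions listed above.

```python
# Hypothetical required metadata fields, modeled on the processing conditions above.
REQUIRED_FIELDS = ("cure_temperature_c", "resin_batch", "fiber_surface_treatment")

def missing_fields(record, required=REQUIRED_FIELDS):
    """Return the required metadata fields that are absent or empty in a record."""
    return [f for f in required if record.get(f) in (None, "")]

def completeness_report(records, required=REQUIRED_FIELDS):
    """Return the fraction of incomplete records and the gaps per record."""
    gaps = {}
    for idx, record in enumerate(records):
        missing = missing_fields(record, required)
        if missing:
            gaps[record.get("sample_id", idx)] = missing
    fraction = len(gaps) / len(records) if records else 0.0
    return fraction, gaps

records = [
    {"sample_id": "A1", "cure_temperature_c": 180, "resin_batch": "RB-07",
     "fiber_surface_treatment": "plasma"},
    {"sample_id": "A2", "cure_temperature_c": 180, "resin_batch": "",
     "fiber_surface_treatment": "sizing"},
    {"sample_id": "A3", "resin_batch": "RB-09"},
]

fraction, gaps = completeness_report(records)
print(fraction, gaps)
```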

AI models trained on such incomplete and inconsistent data risk overfitting to a particular dataset and failing to generalize. Model confidence intervals also remain wide, even when transfer learning or domain adaptation is applied. This restricts their use in mission-critical applications such as aircraft wing design or offshore wind blade deployment, where failure may have disastrous consequences.

Efforts are under way to address these limitations. Physics-informed generative diffusion models have been shown to synthesize microstructures with a geometric precision of 95%, which opens the possibility of augmenting real data [51]. Nevertheless, such models still struggle to represent rare defect modes, which are present in less than 0.01% of samples but account for a large fraction of failure behavior [51]. Overcoming this data sparsity will require active learning pipelines that select the most informative experiments and thereby reduce the high cost of mechanical and fatigue testing [52].
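The selection step in an active learning pipeline can be sketched as follows, assuming a one-dimensional pool of candidate test conditions and a query-by-committee criterion: the next experiment is the one on which a small committee of surrogate models disagrees most. The function `run_experiment` is a hypothetical stand-in for an expensive mechanical test, and the leave-one-out polynomial committee is an illustrative choice rather than the method used in [52].

```python
import numpy as np

def run_experiment(x):
    """Hypothetical stand-in for an expensive mechanical or fatigue test."""
    return np.sin(3.0 * x) + 0.5 * x

def committee_std(x_train, y_train, x_pool, degree=2):
    """Disagreement of a leave-one-out committee of polynomial fits over the pool."""
    preds = []
    for leave_out in range(len(x_train)):
        keep = np.arange(len(x_train)) != leave_out
        coeffs = np.polyfit(x_train[keep], y_train[keep], degree)
        preds.append(np.polyval(coeffs, x_pool))
    return np.std(preds, axis=0)

x_pool = np.linspace(0.0, 2.0, 41)   # candidate test conditions (assumed 1-D)
labelled = [0, 10, 20, 30]           # indices of experiments already run
results = [run_experiment(x_pool[i]) for i in labelled]

for _ in range(6):                   # budget of six additional experiments
    score = committee_std(x_pool[labelled], np.array(results), x_pool)
    score[labelled] = -np.inf        # never repeat an experiment
    pick = int(np.argmax(score))
    labelled.append(pick)
    results.append(run_experiment(x_pool[pick]))

print(sorted(labelled))
```

Each round runs only one new experiment, so the testing budget is spent where the surrogate is least certain rather than on a uniform grid.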

Trustworthy AI Frameworks

Trustworthiness and interpretability emerge as particular concerns when AI tools inform decision-making in composite development and deployment. Specifically, applications that touch structural safety or airworthiness require AI decisions to be explainable, consistent, and robust against noise and adversarial perturbations.

Recent investigations that applied attention maps to vision transformers trained for compressive strength prediction showed that resin-rich areas capture more than 80% of the model's attention. This does not fully agree with predictions from classical laminate theory, which raised doubts about how well the model generalizes [27]. Similarly, SHAP analysis of transformer-based strength prediction models attributed the highest influence to resin-rich zones near the predicted failure region in 73% of cases, which was not always consistent with classical laminate theory and likewise raised concerns about model generalization [28]. These findings point to an acute need for stricter frameworks that combine explainable AI (XAI) tools with physics-based verification. Combining post hoc interpretability with forward simulations or sensitivity analysis could alleviate the problem: it is not enough for AI models to work in validation; they have to work in the real world. In addition, for use in regulated sectors, uncertainty quantification methods need to be extended to cover confidence in model attributions as well as predictive intervals.

These concerns become more pressing as regulations change. The European Union AI Act, whose obligations begin to apply in 2025, will subject high-risk AI systems to strict requirements; targets cited in this context include less than 5% batch-to-batch predictive bias, robustness to sensor noise injection, and 95% coverage of conformal prediction intervals. Meeting them requires a shift from purely data-driven learning to hybrid approaches that incorporate physics, uncertainty quantification, and domain-specific priors, as demonstrated by current industrial deployments (see Table 3).

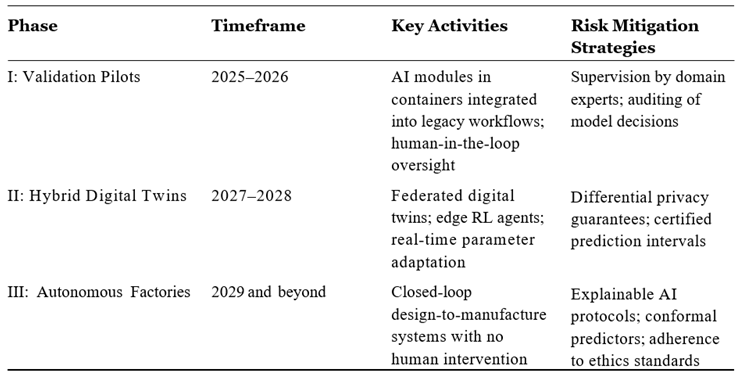
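To illustrate what 95% coverage of conformal prediction intervals means in practice, the following is a minimal sketch of split conformal prediction, assuming exchangeable data, a simple linear surrogate, and synthetic strength-like data. The helper `conformal_radius` and all data in the example are illustrative assumptions and do not reproduce any regulatory test procedure.

```python
import numpy as np

def conformal_radius(cal_residuals, alpha=0.05):
    """Half-width giving at least 1 - alpha marginal coverage under exchangeability."""
    scores = np.sort(np.abs(cal_residuals))
    n = len(scores)
    k = int(np.ceil((n + 1) * (1 - alpha)))  # finite-sample corrected rank
    return np.inf if k > n else scores[k - 1]

rng = np.random.default_rng(0)
x = rng.uniform(0.0, 1.0, size=3000)
y = 2.0 * x + rng.normal(scale=0.2, size=3000)  # synthetic stand-in for strength data

# Split into model-fitting, calibration, and held-out evaluation sets.
x_fit, y_fit = x[:500], y[:500]
x_cal, y_cal = x[500:1000], y[500:1000]
x_new, y_new = x[1000:], y[1000:]

coeffs = np.polyfit(x_fit, y_fit, 1)
radius = conformal_radius(y_cal - np.polyval(coeffs, x_cal), alpha=0.05)

# Interval for each new point is prediction +/- radius; check empirical coverage.
pred = np.polyval(coeffs, x_new)
coverage = float(np.mean(np.abs(y_new - pred) <= radius))
print(round(float(radius), 3), round(coverage, 3))
```

The guarantee is marginal: it holds on average over exchangeable data, not for each batch or each defect class separately, so batch-level bias still has to be checked on its own.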

Industrial Deployment Roadmap

The path to widespread application of AI in composites needs to be deliberate and risk-aware, so that ambition is realized without jeopardizing safety and dependability. Drawing on examples from the aerospace, automotive, and energy industries, a roadmap for a gradual industrial transition to AI is taking shape (see Table 4). Each phase balances technological ambition with safety and explainability.

Phase I (2025-2026) - Validation Pilots: In this phase, steps of legacy workflows are replaced with containerized AI components, e.g., vision transformers as defect detection modules or PINNs for flow-front modeling. These pilots are typically run by domain experts in human-in-the-loop settings, where model decisions are compared against manual inspections. This phase builds user trust and exposes integration bottlenecks.

Phase II (2027-2028) - Hybrid Digital Twins: The second phase introduces federated digital twins, in which AI agents communicate across different factories or production lines. These twins incorporate edge reinforcement learning agents that adjust process parameters in real time. Risk is mitigated through differential privacy, certified prediction intervals, and fail-safe autonomy at the edge.

Phase III (2029+) - Autonomous Factories: In the final phase, AI coordinates closed-loop design-to-manufacture systems. Models process sensor streams, optimize layouts, simulate performance, and dispatch robotic actions without a human in the loop. Explainable AI protocols, conformal prediction, and ethics standards support compliance.

This gradual process means that AI does not merely supplement current knowledge but eventually becomes a trusted co-pilot in composite engineering.
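The Phase I comparison of model decisions against manual inspections can be quantified with a chance-corrected agreement score. The sketch below computes Cohen's kappa for two label sequences. The choice of kappa, the function name `cohen_kappa`, and the example labels are illustrative assumptions, not part of the roadmap itself.

```python
from collections import Counter

def cohen_kappa(model_labels, inspector_labels):
    """Chance-corrected agreement between model and inspector decisions."""
    if len(model_labels) != len(inspector_labels) or not model_labels:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(model_labels)
    observed = sum(m == h for m, h in zip(model_labels, inspector_labels)) / n
    model_counts = Counter(model_labels)
    human_counts = Counter(inspector_labels)
    # Agreement expected if both raters labelled independently at their own rates.
    expected = sum(model_counts[c] * human_counts[c] for c in model_counts) / n**2
    if expected == 1.0:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical pilot: ten parts inspected by the model and by a technician.
model = ["defect", "ok", "ok", "defect", "ok", "ok", "ok", "defect", "ok", "ok"]
human = ["defect", "ok", "ok", "ok", "ok", "ok", "ok", "defect", "ok", "defect"]

kappa = cohen_kappa(model, human)
print(round(kappa, 3))
```

Raw agreement in this example is 80%, but because most parts are defect-free the chance-corrected score is considerably lower, which is the kind of gap a validation pilot is meant to expose.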