3. Stage 1: Shannon Information and Riemann Surface of Logarithms

By the Hinton Hypothesis and its extension stated in Section 1, we assume that there is an AI system which can duplicate a scientific theory such as quantum electrodynamics (QED). Regardless of the issue of understanding, what the AI duplicates is much of the information from QED. Shannon defines the notion of information mathematically in terms of the logarithm of probability. Self-information is mathematically defined as follows:

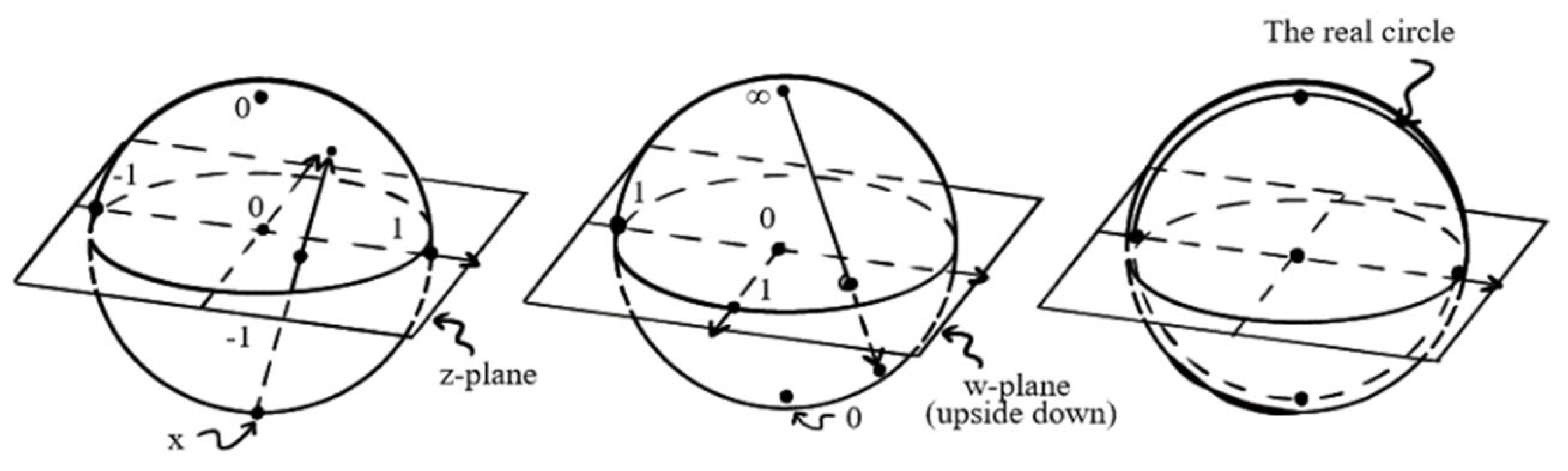

We know that in LLMs, the transformer embeds the input into vectors and associates them with a probability distribution. Thus, we may obtain the corresponding self-information by the Shannon definition. In complex analysis, the logarithm provides a standard example of a Riemann surface. Specifically, because the exponential function is periodic with period 2πi, the complex logarithm is many-valued; accordingly, its Riemann surface has multiple sheets and, in this sense, multiple zero points. This characterization is particularly important in machine learning.
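As a minimal sketch of the step from a probability distribution to self-information, the following Python fragment applies the standard Shannon form, I(x) = -log p(x), to a softmax distribution. The logits are hypothetical illustrative values, and the function names `softmax` and `self_information` are assumptions introduced here rather than part of any particular LLM implementation.

```python
import math


def softmax(logits):
    """Turn raw scores into a probability distribution (numerically stable)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def self_information(p, base=2.0):
    """Shannon self-information I(x) = -log_base p(x); bits when base is 2."""
    if not 0.0 < p <= 1.0:
        raise ValueError("probability must lie in (0, 1]")
    return -math.log(p) / math.log(base)


# Hypothetical scores for three candidate tokens (illustrative values only).
logits = [2.0, 1.0, 0.0]
probs = softmax(logits)
info = [self_information(p) for p in probs]

for p, i in zip(probs, info):
    print(f"p = {p:.4f}  ->  I = {i:.4f} bits")
```

The less probable an outcome, the larger its self-information; an outcome with probability 1 carries none.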

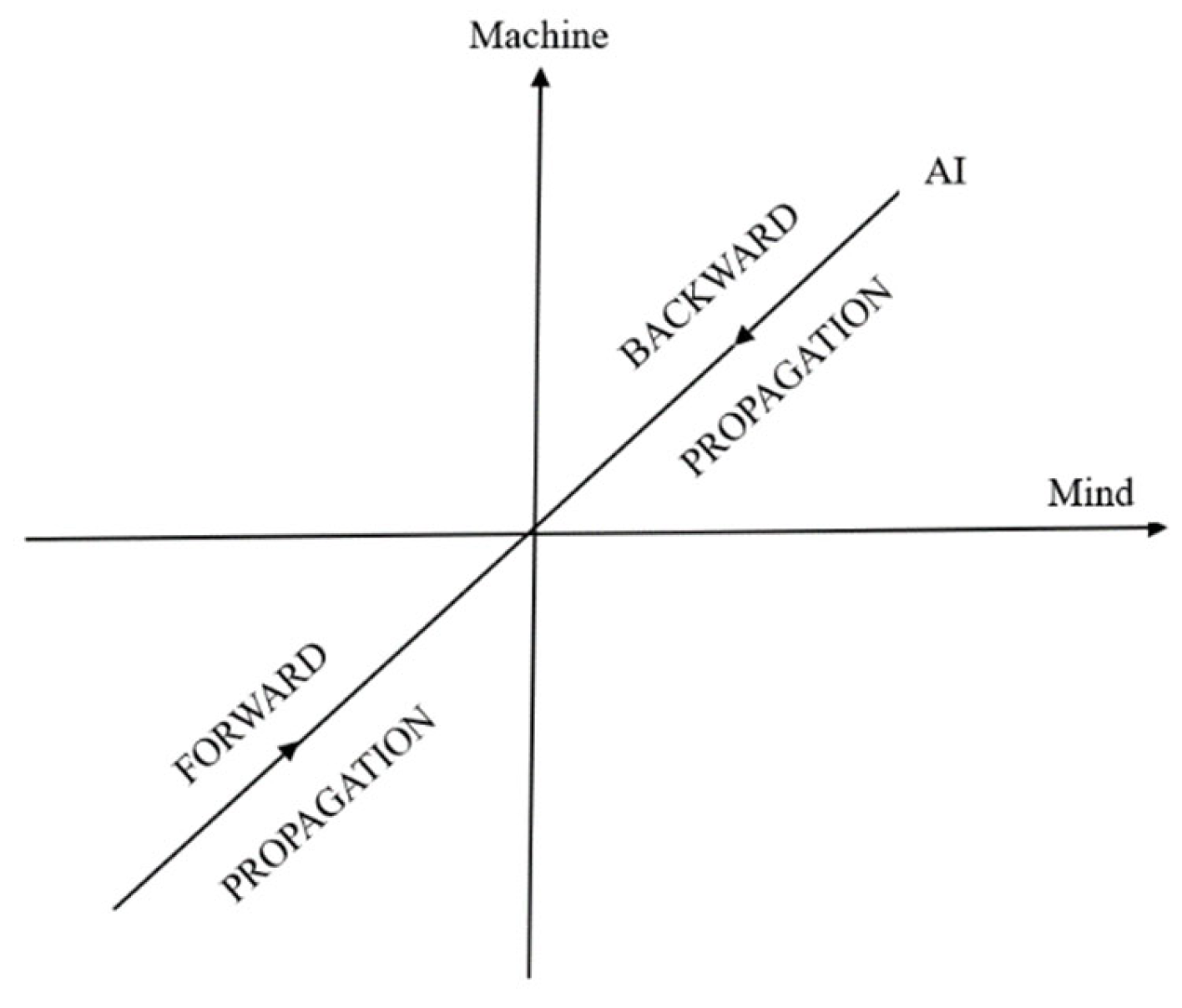

Analogical analysis is an essential method in advancing sciences, even more so for interdisciplinary studies and integration science. Not only is it important to new scientific discoveries, but it is also a cognitive routine for us to understand a structure embodied in different theories. This idea conforms to the structuralism of the Bourbaki school of mathematical thought. Suppose an AI-agent is trained to learn some particular structure, say, the gauge structure with U(1) symmetry. This structure can be learned from the context of QED, or from other contexts such as market dynamics (Yang, 2023, 2024) or reasoning dynamics (Yang, 2024). Training and learning processes of a structure from different contexts are not necessarily periodic. However, from cognitive perspectives, multiple trainings are obviously helpful to our understanding. Thus, mathematically, multiple zero points of the Riemann surface of the logarithmic function allow repeated learning over multiple trainings. In general, the stronger the training of an AI-agent, the better the cognitive attractor.

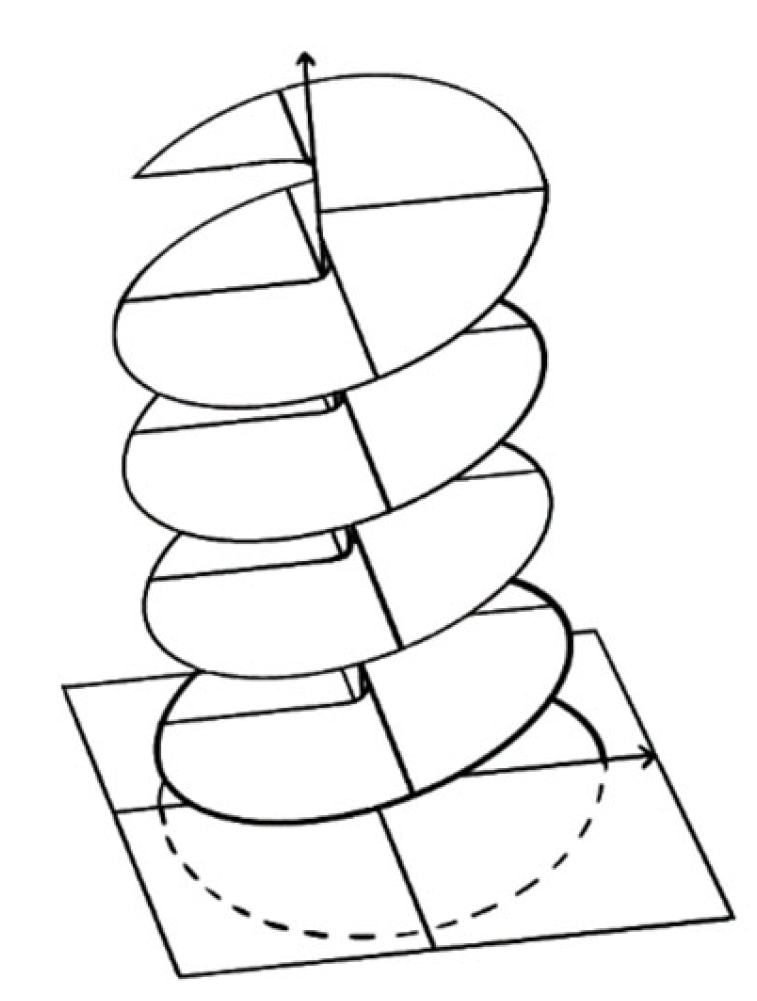

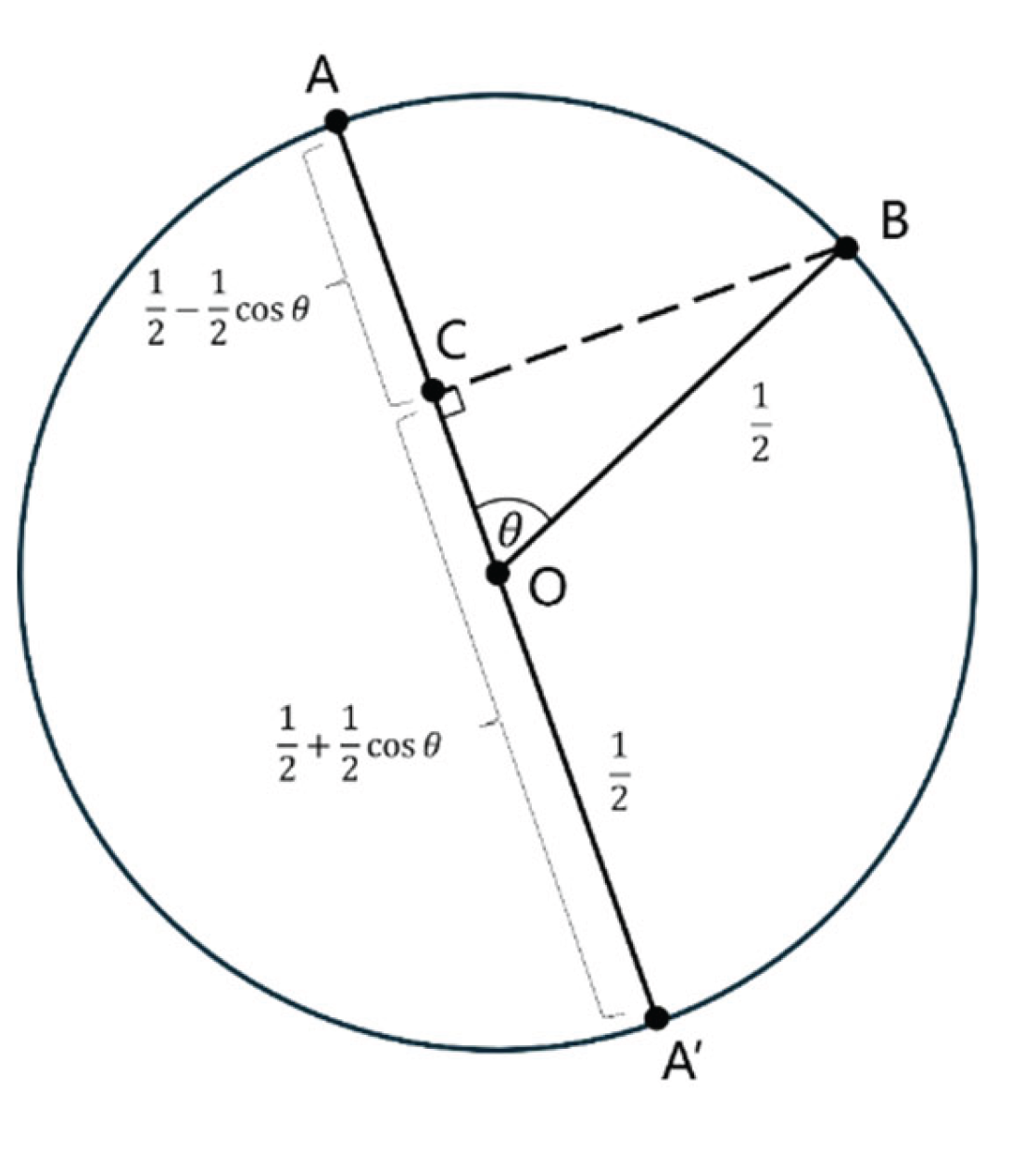

More interestingly, and ideally, assume that after repeated duplications of the same structure from multiple contexts, the AI-agent starts to understand the structure as Hinton predicts. If this happened, then the AI-agent could apply this structure to develop new contexts; i.e., to develop new theories. In this case, we may reasonably assume the learning processes are periodic with certain probabilities. As such, the information duplications form a perfect logarithmic function. The logarithmic function is a many-valued function, whose analytic continuation is defined on a special Riemann surface. To explain, let us quote from Penrose (2004, §8.1):

“There is a way to understand what is going on with this analytic continuation of the logarithm function – or of any other ‘many-valued function’ – in terms of what is called Riemann surface. Riemann’s idea was to think of such functions as being defined on a domain which is not simply a subset of the complex plane, but as a many-sheeted region. In the case of log z, we can picture this as a kind of spiral ramp flattened down vertically to the complex plane. I have tried to indicate this in Fig. 8.1 (see Figure 2 below). The logarithm is single-valued on this winding many-sheeted version of the complex plane because each time we go around the origin, and 2πi has to be added to the logarithm, we find ourselves on another sheet of the domain. There is no conflict between the different values of the logarithm now, because its domain is this more extended winding space – an example of a Riemann surface – a space subtly different from the complex plane itself.”
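The sheet structure described in the quotation can be checked numerically. The sketch below is an illustration only: the helper names `log_branch` and `continued_log` are assumptions introduced here. It follows the logarithm continuously around the unit circle and shows that each full turn about the origin adds 2πi, while every branch still exponentiates back to the same point z.

```python
import cmath
import math


def log_branch(z, k):
    """Value of the logarithm on sheet k: principal value plus 2*pi*i*k."""
    return cmath.log(z) + 2j * math.pi * k


def continued_log(windings, steps_per_turn=360):
    """Follow log z continuously along the unit circle, starting at z = 1.

    Returns the value reached after `windings` counterclockwise turns.
    """
    value = 0j  # log 1 = 0 on the starting sheet
    prev = 1 + 0j
    for n in range(1, windings * steps_per_turn + 1):
        z = cmath.exp(2j * math.pi * n / steps_per_turn)
        # The ratio z / prev stays close to 1, far from the branch cut,
        # so its principal logarithm is the true local increment.
        value += cmath.log(z / prev)
        prev = z
    return value


# Every sheet maps back to the same point under the exponential.
z = 1 + 1j
for k in range(-1, 3):
    w = log_branch(z, k)
    print(k, w, cmath.exp(w))

# Two turns around the origin land two sheets up, at roughly 4*pi*i.
print(continued_log(2))
```

Stepping along the path in small increments is a design choice: it avoids the jump that the principal branch `cmath.log` makes at the negative real axis, which is precisely the discontinuity the Riemann surface removes.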