1. Introduction

Robot-based reinforcement learning (RL) [1,2] usually depends on simulation models for learning robotic applications and transferring the learned knowledge to real-world robots. This transfer is a critical stage, and most simulation frameworks struggle to show how learned behaviors can be moved from simulation models to real robots. One of the main challenges is that currently available robotics simulators cannot fully capture the varying dynamics and intrinsic parameters of the real world. Therefore, agents trained in simulation typically cannot be generalized directly to the real world due to the domain gap (reality gap) [2] introduced by the simulators’ discrepancies and inaccuracies. To overcome this issue, experimenters must add further steps to the learning task by applying sim-to-real [3] or domain adaptation [4] techniques to transfer the learned policies from simulation to the real world.

Even after addressing these concerns, one key challenge in real-world robotic learning is managing sensorimotor data in the context of real-time RL scenarios [5]. In robotic RL, ‘real-time’ refers to the requirement that the robot’s decision-making and execution of actions occur within a specific time frame set by the pace of the environment. This pace is essential for the robot to interact with its environment effectively, ensuring that the processing of sensory data and the execution of actuator responses are both timely and accurate. This aspect is particularly critical when creating simulation-based learning tasks to transfer the learning to real-world robots. Currently, in most simulation-based learning tasks, computations related to environment-agent interaction are performed sequentially. Therefore, to comply with the Markov Decision Process (MDP) architecture [6], which assumes no delay between observing and acting, most simulation frameworks pause the simulation to construct the observations, compute the reward, and perform other computations. In contrast, time advances continuously between agent and environment-related interactions in the real world. Hence, real-world learning is typically done with delayed sensorimotor information, potentially impacting the synchronization and effectiveness of the agent’s learning process [7].

Therefore, these turn-based systems do not mirror the continuous and dynamic nature of real-world interactions and can lead to a mismatch in the timing of sensorimotor events compared to real-world situations. These issues stem from the agent receiving outdated information about the state of the environment and the robot not receiving the proper actuation commands to execute the task. While several solutions exist in the literature, they predominantly focus on single-robot scenarios or systems comprising robots from the same manufacturer, limiting their applicability in heterogeneous multi-robot settings [8]. Furthermore, they often overlook the computational challenges inherent in scaling to multiple robots, particularly the CPU bottlenecks that can arise from processing data from various sensors, such as vision systems, which may require CPU-intensive preprocessing operations [9,10].

Another challenge in this process is that simulation frameworks and real robots are programmed in different languages. Most current simulation frameworks used in RL are implemented in languages like Python, C#, or C++. However, real robots typically have proprietary programming languages such as RAPID, Karel, and URScript or may utilize the Robot Operating System (ROS) for communication and control. Therefore, the learned knowledge cannot be transferred directly without recreating the RL environment in the recommended robot programming language to communicate with the physical hardware [7]. Furthermore, this challenge also applies when learning needs to happen directly in the real world without relying on knowledge transferred from a digital model. Such cases include tasks involving liquids, soft fabrics, or granular materials, where the physical properties are challenging to model precisely in simulations [11]. In these scenarios, the experimenters must establish a communication interface with the physical robots to enable the agent to interact with the real world directly.

Fortunately, utilizing the Robot Operating System (ROS) presents a promising solution to some of the earlier challenges. ROS is widely acknowledged as the standard for programming real robots and receives massive support from manufacturers and the robotics community, which makes it an ideal platform for constructing learning tasks applicable in both simulations and real-world settings. Currently, numerous simulation frameworks are available for creating RL environments with ROS, with most prioritizing simulation over real-world applications. A fundamental limitation of these simulation frameworks, such as OpenAI_ROS (http://wiki.ros.org/openai_ros), gym-gazebo [12], ros-gazebo-gym (https://github.com/rickstaa/ros-gazebo-gym), and FRobs_RL [13], lies in their inability to support creating real-time RL simulation environments due to their use of turn-based learning approaches. Therefore, the full potential of ROS for setting up learning tasks that can easily transfer the learning to the real world is not realized. Furthermore, ROS currently lacks Python bindings for some crucial system-level features needed to create RL environments, such as launching multiple roscores, nodes, and launch files, which are confined to manual command-line interface (CLI) approaches. Moreover, the potential of ROS for creating real-time RL environments with precise time synchronization has not yet been thoroughly studied. Such synchronization is essential for aligning the sequence and timing of sensor data acquisition, decision-making processes, and actuator responses, thereby reducing latency in agent-environment interactions. Addressing these gaps in ROS could further streamline the development of effective and efficient RL environments for robotics.

Therefore, this study addresses the question of “how to set up ROS-based learning tasks to learn across simulation and real robots”. It proposes a comprehensive framework for creating RL environments that cater to both simulation and real-world applications. This includes support for ROS-based concurrent environment creation, which is a requirement for multi-robot/task learning techniques such as multi-task learning [14] and meta-learning [15], enabling the framework to handle learning across multiple simulated and/or real RL environments simultaneously. Furthermore, the study explores how this framework can be utilized to create real-time RL environments, leveraging a ROS-centric environment implementation strategy that narrows the gap when transferring learning from simulation to the real world. This aspect is vital for reducing latency in agent-environment interactions, which is crucial for the success of real-time tasks.

Furthermore, the study introduces benchmark learning tasks to evaluate and demonstrate some of the use cases of the proposed approach. These learning tasks are built around the ReactorX200 (Rx200) robot by Trossen Robotics and the NED2 robot by Niryo and are used to explain the design choices. This study also lays the groundwork for multi-robot/task learning techniques, allowing for the sampling of experiences from multiple concurrent environments, whether they are simulations, real, or a combination of both.

Summary of Contributions:

Unified RL Framework: Development of a comprehensive, ROS-based framework (UniROS) for creating reinforcement learning environments that work seamlessly across simulation and real-world settings.

Concurrent Env Learning Support: Enhancement of the framework to support vectorized [16], multi-robot/task learning techniques, enabling efficient learning across multiple environments.

Real-Time Capabilities: Introduction of a ROS-centric implementation strategy for real-time RL environments, ensuring reduced latency and synchronized agent-environment interactions.

Benchmarking and Evaluation: Empirical demonstration of the proposed framework through benchmark learning tasks that address these challenges in three distinct scenarios.

2. Background

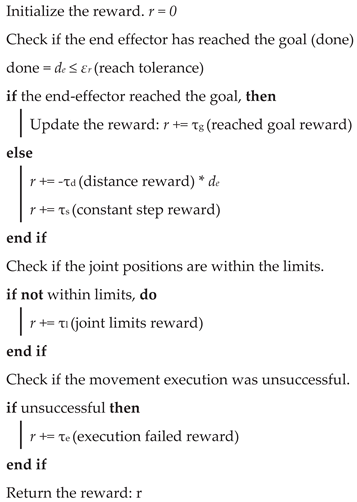

2.1. Formulation of Reinforcement Learning Tasks for Robotics

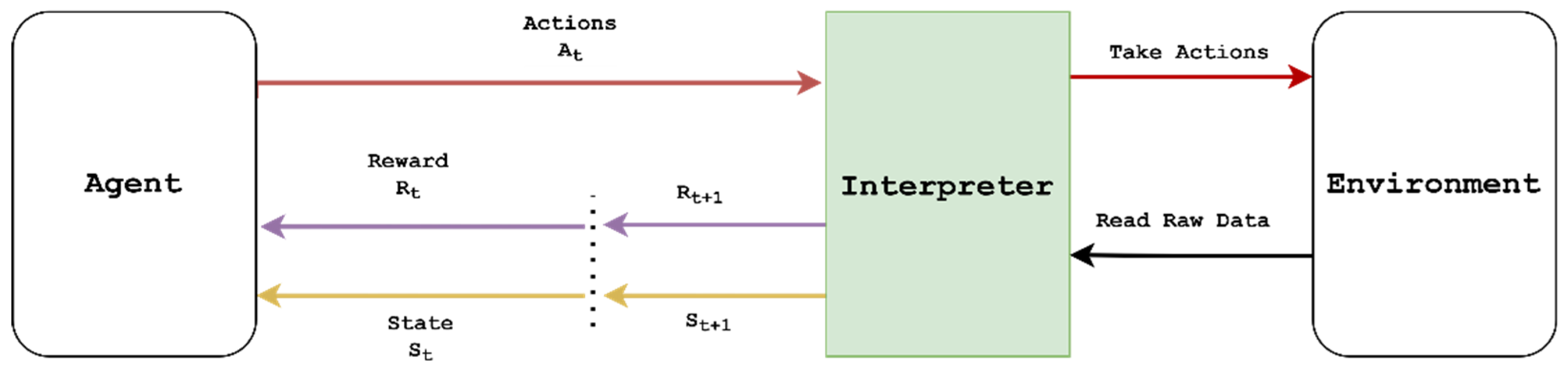

A reinforcement learning task comprises two essential components, an “Agent” and an “Environment”, which interact with each other as modeled by the Markov decision process (MDP), as illustrated in Figure 1. In an MDP, the agent interacts with the environment at discrete time steps $t = 0, 1, 2, \dots$, where at each time step $t$, the environment receives an action $a_t$ and returns the state of the environment $s_t$ and a scalar feedback reward $r_t$ back to the agent. The agent follows a stochastic policy $\pi$ characterized by a probability distribution $\pi(a_t \mid s_t)$ to choose action $a_t$. The execution of the action pushes the environment into a new state $s_{t+1}$ and produces a new scalar reward $r_{t+1}$ at the next time step $t+1$ according to a state transition probability $p(s_{t+1} \mid s_t, a_t)$. The primary goal of the agent is typically to find the optimal policy that maximizes the total discounted reward, also defined as the expected return $\mathbb{E}\left[\sum_{k=0}^{\infty} \gamma^{k} r_{t+k+1}\right]$, where $\gamma \in [0, 1]$ is the discount factor.
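As a small worked illustration of the discounted return, the following sketch sums a finite reward sequence weighted by powers of the discount factor. The function name and the sample rewards are illustrative assumptions and do not come from the framework.

```python
def discounted_return(rewards, gamma):
    """Return sum_k gamma**k * rewards[k] for one finite episode."""
    g = 0.0
    # Work backwards through the episode: G_k = r_k + gamma * G_{k+1}.
    for r in reversed(rewards):
        g = r + gamma * g
    return g


# Three rewards with gamma = 0.5: 1.0 + 0.5 * 0.0 + 0.25 * 2.0
episode_return = discounted_return([1.0, 0.0, 2.0], gamma=0.5)
```

With a discount factor below one, later rewards contribute less, which is why the agent favors reaching the goal in fewer steps.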

However, the agent typically cannot directly interact with the environment and requires the assistance of an interpreter to mediate between them. The interpreter’s role is to transform raw environmental states into a format compatible with the agent and to process the actions received from the agent into commands that can affect the elements in the environment. Therefore, from the agent’s perspective, the combination of interpreter and environment constitutes the effective “RL environment,” replacing the abstract “environment” in the classical MDP formulation. In robotics, this process involves mapping observations and actions to their real-world or simulated counterparts, ensuring the RL agent sends actuator commands to control the robots and receives accurate sensor readings as observations from the real world or simulation.
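The interpreter role described above can be sketched as a thin translation layer. The class below is a hypothetical illustration rather than part of UniROS: it assumes the agent emits normalized actions in [-1, 1] and that the raw state is a dictionary with `joint_positions` and `goal` entries.

```python
class Interpreter:
    """Hypothetical mediator between an agent and a robot (all names are illustrative)."""

    def __init__(self, joint_lower, joint_upper):
        self.joint_lower = joint_lower
        self.joint_upper = joint_upper

    def action_to_command(self, action):
        """Map each normalized action in [-1, 1] to a joint position within its limits."""
        command = []
        for a, low, high in zip(action, self.joint_lower, self.joint_upper):
            a = max(-1.0, min(1.0, a))  # clip out-of-range actions
            command.append(low + (a + 1.0) * 0.5 * (high - low))
        return command

    def state_to_observation(self, raw_state):
        """Flatten raw sensor readings into the vector the agent consumes."""
        return list(raw_state["joint_positions"]) + list(raw_state["goal"])
```

In a ROS setting, the command would be published to a controller and the raw state would be assembled from subscribed topics; both steps are omitted here.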

In most robot-based learning tasks, the state of the environment is not fully observable, and the agent relies on real-time sensor data to partially observe the environment using an observation vector. This observation space generally contains continuous values, as it usually holds perception and sensory data. Therefore, most robot-based learning tasks incorporate deep learning techniques as function approximators in conjunction with reinforcement learning (also known as deep reinforcement learning, or DRL) to effectively handle continuous state spaces. These methods typically center on learning the optimal policy either by evaluating action values, as in the Deep Q-Network (DQN) [17], or by directly parameterizing the policy with neural networks and optimizing it, as in the Twin Delayed Deep Deterministic Policy Gradient (TD3) [18] algorithm. Currently, various third-party frameworks provide libraries for state-of-the-art DRL algorithms. These frameworks provide clean, robust code with simple and user-friendly APIs (Application Programming Interfaces), enabling users to experiment with and monitor the learning of various DRL algorithms. Therefore, if users are not benchmarking their custom algorithms, they can employ the functionality of these packages, such as Stable Baselines3 (SB3) [19], Tianshou [20], Tensorforce [21], CleanRL [22], or RLlib [23], to effectively train robotic agents.

2.2. Applying Reinforcement Learning to Real-World Robots

Training real robots directly using reinforcement learning from scratch can be challenging and is often not considered due to the random nature of exploration in RL algorithms [24]. Initially, the agent must explore the environment thoroughly to collect data, which often requires a certain level of randomness in the agent’s actions. However, as the learning progresses, it is desirable for the agent’s actions to become less random and more consistent with each iteration [6]. This is vital to ensure that the agent does not exhibit unexpected or unpredictable behavior when deployed in the real world. Nevertheless, with careful safety considerations, handpicked learning parameters, and a well-defined observation space, action space, and reward structure for the task, it is possible to train a robot in the real world directly [25,26]. Furthermore, incorporating techniques such as Curriculum Learning [27], where the agent handles complex tasks by breaking them down into simpler subtasks and gradually increasing the difficulty during learning, can help train robots directly without a simulation environment [28].

While it is possible to learn directly with real robots, there are several constraints in applying RL to real-world learning tasks. One of the significant hurdles is ensuring the safety of the robot, its platform, and the surrounding environment. Due to the arbitrary nature of the initial learning stage, where the agent attempts to learn more about the environment through random actions, the robot can potentially cause damage to itself and nearby expensive equipment. Another challenge is sample inefficiency in real-world RL environments. Most RL-based algorithms require a large number of samples to find the optimal policy. However, unlike in a digital simulation model, it is difficult for real-world RL environments to provide a fast and nearly unlimited number of samples for the agent to learn. It becomes especially apparent in episodic tasks that require environment resetting at the end of each episode. This process is relatively straightforward in a digital model where the environment can be reset via the physics simulator’s API and a function that programmatically sets the initial conditions of the environment. However, in the real world, it is a tedious task where an operator may often have to constantly monitor and physically rearrange the environment at the end of each training episode. Similarly, even if it is possible to speed up the learning using an environment vectorizing approach for sampling experience with multiple concurrent environment instances, the constant monitoring and associated costs may render it unfeasible for many robotics tasks.

2.3. Use of Simulation Models for Robotic Reinforcement Learning

Simulation-based robot learning begins by establishing a programmatic interface with a physics simulator [29] to read sensory data and execute actuator commands. Such interfaces enable the creation of RL environments that follow the conventional reinforcement learning architecture illustrated in Figure 1. One of the most effective structures for creating RL environments was introduced with the OpenAI Gym [30]. It became the widely adopted standard for RL-based environment creation, and a significant portion of research in the field follows a variation of this standard or builds upon the Gym package for different robotic and physics simulators. Currently, a plethora of RL simulation frameworks [31] are available for robotic task learning and typically provide prebuilt environments or the tools to create custom environments. The prebuilt learning tasks are primarily used for benchmarking new learning algorithms and are extensively employed by researchers to demonstrate RL approaches in robotics. These learning tasks are popular because they relieve users of many task setup details, such as defining the observation space, action space, and reward architecture. However, custom environment creation is generally more challenging, as it requires users to become familiar with the API of the RL simulation framework and with task setup details, which also include providing a detailed description of the robot and its surrounding environment in a format [32] compatible with the chosen simulator. Once the environment is created, users can utilize third-party RL library packages such as SB3 or custom learning algorithms to find the optimal policy for the custom learning task.
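To make the Gym-style structure concrete, the sketch below implements a toy one-dimensional reach task that exposes `reset()` and `step()` without importing Gym. It assumes the classic four-value `step()` return (observation, reward, done, info); the class name, task, and parameters are illustrative and not taken from any of the cited frameworks.

```python
import random


class ReachLineEnv:
    """Toy 1-D reach task with a Gym-style reset()/step() interface."""

    def __init__(self, goal=1.0, tolerance=0.05, max_steps=50, seed=None):
        self.goal = goal
        self.tolerance = tolerance
        self.max_steps = max_steps
        self.rng = random.Random(seed)
        self.position = 0.0
        self.steps = 0

    def reset(self):
        # Programmatic reset: sample a start position and clear the step counter.
        self.position = self.rng.uniform(-1.0, 0.0)
        self.steps = 0
        return [self.position, self.goal]

    def step(self, action):
        action = max(-0.1, min(0.1, action))  # bounded action space
        self.position += action
        self.steps += 1
        distance = abs(self.goal - self.position)
        reward = -distance  # dense reward: closer is better
        done = distance < self.tolerance or self.steps >= self.max_steps
        return [self.position, self.goal], reward, done, {}


# Roll out one episode with a trivial "always move right" policy.
env = ReachLineEnv(seed=0)
observation = env.reset()
done = False
while not done:
    observation, reward, done, info = env.step(0.1)
```

A learning library would replace the fixed action with the output of a policy and use the returned reward to update it.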

3. Related Work

Most RL-based simulation frameworks for robots built on simulators such as MuJoCo [33], PyBullet [34], and Gazebo [35] prioritize offering accelerated simulations for developing complex robotic behaviors, often with less emphasis on the seamless transition of policies to real-world robots. A recent advancement in this field is Orbit [36], a framework built upon Nvidia’s Isaac Gym [37] to provide a comprehensive modular environment for robot learning with photorealistic scenes. It stands out for its extensive library of benchmarking tasks and for capabilities that potentially ease policy transfer to physical robots through ROS integration. However, at the current stage, its focus remains mainly on simulation rather than direct real-world learning. As such, while it provides tools for simulated training and real-world applications, it may not yet serve as a complete solution for real-world robotics learning without additional customization and system integration efforts. Furthermore, the high hardware requirements (https://docs.omniverse.nvidia.com/isaacsim/latest/installation/requirements.html#isaac-sim-requirements-isaac-sim-system) of Isaac Sim may restrict accessibility for many researchers and roboticists, limiting its widespread adoption at present.

SenseAct [7] is a notable contribution that highlighted the challenges of real-time interactions with the physical world and the importance of sensor-actuator cycles in realistic settings. Its authors proposed a computational model that utilizes multiprocessing and threading to perform asynchronous computations between the agent and the real environment, aiming to minimize the delay between observing and acting. However, this design is primarily tailored for single-task environments and shows limitations when extended to multi-robot/task research, including learning together with simulation frameworks or concurrently with multiple environments. This limitation partly stems from its architecture, which allocates a single process with separate threads for the agent and the environment. The scalability of this approach, particularly for concurrent learning with multiple RL environments, is hindered by Python’s Global Interpreter Lock (GIL) (https://wiki.python.org/moin/GlobalInterpreterLock), which restricts parallel execution of CPU-intensive tasks. Hence, incorporating multiple RL environment instances within a single process is not computationally efficient, especially when real-time interactions are critical. Furthermore, the difficulty of synchronizing the different processes and establishing communication layers with various robots and sensors from different manufacturers may limit the potential of the proposed approach.

Table 1 provides a comprehensive comparison between UniROS and existing RL frameworks with a focus on ROS integration, real-time capabilities, and multi-robot support. Unlike most prior tools, which are either simulation-centric or designed for single-robot real-world use, UniROS is uniquely positioned to support scalable, low-latency training across both simulation and physical robots concurrently.

Beyond comprehensive frameworks, several research efforts have tackled specific aspects of bridging simulation and real-world robot learning. Many domain randomization approaches [38–40] either dynamically adjust simulation parameters based on real-world data or vary simulation parameters to improve sim-to-real transfer. However, these methods often require extensive manual tuning of randomization ranges and do not address the fundamental timing mismatches between simulation and real-world execution. Other domain adaptation approaches [41,42] leverage demonstrations in both simulation and real-world settings to accelerate robot learning, but they require separate implementations for each domain. Since these approaches do not provide a unified interface for concurrent learning across simulation and real environments, they highlight the need for more efficient frameworks that can leverage both simulation and real-world data concurrently.

4. Learning Across Simulated and Real-World Robotics Using UniROS

This section provides a high-level overview of the proposed UniROS framework formulation, which facilitates learning across both simulated and real-world domains. The aim is to present a comprehensive overview of the framework’s architecture and functionalities, setting the stage for a more detailed examination of its components in subsequent sections.

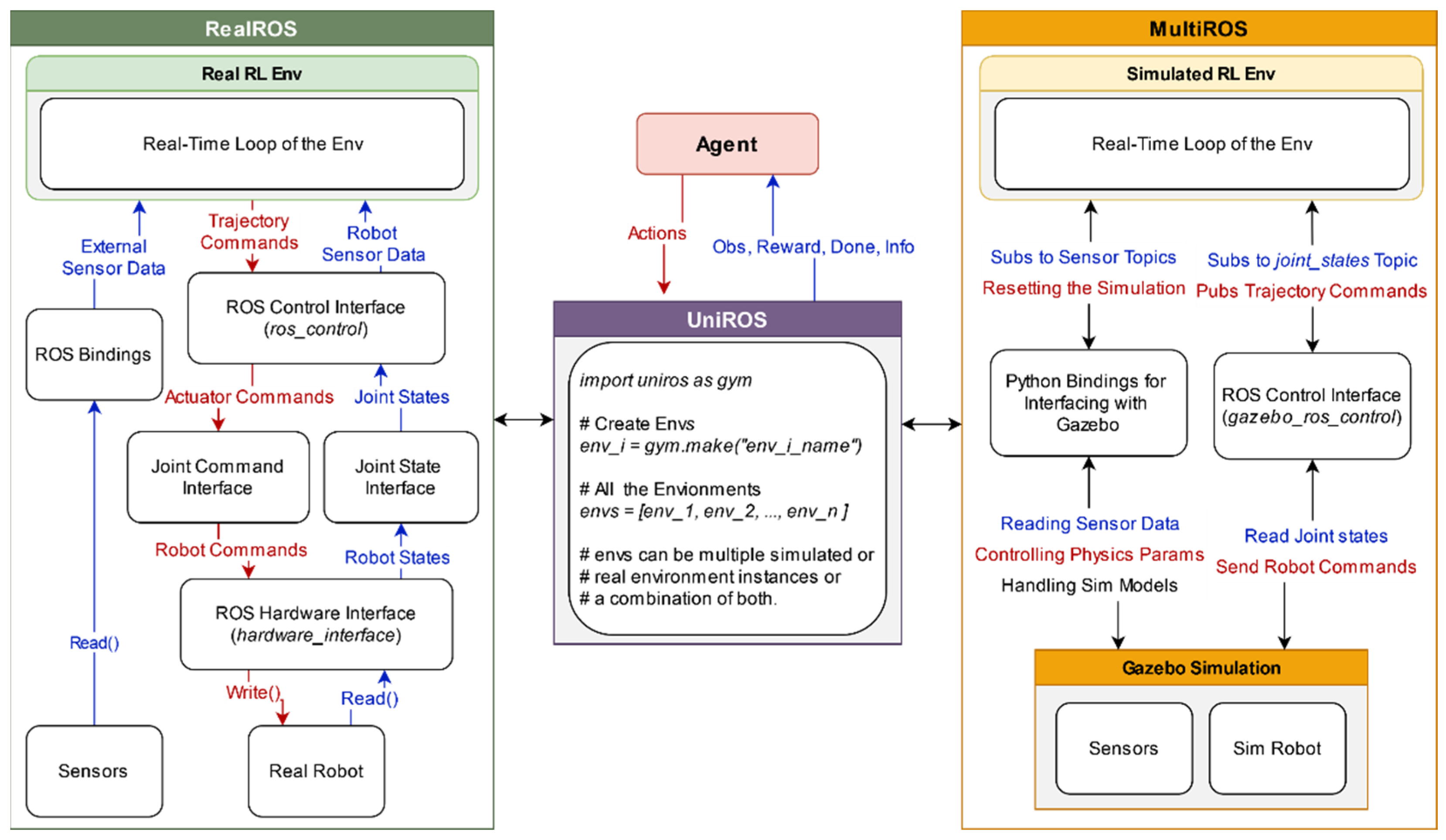

4.1. Unified Framework Formulation

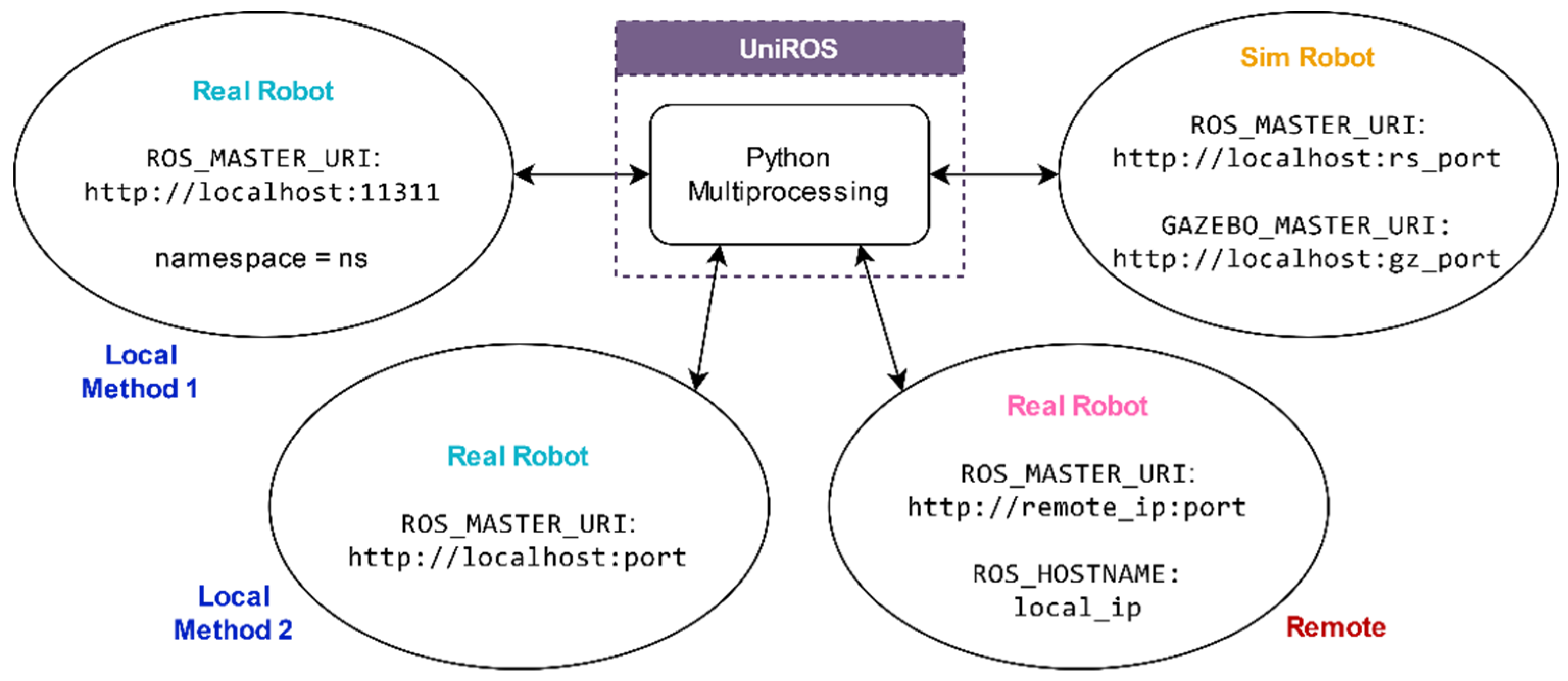

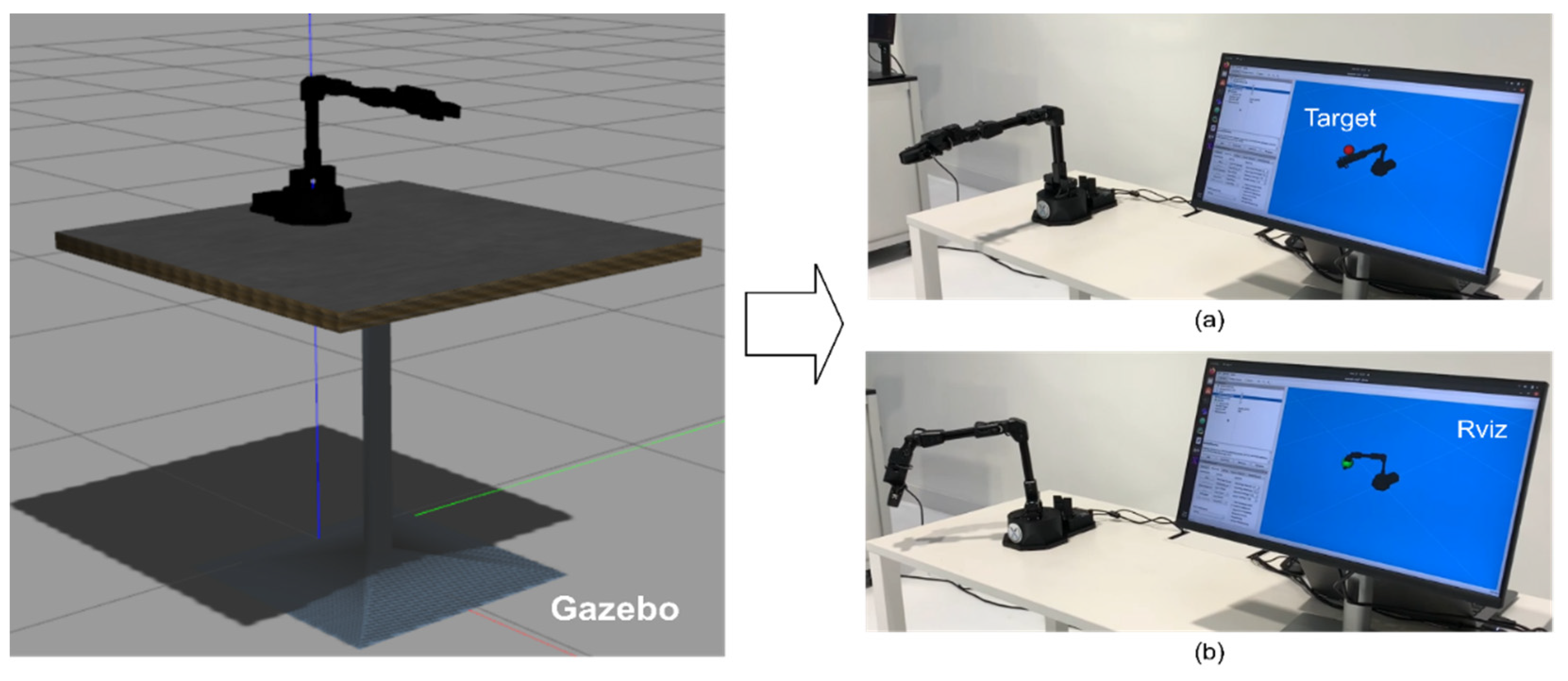

As illustrated in Figure 2, a ROS-based unified framework is proposed, containing two distinct yet interoperable packages to bridge the learning across simulated and real-world RL environments. Our prior work, MultiROS [43], provides simulation environments using ROS and Gazebo as its core. In contrast, the newly introduced RealROS package, detailed in Section 5, is designed explicitly for real-world learning applications. The intuition behind dividing the framework into two packages is to offer users flexibility. Depending on specific requirements, users can utilize each package independently, focusing solely on either simulated or real-world scenarios, or combine them for comprehensive simulation-to-reality learning tasks. Furthermore, Section 7 presents a ROS-centric RL environment implementation strategy to bridge the learning gap between the two domains. It aligns the conditions and dynamics of the Gazebo simulation more closely with those of the real world to allow for a smoother deployment of policies from simulation to the real world. This environment implementation strategy can also be employed with the RealROS package to develop and deploy robust policies by sampling directly in real-world environments without relying on a simulated approach.

4.2. Modularity of the Framework

The architecture of both MultiROS and RealROS leverages a modular design by segmenting the RL environment creation into three distinct classes. The primary focus of this segmentation is to enable flexibility and encourage efficient code reuse during the design of RL environments. These segmented environment classes (Env) provide a structure for users to format their code easily and minimize the system integration efforts when transferring policies from the simulation interface to the real world. The framework therefore comprises three major components. (A) The Base Env is the foundational layer of the RL environment and provides the main interface of the standard RL structure for the agent. It inherits its core functionality from the OpenAI Gym and includes static code essential for the basic functioning of any RL task. (B) The Robot Env, which is built upon the Base Env, outlines the sensors and robots used in the learning task. It encapsulates all the hardware components involved in the task and serves as the bridge that connects the RL environment with the ROS middleware suite. (C) The Task Env extends the Robot Env and specifies the structure of the task that the agent needs to learn, which includes learning objectives and task-specific parameters. These modular Envs provide a significant degree of flexibility, allowing users to create multiple Task Envs with a single Robot Env, as with the Fetch environments (https://robotics.farama.org/envs/fetch/index.html) (FetchReach, FetchPush, FetchSlide, and FetchPickAndPlace) of OpenAI Gym robotics. It is important to note that while each Task Env is compatible with its respective Robot Env (which may include multiple robots and different sensors), Task Envs are not universally interchangeable across different Robot Envs. Therefore, the modularity supports diverse task development on the basis of a specific Robot Env. The main reason for this composition is that the Base Env inherits from OpenAI Gym, which retains compatibility with third-party reinforcement learning frameworks such as Stable Baselines3, RLlib, CleanRL, and others.
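The three-layer composition can be sketched with plain Python inheritance. The classes below are simplified stand-ins, not the MultiROS or RealROS classes: the hook names (`_set_initial_state`, `_apply_action`, and so on) are hypothetical, the base class does not inherit from Gym, and a fake single joint replaces the ROS interface.

```python
class BaseEnv:
    """Stand-in for the Base Env: the fixed, agent-facing interface."""

    def reset(self):
        self._set_initial_state()
        return self._get_observation()

    def step(self, action):
        self._apply_action(action)
        observation = self._get_observation()
        return observation, self._compute_reward(observation), self._is_done(observation), {}


class SingleJointRobotEnv(BaseEnv):
    """Stand-in for a Robot Env: owns hardware access (a fake joint replaces ROS)."""

    def __init__(self):
        self.joint_position = 0.0

    def move_joint(self, delta):
        self.joint_position += delta  # a real Robot Env would send a ROS command

    def read_joint(self):
        return self.joint_position  # a real Robot Env would read sensor data


class ReachTaskEnv(SingleJointRobotEnv):
    """Stand-in for a Task Env: drive the joint to a goal position."""

    def __init__(self, goal, tolerance=0.01):
        super().__init__()
        self.goal = goal
        self.tolerance = tolerance

    def _set_initial_state(self):
        self.joint_position = 0.0

    def _apply_action(self, action):
        self.move_joint(action)

    def _get_observation(self):
        return [self.read_joint(), self.goal]

    def _compute_reward(self, observation):
        return -abs(observation[1] - observation[0])

    def _is_done(self, observation):
        return abs(observation[1] - observation[0]) < self.tolerance


class HoldStillTaskEnv(SingleJointRobotEnv):
    """A second Task Env on the same Robot Env: penalize drift away from zero."""

    def _set_initial_state(self):
        self.joint_position = 0.0

    def _apply_action(self, action):
        self.move_joint(action)

    def _get_observation(self):
        return [self.read_joint()]

    def _compute_reward(self, observation):
        return -abs(observation[0])

    def _is_done(self, observation):
        return False  # continuing task; an external step limit ends the episode
```

Both task classes reuse `move_joint` and `read_joint` unchanged, which mirrors how several Task Envs can share one Robot Env while remaining tied to that particular robot setup.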

4.3. Role of Concurrent Environments

In modern RL research, there is a growing interest in leveraging knowledge from multiple RL environments instead of training standalone models. One of the advantages of this approach is that it can improve the agent’s learning by generalizing knowledge across different scenarios [44]. Furthermore, combining concurrent environments with diverse sampling strategies can effectively speed up the agent’s learning process [45]. This approach can expose the agent to multiple tasks simultaneously rather than learning each task individually (multi-task learning). The same applies to meta-learning-based RL applications, where the agent can quickly adapt and acquire new skills in new environments by leveraging prior knowledge and experiences through learning-to-learn approaches. Another advantage of concurrent environments is scalability, which allows multiple robots to be trained in parallel, whether in a vectorized fashion or for different tasks or domain learning applications [46]. Therefore, creating concurrent environments is crucial for efficiently utilizing computing resources to accelerate learning in real-world applications where multiple robots need to be trained and deployed efficiently. Hence, this study investigated how to create ROS-based concurrent RL environments (sim and real) with seamless simultaneous communication between each environment, as described in Section 6.
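The idea of sampling from several environments at once can be illustrated with a minimal lockstep stepper. This sketch runs the environments sequentially in one process, so it only shows the batching interface and not true parallel execution; the class names are hypothetical and the toy environment stands in for a simulated or real robot task.

```python
class CounterEnv:
    """Toy environment: the state is a counter and the episode ends at `limit`."""

    def __init__(self, limit):
        self.limit = limit
        self.count = 0

    def reset(self):
        self.count = 0
        return self.count

    def step(self, action):
        self.count += action
        done = self.count >= self.limit
        return self.count, float(done), done, {}


class LockstepEnvs:
    """Steps several environments with one call and auto-resets finished ones."""

    def __init__(self, envs):
        self.envs = envs

    def reset(self):
        return [env.reset() for env in self.envs]

    def step(self, actions):
        observations, rewards, dones = [], [], []
        for env, action in zip(self.envs, actions):
            observation, reward, done, _ = env.step(action)
            if done:
                observation = env.reset()  # start the next episode immediately
            observations.append(observation)
            rewards.append(reward)
            dones.append(done)
        return observations, rewards, dones


# Two environment instances with different episode lengths.
batch = LockstepEnvs([CounterEnv(limit=2), CounterEnv(limit=3)])
first_observations = batch.reset()
```

Each `step` call returns one transition per environment, so a learner receives a batch of experience per action cycle regardless of where each instance is running.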

4.4. Python Bindings for ROS

One of the drawbacks of ROS is that some crucial system-level components required for creating RL environments do not have Python or C++ bindings. For example, executing and terminating ROS nodes or launch files requires terminal commands (CLIs). Similarly, running multiple roscore instances concurrently using Python or C++ interfaces and managing communication with each other is currently not natively supported in ROS. These functions also require command-line interfaces, making them undesirable for seamless RL environment creation. Therefore, the UniROS framework contains comprehensive Python-based bindings for ROS, enabling users to utilize the full potential of ROS for RL environment creation. Key features include the ability to launch multiple roscores on distinct or random ports without overlap, manage simultaneous communication between concurrent roscores, run roslaunch and ROS nodes with specific arguments, terminate specific ROS masters, nodes, or roslaunch processes within an environment, retrieve and load YAML files from a package to the ROS Parameter Server, and upload a URDF to the parameter server or process URDF data as a string.
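As a rough sketch of what launching a roscore on a non-overlapping port involves, the helper below picks a free TCP port and prepares the command and environment variables for a ROS 1 master. The function names are hypothetical and this is not the UniROS API; it relies only on the `roscore -p` option and the `ROS_MASTER_URI` environment variable, and it deliberately stops short of starting the process so that it runs without ROS installed.

```python
import os
import socket


def find_free_port():
    """Ask the operating system for an unused TCP port by binding to port 0."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.bind(("127.0.0.1", 0))
        return sock.getsockname()[1]


def roscore_launch_spec(port=None):
    """Return (command, environment) for starting a roscore on its own port."""
    if port is None:
        port = find_free_port()
    environment = dict(os.environ)  # copy so the caller's environment is untouched
    environment["ROS_MASTER_URI"] = f"http://localhost:{port}"
    command = ["roscore", "-p", str(port)]
    return command, environment


command, environment = roscore_launch_spec(port=11411)
```

On a machine with ROS 1 installed, the pair could be passed to `subprocess.Popen(command, env=environment)`, and nodes started with the same environment would then talk to that specific master. Note that a port found this way could be claimed by another program before the roscore starts, so a robust implementation would retry on failure.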

4.5. Additional Supporting Utilities

Apart from the stated ROS bindings, the framework also provides Python-based utilities that let users start creating environments quickly without spending much time on ROS implementations. These utilities also help users unfamiliar with ROS to create environments without expert knowledge of ROS programming. They include the ROS Controllers module, which allows comprehensive control over ROS controllers to load, unload, start, stop, reset, switch, spawn, and unspawn controllers. The ROS Markers module provides methods for initializing and publishing Markers or Marker arrays to visualize vital components of the task, such as the current goal, the pose of the robot’s end-effector, and the robot’s trajectory. This makes it easy to monitor the status of the environment by visualizing the Markers in Rviz (http://wiki.ros.org/rviz), a 3D visualization tool for ROS. The ROS Kinematics module provides forward kinematics (FK) and inverse kinematics (IK) functionality for robot manipulators, using the KDL (https://www.orocos.org/kdl.html) library to perform the kinematics calculations. The MoveIt module offers essential functionalities for managing ROS MoveIt [47] in manipulation tasks, including collision checking, planning, and executing trajectories. Additionally, common wrappers are included to limit the number of steps in the environment and to normalize action and observation spaces.
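The two kinds of wrappers mentioned above can be sketched as follows. These classes are illustrative stand-ins written without Gym, not the wrappers shipped with the framework; they assume a wrapped environment with `reset()` and a four-value `step()`, and observations given as lists with known per-element bounds.

```python
class TimeLimit:
    """Ends an episode after `max_steps` calls to step()."""

    def __init__(self, env, max_steps):
        self.env = env
        self.max_steps = max_steps
        self.elapsed = 0

    def reset(self):
        self.elapsed = 0
        return self.env.reset()

    def step(self, action):
        observation, reward, done, info = self.env.step(action)
        self.elapsed += 1
        if self.elapsed >= self.max_steps and not done:
            done = True
            info = dict(info, time_limit_reached=True)  # flag the artificial cut-off
        return observation, reward, done, info


class NormalizeObservation:
    """Rescales each observation element from [low, high] to [-1, 1]."""

    def __init__(self, env, low, high):
        self.env = env
        self.low = low
        self.high = high

    def _scale(self, observation):
        return [
            2.0 * (value - low) / (high - low) - 1.0
            for value, low, high in zip(observation, self.low, self.high)
        ]

    def reset(self):
        return self._scale(self.env.reset())

    def step(self, action):
        observation, reward, done, info = self.env.step(action)
        return self._scale(observation), reward, done, info
```

Because each wrapper exposes the same interface as the environment it wraps, they can be stacked, for example `TimeLimit(NormalizeObservation(env, low, high), max_steps=100)`.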

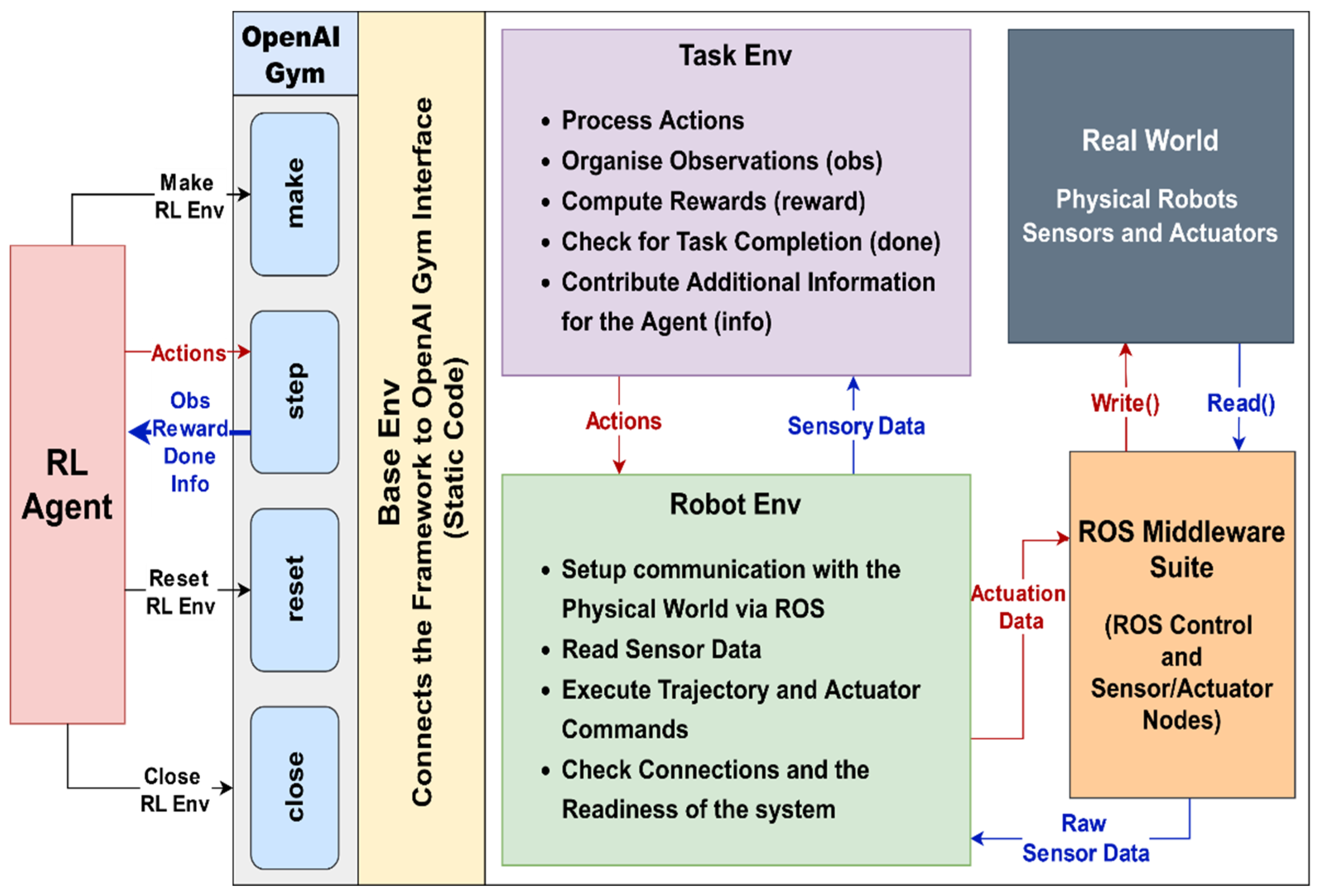

5. An In-Depth Look into ROS-Based Reinforcement Learning Package for Real Robots (RealROS)

This section describes the overall system architecture of the RealROS package. It is designed to be compatible with the MultiROS simulation package and is structured to minimize the typical extensive learning curve associated with switching from simulation to real environments. The three core components (

Base Env,

Robot Env, and

Task Env) provide the main API for the experimenters to encapsulate the task, as shown in

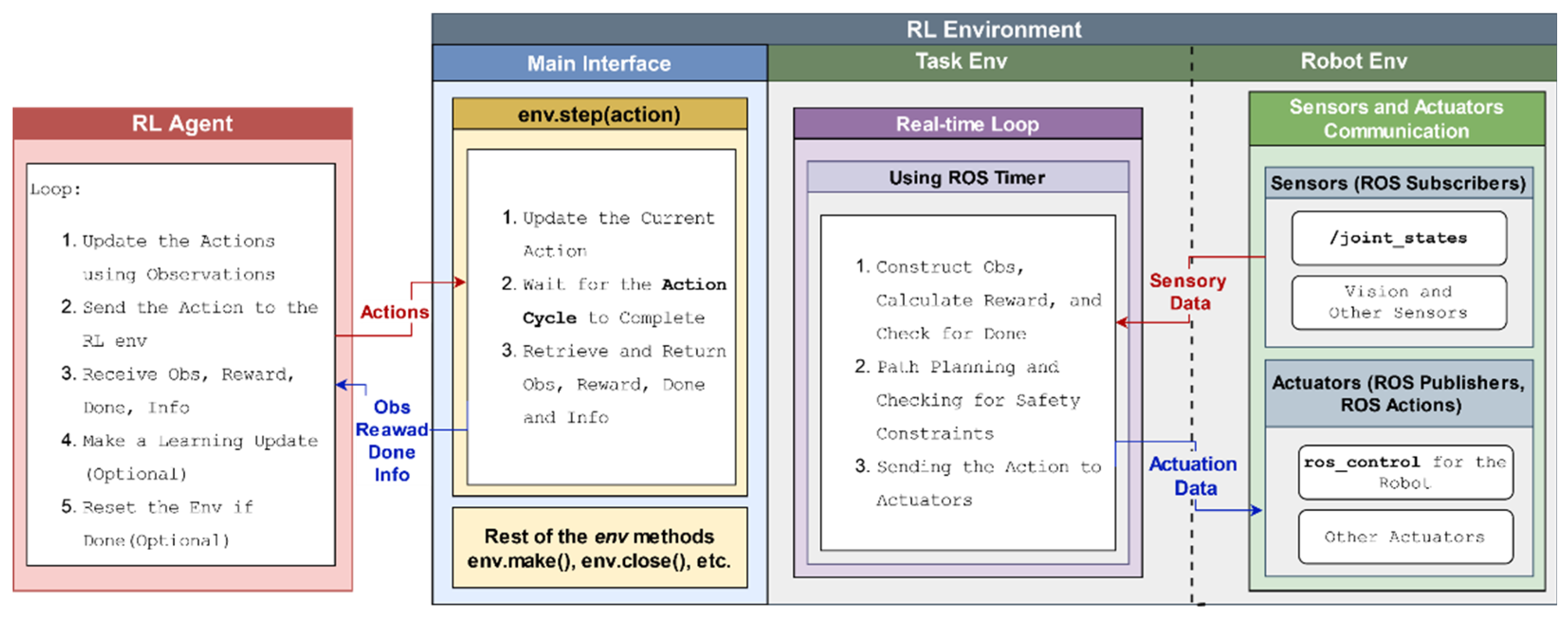

Figure 3. The integration of stated attributes and the structure of the three main components of the framework are described in more detail in the following sections.

5.1. Base Env

The Base Env serves as the superclass that provides the foundation and main interface specifying the standard RL structure for RealROS. It provides the necessary infrastructure and other essential components for RL agents to interact with the environment. RealROS offers two options for users to create environments. The default “standard” option (RealBaseEnv) inherits from the base environment class of the OpenAI Gym package, and the “goal-conditioned” option (RealGoalEnv) inherits from its goal-conditioned environment class. The Base Env defines the three core methods that form the main standardized interface of OpenAI Gym-based environments. When initializing the Base Env, experimenters can pass arguments from the Robot Env to perform several optional functions based on user preferences. These include starting communication with real robots, changing the current ROS environment variables, loading ROS controllers for robot control, and setting a seed for the random number generator.
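To illustrate what distinguishes a goal-conditioned environment from a standard one, the sketch below follows the convention used by goal-conditioned Gym robotics tasks, where the observation is a dictionary with `observation`, `achieved_goal`, and `desired_goal` entries and the reward is computed from the two goals. The helper names, the sparse reward, and the threshold value are illustrative assumptions rather than RealGoalEnv code.

```python
import math


def make_goal_observation(joint_positions, end_effector, goal):
    """Bundle robot state and goal information into a goal-conditioned observation."""
    return {
        "observation": list(joint_positions),
        "achieved_goal": list(end_effector),
        "desired_goal": list(goal),
    }


def compute_reward(achieved_goal, desired_goal, info=None, threshold=0.05):
    """Sparse reward: 0.0 within `threshold` of the goal, otherwise -1.0."""
    distance = math.dist(achieved_goal, desired_goal)
    return 0.0 if distance < threshold else -1.0


obs = make_goal_observation(
    joint_positions=[0.1, 0.2],
    end_effector=[0.0, 0.0, 0.30],
    goal=[0.0, 0.0, 0.32],
)
reward = compute_reward(obs["achieved_goal"], obs["desired_goal"])
```

Keeping the reward a pure function of the achieved and desired goals allows algorithms that relabel goals after the fact to recompute rewards for stored transitions.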

5.2. Robot Env

The Robot Env is a crucial component for describing the robots, sensors, and actuators used in the real-world environment using the ROS middleware suite. It inherits from the Base Env, allowing it to initialize and access methods defined in the superclass. However, it adds additional functionalities specific to robots and sensors used in the real world. This Robot Env encapsulates the following.

Robot Description: One of the primary tasks of the Robot Env is to define the physical robot’s characteristics, such as its kinematics, dynamics, and available sensors, using a format that ROS can understand. For that purpose, ROS requires the robot description to be loaded into the ROS parameter server (http://wiki.ros.org/Parameter%20Server), a shared, multi-variate dictionary that is accessible via ROS network APIs. In ROS, the robot description is written in the Unified Robot Description Format (URDF) (http://wiki.ros.org/urdf) and contains all the relevant information about the composition of the robot, including the joint types, joint limits, link lengths, and other intrinsic parameters of the robot. Once the robot description is loaded, ROS packages can use it to perform collision detection, inverse kinematics (IK), and forward kinematics (FK) calculations for the robot arm. If multiple robots are needed for the learning task (inside the same RL environment), each robot’s description can be loaded with a unique ROS namespace identifier to distinguish it from the others.

Set up communications with robots: Currently, most robot manufacturers or the ROS community typically provide essential ROS packages specifically designed for commercial robots to establish a communication channel with their robots. One of the prime examples is the ROS-Industrial (

https://rosindustrial.org/) project, which extends the advanced capabilities of ROS for industrial robots from manufacturers (

http://wiki.ros.org/Industrial/supported_hardware) such as ABB, Fanuc, and Universal Robots. These packages include the robot’s controller software, which is responsible for managing the robot’s motion and maintaining communication with the robot’s motors and sensors, as well as with external systems. As for custom robots, the official ROS tutorials (

http://wiki.ros.org/ROS/Tutorials) provide details on creating a custom URDF file and ROS controllers (

http://wiki.ros.org/ros_control/Tutorials) for interfacing with physical hardware. Once these packages are properly configured, the RL environment can send commands to control the robot’s motors and access its sensor readings (including motor encoders and others) through ROS.

Set up communication with the Sensors: Similarly, connecting external sensors using ROS enables the acquisition of data from various sensors such as cameras, lidars, proximity sensors, and force/torque sensors. This can help portray a vivid picture of the real world, enabling the RL agent to perceive the current state of the environment (observations). Currently, most vision-based sensors (

https://rosindustrial.org/3d-camera-survey) and others (

http://wiki.ros.org/Sensors) have ROS packages provided by the manufacturers or the ROS community, allowing users to easily plug and play the devices.

Robot Env specific methods: These methods are for the RL agent to interface with robots and the other equipment in the environment. They can be in the form of planning trajectories, calculating IK, and controlling the joint positions, joint forces, and speed/acceleration of the robot. These methods are then used in the

Task Env to execute the agents’ actions in the real world. Furthermore, ROS’s inbuilt utilities and other packages, such as MoveIt, provide functionality for obtaining the transformations of each joint (tf (

http://wiki.ros.org/tf)) and the robot end-effector’s current pose (3D position and orientation) for portraying the current state of the robot. Combining them with the data acquired from custom methods for interfacing the external sensors enables the

Task Env to construct observations of the environment.

5.3. Task Env

The

Task Env serves as the module that outlines the structure and requirements of the task that the RL agent needs to learn. It inherits from the

Robot Env and builds upon its functionalities to create a real-time loop (

Section 7) that executes actions, constructs observations, calculates the reward, and checks for the termination of the task (optional). Therefore,

Task Env defines the observation space, action space, goal or objectives, rewards architecture, termination conditions, and other task-specific parameters. These components help with the main

Step function of the environment to take a step in the real world and send feedback (observations, reward, done, and info) to the agent. Furthermore, thanks to the modular design of the UniROS framework and the compatibility between the MultiROS and RealROS packages, knowledge acquired in simulation (MultiROS) can be efficiently transferred to real-world environments by reusing the same code to create the Task Env, with the caveat that the new Robot Env must provide the same helper functions.
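The three-layer inheritance described in this section can be illustrated with a minimal, ROS-free sketch. The class names, the private hook methods, and the single fake joint are all hypothetical stand-ins chosen for illustration; they are not the RealROS classes, and real environments would replace the fake joint with ROS calls.

```python
import random


class BaseEnv:
    """Hypothetical Base Env stand-in: owns the standard RL interface."""

    def __init__(self, seed=None):
        self.rng = random.Random(seed)  # seeded random number generator

    def reset(self):
        self._reset_robot()
        self._sample_goal()
        return self._get_observation()

    def step(self, action):
        self._execute_action(action)
        obs = self._get_observation()
        return obs, self._compute_reward(), self._is_done(), {}


class RobotEnv(BaseEnv):
    """Hypothetical Robot Env stand-in: robot-specific helper functions."""

    def __init__(self, seed=None):
        super().__init__(seed)
        self.joint_position = 0.0  # a fake joint replaces real ROS calls

    def move_joint(self, position):
        self.joint_position = position

    def get_joint_position(self):
        return self.joint_position


class TaskEnv(RobotEnv):
    """Hypothetical Task Env stand-in: goal, reward, and termination."""

    def __init__(self, seed=None, tolerance=0.02):
        super().__init__(seed)
        self.tolerance = tolerance
        self.goal = 0.0

    def _reset_robot(self):
        self.move_joint(0.0)

    def _sample_goal(self):
        self.goal = self.rng.uniform(-1.0, 1.0)

    def _execute_action(self, action):
        self.move_joint(action)

    def _get_observation(self):
        return (self.get_joint_position(), self.goal)

    def _distance(self):
        return abs(self.get_joint_position() - self.goal)

    def _compute_reward(self):
        return -self._distance()  # dense reward, chosen only for this sketch

    def _is_done(self):
        return self._distance() <= self.tolerance
```

Because the task logic only calls `move_joint` and `get_joint_position`, the same `TaskEnv` code would run unchanged on top of any Robot Env layer that offers these two helpers, which is the reuse condition stated above.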

6. ROS-Based Concurrent Environment Management

This section discusses how the UniROS framework sets up ROS-based concurrent environments and maintains seamless communication with them. It first examines the challenges of initiating multiple ROS-based simulated and real-world environment instances inside the same script and provides solutions for them. Then, it describes the steps for launching multiple Gazebo simulation instances and connecting real-world robots over local and remote connections while ensuring that each environment operates independently and interacts effectively without interference.

6.1. Launching ROS-Based Concurrent Environments

Launching OpenAI Gym-based concurrent environments presents several unique challenges when working with ROS. One challenge is the functionality of the OpenAI Gym interface, which executes all the environment instances within the same process when launched using the standard

function. In contrast, ROS requires each environment instance (sim or real) or process to initialize a unique ROS node (

http://wiki.ros.org/Nodes) to utilize ROS’s built-in functions and to communicate with corresponding sensors and robots via the ROS middleware suite. However, ROS typically does not support initializing multiple nodes inside the same Python process due to its fundamental design architecture, which is centered around process isolation for enhanced reliability, modularity, and robustness. Therefore, launching multiple ROS-based environments within the same script is challenging, as it typically requires initializing separate ROS nodes for each instance. While there is a

roscpp (

http://wiki.ros.org/roscpp) (C++ client of ROS) feature for launching multiple nodes inside the same script called

nodelet (

http://wiki.ros.org/nodelet), the Python client

rospy (

http://wiki.ros.org/rospy) does not currently support this function.

One workaround is employing Python multi-threading to launch each RL environment instance, allowing the execution of multiple ROS nodes within the same process. However, this can lead to unexpected behaviors, particularly regarding the efficient use of computational resources and parallel processing across instances. One limiting factor of multi-threading is that Python’s Global Interpreter Lock (GIL) prevents multiple threads from executing Python bytecode simultaneously. This is unfavorable for concurrent environments, as each environment instance must perform CPU-intensive real-time agent-environment interactions, as highlighted in the following

Section 7.

Therefore, to address these limitations, the UniROS framework utilizes a Python multiprocessing wrapper on top of the OpenAI Gym interface to launch each environment instance as a separate process. Initializing a separate process for each environment instance also launches a separate Python interpreter for that process, which removes the bottleneck imposed by the Python GIL. This process isolation can significantly contribute to optimized resource allocation for efficiently performing parallel computations with each environment instance. For users, this transition is virtually effortless, as they only need to use UniROS, as in Figure 2, instead of the standard function to launch environments. This adaptation leverages the optimized parallel processing capabilities that are crucial for real-time, CPU-intensive computation tasks in ROS-based concurrent environments.
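The idea of giving every environment instance its own process can be sketched with the Python standard library alone. This is not the UniROS wrapper: `ProcessEnv`, `_env_worker`, and `run_demo` are hypothetical names, the environment dynamics are a placeholder, and the sketch assumes a POSIX system where the `fork` start method is available.

```python
import multiprocessing as mp
import os


def _env_worker(conn, env_id):
    """Runs in its own process and owns exactly one environment instance."""
    state = 0.0
    while True:
        command, payload = conn.recv()
        if command == "step":
            state += payload  # placeholder for the real environment step
            conn.send((env_id, state, os.getpid()))
        elif command == "close":
            conn.close()
            break


class ProcessEnv:
    """Hypothetical proxy that forwards step() calls to a worker process."""

    def __init__(self, env_id, ctx):
        self._conn, child_conn = ctx.Pipe()
        self._proc = ctx.Process(
            target=_env_worker, args=(child_conn, env_id), daemon=True
        )
        self._proc.start()

    def step(self, action):
        self._conn.send(("step", action))
        return self._conn.recv()

    def close(self):
        self._conn.send(("close", None))
        self._proc.join(timeout=5)


def run_demo(num_envs=2):
    # Assumption: POSIX platform; "fork" is not available on Windows.
    ctx = mp.get_context("fork")
    envs = [ProcessEnv(i, ctx) for i in range(num_envs)]
    results = [env.step(1.0) for env in envs]
    for env in envs:
        env.close()
    return results
```

Each tuple returned by `run_demo()` carries the process ID of the worker that produced it, so the IDs differ from one another and from the parent process. Since every worker has its own interpreter, each one could also initialize its own ROS node.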

6.2. Maintaining Communication with Concurrent Environments

In managing concurrent environments, it is essential to have a robust communication infrastructure to ensure seamless interaction between multiple real robots and Gazebo simulation instances.

Figure 4 outlines the methodologies employed in the UniROS framework to facilitate such communication across sim and real environments. In MultiROS-based simulation environments, this was achieved by launching an individual roscore (

http://wiki.ros.org/roscore) for each environment instance. In ROS, roscore provides necessary services such as naming and registration to a collection (graph) of ROS nodes so that they can communicate with each other. Without a roscore, nodes would be unable to locate each other, exchange messages, or establish parameter settings, making it a critical component in ensuring coordinated operations and data exchange within the ROS ecosystem. With separate roscores, each roscore acts as the server and creates a dedicated communication domain in which the nodes (clients) of one environment instance exchange data without cross-interference from other concurrent instances. Accordingly, for simulations, MultiROS utilizes separate roscores and dynamically assigns the environment variables mentioned in

Figure 4 inside each simulated environment, allowing for precise management of interactions and data flow across concurrent environments. Here, the ROS Python bindings of the UniROS framework were employed for launching roscores with non-overlapping ports and Gazebo simulations, as well as functions for handling ROS-related environment variables to streamline the process without relying on CLI configurations.
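As a rough illustration of non-overlapping ports, the sketch below derives one set of master URIs per environment instance. It assumes that the relevant variables are the standard `ROS_MASTER_URI` and `GAZEBO_MASTER_URI` (Figure 4 is not reproduced here), and the "default port plus index" scheme and the `instance_env` helper are hypothetical; the framework's own Python bindings may assign ports differently.

```python
import os

ROS_DEFAULT_PORT = 11311     # default ROS master port
GAZEBO_DEFAULT_PORT = 11345  # default Gazebo master port


def instance_env(index, host="localhost"):
    """Return a copy of the process environment with unique master URIs.

    The offset-by-index scheme is an assumption made for illustration only.
    """
    env = dict(os.environ)
    env["ROS_MASTER_URI"] = f"http://{host}:{ROS_DEFAULT_PORT + index}"
    env["GAZEBO_MASTER_URI"] = f"http://{host}:{GAZEBO_DEFAULT_PORT + index}"
    return env
```

A dictionary produced this way could be passed as the `env` argument of `subprocess.Popen` when starting `roscore -p <port>` and the matching Gazebo instance, so that each simulated environment only sees its own master.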

As for real-world learning tasks, the RealROS package facilitates robot connections via both local and remote methods. In local setups, the default configuration typically involves using the standard roscore, which uses port

‘11311’ as the ROS master (

http://wiki.ros.org/rosmaster) by default. In such arrangements, each robot and its corresponding sensors can be assigned a distinct namespace to distinguish themselves from the other robots within the same shared roscore. This unique namespace can be used in the respective environment to ensure orderly and isolated interactions despite the shared communication space with other robots. However, for scenarios requiring enhanced communication isolation, each robot can be configured to connect to different roscores, each operating on unique, non-overlapping ROS master ports. Then, setting the appropriate

ROS_MASTER_URI environment variable in each environment instance adds an extra layer of process isolation that effectively eliminates the potential for cross-interference typically inherent in namespace-based setups.

In remote configurations where robots are connected to the same network, robots can be connected in ROS multi-device mode, where the local PC functions as a client capable of reading and writing data to the remote server (robot) that runs the master roscore. This setup eliminates the need for Secure Shell (SSH) approaches and allows all the processing required for learning to be conducted on the local PC. This approach is particularly beneficial for robots created for research where the robot’s ROS controller is based on less powerful edge devices such as the Raspberry Pi, Intel NUC, and Nvidia Jetson. This ROS multi-device mode can be configured by setting the environment variables on both the robot and the local PC, as depicted in

Figure 4. This setup allows for multiple concurrent environments, each connecting to a remote robot by setting the ROS environment variables utilizing the Python ROS binding of the UniROS framework. This approach ensures a clear distinction between each remote robot, facilitating organized communication across concurrent environments.
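For the remote case, the following sketch shows one plausible pairing of variables for the robot and the local PC. It assumes the standard ROS networking variables `ROS_MASTER_URI` and `ROS_IP`; the helper name `multi_device_config` and the IP addresses in the usage note are hypothetical, and the exact set of variables shown in Figure 4 may differ.

```python
DEFAULT_ROS_MASTER_PORT = 11311  # default ROS master port


def multi_device_config(robot_ip, local_ip, port=DEFAULT_ROS_MASTER_PORT):
    """Environment variables for a robot-hosted master and a local client."""
    master_uri = f"http://{robot_ip}:{port}"  # the master runs on the robot
    return {
        "robot": {"ROS_MASTER_URI": master_uri, "ROS_IP": robot_ip},
        "local_pc": {"ROS_MASTER_URI": master_uri, "ROS_IP": local_ip},
    }
```

For example, `multi_device_config("192.168.1.10", "192.168.1.20")["local_pc"]` gives the values that an environment process on the local PC would write into `os.environ` before initializing its ROS node; a second environment process would use the address of a different robot.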

With these methodologies, the UniROS framework can efficiently manage multiple concurrent environments, whether they are purely simulated robots, real robots, or a combination of both. Furthermore, this study provides ready-to-use templates within the respective MultiROS or RealROS packages to create and manage their RL environments, eliminating the need to handle these ROS Python bindings directly. These templates, including the respective Base Env of the packages, relieve experimenters from explicitly handling ROS system-level configurations, allowing them to focus more on implementing their learning tasks.

7. Setting Up Real-Time RL Environments with the Proposed Framework

This section outlines the essential components necessary for establishing real-time learning tasks using ROS. It explores various options for each component and details the specific recommendations for implementing real-time RL environments using the UniROS framework. The ROS-based environment implementation strategy described here ensures that simulated environments closely mirror real-world scenarios for seamless policy transfer and that the real-world environments are safe and effective in facing the challenges of real-world conditions and dynamics.

7.1. Overview of the Real-Time Environment Implementation Strategy

One key challenge when working on real-time learning tasks is that sensory data and control commands are typically processed in a sequence that inherently introduces latency. This latency results from the time it takes the environment to construct observations and rewards and then for the agent to decide on the subsequent action. Since all calculations in the typical MDP architecture are performed sequentially, this can lead to a misalignment between the environment’s state and the agent’s perception of it. Therefore, it makes the learning problem more complex and may push the agent to develop suboptimal strategies simply because it reacts to outdated information. From the agent’s side, augmenting the state space with a history of actions may help the agent anticipate and adapt to delays. However, these methods do not reduce the actual latency of the system but merely help the agent cope with it [48].

Therefore, this study proposes an asynchronous scheduling approach for agent-environment interactions, allowing concurrent processing to minimize overall system latency. This design partitions the reinforcement learning agent and the environment into two distinct processes, as depicted in

Figure 5. Inside the environment process, multi-threading is employed to concurrently read sensor data and send the robot’s actuator commands via the sensorimotor interface to avoid unnecessary system delays. In addition, a dedicated environment loop thread periodically oversees the construction of observations, the calculation of rewards, and the verification of task completion. It also iteratively performs safety checks and updates actuation commands in response to the agent’s actions in real time. The intuition behind employing an environment loop is to allow the agent to make decisions and send actions without waiting for sensor readings or actuator commands to be processed, which helps minimize the agent-environment latency.

On the other hand, the RL agent process updates the actions using the observations and performs learning updates to refine its policy. It also defines the task-specific duration between successive actions (action cycle/step size), a hyperparameter to set the rate of agent-environment interactions. This computational model of the framework is scalable for learning scenarios that require multiple concurrent environments by utilizing one process per environment, as described in

Section 6.1, enabling the agent to use multi-robot/task learning approaches. Here, the proposed approach does not use multi-threading packages of Python and, instead, utilizes the offerings of ROS to implement the proposed real-time RL environment implementation strategy. The primary reason for this selection is the specialized capabilities of ROS, which offer optimized low-latency communication and a flexible, scalable architecture better suited for complex robotic systems.

7.2. Reading Sensor Data with ROS

In the ROS framework, sensor packages typically employ ROS Publishers to publish data across various ROS topics (

http://wiki.ros.org/Topics). For instance, the

joint_state_controller (

http://wiki.ros.org/joint_state_controller) of the

ros_control (

http://wiki.ros.org/ros_control) package launches a ROS node, essentially a separate process dedicated to continuously monitoring the robot’s joint positions, velocities, and efforts based on sensor (joint encoders) feedback. This node then periodically publishes captured data to the

joint_states topic, allowing other packages to monitor the robot’s status by subscribing to it. The ROS Subscriber is a built-in ROS component that listens to messages on a specific topic, which triggers a callback function to handle the incoming data each time a message is published into the topic. Since the handling of callbacks of subscribers in

rospy (Python client library for ROS) is inherently multi-threaded, each sensor callback is handled in a separate thread, allowing for concurrent processing of sensor data from different topics. This approach is adaptable and can be extended to integrate additional sensors, such as a Kinect camera (RGBD), into ROS using respective sensor packages. These packages are responsible for interfacing with the sensor hardware, processing the data, and publishing them to relevant ROS topics, which can be used as part of the observations to provide a comprehensive overview of the environment. However, it should be noted that while Python GIL does not hamper the I/O-bound operations such as ROS subscribers, it can cause a bottleneck if the callback function contains CPU-intensive operations (e.g., preprocessing camera data for object detection). In such scenarios, using a separate ROS node, which creates a separate process, may be beneficial for handling CPU-intensive computations.
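Because subscriber callbacks may run in different threads, the data they store has to be shared safely with the rest of the environment. The sketch below shows one common pattern, a lock-protected "latest message" holder, written without ROS so that it runs anywhere; `LatestMessage` is a hypothetical helper and not part of rospy or UniROS.

```python
import threading


class LatestMessage:
    """Thread-safe holder for the most recent message of one topic."""

    def __init__(self):
        self._lock = threading.Lock()
        self._value = None
        self._count = 0

    def callback(self, msg):
        # Keep the callback light: store the data and defer heavy processing,
        # since CPU-intensive work here would contend for the GIL.
        with self._lock:
            self._value = msg
            self._count += 1

    def read(self):
        """Return the latest message and how many messages have arrived."""
        with self._lock:
            return self._value, self._count


joint_states = LatestMessage()
# With rospy, the method would be registered roughly as
#   rospy.Subscriber("joint_states", JointState, joint_states.callback)
# where JointState comes from sensor_msgs.msg.
```

The environment can then call `joint_states.read()` whenever it builds an observation, without blocking on the arrival of the next message.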

7.3. Sending Actuator Commands with ROS

In real-time learning tasks, controlling the robot by directly accessing the low-level control interface of ROS is needed instead of relying on high-level motion planning packages such as MoveIt. This choice was motivated by the need for real-time control of trajectories, a key feature better managed by the robot’s low-level controllers. This approach provides the flexibility necessary for dynamic behavior, such as smoothly and rapidly switching between trajectories. While MoveIt is excellent for planning and executing trajectories, its higher level of abstraction limits its capability for instant preemption of trajectories and swift adjustments while maintaining a continuous motion. Therefore, this implementation strategy bypasses MoveIt and directly interacts with the controllers by publishing to the respective ros_control topic since real-time learning requires rapid trajectory updates.

7.4. Environment Loop

The environment loop is the cornerstone of the proposed RL environment implementation strategy that facilitates interaction between the RL agent and the robotic environment. It manages the timing and synchronization to ensure seamless integration between the agent’s decision-making process and the robot’s trajectory executions. This loop is implemented using ROS timers, a built-in feature of ROS that enables the scheduling of periodic tasks. One significant advantage of this method is that the callback function for a ROS timer is executed in a separate thread, similar to the callbacks of the ROS Subscribers. Therefore, this approach ensures that the computational loads of other threads (mostly I/O-bound) do not interfere with the performance of the environment loop.

Hence, the proposed implementation strategy initializes a ROS timer for the environment loop to trigger at a fixed frequency (environment loop rate) throughout the entire operation runtime to execute a sequence of operations, as shown in

Figure 5. At each trigger, it captures the current state of the environment through data acquired from the sensors and formulates it into an observation. Then, it calculates the reward and assesses whether the conditions for a done flag are met to indicate the end of an episode. Finally, it checks the safety constraints and executes the last action received from the RL agent. If no new action has been received by the next timer trigger, it repeats the previous action. This approach, also known as action repeats [17], reduces the agent’s computation load and allows for smoother and more stable manipulation, as the robot maintains its course of action for a consistent duration. Typically, the environment loop triggers several times within one action cycle (step size); that is, the period of the environment loop is shorter than the action cycle time. Therefore, once the step size has elapsed after the agent process sends an action, the environment process does not need to spend additional time constructing the relevant returns (observations, reward, done, and info), as it can retrieve the latest returns already constructed in the environment loop and pass them to the agent.
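The sequence of operations performed at each trigger can be sketched as a small class whose `tick` method plays the role of the timer callback. This is a ROS-free illustration under stated assumptions: `EnvironmentLoop` and the five callables passed to it are hypothetical, and with rospy the `tick` method would be registered as the callback of a `rospy.Timer` created with the desired period.

```python
import threading


class EnvironmentLoop:
    """Sketch of the periodic environment loop with action repeats."""

    def __init__(self, read_sensors, send_command, compute_reward,
                 check_done, is_safe):
        self._read_sensors = read_sensors
        self._send_command = send_command
        self._compute_reward = compute_reward
        self._check_done = check_done
        self._is_safe = is_safe
        self._lock = threading.Lock()
        self._action = None   # last action received from the agent
        self._returns = None  # latest (observation, reward, done, info)

    def set_action(self, action):
        """Called by the agent side; never waits on sensors or actuators."""
        with self._lock:
            self._action = action

    def latest_returns(self):
        """Returns already constructed by the most recent trigger."""
        with self._lock:
            return self._returns

    def tick(self, event=None):
        """One trigger: observe, reward, done check, safety check, act."""
        obs = self._read_sensors()
        reward = self._compute_reward(obs)
        done = self._check_done(obs)
        with self._lock:
            self._returns = (obs, reward, done, {})
            action = self._action
        # Action repeat: the stored action is re-sent at every trigger
        # until the agent provides a new one.
        if action is not None and self._is_safe(action):
            self._send_command(action)
```

With this structure, the agent-facing step function only has to call `set_action`, wait for the action cycle time to elapse, and then read `latest_returns()`.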

Here, the environment loop rate is one of the hyperparameters used to configure the real-time environment implementation. However, it is essential to note that each robot has its own hardware control loop frequency, which determines how quickly it can process information, execute control commands, and obtain sensor (joint encoder) readings. Therefore, setting the environment loop rate higher than the hardware control loop frequency of the robot is counterproductive, as the robot cannot physically execute control commands or report its status at a higher frequency than its hardware control loop allows. This hardware control loop frequency depends on the robot’s capabilities and is typically set by the manufacturer in the

hardware_interface (

http://wiki.ros.org/hardware_interface) of the robot’s ROS controller.

The ROS Hardware Interface of a robot is responsible for reading sensor data, updating the robot’s state via the

joint_states topic, and issuing commands to the actuators. This loop runs continuously during the entire robot’s operation and is tightly synchronized with the robot’s control system. Hence, operating at the hardware control loop frequency ensures that the sensor data readings and actuator command executions are closely aligned with the robot’s operational capabilities. This loop rate is usually included in the robot’s documentation or inside a configuration file (YAML file) of the robot’s ROS controller repository. However, it is also possible to select an environment loop rate lower than the robot’s hardware control loop frequency, as this does not hinder the robot’s normal operation. The effects of choosing a lower or higher frequency for the environment loop rate, along with the impact of action cycle time on learning, are further discussed in

Section 9, using benchmark learning tasks introduced in

Section 8.

8. Benchmark Tasks Creation

This section discusses the development of benchmark tasks using both the MultiROS and RealROS packages. These simulated and real environments are then used in the subsequent sections to explain and evaluate the proposed real-time environment implementation strategy and to demonstrate some of the use cases of the UniROS framework. These tasks are modeled closely after the Reach task of the OpenAI Gym Fetch robotics environments [49], where an agent learns to reach random target positions in 3D space. In each Reach task, the robot’s initial pose is its default “home” position (typically all joint angles set to zero), and the agent’s goal is to move the end-effector to a target position to complete the task. Therefore, each task generates a random 3D point as the target at each environment reset, and the task is completed when the end-effector comes within a Euclidean distance threshold (the reach tolerance) of the goal, which is set to 0.02 m. However, unlike the Fetch environments, where the action space represents the Cartesian displacement of the end-effector, these tasks use the joint positions of the robot arm as actions. This selection was made because joint position control typically aligns better with realistic robot manipulation, offering enhanced precision and simpler action spaces. The following describes the details of the ReactorX 200 and NED2 robots and the creation of the Reacher tasks (Rx200 Reacher and Ned2 Reacher).

The ReactorX 200 robot arm (Rx200) by Trossen Robotics is a five-degree-of-freedom (5-DOF) arm with a 550 mm reach. It operates at a moderate hardware control loop frequency of 10 Hz. This compact robotic manipulator is well suited for research work and natively supports ROS Noetic (

http://wiki.ros.org/noetic) without requiring additional configuration or setup. It connects directly to a PC via a USB cable for communication and control, providing a reliable and straightforward method of connectivity (uses the default ROS master port). All the necessary packages for controlling the Rx200 using ROS are currently available from the manufacturer as a public repository on GitHub (

https://github.com/Interbotix/interbotix_ros_manipulators). Similarly, the NED2 robot by Niryo is also designed for research work and features six degrees of freedom (6-DOF) with a 490 mm reach. It has a higher control loop frequency of 25 Hz and natively runs ROS Melodic (

https://wiki.ros.org/melodic) on an enclosed Raspberry Pi. Niryo offers three communication options for connecting the NED2, including a Wi-Fi hotspot, direct Ethernet, or connecting both devices to the same local network. As SSH-based access was not desirable, this study opted for a direct Ethernet connection and utilized the ROS multi-device mode, as described in

Section 6.2, to ensure a robust communication setup. Furthermore, Niryo also provides the necessary ROS packages (

https://github.com/NiryoRobotics/ned_ros) to be installed on the local system, enabling custom messaging and service interfaces to access and control the remote robot through ROS.

Since two variants of the

Base Env (standard and goal-conditioned) are available in this framework, two types of RL environments were created for both simulation and the real world. These simulated and real environments bear continuous actions and observations and support sparse and dense reward architectures. In the created goal-conditioned environments, the agent receives observations as a Python dictionary containing the typical observation, achieved goal, and desired goal. The achieved goal is the current 3D position of the end-effector

, which is obtained using FK calculations, and the desired goal is the randomly generated 3D target

. One of the decisions made during task creation is to include the previous action as part of the observation vector, as this can minimize the adverse effects of delays on the learning process [50]. Additionally, the observation vector also includes the position of the end effector (EE) with respect to the base of the robot, the current joint angles of the robot, the Cartesian displacement, and the Euclidean distance between the EE position and the goal. Additional experiment information, including details on actions, observations, and the reward architecture, is explained further in

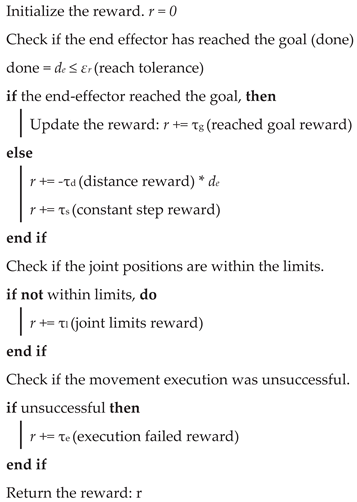

Appendix A.
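The observation layout and the reach condition described for the Reacher tasks can be sketched as follows. Several details are assumptions made for illustration: the dictionary keys follow the usual Gym goal-conditioned convention, the ordering of the observation vector and the sign of the Cartesian displacement (goal minus EE position) are arbitrary choices, and the function names are hypothetical. Only the 0.02 m reach tolerance is taken from the task description.

```python
import math

REACH_TOLERANCE = 0.02  # metres, as stated for the Reacher tasks


def goal_reached(ee_position, goal, tolerance=REACH_TOLERANCE):
    """True when the EE is within the reach tolerance of the goal."""
    return math.dist(ee_position, goal) <= tolerance


def build_goal_observation(ee_position, joint_angles, previous_action, goal):
    """Assemble a goal-conditioned observation dictionary.

    Assumed vector order: EE position, joint angles, Cartesian
    displacement, Euclidean distance, previous action.
    """
    displacement = [g - p for g, p in zip(goal, ee_position)]
    distance = math.dist(ee_position, goal)
    observation = [
        *ee_position,
        *joint_angles,
        *displacement,
        distance,
        *previous_action,
    ]
    return {
        "observation": observation,
        "achieved_goal": list(ee_position),
        "desired_goal": list(goal),
    }
```

For a 5-DOF arm such as the Rx200, this layout gives an observation vector of 3 + 5 + 3 + 1 + 5 = 17 values; the standard (non-goal) variant would use the vector on its own.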

Furthermore, specific constraints were implemented on the operational range of both types of environments to ensure the safe operation of the robot and prevent any harm to itself or its surroundings. One of the steps taken here is to limit the goal space of the robot so it cannot sample negative values in the z-direction in the 3D space. This is vital since the robot is mounted on a flat surface, making it impossible to reach locations below it. Additionally, before the agent executes the actions with the robot, the environments check for potential self-collision and verify whether the action would cause the robot to move towards a position in the negative z-direction. Therefore, forward kinematics are calculated using the received actions before executing them to avoid unfavorable trajectories, allowing the robot to operate within a safe 3D space. Hence, considering the complexity of the tasks and compensating for the gripper link lengths, the goal space was meticulously refined to have maximum 3D coordinates of and minimum of (in meters) for both robots.

As for the learning agents of the experiments in this study, the vanilla TD3 was used for standard-type environments and TD3+HER for goal-conditioned environments. TD3 is an off-policy RL algorithm and can only be used for environments with continuous action spaces. It was introduced to curb the overestimation bias and the other shortcomings of the Deep Deterministic Policy Gradient (DDPG) algorithm [51]. Here, TD3 was extended by combining it with Hindsight Experience Replay (HER) [52], which encourages better exploration for goal-conditioned environments with sparse rewards. By incorporating HER, TD3+HER improves sample efficiency due to HER utilizing unsuccessful trajectories and adapting them into learning experiences. This study implemented these algorithms using the custom TD3+HER implementations and the Stable Baselines3 (SB3) library, adding ROS support to facilitate their integration into the UniROS framework. The source code and supporting utilities are available on GitHub (

https://github.com/ncbdrck/sb3_ros_support), allowing other researchers and developers to leverage and build upon this work. The detailed information on the RL hyperparameters used in the experiments is summarized in

Appendix B. Furthermore, all computations during the experiments were performed on a PC with an Nvidia 3080 GPU (10 GB VRAM) and an Intel i7-12700 processor with 64 GB of DDR4 RAM.

9. Evaluation and Discussion of the Real-Time Environment Implementation Strategy

This section examines the intricacies of the proposed ROS-based real-time RL environment implementation strategy, utilizing benchmark environments as the experimental setup. The primary goal here is to discuss the two main hyperparameters of the proposed implementation strategy and gain an understanding of how to select suitable values for them, as they largely depend on the hardware capabilities of the robot(s) used in the learning task. Initially, experiments were conducted to investigate different action cycle times and environment loop rates, aiming to uncover the intricate balance between control precision and learning efficiency. Then, exploration was extended to include an empirical evaluation of the asynchronous scheduling within the proposed environment implementation strategy. This process involves thoroughly analyzing the time taken for each action and actuator command cycle across numerous episodes.

9.1. Impact of Action Cycle Time on Learning

In the real-time RL environment implementation strategy, the action cycle time (step size) is a crucial hyperparameter that determines the duration between two subsequent actions from the agent. The selection of this duration impacts the learning performance due to the use of action repeats in the environment loop. Action repeats ensure the robot can perform smooth and continuous motion over a given period, especially when the action cycle time is longer. This technique helps stabilize the robot’s movements and maintain consistent interaction with the environment between successive actions.

Selecting a shorter action cycle time, close to the period of the environment loop, would reduce reliance on action repeats and enable faster data sampling from the environment due to more frequent agent-environment interactions. This would give the agent finer control (higher precision) over the environment at the cost of seeing only minimal changes in the observations between steps. Such minimal changes can adversely affect training, as the agent may not perceive the significant variations in the observations that are necessary for effectively updating deep neural network-based policies, such as those trained with TD3. Conversely, selecting a longer action cycle time could lead to more action repeats and substantial changes in observations between successive actions, potentially easing and enhancing the learning for the agent. However, this comes at the risk of reduced control precision and potentially slower reaction times to environmental changes, which can be detrimental when working in highly dynamic environments. Furthermore, a longer action cycle time slows down the agent’s data collection rate, leading to a longer training time.

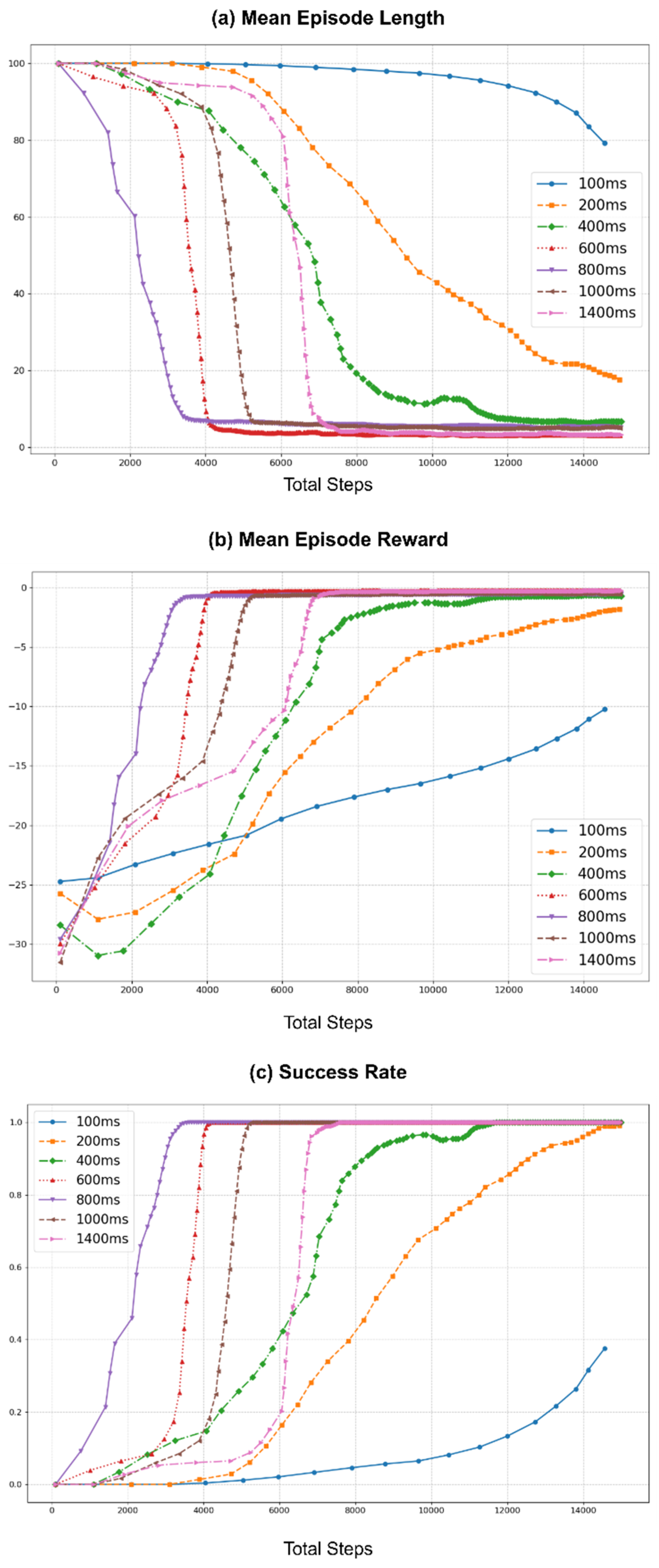

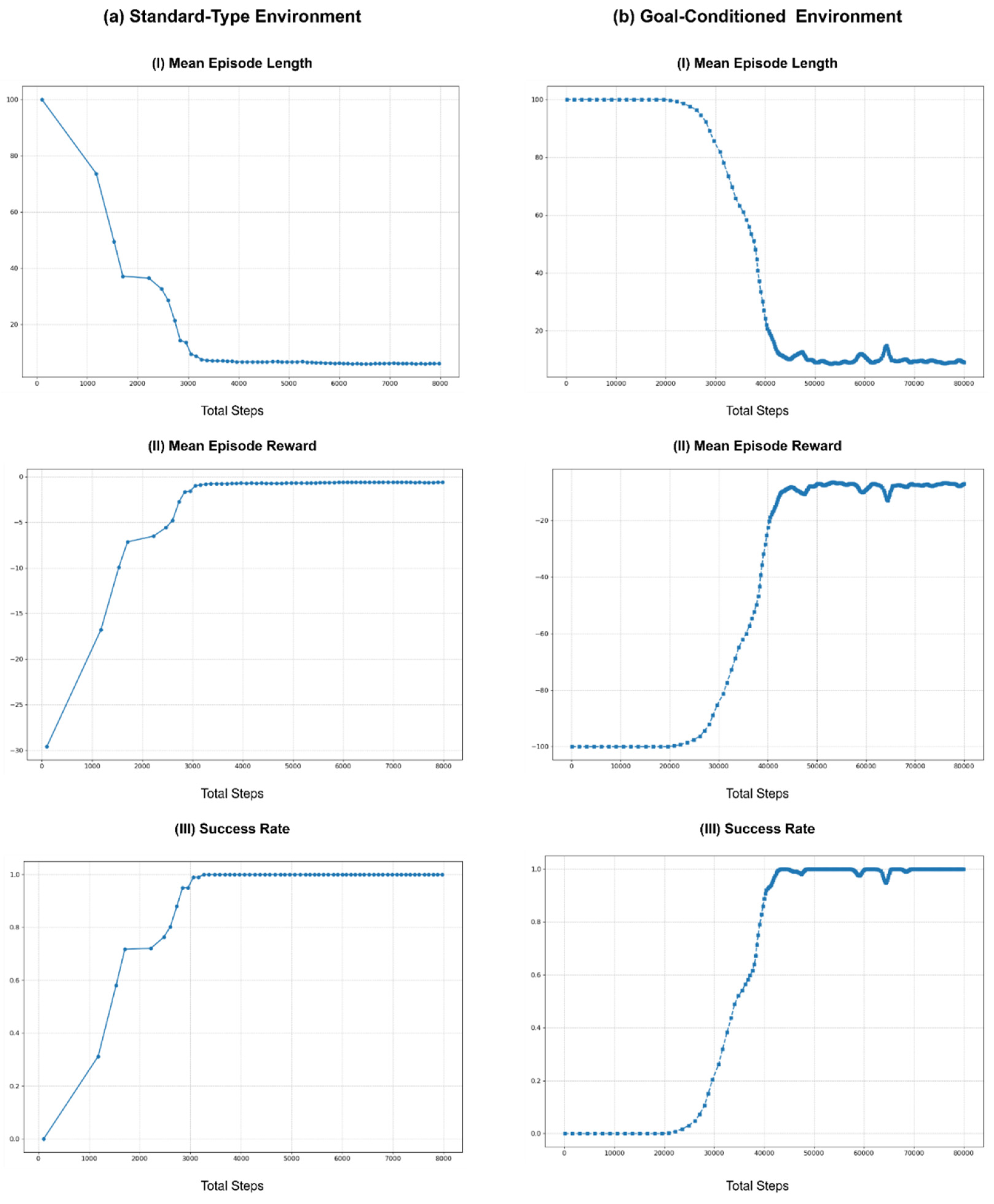

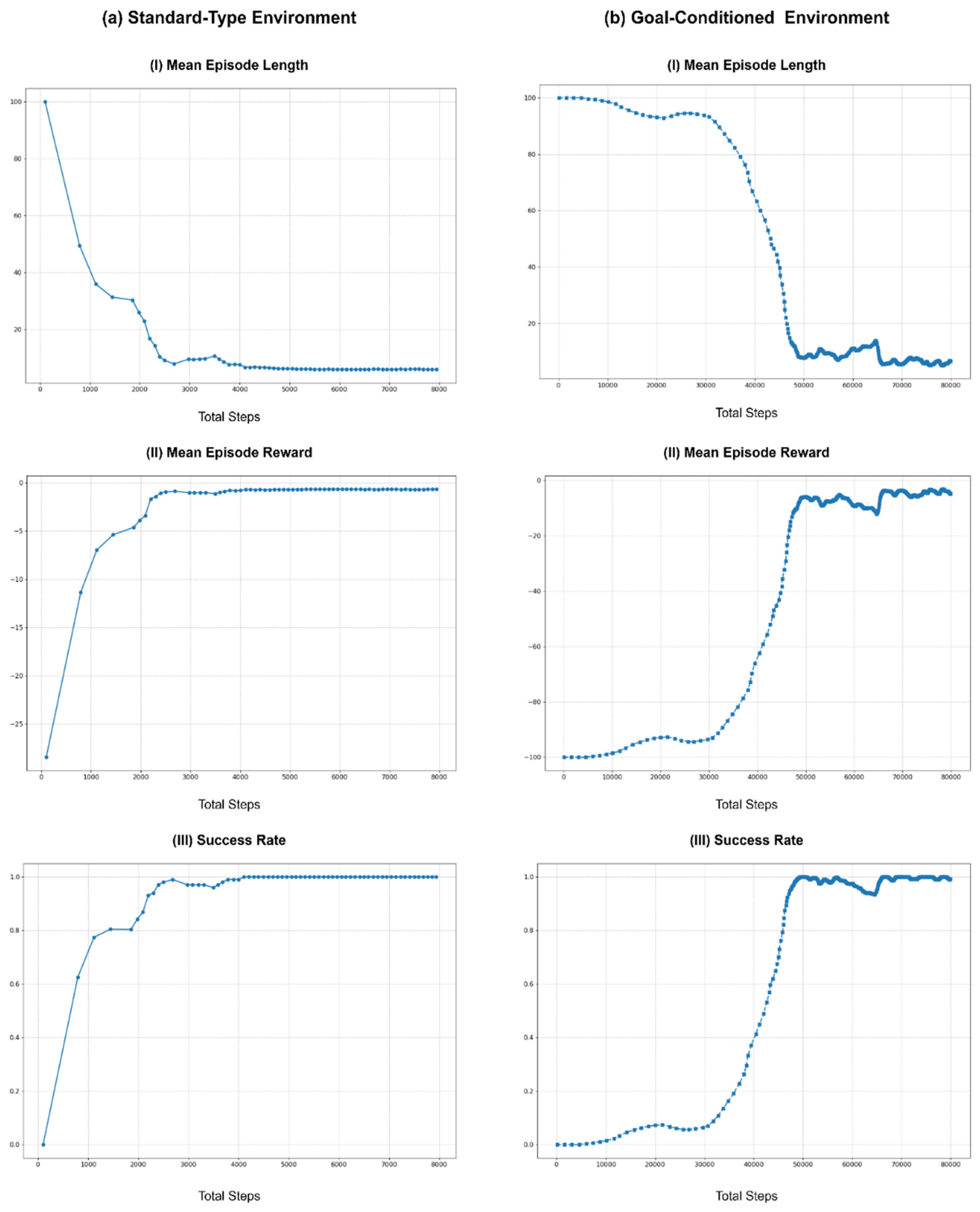

Therefore, to study the effect of action cycle time on learning, experiments were conducted with multiple durations, selecting a baseline and comparing the effects of longer action cycle times, as depicted in Figure 6. These experiments were conducted in the real-world Rx200 Reacher task, using the same initializing values and conditions and employing the vanilla TD3 algorithm. This figure contains three graphs that illustrate the learning curves of the training process for all the selected action cycle times.

Figure 6 (a) shows the mean episode length, representing the mean number of interactions the agent had with the environment while trying to achieve the goal within an episode. Ideally, the episode length should shorten over time as the agent learns the optimal way to behave in the environment. Similarly, Figure 6 (b) depicts the mean total reward obtained per episode during training. The agent’s goal is to maximize this reward by improving its policy and learning to complete the task efficiently. Furthermore, in the benchmark tasks, the maximum allowed number of steps per episode is set to 100, providing a maximum of 100 agent-environment interactions to achieve the task. Exceeding this limit results in an episode reset and counts as a failure to complete the task. These failure conditions and the successful task completion conditions are used to compute the success rate curve in Figure 6 (c) (refer to Appendix A for more information).

In the experiments, the baseline was set at 100 ms, matching the period of the hardware control loop of the Rx200 robot (10 Hz), which is also the default environment loop rate of the Rx200 Reacher benchmark task. This is the shortest action cycle time that can be used in this benchmark task, as the Rx200 robot does not function properly below this duration. The training was then repeated to obtain learning curves for action cycle times of 200 ms, 400 ms, 600 ms, 800 ms, 1000 ms, and 1400 ms. Every run of the experiment was conducted for 30,000 steps, allowing sufficient time for each run to find the optimal policy. To improve readability, the data points illustrated in Figure 6 were smoothed using a rolling mean with a window size of 10, plotted every 10th step, and shortened to the first 15,000 steps.
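The post-processing applied to the learning curves can be sketched as follows. This is an illustrative reconstruction rather than the plotting code used for the figures: the function name is hypothetical, and it assumes one logged value per training step and that truncation is applied before smoothing.

```python
def smooth_for_plot(values, window=10, stride=10, max_steps=15_000):
    """Truncate, apply a trailing rolling mean, then keep every `stride`-th point.

    Assumptions of this sketch: `values` holds one logged metric per
    training step, and the first `window - 1` points (which lack a full
    window) are dropped rather than padded.
    """
    clipped = list(values[:max_steps])
    smoothed = []
    running = 0.0
    for i, value in enumerate(clipped):
        running += value
        if i >= window:
            # Slide the window: drop the value that just fell out of it.
            running -= clipped[i - window]
        if i >= window - 1:
            smoothed.append(running / window)
    return smoothed[::stride]


if __name__ == "__main__":
    # Hypothetical metric: a simple ramp standing in for a logged curve.
    raw = list(range(30_000))
    points = smooth_for_plot(raw)
    print(len(points), points[:3])
```

Subsampling after smoothing keeps each plotted point an average over its ten preceding samples, so the thinning does not reintroduce the noise that the rolling mean removed.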

As shown in Figure 6, increasing the action cycle time can improve performance up to a certain point compared to using an action cycle time equal to the environment loop period (100 ms). For this benchmark task, the learning curves for action cycle times of 600 ms and 800 ms showed the best performance, quickly stabilizing with shorter episode lengths, higher total rewards, and higher success rates. This improvement can be attributed to the balance between sufficient observation changes and the agent’s ability to interact effectively with the environment. However, as the action cycle time increased beyond 800 ms, the performance started to degrade: the learning curves for action cycle times of 1000 ms and 1400 ms required a larger number of steps to converge. This decline in performance is likely due to the agent receiving less frequent updates, which introduces potentially more significant errors in the policy updates, causing the agent to struggle with maintaining optimal behavior.

Overall, the experiments demonstrate that while increasing the action cycle time can initially improve learning by providing more substantial observation changes, there is a threshold beyond which further increases become detrimental. Therefore, the choice of action cycle time and the use of action repeats must be balanced based on the specific requirements of the task and the capabilities of the robot. Fine-tuning these parameters is crucial for optimizing learning performance and ensuring robust real-time agent-environment interactions.

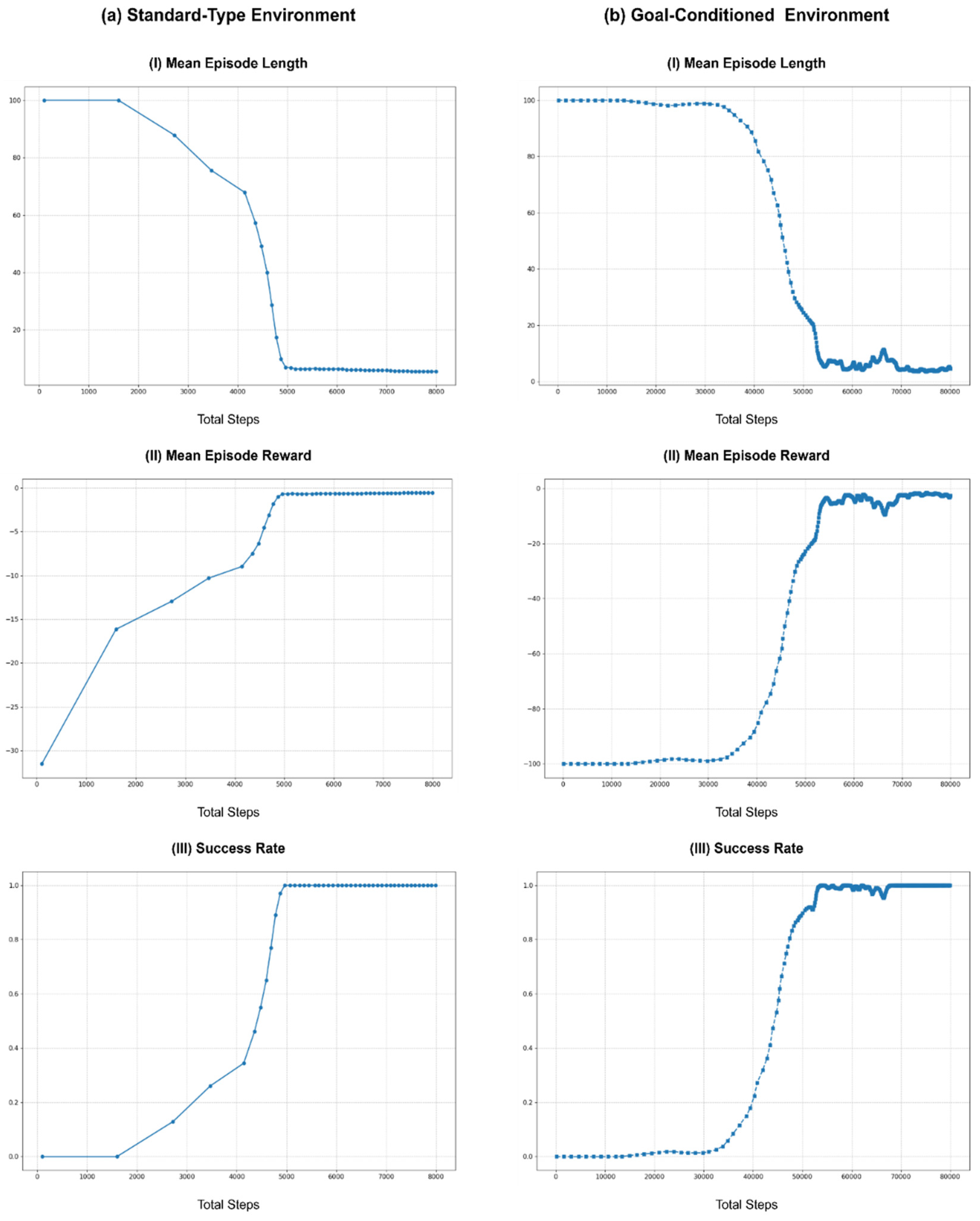

9.2. Impact of Environment Loop Rate on Learning

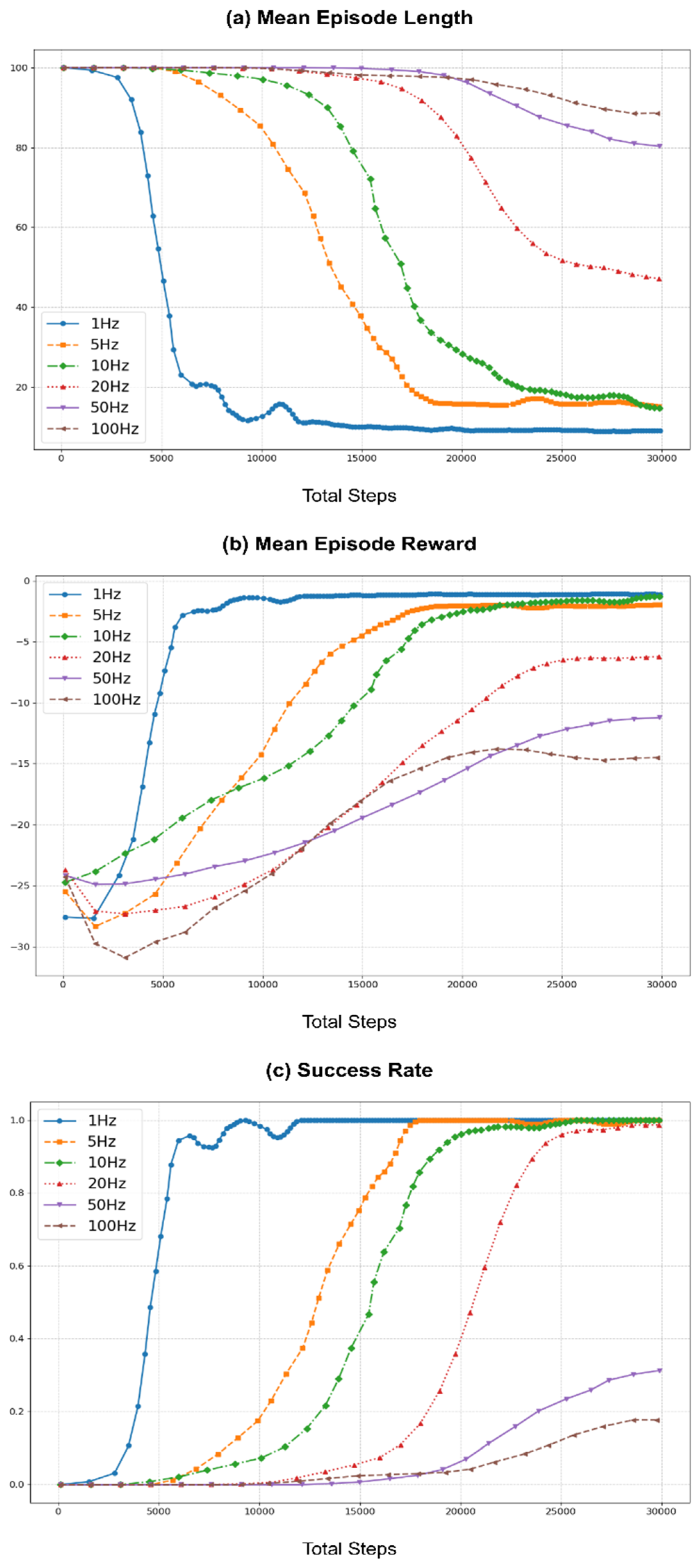

To assess the impact of various environment loop rates, the same learning process was repeated using rates of 1 Hz, 5 Hz, 10 Hz, 20 Hz, 50 Hz, and 100 Hz. Here, the action cycle time for each run was set to the period of the environment loop, which simplifies the task by eliminating action repeats. Furthermore, the baseline for these experiments was set at 10 Hz to align with the hardware control loop frequency of the Rx200 robot used in the benchmark task. Similar to the previous section, Figure 7 illustrates the learning curves across different environment loop rates, with the same post-processing methods employed to enhance the readability of the curves.

As shown in Figure 7, at lower environment loop frequencies (1 Hz and 5 Hz), the learning performance was better than at the baseline of 10 Hz. This improvement can be attributed to the longer action cycle times, which result in larger actions (joint position changes) and therefore more significant variability in observations, aiding the learning process. However, performance starts to degrade as the environment loop rate increases beyond 10 Hz. The learning curves for higher loop rates (20 Hz, 50 Hz, and 100 Hz) show increased mean episode lengths and lower mean rewards, indicating less efficient learning. This decline is due to the robot’s inability to effectively process commands and read joint states at these higher frequencies. Although control commands are sent at a higher rate, the robot’s hardware control loop operates at 10 Hz, causing instability in command execution. Furthermore, as ROS controllers typically do not buffer control commands, the hardware control loop processes only the most recent command on the relevant ROS topic and discards the rest, leading to instability in training.
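The effect of an unbuffered command topic can be illustrated with a simplified timing model. The sketch below is an assumption-laden toy, not a model of any specific ROS controller: the function name is hypothetical, commands and hardware ticks are assumed to be perfectly periodic and to start together, and the hardware loop is assumed to act only on the most recent command available at each tick.

```python
def executed_commands(command_rate_hz: int, hardware_rate_hz: int, duration_s: int = 1):
    """Indices of the commands that the hardware loop actually acts on.

    Toy model (assumption): command k is published at time k / command_rate_hz,
    the hardware loop ticks at j / hardware_rate_hz, and each tick reads only
    the latest published command, with no buffering of earlier ones.
    """
    executed = []
    for tick in range(hardware_rate_hz * duration_s):
        # Latest command published at or before this hardware tick.
        latest = (tick * command_rate_hz) // hardware_rate_hz
        if not executed or executed[-1] != latest:
            executed.append(latest)
    return executed


if __name__ == "__main__":
    for rate in (5, 10, 20, 50, 100):
        sent = rate  # commands published in one second
        used = len(executed_commands(rate, hardware_rate_hz=10))
        print(f"{rate:>3} Hz: {used}/{sent} commands reach the hardware loop")
```

In this model, every command is used when the publishing rate is at or below 10 Hz, but at 50 Hz only one command in five reaches the hardware loop. The agent's transitions then no longer correspond to the actions that were actually executed, which is consistent with the degradation described above.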

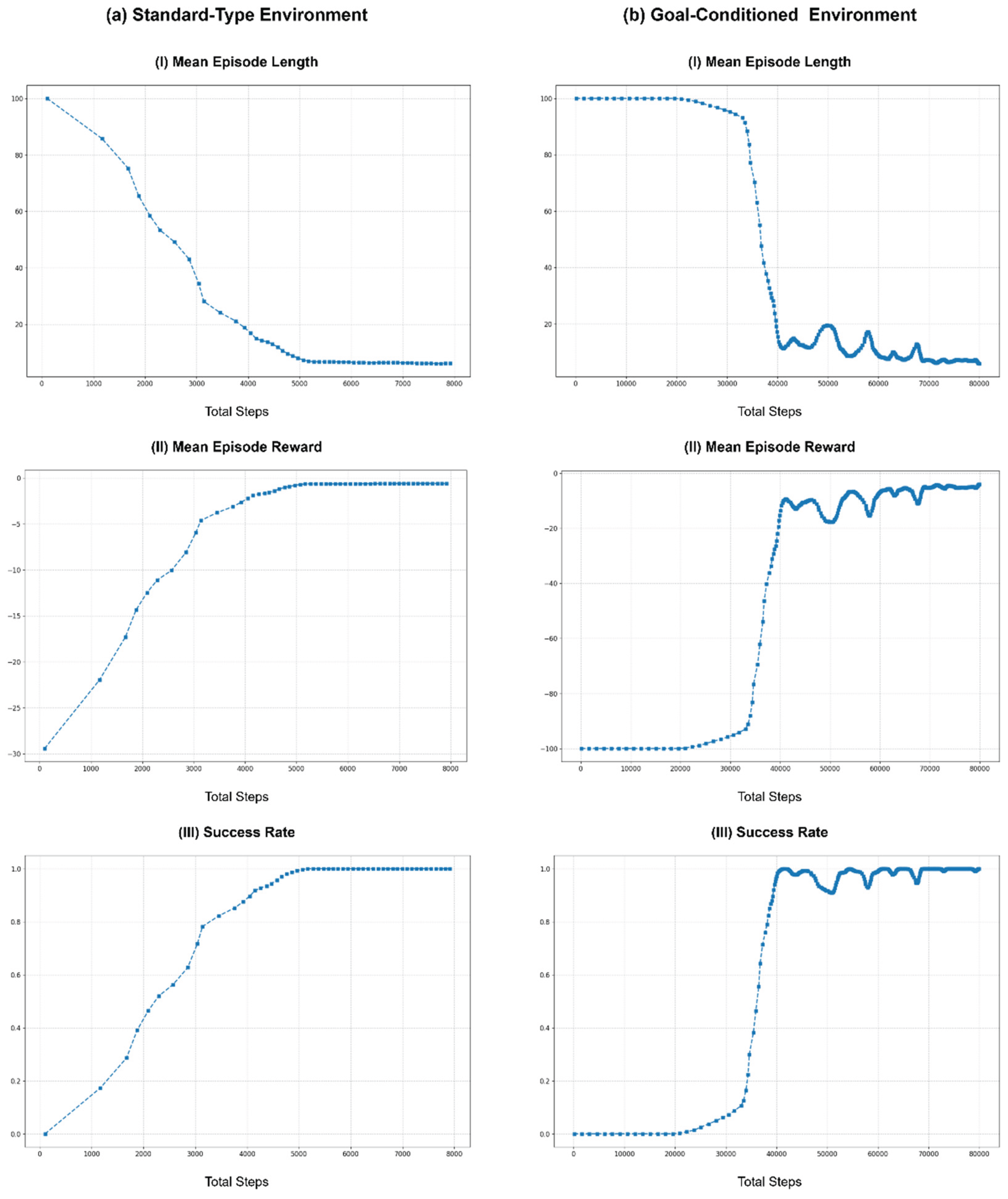

9.3. Empirical Evaluation of Asynchronous Scheduling of the Real-Time Environment Implementation Strategy

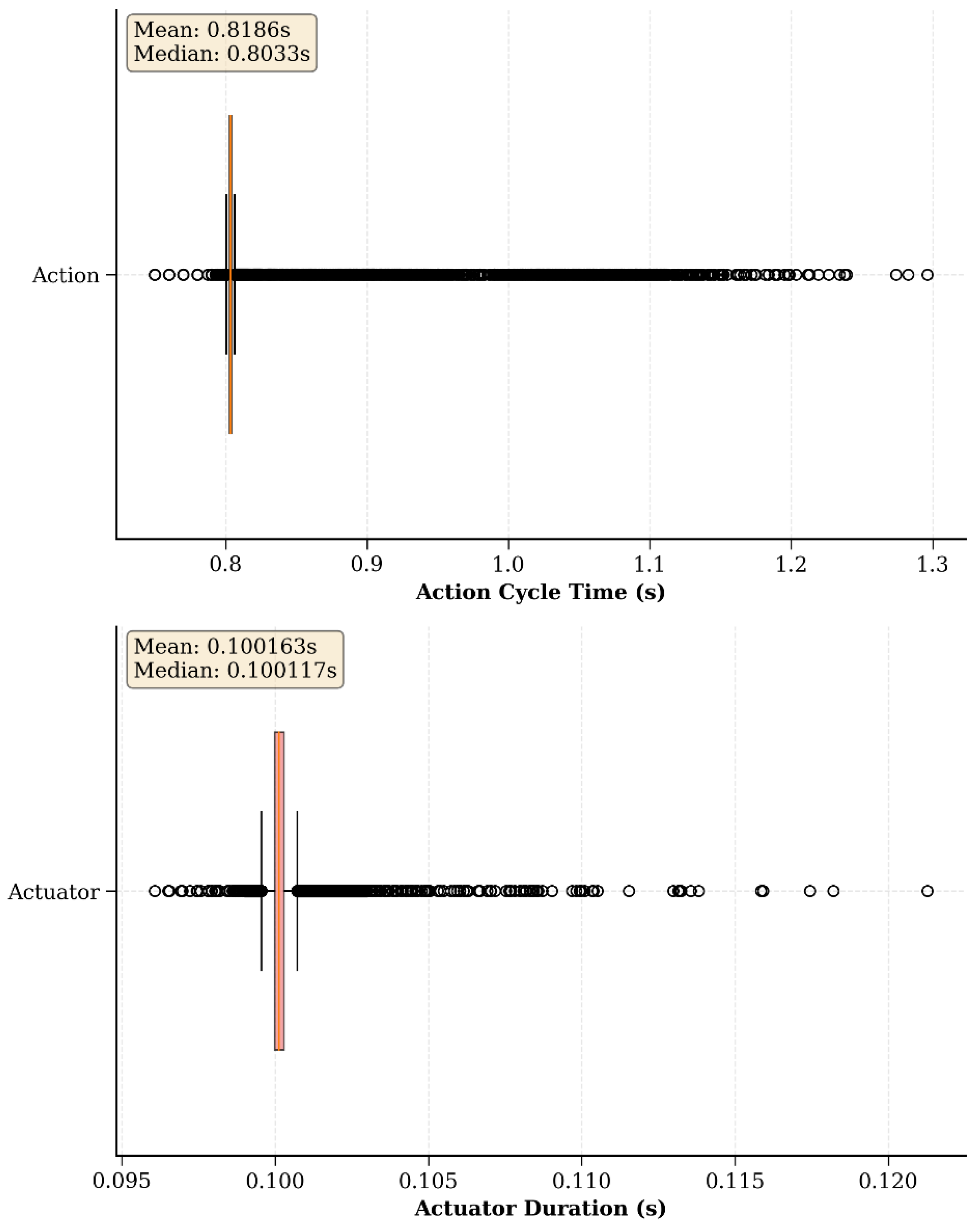

To empirically validate the real-time RL environment implementation strategy, the time taken for each action cycle and each actuator command cycle was logged across numerous episodes. The experiments were conducted using both the Rx200 Reacher and the Ned2 Reacher, with environment loop rates of 10 Hz and 25 Hz, respectively. These rates correspond to actuator command periods of 100 ms and 40 ms, respectively. The action cycle time was set to 800 ms, providing ample time to execute action repeats. The goal was to observe the effectiveness of the asynchronous scheduling approach in managing agent-environment interactions and to quantitatively measure the latency inherent in the system.

The boxplot in Figure 8 (a) depicts the distribution of cycle durations for the actuator and action cycles within the Rx200 Reacher task during training in the real world. It shows a median action cycle time of 803.3 ms and a median actuator cycle time of 100.11 ms, which closely approximate the respective preset values for the benchmark task (800 ms and 100 ms). Furthermore, despite the presence of some outliers, the compact interquartile ranges in both plots indicate that the system performs with a high degree of consistency and low variability. However, it should be noted that the variations observed in the action cycle durations are partly due to the use of the TD3 implementation in the Stable Baselines3 (SB3) package as the learning algorithm. SB3 is a robust, general-purpose RL library, but it is not explicitly designed with robotic applications or real-time training scenarios in mind. SB3 does not typically schedule policy updates immediately after sending an action or use asynchronous processing to update the policy. As a result, the agent waits for the policy update to complete before proceeding with the following action. This synchronous approach can lead to slight variations in the action cycle times, as seen in the box plot.
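The statistics read off such a boxplot can be computed directly from logged cycle durations. The following minimal sketch uses only the Python standard library; the function name and the sample durations are hypothetical and do not reproduce the data from the experiments.

```python
import statistics


def cycle_summary(durations_ms, target_ms):
    """Median, interquartile range, and median offset from the preset cycle time."""
    # The "inclusive" method treats the log as the full population of cycles.
    q1, _, q3 = statistics.quantiles(durations_ms, n=4, method="inclusive")
    median = statistics.median(durations_ms)
    return {"median": median, "iqr": q3 - q1, "offset": median - target_ms}


if __name__ == "__main__":
    # Hypothetical action cycle log (milliseconds) for an 800 ms target.
    log = [799.0, 801.0, 803.0, 805.0, 807.0]
    print(cycle_summary(log, target_ms=800.0))
```

Applied to the reported medians, the offsets from the preset values are 3.3 ms for the action cycle (803.3 ms against 800 ms) and 0.11 ms for the actuator cycle (100.11 ms against 100 ms).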

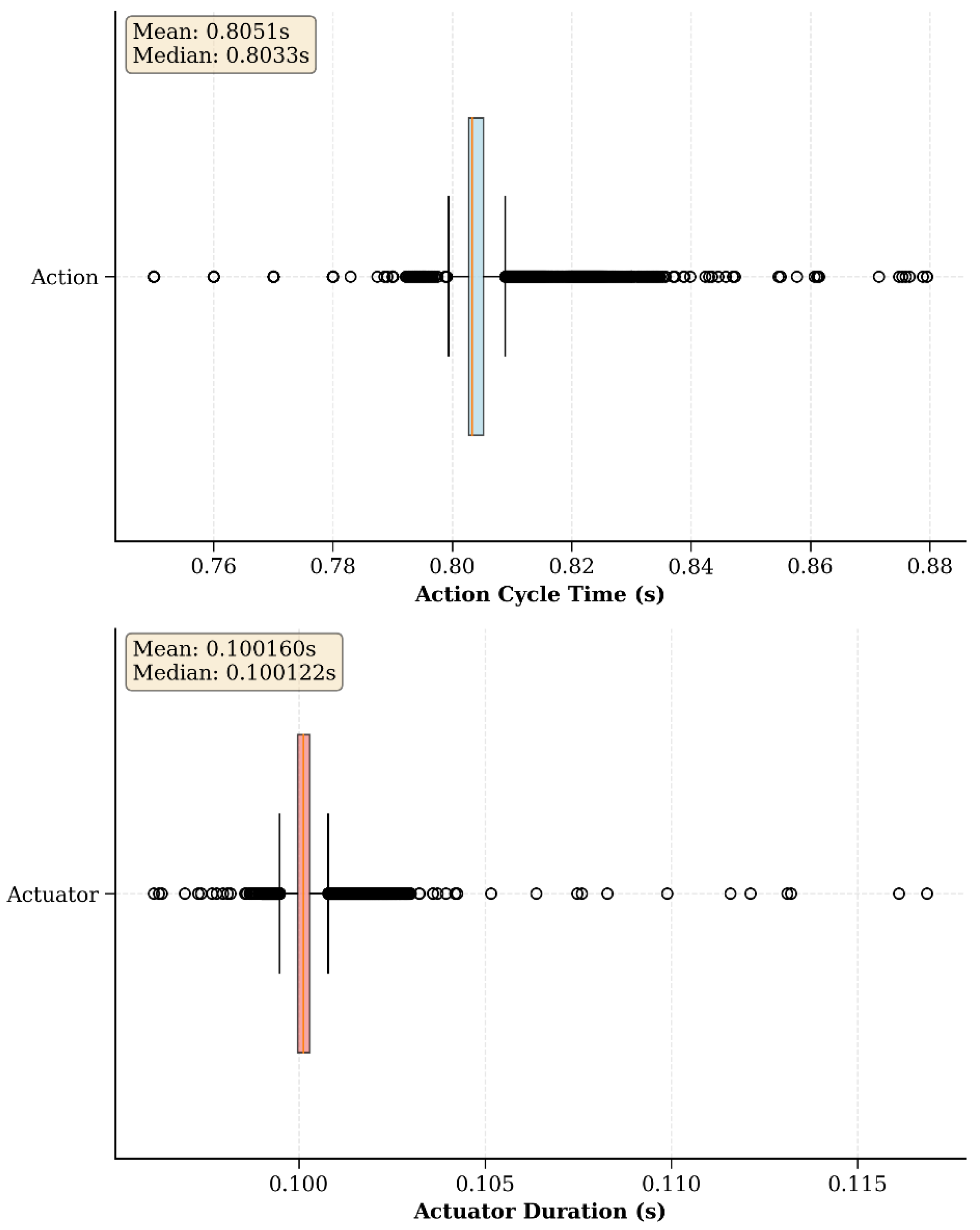

One solution to this issue is to develop custom RL implementations that incorporate asynchronous policy updates [53]. This approach allows the policy to be updated in the background while the agent continues to interact with the environment, thereby reducing latency and improving the efficiency of real-time learning. By scheduling policy updates asynchronously, these methods can ensure that agent-environment interactions are not interrupted, maintaining the consistency and precision required for effective real-time learning. To evaluate the impact of this approach, additional experiments were conducted using a custom TD3 implementation in which policy updates were explicitly scheduled asynchronously relative to data collection. As illustrated in Figure 9, the Rx200 Reacher task demonstrated improved temporal consistency in action execution: it displayed a similar median action cycle time of 803.3 ms, but with a narrower interquartile range than the standard SB3 implementation. This reduction in variability indicates that asynchronous scheduling effectively mitigates the timing disruptions introduced by synchronous policy updates.
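The idea of decoupling policy updates from data collection can be sketched with a background worker thread. This is a minimal illustration of the scheduling pattern only, not the custom TD3 implementation used in the experiments: all names are hypothetical, the policy update is replaced by a `time.sleep` placeholder, and the fixed-period loop stands in for the environment's action cycle.

```python
import queue
import threading
import time


def run_async(num_steps, step_period_s, update_cost_s):
    """Collect transitions on a fixed period while updates run in the background."""
    transitions = queue.Queue()
    stop = threading.Event()
    updates_done = []

    def learner():
        # Keep draining until collection has stopped and the queue is empty.
        while not stop.is_set() or not transitions.empty():
            try:
                item = transitions.get(timeout=0.01)
            except queue.Empty:
                continue
            time.sleep(update_cost_s)  # placeholder for a gradient update
            updates_done.append(item)

    worker = threading.Thread(target=learner, daemon=True)
    worker.start()

    cycle_times = []
    next_tick = time.monotonic()
    for step in range(num_steps):
        start = time.monotonic()
        # Hand the transition to the learner without waiting for the update.
        transitions.put(step)
        next_tick += step_period_s
        time.sleep(max(0.0, next_tick - time.monotonic()))
        cycle_times.append(time.monotonic() - start)

    stop.set()
    worker.join()
    return cycle_times, updates_done


if __name__ == "__main__":
    times, updates = run_async(num_steps=10, step_period_s=0.05, update_cost_s=0.02)
    print([round(t * 1000, 1) for t in times], len(updates))
```

Because the collection loop only enqueues the transition and then sleeps until its next deadline, the cost of an update does not add to the cycle time as long as updates finish faster than transitions arrive. A synchronous variant would call the update inside the loop, so any variation in update cost would appear directly in the action cycle duration.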

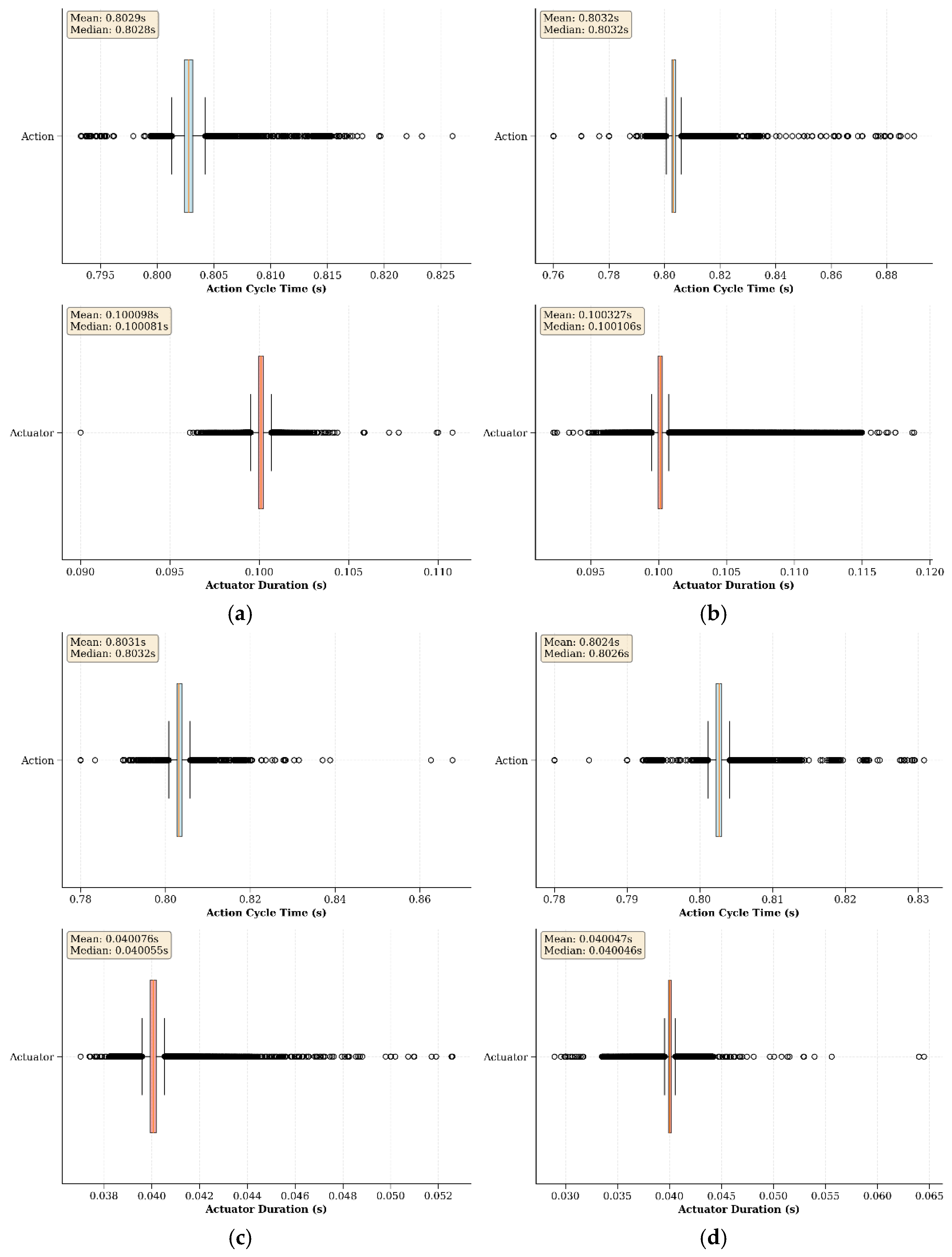

Furthermore, to evaluate the proposed approach under high computational load, the experiments were extended to support concurrent learning across both simulated and real environments. In this setup, four environments (the simulated and real versions of the Rx200 Reacher and the Ned2 Reacher) were trained simultaneously using the asynchronous policy update mechanism. The results, as depicted in Figure 10, show that the distributions of action and actuator cycle times across all four tasks remain consistent with those observed in the single-environment experiments. Each subplot within the composite boxplot shows minimal variation, with median values closely aligning with the predefined cycle times, indicating that asynchronous scheduling sustains reliable timing even under multi-environment execution. These results support the proposed concurrent processing methodology, which minimizes overall system latency and facilitates real-time agent-environment interactions.

9.4. Discussion

All the experiments performed in this study were conducted with a single robot in each RL environment. However, as discussed in the previous sections, the proposed UniROS framework enables the use of multiple robots in the same RL environment, particularly when these robots need to collaborate to complete a task. If the robots used in the task are of the same make and model, the experimenters can use the hardware control loop frequency of the robots as the environment loop rate. However, this setup could introduce additional complexities, particularly when the robots have different hardware control loop frequencies. For instance, consider that the two robots used in this study are combined in a single RL environment, where the Rx200 robot has a hardware control loop frequency of 10 Hz, and the Niryo NED2 operates at 25 Hz. In such a scenario, it is desirable to use the lower hardware control loop frequency (in this scenario, 10 Hz) as the environment loop rate for the entire system to ensure synchronized operation. This approach prevents the faster robot from issuing more frequent commands that the slower robot cannot keep up with, thereby maintaining consistent interaction with both robots. Furthermore, pairing a slower robot like the Rx200 (10 Hz) with a more industrial-grade manipulator, such as the UR5e robot, which has a hardware control loop frequency of 125 Hz, may not be ideal. The disparity in control loop rates could lead to inefficiencies and instability in the learning process. The slower robot would become a bottleneck, hindering the performance of the faster robot and potentially disrupting the overall task execution.
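The rule of thumb for multi-robot environments described above amounts to taking the minimum hardware control loop frequency. A minimal sketch follows; the function names are hypothetical, and the frequencies are those quoted in this section.

```python
def shared_loop_rate_hz(hardware_rates_hz: dict) -> int:
    """Environment loop rate that every robot in the environment can follow."""
    return min(hardware_rates_hz.values())


def rate_disparity(hardware_rates_hz: dict) -> float:
    """Ratio of the fastest to the slowest hardware control loop frequency."""
    rates = list(hardware_rates_hz.values())
    return max(rates) / min(rates)


if __name__ == "__main__":
    pairs = [
        {"rx200": 10, "ned2": 25},
        {"rx200": 10, "ur5e": 125},
    ]
    for robots in pairs:
        print(robots, shared_loop_rate_hz(robots), rate_disparity(robots))
```

Both pairings would run at 10 Hz, but the disparity ratio is 2.5 for the Rx200 and Ned2 and 12.5 for the Rx200 and UR5e, which gives a rough sense of how much of the faster robot's control bandwidth goes unused in each case.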

Additionally, when initializing the joint_state_controller that publishes the robot’s joint state information, the publishing rate can be set to any frequency. The ROS controller will then publish at the specified rate, even if it differs from the hardware control loop frequency of the robot. Setting a higher frequency does not impact learning, as the ROS controller simply publishes the same joint state information multiple times. Setting a lower frequency, however, will lead to degraded performance because the agent will not receive the most up-to-date information from the robot. Therefore, the most straightforward solution is to set the publishing rate of the joint_state_controller to the hardware control loop frequency or higher, as this ensures that every update from the hardware is published.

Similarly, if an external sensor in the task operates at a lower rate than the hardware control loop frequency of the robot, it is essential to account for this in the environment loop of the RL environment. This could mean using the latest available sensor data, even if it is not updated in every loop iteration. In these scenarios, action repeats can be beneficial, especially when dealing with robots with higher hardware control loop frequencies. They make learning easier for the RL agent because it receives observations that display more substantial changes at each step, rather than infrequent and minimal changes that make learning harder.

10. Use Cases

Here, three possible use cases of UniROS are presented, each highlighting a unique aspect of its application. The first use case demonstrates training a robot directly in the real world, showcasing how to utilize the framework for learning without relying on simulation. The second use case demonstrates zero-shot policy transfer from simulation to the real world, highlighting the capability of the proposed framework for transferring learned policies from simulation to the real world. Finally, the last use case demonstrates the framework’s ability to learn policies applicable in both simulated and real-world environments. In these use cases, the environment loop rate was set to 10 Hz and the action cycle time to 800 ms, as this configuration showed the best results for the Rx200 Reacher task in Section 9.1. Similarly, an environment loop rate of 25 Hz and the same action cycle time of 800 ms were used for the Ned2 Reacher due to the multi-task learning setup in one of the use cases (Section 10.3), ensuring that both robots received actions at the same temporal frequency. This consistency in action dispatching across tasks facilitated stable training and improved coordination when learning shared representations in the multi-robot learning setups.

10.1. Training Robots Directly in the Real World