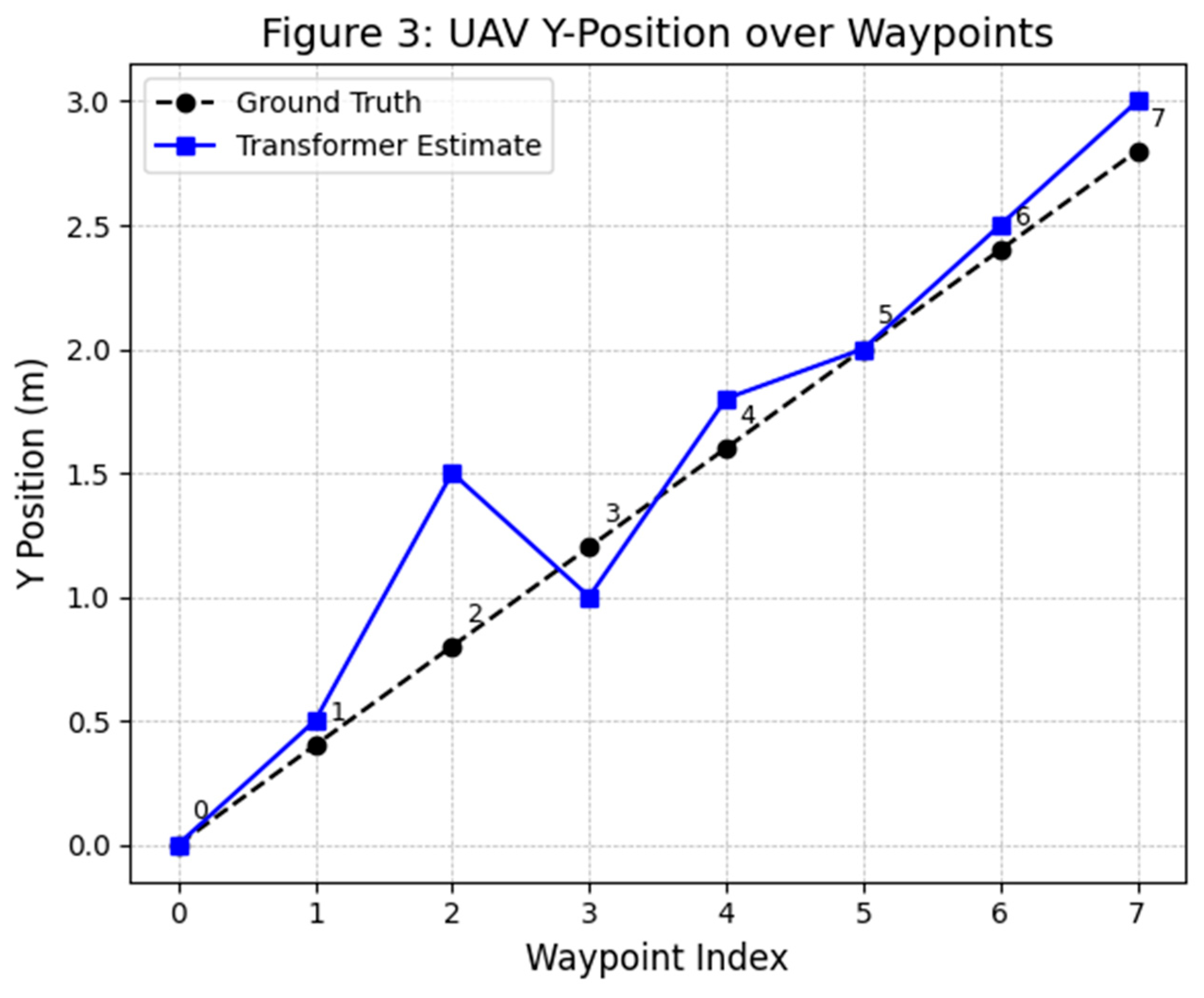

3. Methodology

The Transformer-based sensor fusion architecture systematically integrates heterogeneous sensor data to provide comprehensive, real-time situational awareness and state estimation for UAM vehicles. Below, each phase of the fusion process is described in turn, with its symbols, mathematical formulations, and practical implications.

3.1. Fusion Algorithm Overview

The proposed fusion architecture employs a Transformer-based model inspired by the Perceiver IO architecture. At each timestep t, diverse sensor data are encoded into structured embeddings, and latent vectors dynamically fuse these embeddings. The goal is real-time processing, robustness and certifiable performance, integrating data from LiDAR, EO/IR cameras, GNSS, ADS-B, IMU, and radar.

At the core of our system is a Transformer-based sensor fusion algorithm that ingests heterogeneous sensor inputs and produces a fused state estimation and situational awareness output, which aligns with recent deep learning-based sensor fusion strategies designed specifically for urban air mobility aircraft [11]. The design is inspired by Perceiver IO, which employs a latent-space Transformer to handle arbitrary inputs. We define the following inputs to the model at each time step t:

LiDAR point cloud $P_t = \{(x_i, y_i, z_i)\}$ (and possibly reflectance or intensity). This is high-volume data.

EO/IR camera images $(I_t^{\mathrm{EO}}, I_t^{\mathrm{IR}})$: large 2D arrays of pixels, potentially combined as multi-channel embeddings.

GNSS reading $G_t$: latitude, longitude, altitude, and time, plus estimated accuracy.

ADS-B messages $A_t$: messages from other aircraft, each containing an identifier and state vector (e.g. another vehicle’s reported position/velocity).

IMU (accelerometer and gyroscope) data $U_t$: 3-axis acceleration and rotation rates, typically integrated at a high rate.

Radar detections $R_t$: embeddings of detected objects with range, azimuth, elevation, and Doppler information.
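The per-timestep inputs listed above can be pictured as a single container that the encoders consume. The following is a minimal sketch only: the class names, field names, and units are illustrative assumptions, not the data model used in this work.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class GnssFix:
    lat_deg: float
    lon_deg: float
    alt_m: float
    time_s: float
    accuracy_m: float  # estimated accuracy reported by the receiver


@dataclass
class AdsbMessage:
    aircraft_id: str   # identifier of the reporting aircraft
    position: Vec3     # reported position
    velocity: Vec3     # reported velocity


@dataclass
class RadarDetection:
    range_m: float
    azimuth_rad: float
    elevation_rad: float
    doppler_mps: float


@dataclass
class SensorFrame:
    """All inputs available to the fusion model at one timestep t (hypothetical layout)."""
    t: float
    lidar_points: List[Vec3] = field(default_factory=list)      # point cloud
    eo_image: Optional[List[List[float]]] = None                # 2D pixel array
    ir_image: Optional[List[List[float]]] = None                # 2D pixel array
    gnss: Optional[GnssFix] = None                              # None when there is no fix
    adsb: List[AdsbMessage] = field(default_factory=list)
    imu: Optional[Tuple[float, ...]] = None                     # (ax, ay, az, wx, wy, wz)
    radar: List[RadarDetection] = field(default_factory=list)

    def available_modalities(self) -> List[str]:
        """Names of the modalities that actually delivered data in this frame."""
        present = {
            "lidar": bool(self.lidar_points),
            "eo": self.eo_image is not None,
            "ir": self.ir_image is not None,
            "gnss": self.gnss is not None,
            "adsb": bool(self.adsb),
            "imu": self.imu is not None,
            "radar": bool(self.radar),
        }
        return [name for name, ok in present.items() if ok]
```

Making every modality optional reflects the fact that sensors report at different rates, so a given frame may legitimately lack a GNSS fix or an ADS-B message.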

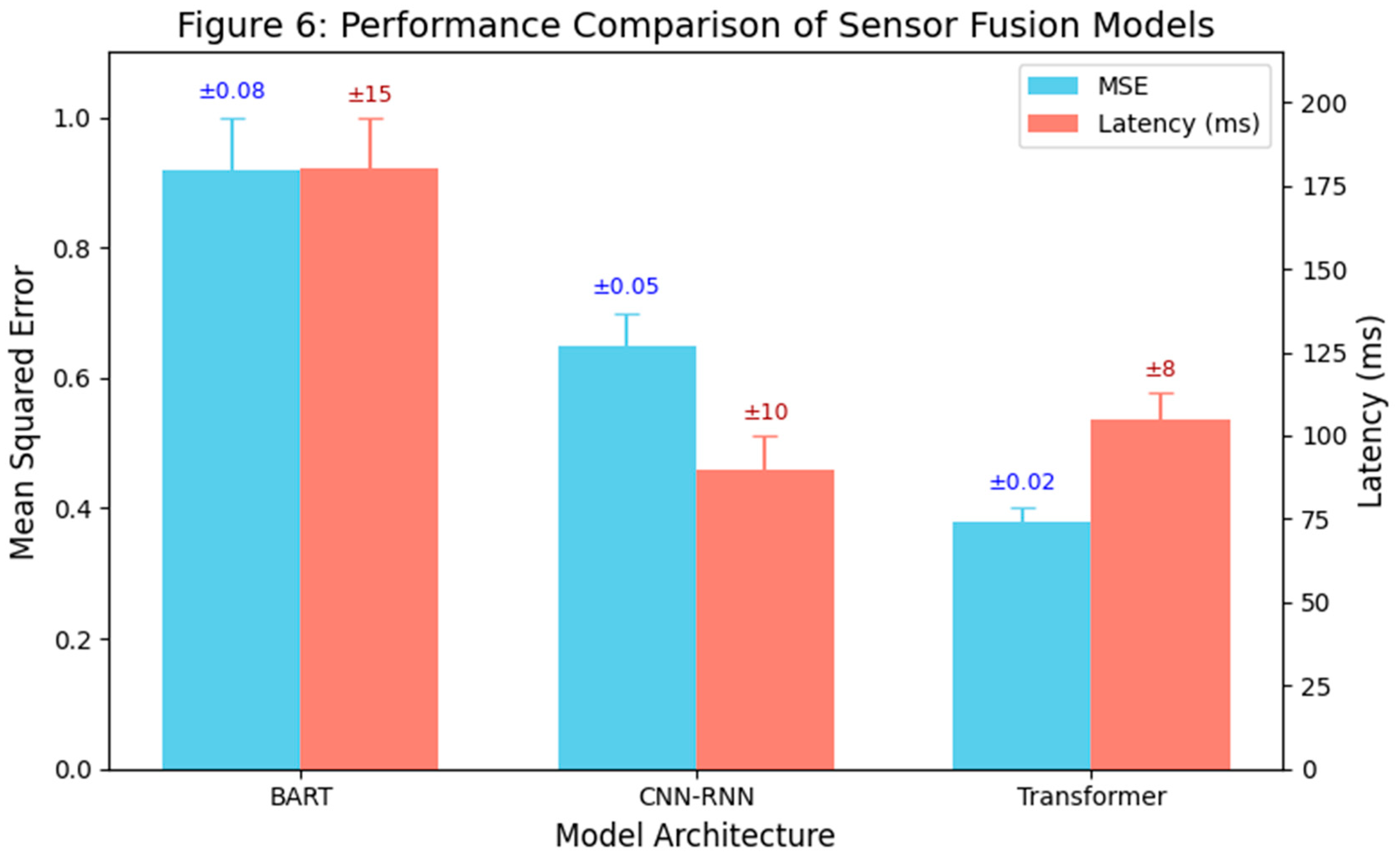

Figure 1 illustrates the overall architecture, including sensors, the fusion core, and the safety monitor.

3.2. Cross-Attention Encoding

Cross-attention is the primary mechanism through which latent vectors aggregate sensor embeddings dynamically. Each sensor modality has different data rates and dimensions. To fuse them, we first apply a preprocessing and encoding step. Continuous data like images and point clouds are transformed into a set of discrete feature embeddings (tokens). For example, we use a sparse voxel encoder for LiDAR (projecting $P_t$ into a set of voxels with feature descriptors) and a convolutional neural encoder for images (producing a patch-wise embedding). Let $Z_t$ denote the collection of all encoded sensor tokens at time $t$. We also append any low-rate structured data (GNSS position, etc.) as small token vectors (or as extra features to a latent). This yields:

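The sensor embeddings encoded at timestep $t$ are:

$$Z_t = \{z_t^{(1)}, z_t^{(2)}, \dots, z_t^{(M)}\}$$

where $M$ is the total number of tokens and each $z_t^{(j)}$ represents sensor-specific learned features: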

LiDAR: Voxelized embeddings from 3D points.

EO/IR cameras: Patch embeddings from CNNs.

GNSS: Numeric embeddings for position and reliability.

ADS-B: State vector embeddings from nearby aircraft.

IMU: Embeddings of acceleration and rotation rates.

Radar: Embeddings capturing detected object characteristics.

We also include positional encodings or timestamp information as appropriate.

Next, we introduce a fixed set of $N$ latent vectors $L = [\ell_1, \ell_2, \dots, \ell_N]$ that serve as the query backbone of the Transformer, with each $\ell_i \in \mathbb{R}^{d_{\mathrm{latent}}}$. In our implementation, we use $N = 256$ latents of dimension $d_{\mathrm{latent}} = 1280$ (following Perceiver IO defaults). The fusion Transformer operates in two phases at each timestep:

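The latent queries attend to the sensor tokens. Attention weights and updates are computed by the cross-attention encoding process, which projects latents and sensor embeddings into query ($Q$), key ($K$), and value ($V$) spaces:

$$Q = L W_Q, \qquad K = Z_t W_K, \qquad V = Z_t W_V$$

where $W_Q$, $W_K$, $W_V$ are learned projection matrices, and $q_i$, $k_j$, $v_j$ denote the rows of $Q$, $K$, $V$.

The cross-attention output updates each latent $\ell_i$ by attending over all $M$ sensor tokens:

$$\ell_i' = \sum_{j=1}^{M} \alpha_{ij}\, v_j$$

where the attention weights $\alpha_{ij}$ quantify the relevance of sensor embeddings to latents as:

$$\alpha_{ij} = \frac{\exp\left(q_i \cdot k_j / \sqrt{d_k}\right)}{\sum_{j'=1}^{M} \exp\left(q_i \cdot k_{j'} / \sqrt{d_k}\right)}$$

The factor $1/\sqrt{d_k}$, with $d_k$ the key dimension, ensures numerical stability during computations.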

This operation integrates information from every sensor modality into the latent space. Intuitively, each latent can be thought of as querying a combination of sensor features (e.g. one latent might focus on “obstacle ahead” features by attending to both LiDAR depth and camera appearance for an object).
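The cross-attention step can be sketched in a few lines of plain Python. This is an illustrative, single-head sketch under stated assumptions: the toy sizes, the random weights, and the helper names (`matmul`, `softmax`, `cross_attention`) are ours, and a real implementation would use multi-head attention on an accelerated tensor library.

```python
import math
import random


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(scores):
    """Softmax with max-subtraction so that exp() cannot overflow."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(latents, tokens, w_q, w_k, w_v):
    """Latents (N x d_latent) query sensor tokens (M x d_token).

    Returns the updated latents and the N x M attention weights.
    """
    q = matmul(latents, w_q)  # N x d_k
    k = matmul(tokens, w_k)   # M x d_k
    v = matmul(tokens, w_v)   # M x d_latent
    scale = 1.0 / math.sqrt(len(q[0]))
    alpha = [
        softmax([scale * sum(a * b for a, b in zip(q_i, k_j)) for k_j in k])
        for q_i in q
    ]
    return matmul(alpha, v), alpha


def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


# Toy sizes for illustration only (not the model's actual dimensions).
rng = random.Random(0)
N, M, d_latent, d_token, d_k = 4, 10, 8, 6, 5
latents = random_matrix(N, d_latent, rng)
tokens = random_matrix(M, d_token, rng)
w_q = random_matrix(d_latent, d_k, rng)
w_k = random_matrix(d_token, d_k, rng)
w_v = random_matrix(d_token, d_latent, rng)

updated, alpha = cross_attention(latents, tokens, w_q, w_k, w_v)
```

Each row of `alpha` is a probability distribution over the sensor tokens, so every updated latent is a convex combination of the value vectors. The work done grows with the product of the latent count and the token count, which is why a small latent set keeps the step tractable.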

3.3. Latent Self-Attention and Feedforward

After cross-attention, we perform several layers of standard Transformer self-attention on the latent set L (which now contains sensor-integrated info). This further mixes and propagates information globally.

Multi-head self-attention enables latents to interact dynamically, capturing complex global dependencies across sensor-fused data. It allows the model to form higher-level features (e.g. correlating an obstacle’s position with the vehicle’s own motion).

Position-wise feedforward networks within each Transformer block provide nonlinearity and feature transformation. The latent Transformer layers are analogous to an encoder that produces a fused latent representation of the world state. This additional refinement stage ensures robust, context-aware representations suitable for accurate UAM navigation and situational awareness [10].
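For reference, one latent Transformer layer can be written compactly as follows. This is a sketch assuming the common pre-norm residual form; the layer normalization $\mathrm{LN}$, the residual connections, the activation $\sigma$, the layer count $K$, and the weight names $W_1, W_2, b_1, b_2$ are assumptions on our part rather than details specified above.

```latex
\begin{aligned}
\tilde{L}^{(k)} &= L^{(k-1)} + \mathrm{MHSA}\big(\mathrm{LN}(L^{(k-1)})\big), \\
L^{(k)} &= \tilde{L}^{(k)} + \mathrm{FFN}\big(\mathrm{LN}(\tilde{L}^{(k)})\big), \qquad k = 1, \dots, K, \\
\mathrm{FFN}(x) &= \sigma(x W_1 + b_1)\, W_2 + b_2 .
\end{aligned}
```

Here $L^{(0)}$ is the latent set produced by cross-attention, $\mathrm{MHSA}$ is multi-head self-attention over the $N$ latents, and $\mathrm{FFN}$ is applied to each latent independently.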

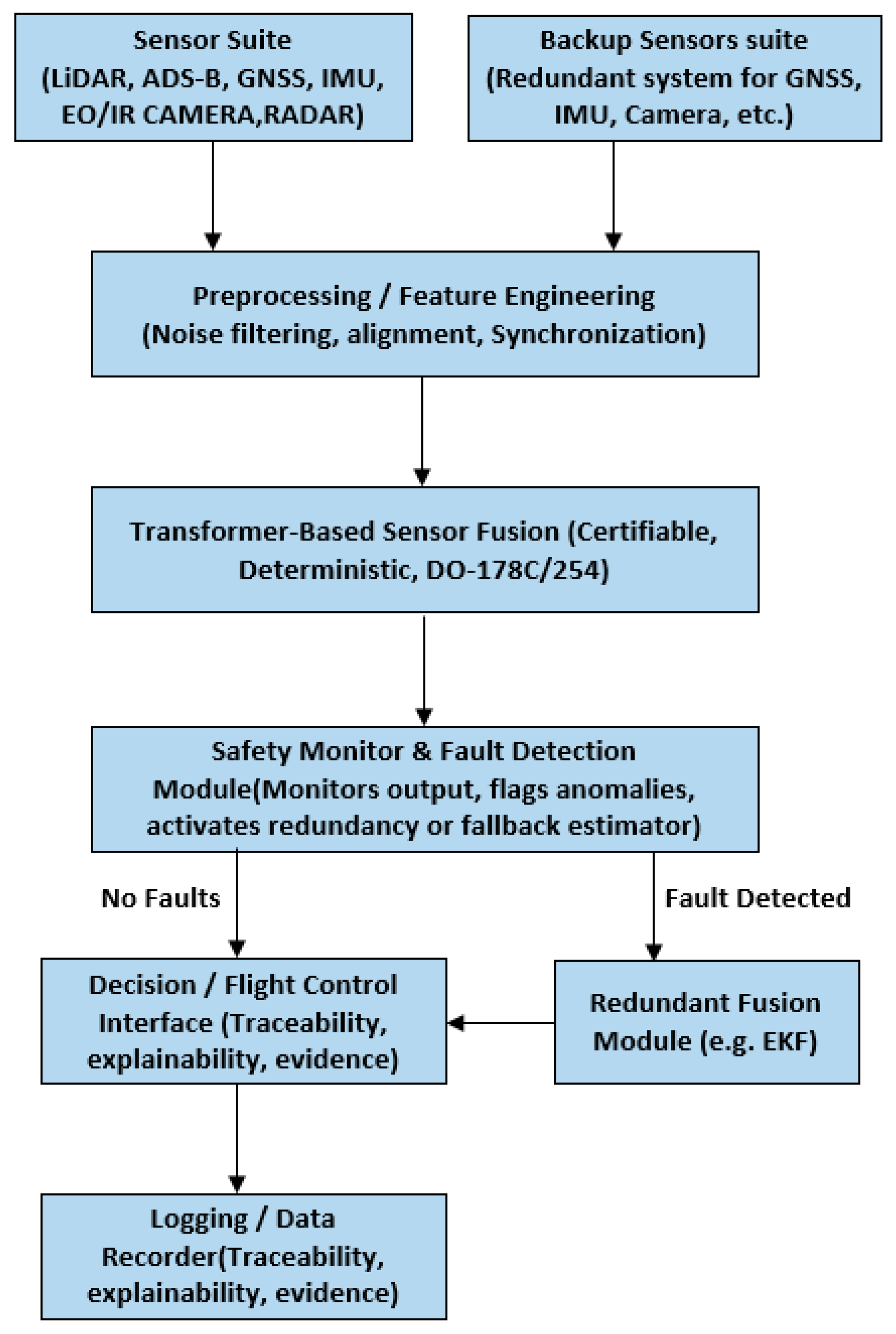

Figure 2 is a heatmap illustrating the attention distribution of a Transformer layer correlating LiDAR and radar input channels in a UAM sensor fusion setup. It visualizes cross-modal attention between LiDAR and radar frames. Diagonal values indicate self-attention; off-diagonal interactions imply sensor cooperation. The diagonally dominant attention weights highlight strong temporal self-alignment, while the off-diagonal weights imply cross-sensor fusion benefits.

3.4. Decoding and Output Generation

After these steps, the final latent vectors encode the fused state. We then use a task-specific decoder to produce outputs. In our UAM case, two key outputs are generated: (a) the Ego-state estimate (own vehicle’s 6-DoF pose, velocities, and their uncertainties), and (b) an environment map or list of tracked objects (positions of other aircraft or obstacles with confidence scores). The decoder is implemented as a set of learned query vectors for each output element. For example, to estimate own pose, we use a decoder query that attends to all latents and regresses a pose. For mapping, we use a set of queries (or an autoregressive decoder) to output a variable number of detected obstacles, each with location and classification. Perceiver IO’s flexibility allows decoding to structured outputs of arbitrary size.
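As an illustration of the ego-pose decoding described above, a single learned decoder query can be written as one cross-attention step over the final latents followed by a linear regression head. This is a sketch under assumptions: the symbols $q^{\mathrm{dec}}$, $W_K^{\mathrm{dec}}$, $W_V^{\mathrm{dec}}$, $W_{\mathrm{out}}$, $b_{\mathrm{out}}$ and the single-head form are ours, introduced only for illustration.

```latex
\begin{aligned}
k_i &= \ell_i W_K^{\mathrm{dec}}, \qquad v_i = \ell_i W_V^{\mathrm{dec}}, \\
\beta_i &= \frac{\exp\big(q^{\mathrm{dec}} \cdot k_i / \sqrt{d_k}\big)}
               {\sum_{i'=1}^{N} \exp\big(q^{\mathrm{dec}} \cdot k_{i'} / \sqrt{d_k}\big)}, \\
o &= \sum_{i=1}^{N} \beta_i\, v_i, \qquad \hat{x}_t = o\, W_{\mathrm{out}} + b_{\mathrm{out}} .
\end{aligned}
```

Because the number of decoder queries is independent of the number of latents, the same mechanism extends to a set of queries that each emit one tracked obstacle.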

Mathematically, the fusion algorithm learns an approximate function that maps the entire sensor history to a state estimate: $\hat{x}_t = f_\theta(Z_{1:t})$. It does so by encoding prior knowledge in attention weights rather than explicit motion models. Unlike traditional Bayesian filters, our Transformer-based fusion dynamically encodes prior knowledge, reliability, and relevance of sensors via learned attention mechanisms, enabling robust performance in challenging environments. We impose training objectives that include supervised losses (comparing to ground-truth state) and consistency losses (e.g. predicting the same obstacle position from different sensor combinations), which are discussed later in the Results section.
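A combined objective of this kind might look like the sketch below. The mean-squared-error form, the variance-style consistency term, and the weight `consistency_weight` are assumptions made for illustration; the losses actually used are not specified in this section.

```python
def mse(pred, target):
    """Mean squared error between two equal-length vectors."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def fusion_loss(pred_state, true_state, obstacle_estimates, consistency_weight=0.1):
    """Supervised state loss plus a consistency penalty.

    obstacle_estimates holds position estimates of the SAME obstacle, each
    produced from a different sensor combination. The penalty is their mean
    squared deviation from the average estimate, so it is zero when all
    sensor combinations agree.
    """
    supervised = mse(pred_state, true_state)

    count = len(obstacle_estimates)
    dims = len(obstacle_estimates[0])
    mean = [sum(est[d] for est in obstacle_estimates) / count for d in range(dims)]
    consistency = sum(mse(est, mean) for est in obstacle_estimates) / count

    return supervised + consistency_weight * consistency
```

For example, `fusion_loss([1.0, 2.0, 3.0], [1.0, 2.0, 3.0], [[4.0, 5.0], [4.0, 5.0]])` evaluates to zero, since the state matches ground truth and both sensor combinations place the obstacle at the same position.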

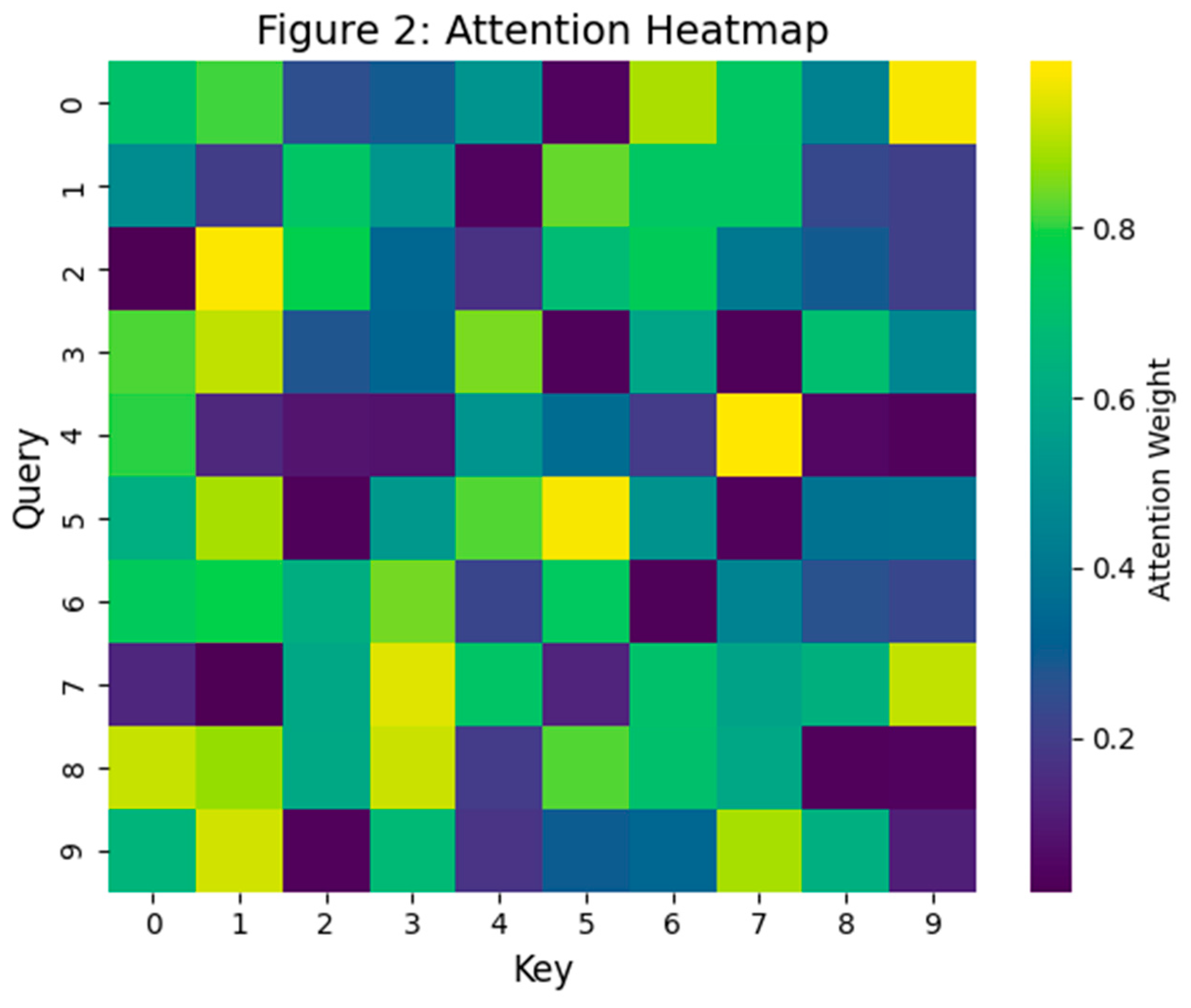

Figure 3 shows a top-down view of the ego vehicle’s flight trajectory, comparing the 2D trajectory estimated by the Transformer-based fusion algorithm with the true trajectory of the eVTOL UAV in an urban environment. The fused estimate (blue line with square markers) is overlaid on the ground-truth path (black dashed line with circular markers; e.g., from simulation), and waypoint indices (0–7) are labeled along the paths. As depicted, the estimated trajectory closely follows the ground truth through the urban corridor. Minor deviations are observed at a couple of waypoints (e.g., around indices 2 and 3, the blue line dips slightly relative to truth), but overall the error remains small (on the order of a few tenths of a meter). In the GNSS-degraded zones (such as when flying between tall buildings that create an urban canyon), the Transformer’s estimate still stays on track with only a modest drift, thanks to the integration of vision and LiDAR data to correct the INS drift. The error margins (uncertainty bounds) were reported to be stable and small throughout the run, indicating high confidence in the fused solution. Notably, this Transformer-based approach outperforms a traditional Extended Kalman Filter (EKF) baseline: the EKF would have shown a significantly larger deviation in such degraded conditions, whereas the Transformer's trajectory remained much closer to ground truth. This demonstrates the efficacy of the fusion algorithm in maintaining accurate navigation even when one of the sensors (GNSS) becomes unreliable.

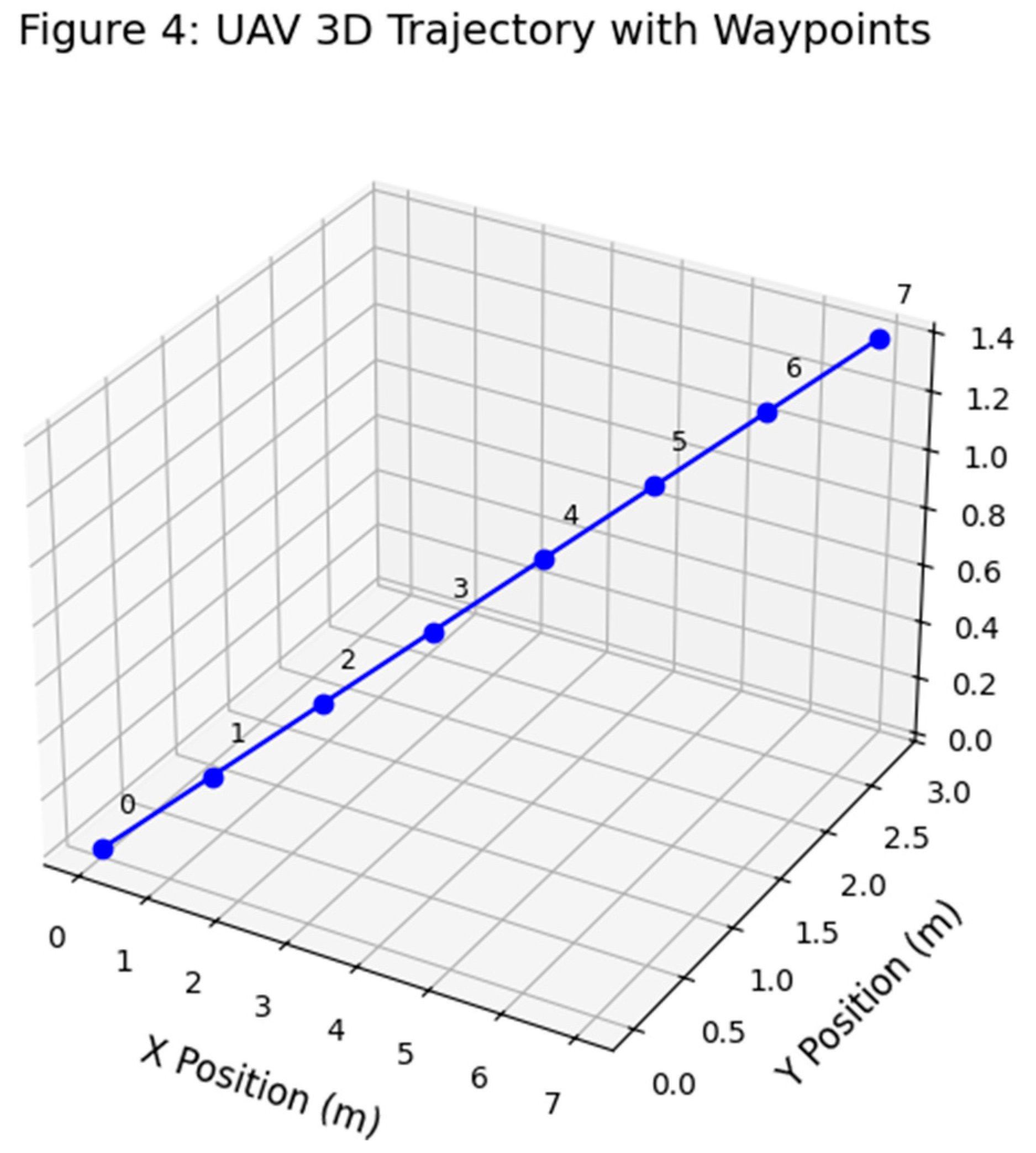

Figure 4 shows a spatial visualization of the UAV trajectory across three axes, reconstructed using Transformer-based sensor fusion. Each waypoint corresponds to a multimodal input frame, and the continuity of the path highlights the model’s ability to maintain positional consistency under noisy sensor conditions. This spatial stability is essential for downstream control and obstacle avoidance modules in UAM systems.

Figure 4 provides a 3D visualization of the UAV’s reconstructed trajectory under full sensor availability. Each waypoint represents a timestamped output from the Transformer-based fusion system, illustrating the model’s ability to maintain geometric consistency in both horizontal and vertical dimensions. This spatial coherence is particularly critical in urban air mobility (UAM) corridors, where deviations in altitude can increase collision risk. The smooth transitions between waypoints indicate temporal continuity across fused sensor frames, and the stable Z-axis alignment confirms that altitude-aware data from LiDAR and radar are successfully integrated, addressing a common failure mode of IMU-only pipelines. This level of fidelity supports downstream control logic and enables real-time trajectory tracking essential for UAM certification.

3.5. Determinism and Quantization for Real-Time

Large Transformer models can be computationally intensive, challenging real-time constraints on embedded hardware. We mitigate this using several strategies. First, by using a latent bottleneck ($N = 256$), the cross-attention step cost is $O(M \cdot N)$, which is linear in input size, and $M$ (the total number of tokens) is controlled via downsampling (e.g. using only ~1024 tokens per sensor). The latent self-attention is $O(N^2)$, which is manageable. We further reduce complexity by utilizing 16-bit quantization and pruning insignificant attention heads after training. The resulting model has ~5 million parameters, fitting in a few MB of memory. A lightweight version of our model (inspired by the HiLO object fusion Transformer) can run in under ~3.5 ms per inference on modern embedded CPUs/GPUs, and our design leverages an onboard GPU/FPGA for acceleration.
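To make the benefit of the latent bottleneck concrete, the sketch below counts query-key pairs for each attention pattern. It assumes, as an upper bound, that all six modalities contribute the full ~1024 tokens (low-rate sensors such as GNSS would contribute far fewer); the function name and dictionary keys are illustrative, and the symbol M for the total token count is our notation.

```python
def attention_pair_counts(num_sensors, tokens_per_sensor, num_latents):
    """Count query-key pairs scored by each attention pattern."""
    m = num_sensors * tokens_per_sensor  # total sensor tokens M
    return {
        "tokens": m,
        "cross_attention": m * num_latents,       # latents attend to tokens: M * N
        "latent_self_attention": num_latents**2,  # latents attend to latents: N^2
        "full_self_attention": m * m,             # no bottleneck: M^2
    }


counts = attention_pair_counts(num_sensors=6, tokens_per_sensor=1024, num_latents=256)
savings = counts["full_self_attention"] / counts["cross_attention"]
```

Under these assumptions, cross-attention scores 24 times fewer pairs than full self-attention over the raw tokens would, and the saving grows linearly with the token count because the latent count stays fixed.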

To ensure deterministic execution suitable for certification (DO-178C, DO-254), the model avoids non-deterministic operations by employing fixed random seeds for inference-time dropout and deterministic softmax implementations. Execution frequency is fixed (e.g., 50 Hz), and a Worst-Case Execution Time (WCET) analysis is conducted. Software implementation follows MISRA-C++ guidelines, with static buffer allocations sized to worst-case sensor loads, and the neural network weights remain fixed post-training to eliminate non-deterministic behaviors during flight.
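One way to check the determinism described above is to replay recorded inputs several times and compare the outputs bit for bit. The sketch below is illustrative: `deterministic_softmax` stands in for the full frozen network, and the function names and the choice of SHA-256 over IEEE-754 bit patterns are our assumptions.

```python
import hashlib
import math
import struct


def deterministic_softmax(scores):
    """Softmax with a fixed, left-to-right summation order."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = 0.0
    for e in exps:  # fixed accumulation order gives the same rounding every run
        total += e
    return [e / total for e in exps]


def output_digest(values):
    """SHA-256 over the little-endian IEEE-754 bit patterns of the outputs."""
    packed = struct.pack(f"<{len(values)}d", *values)
    return hashlib.sha256(packed).hexdigest()


def replay_is_bitwise_identical(infer, recorded_inputs, runs=3):
    """Run `infer` over the same recording several times and compare digests."""
    digests = set()
    for _ in range(runs):
        outputs = []
        for frame in recorded_inputs:
            outputs.extend(infer(frame))
        digests.add(output_digest(outputs))
    return len(digests) == 1


recorded = [[0.1, 2.0, -1.5], [3.0, 3.0, 3.0]]
identical = replay_is_bitwise_identical(deterministic_softmax, recorded)
```

Comparing digests rather than values within a tolerance is deliberate: a tolerance would hide exactly the run-to-run rounding differences that reordered parallel reductions introduce.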

While the architectural design supports embedded real-time deployment using GPU/FPGA acceleration and safety-partitioned execution, the current evaluation is conducted exclusively in a high-fidelity simulation environment. Hardware deployment and certification testing are planned for future work.

3.6. Certifiability Measures

It is crucial to our methodology to integrate certifiability requirements into the design:

Requirements Traceability: We decompose the overall fusion function into high-level and low-level requirements. An example of a high-level requirement is that the system shall provide a position estimate within 2 m accuracy 95% of the time. An example of a low-level requirement is that the system shall detect loss of GNSS within 1 s and re-weight sensors accordingly. Each network component or module is linked to these requirements, and test cases are derived. By treating the neural network as an implementation detail fulfilling these requirements, we maintain traceability.

Determinism & Testability: As highlighted by AFuzion’s guidance, true AI that learns in operation is problematic for certification because identical inputs must always yield identical outputs. Our model is frozen and deterministic at runtime. We also implement a test oracle for the network: using recorded sensor data, we verify that the same input sequence produces the same outputs (bit-wise), and we capture coverage metrics on the network’s computation graph analogous to structural coverage on code.

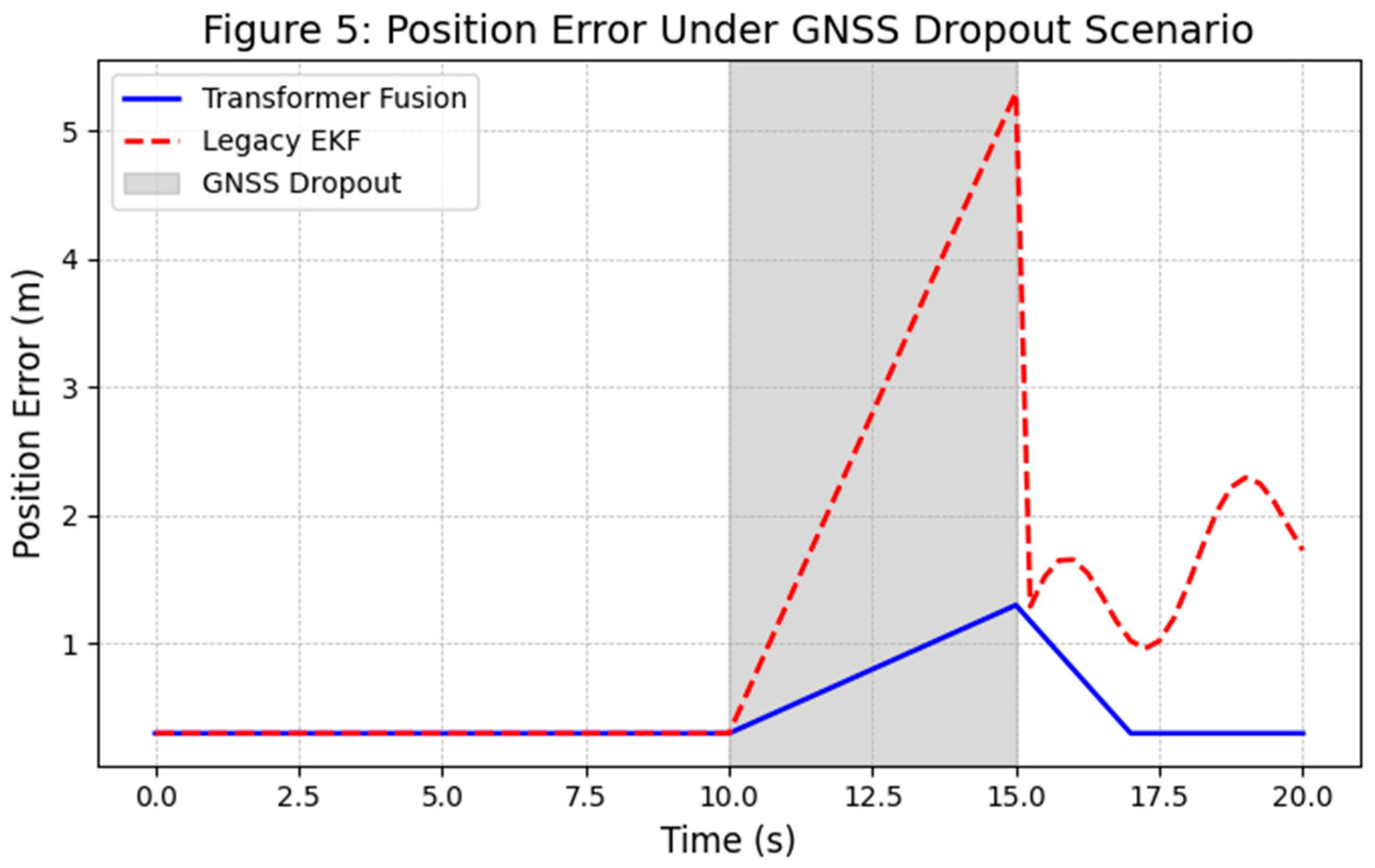

Robustness and Fail-Safe Mechanisms: DO-178C requires robust handling of abnormal conditions. We incorporate a Safety Monitor & Redundancy module that is external to the fusion network. This monitor runs a simplified state estimator (such as an EKF) in parallel, using a subset of sensors, and compares its results with the network's outputs. It also monitors network outputs for anomalies such as sudden jumps or values outside physical bounds. If a discrepancy or anomaly is detected, the system can revert to a safe mode, for instance by using the backup EKF for state estimation and issuing alerts or commands to enter a holding pattern. This approach aligns with recommendations for deploying AI in safety-critical systems: having a deterministic guardian that can override or limit the AI’s actions.

Explainability: For certification credit, one must justify that the system's decisions are understandable. We log the attention weights of the Transformer as a form of explanation of which sensors contributed to a decision. For example, if the fused position shifts, we can show whether it was due to LiDAR seeing an obstacle or GNSS regaining lock. Additionally, by design, our architecture allows tracing outputs back to specific inputs, a property Intelligent Artifacts refers to as traceable AI. This traceability is crucial for giving the certification authority confidence in the AI, because it makes the network’s decision making inspectable.
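As an illustration of the attention logging mentioned under Explainability, logged cross-attention weights can be summarized per sensor modality. This is a minimal sketch: the function name and the token-to-modality labelling are assumptions, and attention mass is only a coarse indicator of influence rather than a full causal explanation.

```python
def modality_contributions(alpha, token_modalities):
    """Share of total attention mass received by each sensor modality.

    alpha: N x M attention weights (one row per latent, rows sum to 1).
    token_modalities: length-M list naming the modality of each token.
    """
    totals = {}
    for row in alpha:
        for weight, modality in zip(row, token_modalities):
            totals[modality] = totals.get(modality, 0.0) + weight
    mass = sum(totals.values())
    return {name: value / mass for name, value in totals.items()}


# Two latents attending over three tokens: two LiDAR tokens and one GNSS token.
alpha = [[0.5, 0.25, 0.25],
         [0.0, 0.5, 0.5]]
shares = modality_contributions(alpha, ["lidar", "lidar", "gnss"])
```

Logging these shares at every timestep gives a time series in which an event such as GNSS regaining lock would appear as a rise in the GNSS share.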

Multiple sensors feed into a Transformer-based fusion core which produces fused state estimates and environment data. A Safety Monitor with redundant logic checks the outputs and can initiate fallback modes. This co-architecture is designed to meet DO-178C/254 certifiability, with deterministic processing on embedded hardware (e.g., a DAL A certifiable CPU/GPU platform) and traceable data flows.
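The monitor-and-fallback logic can be sketched as follows. This is an illustrative sketch only: the class names, the three checks, and all threshold values are hypothetical placeholders rather than parameters of the proposed system, and a certifiable implementation would follow the MISRA-C++ and static-allocation constraints noted earlier.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MonitorLimits:
    # All thresholds are hypothetical placeholders, not values from this work.
    max_disagreement_m: float = 5.0   # fusion vs. backup EKF position
    max_jump_m: float = 3.0           # change between consecutive outputs
    max_altitude_m: float = 1500.0    # physical bound on altitude


class SafetyMonitor:
    def __init__(self, limits):
        self.limits = limits
        self.previous = None  # last position handed downstream

    def check(self, fused, ekf):
        """Return the list of anomalies found in the fused position (x, y, z)."""
        if not all(math.isfinite(v) for v in fused):
            return ["non-finite output"]
        reasons = []
        if not 0.0 <= fused[2] <= self.limits.max_altitude_m:
            reasons.append("altitude out of bounds")
        if math.dist(fused, ekf) > self.limits.max_disagreement_m:
            reasons.append("disagrees with backup EKF")
        if (self.previous is not None
                and math.dist(fused, self.previous) > self.limits.max_jump_m):
            reasons.append("sudden jump")
        return reasons

    def select(self, fused, ekf):
        """Pass the fused estimate downstream if clean, else fall back to the EKF."""
        reasons = self.check(fused, ekf)
        source, position = ("ekf", ekf) if reasons else ("fusion", fused)
        self.previous = position
        return source, position, reasons
```

The monitor contains only comparisons against fixed limits, which keeps it simple enough to verify independently of the network it guards.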

3.7. Hardware-Software Architecture

To implement the described Transformer-based sensor fusion methodology in a UAM avionics environment, we propose a comprehensive hardware-software co-architecture explicitly aligned with certification constraints and real-time performance requirements. The architecture is modular and comprises the following subsystems:

Sensors and Interface Subsystem: Each onboard sensor (LiDAR, EO/IR camera, GNSS receiver, radar, IMU, and ADS-B receiver) interfaces through dedicated modules performing precise time-stamping and preliminary data preprocessing. For high-bandwidth sensors (e.g., LiDAR, radar, and cameras), front-end processing such as filtering, downsampling, and region-of-interest extraction occurs via FPGA or specialized DSP hardware to effectively reduce data throughput. All sensor inputs are synchronized using a common timing source, typically disciplined by GNSS, ensuring synchronization accuracy within milliseconds.

Main Fusion Processor: This central computational unit executes the Transformer fusion model and related algorithms. We assume a Commercial-Off-The-Shelf (COTS) processor providing robust certification artifacts, such as the Mercury ROCK3 (Intel i7 with integrated GPU) or a comparable certifiable computing system. A Real-Time Operating System (RTOS), compliant with ARINC 653 standards or equivalent robust partitioning schemes (e.g., Wind River VxWorks 653 or seL4 separation kernel), manages computational tasks. Separate RTOS partitions independently run the Transformer-based sensor fusion model (leveraging onboard GPU via CUDA or FPGA acceleration), the Safety Monitor subsystem, and additional critical tasks such as flight control and communication. Employing a CAST-32A compliant RTOS ensures isolation and interference-free multi-core operations. Note that this hardware configuration is currently proposed and not yet implemented in real-world testing. Performance evaluation is based on simulated constraints.

Acceleration Hardware: Computational acceleration for neural-network inference may leverage Field-Programmable Gate Arrays (FPGAs) or integrated GPUs. FPGA implementation, favored due to deterministic timing, typically utilizes fixed-point arithmetic for matrix multiplication operations. Recent developments in GPU certification, especially when treated as complex COTS devices with safety-monitoring wrappers, also enable GPU usage. These acceleration mechanisms significantly reduce inference latency, crucial for real-time operation.

Safety Monitor & Redundant Computer: Critical avionics applications meeting Design Assurance Level (DAL) A necessitate redundancy. Hence, our architecture incorporates a parallel redundant computing channel executing simplified algorithms, such as an Extended Kalman Filter (EKF), independently on isolated hardware. Continuous cross-verification by comparators or voters assesses consistency between primary (Transformer fusion) and secondary (simplified redundancy) computational outputs. Redundant configurations also extend to critical sensor suites (e.g., dual IMUs, multiple cameras positioned differently) to mitigate single-point failures.

Communications and Outputs: Processed state estimation and environment mapping outputs are disseminated to downstream flight-control, navigation, and decision-making algorithms, which are partitioned by time-critical scheduling. Communication to actuators, controllers, and potentially other vehicles employs reliable avionics communication protocols (e.g., ARINC-429, CAN bus, or Ethernet with Time-Sensitive Networking (TSN)). Additionally, data-sharing mechanisms such as Vehicle-to-Vehicle (V2V) and Vehicle-to-Everything (V2X) communication protocols enable cooperative awareness within urban airspace.
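The time-stamping and synchronization performed by the Sensors and Interface Subsystem can be illustrated with a nearest-sample alignment against a common reference time. This is a sketch under assumptions: the function names, the 5 ms default tolerance, and the use of sorted per-sensor timestamp lists are illustrative choices, not details of the proposed hardware.

```python
from bisect import bisect_left


def nearest_sample(timestamps, t_ref, tolerance_s):
    """Index of the sample closest to t_ref, or None if none is within tolerance.

    timestamps must be sorted in ascending order.
    """
    i = bisect_left(timestamps, t_ref)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps)]
    if not candidates:
        return None
    best = min(candidates, key=lambda j: abs(timestamps[j] - t_ref))
    return best if abs(timestamps[best] - t_ref) <= tolerance_s else None


def align_frame(streams, t_ref, tolerance_s=0.005):
    """Pick, for each sensor stream, the sample to fuse at reference time t_ref.

    streams maps a sensor name to its sorted list of sample timestamps (seconds).
    A value of None marks a sensor with no sufficiently fresh sample.
    """
    return {name: nearest_sample(ts, t_ref, tolerance_s) for name, ts in streams.items()}


streams = {
    "imu": [0.000, 0.005, 0.010, 0.015, 0.020],
    "lidar": [0.0, 0.1],
    "gnss": [],
}
aligned = align_frame(streams, t_ref=0.011)
```

In this example the IMU sample at 0.010 s is selected, while LiDAR and GNSS are reported as missing for this frame, so downstream encoding can omit those tokens instead of fusing stale data.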

3.8. Hardware Certifiability Considerations

Ensuring compliance with DO-254 guidelines, all hardware elements within the architecture adhere to rigorous, traceable development cycles comprising:

Requirements Definition: Clearly documented functional, performance, and safety requirements for each hardware component.

Design and Implementation: Implementation via Hardware Description Languages (HDLs) for FPGAs or structured circuit-board designs for CPUs.

Verification and Validation: Extensive validation using verification methods, such as property checking and formal analysis of FPGA logic to confirm operational determinism and safety compliance.

Leveraging certifiable COTS components, such as the Mercury ROCK3 mission computer, which includes comprehensive certification artifacts and evidence of testing up to DAL-A, significantly streamlines the certification process. Custom FPGA acceleration logic is intentionally simplified and rigorously verified to ensure compliance with certification objectives.
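The fixed-point arithmetic used for FPGA matrix operations lends itself to this kind of verification because integer multiply-accumulate is bit-exact. The sketch below illustrates the idea with a dot product; the Q3.12 format in a signed 16-bit word, the saturation policy, and the function names are our assumptions, not the format used in the proposed design.

```python
FRAC_BITS = 12                 # assumed Q3.12 format in a signed 16-bit word
SCALE = 1 << FRAC_BITS
INT16_MIN, INT16_MAX = -(1 << 15), (1 << 15) - 1


def to_fixed(x):
    """Quantize a float to a saturated 16-bit fixed-point integer."""
    return max(INT16_MIN, min(INT16_MAX, round(x * SCALE)))


def fixed_dot(a_fixed, b_fixed):
    """Integer multiply-accumulate, rescaled to a float once at the end.

    Python integers do not overflow, which models a sufficiently wide
    hardware accumulator. Integer addition is exact, so the result does
    not depend on the order of accumulation.
    """
    acc = 0
    for a, b in zip(a_fixed, b_fixed):
        acc += a * b           # each product carries 2 * FRAC_BITS fractional bits
    return acc / (SCALE * SCALE)


def fixed_matvec(matrix, vector):
    """Matrix-vector product with every entry quantized to fixed point first."""
    v = [to_fixed(x) for x in vector]
    return [fixed_dot([to_fixed(x) for x in row], v) for row in matrix]


result = fixed_matvec([[0.5, -1.25, 2.0]], [1.0, 0.25, -0.75])
```

The inputs here are exactly representable in Q3.12, so the result equals the real-valued dot product; in general each operand carries a quantization error of at most half a least-significant bit, and values outside the representable range saturate rather than wrap.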

3.9. Software Certifiability Considerations

Software elements follow stringent DO-178C standards to guarantee certifiable, deterministic, and traceable behavior:

Certification Planning and Documentation: Essential documents such as the Plan for Software Aspects of Certification (PSAC), detailed software requirements specification, design documents, and rigorous test cases ensure complete transparency and traceability of development processes.

Network Verification: Given the Transformer-based fusion model's learned nature, explicit verification and review are critical. We document the neural network as static, deterministic code, presenting equivalent fixed algorithms, equations, or network descriptions to regulatory bodies. The neural network is extensively tested as a black-box entity, with exhaustive inputs validating deterministic outputs, complying with structural and functional coverage criteria.

Tool Qualification (DO-330 Compliance): While the neural network training utilizes industry-standard machine learning tools (e.g., PyTorch), these training environments are generally not certifiable directly. Therefore, we freeze the trained neural network, export it as static inference code, and rigorously validate it against clearly defined requirements. Qualification of neural-network code-generation tools under DO-330 standards or employing thorough black-box validation using deterministic testing scenarios ensures regulatory confidence.

Testing and Validation: Comprehensive validation through simulation-based and scenario-driven testing confirms the network’s robustness, deterministic behavior, and fault-tolerance. Specific scenarios, including sensor failures, anomalies, and adversarial inputs, validate the safety and reliability of the fusion architecture, satisfying certification requirements.

This structured software certification strategy ensures compliance with aviation regulatory bodies' expectations, facilitating acceptance of advanced, AI-based avionics solutions for critical UAM operations.