Submitted: 02 November 2025

Posted: 13 November 2025


Abstract

Keywords:

1. Introduction

1.1. Schrödinger’s Question

- ‘events’: Unlike non-living matter, living matter is dynamic and changes autonomously according to its internal laws. We must therefore think about it differently, including how we form hypotheses and test them in laboratories (and with computing methods). Processes, and not only jumps, occur within it, and we can observe some of their characteristic points.

- ‘space and time’: Those characteristic points mark significant changes resulting from processes whose material carriers change position at finite speed, so (unlike in classical science) events have a ‘time’ attribute in addition to their ‘position’. In biology, slow ion currents implement this spatiotemporal behavior. In other words, we must sometimes consider ‘periods’ instead of ‘moments’, and, for the sake of mathematical description, we model slow processes by closely matching ‘instant’ processes.

- ‘living organism’: To describe its dynamic behavior, we must introduce a dynamic description.

- ‘within the spatial boundary’: Laws of physics are usually derived for stand-alone systems. The system considered is assumed to be infinitely far from the rest of the world, and the changes we observe are assumed not to change the external world significantly, so the external world's idealized disturbing effect does not change either. In biology, we must consider changing resources.

- ‘accounted for by physics’ [by extraordinary laws]: We are accustomed to abstracting and testing a static attribute, and we derive the ‘ordinary’ laws of motion for the ‘net’ interactions. In physiology, nature prevents us from testing ‘net’ interactions. We must understand that some interactions are non-separable, and we must derive ‘non-ordinary’ laws [4,5]. The forces are not unknown, but the known ‘ordinary’ laws of motion of physics describe single-speed interactions.

- ‘yet tested in the physical laboratory’ [including physiological ones]: We need to test those ‘constructions’ in laboratories, in their actual environment, and in their ‘working state’. As we did with non-living matter, we need to develop and gradually refine the testing methods and the hypotheses. Moreover, we must not forget that our methods refer to ‘states’, whereas this time we test ‘processes’. We therefore need slightly different algorithms, not only for measuring processes but also for handling them computationally.

1.2. Notion and Time of Event

1.3. Time

- biological time: the time that physiologists record when observing biologically meaningful events

- simulated time: a logical time (a biologically faithful time scale) maintained by a user-level scheduler. The simulated biological events are scheduled to happen exactly at their true biological time, independently of the computing facilities

- processor time: the time that the processor spends on the simulation task (depends on instruction time and processor speed)

- wall-clock time: the time that the programmer records when the computation reaches code parts that simulate biologically meaningful events

- non-payload time: the time that the HW/SW parts of the system spend on necessary but not directly task-related activities (depends on system load, task type, and architecture)

- time step (or grid time): a global time step on the wall-clock time scale in parallelized computations, where different threads/processors wait for each other’s results

- heartbeat time: a per-object and per-stage time step on the simulated time scale, at which the values of the simulated variables of a process are calculated

- time resolution: the period on the simulated time scale within which the exact time makes no significant difference (the simulator digitizes the continuous time)

- quasi-biological time: a time scale that assumes a linear dependence between the wall-clock time (or the processor time) and the simulated time; used by non-time-aware simulations
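To make the distinction between these time scales concrete, the following is a minimal sketch of a user-level scheduler that keeps simulated time separate from wall-clock time. It is an illustration under stated assumptions, not the simulator's actual implementation: the class `SimulatedClock`, the helper `make_heartbeat`, and all numeric values are hypothetical. Simulated time is stored as an integer number of time-resolution ticks, so the continuous time is digitized, and each object reschedules its own heartbeat on the simulated time scale.

```python
import heapq
import itertools
import time


class SimulatedClock:
    """Hypothetical user-level scheduler; simulated time is counted in ticks."""

    def __init__(self, resolution=1e-6):
        self.resolution = resolution       # time resolution in simulated seconds
        self.ticks = 0                     # current simulated time, in ticks
        self._queue = []                   # heap of (tick, sequence, callback)
        self._seq = itertools.count()      # tie-breaker for simultaneous events

    @property
    def now(self):
        """Current simulated time in seconds."""
        return self.ticks * self.resolution

    def to_ticks(self, seconds):
        """Digitize a continuous time span to the time resolution."""
        return round(seconds / self.resolution)

    def schedule(self, delay, callback):
        """Schedule callback `delay` simulated seconds after the current time."""
        when = self.ticks + self.to_ticks(delay)
        heapq.heappush(self._queue, (when, next(self._seq), callback))

    def run(self, until):
        """Process events in simulated-time order; return elapsed wall-clock time."""
        limit = self.to_ticks(until)
        start = time.perf_counter()
        while self._queue and self._queue[0][0] <= limit:
            when, _, callback = heapq.heappop(self._queue)
            self.ticks = when              # simulated time jumps to the event
            callback(self)
        self.ticks = limit
        return time.perf_counter() - start


def make_heartbeat(name, period, log):
    """Per-object heartbeat: record the tick, then reschedule itself."""
    def beat(clock):
        log.append((name, clock.ticks))
        clock.schedule(period, beat)
    return beat


log = []
clock = SimulatedClock(resolution=1e-6)
clock.schedule(0.0, make_heartbeat("fast", 1e-3, log))    # 1 ms heartbeat
clock.schedule(0.0, make_heartbeat("slow", 2.5e-3, log))  # 2.5 ms heartbeat
wall = clock.run(until=5e-3)
```

In this sketch, the recorded ticks depend only on the simulated time scale, while `wall` depends on the machine that runs the code. A quasi-biological time scale would instead derive the simulated time from `wall` by a linear factor.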

1.3.1. Time in Technical Computing

1.3.2. Time Scales

Algorithm 1. The basic clock-driven algorithm [53], Figure 1.
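As an illustration of the clock-driven scheme named in Algorithm 1, the following is a minimal sketch in the spirit of the basic algorithm reviewed in [53]. The neuron model (a leaky integrate-and-fire unit that relaxes toward a constant drive), the function name `simulate_clock_driven`, and all parameter values are assumptions made for this example; they are not taken from the algorithm listing itself.

```python
import math


def simulate_clock_driven(weights, drive, duration, dt=1e-4,
                          tau=20e-3, threshold=1.0, v_reset=0.0):
    """Clock-driven simulation of leaky integrate-and-fire neurons (sketch).

    weights[i][j] is the jump added to neuron j's potential when neuron i
    spikes; drive[i] is the value neuron i's potential relaxes toward.
    Returns a list of (time, neuron_index) spikes on the time grid.
    """
    n = len(drive)
    v = [0.0] * n
    pending = [0.0] * n              # synaptic input delivered at the next step
    decay = math.exp(-dt / tau)      # exact relaxation factor over one step
    spikes = []
    steps = round(duration / dt)
    for step in range(1, steps + 1):
        t = step * dt
        # Phase 1: deliver incoming spikes and advance every neuron by dt.
        for i in range(n):
            v[i] = drive[i] + (v[i] - drive[i]) * decay + pending[i]
            pending[i] = 0.0
        # Phase 2: detect threshold crossings, reset, and send spikes.
        for i in range(n):
            if v[i] >= threshold:
                spikes.append((t, i))
                v[i] = v_reset
                for j in range(n):
                    pending[j] += weights[i][j]
    return spikes


# One driven neuron: it can only spike at multiples of the grid time dt.
single = simulate_clock_driven([[0.0]], [2.0], duration=0.05)

# Neuron 0 is driven; neuron 1 only receives spikes from neuron 0.
pair = simulate_clock_driven([[0.0, 1.5], [0.0, 0.0]], [2.0, 0.0],
                             duration=0.05)
```

Every spike time in this sketch is a multiple of `dt`, and a spike sent in one step is delivered in the next, so the grid time bounds the temporal precision of the whole network.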

1.3.3. Aligning the Time Scales

1.3.4. Time Resolution

1.3.5. Time Stamping

1.3.6. Simulating Time

2. Spiking and Information

2.1. Information Coding

2.2. Spiking

2.3. Neuronal Learning

2.4. Information Density

3. Technical Aspects

3.1. Neural Connectivity

Algorithm 2. The basic event-driven algorithm with instantaneous synaptic interactions [53], Figure 2.

Algorithm 3. The basic event-driven algorithm with non-instantaneous synaptic interactions [53], Figure 3.
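For comparison with the clock-driven scheme, the following is a minimal sketch of an event-driven loop with instantaneous synaptic interactions, in the spirit of the basic algorithm reviewed in [53]. The neuron model (pure exponential decay between events), the connection format, the function name `simulate_event_driven`, and all numeric values are assumptions made for this example.

```python
import heapq
import math


def simulate_event_driven(targets, initial_events, duration,
                          tau=20e-3, threshold=1.0, v_reset=0.0):
    """Event-driven simulation with a single event queue (sketch).

    targets[i] lists (j, weight, delay) for every connection i -> j;
    initial_events lists (time, target, weight) external inputs.
    Delays are assumed positive, so the loop ends when `duration` is reached.
    Returns a list of (time, neuron_index) spikes.
    """
    n = len(targets)
    v = [0.0] * n                    # membrane potential at the last update
    last = [0.0] * n                 # time of the last update of each neuron
    queue = list(initial_events)
    heapq.heapify(queue)             # events ordered by their time stamp
    spikes = []
    while queue and queue[0][0] <= duration:
        t, i, w = heapq.heappop(queue)
        # Bring neuron i from its last update time to t (exact decay).
        v[i] *= math.exp(-(t - last[i]) / tau)
        last[i] = t
        v[i] += w                    # instantaneous synaptic interaction
        if v[i] >= threshold:
            spikes.append((t, i))
            v[i] = v_reset           # reset after the spike
            for j, weight, delay in targets[i]:
                heapq.heappush(queue, (t + delay, j, weight))
    return spikes


# Neuron 0 needs two inputs to fire; its spike reaches neuron 1 after 2 ms.
targets = [[(1, 1.2, 2e-3)], []]
events = [(1e-3, 0, 0.6), (2e-3, 0, 0.6)]
spikes = simulate_event_driven(targets, events, duration=10e-3)
```

Here a neuron is updated only when an event addresses it, and spike times are not restricted to a grid. The sketch also shows the two design features discussed for these algorithms: the potential is reset after each spike, and one global queue serializes all events.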

3.1.1. Queue Handling

- the latter two algorithms contain a deadlock: every neuron waits for the others to compute its inputs (or works with values calculated in previous cycles, mixing "this" and "previous" values)

- after a spike initiation is processed, the membrane potential is reset, which excludes the important role of local neuronal memory (and also of learning)

- the algorithms are optimized for single-thread processing because they use a single event queue

3.1.2. Limiting Computing Time

3.1.3. Sharing Processing Units

3.1.4. Pruning Connections

3.2. Hardware/Software Limitations

4. Biological Computing

4.1. Conceptual Operation

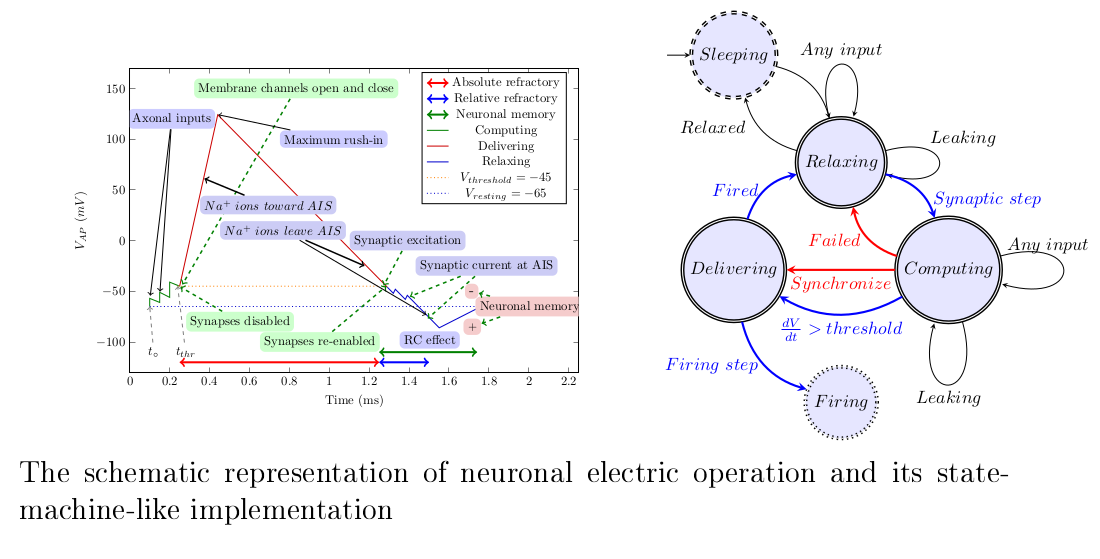

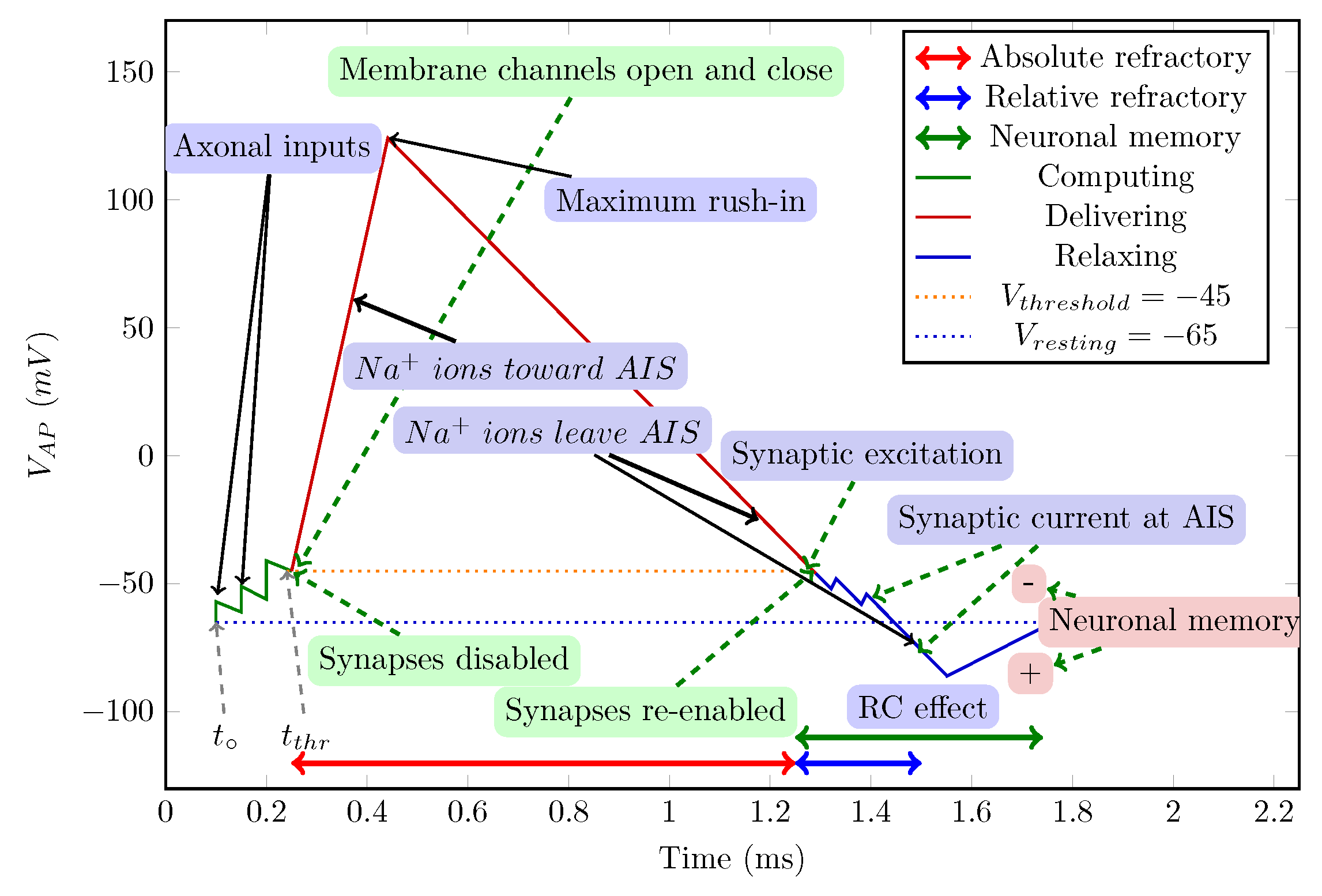

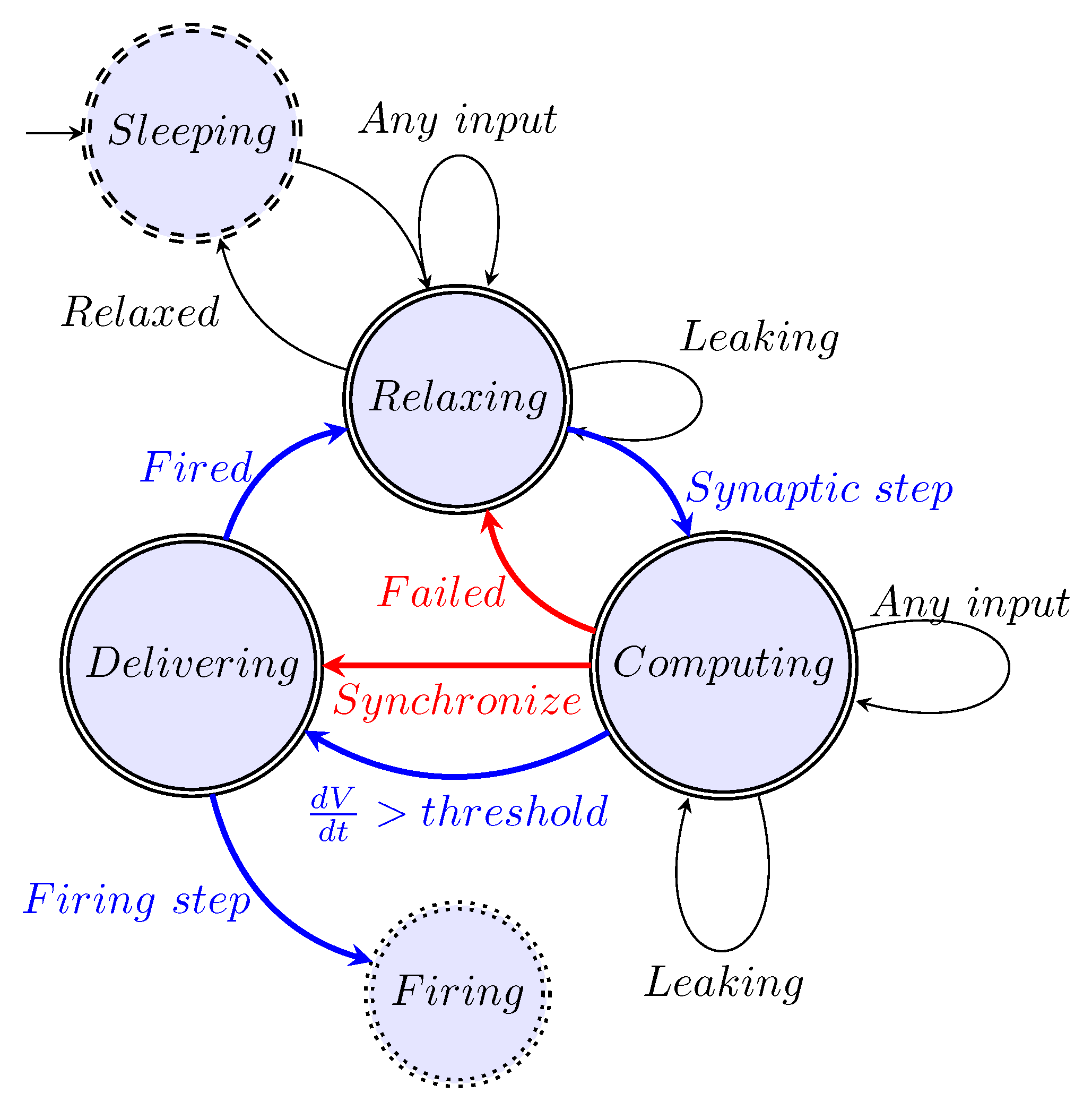

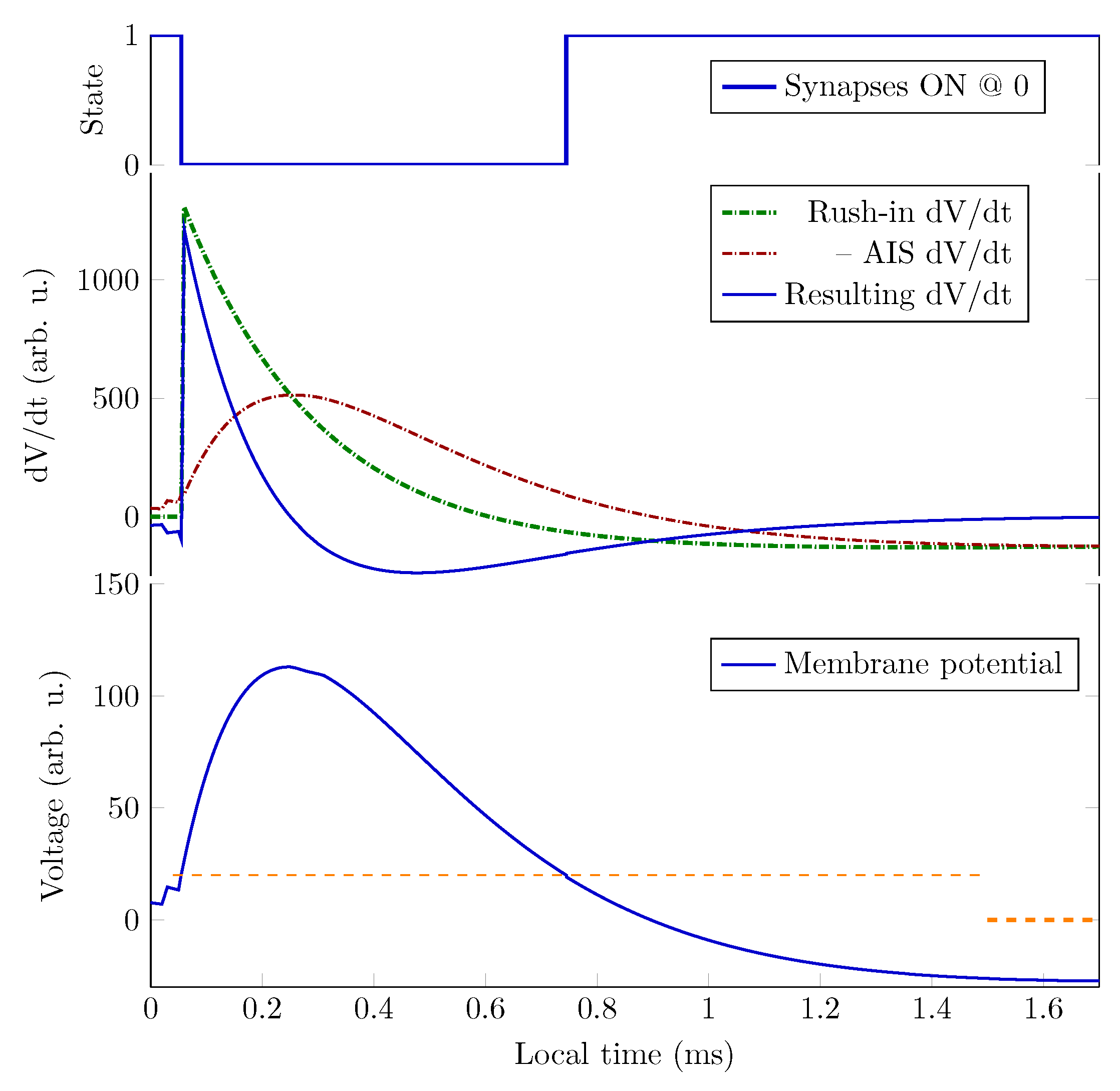

4.2. Stage Machine

4.2.1. Stage ‘Relaxing’

4.2.2. Stage ‘Computing’

4.2.3. Stage ‘Delivering’

4.2.4. Extra Stages

4.2.5. Synaptic Control

4.2.6. Timed Cooperation of Neurons

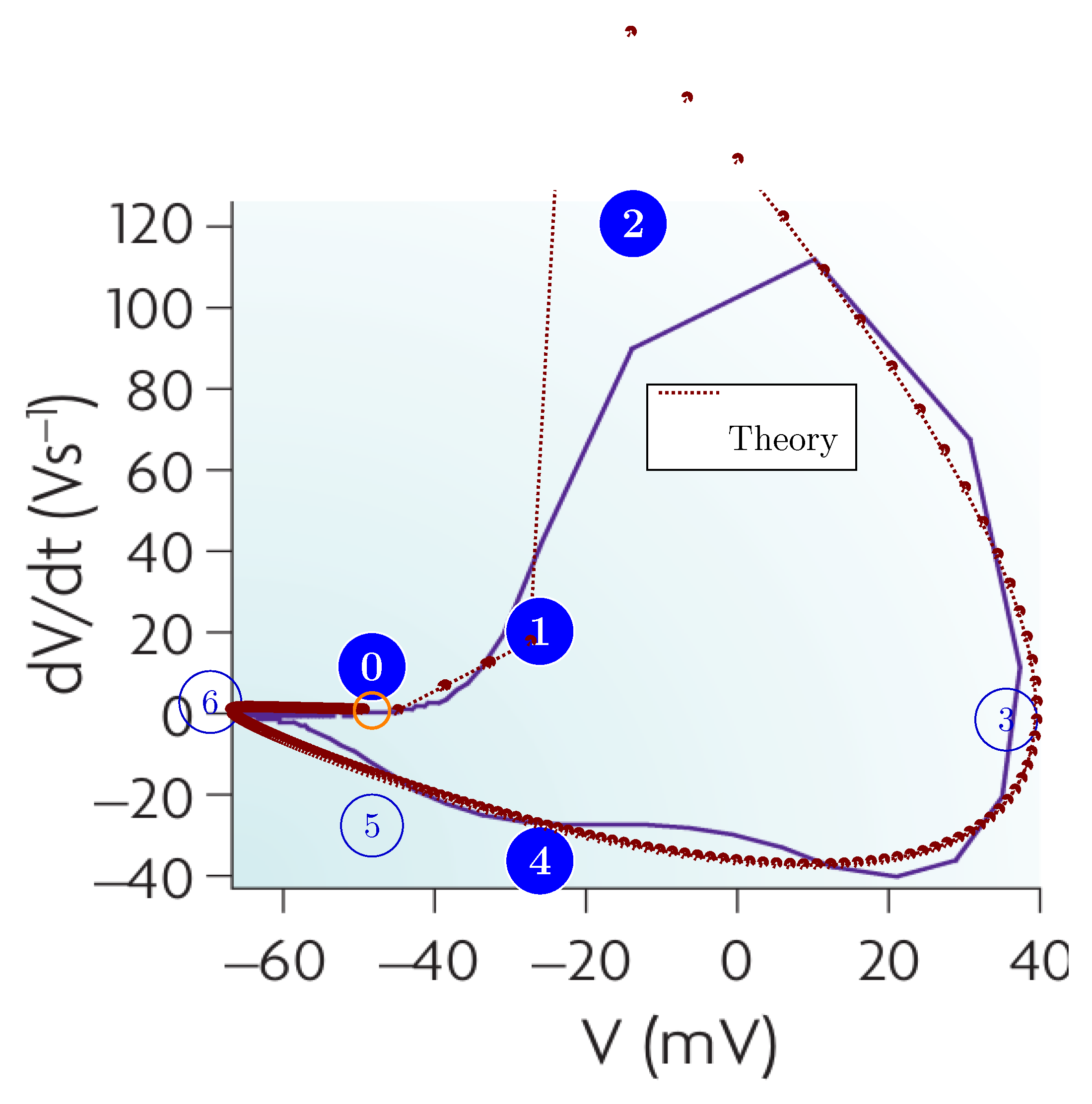

4.3. Classic Stages

4.4. Mathematics of Spiking

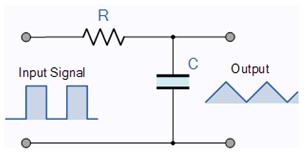

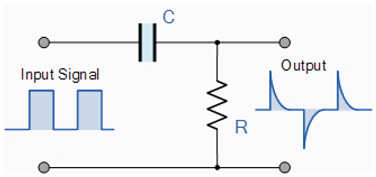

| The RC Integrator | The RC Differentiator |
| --- | --- |
| Low Pass Filter | High Pass Filter |
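The behavior of the two circuits named in the table can be illustrated with a short numerical sketch. It assumes ideal first-order RC circuits discretized with a backward-Euler step; the function names `rc_low_pass` and `rc_high_pass`, the time constant, and the step input are assumptions made for this example and do not reproduce the paper's own equations.

```python
def rc_low_pass(x, dt, rc):
    """RC integrator (low-pass filter): the output follows the input slowly."""
    alpha = dt / (rc + dt)
    y, out = 0.0, []
    for sample in x:
        y += alpha * (sample - y)    # relax toward the current input
        out.append(y)
    return out


def rc_high_pass(x, dt, rc):
    """RC differentiator (high-pass filter): the output follows input changes."""
    a = rc / (rc + dt)
    y, prev, out = 0.0, 0.0, []      # assumes zero input before the first sample
    for sample in x:
        y = a * (y + sample - prev)  # pass the change, let the rest decay
        prev = sample
        out.append(y)
    return out


# Unit step lasting 20 time constants (rc = 1 ms, dt = 10 us).
dt, rc = 1e-5, 1e-3
step = [1.0] * 2000
low = rc_low_pass(step, dt, rc)
high = rc_high_pass(step, dt, rc)
```

For the step input, `low` rises gradually toward the input level, whereas `high` jumps at the onset and then decays toward zero, so the integrator responds to the sustained level and the differentiator to the change.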

4.5. Algorithm for the Operation

Algorithm 4. The main computation.

Algorithm 5. The heartbeat computation.

4.6. Operating Diagrams

4.7. Implications of the Model and the Algorithm

5. Summary

References

- Human Brain Project. New HBP-book: A user’s guide to the tools of digital neuroscience, 2023.

- Bell, G.; Bailey, D.H.; Dongarra, J.; Karp, A.H.; Walsh, K. A look back on 30 years of the Gordon Bell Prize. The International Journal of High Performance Computing Applications 2017, 31, 469–484.

- Ngai, J. BRAIN @ 10: A decade of innovation. Neuron 2024, 112. [CrossRef]

- Schrödinger, E., Is life based on the laws of physics? In What is Life?: With Mind and Matter and Autobiographical Sketches; Cambridge University Press: Canto, 1992; pp. 76–85.

- Végh, J. The non-ordinary laws of physics describing life. European Biophysics Journal 2025, 1, in review.

- Végh, J.; Berki, Á.J. Towards generalizing the information theory for neural communication. Entropy 2022, 24, 1086. [CrossRef]

- Drukarch, B.; Wilhelmus, M. Thinking about the nerve impulse: A critical analysis of the electricity-centered conception of nerve excitability. Progress in Neurobiology 2018, 169, 172–185. [CrossRef]

- Drukarch, B.; Wilhelmus, M. Thinking about the action potential: the nerve signal as a window to the physical principles guiding neuronal excitability. Frontiers in Cellular Neuroscience 2023, 17. [CrossRef]

- Feynman, R.P. Feynman Lectures on Computation; CRC Press, 2018.

- Végh, J. On Implementing Technomorph Biology for Inefficient Computing. Applied Sciences 2025, 15. [CrossRef]

- von Neumann, John. The Computer and the Brain; New Haven, Yale University Press, 1958.

- Abbott, L.; Sejnowski, T.J. Neural Codes and Distributed Representations; Cambridge, MA: MIT Press, 1999.

- Chu, D.; Prokopenko, M.; Ray, J.C. Computation by natural systems. Interface Focus 2018, 8. Accessed: 2025-03-20. [CrossRef]

- Végh, J. Revising the Classic Computing Paradigm and Its Technological Implementations. Informatics 2021, 8. [CrossRef]

- Mehonic, A.; Kenyon, A.J. Brain-inspired computing needs a master plan. Nature 2022, 604, 255–260. [CrossRef]

- Markovic, D.; Mizrahi, A.; Querlioz, D.; Grollier, J. Physics for neuromorphic computing. Nature Reviews Physics 2020, 2, 499–510.

- Johnston, D.; Wu, S.M.S. Foundations of Cellular Neurophysiology; Massachusetts Institute of Technology: Cambridge, Massachusetts and London, England, 1995.

- Kandel, E.R.; Schwartz, J.H.; Jessell, T.M.; Siegelbaum, S.A.; Hudspeth, A.J. Principles of Neural Science, 5 ed.; The McGraw-Hill Medical: New York Chicago etc., 2013.

- Hebb, D. The Organization of Behavior; New York: Wiley and Sons, 1949.

- Caporale, N.; Dan, Y. Spike Timing–Dependent Plasticity: A Hebbian Learning Rule. Annual Review of Neuroscience 2008, 31, 25–46. PMID: 18275283. [CrossRef]

- MacKay, D.M.; McCulloch, W.S. The limiting information capacity of a neuronal link. The bulletin of mathematical biophysics 1952, 14, 127–135.

- Sejnowski, T.J. The Computer and the Brain Revisited. Annals Hist Comput 1989, pp. 197–201.

- von Neumann, J. First draft of a report on the EDVAC. IEEE Annals of the History of Computing 1993, 15, 27–75. [CrossRef]

- Nature. Documentary follows implosion of billion-euro brain project. Nature 2020, 588, 215–216. [CrossRef]

- Human Brain Project. A closer look at scientific advances, 2023.

- Nemenman, I.; Lewen, G.D.; Bialek, W.; de Ruyter van Steveninck, R.R. Neural Coding of Natural Stimuli: Information at Sub-Millisecond Resolution. PLOS Computational Biology 2008, 4, 1–12. [CrossRef]

- Backus, J. Can Programming Languages Be liberated from the von Neumann Style? A Functional Style and its Algebra of Programs. Communications of the ACM 1978, 21, 613–641.

- Black, C.D.; Donovan, J.; Bunton, B.; Keist, A. SystemC: From the Ground Up, second ed.; Springer: New York, 2010.

- Végh, J. Which scaling rule applies to Artificial Neural Networks. Neural Computing and Applications 2021, 33, 16847–16864. [CrossRef]

- Végh, J. Finally, how many efficiencies the supercomputers have? The Journal of Supercomputing 2020, 76, 9430–9455, regularly updated at https://arxiv.org/abs/2001.01266.

- Végh, J. How Amdahl’s Law limits performance of large artificial neural networks. Brain Informatics 2019, 6, 1–11. [CrossRef]

- D’Angelo, G.; Rampone, S. Towards a HPC-oriented parallel implementation of a learning algorithm for bioinformatics applications. BMC Bioinformatics 2014, 15.

- D’Angelo, G.; Palmieri, F. Network traffic classification using deep convolutional recurrent autoencoder neural networks for spatial–temporal features extraction. Journal of Network and Computer Applications 2021, 173, 102890. [CrossRef]

- hpcwire.com. TOP500: Exascale Is Officially Here with Debut of Frontier. https://www.hpcwire.com/2022/05/30/top500-exascale-is-officially-here-with-debut-of-frontier/ , 2022. Accessed: 2023-09-10.

- van Albada, S.J.; Rowley, A.G.; Senk, J.; Hopkins, M.; Schmidt, M.; Stokes, A.B.; Lester, D.R.; Diesmann, M.; Furber, S.B. Performance Comparison of the Digital Neuromorphic Hardware SpiNNaker and the Neural Network Simulation Software NEST for a Full-Scale Cortical Microcircuit Model. Frontiers in Neuroscience 2018, 12, 291.

- de Macedo Mourelle, L.; Nedjah, N.; Pessanha, F.G., Reconfigurable and Adaptive Computing: Theory and Applications; CRC press, 2016; chapter 5: Interprocess Communication via Crossbar for Shared Memory Systems-on-chip. [CrossRef]

- IEEE/Accellera. Systems initiative. http://www.accellera.org/downloads/standards/systemc, 2017.

- Brette, R. Is coding a relevant metaphor for the brain? The Behavioral and brain sciences 2018, 42, e215. [CrossRef]

- Somjen, G. Sensory Coding in the Mammalian Nervous System; New York, Meredith Corporation, 1972. [CrossRef]

- Shannon, C.E. A mathematical theory of communication. The Bell System Technical Journal 1948, 27, 379–423. [CrossRef]

- Shannon, C.E. The Bandwagon. IRE Transactions in Information Theory 1956, 2, 3.

- Nizami, L. Information theory is abused in neuroscience. Cybernetics & Human Knowing 2019, 26, 47–97.

- Young, A.R.; Dean, M.E.; Plank, J.S.; S. Rose, G. A Review of Spiking Neuromorphic Hardware Communication Systems. IEEE Access 2019, 7, 135606–135620. [CrossRef]

- Moradi, S.; Manohar, R. The impact of on-chip communication on memory technologies for neuromorphic systems. Journal of Physics D: Applied Physics 2018, 52, 014003.

- Stone, J.V. Principles of Neural Information Theory; Sebtel Press, Sheffield, UK, 2018.

- Antle, M.C.; Silver, R. Orchestrating time: arrangements of the brain circadian clock. Trends Neurosci. 2005, 28, 145–151.

- LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444. [CrossRef]

- de Ruyter van Steveninck, R.R.; Lewen, G.D.; Strong, S.P.; Koberle, R.; Bialek, W. Reproducibility and Variability in Neural Spike Trains. Science 1997, 275, 1805–1808. [CrossRef]

- Sengupta, B.; Laughlin, S.; Niven, J. Consequences of Converting Graded to Action Potentials upon Neural Information Coding and Energy Efficiency. PLoS Comput Biol 2014, 1. [CrossRef]

- Strong, S.P.; Koberle, R.; de Ruyter van Steveninck, R.R.; Bialek, W. Entropy and Information in Neural Spike Trains. Phys. Rev. Lett. 1998, 80, 197–200. [CrossRef]

- Shepherd, G.M., Ed. The Synaptic Organization of the Brain, 5 ed.; Oxford Academic, New York, 2005.

- Berger, T.; Levy, W.B. A Mathematical Theory of Energy Efficient Neural Computation and Communication. IEEE Transactions on Information Theory 2010, 56, 852–874. [CrossRef]

- Brette, R. Simulation of networks of spiking neurons: a review of tools and strategies. J Comput. Neurosci. 2007, 23, 349–398. [CrossRef]

- Keuper, J.; Pfreundt, F.J. Distributed Training of Deep Neural Networks: Theoretical and Practical Limits of Parallel Scalability. In Proceedings of the 2nd Workshop on Machine Learning in HPC Environments (MLHPC). IEEE, 2016, pp. 1469–1476. [CrossRef]

- Tsafrir, D. The Context-switch Overhead Inflicted by Hardware Interrupts (and the Enigma of Do-nothing Loops). In Proceedings of the 2007 Workshop on Experimental Computer Science, San Diego, California, New York, NY, USA, 2007; ExpCS ’07, pp. 3–3.

- David, F.M.; Carlyle, J.C.; Campbell, R.H. Context Switch Overheads for Linux on ARM Platforms. In Proceedings of the 2007 Workshop on Experimental Computer Science, San Diego, California, New York, NY, USA, 2007; ExpCS ’07. [CrossRef]

- Bengio, E.; Bacon, P.L.; Pineau, J.; Precup, D. Conditional Computation in Neural Networks for faster models. https://arxiv.org/pdf/1511.06297, 2016, [arXiv:cs.LG/1511.06297]. Accessed: 2023-08-30.

- Xie, S.; Sun, C.; Huang, J.; Tu, Z.; Murphy, K. Rethinking Spatiotemporal Feature Learning: Speed-Accuracy Trade-offs in Video Classification. In Proceedings of the Computer Vision – ECCV 2018; Ferrari, V.; Hebert, M.; Sminchisescu, C.; Weiss, Y., Eds., Cham, 2018; pp. 318–335.

- Xu, K.; Qin, M.; Sun, F.; Wang, Y.; Chen, Y.K.; Ren, F. Learning in the Frequency Domain. https://arxiv.org/abs/2002.12416, 2020. Accessed: 2025-02-08. [CrossRef]

- Kunkel, S.; Schmidt, M.; Eppler, J.M.; Plesser, H.E.; Masumoto, G.; Igarashi, J.; Ishii, S.; Fukai, T.; Morrison, A.; Diesmann, M.; et al. Spiking network simulation code for petascale computers. Frontiers in Neuroinformatics 2014, 8, 78. [CrossRef]

- Singh, J.P.; Hennessy, J.L.; Gupta, A. Scaling Parallel Programs for Multiprocessors: Methodology and Examples. Computer 1993, 26, 42–50. [CrossRef]

- Lowel, S.; Singer, W. Selection of intrinsic horizontal connections in the visual cortex by correlated neuronal activity. Science 1992, 255, 209–212, [https://science.sciencemag.org/content/255/5041/209.full.pdf]. [CrossRef]

- Iranmehr, E.; Shouraki, S.B.; Faraji, M.M.; Bagheri, N.; Linares-Barranco, B. Bio-Inspired Evolutionary Model of Spiking Neural Networks in Ionic Liquid Space. Frontiers in Neuroscience 2019, 13, 1085. [CrossRef]

- Furber, S.B.; Lester, D.R.; Plana, L.A.; Garside, J.D.; Painkras, E.; Temple, S.; Brown, A.D. Overview of the SpiNNaker System Architecture. IEEE Transactions on Computers 2013, 62, 2454–2467.

- Ousterhout, J.K. Why Aren’t Operating Systems Getting Faster As Fast As Hardware? http://www.stanford.edu/~ouster/cgi-bin/papers/osfaster.pdf, 1990. Accessed: 2023-09-10.

- Kendall, J.D.; Kumar, S. The building blocks of a brain-inspired computer. Appl. Phys. Rev. 2020, 7, 011305. [CrossRef]

- TOP500. Top500 list of supercomputers. https://www.top500.org/lists/top500/ (Accessed on Oct 24, 2021), 2021.

- Han, S.; Pool, J.; Tran, J.; Dally, W.J. Learning both Weights and Connections for Efficient Neural Networks. https://arxiv.org/pdf/1506.02626.pdf, 2015.

- Liu, C.; Bellec, G.; Vogginger, B.; Kappel, D.; Partzsch, J.; Neumärker, F.; Höppner, S.; Maass, W.; Furber, S.B.; Legenstein, R.; et al. Memory-Efficient Deep Learning on a SpiNNaker 2 Prototype. Frontiers in Neuroscience 2018, 12, 840. [CrossRef]

- Johnson, D.H., Information theory and neuroscience: Why is the intersection so small? In 2008 IEEE Information Theory Workshop; IEEE, 2008; pp. 104–108. [CrossRef]

- Leterrier, C. The Axon Initial Segment: An Updated Viewpoint. Journal of Neuroscience 2018, 38, 2135–2145. [CrossRef]

- Buzsáki, G. Neural syntax: cell assemblies, synapsembles, and readers. Neuron 2010, 68, 362–85. [CrossRef]

- Levenstein, D.; Girardeau, G.; Gornet, J.; Grosmark, A.; Huszar, R.; Peyrache, A.; Senzai, Y.; Watson, B.; Rinzel, J.; Buzsáki, G. Distinct ground state and activated state modes of spiking in forebrain neurons. bioRxiv 2021.

- Hodgkin, A.L.; Huxley, A.F. A quantitative description of membrane current and its application to conduction and excitation in nerve. J. Physiol. 1952, 117, 500–544.

- Tschanz, J.W.; Narendra, S.; Ye, Y.; Bloechel, B.; Borkar, S.; De, V. Dynamic sleep transistor and body bias for active leakage power control of microprocessors. IEEE Journal of Solid State Circuits 2003, 38, 1838 – 1845.

- Susi, G.; Garcés, P.; Paracone, E.; Cristini, A.; Salerno, M.; Maestú, F.; Pereda, E. FNS allows efficient event-driven spiking neural network simulations based on a neuron model supporting spike latency. Nature Scientific Reports 2021, 11. [CrossRef]

- Onen, M.; Emond, N.; Wang, B.; Zhang, D.; Ross, F.M.; Li, J.; Yildiz, B.; del Alamo, J.A. Nanosecond protonic programmable resistors for analog deep learning. Science 2022, 377, 539–543, [https://www.science.org/doi/pdf/10.1126/science.abp8064]. [CrossRef]

- Losonczy, A.; Magee, J. Integrative properties of radial oblique dendrites in hippocampal CA1 pyramidal neurons. Neuron 2006, 50, 291–307. [CrossRef]

- Buzsáki, G.; Mizuseki, K. The log-dynamic brain: how skewed distributions affect network operations. Nature Reviews Neuroscience 2014, 15, 264–278. [CrossRef]

- Végh, J. Dynamic Abstract Neural Computing with Electronic Simulation. https://jvegh.github.io/DANCES/ (Accessed on July 20, 2025), 2025.

- Kole, M.H.P.; Ilschner, S.U.; Kampa, B.M.; Williams, S.R.; Ruben, P.C.; Stuart, G.J. Action potential generation requires a high sodium channel density in the axon initial segment. Nature Neuroscience 2008, 11, 178–186. [CrossRef]

- Rasband, M. The axon initial segment and the maintenance of neuronal polarity. Nat Rev Neurosci 2010, 11, 552–562. [CrossRef]

- Kole, M.; Stuart, G. Signal Processing in the Axon Initial Segment. Neuron 2012, 73, 235–247. [CrossRef]

- Huang, C.Y.M.; Rasband, M.N. Axon initial segments: structure, function, and disease. Annals of the New York Academy of Sciences 2018, 1420. [CrossRef]

- Heimburg, T. Die Physik der Nerven. Physik Journal 2009, 8, 33–39.

- Antolini, A.; Lico, A.; Zavalloni, F.; Scarselli, E.F.; Gnudi, A.; Torres, M.L.; Canegallo, R.; Pasotti, M. A Readout Scheme for PCM-Based Analog In-Memory Computing With Drift Compensation Through Reference Conductance Tracking. IEEE Open Journal of the Solid-State Circuits Society 2024, 4, 69–82.

- Wang, Y.; Wang, R.; Xu, X. Neural Energy Supply-Consumption Properties Based on Hodgkin-Huxley Model. Neural Plast. 2017, p. 6207141. [CrossRef]

- Alonso, L.M.; Magnasco, M.O. Complex spatiotemporal behavior and coherent excitations in critically-coupled chains of neural circuits. Chaos: An Interdisciplinary Journal of Nonlinear Science 2018, 28, 093102. [CrossRef]

- Li, M.; Tsien, J.Z. Neural Code-Neural Self-information Theory on How Cell-Assembly Code Rises from Spike Time and Neuronal Variability. Frontiers in Cellular Neuroscience 2017, 11. [CrossRef]

- Kneip, A.; Lefebvre, M.; Verecken, J.; Bol, D. IMPACT: A 1-to-4b 813-TOPS/W 22-nm FD-SOI Compute-in-Memory CNN Accelerator Featuring a 4.2-POPS/W 146-TOPS/mm2 CIM-SRAM With Multi-Bit Analog Batch-Normalization. IEEE Journal of Solid-State Circuits 2023, 58, 1871–1884. [CrossRef]

- Bean, B. The action potential in mammalian central neurons. Nature Reviews Neuroscience 2007, 8. [CrossRef]

- Goikolea-Vives, A.; Stolp, H. Connecting the Neurobiology of Developmental Brain Injury: Neuronal Arborisation as a Regulator of Dysfunction and Potential Therapeutic Target. Int J Mol Sci 2021, 15. [CrossRef]

- Hasegawa, K.; Kuwako, K.-i. Molecular mechanisms regulating the spatial configuration of neurites. Seminars in Cell & Developmental Biology 2022, 129, 103–114. Special Issue: Emerging biology of cellular protrusions in 3D architecture by Mayu Inaba and Mark Terasaki. [CrossRef]

- Végh, J. The unified cross-disciplinary model of the operation of neurons. European Biophysics Journal 2025, 1. [CrossRef]

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).