5. Results and Discussion

The developed concept is intended to address the research questions posed in Chapter 1. The goal is to present a solution for calculating semi-global influencing factors and then communicating them in an accessible manner. The communication of spatial influencing factors remains a research gap, and the prototypical dashboard implementation presents one possible way to close it. In the use case, accident data, ML results, and influencing factors are dynamically summarized for in-depth analysis. This allows specialist users, such as road planners, accident researchers, ML experts, and road users, to explore the large number of individual ML predictions or to evaluate the quality of the ML model. Georeferencing the accidents makes it possible to reveal spatial patterns. The prototype was developed for German analysts, so the following screenshots show only the German version.

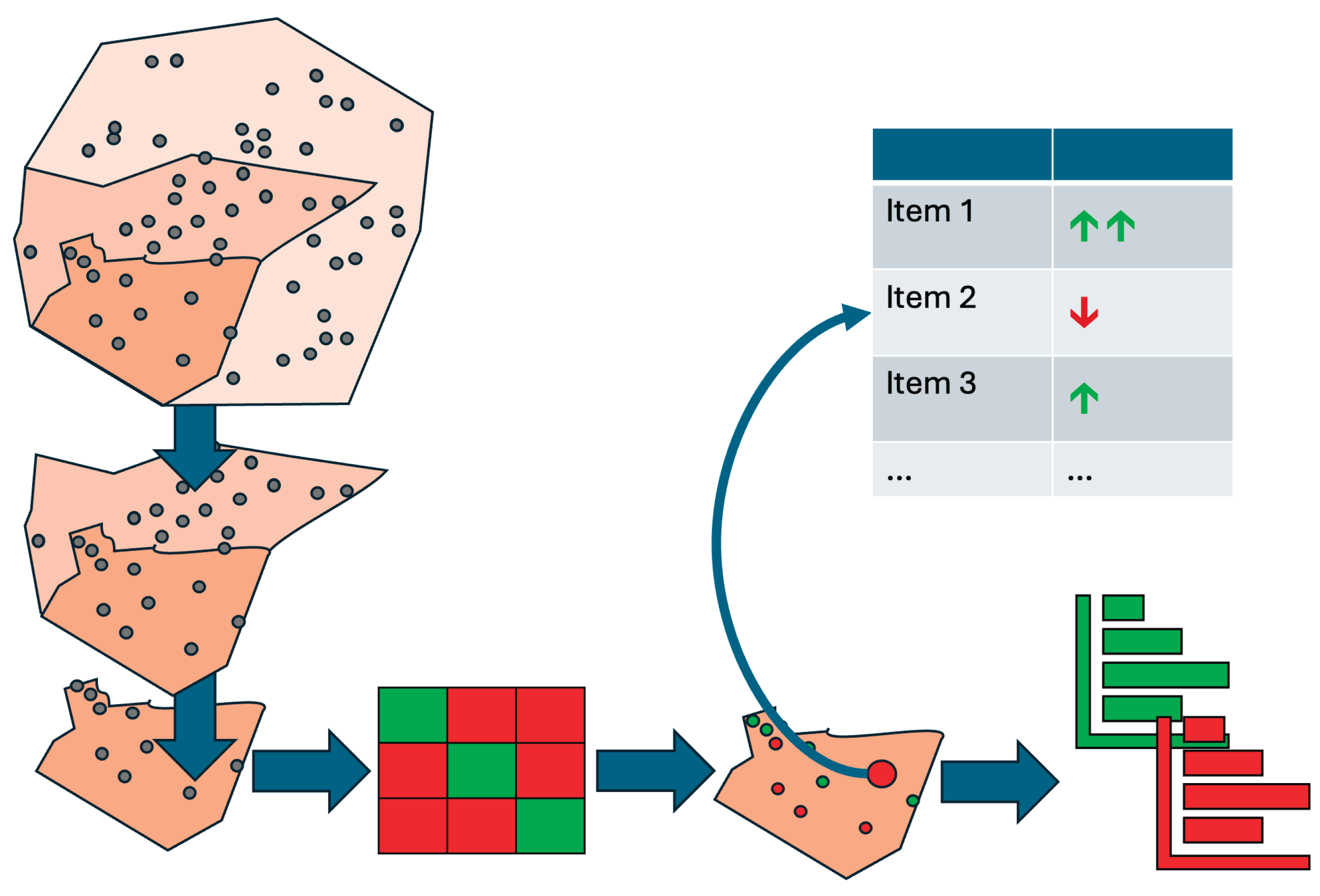

As described in the concept, we grouped the Mainz accident data by spatial location. Here, we use the districts of Mainz as an example; other administrative units or socio-spatial boundaries can also serve as subdivisions. However, we have not examined these alternative spatial units in depth, so small-scale influences, such as dangerous traffic junctions, cannot be identified. The subdivided point data can now be visualized on a map using areal representations (see Figure 7). Additionally, other visualization concepts can highlight differences between districts. In this study, the number of accidents per district and the model's prediction quality were shown using coloring and error measures per district. Depending on the specific influencing factors, other color schemes are conceivable that would allow even better map-based spatial comparisons.

When comparing city districts, it is clear that districts in the city center have many more accidents, primarily because of higher traffic volume rather than an increased accident risk. The districts can therefore only be compared to a limited extent based on their accident numbers. To capture accident frequency in addition to accident severity, it should be included as an additional target variable in an ML model. Additionally, the influencing factors vary by district, and a single ML model does not account for such district-specific characteristics.
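The per-district aggregation behind such a colored map can be sketched as follows. This is a minimal illustration, not the dashboard's actual code: the record layout, the district names, and the choice of accuracy as the error measure are assumptions made for the example.

```python
from collections import defaultdict


def summarize_by_district(records):
    """Return {district: {"n_accidents": int, "accuracy": float}}.

    Each record is assumed to be a dict with the hypothetical keys
    "district", "actual", and "predicted".
    """
    grouped = defaultdict(list)
    for rec in records:
        grouped[rec["district"]].append(rec)

    summary = {}
    for district, recs in grouped.items():
        hits = sum(1 for r in recs if r["actual"] == r["predicted"])
        summary[district] = {
            "n_accidents": len(recs),       # drives the count-based coloring
            "accuracy": hits / len(recs),   # one possible error measure
        }
    return summary


# Invented toy records; districts and labels are placeholders.
records = [
    {"district": "District A", "actual": "minor", "predicted": "minor"},
    {"district": "District A", "actual": "minor", "predicted": "serious"},
    {"district": "District A", "actual": "serious", "predicted": "serious"},
    {"district": "District A", "actual": "minor", "predicted": "minor"},
    {"district": "District B", "actual": "minor", "predicted": "minor"},
    {"district": "District B", "actual": "serious", "predicted": "minor"},
]
summary = summarize_by_district(records)
print(summary)
```

Both values can then be mapped to a color scale per district polygon. Because the raw count mainly reflects traffic volume, a normalized measure would be needed before districts are ranked by risk.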

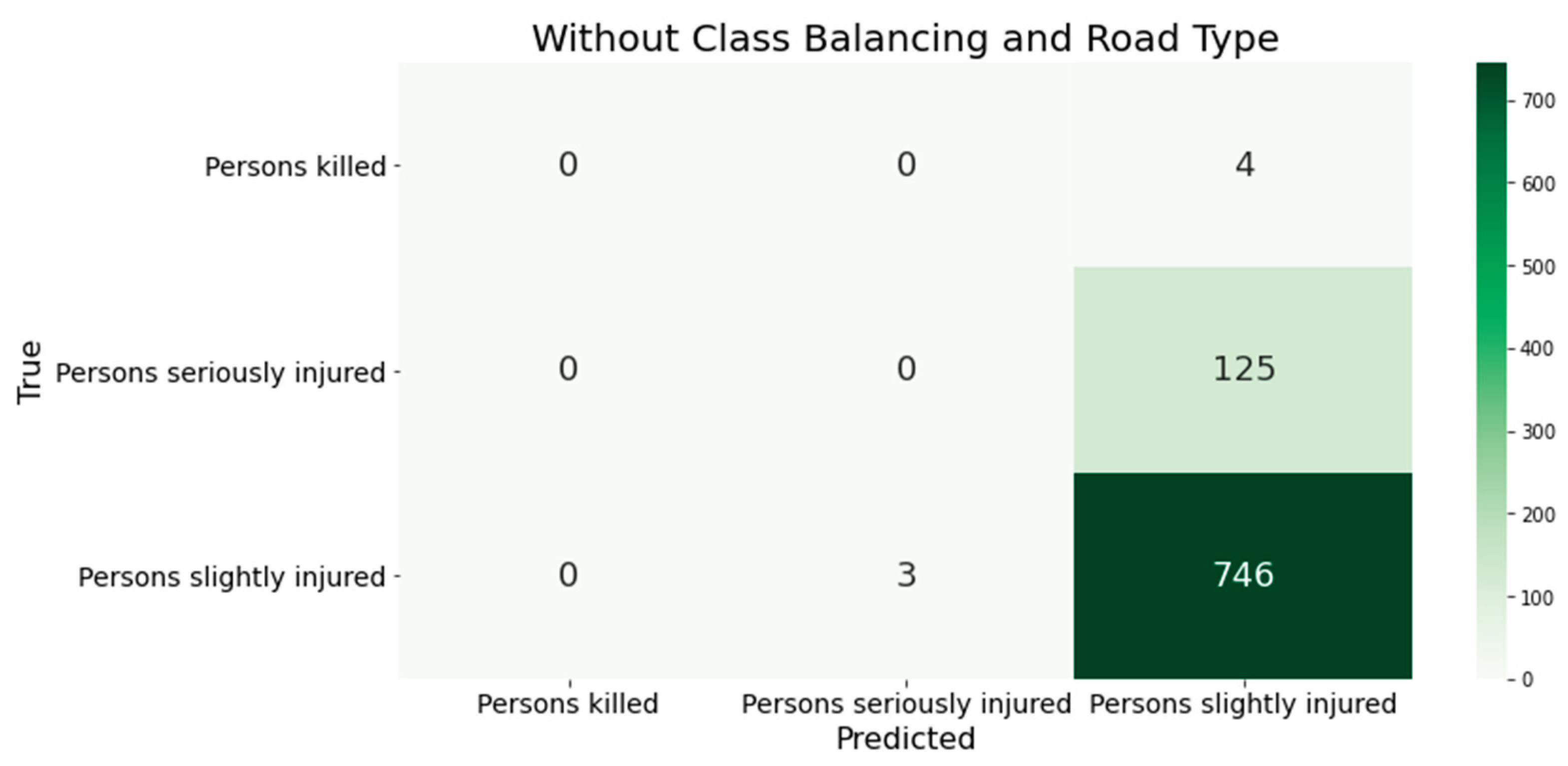

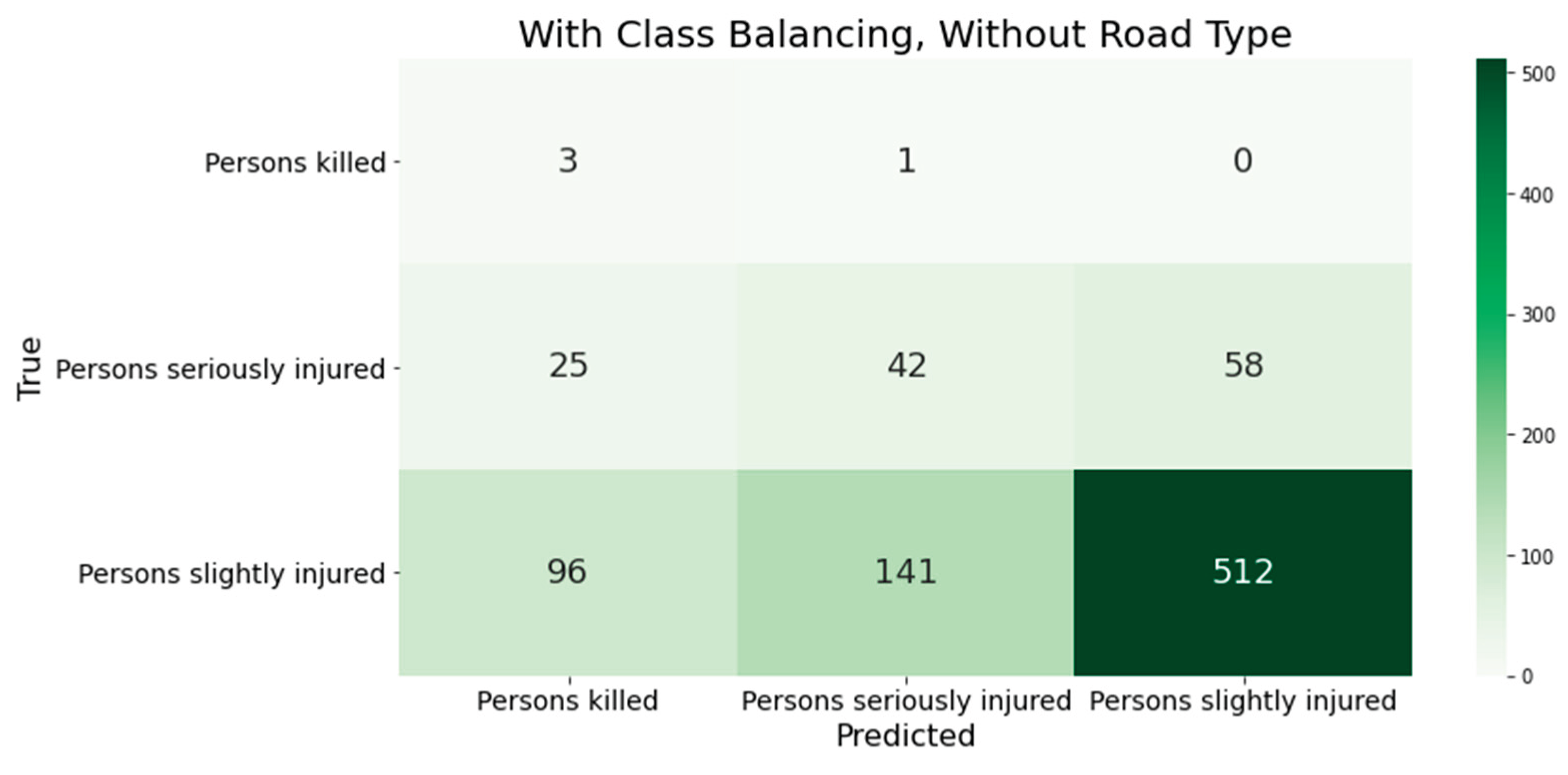

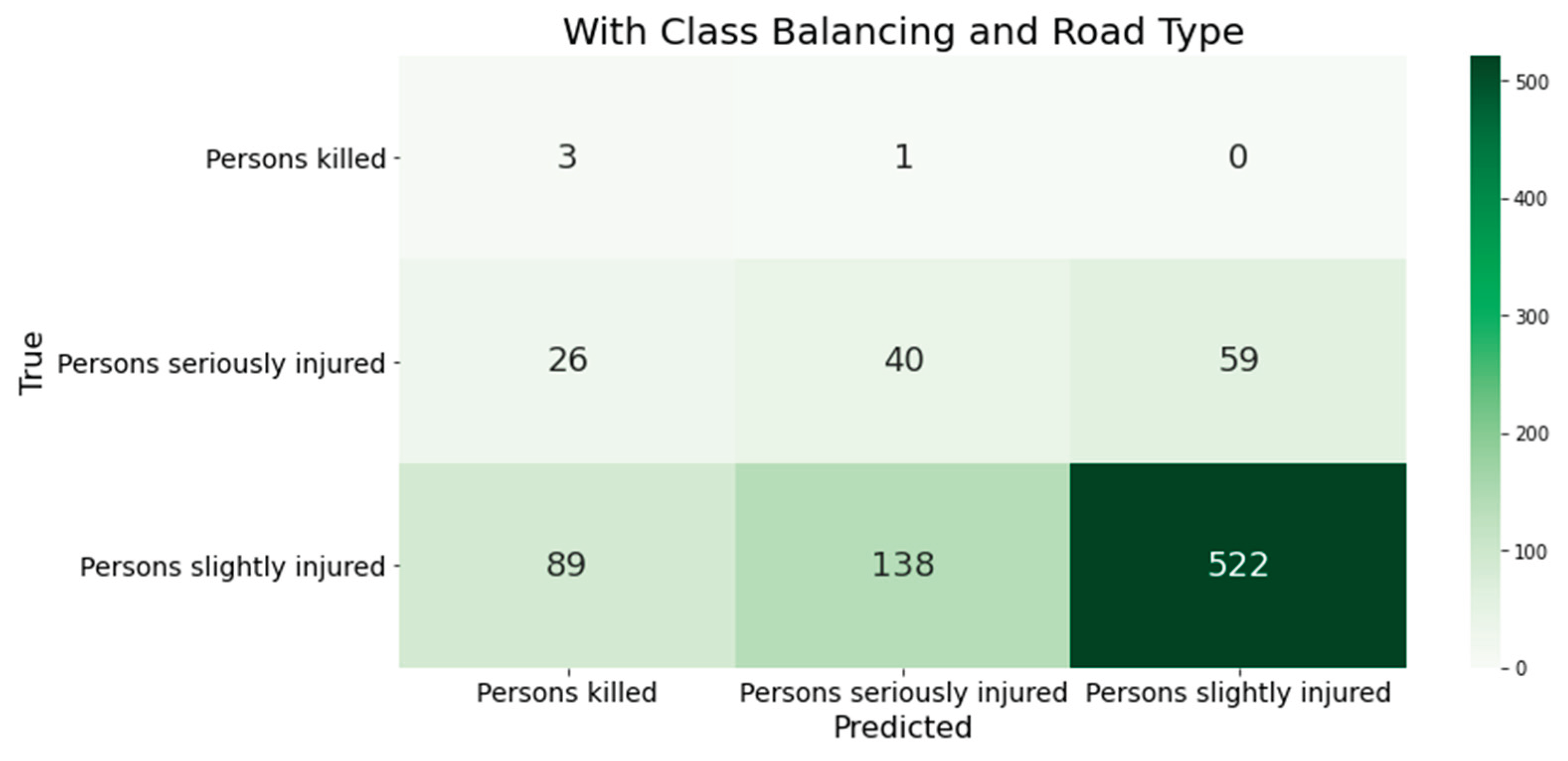

Clicking on a district reveals a dynamic, interactive confusion matrix for the ML predictions of accident severity in that district. This matrix compares the predicted and actual accident severity in the selected district. This makes it easy for users to understand how error measures are calculated and identify potential classification errors in the model.
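How such a district-specific confusion matrix is derived can be illustrated with a short sketch. The class labels, the record keys, and the function names are assumptions for this example; rows hold the actual class and columns the predicted class.

```python
CLASSES = ["minor", "serious", "fatal"]  # assumed class labels


def district_confusion_matrix(records, district, classes=CLASSES):
    """Count (actual, predicted) pairs for one district.

    Rows correspond to the actual class, columns to the predicted class.
    """
    index = {c: i for i, c in enumerate(classes)}
    matrix = [[0] * len(classes) for _ in classes]
    for rec in records:
        if rec["district"] == district:
            matrix[index[rec["actual"]]][index[rec["predicted"]]] += 1
    return matrix


def recall_per_class(matrix, classes=CLASSES):
    """Share of each actual class that was predicted correctly."""
    recall = {}
    for i, c in enumerate(classes):
        row_total = sum(matrix[i])
        recall[c] = matrix[i][i] / row_total if row_total else 0.0
    return recall


# Invented toy data for illustration only.
records = [
    {"district": "District A", "actual": "minor", "predicted": "minor"},
    {"district": "District A", "actual": "minor", "predicted": "serious"},
    {"district": "District A", "actual": "serious", "predicted": "serious"},
    {"district": "District A", "actual": "fatal", "predicted": "serious"},
    {"district": "District A", "actual": "minor", "predicted": "minor"},
    {"district": "District B", "actual": "minor", "predicted": "minor"},
]
matrix = district_confusion_matrix(records, "District A")
recall = recall_per_class(matrix)
print(matrix)  # [[2, 1, 0], [0, 1, 0], [0, 1, 0]]
```

Every off-diagonal cell is a group of misclassified accidents, which is what makes the cells a natural entry point for further analysis.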

Figure 16 shows a district overview from the web dashboard.

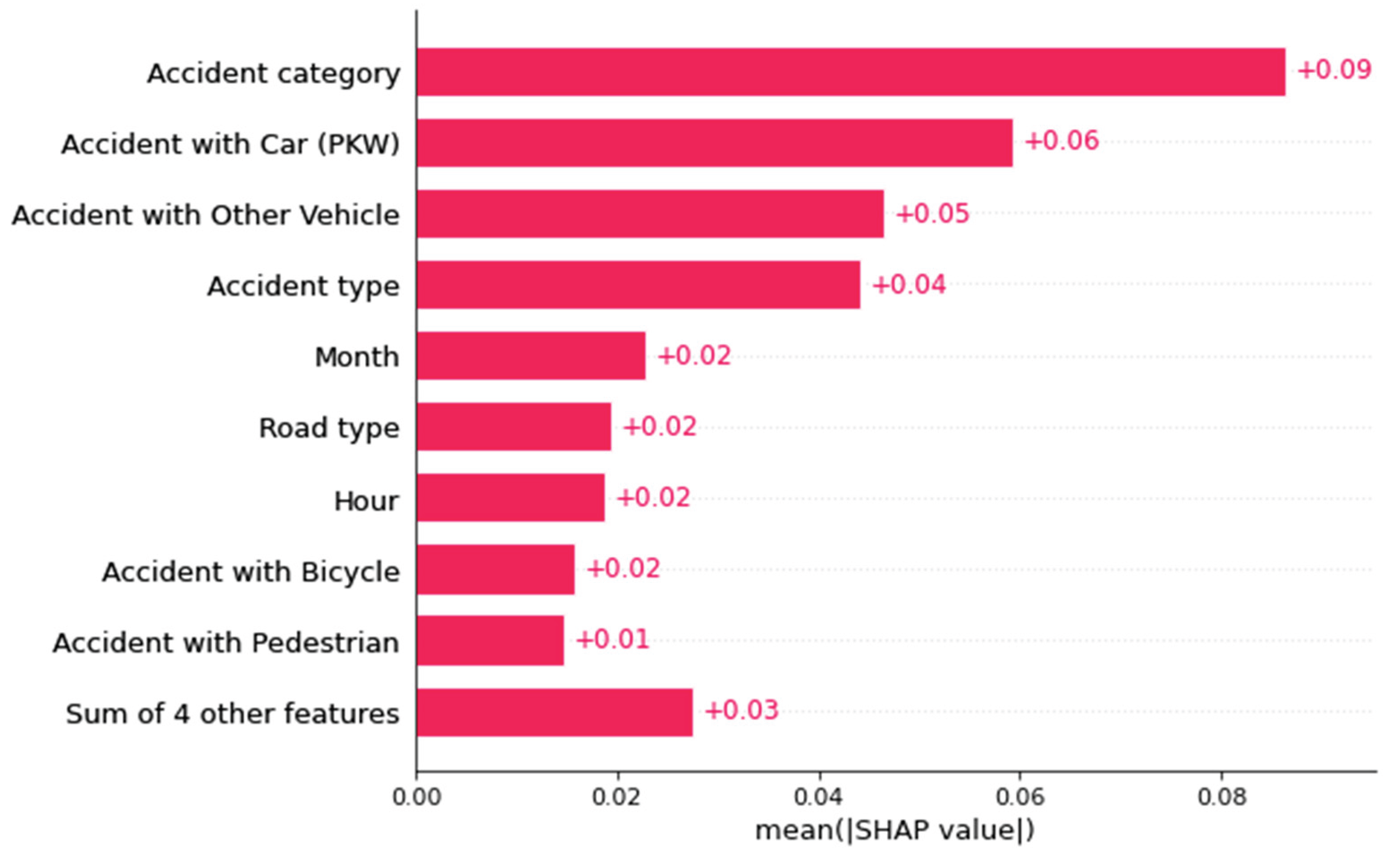

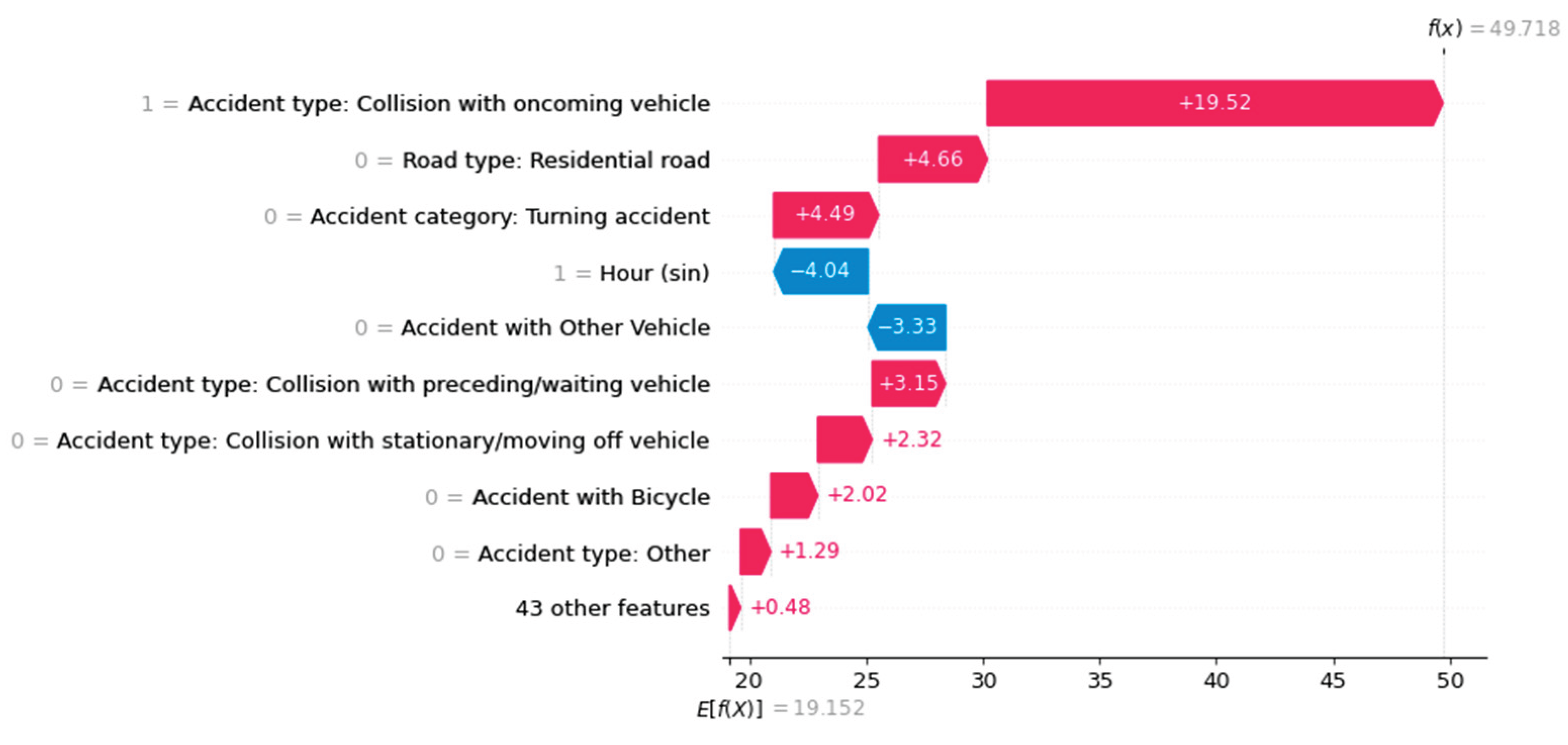

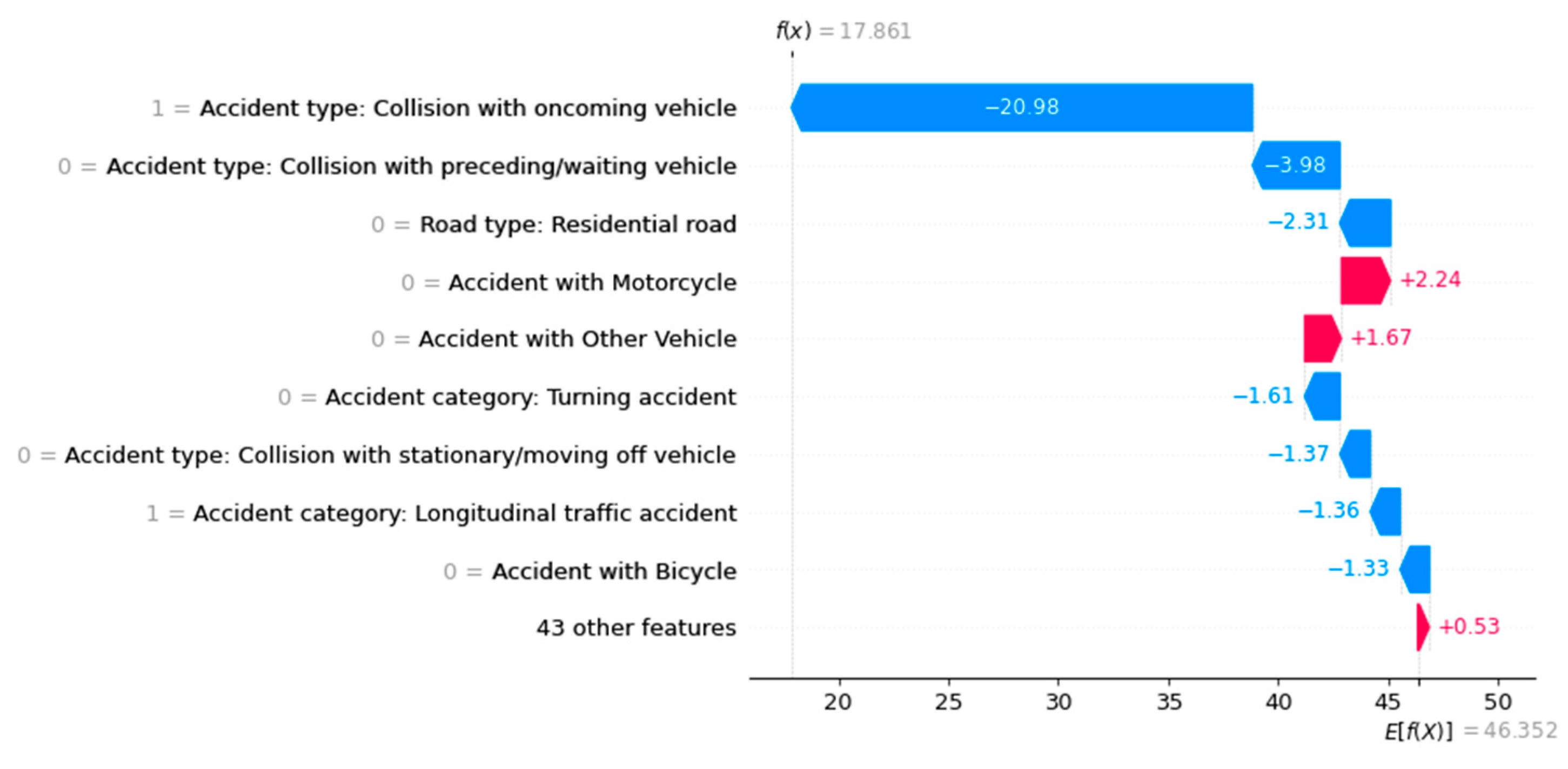

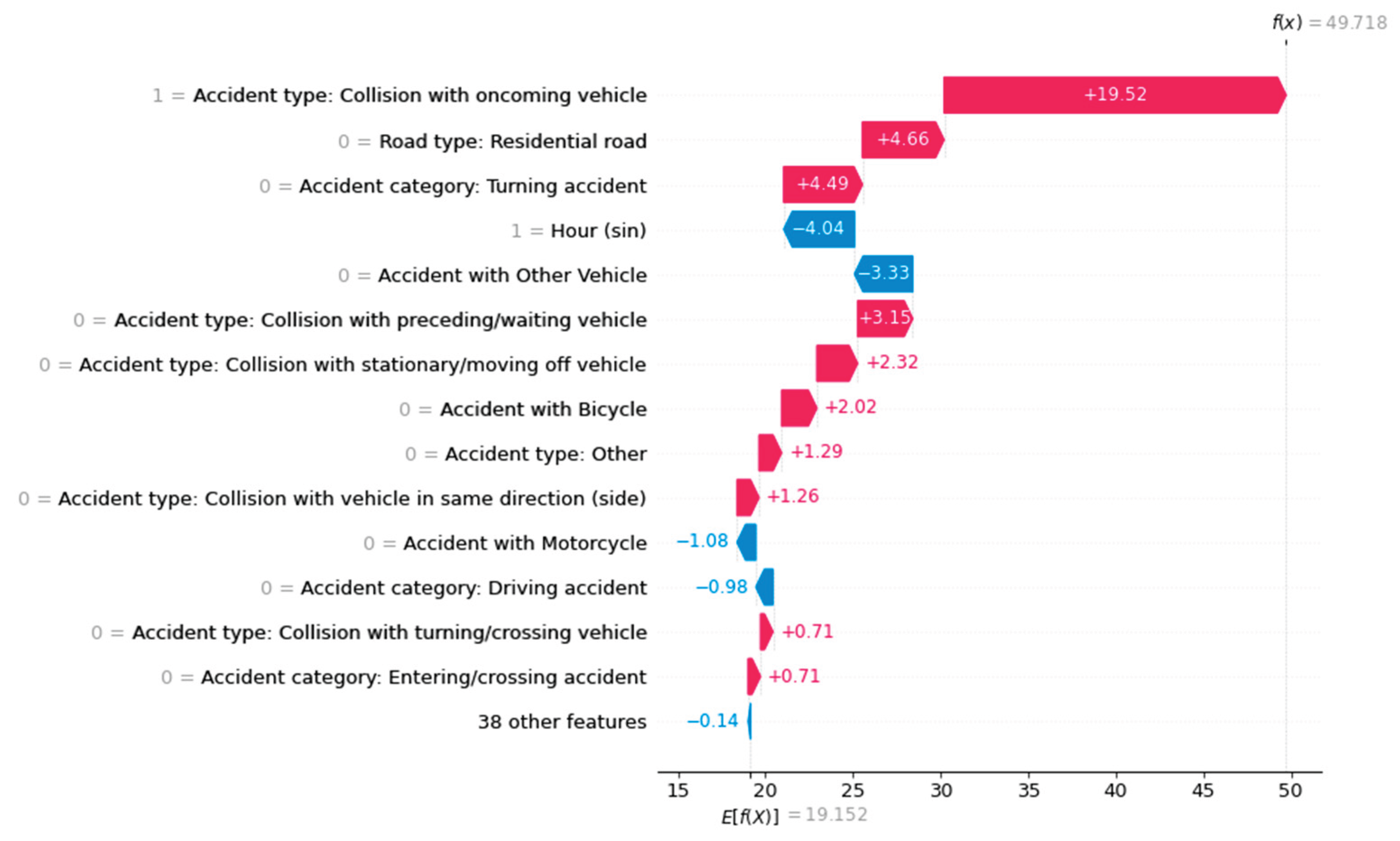

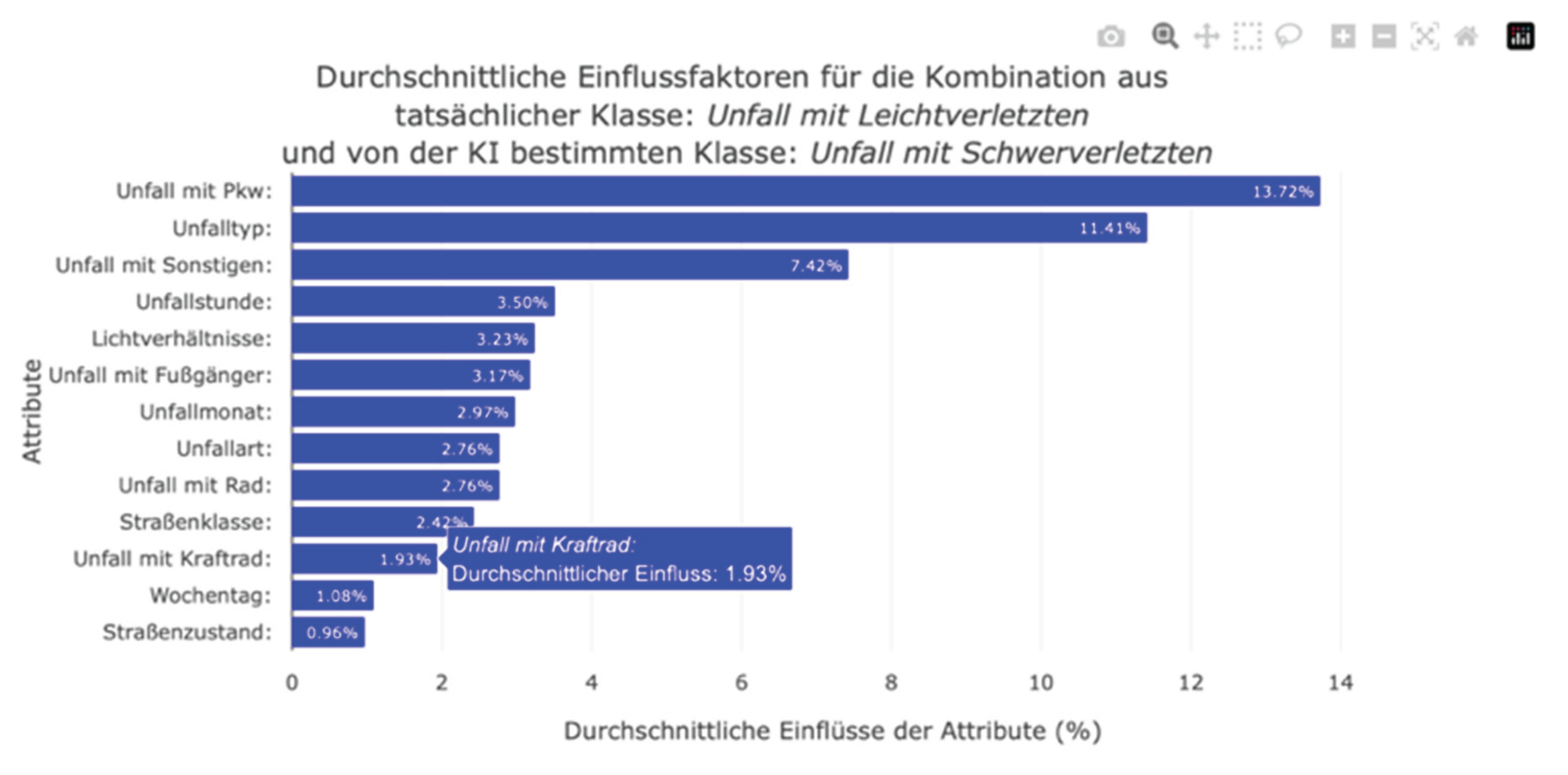

Clicking on a cell in the confusion matrix generates a bar plot showing the average absolute SHAP values for this accident severity. One disadvantage of the bar plot from the SHAP library is that it generalizes the data too much. When a bar plot was generated for an accident severity class, the average SHAP value of a feature was calculated over all instances, even those for which the classifier predicted the investigated class as the least likely. Furthermore, correctly and incorrectly classified instances could not be considered separately. The results presented in the bar plot were therefore distorted, and a differentiated analysis of which features had the greatest influence on incorrect classifications was not possible. By dynamically calculating the average absolute influencing factors depending on the actual and predicted accident severity, the web dashboard now gives users targeted access to the influencing factors (see Figure 17). This removes the limitations of the global bar plot and provides a clearer understanding of the ML prediction and its influencing factors.

However, the average absolute SHAP values shown in the bar plot do not indicate whether a feature increased or decreased the predicted probability of an accident severity class. The bar plot therefore expresses the importance of a feature for the ML prediction rather than how a feature value influences the prediction of an individual accident's severity. Additionally, very large or very small SHAP values of individual instances can distort the average.
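The conditional averaging can be sketched as follows. The data layout is hypothetical, and the sketch assumes that the SHAP values of the predicted class are the ones averaged for a clicked cell, which the text does not state explicitly.

```python
def mean_abs_shap(instances, actual, predicted):
    """Average |SHAP| per feature over the instances of one matrix cell.

    Each instance is assumed to be a dict with the keys "actual",
    "predicted", and "shap", where "shap" maps a class name to a
    {feature: shap_value} dict (hypothetical layout).
    """
    selected = [
        inst for inst in instances
        if inst["actual"] == actual and inst["predicted"] == predicted
    ]
    if not selected:
        return {}
    totals = {}
    for inst in selected:
        for feature, value in inst["shap"][predicted].items():
            totals[feature] = totals.get(feature, 0.0) + abs(value)
    return {feature: total / len(selected) for feature, total in totals.items()}


# Invented toy values for illustration only.
instances = [
    {"actual": "minor", "predicted": "serious",
     "shap": {"serious": {"motorcycle": 0.5, "lighting": -0.25}}},
    {"actual": "minor", "predicted": "serious",
     "shap": {"serious": {"motorcycle": 0.25, "lighting": 0.25}}},
    {"actual": "serious", "predicted": "serious",
     "shap": {"serious": {"motorcycle": -0.5, "lighting": 0.0}}},
]
cell = mean_abs_shap(instances, actual="minor", predicted="serious")
ranking = sorted(cell.items(), key=lambda kv: kv[1], reverse=True)
print(ranking)  # [('motorcycle', 0.375), ('lighting', 0.25)]
```

The correctly classified third instance does not enter the average for the misclassification cell, which is the separation the global bar plot could not provide.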

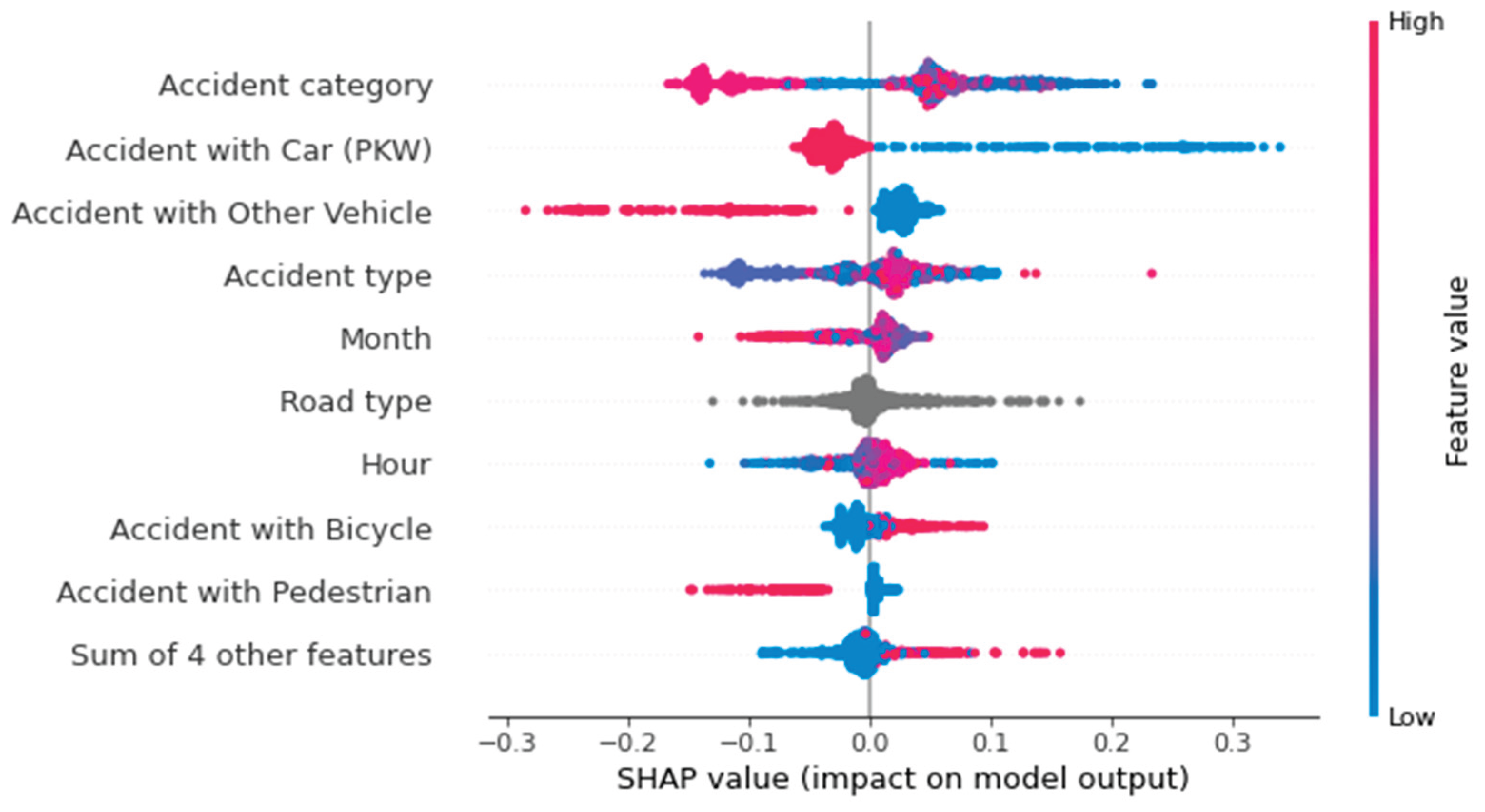

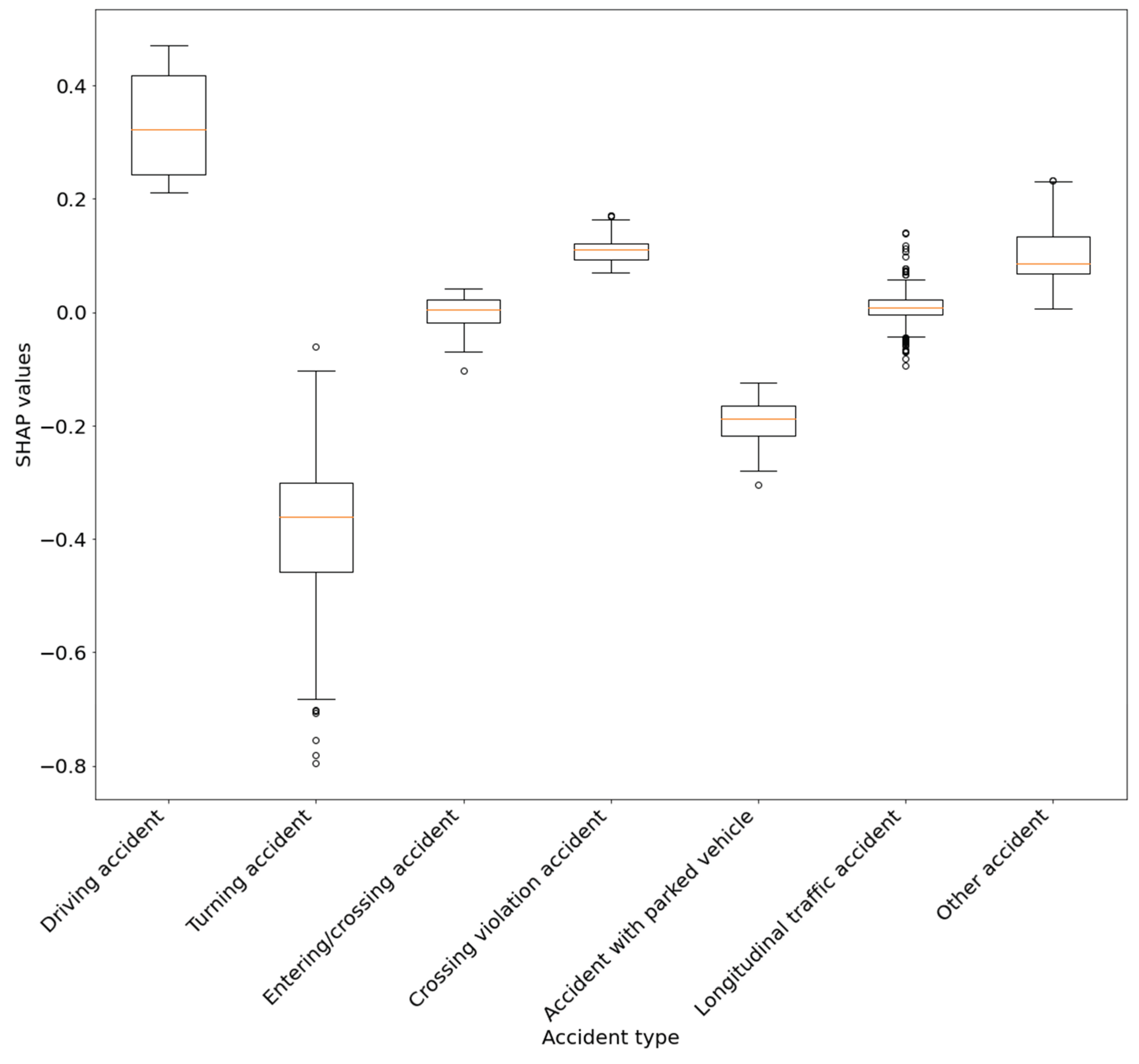

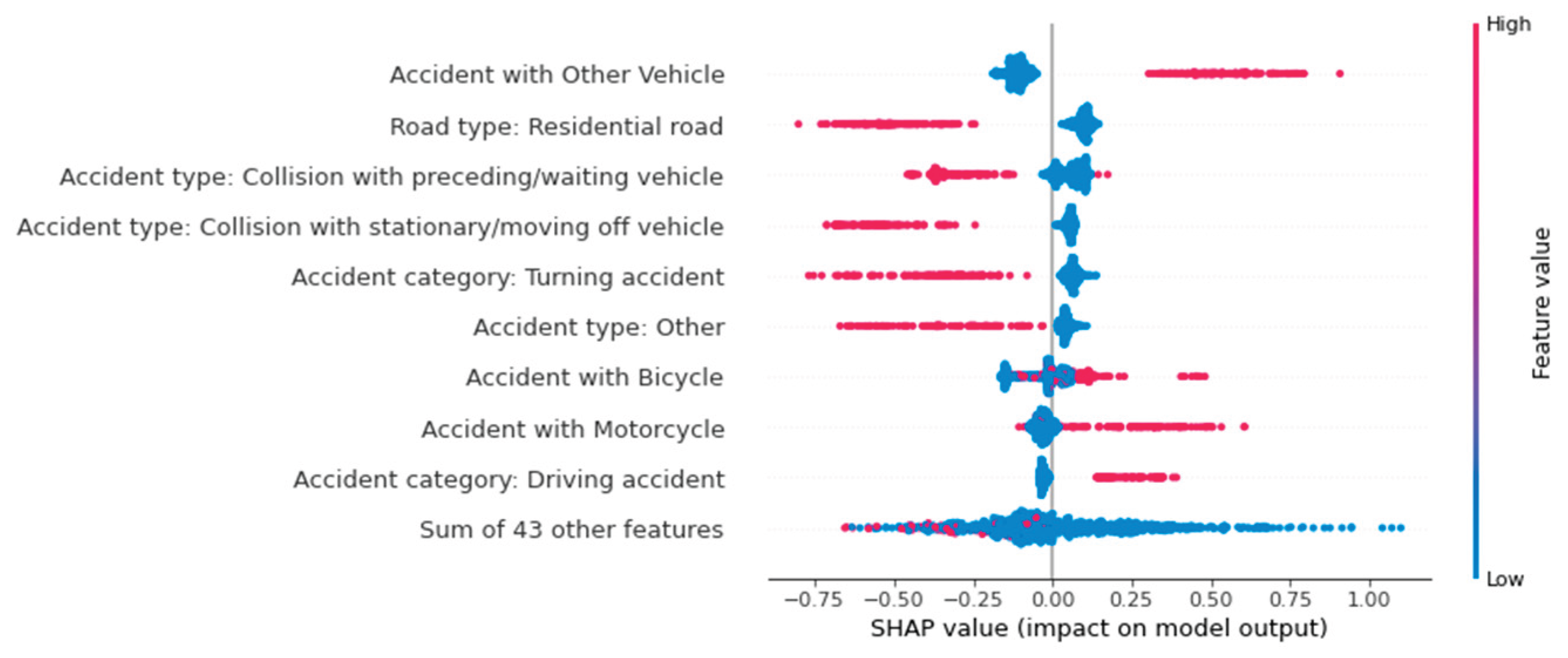

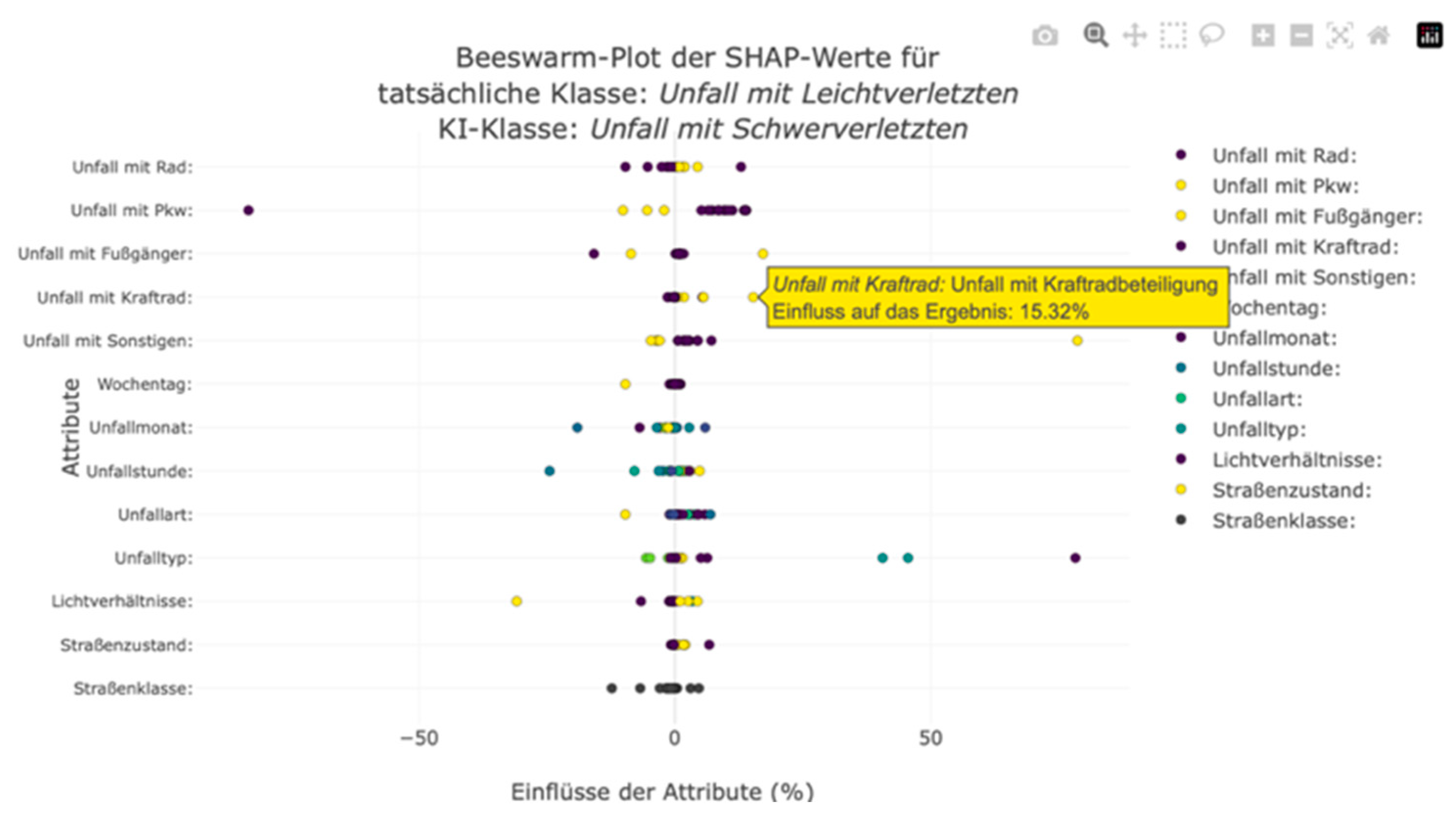

We also integrated a beeswarm plot so that users can take a closer look at the composition of the average absolute SHAP values shown in the bar plot and gain a deeper understanding of the influencing factors and their interrelationships. This plot shows the individual SHAP values of the instances. An axis is created for each feature, and the SHAP values are plotted as points on that axis. Interacting with a point displays its SHAP value and the corresponding feature value. This allows users to understand the direction of influence and the composition of the SHAP values. As in a typical beeswarm plot, the points are color-coded according to their feature value. However, the colors do not form a continuous gradient because the nominally scaled features have no natural order. Nevertheless, this highlighting allows users to recognize possible relationships between feature values and SHAP values.

Figure 18 shows an example. This diagram illustrates the factors that influenced the misclassification of minor accidents as serious accidents in one district. Looking at the feature values and SHAP values together makes it possible to identify the patterns that caused the ML model to misclassify these accidents. As can be seen here, the involvement of a motorcycle in an accident increased the predicted probability of a serious accident, whereas the absence of a motorcycle reduced it only minimally. The bar plot does not show this relationship: from it alone, it was not possible to determine whether motorcycle involvement lowered or raised the probability (see Figure 17). Only the visualization of the individual SHAP values in the beeswarm plot reveals that the involvement of a motorcycle increases the predicted probability of a serious accident in this district. Similar relationships and influences can be derived from the other diagrams linked to the confusion matrix.
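Why the sign information is lost in the bar plot but visible in the beeswarm plot can be shown with a small numerical sketch. The values are invented and the function name is an assumption; the point is only that grouping signed SHAP values by feature value recovers the direction that the mean absolute value hides.

```python
from collections import defaultdict


def mean_shap_by_feature_value(points):
    """Average the signed SHAP values separately for each feature value.

    `points` is an iterable of (feature_value, shap_value) pairs for a
    single feature, i.e. the points on one beeswarm axis.
    """
    groups = defaultdict(list)
    for feature_value, shap_value in points:
        groups[feature_value].append(shap_value)
    return {fv: sum(vals) / len(vals) for fv, vals in groups.items()}


# Invented points for a hypothetical "motorcycle involved" feature.
motorcycle_points = [
    ("yes", 0.5),
    ("yes", 0.25),
    ("no", -0.0625),
    ("no", -0.0625),
]

by_value = mean_shap_by_feature_value(motorcycle_points)
bar_height = sum(abs(s) for _, s in motorcycle_points) / len(motorcycle_points)

print(by_value)    # {'yes': 0.375, 'no': -0.0625}
print(bar_height)  # 0.21875
```

The single bar height of 0.21875 says that the feature matters, while the grouped means show that involvement pushes the prediction up and absence pulls it down only slightly.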

The developed concept enables targeted analysis of accident patterns at the district level, reduces the amount of displayed data, and combines interactive visualizations with differentiated model evaluation. Separating correctly and incorrectly classified accidents and using common chart types supports a transparent and accessible interpretation of the influencing factors.

In addition to displaying accident severity and its influencing factors at the district level, the dashboard allows users to explore individual accidents freely. When examining local influencing factors, the dashboard primarily serves as a visualization and navigation tool that displays the results of individual calculations rather than offering analysis options.

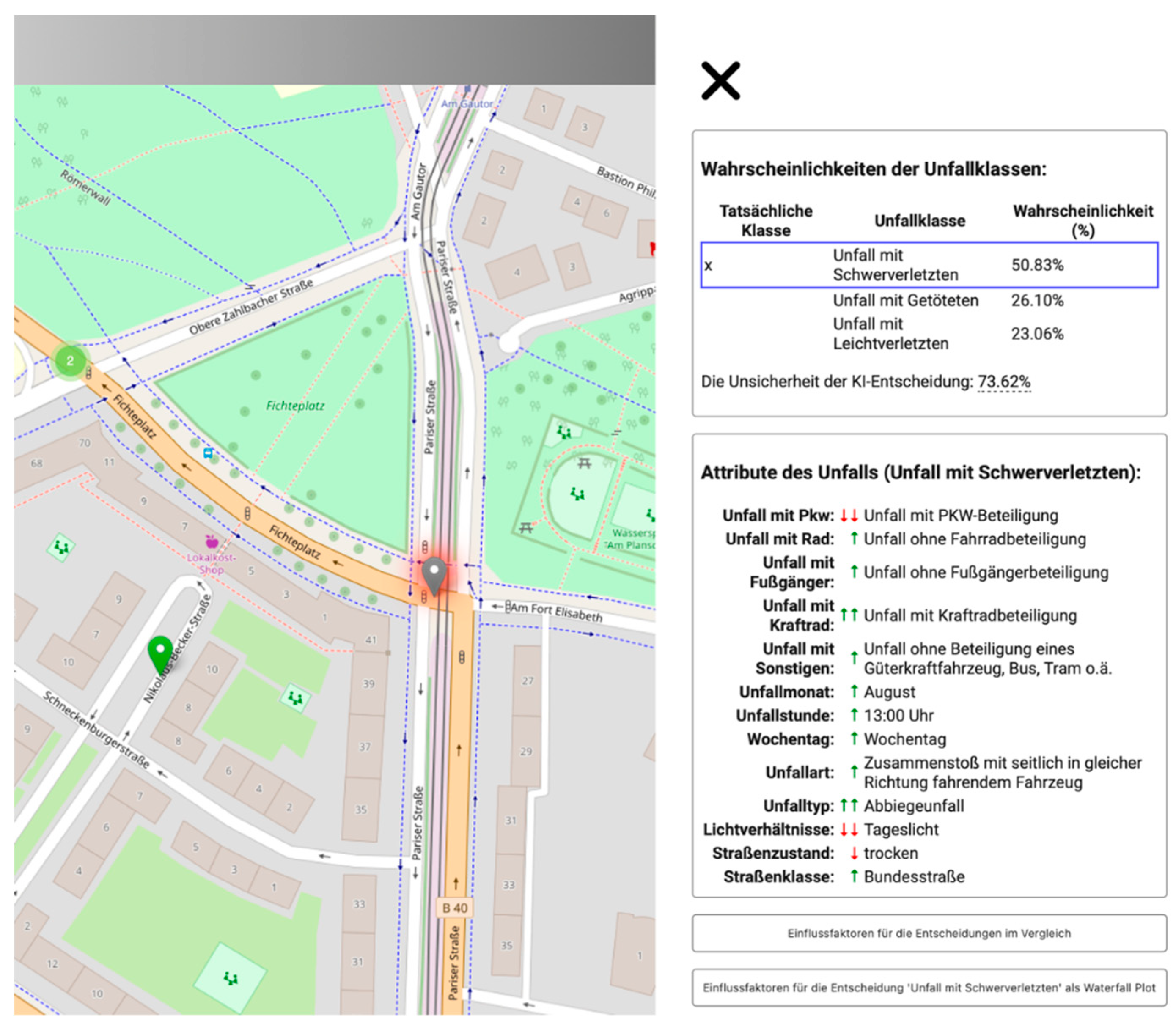

The developed workflow can explain, independently of the individual case, how the predicted probabilities of an ML model come about. Each accident contains unique information, yet all accidents share the same structure. Accidents are therefore color-coded on an overview map of a city district. Roussel et al. (2024) investigated the possibilities of map-based communication of influencing factors. In addition to the accident class, further information, such as the most influential feature in the decision-making process, a comparison of actual and predicted accident severity, or the prediction uncertainty, could increase the map's informational content.

Interacting with a marker displays the details of an individual accident. Accident severity is the most important information and the main feature for inspection. The information window shows the user the probabilities of the individual accident classes as determined by the classifier. Along with the predicted probability, a comparison with the actual accident severity class is provided, and the uncertainty of the prediction is indicated. This extensive information can be displayed in table form for the clicked marker so that the map is not overloaded with complex visualizations, while the map and the accident-related information remain visible simultaneously. The information window also lists the classification features and their respective values, giving the user a quick overview of the accident. Details from the database enable a reconstruction of the accident. Meaningful descriptions replace the coded values in the database, so that users unfamiliar with the accident statistics of the statistical offices can understand the information describing the accident.
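The text does not specify how the prediction uncertainty is quantified. One common option, shown here purely as an assumption, is the normalized Shannon entropy of the class probabilities, which is 0 for a fully confident prediction and 1 when all classes are equally likely.

```python
import math


def normalized_entropy(probabilities):
    """Shannon entropy of a probability vector, scaled to the range [0, 1].

    This is an illustrative uncertainty measure, not necessarily the one
    used in the dashboard.
    """
    k = len(probabilities)
    if k < 2:
        return 0.0
    entropy = -sum(p * math.log(p) for p in probabilities if p > 0)
    return entropy / math.log(k)


# Invented class probabilities for one accident (minor, serious, fatal).
class_probabilities = [0.7, 0.2, 0.1]
uncertainty = normalized_entropy(class_probabilities)
print(round(uncertainty, 3))
```

A single scalar like this could be shown next to the class probabilities in the information window or encoded in the marker style on the map.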

Another challenge was communicating the influence of this accident information in an accessible way. We developed a concept to address user questions such as the following: How did the lighting conditions at the time of the accident affect the likelihood of serious injuries?

Influencing factors describe how individual features influence the probability of a class in the classification. They can be positive or negative, depending on whether they increased or decreased the probability of an accident severity class for the model. The magnitude of an influencing factor determines how strongly a feature influences the prediction. To give users a simple way of understanding these factors, they are indicated by symbols next to each feature in the list of accident information. Rather than stating the influence as a percentage, arrows visualize its direction: a downward arrow "↓" expresses a negative influence on the prediction, and an upward arrow "↑" expresses a positive influence. This relative indication of influences simplifies traceability in an ML classification. In addition, the features with the largest SHAP values (i.e., the features with the greatest influence) are marked with a double arrow. This allows users to identify the most influential features without examining the exact SHAP values.
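The mapping from SHAP values to arrow symbols can be sketched as follows. The number of features that receive a double arrow (`top_k`), the use of absolute magnitude for ranking, the doubled symbols, and the empty symbol for a zero value are assumptions for this example; the text does not fix these details.

```python
def influence_symbols(shap_values, top_k=1):
    """Map {feature: shap_value} to {feature: arrow symbol}.

    The `top_k` features with the largest absolute SHAP value get a
    doubled arrow (hypothetical parameter and rule).
    """
    ranked = sorted(shap_values, key=lambda f: abs(shap_values[f]), reverse=True)
    strongest = set(ranked[:top_k])

    symbols = {}
    for feature, value in shap_values.items():
        if value == 0:
            symbols[feature] = ""  # no influence, no arrow (assumption)
            continue
        arrow = "↑" if value > 0 else "↓"
        symbols[feature] = arrow * 2 if feature in strongest else arrow
    return symbols


# Invented local SHAP values of one accident for one selected class.
local_shap = {"lighting": -0.25, "motorcycle": 0.5, "road_surface": 0.125}
symbols = influence_symbols(local_shap)
print(symbols)  # {'lighting': '↓', 'motorcycle': '↑↑', 'road_surface': '↑'}
```

Calling the function again with the SHAP values of another class yields the updated symbols when the user switches the selected accident class.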

The dynamic dashboard enables users to select and analyze class-specific local SHAP values. Selecting a different accident class automatically updates the symbols and diagrams. Thus, the composition of the model's influencing factors can be read and compared directly.

Figure 19 shows a screenshot of the dashboard.

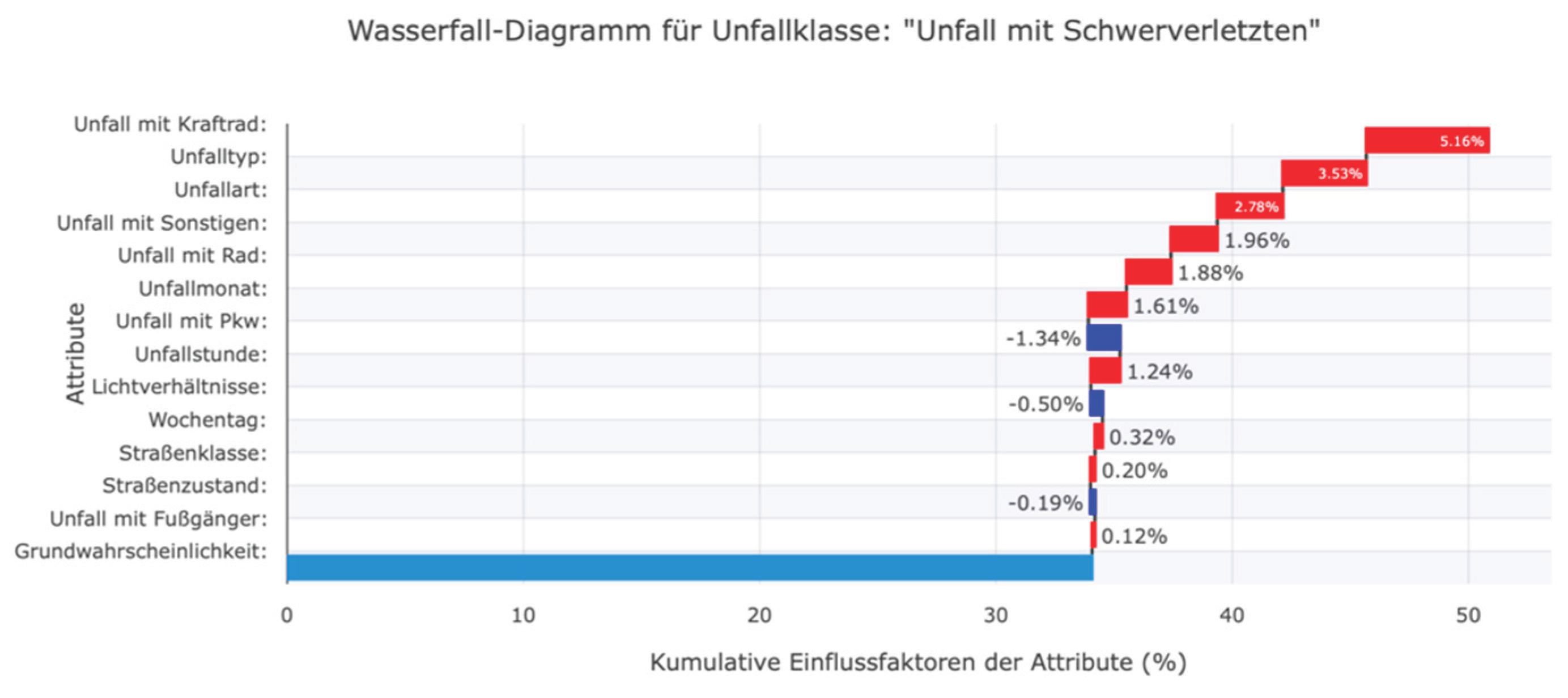

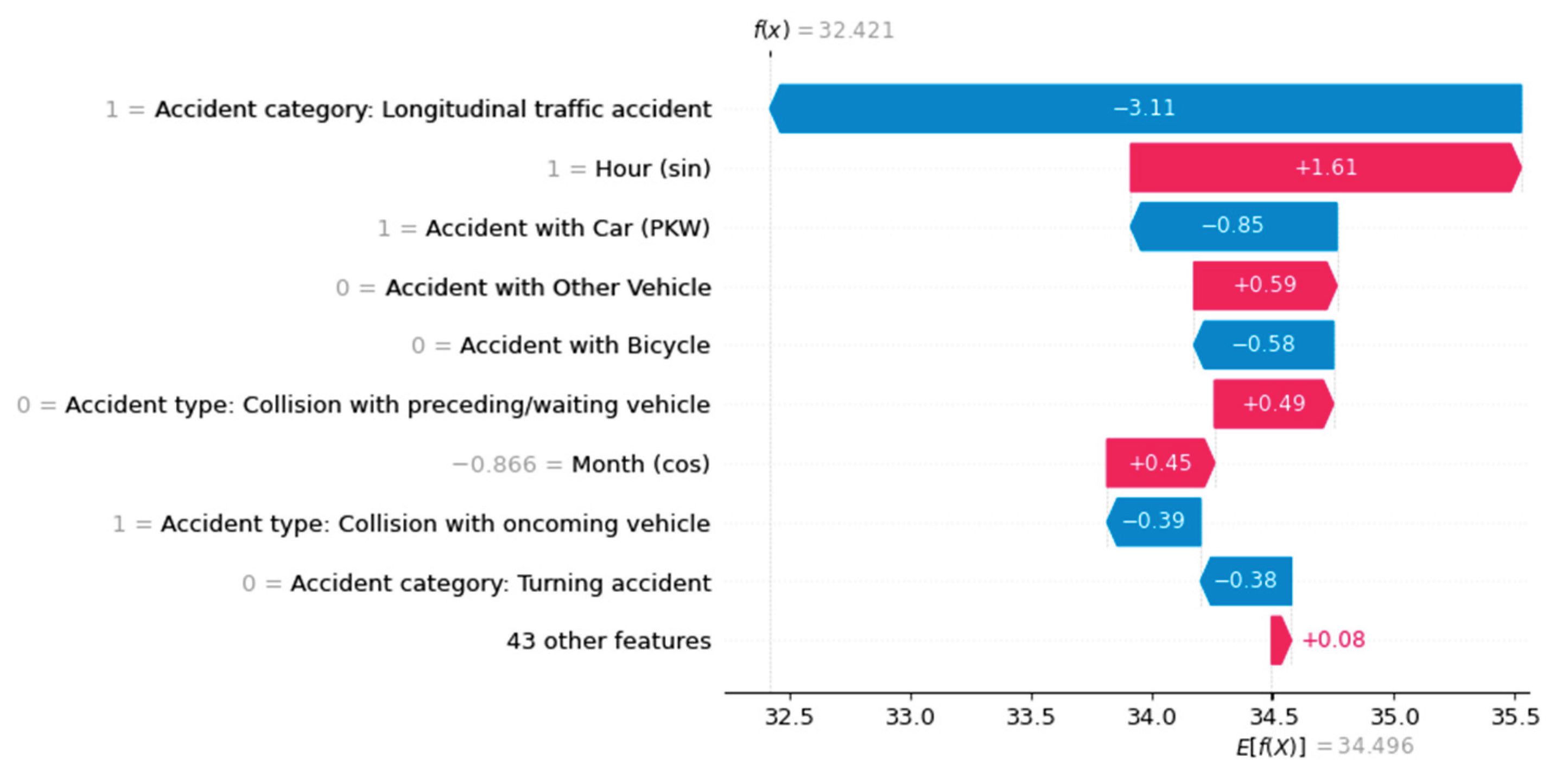

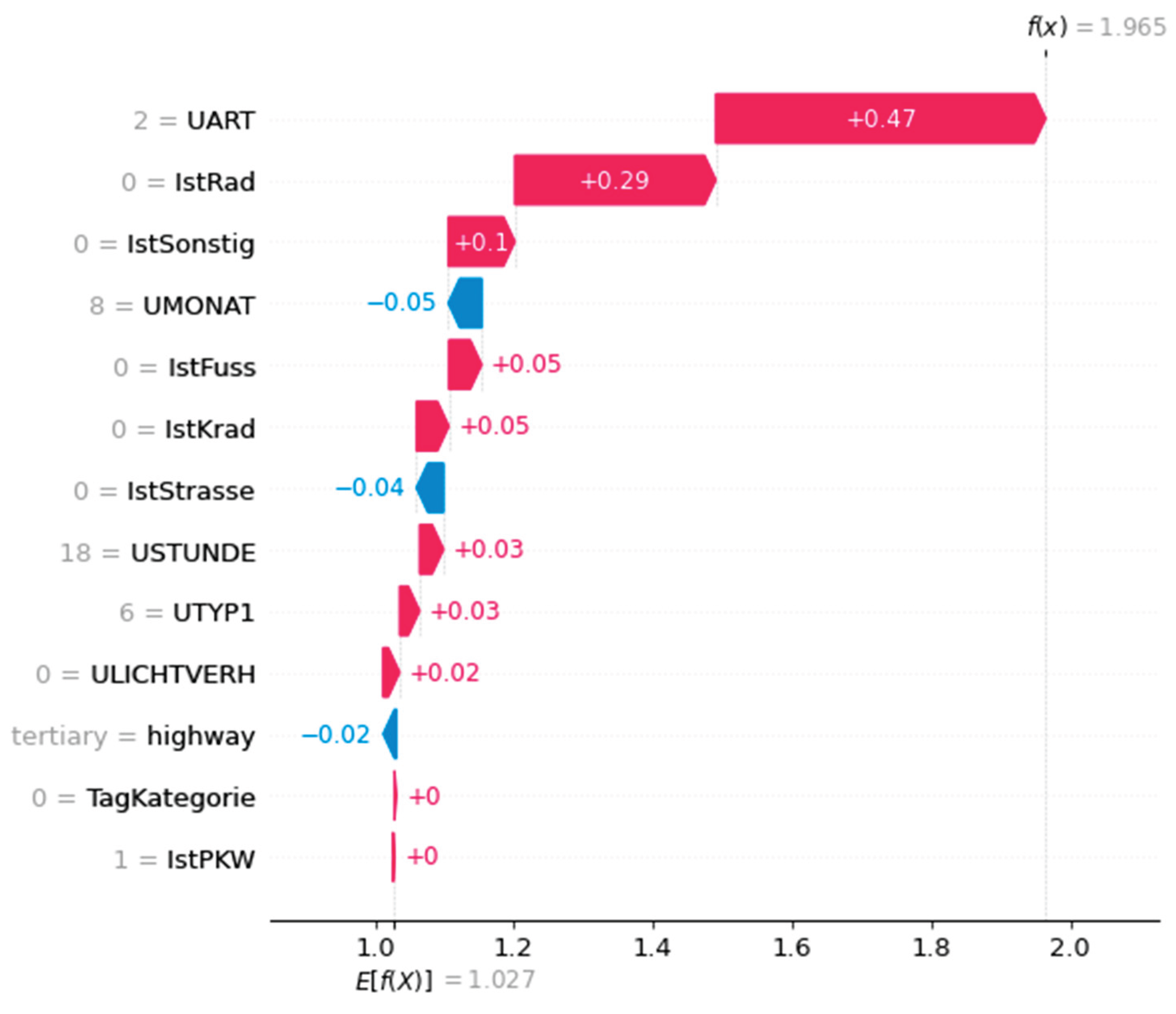

Users are gradually introduced to increasingly detailed and comprehensive information on local ML decisions, culminating in the complete display of waterfall and decision plots (see Figure 20 and Figure 21). Here, experts can view all values simultaneously. However, it should be noted that a potential user may have no prior knowledge of ML classification and may therefore be unable to interpret the displayed class probabilities and SHAP values. In extreme cases, this could lead the user to interpret the displayed influencing factors as the importance of a feature for accident severity in the real world. The dashboard does not yet communicate sufficiently that the calculated influencing factors only explain an ML prediction and say nothing about the actual cause of the accident.
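What a waterfall plot displays follows from the additivity of SHAP values: the base value plus the sum of all SHAP values of an instance equals the model output for that instance, in the output space that was explained. The sketch below uses invented numbers and a hypothetical function name, and it orders the features by absolute SHAP value so that the strongest contribution comes last.

```python
def waterfall_steps(base_value, shap_values):
    """Accumulate SHAP values from the base value to the final prediction.

    Returns a list of (feature, start, end) segments, ordered from the
    smallest to the largest absolute contribution, and the final value.
    """
    ordered = sorted(shap_values.items(), key=lambda kv: abs(kv[1]))
    steps = []
    running = base_value
    for feature, value in ordered:
        start = running
        running += value
        steps.append((feature, start, running))
    return steps, running


# Invented values: base value and local SHAP values for one class.
base_value = 0.25
local_shap = {"motorcycle": 0.5, "lighting": -0.125, "road_surface": 0.0625}

steps, prediction = waterfall_steps(base_value, local_shap)
for feature, start, end in steps:
    print(f"{feature:>12}: {start:.4f} -> {end:.4f}")
print(prediction)  # 0.6875
```

The final value describes only the model's output for the selected class. It is a statement about the prediction, not about the real-world cause of the accident.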

This work primarily focused on creating a concept for calculating and visualizing the semi-global influencing factors of spatial data sets. The concept was confirmed through a practical application, in which it made misclassifications and their respective semi-global influencing factors easy to identify. The dashboard made it possible to analyze how the ML decision was made. This allows for many subsequent investigations, such as those of accident data provided by federal and state statistical offices.

As discussed in Chapter 2, accidents must be described by relevant characteristics. For publication, information such as the exact date, details about the individuals involved (e.g., age, gender, and driving experience), and possible drug or alcohol influence was removed. Other details, such as weather conditions, the number of people involved, speeds, and road data, were generalized or summarized. The absence of these proven relevant influencing factors can lead to a simpler, and therefore less meaningful, model. Because important accident characteristics were not considered when training the ML model, the model contains either incorrect correlations between the accident data or information gaps in the accident description. These issues become apparent when analyzing the model using SHAP. For example, the feature "Involvement of another means of transport" (e.g., truck, bus, or streetcar) disproportionately influenced misclassified accidents whose severity was incorrectly predicted as fatal instead of minor, since motor vehicles were predominantly involved in fatal accidents (see Figure 4a).

The model, which was trained using German accident data, can only predict accident severity based on the 13 accident features. The resulting information gaps meant that accidents involving serious injuries were classified the same as those involving minor injuries. Due to the small number of features, the model could not reliably assign accidents to the correct severity level, as the high misclassification rate of the trained ML model demonstrates.

In this context, the use of freely available OpenStreetMap (OSM) data also requires critical consideration. Since OSM relies on voluntary data collection by its users, the completeness and timeliness of the data are not assured. This concerns primarily the completeness of a road's properties rather than the road network itself. It is necessary to investigate whether additional information could further improve prediction quality. Beyond additional road characteristics, this includes, above all, weather data and information on those involved in accidents. Efficient approaches to data enrichment must be developed, and new sources of information must be found. Additionally, it must be checked whether an ML model can handle the greater data complexity: the MLP-NN did not produce improved predictions when the road class was added as a training feature. Investigating more complex ML models may therefore also be necessary to determine their suitability for predicting accident severity.

Figure 20. Visualization of an accident using a map and information window in the web dashboard.

Figure 21. Waterfall plot in the web dashboard to visualize the local factors influencing the forecast: Persons seriously injured.

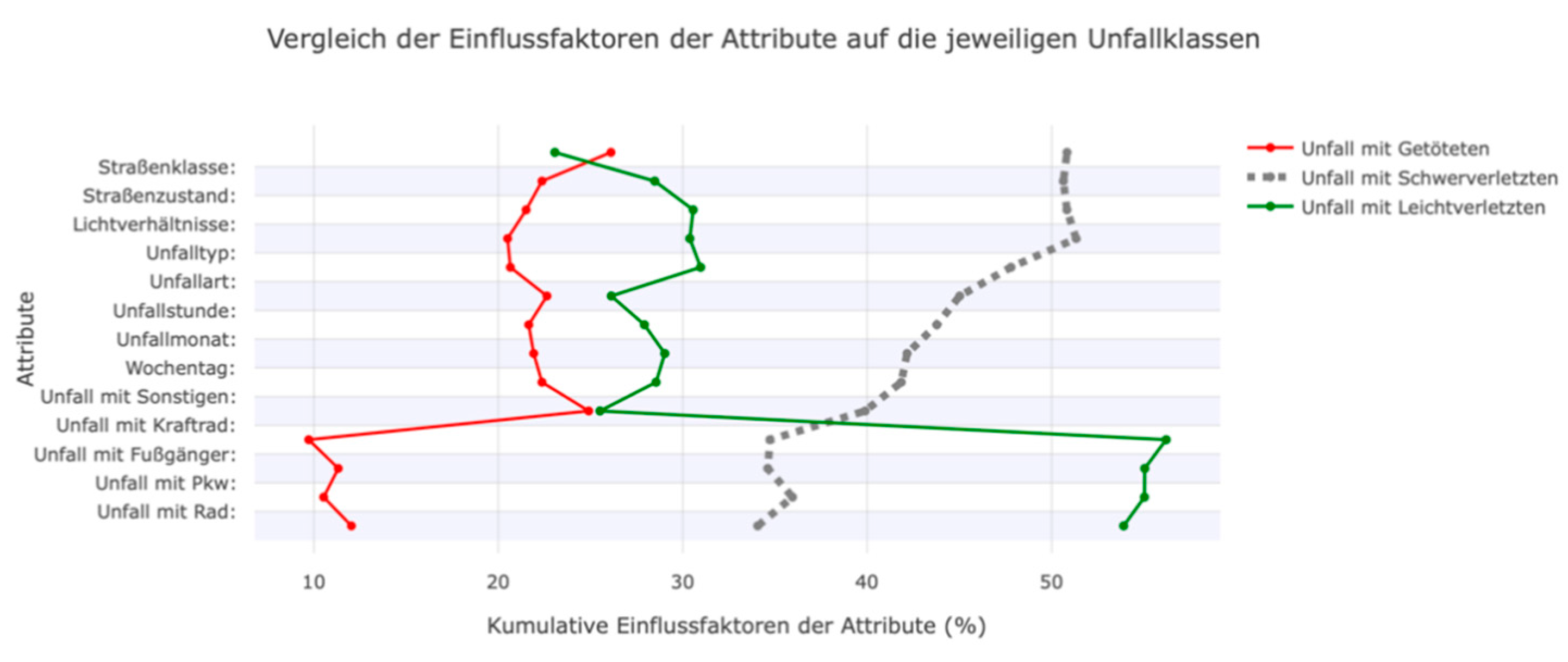

Figure 22. Decision plot in the web dashboard to compare the factors influencing the accident classes.

The imbalanced accident data made a sampling approach necessary. Unlike other studies, we employed a combination of undersampling and oversampling. While this was the only way to distinguish between the individual accident classes, the combination of few categorical features and the imbalanced class distribution resulted in poorer outcomes than those of other studies, such as Satu et al. (2018) and Pourroostaei Ardakani et al. (2023). This could be related to the conservative macro averaging used for the calculation.
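Why macro averaging is conservative on imbalanced data can be illustrated with a small sketch. The labels and counts are invented, and the F1 score is used as an example metric; the text does not state which measures were macro-averaged.

```python
def per_class_f1(actual, predicted, classes):
    """F1 score of each class, computed one-vs-rest."""
    scores = {}
    for c in classes:
        pairs = list(zip(actual, predicted))
        tp = sum(1 for a, p in pairs if a == c and p == c)
        fp = sum(1 for a, p in pairs if a != c and p == c)
        fn = sum(1 for a, p in pairs if a == c and p != c)
        denom = 2 * tp + fp + fn
        scores[c] = 2 * tp / denom if denom else 0.0
    return scores


def macro_f1(actual, predicted, classes):
    """Unweighted mean of the per-class F1 scores."""
    scores = per_class_f1(actual, predicted, classes)
    return sum(scores.values()) / len(classes)


def accuracy(actual, predicted):
    """Share of correctly classified instances."""
    return sum(1 for a, p in zip(actual, predicted) if a == p) / len(actual)


# Invented, imbalanced toy data: 8 minor and 2 serious accidents.
actual = ["minor"] * 8 + ["serious"] * 2
predicted = ["minor"] * 8 + ["minor", "serious"]

macro = macro_f1(actual, predicted, ["minor", "serious"])
acc = accuracy(actual, predicted)
print(round(macro, 3), acc)  # 0.804 0.9
```

Because every class contributes equally to the macro average, a single error in the rare class lowers the score far more than the same error would lower accuracy, which complicates comparisons with studies that report other averages.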

Thanks to the dashboard, it is possible to conduct deeper investigations into the factors that influence ML decisions. The spatial and thematic breakdowns of the data enabled a thorough investigation of the accident data and the classifier.