Submitted: 26 May 2025

Posted: 27 May 2025


Abstract

Keywords:

1. Introduction

2. Overview of the BondMachine Architecture

2.1. Architecture Specification

2.2. Architecture Handling

3. Strategy and Ecosystem Improvements to Accelerate DL Inference Tasks

3.1. A Flexible ISA: Combining Static and Dynamic Instructions

3.2. Extending Numerical Representations in the BM Ecosystem: FloPoCo, Linear Quantization and the Bmnumbers Package

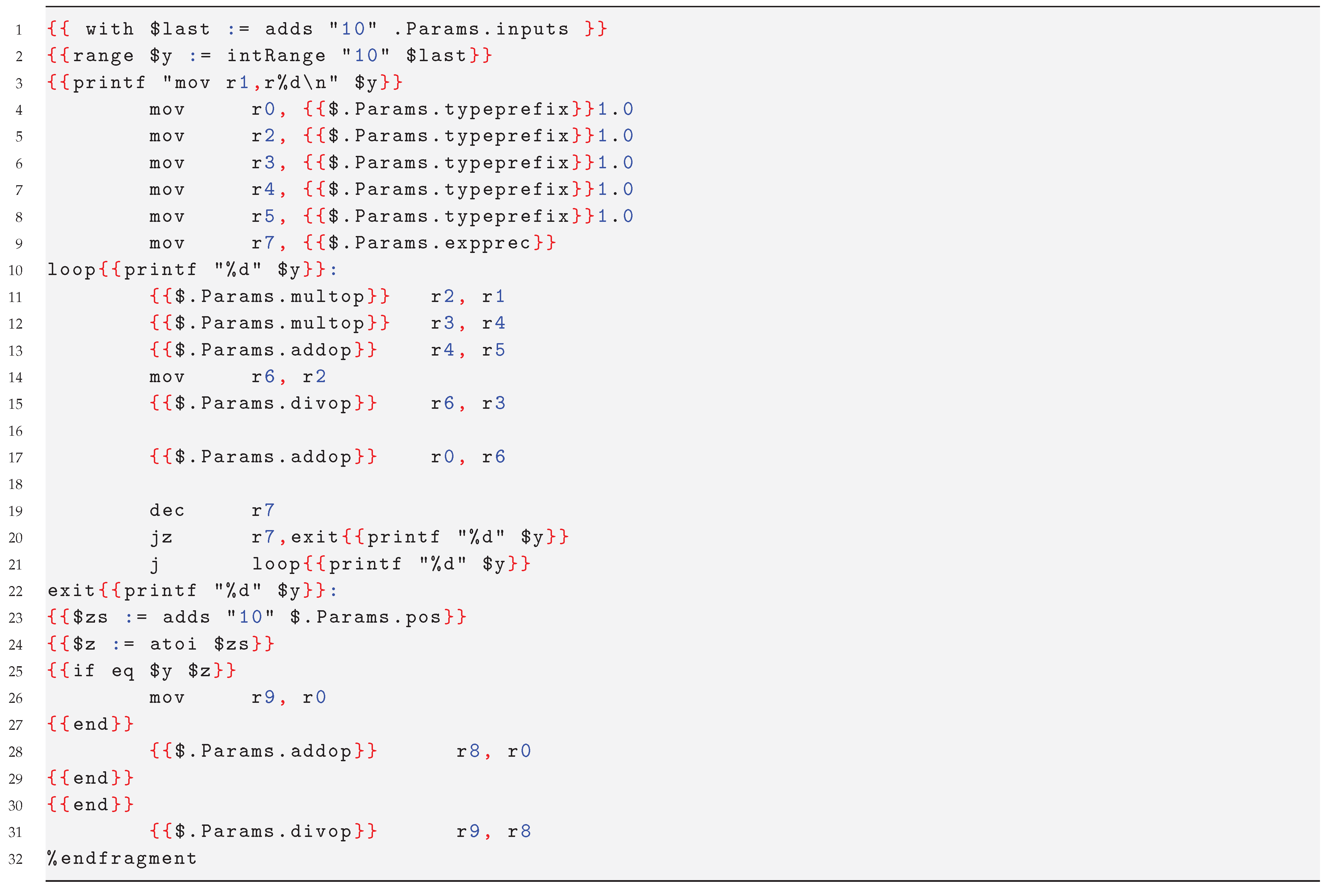

3.3. BASM and Fragments

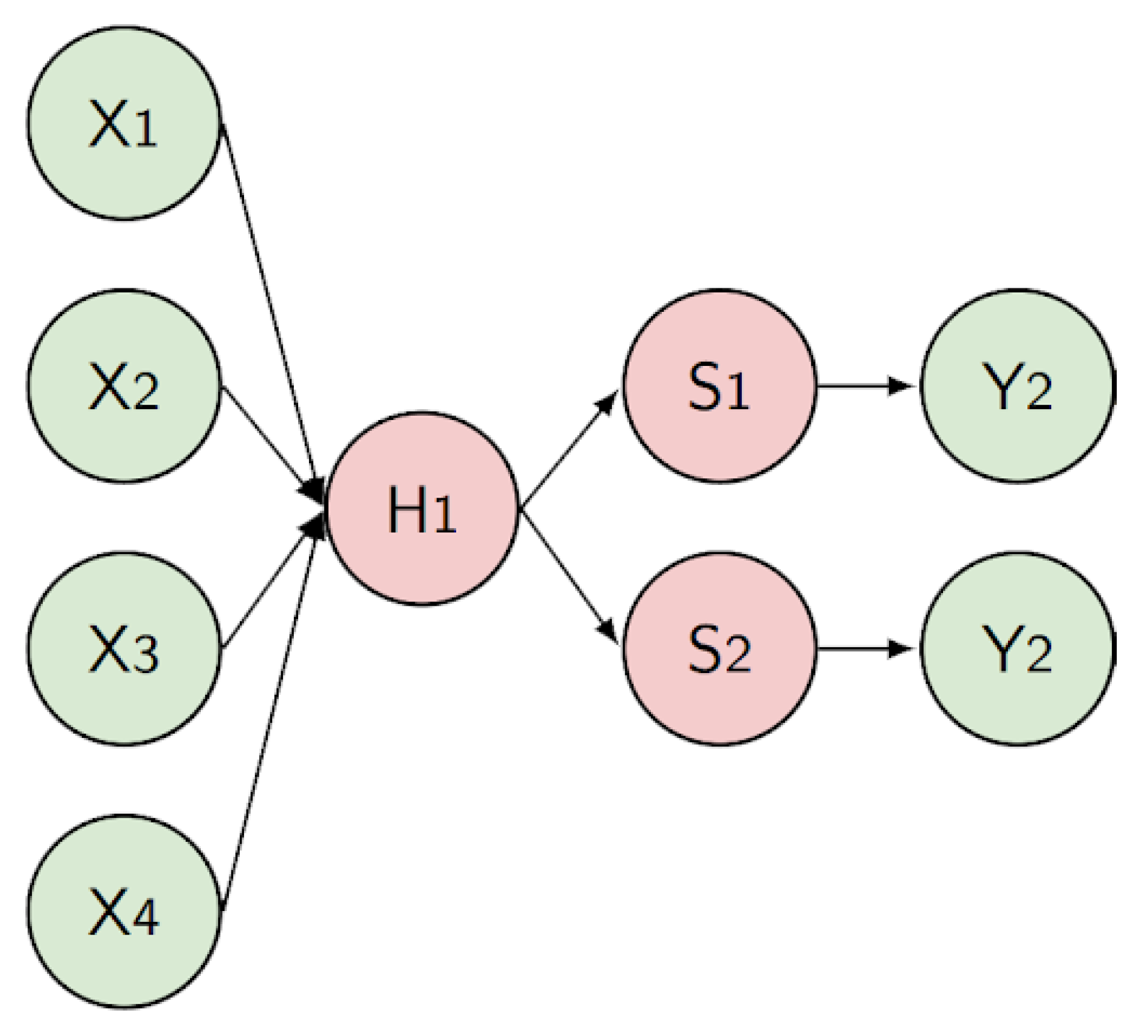

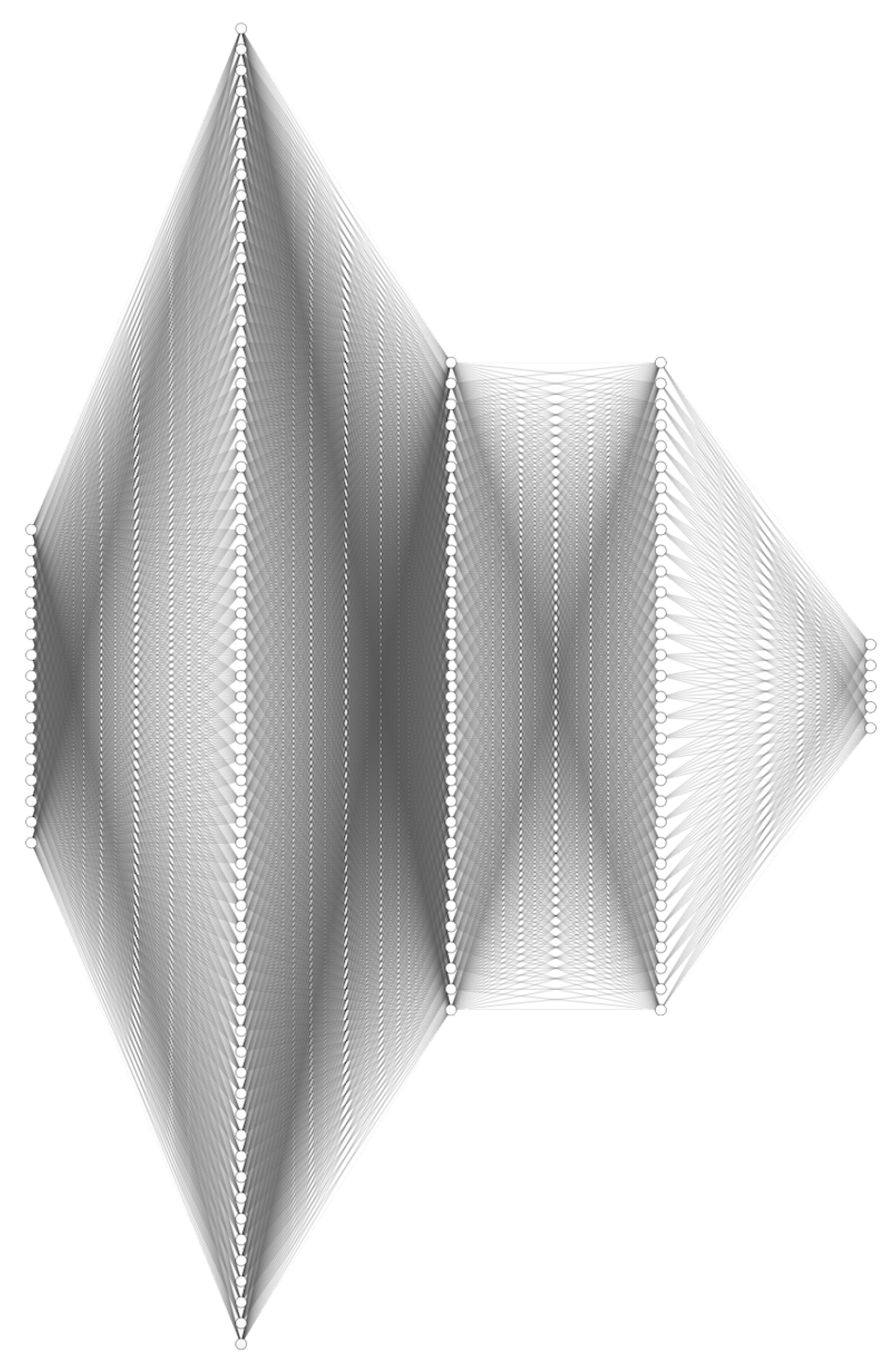

3.4. Mapping a DNN as a Heterogeneous Set of CPs

3.5. BM as Accelerator

3.6. Real-Time Power Measurement and Energy Profiling

4. Benchmarking the DL Inference FPGA-Based System for Jet Classification in LHC Experiments

| Data Type | LUTs (count) | LUTs (%) | REGs (count) | REGs (%) | DSPs (count) | DSPs (%) |
|---|---|---|---|---|---|---|
| float32 | 476416 | 36.54 | 456235 | 17.50 | 954 | 10.57 |
| float16 | 288944 | 22.16 | 298191 | 11.44 | 479 | 5.31 |
| flpe7f22 | 423915 | 32.52 | 352113 | 13.50 | 950 | 10.53 |
| flpe5f11 | 393657 | 30.20 | 318821 | 12.23 | 477 | 5.29 |
| flpe6f10 | 442809 | 33.97 | 334414 | 12.83 | 4 | 0.04 |
| flpe4f9 | 347633 | 26.67 | 275653 | 10.57 | 4 | 0.04 |
| flpe5f8 | 299033 | 22.94 | 261403 | 10.03 | 4 | 0.04 |
| flpe6f4 | 274523 | 21.06 | 236429 | 9.07 | 4 | 0.04 |
| fixed<16,8> | 205071 | 15.73 | 207670 | 7.94 | 477 | 5.29 |

| Data Type | Latency ( ) | Accuracy (%) |
|---|---|---|
| float32 | 12.29 ± 0.15 | 100 |
| float16 | 8.65 ± 0.15 | 99.17 |
| flpe7f22 | 6.23 ± 0.18 | 100.00 |
| flpe5f11 | 4.46 ± 0.21 | 100.00 |
| flpe6f10 | 4.49 ± 0.18 | 100.00 |
| flpe4f9 | 2.80 ± 0.15 | 97.78 |
| flpe5f8 | 3.31 ± 0.12 | 99.74 |
| flpe6f4 | 2.72 ± 0.23 | 96.39 |
| fixed<16,8> | 1.39 ± 0.06 | 86.03 |
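The latency and accuracy figures above can be compared as unit-free ratios against the float32 row. The following is a minimal sketch of that arithmetic, not part of the BondMachine toolchain: the dictionary `RESULTS` and the function `speedup_vs` are hypothetical names, and the error propagation assumes the two latency uncertainties are independent, which the table does not state.

```python
import math

# (latency, latency uncertainty, accuracy %) copied from the table above.
# The latency unit is whatever the table uses; ratios do not depend on it.
RESULTS = {
    "float32": (12.29, 0.15, 100.0),
    "float16": (8.65, 0.15, 99.17),
    "flpe7f22": (6.23, 0.18, 100.0),
    "flpe5f11": (4.46, 0.21, 100.0),
    "flpe6f10": (4.49, 0.18, 100.0),
    "flpe4f9": (2.80, 0.15, 97.78),
    "flpe5f8": (3.31, 0.12, 99.74),
    "flpe6f4": (2.72, 0.23, 96.39),
    "fixed<16,8>": (1.39, 0.06, 86.03),
}


def speedup_vs(baseline: str, name: str) -> tuple[float, float]:
    """Return (latency ratio baseline/name, propagated uncertainty)."""
    base, base_err, _ = RESULTS[baseline]
    lat, lat_err, _ = RESULTS[name]
    ratio = base / lat
    # First-order propagation for a quotient, assuming independent errors.
    rel_err = math.sqrt((base_err / base) ** 2 + (lat_err / lat) ** 2)
    return ratio, ratio * rel_err


if __name__ == "__main__":
    for name, (_, _, acc) in RESULTS.items():
        ratio, err = speedup_vs("float32", name)
        print(f"{name:12s} speedup {ratio:5.2f} ± {err:4.2f}  accuracy {acc:6.2f}%")
```

Listing the ratio next to the accuracy column makes the trade-off in the table easier to scan, for example when looking for the fastest data type that still reports 100% accuracy.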

4.1. Comparing HLS4ML and BM

5. Conclusions

Acknowledgments


| Numerical representation | Prefix | Description |
|---|---|---|
| Binary | 0b | Binary number |
| Decimal | 0d | Decimal number |
| Hexadecimal | 0x | Hexadecimal number |
| Floating point 32 bits | 0f<32> | IEEE 754 single precision floating point number |
| Floating point 16 bits | 0f<16> | IEEE 754 half precision floating point number |
| Linear Quantization | 0lq<s,t> | Linear quantized number with size s and type t |
| FloPoCo | 0flp<e,f> | FloPoCo floating point number with exponent e and mantissa f |
| Fixed-point | 0fps<s,f> | Fixed-point number with s total bits and f fractional bits |
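The prefixes in the table above can be recognised with a small pattern matcher. The sketch below is illustrative only and is not the bmnumbers API: `NumberType` and `classify` are hypothetical names, and it assumes that the value follows the prefix directly, that `s`, `e` and `f` are decimal integers, and that the linear quantization type `t` is a single alphanumeric token. None of these details are given in the table.

```python
import re
from typing import NamedTuple, Tuple


class NumberType(NamedTuple):
    family: str              # which row of the prefix table matched
    params: Tuple[str, ...]  # the parameters inside <...>, kept as strings


# One anchored pattern per table row (hypothetical grammar, see assumptions above).
_PATTERNS = [
    ("flopoco", re.compile(r"0flp<(\d+),(\d+)>")),
    ("fixed", re.compile(r"0fps<(\d+),(\d+)>")),
    ("linear_quantized", re.compile(r"0lq<(\d+),(\w+)>")),
    ("float", re.compile(r"0f<(16|32)>")),
    ("binary", re.compile(r"0b")),
    ("decimal", re.compile(r"0d")),
    ("hexadecimal", re.compile(r"0x")),
]


def classify(literal: str) -> Tuple[NumberType, str]:
    """Split a literal into its type prefix and the unparsed remainder."""
    for family, pattern in _PATTERNS:
        match = pattern.match(literal)  # match() anchors at the start
        if match:
            return NumberType(family, match.groups()), literal[match.end():]
    raise ValueError(f"unrecognised number prefix in {literal!r}")


if __name__ == "__main__":
    for text in ["0x1F", "0f<32>1.5", "0flp<5,11>1.5", "0fps<16,8>1.5"]:
        print(text, "->", classify(text))
```

The remainder is returned unparsed on purpose, since how each representation encodes its value is specific to that representation and is not described by the table.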

| System | Time / inference (s) | Energy / inference (J) |
|---|---|---|
| ARM Cortex A9 | | |
| Intel i7-1260P | | |
| ZedBoard BM | | |

| Data type | LUTs | LUTs % | REGs | REGs % | DSPs | DSPs % | Latency ( ) | Acc % |
|---|---|---|---|---|---|---|---|---|
| fixed<16,6> | 134373 | 10.31 | 136113 | 6.89 | 255 | 2.83 | 0.17 ± 0.01 | 95.11 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2025 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).