1. Introduction

Autonomous vehicles (AVs) are envisioned to become integral to future transportation systems, offering benefits such as improved mobility, reduced emissions, and enhanced safety by minimizing human-related errors [1, 2, 3]. Additionally, AVs offer users the opportunity to engage in non-driving-related tasks (NDRTs) while being transported, thereby transforming the concept of private vehicle driving [4]. To better understand these benefits, it is essential to recognize that each automation level has distinct characteristics and therefore specific advantages. SAE International’s “Taxonomy and Definitions for Terms Related to Driving Automation Systems for On-Road Motor Vehicles” provides a well-known categorization that divides driving automation into six levels (Levels 0 to 5). While Levels 0 to 2 require human drivers to monitor and control the vehicle, Levels 4 and 5 presuppose high and full automation, respectively. Between them, Level 3 refers to conditional automation, often described as semi-autonomous driving, which requires the driver to take control of the vehicle in situations the autonomous system cannot manage [5]. Across these levels, AVs benefit from automation capabilities but also face challenges that could undermine those benefits.

Semi-autonomous vehicles (Level 3) have attracted attention for their potential to allow interaction between drivers and autonomous systems [2, 6, 7]. This level of automation has advantages and disadvantages, making it a contentious design choice. On the positive side, interaction with semi-autonomous vehicles enhances their acceptance, addressing one of the key cognitive barriers to widespread adoption [8, 9, 10, 11]. However, requiring drivers to be ready to take over control conflicts with one of the main benefits of AVs: allowing drivers to engage in NDRTs. Asking users to remain alert throughout the ride in case a critical situation arises negatively impacts their experience, ultimately reducing AV acceptance [8]. The primary issue with such vehicles is often described as the "out-of-the-loop" problem, defined as the absence of the perceptual information and motor processes needed to manage the driving situation [12, 13]. The problem arises when sudden take-over requests (TORs) by the vehicle disrupt drivers’ motor calibration and gaze patterns, ultimately delaying reaction times, deteriorating driving performance, and increasing the likelihood of accidents [12, 13, 14, 15]. Appropriate interface designs that facilitate driver-vehicle interaction and prepare drivers to take over control can mitigate the out-of-the-loop problem.

To ensure a safe and efficient transition from autonomous to manual control, it is crucial to provide drivers with appropriate Human-Machine Interfaces (HMIs) that bring them back into the loop. Inappropriate or delayed responses to TORs frequently lead to accidents that pose considerable harm to drivers, passengers, and other road users. HMIs that provide appropriate warning signals can make this transition faster and more efficient by enhancing situational awareness and performance [1, 3, 16, 17]. For instance, multimodal warning signals allow users to perform NDRTs while ensuring their attention can be quickly recaptured when necessary, thereby preventing dangerous driver behavior [18]. Auditory, visual, and tactile warning signals convey semantic and contextual information about the driving situation that is crucial when regaining control [18, 19, 20]. Taking control of the vehicle involves several information processing stages: perceiving the stimuli (visual, auditory, or tactile), cognitively processing the traffic situation, making a decision, resuming motor readiness, and executing the action [17, 21, 22]. Effective multimodal warning signals therefore play an indispensable role in moderating information processing during take-over by enhancing situational awareness and performance.

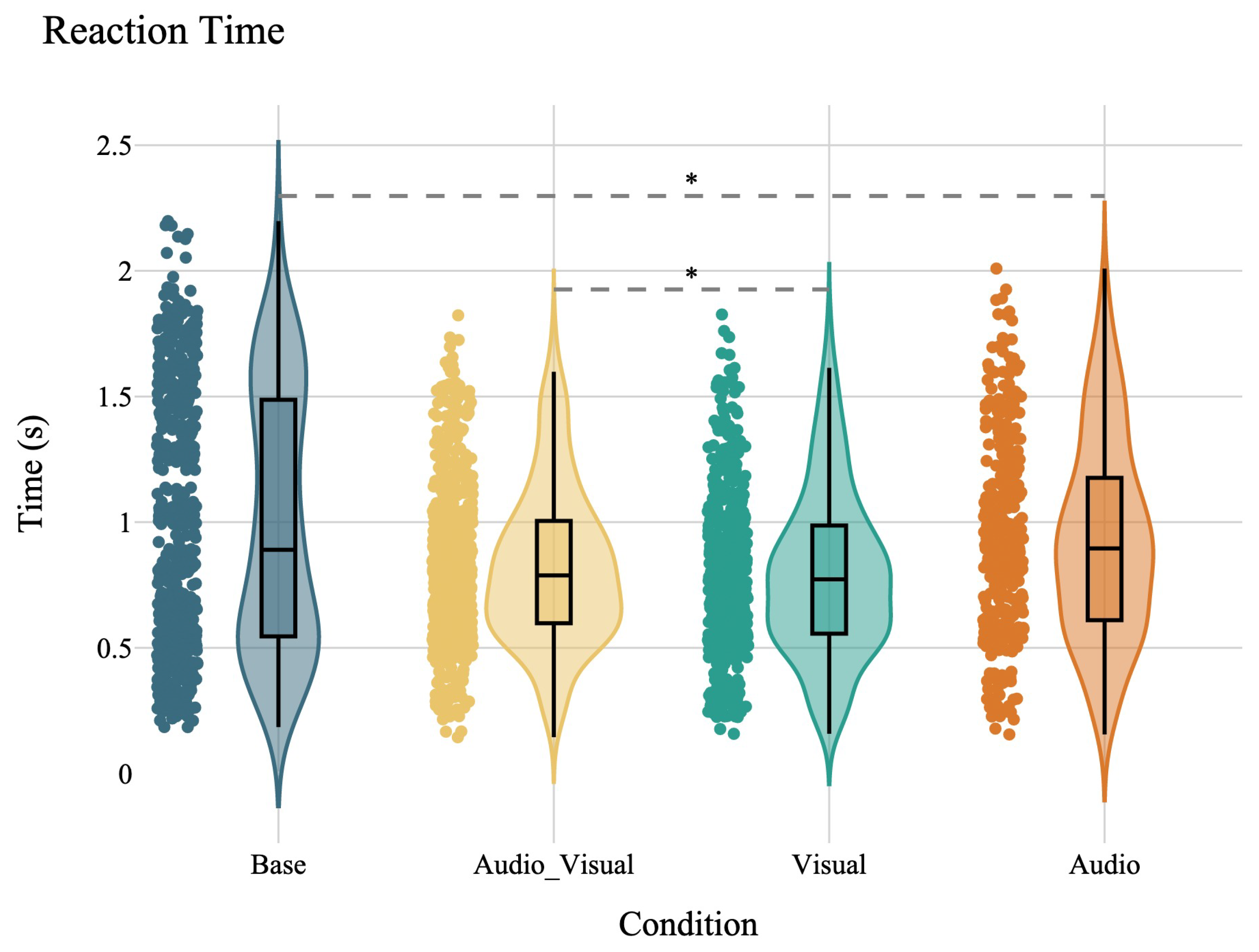

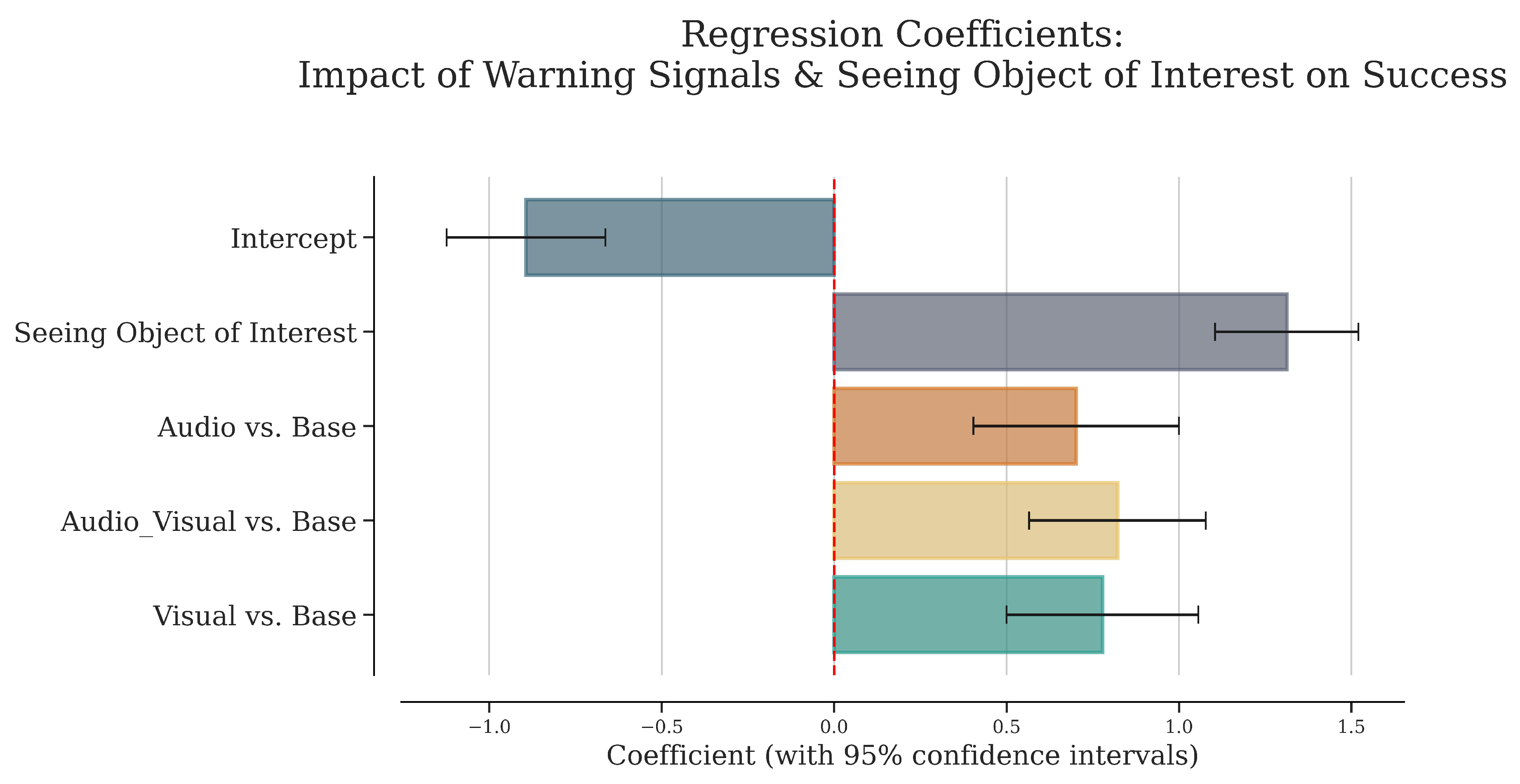

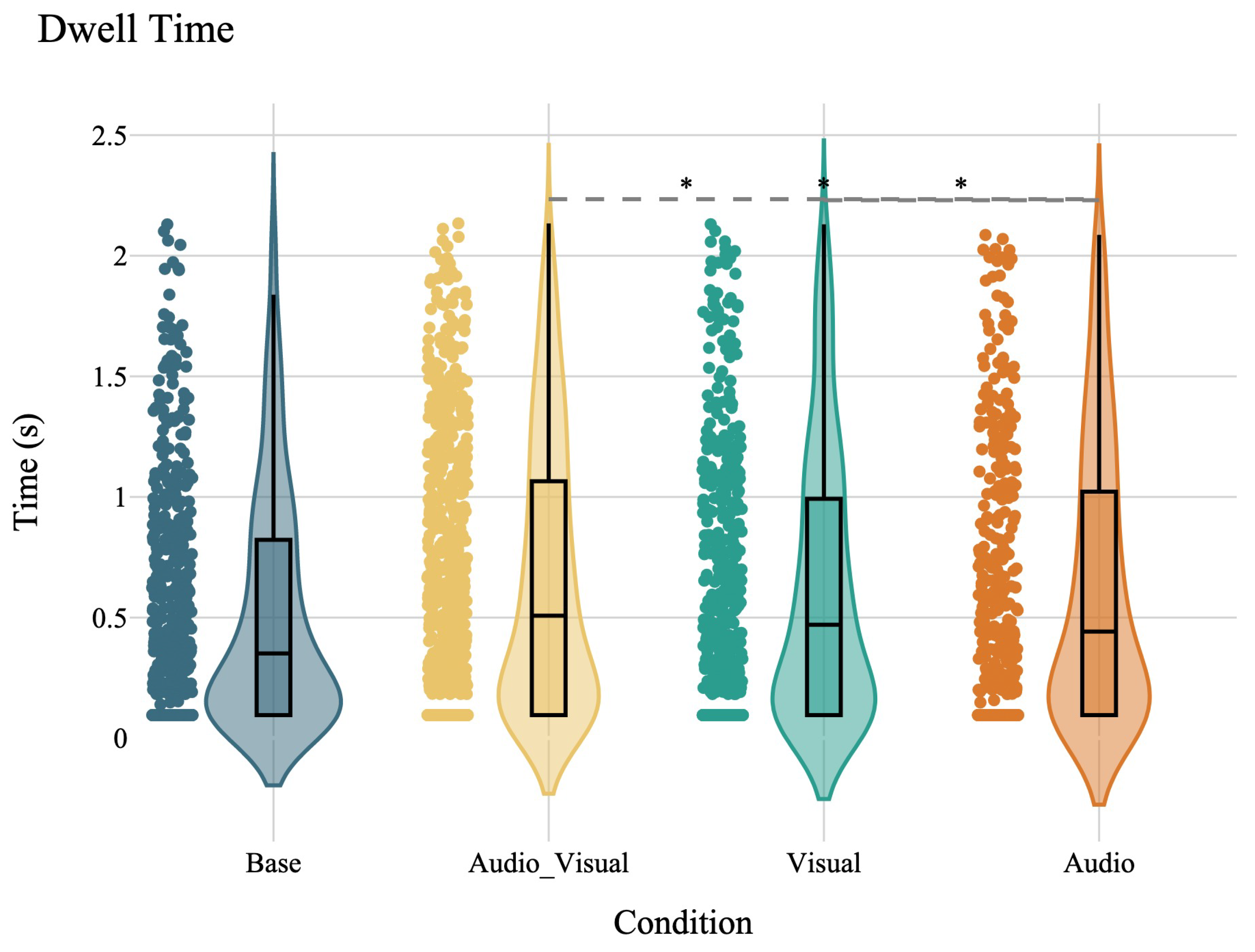

Situational awareness and reaction time are two critical factors within the process of taking over control. Situational awareness is defined as "the perception of elements in the environment within a volume of time and space, the comprehension of their meaning, and the projection of their status in the near future" [

23]. According to this definition, the perception of potential threats and their comprehension constitute the first stages in the information processing stages, where the driver’s attention is captured and the context of the critical situation is understood [

18,

23]. A unimodal or multimodal signal that is able to deliver urgency can already impact in these early stages [

18,

24]. For instance, auditory non verbal sounds are shown to enhance perceived urgency and draw back attention to the driving tasks [

25,

26,

27]. Similarly, visual warnings alone or combined with auditory signals are successful in retrieving attention and increasing awareness of a critical situation [

20,

25,

28]. Besides situational awareness, reaction times are commonly used metrics to evaluate drivers’ behavior during TOR. In general, reaction times encompasses the entire take-over process, from perceiving the stimuli (perception reaction time) to performing the motor action (movement reaction time) [

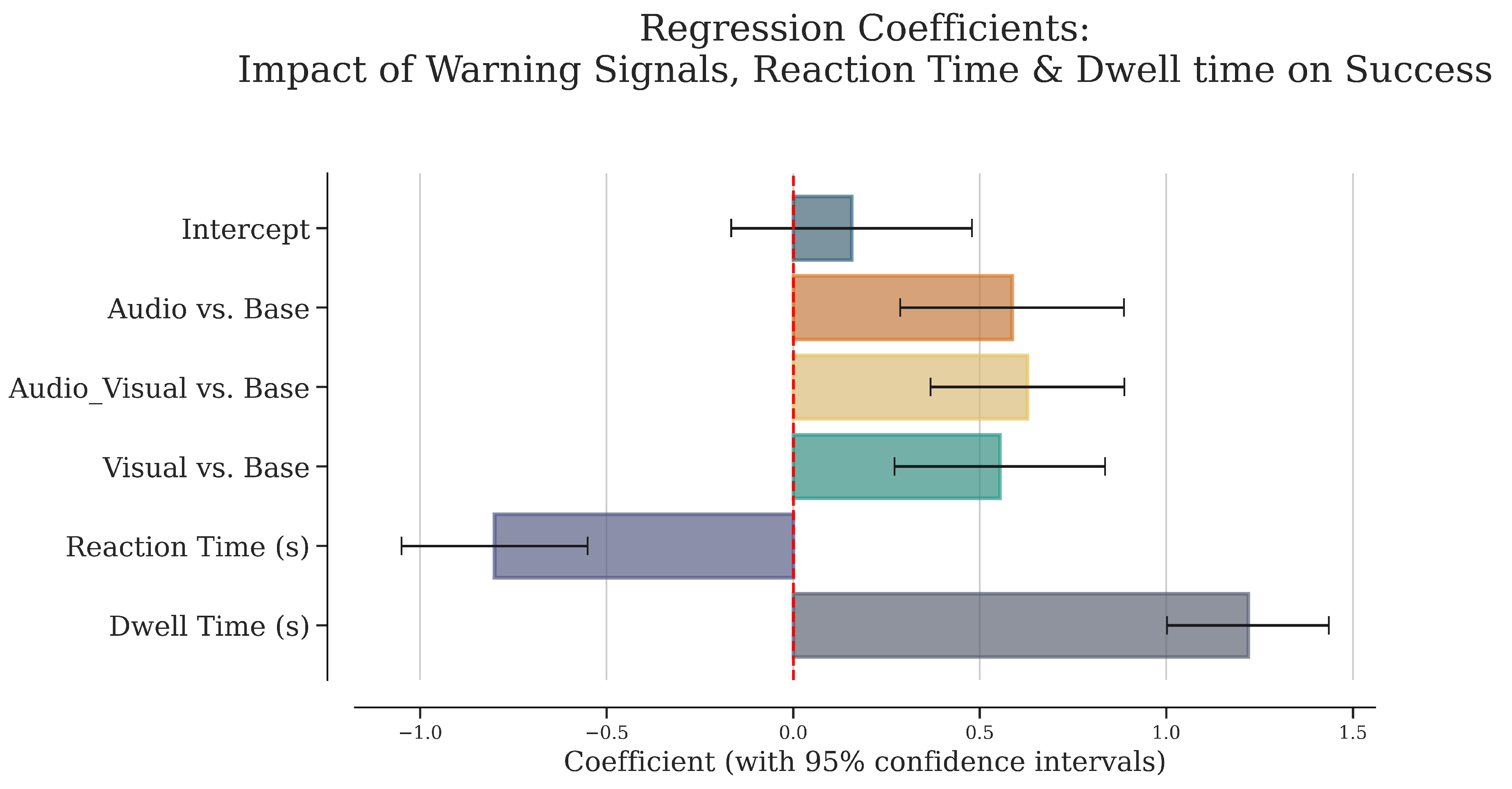

22,

29]. Differentiating between these stages is crucial for calculating the effect of a warning signal on reaction time. Discrete results in reaction time studies have revealed that the method of calculation should be considered precisely [

22]. Perception reaction time is defined as the time required to perceive the stimuli, cognitively process the information, and make a decision. Movement reaction times is the time needed to perform the motor action [

29]. While perception reaction times can be influenced by modality and characteristic of a signal, movement reaction time is more driven by the complexity of the situation and the expected maneuver [

22,

30]. As a result, the visual modality can affect movement reaction times by alerting the driver while simultaneously providing complex spatial information, such as distance and direction [

31]. Understanding the roles of situational awareness and reaction times in the take-over process, and the effects of warning signals on each of them, allows us to determine when and where different types of signals are beneficial or detrimental to ensure a safer transition during TORs.
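The split between perception and movement reaction time described above can be made concrete with a minimal sketch. It assumes that each take-over event is logged with three timestamps, and that the onset of the first motor response marks the end of the perception and decision stage; this operationalization and all names (`TakeOverEvent`, `decompose_reaction_time`, the field names) are illustrative assumptions, not the measurement procedure of any particular study.

```python
from dataclasses import dataclass


@dataclass
class TakeOverEvent:
    """Timestamps in seconds for one take-over request (hypothetical fields)."""
    tor_onset: float        # moment the warning signal is issued
    movement_onset: float   # first sign of a motor response (assumed boundary)
    action_complete: float  # moment the steering or braking action is carried out


def decompose_reaction_time(event: TakeOverEvent) -> dict[str, float]:
    """Split total reaction time into perception and movement components."""
    if not (event.tor_onset <= event.movement_onset <= event.action_complete):
        raise ValueError("timestamps must satisfy onset <= movement <= action")
    # Perception RT: perceiving the signal, processing it, and deciding.
    perception_rt = event.movement_onset - event.tor_onset
    # Movement RT: carrying out the motor action once it has started.
    movement_rt = event.action_complete - event.movement_onset
    return {
        "perception_rt": perception_rt,
        "movement_rt": movement_rt,
        "total_rt": perception_rt + movement_rt,
    }
```

Reporting the two components separately, rather than only their sum, is what makes it possible to attribute an effect of signal modality to one stage or the other.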

In addition to safe transitions that facilitate the integration of AVs into everyday life, acceptance factors play a crucial role in determining the feasibility of such integration. Increasing user acceptance can be effectively achieved through collaborative control and shared decision-making strategies, such as TORs [

7,

8,

32]. However, providing TORs in semi-autonomous vehicles has a dual effect on their acceptance. While such requests can increase trust through enhanced interaction, they also induce anxiety during the take-over process [

8,

33]. Therefore, measuring perceived feelings of anxiety and trust is essential when studying conditional AVs. Subjective measures, like acceptance questionnaires, have been used to determine acceptance, with recent examples specifically designed for AVs. The Autonomous Vehicle Acceptance Model (AVAM) provides a multi-dimensional approach in quantifying acceptance aspects across different levels of automation [

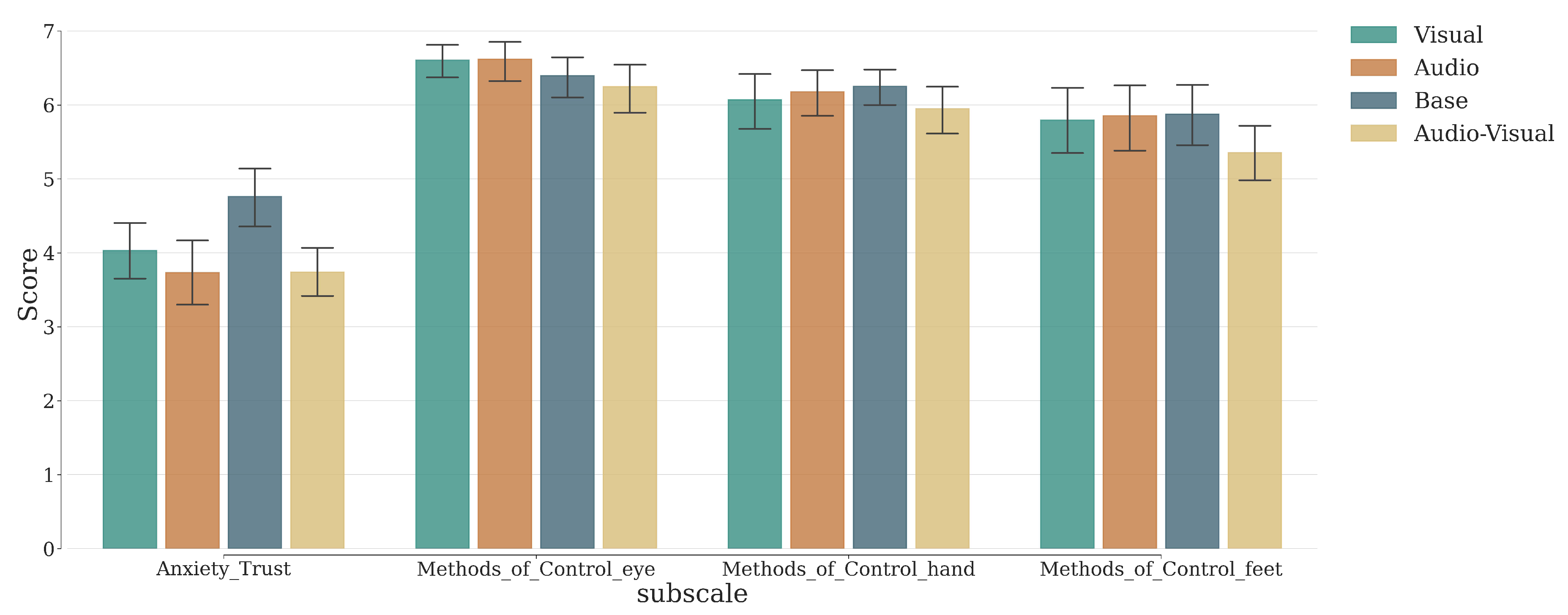

34].

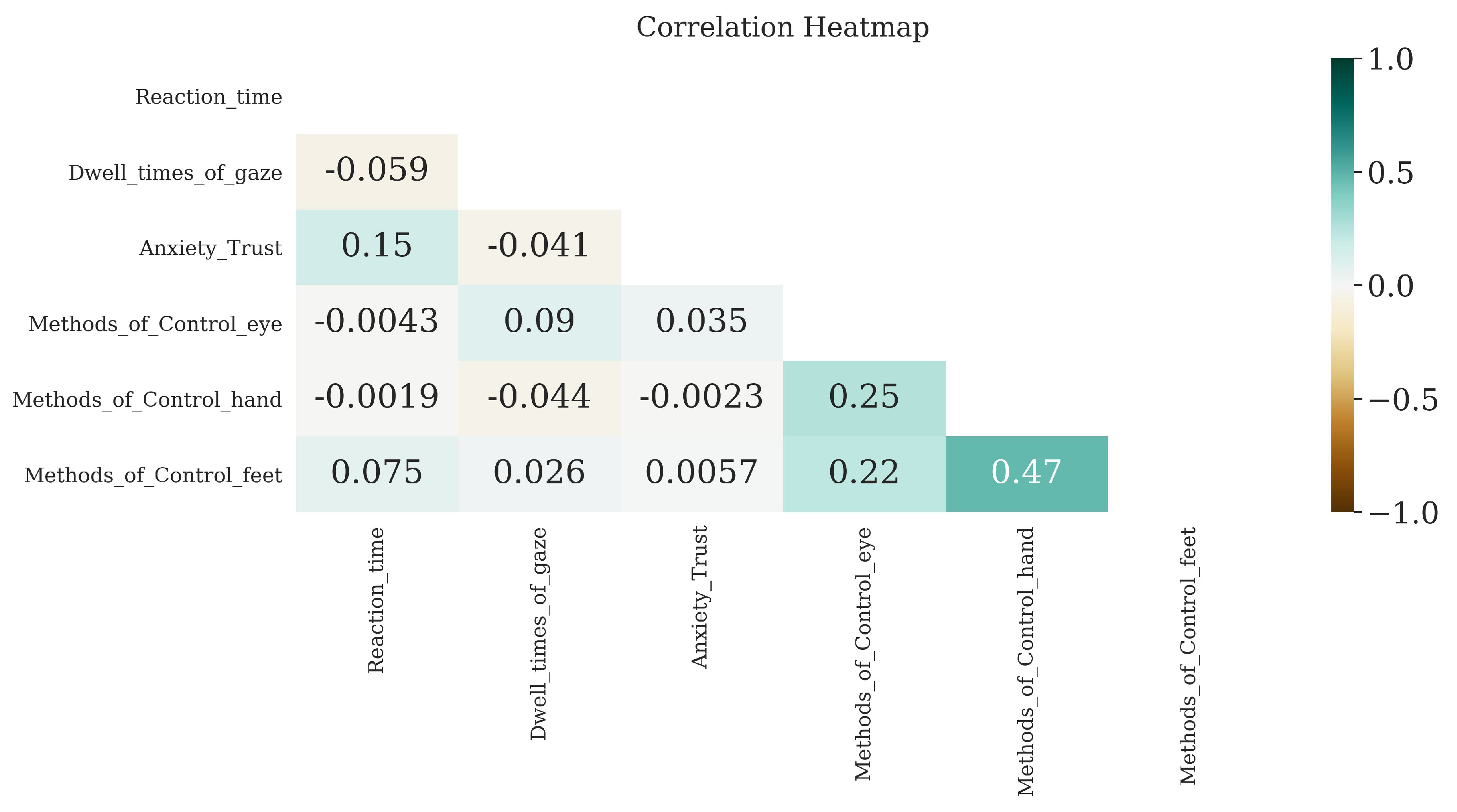

The methods used to measure drivers’ situational awareness and reaction times during TORs are as crucial as the definitions of these constructs. Differences in measuring methods lead to incommensurable results that make reproducibility difficult [35, 36]. For instance, studies have used different metrics to capture drivers’ situational awareness and reaction time, including gaze-related reaction time, gaze-on-road duration, hands-on-wheel reaction time, actual intervention reaction time, and left-right car movement time [17, 35, 37, 38]. Consequently, most research on driver behavior during critical events has produced mixed results. Additionally, because of the dangerous nature of these situations, most studies have been conducted in risk-free simulated environments [39, 40], usually laboratory-based setups that are costly, space-consuming, and lacking in realism. As a result, research has questioned the extent to which findings under such conditions generalize to the real world [41]. One of the main reasons is that participants in conventional driving simulations remain conscious of their surroundings, which reduces the perceived realism of the driving scenario. For results to be generalizable to the real world, it is particularly important to simulate driving conditions that elicit natural driving behavior, including realistic ambience, increased workload, prolonged driving, meaningful signals, various behavioral measures, and a diverse population sample [41, 42]. To mitigate these limitations, virtual reality (VR) has been proposed as an inexpensive and compact solution that provides a stronger sense of immersion [43, 44, 45, 46]. Unlike conventional driving simulations, VR offers a more immersive driving experience, allowing researchers to isolate drivers and fully engage them in critical situations. By enhancing perceived realism, VR makes simulated events feel more real [47, 48], resulting in driver behavior that is closer to real life and findings that are more ecologically valid than those obtained through conventional driving simulations [49, 50]. In addition, VR-based driving simulations offer other advantages over traditional systems, including integrated eye-tracking to collect visual metrics for assessing situational awareness [38, 51], controllability and repeatability [52], and usability for education and safety training [53, 54, 55]. Hence, VR holds the potential to investigate drivers’ behavior in a safe, efficient, and reproducible manner, which is of particular interest when designing multimodal interfaces for future autonomous transport systems.

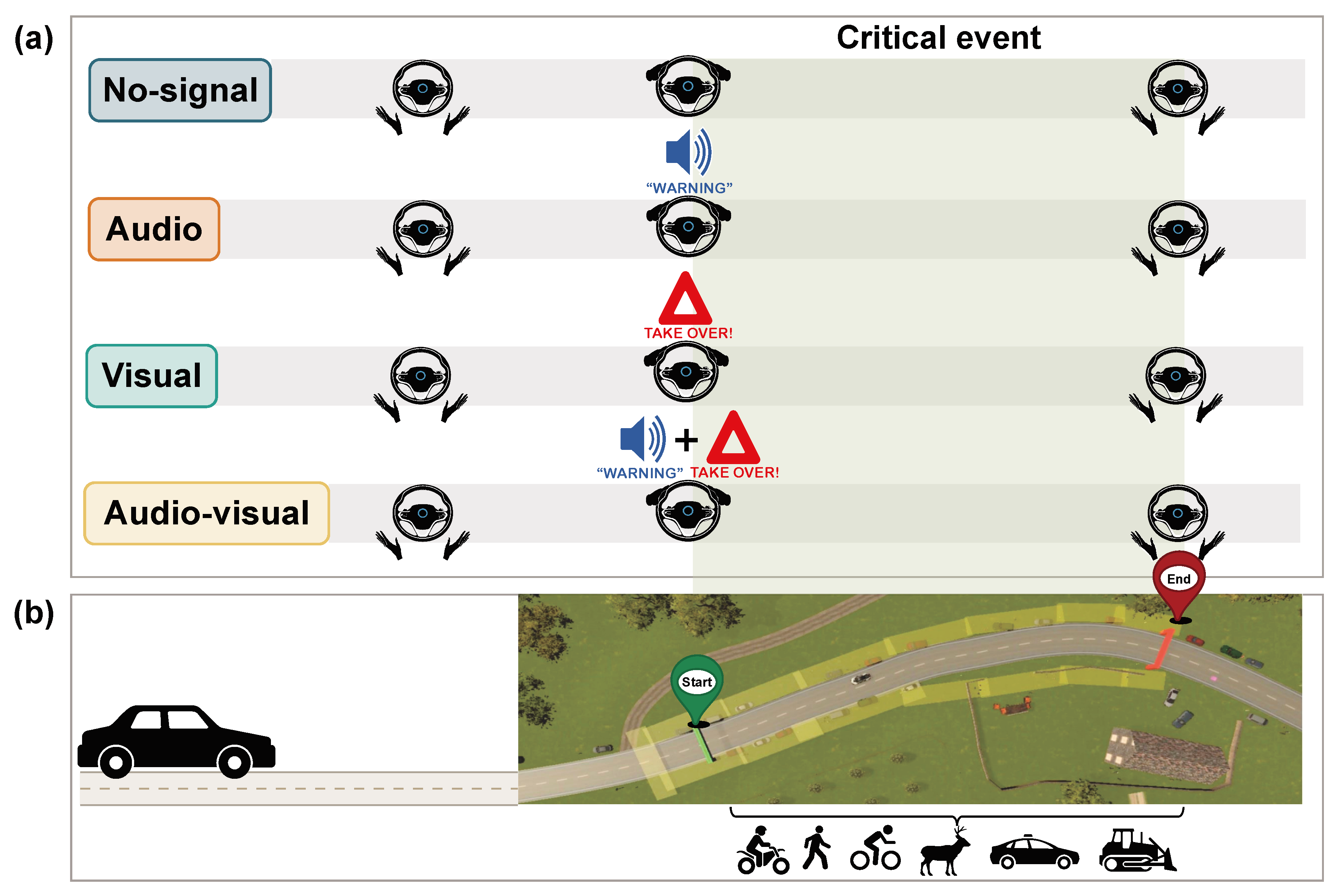

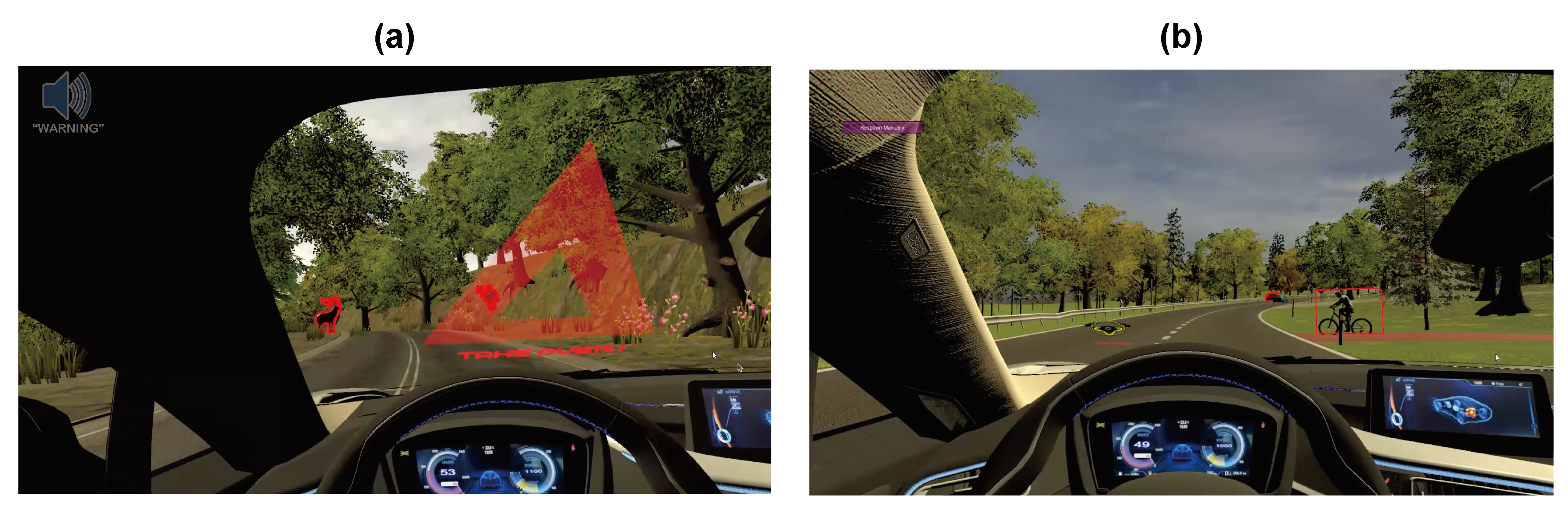

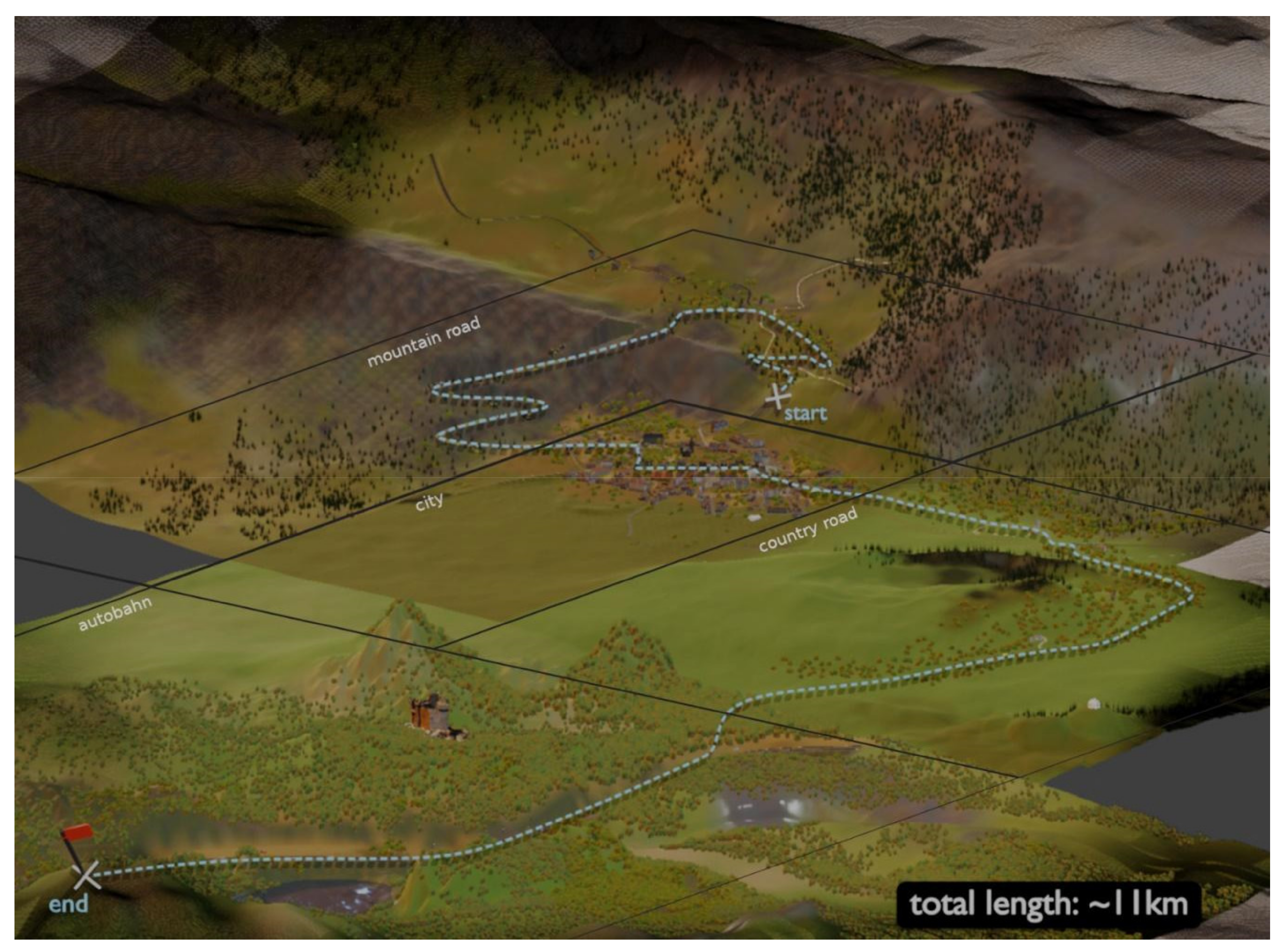

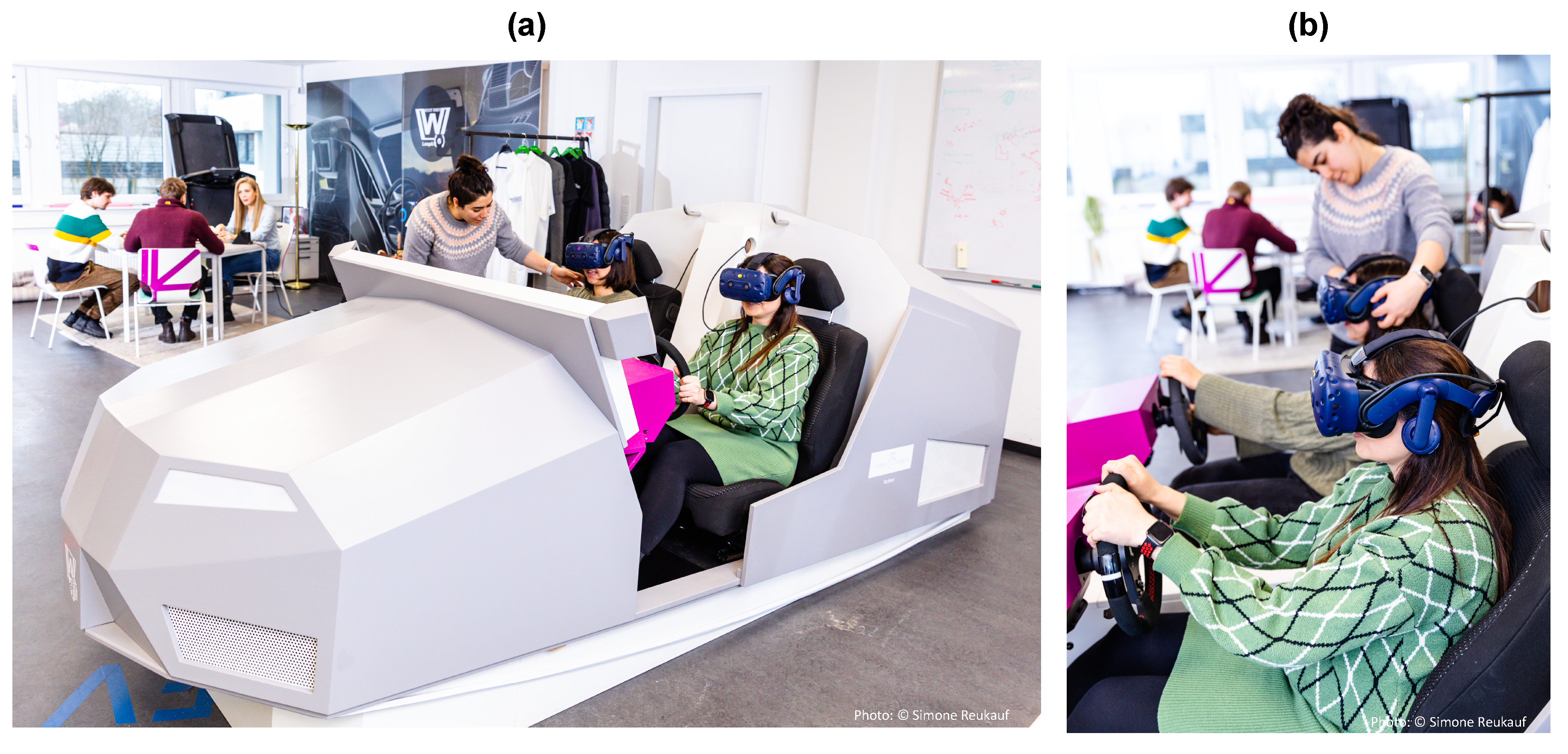

In this study, we used VR to investigate the impact of signal modalities (audio, visual, and audio-visual) on situational awareness and reaction time during TORs in semi-autonomous vehicles. We collected quantitative data on both objective behavioral metrics (visual and motor behavior) and subjective experience (AVAM questionnaire) from a large and diverse population sample, encompassing varying ages and levels of driving experience. We hypothesized that the presence of any warning signal (audio, visual, or audio-visual) would lead to a higher success rate compared to a no-warning condition. Specifically, we posited that the audio warning signal would enhance awareness of the critical driving situation, while the visual signal would lead to a faster motor response. Furthermore, we predicted that the audio-visual modality, by combining the benefits of both signals, would result in the fastest reaction times and the highest level of situational awareness. Additionally, we expected that seeing the object of interest, regardless of the warning modality, would directly improve the success rate. Finally, we expected a positive correlation between self-reported preferred behavior and actual behavior during critical events, and we hypothesized that the presence of warning signals would be associated with increased perceived trust and decreased anxiety in drivers. By examining drivers’ behavior under these conditions, we can gain a better understanding of the effect of signal modalities on performance.
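As a rough illustration of how the first hypothesis could be examined, the sketch below computes the success rate per warning condition from a list of trial outcomes. The data layout (pairs of condition label and success flag), the condition labels, and the function name `success_rate_by_condition` are assumptions made for this example; the sketch is not the analysis pipeline used in the study and involves no inferential statistics.

```python
from collections import defaultdict


def success_rate_by_condition(trials):
    """Return the fraction of successful take-overs per warning condition.

    `trials` is an iterable of (condition, succeeded) pairs; this layout
    and the condition labels are illustrative assumptions.
    """
    counts = defaultdict(lambda: [0, 0])  # condition -> [successes, total]
    for condition, succeeded in trials:
        counts[condition][0] += int(succeeded)
        counts[condition][1] += 1
    return {cond: s / n for cond, (s, n) in counts.items()}


# Placeholder outcomes for demonstration only, not experimental data.
example_trials = [
    ("no_warning", False), ("no_warning", True),
    ("audio", True), ("audio", True),
    ("visual", True), ("visual", False),
    ("audio_visual", True), ("audio_visual", True),
]
rates = success_rate_by_condition(example_trials)
```

Comparing each warning condition against the `no_warning` baseline in such a table is the descriptive first step; a formal test of the hypothesis would require an appropriate statistical model on top of it.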