Submitted: 13 June 2024

Posted: 20 June 2024


Abstract

Keywords:

Introduction

Motivation

Problem Statement

- Dynamic obstacle avoidance: Develop an algorithm that handles obstacles in real time, allowing the robot to navigate safely and efficiently.

- Object recognition: Identify objects that appear in front of the RGB camera, either on the way to the goal or after the goal point is reached.

- Low power consumption and cost-effectiveness: Develop a system that uses low-power, cost-effective hardware components.

Objectives

- Study of obstacle avoidance using Gazebo simulation

- Study of the kinematic model using MATLAB simulation

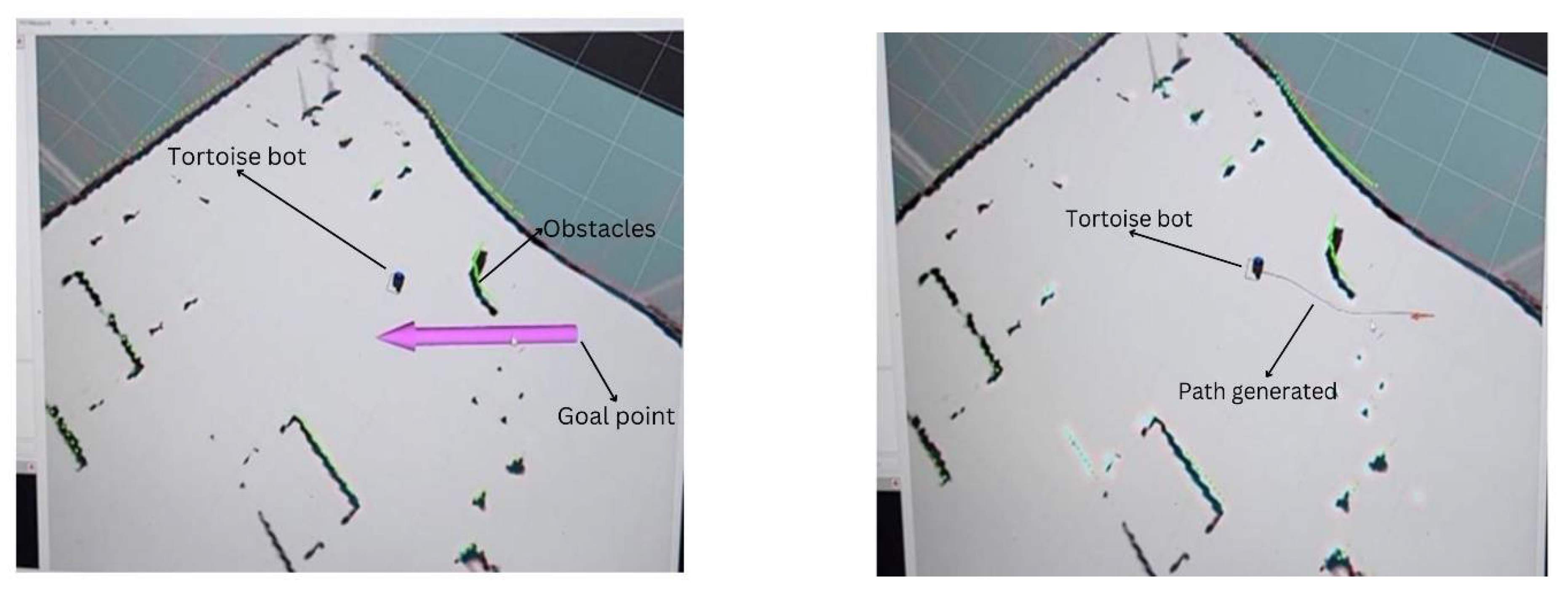

- Implementation of online navigation and path planning (using the DWA algorithm) for the Tortoise Bot (AMR) using ROS

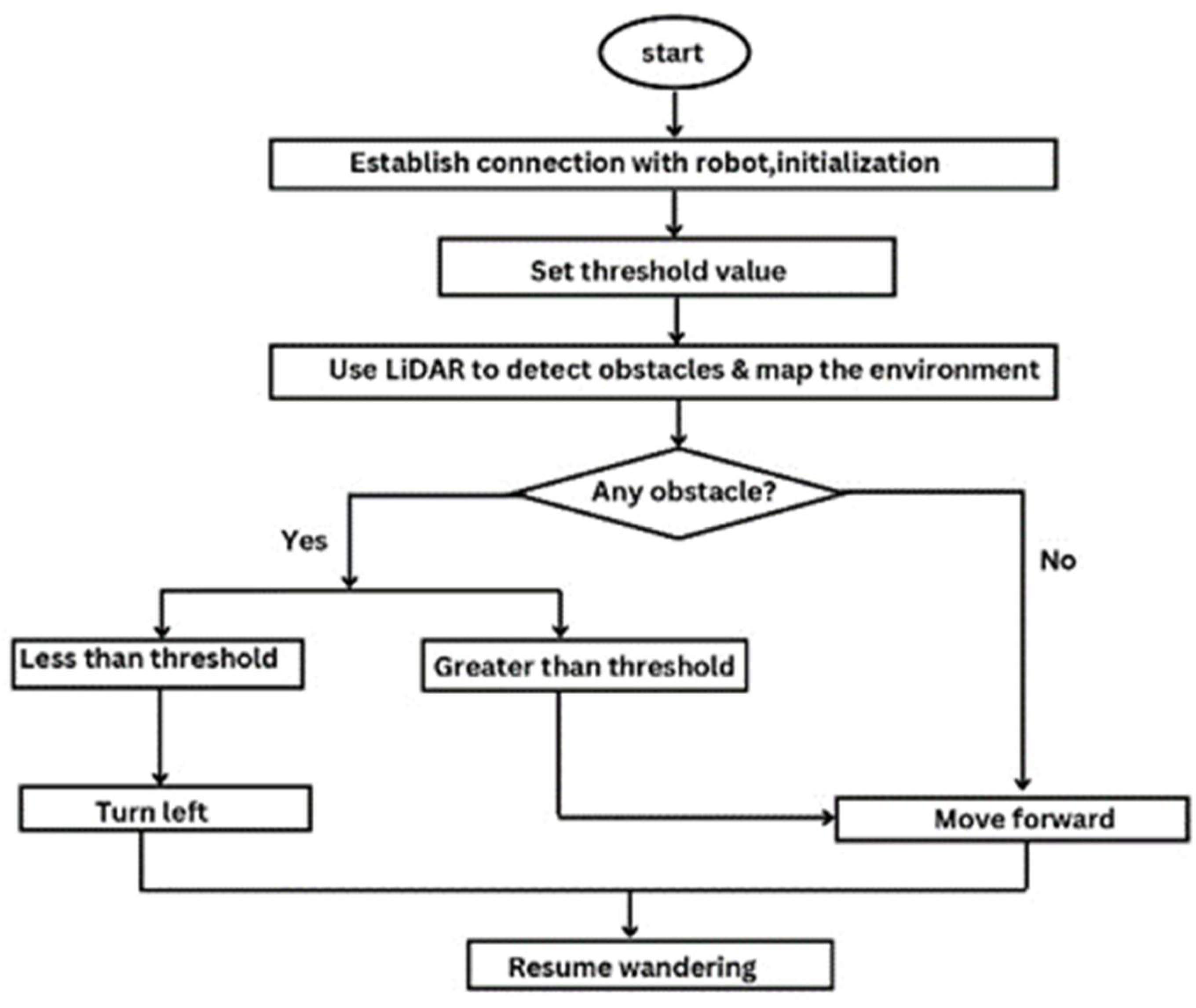

- Wander algorithm along with obstacle avoidance

- Object Recognition

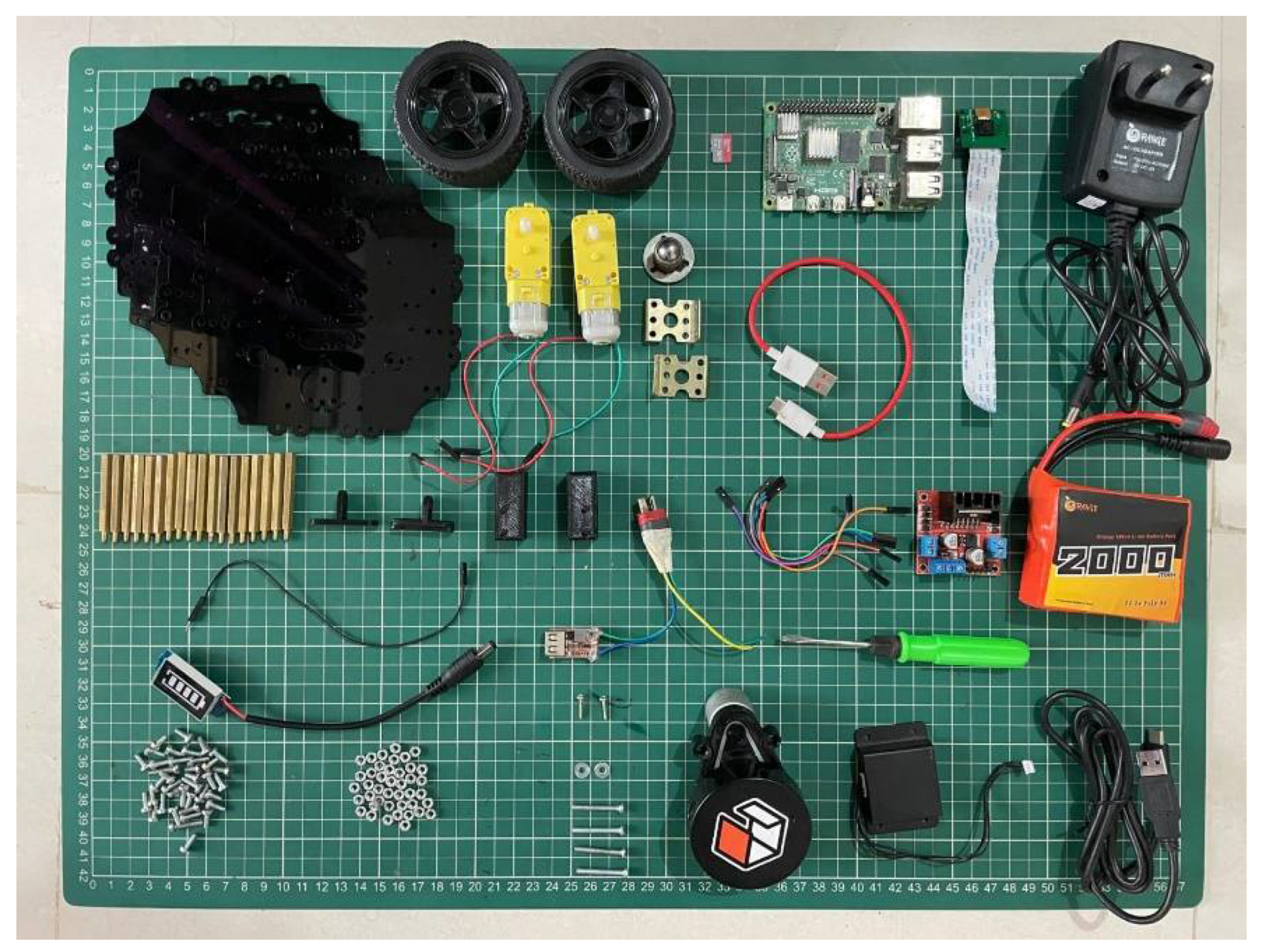

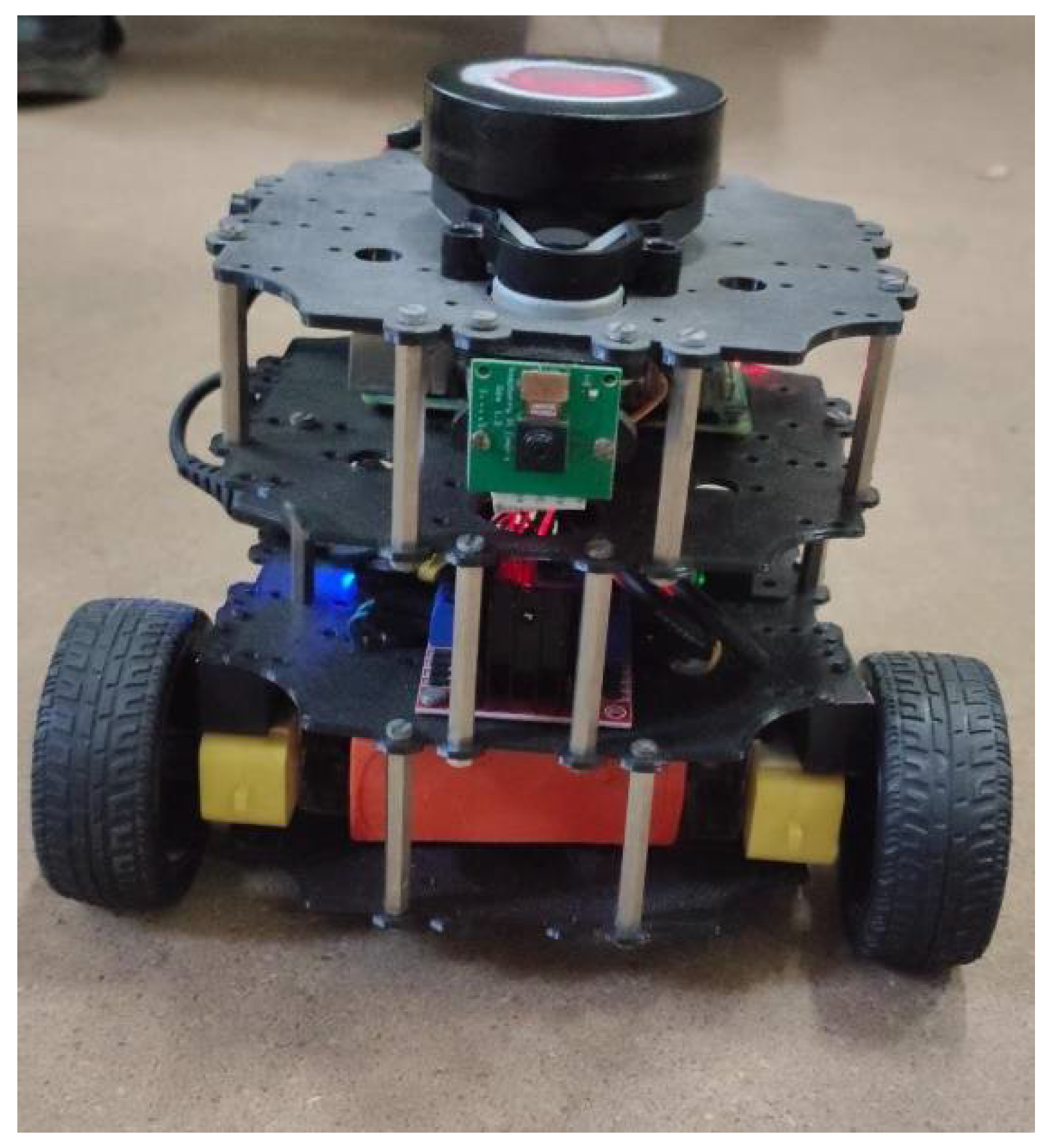

Methodology and Hardware Details

- Obstacle Detection and Avoidance: LiDAR sensors can be used to create a detailed 3D map of the robot's surroundings. This map can be used to identify obstacles in the environment, allowing the robot to plan its movements and avoid collisions.

- Navigation: LiDAR data can assist in navigation tasks. By continuously scanning the surroundings, the robot can localize itself and navigate through complex environments.

- Mapping: LiDAR can be used to create detailed maps of indoor or outdoor spaces. This is particularly useful for tasks such as exploration, surveillance, or search and rescue.

- Object Recognition: LiDAR data can contribute to object recognition and classification, allowing the robot to identify and interact with specific objects in its environment.

- Autonomous Operation: In combination with other sensors and algorithms, LiDAR can contribute to autonomous robot behaviour. For example, a robot equipped with LiDAR can navigate a room, avoiding obstacles and reaching its destination autonomously.

- Simultaneous Localization and Mapping (SLAM): LiDAR is often used in SLAM algorithms, where the robot builds a map of its environment while simultaneously determining its own location within that map.

- Visual Sensing: The RGB camera serves as the eyes of the Tortoise Bot, allowing it to perceive and interpret the surrounding environment in colour. This visual input can be crucial for tasks such as object recognition, pathfinding, and navigation.

- Colour-Based Object Detection: Using the colour information from the RGB camera, the Tortoise Bot can identify and differentiate objects based on their colours. This is useful when the bot needs to interact with or avoid specific objects based on their visual characteristics.

- Environment Mapping: The RGB camera enables the Tortoise Bot to capture images of its surroundings, facilitating the creation of a visual map. This map can be used for navigation, helping the bot plan its movements and avoid obstacles more precisely.

- Line Following: For line-following tasks, an RGB camera can detect colour variations on the surface, allowing the Tortoise Bot to follow a designated path accurately. This is particularly relevant in controlled environments or on specialized tracks.

- Visual Feedback for Users: Integrating the RGB camera into the Tortoise Bot's user interface provides real-time visual feedback. The camera feed can be displayed on a monitor or interface, allowing users to see what the bot "sees" and helping them monitor the task.

- Adaptive Lighting Conditions: RGB cameras can adjust to different lighting conditions, giving the Tortoise Bot flexibility to operate in various environments, both indoors and outdoors.
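As an illustration of the LiDAR obstacle-detection role listed above, the following is a minimal sketch of a front-sector proximity check on a single planar scan. It is not the implementation used on the Tortoise Bot: the function names, the 30° sector half-width, the 3.5 m maximum range, and the 0.4 m safety distance are all assumed values chosen for the example. The argument names `ranges`, `angle_min`, and `angle_increment` loosely follow the fields of the ROS `LaserScan` message.

```python
import math


def nearest_obstacle(ranges, angle_min, angle_increment,
                     sector_half_width=math.radians(30), max_range=3.5):
    """Return (distance, bearing) of the closest valid return in the front sector.

    Returns None when no valid return lies inside the sector.
    All default values are illustrative assumptions.
    """
    best = None
    for i, r in enumerate(ranges):
        # Skip dropouts (inf/nan), non-positive values and out-of-range readings.
        if not math.isfinite(r) or r <= 0.0 or r > max_range:
            continue
        bearing = angle_min + i * angle_increment
        # Wrap the bearing so that 0 rad means "straight ahead".
        bearing = (bearing + math.pi) % (2 * math.pi) - math.pi
        if abs(bearing) > sector_half_width:
            continue
        if best is None or r < best[0]:
            best = (r, bearing)
    return best


def should_stop(ranges, angle_min, angle_increment, safety_distance=0.4):
    """True when something in the front sector is closer than the safety distance."""
    hit = nearest_obstacle(ranges, angle_min, angle_increment)
    return hit is not None and hit[0] < safety_distance


# Hypothetical scan: 360 beams at 1 degree spacing, everything 2 m away,
# except one close return 10 degrees to the left and one directly behind.
scan = [2.0] * 360
scan[10] = 0.3
scan[180] = 0.1
front_hit = nearest_obstacle(scan, 0.0, math.radians(1))
stop = should_stop(scan, 0.0, math.radians(1))
```

Restricting the check to a front sector means the return directly behind the robot is ignored, so only obstacles in the direction of travel trigger a stop.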

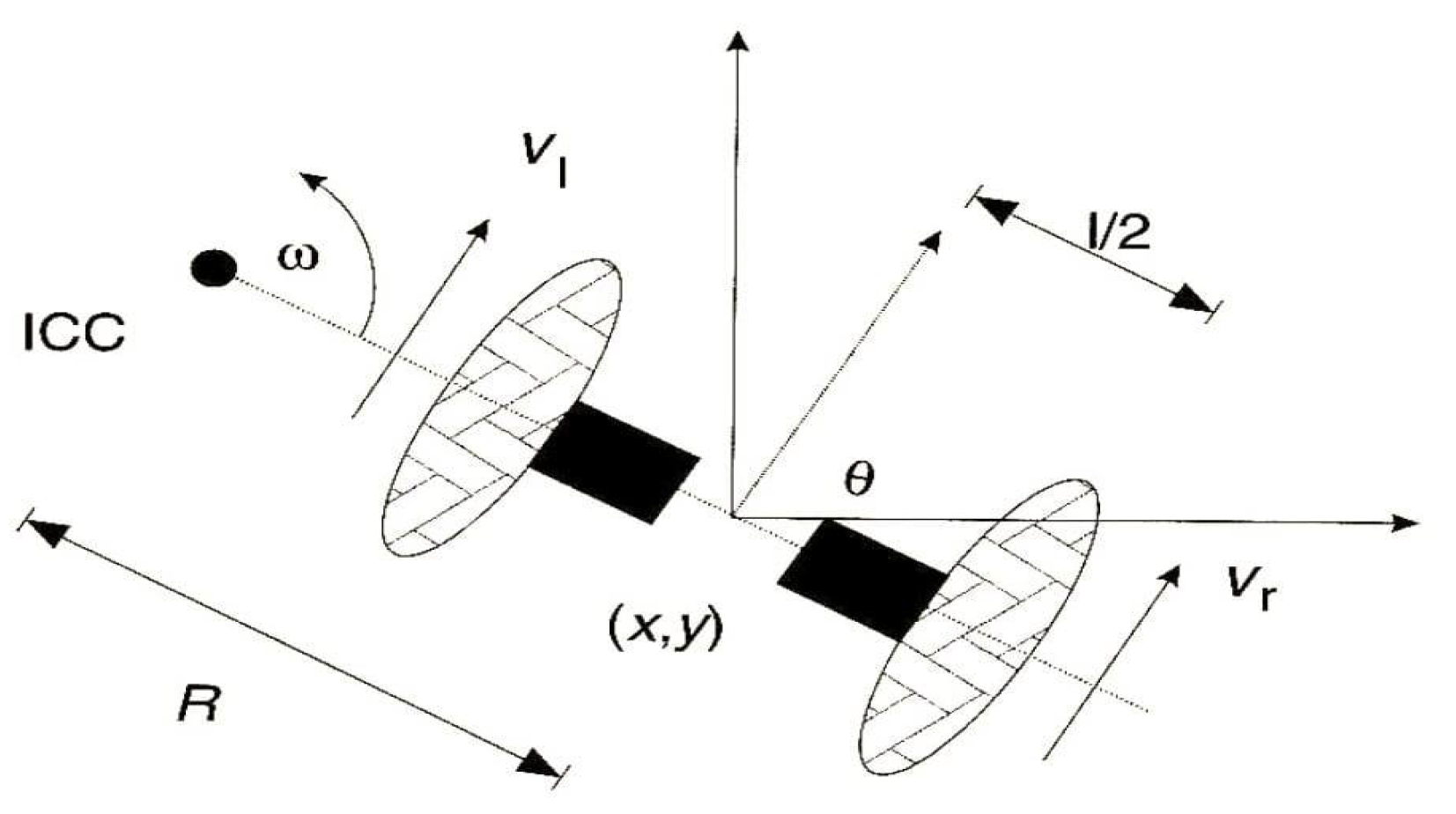

Methodology

- Set initial position

- Set left and right wheel velocities

- If required, introduce noise into the velocity commands using the rand function, for more realistic behaviour

- Obtain the trajectory, position and orientation graphs
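The steps above can be sketched as a short simulation. This is an illustrative Python version rather than the MATLAB model used in the study, and it rests on several assumptions: a standard differential-drive kinematic model, forward Euler integration, zero-mean uniform noise on each wheel command, and hypothetical values for the wheel base (0.2 m), time step (0.05 s), and number of steps (400).

```python
import math
import random


def simulate(v_left, v_right, wheel_base=0.2, dt=0.05, steps=400,
             noise=0.0, start=(0.0, 0.0, 0.0), seed=0):
    """Integrate differential-drive kinematics with forward Euler steps.

    Returns a list of (x, y, theta) poses, beginning with the initial pose.
    wheel_base, dt, steps and the noise model are assumed values.
    """
    rng = random.Random(seed)
    x, y, theta = start                      # step 1: set initial position
    poses = [(x, y, theta)]
    for _ in range(steps):
        # Step 3: perturb each wheel command with zero-mean uniform noise.
        vl = v_left + noise * (rng.random() - 0.5)
        vr = v_right + noise * (rng.random() - 0.5)
        v = (vr + vl) / 2.0                  # forward speed of the chassis centre
        omega = (vr - vl) / wheel_base       # yaw rate
        x += v * math.cos(theta) * dt
        y += v * math.sin(theta) * dt
        theta += omega * dt
        poses.append((x, y, theta))
    return poses


# Step 2: choose left and right wheel velocities (m/s) for three cases.
straight = simulate(0.3, 0.3)                # equal, constant velocities
circle = simulate(0.2, 0.3)                  # unequal, constant velocities
noisy = simulate(0.3, 0.3, noise=0.1)        # equal, noisy velocities
```

Each returned list holds the data needed for step 4: plotting `x` against `y` gives the trajectory, and plotting each component against the step index gives the position and orientation graphs.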

Optimization of Objective Function

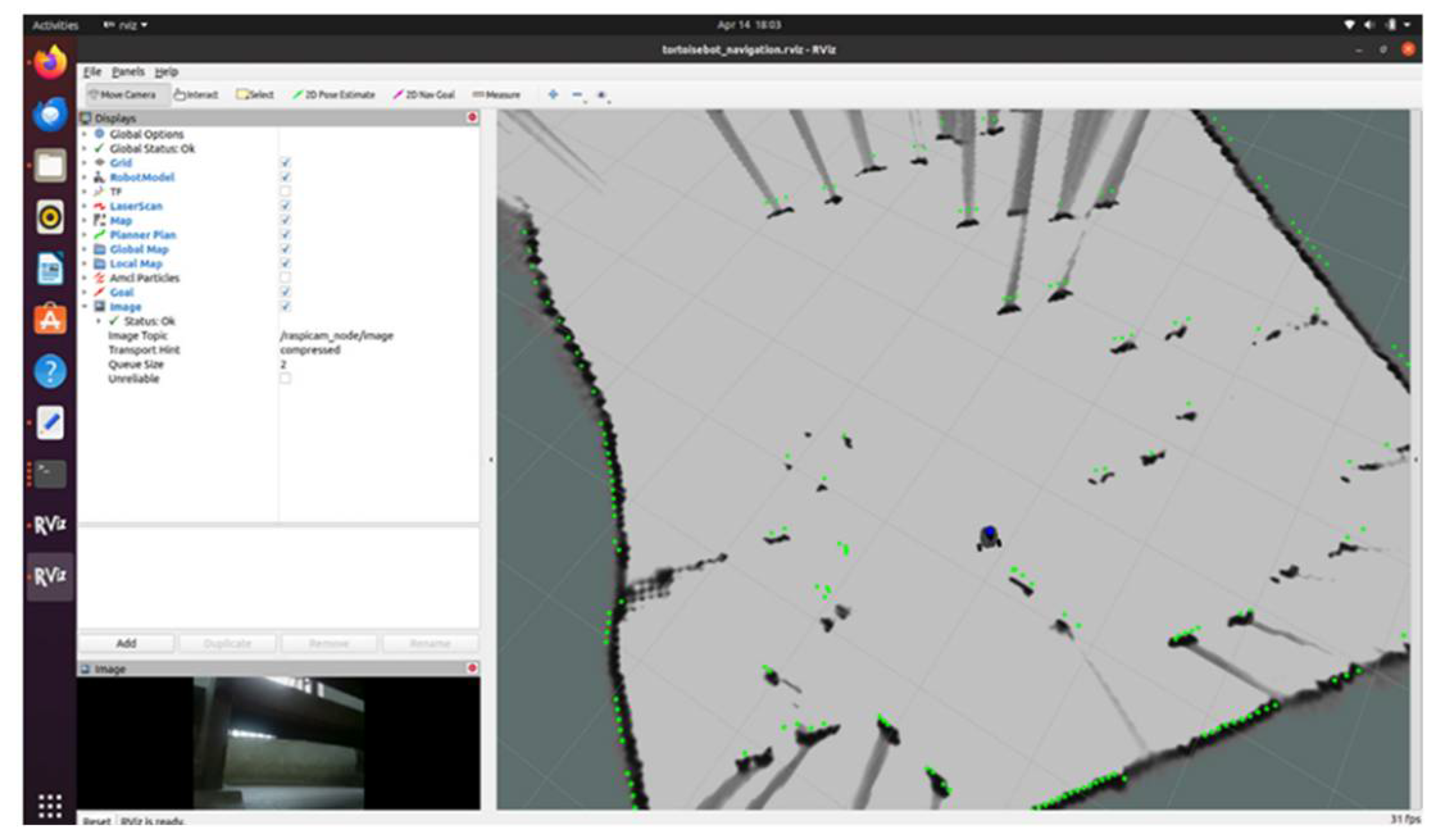

LiDAR: Data Transmission and Reception

Teleoperation

Map Generation Using SLAM

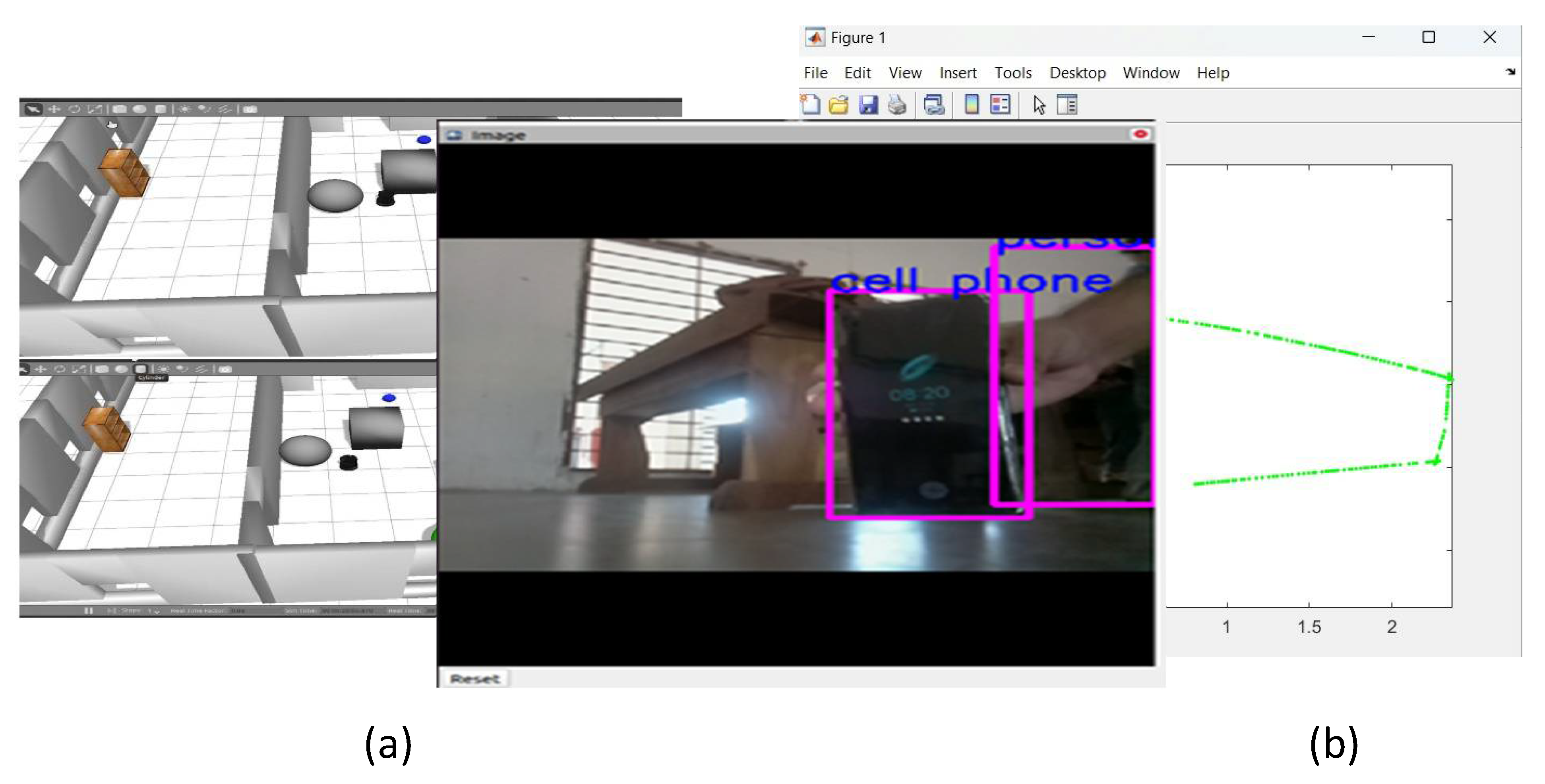

- Input Acquisition: The robot collects visual data using onboard cameras or sensors, capturing images or video frames of its surroundings.

- Preprocessing: The acquired images may undergo preprocessing steps such as resizing, normalization, or augmentation to improve their quality and suitability for analysis.

- YOLO Algorithm Implementation: YOLO is employed as a deep-learning-based object recognition algorithm. It divides the input image into a grid and simultaneously predicts bounding boxes and class probabilities for each grid cell.

- Object Recognition: YOLO processes the image and identifies regions containing objects, drawing bounding boxes around them and assigning a class label to each detected object. YOLO is known for its real-time performance and accuracy in detecting multiple objects within a single frame.

- Object Classification: Once objects are detected, the robot can classify them into predefined categories such as "person", "phone", "chair", etc. This classification enables the robot to understand the identity of the objects it perceives.

- Feedback and Iteration: The robot continually refines its object recognition capabilities through feedback mechanisms, such as learning from misclassifications or updating its object database over time. Object recognition empowers the robot to interpret its environment, navigate safely, and perform tasks effectively in diverse real-world scenarios.
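To make the grid-based prediction step in the pipeline above more concrete, here is a minimal sketch of two small pieces of that idea: mapping a box centre to the grid cell responsible for it, and turning one cell's objectness and class probabilities into a label. It follows the original YOLO formulation in spirit only. The function names, the 7×7 grid, the label list, and the 0.5 score threshold are assumptions made for the example, and no trained network is involved.

```python
def responsible_cell(cx, cy, grid_size):
    """Map a normalised box centre (0..1) to its grid cell.

    Returns (row, col, x_offset, y_offset), where the offsets give the
    centre's position inside the cell in units of one cell.
    """
    col = min(int(cx * grid_size), grid_size - 1)
    row = min(int(cy * grid_size), grid_size - 1)
    return row, col, cx * grid_size - col, cy * grid_size - row


def best_class(objectness, class_probs, labels, threshold=0.5):
    """Pick the most likely label for one predicted box.

    The score is objectness multiplied by the class probability.
    Returns (label, score), or None if the best score is below the threshold.
    """
    scores = [objectness * p for p in class_probs]
    idx = max(range(len(scores)), key=scores.__getitem__)
    if scores[idx] < threshold:
        return None
    return labels[idx], scores[idx]


# Hypothetical values for one predicted box on a 7x7 grid.
labels = ["person", "phone", "chair"]
cell = responsible_cell(0.5, 0.25, 7)
detection = best_class(0.9, [0.1, 0.8, 0.1], labels)
rejected = best_class(0.3, [0.1, 0.8, 0.1], labels)
```

Because every cell produces its predictions in the same forward pass, the whole image is processed at once, which is what makes this family of detectors suitable for real-time use on a robot.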

Results

| No | Case | Output |
|----|------|--------|
| 1 | Equal, constant wheel velocities | Straight line |
| 2 | Unequal, constant wheel velocities | Circle |
| 3 | Equal, noisy wheel velocities | Distorted straight line |
| 4 | Unequal, noisy wheel velocities | Multiple circles |
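The four outcomes in the table are consistent with the standard differential-drive kinematic model, sketched below. The symbols are assumptions introduced here for illustration: $v_l$ and $v_r$ are the left and right wheel velocities, $L$ is the distance between the wheels, $(x, y, \theta)$ is the robot pose, and $R$ is the turning radius.

```latex
\begin{aligned}
v &= \frac{v_r + v_l}{2}, \qquad \omega = \frac{v_r - v_l}{L},\\
\dot{x} &= v\cos\theta, \qquad \dot{y} = v\sin\theta, \qquad \dot{\theta} = \omega,\\
R &= \frac{v}{\omega} = \frac{L}{2}\,\frac{v_r + v_l}{v_r - v_l} \quad (v_r \neq v_l).
\end{aligned}
```

With equal, constant velocities, $\omega = 0$ and the heading never changes, so the path is a straight line. With unequal, constant velocities, $\omega$ and $R$ are both constant, so the robot traces a circle. Adding noise makes $\omega$ fluctuate from step to step: in the equal case the heading drifts and the line is distorted, and in the unequal case the turning radius keeps changing, so successive loops do not close on each other and appear as multiple circles.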

Conclusion


Disclaimer/Publisher's Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).