1. Introduction

Remote sensing land cover segmentation is critical for analyzing remote sensing images, playing a key role in processing and utilizing remote sensory data. By employing image semantic segmentation algorithms, this technique assigns categories to each pixel in remote sensing data, identifying various landforms and extracting essential information. In military contexts, it provides crucial intelligence for tactical and strategic operations. Environmentally, it aids in quickly and accurately detecting ecological changes, while in urban development, it supports city planning and infrastructure enhancement. Geospatial clarity is also improved in geoscience, establishing an important base for earth studies. The wide-ranging utility of this technology underscores the need for precision in remote sensing segmentation methods.

Zheng [

1] developed FarSeg, a foreground-aware relational network designed to address significant intra-class variance in background classes and the imbalance between foreground and background in remote sensing images. In 2021, Li [

2] introduced Fctl, a geospatial segmentation approach based on location-aware contexts, which systematically crops and independently segments images, later merging these to form a cohesive output. Additionally, Ma [

3] introduced FactSeg, utilizing the FA object representation to enhance detection and differentiation of smaller objects.

Recent studies on object detection highlight the critical role of foreground saliency in remote sensing image analysis. Various researchers, inspired by these findings, have been adapting Transformer architectures for remote object detection. Xu introduced the Efficient Transformer [

4], based on the Swin Transformer, which exhibits improved computational efficiency and uses explicit and implicit edge-enhancement techniques for precise segmentation. Wang [

5] presented a design using the Swin Transformer for context extraction from images and introduced a densely connected feature aggregation setup for resolution restoration and intricate segmentation. Xu proposed the RSSFormer [

6], a remote sensing object segmentation framework with an adaptive Transformer conjunction module, attention layer, and a foreground prominence-based loss function, designed to reduce background noise and enhance foreground differentiation.

Land cover segmentation poses distinct challenges compared to standard semantic segmentation, which include: Significant variance in object sizes even within the same class, like large forests versus isolated trees. Complex background components in remote sensing images, making it difficult to classify certain elements into defined segments. Prevalence of background over foreground, which could bias the model to favor background segmentation during training, potentially affecting its optimization path.

In this paper, the prominent contributions are as follows:

We propose a decoder called MDCFD based on multi-dilation-rate convolution fusion. A corresponding module integrates outputs from convolutions with different dilation rates, addressing dilated convolution’s information loss and improving scale-variant target segmentation.

We introduce a hybrid attention mechanism called LKSHAM, grounded on large kernel convolutions. In the spatial attention submodule, we embed a convolutional kernel selection strategy to accommodate varying segmentation scales. For channel attention, we consider a large kernel convolution-based attention to enhance the model’s receptive scope, thereby improving its foreground-background distinction and suppressing unrelated background noise.

The rest of this paper is organized as follows.

Section 2 introduces the principles and network structure of the fusion decoder based on multi-dilation rate convolution along with the hybrid attention module based on the large-kernel convolution selection.

Section 3 describes the datasets and implementation details used.

Section 4 provides discussions.

Section 5 draws conclusions.

2. Methods

2.1. Multi-Dilation Rate Convolutional Fusion Module

To counter these challenges, we delineate the construction of a decoder tailored for the segmentation of objects within remote sensing imagery, dubbed the Multi-Dilation Rate Convolutional Fusion Decoder (MDCFD). Drawing inspiration from the decoders of Segformer [

7] and SETR [

8], alongside extant encoder-decoder frameworks, the conceived MDCFD embodies a comparatively simplistic structure of multiple Multi-Layer Perceptrons (MLPs). In a bid to augment the saliency of foreground features within the decoder’s feature maps, this exposition integrates the Rssformer paradigm into the architecture of MDCFD through the adoption of dilated convolutions, with the intent to accentuate foreground details during the decoding phase. Dilated convolutions leverage enlarged kernels to span a wider sampling domain, thereby bridging distant pixels and infusing additional contextual data.

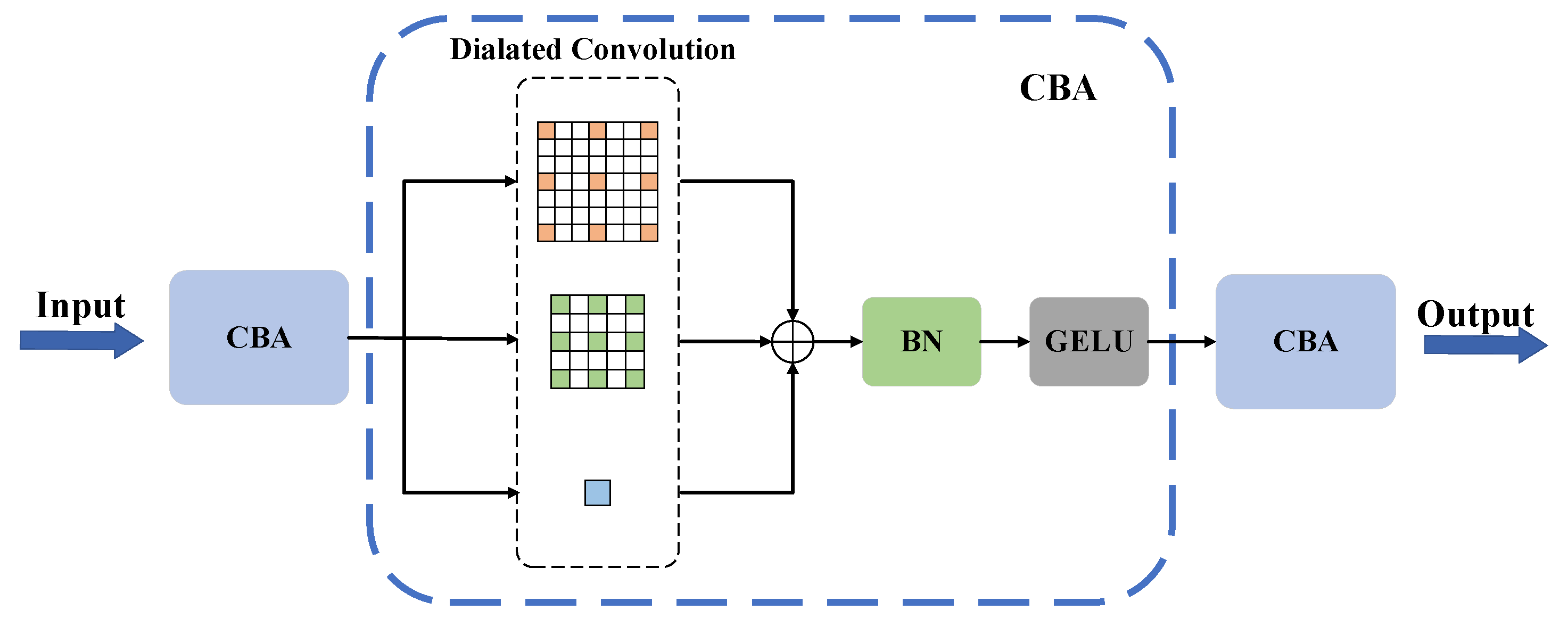

To adeptly tackle the issue of pronounced size variability among analogously classified objects within remote sensing imagery, we propose the Multi-Dilation Rate Convolutional Fusion Module (MDCFM). Said module employs dilated convolutions with minimal dilation rates for the delineation of fine-detail features inherent to smaller objects, while those with escalated dilation rates apprehend an extended feature spectrum. Subsequently, the MDCFM amalgamates the feature maps, processed via convolutional layers possessing divergent receptive fields across multiple convolutional trajectories, through an element-wise addition methodology. The structure of the MDCFM is as depicted in

Figure 1.

Input feature maps undergo sequential processing through three CBA (Convolution-BatchNorm-Activation) modules, each a composite of convolutional, batch normalization, and activation layers in a serial configuration. We incorporate the Gaussian Error Linear Unit (GELU) as the activation function within the CBA modules, with the computational progression of these modules delineated as

where

is the input feature map and

is the CBA output, with

indicating batch normalization. The CBA in the middle includes two dilated convolutions with dilations of 2 and 3, plus a convolution (dilation of 1), facilitating feature integration across varied receptive fields via element-wise addition. The module’s computational progression is outlined as

where

represents the output feature map produced by the fusion of multiple dilations, and

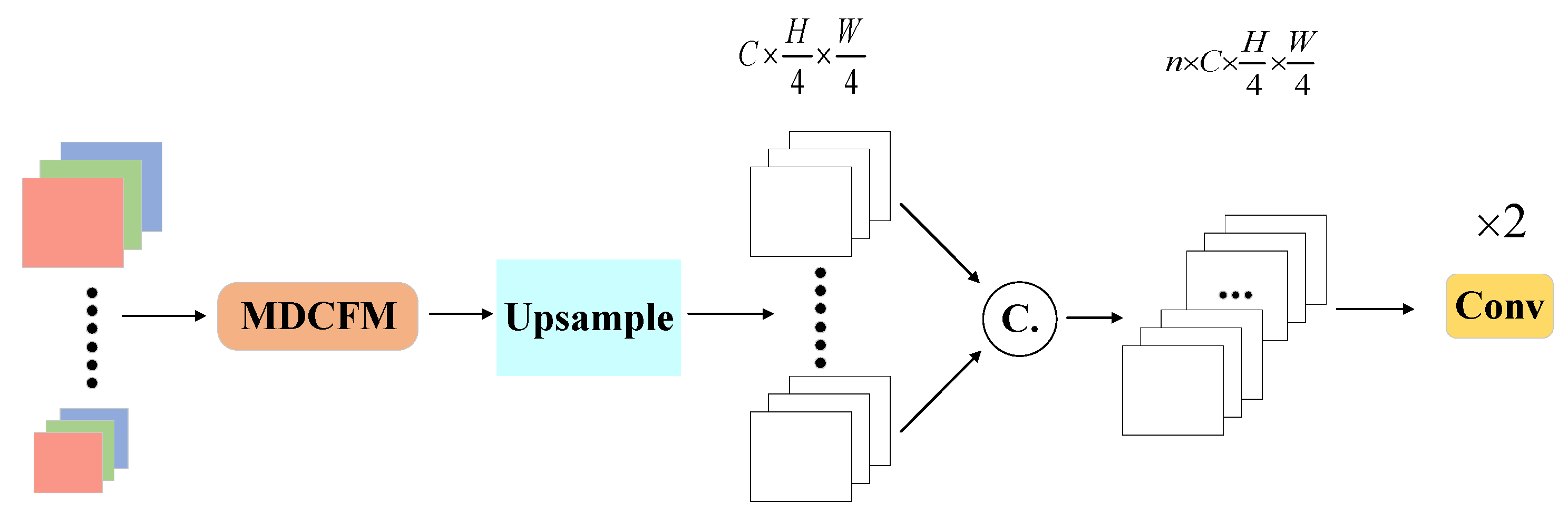

refers to the dilated convolution operation with a kernel size of 3 and a dilation rate of 2. This setup captures multilevel contextual information through different dilation rates, effectively generating enriched feature representation and enhancing the model’s adaptability to complex, varied spatial structures in remote sensing images via element-wise addition operation. The construction of MDCFD is shown in

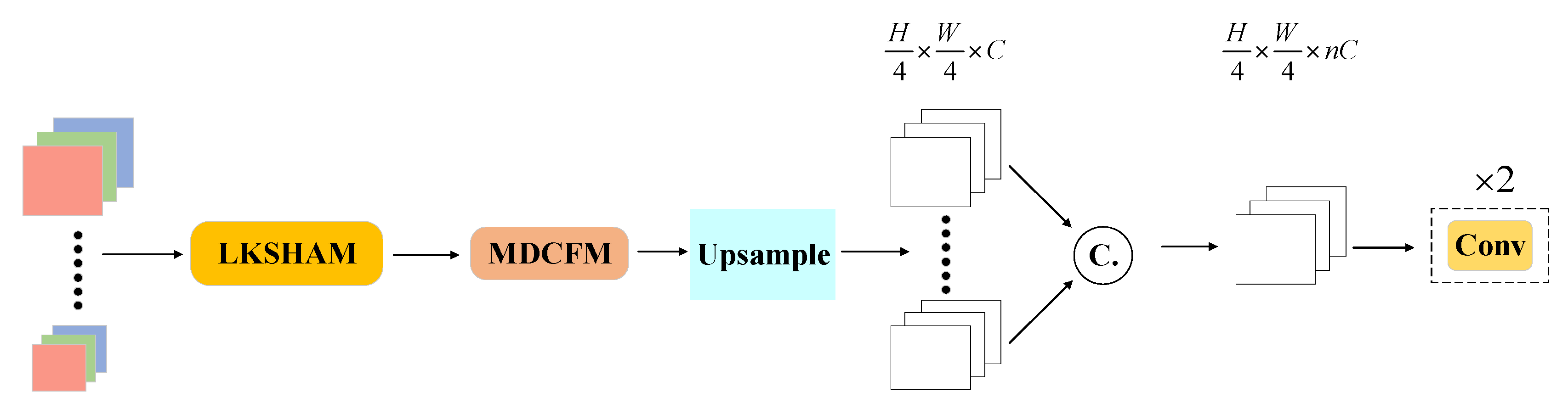

Figure 2.

After MDCFM processing, the n-channel feature maps are subjected to bilinear upsampling within the upsampling module, ensuring spatial feature consistency. The interpolation increases the map resolution to a quarter of its original width and height, avoiding direct return to original resolution. This approach reduces computational and memory demands while maintaining the spatial detail needed for accurate semantic segmentation. Lastly, a channel concatenation followed by a convolutional layer with output channels merges the feature maps to produce the decoder’s output.

2.2. Large Kernel Selection Hybrid Attention Module

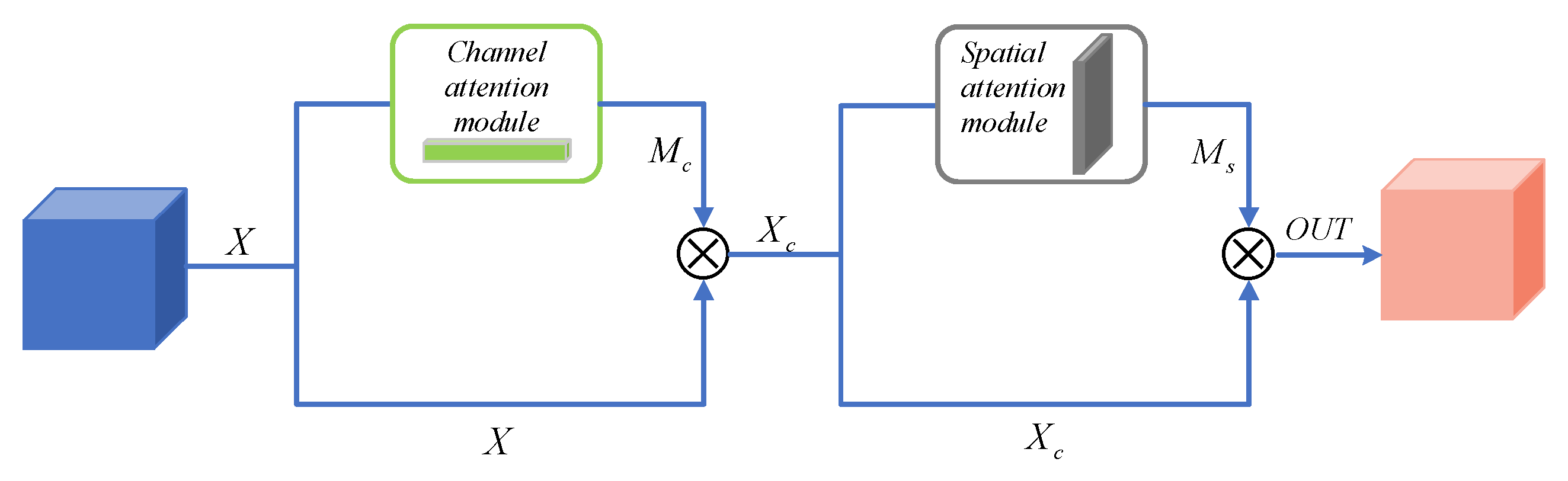

Inspired by the CBAM, we devise a Large Kernel Selection Hybrid Attention Module (LKSHAM) that adopts similar dual-submodule framework, as detailed in

Figure 3. In this structure, the preliminary output

is passed through a channel attention module to generate an attention mask

(Equation (3)). This mask is then element-wise multiplied with

, resulting in a channel-enhanced feature map

(Equation (4)). The enhanced map

is then processed by the spatial attention module, generating a spatial attention mask

for boosting spatial focus (Equation (5)). The combination of the mask

and the enhanced feature map

returns

, an output denoting increased attention concentration as shown in Equation (6).

2.2.1. Large Kernel Selection Spatial Attention Module

Taking inspiration from SKNet and LSKNet [

9], the present manuscript introduces a novel spatial attention module, denoted as the Large Kernel Selection Spatial Attention Module (LKSSAM), which integrates large kernel convolutions with a convolutional kernel selection mechanism.

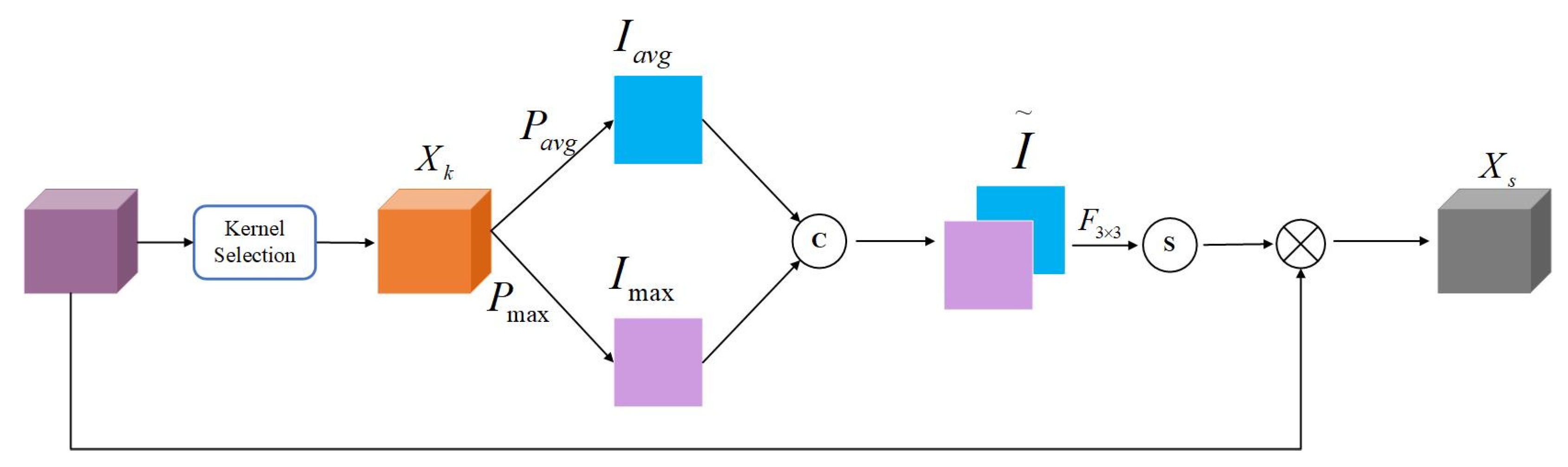

Figure 4 delineates the procedural mechanics of the convolutional kernel selection mechanism. The output feature map

, emanating from the antecedent network layer characterized by a batch size

and a channel count

, is subjected to a triad of depthwise convolutions—operations

,

, and

—originating from an expansive kernel convolution with a perceptive expanse of 17x17. Within this study, the dimensions of the kernel for operation

are stipulated as 3x3 with a corresponding dilation rate of 1, for

a kernel size of 5x5 with a dilation rate of 2, and for

, a kernel size of 3x3 with a dilation rate of 3 is established.

traverses the aforementioned paths

,

, and

to procure respective outputs

,

, and

, as expounded in the subsequent Equation.

After individual operations,

,

, and

are combined through concatenation at the channel dimension (dim=1), forming

as shown in the Equation (8).

undergoes global average pooling

to become

.

, after a 1x1 convolution maintaining channels at

and then expanding and reshaping, becomes the five-dimensional matrix

, as described in the Equations (9) and (10). Applying Softmax

to

’s next-to-last dimension yields the kernel selection weights matrix

, per Equation (11).

contains

sets of convolutional kernel selection weights, where the three elements within the kernel selection weights correspond to the selection coefficients for feature maps with different receptive fields after operations

,

, and

. After reshaping

to the shape of

, multiplicative interaction with

yields

, as depicted in Equation (12). Subsequently,

is divided along the penultimate dimension into three matrices, each with the shape of

. By performing an element-wise addition operation on these three matrices, the feature map

, post adaptive receptive field selection, is obtained. This process is detailed in Equation (13), where

denotes the matrix partitioning operation.

Figure 5 elucidates the comprehensive schema of the LKSSAM as proffered in this article. Emergent from the kernel selection module, the feature map

is subjected to both average pooling operation

and maximal pooling operation

, culminating in the respective outputs

and

, explicated by Equation (14). The execution of a concatenative operation along the channel axis synthesizes

and

into matrix

, characterized in Equation (15). Subsequent to the processing of

through a convolutional maneuver

with an output channel quantum fixed at one and a 3x3 kernel dimension, succeeded by a Sigmoid function, a spatial attention mask matrix

with elemental values confined within the interval (0,1) comes to fruition, as delineated in Equation (16). The element-wise product of

with the antecedent input

begets the LKSSAM-augmented construct

, as depicted in Equation (17).

2.2.2. Large Kernel Channel Attention Module

We introduce the Large Kernel Channel Attention Module (LKCAM), predicated on substantial kernel convolutions. The integration of this module in tandem with the LKSSAM begets the LKSHAM, a hybrid attention assembly. This synthesized configuration is engineered to adeptly harness and synthesize informational vectors across both spatial and channel dimensions.

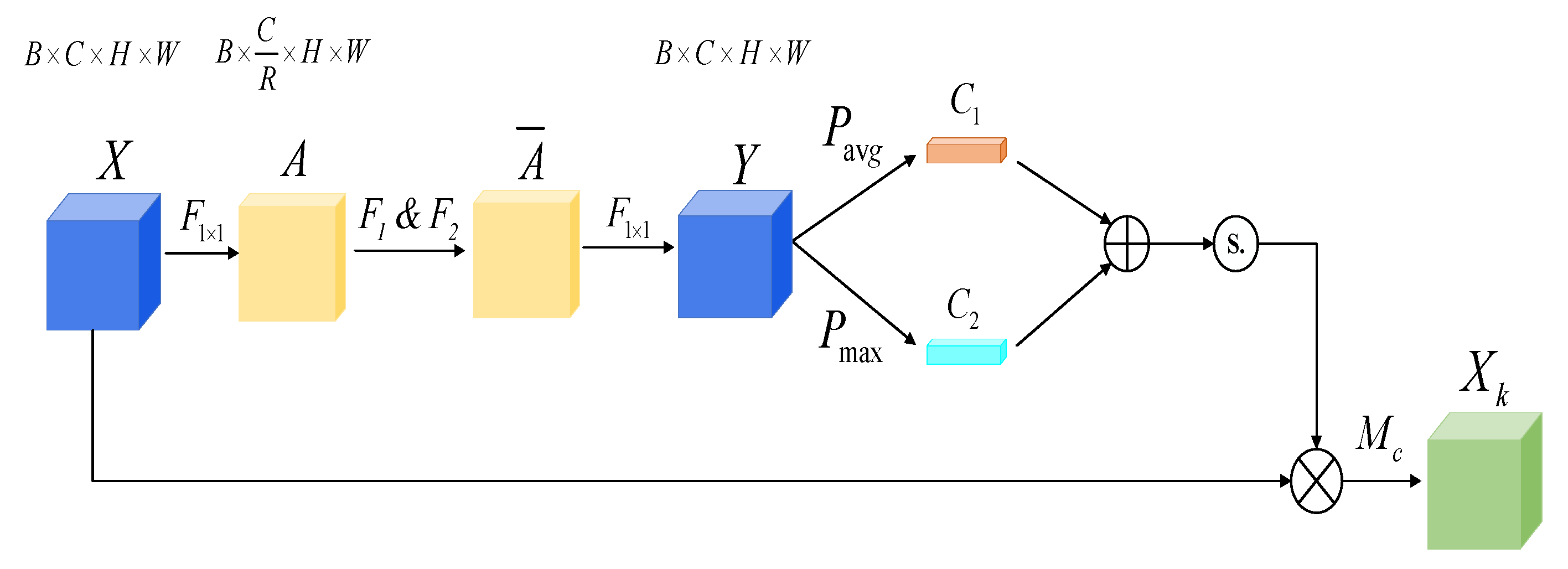

The architectural delineation of the LKCAM formulated within this paper is characterized in

Figure 6. As the output feature map

, bearing a batch size

and channel enumeration

from the neural network’s antecedent stratum, enters the module, it is initially engaged by a 1x1 convolutional exertion

outputting a channel number commensurate with

. To curtail the parameterization scale of the model, a channel reduction factor denoted as

is invoked, effectively attenuating the channel count of the input feature map to a fraction

, as elucidated in Equation (18).

Subsequent to operation

, the convolutional output

is processed through two subsequent depthwise convolutional operations, designated as

and

, encapsulated within Equation (19). Within the scope of this research, the kernel dimension for operation

is configured to a scale that corresponds to a dilation rate of 1, while operation

utilizes a kernel size of 7x7, paired with a dilation rate of 4. The sequential application of operations

and

is tantamount to a singular large kernel convolution with a receptive field of 29x29. The feature map

, refined by the large kernel convolution, progresses through a 1x1 convolutional layer with an output channel tally equating to

. This procedure culminates in the reinstatement of the channel magnitude for the resultant feature map

to

, as elucidated in Equation (20).

is concurrently processed by a global max pooling layer and a global average pooling layer, with their respective outputs being matrices

and

, as presented in Equation (21). After an element-wise addition of

and

, the outcome enters a Sigmoid activation layer. The output from the Sigmoid activation layer constitutes the channel attention mask

, this process being referenced in Equation (22). The elemental values within

span between 0 and 1, representing the weight values assigned by the channel attention module to

channels. By element-wise multiplication of the output from the previous layer of the neural network with

, the LKCAM-enhanced output

is obtained, as depicted in Equation (23). This design enables the model to discern the significance of different channels, which aids in emphasizing channel features that contribute significantly to the task at hand, while suppressing irrelevant channel information.

The construction of the model is shown in

Figure 7.

3. Results

3.1. Datasets and Data Pre-Processing

In order to ascertain the efficacy of the MDCFD decoder delineated, empirical assessments were conducted on the decoder’s ability to augment segmentation precision within models, utilizing the ISPRS Potsdam and ISPRS Vaihingen datasets. This was followed by a thorough examination and dialectic of the obtained outcomes.

(1) Potsdam Dataset: The Potsdam dataset, recognized for advancing object segmentation and scene analysis, includes various image formats such as RGB, IRRG, and DSM. Our study selectively employed the RGB format for evaluation. Categorically, it covers six types of land cover: roads, buildings, low vegetation, trees, cars, and miscellaneous areas (commonly termed as ‘clutter’).

(2) Vaihingen Dataset: This public dataset is key for advancing remote sensing object segmentation research and applications. It mainly includes 33 ‘TOP’ high-resolution aerial images, with a typical resolution of 2494*2064. The Vaihingen dataset additionally provides detailed elevation data through Digital Surface Models (DSM) and Normalized Digital Surface Models (NDSM), with precise ground sampling accuracy up to 9 centimeters. Its object categories align with those in the Potsdam dataset. We exclusively utilized the ‘TOP’ images, without DSM and NDSM data.

(3) Data Pre-Processing: The high-resolution images from the Potsdam and Vaihingen datasets necessitate preprocessing through cropping to reduce memory load, hence images were resized to 512*512. We analyzed six land cover categories from Potsdam and five corresponding categories from Vaihingen, excluding clutter, to evaluate the segmentation capability of our model.

3.2. Implementation Details

3.2.1. Hardware Environment

The investigative methodology employed herein pivots around the following hardware orchestration: an Intel Core i9-9900K CPU indexed at a base frequency of 3.6GHz and structured with an aggregate of 16 threads; an NVIDIA GeForce GTX 2080Ti GPU; alongside a computational platform appointed for experimental conduction, provisioned with a memory allocation of 64 GB and storage capability of 4 TB.

3.2.2. Software Environment

The computational experiments outlined in this article employed Ubuntu 20.04 as the operating system, alongside CUDA 11.3 for the parallel computing framework. To facilitate the management of virtual environments crucial for the training and inference of models, this research makes use of the Anaconda virtual environment management system. Detailed configurations are delineated in the table below:

Table 1.

The list of hardware and software environments relied on by the experiment.

Table 1.

The list of hardware and software environments relied on by the experiment.

| Hardware/Software |

Parameter/Version |

| CPU |

Intel Core i9-9900K |

| GPU |

GeForce GTX 2080Ti |

| Memory Allocation |

64GB |

| Storage Capability |

4TB |

| Operation System |

Ubuntu 20.04 |

| Python |

3.8.2 |

| CUDA |

11.3 |

| PyTorch |

1.12.1 |

| mmcv |

2.0.0 |

| mmsegementation |

1.2.2 |

| numpy |

1.24.4 |

| opencv |

4.9.0 |

3.2.3. Hyperparameter Settings

During the training regimen, the Adam optimizer is selected, featuring the beta coefficients of the Adam algorithm designated as 0.9 for and 0.999 for . The instructional rate for model training is established at 6e-5, while the regularization term for weight decay is anchored at 0.01. The learning rate experiences adaptive alterations in line with the Poly learning rate policy, where the Poly power is defined as 1. We stop after 160000 epochs.

3.3. Ablation Study

To establish the MDCFD decoder and hybrid attention module’s accuracy, universality, and effectiveness in remote sensing segmentation, we merged them with top-performing models for comparative evaluation against original models. The hybrid attention module was also added to MDCFD-enhanced models to determine its performance impact. In our ablation studies, we first paired the MDCFD and LKSHAM with the Segformer’s MiT encoder, choosing the parameter-rich MiT-B5 encoder. Secondly, we combined them with HRNetV2 [

10], using the robust HRNetV2-W48 model. The outcomes are presented in

Table 2.

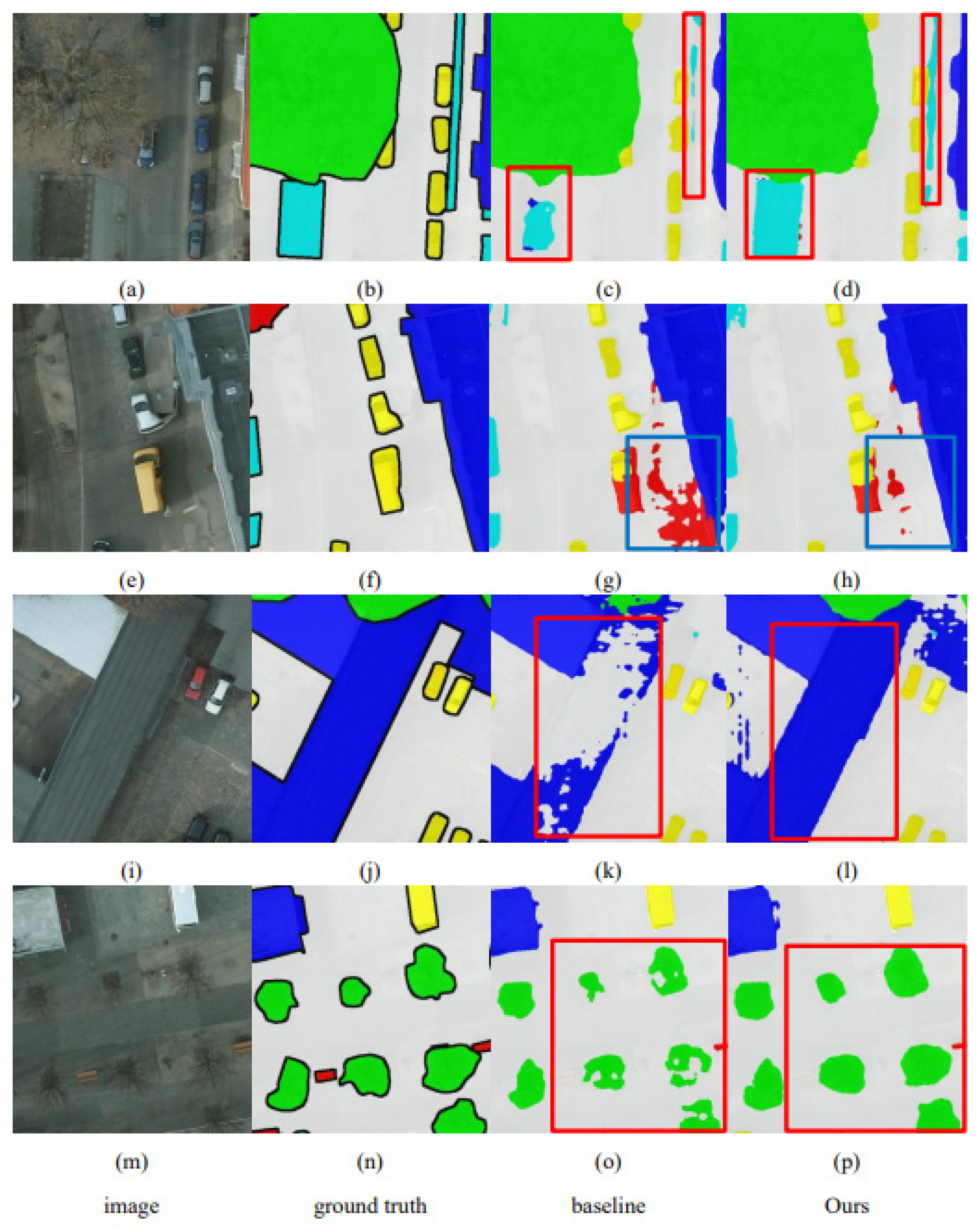

Figure 7 graphically shows the improved segmentation accuracy of the model due to the decoder, using a set of image comparisons. The first column displays the input images for the neural network, the second column presents the labels (ground truth), as manually marked by the data compilers. The third column illustrates the predictions from the baseline model used as the control, and the fourth column shows the predicted results from the enhanced model per the architectural advancements discussed in this paper.

To assess the detection performance of the two structures suggested in this article (‘Ours’), we have compared the improved Segformer and HRNetV2 models based on our proposal with conventional land cover segmentation algorithms. The results are illustrated in

Table 3

Study results confirm the effectiveness of the two designs introduced in this paper for improving remote sensing-based land cover segmentation. Implemented designs in models from our experiments showed marked improvements in mIoU and mF1 accuracy metrics. Specifically, models using these designs in the Potsdam dataset improved mIoU by up to 1.5% and mF1 by up to 1.2%. All categories saw F1 score increases, especially ‘Car’ by 1.8%. Vaihingen dataset models noted mIoU rises of up to 1.7%, mF1 by up to 1.2%, with ‘car’ category F1 jumping 2.4%. These restructured models outperformed traditional segmentation algorithms in precision.

Figure 8 visually displays how the two architectural configurations discussed in this paper enhance model segmentation precision, as illustrated by four image set examples.

Figure 8c,d with red borders highlight the model’s advanced land cover classification accuracy after adopting the architectural upgrades discussed. While

Figure 8c shows the baseline model’s misclassified pixels over a large area,

Figure 8d demonstrates significant improvement, indicating precise pixel classification due to the new designs. This suggests the proposed architecture enhances land cover differentiation. The azure borders in

Figure 8g depict many background misclassifications, greatly reduced in

Figure 8h by the modified model, showing the designed attention modules reduce background noise.

Figure 8o,p,k,l represent small and large land cover features, respectively, illustrating the model’s improved segmentation across different scales, highlighting the system’s adaptability to process varied target sizes efficiently.

4. Discussion

We introduced a multi-dilation rate convolution fusion decoder and a large-core convolution-based hybrid attention module LKSHAM, aiming to boost remote sensing image segmentation accuracy. These designs aim to broaden the model’s receptive field, enhancing global feature perception and foreground-background distinction. Notably, our approach considerably enhanced mIoU and mF1 segmentation metrics. On the Potsdam dataset, our model showed achieved mIoU improvements of 1.5% and 1.4% and mF1 gains of 1.2% and 1.1%. The Vaihingen dataset results corroborated this, with mIoU rises of 1.7% and 1.2%, and mF1 improvements of 1.2% and 0.8%. These achievements were made possible by our convolution kernel redesign, which not only minimized computational demands but also elevated model segmentation efficiency across varying scales through tailored convolution kernel strategies.

5. Conclusions

Our study advances remote sensing image segmentation by integrating novel structural enhancements into models, particularly a multi-dilation rate convolution fusion decoder (MDCFD) and a large-kernel based hybrid attention module (LKSHAM). These innovations have proven to enhance accuracy in differentiating complex landscape features. By addressing common challenges such as uneven foreground-background distribution and varying target scales, our work significantly improves segmentation capabilities across varied scenarios and scales depicted in remote sensing imagery. The consistent improvement in performance metrics on the Potsdam and Vaihingen datasets validates the effectiveness of our approach.

Author Contributions

Conceptualization, G.L. and X.W.; methodology, G.L. and C.L.; software, C.L. and X.Z.; validation, Y.L.; formal analysis, X.Z. and X.W.; writing—original draft preparation, J.X. and X.W.; visualization, J.X.; funding acquisition, X.W. All authors have read and agreed to the published version of the manuscript.

Funding

This research was funded by the China Postdoctoral Science Foundation (2013M540735); by the National Nature Science Foundation of China under Grant 61901388, 61301291, 61701360; by the 111 Project under Grant B08038; by the Shaanxi Provincial Science and Technology Innovation Team; by the Fundamental Research Funds for the Central Universities; by the Youth Innovation Team of Shaanxi Universities.

Data Availability Statement

Data is available on request.

Conflicts of Interest

The authors declare no conflicts of interest.

References

- Zheng, Z.; Zhong, Y.; Wang, J.; Ma, A. Foreground-Aware Relation Network for Geospatial Object Segmentation in High Spatial Resolution Remote Sensing Imagery. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition; 2020; pp. 4096–4105.

- Liu, W.; Li, Q.; Lin, X.; Yang, W.; He, S.; Yu, Y. Ultra-High Resolution Image Segmentation via Locality-Aware Context Fusion and Alternating Local Enhancement. arXiv 2021, arXiv:2109.02580. [Google Scholar]

- Ma, A.; Wang, J.; Zhong, Y.; Zheng, Z. FactSeg: Foreground Activation-Driven Small Object Semantic Segmentation in Large-Scale Remote Sensing Imagery. IEEE Transactions on Geoscience and Remote Sensing 2021, 60, 1–16. [Google Scholar] [CrossRef]

- Xu, Z.; Zhang, W.; Zhang, T.; Yang, Z.; Li, J. Efficient Transformer for Remote Sensing Image Segmentation. Remote Sensing 2021, 13, 3585. [Google Scholar] [CrossRef]

- Wang, L.; Li, R.; Duan, C.; Zhang, C.; Meng, X.; Fang, S. A Novel Transformer Based Semantic Segmentation Scheme for Fine-Resolution Remote Sensing Images. IEEE Geoscience and Remote Sensing Letters 2022, 19, 1–5. [Google Scholar] [CrossRef]

- Xu, R.; Wang, C.; Zhang, J.; Xu, S.; Meng, W.; Zhang, X. Rssformer: Foreground Saliency Enhancement for Remote Sensing Land-Cover Segmentation. IEEE Transactions on Image Processing 2023, 32, 1052–1064. [Google Scholar] [CrossRef] [PubMed]

- Xie, E.; Wang, W.; Yu, Z.; Anandkumar, A.; Alvarez, J.M.; Luo, P. SegFormer: Simple and Efficient Design for Semantic Segmentation with Transformers. Advances in neural information processing systems 2021, 34, 12077–12090. [Google Scholar]

- Zheng, S.; Lu, J.; Zhao, H.; Zhu, X.; Luo, Z.; Wang, Y.; Fu, Y.; Feng, J.; Xiang, T.; Torr, P.H. Rethinking Semantic Segmentation from a Sequence-to-Sequence Perspective with Transformers. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition; 2021; pp. 6881–6890. [Google Scholar]

- Li, Y.; Hou, Q.; Zheng, Z.; Cheng, M.-M.; Yang, J.; Li, X. Large Selective Kernel Network for Remote Sensing Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision; 2023; pp. 16794–16805. [Google Scholar]

- Wang, J.; Sun, K.; Cheng, T.; Jiang, B.; Deng, C.; Zhao, Y.; Liu, D.; Mu, Y.; Tan, M.; Wang, X. Deep High-Resolution Representation Learning for Visual Recognition. IEEE transactions on pattern analysis and machine intelligence 2020, 43, 3349–3364. [Google Scholar] [CrossRef] [PubMed]

- Li, G.; Yun, I.; Kim, J.; Kim, J. Dabnet: Depth-Wise Asymmetric Bottleneck for Real-Time Semantic Segmentation. arXiv 2019, arXiv:1907.11357. [Google Scholar]

- Hu, P.; Perazzi, F.; Heilbron, F.C.; Wang, O.; Lin, Z.; Saenko, K.; Sclaroff, S. Real-Time Semantic Segmentation with Fast Attention. IEEE Robotics and Automation Letters 2020, 6, 263–270. [Google Scholar] [CrossRef]

- Li, R.; Zheng, S.; Zhang, C.; Duan, C.; Wang, L.; Atkinson, P.M. ABCNet: Attentive Bilateral Contextual Network for Efficient Semantic Segmentation of Fine-Resolution Remotely Sensed Imagery. ISPRS journal of photogrammetry and remote sensing 2021, 181, 84–98. [Google Scholar] [CrossRef]

- Srinivas, A.; Lin, T.-Y.; Parmar, N.; Shlens, J.; Abbeel, P.; Vaswani, A. Bottleneck Transformers for Visual Recognition. In Proceedings of the Proceedings of the IEEE/CVF conference on computer vision and pattern recognition; 2021; pp. 16519–16529. [Google Scholar]

- Wang, L.; Li, R.; Wang, D.; Duan, C.; Wang, T.; Meng, X. Transformer Meets Convolution: A Bilateral Awareness Network for Semantic Segmentation of Very Fine Resolution Urban Scene Images. Remote Sensing 2021, 13, 3065. [Google Scholar] [CrossRef]

- Strudel, R.; Garcia, R.; Laptev, I.; Schmid, C. Segmenter: Transformer for Semantic Segmentation. roceedings of the IEEE/CVF international conference on computer vision; 2021; pp. 7262–7272. [Google Scholar]

- Fu, J.; Liu, J.; Tian, H.; Li, Y.; Bao, Y.; Fang, Z.; Lu, H. Dual Attention Network for Scene Segmentation. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition; 2019; pp. 3146–3154. [Google Scholar]

- Li, X.; Zhong, Z.; Wu, J.; Yang, Y.; Lin, Z.; Liu, H. Expectation-Maximization Attention Networks for Semantic Segmentation. In Proceedings of the IEEE/CVF international conference on computer vision; 2019; pp. 9167–9176. [Google Scholar]

- Li, X.; He, H.; Li, X.; Li, D.; Cheng, G.; Shi, J.; Weng, L.; Tong, Y.; Lin, Z. Pointflow: Flowing Semantics through Points for Aerial Image Segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition; 2021; pp. 4217–4226. [Google Scholar]

|

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content. |

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).